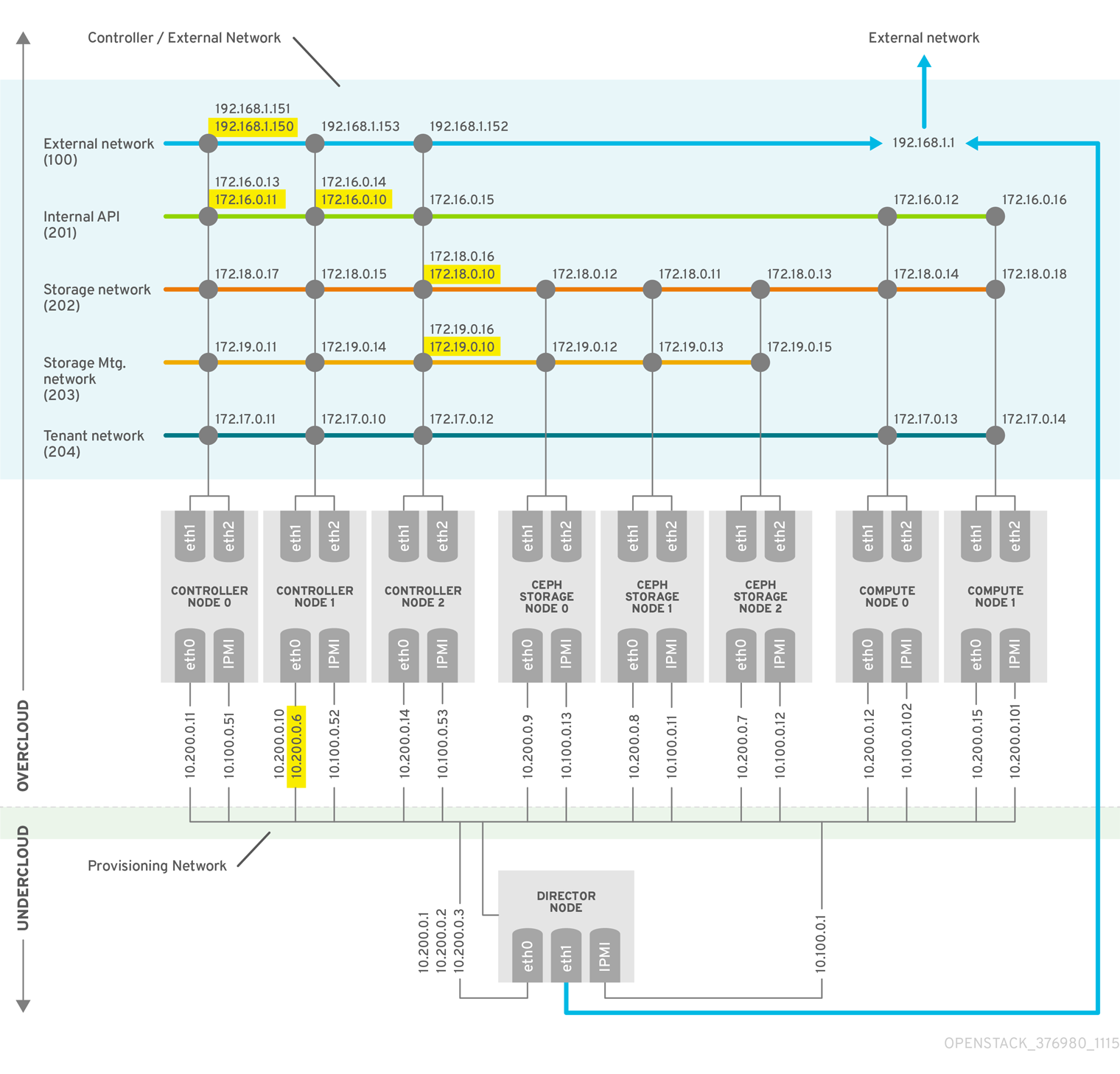

Chapter 2. Example deployment: High availability cluster with Compute and Ceph

The following example scenario shows the architecture, hardware and network specifications, as well as the undercloud and overcloud configuration files for a high availability deployment with the OpenStack Compute service and Red Hat Ceph Storage.

This deployment is intended for use as a reference for test environments and is not supported for production environments.

Figure 2.1. Example high availability deployment architecture

For more information about deploying a Red Hat Ceph Storage cluster, see Deploying an Overcloud with Containerized Red Hat Ceph.

For more information about deploying Red Hat OpenStack Platform with director, see Director Installation and Usage.

2.1. Hardware specifications

The following table shows the hardware used in the example deployment. You can adjust the CPU, memory, storage, or NICs as needed in your own test deployment.

Table 2.1. Physical computers

| Number of Computers | Purpose | CPUs | Memory | Disk Space | Power Management | NICs |
|---|---|---|---|---|---|---|
| 1 | undercloud node | 4 | 6144 MB | 40 GB | IPMI | 2 (1 external; 1 on provisioning) + 1 IPMI |
| 3 | Controller nodes | 4 | 6144 MB | 40 GB | IPMI | 3 (2 bonded on overcloud; 1 on provisioning) + 1 IPMI |
| 3 | Ceph Storage nodes | 4 | 6144 MB | 40 GB | IPMI | 3 (2 bonded on overcloud; 1 on provisioning) + 1 IPMI |
| 2 | Compute nodes (add more as needed) | 4 | 6144 MB | 40 GB | IPMI | 3 (2 bonded on overcloud; 1 on provisioning) + 1 IPMI |

Review the following guidelines when you plan hardware assignments:

- Controller nodes
  - Most non-storage services run on Controller nodes. All services are replicated across the three nodes, and are configured as active-active or active-passive services. An HA environment requires a minimum of three nodes.
- Red Hat Ceph Storage nodes
  - Storage services run on these nodes and provide pools of Red Hat Ceph Storage areas to the Compute nodes. A minimum of three nodes is required.
- Compute nodes
  - Virtual machine (VM) instances run on Compute nodes. You can deploy as many Compute nodes as you need to meet your capacity requirements and to accommodate migration and reboot operations. You must connect Compute nodes to the storage network and to the tenant network, to ensure that VMs can access the storage nodes, the VMs on other Compute nodes, and the public networks.
- STONITH
  - You must configure a STONITH device for each node that is a part of the Pacemaker cluster in a highly available overcloud. Deploying a highly available overcloud without STONITH is not supported. For more information on STONITH and Pacemaker, see Fencing in a Red Hat High Availability Cluster and Support Policies for RHEL High Availability Clusters.
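The three-node minimum for Controller nodes is consistent with majority-based quorum, which the cluster uses through the Corosync votequorum provider. The following minimal sketch shows the arithmetic, assuming one vote per node and no special quorum options. The function names are hypothetical and are not part of any Pacemaker or Corosync tool.

```python
def quorum_votes(total_nodes: int) -> int:
    """Return the smallest number of votes that forms a strict majority."""
    if total_nodes < 1:
        raise ValueError("a cluster needs at least one node")
    return total_nodes // 2 + 1


def tolerated_failures(total_nodes: int) -> int:
    """Return how many nodes can fail while the rest still hold a majority."""
    return total_nodes - quorum_votes(total_nodes)


if __name__ == "__main__":
    # Assumption: every node carries exactly one vote.
    for nodes in (1, 2, 3, 5):
        print(f"{nodes} node(s): quorum={quorum_votes(nodes)}, "
              f"tolerated failures={tolerated_failures(nodes)}")
```

Under these assumptions, a two-node cluster cannot lose any node and keep a majority, while a three-node cluster can lose one.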

2.2. Network specifications

The following table shows the network configuration used in the example deployment.

This example does not include hardware redundancy for the control plane and the provisioning network where the overcloud keystone admin endpoint is configured.

Table 2.2. Physical and virtual networks

| Physical NICs | Purpose | VLANs | Description |
|---|---|---|---|
| eth0 | Provisioning network (undercloud) | N/A | Manages all nodes from director (undercloud) |
| eth1 and eth2 | Controller/External (overcloud) | N/A | Bonded NICs with VLANs |
| | External network | VLAN 100 | Allows access from outside the environment to the tenant networks, internal API, and OpenStack Horizon Dashboard |
| | Internal API | VLAN 201 | Provides access to the internal API between Compute nodes and Controller nodes |
| | Storage access | VLAN 202 | Connects Compute nodes to storage media |
| | Storage management | VLAN 203 | Manages storage media |
| | Tenant network | VLAN 204 | Provides tenant network services to RHOSP |

In addition to the network configuration, you must deploy the following components:

- Provisioning network switch
  - This switch must be able to connect the undercloud to all the physical computers in the overcloud.
  - The NIC on each overcloud node that is connected to this switch must be able to PXE boot from the undercloud.
  - The portfast parameter must be enabled.
- Controller/External network switch
  - This switch must be configured to perform VLAN tagging for the other VLANs in the deployment.
  - Allow only VLAN 100 traffic to external networks.
- Networking hardware and keystone endpoint
  - To prevent a Controller node network card or network switch failure from disrupting the availability of overcloud services, ensure that the keystone admin endpoint is located on a network that uses bonded network cards or networking hardware redundancy.
  - If you move the keystone endpoint to a different network, such as internal_api, ensure that the undercloud can reach the VLAN or subnet. For more information, see the Red Hat Knowledgebase solution How to migrate Keystone Admin Endpoint to internal_api network.
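One simple way to confirm that the undercloud can reach a relocated endpoint is a plain TCP connection test run from the undercloud. The following sketch uses only the Python standard library. The helper name `tcp_reachable` is hypothetical, and the address and port in the usage comment are placeholders that you must replace with the values from your own deployment.

```python
import socket


def tcp_reachable(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False


# Usage (placeholder values, not taken from a real deployment):
#   tcp_reachable("172.16.0.10", 35357)
```

A successful connection shows only that the port is reachable at the network level. It does not verify that the keystone service answers requests correctly.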

2.3. Undercloud configuration files

The example deployment uses the following undercloud configuration files.

instackenv.json

    {
      "nodes": [
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.11",
          "mac": [
            "2c:c2:60:3b:b3:94"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.12",
          "mac": [
            "2c:c2:60:51:b7:fb"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.13",
          "mac": [
            "2c:c2:60:76:ce:a5"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.51",
          "mac": [
            "2c:c2:60:08:b1:e2"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.52",
          "mac": [
            "2c:c2:60:20:a1:9e"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.53",
          "mac": [
            "2c:c2:60:58:10:33"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "1",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.101",
          "mac": [
            "2c:c2:60:31:a9:55"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "2",
          "pm_user": "admin"
        },
        {
          "pm_password": "testpass",
          "memory": "6144",
          "pm_addr": "10.100.0.102",
          "mac": [
            "2c:c2:60:0d:e7:d1"
          ],
          "pm_type": "pxe_ipmitool",
          "disk": "40",
          "arch": "x86_64",
          "cpu": "2",
          "pm_user": "admin"
        }
      ],
      "overcloud": {
        "password": "7adbbbeedc5b7a07ba1917e1b3b228334f9a2d4e",
        "endpoint": "http://192.168.1.150:5000/v2.0/"
      }
    }
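Before you import a node definition file of this shape, it can help to check it for copy-and-paste mistakes such as repeated MAC addresses or power management addresses. The following sketch is one possible check, not a director feature. The `REQUIRED_KEYS` set and the `check_nodes` function are assumptions made for illustration, and the sample contains only two of the nodes from the listing.

```python
import json

# Keys this sketch treats as mandatory (an assumption, not director's schema).
REQUIRED_KEYS = {"pm_type", "pm_user", "pm_password", "pm_addr", "mac"}

# Two nodes taken from the example file, shortened to keep the sketch small.
SAMPLE = """
{
  "nodes": [
    {"pm_type": "pxe_ipmitool", "pm_user": "admin", "pm_password": "testpass",
     "pm_addr": "10.100.0.11", "mac": ["2c:c2:60:3b:b3:94"]},
    {"pm_type": "pxe_ipmitool", "pm_user": "admin", "pm_password": "testpass",
     "pm_addr": "10.100.0.12", "mac": ["2c:c2:60:51:b7:fb"]}
  ]
}
"""


def check_nodes(inventory: dict) -> list:
    """Return a list of human-readable problems found in the node list."""
    problems = []
    seen_macs = set()
    seen_pm_addrs = set()
    for index, node in enumerate(inventory.get("nodes", [])):
        missing = REQUIRED_KEYS - set(node)
        if missing:
            problems.append(f"node {index}: missing keys {sorted(missing)}")
        for mac in node.get("mac", []):
            mac = mac.lower()  # compare MAC addresses case-insensitively
            if mac in seen_macs:
                problems.append(f"node {index}: duplicate MAC {mac}")
            seen_macs.add(mac)
        pm_addr = node.get("pm_addr")
        if pm_addr is not None:
            if pm_addr in seen_pm_addrs:
                problems.append(f"node {index}: duplicate pm_addr {pm_addr}")
            seen_pm_addrs.add(pm_addr)
    return problems


if __name__ == "__main__":
    for problem in check_nodes(json.loads(SAMPLE)) or ["no problems found"]:
        print(problem)
```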

undercloud.conf

    [DEFAULT]
    image_path = /home/stack/images
    local_ip = 10.200.0.1/24
    undercloud_public_vip = 10.200.0.2
    undercloud_admin_vip = 10.200.0.3
    undercloud_service_certificate = /etc/pki/instack-certs/undercloud.pem
    local_interface = eth0
    masquerade_network = 10.200.0.0/24
    dhcp_start = 10.200.0.5
    dhcp_end = 10.200.0.24
    network_cidr = 10.200.0.0/24
    network_gateway = 10.200.0.1
    #discovery_interface = br-ctlplane
    discovery_iprange = 10.200.0.150,10.200.0.200
    discovery_runbench = 1
    undercloud_admin_password = testpass
    ...
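The address settings in this file depend on each other: the virtual IPs and both address ranges belong to the provisioning network. The following sketch checks those relationships with the standard `configparser` and `ipaddress` modules. It treats an overlap between the DHCP range and the discovery range as a problem, which is an assumption of this sketch, and the `check_undercloud` function is hypothetical.

```python
import configparser
import ipaddress

# Address-related settings copied from the example file.
SAMPLE = """\
[DEFAULT]
local_ip = 10.200.0.1/24
undercloud_public_vip = 10.200.0.2
undercloud_admin_vip = 10.200.0.3
dhcp_start = 10.200.0.5
dhcp_end = 10.200.0.24
network_cidr = 10.200.0.0/24
network_gateway = 10.200.0.1
discovery_iprange = 10.200.0.150,10.200.0.200
"""


def check_undercloud(text: str) -> list:
    """Return a list of problems found in the address settings."""
    parser = configparser.ConfigParser()
    parser.read_string(text)
    conf = parser["DEFAULT"]
    network = ipaddress.ip_network(conf["network_cidr"])
    problems = []

    # Single addresses that should sit inside the provisioning network.
    addresses = {"local_ip": ipaddress.ip_interface(conf["local_ip"]).ip}
    for name in ("undercloud_public_vip", "undercloud_admin_vip",
                 "network_gateway"):
        addresses[name] = ipaddress.ip_address(conf[name])
    for name, address in addresses.items():
        if address not in network:
            problems.append(f"{name} {address} is outside {network}")

    # Address ranges as (start, end) pairs.
    dhcp = (ipaddress.ip_address(conf["dhcp_start"]),
            ipaddress.ip_address(conf["dhcp_end"]))
    first, last = conf["discovery_iprange"].split(",")
    discovery = (ipaddress.ip_address(first.strip()),
                 ipaddress.ip_address(last.strip()))
    for name, (start, end) in (("dhcp", dhcp), ("discovery", discovery)):
        if start > end:
            problems.append(f"{name} range is reversed")
        if start not in network or end not in network:
            problems.append(f"{name} range is outside {network}")

    # Assumption: the two ranges must not share any address.
    if dhcp[0] <= discovery[1] and discovery[0] <= dhcp[1]:
        problems.append("dhcp and discovery ranges overlap")
    return problems


if __name__ == "__main__":
    print(check_undercloud(SAMPLE) or "no problems found")
```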

network-environment.yaml

    resource_registry:
      OS::TripleO::BlockStorage::Net::SoftwareConfig: /home/stack/templates/nic-configs/cinder-storage.yaml
      OS::TripleO::Compute::Net::SoftwareConfig: /home/stack/templates/nic-configs/compute.yaml
      OS::TripleO::Controller::Net::SoftwareConfig: /home/stack/templates/nic-configs/controller.yaml
      OS::TripleO::ObjectStorage::Net::SoftwareConfig: /home/stack/templates/nic-configs/swift-storage.yaml
      OS::TripleO::CephStorage::Net::SoftwareConfig: /home/stack/templates/nic-configs/ceph-storage.yaml

    parameter_defaults:
      InternalApiNetCidr: 172.16.0.0/24
      TenantNetCidr: 172.17.0.0/24
      StorageNetCidr: 172.18.0.0/24
      StorageMgmtNetCidr: 172.19.0.0/24
      ExternalNetCidr: 192.168.1.0/24
      InternalApiAllocationPools: [{'start': '172.16.0.10', 'end': '172.16.0.200'}]
      TenantAllocationPools: [{'start': '172.17.0.10', 'end': '172.17.0.200'}]
      StorageAllocationPools: [{'start': '172.18.0.10', 'end': '172.18.0.200'}]
      StorageMgmtAllocationPools: [{'start': '172.19.0.10', 'end': '172.19.0.200'}]
      # Leave room for floating IPs in the External allocation pool
      ExternalAllocationPools: [{'start': '192.168.1.150', 'end': '192.168.1.199'}]
      InternalApiNetworkVlanID: 201
      StorageNetworkVlanID: 202
      StorageMgmtNetworkVlanID: 203
      TenantNetworkVlanID: 204
      ExternalNetworkVlanID: 100
      # Set to the router gateway on the external network
      ExternalInterfaceDefaultRoute: 192.168.1.1
      # Set to "br-ex" if using floating IPs on native VLAN on bridge br-ex
      NeutronExternalNetworkBridge: "''"
      # Customize bonding options if required
      BondInterfaceOvsOptions:
        "bond_mode=active-backup lacp=off other_config:bond-miimon-interval=100"

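Each allocation pool in network-environment.yaml belongs to the CIDR of its network, and each network in this example uses its own VLAN ID. The following sketch checks both properties for the values used in this example. To stay free of third-party dependencies, it embeds the values in a Python dictionary instead of parsing the YAML file. The `NETWORKS` layout and the function names are assumptions made for illustration.

```python
import ipaddress

# name: (CIDR, pool start, pool end, VLAN ID), copied from the example file.
NETWORKS = {
    "InternalApi": ("172.16.0.0/24", "172.16.0.10", "172.16.0.200", 201),
    "Tenant": ("172.17.0.0/24", "172.17.0.10", "172.17.0.200", 204),
    "Storage": ("172.18.0.0/24", "172.18.0.10", "172.18.0.200", 202),
    "StorageMgmt": ("172.19.0.0/24", "172.19.0.10", "172.19.0.200", 203),
    "External": ("192.168.1.0/24", "192.168.1.150", "192.168.1.199", 100),
}


def pool_size(start: str, end: str) -> int:
    """Return the number of addresses in an inclusive start-end range."""
    return int(ipaddress.ip_address(end)) - int(ipaddress.ip_address(start)) + 1


def check_networks(networks: dict) -> list:
    """Return a list of problems with pool placement or VLAN reuse."""
    problems = []
    vlan_owner = {}
    for name, (cidr, start, end, vlan) in networks.items():
        network = ipaddress.ip_network(cidr)
        for label, value in (("start", start), ("end", end)):
            if ipaddress.ip_address(value) not in network:
                problems.append(f"{name}: pool {label} {value} is outside {cidr}")
        if pool_size(start, end) < 1:
            problems.append(f"{name}: pool is empty or reversed")
        if vlan in vlan_owner:
            problems.append(
                f"{name}: VLAN {vlan} is already used by {vlan_owner[vlan]}")
        vlan_owner.setdefault(vlan, name)
    return problems


if __name__ == "__main__":
    print(check_networks(NETWORKS) or "no problems found")
    for name, (_cidr, start, end, _vlan) in NETWORKS.items():
        print(f"{name}: {pool_size(start, end)} addresses in pool")
```

The External pool ends at 192.168.1.199, which leaves the rest of the subnet available for floating IPs, as the comment in the file suggests.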
2.4. Overcloud configuration files

The example deployment uses the following overcloud configuration files.

/var/lib/config-data/haproxy/etc/haproxy/haproxy.cfg (Controller nodes)

This file identifies the services that HAProxy manages and contains the settings for each service that HAProxy monitors. This file is identical on all Controller nodes.

    # This file is managed by Puppet
    global
      daemon
      group haproxy
      log /dev/log local0
      maxconn 20480
      pidfile /var/run/haproxy.pid
      ssl-default-bind-ciphers !SSLv2:kEECDH:kRSA:kEDH:kPSK:+3DES:!aNULL:!eNULL:!MD5:!EXP:!RC4:!SEED:!IDEA:!DES
      ssl-default-bind-options no-sslv3
      stats socket /var/lib/haproxy/stats mode 600 level user
      stats timeout 2m
      user haproxy

    defaults
      log global
      maxconn 4096
      mode tcp
      retries 3
      timeout http-request 10s
      timeout queue 2m
      timeout connect 10s
      timeout client 2m
      timeout server 2m
      timeout check 10s

    listen aodh
      bind 192.168.1.150:8042 transparent
      bind 172.16.0.10:8042 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8042 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8042 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8042 check fall 5 inter 2000 rise 2

    listen cinder
      bind 192.168.1.150:8776 transparent
      bind 172.16.0.10:8776 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8776 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8776 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8776 check fall 5 inter 2000 rise 2

    listen glance_api
      bind 192.168.1.150:9292 transparent
      bind 172.18.0.10:9292 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk GET /healthcheck
      server overcloud-controller-0.internalapi.localdomain 172.18.0.17:9292 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.18.0.15:9292 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.18.0.16:9292 check fall 5 inter 2000 rise 2

    listen gnocchi
      bind 192.168.1.150:8041 transparent
      bind 172.16.0.10:8041 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8041 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8041 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8041 check fall 5 inter 2000 rise 2

    listen haproxy.stats
      bind 10.200.0.6:1993 transparent
      mode http
      stats enable
      stats uri /
      stats auth admin:PnDD32EzdVCf73CpjHhFGHZdV

    listen heat_api
      bind 192.168.1.150:8004 transparent
      bind 172.16.0.10:8004 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      timeout client 10m
      timeout server 10m
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8004 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8004 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8004 check fall 5 inter 2000 rise 2

    listen heat_cfn
      bind 192.168.1.150:8000 transparent
      bind 172.16.0.10:8000 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      timeout client 10m
      timeout server 10m
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8000 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8000 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8000 check fall 5 inter 2000 rise 2

    listen horizon
      bind 192.168.1.150:80 transparent
      bind 172.16.0.10:80 transparent
      mode http
      cookie SERVERID insert indirect nocache
      option forwardfor
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:80 check cookie overcloud-controller-0 fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:80 check cookie overcloud-controller-1 fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:80 check cookie overcloud-controller-2 fall 5 inter 2000 rise 2

    listen keystone_admin
      bind 192.168.24.15:35357 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk GET /v3
      server overcloud-controller-0.ctlplane.localdomain 192.168.24.9:35357 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.ctlplane.localdomain 192.168.24.8:35357 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.ctlplane.localdomain 192.168.24.18:35357 check fall 5 inter 2000 rise 2

    listen keystone_public
      bind 192.168.1.150:5000 transparent
      bind 172.16.0.10:5000 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk GET /v3
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:5000 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:5000 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:5000 check fall 5 inter 2000 rise 2

    listen mysql
      bind 172.16.0.10:3306 transparent
      option tcpka
      option httpchk
      stick on dst
      stick-table type ip size 1000
      timeout client 90m
      timeout server 90m
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:3306 backup check inter 1s on-marked-down shutdown-sessions port 9200
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:3306 backup check inter 1s on-marked-down shutdown-sessions port 9200
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:3306 backup check inter 1s on-marked-down shutdown-sessions port 9200

    listen neutron
      bind 192.168.1.150:9696 transparent
      bind 172.16.0.10:9696 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:9696 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:9696 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:9696 check fall 5 inter 2000 rise 2

    listen nova_metadata
      bind 172.16.0.10:8775 transparent
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8775 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8775 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8775 check fall 5 inter 2000 rise 2

    listen nova_novncproxy
      bind 192.168.1.150:6080 transparent
      bind 172.16.0.10:6080 transparent
      balance source
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option tcpka
      timeout tunnel 1h
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:6080 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:6080 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:6080 check fall 5 inter 2000 rise 2

    listen nova_osapi
      bind 192.168.1.150:8774 transparent
      bind 172.16.0.10:8774 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8774 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8774 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8774 check fall 5 inter 2000 rise 2

    listen nova_placement
      bind 192.168.1.150:8778 transparent
      bind 172.16.0.10:8778 transparent
      mode http
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8778 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8778 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8778 check fall 5 inter 2000 rise 2

    listen panko
      bind 192.168.1.150:8977 transparent
      bind 172.16.0.10:8977 transparent
      http-request set-header X-Forwarded-Proto https if { ssl_fc }
      http-request set-header X-Forwarded-Proto http if !{ ssl_fc }
      option httpchk
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:8977 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:8977 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:8977 check fall 5 inter 2000 rise 2

    listen redis
      bind 172.16.0.13:6379 transparent
      balance first
      option tcp-check
      tcp-check send AUTH\ V2EgUh2pvkr8VzU6yuE4XHsr9\r\n
      tcp-check send PING\r\n
      tcp-check expect string +PONG
      tcp-check send info\ replication\r\n
      tcp-check expect string role:master
      tcp-check send QUIT\r\n
      tcp-check expect string +OK
      server overcloud-controller-0.internalapi.localdomain 172.16.0.13:6379 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.internalapi.localdomain 172.16.0.14:6379 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.internalapi.localdomain 172.16.0.15:6379 check fall 5 inter 2000 rise 2

    listen swift_proxy_server
      bind 192.168.1.150:8080 transparent
      bind 172.18.0.10:8080 transparent
      option httpchk GET /healthcheck
      timeout client 2m
      timeout server 2m
      server overcloud-controller-0.storage.localdomain 172.18.0.17:8080 check fall 5 inter 2000 rise 2
      server overcloud-controller-1.storage.localdomain 172.18.0.15:8080 check fall 5 inter 2000 rise 2
      server overcloud-controller-2.storage.localdomain 172.18.0.16:8080 check fall 5 inter 2000 rise 2

/etc/corosync/corosync.conf file (Controller nodes)

This file defines the cluster infrastructure, and is available on all Controller nodes.

    totem {
      version: 2
      cluster_name: tripleo_cluster
      transport: udpu
      token: 10000
    }

    nodelist {
      node {
        ring0_addr: overcloud-controller-0
        nodeid: 1
      }
      node {
        ring0_addr: overcloud-controller-1
        nodeid: 2
      }
      node {
        ring0_addr: overcloud-controller-2
        nodeid: 3
      }
    }

    quorum {
      provider: corosync_votequorum
    }

    logging {
      to_logfile: yes
      logfile: /var/log/cluster/corosync.log
      to_syslog: yes
    }

/etc/ceph/ceph.conf (Ceph nodes)

This file contains Ceph high availability settings, including the hostnames and IP addresses of the Ceph Monitor hosts.

    [global]
    osd_pool_default_pgp_num = 128
    osd_pool_default_min_size = 1
    auth_service_required = cephx
    mon_initial_members = overcloud-controller-0,overcloud-controller-1,overcloud-controller-2
    fsid = 8c835acc-6838-11e5-bb96-2cc260178a92
    cluster_network = 172.19.0.11/24
    auth_supported = cephx
    auth_cluster_required = cephx
    mon_host = 172.18.0.17,172.18.0.15,172.18.0.16
    auth_client_required = cephx
    osd_pool_default_size = 3
    osd_pool_default_pg_num = 128
    public_network = 172.18.0.17/24
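In this file, public_network and cluster_network are written as a host address with a prefix length, for example 172.18.0.17/24. The following sketch shows how to derive the network that such a value refers to and how to confirm that every mon_host address falls inside the public network. It uses only the standard `ipaddress` module, and the function names are hypothetical.

```python
import ipaddress


def normalise(value: str) -> ipaddress.IPv4Network:
    """Convert a host-with-prefix value such as 172.18.0.17/24 to its network."""
    # strict=False accepts host bits in the address and masks them off.
    return ipaddress.ip_network(value, strict=False)


def monitors_outside(public_network: str, mon_host: str) -> list:
    """Return the monitor addresses that are not inside the public network."""
    network = normalise(public_network)
    hosts = [ipaddress.ip_address(item.strip()) for item in mon_host.split(",")]
    return [str(host) for host in hosts if host not in network]


if __name__ == "__main__":
    # Values copied from the example ceph.conf.
    print(normalise("172.18.0.17/24"))
    print(normalise("172.19.0.11/24"))
    print(monitors_outside("172.18.0.17/24",
                           "172.18.0.17,172.18.0.15,172.18.0.16"))
```

With the values from this example, the public network resolves to the storage network and the cluster network resolves to the storage management network from network-environment.yaml.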