Chapter 3. Red Hat Ceph Storage installation

As a storage administrator, you can use the cephadm utility to deploy new Red Hat Ceph Storage clusters.

The cephadm utility manages the entire life cycle of a Ceph cluster. Installation and management tasks comprise two types of operations:

- Day One operations involve installing and bootstrapping a bare-minimum, containerized Ceph storage cluster, running on a single node. Day One also includes deploying the Monitor and Manager daemons and adding Ceph OSDs.

- Day Two operations use the Ceph orchestration interface, ceph orch, or the Red Hat Ceph Storage Dashboard to expand the storage cluster by adding other Ceph services to the storage cluster.

3.1. Prerequisites

- At least one running virtual machine (VM) or bare-metal server with an active internet connection.

- Red Hat Enterprise Linux 8.4 EUS or later.

- Ansible 2.9 or later.

- A valid Red Hat subscription with the appropriate entitlements.

- Root-level access to all nodes.

- An active Red Hat Network (RHN) or service account to access the Red Hat Registry.

- Remove problematic iptables configurations so that refreshing the iptables service does not disrupt the cluster. For an example, refer to the Verifying firewall rules are configured for default Ceph ports section of the Red Hat Ceph Storage Configuration Guide.

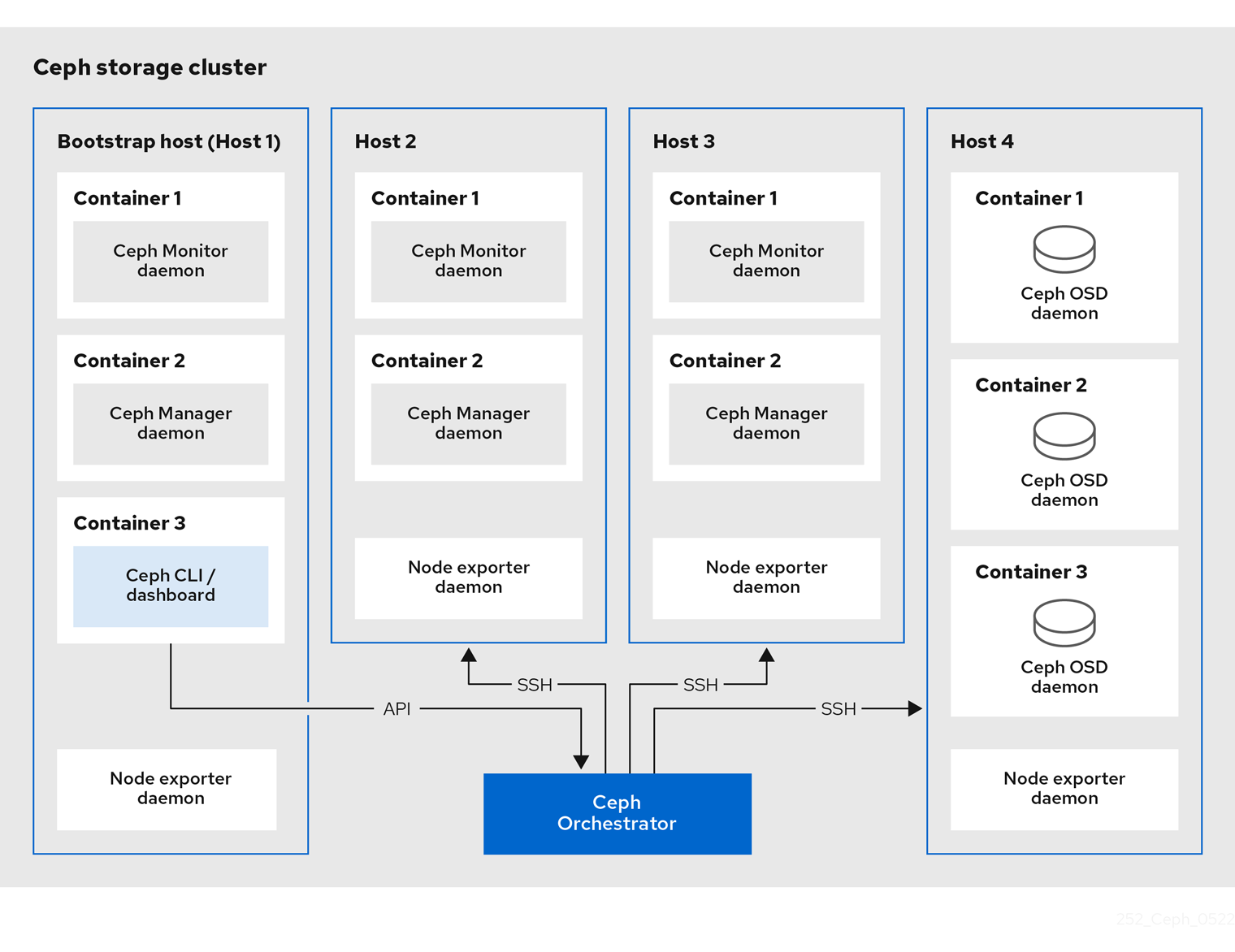

3.2. The cephadm utility

The cephadm utility deploys and manages a Ceph storage cluster. It is tightly integrated with both the command-line interface (CLI) and the Red Hat Ceph Storage Dashboard web interface, so that you can manage storage clusters from either environment. cephadm uses SSH to connect to hosts from the manager daemon to add, remove, or update Ceph daemon containers. It does not rely on external configuration or orchestration tools such as Ansible or Rook.

The cephadm utility is available after running the preflight playbook on a host.

The cephadm utility consists of two main components:

- The cephadm shell.

- The cephadm orchestrator.

The cephadm shell

The cephadm shell launches a bash shell within a container. This enables you to perform “Day One” cluster setup tasks, such as installation and bootstrapping, and to invoke ceph commands.

There are two ways to invoke the cephadm shell:

- Enter cephadm shell at the system prompt:

Example

[root@host01 ~]# cephadm shell
[ceph: root@host01 /]# ceph -s

- At the system prompt, type cephadm shell and the command you want to execute:

Example

[root@host01 ~]# cephadm shell ceph -s

If the node contains configuration and keyring files in /etc/ceph/, the container environment uses the values in those files as defaults for the cephadm shell. However, if you execute the cephadm shell on a Ceph Monitor node, the cephadm shell inherits its default configuration from the Ceph Monitor container, instead of using the default configuration.

The cephadm orchestrator

The cephadm orchestrator enables you to perform “Day Two” Ceph functions, such as expanding the storage cluster and provisioning Ceph daemons and services. You can use the cephadm orchestrator through either the command-line interface (CLI) or the web-based Red Hat Ceph Storage Dashboard. Orchestrator commands take the form ceph orch.

The cephadm script interacts with the Ceph orchestration module used by the Ceph Manager.

3.3. How cephadm works

The cephadm command manages the full lifecycle of a Red Hat Ceph Storage cluster. The cephadm command can perform the following operations:

- Bootstrap a new Red Hat Ceph Storage cluster.

- Launch a containerized shell that works with the Red Hat Ceph Storage command-line interface (CLI).

- Aid in debugging containerized daemons.

The cephadm command uses ssh to communicate with the nodes in the storage cluster. This allows you to add, remove, or update Red Hat Ceph Storage containers without using external tools. Generate the ssh key pair during the bootstrapping process, or use your own ssh key.

The cephadm bootstrapping process creates a small storage cluster on a single node, consisting of one Ceph Monitor and one Ceph Manager, as well as any required dependencies. You then use the orchestrator CLI or the Red Hat Ceph Storage Dashboard to expand the storage cluster to include nodes, and to provision all of the Red Hat Ceph Storage daemons and services. You can perform management functions through the CLI or from the Red Hat Ceph Storage Dashboard web interface.

The cephadm utility is a new feature in Red Hat Ceph Storage 5. It does not support older versions of Red Hat Ceph Storage.

3.4. The cephadm-ansible playbooks

The cephadm-ansible package is a collection of Ansible playbooks to simplify workflows that are not covered by cephadm. After installation, the playbooks are located in /usr/share/cephadm-ansible/.

Red Hat Enterprise Linux 9 and later does not support the cephadm-ansible playbook.

The cephadm-ansible package includes the following playbooks:

- cephadm-preflight.yml

- cephadm-clients.yml

- cephadm-purge-cluster.yml

The cephadm-preflight playbook

Use the cephadm-preflight playbook to initially set up hosts before bootstrapping the storage cluster and before adding new nodes or clients to your storage cluster. This playbook configures the Ceph repository and installs some prerequisites such as podman, lvm2, chronyd, and cephadm.

The cephadm-clients playbook

Use the cephadm-clients playbook to set up client hosts. This playbook handles the distribution of configuration and keyring files to a group of Ceph clients.

The cephadm-purge-cluster playbook

Use the cephadm-purge-cluster playbook to remove a Ceph cluster. This playbook purges a Ceph cluster managed with cephadm.

Additional Resources

- For more information about the cephadm-preflight playbook, see Running the preflight playbook.

- For more information about the cephadm-clients playbook, see Running the cephadm-clients playbook.

- For more information about the cephadm-purge-cluster playbook, see Purging the Ceph storage cluster.

3.5. Registering the Red Hat Ceph Storage nodes to the CDN and attaching subscriptions

Red Hat Ceph Storage 5 is supported on Red Hat Enterprise Linux 8.4 EUS or later.

Prerequisites

- At least one running virtual machine (VM) or bare-metal server with an active internet connection.

- Red Hat Enterprise Linux 8.4 EUS or later.

- A valid Red Hat subscription with the appropriate entitlements.

- Root-level access to all nodes.

Procedure

Register the node, and when prompted, enter your Red Hat Customer Portal credentials:

Syntax

subscription-manager register

Pull the latest subscription data from the CDN:

Syntax

subscription-manager refresh

List all available subscriptions for Red Hat Ceph Storage:

Syntax

subscription-manager list --available --matches 'Red Hat Ceph Storage'

- Identify the appropriate subscription and retrieve its Pool ID.

Attach a pool ID to gain access to the software entitlements. Use the Pool ID you identified in the previous step.

Syntax

subscription-manager attach --pool=POOL_ID

Disable the default software repositories, and then enable the BaseOS and AppStream repositories on the respective version of Red Hat Enterprise Linux:

Red Hat Enterprise Linux 8

subscription-manager repos --disable=*
subscription-manager repos --enable=rhel-8-for-x86_64-baseos-rpms
subscription-manager repos --enable=rhel-8-for-x86_64-appstream-rpms

Red Hat Enterprise Linux 9

subscription-manager repos --disable=*
subscription-manager repos --enable=rhel-9-for-x86_64-baseos-rpms
subscription-manager repos --enable=rhel-9-for-x86_64-appstream-rpms

Update the system to receive the latest packages for Red Hat Enterprise Linux:

Syntax

# dnf update

- Subscribe to Red Hat Ceph Storage 5 content. Follow the instructions in How to Register Ceph with Red Hat Satellite 6.

Enable the ceph-tools repository:

Red Hat Enterprise Linux 8

subscription-manager repos --enable=rhceph-5-tools-for-rhel-8-x86_64-rpms

Red Hat Enterprise Linux 9

subscription-manager repos --enable=rhceph-5-tools-for-rhel-9-x86_64-rpms

- Repeat the above steps on all nodes you are adding to the cluster.

Install cephadm-ansible on Red Hat Enterprise Linux 8:

Example

dnf install cephadm-ansible

Important: Skip this step for Red Hat Enterprise Linux 9 as cephadm-ansible is not supported.

3.6. Configuring Ansible inventory location

You can configure inventory location files for the cephadm-ansible staging and production environments. The Ansible inventory hosts file contains all the hosts that are part of the storage cluster. You can list nodes individually in the inventory hosts file, or you can create groups such as [mons], [osds], and [rgws] to make the inventory clearer and to make it easier to target a group or node with the --limit option when running a playbook.

If deploying clients, client nodes must be defined in a dedicated [clients] group.

Skip these steps for Red Hat Enterprise Linux 9 as cephadm-ansible is not supported.

Prerequisites

- An Ansible administration node.

- Root-level access to the Ansible administration node.

- The cephadm-ansible package is installed on the node.

Procedure

Navigate to the /usr/share/cephadm-ansible/ directory:

[root@admin ~]# cd /usr/share/cephadm-ansible

Optional: Create subdirectories for staging and production:

[root@admin cephadm-ansible]# mkdir -p inventory/staging inventory/production

Optional: Edit the ansible.cfg file and add the following line to assign a default inventory location:

[defaults]
inventory = ./inventory/staging

Optional: Create an inventory hosts file for each environment:

[root@admin cephadm-ansible]# touch inventory/staging/hosts
[root@admin cephadm-ansible]# touch inventory/production/hosts

Open and edit each hosts file and add the nodes and [admin] group:

NODE_NAME_1
NODE_NAME_2

[admin]
ADMIN_NODE_NAME_1

- Replace NODE_NAME_1 and NODE_NAME_2 with the Ceph nodes such as monitors, OSDs, MDSs, and gateway nodes.

Replace ADMIN_NODE_NAME_1 with the name of the node where the admin keyring is stored.

Example

host02
host03
host04

[admin]
host01

Note: If you set the inventory location in the ansible.cfg file to staging, you need to run the playbooks in the staging environment as follows:

Syntax

ansible-playbook -i inventory/staging/hosts PLAYBOOK.yml

To run the playbooks in the production environment:

Syntax

ansible-playbook -i inventory/production/hosts PLAYBOOK.yml
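As a sketch of the grouped layout described in this section, the following script writes a staging inventory that combines ungrouped nodes with the [admin] and [clients] groups. The scratch directory and the client01 host name are assumptions for illustration only; on an administration node the file would live under /usr/share/cephadm-ansible/inventory/staging/.

```shell
#!/bin/sh
set -eu

# Scratch directory (assumption) so the sketch can be tried safely.
workdir="$(mktemp -d)"
mkdir -p "$workdir/inventory/staging"
inventory="$workdir/inventory/staging/hosts"

# Ungrouped Ceph nodes first, then the [admin] and [clients] groups.
# client01 is a hypothetical client host name.
cat > "$inventory" << 'EOF'
host02
host03
host04

[admin]
host01

[clients]
client01
EOF

cat "$inventory"
```

With an inventory like this, passing --limit clients to ansible-playbook would target only the hosts listed under [clients].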

3.7. Enabling SSH login as root user on Red Hat Enterprise Linux 9

Red Hat Enterprise Linux 9 does not support SSH login as a root user even if the PermitRootLogin parameter is set to yes in the /etc/ssh/sshd_config file. You get the following error:

Example

[root@host01 ~]# ssh root@myhostname
root@myhostname password:
Permission denied, please try again.

You can use one of the following methods to enable login as a root user:

- Use the "Allow root SSH login with password" flag while setting the root password during installation of Red Hat Enterprise Linux 9.

- Manually set the PermitRootLogin parameter after Red Hat Enterprise Linux 9 installation.

This section describes manual setting of the PermitRootLogin parameter.

Prerequisites

- Root-level access to all nodes.

Procedure

Set the PermitRootLogin parameter to yes. The following example adds the setting in a drop-in file under /etc/ssh/sshd_config.d/:

Example

[root@admin ~]# echo 'PermitRootLogin yes' >> /etc/ssh/sshd_config.d/01-permitrootlogin.conf

Restart the SSH service:

Example

[root@admin ~]# systemctl restart sshd.service

Log in to the node as the root user:

Syntax

ssh root@HOST_NAME

Replace HOST_NAME with the host name of the Ceph node.

Example

[root@admin ~]# ssh root@host01

Enter the root password when prompted.
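Because the echo command in this procedure appends with >>, running it more than once leaves duplicate lines in the drop-in file. The following is a minimal sketch of an idempotent variant. The enable_root_login helper and the scratch directory are assumptions for illustration; on a real node the target would be /etc/ssh/sshd_config.d/01-permitrootlogin.conf.

```shell
#!/bin/sh
set -eu

# Scratch directory (assumption) standing in for /etc/ssh/sshd_config.d.
dropin_dir="$(mktemp -d)"
dropin="$dropin_dir/01-permitrootlogin.conf"

# Hypothetical helper: append the setting only if the exact line is missing.
enable_root_login() {
  if ! grep -qx 'PermitRootLogin yes' "$1" 2>/dev/null; then
    echo 'PermitRootLogin yes' >> "$1"
  fi
}

enable_root_login "$dropin"
enable_root_login "$dropin"   # second call changes nothing

cat "$dropin"
```

The SSH service still needs to be restarted after the file changes, as described in the procedure.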

Additional Resources

- For more information, see the Not able to login as root user via ssh in RHEL 9 server Knowledgebase solution.

3.8. Creating an Ansible user with sudo access

You can create an Ansible user with password-less root access on all nodes in the storage cluster to run the cephadm-ansible playbooks. The Ansible user must be able to log into all the Red Hat Ceph Storage nodes as a user that has root privileges to install software and create configuration files without prompting for a password.

If you are a non-root user on Red Hat Enterprise Linux 9, follow these steps to create the user. Otherwise, you can skip these steps on Red Hat Enterprise Linux 9.

Prerequisites

- Root-level access to all nodes.

- For Red Hat Enterprise Linux 9, to log in as a root user, see Enabling SSH login as root user on Red Hat Enterprise Linux 9.

Procedure

Log in to the node as the root user:

Syntax

ssh root@HOST_NAME

Replace HOST_NAME with the host name of the Ceph node.

Example

[root@admin ~]# ssh root@host01

Enter the root password when prompted.

Create a new Ansible user:

Syntax

adduser USER_NAME

Replace USER_NAME with the new user name for the Ansible user.

Example

[root@host01 ~]# adduser ceph-admin

Important: Do not use ceph as the user name. The ceph user name is reserved for the Ceph daemons. A uniform user name across the cluster can improve ease of use, but avoid using obvious user names, because intruders typically use them for brute-force attacks.

Set a new password for this user:

Syntax

passwd USER_NAME

Replace USER_NAME with the new user name for the Ansible user.

Example

[root@host01 ~]# passwd ceph-admin

Enter the new password twice when prompted.

Configure sudo access for the newly created user:

Syntax

cat << EOF >/etc/sudoers.d/USER_NAME
USER_NAME ALL = (root) NOPASSWD:ALL
EOF

Replace USER_NAME with the new user name for the Ansible user.

Example

[root@host01 ~]# cat << EOF >/etc/sudoers.d/ceph-admin
ceph-admin ALL = (root) NOPASSWD:ALL
EOF

Assign the correct file permissions to the new file:

Syntax

chmod 0440 /etc/sudoers.d/USER_NAME

Replace USER_NAME with the new user name for the Ansible user.

Example

[root@host01 ~]# chmod 0440 /etc/sudoers.d/ceph-admin

- Repeat the above steps on all nodes in the storage cluster.
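The sudo configuration steps above can be combined into one small script. This is a minimal sketch, assuming the ceph-admin user name from the examples and a scratch directory in place of /etc/sudoers.d so that it can be run without root privileges; stat -c is the GNU coreutils form found on Red Hat Enterprise Linux.

```shell
#!/bin/sh
set -eu

user_name="ceph-admin"
# Scratch directory (assumption) standing in for /etc/sudoers.d.
sudoers_dir="$(mktemp -d)"
sudoers_file="$sudoers_dir/$user_name"

# The heredoc delimiter is unquoted on purpose so ${user_name} expands.
cat > "$sudoers_file" << EOF
${user_name} ALL = (root) NOPASSWD:ALL
EOF

# sudo expects drop-in files to be read-only for owner and group.
chmod 0440 "$sudoers_file"

# Show the resulting mode and content.
stat -c '%a %n' "$sudoers_file"
cat "$sudoers_file"
```

On a real node, running visudo -cf against the new file is a common way to check its syntax before relying on it.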

Additional Resources

- For more information about creating user accounts, see the Getting started with managing user accounts section in the Configuring basic system settings chapter of the Red Hat Enterprise Linux 8 guide.

3.9. Configuring SSH

As a storage administrator, with Cephadm, you can use an SSH key to securely authenticate with remote hosts. The SSH key is stored in the monitor to connect to remote hosts.

Prerequisites

- An Ansible administration node.

- Root-level access to the Ansible administration node.

- The cephadm-ansible package is installed on the node.

Procedure

- Navigate to the cephadm-ansible directory.

Generate a new SSH key:

Example

[ceph-admin@admin cephadm-ansible]$ ceph cephadm generate-key

Retrieve the public portion of the SSH key:

Example

[ceph-admin@admin cephadm-ansible]$ ceph cephadm get-pub-key

Delete the currently stored SSH key:

Example

[ceph-admin@admin cephadm-ansible]$ ceph cephadm clear-key

Restart the mgr daemon to reload the configuration:

Example

[ceph-admin@admin cephadm-ansible]$ ceph mgr fail

3.9.1. Configuring a different SSH user

As a storage administrator, you can configure a non-root SSH user who can log into all the Ceph cluster nodes with enough privileges to download container images, start containers, and execute commands without prompting for a password.

Prior to configuring a non-root SSH user, the cluster SSH key needs to be added to the user’s authorized_keys file and non-root users must have passwordless sudo access.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- An Ansible administration node.

- Root-level access to the Ansible administration node.

- The cephadm-ansible package is installed on the node.

- Add the cluster SSH keys to the user’s authorized_keys.

- Enable passwordless sudo access for the non-root users.

Procedure

- Navigate to the cephadm-ansible directory.

Provide Cephadm the name of the user who is going to perform all the Cephadm operations:

Syntax

[ceph-admin@admin cephadm-ansible]$ ceph cephadm set-user <user>

Example

[ceph-admin@admin cephadm-ansible]$ ceph cephadm set-user user

Retrieve the SSH public key.

Syntax

ceph cephadm get-pub-key > ~/ceph.pub

Example

[ceph-admin@admin cephadm-ansible]$ ceph cephadm get-pub-key > ~/ceph.pub

Copy the SSH keys to all the hosts.

Syntax

ssh-copy-id -f -i ~/ceph.pub USER@HOST

Example

[ceph-admin@admin cephadm-ansible]$ ssh-copy-id -f -i ~/ceph.pub ceph-admin@host01

3.10. Enabling password-less SSH for Ansible

Generate an SSH key pair on the Ansible administration node and distribute the public key to each node in the storage cluster so that Ansible can access the nodes without being prompted for a password.

If you are a non-root user on Red Hat Enterprise Linux 9, follow these steps. Otherwise, you can skip these steps on Red Hat Enterprise Linux 9.

Prerequisites

- Access to the Ansible administration node.

- Ansible user with sudo access to all nodes in the storage cluster.

- For Red Hat Enterprise Linux 9, to log in as a root user, see Enabling SSH login as root user on Red Hat Enterprise Linux 9.

Procedure

Generate the SSH key pair, accept the default file name and leave the passphrase empty:

[ceph-admin@admin ~]$ ssh-keygen

Copy the public key to all nodes in the storage cluster:

ssh-copy-id USER_NAME@HOST_NAME

Replace USER_NAME with the new user name for the Ansible user. Replace HOST_NAME with the host name of the Ceph node.

Example

[ceph-admin@admin ~]$ ssh-copy-id ceph-admin@host01

Create the user’s SSH config file:

[ceph-admin@admin ~]$ touch ~/.ssh/config

Open the config file for editing. Set values for the Hostname and User options for each node in the storage cluster:

Host host01
   Hostname HOST_NAME
   User USER_NAME

Host host02
   Hostname HOST_NAME
   User USER_NAME
...

Replace HOST_NAME with the host name of the Ceph node. Replace USER_NAME with the new user name for the Ansible user.

Example

Host host01
   Hostname host01
   User ceph-admin

Host host02
   Hostname host02
   User ceph-admin

Host host03
   Hostname host03
   User ceph-admin

Important: By configuring the ~/.ssh/config file you do not have to specify the -u USER_NAME option each time you execute the ansible-playbook command.

Set the correct file permissions for the ~/.ssh/config file:

[ceph-admin@admin ~]$ chmod 600 ~/.ssh/config
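For clusters with many nodes, the per-host stanzas can be generated instead of typed by hand. The following is a minimal sketch, assuming the host names and the ceph-admin user from the examples above; it writes to a temporary file rather than ~/.ssh/config so that an existing configuration is not overwritten.

```shell
#!/bin/sh
set -eu

ansible_user="ceph-admin"
# Temporary file (assumption) standing in for ~/.ssh/config.
config_file="$(mktemp)"

# Emit one Host stanza per node in the storage cluster.
for host in host01 host02 host03; do
  printf 'Host %s\n   Hostname %s\n   User %s\n\n' "$host" "$host" "$ansible_user"
done > "$config_file"

# ssh rejects a user config file that other users can write to.
chmod 600 "$config_file"

cat "$config_file"
```

After reviewing the output, the content can be copied or appended to ~/.ssh/config.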

Additional Resources

- The ssh_config(5) manual page.

- See Using secure communications between two systems with OpenSSH.

3.11. Running the preflight playbook

This Ansible playbook configures the Ceph repository and prepares the storage cluster for bootstrapping. It also installs some prerequisites, such as podman, lvm2, chronyd, and cephadm. The default location for cephadm-ansible and cephadm-preflight.yml is /usr/share/cephadm-ansible.

The preflight playbook uses the cephadm-ansible inventory file to identify the admin node and all other nodes in the storage cluster.

Skip these steps for Red Hat Enterprise Linux 9 as cephadm-ansible is not supported.

The default location for the inventory file is /usr/share/cephadm-ansible/hosts. The following example shows the structure of a typical inventory file:

Example

host02
host03
host04

[admin]
host01

The [admin] group in the inventory file contains the name of the node where the admin keyring is stored. On a new storage cluster, the node in the [admin] group will be the bootstrap node. To add additional admin hosts after bootstrapping the cluster, see Setting up the admin node in the Installation Guide.

Run the preflight playbook before you bootstrap the initial host.

If you are performing a disconnected installation, see Running the preflight playbook for a disconnected installation.

Prerequisites

- Root-level access to the Ansible administration node.

- Ansible user with sudo and passwordless ssh access to all nodes in the storage cluster.

Note: In the example below, host01 is the bootstrap node.

Procedure

- Navigate to the /usr/share/cephadm-ansible directory.

Open and edit the hosts file and add your nodes:

Example

host02
host03
host04

[admin]
host01

Run the preflight playbook:

Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=rhcs"

Example

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=rhcs"

After installation is complete, cephadm resides in the /usr/sbin/ directory.

Use the --limit option to run the preflight playbook on a selected set of hosts in the storage cluster:

Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit GROUP_NAME|NODE_NAME

Replace GROUP_NAME with a group name from your inventory file. Replace NODE_NAME with a specific node name from your inventory file.

Note: Optionally, you can group your nodes in your inventory file by group name such as [mons], [osds], and [mgrs]. However, admin nodes must be added to the [admin] group and clients must be added to the [clients] group.

Example

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit clients
[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit host01

When you run the preflight playbook, cephadm-ansible automatically installs chronyd and ceph-common on the client nodes.

The preflight playbook installs chronyd but configures it for a single NTP source. If you want to configure multiple sources or if you have a disconnected environment, see the following documentation for more information:

3.12. Bootstrapping a new storage cluster

The cephadm utility performs the following tasks during the bootstrap process:

- Installs and starts a Ceph Monitor daemon and a Ceph Manager daemon for a new Red Hat Ceph Storage cluster on the local node as containers.

- Creates the /etc/ceph directory.

- Writes a copy of the public key to /etc/ceph/ceph.pub for the Red Hat Ceph Storage cluster and adds the SSH key to the root user’s /root/.ssh/authorized_keys file.

- Applies the _admin label to the bootstrap node.

- Writes a minimal configuration file needed to communicate with the new cluster to /etc/ceph/ceph.conf.

- Writes a copy of the client.admin administrative secret key to /etc/ceph/ceph.client.admin.keyring.

- Deploys a basic monitoring stack with Prometheus, Grafana, and other tools such as node-exporter and alert-manager.

If you are performing a disconnected installation, see Performing a disconnected installation.

If you have existing Prometheus services that you want to run with the new storage cluster, or if you are running Ceph with Rook, use the --skip-monitoring-stack option with the cephadm bootstrap command. This option bypasses the basic monitoring stack so that you can manually configure it later.

If you are deploying a monitoring stack, see Deploying the monitoring stack using the Ceph Orchestrator in the Red Hat Ceph Storage Operations Guide.

Bootstrapping provides the default user name and password for the initial login to the Dashboard. Bootstrap requires you to change the password after you log in.

Before you begin the bootstrapping process, ensure that the container image that you want to use has the same version of Red Hat Ceph Storage as cephadm. If the two versions do not match, bootstrapping fails at the Creating initial admin user stage.

Before you begin the bootstrapping process, you must create a username and password for the registry.redhat.io container registry. For more information about Red Hat container registry authentication, see the knowledge base article Red Hat Container Registry Authentication.

Prerequisites

- An IP address for the first Ceph Monitor container, which is also the IP address for the first node in the storage cluster.

- Login access to registry.redhat.io.

- A minimum of 10 GB of free space for /var/lib/containers/.

- Root-level access to all nodes.
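The free-space prerequisite can be checked before you start the bootstrap. The following is a minimal sketch; the free_gib helper is hypothetical, the threshold is treated as 10 GiB, and the fallback to / when /var/lib/containers does not exist yet is an assumption made so the script runs on any host.

```shell
#!/bin/sh
set -eu

# Hypothetical helper: print the available space, in whole GiB, on the
# file system that holds the given path. df -P guarantees one line per
# file system, and -k reports sizes in 1024-byte blocks.
free_gib() {
  df -Pk "$1" | awk 'NR == 2 { print int($4 / 1024 / 1024) }'
}

target="/var/lib/containers"
# Assumption: before podman is installed the directory may not exist,
# so fall back to the root file system.
[ -d "$target" ] || target="/"

required=10
available="$(free_gib "$target")"

if [ "$available" -ge "$required" ]; then
  echo "OK: ${available} GiB free on ${target}"
else
  echo "WARNING: only ${available} GiB free on ${target}, ${required} GiB required"
fi
```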

If the storage cluster includes multiple networks and interfaces, be sure to choose a network that is accessible by any node that uses the storage cluster.

If the local node uses fully-qualified domain names (FQDN), then add the --allow-fqdn-hostname option to cephadm bootstrap on the command line.

Run cephadm bootstrap on the node that you want to be the initial Monitor node in the cluster. The IP_ADDRESS option should be the IP address of the node you are using to run cephadm bootstrap.

If you want to deploy a storage cluster using IPv6 addresses, then use the IPv6 address format for the --mon-ip IP_ADDRESS option. For example: cephadm bootstrap --mon-ip 2620:52:0:880:225:90ff:fefc:2536 --registry-json /etc/mylogin.json.

Configuring Ceph Object Gateway multi-site on Red Hat Ceph Storage 5.1 is not supported due to several open issues. For more information, see the knowledge base article Red Hat Ceph Storage 5.1 does not support multi-site configuration.

Use the --yes-i-know flag while bootstrapping a new Red Hat Ceph Storage cluster to get past the warning about multi-site regressions.

Follow the knowledge base article How to upgrade from Red Hat Ceph Storage 4.2z4 to 5.0z4 with the bootstrapping procedure if you are planning for a new installation of Red Hat Ceph Storage 5.0z4.

Procedure

Bootstrap a storage cluster:

Syntax

cephadm bootstrap --cluster-network NETWORK_CIDR --mon-ip IP_ADDRESS --registry-url registry.redhat.io --registry-username USER_NAME --registry-password PASSWORD --yes-i-know

Example

[root@host01 ~]# cephadm bootstrap --cluster-network 10.10.128.0/24 --mon-ip 10.10.128.68 --registry-url registry.redhat.io --registry-username myuser1 --registry-password mypassword1 --yes-i-know

Note: If you want internal cluster traffic routed over the public network, you can omit the --cluster-network NETWORK_CIDR option.

The script takes a few minutes to complete. Once the script completes, it provides the credentials to the Red Hat Ceph Storage Dashboard URL, a command to access the Ceph command-line interface (CLI), and a request to enable telemetry.

Example

Ceph Dashboard is now available at:

             URL: https://host01:8443/
            User: admin
        Password: i8nhu7zham

Enabling client.admin keyring and conf on hosts with "admin" label
You can access the Ceph CLI with:

        sudo /usr/sbin/cephadm shell --fsid 266ee7a8-2a05-11eb-b846-5254002d4916 -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Please consider enabling telemetry to help improve Ceph:

        ceph telemetry on

For more information see:

        https://docs.ceph.com/docs/master/mgr/telemetry/

Bootstrap complete.

Additional Resources

- For more information about the recommended bootstrap command options, see Recommended cephadm bootstrap command options.

- For more information about the options available for the bootstrap command, see Bootstrap command options.

- For information about using a JSON file to contain login credentials for the bootstrap process, see Using a JSON file to protect login information.

3.12.1. Recommended cephadm bootstrap command options

The cephadm bootstrap command has multiple options that allow you to specify file locations, configure ssh settings, set passwords, and perform other initial configuration tasks.

Red Hat recommends that you use a basic set of command options for cephadm bootstrap. You can configure additional options after your initial cluster is up and running.

The following examples show how to specify the recommended options.

Syntax

cephadm bootstrap --ssh-user USER_NAME --mon-ip IP_ADDRESS --allow-fqdn-hostname --registry-json REGISTRY_JSON

Example

[root@host01 ~]# cephadm bootstrap --ssh-user ceph-admin --mon-ip 10.10.128.68 --allow-fqdn-hostname --registry-json /etc/mylogin.json

For non-root users, see the Creating an Ansible user with sudo access section and Enabling password-less SSH for Ansible section for more details.

Additional Resources

- For more information about the --registry-json option, see Using a JSON file to protect login information.

- For more information about all available cephadm bootstrap options, see Bootstrap command options.

- For more information about bootstrapping the storage cluster as a non-root user, see Bootstrapping the storage cluster as a non-root user.

3.12.2. Using a JSON file to protect login information

As a storage administrator, you might choose to add login and password information to a JSON file, and then refer to the JSON file for bootstrapping. This protects the login credentials from exposure.

You can also use a JSON file with the cephadm registry-login command.

Prerequisites

- An IP address for the first Ceph Monitor container, which is also the IP address for the first node in the storage cluster.

- Login access to registry.redhat.io.

- A minimum of 10 GB of free space for /var/lib/containers/.

- Root-level access to all nodes.

Procedure

Create the JSON file. In this example, the file is named mylogin.json.

Syntax

{
 "url":"REGISTRY_URL",
 "username":"USER_NAME",
 "password":"PASSWORD"
}

Example

{
 "url":"registry.redhat.io",
 "username":"myuser1",
 "password":"mypassword1"
}

Bootstrap a storage cluster:

Syntax

cephadm bootstrap --mon-ip IP_ADDRESS --registry-json /etc/mylogin.json

Example

[root@host01 ~]# cephadm bootstrap --mon-ip 10.10.128.68 --registry-json /etc/mylogin.json
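Because the JSON file holds a password, it is worth restricting its permissions and checking that it parses before passing it to cephadm bootstrap. The following is a minimal sketch, assuming the example credentials from this section and a temporary file in place of /etc/mylogin.json; the python3 validation step runs only if python3 is installed.

```shell
#!/bin/sh
set -eu

# New files are created readable by the owner only.
umask 077

# Temporary file (assumption) standing in for /etc/mylogin.json.
json_file="$(mktemp)"

cat > "$json_file" << 'EOF'
{
 "url":"registry.redhat.io",
 "username":"myuser1",
 "password":"mypassword1"
}
EOF
chmod 600 "$json_file"

# Optional syntax check with the json.tool module from the Python
# standard library.
if command -v python3 > /dev/null 2>&1; then
  python3 -m json.tool "$json_file" > /dev/null && echo "valid JSON"
fi

stat -c '%a %n' "$json_file"
```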

3.12.3. Bootstrapping a storage cluster using a service configuration file

To bootstrap the storage cluster and configure additional hosts and daemons using a service configuration file, use the --apply-spec option with the cephadm bootstrap command. The configuration file is a .yaml file that contains the service type, placement, and designated nodes for services that you want to deploy.

If you want to use a non-default realm or zone for applications such as multi-site, configure your Ceph Object Gateway daemons after you bootstrap the storage cluster, instead of adding them to the configuration file and using the --apply-spec option. This gives you the opportunity to create the realm or zone you need for the Ceph Object Gateway daemons before deploying them. See the Red Hat Ceph Storage Operations Guide for more information.

If deploying a Ceph iSCSI gateway, NFS-Ganesha gateway, or Metadata Server (MDS) service, configure them after bootstrapping the storage cluster.

- To deploy a Ceph iSCSI gateway or Ceph NFS-Ganesha gateway, you must create a RADOS pool first.

- To deploy the MDS service, you must create a CephFS volume first.

See the Red Hat Ceph Storage Operations Guide for more information.

Starting with Red Hat Ceph Storage 5.1, if you run the bootstrap command with the --apply-spec option, ensure that you include the IP address of the bootstrap host in the specification file. This prevents the IP address from resolving to a loopback address when the bootstrap host, where the active Ceph Manager is already running, is re-added.

If you do not use the --apply-spec option during bootstrap and instead use the ceph orch apply command with another specification file that re-adds a host on which an active Ceph Manager is running, ensure that you explicitly provide the addr field. This applies to any specification file that you apply after bootstrapping.

Prerequisites

- At least one running virtual machine (VM) or server.

- Red Hat Enterprise Linux 8.4 EUS or later.

- Root-level access to all nodes.

- Login access to registry.redhat.io.

- Passwordless ssh is set up on all hosts in the storage cluster.

- cephadm is installed on the node that you want to be the initial Monitor node in the storage cluster.

Procedure

- Log in to the bootstrap host.

Create the service configuration

.yamlfile for your storage cluster. The example file directscephadm bootstrapto configure the initial host and two additional hosts, and it specifies that OSDs be created on all available disks.Example

service_type: host addr: host01 hostname: host01 --- service_type: host addr: host02 hostname: host02 --- service_type: host addr: host03 hostname: host03 --- service_type: host addr: host04 hostname: host04 --- service_type: mon placement: host_pattern: "host[0-2]" --- service_type: osd service_id: my_osds placement: host_pattern: "host[1-3]" data_devices: all: true

Bootstrap the storage cluster with the --apply-spec option:

Syntax

cephadm bootstrap --apply-spec CONFIGURATION_FILE_NAME --mon-ip MONITOR_IP_ADDRESS --ssh-private-key PRIVATE_KEY --ssh-public-key PUBLIC_KEY --registry-url registry.redhat.io --registry-username USER_NAME --registry-password PASSWORD

Example

[root@host01 ~]# cephadm bootstrap --apply-spec initial-config.yaml --mon-ip 10.10.128.68 --ssh-private-key /home/ceph/.ssh/id_rsa --ssh-public-key /home/ceph/.ssh/id_rsa.pub --registry-url registry.redhat.io --registry-username myuser1 --registry-password mypassword1

The script takes a few minutes to complete. Once the script completes, it provides the credentials to the Red Hat Ceph Storage Dashboard URL, a command to access the Ceph command-line interface (CLI), and a request to enable telemetry.

- Once your storage cluster is up and running, see the Red Hat Ceph Storage Operations Guide for more information about configuring additional daemons and services.
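Because this section asks for IP addresses in the addr field, a quick check of the specification file before bootstrapping can catch host names left in that field. The following is a minimal sketch under stated assumptions: the function name non_ip_addrs is hypothetical, the file is assumed to keep each addr key and value on one line, and only IPv4 addresses are recognized.

```shell
#!/usr/bin/env bash
# Hypothetical check: list addr values in a specification file that are not
# IPv4 addresses. IPv6 addresses are not handled by this sketch.
non_ip_addrs() {
  awk '$1 == "addr:" && $2 !~ /^[0-9]+\.[0-9]+\.[0-9]+\.[0-9]+$/ { print $2 }' "$1"
}

# Usage (file name taken from the bootstrap example in this procedure):
#   non_ip_addrs initial-config.yaml
# Any output names an addr value to replace with an IP address.
```

An empty result means every addr field already holds an IPv4 address.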

Additional Resources

- For more information about the options available for the bootstrap command, see Bootstrap command options.

3.12.4. Bootstrapping the storage cluster as a non-root user

To bootstrap the Red Hat Ceph Storage cluster as a non-root user on the bootstrap node, use the --ssh-user option with the cephadm bootstrap command. --ssh-user specifies a user for SSH connections to cluster nodes.

Non-root users must have passwordless sudo access. See the Creating an Ansible user with sudo access and Enabling password-less SSH for Ansible sections for more details.

Prerequisites

- An IP address for the first Ceph Monitor container, which is also the IP address for the initial Monitor node in the storage cluster.

- Login access to registry.redhat.io.

- A minimum of 10 GB of free space for /var/lib/containers/.

- SSH public and private keys.

- Passwordless sudo access to the bootstrap node.
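Two of these prerequisites can be checked from a shell before starting. The following is a minimal sketch, not part of the product tooling: the function names are hypothetical, and the free-space figure is reported in whole GiB, which only approximates the 10 GB requirement.

```shell
#!/usr/bin/env bash
# Print the free space, in whole GiB, of the file system that holds a path.
free_gib() {
  df -Pk "$1" | awk 'NR == 2 { print int($4 / 1024 / 1024) }'
}

# Succeed when the path has at least the requested number of GiB free.
has_min_free_gib() {
  [ "$(free_gib "$1")" -ge "$2" ]
}

# Succeed when the current user can run sudo without a password prompt.
has_passwordless_sudo() {
  sudo -n true 2>/dev/null
}

# Usage on the bootstrap node:
#   has_min_free_gib /var/lib/containers/ 10 || echo "less than 10 GiB free"
#   has_passwordless_sudo || echo "sudo prompts for a password"
```

Run the sudo check as the non-root user that you intend to pass to --ssh-user.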

Procedure

Switch to the non-root user that has sudo access on the bootstrap node:

Syntax

su - SSH_USER_NAME

Example

[root@host01 ~]# su - ceph
Last login: Tue Sep 14 12:00:29 EST 2021 on pts/0

Establish the SSH connection to the bootstrap node:

Example

[ceph@host01 ~]$ ssh host01
Last login: Tue Sep 14 12:03:29 EST 2021 on pts/0

Optional: Invoke the cephadm bootstrap command.

Note: Using private and public keys is optional. If SSH keys have not previously been created, these can be created during this step.

Include the --ssh-private-key and --ssh-public-key options:

Syntax

cephadm bootstrap --ssh-user USER_NAME --mon-ip IP_ADDRESS --ssh-private-key PRIVATE_KEY --ssh-public-key PUBLIC_KEY --registry-url registry.redhat.io --registry-username USER_NAME --registry-password PASSWORD

Example

cephadm bootstrap --ssh-user ceph-admin --mon-ip 10.10.128.68 --ssh-private-key /home/ceph/.ssh/id_rsa --ssh-public-key /home/ceph/.ssh/id_rsa.pub --registry-url registry.redhat.io --registry-username myuser1 --registry-password mypassword1

Additional Resources

- For more information about all available cephadm bootstrap options, see Bootstrap command options.

- For more information about utilizing Ansible to automate bootstrapping a rootless cluster, see the knowledge base article Red Hat Ceph Storage 5 rootless deployment utilizing ansible ad-hoc commands.

3.12.5. Bootstrap command options

The cephadm bootstrap command bootstraps a Ceph storage cluster on the local host. It deploys a MON daemon and a MGR daemon on the bootstrap node, automatically deploys the monitoring stack on the local host, and calls ceph orch host add HOSTNAME.

The following table lists the available options for cephadm bootstrap.

| cephadm bootstrap option | Description |
|---|---|
| --config CONFIG_FILE, -c CONFIG_FILE | CONFIG_FILE is the Ceph configuration file to use with the bootstrap command. |
| --cluster-network NETWORK_CIDR | Use the subnet defined by NETWORK_CIDR for internal cluster traffic. This is specified in CIDR notation. |
| --mon-id MON_ID | Bootstraps on the host named MON_ID. Default value is the local host. |
| --mon-addrv MON_ADDRV | mon IPs (e.g., [v2:localipaddr:3300,v1:localipaddr:6789]) |
| --mon-ip IP_ADDRESS | IP address of the node you are using to run cephadm bootstrap. |
| --mgr-id MGR_ID | Host ID where a MGR node should be installed. Default: randomly generated. |
| --fsid FSID | Cluster FSID. |
| --output-dir OUTPUT_DIR | Use this directory to write config, keyring, and pub key files. |
| --output-keyring OUTPUT_KEYRING | Use this location to write the keyring file with the new cluster admin and mon keys. |
| --output-config OUTPUT_CONFIG | Use this location to write the configuration file to connect to the new cluster. |
| --output-pub-ssh-key OUTPUT_PUB_SSH_KEY | Use this location to write the public SSH key for the cluster. |
| --skip-ssh | Skip the setup of the ssh key on the local host. |
| --initial-dashboard-user INITIAL_DASHBOARD_USER | Initial user for the dashboard. |
| --initial-dashboard-password INITIAL_DASHBOARD_PASSWORD | Initial password for the initial dashboard user. |
| --ssl-dashboard-port SSL_DASHBOARD_PORT | Port number used to connect with the dashboard using SSL. |
| --dashboard-key DASHBOARD_KEY | Dashboard key. |
| --dashboard-crt DASHBOARD_CRT | Dashboard certificate. |
| --ssh-config SSH_CONFIG | SSH config. |
| --ssh-private-key SSH_PRIVATE_KEY | SSH private key. |
| --ssh-public-key SSH_PUBLIC_KEY | SSH public key. |
| --ssh-user SSH_USER | Sets the user for SSH connections to cluster hosts. Passwordless sudo is needed for non-root users. |
| --skip-mon-network | Sets mon public_network based on the bootstrap mon ip. |
| --skip-dashboard | Do not enable the Ceph Dashboard. |
| --dashboard-password-noupdate | Disable forced dashboard password change. |
| --no-minimize-config | Do not assimilate and minimize the configuration file. |
| --skip-ping-check | Do not verify that the mon IP is pingable. |
| --skip-pull | Do not pull the latest image before bootstrapping. |
| --skip-firewalld | Do not configure firewalld. |
| --allow-overwrite | Allow the overwrite of existing --output-* config/keyring/ssh files. |
| --allow-fqdn-hostname | Allow fully qualified host name. |
| --skip-prepare-host | Do not prepare host. |
| --orphan-initial-daemons | Do not create initial mon, mgr, and crash service specs. |
| --skip-monitoring-stack | Do not automatically provision the monitoring stack (prometheus, grafana, alertmanager, node-exporter). |
| --apply-spec APPLY_SPEC | Apply cluster spec file after bootstrap (copy ssh key, add hosts and apply services). |
| --registry-url REGISTRY_URL | Specifies the URL of the custom registry to log into. For example: registry.redhat.io. |
| --registry-username REGISTRY_USERNAME | User name of the login account to the custom registry. |
| --registry-password REGISTRY_PASSWORD | Password of the login account to the custom registry. |
| --registry-json REGISTRY_JSON | JSON file containing registry login information. |

Additional Resources

- For more information about the --skip-monitoring-stack option, see Adding hosts.

- For more information about logging into the registry with the registry-json option, see help for the registry-login command.

- For more information about cephadm options, see help for cephadm.
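The --registry-json option keeps registry credentials off the command line. The following is a minimal sketch of preparing such a file; the function name write_registry_json and the file path are hypothetical, and the url, username, and password key names are an assumption to confirm against the cephadm help for your release.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write registry login details to a JSON file that only
# the current user can read. Values must not contain double quotes or
# backslashes, because this sketch does no JSON escaping.
write_registry_json() {
  local path="$1" url="$2" username="$3" password="$4"
  (
    umask 077
    printf '{\n  "url": "%s",\n  "username": "%s",\n  "password": "%s"\n}\n' \
      "$url" "$username" "$password" > "$path"
  )
}

# Usage (path and credentials are placeholders):
#   write_registry_json /root/registry.json registry.redhat.io myuser1 mypassword1
#   cephadm bootstrap --mon-ip 10.10.128.68 --registry-json /root/registry.json
```

The restrictive umask applies only inside the subshell, so it does not change permissions for later commands in the same session.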

3.12.6. Configuring a private registry for a disconnected installation

You can use a disconnected installation procedure to install cephadm and bootstrap your storage cluster on a private network. A disconnected installation uses a private registry for installation. Use this procedure when the Red Hat Ceph Storage nodes do NOT have access to the Internet during deployment.

Follow this procedure to set up a secure private registry using authentication and a self-signed certificate. Perform these steps on a node that has both Internet access and access to the local cluster.

Using an insecure registry for production is not recommended.

Prerequisites

- At least one running virtual machine (VM) or server with an active internet connection.

- Red Hat Enterprise Linux 8.4 EUS or later.

- Login access to registry.redhat.io.

- Root-level access to all nodes.

Procedure

- Log in to the node that has access to both the public network and the cluster nodes.

Register the node, and when prompted, enter the appropriate Red Hat Customer Portal credentials:

Example

[root@admin ~]# subscription-manager register

Pull the latest subscription data:

Example

[root@admin ~]# subscription-manager refresh

List all available subscriptions for Red Hat Ceph Storage:

Example

[root@admin ~]# subscription-manager list --available --all --matches="*Ceph*"

Copy the Pool ID from the list of available subscriptions for Red Hat Ceph Storage.

Attach the subscription to get access to the software entitlements:

Syntax

subscription-manager attach --pool=POOL_ID

Replace POOL_ID with the Pool ID identified in the previous step.

Disable the default software repositories, and enable the BaseOS and AppStream repositories:

Red Hat Enterprise Linux 8

[root@admin ~]# subscription-manager repos --disable=*
[root@admin ~]# subscription-manager repos --enable=rhel-8-for-x86_64-baseos-rpms
[root@admin ~]# subscription-manager repos --enable=rhel-8-for-x86_64-appstream-rpms

Red Hat Enterprise Linux 9

[root@admin ~]# subscription-manager repos --disable=*
[root@admin ~]# subscription-manager repos --enable=rhel-9-for-x86_64-baseos-rpms
[root@admin ~]# subscription-manager repos --enable=rhel-9-for-x86_64-appstream-rpms

Install the podman and httpd-tools packages:

Example

[root@admin ~]# dnf install -y podman httpd-tools

Create folders for the private registry:

Example

[root@admin ~]# mkdir -p /opt/registry/{auth,certs,data}

The registry will be stored in /opt/registry and the directories are mounted in the container running the registry.

- The auth directory stores the htpasswd file the registry uses for authentication.

- The certs directory stores the certificates the registry uses for authentication.

- The data directory stores the registry images.

Create credentials for accessing the private registry:

Syntax

htpasswd -bBc /opt/registry/auth/htpasswd PRIVATE_REGISTRY_USERNAME PRIVATE_REGISTRY_PASSWORD

- The b option provides the password from the command line.

- The B option stores the password using bcrypt hashing.

- The c option creates the htpasswd file.

- Replace PRIVATE_REGISTRY_USERNAME with the username to create for the private registry.

- Replace PRIVATE_REGISTRY_PASSWORD with the password to create for the private registry username.

Example

[root@admin ~]# htpasswd -bBc /opt/registry/auth/htpasswd myregistryusername myregistrypassword1

Create a self-signed certificate:

Syntax

openssl req -newkey rsa:4096 -nodes -sha256 -keyout /opt/registry/certs/domain.key -x509 -days 365 -out /opt/registry/certs/domain.crt -addext "subjectAltName = DNS:LOCAL_NODE_FQDN"

Replace LOCAL_NODE_FQDN with the fully qualified host name of the private registry node.

Note: You will be prompted for the respective options for your certificate. The CN= value is the host name of your node and should be resolvable by DNS or the /etc/hosts file.

Example

[root@admin ~]# openssl req -newkey rsa:4096 -nodes -sha256 -keyout /opt/registry/certs/domain.key -x509 -days 365 -out /opt/registry/certs/domain.crt -addext "subjectAltName = DNS:admin.lab.redhat.com"

Note: When creating a self-signed certificate, be sure to create a certificate with a proper Subject Alternative Name (SAN). Podman commands that require TLS verification for certificates that do not include a proper SAN return the following error: x509: certificate relies on legacy Common Name field, use SANs or temporarily enable Common Name matching with GODEBUG=x509ignoreCN=0

Create a symbolic link to domain.cert to allow skopeo to locate the certificate with the file extension .cert:

Example

[root@admin ~]# ln -s /opt/registry/certs/domain.crt /opt/registry/certs/domain.cert

Add the certificate to the trusted list on the private registry node:

Syntax

cp /opt/registry/certs/domain.crt /etc/pki/ca-trust/source/anchors/
update-ca-trust
trust list | grep -i "LOCAL_NODE_FQDN"

Replace LOCAL_NODE_FQDN with the FQDN of the private registry node.

Example

[root@admin ~]# cp /opt/registry/certs/domain.crt /etc/pki/ca-trust/source/anchors/
[root@admin ~]# update-ca-trust
[root@admin ~]# trust list | grep -i "admin.lab.redhat.com"
label: admin.lab.redhat.com

Copy the certificate to any nodes that will access the private registry for installation and update the trusted list:

Example

[root@admin ~]# scp /opt/registry/certs/domain.crt root@host01:/etc/pki/ca-trust/source/anchors/
[root@admin ~]# ssh root@host01
[root@host01 ~]# update-ca-trust
[root@host01 ~]# trust list | grep -i "admin.lab.redhat.com"
label: admin.lab.redhat.com

Start the local secure private registry:

Syntax

[root@admin ~]# podman run --restart=always --name NAME_OF_CONTAINER \
    -p 5000:5000 -v /opt/registry/data:/var/lib/registry:z \
    -v /opt/registry/auth:/auth:z \
    -v /opt/registry/certs:/certs:z \
    -e "REGISTRY_AUTH=htpasswd" \
    -e "REGISTRY_AUTH_HTPASSWD_REALM=Registry Realm" \
    -e REGISTRY_AUTH_HTPASSWD_PATH=/auth/htpasswd \
    -e "REGISTRY_HTTP_TLS_CERTIFICATE=/certs/domain.crt" \
    -e "REGISTRY_HTTP_TLS_KEY=/certs/domain.key" \
    -e REGISTRY_COMPATIBILITY_SCHEMA1_ENABLED=true \
    -d registry:2

Replace NAME_OF_CONTAINER with a name to assign to the container.

Example

[root@admin ~]# podman run --restart=always --name myprivateregistry \
    -p 5000:5000 -v /opt/registry/data:/var/lib/registry:z \
    -v /opt/registry/auth:/auth:z \
    -v /opt/registry/certs:/certs:z \
    -e "REGISTRY_AUTH=htpasswd" \
    -e "REGISTRY_AUTH_HTPASSWD_REALM=Registry Realm" \
    -e REGISTRY_AUTH_HTPASSWD_PATH=/auth/htpasswd \
    -e "REGISTRY_HTTP_TLS_CERTIFICATE=/certs/domain.crt" \
    -e "REGISTRY_HTTP_TLS_KEY=/certs/domain.key" \
    -e REGISTRY_COMPATIBILITY_SCHEMA1_ENABLED=true \
    -d registry:2

This starts the private registry on port 5000 and mounts the volumes of the registry directories in the container running the registry.

On the local registry node, verify that registry.redhat.io is in the container registry search path.

Open the /etc/containers/registries.conf file for editing, and add registry.redhat.io to the unqualified-search-registries list, if it does not exist:

Example

unqualified-search-registries = ["registry.redhat.io", "registry.access.redhat.com", "registry.fedoraproject.org", "registry.centos.org", "docker.io"]

Log in to registry.redhat.io with your Red Hat Customer Portal credentials:

Syntax

podman login registry.redhat.io

Copy the following Red Hat Ceph Storage 5 image, Prometheus images, and Dashboard image from the Red Hat Customer Portal to the private registry:

Table 3.1. Custom image details for monitoring stack

| Monitoring stack component | Image details |
|---|---|
| Prometheus | registry.redhat.io/openshift4/ose-prometheus:v4.10 |
| Grafana | registry.redhat.io/rhceph/rhceph-5-dashboard-rhel8:latest |
| Node-exporter | registry.redhat.io/openshift4/ose-prometheus-node-exporter:v4.10 |
| AlertManager | registry.redhat.io/openshift4/ose-prometheus-alertmanager:v4.10 |
| HAProxy | registry.redhat.io/rhceph/rhceph-haproxy-rhel8:latest |
| Keepalived | registry.redhat.io/rhceph/keepalived-rhel8:latest |
| SNMP Gateway | registry.redhat.io/rhceph/snmp-notifier-rhel8:latest |

Syntax

podman run -v /CERTIFICATE_DIRECTORY_PATH:/certs:Z -v /CERTIFICATE_DIRECTORY_PATH/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds RED_HAT_CUSTOMER_PORTAL_LOGIN:RED_HAT_CUSTOMER_PORTAL_PASSWORD --dest-cert-dir=./certs/ --dest-creds PRIVATE_REGISTRY_USERNAME:PRIVATE_REGISTRY_PASSWORD docker://registry.redhat.io/SRC_IMAGE:SRC_TAG docker://LOCAL_NODE_FQDN:5000/DST_IMAGE:DST_TAG

- Replace CERTIFICATE_DIRECTORY_PATH with the directory path to the self-signed certificates.

- Replace RED_HAT_CUSTOMER_PORTAL_LOGIN and RED_HAT_CUSTOMER_PORTAL_PASSWORD with your Red Hat Customer Portal credentials.

- Replace PRIVATE_REGISTRY_USERNAME and PRIVATE_REGISTRY_PASSWORD with the private registry credentials.

- Replace SRC_IMAGE and SRC_TAG with the name and tag of the image to copy from registry.redhat.io.

- Replace DST_IMAGE and DST_TAG with the name and tag of the image to copy to the private registry.

- Replace LOCAL_NODE_FQDN with the FQDN of the private registry.

Example

[root@admin ~]# podman run -v /opt/registry/certs:/certs:Z -v /opt/registry/certs/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds myusername:mypassword1 --dest-cert-dir=./certs/ --dest-creds myregistryusername:myregistrypassword1 docker://registry.redhat.io/rhceph/rhceph-5-rhel8:latest docker://admin.lab.redhat.com:5000/rhceph/rhceph-5-rhel8:latest
[root@admin ~]# podman run -v /opt/registry/certs:/certs:Z -v /opt/registry/certs/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds myusername:mypassword1 --dest-cert-dir=./certs/ --dest-creds myregistryusername:myregistrypassword1 docker://registry.redhat.io/openshift4/ose-prometheus-node-exporter:v4.10 docker://admin.lab.redhat.com:5000/openshift4/ose-prometheus-node-exporter:v4.10
[root@admin ~]# podman run -v /opt/registry/certs:/certs:Z -v /opt/registry/certs/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds myusername:mypassword1 --dest-cert-dir=./certs/ --dest-creds myregistryusername:myregistrypassword1 docker://registry.redhat.io/rhceph/rhceph-5-dashboard-rhel8:latest docker://admin.lab.redhat.com:5000/rhceph/rhceph-5-dashboard-rhel8:latest
[root@admin ~]# podman run -v /opt/registry/certs:/certs:Z -v /opt/registry/certs/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds myusername:mypassword1 --dest-cert-dir=./certs/ --dest-creds myregistryusername:myregistrypassword1 docker://registry.redhat.io/openshift4/ose-prometheus:v4.10 docker://admin.lab.redhat.com:5000/openshift4/ose-prometheus:v4.10
[root@admin ~]# podman run -v /opt/registry/certs:/certs:Z -v /opt/registry/certs/domain.cert:/certs/domain.cert:Z --rm registry.redhat.io/rhel8/skopeo:8.5-8 skopeo copy --remove-signatures --src-creds myusername:mypassword1 --dest-cert-dir=./certs/ --dest-creds myregistryusername:myregistrypassword1 docker://registry.redhat.io/openshift4/ose-prometheus-alertmanager:v4.10 docker://admin.lab.redhat.com:5000/openshift4/ose-prometheus-alertmanager:v4.10

Using the curl command, verify the images reside in the local registry:

Syntax

curl -u PRIVATE_REGISTRY_USERNAME:PRIVATE_REGISTRY_PASSWORD https://LOCAL_NODE_FQDN:5000/v2/_catalog

Example

[root@admin ~]# curl -u myregistryusername:myregistrypassword1 https://admin.lab.redhat.com:5000/v2/_catalog
{"repositories":["openshift4/ose-prometheus","openshift4/ose-prometheus-alertmanager","openshift4/ose-prometheus-node-exporter","rhceph/rhceph-5-dashboard-rhel8","rhceph/rhceph-5-rhel8"]}
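The copy commands in this procedure differ only in the source and destination image references, and each destination keeps the repository path and tag of its source. The following is a minimal sketch of that mapping; the function names dest_ref and print_copy_pairs are hypothetical, and the sketch only prints the reference pairs rather than running skopeo.

```shell
#!/usr/bin/env bash
# Map a source image reference to its location in the private registry by
# replacing the registry host and keeping the repository path and tag.
dest_ref() {
  local src="$1" registry="$2"
  printf '%s/%s\n' "$registry" "${src#*/}"
}

# Print one "docker://SOURCE docker://DESTINATION" pair per image. Append each
# pair to the skopeo copy command shown in the procedure.
print_copy_pairs() {
  local registry="$1" src
  shift
  for src in "$@"; do
    printf 'docker://%s docker://%s\n' "$src" "$(dest_ref "$src" "$registry")"
  done
}

print_copy_pairs admin.lab.redhat.com:5000 \
  registry.redhat.io/rhceph/rhceph-5-rhel8:latest \
  registry.redhat.io/openshift4/ose-prometheus:v4.10
```

Printing the pairs first makes it easy to review the destinations before any image is copied.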

Additional Resources

- For more information about Red Hat Ceph Storage releases and the corresponding Ceph package versions, see the knowledge base solution What are the Red Hat Ceph Storage releases and corresponding Ceph package versions?

3.12.7. Running the preflight playbook for a disconnected installation

You use the cephadm-preflight.yml Ansible playbook to configure the Ceph repository and prepare the storage cluster for bootstrapping. It also installs some prerequisites, such as podman, lvm2, chronyd, and cephadm.

Skip these steps for Red Hat Enterprise Linux 9, because the cephadm-preflight playbook is not supported.

The preflight playbook uses the cephadm-ansible inventory hosts file to identify all the nodes in the storage cluster. The default location for cephadm-ansible, cephadm-preflight.yml, and the inventory hosts file is /usr/share/cephadm-ansible/.

The following example shows the structure of a typical inventory file:

Example

host02
host03
host04

[admin]
host01

The [admin] group in the inventory file contains the name of the node where the admin keyring is stored.
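Before running the playbook, it can help to confirm which node the inventory places in the [admin] group. The following is a minimal sketch that assumes the INI-style layout shown in the example; the function name admin_hosts is hypothetical.

```shell
#!/usr/bin/env bash
# Print the host names listed under the [admin] group of an INI-style
# inventory file. Blank lines and comment lines are skipped.
admin_hosts() {
  awk '
    /^\[/ { in_admin = ($0 == "[admin]"); next }
    in_admin && NF && $1 !~ /^#/ { print $1 }
  ' "$1"
}

# Usage from the /usr/share/cephadm-ansible/ directory:
#   admin_hosts hosts
```

An empty result indicates that the inventory has no [admin] group or that the group lists no nodes.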

Run the preflight playbook before you bootstrap the initial host.

Prerequisites

- The cephadm-ansible package is installed on the Ansible administration node.

- Root-level access to all nodes in the storage cluster.

- Passwordless ssh is set up on all hosts in the storage cluster.

- Nodes configured to access a local YUM repository server with the following repositories enabled:

- rhel-8-for-x86_64-baseos-rpms

- rhel-8-for-x86_64-appstream-rpms

- rhceph-5-tools-for-rhel-8-x86_64-rpms

For more information about setting up a local YUM repository, see the knowledge base article Creating a Local Repository and Sharing with Disconnected/Offline/Air-gapped Systems

Procedure

- Navigate to the /usr/share/cephadm-ansible directory on the Ansible administration node.

- Open and edit the hosts file and add your nodes.

Run the preflight playbook with the ceph_origin parameter set to custom to use a local YUM repository:

Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=custom" -e "custom_repo_url=CUSTOM_REPO_URL"

Example

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=custom" -e "custom_repo_url=http://mycustomrepo.lab.redhat.com/x86_64/os/"

After installation is complete, cephadm resides in the /usr/sbin/ directory.

Alternatively, you can use the --limit option to run the preflight playbook on a selected set of hosts in the storage cluster:

Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=custom" -e "custom_repo_url=CUSTOM_REPO_URL" --limit GROUP_NAME|NODE_NAME

Replace GROUP_NAME with a group name from your inventory file. Replace NODE_NAME with a specific node name from your inventory file.

Example

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=custom" -e "custom_repo_url=http://mycustomrepo.lab.redhat.com/x86_64/os/" --limit clients
[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=custom" -e "custom_repo_url=http://mycustomrepo.lab.redhat.com/x86_64/os/" --limit host02

Note: When you run the preflight playbook, cephadm-ansible automatically installs chronyd and ceph-common on the client nodes.

3.12.8. Performing a disconnected installation

Before you can perform the installation, you must obtain a Red Hat Ceph Storage container image, either from a proxy host that has access to the Red Hat registry or by copying the image to your local registry.

If your local registry uses a self-signed certificate, ensure that you have added the trusted root certificate to the bootstrap host. For more information, see Configuring a private registry for a disconnected installation.

Red Hat Ceph Storage 5 is supported on Red Hat Enterprise Linux 8.4 EUS or later.

Before you begin the bootstrapping process, make sure that the container image that you want to use has the same version of Red Hat Ceph Storage as cephadm. If the two versions do not match, bootstrapping fails at the Creating initial admin user stage.

Prerequisites

- At least one running virtual machine (VM) or server.

- Root-level access to all nodes.

- Passwordless ssh is set up on all hosts in the storage cluster.

- The preflight playbook has been run on the bootstrap host in the storage cluster. For more information, see Running the preflight playbook for a disconnected installation.

- A private registry has been configured and the bootstrap node has access to it. For more information, see Configuring a private registry for a disconnected installation.

- A Red Hat Ceph Storage container image resides in the custom registry.

Procedure

- Log in to the bootstrap host.

Bootstrap the storage cluster:

Syntax

cephadm --image PRIVATE_REGISTRY_NODE_FQDN:5000/CUSTOM_IMAGE_NAME:IMAGE_TAG bootstrap --mon-ip IP_ADDRESS --registry-url PRIVATE_REGISTRY_NODE_FQDN:5000 --registry-username PRIVATE_REGISTRY_USERNAME --registry-password PRIVATE_REGISTRY_PASSWORD

- Replace PRIVATE_REGISTRY_NODE_FQDN with the fully qualified domain name of your private registry.

- Replace CUSTOM_IMAGE_NAME and IMAGE_TAG with the name and tag of the Red Hat Ceph Storage container image that resides in the private registry.

- Replace IP_ADDRESS with the IP address of the node you are using to run cephadm bootstrap.

- Replace PRIVATE_REGISTRY_USERNAME with the username created for the private registry.

- Replace PRIVATE_REGISTRY_PASSWORD with the password created for the private registry username.

Example

[root@host01 ~]# cephadm --image admin.lab.redhat.com:5000/rhceph/rhceph-5-rhel8:latest bootstrap --mon-ip 10.10.128.68 --registry-url admin.lab.redhat.com:5000 --registry-username myregistryusername --registry-password myregistrypassword1

The script takes a few minutes to complete. Once the script completes, it provides the credentials to the Red Hat Ceph Storage Dashboard URL, a command to access the Ceph command-line interface (CLI), and a request to enable telemetry.

Ceph Dashboard is now available at:

URL: https://host01:8443/
User: admin
Password: i8nhu7zham

Enabling client.admin keyring and conf on hosts with "admin" label
You can access the Ceph CLI with:

sudo /usr/sbin/cephadm shell --fsid 266ee7a8-2a05-11eb-b846-5254002d4916 -c /etc/ceph/ceph.conf -k /etc/ceph/ceph.client.admin.keyring

Please consider enabling telemetry to help improve Ceph:

ceph telemetry on

For more information see:

https://docs.ceph.com/docs/master/mgr/telemetry/

Bootstrap complete.

After the bootstrap process is complete, see Changing configurations of custom container images for disconnected installations to configure the container images.

Additional Resources

- Once your storage cluster is up and running, see the Red Hat Ceph Storage Operations Guide for more information about configuring additional daemons and services.

3.12.9. Changing configurations of custom container images for disconnected installations

After you perform the initial bootstrap for disconnected nodes, you must specify custom container images for monitoring stack daemons. You can override the default container images for monitoring stack daemons, since the nodes do not have access to the default container registry.

Make sure that the bootstrap process on the initial host is complete before making any configuration changes.

By default, the monitoring stack components are deployed based on the primary Ceph image. In a disconnected storage cluster environment, you can use the latest available monitoring stack component images.

When using a custom registry, be sure to log in to the custom registry on newly added nodes before adding any Ceph daemons.

Syntax

# ceph cephadm registry-login --registry-url CUSTOM_REGISTRY_NAME --registry_username REGISTRY_USERNAME --registry_password REGISTRY_PASSWORD

Example

# ceph cephadm registry-login --registry-url myregistry --registry_username myregistryusername --registry_password myregistrypassword1

Prerequisites

- At least one running virtual machine (VM) or server.

- Red Hat Enterprise Linux 8.4 EUS or Red Hat Enterprise Linux 8.5.

- Root-level access to all nodes.

- Passwordless ssh is set up on all hosts in the storage cluster.

Procedure

Set the custom container images with the ceph config command:

Syntax

ceph config set mgr mgr/cephadm/OPTION_NAME CUSTOM_REGISTRY_NAME/CONTAINER_NAME

Use the following options for OPTION_NAME:

container_image_prometheus
container_image_grafana
container_image_alertmanager
container_image_node_exporter

Example

[root@host01 ~]# ceph config set mgr mgr/cephadm/container_image_prometheus myregistry/mycontainer
[root@host01 ~]# ceph config set mgr mgr/cephadm/container_image_grafana myregistry/mycontainer
[root@host01 ~]# ceph config set mgr mgr/cephadm/container_image_alertmanager myregistry/mycontainer
[root@host01 ~]# ceph config set mgr mgr/cephadm/container_image_node_exporter myregistry/mycontainer

Redeploy node-exporter:

Syntax

ceph orch redeploy node-exporter

If any of the services do not deploy, you can redeploy them with the ceph orch redeploy command.

Setting a custom image overrides, but does not overwrite, the default values for the configuration image name and tag. The default values change when updates become available. A component for which you have set a custom image cannot be configured for automatic updates. To install updates, you must manually update the configuration image name and tag.

If you choose to revert to using the default configuration, you can reset the custom container image. Use ceph config rm to reset the configuration option:

Syntax

ceph config rm mgr mgr/cephadm/OPTION_NAME

Example

ceph config rm mgr mgr/cephadm/container_image_prometheus
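The four ceph config set commands in this procedure follow one pattern, so they can be generated from a list of option and image pairs. The following is a minimal sketch that only prints the commands for review. The function name print_image_settings is hypothetical, the registry address is a placeholder, and the pairing of options with the image paths from Table 3.1 is an assumption to adjust for the images that actually reside in your registry.

```shell
#!/usr/bin/env bash
# Print one "ceph config set" command per monitoring stack option, pointing
# each option at an image path under the given private registry.
print_image_settings() {
  local registry="$1" option image
  while read -r option image; do
    printf 'ceph config set mgr mgr/cephadm/%s %s/%s\n' "$option" "$registry" "$image"
  done <<'EOF'
container_image_prometheus openshift4/ose-prometheus:v4.10
container_image_grafana rhceph/rhceph-5-dashboard-rhel8:latest
container_image_alertmanager openshift4/ose-prometheus-alertmanager:v4.10
container_image_node_exporter openshift4/ose-prometheus-node-exporter:v4.10
EOF
}

# The registry address is a placeholder for your private registry.
print_image_settings admin.lab.redhat.com:5000
```

After reviewing the printed commands, run them on the bootstrap host.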

Additional Resources

- For more information about performing a disconnected installation, see Performing a disconnected installation.

3.13. Distributing SSH keys

You can use the cephadm-distribute-ssh-key.yml playbook to distribute the SSH keys instead of creating and distributing the keys manually. The playbook distributes an SSH public key over all hosts in the inventory.

You can also generate an SSH key pair on the Ansible administration node and distribute the public key to each node in the storage cluster so that Ansible can access the nodes without being prompted for a password.

Prerequisites

- Ansible is installed on the administration node.

- Access to the Ansible administration node.

- Ansible user with sudo access to all nodes in the storage cluster.

- Bootstrapping is completed. See the Bootstrapping a new storage cluster section in the Red Hat Ceph Storage Installation Guide.

Procedure

Navigate to the /usr/share/cephadm-ansible directory on the Ansible administration node:

Example

[ansible@admin ~]$ cd /usr/share/cephadm-ansible

From the Ansible administration node, distribute the SSH keys. The optional cephadm_pubkey_path parameter is the full path name of the SSH public key file on the Ansible controller host.

Note: If cephadm_pubkey_path is not specified, the playbook gets the key from the cephadm get-pub-key command. This implies that you have at least bootstrapped a minimal cluster.

Syntax

ansible-playbook -i INVENTORY_HOST_FILE cephadm-distribute-ssh-key.yml -e cephadm_ssh_user=USER_NAME -e cephadm_pubkey_path=/home/cephadm/ceph.key -e admin_node=ADMIN_NODE_NAME_1

Example

[ansible@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-distribute-ssh-key.yml -e cephadm_ssh_user=ceph-admin -e cephadm_pubkey_path=/home/cephadm/ceph.key -e admin_node=host01
[ansible@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-distribute-ssh-key.yml -e cephadm_ssh_user=ceph-admin -e admin_node=host01
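The two forms of the command differ only in whether cephadm_pubkey_path is passed. The following is a minimal sketch that assembles either form as text; the function name distribute_key_command is hypothetical, and the sketch prints the command rather than running the playbook.

```shell
#!/usr/bin/env bash
# Print the ansible-playbook command for distributing the SSH key.
# Arguments: INVENTORY SSH_USER ADMIN_NODE [PUBKEY_PATH]
# When PUBKEY_PATH is omitted, cephadm_pubkey_path is left out of the command.
distribute_key_command() {
  local inventory="$1" ssh_user="$2" admin_node="$3" pubkey="${4:-}"
  local cmd="ansible-playbook -i $inventory cephadm-distribute-ssh-key.yml"
  cmd="$cmd -e cephadm_ssh_user=$ssh_user"
  if [ -n "$pubkey" ]; then
    cmd="$cmd -e cephadm_pubkey_path=$pubkey"
  fi
  printf '%s -e admin_node=%s\n' "$cmd" "$admin_node"
}

# Usage:
#   distribute_key_command hosts ceph-admin host01
#   distribute_key_command hosts ceph-admin host01 /home/cephadm/ceph.key
```

Arguments that contain spaces would need quoting before the printed command is run.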

3.14. Launching the cephadm shell

The cephadm shell command launches a bash shell in a container with all of the Ceph packages installed. This enables you to perform “Day One” cluster setup tasks, such as installation and bootstrapping, and to invoke ceph commands.

Prerequisites

- A storage cluster that has been installed and bootstrapped.

- Root-level access to all nodes in the storage cluster.

Procedure

There are two ways to launch the cephadm shell:

Enter cephadm shell at the system prompt. This example invokes the ceph -s command from within the shell.

Example

[root@host01 ~]# cephadm shell
[ceph: root@host01 /]# ceph -s

At the system prompt, type cephadm shell and the command you want to execute:

Example

[root@host01 ~]# cephadm shell ceph -s
  cluster:
    id:     f64f341c-655d-11eb-8778-fa163e914bcc
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum host01,host02,host03 (age 94m)
    mgr: host01.lbnhug(active, since 59m), standbys: host02.rofgay, host03.ohipra
    mds: 1/1 daemons up, 1 standby
    osd: 18 osds: 18 up (since 10m), 18 in (since 10m)
    rgw: 4 daemons active (2 hosts, 1 zones)

  data:
    volumes: 1/1 healthy
    pools:   8 pools, 225 pgs
    objects: 230 objects, 9.9 KiB
    usage:   271 MiB used, 269 GiB / 270 GiB avail
    pgs:     225 active+clean

  io:
    client:   85 B/s rd, 0 op/s rd, 0 op/s wr

If the node contains configuration and keyring files in /etc/ceph/, the container environment uses the values in those files as defaults for the cephadm shell. If you execute the cephadm shell on a MON node, the cephadm shell inherits its default configuration from the MON container, instead of using the default configuration.
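
When the status is consumed by a script instead of being read by a person, the JSON form of the report is easier to handle than the text layout. The following is a minimal sketch, assuming the report was produced with something like cephadm shell -- ceph -s --format json and that the health state sits under the health and status keys. The function name cluster_health and the trimmed sample are illustrative, not captured output.

```python
import json


def cluster_health(status_json: str) -> str:
    """Extract the health status string from a JSON status report."""
    status = json.loads(status_json)
    # Assumption: the report carries the state under health -> status.
    return status["health"]["status"]


# Hand-written, heavily trimmed sample; a real report has many more fields.
sample = (
    '{"fsid": "f64f341c-655d-11eb-8778-fa163e914bcc",'
    ' "health": {"status": "HEALTH_OK"}}'
)
print(cluster_health(sample))
```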

3.15. Verifying the cluster installation

Once the cluster installation is complete, you can verify that the Red Hat Ceph Storage 5 installation is running properly.

There are two ways of verifying the storage cluster installation as a root user:

- Run the podman ps command.

- Run the cephadm shell ceph -s command.

Prerequisites

- Root-level access to all nodes in the storage cluster.

Procedure

Run the podman ps command:

Example

[root@host01 ~]# podman ps

Note

In Red Hat Ceph Storage 5, the format of the systemd units has changed. In the NAMES column, the unit files now include the FSID.

Run the cephadm shell ceph -s command:

Example

[root@host01 ~]# cephadm shell ceph -s
  cluster:
    id:     f64f341c-655d-11eb-8778-fa163e914bcc
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum host01,host02,host03 (age 94m)
    mgr: host01.lbnhug(active, since 59m), standbys: host02.rofgay, host03.ohipra
    mds: 1/1 daemons up, 1 standby
    osd: 18 osds: 18 up (since 10m), 18 in (since 10m)
    rgw: 4 daemons active (2 hosts, 1 zones)

  data:
    volumes: 1/1 healthy
    pools:   8 pools, 225 pgs
    objects: 230 objects, 9.9 KiB
    usage:   271 MiB used, 269 GiB / 270 GiB avail
    pgs:     225 active+clean

  io:
    client:   85 B/s rd, 0 op/s rd, 0 op/s wr

Note

A newly bootstrapped storage cluster reports HEALTH_WARN status because the hosts and the daemons have not been added yet.

3.16. Adding hosts

Bootstrapping the Red Hat Ceph Storage installation creates a working storage cluster, consisting of one Monitor daemon and one Manager daemon within the same container. As a storage administrator, you can add additional hosts to the storage cluster and configure them.

- For Red Hat Enterprise Linux 8, running the preflight playbook installs podman, lvm2, chronyd, and cephadm on all hosts listed in the Ansible inventory file.

- For Red Hat Enterprise Linux 9, you need to manually install podman, lvm2, chronyd, and cephadm on all hosts and skip the steps for running Ansible playbooks, because the preflight playbook is not supported.

When using a custom registry, be sure to log in to the custom registry on newly added nodes before adding any Ceph daemons.

Syntax

ceph cephadm registry-login --registry-url CUSTOM_REGISTRY_NAME --registry_username REGISTRY_USERNAME --registry_password REGISTRY_PASSWORD

Example

# ceph cephadm registry-login --registry-url myregistry --registry_username myregistryusername --registry_password myregistrypassword1

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access, or a user with sudo access, to all nodes in the storage cluster.

- Register the nodes to the CDN and attach subscriptions.

- Ansible user with sudo and passwordless ssh access to all nodes in the storage cluster.

Procedure

In the following procedure, use either root, as indicated, or the username with which the user is bootstrapped.

From the node that contains the admin keyring, install the storage cluster’s public SSH key in the root user’s authorized_keys file on the new host:

Syntax

ssh-copy-id -f -i /etc/ceph/ceph.pub user@NEWHOST

Example

[root@host01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@host02
[root@host01 ~]# ssh-copy-id -f -i /etc/ceph/ceph.pub root@host03

Navigate to the /usr/share/cephadm-ansible directory on the Ansible administration node.

Example

[ceph-admin@admin ~]$ cd /usr/share/cephadm-ansible

From the Ansible administration node, add the new host to the Ansible inventory file. The default location for the file is /usr/share/cephadm-ansible/hosts. The following example shows the structure of a typical inventory file:

Example

[ceph-admin@admin ~]$ cat hosts

host02
host03
host04

[admin]
host01

Note

If you have previously added the new host to the Ansible inventory file and run the preflight playbook on the host, skip to step 4.

Run the preflight playbook with the --limit option for Red Hat Enterprise Linux 8:

Syntax

ansible-playbook -i INVENTORY_FILE cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit NEWHOST

Example

[ceph-admin@admin cephadm-ansible]$ ansible-playbook -i hosts cephadm-preflight.yml --extra-vars "ceph_origin=rhcs" --limit host02

The preflight playbook installs podman, lvm2, chronyd, and cephadm on the new host. After installation is complete, cephadm resides in the /usr/sbin/ directory.

For Red Hat Enterprise Linux 9, install podman, lvm2, chronyd, and cephadm manually:

[root@host01 ~]# dnf install podman lvm2 chronyd cephadm

From the bootstrap node, use the cephadm orchestrator to add the new host to the storage cluster:

Syntax

ceph orch host add NEWHOST

Example

[ceph: root@host01 /]# ceph orch host add host02
Added host 'host02' with addr '10.10.128.69'
[ceph: root@host01 /]# ceph orch host add host03
Added host 'host03' with addr '10.10.128.70'

Optional: You can also add nodes by IP address, before and after you run the preflight playbook. If you do not have DNS configured in your storage cluster environment, you can add the hosts by IP address, along with the host names.

Syntax

ceph orch host add HOSTNAME IP_ADDRESS

Example

[ceph: root@host01 /]# ceph orch host add host02 10.10.128.69
Added host 'host02' with addr '10.10.128.69'

Verification

View the status of the storage cluster and verify that the new host has been added. In the output of the ceph orch host ls command, the STATUS of the hosts is blank.

Example

[ceph: root@host01 /]# ceph orch host ls
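
The blank-STATUS check can also be scripted. The following is a minimal sketch, assuming the host list is requested in JSON form (for example with ceph orch host ls --format json) and that each entry carries hostname and status keys. The function name hosts_needing_attention and the sample data are hypothetical and hand-written for illustration.

```python
import json
from typing import Dict, List


def hosts_needing_attention(host_ls_json: str) -> Dict[str, str]:
    """Map host name to status for every host whose status is not blank."""
    hosts: List[dict] = json.loads(host_ls_json)
    # Assumption: each entry has "hostname" and "status" keys, and a blank
    # status means the host is online and operating normally.
    return {h["hostname"]: h["status"] for h in hosts if h.get("status")}


# Hand-written sample, not captured from a real cluster.
sample = json.dumps([
    {"hostname": "host02", "addr": "10.10.128.69", "labels": [], "status": ""},
    {"hostname": "host03", "addr": "10.10.128.70", "labels": [], "status": "Offline"},
])
print(hosts_needing_attention(sample))
```

An empty dictionary means every listed host has a blank status.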

Additional Resources

- See the Registering Red Hat Ceph Storage nodes to the CDN and attaching subscriptions section in the Red Hat Ceph Storage Installation Guide.

- See the Creating an Ansible user with sudo access section in the Red Hat Ceph Storage Installation Guide.

3.16.1. Using the addr option to identify hosts

The addr option offers an additional way to contact a host. Add the IP address of the host to the addr option. If ssh cannot connect to the host by its hostname, then it uses the value stored in addr to reach the host by its IP address.

Prerequisites

- A storage cluster that has been installed and bootstrapped.

- Root-level access to all nodes in the storage cluster.

Procedure

Run this procedure from inside the cephadm shell.

Add the IP address:

Syntax

ceph orch host add HOSTNAME IP_ADDR

Example

[ceph: root@host01 /]# ceph orch host add host01 10.10.128.68

If adding a host by hostname results in that host being added with an IPv6 address instead of an IPv4 address, use ceph orch host set-addr to specify the IP address of that host:

ceph orch host set-addr HOSTNAME IP_ADDR

To convert the IP address from IPv6 format to IPv4 format for a host you have added, use the following command:

ceph orch host set-addr HOSTNAME IPV4_ADDRESS
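
Whether a host needs this correction can be decided from the address it is currently registered with. The following is a minimal sketch that uses the Python standard library ipaddress module to detect an IPv6 registration and build the corresponding set-addr argument list. The function name set_addr_command is hypothetical, and the function builds the command without running it.

```python
import ipaddress
from typing import List, Optional


def set_addr_command(
    hostname: str, current_addr: str, ipv4_addr: str
) -> Optional[List[str]]:
    """Return a `ceph orch host set-addr` argument list if the host is on IPv6.

    Returns None when the current address is already IPv4.
    """
    # ip_address() raises ValueError if current_addr is not an IP literal,
    # for example when the host is registered by name only.
    if ipaddress.ip_address(current_addr).version == 6:
        # Validate the replacement address before building the command.
        ipaddress.IPv4Address(ipv4_addr)
        return ["ceph", "orch", "host", "set-addr", hostname, ipv4_addr]
    return None
```

For example, a host registered as 2001:db8::69 yields a command, while a host already registered as 10.10.128.69 yields None.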

3.16.2. Adding multiple hosts

Use a YAML file to add multiple hosts to the storage cluster at the same time.

Be sure to create the hosts.yaml file within a host container, or create the file on the local host and then use the cephadm shell to mount the file within the container. The cephadm shell automatically places mounted files in /mnt. If you create the file directly on the local host and then apply the hosts.yaml file instead of mounting it, you might see a File does not exist error.

Prerequisites

- A storage cluster that has been installed and bootstrapped.

- Root-level access to all nodes in the storage cluster.

Procedure

- Copy over the public ssh key to each of the hosts that you want to add.

- Use a text editor to create a hosts.yaml file.

Add the host descriptions to the hosts.yaml file, as shown in the following example. Include the labels to identify placements for the daemons that you want to deploy on each host. Separate each host description with three dashes (---).

Example

service_type: host
addr:
hostname: host02
labels:
- mon
- osd
- mgr
---
service_type: host
addr:
hostname: host03
labels:
- mon
- osd
- mgr
---
service_type: host
addr:
hostname: host04
labels:
- mon
- osd

If you created the hosts.yaml file within the host container, invoke the ceph orch apply command:

Example

[root@host01 ~]# ceph orch apply -i hosts.yaml
Added host 'host02' with addr '10.10.128.69'
Added host 'host03' with addr '10.10.128.70'
Added host 'host04' with addr '10.10.128.71'

If you created the hosts.yaml file directly on the local host, use the cephadm shell to mount the file:

Example

[root@host01 ~]# cephadm shell --mount hosts.yaml -- ceph orch apply -i /mnt/hosts.yaml

View the list of hosts and their labels:

Example

[root@host01 ~]# ceph orch host ls
HOST      ADDR      LABELS          STATUS
host02    host02    mon osd mgr
host03    host03    mon osd mgr
host04    host04    mon osd

Note

If a host is online and operating normally, its status is blank. An offline host shows a status of OFFLINE, and a host in maintenance mode shows a status of MAINTENANCE.
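
For a larger number of hosts, the hosts.yaml file used in this procedure can be generated instead of typed by hand. The following is a minimal sketch that renders one host description per host, separated by three dashes, in the same layout as the example file. The function name render_hosts_yaml is hypothetical, and the addr field is left empty as in the example.

```python
from typing import Dict, List


def render_hosts_yaml(hosts: Dict[str, List[str]]) -> str:
    """Render one `service_type: host` document per host, separated by ---."""
    documents = []
    for hostname, labels in hosts.items():
        lines = [
            "service_type: host",
            "addr:",  # left empty, as in the example file
            f"hostname: {hostname}",
            "labels:",
        ]
        lines += [f"- {label}" for label in labels]
        documents.append("\n".join(lines))
    # YAML documents are separated by a line containing three dashes.
    return "\n---\n".join(documents) + "\n"


print(render_hosts_yaml({
    "host02": ["mon", "osd", "mgr"],
    "host04": ["mon", "osd"],
}))
```

Writing the returned text to hosts.yaml on the local host and then mounting it with the cephadm shell, as described in the procedure, avoids the File does not exist error.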

3.16.3. Adding hosts in disconnected deployments

If you are running a storage cluster on a private network and your host domain name server (DNS) cannot be reached through private IP, you must include both the host name and the IP address for each host you want to add to the storage cluster.

Prerequisites

- A running storage cluster.

- Root-level access to all hosts in the storage cluster.

Procedure

Invoke the cephadm shell.

Example

[root@host01 ~]# cephadm shell

Add the host:

Syntax

ceph orch host add HOST_NAME HOST_ADDRESS

Example

[ceph: root@host01 /]# ceph orch host add host03 10.10.128.70

3.16.4. Removing hosts

You can remove hosts from a Ceph cluster with the Ceph Orchestrator. The drain option removes all the daemons from the host and adds the _no_schedule label, which ensures that you cannot deploy any daemons or a cluster until the operation is complete.

If you are removing the bootstrap host, be sure to copy the admin keyring and the configuration file to another host in the storage cluster before you remove the host.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to all the nodes.

- Hosts are added to the storage cluster.

- All the services are deployed.

- Cephadm is deployed on the nodes where the services have to be removed.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

Fetch the host details:

Example

[ceph: root@host01 /]# ceph orch host ls

Drain all the daemons from the host:

Syntax

ceph orch host drain HOSTNAME

Example

[ceph: root@host01 /]# ceph orch host drain host02

The _no_schedule label is automatically applied to the host, which blocks deployment.

Check the status of OSD removal:

Example

[ceph: root@host01 /]# ceph orch osd rm status

When no placement groups (PG) are left on the OSD, the OSD is decommissioned and removed from the storage cluster.

Check if all the daemons are removed from the storage cluster:

Syntax