Chapter 4. Configuring CodeReady Workspaces

The following chapter describes configuration methods and options for Red Hat CodeReady Workspaces, with some user stories as examples.

- Section 4.1, “Advanced configuration options for the CodeReady Workspaces server component” describes advanced configuration methods to use when the standard CheCluster Custom Resource fields are not sufficient.

The next sections describe some specific user stories.

- Section 4.2, “Configuring project strategies”

- Section 4.4, “Configuring storage types”

- Section 4.5, “Running more than one workspace at a time”

- Section 4.7, “Configuring workspaces nodeSelector”

- Section 4.8, “Configuring Red Hat CodeReady Workspaces server hostname”

- Section 4.9, “Configuring labels for OpenShift Route”

- Section 4.10, “Configuring labels for OpenShift Route”

- Section 4.11, “Deploying CodeReady Workspaces with support for Git repositories with self-signed certificates”

- Section 4.12, “Installing CodeReady Workspaces using storage classes”

- Section 4.13, “Importing untrusted TLS certificates to CodeReady Workspaces”

- Section 4.14, “Switching between external and internal DNS names in inter-component communication”

- Section 4.15, “Setting up the RH-SSO codeready-workspaces-username-readonly theme for the Red Hat CodeReady Workspaces login page”

- Section 4.16, “Mounting a secret as a file or an environment variable into a Red Hat CodeReady Workspaces container”

4.1. Advanced configuration options for the CodeReady Workspaces server component

The following section describes advanced deployment and configuration methods for the CodeReady Workspaces server component.

4.1.1. Understanding CodeReady Workspaces server advanced configuration using the Operator

The following section describes the CodeReady Workspaces server component advanced configuration method for a deployment using the Operator.

Advanced configuration is necessary to:

- Add environment variables not automatically generated by the Operator from the standard CheCluster Custom Resource fields.

- Override the properties automatically generated by the Operator from the standard CheCluster Custom Resource fields.

The customCheProperties field, part of the CheCluster Custom Resource server settings, contains a map of additional environment variables to apply to the CodeReady Workspaces server component.

Example 4.1. Override the default memory limit for workspaces

- Add the CHE_WORKSPACE_DEFAULT__MEMORY__LIMIT__MB property to customCheProperties:

```yaml
apiVersion: org.eclipse.che/v1
kind: CheCluster
# [...]
spec:
  server:
    # [...]
    customCheProperties:
      CHE_WORKSPACE_DEFAULT__MEMORY__LIMIT__MB: "2048"
# [...]
```
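The same override can also be applied to a running installation without editing the whole resource. The following Python sketch builds a JSON merge patch for the CheCluster resource. It assumes the output is passed to oc patch with --type=merge; the helper name build_custom_properties_patch and the <name> placeholder are hypothetical. A merge patch only adds or replaces the listed keys, so other entries already present in customCheProperties are kept.

```python
import json


def build_custom_properties_patch(properties):
    """Build a JSON merge patch that sets entries in spec.server.customCheProperties.

    Values must already be strings (like the quoted "2048" in Example 4.1);
    anything else is rejected rather than silently converted.
    """
    for name, value in properties.items():
        if not isinstance(value, str):
            raise TypeError(f"{name}: expected a string value, got {type(value).__name__}")
    return {"spec": {"server": {"customCheProperties": dict(properties)}}}


if __name__ == "__main__":
    patch = build_custom_properties_patch(
        {"CHE_WORKSPACE_DEFAULT__MEMORY__LIMIT__MB": "2048"}
    )
    # Hypothetical usage: oc patch checluster <name> --type=merge -p '<printed JSON>'
    print(json.dumps(patch))
```

Rejecting non-string values keeps the patch consistent with the quoted value in the YAML example, where an unquoted 2048 would be parsed as an integer.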

Previous versions of the CodeReady Workspaces Operator had a configMap named custom to fulfill this role. If the CodeReady Workspaces Operator finds a configMap with the name custom, it adds the data it contains into the customCheProperties field, redeploys CodeReady Workspaces, and deletes the custom configMap.

Additional resources

- For the list of all parameters available in the CheCluster Custom Resource, see Chapter 2, Configuring the CodeReady Workspaces installation.

- For the list of all parameters available to configure customCheProperties, see Section 4.1.2, “CodeReady Workspaces server component system properties reference”.

4.1.2. CodeReady Workspaces server component system properties reference

The following section describes all possible configuration properties of the CodeReady Workspaces server component.
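The tables list environment variable names, while several descriptions refer to properties by their dotted names (for example, che.infra.kubernetes.pvc.strategy). The following Python sketch converts between the two forms. It assumes the usual Che naming convention, under which a property such as che.workspace.default_memory_limit_mb becomes CHE_WORKSPACE_DEFAULT__MEMORY__LIMIT__MB, the variable used in Example 4.1: underscores are doubled, dots become single underscores, and the result is upper-cased. The function names are hypothetical; verify each resulting name against the tables before use.

```python
def property_to_env_var(name: str) -> str:
    """Map a dotted Che property name to its environment variable name (assumed convention)."""
    # Double the existing underscores first so they remain distinguishable
    # from the single underscores that replace the dots.
    return name.replace("_", "__").replace(".", "_").upper()


def env_var_to_property(env: str) -> str:
    """Reverse mapping: '__' becomes '_', each remaining '_' becomes '.', lower-cased."""
    marker = "\0"  # temporary stand-in for '__' while single underscores are converted
    return env.replace("__", marker).replace("_", ".").replace(marker, "_").lower()


if __name__ == "__main__":
    for prop in ("che.workspace.default_memory_limit_mb", "che.infra.kubernetes.pvc.strategy"):
        print(prop, "->", property_to_env_var(prop))
```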

4.1.2.1. Che server

Table 4.1. Che server

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Folder where CodeReady Workspaces stores internal data objects. |
| | | API service. Browsers initiate REST communications to the CodeReady Workspaces server with this URL. |
| | | API service internal network URL. Back-end services should initiate REST communications to the CodeReady Workspaces server with this URL. |
| | | CodeReady Workspaces major WebSocket endpoint. Provides the basic communication endpoint for major WebSocket interactions and messaging. |
| | | Your projects are synchronized from the CodeReady Workspaces server into the machine running each workspace. This is the directory in the machine where your projects are placed. |
| | | Used when OpenShift-type components in a devfile request project PVC creation (applied in case of the 'unique' and 'per workspace' PVC strategies. In case of the 'common' PVC strategy, it is rewritten with the value of the |
| | | Defines the directory inside the machine where all the workspace logs are placed. Provide this value into the machine, for example, as an environment variable. This ensures that agent developers can use this directory to back up agent logs. |
| | | Configures proxies used by runtimes powering workspaces. |
| | | Configures proxies used by runtimes powering workspaces. |
| | | Configures proxies used by runtimes powering workspaces. |
| | | By default, when users access a workspace with its URL, the workspace automatically starts (if currently stopped). Set this to |
| | | Workspace threads pool configuration. This pool is used for workspace-related operations that require asynchronous execution, for example, starting and stopping. Possible values are |
| | | This property is ignored when pool type is different from |
| | | This property is ignored when pool type is not set to |
| | | Number of threads to use for workspace server liveness probes. |
| | | HTTP proxy setting for workspace JVM. |
| | | Java command-line options added to JVMs running in workspaces. |
| | | Maven command-line options added to JVMs running agents in workspaces. |
| | | Default RAM limit for each machine that has no RAM settings in its environment. A value less than or equal to 0 is interpreted as disabling the limit. |
| | | RAM request for each container that has no explicit RAM settings in its environment. This amount is allocated when the workspace container is created. This property may not be supported by all infrastructure implementations; currently it is supported by OpenShift. A memory request exceeding the memory limit is ignored, and only the limit size is used. A value less than or equal to 0 is interpreted as disabling the limit. |
| | | CPU limit for each container that has no CPU settings in its environment. Specify either in floating point cores number, for example, |
| | | CPU request for each container that has no CPU settings in its environment. A CPU request exceeding the CPU limit is ignored, and only the limit number is used. A value less than or equal to 0 is interpreted as disabling the limit. |
| | | RAM limit and request for each sidecar that has no RAM settings in the CodeReady Workspaces plug-in configuration. A value less than or equal to 0 is interpreted as disabling the limit. |
| | | RAM limit and request for each sidecar that has no RAM settings in the CodeReady Workspaces plug-in configuration. A value less than or equal to 0 is interpreted as disabling the limit. |
| | | Default CPU limit and request for each sidecar that has no CPU settings in the CodeReady Workspaces plug-in configuration. Specify either in floating point cores number, for example, |
| | | Default CPU limit and request for each sidecar that has no CPU settings in the CodeReady Workspaces plug-in configuration. Specify either in floating point cores number, for example, |
| | | Defines the image-pulling strategy for sidecars. Possible values are: |
| | | Period of the job that suspends inactive workspaces. |
| | | Period of the activity table cleanup. The activity table can contain invalid or stale data if unforeseen errors happen, like a server crash at a peculiar point in time. The default is to run the cleanup job every hour. |
| | | Delay after server startup before the first activity cleanup job starts. |
| | | Delay before the first workspace idleness check job starts, to avoid mass suspension if the workspace master was unavailable for a period close to the inactivity timeout. |
| | | Period of the job that cleans up stopped temporary workspaces. |
| | | Period of the job that cleans up stopped temporary workspaces. |
| | | Number of sequential successful pings to a server after which it is treated as available. Note: the property is common for all servers, for example, workspace agent, terminal, and exec. |
| | | Interval, in milliseconds, between successive pings to a workspace server. |
| | | List of server names that require liveness probes. |
| | | Size limit of the logs collected from a single container that can be observed by che-server when debugging workspace startup. The default is 10 MB (10485760 bytes). |
| | | If true, the 'stop-workspace' role with edit privileges is granted to the 'che' ServiceAccount if OpenShift OAuth is enabled. This configuration is mainly required for workspace idling when OpenShift OAuth is enabled. |
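The RAM and CPU rows above share two rules: a value less than or equal to 0 disables the setting, and a request that exceeds the limit is ignored in favor of the limit. The following Python sketch expresses those rules. It assumes CPU values are written either as a floating point number of cores or in the Kubernetes millicore notation (for example, 500m), because the CPU descriptions above are truncated. The function names are hypothetical, and this is not the server's implementation.

```python
from typing import Optional, Tuple


def parse_cpu_millicores(value: str) -> int:
    """Convert a CPU value in cores ('0.5') or millicores ('500m') to millicores."""
    value = value.strip()
    if value.endswith("m"):
        return int(value[:-1])
    return round(float(value) * 1000)


def effective_request_and_limit(
    request: float, limit: float
) -> Tuple[Optional[float], Optional[float]]:
    """Apply the defaulting rules described for the RAM and CPU properties.

    A value <= 0 disables the setting (returned as None). A request that
    exceeds an enabled limit is ignored, and the limit is used instead.
    """
    eff_limit = limit if limit > 0 else None
    eff_request = request if request > 0 else None
    if eff_request is not None and eff_limit is not None and eff_request > eff_limit:
        eff_request = eff_limit
    return eff_request, eff_limit


if __name__ == "__main__":
    # Hypothetical values in MB: a 2048 MB request against a 1024 MB limit.
    print(effective_request_and_limit(2048, 1024))
    print(parse_cpu_millicores("500m"), parse_cpu_millicores("0.5"))
```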

4.1.2.2. Authentication parameters

Table 4.2. Authentication parameters

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | CodeReady Workspaces has a single identity implementation, so this does not change the user experience. If true, enables user creation at the API level. |
| | | Authentication error page address. |
| | | Reserved user names. |
| | | You can set up GitHub OAuth to automate authentication to remote repositories. You need to first register this application with GitHub OAuth. |
| | | You can set up GitHub OAuth to automate authentication to remote repositories. You need to first register this application with GitHub OAuth. |
| | | You can set up GitHub OAuth to automate authentication to remote repositories. You need to first register this application with GitHub OAuth. |
| | | You can set up GitHub OAuth to automate authentication to remote repositories. You need to first register this application with GitHub OAuth. |
| | | You can set up GitHub OAuth to automate authentication to remote repositories. You need to first register this application with GitHub OAuth. |
| | | Configuration of the OpenShift OAuth client. Used to obtain an OpenShift OAuth token. |
| | | Configuration of the OpenShift OAuth client. Used to obtain an OpenShift OAuth token. |
| | | Configuration of the OpenShift OAuth client. Used to obtain an OpenShift OAuth token. |
| | | Configuration of the OpenShift OAuth client. Used to obtain an OpenShift OAuth token. |

4.1.2.3. Internal

Table 4.3. Internal

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | CodeReady Workspaces extensions can schedule executions on a time basis. This configures the size of the thread pool allocated to extensions that are launched on a recurring schedule. |
| | | DB initialization and migration configuration. |
| | | DB initialization and migration configuration. |
| | | DB initialization and migration configuration. |
| | | DB initialization and migration configuration. |
| | | DB initialization and migration configuration. |
| | | DB initialization and migration configuration. |

4.1.2.4. Kubernetes Infra parameters

Table 4.4. Kubernetes Infra parameters

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Configuration of the OpenShift client that Infra uses. |
| | | Configuration of the OpenShift client that Infra uses. |
| | | Defines how servers are exposed to the world in OpenShift infra. List of strategies implemented in CodeReady Workspaces: default-host, multi-host, single-host. |
| | | Defines how the workspace plugins and editors are exposed in the single-host mode. Supported exposures: 'native' exposes servers using Kubernetes Ingresses and works only on Kubernetes; 'gateway' exposes servers using a reverse-proxy gateway. |
| | | Defines how to expose devfile endpoints, that is, end-user applications, in the single-host server strategy. They can either follow the single-host strategy and be exposed on subpaths, or be exposed on subdomains: 'multi-host' exposes on subdomains; 'single-host' exposes on subpaths. |
| | | Defines labels that are set on ConfigMaps configuring the single-host gateway. |
| | | Used to generate the domain for a server in a workspace in case property |
| | | DEPRECATED: do not change the value of this property, otherwise existing workspaces will lose data. Do not set it on new installations. Defines the Kubernetes namespace in which all workspaces are created. If not set, every workspace is created in a new namespace, where namespace = workspace ID. It is possible to use <username> and <userid> placeholders (for example, che-workspace-<username>). In that case, a new namespace is created for each user. A service account with permission to create new namespaces must be used. Ignored for OpenShift infra. Use |
| | | Indicates whether the CodeReady Workspaces server is allowed to create namespaces/projects for user workspaces, or whether they are intended to be created manually by the cluster administrator. This property is also used by the OpenShift infra. |
| | | Defines the default Kubernetes namespace in which user workspaces are created if the user does not override it. It is possible to use <username>, <userid>, and <workspaceid> placeholders (for example, che-workspace-<username>). In that case, a new namespace is created for each user (or workspace). It is also used by the OpenShift infra to specify the project. |
| | | Defines whether che-server should try to label the workspace namespaces. |
| | | List of labels to find Namespaces/Projects that are used for CodeReady Workspaces workspaces. They are used to find prepared Namespaces/Projects for users in combination with |
| | | List of annotations to find Namespaces/Projects prepared for CodeReady Workspaces user workspaces. Only Namespaces/Projects matching the |
| | | Defines whether a user is able to specify a Kubernetes namespace (or OpenShift project) different from the default. It is NOT RECOMMENDED to set this to true without OAuth configured. This property is also used by the OpenShift infra. |
| | | Defines the Kubernetes Service Account name to bind to all workspace pods. Note that the Kubernetes infrastructure does not create the service account; it must already exist. The OpenShift infrastructure checks whether the project is predefined (if |
| | | Specifies optional, additional cluster roles to use with the workspace service account. Note that the cluster role names must already exist, and the CodeReady Workspaces service account needs to be able to create a Role Binding to associate these cluster roles with the workspace service account. The names are comma-separated. This property deprecates 'che.infra.kubernetes.cluster_role_name'. |
| | | Defines the time frame that limits the Kubernetes workspace start time. |
| | | Defines the timeout in minutes that limits the period for OpenShift Routes to become ready. |
| | | If an unrecoverable event defined in the property occurs during workspace startup, terminate the workspace immediately instead of waiting until the timeout. Note that this SHOULD NOT include a mere 'Failed' reason, because that might catch events that are not unrecoverable. A failed container startup is handled explicitly by the CodeReady Workspaces server. |
| | | Defines whether to use a Persistent Volume Claim for workspace needs, for example, backing up projects and logs, or to disable it. |
| | | Defines the strategy used when choosing a PVC for workspaces. Supported strategies: 'common': all workspaces in the same Kubernetes namespace reuse the same PVC. The PVC name may be configured with 'che.infra.kubernetes.pvc.name'. An existing PVC is used, or a new one is created if it does not exist. 'unique': a separate PVC is used for each workspace volume. The PVC name is evaluated as '{che.infra.kubernetes.pvc.name} + '-' + {generated_8_chars}'. An existing PVC is used, or a new one is created if it does not exist. 'per-workspace': a separate PVC is used for each workspace. The PVC name is evaluated as '{che.infra.kubernetes.pvc.name} + '-' + {WORKSPACE_ID}'. An existing PVC is used, or a new one is created if it does not exist. |
| | | Defines whether to run a job that creates the workspace's subpath directories in the persistent volume for the 'common' strategy before launching a workspace. This is necessary in some versions of OpenShift because workspace subpath volume mounts are created with root permissions and thus cannot be modified by workspaces running as a user (which causes an error when importing projects into a workspace in CodeReady Workspaces). The default is 'true', but it should be set to 'false' if the version of OpenShift/Kubernetes creates subdirectories with user permissions. Relevant issue: https://github.com/kubernetes/kubernetes/issues/41638. Note that this property has effect only if the 'common' PVC strategy is used. |
| | | Defines the PVC name for workspaces. Each PVC strategy uses this value differently. See the description of the che.infra.kubernetes.pvc.strategy property. |
| | | Defines the storage class of the Persistent Volume Claim for workspaces. An empty string means 'use default'. |
| | | Defines the size of the Persistent Volume Claim of a workspace. The format is described here: https://docs.openshift.com/container-platform/4.4/storage/understanding-persistent-storage.html |
| | | Pod that is launched when performing persistent volume claim maintenance jobs on OpenShift. |
| | | Image pull policy of the container used for the maintenance jobs on the Kubernetes/OpenShift cluster. |
| | | Defines the pod memory limit for persistent volume claim maintenance jobs. |
| | | Defines the Persistent Volume Claim access mode. Note that for the common PVC strategy, changing the access mode affects the number of simultaneously running workspaces. If the OpenShift flavor where CodeReady Workspaces runs uses PVs with the RWX access mode, the number of workspaces running at the same time is bounded only by the CodeReady Workspaces limits configuration (RAM, CPU, and so on). Detailed information about access modes is available here: https://docs.openshift.com/container-platform/4.4/storage/understanding-persistent-storage.html |
| | | Defines whether the CodeReady Workspaces server should wait for workspace PVCs to become bound after creating them. It is used by all PVC strategies. It should be set to |
| | | Defines the range of ports for installer servers. By default, an installer uses its own port, but if it conflicts with other installer servers, the OpenShift infrastructure reconfigures the installer to use the first available port from this range. |
| | | Defines the range of ports for installer servers. By default, an installer uses its own port, but if it conflicts with other installer servers, the OpenShift infrastructure reconfigures the installer to use the first available port from this range. |
| | | Defines annotations for Ingresses that are used for exposing servers. The value depends on the kind of Ingress controller. The OpenShift infrastructure ignores this property because it uses Routes instead of Ingresses. Note that for a single-host deployment strategy to work, a controller supporting URL rewriting has to be used (so that URLs can point to different servers while the servers do not need to support changing the app root). The che.infra.kubernetes.ingress.path.rewrite_transform property defines how the path of the Ingress should be transformed to support the URL rewriting, and this property defines the set of annotations on the Ingress itself that instruct the chosen Ingress controller to actually do the URL rewriting, potentially building on the path transformation (if required by the chosen Ingress controller). For example, for the nginx Ingress controller 0.22.0 and later, the following value is recommended: {'ingress.kubernetes.io/rewrite-target': '/$1','ingress.kubernetes.io/ssl-redirect': 'false','ingress.kubernetes.io/proxy-connect-timeout': '3600','ingress.kubernetes.io/proxy-read-timeout': '3600'}, and che.infra.kubernetes.ingress.path.rewrite_transform should be set to '%s(.*)'. For nginx Ingress controllers older than 0.22.0, the rewrite-target should be set to merely '/' and the path transform to '%s' (see the che.infra.kubernetes.ingress.path.rewrite_transform property). Consult the nginx Ingress controller documentation for an explanation of how the Ingress controller uses the regular expression present in the Ingress path and how it achieves the URL rewriting. |
| | | Defines a 'recipe' for declaring the path of the Ingress that should expose a server. '%s' represents the base public URL of the server and is guaranteed to end with a forward slash. This property must be a valid input to the String.format() method and contain exactly one reference to '%s'. See the description of the che.infra.kubernetes.ingress.annotations_json property for how these two properties interplay when specifying the Ingress annotations and path. If not defined, this property defaults to '%s' (without the quotes), which means that the path is not transformed in any way for use with the Ingress controller. |
| | | Additional labels to add to every Ingress created by the CodeReady Workspaces server to allow clear identification. |
| | | Defines the security context for pods created by the Kubernetes Infra. This is ignored by the OpenShift infra. |
| | | Defines the security context for pods created by the Kubernetes Infra. This is ignored by the OpenShift infra. |
| | | Defines the grace termination period for pods created by the Kubernetes / OpenShift infrastructures. The grace termination period of Kubernetes / OpenShift workspace pods defaults to '0', which allows pods to be terminated almost instantly and significantly decreases the time required to stop a workspace. Note: if |
| | | Maximum number of concurrent async web requests (HTTP requests or ongoing WebSocket calls) supported in the underlying shared HTTP client of the |
| | | Maximum number of concurrent async web requests (HTTP requests or ongoing WebSocket calls) supported in the underlying shared HTTP client of the |
| | | Maximum number of idle connections in the connection pool of the Kubernetes-client shared HTTP client. |
| | | Keep-alive timeout of the connection pool of the Kubernetes-client shared HTTP client, in minutes. |
| | | Creates Ingresses with Transport Layer Security (TLS) enabled. In the OpenShift infrastructure, Routes are TLS-enabled. |
| | | Name of a secret to use when creating workspace Ingresses with TLS. Ignored by the OpenShift infrastructure. |
| | | Data for the TLS Secret used for workspace Ingresses. The cert and key should be encoded with the Base64 algorithm. These properties are ignored by the OpenShift infrastructure. |
| | | Data for the TLS Secret used for workspace Ingresses. The cert and key should be encoded with the Base64 algorithm. These properties are ignored by the OpenShift infrastructure. |
| | | Defines the period with which runtime consistency checks are performed. If a runtime has an inconsistent state, it is stopped automatically. The value must be more than 0 or |
| | | Name of the config map in the CodeReady Workspaces server namespace with additional CA TLS certificates to be propagated into all users' workspaces. If the property is set on OpenShift 4 infrastructure, and che.infra.openshift.trusted_ca.dest_configmap_labels includes the config.openshift.io/inject-trusted-cabundle=true label, then the cluster CA bundle is propagated too. |
| | | Configures the path on workspace containers where the CA bundle should be mounted. The content of the config map specified by che.infra.kubernetes.trusted_ca.dest_configmap is mounted. |
| | | Comma-separated list of labels to add to the CA certificates config map in the user workspace. See the che.infra.kubernetes.trusted_ca.dest_configmap property. |
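Several rows in Table 4.4 describe naming rules: the <username>, <userid>, and <workspaceid> placeholders in the default namespace, the way each PVC strategy derives a claim name from che.infra.kubernetes.pvc.name, and the String.format() recipe in che.infra.kubernetes.ingress.path.rewrite_transform. The following Python sketch illustrates these rules under stated assumptions: placeholders are substituted as plain text (the server may additionally sanitize names), the alphabet of the 8-character suffix for the 'unique' strategy is a guess, and Python %-formatting stands in for Java's String.format() for a single '%s'. All function names and sample values are hypothetical.

```python
import secrets
import string


def resolve_namespace(template: str, username: str, userid: str, workspaceid: str) -> str:
    """Replace the <username>, <userid> and <workspaceid> placeholders textually."""
    return (
        template.replace("<username>", username)
        .replace("<userid>", userid)
        .replace("<workspaceid>", workspaceid)
    )


def pvc_name(strategy: str, base_name: str, workspace_id: str = "") -> str:
    """Derive a claim name from the configured PVC name for each PVC strategy."""
    if strategy == "common":
        return base_name  # one claim shared by all workspaces in the namespace
    if strategy == "unique":
        # One claim per workspace volume; the suffix alphabet is an assumption.
        alphabet = string.ascii_lowercase + string.digits
        return base_name + "-" + "".join(secrets.choice(alphabet) for _ in range(8))
    if strategy == "per-workspace":
        if not workspace_id:
            raise ValueError("the 'per-workspace' strategy needs a workspace ID")
        return base_name + "-" + workspace_id
    raise ValueError(f"unsupported PVC strategy: {strategy!r}")


def ingress_path(rewrite_transform: str, base_path: str) -> str:
    """Apply a rewrite_transform recipe such as '%s(.*)' to a server base path."""
    if rewrite_transform.count("%s") != 1:
        raise ValueError("the transform must contain exactly one '%s'")
    if not base_path.endswith("/"):
        raise ValueError("the base path is expected to end with '/'")
    # Python's %-formatting stands in for Java's String.format() for a lone '%s'.
    return rewrite_transform % base_path


if __name__ == "__main__":
    print(resolve_namespace("che-workspace-<username>", "jdoe", "user-id", "ws-id"))
    for strategy in ("common", "unique", "per-workspace"):
        print(strategy, pvc_name(strategy, "my-claim", "workspace42"))
    print(ingress_path("%s(.*)", "/server-a/"))
```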

4.1.2.5. OpenShift Infra parameters

Table 4.5. OpenShift Infra parameters

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | DEPRECATED: do not change the value of this property, otherwise existing workspaces will lose data. Do not set it on new installations. Defines the OpenShift project in which all workspaces are created. If not set, every workspace is created in a new project, where project name = workspace ID. It is possible to use <username> and <userid> placeholders (for example, che-workspace-<username>). In that case, a new project is created for each user. OpenShift OAuth or a service account with permission to create new projects must be used. If the project pointed to by this property exists, it is used for all workspaces. If it does not exist, the namespace specified by che.infra.kubernetes.namespace.default is created and used. |
| | | Comma-separated list of labels to add to the CA certificates config map in the user workspace. See the che.infra.kubernetes.trusted_ca.dest_configmap property. This default value is used for automatic cluster CA bundle injection in OpenShift 4. |
| | | Additional labels to add to every Route created by the CodeReady Workspaces server to allow clear identification. |

4.1.2.6. Experimental properties

Table 4.6. Experimental properties

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Docker image of the CodeReady Workspaces plugin broker app that resolves workspace tooling configuration and copies plugin dependencies to a workspace. Note that these images are overridden by the CodeReady Workspaces Operator by default; changing the images here has no effect if CodeReady Workspaces is installed using the Operator. |
| | | Docker image of the CodeReady Workspaces plugin broker app that resolves workspace tooling configuration and copies plugin dependencies to a workspace. Note that these images are overridden by the CodeReady Workspaces Operator by default; changing the images here has no effect if CodeReady Workspaces is installed using the Operator. |
| | | Configures the default behavior of the plugin brokers when provisioning plugins into a workspace. If set to true, the plugin brokers attempt to merge plugins when possible (that is, when they run in the same sidecar image and do not have conflicting settings). This value is the default setting used when the devfile does not specify otherwise through the 'mergePlugins' attribute. |
| | | Docker image of the CodeReady Workspaces plugin broker app that resolves workspace tooling configuration and copies plugin dependencies to a workspace. |
| | | Defines the timeout in minutes that limits the maximum period of waiting for the plugin broker result. |
| | | Workspace tooling plugins registry endpoint. Should be a valid HTTP URL (example: http://che-plugin-registry-eclipse-che.192.168.65.2.nip.io). In case CodeReady Workspaces plugins tooling is not needed, the value 'NULL' should be used. |
| | | Workspace tooling plugins registry 'internal' endpoint. Should be a valid HTTP URL (example: http://plugin-registry.che.svc.cluster.local:8080). In case CodeReady Workspaces plugins tooling is not needed, the value 'NULL' should be used. |
| | | Devfile Registry endpoint. Should be a valid HTTP URL (example: http://che-devfile-registry-eclipse-che.192.168.65.2.nip.io). In case CodeReady Workspaces plugins tooling is not needed, the value 'NULL' should be used. |
| | | Devfile Registry 'internal' endpoint. Should be a valid HTTP URL (example: http://devfile-registry.che.svc.cluster.local:8080). In case CodeReady Workspaces plugins tooling is not needed, the value 'NULL' should be used. |
| | | Defines the available storage types that clients like the Dashboard should propose to users during workspace creation/update. Available values: 'persistent': persistent storage, with slow I/O but persistent; 'ephemeral': ephemeral storage allows for faster I/O but may have limited storage and is not persistent; 'async': experimental feature; asynchronous storage is a combination of ephemeral and persistent storage. It allows for faster I/O and keeps your changes: it backs up on workspace stop and restores on workspace start. It works only if che.infra.kubernetes.pvc.strategy='common', che.limits.user.workspaces.run.count=1, che.infra.kubernetes.namespace.allow_user_defined=false, and che.infra.kubernetes.namespace.default contains <username>; in other cases, remove 'async' from the list. |
| | | Defines the default storage type that clients like the Dashboard should propose to users during workspace creation/update. The 'async' value is not recommended as the default type because it is experimental. |
| | | Configures how secure servers are protected with authentication. Suitable values: 'default': jwtproxy is configured in a pass-through mode, so servers should authenticate requests themselves; 'jwtproxy': jwtproxy authenticates requests, so servers receive only authenticated ones. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
| | | Jwtproxy issuer string, token lifetime, and optional auth page path to route unsigned requests to. |
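The 'async' storage type is only usable when four other properties hold specific values. The following Python sketch checks those documented conditions against a dictionary of property values. It assumes every property is supplied explicitly as a string (server-side defaults are not modeled), and the function name is hypothetical. If the returned list is not empty, 'async' should be removed from the list of available storage types.

```python
from typing import Dict, List


def async_storage_problems(props: Dict[str, str]) -> List[str]:
    """List the unmet preconditions for offering the 'async' storage type."""
    problems = []
    if props.get("che.infra.kubernetes.pvc.strategy", "").strip() != "common":
        problems.append("che.infra.kubernetes.pvc.strategy must be 'common'")
    if props.get("che.limits.user.workspaces.run.count", "").strip() != "1":
        problems.append("che.limits.user.workspaces.run.count must be 1")
    if props.get("che.infra.kubernetes.namespace.allow_user_defined", "").strip().lower() != "false":
        problems.append("che.infra.kubernetes.namespace.allow_user_defined must be false")
    if "<username>" not in props.get("che.infra.kubernetes.namespace.default", ""):
        problems.append("che.infra.kubernetes.namespace.default must contain <username>")
    return problems


if __name__ == "__main__":
    current = {
        "che.infra.kubernetes.pvc.strategy": "per-workspace",
        "che.limits.user.workspaces.run.count": "1",
        "che.infra.kubernetes.namespace.allow_user_defined": "false",
        "che.infra.kubernetes.namespace.default": "che-workspace-<username>",
    }
    for problem in async_storage_problems(current):
        print("async storage unavailable:", problem)
```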

4.1.2.7. Configuration of major "/websocket" endpoint

Table 4.7. Configuration of major "/websocket" endpoint

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Maximum size of the JSON RPC processing pool. If the pool size is exceeded, message execution is rejected. |
| | | Initial JSON processing pool. Minimum number of threads used to process major JSON RPC messages. |
| | | Configuration of the queue used to process JSON RPC messages. |
| | | Port of the HTTP server endpoint that is exposed with Prometheus metrics. |

4.1.2.8. CORS settings

Table 4.8. CORS settings

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | The CORS filter on WS Master is turned off by default. Use the environment variable 'CHE_CORS_ENABLED=true' to turn it on. 'cors.allowed.origins' indicates which request origins are allowed. |
| | | 'cors.support.credentials' indicates whether processing of requests with credentials (in cookies, headers, TLS client certificates) is allowed. |

4.1.2.9. Factory defaults

Table 4.9. Factory defaults

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Editor and plugin used for factories created from a remote Git repository that does not contain any CodeReady Workspaces-specific workspace descriptor. Multiple plugins must be comma-separated, for example: pluginFooPublisher/pluginFooName/pluginFooVersion,pluginBarPublisher/pluginBarName/pluginBarVersion |
| | | Editor and plugin used for factories created from a remote Git repository that does not contain any CodeReady Workspaces-specific workspace descriptor. Multiple plugins must be comma-separated, for example: pluginFooPublisher/pluginFooName/pluginFooVersion,pluginBarPublisher/pluginBarName/pluginBarVersion |
| | | Devfile filenames to look for in repository-based factories (like GitHub). The factory tries to locate those files in the order in which they are enumerated in the property. |
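Table 4.9 describes two formats: an ordered list of devfile filenames that a factory tries in turn, and a comma-separated plugin list in publisher/name/version form. The following Python sketch shows both. The candidate filenames and the repository listing in the usage are hypothetical, since the default values are not shown in the table, and the function names are illustrative only.

```python
from typing import Iterable, List, Optional, Set, Tuple


def find_devfile(candidates: Iterable[str], repo_files: Set[str]) -> Optional[str]:
    """Return the first candidate filename present in the repository, in order."""
    for name in candidates:
        if name in repo_files:
            return name
    return None


def parse_plugins(value: str) -> List[Tuple[str, str, str]]:
    """Split 'publisher/name/version,...' into (publisher, name, version) tuples."""
    plugins = []
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        parts = item.split("/")
        if len(parts) != 3:
            raise ValueError(f"expected publisher/name/version, got {item!r}")
        publisher, name, version = parts
        plugins.append((publisher, name, version))
    return plugins


if __name__ == "__main__":
    # Hypothetical candidates and repository contents.
    print(find_devfile([".devfile.yaml", "devfile.yaml"], {"devfile.yaml", "README.md"}))
    print(parse_plugins("pluginFooPublisher/pluginFooName/pluginFooVersion"))
```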

4.1.2.10. Devfile defaults

Table 4.10. Devfile defaults

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Default editor that should be provisioned into the devfile if no editor is specified. Format is |
| | | Default plugins that should be provisioned for the default editor. All the plugins from this list that are not explicitly mentioned in the user-defined devfile are provisioned, but only when the default editor is used or if the user-defined editor is the same as the default one (even if in a different version). Format is comma-separated |
| | | Defines a comma-separated list of labels for selecting secrets from a user namespace, which are mounted into workspace containers as files or environment variables. Only secrets that match ALL given labels are selected. |
| | | Plugin that is added in case the async storage feature is enabled in the workspace config and supported by the environment. |
| | | Docker image for the CodeReady Workspaces async storage. |
| | | Optionally configures the node selector for workspace pods. The format is comma-separated key=value pairs, for example: disktype=ssd,cpu=xlarge,foo=bar |
| | | Timeout for the Asynchronous Storage Pod shutdown after stopping the last used workspace. A value less than or equal to 0 is interpreted as disabling the shutdown ability. |
| | | Defines the period with which the Asynchronous Storage Pod stopping ability is performed (once in 30 minutes by default). |
| | | Bitbucket endpoints used for factory integrations. Comma-separated list of Bitbucket server URLs, or NULL if no integration is expected. |

4.1.2.11. Che system

Table 4.11. Che system

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | System Super Privileged Mode. Grants users with the manageSystem permission additional permissions for getByKey, getByNameSpace, stopWorkspaces, and getResourcesInformation. These permissions are not given to admins by default; they allow admins to gain visibility into any workspace and to grant themselves admin privileges to those workspaces. |
| | | Grants system permission to the 'che.admin.name' user. If the user already exists, the permission is granted on component startup; otherwise, it is granted during the first login, when the user is persisted in the database. |

4.1.2.12. Workspace limits

Table 4.12. Workspace limits

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Workspaces are the fundamental runtime for users when doing development. You can set parameters that limit how workspaces are created and the resources that are consumed. The maximum amount of RAM that a user can allocate to a workspace when they create a new workspace. The RAM slider is adjusted to this maximum value. |
| | | The length of time that a user can be idle in their workspace before the system suspends and then stops it. Idleness is the length of time that the user has not interacted with the workspace, meaning that none of the agents has received any interaction. Leaving a browser window open counts toward idleness. |
| | | The length of time in milliseconds that a workspace runs, regardless of activity, before the system suspends it. Set this property to automatically stop workspaces after a period of time. The default is zero, meaning that there is no run timeout. |

4.1.2.13. Users workspace limits

Table 4.13. Users workspace limits

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | The total amount of RAM that a single user is allowed to allocate to running workspaces. A user can allocate this RAM to a single workspace or spread it across multiple workspaces. |
| | | The maximum number of workspaces that a user is allowed to create. The user is presented with an error message if they try to create additional workspaces. This applies to the total number of both running and stopped workspaces. |
| | | The maximum number of running workspaces that a single user is allowed to have. If the user has reached this threshold and tries to start an additional workspace, they are prompted with an error message. The user must stop a running workspace to activate another. |

4.1.2.14. Organizations workspace limits

Table 4.14. Organizations workspace limits

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | The total amount of RAM that a single organization (team) is allowed to allocate to running workspaces. An organization owner can allocate this RAM however they see fit across the team’s workspaces. |
| | | The maximum number of workspaces that an organization is allowed to own. The organization is presented with an error message if it tries to create additional workspaces. This applies to the total number of both running and stopped workspaces. |
| | | The maximum number of running workspaces that a single organization is allowed. If the organization has reached this threshold and tries to start an additional workspace, it is prompted with an error message. The organization must stop a running workspace to activate another. |
| | | Address used as the sender (From) address for email notifications. |

4.1.2.15. Organizations notifications settings

Table 4.15. Organizations notifications settings

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Organization notifications subjects and templates |
| | | Organization notifications subjects and templates |
| | | |
| | | |
| | | |
| | | |
| | | |
| | | |

4.1.2.16. Multi-user-specific OpenShift infrastructure configuration

Table 4.16. Multi-user-specific OpenShift infrastructure configuration

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | Alias of the OpenShift identity provider registered in Keycloak that should be used to create workspace OpenShift resources in OpenShift namespaces owned by the current CodeReady Workspaces user. Should be set to NULL if |

4.1.2.17. Keycloak configuration

Table 4.17. Keycloak configuration

| Environment Variable Name | Default value | Description |
|---|---|---|
| | | URL of the Keycloak identity provider server. Can be set to NULL only if |
| | | Internal network service URL of the Keycloak identity provider server. |
| | | Keycloak realm used to authenticate users. Can be set to NULL only if |
| | | Keycloak client ID in che.keycloak.realm that is used by the dashboard, IDE, and CLI to authenticate users. |
| | | URL to access OSO OAuth tokens. |
| | | URL to access GitHub OAuth tokens. |
| | | The number of seconds to tolerate for clock skew when verifying exp or nbf claims. |
| | | Use the OIDC optional |
| | | URL of the Keycloak JavaScript adapter to use. If set to NULL, the default value is |
| | | Base URL of an alternate OIDC provider that provides a discovery endpoint as detailed in the following specification: https://openid.net/specs/openid-connect-discovery-1_0.html#ProviderConfig |
| | | Set to true when using an alternate OIDC provider that only supports fixed redirect URLs. This property is ignored when |
| | | Username claim used as the user display name when parsing the JWT token. If not defined, the fallback value is 'preferred_username'. |
| | | Configuration of the OAuth Authentication Service, which can be used in 'embedded' or 'delegated' mode. If set to 'embedded', the service works as a wrapper to the CodeReady Workspaces OAuthAuthenticator (as in single-user mode). If set to 'delegated', the service uses the Keycloak IdentityProvider mechanism. A runtime exception is thrown if this property is not set properly. |
| | | Enables removing the user from the Keycloak server when the user is removed from the CodeReady Workspaces database. Disabled by default. Can be enabled in special cases where deleting a user from the CodeReady Workspaces database should also remove the related user from Keycloak. For this to work, set the admin username ${che.keycloak.admin_username} and password ${che.keycloak.admin_password}. |
| | | Keycloak admin username. Used for deleting the user from Keycloak when the user is removed from the CodeReady Workspaces database. Takes effect only when ${che.keycloak.cascade_user_removal_enabled} is set to 'true'. |
| | | Keycloak admin password. Used for deleting the user from Keycloak when the user is removed from the CodeReady Workspaces database. Takes effect only when ${che.keycloak.cascade_user_removal_enabled} is set to 'true'. |
| | | User name adjustment configuration. CodeReady Workspaces uses usernames as part of K8s object names and labels and therefore has stricter requirements on their format than identity providers usually allow (they must be DNS-compliant). The adjustment is represented by comma-separated key-value pairs, which are used sequentially as arguments to the String.replaceAll function on the original username. The keys are regular expressions; the values are replacement strings that replace the characters in the username that match the regular expression. The modified username is only stored in the CodeReady Workspaces database and is not advertised back to the identity provider. It is recommended to use DNS-compliant characters as replacement strings (values in the key-value pairs). Example: |
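A hedged sketch of enabling cascade user removal through the Operator. The environment variable names below are not listed in this table: they are derived from the che.keycloak.* property names above by assuming the usual Che mapping (dots become _, underscores become __, all upper case), the same convention visible in CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE. Verify them against your release. The credential values are placeholders, and anything in customCheProperties is stored in plain text in the Custom Resource.

```yaml
spec:
  server:
    customCheProperties:
      # che.keycloak.cascade_user_removal_enabled (assumed variable name)
      CHE_KEYCLOAK_CASCADE__USER__REMOVAL__ENABLED: "true"
      # che.keycloak.admin_username (assumed variable name, placeholder value)
      CHE_KEYCLOAK_ADMIN__USERNAME: "<keycloak-admin-username>"
      # che.keycloak.admin_password (assumed variable name, placeholder value)
      CHE_KEYCLOAK_ADMIN__PASSWORD: "<keycloak-admin-password>"
```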

Additional resources

4.2. Configuring project strategies

The OpenShift project where a new workspace Pod is deployed depends on the CodeReady Workspaces server configuration. By default, every workspace is deployed in a distinct OpenShift project, but the CodeReady Workspaces server can be configured to deploy all workspaces in one specific OpenShift project. The name of an OpenShift project must be provided as a CodeReady Workspaces server configuration property and cannot be changed at runtime.

With the Operator installer, OpenShift project strategies are configured using the server.workspaceNamespaceDefault property.

Operator CheCluster CR patch

apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: <che-cluster-name>
spec:
  server:
    workspaceNamespaceDefault: <workspace-namespace> # (1)

(1) CodeReady Workspaces workspace namespace configuration

The underlying environment variable that CodeReady Workspaces server uses is CHE_INFRA_KUBERNETES_NAMESPACE_DEFAULT.

CHE_INFRA_KUBERNETES_NAMESPACE and CHE_INFRA_OPENSHIFT_PROJECT are legacy variables. Keep these variables unset for new installations. Changing these variables during an update can lead to data loss.

By default, only one workspace in the same project can be running at one time. See Section 4.5, “Running more than one workspace at a time”.

Kubernetes limits the length of a namespace name to 63 characters (this includes the evaluated placeholders). Additionally, the names (after placeholder evaluation) must be valid DNS names.

On OpenShift with multi-host server exposure strategy, the length is further limited to 49 characters.

Be aware that the <userid> placeholder is evaluated into a 36-character UUID string.

For strategies that require creating a new project, make sure that the che ServiceAccount has enough permissions to do so. With OpenShift OAuth, the authenticated user must have privileges to create a new project.
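For example, the prefix codeready-ws- is 13 characters long, so the template codeready-ws-<userid> evaluates to 13 + 36 = 49 characters: exactly the limit on OpenShift with the multi-host exposure strategy, which leaves no room for a longer prefix. A sketch of the corresponding CheCluster fragment (only the relevant part is shown):

```yaml
spec:
  server:
    # "codeready-ws-" (13 chars) + <userid> UUID (36 chars) = 49 chars,
    # the maximum on OpenShift with the multi-host exposure strategy
    workspaceNamespaceDefault: codeready-ws-<userid>
```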

4.2.1. One project per user strategy

The strategy isolates each user in their own project.

To use the strategy, set the CodeReady Workspaces workspace namespace configuration value to contain one or more user identifiers. Currently supported identifiers are <username> and <userid>.

Example 4.2. One project per user

To assign project names composed of a `codeready-ws` prefix and individual usernames (codeready-ws-user1, codeready-ws-user2), set:

Operator installer (CheCluster CustomResource)

...
spec:
  server:
    workspaceNamespaceDefault: codeready-ws-<username>
...

4.2.2. One project per workspace strategy

The strategy creates a new project for each new workspace.

To use the strategy, set the CodeReady Workspaces workspace namespace configuration value to contain the <workspaceID> identifier. It can be used alone or combined with other identifiers or any string.

Example 4.3. One project per workspace

To assign project names composed of a `codeready-ws` prefix and the workspace ID, set:

Operator installer (CheCluster CustomResource)

...
spec:
  server:
    workspaceNamespaceDefault: codeready-ws-<workspaceID>
...

4.2.3. One project for all workspaces strategy

The strategy uses one predefined project for all workspaces.

To use the strategy, set the CodeReady Workspaces workspace namespace configuration value to the name of the desired project to use.

Example 4.4. One project for all workspaces

To have all workspaces created in the `codeready-ws` project, set:

Operator installer (CheCluster CustomResource)

...
spec:
  server:
    workspaceNamespaceDefault: codeready-ws
...

4.2.4. Allowing user-defined workspace projects

CodeReady Workspaces server can be configured to honor the user selection of a project when a workspace is created. This feature is disabled by default. To allow user-defined workspace projects:

For Operator deployments, set the following field in the CheCluster Custom Resource:

...
  server:
    allowUserDefinedWorkspaceNamespaces: true
...
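Putting this together with a project strategy, a CheCluster fragment that keeps a per-user default project while letting users choose another one could look like the following sketch. Combining the two fields this way is an illustration, not a recommended configuration.

```yaml
apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: <che-cluster-name>
spec:
  server:
    # Project used when the user does not select one
    workspaceNamespaceDefault: codeready-ws-<username>
    # Honor the project the user selects when creating a workspace
    allowUserDefinedWorkspaceNamespaces: true
```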

4.2.5. Handling incompatible usernames or user IDs

CodeReady Workspaces server automatically checks usernames and IDs for compatibility with the Kubernetes object naming convention before creating a project from a template. Incompatible usernames or IDs are reduced to the nearest valid name by replacing groups of unsuitable symbols with the - symbol. To avoid collisions, a random 6-symbol suffix is added and the result is stored in preferences for reuse.

4.2.6. Pre-creating projects for users

To pre-create projects for users, use project labels and annotations. A project labeled and annotated this way is used in preference to the CHE_INFRA_KUBERNETES_NAMESPACE_DEFAULT variable.

metadata:
  labels:
    app.kubernetes.io/part-of: che.eclipse.org
    app.kubernetes.io/component: workspaces-namespace
  annotations:
    che.eclipse.org/username: <username> # (1)

(1) target user’s username

To configure the labels, set the CHE_INFRA_KUBERNETES_NAMESPACE_LABELS to desired labels. To configure the annotations, set the CHE_INFRA_KUBERNETES_NAMESPACE_ANNOTATIONS to desired annotations. See the CodeReady Workspaces server component system properties reference for more details.
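A complete manifest for one pre-created project might look like the following sketch. It assumes the labels and annotation shown above have not been customized through CHE_INFRA_KUBERNETES_NAMESPACE_LABELS or CHE_INFRA_KUBERNETES_NAMESPACE_ANNOTATIONS. The project name codeready-ws-user1 and the username user1 are hypothetical, and creating a Namespace object directly requires cluster-level privileges.

```yaml
apiVersion: v1
kind: Namespace
metadata:
  name: codeready-ws-user1            # hypothetical project name
  labels:
    app.kubernetes.io/part-of: che.eclipse.org
    app.kubernetes.io/component: workspaces-namespace
  annotations:
    che.eclipse.org/username: user1   # hypothetical target username
```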

Do not create multiple namespaces for a single user. It may lead to undefined behavior.

On OpenShift with OAuth, the target user must have admin role privileges in the target namespace:

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: admin
  namespace: <namespace> # (1)
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: admin
subjects:
- apiGroup: rbac.authorization.k8s.io
  kind: User
  name: <username> # (2)

(1) target namespace
(2) target user’s username

On Kubernetes, the che ServiceAccount must have cluster-wide list and get permissions on namespaces, as well as the admin role in the target namespace.
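On Kubernetes, these permissions could be granted with manifests along the following lines. This is a sketch: the object names are hypothetical, the ServiceAccount name che is taken from the text, <che-namespace> stands for the namespace where the CodeReady Workspaces server runs, and <namespace> is the pre-created target namespace.

```yaml
# Cluster-wide permission to list and get namespaces
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: che-namespace-reader       # hypothetical name
rules:
  - apiGroups: [""]
    resources: ["namespaces"]
    verbs: ["get", "list"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: che-namespace-reader       # hypothetical name
subjects:
  - kind: ServiceAccount
    name: che
    namespace: <che-namespace>
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: che-namespace-reader
---
# admin role for the che ServiceAccount in the target namespace
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: che-admin                  # hypothetical name
  namespace: <namespace>
subjects:
  - kind: ServiceAccount
    name: che
    namespace: <che-namespace>
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: admin
```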

4.2.7. Labeling the namespaces

CodeReady Workspaces updates the workspace’s namespace on workspace startup by adding the labels defined in CHE_INFRA_KUBERNETES_NAMESPACE_LABELS. To do so, the che ServiceAccount must have the following cluster-wide permissions to update and get namespaces:

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: <cluster-role-name> # (1)
rules:
- apiGroups:
  - ""
  resources:
  - namespaces
  verbs:
  - update
  - get

(1) name of the cluster role

apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: <cluster-role-binding-name> # (1)
subjects:
- kind: ServiceAccount
  name: <service-account-name> # (2)
  namespace: <service-account-namespace> # (3)
roleRef:
  kind: ClusterRole
  name: <cluster-role-name> # (4)
  apiGroup: rbac.authorization.k8s.io

(1) name of the cluster role binding
(2) name of the che ServiceAccount
(3) project of the che ServiceAccount
(4) name of the cluster role defined above

CodeReady Workspaces does not fail to start a workspace for lack of these permissions; it only logs a warning. If you see such warnings in the CodeReady Workspaces logs, consider disabling the feature by setting CHE_INFRA_KUBERNETES_NAMESPACE_LABEL=false.
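With the Operator, the setting could be passed through customCheProperties, as in this sketch (only the relevant part of the CheCluster Custom Resource is shown):

```yaml
spec:
  server:
    customCheProperties:
      # Stop CodeReady Workspaces from labeling workspace namespaces
      CHE_INFRA_KUBERNETES_NAMESPACE_LABEL: "false"
```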

4.3. Configuring storage strategies

This section describes how to configure storage strategies for CodeReady Workspaces workspaces.

4.3.1. Storage strategies for CodeReady Workspaces workspaces

Workspace Pods use Persistent Volume Claims (PVCs), which are bound to the physical Persistent Volumes (PVs) with ReadWriteOnce access mode. It is possible to configure how the CodeReady Workspaces server uses PVCs for workspaces. The individual methods for this configuration are called PVC strategies:

| strategy | details | pros | cons |
|---|---|---|---|
| unique | One PVC per workspace volume or user-defined PVC | Storage isolation | An undefined number of PVs is required |
| per-workspace (default) | One PVC for one workspace | Easier to manage and control storage compared to the unique strategy | PV count is still not known in advance and depends on the number of workspaces |
| common | One PVC for all workspaces in one OpenShift namespace | Easy to manage and control storage | If the PV does not support the ReadWriteMany (RWX) access mode, workspaces must be in separate OpenShift namespaces, or there must not be more than one running workspace per namespace at the same time |

When the common PVC strategy is combined with the "one project per user" project strategy, all of a user’s CodeReady Workspaces workspaces operate in that user’s project and share one PVC.

4.3.1.1. The common PVC strategy

All workspaces inside an OpenShift project use the same Persistent Volume Claim (PVC) as the default data storage when storing data such as the following in their declared volumes:

- projects

- workspace logs

- additional Volumes defined by a user

When the common PVC strategy is in use, user-defined PVCs are ignored and volumes that refer to these user-defined PVCs are replaced with a volume that refers to the common PVC. In this strategy, all CodeReady Workspaces workspaces use the same PVC. When the user runs one workspace, it only binds to one node in the cluster at a time.

The volume mounts of the corresponding containers link to a common volume, and sub-paths are prefixed with <workspace-ID> or <original-PVC-name>. For more details, see Section 4.3.1.4, “How subpaths are used in PVCs”.

The CodeReady Workspaces Volume name is identical to the name of the user-defined PVC. This means that if a machine is configured to use a CodeReady Workspaces volume with the same name as a user-defined PVC, both use the same shared folder in the common PVC.

When a workspace is deleted, a corresponding subdirectory (${ws-id}) is deleted in the PV directory.

Restrictions on using the common PVC strategy

When the common strategy is used and a workspace PVC access mode is ReadWriteOnce (RWO), only one node can simultaneously use the PVC.

If there are several nodes, you can use the common strategy, but one of the following conditions must be met:

- The workspace PVC access mode must be reconfigured to ReadWriteMany (RWX), so multiple nodes can use this PVC simultaneously.

- Only one workspace in the same project may be running. See Section 4.5, “Running more than one workspace at a time”.

The common PVC strategy is not suitable for large multi-node clusters. Therefore, it is best to use it in single-node clusters. However, in combination with the per-workspace project strategy, the common PVC strategy is usable for clusters with no more than 75 nodes. The PVC used with this strategy must be large enough to accommodate all projects to prevent a situation in which one project depletes the resources of others.

4.3.1.2. The per-workspace PVC strategy

The per-workspace strategy is similar to the common PVC strategy. The only difference is that all volumes of a single workspace, rather than all workspaces, use the same PVC as the default data storage for:

- projects

- workspace logs

- additional Volumes defined by a user

With this strategy, CodeReady Workspaces keeps its workspace data in assigned PVs that are allocated by a single PVC.

The per-workspace PVC strategy is the most universal of the available PVC strategies and is a suitable option for large multi-node clusters with a larger number of users. With the per-workspace PVC strategy, users can run multiple workspaces simultaneously, which results in more PVCs being created.

4.3.1.3. The unique PVC strategy

When using the `unique` PVC strategy, every CodeReady Workspaces Volume of a workspace has its own PVC. This means that workspace PVCs are:

- Created when a workspace starts for the first time.

- Deleted when a corresponding workspace is deleted.

User-defined PVCs are created with the following specifics:

- They are provisioned with generated names to prevent naming conflicts with other PVCs in a project.

- Subpaths of the mounted physical persistent volumes that reference user-defined PVCs are prefixed with <workspace-ID> or <PVC-name>. This ensures that the same PV data structure is set up with different PVC strategies. For details, see Section 4.3.1.4, “How subpaths are used in PVCs”.

The unique PVC strategy is suitable for larger multi-node clusters with fewer users. Because this strategy uses a separate PVC for each volume in a workspace, vastly more PVCs are created.

4.3.1.4. How subpaths are used in PVCs

Subpaths illustrate the folder hierarchy in the Persistent Volumes (PV).

/pv0001
  /workspaceID1
  /workspaceID2
  /workspaceIDn
    /che-logs
    /projects
    /<volume1>
    /<volume2>
    /<User-defined PVC name 1 | volume 3>
    ...

When a user defines volumes for components in the devfile, all components that define a volume of the same name are backed by the same directory in the PV, under <PV-name>, <workspace-ID>, or <original-PVC-name>. Each component can have this location mounted on a different path in its containers.

Example

Using the common PVC strategy, user-defined PVCs are replaced with subpaths on the common PVC. When the user references a volume as my-volume, it is mounted in the common-pvc with the /workspace-id/my-volume subpath.
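The following devfile is a sketch of that behavior, assuming the devfile 1.0 format: two components declare a volume named my-volume and mount it on different paths, so both see the same directory in the PV (with the common strategy, the /<workspace-ID>/my-volume subpath of the common PVC). The component aliases and the container image are hypothetical placeholders.

```yaml
apiVersion: 1.0.0
metadata:
  name: shared-volume-example
components:
  - type: dockerimage
    alias: tools                      # hypothetical component
    image: registry.access.redhat.com/ubi8/ubi-minimal
    memoryLimit: 256Mi
    args: ['sleep', 'infinity']       # keep the container running
    volumes:
      - name: my-volume               # shared volume name
        containerPath: /data          # mount path in this container
  - type: dockerimage
    alias: builder                    # hypothetical component
    image: registry.access.redhat.com/ubi8/ubi-minimal
    memoryLimit: 256Mi
    args: ['sleep', 'infinity']
    volumes:
      - name: my-volume               # backed by the same PV directory
        containerPath: /cache         # but mounted on a different path
```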

4.3.2. Configuring a CodeReady Workspaces workspace with a persistent volume strategy

A persistent volume (PV) acts as a virtual storage instance that adds a volume to a cluster.

A persistent volume claim (PVC) is a request to provision persistent storage of a specific type and configuration, available in the following CodeReady Workspaces storage configuration strategies:

- Common

- Per-workspace

- Unique

The mounted PVC is displayed as a folder in a container file system.

4.3.2.1. Configuring a PVC strategy using the Operator

The following section describes how to configure workspace persistent volume claim (PVC) strategies of a CodeReady Workspaces server using the Operator.

It is not recommended to reconfigure PVC strategies on an existing CodeReady Workspaces cluster with existing workspaces. Doing so causes data loss.

Operators are software extensions to OpenShift that use Custom Resources to manage applications and their components.

When deploying CodeReady Workspaces using the Operator, configure the intended strategy by modifying the spec.storage.pvcStrategy property of the CheCluster Custom Resource object YAML file.

Prerequisites

- The oc tool is available.

Procedure

The following procedure uses the OpenShift command-line tool, oc.

To apply changes to the CheCluster YAML file, choose one of the following:

- Create a new cluster by executing the oc apply command. For example:

  $ oc apply -f <my-cluster.yaml>

- Update the YAML file properties of an already running cluster by executing the oc patch command. For example:

  $ oc patch checluster codeready-workspaces --type=json \
    -p '[{"op": "replace", "path": "/spec/storage/pvcStrategy", "value": "per-workspace"}]'

Depending on the strategy used, replace the per-workspace option in the above example with unique or common.
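For the oc apply path, a file such as <my-cluster.yaml> carries the strategy in spec.storage.pvcStrategy. The sketch below shows only that field and assumes the resource name codeready-workspaces used in the oc patch example; a real file typically sets other CheCluster fields as well.

```yaml
# Minimal sketch: other CheCluster fields are left at their defaults
apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: codeready-workspaces
spec:
  storage:
    # One of: common, per-workspace, unique
    pvcStrategy: per-workspace
```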

4.4. Configuring storage types

Red Hat CodeReady Workspaces supports three types of storage with different capabilities:

- Persistent

- Ephemeral

- Asynchronous

4.4.1. Persistent storage

Persistent storage allows storing user changes directly in the mounted Persistent Volume. User changes are kept safe by the OpenShift infrastructure (storage backend) at the cost of slow I/O, especially with many small files. For example, Node.js projects tend to have many dependencies and the node_modules/ directory is filled with thousands of small files.

I/O speeds vary depending on the Storage Classes configured in the environment.

Persistent storage is the default mode for new workspaces. To make this setting visible in workspace configuration, add the following to the devfile:

attributes:
  persistVolumes: 'true'

4.4.2. Ephemeral storage

Ephemeral storage saves files to the emptyDir volume. This volume is initially empty. When a Pod is removed from a node, the data in the emptyDir volume is deleted forever. This means that all changes are lost on workspace stop or restart.

To save the changes, commit and push to the remote before stopping an ephemeral workspace.

Ephemeral mode provides faster I/O than persistent storage. To enable this storage type, add the following to the workspace configuration:

attributes:
  persistVolumes: 'false'

Table 4.18. Comparison between I/O of ephemeral (emptyDir) and persistent modes on AWS EBS

| Command | Ephemeral | Persistent |
|---|---|---|
| Clone Red Hat CodeReady Workspaces | 0 m 19 s | 1 m 26 s |
| Generate 1000 random files | 1 m 12 s | 44 m 53 s |

4.4.3. Asynchronous storage

Asynchronous storage is an experimental feature.

Asynchronous storage is a combination of persistent and ephemeral modes. The initial workspace container mounts the emptyDir volume. Then a backup is performed on workspace stop, and changes are restored on workspace start. Asynchronous storage provides fast I/O (similar to ephemeral mode), and workspace project changes are persisted.

Synchronization is performed by the rsync tool using the SSH protocol. When a workspace is configured with asynchronous storage, the workspace-data-sync plug-in is automatically added to the workspace configuration. The plug-in runs the rsync command on workspace start to restore changes. When a workspace is stopped, it sends changes to the permanent storage.

For relatively small projects, the restore procedure is fast, and project source files are immediately available after Che-Theia is initialized. If rsync takes longer, the synchronization progress is shown in the Che-Theia status-bar area, which is provided by an extension in the Che-Theia repository.

Asynchronous mode has the following limitations:

- Supports only the common PVC strategy

- Supports only the per-user project strategy

- Only one workspace can be running at a time

To configure asynchronous storage for a workspace, add the following to the workspace configuration:

attributes:
  asyncPersist: 'true'
  persistVolumes: 'false'
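In a complete devfile, these attributes sit at the top level next to metadata, as in this minimal sketch. The workspace name is hypothetical, and components and projects are omitted for brevity. The limitations listed above still apply: common PVC strategy, per-user project strategy, and a single running workspace.

```yaml
apiVersion: 1.0.0
metadata:
  name: async-storage-example   # hypothetical workspace name
attributes:
  # Experimental: emptyDir volumes, synchronized with rsync on stop and start
  asyncPersist: 'true'
  persistVolumes: 'false'
```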

4.4.4. Configuring storage type defaults for CodeReady Workspaces dashboard

Use the following two che.properties entries to configure the default client values in the CodeReady Workspaces dashboard:

- che.workspace.storage.available_types: Defines the available values for storage types that clients such as the dashboard propose to users during workspace creation or update. Available values: persistent, ephemeral, and async. Separate multiple values by commas. For example:

  che.workspace.storage.available_types=persistent,ephemeral,async

- che.workspace.storage.preferred_type: Defines the default value for the storage type that clients such as the dashboard propose to users during workspace creation. The async value is not recommended as the default type because it is experimental. For example:

  che.workspace.storage.preferred_type=persistent

Users can then configure the Storage Type on the Create Custom Workspace tab of the CodeReady Workspaces dashboard during workspace creation. The storage type of an existing workspace can be configured on the Overview tab of the workspace details.
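On an Operator deployment, these che.properties entries would typically be supplied as environment variables through customCheProperties. The sketch below assumes the usual Che mapping from property to variable name (dots become _, underscores become __, all upper case), which matches CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE elsewhere in this chapter. It offers only persistent and ephemeral storage, leaving out the experimental async type.

```yaml
spec:
  server:
    customCheProperties:
      # che.workspace.storage.available_types (assumed variable name)
      CHE_WORKSPACE_STORAGE_AVAILABLE__TYPES: "persistent,ephemeral"
      # che.workspace.storage.preferred_type (assumed variable name)
      CHE_WORKSPACE_STORAGE_PREFERRED__TYPE: "persistent"
```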

4.4.5. Idling asynchronous storage Pods

CodeReady Workspaces can shut down the Asynchronous Storage Pod when not used for a configured period of time.

Use these configuration properties to adjust the behavior:

- che.infra.kubernetes.async.storage.shutdown_timeout_min: Defines the idle time after which the asynchronous storage Pod is stopped following the stopping of the last active workspace. The default value is 120 minutes.

- che.infra.kubernetes.async.storage.shutdown_check_period_min: Defines the frequency with which the asynchronous storage Pod is checked for idleness. The default value is 30 minutes.
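For example, to stop an idle Asynchronous Storage Pod after 60 minutes and check every 15 minutes, the properties could be set through customCheProperties as sketched below. The variable names assume the usual Che property-to-variable mapping (dots become _, underscores become __), and the values are illustrative. Because idleness is evaluated only once per check period, with these values the Pod would stop roughly 60 to 75 minutes after the last workspace stops.

```yaml
spec:
  server:
    customCheProperties:
      # che.infra.kubernetes.async.storage.shutdown_timeout_min (default 120)
      CHE_INFRA_KUBERNETES_ASYNC_STORAGE_SHUTDOWN__TIMEOUT__MIN: "60"
      # che.infra.kubernetes.async.storage.shutdown_check_period_min (default 30)
      CHE_INFRA_KUBERNETES_ASYNC_STORAGE_SHUTDOWN__CHECK__PERIOD__MIN: "15"
```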

4.5. Running more than one workspace at a time

This procedure describes how to run more than one workspace simultaneously. This makes it possible for multiple workspace contexts per user to run in parallel.

Prerequisites

- The oc tool is available.

- An instance of CodeReady Workspaces running in OpenShift.

Note: The following commands use the default OpenShift project, openshift-workspaces, as an example value for the -n option.

Procedure

- Change the default limit of 1 to -1 to allow an unlimited number of concurrent workspaces per user:

  $ oc patch checluster codeready-workspaces -n openshift-workspaces --type merge \
    -p '{ "spec": { "server": {"customCheProperties": {"CHE_LIMITS_USER_WORKSPACES_RUN_COUNT": "-1"} } }}'

- Set the per-workspace or unique PVC strategy. See Section 4.3, “Configuring storage strategies”.

  Note: When using the common PVC strategy, configure the persistent volumes to use the ReadWriteMany access mode. That way, any of the user’s concurrent workspaces can read from and write to the common PVC.
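The two steps can also be expressed declaratively in a single CheCluster fragment, as in this sketch. It reuses the resource name and project from the commands above and is an alternative to running oc patch, not an additional step.

```yaml
apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: codeready-workspaces
  namespace: openshift-workspaces
spec:
  server:
    customCheProperties:
      # -1 removes the per-user limit on running workspaces (default 1)
      CHE_LIMITS_USER_WORKSPACES_RUN_COUNT: "-1"
  storage:
    # per-workspace or unique, so concurrent workspaces do not share one RWO PVC
    pvcStrategy: per-workspace
```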

4.6. Configuring workspace exposure strategies

The following section describes how to configure workspace exposure strategies of a CodeReady Workspaces server and ensure that applications running inside are not vulnerable to outside attacks.

4.6.1. Configuring workspace exposure strategies using an Operator

Operators are software extensions to OpenShift that use Custom Resources to manage applications and their components.

Prerequisites

- The oc tool is available.

Procedure

When deploying CodeReady Workspaces using the Operator, configure the intended strategy by modifying the spec.server.serverExposureStrategy property of the CheCluster Custom Resource object YAML file.

The supported values for spec.server.serverExposureStrategy are:

See Section 4.6.2, “Workspace exposure strategies” for more detail about individual strategies.

To activate changes made to the CheCluster YAML file, do one of the following:

- Create a new cluster by executing the crwctl command and applying a patch. For example:

  $ crwctl server:deploy --installer=operator --platform=<platform> \
    --che-operator-cr-patch-yaml=patch.yaml

  Note: For a list of available OpenShift deployment platforms, use crwctl server:deploy --platform --help.

  Use the following patch.yaml file:

  apiVersion: org.eclipse.che/v1
  kind: CheCluster
  metadata:
    name: eclipse-che
  spec:
    server:
      serverExposureStrategy: '<exposure-strategy>' # (1)

  (1) workspace exposure strategy to use

- Update the YAML file properties of an already running cluster by executing the oc patch command. For example:

  $ oc patch checluster codeready-workspaces --type=json \
    -p '[{"op": "replace", "path": "/spec/server/serverExposureStrategy", "value": "<exposure-strategy>"}]' \
    -n openshift-workspaces

  Replace <exposure-strategy> with the workspace exposure strategy to use.

4.6.2. Workspace exposure strategies

Specific components of workspaces need to be made accessible outside of the OpenShift cluster. This is typically the user interface of the workspace’s IDE, but it can also be the web UI of the application being developed. This enables developers to interact with the application during the development process.

The supported way of making workspace components available to the users is referred to as a strategy. This strategy defines whether new subdomains are created for the workspace components and what hosts these components are available on.

CodeReady Workspaces supports:

- multi-host strategy

- single-host strategy

  - with the gateway subtype

  - with the native subtype

4.6.2.1. Multi-host strategy

With multi-host strategy, each workspace component is assigned a new subdomain of the main domain configured for the CodeReady Workspaces server. This is the default strategy.

This strategy is the easiest to understand from the perspective of component deployment because any paths present in the URL to the component are received as they are by the component.

On a CodeReady Workspaces server secured using the Transport Layer Security (TLS) protocol, creating new subdomains for each component of each workspace requires a wildcard certificate to be available for all such subdomains for the CodeReady Workspaces deployment to be practical.
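To check whether an existing wildcard certificate covers the generated component hosts, the matching rule can be sketched as below. This is a simplification that assumes the common rule that a wildcard replaces exactly one leftmost DNS label. The helper name and hostnames are hypothetical, and real TLS clients also apply case-insensitive matching and further checks.

```shell
# Hypothetical helper: succeeds if hostname $2 is covered by the wildcard
# name $1, for example "*.apps.example.com".
covered_by_wildcard() {
  wildcard_domain="${1#\*.}"   # strip the leading "*."
  host="$2"
  case "$host" in
    *."$wildcard_domain")
      # Part of the hostname to the left of the wildcard domain.
      label="${host%."$wildcard_domain"}"
      # A wildcard matches exactly one DNS label, so this part must be
      # non-empty and must not contain dots.
      case "$label" in
        ""|*.*) return 1 ;;
        *) return 0 ;;
      esac
      ;;
    *) return 1 ;;
  esac
}

covered_by_wildcard '*.apps.example.com' 'workspace1-theia.apps.example.com' && echo "covered"
covered_by_wildcard '*.apps.example.com' 'a.b.apps.example.com' || echo "not covered"
```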

4.6.2.2. Single-host strategy

With single-host strategy, all workspaces are deployed to sub-paths of the main CodeReady Workspaces server domain.

This is convenient for TLS-secured CodeReady Workspaces servers because a single certificate for the CodeReady Workspaces server is sufficient, and it covers all the workspace component deployments as well.

The single-host strategy has two subtypes with different implementation methods. The first subtype, named native, is available and is the default on Kubernetes, but not on OpenShift, because it uses Ingresses to expose servers. The second subtype, named gateway, works on OpenShift and uses a special Pod running a reverse proxy to route requests.

With the gateway single-host strategy, cluster network policies must be configured so that the workspace services are reachable from the reverse-proxy Pod, which typically runs in the CodeReady Workspaces project. The workspace services typically live in a different project.
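As a minimal sketch of such a policy, the following script writes a NetworkPolicy that admits traffic from every Pod in the openshift-workspaces project into a workspace project. The workspace project name user1-codeready and the policy name are hypothetical. The selector assumes the kubernetes.io/metadata.name namespace label, which newer clusters set automatically; on older clusters, label the CodeReady Workspaces project yourself and adjust the selector.

```shell
set -eu

che_project="openshift-workspaces"
# Hypothetical workspace project; override with WORKSPACE_PROJECT=<name>.
workspace_project="${WORKSPACE_PROJECT:-user1-codeready}"

# Allow ingress to all Pods in the workspace project from any Pod in the
# CodeReady Workspaces project, where the gateway reverse proxy runs.
cat > allow-from-codeready.yaml <<EOF
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: allow-from-codeready-workspaces
  namespace: ${workspace_project}
spec:
  podSelector: {}
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector:
            matchLabels:
              kubernetes.io/metadata.name: ${che_project}
EOF
```

Review the generated file, then apply it with oc apply -f allow-from-codeready.yaml. Existing policies in your cluster may call for narrower rules.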

There are two ways of exposing the endpoints specified in the devfile. These can be configured using the CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE environment variable of the CodeReady Workspaces server. This environment variable is only effective with the single-host server strategy and applies to all workspaces of all users.

4.6.2.2.1. devfile endpoints: single-host

CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE: 'single-host'

This single-host configuration exposes the endpoints on subpaths, for example: https://<che-host>/serverihzmuqqc/go-cli-server-8080. This limits the exposed components and user applications: any absolute URL generated on the server side that points back to the server does not work, because the server is hidden behind a path-rewriting reverse proxy that hides the unique URL path prefix from the component or user application.

For example, when the user accesses the hypothetical https://codeready-<openshift_deployment_name>.<domain_name>/component-prefix-djh3d/app/index.php URL, the application sees the request coming to https://internal-host/app/index.php. If the application used this host in the URLs that it generates in its UI, those URLs would not work, because the internal host is different from the externally visible host. Similarly, if the application used an absolute path as the URL (for the example above, /app/index.php), such a URL would still not work, because on the outside it does not point to the application: it is missing the component-specific prefix.

Therefore, only applications that use relative URLs in their UI work with the single-host workspace exposure strategy.
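The following sketch replays the example above with shell parameter expansion to show why absolute paths break and relative paths survive the prefix rewriting. It is a simplification: the prefix and paths are the hypothetical values from the example, and it models browser URL resolution only for a plain relative file name (no "..", query strings, or fragments).

```shell
prefix="/component-prefix-djh3d"
external_path="/component-prefix-djh3d/app/index.php"

# The reverse proxy strips the component prefix before forwarding the request.
internal_path="${external_path#"$prefix"}"
echo "application sees:   $internal_path"      # /app/index.php

# An absolute link rendered by the application is resolved by the browser
# against the external host root, so the component prefix is lost.
absolute_link="/app/style.css"
echo "browser requests:   $absolute_link"

# A relative link is resolved against the current external directory,
# so the component prefix is preserved.
relative_link="style.css"
external_dir="${external_path%/*}"
echo "browser requests:   $external_dir/$relative_link"   # /component-prefix-djh3d/app/style.css
```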

4.6.2.2.2. devfile endpoints: multi-host

CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE: 'multi-host'

This single-host configuration exposes the endpoints on subdomains, for example: http://serverihzmuqqc-go-cli-server-8080.<che-host>. These endpoints are exposed on an unsecured HTTP port. A dedicated Ingress or Route is used for such endpoints, even with the gateway single-host setup.

This configuration limits the usability of previews shown directly in the editor page when CodeReady Workspaces is configured with TLS. Because HTTPS pages can communicate only with secured endpoints, users must open their application previews in another browser tab.
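Both values described above are set through the same CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE variable. A minimal sketch of setting it through the CheCluster Custom Resource follows. It assumes that the Operator version in use supports spec.server.customCheProperties for passing extra environment variables to the server; verify this against the CheCluster reference for your version. The script only prints the oc patch command.

```shell
set -eu

# 'single-host' or 'multi-host', as described above.
exposure="${EXPOSURE:-single-host}"
case "$exposure" in
  single-host|multi-host) ;;
  *) echo "unsupported value: $exposure" >&2; exit 1 ;;
esac

# Merge patch that adds the variable to the server properties
# (assumes spec.server.customCheProperties is available).
merge_patch="{\"spec\":{\"server\":{\"customCheProperties\":{\"CHE_INFRA_KUBERNETES_SINGLEHOST_WORKSPACE_DEVFILE__ENDPOINT__EXPOSURE\":\"${exposure}\"}}}}"

echo "oc patch checluster codeready-workspaces --type=merge -p '${merge_patch}' -n openshift-workspaces"
```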

4.6.3. Security considerations

This section explains the security impact of using different CodeReady Workspaces workspace exposure strategies.

All the security-related considerations in this section are only applicable to CodeReady Workspaces in multiuser mode. The single user mode does not impose any security restrictions.

4.6.3.1. JSON web token (JWT) proxy

All CodeReady Workspaces plug-ins, editors, and components can require authentication of the user accessing them. This authentication is performed using a JSON web token (JWT) proxy that functions as a reverse proxy of the corresponding component, based on its configuration, and performs the authentication on behalf of the component.

The authentication uses a redirect to a special page on the CodeReady Workspaces server that propagates the workspace and user-specific authentication token (workspace access token) back to the originally requested page.

The JWT proxy accepts the workspace access token from the following places in the incoming requests, in the following order:

- The token query parameter

- The Authorization header in the bearer-token format

- The access_token cookie
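The lookup order above can be illustrated with a small shell function that checks a query string, an Authorization header value, and a Cookie header value in turn, and returns the first token it finds. The function name and its inputs are hypothetical; this is not the JWT proxy's implementation, and it ignores URL decoding and cookie quoting.

```shell
# Hypothetical illustration of the lookup order only.
# $1: query string, $2: Authorization header value, $3: Cookie header value.
find_workspace_token() {
  query="$1"; authorization="$2"; cookies="$3"

  # 1. The token query parameter.
  for pair in $(printf '%s' "$query" | tr '&' ' '); do
    case "$pair" in token=*) printf '%s\n' "${pair#token=}"; return 0 ;; esac
  done

  # 2. The Authorization header in the bearer-token format.
  case "$authorization" in
    "Bearer "*) printf '%s\n' "${authorization#"Bearer "}"; return 0 ;;
  esac

  # 3. The access_token cookie.
  for cookie in $(printf '%s' "$cookies" | tr ';' ' '); do
    case "$cookie" in access_token=*) printf '%s\n' "${cookie#access_token=}"; return 0 ;; esac
  done

  return 1
}

find_workspace_token 'x=1' 'Bearer abc' 'access_token=def'   # prints abc
```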

4.6.3.2. Secured plug-ins and editors

CodeReady Workspaces users do not need to secure workspace plug-ins and workspace editors (such as Che-Theia). This is because the JWT proxy authentication is transparent to the user and is governed by the plug-in or editor definition in their meta.yaml descriptors.

4.6.3.3. Secured container-image components

Container-image components can define custom endpoints for which the devfile author can require CodeReady Workspaces-provided authentication, if needed. This authentication is configured using two optional attributes of the endpoint:

- secure - A boolean attribute that instructs the CodeReady Workspaces server to put the JWT proxy in front of the endpoint. Such endpoints have to be provided with the workspace access token in one of the several ways explained in Section 4.6.3.1, “JSON web token (JWT) proxy”. The default value of the attribute is false.

- cookiesAuthEnabled - A boolean attribute that instructs the CodeReady Workspaces server to automatically redirect the unauthenticated requests for current user authentication as described in Section 4.6.3.1, “JSON web token (JWT) proxy”. Setting this attribute to true has security consequences because it makes cross-site request forgery (CSRF) attacks possible. The default value of the attribute is false.
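As a sketch of how a devfile author might set these attributes, the script below writes a devfile fragment with one secured endpoint. It assumes devfile apiVersion 1.0.0 with a dockerimage component, where endpoint attributes are given as strings. The devfile name, component alias, image, endpoint name, and port are hypothetical.

```shell
set -eu

# Quoted 'EOF' so the YAML is written verbatim.
cat > devfile.yaml <<'EOF'
apiVersion: 1.0.0
metadata:
  name: secured-endpoint-example
components:
  - type: dockerimage
    alias: web-app
    image: quay.io/example/web-app:latest
    memoryLimit: 512Mi
    endpoints:
      - name: web
        port: 8080
        attributes:
          # Put the JWT proxy in front of this endpoint.
          secure: 'true'
          # Keep the default: no automatic cookie-based redirects.
          cookiesAuthEnabled: 'false'
EOF
```

Leaving cookiesAuthEnabled at 'false' keeps the default and avoids the CSRF exposure described in Section 4.6.3.4.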

4.6.3.4. Cross-site request forgery attacks

Cookie-based authentication can make an application secured by a JWT proxy prone to Cross-site request forgery (CSRF) attacks. See the Cross-site request forgery Wikipedia page and other resources to ensure your application is not vulnerable.

4.6.3.5. Phishing attacks

If an attacker can create an Ingress or route inside the cluster that runs the workspace, and that Ingress or route shares its host with services behind a JWT proxy, the attacker may be able to create a service and a specially forged Ingress object. When a legitimate user who was previously authenticated with a workspace accesses such a service or Ingress, this can lead to the attacker stealing the workspace access token from the cookies that the user's browser sends to the forged URL. To eliminate this attack vector, configure OpenShift to disallow setting the host of an Ingress.

4.7. Configuring workspaces nodeSelector

This section describes how to configure nodeSelector for Pods of CodeReady Workspaces workspaces.

Procedure

CodeReady Workspaces uses the CHE_WORKSPACE_POD_NODE__SELECTOR environment variable to configure nodeSelector. This variable may contain a set of comma-separated key=value pairs to form the nodeSelector rule, or NULL to disable it.

CHE_WORKSPACE_POD_NODE__SELECTOR=disktype=ssd,cpu=xlarge,[key=value]
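To double-check a value before setting it, the following hypothetical helper expands a CHE_WORKSPACE_POD_NODE__SELECTOR value into the nodeSelector map it describes, and treats NULL as disabling the rule, as stated above. It illustrates the format only; it is not the code CodeReady Workspaces uses to parse the variable.

```shell
# Hypothetical helper: print the nodeSelector map described by a
# comma-separated list of key=value pairs.
node_selector_to_yaml() {
  value="$1"
  if [ "$value" = "NULL" ]; then
    echo "# nodeSelector disabled"
    return 0
  fi
  echo "nodeSelector:"
  old_ifs="$IFS"; IFS=','
  for pair in $value; do
    case "$pair" in
      *=*) echo "  ${pair%%=*}: ${pair#*=}" ;;
      *) IFS="$old_ifs"; echo "invalid pair: $pair" >&2; return 1 ;;
    esac
  done
  IFS="$old_ifs"
}

node_selector_to_yaml "disktype=ssd,cpu=xlarge"
# prints:
# nodeSelector:
#   disktype: ssd
#   cpu: xlarge
```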

nodeSelector must be configured during CodeReady Workspaces installation. This prevents existing workspaces from failing to run due to a volume affinity conflict caused by an existing workspace PVC and Pod being scheduled in different zones.

To prevent Pods and PVCs from being scheduled in different zones on large, multi-zone clusters, create an additional StorageClass object (pay attention to the allowedTopologies field) to coordinate the PVC creation process.

Pass the name of this newly created StorageClass to CodeReady Workspaces through the CHE_INFRA_KUBERNETES_PVC_STORAGE__CLASS__NAME environment variable. A default empty value of this variable instructs CodeReady Workspaces to use the cluster’s default StorageClass.
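A minimal sketch of such a StorageClass follows. The class name, the provisioner, and the zone us-east-1a are hypothetical; use the provisioner and zone names of your cluster. The zone key topology.kubernetes.io/zone is the current well-known label; older clusters use failure-domain.beta.kubernetes.io/zone.

```shell
set -eu

storage_class="crw-workspaces-zone-a"   # hypothetical name

cat > storageclass.yaml <<EOF
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ${storage_class}
provisioner: kubernetes.io/aws-ebs
volumeBindingMode: WaitForFirstConsumer
allowedTopologies:
  - matchLabelExpressions:
      - key: topology.kubernetes.io/zone
        values:
          - us-east-1a
EOF

# After applying the file with "oc apply -f storageclass.yaml", pass the
# class name to the server through this environment variable:
echo "CHE_INFRA_KUBERNETES_PVC_STORAGE__CLASS__NAME=${storage_class}"
```

The volumeBindingMode: WaitForFirstConsumer setting is a design choice worth considering here: it delays volume provisioning until a Pod that uses the PVC is scheduled, so the volume is created in that Pod's zone.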

4.8. Configuring Red Hat CodeReady Workspaces server hostname

This procedure describes how to configure Red Hat CodeReady Workspaces to use a custom hostname.

Prerequisites

- The oc tool is available.

- The certificate and the private key files are generated.

To generate the private key and certificate pair, use the same CA as for the other Red Hat CodeReady Workspaces hosts.

Ask a DNS provider to point the custom hostname to the cluster ingress.

Procedure

Pre-create a project for CodeReady Workspaces:

$ oc new-project openshift-workspaces

Create a tls secret:

$ oc create secret tls ${secret} \ 1 --key ${key_file} \ 2 --cert ${cert_file} \ 3 -n openshift-workspacesSet the following values in the Custom Resource:

spec: server: cheHost: <hostname> 1 cheHostTLSSecret: <secret> 2If CodeReady Workspaces has been already deployed and CodeReady Workspaces reconfiguring to use a new CodeReady Workspaces hostname is required, log in using RH-SSO and select the

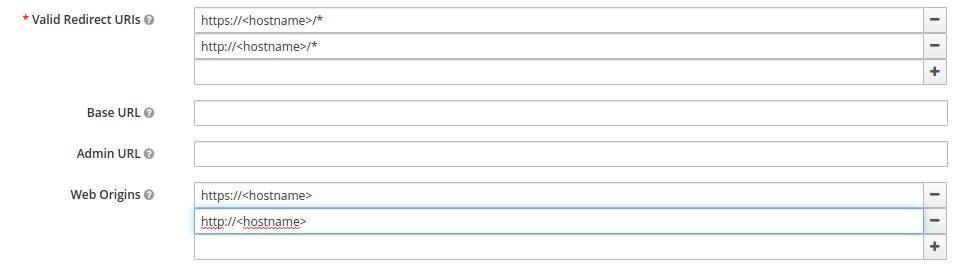

codeready-publicclient in theCodeReady Workspacesrealm and updateValidate Redirect URIsandWeb Originsfields with the value of the CodeReady Workspaces hostname.

4.9. Configuring labels for OpenShift Route

This procedure describes how to configure labels for OpenShift Route to organize and categorize (scope and select) objects.

Prerequisites

- The oc tool is available.

- An instance of CodeReady Workspaces is running in OpenShift.

Use commas to separate labels, for example: key1=value1,key2=value2
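If the labels come from a list, a small hypothetical helper like the one below can join them into the comma-separated form and reject entries that are not key=value. The helper name is illustrative only.

```shell
# Hypothetical helper: join key=value labels with commas.
join_route_labels() {
  result=""
  for label in "$@"; do
    case "$label" in
      ?*=*) ;;   # non-empty key followed by "="
      *) echo "not a key=value label: $label" >&2; return 1 ;;
    esac
    result="${result:+$result,}$label"
  done
  printf '%s\n' "$result"
}

join_route_labels key1=value1 key2=value2   # prints key1=value1,key2=value2
```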

Procedure

To configure labels for OpenShift Route, update the Custom Resource with the following commands: