Security hardening

Enhancing security of Red Hat Enterprise Linux 8 systems

Abstract

Providing feedback on Red Hat documentation

We appreciate your feedback on our documentation. Let us know how we can improve it.

Submitting feedback through Jira (account required)

- Log in to the Jira website.

- Click Create in the top navigation bar.

- Enter a descriptive title in the Summary field.

- Enter your suggestion for improvement in the Description field. Include links to the relevant parts of the documentation.

- Click Create at the bottom of the dialogue.

Chapter 1. Securing RHEL during and right after installation

Security begins even before you start the installation of Red Hat Enterprise Linux. Configuring your system securely from the beginning makes it easier to implement additional security settings later.

1.1. Disk partitioning

The recommended practices for disk partitioning differ for installations on bare-metal machines and for virtualized or cloud environments that support adjusting virtual disk hardware and file systems containing already-installed operating systems.

To ensure separation and protection of data on bare-metal installations, create separate partitions for the /boot, /, /home, /tmp, and /var/tmp/ directories:

/boot

This partition is the first partition that the system reads during boot. The boot loader and kernel images that are used to boot your system into RHEL 8 are stored in this partition. Do not encrypt this partition. If this partition is included in / and that partition is encrypted or otherwise becomes unavailable, your system cannot boot.

/home

When user data (/home) is stored in / instead of in a separate partition, the partition can fill up, causing the operating system to become unstable. Also, upgrading your system to the next version of RHEL 8 is much easier when you keep your data in the /home partition, because it is not overwritten during installation. If the root partition (/) becomes corrupt, your data could be lost forever. Using a separate partition provides slightly more protection against data loss. You can also target this partition for frequent backups.

/tmp and /var/tmp/

Both the /tmp and /var/tmp/ directories are used to store data that does not need to be stored for a long period of time. However, if a lot of data floods one of these directories, it can consume all of your storage space. If this happens and these directories are stored within /, your system could become unstable and crash. For this reason, moving these directories into their own partitions is a good idea.

For virtual machines or cloud instances, the separate /boot, /home, /tmp, and /var/tmp partitions are optional because you can increase the virtual disk size and the / partition if it begins to fill up. Set up monitoring to regularly check the / partition usage so that it does not fill up before you increase the virtual disk size accordingly.
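The monitoring mentioned above can be as simple as a periodic usage check. The following is a minimal sketch, not a Red Hat tool: the function names `fs_usage_percent` and `fs_usage_ok` and the default threshold of 80 percent are assumptions made for illustration.

```shell
# Sketch: warn when a file system reaches a usage threshold.
# Function names and the 80 percent default are illustrative assumptions.

# Print the usage of the file system that holds path $1 as a bare integer.
fs_usage_percent() {
    # df -P prints the POSIX format: one header line, then one data line
    # with the capacity (for example "42%") in column 5.
    df -P "$1" | awk 'NR == 2 { sub(/%/, "", $5); print $5 }'
}

# Return 0 while usage is below the limit, 1 once the limit is reached,
# and 2 if the usage cannot be determined.
fs_usage_ok() {
    path=$1
    limit=${2:-80}
    used=$(fs_usage_percent "$path")
    case "$used" in
        ''|*[!0-9]*)
            echo "ERROR: cannot read usage of $path" >&2
            return 2
            ;;
    esac
    if [ "$used" -ge "$limit" ]; then
        echo "WARNING: $path is ${used}% full (limit ${limit}%)" >&2
        return 1
    fi
    return 0
}
```

For example, a cron job or systemd timer could call `fs_usage_ok / 80` and send a notification when the function returns a non-zero status.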

During the installation process, you have an option to encrypt partitions. You must supply a passphrase. This passphrase serves as a key to unlock the bulk encryption key, which is used to secure the partition’s data.

1.2. Restricting network connectivity during the installation process

When installing RHEL 8, the installation medium represents a snapshot of the system at a particular time. Because of this, it may not be up-to-date with the latest security fixes and may be vulnerable to certain issues that were fixed only after the system provided by the installation medium was released.

When installing a potentially vulnerable operating system, always limit exposure only to the closest necessary network zone. The safest choice is the “no network” zone, which means to leave your machine disconnected during the installation process. In some cases, a LAN or intranet connection is sufficient while the Internet connection is the riskiest. To follow the best security practices, choose the closest zone with your repository while installing RHEL 8 from a network.

1.3. Installing the minimum amount of packages required

It is best practice to install only the packages you will use because each piece of software on your computer could possibly contain a vulnerability. If you are installing from the DVD media, take the opportunity to select exactly what packages you want to install during the installation. If you find you need another package, you can always add it to the system later.

1.4. Post-installation procedures

The following steps are the security-related procedures that should be performed immediately after installation of RHEL 8.

Update your system. Enter the following command as root:

# yum update

Even though the firewall service, firewalld, is automatically enabled with the installation of Red Hat Enterprise Linux, it might be explicitly disabled, for example, in the Kickstart configuration. In such a case, re-enable the firewall.

To start firewalld, enter the following commands as root:

# systemctl start firewalld

# systemctl enable firewalld

To enhance security, disable services you do not need. For example, if no printers are installed on your computer, disable the cups service by using the following command:

# systemctl disable cups

To review active services, enter the following command:

$ systemctl list-units | grep service

1.5. Disabling SMT to prevent CPU security issues by using the web console

Disable Simultaneous Multithreading (SMT) to protect against attacks that misuse CPU SMT. Disabling SMT can mitigate security vulnerabilities, such as L1TF or MDS.

Disabling SMT might lower the system performance.

Prerequisites

- You have installed the RHEL 8 web console.

- You have enabled the cockpit service.

- Your user account is allowed to log in to the web console.

For instructions, see Installing and enabling the web console.

Procedure

- Log in to the RHEL 8 web console.

  For details, see Logging in to the web console.

- In the Overview tab, find the System information field and click View hardware details.

- On the CPU Security line, click Mitigations.

  If this link is not present, your system does not support SMT and is therefore not vulnerable.

- In the CPU Security Toggles table, turn on the Disable simultaneous multithreading (nosmt) option.

- Click the button.

After the system restart, the CPU no longer uses SMT.
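To confirm the result from a terminal, you can read the SMT state that the kernel reports. The following is a minimal sketch that assumes your kernel exposes the `/sys/devices/system/cpu/smt/control` file; the function name `smt_state` is hypothetical and not part of RHEL.

```shell
# Sketch: translate the kernel SMT control value into a short status word.
# $1 defaults to the sysfs control file (assumed to exist on your kernel);
# pass another path to try the function on a test file.
smt_state() {
    file=${1:-/sys/devices/system/cpu/smt/control}
    if [ ! -r "$file" ]; then
        echo "unknown"
        return 2
    fi
    case "$(cat "$file")" in
        on)
            echo "enabled" ;;
        off|forceoff)
            echo "disabled" ;;
        notsupported|notimplemented)
            echo "unsupported" ;;
        *)
            echo "unknown"
            return 2 ;;
    esac
}
```

After the restart, running `smt_state` without arguments is expected to print `disabled` on a system where the nosmt option took effect.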

Chapter 2. Switching RHEL to FIPS mode

To enable the cryptographic module self-checks mandated by the Federal Information Processing Standard (FIPS) 140-2, you must operate RHEL 8 in FIPS mode. Starting the installation in FIPS mode is the recommended method if you aim for FIPS compliance.

2.1. Federal Information Processing Standards 140 and FIPS mode

The Federal Information Processing Standards (FIPS) Publication 140 is a series of computer security standards developed by the National Institute of Standards and Technology (NIST) to ensure the quality of cryptographic modules. The FIPS 140 standard ensures that cryptographic tools implement their algorithms correctly. Runtime cryptographic algorithm and integrity self-tests are some of the mechanisms to ensure a system uses cryptography that meets the requirements of the standard.

RHEL in FIPS mode

To ensure that your RHEL system generates and uses all cryptographic keys only with FIPS-approved algorithms, you must switch RHEL to FIPS mode.

You can enable FIPS mode by using one of the following methods:

- Starting the installation in FIPS mode

- Switching the system into FIPS mode after the installation

If you aim for FIPS compliance, start the installation in FIPS mode. This avoids cryptographic key material regeneration and reevaluation of the compliance of the resulting system associated with converting already deployed systems.

To operate a FIPS-compliant system, create all cryptographic key material in FIPS mode. Furthermore, the cryptographic key material must never leave the FIPS environment unless it is securely wrapped and never unwrapped in non-FIPS environments.

The FIPS - Federal Information Processing Standards section on the Product compliance Red Hat Customer Portal page provides an overview of the validation status of cryptographic modules for selected RHEL minor releases.

Switching to FIPS mode after the installation

Switching the system to FIPS mode by using the fips-mode-setup tool does not guarantee compliance with the FIPS 140 standard. Re-generating all cryptographic keys after setting the system to FIPS mode may not be possible. For example, in the case of an existing IdM realm with users' cryptographic keys you cannot re-generate all the keys. If you cannot start the installation in FIPS mode, always enable FIPS mode as the first step after the installation, before you make any post-installation configuration steps or install any workloads.

The fips-mode-setup tool also uses the FIPS system-wide cryptographic policy internally. In addition to what the update-crypto-policies --set FIPS command does, fips-mode-setup ensures the installation of the FIPS dracut module by using the fips-finish-install tool, adds the fips=1 boot option to the kernel command line, and regenerates the initial RAM disk.

Furthermore, enforcement of restrictions required in FIPS mode depends on the content of the /proc/sys/crypto/fips_enabled file. If the file contains 1, RHEL core cryptographic components switch to a mode in which they use only FIPS-approved implementations of cryptographic algorithms. If /proc/sys/crypto/fips_enabled contains 0, the cryptographic components do not enable their FIPS mode.
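Because the kernel flag is a plain file, a script can read it directly. The following is a minimal sketch; the function name `fips_flag` and its return codes are assumptions chosen for illustration.

```shell
# Sketch: report the kernel FIPS flag.
# $1 defaults to the file described above; pass another path to try the
# function on a test file.
fips_flag() {
    file=${1:-/proc/sys/crypto/fips_enabled}
    if [ ! -r "$file" ]; then
        # The file is missing or unreadable, so the state cannot be determined.
        echo "unknown"
        return 2
    fi
    if [ "$(cat "$file")" = "1" ]; then
        echo "enabled"
        return 0
    fi
    echo "disabled"
    return 1
}
```

A script can then branch on the result, for example `fips_flag >/dev/null && echo "kernel is in FIPS mode"`. Note that this reads only the kernel flag and does not prove compliance with the FIPS 140 standard.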

FIPS in crypto-policies

The FIPS system-wide cryptographic policy helps to configure higher-level restrictions. Therefore, communication protocols supporting cryptographic agility do not announce ciphers that the system refuses when selected. For example, the ChaCha20 algorithm is not FIPS-approved, and the FIPS cryptographic policy ensures that TLS servers and clients do not announce the TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305_SHA256 TLS cipher suite, because any attempt to use such a cipher fails.

If you operate RHEL in FIPS mode and use an application providing its own FIPS-mode-related configuration options, ignore these options and the corresponding application guidance. The system running in FIPS mode and the system-wide cryptographic policies enforce only FIPS-compliant cryptography. For example, the Node.js configuration option --enable-fips is ignored if the system runs in FIPS mode. If you use the --enable-fips option on a system not running in FIPS mode, you do not meet the FIPS-140 compliance requirements.


2.2. Installing the system with FIPS mode enabled

To enable the cryptographic module self-checks mandated by the Federal Information Processing Standard (FIPS) 140, enable FIPS mode during the system installation.

Only enabling FIPS mode during the RHEL installation ensures that the system generates all keys with FIPS-approved algorithms and continuous monitoring tests in place.

After you complete the setup of FIPS mode, you cannot switch off FIPS mode without putting the system into an inconsistent state. If your scenario requires this change, the only correct way is a complete re-installation of the system.

Procedure

- Add the fips=1 option to the kernel command line during the system installation.

- During the software selection stage, do not install any third-party software.

- After the installation, the system starts in FIPS mode automatically.

Verification

After the system starts, check that FIPS mode is enabled:

$ fips-mode-setup --check

FIPS mode is enabled.


2.3. Switching the system to FIPS mode

The system-wide cryptographic policies contain a policy level that enables cryptographic algorithms in accordance with the requirements by the Federal Information Processing Standard (FIPS) Publication 140. The fips-mode-setup tool that enables or disables FIPS mode internally uses the FIPS system-wide cryptographic policy.

Switching the system to FIPS mode by using the FIPS system-wide cryptographic policy does not guarantee compliance with the FIPS 140 standard. Re-generating all cryptographic keys after setting the system to FIPS mode may not be possible. For example, in the case of an existing IdM realm with users' cryptographic keys you cannot re-generate all the keys.

Only enabling FIPS mode during the RHEL installation ensures that the system generates all keys with FIPS-approved algorithms and continuous monitoring tests in place.

The fips-mode-setup tool uses the FIPS policy internally. In addition to what the update-crypto-policies command with the --set FIPS option does, fips-mode-setup ensures the installation of the FIPS dracut module by using the fips-finish-install tool, adds the fips=1 boot option to the kernel command line, and regenerates the initial RAM disk.

After you complete the setup of FIPS mode, you cannot switch off FIPS mode without putting the system into an inconsistent state. If your scenario requires this change, the only correct way is a complete re-installation of the system.

Procedure

To switch the system to FIPS mode:

# fips-mode-setup --enable

Kernel initramdisks are being regenerated. This might take some time.
Setting system policy to FIPS
Note: System-wide crypto policies are applied on application start-up.
It is recommended to restart the system for the change of policies to fully take place.
FIPS mode will be enabled.
Please reboot the system for the setting to take effect.

Restart your system to allow the kernel to switch to FIPS mode:

# reboot

Verification

After the restart, you can check the current state of FIPS mode:

# fips-mode-setup --check

FIPS mode is enabled.

Additional resources

- fips-mode-setup(8) man page on your system

- Security Requirements for Cryptographic Modules on the National Institute of Standards and Technology (NIST) web site.

2.4. Enabling FIPS mode in a container

To enable the full set of cryptographic module self-checks mandated by the Federal Information Processing Standard Publication 140-2 (FIPS mode), the host system kernel must be running in FIPS mode. Depending on the version of your host system, enabling FIPS mode on containers either is fully automatic or requires only one command.

The fips-mode-setup command does not work correctly in containers, and it cannot be used to enable or check FIPS mode in this scenario.

Prerequisites

- The host system must be in FIPS mode.

Procedure

- On hosts running RHEL 8.1 and 8.2: Set the FIPS cryptographic policy level in the container by using the following command, and ignore the advice to use the fips-mode-setup command:

  $ update-crypto-policies --set FIPS

- On hosts running RHEL 8.4 and later: On systems with FIPS mode enabled, the podman utility automatically enables FIPS mode on supported containers.


2.5. List of RHEL applications using cryptography that is not compliant with FIPS 140-2

To pass all relevant cryptographic certifications, such as FIPS 140, use libraries from the core cryptographic components set. These libraries, except for libgcrypt, also follow the RHEL system-wide cryptographic policies.

See the RHEL core cryptographic components Red Hat Knowledgebase article for an overview of the core cryptographic components, information on how they are selected, how they are integrated into the operating system, how they support hardware security modules and smart cards, and how cryptographic certifications apply to them.

In addition to the following table, in some RHEL 8 Z-stream releases (for example, 8.1.1), the Firefox browser packages have been updated, and they contain a separate copy of the NSS cryptography library. This way, Red Hat wants to avoid the disruption of rebasing such a low-level component in a patch release. As a result, these Firefox packages do not use a FIPS 140-2-validated module.

List of RHEL 8 applications that use cryptography not compliant with FIPS 140-2

- FreeRADIUS: The RADIUS protocol uses MD5.

- Ghostscript: Custom cryptography implementation (MD5, RC4, SHA-2, AES) to encrypt and decrypt documents.

- iPXE: Cryptographic stack for TLS is compiled in; however, it is unused.

- Libica: Software fallbacks for various algorithms such as RSA and ECDH through CPACF instructions.

- Ovmf (UEFI firmware), Edk2, shim: Full cryptographic stack (an embedded copy of the OpenSSL library).

- Perl: HMAC, HMAC-SHA1, HMAC-MD5, SHA-1, SHA-224,…

- Pidgin: Implements DES and RC4.

- QAT Engine: Mixed hardware and software implementation of cryptographic primitives (RSA, EC, DH, AES,…).

- Samba [1]: Implements AES, DES, and RC4.

- SWTPM: Explicitly disables FIPS mode in its OpenSSL usage.

- Valgrind: AES, hashes [2]

- zip: Custom cryptography implementation (insecure PKWARE encryption algorithm) to encrypt and decrypt archives using a password.

Additional resources

- FIPS - Federal Information Processing Standards section on the Product compliance Red Hat Customer Portal page

- RHEL core cryptographic components (Red Hat Knowledgebase)

Chapter 3. Using system-wide cryptographic policies

The system-wide cryptographic policies is a system component that configures the core cryptographic subsystems, covering the TLS, IPsec, SSH, DNSSec, and Kerberos protocols. It provides a small set of policies, which the administrator can select.

3.1. System-wide cryptographic policies

When a system-wide policy is set up, applications in RHEL follow it and refuse to use algorithms and protocols that do not meet the policy, unless you explicitly request the application to do so. That is, the policy applies to the default behavior of applications when running with the system-provided configuration but you can override it if required.

RHEL 8 contains the following predefined policies:

DEFAULT

The default system-wide cryptographic policy level offers secure settings for current threat models. It allows the TLS 1.2 and 1.3 protocols, as well as the IKEv2 and SSH2 protocols. The RSA keys and Diffie-Hellman parameters are accepted if they are at least 2048 bits long.

LEGACY

Ensures maximum compatibility with Red Hat Enterprise Linux 5 and earlier; it is less secure due to an increased attack surface. In addition to the DEFAULT level algorithms and protocols, it includes support for the TLS 1.0 and 1.1 protocols. The algorithms DSA, 3DES, and RC4 are allowed, while RSA keys and Diffie-Hellman parameters are accepted if they are at least 1023 bits long.

FUTURE

A stricter forward-looking security level intended for testing a possible future policy. This policy does not allow the use of SHA-1 in signature algorithms. It allows the TLS 1.2 and 1.3 protocols, as well as the IKEv2 and SSH2 protocols. The RSA keys and Diffie-Hellman parameters are accepted if they are at least 3072 bits long. If your system communicates on the public internet, you might face interoperability problems.

Important

Because a cryptographic key used by a certificate on the Customer Portal API does not meet the requirements by the FUTURE system-wide cryptographic policy, the redhat-support-tool utility does not work with this policy level at the moment. To work around this problem, use the DEFAULT cryptographic policy while connecting to the Customer Portal API.

FIPS

Conforms with the FIPS 140 requirements. The fips-mode-setup tool, which switches the RHEL system into FIPS mode, uses this policy internally. Switching to the FIPS policy does not guarantee compliance with the FIPS 140 standard. You also must re-generate all cryptographic keys after you set the system to FIPS mode. This is not possible in many scenarios.

RHEL also provides the FIPS:OSPP system-wide subpolicy, which contains further restrictions for cryptographic algorithms required by the Common Criteria (CC) certification. The system becomes less interoperable after you set this subpolicy. For example, you cannot use RSA and DH keys shorter than 3072 bits, additional SSH algorithms, and several TLS groups. Setting FIPS:OSPP also prevents connecting to Red Hat Content Delivery Network (CDN) structure. Furthermore, you cannot integrate Active Directory (AD) into the IdM deployments that use FIPS:OSPP, communication between RHEL hosts using FIPS:OSPP and AD domains might not work, or some AD accounts might not be able to authenticate.

Note

Your system is not CC-compliant after you set the FIPS:OSPP cryptographic subpolicy. The only correct way to make your RHEL system compliant with the CC standard is by following the guidance provided in the cc-config package. See Common Criteria section on the Product compliance Red Hat Customer Portal page for a list of certified RHEL versions, validation reports, and links to CC guides.

Red Hat continuously adjusts all policy levels so that all libraries provide secure defaults, except when using the LEGACY policy. Even though the LEGACY profile does not provide secure defaults, it does not include any algorithms that are easily exploitable. As such, the set of enabled algorithms or acceptable key sizes in any provided policy may change during the lifetime of Red Hat Enterprise Linux.

Such changes reflect new security standards and new security research. If you must ensure interoperability with a specific system for the whole lifetime of Red Hat Enterprise Linux, you should opt-out from the system-wide cryptographic policies for components that interact with that system or re-enable specific algorithms using custom cryptographic policies.

The specific algorithms and ciphers described as allowed in the policy levels are available only if an application supports them:

| | LEGACY | DEFAULT | FIPS | FUTURE |
|---|---|---|---|---|
| IKEv1 | no | no | no | no |
| 3DES | yes | no | no | no |
| RC4 | yes | no | no | no |
| DH | min. 1024-bit | min. 2048-bit | min. 2048-bit[a] | min. 3072-bit |
| RSA | min. 1024-bit | min. 2048-bit | min. 2048-bit | min. 3072-bit |
| DSA | yes | no | no | no |
| TLS v1.0 | yes | no | no | no |
| TLS v1.1 | yes | no | no | no |
| SHA-1 in digital signatures | yes | yes | no | no |
| CBC mode ciphers | yes | yes | yes | no[b] |
| Symmetric ciphers with keys < 256 bits | yes | yes | yes | no |
| SHA-1 and SHA-224 signatures in certificates | yes | yes | yes | no |

[a] You can use only Diffie-Hellman groups defined in RFC 7919 and RFC 3526.

[b] CBC ciphers are disabled for TLS. In a non-TLS scenario, AES-128-CBC is disabled but AES-256-CBC is enabled. To also disable AES-256-CBC, apply a custom subpolicy.

Additional resources

- crypto-policies(7) and update-crypto-policies(8) man pages on your system

- Product compliance (Red Hat Customer Portal)

3.2. Changing the system-wide cryptographic policy

You can change the system-wide cryptographic policy on your system by using the update-crypto-policies tool and restarting your system.

Prerequisites

- You have root privileges on the system.

Procedure

Optional: Display the current cryptographic policy:

$ update-crypto-policies --show

DEFAULT

Set the new cryptographic policy:

# update-crypto-policies --set <POLICY>

Setting system policy to <POLICY>

Replace <POLICY> with the policy or subpolicy you want to set, for example FUTURE, LEGACY, or FIPS:OSPP.

Restart the system:

# reboot

Verification

Display the current cryptographic policy:

$ update-crypto-policies --show

<POLICY>
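In automation, the verification step can be wrapped in a small check. The following is a minimal sketch; the function name `policy_is` and its optional second argument are assumptions for illustration, and only the update-crypto-policies --show command comes from the procedure above.

```shell
# Sketch: compare the active policy with the expected one.
# $1: expected policy or subpolicy combination, for example FUTURE or FIPS:OSPP
# $2: optional actual value; when omitted, the function queries
#     update-crypto-policies --show as in the verification step.
policy_is() {
    expected=$1
    if [ "$#" -ge 2 ]; then
        actual=$2
    else
        actual=$(update-crypto-policies --show) || return 2
    fi
    if [ "$actual" = "$expected" ]; then
        echo "OK: policy is $actual"
        return 0
    fi
    echo "MISMATCH: expected $expected, found $actual" >&2
    return 1
}
```

For example, `policy_is FUTURE` returns a non-zero status if the system still reports a different policy after the restart.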

Additional resources

- For more information on system-wide cryptographic policies, see System-wide cryptographic policies

3.3. Switching the system-wide cryptographic policy to a mode compatible with earlier releases

The default system-wide cryptographic policy in Red Hat Enterprise Linux 8 does not allow communication using older, insecure protocols. For environments that require compatibility with Red Hat Enterprise Linux 6 and in some cases also with earlier releases, the less secure LEGACY policy level is available.

Switching to the LEGACY policy level results in a less secure system and applications.

Procedure

To switch the system-wide cryptographic policy to the LEGACY level, enter the following command as root:

# update-crypto-policies --set LEGACY

Setting system policy to LEGACY

Additional resources

- For the list of available cryptographic policy levels, see the update-crypto-policies(8) man page on your system.

- For defining custom cryptographic policies, see the Custom Policies section in the update-crypto-policies(8) man page and the Crypto Policy Definition Format section in the crypto-policies(7) man page on your system.

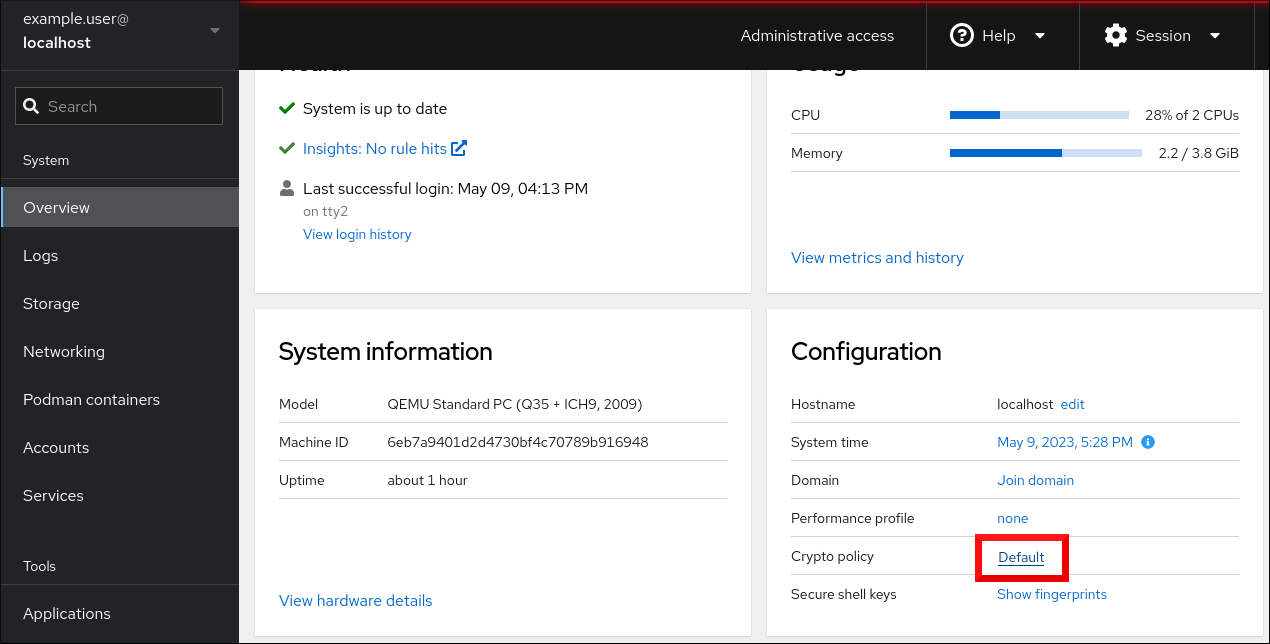

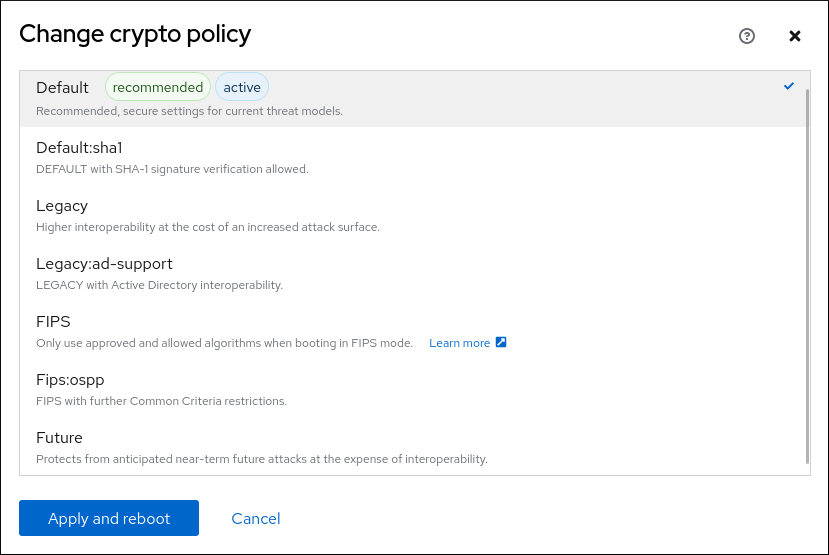

3.4. Setting up system-wide cryptographic policies in the web console

You can set one of the system-wide cryptographic policies and subpolicies directly in the RHEL web console interface. Besides the four predefined system-wide cryptographic policies, you can also apply the following combinations of policies and subpolicies through the graphical interface:

DEFAULT:SHA1

The DEFAULT policy with the SHA-1 algorithm enabled.

LEGACY:AD-SUPPORT

The LEGACY policy with less secure settings that improve interoperability for Active Directory services.

FIPS:OSPP

The FIPS policy with further restrictions required by the Common Criteria for Information Technology Security Evaluation standard.

Because the FIPS:OSPP system-wide subpolicy contains further restrictions for cryptographic algorithms required by the Common Criteria (CC) certification, the system is less interoperable after you set it. For example, you cannot use RSA and DH keys shorter than 3072 bits, additional SSH algorithms, and several TLS groups. Setting FIPS:OSPP also prevents connecting to Red Hat Content Delivery Network (CDN) structure. Furthermore, you cannot integrate Active Directory (AD) into the IdM deployments that use FIPS:OSPP, communication between RHEL hosts using FIPS:OSPP and AD domains might not work, or some AD accounts might not be able to authenticate.

Note that your system is not CC-compliant after you set the FIPS:OSPP cryptographic subpolicy. The only correct way to make your RHEL system compliant with the CC standard is by following the guidance provided in the cc-config package. See the Common Criteria section on the Product compliance Red Hat Customer Portal page for a list of certified RHEL versions, validation reports, and links to CC guides hosted at the National Information Assurance Partnership (NIAP) website.

Prerequisites

- You have installed the RHEL 8 web console.

- You have enabled the cockpit service.

- Your user account is allowed to log in to the web console.

  For instructions, see Installing and enabling the web console.

- You have root privileges or permissions to enter administrative commands with sudo.

Procedure

- Log in to the RHEL 8 web console.

  For details, see Logging in to the web console.

- In the Configuration card of the Overview page, click your current policy value next to Crypto policy.

- In the Change crypto policy dialog window, click the policy you want to start using on your system.

- Click the button.

Verification

After the restart, log back in to the web console, and check that the Crypto policy value corresponds to the one you selected.

Alternatively, you can enter the update-crypto-policies --show command to display the current system-wide cryptographic policy in your terminal.

3.5. Excluding an application from following system-wide cryptographic policies

The preferred way to customize cryptographic settings used by your application is to configure supported cipher suites and protocols directly in the application.

You can also remove a symlink related to your application from the /etc/crypto-policies/back-ends directory and replace it with your customized cryptographic settings. This configuration prevents the use of system-wide cryptographic policies for applications that use the excluded back end. Furthermore, this modification is not supported by Red Hat.

3.5.1. Examples of opting out of the system-wide cryptographic policies

wget

To customize cryptographic settings used by the wget network downloader, use --secure-protocol and --ciphers options. For example:

$ wget --secure-protocol=TLSv1_1 --ciphers="SECURE128" https://example.com

See the HTTPS (SSL/TLS) Options section of the wget(1) man page for more information.

curl

To specify ciphers used by the curl tool, use the --ciphers option and provide a colon-separated list of ciphers as a value. For example:

$ curl https://example.com --ciphers '@SECLEVEL=0:DES-CBC3-SHA:RSA-DES-CBC3-SHA'

See the curl(1) man page for more information.

Firefox

Even though you cannot opt out of system-wide cryptographic policies in the Firefox web browser, you can further restrict supported ciphers and TLS versions in Firefox’s Configuration Editor. Type about:config in the address bar and change the value of the security.tls.version.min option as required. Setting security.tls.version.min to 1 allows TLS 1.0 as the minimum required version, setting it to 2 requires at least TLS 1.1, and so on.

OpenSSH

To opt out of the system-wide cryptographic policies for your OpenSSH server, uncomment the line with the CRYPTO_POLICY= variable in the /etc/sysconfig/sshd file. After this change, values that you specify in the Ciphers, MACs, KexAlgorithms, and GSSAPIKexAlgorithms sections in the /etc/ssh/sshd_config file are not overridden.

See the sshd_config(5) man page for more information.

To opt out of system-wide cryptographic policies for your OpenSSH client, perform one of the following tasks:

- For a given user, override the global ssh_config with a user-specific configuration in the ~/.ssh/config file.

- For the entire system, specify the cryptographic policy in a drop-in configuration file located in the /etc/ssh/ssh_config.d/ directory, with a two-digit number prefix smaller than 5, so that it lexicographically precedes the 05-redhat.conf file, and with a .conf suffix, for example, 04-crypto-policy-override.conf.

See the ssh_config(5) man page for more information.
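The system-wide drop-in file described above could be created as in the following minimal sketch. The function name `write_ssh_override` is hypothetical, and the Ciphers and MACs values are examples only, not a recommendation; choose the algorithms that your environment requires.

```shell
# Sketch: write a client-side override that sorts before 05-redhat.conf.
# $1: target directory; /etc/ssh/ssh_config.d on a real system (requires root).
# The algorithm lists below are illustrative assumptions.
write_ssh_override() {
    dir=${1:-/etc/ssh/ssh_config.d}
    cat > "$dir/04-crypto-policy-override.conf" <<'EOF'
# Read before 05-redhat.conf; ssh uses the first value it obtains for each option.
Host *
    Ciphers aes256-gcm@openssh.com,aes256-ctr
    MACs hmac-sha2-512-etm@openssh.com,hmac-sha2-256-etm@openssh.com
EOF
}
```

After writing the file, `ssh -G example.com | grep -i -E '^(ciphers|macs) '` prints the values that the client would actually use for that host.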

Libreswan

See the Configuring IPsec connections that opt out of the system-wide crypto policies section in the Securing networks document for detailed information.

Additional resources

- update-crypto-policies(8) man page on your system

3.6. Customizing system-wide cryptographic policies with subpolicies

Use this procedure to adjust the set of enabled cryptographic algorithms or protocols.

You can either apply custom subpolicies on top of an existing system-wide cryptographic policy or define such a policy from scratch.

The concept of scoped policies allows enabling different sets of algorithms for different back ends. You can limit each configuration directive to specific protocols, libraries, or services.

Furthermore, directives can use asterisks for specifying multiple values using wildcards.

The /etc/crypto-policies/state/CURRENT.pol file lists all settings in the currently applied system-wide cryptographic policy after wildcard expansion. To make your cryptographic policy more strict, consider using values listed in the /usr/share/crypto-policies/policies/FUTURE.pol file.

You can find example subpolicies in the /usr/share/crypto-policies/policies/modules/ directory. The subpolicy files in this directory also contain descriptions in lines that are commented out.

Customization of system-wide cryptographic policies is available from RHEL 8.2. You can use the concept of scoped policies and the option of using wildcards in RHEL 8.5 and newer.

Procedure

Checkout to the

/etc/crypto-policies/policies/modules/directory:# cd /etc/crypto-policies/policies/modules/Create subpolicies for your adjustments, for example:

# touch MYCRYPTO-1.pmod # touch SCOPES-AND-WILDCARDS.pmod

ImportantUse upper-case letters in file names of policy modules.

Open the policy modules in a text editor of your choice and insert options that modify the system-wide cryptographic policy, for example:

# vi MYCRYPTO-1.pmod

min_rsa_size = 3072
hash = SHA2-384 SHA2-512 SHA3-384 SHA3-512

# vi SCOPES-AND-WILDCARDS.pmod

# Disable the AES-128 cipher, all modes
cipher = -AES-128-*

# Disable CHACHA20-POLY1305 for the TLS protocol (OpenSSL, GnuTLS, NSS, and OpenJDK)
cipher@TLS = -CHACHA20-POLY1305

# Allow using the FFDHE-1024 group with the SSH protocol (libssh and OpenSSH)
group@SSH = FFDHE-1024+

# Disable all CBC mode ciphers for the SSH protocol (libssh and OpenSSH)
cipher@SSH = -*-CBC

# Allow the AES-256-CBC cipher in applications using libssh
cipher@libssh = AES-256-CBC+

Save the changes in the module files.

Apply your policy adjustments to the DEFAULT system-wide cryptographic policy level:

# update-crypto-policies --set DEFAULT:MYCRYPTO-1:SCOPES-AND-WILDCARDS

To make your cryptographic settings effective for already running services and applications, restart the system:

# reboot

Verification

Check that the /etc/crypto-policies/state/CURRENT.pol file contains your changes, for example:

$ cat /etc/crypto-policies/state/CURRENT.pol | grep rsa_size
min_rsa_size = 3072
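If you want to check several settings, a small helper can make the lookup exact instead of relying on a substring match. The following is a minimal sketch under stated assumptions: the function name policy_value is hypothetical, and it assumes that each setting in the file uses the "key = value" layout shown in the example output. The block works on a throwaway sample file rather than on the real state file.

```shell
#!/bin/bash
# Hypothetical helper: print the value assigned to KEY in a policy file
# that stores settings as "key = value" lines.
policy_value() {
    local key=$1 file=$2
    # Match the key only at the start of a line and print what follows "=".
    sed -n "s/^${key}[[:space:]]*=[[:space:]]*//p" "$file"
}

# Throwaway sample that stands in for /etc/crypto-policies/state/CURRENT.pol.
sample=$(mktemp)
printf '%s\n' 'min_rsa_size = 3072' 'hash = SHA2-384 SHA2-512' > "$sample"
policy_value min_rsa_size "$sample"
rm -f "$sample"
```

Because the key is anchored to the start of the line, a lookup for rsa_size does not match min_rsa_size.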

Additional resources

- Custom Policies section in the update-crypto-policies(8) man page on your system

- Crypto Policy Definition Format section in the crypto-policies(7) man page on your system

- How to customize crypto policies in RHEL 8.2 Red Hat blog article

3.7. Disabling SHA-1 by customizing a system-wide cryptographic policy

Because the SHA-1 hash function has an inherently weak design, and advancing cryptanalysis has made it vulnerable to attacks, RHEL 8 does not use SHA-1 by default. Nevertheless, some third-party applications, for example, public signatures, still use SHA-1. To disable the use of SHA-1 in signature algorithms on your system, you can use the NO-SHA1 policy module.

The NO-SHA1 policy module disables the SHA-1 hash function only in signatures and not elsewhere. In particular, the NO-SHA1 module still allows the use of SHA-1 with hash-based message authentication codes (HMAC). This is because HMAC security properties do not rely on the collision resistance of the corresponding hash function, and therefore the recent attacks on SHA-1 have a significantly lower impact on the use of SHA-1 for HMAC.

If your scenario requires disabling a specific key exchange (KEX) algorithm combination, for example, diffie-hellman-group-exchange-sha1, but you still want to use both the relevant KEX and the algorithm in other combinations, see the Red Hat Knowledgebase solution Steps to disable the diffie-hellman-group1-sha1 algorithm in SSH for instructions on opting out of system-wide crypto-policies for SSH and configuring SSH directly.

The module for disabling SHA-1 is available from RHEL 8.3. Customization of system-wide cryptographic policies is available from RHEL 8.2.

Procedure

Apply your policy adjustments to the DEFAULT system-wide cryptographic policy level:

# update-crypto-policies --set DEFAULT:NO-SHA1

To make your cryptographic settings effective for already running services and applications, restart the system:

# reboot
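To confirm afterwards that a module is part of the active policy, you can inspect the policy string. The following is a minimal sketch: the helper name policy_has_module is hypothetical, and it assumes that the policy string uses the same colon-separated POLICY:MODULE form that the --set option accepts.

```shell
#!/bin/bash
# Hypothetical helper: succeeds if the colon-separated policy string
# (for example, DEFAULT:NO-SHA1) lists the given module after the base policy.
policy_has_module() {
    local policy=$1 module=$2 mods
    case $policy in
        *:*) mods=${policy#*:} ;;   # drop the base policy name
        *) return 1 ;;              # no modules applied
    esac
    case ":$mods:" in
        *":$module:"*) return 0 ;;
        *) return 1 ;;
    esac
}

policy_has_module "DEFAULT:NO-SHA1" NO-SHA1 && echo "NO-SHA1 is applied"
```

On a configured system, you could pass the output of the update-crypto-policies --show command as the first argument.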

Additional resources

- Custom Policies section in the update-crypto-policies(8) man page on your system

- Crypto Policy Definition Format section in the crypto-policies(7) man page on your system

- How to customize crypto policies in RHEL Red Hat blog article

3.8. Creating and setting a custom system-wide cryptographic policy

For specific scenarios, you can customize the system-wide cryptographic policy by creating and using a complete policy file.

Customization of system-wide cryptographic policies is available from RHEL 8.2.

Procedure

Create a policy file for your customizations:

# cd /etc/crypto-policies/policies/
# touch MYPOLICY.pol

Alternatively, start by copying one of the four predefined policy levels:

# cp /usr/share/crypto-policies/policies/DEFAULT.pol /etc/crypto-policies/policies/MYPOLICY.pol

Edit the file with your custom cryptographic policy in a text editor of your choice to fit your requirements, for example:

# vi /etc/crypto-policies/policies/MYPOLICY.pol

Switch the system-wide cryptographic policy to your custom level:

# update-crypto-policies --set MYPOLICY

To make your cryptographic settings effective for already running services and applications, restart the system:

# reboot
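When a custom policy starts as a copy of a predefined level, it can be useful to review which settings you changed. The following is a minimal sketch: the helper names policy_settings and policy_changes are hypothetical, and the sketch assumes that comment lines start with # and that every setting occupies a single line.

```shell
#!/bin/bash
# Hypothetical helpers for comparing two policy files.

# Print the settings of a policy file without comments and blank lines, sorted.
policy_settings() {
    grep -Ev '^[[:space:]]*(#|$)' "$1" | LC_ALL=C sort
}

# Print the setting lines that appear only in the custom policy.
policy_changes() {
    local base_tmp custom_tmp
    base_tmp=$(mktemp)
    custom_tmp=$(mktemp)
    policy_settings "$1" > "$base_tmp"
    policy_settings "$2" > "$custom_tmp"
    # comm -13 keeps lines that are unique to the second file.
    LC_ALL=C comm -13 "$base_tmp" "$custom_tmp"
    rm -f "$base_tmp" "$custom_tmp"
}
```

For example, policy_changes /usr/share/crypto-policies/policies/DEFAULT.pol /etc/crypto-policies/policies/MYPOLICY.pol would list the lines that you added or modified in MYPOLICY.pol.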

Additional resources

- Custom Policies section in the update-crypto-policies(8) man page and the Crypto Policy Definition Format section in the crypto-policies(7) man page on your system

- How to customize crypto policies in RHEL Red Hat blog article

3.9. Enhancing security with the FUTURE cryptographic policy using the crypto_policies RHEL system role

You can use the crypto_policies RHEL system role to configure the FUTURE policy on your managed nodes. This policy helps you achieve, for example:

- Future-proofing against emerging threats: anticipates advancements in computational power.

- Enhanced security: stronger encryption standards require longer key lengths and more secure algorithms.

- Compliance with high-security standards: for example in healthcare, telco, and finance the data sensitivity is high, and availability of strong cryptography is critical.

Typically, FUTURE is suitable for environments handling highly sensitive data, preparing for future regulations, or adopting long-term security strategies.

Legacy systems or software might not support the more modern and stricter algorithms and protocols enforced by the FUTURE policy. For example, older systems might not support TLS 1.3 or larger key sizes. This could lead to compatibility problems.

Also, using strong algorithms usually increases the computational workload, which could negatively affect your system performance.

Prerequisites

- You have prepared the control node and the managed nodes.

- You are logged in to the control node as a user who can run playbooks on the managed nodes.

- The account you use to connect to the managed nodes has sudo permissions on them.

Procedure

Create a playbook file, for example ~/playbook.yml, with the following content:

---
- name: Configure cryptographic policies
  hosts: managed-node-01.example.com
  tasks:
    - name: Configure the FUTURE cryptographic security policy on the managed node
      ansible.builtin.include_role:
        name: rhel-system-roles.crypto_policies
      vars:
        - crypto_policies_policy: FUTURE
        - crypto_policies_reboot_ok: true

The settings specified in the example playbook include the following:

- crypto_policies_policy: FUTURE

- Configures the required cryptographic policy (FUTURE) on the managed node. It can be either the base policy or a base policy with some sub-policies. The specified base policy and sub-policies have to be available on the managed node. The default value is null, which means that the configuration is not changed and the crypto_policies RHEL system role only collects the Ansible facts.

- crypto_policies_reboot_ok: true

- Causes the system to reboot after the cryptographic policy change to make sure that all of the services and applications read the new configuration files. The default value is false.

For details about all variables used in the playbook, see the /usr/share/ansible/roles/rhel-system-roles.crypto_policies/README.md file on the control node.

Validate the playbook syntax:

$ ansible-playbook --syntax-check ~/playbook.yml

Note that this command only validates the syntax and does not protect against a wrong but valid configuration.

Run the playbook:

$ ansible-playbook ~/playbook.yml

Because the FIPS:OSPP system-wide subpolicy contains further restrictions for cryptographic algorithms required by the Common Criteria (CC) certification, the system is less interoperable after you set it. For example, you cannot use RSA and DH keys shorter than 3072 bits, additional SSH algorithms, and several TLS groups. Setting FIPS:OSPP also prevents connecting to Red Hat Content Delivery Network (CDN) structure. Furthermore, you cannot integrate Active Directory (AD) into the IdM deployments that use FIPS:OSPP, communication between RHEL hosts using FIPS:OSPP and AD domains might not work, or some AD accounts might not be able to authenticate.

Note that your system is not CC-compliant after you set the FIPS:OSPP cryptographic subpolicy. The only correct way to make your RHEL system compliant with the CC standard is by following the guidance provided in the cc-config package. See the Common Criteria section on the Product compliance Red Hat Customer Portal page for a list of certified RHEL versions, validation reports, and links to CC guides hosted at the National Information Assurance Partnership (NIAP) website.

Verification

On the control node, create another playbook named, for example, verify_playbook.yml:

---
- name: Verification
  hosts: managed-node-01.example.com
  tasks:
    - name: Verify active cryptographic policy
      ansible.builtin.include_role:
        name: rhel-system-roles.crypto_policies

    - name: Display the currently active cryptographic policy
      ansible.builtin.debug:
        var: crypto_policies_active

The settings specified in the example playbook include the following:

- crypto_policies_active

- An exported Ansible fact that contains the currently active policy name in the format accepted by the crypto_policies_policy variable.

Validate the playbook syntax:

$ ansible-playbook --syntax-check ~/verify_playbook.yml

Run the playbook:

$ ansible-playbook ~/verify_playbook.yml
TASK [debug] **************************
ok: [host] => {
    "crypto_policies_active": "FUTURE"
}

The crypto_policies_active variable shows the active policy on the managed node.

Additional resources

- /usr/share/ansible/roles/rhel-system-roles.crypto_policies/README.md file

- /usr/share/doc/rhel-system-roles/crypto_policies/ directory

- update-crypto-policies(8) and crypto-policies(7) manual pages

Chapter 4. Configuring applications to use cryptographic hardware through PKCS #11

Separating parts of your secret information onto dedicated cryptographic devices, such as smart cards and cryptographic tokens for end-user authentication and hardware security modules (HSM) for server applications, provides an additional layer of security. In RHEL, support for cryptographic hardware through the PKCS #11 API is consistent across different applications, and the isolation of secrets on cryptographic hardware is not a complicated task.

4.1. Cryptographic hardware support through PKCS #11

Public-Key Cryptography Standard (PKCS) #11 defines an application programming interface (API) to cryptographic devices that hold cryptographic information and perform cryptographic functions.

PKCS #11 introduces the cryptographic token, an object that presents each hardware or software device to applications in a unified manner. Therefore, applications view devices such as smart cards, which are typically used by persons, and hardware security modules, which are typically used by computers, as PKCS #11 cryptographic tokens.

A PKCS #11 token can store various object types including a certificate; a data object; and a public, private, or secret key. These objects are uniquely identifiable through the PKCS #11 Uniform Resource Identifier (URI) scheme.

A PKCS #11 URI is a standard way to identify a specific object in a PKCS #11 module according to the object attributes. This enables you to configure all libraries and applications with the same configuration string in the form of a URI.
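To illustrate how such a URI is assembled from object attributes, the following minimal sketch builds a URI from a token label, an object ID, and an object type. The function name pkcs11_uri is hypothetical. The sketch assumes a one-byte ID given as two hexadecimal digits, and it does not percent-encode special characters in the token label.

```shell
#!/bin/bash
# Hypothetical helper: assemble a PKCS #11 URI from a token label,
# a one-byte object ID (two hex digits), and an object type.
pkcs11_uri() {
    local token=$1 id_hex=$2 type=$3
    # "%%" emits a literal percent sign for the percent-encoded ID byte.
    printf 'pkcs11:token=%s;id=%%%s;type=%s\n' "$token" "$id_hex" "$type"
}

pkcs11_uri softhsm 01 cert
```

Because the resulting string is the same for every consumer, you can reuse it in each application that accepts PKCS #11 URIs.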

RHEL provides the OpenSC PKCS #11 driver for smart cards by default. However, hardware tokens and HSMs can have their own PKCS #11 modules that do not have their counterpart in the system. You can register such PKCS #11 modules with the p11-kit tool, which acts as a wrapper over the registered smart-card drivers in the system.

You can add your own PKCS #11 module into the system by creating a new text file in the /etc/pkcs11/modules/ directory. For example, the OpenSC configuration file in p11-kit looks as follows:

$ cat /usr/share/p11-kit/modules/opensc.module
module: opensc-pkcs11.so

4.2. Authenticating by SSH keys stored on a smart card

You can create and store ECDSA and RSA keys on a smart card and authenticate by the smart card on an OpenSSH client. Smart-card authentication replaces the default password authentication.

Prerequisites

- On the client side, the opensc package is installed and the pcscd service is running.

Procedure

List all keys provided by the OpenSC PKCS #11 module including their PKCS #11 URIs and save the output to the keys.pub file:

$ ssh-keygen -D pkcs11: > keys.pub

Transfer the public key to the remote server. Use the ssh-copy-id command with the keys.pub file created in the previous step:

$ ssh-copy-id -f -i keys.pub <username@ssh-server-example.com>

Connect to <ssh-server-example.com> by using the ECDSA key. You can use just a subset of the URI, which uniquely references your key, for example:

$ ssh -i "pkcs11:id=%01?module-path=/usr/lib64/pkcs11/opensc-pkcs11.so" <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

Because OpenSSH uses the p11-kit-proxy wrapper and the OpenSC PKCS #11 module is registered to the p11-kit tool, you can simplify the previous command:

$ ssh -i "pkcs11:id=%01" <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

If you skip the id= part of a PKCS #11 URI, OpenSSH loads all keys that are available in the proxy module. This can reduce the amount of typing required:

$ ssh -i pkcs11: <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

Optional: You can use the same URI string in the ~/.ssh/config file to make the configuration permanent:

$ cat ~/.ssh/config
IdentityFile "pkcs11:id=%01?module-path=/usr/lib64/pkcs11/opensc-pkcs11.so"
$ ssh <ssh-server-example.com>
Enter PIN for 'SSH key':
[ssh-server-example.com] $

The ssh client utility now automatically uses this URI and the key from the smart card.

Additional resources

- p11-kit(8), opensc.conf(5), pcscd(8), ssh(1), and ssh-keygen(1) man pages on your system

4.3. Configuring applications for authentication with certificates on smart cards

Authentication by using smart cards in applications may increase security and simplify automation. You can integrate the Public Key Cryptography Standard (PKCS) #11 URIs into your application by using the following methods:

- The Firefox web browser automatically loads the p11-kit-proxy PKCS #11 module. This means that every supported smart card in the system is automatically detected. For using TLS client authentication, no additional setup is required and keys and certificates from a smart card are automatically used when a server requests them.

- If your application uses the GnuTLS or NSS library, it already supports PKCS #11 URIs. Also, applications that rely on the OpenSSL library can access cryptographic hardware modules, including smart cards, through the pkcs11 engine provided by the openssl-pkcs11 package.

- Applications that require working with private keys on smart cards and that do not use NSS, GnuTLS, or OpenSSL can use the p11-kit API directly to work with cryptographic hardware modules, including smart cards, rather than using the PKCS #11 API of specific PKCS #11 modules.

With the wget network downloader, you can specify PKCS #11 URIs instead of paths to locally stored private keys and certificates. This might simplify creation of scripts for tasks that require safely stored private keys and certificates. For example:

$ wget --private-key 'pkcs11:token=softhsm;id=%01;type=private?pin-value=111111' --certificate 'pkcs11:token=softhsm;id=%01;type=cert' https://example.com/

You can also specify a PKCS #11 URI when using the curl tool:

$ curl --key 'pkcs11:token=softhsm;id=%01;type=private?pin-value=111111' --cert 'pkcs11:token=softhsm;id=%01;type=cert' https://example.com/

Additional resources

- curl(1), wget(1), and p11-kit(8) man pages on your system

4.4. Using HSMs protecting private keys in Apache

The Apache HTTP server can work with private keys stored on hardware security modules (HSMs), which helps to prevent the keys' disclosure and man-in-the-middle attacks. Note that this usually requires high-performance HSMs for busy servers.

For secure communication in the form of the HTTPS protocol, the Apache HTTP server (httpd) uses the OpenSSL library. OpenSSL does not support PKCS #11 natively. To use HSMs, you have to install the openssl-pkcs11 package, which provides access to PKCS #11 modules through the engine interface. You can use a PKCS #11 URI instead of a regular file name to specify a server key and a certificate in the /etc/httpd/conf.d/ssl.conf configuration file, for example:

SSLCertificateFile "pkcs11:id=%01;token=softhsm;type=cert"
SSLCertificateKeyFile "pkcs11:id=%01;token=softhsm;type=private?pin-value=111111"

Install the httpd-manual package to obtain complete documentation for the Apache HTTP Server, including TLS configuration. The directives available in the /etc/httpd/conf.d/ssl.conf configuration file are described in detail in the /usr/share/httpd/manual/mod/mod_ssl.html file.

4.5. Using HSMs protecting private keys in Nginx

The Nginx HTTP server can work with private keys stored on hardware security modules (HSMs), which helps to prevent the keys' disclosure and man-in-the-middle attacks. Note that this usually requires high-performance HSMs for busy servers.

Because Nginx also uses OpenSSL for cryptographic operations, support for PKCS #11 must go through the openssl-pkcs11 engine. Nginx currently supports only loading private keys from an HSM, and a certificate must be provided separately as a regular file. Modify the ssl_certificate and ssl_certificate_key options in the server section of the /etc/nginx/nginx.conf configuration file:

ssl_certificate /path/to/cert.pem;
ssl_certificate_key "engine:pkcs11:pkcs11:token=softhsm;id=%01;type=private?pin-value=111111";

Note that the engine:pkcs11: prefix is needed for the PKCS #11 URI in the Nginx configuration file. This is because the other pkcs11 prefix refers to the engine name.
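The doubled pkcs11 prefix is easy to get wrong when you convert an existing URI. As a minimal sketch, the following hypothetical helper, nginx_key_spec, derives the Nginx key value from a plain PKCS #11 URI and rejects input that does not start with the pkcs11: scheme:

```shell
#!/bin/bash
# Hypothetical helper: turn a PKCS #11 URI into the value expected by the
# ssl_certificate_key option when the key is loaded through the pkcs11 engine.
nginx_key_spec() {
    local uri=$1
    case $uri in
        pkcs11:*) ;;
        *) echo "not a PKCS #11 URI: $uri" >&2; return 1 ;;
    esac
    # "engine:" requests engine loading, the first "pkcs11" is the engine
    # name, and the URI itself follows with its own "pkcs11:" scheme.
    printf 'engine:pkcs11:%s\n' "$uri"
}

nginx_key_spec 'pkcs11:token=softhsm;id=%01;type=private'
```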

Chapter 5. Controlling access to smart cards by using polkit

To cover possible threats that cannot be prevented by mechanisms built into smart cards, such as PINs, PIN pads, and biometrics, and for more fine-grained control, RHEL uses the polkit framework for controlling access to smart cards.

System administrators can configure polkit to fit specific scenarios, such as smart-card access for non-privileged or non-local users or services.

5.1. Smart-card access control through polkit

The Personal Computer/Smart Card (PC/SC) protocol specifies a standard for integrating smart cards and their readers into computing systems. In RHEL, the pcsc-lite package provides middleware to access smart cards that use the PC/SC API. A part of this package, the pcscd (PC/SC Smart Card) daemon, ensures that the system can access a smart card using the PC/SC protocol.

Because access-control mechanisms built into smart cards, such as PINs, PIN pads, and biometrics, do not cover all possible threats, RHEL uses the polkit framework for more robust access control. The polkit authorization manager can grant access to privileged operations. In addition to granting access to disks, you can also use polkit to specify policies for securing smart cards. For example, you can define which users can perform which operations with a smart card.

After installing the pcsc-lite package and starting the pcscd daemon, the system enforces policies defined in the /usr/share/polkit-1/actions/ directory. The default system-wide policy is in the /usr/share/polkit-1/actions/org.debian.pcsc-lite.policy file. Polkit policy files use the XML format and the syntax is described in the polkit(8) man page on your system.

The polkitd service monitors the /etc/polkit-1/rules.d/ and /usr/share/polkit-1/rules.d/ directories for any changes in rule files stored in these directories. The files contain authorization rules in JavaScript format. System administrators can add custom rule files in both directories, and polkitd reads them in lexical order based on their file name. If two files have the same names, then the file in /etc/polkit-1/rules.d/ is read first.
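The reading order described above can be previewed before you add a rule file. The following is a rough sketch only: the function name polkit_rule_order is hypothetical, the sketch assumes byte-wise ordering of file names in the C locale, and it does not handle file names that contain tab characters. It approximates the documented order and does not query polkitd itself.

```shell
#!/bin/bash
# Hypothetical helper: list *.rules files from two rule directories sorted
# by file name, with the first directory winning ties on identical names.
polkit_rule_order() {
    local etc_dir=$1 usr_dir=$2 f tab
    tab=$(printf '\t')
    {
        for f in "$etc_dir"/*.rules; do
            if [ -e "$f" ]; then
                printf '%s\t0\t%s\n' "$(basename "$f")" "$f"
            fi
        done
        for f in "$usr_dir"/*.rules; do
            if [ -e "$f" ]; then
                printf '%s\t1\t%s\n' "$(basename "$f")" "$f"
            fi
        done
    } | LC_ALL=C sort -t "$tab" -k1,1 -k2,2n | cut -f3
}
```

For example, polkit_rule_order /etc/polkit-1/rules.d /usr/share/polkit-1/rules.d prints the full paths in the approximated reading order.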

If you need to enable smart-card support when the system security services daemon (SSSD) does not run as root, you must install the sssd-polkit-rules package. The package provides polkit integration with SSSD.

Additional resources

- polkit(8), polkitd(8), and pcscd(8) man pages on your system

5.3. Displaying more detailed information about polkit authorization to PC/SC

In the default configuration, the polkit authorization framework sends only limited information to the Journal log. You can extend polkit log entries related to the PC/SC protocol by adding new rules.

Prerequisites

- You have installed the pcsc-lite package on your system.

- The pcscd daemon is running.

Procedure

Create a new file in the /etc/polkit-1/rules.d/ directory:

# touch /etc/polkit-1/rules.d/00-test.rules

Edit the file in an editor of your choice, for example:

# vi /etc/polkit-1/rules.d/00-test.rules

Insert the following lines:

polkit.addRule(function(action, subject) {
  if (action.id == "org.debian.pcsc-lite.access_pcsc" ||
      action.id == "org.debian.pcsc-lite.access_card") {
    polkit.log("action=" + action);
    polkit.log("subject=" + subject);
  }
});

Save the file, and exit the editor.

Restart the pcscd and polkit services:

# systemctl restart pcscd.service pcscd.socket polkit.service

Verification

Make an authorization request for pcscd. For example, open the Firefox web browser or use the pkcs11-tool -L command provided by the opensc package.

Display the extended log entries, for example:

# journalctl -u polkit --since "1 hour ago"
polkitd[1224]: <no filename>:4: action=[Action id='org.debian.pcsc-lite.access_pcsc']
polkitd[1224]: <no filename>:5: subject=[Subject pid=2020481 user='user' groups=user,wheel,mock,wireshark seat=null session=null local=true active=true]

Additional resources

- polkit(8) and polkitd(8) man pages on your system

5.4. Additional resources

- Controlling access to smart cards Red Hat Blog article.

Chapter 6. Scanning the system for configuration compliance and vulnerabilities

A compliance audit is a process of determining whether a given object follows all the rules specified in a compliance policy. The compliance policy is defined by security professionals who specify the required settings, often in the form of a checklist, that a computing environment should use.

Compliance policies can vary substantially across organizations and even across different systems within the same organization. Differences among these policies are based on the purpose of each system and its importance for the organization. Custom software settings and deployment characteristics also raise a need for custom policy checklists.

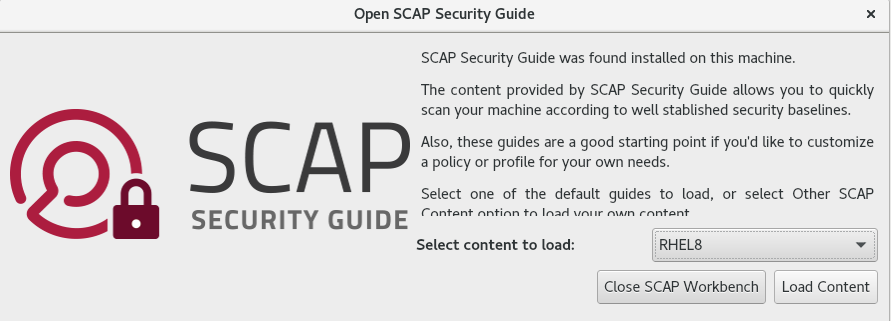

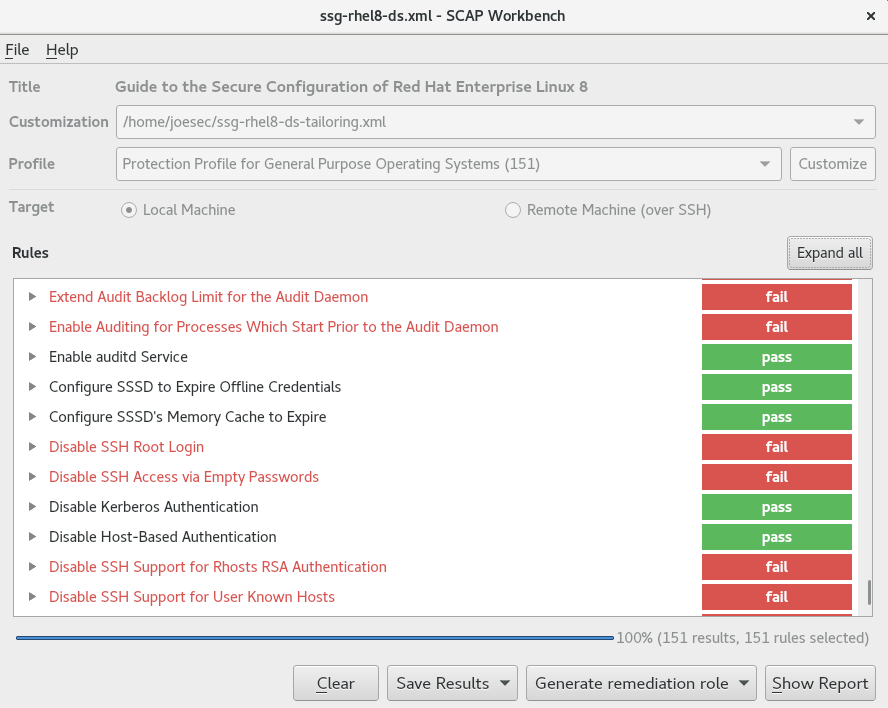

6.1. Configuration compliance tools in RHEL

You can perform a fully automated compliance audit in Red Hat Enterprise Linux by using the following configuration compliance tools. These tools are based on the Security Content Automation Protocol (SCAP) standard and are designed for automated tailoring of compliance policies.

- SCAP Workbench

- The scap-workbench graphical utility is designed to perform configuration and vulnerability scans on a single local or remote system. You can also use it to generate security reports based on these scans and evaluations.

- OpenSCAP

- The OpenSCAP library, with the accompanying oscap command-line utility, is designed to perform configuration and vulnerability scans on a local system, to validate configuration compliance content, and to generate reports and guides based on these scans and evaluations.

Important: You can experience memory-consumption problems while using OpenSCAP, which can cause the program to stop prematurely and prevent it from generating any result files. See the OpenSCAP memory-consumption problems Knowledgebase article for details.

- SCAP Security Guide (SSG)

- The scap-security-guide package provides collections of security policies for Linux systems. The guidance consists of a catalog of practical hardening advice, linked to government requirements where applicable. The project bridges the gap between generalized policy requirements and specific implementation guidelines.

- Script Check Engine (SCE)

- With SCE, which is an extension to the SCAP protocol, administrators can write their security content by using a scripting language, such as Bash, Python, and Ruby. The SCE extension is provided in the openscap-engine-sce package. The SCE itself is not part of the SCAP standard.

To perform automated compliance audits on multiple systems remotely, you can use the OpenSCAP solution for Red Hat Satellite.

Additional resources

- oscap(8), scap-workbench(8), and scap-security-guide(8) man pages on your system

- Red Hat Security Demos: Creating Customized Security Policy Content to Automate Security Compliance

- Red Hat Security Demos: Defend Yourself with RHEL Security Technologies

- Managing security compliance in Red Hat Satellite

6.2. Vulnerability scanning

6.2.1. Red Hat Security Advisories OVAL feed

Red Hat Enterprise Linux security auditing capabilities are based on the Security Content Automation Protocol (SCAP) standard. SCAP is a multi-purpose framework of specifications that supports automated configuration, vulnerability and patch checking, technical control compliance activities, and security measurement.

SCAP specifications create an ecosystem where the format of security content is well-known and standardized although the implementation of the scanner or policy editor is not mandated. This enables organizations to build their security policy (SCAP content) once, no matter how many security vendors they employ.

The Open Vulnerability Assessment Language (OVAL) is the essential and oldest component of SCAP. Unlike other tools and custom scripts, OVAL describes a required state of resources in a declarative manner. OVAL code is never executed directly. Instead, it is processed by an OVAL interpreter tool called a scanner. The declarative nature of OVAL ensures that the state of the assessed system is not accidentally modified.

Like all other SCAP components, OVAL is based on XML. The SCAP standard defines several document formats. Each of them includes a different kind of information and serves a different purpose.

Red Hat Product Security helps customers evaluate and manage risk by tracking and investigating all security issues affecting Red Hat customers. It provides timely and concise patches and security advisories on the Red Hat Customer Portal. Red Hat creates and supports OVAL patch definitions, providing machine-readable versions of our security advisories.

Because of differences between platforms, versions, and other factors, Red Hat Product Security qualitative severity ratings of vulnerabilities do not directly align with the Common Vulnerability Scoring System (CVSS) baseline ratings provided by third parties. Therefore, we recommend that you use the RHSA OVAL definitions instead of those provided by third parties.

The RHSA OVAL definitions are available individually and as a complete package, and are updated within an hour of a new security advisory being made available on the Red Hat Customer Portal.

Each OVAL patch definition maps one-to-one to a Red Hat Security Advisory (RHSA). Because an RHSA can contain fixes for multiple vulnerabilities, each vulnerability is listed separately by its Common Vulnerabilities and Exposures (CVE) name and has a link to its entry in our public bug database.

The RHSA OVAL definitions are designed to check for vulnerable versions of RPM packages installed on a system. It is possible to extend these definitions to include further checks, for example, to find out if the packages are being used in a vulnerable configuration. These definitions are designed to cover software and updates shipped by Red Hat. Additional definitions are required to detect the patch status of third-party software.

The Red Hat Insights for Red Hat Enterprise Linux compliance service helps IT security and compliance administrators to assess, monitor, and report on the security policy compliance of Red Hat Enterprise Linux systems. You can also create and manage your SCAP security policies entirely within the compliance service UI.

6.2.2. Scanning the system for vulnerabilities

The oscap command-line utility enables you to scan local systems, validate configuration compliance content, and generate reports and guides based on these scans and evaluations. This utility serves as a front end to the OpenSCAP library and groups its functionality into modules (sub-commands) based on the type of SCAP content it processes.

Prerequisites

- The openscap-scanner and bzip2 packages are installed.

Procedure

Download the latest RHSA OVAL definitions for your system:

# wget -O - https://www.redhat.com/security/data/oval/v2/RHEL8/rhel-8.oval.xml.bz2 | bzip2 --decompress > rhel-8.oval.xml

Scan the system for vulnerabilities and save results to the vulnerability.html file:

# oscap oval eval --report vulnerability.html rhel-8.oval.xml

Verification

Check the results in a browser of your choice, for example:

$ firefox vulnerability.html &
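If you also keep the text that the scan prints to the terminal, you can summarize it without opening the HTML report. The following is a minimal sketch under a stated assumption: it expects one line per definition in the form "Definition <id>: true" or "Definition <id>: false", where true marks a definition that matched the system. The function name count_vulnerable and the file name scan-output.txt are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: count definitions reported as "true" in saved
# scanner output. The line format is an assumption, see the text above.
count_vulnerable() {
    # grep -c prints the number of matching lines; it exits with status 1
    # when the count is zero, which "|| true" turns into success.
    grep -c '^Definition .*: true$' "$1" || true
}
```

For example, you could redirect the output of the scan command to scan-output.txt and then run count_vulnerable scan-output.txt.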

Additional resources

- oscap(8) man page on your system

- Red Hat OVAL definitions

- OpenSCAP memory consumption problems

6.2.3. Scanning remote systems for vulnerabilities

You can check remote systems for vulnerabilities with the OpenSCAP scanner by using the oscap-ssh tool over the SSH protocol.

Prerequisites

- The openscap-utils and bzip2 packages are installed on the system you use for scanning.

- The openscap-scanner package is installed on the remote systems.

- The SSH server is running on the remote systems.

Procedure

Download the latest RHSA OVAL definitions for your system:

# wget -O - https://www.redhat.com/security/data/oval/v2/RHEL8/rhel-8.oval.xml.bz2 | bzip2 --decompress > rhel-8.oval.xml

Scan a remote system for vulnerabilities and save the results to a file:

# oscap-ssh <username>@<hostname> <port> oval eval --report <scan-report.html> rhel-8.oval.xml

Replace:

- <username>@<hostname> with the user name and host name of the remote system.

- <port> with the port number through which you can access the remote system, for example, 22.

- <scan-report.html> with the file name where oscap saves the scan results.
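To scan several remote systems, you can generate one oscap-ssh command per host and review the commands before you run them. The following is a minimal sketch: the function name remote_scan_commands, the admin user name, the host names, and the per-host report file naming are all hypothetical. The function only prints the commands and does not connect to any system.

```shell
#!/bin/bash
# Hypothetical helper: print one oscap-ssh command line per host so that
# the commands can be reviewed before they are executed.
remote_scan_commands() {
    local user=$1 port=$2 oval=$3 host
    shift 3
    for host in "$@"; do
        # Each host gets its own report file (naming is an assumption).
        printf 'oscap-ssh %s@%s %s oval eval --report %s-report.html %s\n' \
            "$user" "$host" "$port" "$host" "$oval"
    done
}

remote_scan_commands admin 22 rhel-8.oval.xml host1.example.com host2.example.com
```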


6.3. Configuration compliance scanning

6.3.1. Configuration compliance in RHEL

You can use configuration compliance scanning to conform to a baseline defined by a specific organization. For example, if you work with the US government, you might have to align your systems with the Operating System Protection Profile (OSPP), and if you are a payment processor, you might have to align your systems with the Payment Card Industry Data Security Standard (PCI-DSS). You can also perform configuration compliance scanning to harden your system security.

Red Hat recommends you follow the Security Content Automation Protocol (SCAP) content provided in the SCAP Security Guide package because it is in line with Red Hat best practices for affected components.

The SCAP Security Guide package provides content which conforms to the SCAP 1.2 and SCAP 1.3 standards. The openscap scanner utility is compatible with both SCAP 1.2 and SCAP 1.3 content provided in the SCAP Security Guide package.

Performing a configuration compliance scan does not guarantee that the system is compliant.

The SCAP Security Guide suite provides profiles for several platforms in the form of data stream documents. A data stream is a file that contains definitions, benchmarks, profiles, and individual rules. Each rule specifies the applicability and requirements for compliance. RHEL provides several profiles for compliance with security policies. In addition to the industry standard, Red Hat data streams also contain information for remediation of failed rules.

Structure of compliance scanning resources

Data stream
├── xccdf
|   ├── benchmark
|       ├── profile
|       |   ├── rule reference
|       |   └── variable
|       ├── rule
|           ├── human readable data
|           ├── oval reference
|           ├── ocil reference
|           ├── cpe reference
|           └── remediation
├── oval
├── ocil
└── cpe

A profile is a set of rules based on a security policy, such as OSPP, PCI-DSS, and Health Insurance Portability and Accountability Act (HIPAA). This enables you to audit the system in an automated way for compliance with security standards.

You can modify (tailor) a profile to customize certain rules, for example, password length. For more information about profile tailoring, see Customizing a security profile with SCAP Workbench.

6.3.2. Possible results of an OpenSCAP scan

Depending on the data stream and profile applied to an OpenSCAP scan, as well as various properties of your system, each rule may produce a specific result. These are the possible results with brief explanations of their meanings:

- Pass

- The scan did not find any conflicts with this rule.

- Fail

- The scan found a conflict with this rule.

- Not checked

- OpenSCAP does not perform an automatic evaluation of this rule. Check whether your system conforms to this rule manually.

- Not applicable

- This rule does not apply to the current configuration.

- Not selected

- This rule is not part of the profile. OpenSCAP does not evaluate this rule and does not display these rules in the results.

- Error

- The scan encountered an error. For additional information, you can enter the oscap command with the --verbose DEVEL option. File a support case on the Red Hat customer portal or open a ticket in the RHEL project in Red Hat Jira.

- Unknown

- The scan encountered an unexpected situation. For additional information, you can enter the oscap command with the --verbose DEVEL option. File a support case on the Red Hat customer portal or open a ticket in the RHEL project in Red Hat Jira.
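To see how many rules ended in each of these states, you can tally the result values stored in an XCCDF results file, such as one written with the --results option of oscap xccdf eval. The following is a minimal sketch, not part of the OpenSCAP tooling: the function name count_rule_results is hypothetical, and the sketch assumes that each rule result appears in the file as a lowercase <result> element (for example, pass, fail, or notchecked).

```shell
# Hypothetical helper: print one "<count> <result>" line per result value
# found in an XCCDF results file.
# Assumption: each rule result is stored as <result>value</result>.
count_rule_results() {
    results_file="$1"
    grep -o '<result>[a-z]*</result>' "$results_file" \
        | sed 's/<[^>]*>//g' \
        | sort \
        | uniq -c \
        | awk '{ print $1, $2 }'
}
```

For example, count_rule_results <results.xml> prints lines such as "2 pass" and "1 fail". Rules with the Error or Unknown result still need the manual follow-up described in the list.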

6.3.3. Viewing profiles for configuration compliance

Before you decide to use profiles for scanning or remediation, you can list them and check their detailed descriptions using the oscap info subcommand.

Prerequisites

- The openscap-scanner and scap-security-guide packages are installed.

Procedure

List all available files with security compliance profiles provided by the SCAP Security Guide project:

$ ls /usr/share/xml/scap/ssg/content/
ssg-firefox-cpe-dictionary.xml  ssg-rhel6-ocil.xml
ssg-firefox-cpe-oval.xml        ssg-rhel6-oval.xml
…
ssg-rhel6-ds-1.2.xml            ssg-rhel8-oval.xml
ssg-rhel8-ds.xml                ssg-rhel8-xccdf.xml
…

Display detailed information about a selected data stream using the oscap info subcommand. XML files containing data streams are indicated by the -ds string in their names. In the Profiles section, you can find a list of available profiles and their IDs:

$ oscap info /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml
Profiles:
…
  Title: Health Insurance Portability and Accountability Act (HIPAA)
    Id: xccdf_org.ssgproject.content_profile_hipaa
  Title: PCI-DSS v3.2.1 Control Baseline for Red Hat Enterprise Linux 8
    Id: xccdf_org.ssgproject.content_profile_pci-dss
  Title: OSPP - Protection Profile for General Purpose Operating Systems
    Id: xccdf_org.ssgproject.content_profile_ospp
…

Select a profile from the data stream file and display additional details about the selected profile. To do so, use oscap info with the --profile option followed by the last section of the ID displayed in the output of the previous command. For example, the ID of the HIPAA profile is xccdf_org.ssgproject.content_profile_hipaa, and the value for the --profile option is hipaa:

$ oscap info --profile hipaa /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml
…
Profile
  Title: Health Insurance Portability and Accountability Act (HIPAA)

  Description: The HIPAA Security Rule establishes U.S. national standards to protect individuals’ electronic personal health information that is created, received, used, or maintained by a covered entity.
…

Additional resources

- scap-security-guide(8) man page on your system

- OpenSCAP memory consumption problems

6.3.4. Assessing configuration compliance with a specific baseline

You can determine whether your system or a remote system conforms to a specific baseline, and save the results in a report by using the oscap command-line tool.

Prerequisites

- The openscap-scanner and scap-security-guide packages are installed.

- You know the ID of the profile within the baseline with which the system should comply. To find the ID, see the Viewing profiles for configuration compliance section.

Procedure

Scan the local system for compliance with the selected profile and save the scan results to a file:

$ oscap xccdf eval --report <scan-report.html> --profile <profileID> /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Replace:

- <scan-report.html> with the file name where oscap saves the scan results.

- <profileID> with the profile ID with which the system should comply, for example, hipaa.

Optional: Scan a remote system for compliance with the selected profile and save the scan results to a file:

$ oscap-ssh <username>@<hostname> <port> xccdf eval --report <scan-report.html> --profile <profileID> /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Replace:

- <username>@<hostname> with the user name and host name of the remote system.

- <port> with the port number through which you can access the remote system.

- <scan-report.html> with the file name where oscap saves the scan results.

- <profileID> with the profile ID with which the system should comply, for example, hipaa.
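When you run the local scan from a script, the exit status of oscap tells you how the evaluation ended. The oscap(8) man page documents exit status 0 for success, 1 for an error, and 2 when the evaluation finished but the system is not compliant. The following is a minimal sketch of a wrapper around the local scan command from this procedure. The function name run_compliance_scan and the printed messages are hypothetical.

```shell
# Hypothetical helper: run a local compliance scan and translate the exit
# status of oscap into a short message.
# Arguments: <profileID> <scan-report.html> [data stream file]
run_compliance_scan() {
    profile="$1"
    report="$2"
    datastream="${3:-/usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml}"

    # Capture the exit status without aborting scripts that use "set -e".
    status=0
    oscap xccdf eval --report "$report" --profile "$profile" "$datastream" || status=$?

    case "$status" in
        0) echo "compliant: the evaluation found no failed rules" ;;
        2) echo "non-compliant: the evaluation finished, but the system does not comply" ;;
        *) echo "scan error: oscap exited with status $status" ;;
    esac
    return "$status"
}
```

For example, run_compliance_scan hipaa <scan-report.html> runs the scan, prints the oscap output followed by one of the messages, and returns the original exit status so that a calling script can act on it.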

Additional resources

- scap-security-guide(8) man page on your system

- SCAP Security Guide documentation in the /usr/share/doc/scap-security-guide/ directory

- /usr/share/doc/scap-security-guide/guides/ssg-rhel8-guide-index.html - [Guide to the Secure Configuration of Red Hat Enterprise Linux 8] installed with the scap-security-guide-doc package

- OpenSCAP memory consumption problems

6.4. Remediating the system to align with a specific baseline

You can remediate the RHEL system to align with a specific baseline, using any profile provided by the SCAP Security Guide. For details on listing the available profiles, see the Viewing profiles for configuration compliance section.

If not used carefully, running the system evaluation with the Remediate option enabled might render the system non-functional. Red Hat does not provide any automated method to revert changes made by security-hardening remediations. Remediations are supported on RHEL systems in the default configuration. If your system has been altered after the installation, running remediation might not make it compliant with the required security profile.

Prerequisites

- The scap-security-guide package is installed.

Procedure

Remediate the system by using the oscap command with the --remediate option:

# oscap xccdf eval --profile <profileID> --remediate /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Replace <profileID> with the profile ID with which the system should comply, for example, hipaa.

Restart your system.

Verification

Evaluate compliance of the system with the profile, and save the scan results to a file:

$ oscap xccdf eval --report <scan-report.html> --profile <profileID> /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Replace:

- <scan-report.html> with the file name where oscap saves the scan results.

- <profileID> with the profile ID with which the system should comply, for example, hipaa.

Additional resources

- scap-security-guide(8) and oscap(8) man pages on your system

- Complementing the DISA benchmark using the SSG content Knowledgebase article

6.5. Remediating the system to align with a specific baseline using an SSG Ansible playbook

You can remediate your system to align with a specific baseline by using an Ansible playbook file from the SCAP Security Guide project. This example uses the Health Insurance Portability and Accountability Act (HIPAA) profile, but you can remediate to align with any other profile provided by the SCAP Security Guide. For the details on listing the available profiles, see the Viewing profiles for configuration compliance section.

If not used carefully, running the system evaluation with the Remediate option enabled might render the system non-functional. Red Hat does not provide any automated method to revert changes made by security-hardening remediations. Remediations are supported on RHEL systems in the default configuration. If your system has been altered after the installation, running remediation might not make it compliant with the required security profile.

Prerequisites

- The scap-security-guide package is installed.

- The ansible-core package is installed. See the Ansible Installation Guide for more information.

- RHEL 8.6 or later is installed. For more information about installing RHEL, see Interactively installing RHEL from installation media.

Note: In RHEL 8.5 and earlier versions, Ansible packages were provided through Ansible Engine instead of Ansible Core, and with a different level of support. Do not use Ansible Engine because the packages might not be compatible with Ansible automation content in RHEL 8.6 and later. For more information, see Scope of support for the Ansible Core package included in the RHEL 9 and RHEL 8.6 and later AppStream repositories.

Procedure

Remediate your system to align with HIPAA by using Ansible:

# ansible-playbook -i localhost, -c local /usr/share/scap-security-guide/ansible/rhel8-playbook-hipaa.yml

Restart the system.
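Because remediation changes cannot be reverted automatically, you might want to preview a playbook before applying it. The following is a minimal sketch that reuses the inventory and connection options from the command in this procedure and adds the --check and --diff options of ansible-playbook to request a dry run. The function name preview_remediation_playbook is hypothetical. Note that check mode is only an approximation: tasks that do not support it are skipped or might report incomplete results.

```shell
# Hypothetical helper: run a remediation playbook against the local system in
# check mode, so that Ansible reports intended changes without applying them.
# Argument: path to the playbook file.
preview_remediation_playbook() {
    playbook="$1"
    ansible-playbook -i localhost, -c local --check --diff "$playbook"
}
```

For example, preview_remediation_playbook /usr/share/scap-security-guide/ansible/rhel8-playbook-hipaa.yml prints the tasks that would report changes on the local system.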

Verification

Evaluate the compliance of the system with the HIPAA profile, and save the scan results to a file:

# oscap xccdf eval --profile hipaa --report <scan-report.html> /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Replace <scan-report.html> with the file name where oscap saves the scan results.

Additional resources

- scap-security-guide(8) and oscap(8) man pages on your system

- Ansible Documentation

6.6. Creating a remediation Ansible playbook to align the system with a specific baseline

You can create an Ansible playbook containing only the remediations that are required to align your system with a specific baseline. This playbook is smaller because it does not cover already satisfied requirements. Creating the playbook does not modify your system in any way; you only prepare a file for later application. This example uses the Health Insurance Portability and Accountability Act (HIPAA) profile.

In RHEL 8.6, Ansible Engine is replaced by the ansible-core package, which contains only built-in modules. Note that many Ansible remediations use modules from the community and Portable Operating System Interface (POSIX) collections, which are not included in the built-in modules. In this case, you can use Bash remediations as a substitute for Ansible remediations. The Red Hat Connector in RHEL 8.6 includes the Ansible modules necessary for the remediation playbooks to function with Ansible Core.

Prerequisites

- The scap-security-guide package is installed.

Procedure

Scan the system and save the results:

# oscap xccdf eval --profile hipaa --results <hipaa-results.xml> /usr/share/xml/scap/ssg/content/ssg-rhel8-ds.xml

Find the value of the result ID in the file with the results:

# oscap info <hipaa-results.xml>

Generate an Ansible playbook based on the file generated in step 1:

# oscap xccdf generate fix --fix-type ansible --result-id <xccdf_org.open-scap_testresult_xccdf_org.ssgproject.content_profile_hipaa> --output <hipaa-remediations.yml> <hipaa-results.xml>

Review the generated file, which contains the Ansible remediations for rules that failed during the scan performed in step 1. After reviewing this generated file, you can apply it by using the ansible-playbook <hipaa-remediations.yml> command.
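If you script these steps, you can read the result ID directly from the results file instead of copying it from the oscap info output. The following is a minimal sketch: the function name first_result_id is hypothetical, and the sketch assumes that the results file contains a TestResult element whose id attribute is on the same line as the opening tag.

```shell
# Hypothetical helper: print the id attribute of the first TestResult element
# found in an XCCDF results file.
# Assumption: the id attribute is on the same line as the opening tag.
first_result_id() {
    results_file="$1"
    sed -n 's/.*TestResult[^>]* id="\([^"]*\)".*/\1/p' "$results_file" | head -n 1
}
```

For example, first_result_id <hipaa-results.xml> prints a value such as xccdf_org.open-scap_testresult_xccdf_org.ssgproject.content_profile_hipaa, which you can pass to the --result-id option. Compare the value with the oscap info output before relying on it.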

Verification

- In a text editor of your choice, verify that the generated <hipaa-remediations.yml> file contains rules that failed in the scan performed in step 1.

Additional resources

- scap-security-guide(8) and oscap(8) man pages on your system

- Ansible Documentation

6.7. Creating a remediation Bash script for a later application

Use this procedure to create a Bash script containing remediations that align your system with a security profile such as HIPAA. These steps do not modify your system; you only prepare a file for later application.

Prerequisites

- The scap-security-guide package is installed on your RHEL system.

Procedure

Use the