Prepare your environment

Planning, limits, and scalability for Red Hat OpenShift Service on AWS

Abstract

Chapter 1. Prerequisites checklist for deploying ROSA using STS

This is a high-level checklist of prerequisites needed to create a Red Hat OpenShift Service on AWS (ROSA) (classic architecture) cluster with STS.

The machine that you run the installation process from must have access to the following:

- Amazon Web Services API and authentication service endpoints

- Red Hat OpenShift API and authentication service endpoints (api.openshift.com and sso.redhat.com)

- Internet connectivity to obtain installation artifacts

Starting with version 1.2.7 of the ROSA CLI, all OIDC provider endpoint URLs on new clusters use Amazon CloudFront and the oidc.op1.openshiftapps.com domain. This change improves access speed, reduces latency, and improves resiliency for new clusters created with the ROSA CLI 1.2.7 or later. There are no supported migration paths for existing OIDC provider configurations.

1.1. Accounts and permissions

Ensure that you have the following accounts, credentials, and permissions.

1.1.1. AWS account

- Create an AWS account if you do not already have one.

- Gather the credentials required to log in to your AWS account.

- Ensure that your AWS account has sufficient permissions to use the ROSA CLI. See Least privilege permissions for common ROSA CLI commands.

- Enable ROSA for your AWS account on the AWS console.

  - If your account is the management account for your organization (used for AWS billing purposes), you must have aws-marketplace:Subscribe permissions available on your account. See Service control policy (SCP) prerequisites for more information, or see the AWS documentation for troubleshooting: AWS Organizations service control policy denies required AWS Marketplace permissions.

1.1.2. Red Hat account

- Create a Red Hat account for the Red Hat Hybrid Cloud Console if you do not already have one.

- Gather the credentials required to log in to your Red Hat account.

1.2. CLI requirements

You need to download and install several CLI (command line interface) tools to be able to deploy a cluster.

1.2.1. AWS CLI (aws)

- Install the AWS Command Line Interface.

- Log in to your AWS account using the AWS CLI: Sign in through the AWS CLI

Verify your account identity:

$ aws sts get-caller-identity

Check whether the service role for ELB (Elastic Load Balancing) exists:

$ aws iam get-role --role-name "AWSServiceRoleForElasticLoadBalancing"

If the role does not exist, create it by running the following command:

$ aws iam create-service-linked-role --aws-service-name "elasticloadbalancing.amazonaws.com"
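You can script the check-then-create steps above. The following is a minimal Python sketch that assumes the aws CLI is on PATH and already authenticated. The function name ensure_elb_service_linked_role is hypothetical. The command runner is a parameter only so that the logic can be exercised without calling AWS.

```python
import subprocess

ROLE_NAME = "AWSServiceRoleForElasticLoadBalancing"
SERVICE_NAME = "elasticloadbalancing.amazonaws.com"


def ensure_elb_service_linked_role(run=subprocess.run) -> bool:
    """Create the ELB service-linked role if it is missing (hypothetical helper).

    Returns True if the role was created and False if it already existed.
    `run` defaults to subprocess.run and is injectable only for testing.
    """
    probe = run(
        ["aws", "iam", "get-role", "--role-name", ROLE_NAME],
        capture_output=True,
        text=True,
    )
    if probe.returncode == 0:
        return False  # the role already exists
    error = probe.stderr or ""
    # Create the role only when AWS reports it as missing; surface any other
    # failure (expired credentials, denied permissions) instead of guessing.
    if "NoSuchEntity" not in error:
        raise RuntimeError(f"aws iam get-role failed: {error.strip()}")
    run(
        ["aws", "iam", "create-service-linked-role",
         "--aws-service-name", SERVICE_NAME],
        capture_output=True,
        text=True,
        check=True,
    )
    return True
```

Calling ensure_elb_service_linked_role() with no arguments runs the two aws commands shown above. The function returns False if the role was already present.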

1.2.2. ROSA CLI (rosa)

- Install the ROSA CLI from the web console. See Installing the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa for detailed instructions.

Log in to your Red Hat account by running rosa login and following the instructions in the command output:

$ rosa login
To login to your Red Hat account, get an offline access token at https://console.redhat.com/openshift/token/rosa
? Copy the token and paste it here:

Alternatively, you can copy the full $ rosa login --token=abc… command and paste that in the terminal:

$ rosa login --token=<abc..>

Confirm you are logged in using the correct account and credentials:

$ rosa whoami

1.2.3. OpenShift CLI (oc)

The OpenShift CLI (oc) is not required to deploy a Red Hat OpenShift Service on AWS cluster, but is a useful tool for interacting with your cluster after it is deployed.

- Download and install `oc` from the OpenShift Cluster Manager Command-line interface (CLI) tools page, or follow the instructions in Getting started with the OpenShift CLI.

Verify that the OpenShift CLI has been installed correctly by running the following command:

$ rosa verify openshift-client

1.3. AWS infrastructure prerequisites

Optionally, ensure that your AWS account has sufficient quota available to deploy a cluster.

$ rosa verify quota

This command only checks the total quota allocated to your account; it does not reflect the amount of quota already consumed from that quota. Running this command is optional because your quota is verified during cluster deployment. However, Red Hat recommends running this command to confirm your quota ahead of time so that deployment is not interrupted by issues with quota availability.

- For more information about resources provisioned during ROSA cluster deployment, see Provisioned AWS Infrastructure.

- For more information about the required AWS service quotas, see Required AWS service quotas.

1.4. Service Control Policy (SCP) prerequisites

ROSA clusters are hosted in an AWS account within an AWS organizational unit. A service control policy (SCP), which manages the services that the AWS sub-accounts are permitted to access, is created and applied to the AWS organizational unit.

- Ensure that your organization’s SCPs are not more restrictive than the roles and policies required by the cluster. For more information, see the Minimum set of effective permissions for SCPs.

- When you create a ROSA cluster, an associated AWS OpenID Connect (OIDC) identity provider is created.

1.5. Networking prerequisites

The following prerequisites apply to your network.

1.5.1. Minimum bandwidth

During cluster deployment, Red Hat OpenShift Service on AWS requires a minimum bandwidth of 120 Mbps between cluster resources and public internet resources. When network connectivity is slower than 120 Mbps (for example, when connecting through a proxy), the cluster installation process times out and deployment fails.

After deployment, network requirements are determined by your workload. However, a minimum bandwidth of 120 Mbps helps to ensure timely cluster and Operator upgrades.
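To see why a slow link leads to timeouts, the following Python sketch converts bandwidth into transfer time. The 10 GB payload is a hypothetical figure chosen for illustration. This page does not state how much data an installation actually downloads.

```python
def transfer_seconds(size_gb: float, bandwidth_mbps: float) -> float:
    """Ideal time in seconds to move size_gb gigabytes at bandwidth_mbps.

    Uses decimal units (1 GB = 10**9 bytes, 1 Mbps = 10**6 bits per second)
    and ignores protocol overhead and latency, so real transfers are slower.
    """
    bits = size_gb * 8 * 10**9
    return bits / (bandwidth_mbps * 10**6)


# Hypothetical payload: 10 GB at the 120 Mbps minimum versus a 30 Mbps proxy link.
for mbps in (120, 30):
    print(f"{mbps} Mbps: {transfer_seconds(10, mbps) / 60:.1f} minutes")
```

Under these assumptions, the same payload takes about 11 minutes at 120 Mbps and about 44 minutes at 30 Mbps. Transfer time scales inversely with bandwidth.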

1.5.2. Firewall

- Configure your firewall to allow access to the domains and ports listed in AWS firewall prerequisites.

1.6. VPC requirements for PrivateLink clusters

If you choose to deploy a PrivateLink cluster, you must deploy the cluster in a pre-existing bring-your-own (BYO) VPC:

- Create a public and private subnet for each AZ that your cluster uses.

  - Alternatively, implement a transit gateway for internet and egress with appropriate routes.

- The VPC's CIDR block must contain the Networking.MachineCIDR range, which is the IP address range for cluster machines.

  - The subnet CIDR blocks must belong to the machine CIDR that you specify.

- Set both enableDnsHostnames and enableDnsSupport to true.

  - That way, the cluster can use the Route 53 zones that are attached to the VPC to resolve cluster internal DNS records.
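You can check the CIDR rules above before installation. The following is a minimal Python sketch that uses the standard ipaddress module. The function name cidr_problems and the sample CIDRs are hypothetical. The sketch checks containment only. It does not detect overlap with peered or on-premises networks.

```python
import ipaddress
from typing import Iterable, List


def cidr_problems(vpc_cidr: str, machine_cidr: str, subnet_cidrs: Iterable[str]) -> List[str]:
    """Return human-readable problems; an empty list means the layout is consistent."""
    vpc = ipaddress.ip_network(vpc_cidr)
    machine = ipaddress.ip_network(machine_cidr)
    problems: List[str] = []
    # The VPC CIDR block must contain the machine CIDR (Networking.MachineCIDR).
    if not machine.subnet_of(vpc):
        problems.append(f"machine CIDR {machine} is not inside VPC CIDR {vpc}")
    # Every subnet CIDR block must belong to the machine CIDR.
    for cidr in subnet_cidrs:
        subnet = ipaddress.ip_network(cidr)
        if not subnet.subnet_of(machine):
            problems.append(f"subnet {subnet} is outside machine CIDR {machine}")
    return problems
```

For example, cidr_problems("10.0.0.0/16", "10.0.0.0/16", ["10.0.0.0/19", "10.0.32.0/19"]) returns an empty list. A subnet such as 10.0.128.0/19 is reported if the machine CIDR is 10.0.0.0/17.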

Verify route tables by running:

$ aws ec2 describe-route-tables --filters "Name=vpc-id,Values=<vpc-id>"

- Ensure that the cluster can egress either through a NAT gateway in a public subnet or through a transit gateway.

- Ensure that any user-defined routing (UDR) you want to use is set up.

- You can also configure a cluster-wide proxy during or after installation. See Configuring a cluster-wide proxy for more details.
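To review the output of the describe-route-tables command above, you can classify each table's IPv4 default route. The following minimal Python sketch reads the command's JSON output. The function name and the category labels are hypothetical. Route tables for private subnets would be expected to map to nat-gateway or transit-gateway.

```python
import json
from typing import Dict, Optional


def default_route_targets(route_tables_json: str) -> Dict[str, Optional[str]]:
    """Map each route table ID to the kind of target of its 0.0.0.0/0 route.

    Input is the JSON printed by `aws ec2 describe-route-tables`. A value of
    None means the table has no IPv4 default route, so subnets associated
    with it cannot egress to the internet.
    """
    targets: Dict[str, Optional[str]] = {}
    for table in json.loads(route_tables_json).get("RouteTables", []):
        target = None
        for route in table.get("Routes", []):
            if route.get("DestinationCidrBlock") != "0.0.0.0/0":
                continue  # only the default route decides egress
            if "NatGatewayId" in route:
                target = "nat-gateway"
            elif "TransitGatewayId" in route:
                target = "transit-gateway"
            elif route.get("GatewayId", "").startswith("igw-"):
                target = "internet-gateway"
            else:
                target = "other"
        targets[table["RouteTableId"]] = target
    return targets
```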

You can install a non-PrivateLink ROSA cluster in a pre-existing BYO VPC.

1.6.1. Additional custom security groups

During cluster creation, you can add additional custom security groups to a cluster that has an existing non-managed VPC. To do so, complete these prerequisites before you create the cluster:

- Create the custom security groups in AWS before you create the cluster.

- Associate the custom security groups with the VPC that you are using to create the cluster. Do not associate the custom security groups with any other VPC.

- You might need to request additional AWS quota for Security groups per network interface.

For more details, see the detailed requirements for Security groups.
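To confirm that each custom security group belongs only to the cluster VPC, you can inspect the output of `aws ec2 describe-security-groups --group-ids <sg-id> ...`. The following minimal Python sketch uses a hypothetical function name and sample IDs.

```python
import json
from typing import List


def groups_outside_vpc(security_groups_json: str, cluster_vpc_id: str) -> List[str]:
    """Return IDs of security groups whose VpcId is not the cluster VPC.

    Input is the JSON printed by `aws ec2 describe-security-groups`.
    """
    groups = json.loads(security_groups_json).get("SecurityGroups", [])
    return [g["GroupId"] for g in groups if g.get("VpcId") != cluster_vpc_id]
```

An empty result means every listed group is associated with the cluster VPC.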

1.6.2. Custom DNS and domains

You can configure a custom domain name server and custom domain name for your cluster. To do so, complete the following prerequisites before you create the cluster:

- By default, ROSA clusters require you to set the domain name servers option to AmazonProvidedDNS to ensure successful cluster creation and operation.

- To use a custom DNS server and domain name for your cluster, the ROSA installer must be able to use VPC DNS with default DHCP options so that it can resolve internal IPs and services. This means that you must create a custom DHCP option set to forward DNS lookups to your DNS server, and associate this option set with your VPC before you create the cluster. For more information, see Deploying ROSA with a custom DNS resolver.

Confirm that your VPC is using VPC Resolver by running the following command:

$ aws ec2 describe-dhcp-options
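The describe-dhcp-options command lists every DHCP option set in the region. To find the set that your VPC uses, check the DhcpOptionsId field in the output of `aws ec2 describe-vpcs --vpc-ids <vpc-id>`. The following minimal Python sketch extracts the domain-name-servers values for each option set. The function name is hypothetical.

```python
import json
from typing import Dict, List


def dns_servers_by_option_set(dhcp_json: str) -> Dict[str, List[str]]:
    """Map each DHCP option set ID to its domain-name-servers values.

    Input is the JSON printed by `aws ec2 describe-dhcp-options`.
    """
    result: Dict[str, List[str]] = {}
    for option_set in json.loads(dhcp_json).get("DhcpOptions", []):
        servers: List[str] = []
        for config in option_set.get("DhcpConfigurations", []):
            if config.get("Key") == "domain-name-servers":
                servers.extend(v["Value"] for v in config.get("Values", []))
        result[option_set["DhcpOptionsId"]] = servers
    return result
```

If the VPC's option set maps to ["AmazonProvidedDNS"], the VPC is using the default AWS resolver.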

Chapter 2. Detailed requirements for deploying ROSA using STS

Red Hat OpenShift Service on AWS (ROSA) provides a model that allows Red Hat to deploy clusters into a customer's existing Amazon Web Services (AWS) account.

AWS Security Token Service (STS) is the recommended credential mode for installing and interacting with clusters on Red Hat OpenShift Service on AWS because it provides enhanced security.

Ensure that the following prerequisites are met before installing your cluster.

2.1. Customer requirements when using STS for deployment

The following prerequisites must be complete before you deploy a Red Hat OpenShift Service on AWS (ROSA) cluster that uses the AWS Security Token Service (STS).

2.1.1. AWS account

- Your AWS account must allow sufficient quota to deploy your cluster.

- If your organization applies and enforces SCPs, these policies must not be more restrictive than the roles and policies required by the cluster.

- You can deploy native AWS services within the same AWS account.

- Your account must have a service-linked role to allow the installation program to configure Elastic Load Balancing (ELB). See "Creating the Elastic Load Balancing (ELB) service-linked role" for more information.

Additional resources

2.1.2. Support requirements

- Red Hat recommends that the customer have at least Business Support from AWS.

- Red Hat may have permission from the customer to request AWS support on their behalf.

- Red Hat may have permission from the customer to request AWS resource limit increases on the customer’s account.

- Red Hat manages the restrictions, limitations, expectations, and defaults for all Red Hat OpenShift Service on AWS clusters in the same manner, unless otherwise specified in this requirements section.

2.1.3. Security requirements

- Red Hat must have ingress access to EC2 hosts and the API server from allow-listed IP addresses.

- Red Hat must have egress allowed to the domains documented in the "Firewall prerequisites" section.

Additional resources

2.2. Requirements for using OpenShift Cluster Manager

The following configuration details are required only if you use OpenShift Cluster Manager to manage your clusters. If you use the CLI tools exclusively, then you can disregard these requirements.

2.2.1. AWS account association

When you provision Red Hat OpenShift Service on AWS (ROSA) using OpenShift Cluster Manager, you must associate the ocm-role and user-role IAM roles with your AWS account using your Amazon Resource Name (ARN). This association process is also known as account linking.

The ocm-role ARN is stored as a label in your Red Hat organization while the user-role ARN is stored as a label inside your Red Hat user account. Red Hat uses these ARN labels to confirm that the user is a valid account holder and that the correct permissions are available to perform provisioning tasks in the AWS account.

2.2.2. Associating your AWS account with IAM roles

You can associate or link your AWS account with existing IAM roles by using the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa.

Prerequisites

- You have an AWS account.

- You have the permissions required to install AWS account-wide roles. See the "Additional resources" of this section for more information.

- You have installed and configured the latest AWS (aws) and ROSA (rosa) CLIs on your installation host.

- You have created the ocm-role and user-role IAM roles, but have not yet linked them to your AWS account. You can check whether your IAM roles are already linked by running the following commands:

$ rosa list ocm-role

$ rosa list user-role

If Yes is displayed in the Linked column for both roles, you have already linked the roles to an AWS account.

Procedure

From the CLI, link your ocm-role resource to your Red Hat organization by using your Amazon Resource Name (ARN):

Note: You must have Red Hat Organization Administrator privileges to run the rosa link command. After you link the ocm-role resource with your AWS account, it is visible for all users in the organization.

$ rosa link ocm-role --role-arn <arn>

Example output

I: Linking OCM role
? Link the '<AWS ACCOUNT ID>' role with organization '<ORG ID>'? Yes
I: Successfully linked role-arn '<AWS ACCOUNT ID>' with organization account '<ORG ID>'

From the CLI, link your user-role resource to your Red Hat user account by using your Amazon Resource Name (ARN):

$ rosa link user-role --role-arn <arn>

Example output

I: Linking User role
? Link the 'arn:aws:iam::<ARN>:role/ManagedOpenShift-User-Role-125' role with organization '<AWS ID>'? Yes
I: Successfully linked role-arn 'arn:aws:iam::<ARN>:role/ManagedOpenShift-User-Role-125' with organization account '<AWS ID>'
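Both rosa link commands take a full role ARN. A quick format check before you run them can catch copy-and-paste mistakes, such as a truncated account ID. The following Python helper is hypothetical. It assumes the standard IAM role ARN layout and covers only the aws, aws-cn, and aws-us-gov partitions.

```python
import re

# Partition, 12-digit AWS account ID, then "role/" followed by an optional path and the role name.
ROLE_ARN = re.compile(
    r"arn:aws(?:-cn|-us-gov)?:iam::(?P<account>\d{12}):role/(?P<name>[\w+=,.@/-]+)"
)


def parse_role_arn(arn: str) -> dict:
    """Return the account ID and role name (including any path) from an IAM role ARN."""
    match = ROLE_ARN.fullmatch(arn)
    if match is None:
        raise ValueError(f"not an IAM role ARN: {arn!r}")
    return match.groupdict()
```

For example, parse_role_arn("arn:aws:iam::123456789012:role/ManagedOpenShift-User-Role-125") returns the account ID 123456789012 and the role name. An ARN with a short account ID raises ValueError.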

Additional resources

- See Account-wide IAM role and policy reference for a list of IAM roles needed for cluster creation.

2.2.3. Associating multiple AWS accounts with your Red Hat organization

You can associate multiple AWS accounts with your Red Hat organization. Associating multiple accounts lets you create Red Hat OpenShift Service on AWS (ROSA) clusters on any of the associated AWS accounts from your Red Hat organization.

With this feature, you can create clusters in different AWS regions by using multiple AWS profiles as region-bound environments.

Prerequisites

- You have an AWS account.

- You are using OpenShift Cluster Manager to create clusters.

- You have the permissions required to install AWS account-wide roles.

- You have installed and configured the latest AWS (aws) and ROSA (rosa) CLIs on your installation host.

- You have created your ocm-role and user-role IAM roles.

Procedure

To associate an additional AWS account, first create a profile in your local AWS configuration. Then, associate the account with your Red Hat organization by creating the ocm-role, user, and account roles in the additional AWS account.

To create the roles in an additional AWS account, specify the --profile <aws_profile> parameter when running the rosa create commands, and replace <aws_profile> with the additional account profile name:

To specify an AWS account profile when creating an OpenShift Cluster Manager role:

$ rosa create --profile <aws_profile> ocm-role

To specify an AWS account profile when creating a user role:

$ rosa create --profile <aws_profile> user-role

To specify an AWS account profile when creating the account roles:

$ rosa create --profile <aws_profile> account-roles

If you do not specify a profile, the default AWS profile is used.
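When you onboard several accounts, you can generate the three commands above for each profile. The following minimal Python sketch only builds the argument lists and does not run them. The profile name team-b is a hypothetical example.

```python
from typing import List, Optional

ROLE_KINDS = ("ocm-role", "user-role", "account-roles")


def rosa_create_role_commands(profile: Optional[str] = None) -> List[List[str]]:
    """Build the rosa create commands for one AWS account profile.

    With profile=None, --profile is omitted, so rosa uses the default AWS profile.
    """
    base = ["rosa", "create"]
    if profile:
        base += ["--profile", profile]
    return [base + [kind] for kind in ROLE_KINDS]


for command in rosa_create_role_commands("team-b"):
    print(" ".join(command))
```

This prints the ocm-role, user-role, and account-roles commands in the same form as the examples above, each with --profile team-b. You can pass each list to subprocess.run to execute it.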

2.3. Requirements for deploying a cluster in an opt-in region

An AWS opt-in region is a region that is not enabled by default. If you want to deploy a Red Hat OpenShift Service on AWS (ROSA) cluster that uses the AWS Security Token Service (STS) in an opt-in region, you must meet the following requirements:

- The region must be enabled in your AWS account. For more information about enabling opt-in regions, see Managing AWS Regions in the AWS documentation.

- The security token version in your AWS account must be set to version 2. You cannot use version 1 security tokens for opt-in regions.

  Important: Updating to security token version 2 can impact the systems that store the tokens, due to the increased token length. For more information, see the AWS documentation on setting STS preferences.

2.3.1. Setting the AWS security token version

If you want to create a Red Hat OpenShift Service on AWS (ROSA) cluster with the AWS Security Token Service (STS) in an AWS opt-in region, you must set the security token version to version 2 in your AWS account.

Prerequisites

- You have installed and configured the latest AWS CLI on your installation host.

Procedure

List the ID of the AWS account that is defined in your AWS CLI configuration:

$ aws sts get-caller-identity --query Account --output json

Ensure that the output matches the ID of the relevant AWS account.

List the security token version that is set in your AWS account:

$ aws iam get-account-summary --query SummaryMap.GlobalEndpointTokenVersion --output json

Example output

1

To update the security token version to version 2 for all regions in your AWS account, run the following command:

$ aws iam set-security-token-service-preferences --global-endpoint-token-version v2Token

Important: Updating to security token version 2 can impact the systems that store the tokens, due to the increased token length. For more information, see the AWS documentation on setting STS preferences.
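The procedure above reduces to a read, a comparison, and an optional update. The following minimal Python sketch makes the decision from the output of the get-account-summary query. The function name is hypothetical. The sketch prints the update command instead of running it.

```python
import json

UPDATE_COMMAND = [
    "aws", "iam", "set-security-token-service-preferences",
    "--global-endpoint-token-version", "v2Token",
]


def needs_token_v2_update(token_version_output: str) -> bool:
    """Return True if the STS global endpoint token version is below 2.

    Input is the output of:
    aws iam get-account-summary --query SummaryMap.GlobalEndpointTokenVersion --output json
    which prints a bare number such as 1 or 2.
    """
    return int(json.loads(token_version_output)) < 2


if needs_token_v2_update("1\n"):  # sample output matching the example above
    print(" ".join(UPDATE_COMMAND))
```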

2.4. Red Hat managed IAM references for AWS

When you use STS as your cluster credential method, Red Hat is not responsible for creating and managing Amazon Web Services (AWS) IAM policies, IAM users, or IAM roles. For information on creating these roles and policies, see the following sections on IAM roles.

- To use the ocm CLI, you must have an ocm-role and user-role resource. See OpenShift Cluster Manager IAM role resources.

- If you have a single cluster, see Account-wide IAM role and policy reference.

- For each cluster, you must have the necessary operator roles. See Cluster-specific Operator IAM role reference.

2.5. Provisioned AWS Infrastructure

This is an overview of the provisioned Amazon Web Services (AWS) components on a deployed Red Hat OpenShift Service on AWS (ROSA) cluster.

2.5.1. EC2 instances

AWS EC2 instances are required to deploy the control plane and data plane functions for Red Hat OpenShift Service on AWS.

Instance types can vary for control plane and infrastructure nodes, depending on the worker node count.

At a minimum, the following EC2 instances are deployed:

- Three m5.2xlarge control plane nodes

- Two r5.xlarge infrastructure nodes

- Two m5.xlarge worker nodes

The instance type shown for worker nodes is the default value, but you can customize the instance type for worker nodes according to the needs of your workload.

For further guidance on worker node counts, see the information about initial planning considerations in the "Limits and scalability" topic listed in the "Additional resources" section of this page.

2.5.2. Amazon Elastic Block Store storage

Amazon Elastic Block Store (Amazon EBS) block storage is used for both local node storage and persistent volume storage. The following values are the default size of the local, ephemeral storage provisioned for each EC2 instance.

Volume requirements for each EC2 instance:

Control Plane Volume

- Size: 350GB

- Type: gp3

- Input/Output Operations Per Second: 1000

Infrastructure Volume

- Size: 300GB

- Type: gp3

- Input/Output Operations Per Second: 900

Worker Volume

- Default size: 300GB

- Minimum size: 128GB

- Type: gp3

- Input/Output Operations Per Second: 900

Clusters deployed before the release of OpenShift Container Platform 4.11 use gp2 type storage by default.
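For quota planning, you can total the minimum instances and default volume sizes listed above. The following Python sketch sums vCPUs and root-volume storage. The vCPU counts are AWS's published specifications for these instance types (8 for m5.2xlarge, 4 for r5.xlarge and m5.xlarge) and do not come from this page. The sketch covers only the minimum default cluster, before worker scaling or persistent volumes.

```python
# vCPUs per instance type, from AWS's published instance specifications.
VCPUS = {"m5.2xlarge": 8, "r5.xlarge": 4, "m5.xlarge": 4}

# (node role, count, instance type, default root volume size in GB), from the lists above.
MINIMUM_NODES = [
    ("control plane", 3, "m5.2xlarge", 350),
    ("infrastructure", 2, "r5.xlarge", 300),
    ("worker", 2, "m5.xlarge", 300),
]


def minimum_footprint(nodes=MINIMUM_NODES) -> dict:
    """Sum vCPUs and root-volume storage (GB) across all nodes."""
    totals = {"vcpus": 0, "storage_gb": 0}
    for _role, count, instance_type, size_gb in nodes:
        totals["vcpus"] += count * VCPUS[instance_type]
        totals["storage_gb"] += count * size_gb
    return totals


print(minimum_footprint())  # {'vcpus': 40, 'storage_gb': 2250}
```

To estimate a larger cluster, replace the worker entry. For example, four m5.xlarge workers give 48 vCPUs and 2850 GB.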

2.5.3. Elastic Load Balancing

Each cluster can use up to two Classic Load Balancers for the application router and up to two Network Load Balancers for the API.

For more information, see the ELB documentation for AWS.

2.5.4. S3 storage

The image registry is backed by AWS S3 storage. Resources are pruned regularly to optimize S3 usage and cluster performance.

Two buckets are required with a typical size of 2TB each.

2.5.5. VPC

Configure your VPC according to the following requirements:

Subnets: Two subnets for a cluster with a single availability zone, or six subnets for a cluster with multiple availability zones.

Red Hat strongly recommends using unique subnets for each cluster. Sharing subnets between multiple clusters is not recommended.

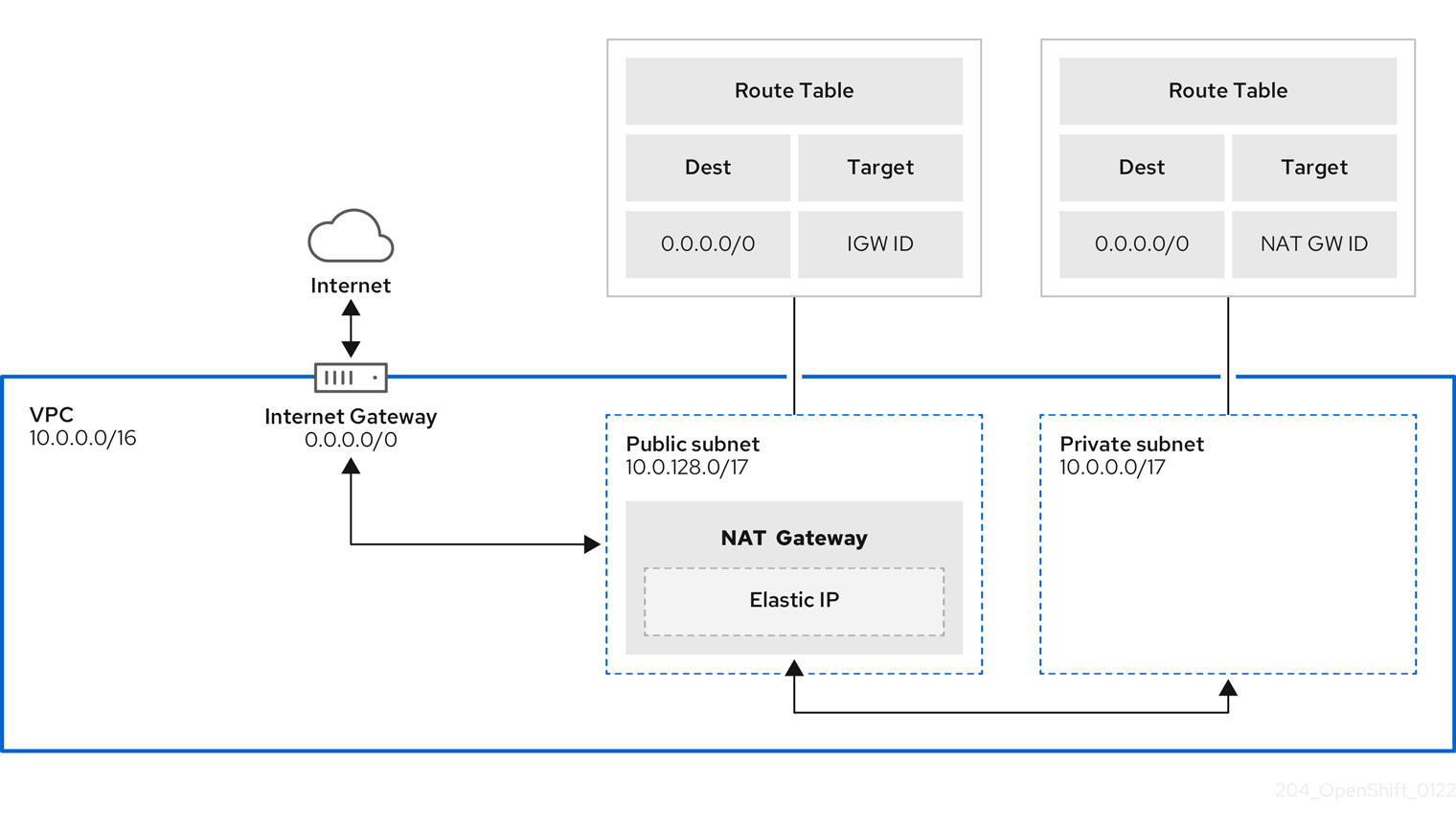

Note: A public subnet connects directly to the internet through an internet gateway. A private subnet connects to the internet through a network address translation (NAT) gateway.

- Route tables: One route table per private subnet, and one additional table per cluster.

- Internet gateways: One Internet Gateway per cluster.

- NAT gateways: One NAT Gateway per public subnet.

Figure 2.1. Sample VPC Architecture
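You can turn the per-subnet and per-cluster rules above into component counts. The following Python sketch assumes one public and one private subnet per availability zone, and three zones for a multi-AZ cluster. This is an assumption, but it is consistent with the six subnets stated above. The function name is hypothetical.

```python
def vpc_footprint(multi_az: bool) -> dict:
    """Count VPC components from the rules above for one cluster."""
    zones = 3 if multi_az else 1  # assumption: multi-AZ clusters span three zones
    public_subnets = private_subnets = zones  # one of each per zone
    return {
        "subnets": public_subnets + private_subnets,
        "route_tables": private_subnets + 1,  # one per private subnet, plus one per cluster
        "internet_gateways": 1,               # one per cluster
        "nat_gateways": public_subnets,       # one per public subnet
    }
```

Under these assumptions, a single-AZ cluster needs 2 subnets, 2 route tables, 1 internet gateway, and 1 NAT gateway. A multi-AZ cluster needs 6, 4, 1, and 3.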

2.5.6. Security groups

AWS security groups provide security at the protocol and port access level; they are associated with EC2 instances and Elastic Load Balancing (ELB) load balancers. Each security group contains a set of rules that filter traffic coming in and out of one or more EC2 instances.

Ensure that the ports required for cluster installation and operation are open on your network and configured to allow access between hosts. The requirements for the default security groups are listed in Required ports for default security groups.

The default security groups are MasterSecurityGroup, WorkerSecurityGroup, and BootstrapSecurityGroup. See Required ports for default security groups for the type, IP protocol, and port range of each rule.

2.5.6.1. Additional custom security groups

When you create a cluster using an existing non-managed VPC, you can add additional custom security groups during cluster creation. Custom security groups are subject to the following limitations:

- You must create the custom security groups in AWS before you create the cluster. For more information, see Amazon EC2 security groups for Linux instances.

- You must associate the custom security groups with the VPC that the cluster will be installed into. Your custom security groups cannot be associated with another VPC.

- You might need to request additional quota for your VPC if you are adding additional custom security groups. For information on AWS quota requirements for ROSA, see Required AWS service quotas in Prepare your environment. For information on requesting an AWS quota increase, see Requesting a quota increase.

2.6. Networking prerequisites

2.6.1. Minimum bandwidth

During cluster deployment, Red Hat OpenShift Service on AWS requires a minimum bandwidth of 120 Mbps between cluster resources and public internet resources. When network connectivity is slower than 120 Mbps (for example, when connecting through a proxy), the cluster installation process times out and deployment fails.

After deployment, network requirements are determined by your workload. However, a minimum bandwidth of 120 Mbps helps to ensure timely cluster and Operator upgrades.

2.6.2. AWS firewall prerequisites

If you are using a firewall to control egress traffic from your Red Hat OpenShift Service on AWS cluster, you must configure your firewall to grant access to the domain and port combinations below. Red Hat OpenShift Service on AWS requires this access to provide a fully managed OpenShift service.

2.6.2.1. ROSA Classic

Only ROSA clusters deployed with PrivateLink can use a firewall to control egress traffic.

Prerequisites

- You have configured an Amazon S3 gateway endpoint in your AWS Virtual Private Cloud (VPC). This endpoint is required to complete requests from the cluster to the Amazon S3 service.

Procedure

Allowlist the following URLs that are used to install and download packages and tools:

| Domain | Port | Function |
|---|---|---|
| registry.redhat.io | 443 | Provides core container images. |
| quay.io | 443 | Provides core container images. |
| cdn01.quay.io | 443 | Provides core container images. |
| cdn02.quay.io | 443 | Provides core container images. |
| cdn03.quay.io | 443 | Provides core container images. |
| cdn04.quay.io | 443 | Provides core container images. |
| cdn05.quay.io | 443 | Provides core container images. |
| cdn06.quay.io | 443 | Provides core container images. |
| sso.redhat.com | 443 | Required. The https://console.redhat.com/openshift site uses authentication from sso.redhat.com to download the pull secret and use Red Hat SaaS solutions to facilitate monitoring of your subscriptions, cluster inventory, chargeback reporting, and so on. |
| quay-registry.s3.amazonaws.com | 443 | Provides core container images. |
| quayio-production-s3.s3.amazonaws.com | 443 | Provides core container images. |
| registry.access.redhat.com | 443 | Hosts all the container images that are stored on the Red Hat Ecosystem Catalog. Additionally, the registry provides access to the odo CLI tool that helps developers build on OpenShift and Kubernetes. |
| access.redhat.com | 443 | Required. Hosts a signature store that a container client requires for verifying images when pulling them from registry.access.redhat.com. |
| registry.connect.redhat.com | 443 | Required for all third-party images and certified Operators. |
| console.redhat.com | 443 | Required. Allows interactions between the cluster and OpenShift Cluster Manager to enable functionality, such as scheduling upgrades. |
| sso.redhat.com | 443 | The https://console.redhat.com/openshift site uses authentication from sso.redhat.com. |
| pull.q1w2.quay.rhcloud.com | 443 | Provides core container images as a fallback when quay.io is not available. |
| catalog.redhat.com | 443 | The registry.access.redhat.com and https://registry.redhat.io sites redirect through catalog.redhat.com. |
| oidc.op1.openshiftapps.com | 443 | Used by ROSA for STS implementation with managed OIDC configuration. |

Allowlist the following telemetry URLs:

| Domain | Port | Function |
|---|---|---|
| cert-api.access.redhat.com | 443 | Required for telemetry. |
| api.access.redhat.com | 443 | Required for telemetry. |
| infogw.api.openshift.com | 443 | Required for telemetry. |
| console.redhat.com | 443 | Required for telemetry and Red Hat Insights. |
| observatorium-mst.api.openshift.com | 443 | Required for managed OpenShift-specific telemetry. |
| observatorium.api.openshift.com | 443 | Required for managed OpenShift-specific telemetry. |

Managed clusters require enabling telemetry to allow Red Hat to react more quickly to problems, better support the customers, and better understand how product upgrades impact clusters. For more information about how remote health monitoring data is used by Red Hat, see About remote health monitoring in the Additional resources section.

Allowlist the following Amazon Web Services (AWS) API URLs:

| Domain | Port | Function |
|---|---|---|
| .amazonaws.com | 443 | Required to access AWS services and resources. |

Alternatively, if you choose not to use a wildcard for Amazon Web Services (AWS) APIs, you must allowlist the following URLs:

| Domain | Port | Function |
|---|---|---|
| ec2.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| events.<aws_region>.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| iam.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| route53.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| sts.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment, for clusters configured to use the global endpoint for AWS STS. |
| sts.<aws_region>.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment, for clusters configured to use regionalized endpoints for AWS STS. See AWS STS regionalized endpoints for more information. |
| tagging.us-east-1.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. This endpoint is always us-east-1, regardless of the region the cluster is deployed in. |
| ec2.<aws_region>.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| elasticloadbalancing.<aws_region>.amazonaws.com | 443 | Used to install and manage clusters in an AWS environment. |
| tagging.<aws_region>.amazonaws.com | 443 | Allows the assignment of metadata about AWS resources in the form of tags. |

Allowlist the following OpenShift URLs:

| Domain | Port | Function |
|---|---|---|
| mirror.openshift.com | 443 | Used to access mirrored installation content and images. This site is also a source of release image signatures. |
| api.openshift.com | 443 | Used to check if updates are available for the cluster. |

Allowlist the following site reliability engineering (SRE) and management URLs:

| Domain | Port | Function |
|---|---|---|
| api.pagerduty.com | 443 | This alerting service is used by the in-cluster alertmanager to send alerts notifying Red Hat SRE of an event to take action on. |
| events.pagerduty.com | 443 | This alerting service is used by the in-cluster alertmanager to send alerts notifying Red Hat SRE of an event to take action on. |
| api.deadmanssnitch.com | 443 | Alerting service used by Red Hat OpenShift Service on AWS to send periodic pings that indicate whether the cluster is available and running. |
| nosnch.in | 443 | Alerting service used by Red Hat OpenShift Service on AWS to send periodic pings that indicate whether the cluster is available and running. |
| http-inputs-osdsecuritylogs.splunkcloud.com | 443 | Required. Used by the splunk-forwarder-operator as a logging forwarding endpoint to be used by Red Hat SRE for log-based alerting. |
| sftp.access.redhat.com (Recommended) | 22 | The SFTP server used by must-gather-operator to upload diagnostic logs to help troubleshoot issues with the cluster. |
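When you audit firewall rules or proxy logs against the tables above, a small matcher can flag destinations that fall outside the allowlist. The following Python sketch is hypothetical. Its sample list copies only a few entries from the tables. It assumes that an entry with a leading dot (such as .amazonaws.com) matches any subdomain, and that other entries must match exactly. Check how your own firewall interprets such entries.

```python
from typing import Iterable, Tuple

# A few (domain, port) entries copied from the tables above; extend as needed.
ALLOWLIST = [
    ("registry.redhat.io", 443),
    ("quay.io", 443),
    (".amazonaws.com", 443),
    ("sftp.access.redhat.com", 22),
]


def is_allowed(host: str, port: int, allowlist: Iterable[Tuple[str, int]] = ALLOWLIST) -> bool:
    """Return True if host:port matches an allowlist entry (assumed matching rules)."""
    host = host.lower().rstrip(".")
    for domain, allowed_port in allowlist:
        if port != allowed_port:
            continue
        if domain.startswith("."):
            # Leading dot: treat as "any subdomain of" this domain.
            if host.endswith(domain):
                return True
        elif host == domain:
            return True
    return False
```

For example, is_allowed("ec2.us-east-1.amazonaws.com", 443) returns True. is_allowed("sftp.access.redhat.com", 443) returns False because that host is listed only for port 22.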

Additional resources

2.7. Next steps

2.8. Additional resources

Chapter 3. ROSA IAM role resources

You must create several role resources on your AWS account in order to create and manage a Red Hat OpenShift Service on AWS (ROSA) cluster.

3.1. Overview of required roles

To create and manage your Red Hat OpenShift Service on AWS cluster, you must create several account-wide and cluster-wide roles. If you intend to use OpenShift Cluster Manager to create or manage your cluster, you need some additional roles.

- To create and manage clusters

  Several account-wide roles are required to create and manage ROSA clusters. These roles only need to be created once per AWS account, and do not need to be created fresh for each cluster. You can specify your own prefix, or use the default prefix (ManagedOpenShift).

  The following account-wide roles are required:

  - <prefix>-Worker-Role

  - <prefix>-Support-Role

  - <prefix>-Installer-Role

  - <prefix>-ControlPlane-Role

  Note: Role creation does not request your AWS access or secret keys. AWS Security Token Service (STS) is used as the basis of this workflow. AWS STS uses temporary, limited-privilege credentials to provide authentication.

- To manage cluster features provided by Operators

  Cluster-specific Operator roles (operator-roles in the ROSA CLI) obtain the temporary permissions required to carry out cluster operations for features provided by Operators, such as managing back-end storage, ingress, and registry. This requires the configuration of an OpenID Connect (OIDC) provider, which connects to AWS Security Token Service (STS) to authenticate Operator access to AWS resources.

  Operator roles are required for every cluster, as several Operators are used to provide cluster features by default.

  The following Operator roles are required:

  - <cluster_name>-<hash>-openshift-cluster-csi-drivers-ebs-cloud-credentials

  - <cluster_name>-<hash>-openshift-cloud-network-config-controller-credentials

  - <cluster_name>-<hash>-openshift-machine-api-aws-cloud-credentials

  - <cluster_name>-<hash>-openshift-cloud-credential-operator-cloud-credentials

  - <cluster_name>-<hash>-openshift-image-registry-installer-cloud-credentials

  - <cluster_name>-<hash>-openshift-ingress-operator-cloud-credentials

- To use OpenShift Cluster Manager

  The web user interface, OpenShift Cluster Manager, requires you to create additional roles in your AWS account to create a trust relationship between that AWS account and OpenShift Cluster Manager.

  This trust relationship is achieved through the creation and association of the ocm-role AWS IAM role. This role has a trust policy with the AWS installer that links your Red Hat account to your AWS account. In addition, you also need a user-role AWS IAM role for each web UI user, which serves to identify these users. This user-role AWS IAM role has no permissions.

  The following AWS IAM roles are required to use OpenShift Cluster Manager:

  - ocm-role

  - user-role

Additional resources

3.2. About the ocm-role IAM resource

You must create the ocm-role IAM resource to enable a Red Hat organization of users to create Red Hat OpenShift Service on AWS (ROSA) clusters. Within the context of linking to AWS, a Red Hat organization is a single user within OpenShift Cluster Manager.

Some considerations for your ocm-role IAM resource are:

- Only one ocm-role IAM role can be linked per Red Hat organization; however, you can have any number of ocm-role IAM roles per AWS account. The web UI requires that only one of these roles be linked at a time.
- Any user in a Red Hat organization may create and link an ocm-role IAM resource.
- Only the Red Hat Organization Administrator can unlink an ocm-role IAM resource. This limitation protects other Red Hat organization members from disturbing the interface capabilities of other users.

  Note: If you just created a Red Hat account that is not part of an existing organization, this account is also the Red Hat Organization Administrator.
- See "Understanding the OpenShift Cluster Manager role" in the Additional resources of this section for a list of the AWS permissions policies for the basic and admin ocm-role IAM resources.

Using the ROSA CLI (rosa), you can link your IAM resource when you create it.

"Linking" or "associating" your IAM resources with your AWS account means creating a trust-policy with your ocm-role IAM role and the Red Hat OpenShift Cluster Manager AWS role. After creating and linking your IAM resource, you see a trust relationship from your ocm-role IAM resource in AWS with the arn:aws:iam::7333:role/RH-Managed-OpenShift-Installer resource.

After a Red Hat Organization Administrator has created and linked an ocm-role IAM resource, all organization members may want to create and link their own user-role IAM role. This IAM resource needs to be created and linked only once per user. If another user in your Red Hat organization has already created and linked an ocm-role IAM resource, you still need to ensure that you have created and linked your own user-role IAM role.
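To confirm that linking produced the expected trust relationship, you can inspect the trust policy of the ocm-role. The following is a minimal shell sketch, assuming a configured AWS CLI with iam:GetRole permission; the function names are hypothetical. It matches on the RH-Managed-OpenShift-Installer role name only, so it does not depend on the account ID, and it is a plain text match rather than a full policy evaluation.

```shell
# Return success if an IAM trust policy document (JSON text on stdin)
# mentions the Red Hat installer role. This is a text match, not a
# parse of the JSON or an evaluation of the policy.
trusts_rh_installer() {
  grep -q 'role/RH-Managed-OpenShift-Installer'
}

# Fetch the trust policy of a role in the current AWS account and check it.
# Usage: check_ocm_role_trust <role_name>
check_ocm_role_trust() {
  aws iam get-role --role-name "$1" \
    --query 'Role.AssumeRolePolicyDocument' --output json |
    trusts_rh_installer
}
```

For example, check_ocm_role_trust ManagedOpenShift-OCM-Role-182 returns a zero status if the trust policy of that role references the Red Hat installer role.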

Additional resources

3.2.1. Creating an ocm-role IAM role

You create your ocm-role IAM roles by using the command-line interface (CLI).

Prerequisites

- You have an AWS account.

- You have Red Hat Organization Administrator privileges in the OpenShift Cluster Manager organization.

- You have the permissions required to install AWS account-wide roles.

- You have installed and configured the latest Red Hat OpenShift Service on AWS (ROSA) CLI, rosa, on your installation host.

Procedure

To create an ocm-role IAM role with basic privileges, run the following command:

$ rosa create ocm-role

To create an ocm-role IAM role with admin privileges, run the following command:

$ rosa create ocm-role --admin

This command prompts you for the role attributes. The following example output shows the "auto mode" selected, which lets the ROSA CLI (rosa) create the role and its policies. See "Methods of account-wide role creation" for more information.

Example output

I: Creating ocm role
? Role prefix: ManagedOpenShift 1
? Enable admin capabilities for the OCM role (optional): No 2
? Permissions boundary ARN (optional): 3
? Role Path (optional): 4
? Role creation mode: auto 5
I: Creating role using 'arn:aws:iam::<ARN>:user/<UserName>'
? Create the 'ManagedOpenShift-OCM-Role-182' role? Yes 6
I: Created role 'ManagedOpenShift-OCM-Role-182' with ARN 'arn:aws:iam::<ARN>:role/ManagedOpenShift-OCM-Role-182'
I: Linking OCM role
? OCM Role ARN: arn:aws:iam::<ARN>:role/ManagedOpenShift-OCM-Role-182 7
? Link the 'arn:aws:iam::<ARN>:role/ManagedOpenShift-OCM-Role-182' role with organization '<AWS ARN>'? Yes 8
I: Successfully linked role-arn 'arn:aws:iam::<ARN>:role/ManagedOpenShift-OCM-Role-182' with organization account '<AWS ARN>'

1. A prefix value for all of the created AWS resources. In this example, ManagedOpenShift prepends all of the AWS resources.
2. Choose if you want this role to have the additional admin permissions. Note: You do not see this prompt if you used the --admin option.
3. The Amazon Resource Name (ARN) of the policy to set permission boundaries.
4. Specify an IAM path for the role.
5. Choose the method to create your AWS roles. Using auto, the ROSA CLI generates and links the roles and policies. In the auto mode, you receive some different prompts to create the AWS roles.
6. The auto method asks if you want to create a specific ocm-role using your prefix.
7. Confirm that you want to associate your IAM role with your OpenShift Cluster Manager.
8. Links the created role with your Red Hat organization.
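Each interactive prompt in the example output corresponds to a command-line option, which is useful for scripted setups. The following minimal sketch assumes the --mode, --prefix, and --yes options of rosa create ocm-role available in recent ROSA CLI releases; confirm them with rosa create ocm-role --help for your version. The wrapper function is hypothetical, and setting ROSA_BIN=echo prints the command instead of running it.

```shell
# Hypothetical wrapper: create an ocm-role in auto mode without prompts.
# Usage: create_ocm_role <prefix> [extra rosa options, for example --admin]
create_ocm_role() {
  local prefix="$1"
  shift
  # ROSA_BIN lets you substitute "echo" for a dry run.
  "${ROSA_BIN:-rosa}" create ocm-role --mode auto --prefix "$prefix" --yes "$@"
}
```

For example, create_ocm_role ManagedOpenShift --admin requests an ocm-role with admin privileges, using the ManagedOpenShift prefix and auto mode instead of the interactive answers.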

Additional resources

3.3. About the user-role IAM role

You need to create a user-role IAM role per web UI user to enable those users to create ROSA clusters.

Some considerations for your user-role IAM role are:

- You only need one user-role IAM role per Red Hat user account, but your Red Hat organization can have many of these IAM resources.
- Any user in a Red Hat organization may create and link a user-role IAM role.
- There can be numerous user-role IAM roles per AWS account per Red Hat organization.
- Red Hat uses the user-role IAM role to identify the user. This IAM resource has no AWS account permissions.
- Your AWS account can have multiple user-role IAM roles, but you must link each IAM role to its own user in your Red Hat organization. No user can have more than one linked user-role IAM role.
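Because the user-role IAM role carries no AWS permissions, one way to sanity-check a role is to confirm that it has no attached managed policies and no inline policies. The following minimal shell sketch assumes a configured AWS CLI with IAM read permissions; the function name is hypothetical, and treating "zero policies" as the expected state is an inference from the description above rather than a documented check. AWS_BIN can point at a stub for testing.

```shell
# Return success if the named role has no attached managed policies and
# no inline policies. Returns 2 if an AWS CLI call fails.
# Usage: user_role_has_no_permissions <role_name>
user_role_has_no_permissions() {
  local role="$1" attached inline
  # length() is a JMESPath function, so each query prints a single number.
  attached=$("${AWS_BIN:-aws}" iam list-attached-role-policies \
    --role-name "$role" --query 'length(AttachedPolicies)' --output text) || return 2
  inline=$("${AWS_BIN:-aws}" iam list-role-policies \
    --role-name "$role" --query 'length(PolicyNames)' --output text) || return 2
  [ "$attached" = "0" ] && [ "$inline" = "0" ]
}
```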

"Linking" or "associating" your IAM resources with your AWS account means creating a trust-policy with your user-role IAM role and the Red Hat OpenShift Cluster Manager AWS role. After creating and linking this IAM resource, you see a trust relationship from your user-role IAM role in AWS with the arn:aws:iam::710019948333:role/RH-Managed-OpenShift-Installer resource.

3.3.1. Creating a user-role IAM role

You can create your user-role IAM roles by using the command-line interface (CLI).

Prerequisites

- You have an AWS account.

- You have installed and configured the latest Red Hat OpenShift Service on AWS (ROSA) CLI, rosa, on your installation host.

Procedure

To create a user-role IAM role with basic privileges, run the following command:

$ rosa create user-role

This command prompts you for the role attributes. The following example output shows the "auto mode" selected, which lets the ROSA CLI (rosa) create and link your user-role IAM role. See "Understanding the auto and manual deployment modes" for more information.

Example output

I: Creating User role
? Role prefix: ManagedOpenShift 1
? Permissions boundary ARN (optional): 2
? Role Path (optional): 3
? Role creation mode: auto 4
I: Creating ocm user role using 'arn:aws:iam::2066:user'
? Create the 'ManagedOpenShift-User.osdocs-Role' role? Yes 5
I: Created role 'ManagedOpenShift-User.osdocs-Role' with ARN 'arn:aws:iam::2066:role/ManagedOpenShift-User.osdocs-Role'
I: Linking User role
? User Role ARN: arn:aws:iam::2066:role/ManagedOpenShift-User.osdocs-Role
? Link the 'arn:aws:iam::2066:role/ManagedOpenShift-User.osdocs-Role' role with account '1AGE'? Yes 6
I: Successfully linked role ARN 'arn:aws:iam::2066:role/ManagedOpenShift-User.osdocs-Role' with account '1AGE'

1. A prefix value for all of the created AWS resources. In this example, ManagedOpenShift prepends all of the AWS resources.
2. The Amazon Resource Name (ARN) of the policy to set permission boundaries.
3. Specify an IAM path for the role.
4. Choose the method to create your AWS roles. Using auto, the ROSA CLI generates and links the roles and policies. In the auto mode, you receive some different prompts to create the AWS roles.
5. The auto method asks if you want to create a specific user-role using your prefix.
6. Links the created role with your Red Hat user account.

If you unlink or delete your user-role IAM role prior to deleting your cluster, an error prevents you from deleting your cluster. You must create or relink this role to proceed with the deletion process. See Repairing a cluster that cannot be deleted for more information.

Additional resources

3.4. AWS account association

When you provision Red Hat OpenShift Service on AWS (ROSA) using OpenShift Cluster Manager, you must associate the ocm-role and user-role IAM roles with your AWS account using your Amazon Resource Name (ARN). This association process is also known as account linking.

The ocm-role ARN is stored as a label in your Red Hat organization while the user-role ARN is stored as a label inside your Red Hat user account. Red Hat uses these ARN labels to confirm that the user is a valid account holder and that the correct permissions are available to perform provisioning tasks in the AWS account.

3.4.1. Associating your AWS account with IAM roles

You can associate or link your AWS account with existing IAM roles by using the Red Hat OpenShift Service on AWS (ROSA) CLI, rosa.

Prerequisites

- You have an AWS account.

- You have the permissions required to install AWS account-wide roles. See the "Additional resources" of this section for more information.

- You have installed and configured the latest AWS (aws) and ROSA (rosa) CLIs on your installation host.
- You have created the ocm-role and user-role IAM roles, but have not yet linked them to your AWS account. You can check whether your IAM roles are already linked by running the following commands:

$ rosa list ocm-role

$ rosa list user-role

If Yes is displayed in the Linked column for both roles, you have already linked the roles to an AWS account.

Procedure

From the CLI, link your ocm-role resource to your Red Hat organization by using its Amazon Resource Name (ARN):

Note: You must have Red Hat Organization Administrator privileges to run the rosa link command. After you link the ocm-role resource with your AWS account, it is visible to all users in the organization.

$ rosa link ocm-role --role-arn <arn>

Example output

I: Linking OCM role
? Link the '<AWS ACCOUNT ID>' role with organization '<ORG ID>'? Yes
I: Successfully linked role-arn '<AWS ACCOUNT ID>' with organization account '<ORG ID>'

From the CLI, link your user-role resource to your Red Hat user account by using its Amazon Resource Name (ARN):

$ rosa link user-role --role-arn <arn>

Example output

I: Linking User role
? Link the 'arn:aws:iam::<ARN>:role/ManagedOpenShift-User-Role-125' role with organization '<AWS ID>'? Yes
I: Successfully linked role-arn 'arn:aws:iam::<ARN>:role/ManagedOpenShift-User-Role-125' with organization account '<AWS ID>'
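Both link commands need a full role ARN. An IAM role ARN has the form arn:aws:iam::<account_id>:role<path><role_name>, where <path> defaults to /. The following minimal shell sketch, with hypothetical function names, builds that ARN from the current account ID (obtained with aws sts get-caller-identity) and runs both link commands. Replace the role names with the ones reported by rosa list ocm-role and rosa list user-role.

```shell
# Build an IAM role ARN from an AWS account ID, a role name, and an
# optional IAM path such as "/rosa/" (default "/").
role_arn() {
  local account_id="$1" role_name="$2" path="${3:-/}"
  printf 'arn:aws:iam::%s:role%s%s\n' "$account_id" "$path" "$role_name"
}

# Link an existing ocm-role and user-role from the current AWS account.
# Usage: link_roles <ocm_role_name> <user_role_name>
link_roles() {
  local account_id
  account_id=$(aws sts get-caller-identity --query Account --output text) || return 1
  rosa link ocm-role --role-arn "$(role_arn "$account_id" "$1")" &&
    rosa link user-role --role-arn "$(role_arn "$account_id" "$2")"
}
```

For example, link_roles ManagedOpenShift-OCM-Role-182 ManagedOpenShift-User-Role-125 links both example roles; each rosa link command still asks for confirmation, as the example output shows.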

3.4.2. Associating multiple AWS accounts with your Red Hat organization

You can associate multiple AWS accounts with your Red Hat organization. Associating multiple accounts lets you create Red Hat OpenShift Service on AWS (ROSA) clusters on any of the associated AWS accounts from your Red Hat organization.

With this feature, you can create clusters in different AWS regions by using multiple AWS profiles as region-bound environments.

Prerequisites

- You have an AWS account.

- You are using OpenShift Cluster Manager to create clusters.

- You have the permissions required to install AWS account-wide roles.

- You have installed and configured the latest AWS (aws) and ROSA (rosa) CLIs on your installation host.
- You have created your ocm-role and user-role IAM roles.

Procedure

To associate an additional AWS account, first create a profile in your local AWS configuration. Then, associate the account with your Red Hat organization by creating the ocm-role, user-role, and account roles in the additional AWS account.

To create the roles in the additional AWS account, specify the --profile <aws_profile> parameter when running the rosa create commands, replacing <aws_profile> with the profile name of the additional account:

To specify an AWS account profile when creating an OpenShift Cluster Manager role:

$ rosa create --profile <aws_profile> ocm-role

To specify an AWS account profile when creating a user role:

$ rosa create --profile <aws_profile> user-role

To specify an AWS account profile when creating the account roles:

$ rosa create --profile <aws_profile> account-roles

If you do not specify a profile, the default AWS profile is used.
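The three commands above can be run as one step per additional account. The following minimal shell sketch assumes that a named profile for the additional account already exists, for example one created with aws configure --profile <aws_profile>; the function name and the profile name second-account are hypothetical. Setting ROSA_BIN=echo prints the commands instead of running them.

```shell
# Create the ocm-role, user-role, and account roles in one additional
# AWS account, selected by a named AWS CLI profile.
# ROSA_BIN lets you substitute "echo" for a dry run.
# Usage: create_roles_in_profile <aws_profile>
create_roles_in_profile() {
  local profile="$1" kind
  for kind in ocm-role user-role account-roles; do
    "${ROSA_BIN:-rosa}" create --profile "$profile" "$kind" || return 1
  done
}
```

For example, create_roles_in_profile second-account runs the three rosa create commands against that profile, and each command prompts as it does when run by hand.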

3.5. Permission boundaries for the installer role

You can apply a policy as a permissions boundary on an installer role. You can use an AWS-managed policy or a customer-managed policy to set the boundary for an Amazon Web Services (AWS) Identity and Access Management (IAM) entity (user or role). The combination of policy and boundary policy limits the maximum permissions for the user or role. ROSA includes a set of three prepared permission boundary policy files that you can use to restrict permissions for the installer role, because changing the installer policy itself is not supported.

This feature is only supported on Red Hat OpenShift Service on AWS (classic architecture) clusters.

The permission boundary policy files are as follows:

- The Core boundary policy file contains the minimum permissions needed for the ROSA (classic architecture) installer to install a Red Hat OpenShift Service on AWS cluster. The installer does not have permissions to create a virtual private cloud (VPC) or PrivateLink (PL). You must provide a VPC.

- The VPC boundary policy file contains the minimum permissions needed for ROSA (classic architecture) installer to create/manage the VPC. It does not include permissions for PL or core installation. If you need to install a cluster with enough permissions for the installer to install the cluster and create/manage the VPC, but you do not need to set up PL, then use the core and VPC boundary files together with the installer role.

- The PrivateLink (PL) boundary policy file contains the minimum permissions needed for ROSA (classic architecture) installer to create the AWS PL with a cluster. It does not include permissions for VPC or core installation. Provide a pre-created VPC for all PL clusters during installation.

When using the permission boundary policy files, the following combinations apply:

- No permission boundary policies means that the full installer policy permissions apply to your cluster.
- Core only sets the most restricted permissions for the installer role. The VPC and PL permissions are not included in the Core only boundary policy.
  - Installer cannot create or manage the VPC or PL.
  - You must have a customer-provided VPC, and PrivateLink (PL) is not available.
- Core + VPC sets the core and VPC permissions for the installer role.
  - Installer cannot create or manage the PL.
  - Assumes you are not using a custom/BYO VPC.
  - Assumes the installer will create and manage the VPC.
- Core + PrivateLink (PL) means the installer can provision the PL infrastructure.
  - You must have a customer-provided VPC.
  - This is for a private cluster with PL.
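The combinations above can be summarized as a lookup on two decisions: who provides the VPC, and whether the cluster uses PrivateLink. The following hypothetical shell helper prints the boundary policy files for each supported combination and rejects an installer-created VPC with PrivateLink, because PrivateLink requires a customer-provided VPC. Note that AWS IAM allows only one permissions boundary per role, so using two files together in practice means merging their statements into a single policy before attaching it.

```shell
# Hypothetical helper: print the permission boundary policy files that
# match a deployment, following the combinations listed above.
# Usage: boundary_files <byo|installer> <privatelink|none>
#   $1: who provides the VPC (customer-provided "byo", or "installer")
#   $2: whether the cluster uses PrivateLink
boundary_files() {
  case "$1:$2" in
    byo:none)
      echo sts_installer_core_permission_boundary_policy.json ;;
    installer:none)
      echo sts_installer_core_permission_boundary_policy.json \
        sts_installer_vpc_permission_boundary_policy.json ;;
    byo:privatelink)
      echo sts_installer_core_permission_boundary_policy.json \
        sts_installer_privatelink_permission_boundary_policy.json ;;
    installer:privatelink)
      echo "PrivateLink clusters require a customer-provided VPC" >&2
      return 1 ;;
    *)
      echo "usage: boundary_files <byo|installer> <privatelink|none>" >&2
      return 2 ;;
  esac
}
```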

This example procedure applies to an installer role and policy with the most restrictive permissions, using only the core installer permission boundary policy for ROSA. You can complete this with the AWS console or the AWS CLI. This example uses the AWS CLI and the following policy:

Example 3.1. sts_installer_core_permission_boundary_policy.json

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "autoscaling:DescribeAutoScalingGroups",
        "ec2:AllocateAddress",
        "ec2:AssociateAddress",
        "ec2:AttachNetworkInterface",
        "ec2:AuthorizeSecurityGroupEgress",
        "ec2:AuthorizeSecurityGroupIngress",
        "ec2:CopyImage",
        "ec2:CreateNetworkInterface",
        "ec2:CreateSecurityGroup",
        "ec2:CreateTags",
        "ec2:CreateVolume",
        "ec2:DeleteNetworkInterface",
        "ec2:DeleteSecurityGroup",
        "ec2:DeleteSnapshot",
        "ec2:DeleteTags",
        "ec2:DeleteVolume",
        "ec2:DeregisterImage",
        "ec2:DescribeAccountAttributes",
        "ec2:DescribeAddresses",
        "ec2:DescribeAvailabilityZones",
        "ec2:DescribeDhcpOptions",
        "ec2:DescribeImages",
        "ec2:DescribeInstanceAttribute",
        "ec2:DescribeInstanceCreditSpecifications",
        "ec2:DescribeInstances",
        "ec2:DescribeInstanceStatus",
        "ec2:DescribeInstanceTypeOfferings",
        "ec2:DescribeInstanceTypes",
        "ec2:DescribeInternetGateways",
        "ec2:DescribeKeyPairs",
        "ec2:DescribeNatGateways",
        "ec2:DescribeNetworkAcls",
        "ec2:DescribeNetworkInterfaces",
        "ec2:DescribePrefixLists",
        "ec2:DescribeRegions",
        "ec2:DescribeReservedInstancesOfferings",
        "ec2:DescribeRouteTables",
        "ec2:DescribeSecurityGroups",
        "ec2:DescribeSecurityGroupRules",
        "ec2:DescribeSubnets",
        "ec2:DescribeTags",
        "ec2:DescribeVolumes",
        "ec2:DescribeVpcAttribute",
        "ec2:DescribeVpcClassicLink",
        "ec2:DescribeVpcClassicLinkDnsSupport",
        "ec2:DescribeVpcEndpoints",
        "ec2:DescribeVpcs",
        "ec2:GetConsoleOutput",
        "ec2:GetEbsDefaultKmsKeyId",
        "ec2:ModifyInstanceAttribute",
        "ec2:ModifyNetworkInterfaceAttribute",
        "ec2:ReleaseAddress",
        "ec2:RevokeSecurityGroupEgress",
        "ec2:RevokeSecurityGroupIngress",
        "ec2:RunInstances",
        "ec2:StartInstances",
        "ec2:StopInstances",
        "ec2:TerminateInstances",
        "elasticloadbalancing:AddTags",
        "elasticloadbalancing:ApplySecurityGroupsToLoadBalancer",
        "elasticloadbalancing:AttachLoadBalancerToSubnets",
        "elasticloadbalancing:ConfigureHealthCheck",
        "elasticloadbalancing:CreateListener",
        "elasticloadbalancing:CreateLoadBalancer",
        "elasticloadbalancing:CreateLoadBalancerListeners",
        "elasticloadbalancing:CreateTargetGroup",
        "elasticloadbalancing:DeleteLoadBalancer",
        "elasticloadbalancing:DeleteTargetGroup",
        "elasticloadbalancing:DeregisterInstancesFromLoadBalancer",
        "elasticloadbalancing:DeregisterTargets",
        "elasticloadbalancing:DescribeInstanceHealth",
        "elasticloadbalancing:DescribeListeners",
        "elasticloadbalancing:DescribeLoadBalancerAttributes",
        "elasticloadbalancing:DescribeLoadBalancers",
        "elasticloadbalancing:DescribeTags",
        "elasticloadbalancing:DescribeTargetGroupAttributes",
        "elasticloadbalancing:DescribeTargetGroups",
        "elasticloadbalancing:DescribeTargetHealth",
        "elasticloadbalancing:ModifyLoadBalancerAttributes",
        "elasticloadbalancing:ModifyTargetGroup",
        "elasticloadbalancing:ModifyTargetGroupAttributes",
        "elasticloadbalancing:RegisterInstancesWithLoadBalancer",
        "elasticloadbalancing:RegisterTargets",
        "elasticloadbalancing:SetLoadBalancerPoliciesOfListener",
        "elasticloadbalancing:SetSecurityGroups",
        "iam:AddRoleToInstanceProfile",
        "iam:CreateInstanceProfile",
        "iam:DeleteInstanceProfile",
        "iam:GetInstanceProfile",
        "iam:TagInstanceProfile",
        "iam:GetRole",
        "iam:GetRolePolicy",
        "iam:GetUser",
        "iam:ListAttachedRolePolicies",
        "iam:ListInstanceProfiles",
        "iam:ListInstanceProfilesForRole",
        "iam:ListRolePolicies",
        "iam:ListRoles",
        "iam:ListUserPolicies",
        "iam:ListUsers",
        "iam:PassRole",
        "iam:RemoveRoleFromInstanceProfile",
        "iam:SimulatePrincipalPolicy",
        "iam:TagRole",
        "iam:UntagRole",
        "route53:ChangeResourceRecordSets",
        "route53:ChangeTagsForResource",
        "route53:CreateHostedZone",
        "route53:DeleteHostedZone",
        "route53:GetAccountLimit",
        "route53:GetChange",
        "route53:GetHostedZone",
        "route53:ListHostedZones",
        "route53:ListHostedZonesByName",
        "route53:ListResourceRecordSets",
        "route53:ListTagsForResource",
        "route53:UpdateHostedZoneComment",
        "s3:CreateBucket",
        "s3:DeleteBucket",
        "s3:DeleteObject",
        "s3:GetAccelerateConfiguration",
        "s3:GetBucketAcl",
        "s3:GetBucketCORS",
        "s3:GetBucketLocation",
        "s3:GetBucketLogging",
        "s3:GetBucketObjectLockConfiguration",
        "s3:GetBucketPolicy",
        "s3:GetBucketRequestPayment",
        "s3:GetBucketTagging",
        "s3:GetBucketVersioning",
        "s3:GetBucketWebsite",
        "s3:GetEncryptionConfiguration",
        "s3:GetLifecycleConfiguration",
        "s3:GetObject",
        "s3:GetObjectAcl",
        "s3:GetObjectTagging",
        "s3:GetObjectVersion",
        "s3:GetReplicationConfiguration",
        "s3:ListBucket",
        "s3:ListBucketVersions",
        "s3:PutBucketAcl",
        "s3:PutBucketPolicy",
        "s3:PutBucketTagging",
        "s3:PutEncryptionConfiguration",
        "s3:PutObject",
        "s3:PutObjectAcl",
        "s3:PutObjectTagging",
        "servicequotas:GetServiceQuota",
        "servicequotas:ListAWSDefaultServiceQuotas",
        "sts:AssumeRole",
        "sts:AssumeRoleWithWebIdentity",
        "sts:GetCallerIdentity",
        "tag:GetResources",
        "tag:UntagResources",
        "kms:DescribeKey",
        "cloudwatch:GetMetricData",
        "ec2:CreateRoute",
        "ec2:DeleteRoute",
        "ec2:CreateVpcEndpoint",
        "ec2:DeleteVpcEndpoints",
        "ec2:CreateVpcEndpointServiceConfiguration",
        "ec2:DeleteVpcEndpointServiceConfigurations",
        "ec2:DescribeVpcEndpointServiceConfigurations",
        "ec2:DescribeVpcEndpointServicePermissions",
        "ec2:DescribeVpcEndpointServices",
        "ec2:ModifyVpcEndpointServicePermissions"
      ],
      "Resource": "*"
    },
    {
      "Effect": "Allow",
      "Action": [
        "secretsmanager:GetSecretValue"
      ],
      "Resource": "*",
      "Condition": {
        "StringEquals": {
          "aws:ResourceTag/red-hat-managed": "true"
        }
      }
    }
  ]
}

To use the permission boundaries, prepare the permission boundary policy and add it to the relevant installer role in AWS IAM. Although the ROSA CLI (rosa) offers a permission boundary option, it applies to all roles, not only the installer role, so it does not work with the provided permission boundary policies, which are intended only for the installer role.

Prerequisites

- You have an AWS account.

- You have the permissions required to administer AWS roles and policies.

- You have installed and configured the latest AWS (aws) and ROSA (rosa) CLIs on your workstation.
- You have already prepared your ROSA account-wide roles, including the installer role, and the corresponding policies. If these do not exist in your AWS account, see "Creating the account-wide STS roles and policies" in Additional resources.

Procedure

Prepare the policy file by entering the following command:

$ curl -o ./rosa-installer-core.json https://raw.githubusercontent.com/openshift/managed-cluster-config/master/resources/sts/4.18/sts_installer_core_permission_boundary_policy.json

Create the policy in AWS and gather its Amazon Resource Name (ARN) by entering the following command:

$ aws iam create-policy \
    --policy-name rosa-core-permissions-boundary-policy \
    --policy-document file://./rosa-installer-core.json \
    --description "ROSA installer core permission boundary policy, the minimum permission set, allows BYO-VPC, disallows PrivateLink"

Example output

{
  "Policy": {
    "PolicyName": "rosa-core-permissions-boundary-policy",
    "PolicyId": "<Policy ID>",
    "Arn": "arn:aws:iam::<account ID>:policy/rosa-core-permissions-boundary-policy",
    "Path": "/",
    "DefaultVersionId": "v1",
    "AttachmentCount": 0,
    "PermissionsBoundaryUsageCount": 0,
    "IsAttachable": true,
    "CreateDate": "<CreateDate>",
    "UpdateDate": "<UpdateDate>"
  }
}

Add the permission boundary policy to the installer role you want to restrict by entering the following command:

$ aws iam put-role-permissions-boundary \
    --role-name ManagedOpenShift-Installer-Role \
    --permissions-boundary arn:aws:iam::<account ID>:policy/rosa-core-permissions-boundary-policy

Display the installer role to validate the attached policies (including the permissions boundary) by entering the following command:

$ aws iam get-role --role-name ManagedOpenShift-Installer-Role \
    --output text | grep PERMISSIONSBOUNDARY

Example output

PERMISSIONSBOUNDARY arn:aws:iam::<account ID>:policy/rosa-core-permissions-boundary-policy Policy
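As an alternative to filtering text output with grep, the AWS CLI --query option can return the boundary ARN directly from the Role.PermissionsBoundary.PermissionsBoundaryArn field of the aws iam get-role response. The following minimal shell sketch, with a hypothetical function name, compares that value with the expected policy ARN; AWS_BIN can point at a stub for testing.

```shell
# Return success if the role's permissions boundary is exactly the
# expected policy ARN. Returns 2 if the AWS CLI call fails.
# Usage: has_boundary <role_name> <expected_policy_arn>
has_boundary() {
  local role="$1" expected="$2" actual
  actual=$("${AWS_BIN:-aws}" iam get-role --role-name "$role" \
    --query 'Role.PermissionsBoundary.PermissionsBoundaryArn' \
    --output text) || return 2
  [ "$actual" = "$expected" ]
}
```

For example, has_boundary ManagedOpenShift-Installer-Role arn:aws:iam::<account ID>:policy/rosa-core-permissions-boundary-policy returns a zero status only when that exact boundary is attached.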

For examples of the PL and VPC permission boundary policies, see:

Example 3.2. sts_installer_privatelink_permission_boundary_policy.json

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "ec2:ModifyVpcEndpointServiceConfiguration",
        "route53:ListHostedZonesByVPC",
        "route53:CreateVPCAssociationAuthorization",
        "route53:AssociateVPCWithHostedZone",
        "route53:DeleteVPCAssociationAuthorization",
        "route53:DisassociateVPCFromHostedZone",
        "route53:ChangeResourceRecordSets"
      ],
      "Resource": "*"
    }
  ]
}

Example 3.3. sts_installer_vpc_permission_boundary_policy.json

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "ec2:AssociateDhcpOptions",
        "ec2:AssociateRouteTable",
        "ec2:AttachInternetGateway",
        "ec2:CreateDhcpOptions",
        "ec2:CreateInternetGateway",
        "ec2:CreateNatGateway",
        "ec2:CreateRouteTable",
        "ec2:CreateSubnet",
        "ec2:CreateVpc",
        "ec2:DeleteDhcpOptions",
        "ec2:DeleteInternetGateway",
        "ec2:DeleteNatGateway",
        "ec2:DeleteRouteTable",
        "ec2:DeleteSubnet",
        "ec2:DeleteVpc",
        "ec2:DetachInternetGateway",
        "ec2:DisassociateRouteTable",
        "ec2:ModifySubnetAttribute",
        "ec2:ModifyVpcAttribute",
        "ec2:ReplaceRouteTableAssociation"
      ],
      "Resource": "*"
    }
  ]
}
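To see which of the three boundary files covers a given action, a quick text search is often enough. The following hypothetical shell helpers report whether a policy file lists an action verbatim, and which of several files do. This is a rough check only: it does not evaluate Effect, Condition, or wildcard actions such as ec2:*, so use the IAM policy simulator for an authoritative answer.

```shell
# Rough check: does a policy file list an action verbatim?
# Usage: policy_lists_action <policy_file> <service:Action>
policy_lists_action() {
  # Match the quoted action so that ec2:CreateVpc does not also match
  # ec2:CreateVpcEndpoint. -F treats the pattern as a fixed string.
  grep -qF "\"$2\"" "$1"
}

# Print each of the given policy files that lists the action.
# Usage: files_listing_action <service:Action> <policy_file>...
files_listing_action() {
  local action="$1" f
  shift
  for f in "$@"; do
    policy_lists_action "$f" "$action" && echo "$f"
  done
  return 0
}
```

For example, policy_lists_action ./rosa-installer-core.json ec2:CreateVpc fails, because ec2:CreateVpc appears only in the VPC boundary policy.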

3.6. Additional resources

Chapter 4. Limits and scalability

This document details the tested cluster maximums for Red Hat OpenShift Service on AWS (ROSA) clusters, along with information about the test environment and configuration used to test the maximums. Information about control plane and infrastructure node sizing and scaling is also provided.

4.1. Cluster maximums

Consider the following tested object maximums when you plan a Red Hat OpenShift Service on AWS (ROSA) cluster installation. The table specifies the maximum limits for each tested type in a ROSA cluster.

These guidelines are based on a cluster of 249 compute (also known as worker) nodes in a multiple availability zone configuration. For smaller clusters, the maximums are lower.

| Maximum type | 4.x tested maximum |
|---|---|
| Number of pods [1] | 25,000 |
| Number of pods per node | 250 |
| Number of pods per core | There is no default value |
| Number of namespaces [2] | 5,000 |
| Number of pods per namespace [3] | 25,000 |
| Number of services [4] | 10,000 |
| Number of services per namespace | 5,000 |
| Number of back ends per service | 5,000 |
| Number of deployments per namespace [3] | 2,000 |

[1] The pod count displayed here is the number of test pods. The actual number of pods depends on the memory, CPU, and storage requirements of the application.

[2] When there is a large number of active projects, etcd can suffer from poor performance if the keyspace grows excessively large and exceeds the space quota. Periodic maintenance of etcd, including defragmentation, is highly recommended to free up etcd storage.

[3] There are several control loops in the system that must iterate over all objects in a given namespace as a reaction to some changes in state. Having a large number of objects of one type in a single namespace can make those loops expensive and slow down the processing of state changes. The limit assumes that the system has enough CPU, memory, and disk to satisfy the application requirements.

[4] Each service port and each service back end has a corresponding entry in iptables. The number of back ends of a given service impacts the size of the endpoints objects, which in turn impacts the size of data sent throughout the system.
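When planning a workload, it can help to compare planned object counts with the tested values in the table. The following hypothetical shell helper does that; its type labels are invented for illustration. These maximums were tested on a 249-node cluster and are lower for smaller clusters, so a passing check is a necessary condition rather than a guarantee.

```shell
# Compare a planned object count with the tested 4.x maximums from the
# table above. Type labels are hypothetical shorthand for the table rows.
# Usage: check_tested_max <type> <planned_count>
check_tested_max() {
  local max
  case "$1" in
    pods) max=25000 ;;
    pods-per-node) max=250 ;;
    namespaces) max=5000 ;;
    pods-per-namespace) max=25000 ;;
    services) max=10000 ;;
    services-per-namespace) max=5000 ;;
    backends-per-service) max=5000 ;;
    deployments-per-namespace) max=2000 ;;
    *) echo "unknown type: $1" >&2; return 2 ;;
  esac
  if [ "$2" -gt "$max" ]; then
    echo "$1: planned $2 exceeds tested maximum $max" >&2
    return 1
  fi
}
```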

4.2. OpenShift Container Platform testing environment and configuration

The following table lists the OpenShift Container Platform environment and configuration on which the cluster maximums are tested for the AWS cloud platform.

| Node | Type | vCPU | RAM (GiB) | Disk type | Disk size (GiB) / IOPS | Count | Region |
|---|---|---|---|---|---|---|---|
| Control plane/etcd [1] | m5.4xlarge | 16 | 64 | gp3 | 350 / 1,000 | 3 | us-west-2 |
| Infrastructure nodes [2] | r5.2xlarge | 8 | 64 | gp3 | 300 / 900 | 3 | us-west-2 |
| Workload [3] | m5.2xlarge | 8 | 32 | gp3 | 350 / 900 | 3 | us-west-2 |
| Compute nodes | m5.2xlarge | 8 | 32 | gp3 | 350 / 900 | 102 | us-west-2 |

[1] io1 disks are used for control plane/etcd nodes in all versions prior to 4.10.

[2] Infrastructure nodes are used to host monitoring components because Prometheus can claim a large amount of memory, depending on usage patterns.

[3] Workload nodes are dedicated to running performance and scalability workload generators.

Larger cluster sizes and higher object counts might be reachable. However, the sizing of the infrastructure nodes limits the amount of memory that is available to Prometheus. When creating, modifying, or deleting objects, Prometheus stores the metrics in its memory for roughly 3 hours prior to persisting the metrics on disk. If the rate of creation, modification, or deletion of objects is too high, Prometheus can become overwhelmed and fail due to the lack of memory resources.

4.3. Control plane and infrastructure node sizing and scaling

When you install a Red Hat OpenShift Service on AWS (ROSA) cluster, the sizing of the control plane and infrastructure nodes is automatically determined by the compute node count.

If you change the number of compute nodes in your cluster after installation, the Red Hat Site Reliability Engineering (SRE) team scales the control plane and infrastructure nodes as required to maintain cluster stability.

4.3.1. Node sizing during installation

During the installation process, the sizing of the control plane and infrastructure nodes is dynamically calculated. The sizing calculation is based on the number of compute nodes in a cluster.

The following table lists the control plane and infrastructure node sizing that is applied during installation.

| Number of compute nodes | Control plane size | Infrastructure node size |
|---|---|---|
| 1 to 25 | m5.2xlarge | r5.xlarge |
| 26 to 100 | m5.4xlarge | r5.2xlarge |
| 101 to 249 | m5.8xlarge | r5.4xlarge |

The maximum number of compute nodes on ROSA clusters version 4.14.14 and later is 249. For earlier versions, the limit is 180.
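The sizing table is a simple range lookup on the compute node count. The following hypothetical shell helper reproduces it and rejects counts outside 1 to 249, the range for cluster versions 4.14.14 and later; for earlier versions the limit is 180, which this sketch does not check.

```shell
# Print the control plane and infrastructure instance types that the
# installation sizing table assigns for a given compute node count.
# Usage: node_sizes <compute_node_count>
node_sizes() {
  local n="$1"
  if [ "$n" -ge 1 ] && [ "$n" -le 25 ]; then
    echo "m5.2xlarge r5.xlarge"
  elif [ "$n" -ge 26 ] && [ "$n" -le 100 ]; then
    echo "m5.4xlarge r5.2xlarge"
  elif [ "$n" -ge 101 ] && [ "$n" -le 249 ]; then
    echo "m5.8xlarge r5.4xlarge"
  else
    echo "compute node count must be between 1 and 249" >&2
    return 1
  fi
}
```

For example, node_sizes 60 prints m5.4xlarge r5.2xlarge.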

4.3.2. Node scaling after installation

If you change the number of compute nodes after installation, the control plane and infrastructure nodes are scaled by the Red Hat Site Reliability Engineering (SRE) team as required. The nodes are scaled to maintain platform stability.

Postinstallation scaling requirements for control plane and infrastructure nodes are assessed on a case-by-case basis. Node resource consumption and received alerts are taken into consideration.

Rules for control plane node resizing alerts

The resizing alert is triggered for the control plane nodes in a cluster when the following occurs:

- Control plane nodes sustain over 66% utilization on average in a cluster.

Note: The maximum number of compute nodes on ROSA cluster versions 4.14.14 and later is 249. For earlier versions, the limit is 180.

Rules for infrastructure node resizing alerts

Resizing alerts are triggered for the infrastructure nodes in a cluster when it has sustained high CPU or memory utilization. This sustained high utilization status is:

- Infrastructure nodes sustain over 50% utilization on average in a cluster with a single availability zone using 2 infrastructure nodes.
- Infrastructure nodes sustain over 66% utilization on average in a cluster with multiple availability zones using 3 infrastructure nodes.

Note: The maximum number of compute nodes on ROSA cluster versions 4.14.14 and later is 249. For earlier versions, the limit is 180.

The resizing alerts only appear after sustained periods of high utilization. Short usage spikes, such as a node temporarily going down causing the other node to scale up, do not trigger these alerts.
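The alert rules above reduce to a threshold on sustained average utilization that depends on the node type and the number of availability zones. The following hypothetical shell helper encodes the stated thresholds; it expects an already averaged, sustained utilization percentage as an integer, because short spikes do not trigger these alerts. It only illustrates the documented rules and is not how the SRE alerting is implemented.

```shell
# Print the sustained average utilization (percent) above which a
# resizing alert is raised, per the documented rules.
# Usage: resize_threshold <control-plane|infra> [availability_zone_count]
resize_threshold() {
  case "$1" in
    control-plane) echo 66 ;;
    infra)
      # Single AZ (2 infrastructure nodes): 50%; multi-AZ (3 nodes): 66%.
      if [ "${2:-1}" -eq 1 ]; then echo 50; else echo 66; fi ;;
    *) echo "usage: resize_threshold <control-plane|infra> [zones]" >&2; return 2 ;;
  esac
}

# Return success if a sustained average utilization exceeds the threshold.
# Usage: needs_resize_alert <control-plane|infra> <percent> [zones]
needs_resize_alert() {
  local threshold
  threshold=$(resize_threshold "$1" "$3") || return 2
  [ "$2" -gt "$threshold" ]
}
```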

The SRE team might scale the control plane and infrastructure nodes for additional reasons, for example to manage an increase in resource consumption on the nodes.

When scaling is applied, the customer is notified through a service log entry. For more information about the service log, see Accessing the service logs for ROSA clusters.

4.3.3. Sizing considerations for larger clusters

For larger clusters, infrastructure node sizing can significantly affect scalability. Many factors influence the stated thresholds, including the etcd version and the storage data format.

Exceeding these limits does not necessarily mean that the cluster will fail. In most cases, exceeding these numbers results in lower overall performance.

4.4. Next steps

4.5. Additional resources

Chapter 5. ROSA with HCP limits and scalability

This document details the tested cluster maximums for Red Hat OpenShift Service on AWS (ROSA) with hosted control planes (HCP) clusters, along with information about the test environment and configuration used to test the maximums. For ROSA with HCP clusters, the control plane is fully managed in the service AWS account and will automatically scale with the cluster.

5.1. ROSA with HCP cluster maximums

Consider the following tested object maximums when you plan a Red Hat OpenShift Service on AWS (ROSA) with hosted control planes (HCP) cluster installation. The table specifies the maximum limits for each tested type in a ROSA with HCP cluster.

These guidelines are based on a cluster of 500 compute (also known as worker) nodes. For smaller clusters, the maximums are lower.

| Maximum type | 4.x tested maximum |
|---|---|
| Number of pods [1] | 25,000 |
| Number of pods per node | 250 |
| Number of pods per core | There is no default value |
| Number of namespaces [2] | 5,000 |
| Number of pods per namespace [3] | 25,000 |
| Number of services [4] | 10,000 |
| Number of services per namespace | 5,000 |
| Number of back ends per service | 5,000 |
| Number of deployments per namespace [3] | 2,000 |

[1] The pod count displayed here is the number of test pods. The actual number of pods depends on the memory, CPU, and storage requirements of the application.

[2] When there are a large number of active projects, etcd can suffer from poor performance if the keyspace grows excessively large and exceeds the space quota. Periodic maintenance of etcd, including defragmentation, is highly recommended to free up etcd storage.

[3] There are several control loops in the system that must iterate over all objects in a given namespace as a reaction to some changes in state. Having a large number of objects of a type in a single namespace can make those loops expensive and slow down processing of the state changes. The limit assumes that the system has enough CPU, memory, and disk to satisfy the application requirements.

[4] Each service port and each service back end has a corresponding entry in iptables. The number of back ends of a given service impacts the size of the endpoints objects, which then impacts the size of data sent throughout the system.
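When planning a ROSA with HCP cluster, you can compare a planned configuration against these tested maximums. The following Python sketch is a hypothetical planning helper: the dictionary keys and the `exceeded_maximums` function are illustrative names, and the values are copied from the table for a 500-node cluster. Because smaller clusters have lower maximums, the check is optimistic for clusters below 500 compute nodes.

```python
# Tested maximums for ROSA with HCP, from the table above (500 compute nodes).
# "Number of pods per core" has no default value, so it is omitted.
TESTED_MAXIMUMS = {
    "pods": 25_000,
    "pods_per_node": 250,
    "namespaces": 5_000,
    "pods_per_namespace": 25_000,
    "services": 10_000,
    "services_per_namespace": 5_000,
    "backends_per_service": 5_000,
    "deployments_per_namespace": 2_000,
}


def exceeded_maximums(plan: dict[str, int]) -> dict[str, tuple[int, int]]:
    """Return {key: (planned, tested_maximum)} for each planned value above its
    tested maximum. Keys that are not in TESTED_MAXIMUMS are ignored."""
    return {
        key: (value, TESTED_MAXIMUMS[key])
        for key, value in plan.items()
        if key in TESTED_MAXIMUMS and value > TESTED_MAXIMUMS[key]
    }


plan = {"pods": 30_000, "namespaces": 1_200, "services_per_namespace": 6_000}
print(exceeded_maximums(plan))
# {'pods': (30000, 25000), 'services_per_namespace': (6000, 5000)}
```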

5.2. Next steps

5.3. Additional resources

Chapter 6. Planning resource usage in your cluster

6.1. Planning your environment based on tested cluster maximums

This document describes how to plan your Red Hat OpenShift Service on AWS environment based on the tested cluster maximums.

Oversubscribing the physical resources on a node affects the resource guarantees that the Kubernetes scheduler makes during pod placement. Learn what measures you can take to avoid memory swapping.

Some of the tested maximums are stretched only in a single dimension. They will vary when many objects are running on the cluster.

The numbers noted in this documentation are based on Red Hat testing methodology, setup, configuration, and tunings. These numbers can vary based on your own individual setup and environments.

While planning your environment, determine how many nodes you need, based on how many pods are expected to fit per node, using the following formula:

required pods per cluster / pods per node = total number of nodes needed

The current maximum number of pods per node is 250. However, the number of pods that fit on a node depends on the application itself. Consider the application’s memory, CPU, and storage requirements, as described in Planning your environment based on application requirements.

Example scenario

If you want to size your cluster for 2200 pods, you need at least nine nodes, assuming a maximum of 250 pods per node:

2200 / 250 = 8.8, which rounds up to 9

If you increase the number of nodes to 20, then the pod distribution changes to 110 pods per node:

2200 / 20 = 110

The general formula is:

required pods per cluster / total number of nodes = expected pods per node
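The two formulas can be expressed as a short Python sketch. The function names are illustrative. The node count is rounded up, because a fractional result such as 8.8 still requires a whole ninth node.

```python
import math

MAX_PODS_PER_NODE = 250  # current maximum pods per node, from the text


def nodes_needed(required_pods: int, pods_per_node: int = MAX_PODS_PER_NODE) -> int:
    """required pods per cluster / pods per node, rounded up to a whole node."""
    return math.ceil(required_pods / pods_per_node)


def expected_pods_per_node(required_pods: int, node_count: int) -> float:
    """required pods per cluster / total number of nodes."""
    return required_pods / node_count


print(nodes_needed(2200))                # 9  (2200 / 250 = 8.8)
print(expected_pods_per_node(2200, 20))  # 110.0
```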

6.2. Planning your environment based on application requirements

This document describes how to plan your Red Hat OpenShift Service on AWS environment based on your application requirements.

Consider an example application environment:

| Pod type | Pod quantity | Max memory per pod | CPU cores per pod | Persistent storage per pod |
|---|---|---|---|---|
| apache | 100 | 500 MB | 0.5 | 1 GB |
| node.js | 200 | 1 GB | 1 | 1 GB |
| postgresql | 100 | 1 GB | 2 | 10 GB |
| JBoss EAP | 100 | 1 GB | 1 | 1 GB |

Extrapolated requirements: 550 CPU cores, 450 GB RAM, and 1.4 TB storage.
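The extrapolated totals are the per-pod values multiplied by the pod quantity and summed across pod types. The following Python sketch reproduces that arithmetic; it assumes the table values are per pod and treats 500 MB as 0.5 GB. The `PodType` class and `extrapolate` function are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class PodType:
    name: str
    quantity: int
    memory_gb: float   # max memory per pod
    cpu_cores: float   # CPU cores per pod
    storage_gb: float  # persistent storage per pod


APPLICATION = [
    PodType("apache", 100, 0.5, 0.5, 1),
    PodType("node.js", 200, 1, 1, 1),
    PodType("postgresql", 100, 1, 2, 10),
    PodType("JBoss EAP", 100, 1, 1, 1),
]


def extrapolate(pods: list[PodType]) -> tuple[float, float, float]:
    """Return (total CPU cores, total memory in GB, total storage in GB)."""
    cpu = sum(p.quantity * p.cpu_cores for p in pods)
    memory = sum(p.quantity * p.memory_gb for p in pods)
    storage = sum(p.quantity * p.storage_gb for p in pods)
    return cpu, memory, storage


print(extrapolate(APPLICATION))  # (550.0, 450.0, 1400)
```

The 1400 GB of storage is the 1.4 TB stated above.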

Instance size for nodes can be adjusted up or down, depending on your preference. Nodes are often resource overcommitted. In this deployment scenario, you can choose to run more, smaller nodes or fewer, larger nodes to provide the same amount of resources. Consider factors such as operational agility and cost per instance.

| Node type | Quantity | CPUs per node | RAM per node (GB) |
|---|---|---|---|
| Nodes (option 1) | 100 | 4 | 16 |
| Nodes (option 2) | 50 | 8 | 32 |
| Nodes (option 3) | 25 | 16 | 64 |

Some applications lend themselves well to overcommitted environments, and some do not. Most Java applications and applications that use huge pages do not allow for overcommitment, because that memory cannot be used by other applications. In the example above, the environment would be roughly 30 percent overcommitted, a common ratio.
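Each node option in the table provides the same total capacity: 400 CPUs and 1600 GB of RAM. Comparing that CPU capacity with the 550 requested cores is one way to arrive at the roughly 30 percent figure. The following Python sketch assumes overcommitment is measured as the share of requested CPU that is not backed by physical CPUs; the document does not state the exact formula, and the function names are illustrative.

```python
# Node options from the table: (quantity, CPUs per node, RAM per node in GB).
NODE_OPTIONS = {
    "option 1": (100, 4, 16),
    "option 2": (50, 8, 32),
    "option 3": (25, 16, 64),
}
REQUESTED_CPU = 550  # extrapolated CPU cores for the example application


def capacity(quantity: int, cpus: int, ram_gb: int) -> tuple[int, int]:
    """Total (CPUs, RAM in GB) provided by `quantity` identical nodes."""
    return quantity * cpus, quantity * ram_gb


def cpu_overcommit(requested: float, available: float) -> float:
    """Assumed definition: share of requested CPU not backed by physical CPUs."""
    return 1 - available / requested


for name, spec in NODE_OPTIONS.items():
    cpus, ram = capacity(*spec)
    print(f"{name}: {cpus} CPUs, {ram} GB RAM, "
          f"CPU overcommit {cpu_overcommit(REQUESTED_CPU, cpus):.0%}")
# Each line reports 400 CPUs, 1600 GB RAM, CPU overcommit 27%
```

Under this assumption, memory is not overcommitted in the example: 1600 GB of RAM covers the 450 GB requested, so CPU is the overcommitted resource.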

The application pods can access a service either by using environment variables or DNS. If you use environment variables, the kubelet injects the variables for each active service when it runs a pod on a node. A cluster-aware DNS server watches the Kubernetes API for new services and creates a set of DNS records for each one. If DNS is enabled throughout your cluster, all pods should automatically be able to resolve services by their DNS name. Use DNS-based service discovery if you must go beyond 5000 services. If you use environment variables for service discovery and a namespace has more than 5000 services, the argument list can exceed the allowed length, and pods and deployments start failing.

To avoid this, disable service links by setting enableServiceLinks: false in the pod template specification of the deployment:

Example

```yaml
kind: Template
apiVersion: template.openshift.io/v1
metadata:
  name: deploymentConfigTemplate
  creationTimestamp:
  annotations:
    description: This template will create a deploymentConfig with 1 replica, 4 env vars and a service.
    tags: ''
objects:
- kind: DeploymentConfig
  apiVersion: apps.openshift.io/v1
  metadata:
    name: deploymentconfig${IDENTIFIER}
  spec:
    template:
      metadata:
        labels:
          name: replicationcontroller${IDENTIFIER}
      spec:
        enableServiceLinks: false
        containers:
        - name: pause${IDENTIFIER}
          image: "${IMAGE}"
          ports:
          - containerPort: 8080
            protocol: TCP
          env:
          - name: ENVVAR1_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR2_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR3_${IDENTIFIER}
            value: "${ENV_VALUE}"
          - name: ENVVAR4_${IDENTIFIER}
            value: "${ENV_VALUE}"
          resources: {}
          imagePullPolicy: IfNotPresent
          capabilities: {}
          securityContext:
            capabilities: {}
            privileged: false
        restartPolicy: Always
        serviceAccount: ''
    replicas: 1
    selector:
      name: replicationcontroller${IDENTIFIER}
    triggers:
    - type: ConfigChange
    strategy:
      type: Rolling
- kind: Service
  apiVersion: v1
  metadata:
    name: service${IDENTIFIER}
  spec:
    selector:
      name: replicationcontroller${IDENTIFIER}
    ports:
    - name: serviceport${IDENTIFIER}
      protocol: TCP
      port: 80
      targetPort: 8080
    portalIP: ''
    type: ClusterIP
    sessionAffinity: None
  status:
    loadBalancer: {}
parameters:
- name: IDENTIFIER
  description: Number to append to the name of resources
  value: '1'
  required: true
- name: IMAGE
  description: Image to use for deploymentConfig
  value: gcr.io/google-containers/pause-amd64:3.0
  required: false
- name: ENV_VALUE
  description: Value to use for environment variables
  generate: expression
  from: "[A-Za-z0-9]{255}"
  required: false
labels:
  template: deploymentConfigTemplate
```

When environment variables are used for service discovery, the number of application pods that can run in a namespace depends on the number of services and the length of the service names. ARG_MAX on the system defines the maximum argument length for a new process, and it is set to 2097152 bytes (2 MiB) by default. The kubelet injects environment variables into each pod scheduled to run in the namespace, including:

- <SERVICE_NAME>_SERVICE_HOST=<IP>
- <SERVICE_NAME>_SERVICE_PORT=<PORT>
- <SERVICE_NAME>_PORT=tcp://<IP>:<PORT>
- <SERVICE_NAME>_PORT_<PORT>_TCP=tcp://<IP>:<PORT>
- <SERVICE_NAME>_PORT_<PORT>_TCP_PROTO=tcp
- <SERVICE_NAME>_PORT_<PORT>_TCP_PORT=<PORT>
- <SERVICE_NAME>_PORT_<PORT>_TCP_ADDR=<ADDR>

Pods in the namespace start to fail if the total argument length exceeds the allowed value. Because every variable name includes the service name, the number of characters in the service names affects how quickly that limit is reached.
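To estimate how close a namespace is to the limit, you can build the variable set for each service and add up the bytes. The following Python sketch is an approximation under stated assumptions: the prefix is the service name uppercased with dashes replaced by underscores, the `_TCP_ADDR` value is the service IP, and each variable counts as a `NAME=value` string plus a terminating null byte. It ignores other contributors to the limit, such as command arguments and per-string pointer overhead, so `max_services` is an upper bound. All function names are illustrative.

```python
ARG_MAX = 2_097_152  # default maximum argument length in bytes (2 MiB), from the text


def service_env_vars(service_name: str, ip: str, port: int) -> dict[str, str]:
    """Build the service discovery variables listed above for one TCP service port."""
    # Assumption: uppercase the service name and replace dashes with underscores.
    prefix = service_name.upper().replace("-", "_")
    url = f"tcp://{ip}:{port}"
    return {
        f"{prefix}_SERVICE_HOST": ip,
        f"{prefix}_SERVICE_PORT": str(port),
        f"{prefix}_PORT": url,
        f"{prefix}_PORT_{port}_TCP": url,
        f"{prefix}_PORT_{port}_TCP_PROTO": "tcp",
        f"{prefix}_PORT_{port}_TCP_PORT": str(port),
        f"{prefix}_PORT_{port}_TCP_ADDR": ip,  # assumed to hold the service IP
    }


def env_bytes(env: dict[str, str]) -> int:
    """Approximate size: each variable is a "NAME=value" string plus a null byte."""
    return sum(len(name) + 1 + len(value) + 1 for name, value in env.items())


def max_services(bytes_per_service: int, limit: int = ARG_MAX) -> int:
    """Upper bound on the number of services whose variables fit within `limit`."""
    return limit // bytes_per_service


short_name_bytes = env_bytes(service_env_vars("db", "172.30.0.10", 5432))
long_name_bytes = env_bytes(service_env_vars("customer-orders-database", "172.30.0.10", 5432))
print(short_name_bytes < long_name_bytes)  # True: longer names use more bytes
print(max_services(short_name_bytes), max_services(long_name_bytes))
```

The comparison shows why the service name length matters: every one of the seven variables repeats the prefix, so each extra character in a service name costs at least seven bytes per service.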

Chapter 7. Required AWS service quotas

Review this list of the Amazon Web Services (AWS) service quotas that are required to run a Red Hat OpenShift Service on AWS cluster.

7.1. Required AWS service quotas

The table below describes the AWS service quotas and levels required to create and run one Red Hat OpenShift Service on AWS cluster. Although most default values are suitable for most workloads, you might need to request additional quota for the following cases:

- ROSA clusters require a minimum AWS EC2 service quota of 100 vCPUs to provide for cluster creation, availability, and upgrades. The default maximum value for vCPUs assigned to Running On-Demand Standard Amazon EC2 instances is 5. Therefore, if you have not previously created a ROSA cluster with the same AWS account, you must request additional EC2 quota for Running On-Demand Standard (A, C, D, H, I, M, R, T, Z) instances.

- Some optional cluster configuration features, such as custom security groups, might require you to request additional quota. For example, ROSA associates 1 security group with network interfaces in worker machine pools by default, and the default quota for Security groups per network interface is 5. If you want to add 5 custom security groups, you must request additional quota, because this brings the total number of security groups on worker network interfaces to 6.
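Both cases in the list come down to simple arithmetic against a quota. The following Python sketch uses the values from the text: a 100 vCPU minimum against a default quota of 5, and one default security group plus any custom groups against a default quota of 5 security groups per network interface. The function names are illustrative, and the quota values you pass in should come from your own account.

```python
MIN_ROSA_VCPUS = 100          # minimum EC2 vCPU quota for ROSA, from the text
DEFAULT_ROSA_SG_PER_ENI = 1   # security groups ROSA attaches to worker interfaces by default
DEFAULT_SG_PER_ENI_QUOTA = 5  # AWS default "Security groups per network interface"


def needs_vcpu_increase(vcpu_quota: int) -> bool:
    """True if the Running On-Demand Standard vCPU quota is below the ROSA minimum."""
    return vcpu_quota < MIN_ROSA_VCPUS


def needs_sg_increase(custom_groups: int, sg_quota: int = DEFAULT_SG_PER_ENI_QUOTA) -> bool:
    """True if the default plus custom security groups exceed the per-interface quota."""
    return DEFAULT_ROSA_SG_PER_ENI + custom_groups > sg_quota


print(needs_vcpu_increase(5))  # True: the default of 5 is below 100
print(needs_sg_increase(5))    # True: 1 + 5 = 6 > 5
print(needs_sg_increase(4))    # False: 1 + 4 = 5
```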

The AWS SDK allows ROSA to check quotas, but the AWS SDK calculation does not account for your existing usage. As a result, the quota check can pass in the AWS SDK while cluster creation still fails. To fix this issue, increase your quota.
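The gap between a quota check and real capacity is the usage you already have. The following Python sketch, with hypothetical numbers, shows how a check that compares only the quota value can pass while the remaining headroom is still too small. The function names are illustrative, and how you obtain current usage for your account is outside the scope of this sketch.

```python
MIN_ROSA_VCPUS = 100  # minimum vCPUs needed, from the text


def quota_only_check(vcpu_quota: int, required: int = MIN_ROSA_VCPUS) -> bool:
    """Compares the quota value alone, ignoring what is already running."""
    return vcpu_quota >= required


def headroom_check(vcpu_quota: int, vcpus_in_use: int, required: int = MIN_ROSA_VCPUS) -> bool:
    """Compares the unused part of the quota with the requirement."""
    return vcpu_quota - vcpus_in_use >= required


# Hypothetical account: 128 vCPU quota, 64 vCPUs already used by other instances.
print(quota_only_check(128))    # True
print(headroom_check(128, 64))  # False: only 64 vCPUs remain
```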