Backup and restore

Backing up and restoring your OpenShift Container Platform cluster

Abstract

Chapter 1. Backup and restore

1.1. Control plane backup and restore operations

As a cluster administrator, you might need to stop an OpenShift Container Platform cluster for a period and restart it later. Some reasons for restarting a cluster are that you need to perform maintenance on a cluster or want to reduce resource costs. In OpenShift Container Platform, you can perform a graceful shutdown of a cluster so that you can easily restart the cluster later.

You must back up etcd data before shutting down a cluster; etcd is the key-value store for OpenShift Container Platform, which persists the state of all resource objects. An etcd backup plays a crucial role in disaster recovery. In OpenShift Container Platform, you can also replace an unhealthy etcd member.

When you want to get your cluster running again, restart the cluster gracefully.

A cluster’s certificates expire one year after the installation date. You can shut down a cluster and expect it to restart gracefully while the certificates are still valid. Although the cluster automatically retrieves the expired control plane certificates, you must still approve the certificate signing requests (CSRs).

You might run into several situations where OpenShift Container Platform does not work as expected, such as:

- You have a cluster that is not functional after the restart because of unexpected conditions, such as node failure or network connectivity issues.

- You have deleted something critical in the cluster by mistake.

- You have lost the majority of your control plane hosts, leading to etcd quorum loss.

You can always recover from a disaster situation by restoring your cluster to its previous state using the saved etcd snapshots.

Additional resources

1.2. Application backup and restore operations

As a cluster administrator, you can back up and restore applications running on OpenShift Container Platform by using the OpenShift API for Data Protection (OADP).

OADP backs up and restores Kubernetes resources and internal images, at the granularity of a namespace, by using the version of Velero that is appropriate for the version of OADP you install, according to the table in Downloading the Velero CLI tool. OADP backs up and restores persistent volumes (PVs) by using snapshots or Restic. For details, see OADP features.

1.2.1. OADP requirements

OADP has the following requirements:

- You must be logged in as a user with a cluster-admin role.

- You must have object storage for storing backups, such as one of the following storage types:

- OpenShift Data Foundation

- Amazon Web Services

- Microsoft Azure

- Google Cloud Platform

- S3-compatible object storage

If you want to use CSI backup on OCP 4.11 and later, install OADP 1.1.x.

OADP 1.0.x does not support CSI backup on OCP 4.11 and later. OADP 1.0.x includes Velero 1.7.x and expects the API group snapshot.storage.k8s.io/v1beta1, which is not present on OCP 4.11 and later.

The CloudStorage API for S3 storage is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

To back up PVs with snapshots, you must have cloud storage that has a native snapshot API or supports Container Storage Interface (CSI) snapshots, such as the following providers:

- Amazon Web Services

- Microsoft Azure

- Google Cloud Platform

- CSI snapshot-enabled cloud storage, such as Ceph RBD or Ceph FS

If you do not want to back up PVs by using snapshots, you can use Restic, which is installed by the OADP Operator by default.
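The CSI requirement above depends on whether the cluster still serves the snapshot.storage.k8s.io/v1beta1 API group. The following is a minimal sketch, not part of the documented procedure, of a shell helper that checks a list of API versions for that group. The function name serves_v1beta1_snapshot_api is hypothetical, and the sketch assumes the list arrives on standard input, one entry per line, as `oc api-versions` prints it.

```shell
# Hypothetical helper: succeeds if the API version list on stdin contains
# the v1beta1 snapshot API group that OADP 1.0.x expects.
# -F matches the string literally, -x requires a whole-line match.
serves_v1beta1_snapshot_api() {
  grep -qxF 'snapshot.storage.k8s.io/v1beta1'
}

# Example use against a live cluster (assumes you are logged in with oc):
#   if oc api-versions | serves_v1beta1_snapshot_api; then
#     echo "v1beta1 snapshot API is served"
#   else
#     echo "v1beta1 snapshot API is absent; use OADP 1.1.x for CSI backup"
#   fi
```

On OCP 4.11 and later the check would be expected to fail, which matches the guidance to install OADP 1.1.x for CSI backup.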

1.2.2. Backing up and restoring applications

You back up applications by creating a Backup custom resource (CR). See Creating a Backup CR. You can configure the following backup options:

- Creating backup hooks to run commands before or after the backup operation

- Scheduling backups

- Restic backups

You restore application backups by creating a Restore CR. See Creating a Restore CR.

- You can configure restore hooks to run commands in init containers or in the application container during the restore operation.
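To make the Backup CR more concrete, the following sketch prints a minimal manifest for one application namespace. It is an illustration under stated assumptions rather than the documented procedure: the function name backup_manifest and its three arguments are hypothetical, the openshift-adp namespace is assumed to be where OADP is installed, and the storage location name must match a backup storage location that already exists in your cluster. See Creating a Backup CR for the supported options.

```shell
# Hypothetical helper: print a minimal Velero Backup manifest.
#   $1 = name of the Backup CR
#   $2 = application namespace to back up
#   $3 = name of an existing backup storage location (assumption: you know it)
backup_manifest() {
  backup_name=$1
  app_namespace=$2
  storage_location=$3
  cat <<EOF
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: ${backup_name}
  namespace: openshift-adp
spec:
  includedNamespaces:
  - ${app_namespace}
  storageLocation: ${storage_location}
EOF
}

# Example use (review the output before creating the resource):
#   backup_manifest my-backup my-app my-location | oc create -f -
```

Printing the manifest instead of creating it directly keeps the sketch reviewable before anything is applied to the cluster.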

Chapter 2. Shutting down the cluster gracefully

This document describes the process to gracefully shut down your cluster. You might need to temporarily shut down your cluster for maintenance reasons, or to save on resource costs.

2.1. Prerequisites

Take an etcd backup prior to shutting down the cluster.

Important: Take an etcd backup before performing this procedure so that your cluster can be restored if you encounter any issues when restarting the cluster.

For example, the following conditions can cause the restarted cluster to malfunction:

- etcd data corruption during shutdown

- Node failure due to hardware

- Network connectivity issues

If your cluster fails to recover, follow the steps to restore to a previous cluster state.

2.2. Shutting down the cluster

You can shut down your cluster in a graceful manner so that it can be restarted at a later date.

You can shut down a cluster until a year from the installation date and expect it to restart gracefully. After a year from the installation date, the cluster certificates expire.

Prerequisites

- You have access to the cluster as a user with the cluster-admin role.

- You have taken an etcd backup.

Procedure

If you plan to shut down the cluster for an extended period of time, determine the date that cluster certificates expire.

You must restart the cluster prior to the date that certificates expire. As the cluster restarts, the process might require you to manually approve the pending certificate signing requests (CSRs) to recover kubelet certificates.

Check the expiration date for the kube-apiserver-to-kubelet-signer CA certificate:

$ oc -n openshift-kube-apiserver-operator get secret kube-apiserver-to-kubelet-signer -o jsonpath='{.metadata.annotations.auth\.openshift\.io/certificate-not-after}{"\n"}'

Example output

2023-08-05T14:37:50Z

Check the expiration date for the kubelet certificates:

Start a debug session for a control plane node by running the following command:

$ oc debug node/<node_name>

Change your root directory to /host by running the following command:

sh-4.4# chroot /host

Check the kubelet client certificate expiration date by running the following command:

sh-5.1# openssl x509 -in /var/lib/kubelet/pki/kubelet-client-current.pem -noout -enddate

Example output

notAfter=Jun 6 10:50:07 2023 GMT

Check the kubelet server certificate expiration date by running the following command:

sh-5.1# openssl x509 -in /var/lib/kubelet/pki/kubelet-server-current.pem -noout -enddate

Example output

notAfter=Jun 6 10:50:07 2023 GMT

- Exit the debug session.

- Repeat these steps to check certificate expiration dates on all control plane nodes. To ensure that the cluster can restart gracefully, plan to restart it before the earliest certificate expiration date.
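Once you have collected the `notAfter=` lines from every control plane node, finding the earliest date by eye is error-prone. The following sketch, which is not part of the documented procedure, normalizes each line to a sortable UTC timestamp and prints the earliest one. The function name earliest_not_after is hypothetical, and the sketch assumes GNU date, whose -d option parses the date format that openssl prints.

```shell
# Hypothetical helper: read openssl "notAfter=..." lines on stdin and print
# the earliest expiration date as a UTC timestamp.
# Assumption: GNU date is available (the -d option is a GNU extension).
earliest_not_after() {
  while IFS= read -r line; do
    # Strip the "notAfter=" prefix and convert to a format that sorts as text.
    date -u -d "${line#notAfter=}" +%Y-%m-%dT%H:%M:%SZ
  done | sort | head -n 1
}

# Example use, with the collected lines saved in a file of your choosing:
#   earliest_not_after < collected-enddates.txt
```

Plan the restart before the date that this prints, and compare it against the kube-apiserver-to-kubelet-signer date as well.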

Shut down all of the nodes in the cluster. You can do this from your cloud provider’s web console, or run the following loop:

$ for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do oc debug node/${node} -- chroot /host shutdown -h 1; done

-h 1 indicates how long, in minutes, this process lasts before the control plane nodes are shut down. For large-scale clusters with 10 nodes or more, set to 10 minutes or longer to make sure all the compute nodes have time to shut down first.

Example output

Starting pod/ip-10-0-130-169us-east-2computeinternal-debug ...
To use host binaries, run `chroot /host`
Shutdown scheduled for Mon 2021-09-13 09:36:17 UTC, use 'shutdown -c' to cancel.
Removing debug pod ...
Starting pod/ip-10-0-150-116us-east-2computeinternal-debug ...
To use host binaries, run `chroot /host`
Shutdown scheduled for Mon 2021-09-13 09:36:29 UTC, use 'shutdown -c' to cancel.

Shutting down the nodes using one of these methods allows pods to terminate gracefully, which reduces the chance for data corruption.

Note: Adjust the shutdown time to be longer for large-scale clusters:

$ for node in $(oc get nodes -o jsonpath='{.items[*].metadata.name}'); do oc debug node/${node} -- chroot /host shutdown -h 10; done

Note: It is not necessary to drain control plane nodes of the standard pods that ship with OpenShift Container Platform prior to shutdown.

Cluster administrators are responsible for ensuring a clean restart of their own workloads after the cluster is restarted. If you drained control plane nodes prior to shutdown because of custom workloads, you must mark the control plane nodes as schedulable before the cluster will be functional again after restart.

Shut off any cluster dependencies that are no longer needed, such as external storage or an LDAP server. Be sure to consult your vendor’s documentation before doing so.

Important: If you deployed your cluster on a cloud-provider platform, do not shut down, suspend, or delete the associated cloud resources. If you delete the cloud resources of a suspended virtual machine, OpenShift Container Platform might not restore successfully.
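The delay guidance in the procedure above (1 minute by default, 10 minutes or longer for clusters with 10 nodes or more) can be sketched as a small helper. This is an illustration only: the function names shutdown_delay_minutes and print_shutdown_plan are hypothetical, and the second function deliberately prints the commands instead of running them so that you can review the plan first.

```shell
# Hypothetical helper: choose the `shutdown -h` delay in minutes from the
# node count, following the guidance in the procedure above.
shutdown_delay_minutes() {
  node_count=$1
  if [ "$node_count" -ge 10 ]; then
    echo 10
  else
    echo 1
  fi
}

# Hypothetical helper: print (do not run) one shutdown command per node.
#   $1 = delay in minutes, remaining arguments = node names
print_shutdown_plan() {
  delay=$1
  shift
  for node in "$@"; do
    echo "oc debug node/${node} -- chroot /host shutdown -h ${delay}"
  done
}

# Example use against a live cluster (assumes you are logged in with oc):
#   nodes=$(oc get nodes -o jsonpath='{.items[*].metadata.name}')
#   print_shutdown_plan "$(shutdown_delay_minutes "$(echo $nodes | wc -w)")" $nodes
```

Treat 10 minutes as a lower bound for large clusters; a longer delay might be needed for all compute nodes to shut down first.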

2.3. Additional resources

Chapter 3. Restarting the cluster gracefully

This document describes the process to restart your cluster after a graceful shutdown.

Even though the cluster is expected to be functional after the restart, the cluster might not recover due to unexpected conditions, for example:

- etcd data corruption during shutdown

- Node failure due to hardware

- Network connectivity issues

If your cluster fails to recover, follow the steps to restore to a previous cluster state.

3.1. Prerequisites

- You have gracefully shut down your cluster.

3.2. Restarting the cluster

You can restart your cluster after it has been shut down gracefully.

Prerequisites

-

You have access to the cluster as a user with the cluster-admin role.

- This procedure assumes that you gracefully shut down the cluster.

Procedure

- Power on any cluster dependencies, such as external storage or an LDAP server.

Start all cluster machines.

Use the appropriate method for your cloud environment to start the machines, for example, from your cloud provider’s web console.

Wait approximately 10 minutes before continuing to check the status of control plane nodes.

Verify that all control plane nodes are ready.

$ oc get nodes -l node-role.kubernetes.io/master

The control plane nodes are ready if the status is Ready, as shown in the following output:

NAME                           STATUS   ROLES    AGE   VERSION
ip-10-0-168-251.ec2.internal   Ready    master   75m   v1.24.0
ip-10-0-170-223.ec2.internal   Ready    master   75m   v1.24.0
ip-10-0-211-16.ec2.internal    Ready    master   75m   v1.24.0

If the control plane nodes are not ready, then check whether there are any pending certificate signing requests (CSRs) that must be approved.

Get the list of current CSRs:

$ oc get csr

Review the details of a CSR to verify that it is valid:

$ oc describe csr <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

Approve each valid CSR:

$ oc adm certificate approve <csr_name>

After the control plane nodes are ready, verify that all worker nodes are ready.

$ oc get nodes -l node-role.kubernetes.io/worker

The worker nodes are ready if the status is Ready, as shown in the following output:

NAME                           STATUS   ROLES    AGE   VERSION
ip-10-0-179-95.ec2.internal    Ready    worker   64m   v1.24.0
ip-10-0-182-134.ec2.internal   Ready    worker   64m   v1.24.0
ip-10-0-250-100.ec2.internal   Ready    worker   64m   v1.24.0

If the worker nodes are not ready, then check whether there are any pending certificate signing requests (CSRs) that must be approved.

Get the list of current CSRs:

$ oc get csr

Review the details of a CSR to verify that it is valid:

$ oc describe csr <csr_name>

<csr_name> is the name of a CSR from the list of current CSRs.

Approve each valid CSR:

$ oc adm certificate approve <csr_name>

Verify that the cluster started properly.

Check that there are no degraded cluster Operators.

$ oc get clusteroperators

Check that there are no cluster Operators with the DEGRADED condition set to True.

NAME                      VERSION   AVAILABLE   PROGRESSING   DEGRADED   SINCE
authentication            4.10.0    True        False         False      59m
cloud-credential          4.10.0    True        False         False      85m
cluster-autoscaler        4.10.0    True        False         False      73m
config-operator           4.10.0    True        False         False      73m
console                   4.10.0    True        False         False      62m
csi-snapshot-controller   4.10.0    True        False         False      66m
dns                       4.10.0    True        False         False      76m
etcd                      4.10.0    True        False         False      76m
...

Check that all nodes are in the Ready state:

$ oc get nodes

Check that the status for all nodes is Ready.

NAME                           STATUS   ROLES    AGE   VERSION
ip-10-0-168-251.ec2.internal   Ready    master   82m   v1.24.0
ip-10-0-170-223.ec2.internal   Ready    master   82m   v1.24.0
ip-10-0-179-95.ec2.internal    Ready    worker   70m   v1.24.0
ip-10-0-182-134.ec2.internal   Ready    worker   70m   v1.24.0
ip-10-0-211-16.ec2.internal    Ready    master   82m   v1.24.0
ip-10-0-250-100.ec2.internal   Ready    worker   69m   v1.24.0

If the cluster did not start properly, you might need to restore your cluster using an etcd backup.
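The verification steps in this procedure come down to scanning three tables for rows that need attention. The following sketch shows one way to filter them; it is not part of the documented procedure. The function names are hypothetical, and the column positions are assumptions based on the example output in this chapter: STATUS is the second column of `oc get nodes`, DEGRADED is the fifth column of `oc get clusteroperators`, and the condition is the last column of `oc get csr`. If a column is empty in your output, the positions shift and the filters do not apply.

```shell
# Hypothetical helper: print nodes whose STATUS is not exactly "Ready".
# A status such as "Ready,SchedulingDisabled" is also reported.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Hypothetical helper: print cluster Operators whose DEGRADED column is True.
degraded_operators() {
  awk 'NR > 1 && $5 == "True" { print $1 }'
}

# Hypothetical helper: print CSRs whose last column is "Pending".
# It only lists them; review each one with `oc describe csr` before approving.
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Example use against a live cluster (assumes you are logged in with oc):
#   oc get nodes | not_ready_nodes
#   oc get clusteroperators | degraded_operators
#   oc get csr | pending_csrs
```

Empty output from all three filters suggests that the cluster started properly. The sketch does not approve CSRs automatically, because each CSR must be checked for validity first.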

Additional resources

- See Restoring to a previous cluster state for how to use an etcd backup to restore if your cluster failed to recover after restarting.

Chapter 4. OADP Application backup and restore

4.1. Introduction to OpenShift API for Data Protection

The OpenShift API for Data Protection (OADP) product safeguards customer applications on OpenShift Container Platform. It offers comprehensive disaster recovery protection, covering OpenShift Container Platform applications, application-related cluster resources, persistent volumes, and internal images. OADP is also capable of backing up both containerized applications and virtual machines (VMs).

However, OADP does not serve as a disaster recovery solution for etcd or OpenShift Operators.

4.1.1. OpenShift API for Data Protection APIs

OpenShift API for Data Protection (OADP) provides APIs that enable multiple approaches to customizing backups and preventing the inclusion of unnecessary or inappropriate resources.

OADP provides the following APIs:

Additional resources

4.2. OADP release notes

The release notes for OpenShift API for Data Protection (OADP) describe new features and enhancements, deprecated features, product recommendations, known issues, and resolved issues.

4.2.1. OADP 1.2.3 release notes

4.2.1.1. New features

There are no new features in the release of OpenShift API for Data Protection (OADP) 1.2.3.

4.2.1.2. Resolved issues

The following highlighted issues are resolved in OADP 1.2.3:

Multiple HTTP/2 enabled web servers are vulnerable to a DDoS attack (Rapid Reset Attack)

In previous releases of OADP 1.2, the HTTP/2 protocol was susceptible to a denial of service attack because request cancellation could reset multiple streams quickly. The server had to set up and tear down the streams while not hitting any server-side limit for the maximum number of active streams per connection. This resulted in a denial of service due to server resource consumption. For a list of all OADP issues associated with this CVE, see the following Jira list.

For more information, see CVE-2023-39325 (Rapid Reset Attack).

For a complete list of all issues resolved in the release of OADP 1.2.3, see the list of OADP 1.2.3 resolved issues in Jira.

4.2.1.3. Known issues

There are no known issues in the release of OADP 1.2.3.

4.2.2. OADP 1.2.2 release notes

4.2.2.1. New features

There are no new features in the release of OpenShift API for Data Protection (OADP) 1.2.2.

4.2.2.2. Resolved issues

The following highlighted issues are resolved in OADP 1.2.2:

Restic restore partially failed due to a Pod Security standard

In previous releases of OADP 1.2, OpenShift Container Platform 4.14 enforced a pod security admission (PSA) policy that hindered the readiness of pods during a Restic restore process.

This issue has been resolved in the release of OADP 1.2.2, and also OADP 1.1.6. Therefore, it is recommended that users upgrade to these releases.

For more information, see Restic restore partially failing on OCP 4.14 due to changed PSA policy. (OADP-2094)

Backup of an app with internal images partially failed with plugin panicked error

In previous releases of OADP 1.2, the backup of an application with internal images partially failed and returned a plugin panicked error. The backup partially failed with this error in the Velero logs:

time="2022-11-23T15:40:46Z" level=info msg="1 errors encountered backup up item" backup=openshift-adp/django-persistent-67a5b83d-6b44-11ed-9cba-902e163f806c logSource="/remote-source/velero/app/pkg/backup/backup.go:413" name=django-psql-persistent
time="2022-11-23T15:40:46Z" level=error msg="Error backing up item" backup=openshift-adp/django-persistent-67a5b83d-6b44-11ed-9cba-902e163f8

This issue has been resolved in OADP 1.2.2. (OADP-1057).

ACM cluster restore was not functioning as expected due to restore order

In previous releases of OADP 1.2, ACM cluster restore was not functioning as expected due to restore order. ACM applications were removed and re-created on managed clusters after restore activation. (OADP-2505)

VMs using filesystemOverhead failed when backing up and restoring due to volume size mismatch

In previous releases of OADP 1.2, due to storage provider implementation choices, whenever there was a difference between the storage request of an application persistent volume claim (PVC) and the snapshot size of the same PVC, VMs using filesystemOverhead failed when backing up and restoring. This issue has been resolved in the Data Mover of OADP 1.2.2. (OADP-2144)

OADP did not contain an option to set VolSync replication source prune interval

In previous releases of OADP 1.2, there was no option to set the VolSync replication source pruneInterval. (OADP-2052)

Possible pod volume backup failure if Velero was installed in multiple namespaces

In previous releases of OADP 1.2, there was a possibility of pod volume backup failure if Velero was installed in multiple namespaces. (OADP-2409)

Backup Storage Locations moved to unavailable phase when VSL uses custom secret

In previous releases of OADP 1.2, Backup Storage Locations moved to unavailable phase when Volume Snapshot Location used custom secret. (OADP-1737)

For a complete list of all issues resolved in the release of OADP 1.2.2, see the list of OADP 1.2.2 resolved issues in Jira.

4.2.2.3. Known issues

The following issues have been highlighted as known issues in the release of OADP 1.2.2:

Must-gather command fails to remove ClusterRoleBinding resources

The oc adm must-gather command fails to remove ClusterRoleBinding resources, which are left on the cluster because of an admission webhook. Requests to remove the ClusterRoleBinding resources are denied with the following error. (OADP-27730)

admission webhook "clusterrolebindings-validation.managed.openshift.io" denied the request: Deleting ClusterRoleBinding must-gather-p7vwj is not allowed

For a complete list of all known issues in this release, see the list of OADP 1.2.2 known issues in Jira.

4.2.3. OADP 1.2.1 release notes

4.2.3.1. New features

There are no new features in the release of OpenShift API for Data Protection (OADP) 1.2.1.

4.2.3.2. Resolved issues

For a complete list of all issues resolved in the release of OADP 1.2.1, see the list of OADP 1.2.1 resolved issues in Jira.

4.2.3.3. Known issues

The following issues have been highlighted as known issues in the release of OADP 1.2.1:

DataMover Restic retain and prune policies do not work as expected

The retention and prune features provided by VolSync and Restic are not working as expected. Because there is no working option to set the prune interval on VolSync replication, you have to manage and prune remotely stored backups on S3 storage outside of OADP. For more details, see:

OADP Data Mover is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

For a complete list of all known issues in this release, see the list of OADP 1.2.1 known issues in Jira.

4.2.4. OADP 1.2.0 release notes

The OADP 1.2.0 release notes include information about new features, bug fixes, and known issues.

4.2.4.1. New features

Resource timeouts

The new resourceTimeout option specifies the timeout duration in minutes for waiting on various Velero resources. This option applies to resources such as Velero CRD availability, volumeSnapshot deletion, and backup repository availability. The default duration is 10 minutes.

AWS S3 compatible backup storage providers

You can back up objects and snapshots on AWS S3 compatible providers. For more details, see Configuring Amazon Web Services.

4.2.4.1.1. Technology Preview features

Data Mover

The OADP Data Mover enables you to back up Container Storage Interface (CSI) volume snapshots to a remote object store. When you enable Data Mover, you can restore stateful applications using CSI volume snapshots pulled from the object store in case of accidental cluster deletion, cluster failure, or data corruption. For more information, see Using Data Mover for CSI snapshots.

OADP Data Mover is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

4.2.4.2. Resolved issues

For a complete list of all issues resolved in this release, see the list of OADP 1.2.0 resolved issues in Jira.

4.2.4.3. Known issues

The following issues have been highlighted as known issues in the release of OADP 1.2.0:

Multiple HTTP/2 enabled web servers are vulnerable to a DDoS attack (Rapid Reset Attack)

The HTTP/2 protocol is susceptible to a denial of service attack because request cancellation can reset multiple streams quickly. The server has to set up and tear down the streams while not hitting any server-side limit for the maximum number of active streams per connection. This results in a denial of service due to server resource consumption. For a list of all OADP issues associated with this CVE, see the following Jira list.

It is advised to upgrade to OADP 1.2.3, which resolves this issue.

For more information, see CVE-2023-39325 (Rapid Reset Attack).

4.2.5. OADP 1.1.7 release notes

The OADP 1.1.7 release notes list any resolved issues and known issues.

4.2.5.1. Resolved issues

The following highlighted issues are resolved in OADP 1.1.7:

Multiple HTTP/2 enabled web servers are vulnerable to a DDoS attack (Rapid Reset Attack)

In previous releases of OADP 1.1, the HTTP/2 protocol was susceptible to a denial of service attack because request cancellation could reset multiple streams quickly. The server had to set up and tear down the streams while not hitting any server-side limit for the maximum number of active streams per connection. This resulted in a denial of service due to server resource consumption. For a list of all OADP issues associated with this CVE, see the following Jira list.

For more information, see CVE-2023-39325 (Rapid Reset Attack).

For a complete list of all issues resolved in the release of OADP 1.1.7, see the list of OADP 1.1.7 resolved issues in Jira.

4.2.5.2. Known issues

There are no known issues in the release of OADP 1.1.7.

4.2.6. OADP 1.1.6 release notes

The OADP 1.1.6 release notes list any new features, resolved issues and bugs, and known issues.

4.2.6.1. Resolved issues

Restic restore partially failing due to Pod Security standard

OCP 4.14 introduced pod security standards that meant the privileged profile is enforced. In previous releases of OADP, this profile caused the pod to receive permission denied errors. This issue was caused by the restore order: the pod was created before the security context constraints (SCC) resource. Because this pod violated the pod security standard, the pod was denied and subsequently failed. OADP-2420

Restore partially failing for job resource

In previous releases of OADP, the restore of a job resource was partially failing in OCP 4.14. This issue was not seen in older OCP versions. The issue was caused by an additional label being added to the job resource, which was not present in older OCP versions. OADP-2530

For a complete list of all issues resolved in this release, see the list of OADP 1.1.6 resolved issues in Jira.

4.2.6.2. Known issues

For a complete list of all known issues in this release, see the list of OADP 1.1.6 known issues in Jira.

4.2.7. OADP 1.1.5 release notes

The OADP 1.1.5 release notes list any new features, resolved issues and bugs, and known issues.

4.2.7.1. New features

This version of OADP is a service release. No new features are added to this version.

4.2.7.2. Resolved issues

For a complete list of all issues resolved in this release, see the list of OADP 1.1.5 resolved issues in Jira.

4.2.7.3. Known issues

For a complete list of all known issues in this release, see the list of OADP 1.1.5 known issues in Jira.

4.2.8. OADP 1.1.4 release notes

The OADP 1.1.4 release notes list any new features, resolved issues and bugs, and known issues.

4.2.8.1. New features

This version of OADP is a service release. No new features are added to this version.

4.2.8.2. Resolved issues

Add support for all the Velero deployment server arguments

In previous releases of OADP, OADP did not support all the upstream Velero server arguments. This issue has been resolved in OADP 1.1.4 and all the upstream Velero server arguments are supported. OADP-1557

Data Mover can restore from an incorrect snapshot when there was more than one VSR for the restore name and PVC name

In previous releases of OADP, OADP Data Mover could restore from an incorrect snapshot if there was more than one Volume Snapshot Restore (VSR) resource in the cluster for the same Velero restore name and PersistentVolumeClaim (PVC) name. OADP-1822

Cloud Storage API BSLs need OwnerReference

In previous releases of OADP, ACM BackupSchedules failed validation because of a missing OwnerReference on Backup Storage Locations (BSLs) created with dpa.spec.backupLocations.bucket. OADP-1511

For a complete list of all issues resolved in this release, see the list of OADP 1.1.4 resolved issues in Jira.

4.2.8.3. Known issues

This release has the following known issues:

OADP backups might fail because a UID/GID range might have changed on the cluster

OADP backups might fail because a UID/GID range might have changed on the cluster where the application has been restored, with the result that OADP does not back up and restore OpenShift Container Platform UID/GID range metadata. To avoid the issue, if the backed-up application requires a specific UID, ensure the range is available when restored. An additional workaround is to allow OADP to create the namespace in the restore operation.

A restoration might fail if ArgoCD is used during the process due to a label used by ArgoCD

A restoration might fail if ArgoCD is used during the process due to a label used by ArgoCD, app.kubernetes.io/instance. This label identifies which resources ArgoCD needs to manage, which can create a conflict with OADP’s procedure for managing resources on restoration. To work around this issue, set .spec.resourceTrackingMethod on the ArgoCD YAML to annotation+label or annotation. If the issue continues to persist, then disable ArgoCD before beginning to restore, and enable it again when restoration is finished.

OADP Velero plugins returning "received EOF, stopping recv loop" message

Velero plugins are started as separate processes. When the Velero operation has completed, either successfully or not, they exit. Therefore, if you see a received EOF, stopping recv loop message in debug logs, it does not mean an error occurred. The message indicates that a plugin operation has completed. OADP-2176

For a complete list of all known issues in this release, see the list of OADP 1.1.4 known issues in Jira.

4.2.9. OADP 1.1.3 release notes

The OADP 1.1.3 release notes list any new features, resolved issues and bugs, and known issues.

4.2.9.1. New features

This version of OADP is a service release. No new features are added to this version.

4.2.9.2. Resolved issues

For a complete list of all issues resolved in this release, see the list of OADP 1.1.3 resolved issues in Jira.

4.2.9.3. Known issues

For a complete list of all known issues in this release, see the list of OADP 1.1.3 known issues in Jira.

4.2.10. OADP 1.1.2 release notes

The OADP 1.1.2 release notes include product recommendations, a list of fixed bugs and descriptions of known issues.

4.2.10.1. Product recommendations

VolSync

To prepare for the upgrade from VolSync 0.5.1 to the latest version available from the VolSync stable channel, you must add this annotation in the openshift-adp namespace by running the following command:

$ oc annotate --overwrite namespace/openshift-adp volsync.backube/privileged-movers='true'

Velero

In this release, Velero has been upgraded from version 1.9.2 to version 1.9.5.

Restic

In this release, Restic has been upgraded from version 0.13.1 to version 0.14.0.

4.2.10.2. Resolved issues

The following issues have been resolved in this release:

4.2.10.3. Known issues

This release has the following known issues:

- OADP currently does not support backup and restore of AWS EFS volumes using Restic in Velero (OADP-778).

CSI backups might fail due to a Ceph limitation of VolumeSnapshotContent snapshots per PVC.

You can create many snapshots of the same persistent volume claim (PVC) but cannot schedule periodic creation of snapshots:

For more information, see Volume Snapshots.

4.2.11. OADP 1.1.1 release notes

The OADP 1.1.1 release notes include product recommendations and descriptions of known issues.

4.2.11.1. Product recommendations

Before you install OADP 1.1.1, it is recommended to either install VolSync 0.5.1 or to upgrade to it.

4.2.11.2. Known issues

This release has the following known issues:

Multiple HTTP/2 enabled web servers are vulnerable to a DDoS attack (Rapid Reset Attack)

The HTTP/2 protocol is susceptible to a denial of service attack because request cancellation can reset multiple streams quickly. The server has to set up and tear down the streams while not hitting any server-side limit for the maximum number of active streams per connection. This results in a denial of service due to server resource consumption. For a list of all OADP issues associated with this CVE, see the following Jira list.

It is advised to upgrade to OADP 1.1.7 or 1.2.3, which resolve this issue.

For more information, see CVE-2023-39325 (Rapid Reset Attack).

- OADP currently does not support backup and restore of AWS EFS volumes using Restic in Velero (OADP-778).

CSI backups might fail due to a Ceph limitation of VolumeSnapshotContent snapshots per PVC.

You can create many snapshots of the same persistent volume claim (PVC) but cannot schedule periodic creation of snapshots:

- For CephFS, you can create up to 100 snapshots per PVC.

- For RADOS Block Device (RBD), you can create up to 512 snapshots for each PVC. (OADP-804) and (OADP-975)

For more information, see Volume Snapshots.

4.3. OADP features and plugins

OpenShift API for Data Protection (OADP) features provide options for backing up and restoring applications.

The default plugins enable Velero to integrate with certain cloud providers and to back up and restore OpenShift Container Platform resources.

4.3.1. OADP features

OpenShift API for Data Protection (OADP) supports the following features:

- Backup

You can use OADP to back up all applications on the OpenShift Platform, or you can filter the resources by type, namespace, or label.

OADP backs up Kubernetes objects and internal images by saving them as an archive file on object storage. OADP backs up persistent volumes (PVs) by creating snapshots with the native cloud snapshot API or with the Container Storage Interface (CSI). For cloud providers that do not support snapshots, OADP backs up resources and PV data with Restic.

Note: You must exclude Operators from the backup of an application for backup and restore to succeed.

- Restore

You can restore resources and PVs from a backup. You can restore all objects in a backup or filter the objects by namespace, PV, or label.

Note: You must exclude Operators from the backup of an application for backup and restore to succeed.

- Schedule

You can schedule backups at specified intervals.

- Hooks

You can use hooks to run commands in a container on a pod, for example, fsfreeze to freeze a file system. You can configure a hook to run before or after a backup or restore. Restore hooks can run in an init container or in the application container.
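The features above map onto Velero custom resources. The following is a minimal sketch, not a tested configuration, of a Schedule whose backup template filters by namespace and label and runs fsfreeze hooks around each backup, followed by a Restore that filters by namespace. The resource names, the example-app namespace, the app: example label, the app container name, and the /var/lib/data path are hypothetical placeholders. The openshift-adp namespace is the OADP project used elsewhere in this document.

```yaml
# Sketch only: all names, labels, and paths below are hypothetical.
apiVersion: velero.io/v1
kind: Schedule
metadata:
  name: example-daily
  namespace: openshift-adp
spec:
  schedule: "0 2 * * *"          # cron expression: every day at 02:00
  template:                      # takes the same fields as a Backup spec
    includedNamespaces:          # filter by namespace
    - example-app
    labelSelector:               # filter by label
      matchLabels:
        app: example
    ttl: 168h0m0s                # keep each backup for seven days
    hooks:
      resources:
      - name: freeze-data
        includedNamespaces:
        - example-app
        pre:                     # runs before the backup
        - exec:
            container: app
            command: ["/sbin/fsfreeze", "--freeze", "/var/lib/data"]
            onError: Fail
            timeout: 30s
        post:                    # runs after the backup
        - exec:
            container: app
            command: ["/sbin/fsfreeze", "--unfreeze", "/var/lib/data"]
---
apiVersion: velero.io/v1
kind: Restore
metadata:
  name: example-restore
  namespace: openshift-adp
spec:
  backupName: <backup_name>      # name of an existing Backup object
  includedNamespaces:
  - example-app
  restorePVs: true
```

Each run of the schedule creates a Backup object, which you then reference by name in the backupName field of a Restore.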

4.3.2. OADP plugins

The OpenShift API for Data Protection (OADP) provides default Velero plugins that are integrated with storage providers to support backup and snapshot operations. You can create custom plugins based on the Velero plugins.

OADP also provides plugins for OpenShift Container Platform resource backups, OpenShift Virtualization resource backups, and Container Storage Interface (CSI) snapshots.

| OADP plugin | Function | Storage location |
|---|---|---|
| aws | Backs up and restores Kubernetes objects. | AWS S3 |
| | Backs up and restores volumes with snapshots. | AWS EBS |
| azure | Backs up and restores Kubernetes objects. | Microsoft Azure Blob storage |
| | Backs up and restores volumes with snapshots. | Microsoft Azure Managed Disks |
| gcp | Backs up and restores Kubernetes objects. | Google Cloud Storage |
| | Backs up and restores volumes with snapshots. | Google Compute Engine Disks |
| openshift | Backs up and restores OpenShift Container Platform resources. [1] | Object store |
| kubevirt | Backs up and restores OpenShift Virtualization resources. [2] | Object store |
| csi | Backs up and restores volumes with CSI snapshots. [3] | Cloud storage that supports CSI snapshots |

[1] Mandatory.

[2] Virtual machine disks are backed up with CSI snapshots or Restic.

[3] The csi plugin uses the Velero CSI beta snapshot API.

4.3.3. About OADP Velero plugins

You can configure two types of plugins when you install Velero:

- Default cloud provider plugins

- Custom plugins

Both types of plugin are optional, but most users configure at least one cloud provider plugin.

4.3.3.1. Default Velero cloud provider plugins

You can install any of the following default Velero cloud provider plugins when you configure the oadp_v1alpha1_dpa.yaml file during deployment:

- aws (Amazon Web Services)

- gcp (Google Cloud Platform)

- azure (Microsoft Azure)

- openshift (OpenShift Velero plugin)

- csi (Container Storage Interface)

- kubevirt (KubeVirt)

You specify the desired default plugins in the oadp_v1alpha1_dpa.yaml file during deployment.

Example file

The following .yaml file installs the openshift, aws, azure, and gcp plugins:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: dpa-sample
spec:
  configuration:
    velero:
      defaultPlugins:
      - openshift
      - aws
      - azure
      - gcp

4.3.3.2. Custom Velero plugins

You can install a custom Velero plugin by specifying the plugin image and name when you configure the oadp_v1alpha1_dpa.yaml file during deployment.

You specify the desired custom plugins in the oadp_v1alpha1_dpa.yaml file during deployment.

Example file

The following .yaml file installs the default openshift, azure, and gcp plugins and a custom plugin that has the name custom-plugin-example and the image quay.io/example-repo/custom-velero-plugin:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: dpa-sample
spec:
  configuration:
    velero:
      defaultPlugins:
      - openshift
      - azure
      - gcp
      customPlugins:
      - name: custom-plugin-example
        image: quay.io/example-repo/custom-velero-plugin

4.3.3.3. Velero plugins returning "received EOF, stopping recv loop" message

Velero plugins are started as separate processes. After the Velero operation has completed, either successfully or not, they exit. Receiving a received EOF, stopping recv loop message in the debug logs indicates that a plugin operation has completed. It does not mean that an error has occurred.

4.3.4. Supported architectures for OADP

OpenShift API for Data Protection (OADP) supports the following architectures:

- AMD64

- ARM64

- PPC64le

- s390x

OADP 1.2.0 and later versions support the ARM64 architecture.

4.3.5. OADP support for IBM Power and IBM Z

OpenShift API for Data Protection (OADP) is platform neutral. The information that follows relates only to IBM Power and to IBM Z.

OADP 1.1.0 was tested successfully against OpenShift Container Platform 4.11 for both IBM Power and IBM Z. The sections that follow give testing and support information for OADP 1.1.0 in terms of backup locations for these systems.

4.3.5.1. OADP support for target backup locations using IBM Power

IBM Power running with OpenShift Container Platform 4.11 and 4.12, and OpenShift API for Data Protection (OADP) 1.1.2 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat supports running IBM Power with OpenShift Container Platform 4.11 and 4.12, and OADP 1.1.2 against all non-AWS S3 backup location targets as well.

4.3.5.2. OADP testing and support for target backup locations using IBM Z

IBM Z running with OpenShift Container Platform 4.11 and 4.12, and OpenShift API for Data Protection (OADP) 1.1.2 was tested successfully against an AWS S3 backup location target. Although the test involved only an AWS S3 target, Red Hat supports running IBM Z with OpenShift Container Platform 4.11 and 4.12, and OADP 1.1.2 against all non-AWS S3 backup location targets as well.

4.4. Installing and configuring OADP

4.4.1. About installing OADP

As a cluster administrator, you install the OpenShift API for Data Protection (OADP) by installing the OADP Operator. The OADP Operator installs Velero 1.11.

Starting from OADP 1.0.4, all OADP 1.0.z versions can only be used as a dependency of the MTC Operator and are not available as a standalone Operator.

To back up Kubernetes resources and internal images, you must have object storage as a backup location, such as one of the following storage types:

- Amazon Web Services

- Microsoft Azure

- Google Cloud Platform

- Multicloud Object Gateway

- AWS S3 compatible object storage, such as Multicloud Object Gateway or MinIO

Unless specified otherwise, "NooBaa" refers to the open source project that provides lightweight object storage, while "Multicloud Object Gateway (MCG)" refers to the Red Hat distribution of NooBaa.

For more information on the MCG, see Accessing the Multicloud Object Gateway with your applications.

The CloudStorage API, which automates the creation of a bucket for object storage, is a Technology Preview feature only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information about the support scope of Red Hat Technology Preview features, see Technology Preview Features Support Scope.

You can back up persistent volumes (PVs) by using snapshots or Restic.

To back up PVs with snapshots, you must have a cloud provider that supports either a native snapshot API or Container Storage Interface (CSI) snapshots, such as one of the following cloud providers:

- Amazon Web Services

- Microsoft Azure

- Google Cloud Platform

- CSI snapshot-enabled cloud provider, such as OpenShift Data Foundation

If you want to use CSI backup on OCP 4.11 and later, install OADP 1.1.x.

OADP 1.0.x does not support CSI backup on OCP 4.11 and later. OADP 1.0.x includes Velero 1.7.x and expects the API group snapshot.storage.k8s.io/v1beta1, which is not present on OCP 4.11 and later.

If your cloud provider does not support snapshots or if your storage is NFS, you can back up applications with Restic backups on object storage.

You create a default Secret and then you install the Data Protection Application.

4.4.1.1. AWS S3 compatible backup storage providers

OADP is compatible with many object storage providers for use with different backup and snapshot operations. Several object storage providers are fully supported, several are unsupported but known to work, and some have known limitations.

4.4.1.1.1. Supported backup storage providers

The following AWS S3 compatible object storage providers are fully supported by OADP through the AWS plugin for use as backup storage locations:

- MinIO

- Multicloud Object Gateway (MCG)

- Amazon Web Services (AWS) S3

The following compatible object storage providers are supported and have their own Velero object store plugins:

- Google Cloud Platform (GCP)

- Microsoft Azure

4.4.1.1.2. Unsupported backup storage providers

The following AWS S3 compatible object storage providers are known to work with Velero through the AWS plugin for use as backup storage locations. However, they are unsupported and have not been tested by Red Hat:

- IBM Cloud

- Oracle Cloud

- DigitalOcean

- NooBaa, unless installed using Multicloud Object Gateway (MCG)

- Tencent Cloud

- Ceph RADOS v12.2.7

- Quobyte

- Cloudian HyperStore

Unless specified otherwise, "NooBaa" refers to the open source project that provides lightweight object storage, while "Multicloud Object Gateway (MCG)" refers to the Red Hat distribution of NooBaa.

For more information on the MCG, see Accessing the Multicloud Object Gateway with your applications.

4.4.1.1.3. Backup storage providers with known limitations

The following AWS S3 compatible object storage providers are known to work with Velero through the AWS plugin with a limited feature set:

- Swift: Works as a backup storage location, but is not compatible with Restic for file system-based volume backup and restore.

4.4.1.2. Configuring Multicloud Object Gateway (MCG) for disaster recovery on OpenShift Data Foundation

If you use cluster storage for your MCG bucket backupStorageLocation on OpenShift Data Foundation, configure MCG as an external object store.

Failure to configure MCG as an external object store might lead to backups not being available.

Unless specified otherwise, "NooBaa" refers to the open source project that provides lightweight object storage, while "Multicloud Object Gateway (MCG)" refers to the Red Hat distribution of NooBaa.

For more information on the MCG, see Accessing the Multicloud Object Gateway with your applications.

Procedure

- Configure MCG as an external object store as described in Adding storage resources for hybrid or Multicloud.

4.4.1.3. About OADP update channels

When you install an OADP Operator, you choose an update channel. This channel determines which upgrades to the OADP Operator and to Velero you receive. You can switch channels at any time.

The following update channels are available:

- The stable channel is now deprecated. The stable channel contains the patches (z-stream updates) of OADP ClusterServiceVersion for oadp.v1.1.z and older versions from oadp.v1.0.z.

- The stable-1.0 channel contains oadp.v1.0.z, the most recent OADP 1.0 ClusterServiceVersion.

- The stable-1.1 channel contains oadp.v1.1.z, the most recent OADP 1.1 ClusterServiceVersion.

- The stable-1.2 channel contains oadp.v1.2.z, the most recent OADP 1.2 ClusterServiceVersion.

- The stable-1.3 channel contains oadp.v1.3.z, the most recent OADP 1.3 ClusterServiceVersion.

Which update channel is right for you?

- The stable channel is now deprecated. If you are already using the stable channel, you will continue to get updates from oadp.v1.1.z.

- Choose the stable-1.y update channel to install OADP 1.y and to continue receiving patches for it. If you choose this channel, you will receive all z-stream patches for version 1.y.z.

When must you switch update channels?

- If you have OADP 1.y installed, and you want to receive patches only for that y-stream, you must switch from the stable update channel to the stable-1.y update channel. You will then receive all z-stream patches for version 1.y.z.

- If you have OADP 1.0 installed, want to upgrade to OADP 1.1, and then receive patches only for OADP 1.1, you must switch from the stable-1.0 update channel to the stable-1.1 update channel. You will then receive all z-stream patches for version 1.1.z.

- If you have OADP 1.y installed, with y greater than 0, and want to switch to OADP 1.0, you must uninstall your OADP Operator and then reinstall it using the stable-1.0 update channel. You will then receive all z-stream patches for version 1.0.z.

You cannot switch from OADP 1.y to OADP 1.0 by switching update channels. You must uninstall the Operator and then reinstall it.
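When the Operator is installed through Operator Lifecycle Manager, the update channel is recorded in the channel field of its Subscription object. The following is a minimal sketch of such a Subscription pinned to the stable-1.2 channel. The package name redhat-oadp-operator and the redhat-operators catalog source are assumptions based on the default Red Hat catalog; check the values on your cluster before you use them.

```yaml
# Sketch only: package and catalog names are assumptions, not taken from this guide.
apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: redhat-oadp-operator
  namespace: openshift-adp
spec:
  channel: stable-1.2                     # update channel: receive 1.2.z patches only
  name: redhat-oadp-operator              # Operator package name (assumed)
  source: redhat-operators                # catalog source (assumed)
  sourceNamespace: openshift-marketplace
  installPlanApproval: Automatic
```

Changing the channel value is how you switch channels, subject to the restrictions above: a move from OADP 1.y back to OADP 1.0 still requires uninstalling and reinstalling the Operator.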

4.4.1.4. Installation of OADP on multiple namespaces

You can install OADP into multiple namespaces on the same cluster so that multiple project owners can manage their own OADP instance. This use case has been validated with Restic and CSI.

You install each instance of OADP as specified by the per-platform procedures contained in this document with the following additional requirements:

- All deployments of OADP on the same cluster must be the same version, for example, 1.1.4. Installing different versions of OADP on the same cluster is not supported.

- Each individual deployment of OADP must have a unique set of credentials and a unique BackupStorageLocation configuration.

- By default, each OADP deployment has cluster-level access across namespaces. OpenShift Container Platform administrators need to review security and RBAC settings carefully and make any necessary changes to them to ensure that each OADP instance has the correct permissions.

4.4.1.5. Velero CPU and memory requirements based on collected data

The following recommendations are based on observations of performance made in the scale and performance lab. The backup and restore resources can be impacted by the type of plugin, the amount of resources required by that backup or restore, and the respective data contained in the persistent volumes (PVs) related to those resources.

4.4.1.5.1. CPU and memory requirement for configurations

| Configuration types | [1] Average usage | [2] Large usage | resourceTimeouts |

|---|---|---|---|

| CSI | Velero: CPU- Request 200m, Limits 1000m Memory - Request 256Mi, Limits 1024Mi | Velero: CPU- Request 200m, Limits 2000m Memory- Request 256Mi, Limits 2048Mi | N/A |

| Restic | [3] Restic: CPU- Request 1000m, Limits 2000m Memory - Request 16Gi, Limits 32Gi | [4] Restic: CPU - Request 2000m, Limits 8000m Memory - Request 16Gi, Limits 40Gi | 900m |

| [5] DataMover | N/A | N/A | 10m - average usage 60m - large usage |

[1] Average usage: Use these settings for most usage situations.

[2] Large usage: Use these settings for large usage situations, such as a large PV (500GB usage), multiple namespaces (100+), or many pods within a single namespace (2000 pods+), and for optimal performance for backup and restore involving large datasets.

[3] Restic resource usage corresponds to the amount and type of data. For example, many small files or large amounts of data can cause Restic to use large amounts of resources. The Velero documentation references 500m as a supplied default; in most of our testing, a 200m request with a 1000m limit was suitable. As cited in the Velero documentation, exact CPU and memory usage depends on the scale of files and directories, in addition to environmental limitations.

[4] Increasing the CPU has a significant impact on improving backup and restore times.

[5] DataMover: The default DataMover resourceTimeout is 10m. Our tests show that restoring a large PV (500GB usage) requires increasing the resourceTimeout to 60m.

The resource requirements listed throughout the guide are for average usage only. For large usage, adjust the settings as described in the table above.
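As an illustration of how the table translates into configuration, the following sketch applies the average usage values to the Velero and Restic pods through the DataProtectionApplication CR. It assumes that the Restic pod accepts the same podConfig.resourceAllocations block as the Velero pod; the dpa-sample name and the plugin list are placeholders, and the backup location configuration is omitted for brevity.

```yaml
# Sketch only: average usage values from the table above.
apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: dpa-sample
  namespace: openshift-adp
spec:
  configuration:
    velero:
      defaultPlugins:
      - openshift
      - aws
      podConfig:
        resourceAllocations:     # Velero pod, average usage
          requests:
            cpu: 200m
            memory: 256Mi
          limits:
            cpu: 1000m
            memory: 1024Mi
    restic:
      enable: true
      podConfig:
        resourceAllocations:     # Restic pods, average usage
          requests:
            cpu: 1000m
            memory: 16Gi
          limits:
            cpu: 2000m
            memory: 32Gi
  # backupLocations and snapshotLocations are omitted from this sketch
```

For large usage, substitute the values from the Large usage column.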

4.4.1.5.2. NodeAgent CPU for large usage

Testing shows that increasing NodeAgent CPU can significantly improve backup and restore times when using OpenShift API for Data Protection (OADP).

Do not run Kopia without limits in production environments on nodes running production workloads, because Kopia consumes resources aggressively. However, running Kopia with limits that are too low results in CPU limiting and slow backup and restore operations. Testing showed that running Kopia with 20 cores and 32 Gi memory supported backup and restore operations of over 100 GB of data, multiple namespaces, or over 2000 pods in a single namespace.

Testing detected no CPU limiting or memory saturation with these resource specifications.

You can set these limits in Ceph MDS pods by following the procedure in Changing the CPU and memory resources on the rook-ceph pods.

You need to add the following lines to the storage cluster Custom Resource (CR) to set the limits:

resources:
  mds:
    limits:
      cpu: "3"
      memory: 128Gi
    requests:
      cpu: "3"
      memory: 8Gi

4.4.2. Installing the OADP Operator

You can install the OpenShift API for Data Protection (OADP) Operator on OpenShift Container Platform 4.11 by using Operator Lifecycle Manager (OLM).

The OADP Operator installs Velero 1.11.

Prerequisites

- You must be logged in as a user with cluster-admin privileges.

Procedure

- In the OpenShift Container Platform web console, click Operators → OperatorHub.

- Use the Filter by keyword field to find the OADP Operator.

- Select the OADP Operator and click Install.

- Click Install to install the Operator in the openshift-adp project.

- Click Operators → Installed Operators to verify the installation.

4.4.2.1. OADP-Velero-OpenShift Container Platform version relationship

| OADP version | Velero version | OpenShift Container Platform version |
|---|---|---|
| 1.1.0 | | 4.9 and later |
| 1.1.1 | | 4.9 and later |
| 1.1.2 | | 4.9 and later |
| 1.1.3 | | 4.9 and later |
| 1.1.4 | | 4.9 and later |
| 1.1.5 | | 4.9 and later |
| 1.1.6 | | 4.11 and later |
| 1.1.7 | | 4.11 and later |
| 1.2.0 | | 4.11 and later |
| 1.2.1 | | 4.11 and later |
| 1.2.2 | | 4.11 and later |
| 1.2.3 | | 4.11 and later |

4.4.3. Configuring the OpenShift API for Data Protection with Amazon Web Services

You install the OpenShift API for Data Protection (OADP) with Amazon Web Services (AWS) by installing the OADP Operator. The Operator installs Velero 1.11.

Starting from OADP 1.0.4, all OADP 1.0.z versions can only be used as a dependency of the MTC Operator and are not available as a standalone Operator.

You configure AWS for Velero, create a default Secret, and then install the Data Protection Application. For more details, see Installing the OADP Operator.

To install the OADP Operator in a restricted network environment, you must first disable the default OperatorHub sources and mirror the Operator catalog. See Using Operator Lifecycle Manager on restricted networks for details.

4.4.3.1. Configuring Amazon Web Services

You configure Amazon Web Services (AWS) for the OpenShift API for Data Protection (OADP).

Prerequisites

- You must have the AWS CLI installed.

Procedure

- Set the BUCKET variable:

$ BUCKET=<your_bucket>

- Set the REGION variable:

$ REGION=<your_region>

- Create an AWS S3 bucket:

$ aws s3api create-bucket \
    --bucket $BUCKET \
    --region $REGION \
    --create-bucket-configuration LocationConstraint=$REGION

us-east-1 does not support a LocationConstraint. If your region is us-east-1, omit --create-bucket-configuration LocationConstraint=$REGION.

- Create an IAM user:

$ aws iam create-user --user-name velero

If you want to use Velero to back up multiple clusters with multiple S3 buckets, create a unique user name for each cluster.

- Create a velero-policy.json file:

$ cat > velero-policy.json <<EOF
{
    "Version": "2012-10-17",
    "Statement": [
        {
            "Effect": "Allow",
            "Action": [
                "ec2:DescribeVolumes",
                "ec2:DescribeSnapshots",
                "ec2:CreateTags",
                "ec2:CreateVolume",
                "ec2:CreateSnapshot",
                "ec2:DeleteSnapshot"
            ],
            "Resource": "*"
        },
        {
            "Effect": "Allow",
            "Action": [
                "s3:GetObject",
                "s3:DeleteObject",
                "s3:PutObject",
                "s3:AbortMultipartUpload",
                "s3:ListMultipartUploadParts"
            ],
            "Resource": [
                "arn:aws:s3:::${BUCKET}/*"
            ]
        },
        {
            "Effect": "Allow",
            "Action": [
                "s3:ListBucket",
                "s3:GetBucketLocation",
                "s3:ListBucketMultipartUploads"
            ],
            "Resource": [
                "arn:aws:s3:::${BUCKET}"
            ]
        }
    ]
}
EOF

- Attach the policies to give the velero user the minimum necessary permissions:

$ aws iam put-user-policy \
    --user-name velero \
    --policy-name velero \
    --policy-document file://velero-policy.json

- Create an access key for the velero user:

$ aws iam create-access-key --user-name velero

Example output

{
  "AccessKey": {
        "UserName": "velero",
        "Status": "Active",
        "CreateDate": "2017-07-31T22:24:41.576Z",
        "SecretAccessKey": <AWS_SECRET_ACCESS_KEY>,
        "AccessKeyId": <AWS_ACCESS_KEY_ID>
  }
}

- Create a credentials-velero file:

$ cat << EOF > ./credentials-velero
[default]
aws_access_key_id=<AWS_ACCESS_KEY_ID>
aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>
EOF

You use the credentials-velero file to create a Secret object for AWS before you install the Data Protection Application.

4.4.3.2. About backup and snapshot locations and their secrets

You specify backup and snapshot locations and their secrets in the DataProtectionApplication custom resource (CR).

Backup locations

You specify S3-compatible object storage, such as Multicloud Object Gateway or MinIO, as a backup location.

Velero backs up OpenShift Container Platform resources, Kubernetes objects, and internal images as an archive file on object storage.

Snapshot locations

If you use your cloud provider’s native snapshot API to back up persistent volumes, you must specify the cloud provider as the snapshot location.

If you use Container Storage Interface (CSI) snapshots, you do not need to specify a snapshot location because you will create a VolumeSnapshotClass CR to register the CSI driver.

If you use Restic, you do not need to specify a snapshot location because Restic backs up the file system on object storage.

Secrets

If the backup and snapshot locations use the same credentials or if you do not require a snapshot location, you create a default Secret.

If the backup and snapshot locations use different credentials, you create two secret objects:

- Custom Secret for the backup location, which you specify in the DataProtectionApplication CR.

- Default Secret for the snapshot location, which is not referenced in the DataProtectionApplication CR.

The Data Protection Application requires a default Secret. Otherwise, the installation will fail.

If you do not want to specify backup or snapshot locations during the installation, you can create a default Secret with an empty credentials-velero file.

4.4.3.2.1. Creating a default Secret

You create a default Secret if your backup and snapshot locations use the same credentials or if you do not require a snapshot location.

The default name of the Secret is cloud-credentials.

The DataProtectionApplication custom resource (CR) requires a default Secret. Otherwise, the installation will fail. If the name of the backup location Secret is not specified, the default name is used.

If you do not want to use the backup location credentials during the installation, you can create a Secret with the default name by using an empty credentials-velero file.

Prerequisites

- Your object storage and cloud storage, if any, must use the same credentials.

- You must configure object storage for Velero.

- You must create a credentials-velero file for the object storage in the appropriate format.

Procedure

- Create a Secret with the default name:

$ oc create secret generic cloud-credentials -n openshift-adp --from-file cloud=credentials-velero

The Secret is referenced in the spec.backupLocations.credential block of the DataProtectionApplication CR when you install the Data Protection Application.
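If you manage cluster objects declaratively, an equivalent Secret can be expressed as a manifest. The following sketch assumes the AWS credentials-velero format shown earlier in this section; the cloud key matches the key given to --from-file in the command above, and the angle-bracket values are placeholders that you replace with your own credentials.

```yaml
# Sketch only: declarative equivalent of the oc create secret command above.
apiVersion: v1
kind: Secret
metadata:
  name: cloud-credentials        # default Secret name
  namespace: openshift-adp
type: Opaque
stringData:
  cloud: |                       # key referenced as credential.key in the DataProtectionApplication CR
    [default]
    aws_access_key_id=<AWS_ACCESS_KEY_ID>
    aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>
```

The stringData field accepts plain text and is stored base64-encoded by the API server, so you do not need to encode the file contents yourself.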

4.4.3.2.2. Creating profiles for different credentials

If your backup and snapshot locations use different credentials, you create separate profiles in the credentials-velero file.

Then, you create a Secret object and specify the profiles in the DataProtectionApplication custom resource (CR).

Procedure

- Create a credentials-velero file with separate profiles for the backup and snapshot locations, as in the following example:

[backupStorage]
aws_access_key_id=<AWS_ACCESS_KEY_ID>
aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>

[volumeSnapshot]
aws_access_key_id=<AWS_ACCESS_KEY_ID>
aws_secret_access_key=<AWS_SECRET_ACCESS_KEY>

- Create a Secret object with the credentials-velero file:

$ oc create secret generic cloud-credentials -n openshift-adp --from-file cloud=credentials-velero

- Add the profiles to the DataProtectionApplication CR, as in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
  namespace: openshift-adp
spec:
...
  backupLocations:
    - name: default
      velero:
        provider: aws
        default: true
        objectStorage:
          bucket: <bucket_name>
          prefix: <prefix>
        config:
          region: us-east-1
          profile: "backupStorage"
        credential:
          key: cloud
          name: cloud-credentials
  snapshotLocations:
    - name: default
      velero:
        provider: aws
        config:
          region: us-west-2
          profile: "volumeSnapshot"

4.4.3.3. Configuring the Data Protection Application

You can configure the Data Protection Application by setting Velero resource allocations or enabling self-signed CA certificates.

4.4.3.3.1. Setting Velero CPU and memory resource allocations

You set the CPU and memory resource allocations for the Velero pod by editing the DataProtectionApplication custom resource (CR) manifest.

Prerequisites

- You must have the OpenShift API for Data Protection (OADP) Operator installed.

Procedure

- Edit the values in the spec.configuration.velero.podConfig.resourceAllocations block of the DataProtectionApplication CR manifest, as in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
spec:
...
  configuration:
    velero:
      podConfig:
        nodeSelector: <node selector>
        resourceAllocations:
          limits:
            cpu: "1"
            memory: 1024Mi
          requests:
            cpu: 200m
            memory: 256Mi

4.4.3.3.2. Enabling self-signed CA certificates

You must enable a self-signed CA certificate for object storage by editing the DataProtectionApplication custom resource (CR) manifest to prevent a certificate signed by unknown authority error.

Prerequisites

- You must have the OpenShift API for Data Protection (OADP) Operator installed.

Procedure

- Edit the spec.backupLocations.velero.objectStorage.caCert parameter and spec.backupLocations.velero.config parameters of the DataProtectionApplication CR manifest:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
spec:
...
  backupLocations:
    - name: default
      velero:
        provider: aws
        default: true
        objectStorage:
          bucket: <bucket>
          prefix: <prefix>
          caCert: <base64_encoded_cert_string>
        config:
          insecureSkipTLSVerify: "false"
...

4.4.3.4. Installing the Data Protection Application

You install the Data Protection Application (DPA) by creating an instance of the DataProtectionApplication API.

Prerequisites

- You must install the OADP Operator.

- You must configure object storage as a backup location.

- If you use snapshots to back up PVs, your cloud provider must support either a native snapshot API or Container Storage Interface (CSI) snapshots.

- If the backup and snapshot locations use the same credentials, you must create a Secret with the default name, cloud-credentials.

- If the backup and snapshot locations use different credentials, you must create a Secret with the default name, cloud-credentials, which contains separate profiles for the backup and snapshot location credentials.

Note: If you do not want to specify backup or snapshot locations during the installation, you can create a default Secret with an empty credentials-velero file. If there is no default Secret, the installation will fail.

Note: Velero creates a secret named velero-repo-credentials in the OADP namespace, which contains a default backup repository password. You can update the secret with your own password encoded as base64 before you run your first backup targeted to the backup repository. The value of the key to update is Data[repository-password].

After you create your DPA, the first time that you run a backup targeted to the backup repository, Velero creates a backup repository whose secret is velero-repo-credentials, which contains either the default password or the one you replaced it with. If you update the secret password after the first backup, the new password will not match the password in velero-repo-credentials, and therefore, Velero will not be able to connect with the older backups.

Procedure

- Click Operators → Installed Operators and select the OADP Operator.

- Under Provided APIs, click Create instance in the DataProtectionApplication box.

- Click YAML View and update the parameters of the DataProtectionApplication manifest:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
  namespace: openshift-adp
spec:
  configuration:
    velero:
      defaultPlugins:
        - openshift # [1]
        - aws
      resourceTimeout: 10m # [2]
    restic:
      enable: true # [3]
      podConfig:
        nodeSelector: <node_selector> # [4]
  backupLocations:
    - name: default
      velero:
        provider: aws
        default: true
        objectStorage:
          bucket: <bucket_name> # [5]
          prefix: <prefix> # [6]
        config:
          region: <region>
          profile: "default"
        credential:
          key: cloud
          name: cloud-credentials # [7]
  snapshotLocations: # [8]
    - name: default
      velero:
        provider: aws
        config:
          region: <region> # [9]
          profile: "default"

[1] The openshift plugin is mandatory.

[2] Specify how many minutes to wait for several Velero resources before timeout occurs, such as Velero CRD availability, volumeSnapshot deletion, and backup repository availability. The default is 10m.

[3] Set this value to false if you want to disable the Restic installation. Restic deploys a daemon set, which means that Restic pods run on each working node. In OADP version 1.2 and later, you can configure Restic for backups by adding spec.defaultVolumesToFsBackup: true to the Backup CR. In OADP version 1.1, add spec.defaultVolumesToRestic: true to the Backup CR.

[4] Specify on which nodes Restic is available. By default, Restic runs on all nodes.

[5] Specify a bucket as the backup storage location. If the bucket is not a dedicated bucket for Velero backups, you must specify a prefix.

[6] Specify a prefix for Velero backups, for example, velero, if the bucket is used for multiple purposes.

[7] Specify the name of the Secret object that you created. If you do not specify this value, the default name, cloud-credentials, is used. If you specify a custom name, the custom name is used for the backup location.

[8] Specify a snapshot location, unless you use CSI snapshots or Restic to back up PVs.

[9] The snapshot location must be in the same region as the PVs.

- Click Create.

Verify the installation by viewing the OADP resources:

$ oc get all -n openshift-adp

Example output

NAME                                                     READY   STATUS    RESTARTS   AGE
pod/oadp-operator-controller-manager-67d9494d47-6l8z8    2/2     Running   0          2m8s
pod/restic-9cq4q                                         1/1     Running   0          94s
pod/restic-m4lts                                         1/1     Running   0          94s
pod/restic-pv4kr                                         1/1     Running   0          95s
pod/velero-588db7f655-n842v                              1/1     Running   0          95s

NAME                                                       TYPE        CLUSTER-IP      EXTERNAL-IP   PORT(S)    AGE
service/oadp-operator-controller-manager-metrics-service   ClusterIP   172.30.70.140   <none>        8443/TCP   2m8s

NAME                    DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
daemonset.apps/restic   3         3         3       3            3           <none>          96s

NAME                                               READY   UP-TO-DATE   AVAILABLE   AGE
deployment.apps/oadp-operator-controller-manager   1/1     1            1           2m9s
deployment.apps/velero                             1/1     1            1           96s

NAME                                                          DESIRED   CURRENT   READY   AGE
replicaset.apps/oadp-operator-controller-manager-67d9494d47   1         1         1       2m9s
replicaset.apps/velero-588db7f655                             1         1         1       96s
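The verification step relies on reading the STATUS column by eye. As a minimal sketch, the same check can be scripted. The helper name not_running_pods is hypothetical, and the function assumes the default column layout of oc get pods (NAME, READY, STATUS, RESTARTS, AGE).

```shell
# Hypothetical helper: read `oc get pods` output on stdin and print the name
# of every pod whose STATUS column (third field) is not "Running".
# The header row (first line) is skipped.
not_running_pods() {
  awk 'NR > 1 && NF >= 3 && $3 != "Running" { print $1 }'
}
```

For example, oc get pods -n openshift-adp | not_running_pods should print nothing when every OADP pod is running. Pods in any other state, including Completed, are listed.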

4.4.3.4.1. Enabling CSI in the DataProtectionApplication CR

You enable the Container Storage Interface (CSI) in the DataProtectionApplication custom resource (CR) in order to back up persistent volumes with CSI snapshots.

Prerequisites

- The cloud provider must support CSI snapshots.

Procedure

Edit the DataProtectionApplication CR, as in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
...
spec:
  configuration:
    velero:
      defaultPlugins:
        - openshift
        - csi # 1

- 1: Add the csi default plugin.
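Backing up with CSI snapshots also involves a VolumeSnapshotClass CR that registers the CSI driver. The following sketch writes such a manifest to a local file. The file name, the class name example-snapclass, and the <csi_driver> placeholder are assumptions for illustration, and the label shown is the one the Velero CSI plugin is generally documented to look for. Check the values against your storage provider before applying the file.

```shell
# Write an illustrative VolumeSnapshotClass manifest to a local file.
# The quoted 'EOF' stops the shell from expanding anything in the body.
cat << 'EOF' > ./volumesnapshotclass.yaml
apiVersion: snapshot.storage.k8s.io/v1
kind: VolumeSnapshotClass
metadata:
  name: example-snapclass            # hypothetical name
  labels:
    velero.io/csi-volumesnapshot-class: "true"
driver: <csi_driver>                 # placeholder: your CSI driver name
deletionPolicy: Retain               # keep snapshot content if the VolumeSnapshot is deleted
EOF
```

After replacing the placeholder, you could create the object with oc apply -f ./volumesnapshotclass.yaml.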

4.4.4. Configuring the OpenShift API for Data Protection with Microsoft Azure

You install the OpenShift API for Data Protection (OADP) with Microsoft Azure by installing the OADP Operator. The Operator installs Velero 1.11.

Starting from OADP 1.0.4, all OADP 1.0.z versions can only be used as a dependency of the MTC Operator and are not available as a standalone Operator.

You configure Azure for Velero, create a default Secret, and then install the Data Protection Application. For more details, see Installing the OADP Operator.

To install the OADP Operator in a restricted network environment, you must first disable the default OperatorHub sources and mirror the Operator catalog. See Using Operator Lifecycle Manager on restricted networks for details.

4.4.4.1. Configuring Microsoft Azure

You configure Microsoft Azure for the OpenShift API for Data Protection (OADP).

Prerequisites

- You must have the Azure CLI installed.

Procedure

Log in to Azure:

$ az login

Set the AZURE_RESOURCE_GROUP variable:

$ AZURE_RESOURCE_GROUP=Velero_Backups

Create an Azure resource group:

$ az group create -n $AZURE_RESOURCE_GROUP --location CentralUS # 1

- 1: Specify your location.

Set the AZURE_STORAGE_ACCOUNT_ID variable:

$ AZURE_STORAGE_ACCOUNT_ID="velero$(uuidgen | cut -d '-' -f5 | tr '[A-Z]' '[a-z]')"

Create an Azure storage account:

$ az storage account create \
    --name $AZURE_STORAGE_ACCOUNT_ID \
    --resource-group $AZURE_RESOURCE_GROUP \
    --sku Standard_GRS \
    --encryption-services blob \
    --https-only true \
    --kind BlobStorage \
    --access-tier Hot

Set the BLOB_CONTAINER variable:

$ BLOB_CONTAINER=velero

Create an Azure Blob storage container:

$ az storage container create \
    -n $BLOB_CONTAINER \
    --public-access off \
    --account-name $AZURE_STORAGE_ACCOUNT_ID

Obtain the storage account access key:

$ AZURE_STORAGE_ACCOUNT_ACCESS_KEY=`az storage account keys list \
    --account-name $AZURE_STORAGE_ACCOUNT_ID \
    --query "[?keyName == 'key1'].value" -o tsv`

Create a custom role that has the minimum required permissions:

$ AZURE_ROLE=Velero
$ az role definition create --role-definition '{
   "Name": "'$AZURE_ROLE'",
   "Description": "Velero related permissions to perform backups, restores and deletions",
   "Actions": [
       "Microsoft.Compute/disks/read",
       "Microsoft.Compute/disks/write",
       "Microsoft.Compute/disks/endGetAccess/action",
       "Microsoft.Compute/disks/beginGetAccess/action",
       "Microsoft.Compute/snapshots/read",
       "Microsoft.Compute/snapshots/write",
       "Microsoft.Compute/snapshots/delete",
       "Microsoft.Storage/storageAccounts/listkeys/action",
       "Microsoft.Storage/storageAccounts/regeneratekey/action"
   ],
   "AssignableScopes": ["/subscriptions/'$AZURE_SUBSCRIPTION_ID'"]
   }'

Create a credentials-velero file:

$ cat << EOF > ./credentials-velero
AZURE_SUBSCRIPTION_ID=${AZURE_SUBSCRIPTION_ID}
AZURE_TENANT_ID=${AZURE_TENANT_ID}
AZURE_CLIENT_ID=${AZURE_CLIENT_ID}
AZURE_CLIENT_SECRET=${AZURE_CLIENT_SECRET}
AZURE_RESOURCE_GROUP=${AZURE_RESOURCE_GROUP}
AZURE_STORAGE_ACCOUNT_ACCESS_KEY=${AZURE_STORAGE_ACCOUNT_ACCESS_KEY}
AZURE_CLOUD_NAME=AzurePublicCloud
EOF

The AZURE_STORAGE_ACCOUNT_ACCESS_KEY entry is mandatory. You cannot back up internal images if the credentials-velero file contains only the service principal credentials.

You use the credentials-velero file to create a Secret object for Azure before you install the Data Protection Application.
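The command that writes the credentials-velero file expands several variables, such as AZURE_SUBSCRIPTION_ID, AZURE_TENANT_ID, AZURE_CLIENT_ID, and AZURE_CLIENT_SECRET, that this procedure does not show being set. In a default shell, an unset variable expands to an empty string, so the file would be written with blank values and no error. The following is a minimal sketch of a check to run first. The helper name missing_vars is hypothetical.

```shell
# Hypothetical helper: print the names of the given variables that are unset
# or empty, separated by spaces. Pass only trusted variable names, because
# the function uses eval to look each one up.
missing_vars() {
  mv_list=""
  for mv_name in "$@"; do
    eval "mv_value=\${$mv_name:-}"
    [ -n "$mv_value" ] || mv_list="$mv_list $mv_name"
  done
  printf '%s\n' "${mv_list# }"
}

# Example: report anything still missing before writing credentials-velero.
unset_names=$(missing_vars AZURE_SUBSCRIPTION_ID AZURE_TENANT_ID \
  AZURE_CLIENT_ID AZURE_CLIENT_SECRET AZURE_RESOURCE_GROUP \
  AZURE_STORAGE_ACCOUNT_ACCESS_KEY)
if [ -n "$unset_names" ]; then
  echo "Set these variables first: $unset_names" >&2
fi
```

The check only reports names. It does not obtain the values, which come from your Azure subscription and service principal.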

4.4.4.2. About backup and snapshot locations and their secrets

You specify backup and snapshot locations and their secrets in the DataProtectionApplication custom resource (CR).

Backup locations

You specify S3-compatible object storage, such as Multicloud Object Gateway or MinIO, as a backup location.

Velero backs up OpenShift Container Platform resources, Kubernetes objects, and internal images as an archive file on object storage.

Snapshot locations

If you use your cloud provider’s native snapshot API to back up persistent volumes, you must specify the cloud provider as the snapshot location.

If you use Container Storage Interface (CSI) snapshots, you do not need to specify a snapshot location because you will create a VolumeSnapshotClass CR to register the CSI driver.

If you use Restic, you do not need to specify a snapshot location because Restic backs up the file system on object storage.
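With Restic, the choice is made per backup rather than in a snapshot location. As a sketch, a Backup CR that requests file system backup for all pod volumes might look like the manifest written below. The file name, the backup name example-backup, and the <application_namespace> placeholder are assumptions for illustration.

```shell
# Write an illustrative Backup manifest to a local file.
# The quoted 'EOF' stops the shell from expanding anything in the body.
cat << 'EOF' > ./backup-fs.yaml
apiVersion: velero.io/v1
kind: Backup
metadata:
  name: example-backup               # hypothetical name
  namespace: openshift-adp
spec:
  includedNamespaces:
    - <application_namespace>        # placeholder: namespace to back up
  defaultVolumesToFsBackup: true     # OADP 1.2 and later; OADP 1.1 uses defaultVolumesToRestic
EOF
```

After replacing the placeholder, you could create the backup with oc apply -f ./backup-fs.yaml.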

Secrets

If the backup and snapshot locations use the same credentials or if you do not require a snapshot location, you create a default Secret.

If the backup and snapshot locations use different credentials, you create two secret objects:

- Custom Secret for the backup location, which you specify in the DataProtectionApplication CR.

- Default Secret for the snapshot location, which is not referenced in the DataProtectionApplication CR.

The Data Protection Application requires a default Secret. Otherwise, the installation will fail.

If you do not want to specify backup or snapshot locations during the installation, you can create a default Secret with an empty credentials-velero file.

4.4.4.2.1. Creating a default Secret

You create a default Secret if your backup and snapshot locations use the same credentials or if you do not require a snapshot location.

The default name of the Secret is cloud-credentials-azure.

The DataProtectionApplication custom resource (CR) requires a default Secret. Otherwise, the installation will fail. If the name of the backup location Secret is not specified, the default name is used.

If you do not want to use the backup location credentials during the installation, you can create a Secret with the default name by using an empty credentials-velero file.

Prerequisites

- Your object storage and cloud storage, if any, must use the same credentials.

- You must configure object storage for Velero.

- You must create a credentials-velero file for the object storage in the appropriate format.

Procedure

Create a Secret with the default name:

$ oc create secret generic cloud-credentials-azure -n openshift-adp --from-file cloud=credentials-velero

The Secret is referenced in the spec.backupLocations.credential block of the DataProtectionApplication CR when you install the Data Protection Application.

4.4.4.2.2. Creating secrets for different credentials

If your backup and snapshot locations use different credentials, you must create two Secret objects:

- Backup location Secret with a custom name. The custom name is specified in the spec.backupLocations block of the DataProtectionApplication custom resource (CR).

- Snapshot location Secret with the default name, cloud-credentials-azure. This Secret is not specified in the DataProtectionApplication CR.

Procedure

- Create a credentials-velero file for the snapshot location in the appropriate format for your cloud provider.

Create a Secret for the snapshot location with the default name:

$ oc create secret generic cloud-credentials-azure -n openshift-adp --from-file cloud=credentials-velero

- Create a credentials-velero file for the backup location in the appropriate format for your object storage.

Create a Secret for the backup location with a custom name:

$ oc create secret generic <custom_secret> -n openshift-adp --from-file cloud=credentials-velero

Add the Secret with the custom name to the DataProtectionApplication CR, as in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
  namespace: openshift-adp
spec:
...
  backupLocations:
    - velero:
        config:
          resourceGroup: <azure_resource_group>
          storageAccount: <azure_storage_account_id>
          subscriptionId: <azure_subscription_id>
          storageAccountKeyEnvVar: AZURE_STORAGE_ACCOUNT_ACCESS_KEY
        credential:
          key: cloud
          name: <custom_secret> # 1
        provider: azure
        default: true
        objectStorage:
          bucket: <bucket_name>
          prefix: <prefix>
  snapshotLocations:
    - velero:
        config:
          resourceGroup: <azure_resource_group>
          subscriptionId: <azure_subscription_id>
          incremental: "true"
        name: default
        provider: azure

- 1: Backup location Secret with custom name.

4.4.4.3. Configuring the Data Protection Application

You can configure the Data Protection Application by setting Velero resource allocations or enabling self-signed CA certificates.

4.4.4.3.1. Setting Velero CPU and memory resource allocations

You set the CPU and memory resource allocations for the Velero pod by editing the DataProtectionApplication custom resource (CR) manifest.

Prerequisites

- You must have the OpenShift API for Data Protection (OADP) Operator installed.

Procedure

Edit the values in the spec.configuration.velero.podConfig.resourceAllocations block of the DataProtectionApplication CR manifest, as in the following example:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
spec:
...
  configuration:
    velero:
      podConfig:
        nodeSelector: <node selector> # 1
        resourceAllocations: # 2
          limits:
            cpu: "1"
            memory: 1024Mi
          requests:
            cpu: 200m
            memory: 256Mi

4.4.4.3.2. Enabling self-signed CA certificates

You must enable a self-signed CA certificate for object storage by editing the DataProtectionApplication custom resource (CR) manifest to prevent a certificate signed by unknown authority error.

Prerequisites

- You must have the OpenShift API for Data Protection (OADP) Operator installed.

Procedure

Edit the spec.backupLocations.velero.objectStorage.caCert parameter and spec.backupLocations.velero.config parameters of the DataProtectionApplication CR manifest:

apiVersion: oadp.openshift.io/v1alpha1
kind: DataProtectionApplication
metadata:
  name: <dpa_sample>
spec:
...
  backupLocations:
    - name: default
      velero:
        provider: aws
        default: true
        objectStorage:
          bucket: <bucket>
          prefix: <prefix>
          caCert: <base64_encoded_cert_string> # 1
        config:
          insecureSkipTLSVerify: "false" # 2
...
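The caCert value is a Base64-encoded string on a single line. As a minimal sketch, the following helper produces that string from a certificate file. The helper name encode_ca_cert and the ca.crt path used in the note below are assumptions for illustration.

```shell
# Hypothetical helper: print a file's contents as a single-line Base64 string.
# `tr -d '\n'` removes the line wrapping that some base64 implementations add.
encode_ca_cert() {
  base64 < "$1" | tr -d '\n'
}
```

For example, the output of encode_ca_cert ./ca.crt could be pasted in place of <base64_encoded_cert_string>.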

4.4.4.4. Installing the Data Protection Application

You install the Data Protection Application (DPA) by creating an instance of the DataProtectionApplication API.

Prerequisites

- You must install the OADP Operator.

- You must configure object storage as a backup location.

- If you use snapshots to back up PVs, your cloud provider must support either a native snapshot API or Container Storage Interface (CSI) snapshots.

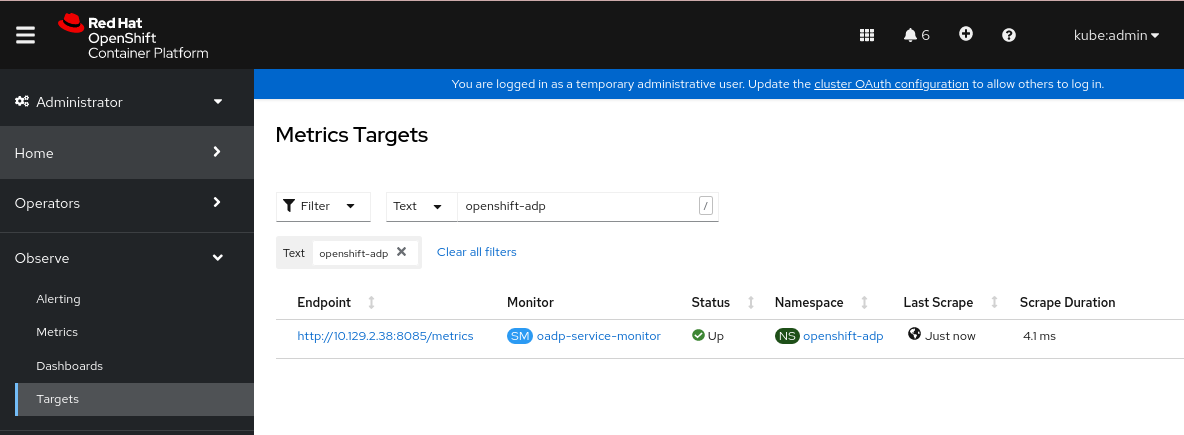

-