Virtualization Host Configuration and Guest Installation Guide

Installing and configuring your virtual environment

Yehuda Zimmerman

Tahlia Richardson

Dayle Parker

Laura Bailey

Scott Radvan

Abstract

Chapter 1. Introduction

1.1. What is in This Guide?

Note

Chapter 2. System Requirements

Minimum system requirements

- 6 GB free disk space.

- 2 GB of RAM.

Recommended system requirements

- One processor core or hyper-thread for the maximum number of virtualized CPUs in a guest virtual machine and one for the host.

- 2 GB of RAM plus additional RAM for virtual machines.

- 6 GB disk space for the host, plus the required disk space for each virtual machine.

Most guest operating systems will require at least 6 GB of disk space, but the additional storage space required for each guest depends on its image format.

For guest virtual machines using raw images, the guest's total required space (total for raw format) is equal to or greater than the sum of the space required by the guest's raw image files (images), the 6 GB space required by the host operating system (host), and the swap space that guest will require (swap).

Equation 2.1. Calculating required space for guest virtual machines using raw images

total for raw format = images + host + swap

For qcow images, you must also calculate the expected maximum storage requirements of the guest (total for qcow format), as qcow and qcow2 images grow as required. To allow for this expansion, first multiply the expected maximum storage requirements of the guest (expected maximum guest storage) by 1.01, and add to this the space required by the host (host), and the necessary swap space (swap).

Equation 2.2. Calculating required space for guest virtual machines using qcow images

total for qcow format = (expected maximum guest storage * 1.01) + host + swap

Using swap space can provide additional memory beyond the available physical memory. The swap partition is used for swapping underused memory to the hard drive to speed up memory performance. The default size of the swap partition is calculated from the physical RAM of the host.
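Equations 2.1 and 2.2 translate directly into a short calculation. The following Python sketch is illustrative only: the function names and the sample figures (20 GB of guest images, 4 GB of swap) are assumptions, while the 6 GB host figure and the 1.01 growth factor come from the text above.

```python
HOST_GB = 6.0  # space required by the host operating system, as stated above


def total_for_raw(images_gb, swap_gb, host_gb=HOST_GB):
    """Equation 2.1: total for raw format = images + host + swap."""
    return images_gb + host_gb + swap_gb


def total_for_qcow(expected_max_guest_gb, swap_gb, host_gb=HOST_GB):
    """Equation 2.2: (expected maximum guest storage * 1.01) + host + swap."""
    return expected_max_guest_gb * 1.01 + host_gb + swap_gb


# Hypothetical guest: 20 GB of image data and 4 GB of swap.
raw_total = total_for_raw(20, 4)
qcow_total = total_for_qcow(20, 4)
print("raw:  %.2f GB" % raw_total)
print("qcow: %.2f GB" % qcow_total)
```

All values are in gigabytes; the results are lower bounds, because the text describes the raw total as "equal to or greater than" the sum.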

The KVM hypervisor requires:

- an Intel processor with the Intel VT-x and Intel 64 extensions for x86-based systems, or

- an AMD processor with the AMD-V and the AMD64 extensions.
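One way to check these processor requirements on a Linux host is to look at the CPU flags reported in /proc/cpuinfo. The sketch below assumes the usual Linux flag names (vmx for Intel VT-x, svm for AMD-V, lm for the 64-bit extensions); the function name and the sample text are hypothetical.

```python
def virtualization_support(cpuinfo_text):
    """Report which KVM-relevant CPU flags appear in /proc/cpuinfo-style text."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return {
        "intel_vt_x": "vmx" in flags,  # Intel VT-x
        "amd_v": "svm" in flags,       # AMD-V
        "64_bit": "lm" in flags,       # Intel 64 / AMD64 ("long mode")
    }


# Hypothetical excerpt; on a real host, pass open("/proc/cpuinfo").read().
SAMPLE = "processor\t: 0\nflags\t\t: fpu vme lm vmx\n"
print(virtualization_support(SAMPLE))
```

A flag can be present in the processor but disabled in the firmware, so a positive result here is necessary rather than sufficient.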

The guest virtual machine storage methods are:

- files on local storage,

- physical disk partitions,

- locally connected physical LUNs,

- LVM partitions,

- NFS shared file systems,

- iSCSI,

- GFS2 clustered file systems,

- Fibre Channel-based LUNs, and

- Fibre Channel over Ethernet (FCoE).

Chapter 3. KVM Guest Virtual Machine Compatibility

3.1. Red Hat Enterprise Linux 6 Support Limits

- For hypervisors: http://www.redhat.com/resourcelibrary/articles/virtualization-limits-rhel-hypervisors

Note

Red Hat Enterprise Linux 6.5 now supports 4TiB of memory per KVM guest.

3.2. Supported CPU Models

qemu32 and qemu64 are basic CPU models but there are other models (with additional features) available.

<!-- This is only a partial file, only containing the CPU models. The XML file has more information (including supported features per model) which you can see when you open the file yourself -->

<cpus>

<arch name='x86'>

...

<!-- Intel-based QEMU generic CPU models -->

<model name='pentium'>

<model name='486'/>

</model>

<model name='pentium2'>

<model name='pentium'/>

</model>

<model name='pentium3'>

<model name='pentium2'/>

</model>

<model name='pentiumpro'>

</model>

<model name='coreduo'>

<model name='pentiumpro'/>

<vendor name='Intel'/>

</model>

<model name='n270'>

<model name='coreduo'/>

</model>

<model name='core2duo'>

<model name='n270'/>

</model>

<!-- Generic QEMU CPU models -->

<model name='qemu32'>

<model name='pentiumpro'/>

</model>

<model name='kvm32'>

<model name='qemu32'/>

</model>

<model name='cpu64-rhel5'>

<model name='kvm32'/>

</model>

<model name='cpu64-rhel6'>

<model name='cpu64-rhel5'/>

</model>

<model name='kvm64'>

<model name='cpu64-rhel5'/>

</model>

<model name='qemu64'>

<model name='kvm64'/>

</model>

<!-- Intel CPU models -->

<model name='Conroe'>

<model name='pentiumpro'/>

<vendor name='Intel'/>

</model>

<model name='Penryn'>

<model name='Conroe'/>

</model>

<model name='Nehalem'>

<model name='Penryn'/>

</model>

<model name='Westmere'>

<model name='Nehalem'/>

<feature name='aes'/>

</model>

<model name='SandyBridge'>

<model name='Westmere'/>

</model>

<model name='Haswell'>

<model name='SandyBridge'/>

</model>

<!-- AMD CPUs -->

<model name='athlon'>

<model name='pentiumpro'/>

<vendor name='AMD'/>

</model>

<model name='phenom'>

<model name='cpu64-rhel5'/>

<vendor name='AMD'/>

</model>

<model name='Opteron_G1'>

<model name='cpu64-rhel5'/>

<vendor name='AMD'/>

</model>

<model name='Opteron_G2'>

<model name='Opteron_G1'/>

</model>

<model name='Opteron_G3'>

<model name='Opteron_G2'/>

</model>

<model name='Opteron_G4'>

<model name='Opteron_G2'/>

</model>

<model name='Opteron_G5'>

<model name='Opteron_G4'/>

</model>

</arch>

</cpus>

Note

qemu-kvm -cpu ? command.
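Each model in the listing above names the model it is based on as a nested element, so the full ancestry of a CPU model can be followed programmatically. The Python sketch below is a minimal illustration using a shortened copy of the Intel entries; the function names are hypothetical, and it does not read the real file from a host.

```python
import xml.etree.ElementTree as ET

# Shortened copy of the Intel models from the listing above.
CPU_MAP_SAMPLE = """
<cpus>
  <arch name='x86'>
    <model name='pentiumpro'></model>
    <model name='Conroe'><model name='pentiumpro'/><vendor name='Intel'/></model>
    <model name='Penryn'><model name='Conroe'/></model>
    <model name='Nehalem'><model name='Penryn'/></model>
    <model name='Westmere'><model name='Nehalem'/><feature name='aes'/></model>
  </arch>
</cpus>
"""


def parent_map(xml_text):
    """Map each top-level model name to the model it is based on (or None)."""
    arch = ET.fromstring(xml_text).find("arch")
    parents = {}
    for model in arch.findall("model"):  # direct children only
        base = model.find("model")       # nested element names the base model
        parents[model.get("name")] = base.get("name") if base is not None else None
    return parents


def ancestry(name, parents):
    """Return the chain of models from `name` back to its oldest ancestor."""
    chain = []
    while name is not None:
        chain.append(name)
        name = parents.get(name)
    return chain


print(" -> ".join(ancestry("Westmere", parent_map(CPU_MAP_SAMPLE))))
```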

Chapter 4. Virtualization Restrictions

4.1. KVM Restrictions

- Maximum vCPUs per guest

- The maximum number of virtual CPUs supported per guest varies depending on which minor version of Red Hat Enterprise Linux 6 you are using as a host machine. The release of 6.0 introduced a maximum of 64, while 6.3 introduced a maximum of 160. As of version 6.7, a maximum of 240 virtual CPUs per guest is supported.

- Constant TSC bit

- Systems without a Constant Time Stamp Counter require additional configuration. Refer to Chapter 14, KVM Guest Timing Management for details on determining whether you have a Constant Time Stamp Counter and configuration steps for fixing any related issues.

- Virtualized SCSI devices

- SCSI emulation is not supported with KVM in Red Hat Enterprise Linux.

- Virtualized IDE devices

- KVM is limited to a maximum of four virtualized (emulated) IDE devices per guest virtual machine.

- Migration restrictions

- Device assignment refers to physical devices that have been exposed to a virtual machine, for the exclusive use of that virtual machine. Because device assignment uses hardware on the specific host where the virtual machine runs, migration and save/restore are not supported when device assignment is in use. If the guest operating system supports hot plugging, assigned devices can be removed prior to the migration or save/restore operation to enable this feature.

Live migration is only possible between hosts with the same CPU type (that is, Intel to Intel or AMD to AMD only).

For live migration, both hosts must have the same value set for the No eXecution (NX) bit, either on or off.

For migration to work, cache=none must be specified for all block devices opened in write mode.

Warning

Failing to include the cache=none option can result in disk corruption.

- Storage restrictions

- There are risks associated with giving guest virtual machines write access to entire disks or block devices (such as /dev/sdb). If a guest virtual machine has access to an entire block device, it can share any volume label or partition table with the host machine. If bugs exist in the host system's partition recognition code, this can create a security risk. Avoid this risk by configuring the host machine to ignore devices assigned to a guest virtual machine.

Warning

Failing to adhere to storage restrictions can result in risks to security.

- Core dumping restrictions

- Core dumping uses the same infrastructure as migration and requires more device knowledge and control than device assignment can provide. Therefore, core dumping is not supported when device assignment is in use.
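The cache=none migration requirement listed above can be checked before a migration is attempted. The following Python sketch assumes a libvirt-style domain XML in which each disk carries a driver element with a cache attribute, a target element with a dev attribute, and an optional readonly element; the sample guest definition and the function name are hypothetical.

```python
import xml.etree.ElementTree as ET

# Hypothetical guest definition with one safe and one unsafe writable disk.
DOMAIN_SAMPLE = """
<domain type='kvm'>
  <devices>
    <disk type='block' device='disk'>
      <driver name='qemu' type='raw' cache='none'/>
      <target dev='vda' bus='virtio'/>
    </disk>
    <disk type='file' device='disk'>
      <driver name='qemu' type='qcow2'/>
      <target dev='vdb' bus='virtio'/>
    </disk>
    <disk type='file' device='cdrom'>
      <target dev='hdc' bus='ide'/>
      <readonly/>
    </disk>
  </devices>
</domain>
"""


def disks_missing_cache_none(domain_xml):
    """Return target names of writable disks that do not set cache='none'."""
    offenders = []
    for disk in ET.fromstring(domain_xml).iter("disk"):
        if disk.find("readonly") is not None:
            continue  # the restriction covers devices opened in write mode
        driver = disk.find("driver")
        cache = driver.get("cache") if driver is not None else None
        if cache != "none":
            target = disk.find("target")
            offenders.append(target.get("dev") if target is not None else "(unknown)")
    return offenders


print(disks_missing_cache_none(DOMAIN_SAMPLE))
```

An empty result means every writable disk in the definition sets cache=none; any listed device should be corrected before migrating.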

4.2. Application Restrictions

- kdump server

- netdump server

4.3. Other Restrictions

Chapter 5. Installing the Virtualization Packages

kvm kernel module.

5.1. Configuring a Virtualization Host Installation

Note

Procedure 5.1. Installing the virtualization package group

Launch the Red Hat Enterprise Linux 6 installation program

Start an interactive Red Hat Enterprise Linux 6 installation from the Red Hat Enterprise Linux Installation CD-ROM, DVD or PXE.

Continue installation up to package selection

Complete the other steps up to the package selection step.

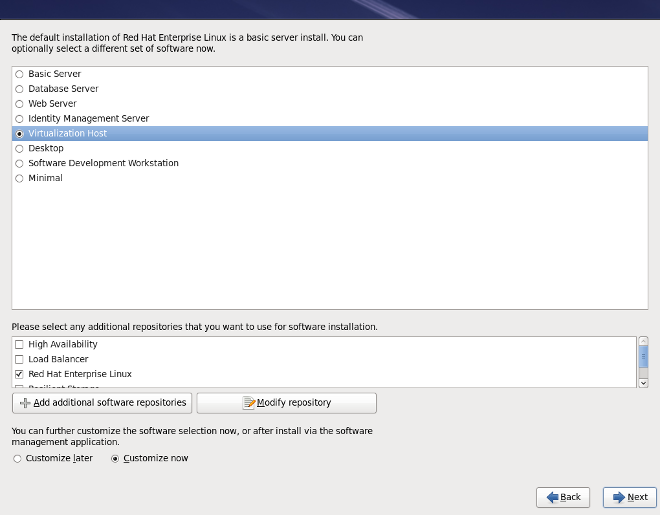

Figure 5.1. The Red Hat Enterprise Linux package selection screen

Select the Virtualization Host server role to install a platform for guest virtual machines. Alternatively, ensure that the Customize Now radio button is selected before proceeding, to specify individual packages.

Select the Virtualization package group

This selects the qemu-kvm emulator, virt-manager, libvirt and virt-viewer for installation.

Figure 5.2. The Red Hat Enterprise Linux package selection screen

Note

If you wish to create virtual machines in a graphical user interface (virt-manager) later, you should also select the General Purpose Desktop package group.

Customize the packages (if required)

Customize the Virtualization group if you require other virtualization packages.

Figure 5.3. The Red Hat Enterprise Linux package selection screen

Click on the Close button, then the Next button to continue the installation.

Important

Kickstart files allow for large, automated installations without a user manually installing each individual host system. This section describes how to create and use a Kickstart file to install Red Hat Enterprise Linux with the Virtualization packages.

In the %packages section of your Kickstart file, append the following package groups:

@virtualization
@virtualization-client
@virtualization-platform
@virtualization-tools
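When many Kickstart files need the same change, the package groups can be added with a small script. The Python sketch below is one possible approach, not a Red Hat tool: the function name is hypothetical, and it places the groups directly after the %packages line, skipping any group that is already listed.

```python
VIRT_GROUPS = [
    "virtualization",
    "virtualization-client",
    "virtualization-platform",
    "virtualization-tools",
]


def append_groups(kickstart_text, groups):
    """Return the Kickstart text with '@group' lines added to %packages."""
    lines = kickstart_text.splitlines()
    present = {line.strip() for line in lines}
    out = []
    inserted = False
    for line in lines:
        out.append(line)
        if not inserted and line.strip().startswith("%packages"):
            # Add only the groups that the file does not already list.
            out.extend("@" + g for g in groups if "@" + g not in present)
            inserted = True
    if not inserted:
        raise ValueError("kickstart text has no %packages section")
    return "\n".join(out) + "\n"
```

Calling append_groups(text, VIRT_GROUPS) on the contents of an existing Kickstart file returns the updated text, which can then be written back to disk.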

5.2. Installing Virtualization Packages on an Existing Red Hat Enterprise Linux System

subscription-manager register command and follow the prompts.

Note

yum

To use virtualization on Red Hat Enterprise Linux you require at least the qemu-kvm and qemu-img packages. These packages provide the user-level KVM emulator and disk image manager on the host Red Hat Enterprise Linux system.

To install the qemu-kvm and qemu-img packages, run the following command:

# yum install qemu-kvm qemu-img

Recommended virtualization packages

- python-virtinst

- Provides the virt-install command for creating virtual machines.

- libvirt

- The libvirt package provides the server and host side libraries for interacting with hypervisors and host systems. The libvirt package provides the libvirtd daemon that handles the library calls, manages virtual machines and controls the hypervisor.

- libvirt-python

- The libvirt-python package contains a module that permits applications written in the Python programming language to use the interface supplied by the libvirt API.

- virt-manager

- virt-manager, also known as Virtual Machine Manager, provides a graphical tool for administering virtual machines. It uses the libvirt-client library as the management API.

- libvirt-client

- The libvirt-client package provides the client-side APIs and libraries for accessing libvirt servers. The libvirt-client package includes the virsh command line tool to manage and control virtual machines and hypervisors from the command line or a special virtualization shell.

# yum install virt-manager libvirt libvirt-python python-virtinst libvirt-client

The virtualization packages can also be installed from package groups. The following table describes the virtualization package groups and what they provide.

Note

The qemu-img package is installed as a dependency of the Virtualization package group if it is not already installed on the system. It can also be installed manually with the yum install qemu-img command as described previously.

Table 5.1. Virtualization package groups

| Package Group | Description | Mandatory Packages | Optional Packages |

|---|---|---|---|

| Virtualization | Provides an environment for hosting virtual machines | qemu-kvm | qemu-guest-agent, qemu-kvm-tools |

| Virtualization Client | Clients for installing and managing virtualization instances | python-virtinst, virt-manager, virt-viewer | virt-top |

| Virtualization Platform | Provides an interface for accessing and controlling virtual machines and containers | libvirt, libvirt-client, virt-who, virt-what | fence-virtd-libvirt, fence-virtd-multicast, fence-virtd-serial, libvirt-cim, libvirt-java, libvirt-qmf, libvirt-snmp, perl-Sys-Virt |

| Virtualization Tools | Tools for offline virtual image management | libguestfs | libguestfs-java, libguestfs-tools, virt-v2v |

To install a package group, run the yum groupinstall <groupname> command. For instance, to install the Virtualization Tools package group, run the yum groupinstall "Virtualization Tools" command.
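Table 5.1 can also be used in reverse, to find which group to install for a given mandatory package. The Python sketch below encodes only the mandatory packages from the table; the function names are hypothetical, and the command is printed rather than executed.

```python
import shlex

# Mandatory packages per group, copied from Table 5.1.
PACKAGE_GROUPS = {
    "Virtualization": ["qemu-kvm"],
    "Virtualization Client": ["python-virtinst", "virt-manager", "virt-viewer"],
    "Virtualization Platform": ["libvirt", "libvirt-client", "virt-who", "virt-what"],
    "Virtualization Tools": ["libguestfs"],
}


def group_for_package(package):
    """Return the group whose mandatory packages include `package`, or None."""
    for group, packages in PACKAGE_GROUPS.items():
        if package in packages:
            return group
    return None


def groupinstall_command(group):
    """Build a shell-safe 'yum groupinstall' command line for `group`."""
    return " ".join(shlex.quote(part) for part in ["yum", "groupinstall", group])


print(groupinstall_command(group_for_package("virt-viewer")))
```

Group names that contain spaces are quoted automatically, which matches the quoting used in the example command above.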

Chapter 6. Guest Virtual Machine Installation Overview

virt-install. Both methods are covered by this chapter.

6.1. Guest Virtual Machine Prerequisites and Considerations

- Performance

- Guest virtual machines should be deployed and configured based on their intended tasks. Some guest systems (for instance, guests running a database server) may require special performance considerations. Guests may require more assigned CPUs or memory based on their role and projected system load.

- Input/Output requirements and types of Input/Output

- Some guest virtual machines may have a particularly high I/O requirement or may require further considerations or projections based on the type of I/O (for instance, typical disk block size access, or the number of clients).

- Storage

- Some guest virtual machines may require higher priority access to storage or faster disk types, or may require exclusive access to areas of storage. The amount of storage used by guests should also be regularly monitored and taken into account when deploying and maintaining storage.

- Networking and network infrastructure

- Depending upon your environment, some guest virtual machines could require faster network links than other guests. Bandwidth or latency are often factors when deploying and maintaining guests, especially as requirements or load changes.

- Request requirements

- SCSI requests can only be issued to guest virtual machines on virtio drives if the virtio drives are backed by whole disks, and the disk device parameter is set to lun, as shown in the following example:

<devices>

<emulator>/usr/libexec/qemu-kvm</emulator>

<disk type='block' device='lun'>

6.2. Creating Guests with virt-install

You can use the virt-install command to create guest virtual machines from the command line. virt-install is used either interactively or as part of a script to automate the creation of virtual machines. Using virt-install with Kickstart files allows for unattended installation of virtual machines.

The virt-install tool provides a number of options that can be passed on the command line. To see a complete list of options run the following command:

# virt-install --help

virt-install commands to complete successfully. The virt-install man page also documents each command option and important variables.

qemu-img is a related command which may be used before virt-install to configure storage options.

--graphics option which allows graphical installation of a virtual machine.

Example 6.1. Using virt-install to install a Red Hat Enterprise Linux 5 guest virtual machine

virt-install \
--name=guest1-rhel5-64 \
--file=/var/lib/libvirt/images/guest1-rhel5-64.dsk \
--file-size=8 \
--nonsparse --graphics spice \
--vcpus=2 --ram=2048 \
--location=http://example1.com/installation_tree/RHEL5.6-Server-x86_64/os \
--network bridge=br0 \
--os-type=linux \
--os-variant=rhel5.4

os-type for your operating system when running this command.

man virt-install for more examples.

Note

virt-install, the --os-type=windows option is recommended. This option prevents the CD-ROM from disconnecting when rebooting during the installation procedure. The --os-variant option further optimizes the configuration for a specific guest operating system.
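When virt-install is driven from a script, building the argument list in code avoids quoting mistakes. The Python sketch below reproduces the options used in Example 6.1 and nothing more; the function name and parameter names are hypothetical, and the command is only assembled here, not run.

```python
def virt_install_args(name, disk_path, disk_size_gb, vcpus, ram_mb,
                      location, bridge, os_type, os_variant):
    """Assemble the virt-install argument list used in Example 6.1."""
    return [
        "virt-install",
        "--name=" + name,
        "--file=" + disk_path,
        "--file-size=" + str(disk_size_gb),
        "--nonsparse",
        "--graphics", "spice",
        "--vcpus=" + str(vcpus),
        "--ram=" + str(ram_mb),
        "--location=" + location,
        "--network", "bridge=" + bridge,
        "--os-type=" + os_type,
        "--os-variant=" + os_variant,
    ]


# Values taken from Example 6.1.
EXAMPLE_ARGS = virt_install_args(
    name="guest1-rhel5-64",
    disk_path="/var/lib/libvirt/images/guest1-rhel5-64.dsk",
    disk_size_gb=8,
    vcpus=2,
    ram_mb=2048,
    location="http://example1.com/installation_tree/RHEL5.6-Server-x86_64/os",
    bridge="br0",
    os_type="linux",
    os_variant="rhel5.4",
)
print(" ".join(EXAMPLE_ARGS))
```

On a virtualization host, the resulting list could be passed to subprocess.run(EXAMPLE_ARGS, check=True) to start the installation.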

6.3. Creating Guests with virt-manager

virt-manager, also known as Virtual Machine Manager, is a graphical tool for creating and managing guest virtual machines.

Procedure 6.1. Creating a guest virtual machine with virt-manager

Open virt-manager

Start virt-manager. Launch the Virtual Machine Manager application from the Applications menu and System Tools submenu. Alternatively, run the virt-manager command as root.

Optional: Open a remote hypervisor

Select the hypervisor and click the Connect button to connect to the remote hypervisor.

Create a new virtual machine

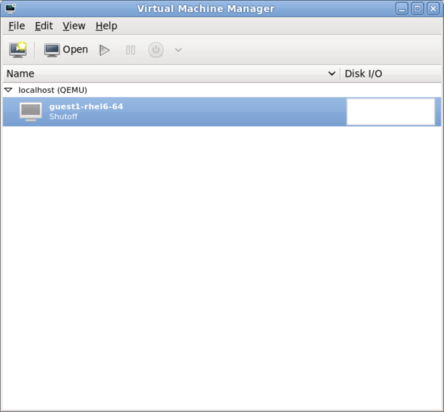

The virt-manager window allows you to create a new virtual machine. Click the Create a new virtual machine button (Figure 6.1, “Virtual Machine Manager window”) to open the New VM wizard.

Figure 6.1. Virtual Machine Manager window

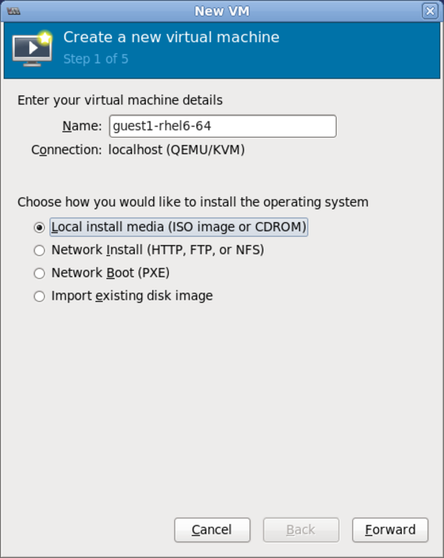

The New VM wizard breaks down the virtual machine creation process into five steps:

- Naming the guest virtual machine and choosing the installation type

- Locating and configuring the installation media

- Configuring memory and CPU options

- Configuring the virtual machine's storage

- Configuring networking, architecture, and other hardware settings

Ensure that virt-manager can access the installation media (whether locally or over the network) before you continue.

Specify name and installation type

The guest virtual machine creation process starts with the selection of a name and installation type. Virtual machine names can have underscores (_), periods (.), and hyphens (-).

Figure 6.2. Name virtual machine and select installation method

Type in a virtual machine name and choose an installation type:

- Local install media (ISO image or CDROM)

- This method uses a CD-ROM, DVD, or image of an installation disk (for example, .iso).

- Network Install (HTTP, FTP, or NFS)

- This method involves the use of a mirrored Red Hat Enterprise Linux or Fedora installation tree to install a guest. The installation tree must be accessible through either HTTP, FTP, or NFS.

- Network Boot (PXE)

- This method uses a Preboot eXecution Environment (PXE) server to install the guest virtual machine. Setting up a PXE server is covered in the Deployment Guide. To install using network boot, the guest must have a routable IP address or shared network device. For information on the required networking configuration for PXE installation, refer to Section 6.4, “Creating Guests with PXE”.

- Import existing disk image

- This method allows you to create a new guest virtual machine and import a disk image (containing a pre-installed, bootable operating system) to it.

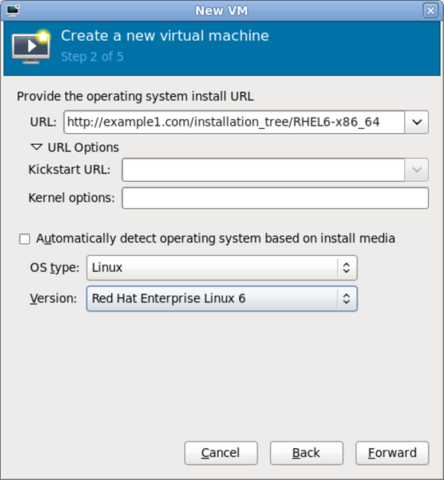

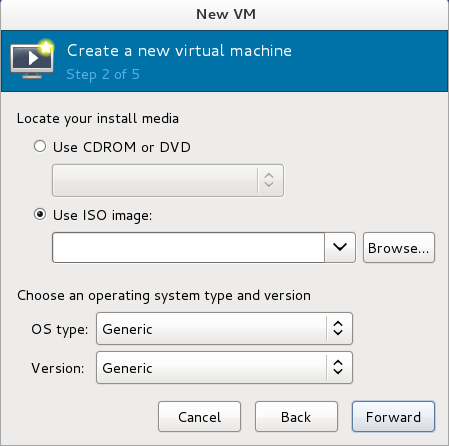

Click Forward to continue.

Configure installation

Next, configure the OS type and Version of the installation. Ensure that you select the appropriate OS type for your virtual machine. Depending on the method of installation, provide the install URL or existing storage path.

Figure 6.3. Remote installation URL

Figure 6.4. Local ISO image installation

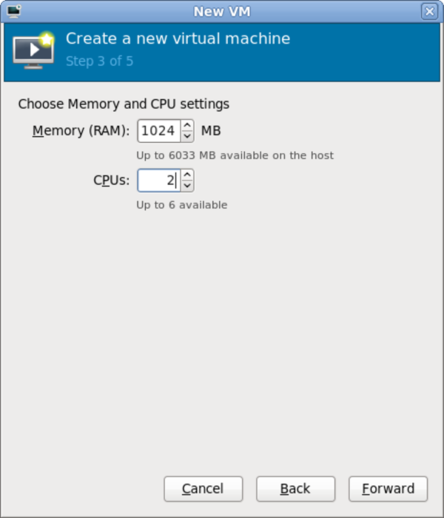

Configure CPU and memory

The next step involves configuring the number of CPUs and amount of memory to allocate to the virtual machine. The wizard shows the number of CPUs and amount of memory you can allocate; configure these settings and click Forward.

Figure 6.5. Configuring CPU and memory

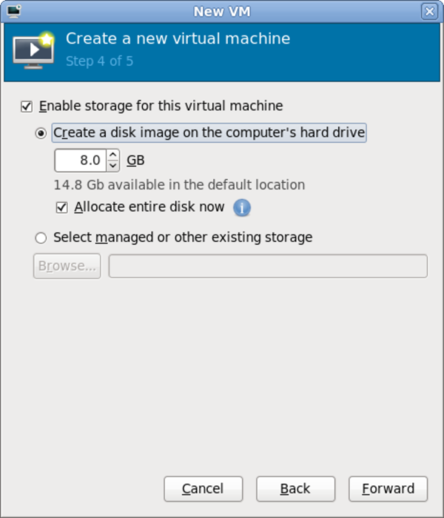

Configure storage

Assign storage to the guest virtual machine.

Figure 6.6. Configuring virtual storage

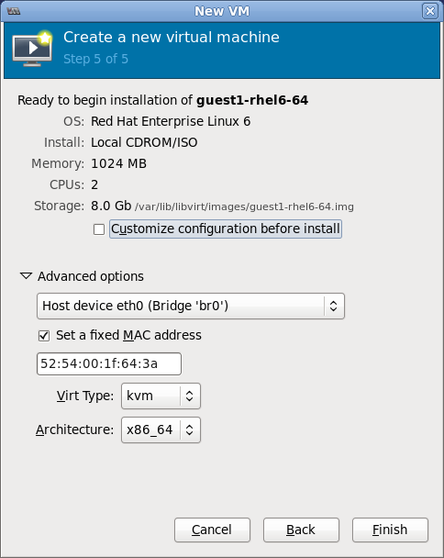

If you chose to import an existing disk image during the first step, virt-manager will skip this step.

Assign sufficient space for your virtual machine and any applications it requires, then click Forward to continue.

Final configuration

Verify the settings of the virtual machine and click Finish when you are satisfied; doing so will create the virtual machine with default networking settings, virtualization type, and architecture.

Figure 6.7. Verifying the configuration

If you prefer to further configure the virtual machine's hardware first, check the Customize configuration before install box before clicking Finish. Doing so will open another wizard that will allow you to add, remove, and configure the virtual machine's hardware settings.

After configuring the virtual machine's hardware, click Apply. virt-manager will then create the virtual machine with your specified hardware settings.

- After the installation completes, you can connect to the guest operating system. For more information, see Section 6.5, “Connecting to Virtual Machines”.

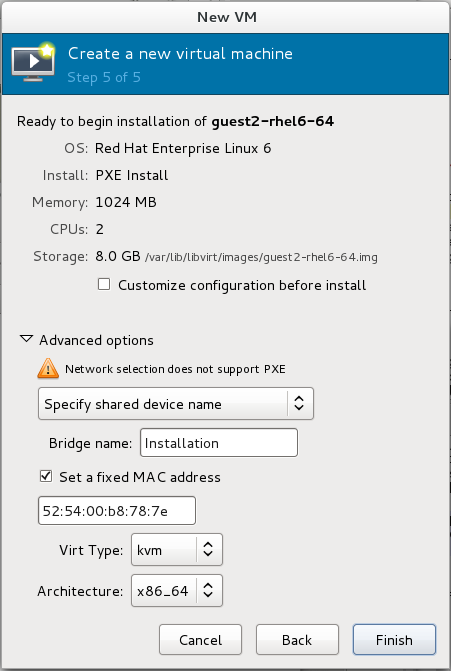

6.4. Creating Guests with PXE

PXE guest installation requires a PXE server running on the same subnet as the guest virtual machines you wish to install. The method of accomplishing this depends on how the virtual machines are connected to the network. Contact Support if you require assistance setting up a PXE server.

virt-install PXE installations require both the --network=bridge:installation parameter, where installation is the name of your bridge, and the --pxe parameter.

reboot-timeout to allow the guest to retry booting if no bootable device is found, like so:

# qemu-kvm -boot reboot-timeout=1000

Example 6.2. Fully-virtualized PXE installation with virt-install

# virt-install --hvm --connect qemu:///system \
--network=bridge:installation --pxe --graphics spice \
--name rhel6-machine --ram=756 --vcpus=4 \
--os-type=linux --os-variant=rhel6 \
--disk path=/var/lib/libvirt/images/rhel6-machine.img,size=10

--hvm) guest can only be installed in a text-only environment if the --location and --extra-args "console=console_type" are provided instead of the --graphics spice parameter.

Procedure 6.2. PXE installation with virt-manager

Select PXE

Select PXE as the installation method and follow the rest of the steps to configure the OS type, memory, CPU and storage settings.

Figure 6.8. Selecting the installation method

Figure 6.9. Selecting the installation type

Figure 6.10. Specifying virtualized hardware details

Figure 6.11. Specifying storage details

Start the installation

The installation is ready to start.

Figure 6.12. Finalizing virtual machine details

6.5. Connecting to Virtual Machines

- virt-viewer or remote-viewer - For details, see Graphical user interface tools for guest virtual machine management.

- virt-manager - For details, see Managing guests with the Virtual Machine Manager.

- The guest's serial console - For details, see Connecting the serial console for the Guest Virtual Machine.

Chapter 7. Installing a Red Hat Enterprise Linux 6 Guest Virtual Machine on a Red Hat Enterprise Linux 6 Host

Note

7.1. Creating a Red Hat Enterprise Linux 6 Guest with Local Installation Media

Procedure 7.1. Creating a Red Hat Enterprise Linux 6 guest virtual machine with virt-manager

Optional: Preparation

Prepare the storage environment for the virtual machine. For more information on preparing storage, refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide.

Important

Various storage types may be used for storing guest virtual machines. However, for a virtual machine to be able to use migration features the virtual machine must be created on networked storage.

Red Hat Enterprise Linux 6 requires at least 1GB of storage space. However, Red Hat recommends at least 5GB of storage space for a Red Hat Enterprise Linux 6 installation and for the procedures in this guide.

Open virt-manager and start the wizard

Open virt-manager by executing the virt-manager command as root or opening Applications → System Tools → Virtual Machine Manager.

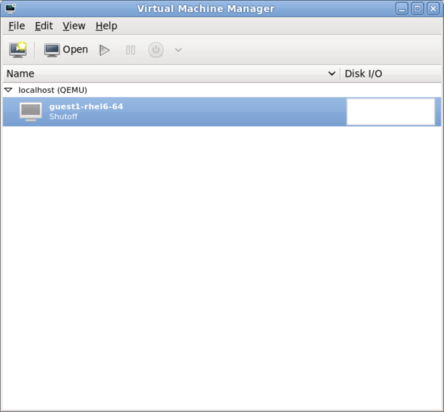

Figure 7.1. The Virtual Machine Manager window

Click on the Create a new virtual machine button to start the new virtualized guest wizard.

Figure 7.2. The Create a new virtual machine button

The New VM window opens.

Name the virtual machine

Virtual machine names can contain letters, numbers and the following characters: '_', '.' and '-'. Virtual machine names must be unique for migration and cannot consist only of numbers.

Choose the Local install media (ISO image or CDROM) radio button.

Figure 7.3. The New VM window - Step 1

Click Forward to continue.

Select the installation media

Select the appropriate radio button for your installation media.

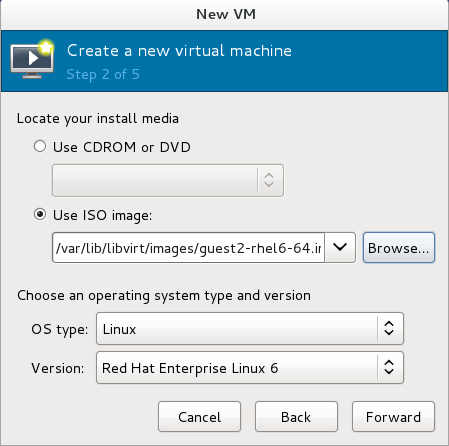

Figure 7.4. Locate your install media

- If you wish to install from a CD-ROM or DVD, select the Use CDROM or DVD radio button, and select the appropriate disk drive from the drop-down list of drives available.

- If you wish to install from an ISO image, select Use ISO image, and then click the Browse... button to open the Locate media volume window.

Select the installation image you wish to use, and click Choose Volume.

If no images are displayed in the Locate media volume window, click on the Browse Local button to browse the host machine for the installation image or DVD drive containing the installation disk. Select the installation image or DVD drive containing the installation disk and click Open; the volume is selected for use and you are returned to the Create a new virtual machine wizard.

Important

For ISO image files and guest storage images, the recommended location to use is /var/lib/libvirt/images/. Any other location may require additional configuration by SELinux. Refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide for more details on configuring SELinux.

Select the operating system type and version which match the installation media you have selected.

Figure 7.5. The New VM window - Step 2

Click Forward to continue.

Set RAM and virtual CPUs

Choose appropriate values for the virtual CPUs and RAM allocation. These values affect the host's and guest's performance. Memory and virtual CPUs can be overcommitted. For more information on overcommitting, refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide.

Virtual machines require sufficient physical memory (RAM) to run efficiently and effectively. Red Hat supports a minimum of 512MB of RAM for a virtual machine. Red Hat recommends at least 1024MB of RAM for each logical core.

Assign sufficient virtual CPUs for the virtual machine. If the virtual machine runs a multithreaded application, assign the number of virtual CPUs the guest virtual machine will require to run efficiently.

You cannot assign more virtual CPUs than there are physical processors (or hyper-threads) available on the host system. The number of virtual CPUs available is noted in the Up to X available field.

Figure 7.6. The new VM window - Step 3

Click Forward to continue.

Enable and assign storage

Enable and assign storage for the Red Hat Enterprise Linux 6 guest virtual machine. Assign at least 5GB for a desktop installation or at least 1GB for a minimal installation.

Note

Live and offline migrations require virtual machines to be installed on shared network storage. For information on setting up shared storage for virtual machines, refer to the Red Hat Enterprise Linux Virtualization Administration Guide.

With the default local storage

Select the Create a disk image on the computer's hard drive radio button to create a file-based image in the default storage pool, the /var/lib/libvirt/images/ directory. Enter the size of the disk image to be created. If the Allocate entire disk now check box is selected, a disk image of the size specified will be created immediately. If not, the disk image will grow as it becomes filled.

Note

Although the storage pool is a virtual container it is limited by two factors: maximum size allowed to it by qemu-kvm and the size of the disk on the host physical machine. Storage pools may not exceed the size of the disk on the host physical machine. The maximum sizes are as follows:

- virtio-blk = 2^63 bytes or 8 Exabytes (using raw files or disk)

- Ext4 = ~ 16 TB (using 4 KB block size)

- XFS = ~8 Exabytes

- qcow2 and host file systems keep their own metadata and scalability should be evaluated/tuned when trying very large image sizes. Using raw disks means fewer layers that could affect scalability or max size.

Figure 7.7. The New VM window - Step 4

Click Forward to create a disk image on the local hard drive. Alternatively, select Select managed or other existing storage, then select Browse to configure managed storage.

With a storage pool

If you selected Select managed or other existing storage in the previous step to use a storage pool and clicked Browse, the Locate or create storage volume window will appear.

Figure 7.8. The Locate or create storage volume window

- Select a storage pool from the Storage Pools list.

- Optional: Click on the New Volume button to create a new storage volume. The Add a Storage Volume screen will appear. Enter the name of the new storage volume.

Choose a format option from the Format drop-down menu. Format options include raw, cow, qcow, qcow2, qed, vmdk, and vpc. Adjust other fields as desired.

Figure 7.9. The Add a Storage Volume window

Click Finish to continue.

Verify and finish

Verify there were no errors made during the wizard and everything appears as expected.

Select the Customize configuration before install check box to change the guest's storage or network devices, to use the paravirtualized drivers or to add additional devices.

Click on the Advanced options down arrow to inspect and modify advanced options. For a standard Red Hat Enterprise Linux 6 installation, none of these options require modification.

Figure 7.10. The New VM window - local storage

Click Finish to continue into the Red Hat Enterprise Linux installation sequence. For more information on installing Red Hat Enterprise Linux 6 refer to the Red Hat Enterprise Linux 6 Installation Guide.
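The RAM and virtual CPU guidance from the Set RAM and virtual CPUs step of this procedure can be expressed as a pre-flight check. The Python sketch below is illustrative: the function name is hypothetical, and it assumes that the recommendation of 1024MB "for each logical core" applies to each virtual CPU assigned to the guest.

```python
MIN_SUPPORTED_RAM_MB = 512          # supported minimum stated in the procedure
RECOMMENDED_RAM_MB_PER_CORE = 1024  # assumption: applied per virtual CPU


def check_guest_sizing(vcpus, ram_mb, host_logical_cpus):
    """Return a list of sizing problems; an empty list means none were found."""
    problems = []
    if ram_mb < MIN_SUPPORTED_RAM_MB:
        problems.append("RAM is below the 512 MB supported minimum")
    if ram_mb < RECOMMENDED_RAM_MB_PER_CORE * vcpus:
        problems.append("RAM is below the recommended 1024 MB per virtual CPU")
    if vcpus > host_logical_cpus:
        problems.append("more virtual CPUs than host processors or hyper-threads")
    return problems


# Hypothetical guest: 2 virtual CPUs and 2048 MB of RAM on a 4-thread host.
print(check_guest_sizing(vcpus=2, ram_mb=2048, host_logical_cpus=4))
```

The first and third checks correspond to hard limits in the text, while the second reflects a recommendation and can be treated as a warning.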

7.2. Creating a Red Hat Enterprise Linux 6 Guest with a Network Installation Tree

Procedure 7.2. Creating a Red Hat Enterprise Linux 6 guest with virt-manager

Optional: Preparation

Prepare the storage environment for the guest virtual machine. For more information on preparing storage, refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide.

Important

Various storage types may be used for storing guest virtual machines. However, for a virtual machine to be able to use migration features the virtual machine must be created on networked storage.

Red Hat Enterprise Linux 6 requires at least 1GB of storage space. However, Red Hat recommends at least 5GB of storage space for a Red Hat Enterprise Linux 6 installation and for the procedures in this guide.

Open virt-manager and start the wizard

Open virt-manager by executing the virt-manager command as root or opening Applications → System Tools → Virtual Machine Manager.

Figure 7.11. The main virt-manager window

Click on the Create a new virtual machine button to start the new virtual machine wizard.

Figure 7.12. The Create a new virtual machine button

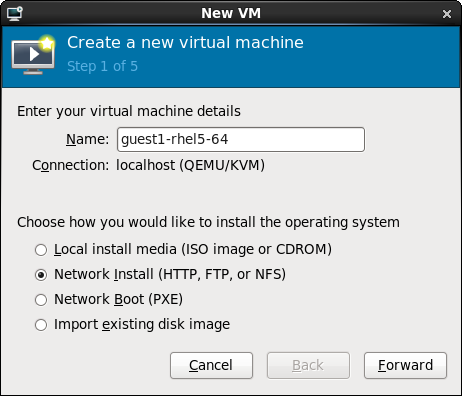

The Create a new virtual machine window opens.

Name the virtual machine

Virtual machine names can contain letters, numbers and the following characters: '_', '.' and '-'. Virtual machine names must be unique for migration and cannot consist only of numbers.

Choose the installation method from the list of radio buttons.

Figure 7.13. The New VM window - Step 1

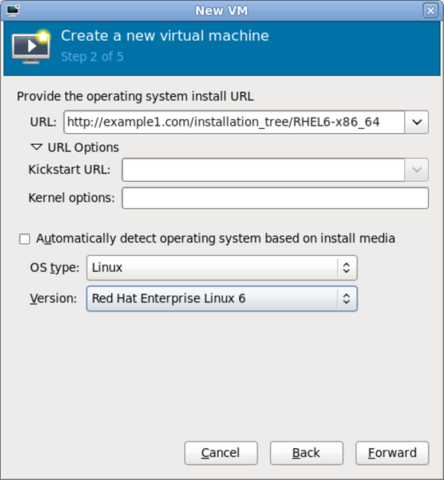

Click Forward to continue.

- Provide the installation URL, and the Kickstart URL and Kernel options if required.

Figure 7.14. The New VM window - Step 2

Click Forward to continue.

- The remaining steps are the same as the ISO installation procedure. Continue from Step 5 of the ISO installation procedure.

7.3. Creating a Red Hat Enterprise Linux 6 Guest with PXE

Procedure 7.3. Creating a Red Hat Enterprise Linux 6 guest with virt-manager

Optional: Preparation

Prepare the storage environment for the virtual machine. For more information on preparing storage, refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide.

Important

Various storage types may be used for storing guest virtual machines. However, for a virtual machine to be able to use migration features the virtual machine must be created on networked storage.

Red Hat Enterprise Linux 6 requires at least 1GB of storage space. However, Red Hat recommends at least 5GB of storage space for a Red Hat Enterprise Linux 6 installation and for the procedures in this guide.

Open virt-manager and start the wizard

Open virt-manager by executing the virt-manager command as root or opening Applications → System Tools → Virtual Machine Manager.

Figure 7.15. The main virt-manager window

Click on the Create new virtualized guest button to start the new virtualized guest wizard.

Figure 7.16. The create new virtualized guest button

The New VM window opens.

Name the virtual machine

Virtual machine names can contain letters, numbers and the following characters: '_', '.' and '-'. Virtual machine names must be unique for migration and cannot consist only of numbers.

Choose the installation method from the list of radio buttons.

Figure 7.17. The New VM window - Step 1

Click Forward to continue.

- The remaining steps are the same as the ISO installation procedure. Continue from Step 5 of the ISO installation procedure. From this point, the only difference in this PXE procedure is on the final New VM screen, which shows the Install: PXE Install field.

Figure 7.18. The New VM window - Step 5 - PXE Install

Chapter 8. Virtualizing Red Hat Enterprise Linux on Other Platforms

8.1. On VMware ESX

vmw_balloon driver, a paravirtualized memory ballooning driver used when running Red Hat Enterprise Linux on VMware hosts. For further information about this driver, refer to http://kb.VMware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&externalId=1002586.

vmmouse_drv driver, a paravirtualized mouse driver used when running Red Hat Enterprise Linux on VMware hosts. For further information about this driver, refer to http://kb.VMware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&externalId=5739104.

vmware_drv driver, a paravirtualized video driver used when running Red Hat Enterprise Linux on VMware hosts. For further information about this driver, refer to http://kb.VMware.com/selfservice/microsites/search.do?cmd=displayKC&docType=kc&externalId=1033557.

vmxnet3 driver, a paravirtualized network adapter used when running Red Hat Enterprise Linux on VMware hosts. For further information about this driver, refer to http://kb.VMware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1001805.

vmw_pvscsi driver, a paravirtualized SCSI adapter used when running Red Hat Enterprise Linux on VMware hosts. For further information about this driver, refer to http://kb.VMware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1010398.

8.2. On Hyper-V

Important

- Upgraded VMBUS protocols - VMBUS protocols have been upgraded to Windows 8 level. As part of this work, now VMBUS interrupts can be processed on all available virtual CPUs in the guest. Furthermore, the signaling protocol between the Red Hat Enterprise Linux guest virtual machine and the Windows host physical machine has been optimized.

- Synthetic frame buffer driver - Provides enhanced graphics performance and superior resolution for Red Hat Enterprise Linux desktop users.

- Live Virtual Machine Backup support - Provisions uninterrupted backup support for live Red Hat Enterprise Linux guest virtual machines.

- Dynamic expansion of fixed size Linux VHDXs - Allows expansion of live mounted fixed size Red Hat Enterprise Linux VHDXs.

- Boot using UEFI - Allows virtual machines to boot using Unified Extensible Firmware Interface (UEFI) on a Hyper-V 2012 R2 host.

Chapter 9. Installing a Fully-virtualized Windows Guest

virt-install), launch the operating system's installer inside the guest, and access the installer through virt-viewer.

virt-viewer tool. This tool allows you to display the graphical console of a virtual machine (using the SPICE or VNC protocol). In doing so, virt-viewer allows you to install a fully-virtualized guest's operating system with that operating system's installer.

- Creating the guest virtual machine, using either virt-install or virt-manager.
- Installing the Windows operating system on the guest virtual machine, using virt-viewer.

virt-install or virt-manager.

9.1. Using virt-install to Create a Guest

virt-install command allows you to create a fully-virtualized guest from a terminal, without the need for a GUI.

Important

# virt-install \
   --name=guest-name \
   --os-type=windows \
   --network network=default \
   --disk path=path-to-disk,size=disk-size \
   --cdrom=path-to-install-disk \
   --graphics spice --ram=1024

path-to-disk must be a device (e.g. /dev/sda3) or image file (/var/lib/libvirt/images/name.img). It must also have enough free space to support the disk-size.
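As a minimal sketch of how the placeholders in the command above might be filled in, the helper below only prints a virt-install command line and does not run it. The function name print_virt_install_cmd and the sample values in the usage line are hypothetical; the options are the ones shown in this section.

```shell
# Hypothetical helper: prints a virt-install command line for review.
# It never executes virt-install; copy the output once it looks right.
print_virt_install_cmd() {
    name=$1        # guest name
    disk_path=$2   # device (e.g. /dev/sda3) or image file
    disk_size=$3   # value passed to size=
    iso=$4         # path to the installation disk image

    # Every line except the last ends with a backslash continuation.
    printf '%s \\\n' \
        "virt-install" \
        "   --name=$name" \
        "   --os-type=windows" \
        "   --network network=default" \
        "   --disk path=$disk_path,size=$disk_size" \
        "   --cdrom=$iso"
    printf '%s\n' "   --graphics spice --ram=1024"
}

# Example call with placeholder values (assumptions, not defaults):
print_virt_install_cmd win7-guest /var/lib/libvirt/images/win7-guest.img 20 /tmp/win7.iso
```

Printing the command first makes it easy to check the disk path and size before anything is created on the host.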

Important

/var/lib/libvirt/images/ by default. Other directory locations for file-based images are possible, but may require SELinux configuration. If you run SELinux in enforcing mode, refer to the Red Hat Enterprise Linux 6 Virtualization Administration Guide for more information on SELinux.

virt-install interactively. To do so, use the --prompt option, as in:

# virt-install --prompt

virt-viewer will launch the guest and run the operating system's installer. Refer to the relevant Microsoft installation documentation for instructions on how to install the operating system.

Chapter 10. KVM Paravirtualized (virtio) Drivers

- Red Hat Enterprise Linux 4.8 and newer

- Red Hat Enterprise Linux 5.3 and newer

- Red Hat Enterprise Linux 6 and newer

- Red Hat Enterprise Linux 7 and newer

- Some versions of Linux based on the 2.6.27 kernel or newer kernel versions.

Note

- Windows Server 2003 (32-bit and 64-bit versions)

- Windows Server 2008 (32-bit and 64-bit versions)

- Windows Server 2008 R2 (64-bit only)

- Windows 7 (32-bit and 64-bit versions)

- Windows Server 2012 (64-bit only)

- Windows Server 2012 R2 (64-bit only)

- Windows 8 (32-bit and 64-bit versions)

- Windows 8.1 (32-bit and 64-bit versions)

10.1. Installing the KVM Windows virtio Drivers

- hosting the installation files on a network accessible to the virtual machine

- using a virtualized CD-ROM device of the driver installation disk .iso file

- using a USB drive, by mounting the same (provided) .ISO file that you would use for the CD-ROM

- using a virtualized floppy device to install the drivers during boot time (required and recommended only for XP/2003)

Download the drivers

The virtio-win package contains the virtio block and network drivers for all supported Windows guest virtual machines.

Download and install the virtio-win package on the host with the yum command.

# yum install virtio-win

The list of virtio-win packages that are supported on Windows operating systems, and the current certified package version, can be found at the following URL: windowsservercatalog.com.

Note that the Red Hat Virtualization Hypervisor and Red Hat Enterprise Linux are created on the same code base so the drivers for the same version (for example, Red Hat Virtualization Hypervisor 3.3 and Red Hat Enterprise Linux 6.5) are supported for both environments.

The virtio-win package installs a CD-ROM image, virtio-win.iso, in the /usr/share/virtio-win/ directory.

Install the virtio drivers

When booting a Windows guest that uses virtio-win devices, the relevant virtio-win device drivers must already be installed on this guest. The virtio-win drivers are not provided as inbox drivers in Microsoft's Windows installation kit, so installation of a Windows guest on a virtio-win storage device (viostor/virtio-scsi) requires that you provide the appropriate driver during the installation, either directly from the virtio-win.iso or from the supplied Virtual Floppy image virtio-win<version>.vfd.

10.2. Installing the Drivers on an Installed Windows Guest Virtual Machine

virt-manager and then install the drivers.

Procedure 10.1. Installing from the driver CD-ROM image with virt-manager

Open virt-manager and the guest virtual machine

Open virt-manager, then open the guest virtual machine from the list by double-clicking the guest name.

Open the hardware window

Click the lightbulb icon on the toolbar at the top of the window to view virtual hardware details.

Figure 10.1. The virtual hardware details button

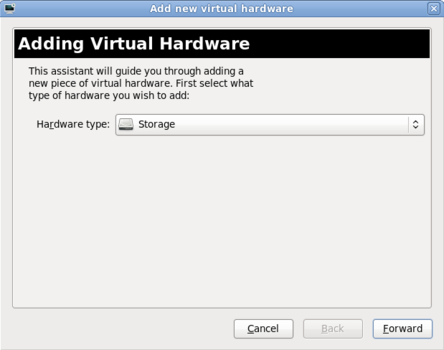

Then click the Add Hardware button at the bottom of the new view that appears. This opens a wizard for adding the new device.

Select the device type - for Red Hat Enterprise Linux 6 versions prior to 6.2

Skip this step if you are using Red Hat Enterprise Linux 6.2 or later.

On Red Hat Enterprise Linux 6 versions prior to version 6.2, you must select the type of device you wish to add. In this case, select Storage from the drop-down menu.

Figure 10.2. The Add new virtual hardware wizard in Red Hat Enterprise Linux 6.1

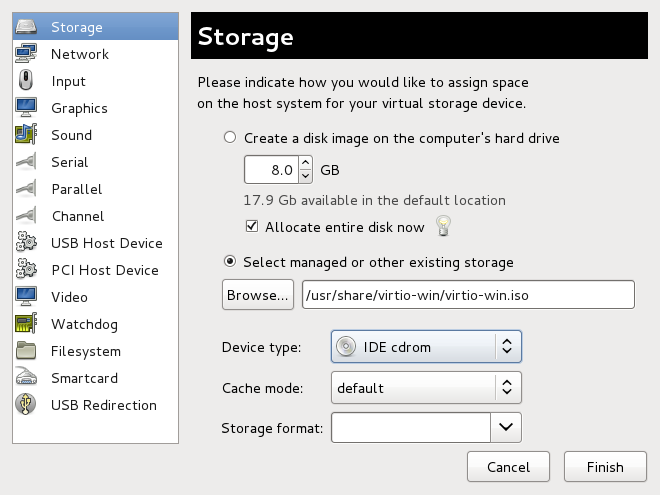

Click the Finish button to proceed.

Select the ISO file

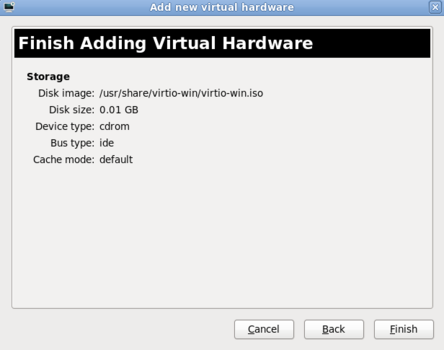

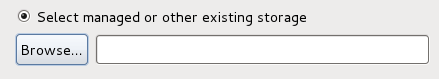

Ensure that the Select managed or other existing storage radio button is selected, and browse to the virtio driver's .iso image file. The default location for the latest version of the drivers is /usr/share/virtio-win/virtio-win.iso.

Change the Device type to IDE cdrom and click the Forward button to proceed.

Figure 10.3. The Add new virtual hardware wizard

Finish adding virtual hardware - for Red Hat Enterprise Linux 6 versions prior to 6.2

If you are using Red Hat Enterprise Linux 6.2 or later, skip this step.

On Red Hat Enterprise Linux 6 versions prior to version 6.2, click on the Finish button to finish adding the virtual hardware and close the wizard.

Figure 10.4. The Add new virtual hardware wizard in Red Hat Enterprise Linux 6.1

Reboot

Reboot or start the virtual machine to begin using the driver disc. Virtualized IDE devices require a restart for the virtual machine to recognize the new device.

Procedure 10.2. Windows installation on a Windows 7 virtual machine

Open the Computer Management window

On the desktop of the Windows virtual machine, click the Windows icon at the bottom corner of the screen to open the Start menu.

Right-click on Computer and select Manage from the pop-up menu.

Figure 10.5. The Computer Management window

Open the Device Manager

Select the Device Manager from the left-most pane. This can be found under Computer Management > System Tools.

Figure 10.6. The Computer Management window

Start the driver update wizard

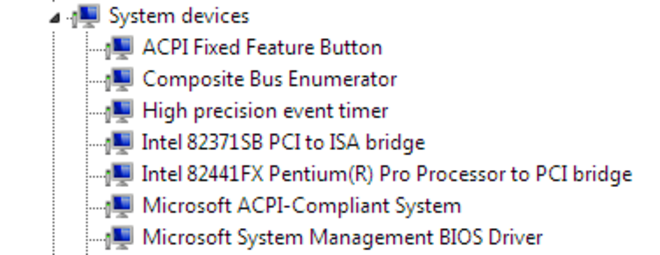

View available system devices

Expand System devices by clicking on the arrow to its left.

Figure 10.7. Viewing available system devices in the Computer Management window

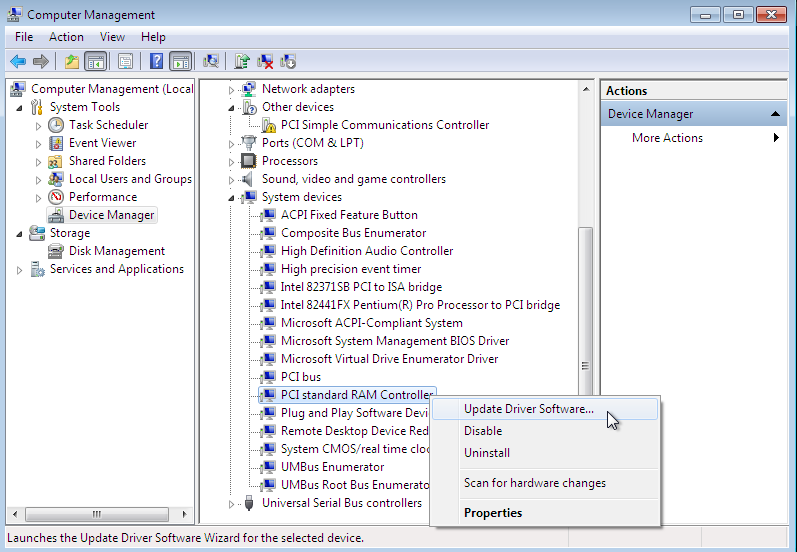

Locate the appropriate device

There are up to four drivers available: the balloon driver, the serial driver, the network driver, and the block driver.

- Balloon, the balloon driver, affects the PCI standard RAM Controller in the System devices group.
- vioserial, the serial driver, affects the PCI Simple Communication Controller in the System devices group.
- NetKVM, the network driver, affects the Network adapters group. This driver is only available if a virtio NIC is configured. Configurable parameters for this driver are documented in Appendix A, NetKVM Driver Parameters.
- viostor, the block driver, affects the Disk drives group. This driver is only available if a virtio disk is configured.

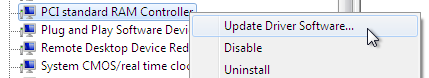

Right-click on the device whose driver you wish to update, and select Update Driver... from the pop-up menu.

This example installs the balloon driver, so right-click on PCI standard RAM Controller.

Figure 10.8. The Computer Management window

Open the driver update wizard

From the drop-down menu, select Update Driver Software... to access the driver update wizard.

Figure 10.9. Opening the driver update wizard

Specify how to find the driver

The first page of the driver update wizard asks how you want to search for driver software. Click on the second option, Browse my computer for driver software.

Figure 10.10. The driver update wizard

Select the driver to install

Open a file browser

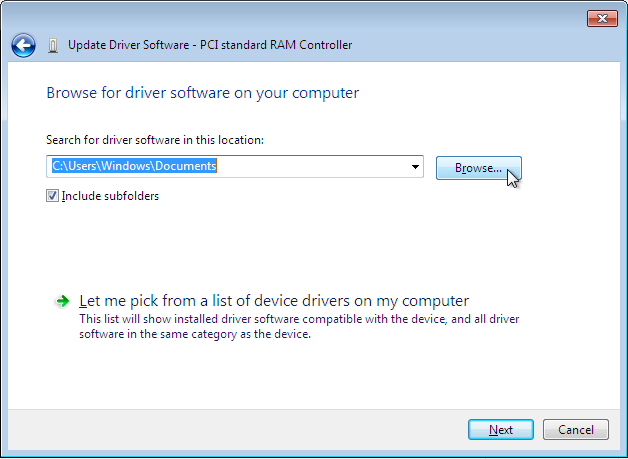

Click on Browse...

Figure 10.11. The driver update wizard

Browse to the location of the driver

A separate driver is provided for each of the various combinations of operating system and architecture. The drivers are arranged hierarchically according to their driver type, the operating system, and the architecture on which they will be installed: driver_type/os/arch/. For example, the Balloon driver for a Windows 7 operating system with an x86 (32-bit) architecture resides in the Balloon/w7/x86 directory.

Figure 10.12. The Browse for driver software pop-up window

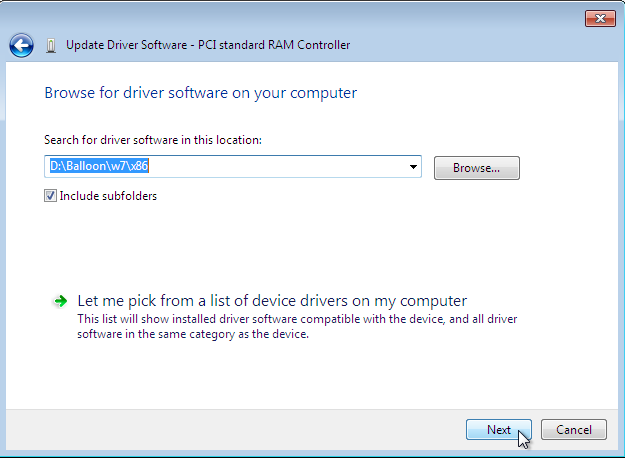

Once you have navigated to the correct location, click OK.

Click Next to continue

Figure 10.13. The Update Driver Software wizard

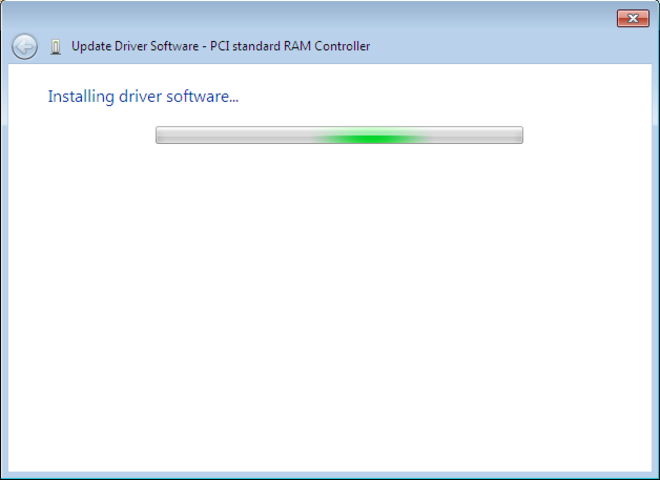

The following screen is displayed while the driver installs:

Figure 10.14. The Update Driver Software wizard

Close the installer

The following screen is displayed when installation is complete:

Figure 10.15. The Update Driver Software wizard

Click Close to close the installer.

Reboot

Reboot the virtual machine to complete the driver installation.

10.3. Installing Drivers during the Windows Installation

Procedure 10.3. Installing virtio drivers during the Windows installation

Install the virtio-win package

Use the following command to install the virtio-win package:

# yum install virtio-win

Create the guest virtual machine

Important

Create the virtual machine, as normal, without starting the virtual machine. Follow one of the procedures below.

Select one of the following guest-creation methods, and follow the instructions.

Create the guest virtual machine with virsh

This method attaches the virtio driver floppy disk to a Windows guest before the installation.

If the virtual machine is created from an XML definition file with virsh, use the virsh define command, not the virsh create command.

- Create, but do not start, the virtual machine. Refer to the Red Hat Enterprise Linux Virtualization Administration Guide for details on creating virtual machines with the virsh command.
- Add the driver disk as a virtualized floppy disk with the virsh command. This example can be copied and used if there are no other virtualized floppy devices attached to the guest virtual machine. Note that vm_name should be replaced with the name of the virtual machine.

# virsh attach-disk vm_name /usr/share/virtio-win/virtio-win.vfd fda --type floppy

You can now continue with Step 3.

Create the guest virtual machine with virt-manager and changing the disk type

- At the final step of the virt-manager guest creation wizard, check the Customize configuration before install check box.

Figure 10.16. The virt-manager guest creation wizard

Click on the Finish button to continue.

Open the Add Hardware wizard

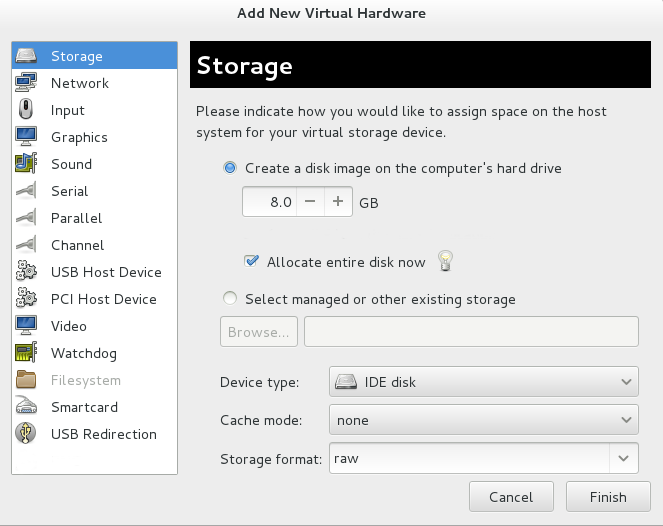

Click the Add Hardware button in the bottom left of the new panel.

Select storage device

Storage is the default selection in the Hardware type list.

Figure 10.17. The Add new virtual hardware wizard

Ensure the Select managed or other existing storage radio button is selected. Click Browse....

Figure 10.18. Select managed or existing storage

In the new window that opens, click Browse Local. Navigate to /usr/share/virtio-win/virtio-win.vfd, and click Select to confirm.

Change Device type to Floppy disk, and click Finish to continue.

Figure 10.19. Change the Device type

Confirm settings

Review the device settings.

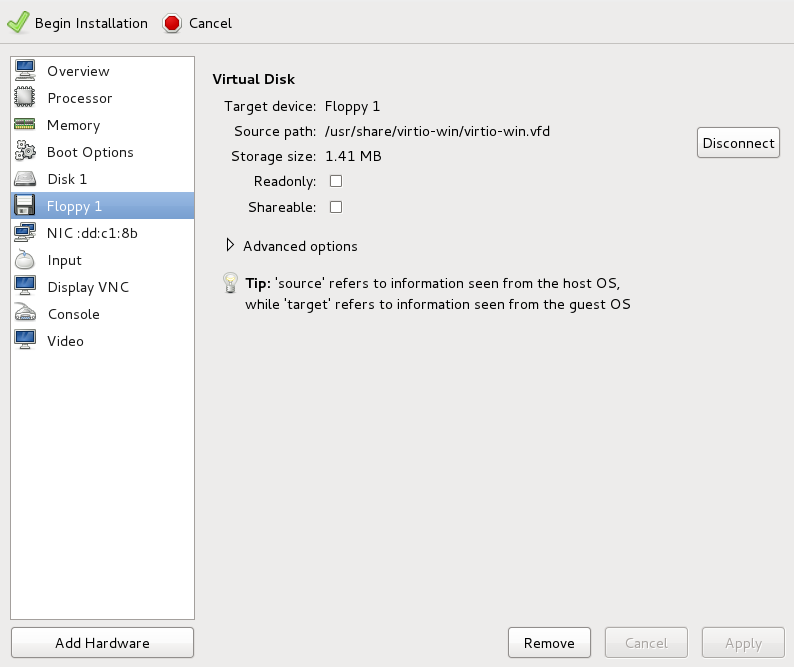

Figure 10.20. The virtual machine hardware information window

You have now created a removable device accessible by your virtual machine.

Change the hard disk type

To change the hard disk type from IDE Disk to Virtio Disk, first remove the existing hard disk, Disk 1. Select the disk and click on the Remove button.

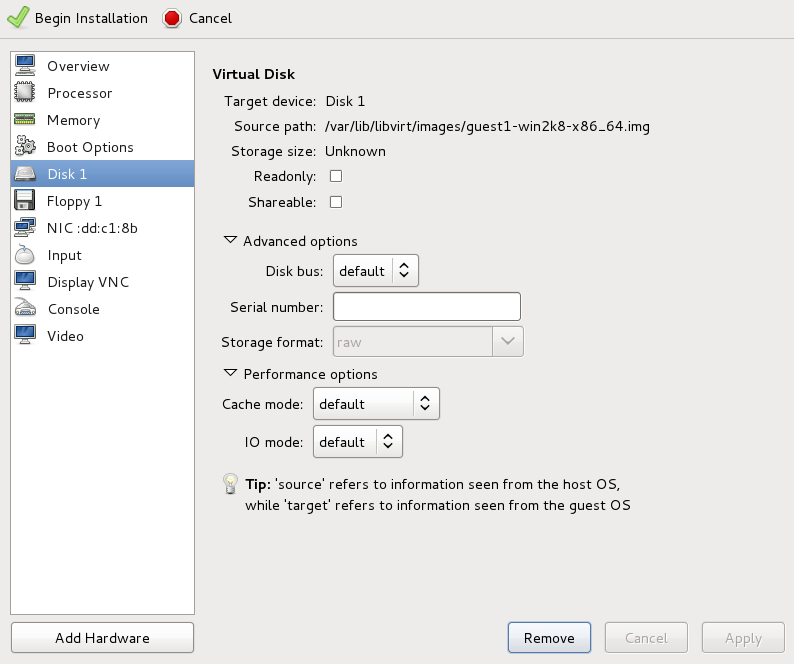

Figure 10.21. The virtual machine hardware information window

Add a new virtual storage device by clicking Add Hardware. Then, change the Device type from IDE disk to Virtio Disk. Click Finish to confirm the operation.

Figure 10.22. The virtual machine hardware information window

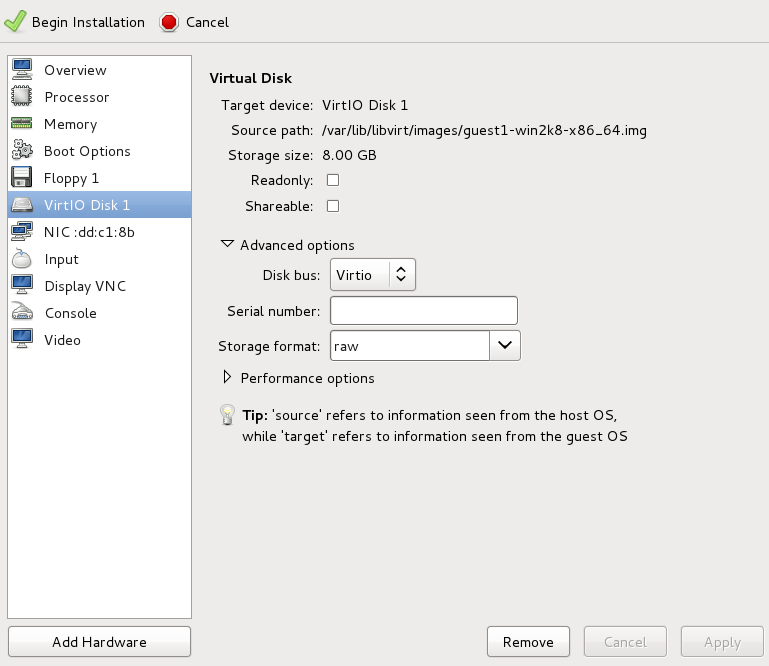

Ensure settings are correct

Review the settings for VirtIO Disk 1.

Figure 10.23. The virtual machine hardware information window

When you are satisfied with the configuration details, click the Begin Installation button.

You can now continue with Step 3.

Create the guest virtual machine with virt-install

Append the following parameter exactly as listed below to add the driver disk to the installation with the virt-install command:

--disk path=/usr/share/virtio-win/virtio-win.vfd,device=floppy

Important

If the device you wish to add is a disk (that is, not a floppy or a cdrom), you will also need to add the bus=virtio option to the end of the --disk parameter, like so:

--disk path=/usr/share/virtio-win/virtio-win.vfd,device=disk,bus=virtio

According to the version of Windows you are installing, append one of the following options to the virt-install command:

--os-variant win2k3

--os-variant win7

You can now continue with Step 3.

Additional steps for driver installation

During the installation, additional steps are required to install drivers, depending on the type of Windows guest.

Windows Server 2003

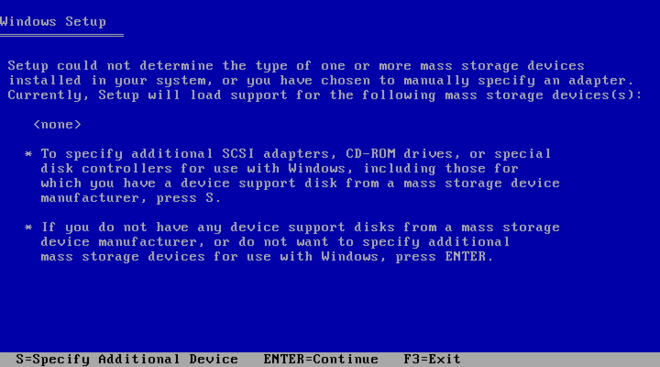

Before the blue installation screen appears, repeatedly press F6 to load third-party drivers.

Figure 10.24. The Windows Setup screen

Press S to install additional device drivers.

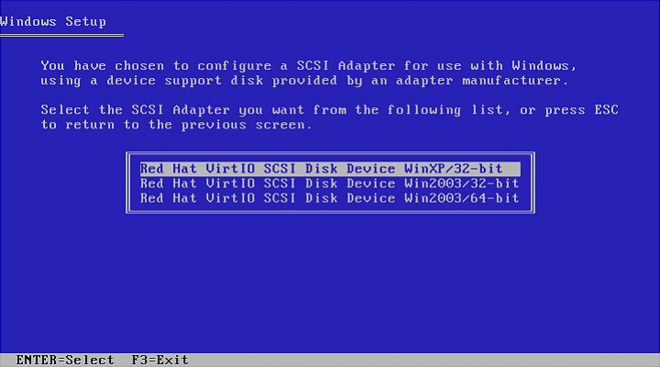

Figure 10.25. The Windows Setup screen

Figure 10.26. The Windows Setup screen

Press Enter to continue the installation.

Windows Server 2008

Follow the same procedure for Windows Server 2003, but when the installer prompts you for the driver, click on Load Driver, point the installer to Drive A: and pick the driver that suits your guest operating system and architecture.

10.4. Using KVM virtio Drivers for Existing Devices

virtio driver instead of the virtualized IDE driver. The example shown in this section edits libvirt configuration files. Note that the guest virtual machine does not need to be shut down to perform these steps, however the change will not be applied until the guest is completely shut down and rebooted.

Procedure 10.4. Using KVM virtio drivers for existing devices

- Ensure that you have installed the appropriate driver (viostor), as described in Section 10.1, “Installing the KVM Windows virtio Drivers”, before continuing with this procedure.
- Run the virsh edit <guestname> command as root to edit the XML configuration file for your device. For example, virsh edit guest1. The configuration files are located in /etc/libvirt/qemu.
- Below is a file-based block device using the virtualized IDE driver. This is a typical entry for a virtual machine not using the virtio drivers.

<disk type='file' device='disk'>
  <source file='/var/lib/libvirt/images/disk1.img'/>
  <target dev='hda' bus='ide'/>
</disk>

- Change the entry to use the virtio device by modifying the bus= entry to virtio. Note that if the disk was previously IDE it will have a target similar to hda, hdb, or hdc and so on. When changing to bus=virtio the target needs to be changed to vda, vdb, or vdc accordingly.

<disk type='file' device='disk'>
  <source file='/var/lib/libvirt/images/disk1.img'/>
  <target dev='vda' bus='virtio'/>
</disk>

- Remove the address tag inside the disk tags. This must be done for this procedure to work. Libvirt will regenerate the address tag appropriately the next time the virtual machine is started.
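The three edits described in this procedure (bus, target name, address tag) can be sketched as a small sed filter. This is an illustration only: the function name ide_disk_to_virtio is hypothetical, and the filter assumes it is fed a single <disk> element with one tag per line, not a whole guest definition, because it deletes every address line it sees.

```shell
# Hypothetical filter: rewrites one <disk> element read from standard input.
#   1. hdX target with bus='ide' becomes vdX with bus='virtio'
#   2. the <address .../> line is dropped so libvirt can regenerate it
# Assumes one XML tag per line and a single <disk> element as input.
ide_disk_to_virtio() {
    sed -E \
        -e "s/(<target[[:space:]]+dev=['\"])hd([a-z]+)(['\"][[:space:]]+bus=['\"])ide(['\"])/\1vd\2\3virtio\4/" \
        -e "/<address[[:space:]]/d"
}

# Example: preview the rewritten element before pasting it into virsh edit.
ide_disk_to_virtio <<'EOF'
<disk type='file' device='disk'>
  <source file='/var/lib/libvirt/images/disk1.img'/>
  <target dev='hda' bus='ide'/>
  <address type='drive' controller='0' bus='0' unit='0'/>
</disk>
EOF
```

The filter only prints the result; the change still has to be made through virsh edit, and it takes effect after the guest is shut down and started again.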

virt-manager, virsh attach-disk or virsh attach-interface can add a new device using the virtio drivers.

10.5. Using KVM virtio Drivers for New Devices

virt-manager.

virsh attach-disk or virsh attach-interface commands can be used to attach devices using the virtio drivers.

Important

Procedure 10.5. Adding a storage device using the virtio storage driver

- Open the guest virtual machine by double-clicking the name of the guest in virt-manager.
- Open the Show virtual hardware details tab by clicking the lightbulb button.

Figure 10.27. The Show virtual hardware details tab

- In the Show virtual hardware details tab, click on the Add Hardware button.

Select hardware type

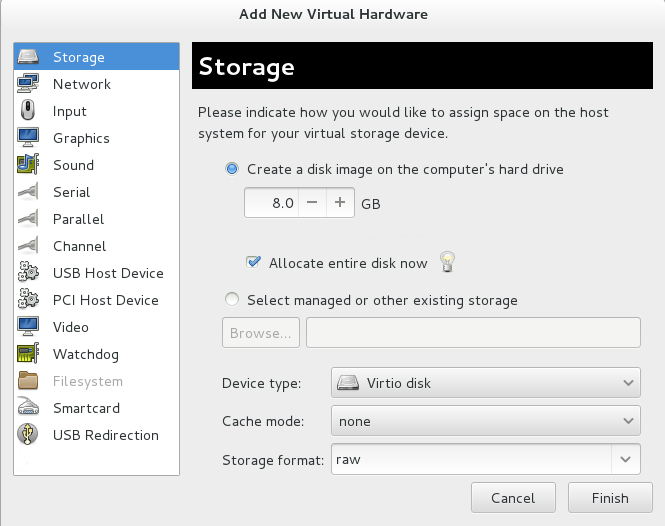

Select Storage as the Hardware type.

Figure 10.28. The Add new virtual hardware wizard

Select the storage device and driver

Create a new disk image or select a storage pool volume.

Set the Device type to Virtio disk to use the virtio drivers.

Figure 10.29. The Add new virtual hardware wizard

Click Finish to complete the procedure.

Procedure 10.6. Adding a network device using the virtio network driver

- Open the guest virtual machine by double-clicking the name of the guest in virt-manager.
- Open the Show virtual hardware details tab by clicking the lightbulb button.

Figure 10.30. The Show virtual hardware details tab

- In the Show virtual hardware details tab, click on the Add Hardware button.

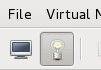

Select hardware type

Select Network as the Hardware type.

Figure 10.31. The Add new virtual hardware wizard

Select the network device and driver

Set the Device model to virtio to use the virtio drivers. Choose the desired Host device.

Figure 10.32. The Add new virtual hardware wizard

Click Finish to complete the procedure.

Chapter 11. Network Configuration

- virtual networks using Network Address Translation (NAT)

- directly allocated physical devices using PCI device assignment

- directly allocated virtual functions using PCIe SR-IOV

- bridged networks

11.1. Network Address Translation (NAT) with libvirt

Every standard libvirt installation provides NAT-based connectivity to virtual machines as the default virtual network. Verify that it is available with the virsh net-list --all command.

# virsh net-list --all
Name                 State      Autostart
-----------------------------------------
default              active     yes

# virsh net-define /usr/share/libvirt/networks/default.xml

/usr/share/libvirt/networks/default.xml

# virsh net-autostart default
Network default marked as autostarted

# virsh net-start default
Network default started
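When checking several hosts, the State column of the virsh net-list --all output can be read with a small filter. The sketch below assumes the column layout shown in this section (two header lines, then name and state as the first two fields); the function name net_state is hypothetical.

```shell
# Hypothetical filter: reads `virsh net-list --all` output on standard input
# and prints the State column for the named network.
# Assumes two header lines followed by rows of: Name State Autostart
net_state() {
    awk -v net="$1" 'NR > 2 && $1 == net { print $2 }'
}

# Example using the sample output from this section instead of a live host:
net_state default <<'EOF'
Name                 State      Autostart
-----------------------------------------
default              active     yes
EOF
```

On a real host the input would come from a pipe, for example virsh net-list --all | net_state default. An empty result means the network is not defined.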

libvirt default network is running, you will see an isolated bridge device. This device does not have any physical interfaces added. The new device uses NAT and IP forwarding to connect to the physical network. Do not add new interfaces.

# brctl show
bridge name     bridge id               STP enabled     interfaces
virbr0          8000.000000000000       yes

libvirt adds iptables rules which allow traffic to and from guest virtual machines attached to the virbr0 device in the INPUT, FORWARD, OUTPUT and POSTROUTING chains. libvirt then attempts to enable the ip_forward parameter. Some other applications may disable ip_forward, so the best option is to add the following to /etc/sysctl.conf.

net.ipv4.ip_forward = 1
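A quick way to confirm that the setting is present is to search the configuration file for it. The following is a sketch only; the function name ip_forward_persisted is hypothetical, and it checks nothing beyond a literal net.ipv4.ip_forward = 1 line in the given file.

```shell
# Hypothetical check: succeeds if the file (default /etc/sysctl.conf)
# contains an uncommented line setting net.ipv4.ip_forward to 1.
ip_forward_persisted() {
    conf=${1:-/etc/sysctl.conf}
    grep -Eq '^[[:space:]]*net\.ipv4\.ip_forward[[:space:]]*=[[:space:]]*1[[:space:]]*$' "$conf"
}

# Example: report the result for the default file, if it is readable.
if [ -r /etc/sysctl.conf ]; then
    if ip_forward_persisted; then
        echo "ip_forward is set in /etc/sysctl.conf"
    else
        echo "ip_forward is not set in /etc/sysctl.conf"
    fi
fi
```

This only inspects the file; it does not show whether another application has changed the running value since boot.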

Once the host configuration is complete, a guest virtual machine can be connected to the virtual network based on its name. To connect a guest to the 'default' virtual network, the following could be used in the XML configuration file (such as /etc/libvirt/qemu/myguest.xml) for the guest:

<interface type='network'>
  <source network='default'/>
</interface>

Note

<interface type='network'>
  <source network='default'/>
  <mac address='00:16:3e:1a:b3:4a'/>
</interface>

11.2. Disabling vhost-net

vhost-net module is a kernel-level back end for virtio networking that reduces virtualization overhead by moving virtio packet processing tasks out of user space (the qemu process) and into the kernel (the vhost-net driver). vhost-net is only available for virtio network interfaces. If the vhost-net kernel module is loaded, it is enabled by default for all virtio interfaces, but can be disabled in the interface configuration in the case that a particular workload experiences a degradation in performance when vhost-net is in use.

vhost-net causes the UDP socket's receive buffer to overflow more quickly, which results in greater packet loss. It is therefore better to disable vhost-net in this situation to slow the traffic, and improve overall performance.

vhost-net, edit the <interface> sub-element in the guest virtual machine's XML configuration file and define the network as follows:

<interface type="network">
  ...
  <model type="virtio"/>
  <driver name="qemu"/>
  ...
</interface>

qemu forces packet processing into qemu user space, effectively disabling vhost-net for that interface.

11.3. Bridged Networking with libvirt

br0) based on the eth0 interface, execute the following command on the host:

# virsh iface-bridge eth0 br0

Important

/etc/sysconfig/network-scripts/ directory).

# chkconfig NetworkManager off
# chkconfig network on
# service NetworkManager stop
# service network start

NM_CONTROLLED=no" to the ifcfg-* network script being used for the bridge.

Chapter 12. PCI Device Assignment

- Emulated devices are purely virtual devices that mimic real hardware, allowing unmodified guest operating systems to work with them using their standard in-box drivers.

- Virtio devices are purely virtual devices designed to work optimally in a virtual machine. Virtio devices are similar to emulated devices, however, non-Linux virtual machines do not include the drivers they require by default. Virtualization management software like the Virtual Machine Manager (virt-manager) and the Red Hat Enterprise Virtualization Hypervisor install these drivers automatically for supported non-Linux guest operating systems.

- Assigned devices are physical devices that are exposed to the virtual machine. This method is also known as 'passthrough'. Device assignment allows virtual machines exclusive access to PCI devices for a range of tasks, and allows PCI devices to appear and behave as if they were physically attached to the guest operating system. Device assignment is supported on PCI Express devices, except graphics cards. Parallel PCI devices may be supported as assigned devices, but they have severe limitations due to security and system configuration conflicts.

- Each virtual machine supports a maximum of 8 assigned device functions.

- 4 PCI device slots are configured with 5 emulated devices (two devices are in slot 1) by default. However, users can explicitly remove 2 of the emulated devices that are configured by default if the guest operating system does not require them for operation (the video adapter device in slot 2; and the memory balloon driver device in the lowest available slot, usually slot 3). This gives users a supported functional maximum of 30 PCI device slots per virtual machine.

Note

allow_unsafe_assigned_interrupts KVM module parameter to 1.

Procedure 12.1. Preparing an Intel system for PCI device assignment

Enable the Intel VT-d specifications

The Intel VT-d specifications provide hardware support for directly assigning a physical device to a virtual machine. These specifications are required to use PCI device assignment with Red Hat Enterprise Linux.

The Intel VT-d specifications must be enabled in the BIOS. Some system manufacturers disable these specifications by default. The terms used to refer to these specifications can differ between manufacturers; consult your system manufacturer's documentation for the appropriate terms.

Activate Intel VT-d in the kernel

Activate Intel VT-d in the kernel by adding the intel_iommu=on parameter to the kernel line in the /boot/grub/grub.conf file.

The example below is a modified grub.conf file with Intel VT-d activated.

default=0
timeout=5
splashimage=(hd0,0)/grub/splash.xpm.gz
hiddenmenu
title Red Hat Enterprise Linux Server (2.6.32-330.x86_64)
  root (hd0,0)
  kernel /vmlinuz-2.6.32-330.x86_64 ro root=/dev/VolGroup00/LogVol00 rhgb quiet intel_iommu=on
  initrd /initrd-2.6.32-330.x86_64.img

Ready to use

Reboot the system to enable the changes. Your system is now capable of PCI device assignment.

Procedure 12.2. Preparing an AMD system for PCI device assignment

Enable the AMD IOMMU specifications

The AMD IOMMU specifications are required to use PCI device assignment in Red Hat Enterprise Linux. These specifications must be enabled in the BIOS. Some system manufacturers disable these specifications by default.

Enable IOMMU kernel support

Append amd_iommu=on to the kernel command line in /boot/grub/grub.conf so that AMD IOMMU specifications are enabled at boot.

Ready to use

Reboot the system to enable the changes. Your system is now capable of PCI device assignment.
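After the reboot, one way to confirm that the parameter reached the kernel is to look for it on the kernel command line. The sketch below is illustrative: the function name iommu_param_present is hypothetical, and it only checks that intel_iommu=on or amd_iommu=on appears in the given file (by default /proc/cmdline). It does not prove that the BIOS setting is enabled.

```shell
# Hypothetical check: succeeds if intel_iommu=on or amd_iommu=on appears
# as a separate word in the given file (default: the running kernel's
# command line in /proc/cmdline).
iommu_param_present() {
    cmdline_file=${1:-/proc/cmdline}
    grep -Eq '(^|[[:space:]])(intel|amd)_iommu=on([[:space:]]|$)' "$cmdline_file"
}

# Example: report on the running kernel when /proc/cmdline is readable.
if [ -r /proc/cmdline ]; then
    if iommu_param_present; then
        echo "IOMMU parameter found on the kernel command line"
    else
        echo "IOMMU parameter not found; check the kernel line in grub.conf"
    fi
fi
```

The same function can be pointed at /boot/grub/grub.conf before rebooting to check that the edit was saved.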

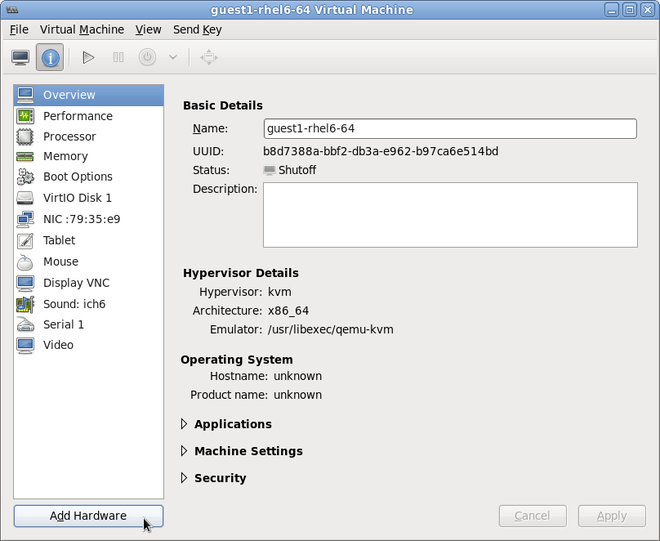

12.1. Assigning a PCI Device with virsh

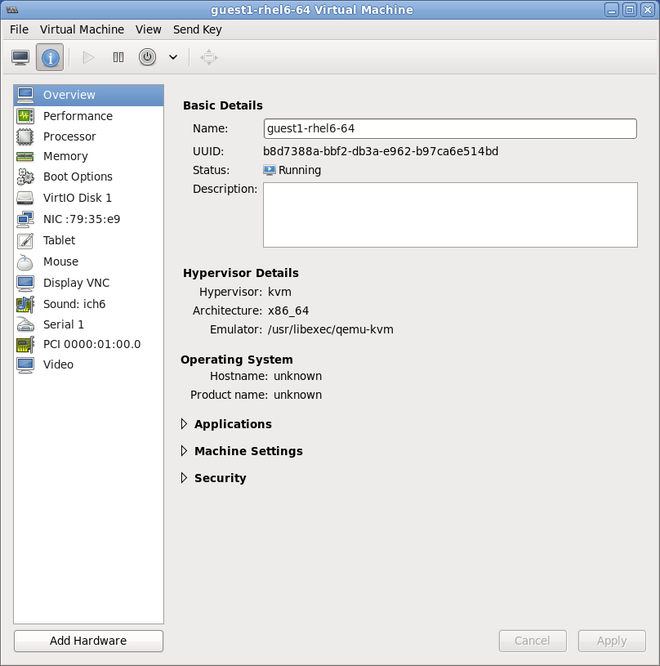

pci_0000_01_00_0, and a fully virtualized guest machine named guest1-rhel6-64.

Procedure 12.3. Assigning a PCI device to a guest virtual machine with virsh

Identify the device

First, identify the PCI device designated for device assignment to the virtual machine. Use the lspci command to list the available PCI devices. You can refine the output of lspci with grep.

This example uses the Ethernet controller highlighted in the following output:

# lspci | grep Ethernet
00:19.0 Ethernet controller: Intel Corporation 82567LM-2 Gigabit Network Connection
01:00.0 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)
01:00.1 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)

This Ethernet controller is shown with the short identifier 00:19.0. We need to find out the full identifier used by virsh in order to assign this PCI device to a virtual machine.

To do so, run the virsh nodedev-list command with the --cap pci option to list all devices of a particular type (pci) that are attached to the host machine. Then look at the output for the string that maps to the short identifier of the device you wish to use.

This example highlights the string that maps to the Ethernet controller with the short identifier 00:19.0. Note that the : and . characters are replaced with underscores in the full identifier.

# virsh nodedev-list --cap pci
pci_0000_00_00_0
pci_0000_00_01_0
pci_0000_00_03_0
pci_0000_00_07_0
pci_0000_00_10_0
pci_0000_00_10_1
pci_0000_00_14_0
pci_0000_00_14_1
pci_0000_00_14_2
pci_0000_00_14_3
pci_0000_00_19_0
pci_0000_00_1a_0
pci_0000_00_1a_1
pci_0000_00_1a_2
pci_0000_00_1a_7
pci_0000_00_1b_0
pci_0000_00_1c_0
pci_0000_00_1c_1
pci_0000_00_1c_4
pci_0000_00_1d_0
pci_0000_00_1d_1
pci_0000_00_1d_2
pci_0000_00_1d_7
pci_0000_00_1e_0
pci_0000_00_1f_0
pci_0000_00_1f_2
pci_0000_00_1f_3
pci_0000_01_00_0
pci_0000_01_00_1
pci_0000_02_00_0
pci_0000_02_00_1
pci_0000_06_00_0
pci_0000_07_02_0
pci_0000_07_03_0

Record the PCI device number that maps to the device you want to use; this is required in other steps.

Review device information

Information on the domain, bus, slot, and function is available from the output of the virsh nodedev-dumpxml command:

# virsh nodedev-dumpxml pci_0000_00_19_0
<device>
  <name>pci_0000_00_19_0</name>
  <parent>computer</parent>
  <driver>
    <name>e1000e</name>
  </driver>
  <capability type='pci'>
    <domain>0</domain>
    <bus>0</bus>
    <slot>25</slot>
    <function>0</function>
    <product id='0x1502'>82579LM Gigabit Network Connection</product>
    <vendor id='0x8086'>Intel Corporation</vendor>
    <capability type='virt_functions'>
    </capability>
  </capability>
</device>

Determine required configuration details

Refer to the output from the virsh nodedev-dumpxml pci_0000_00_19_0 command for the values required for the configuration file.

Optionally, convert the slot and function values from decimal to hexadecimal to get the PCI bus addresses. Prepend "0x" to the output to indicate that the value is a hexadecimal number.

The example device has the following values: bus = 0, slot = 25 and function = 0. The decimal configuration uses those three values:

bus='0' slot='25' function='0'

If you want to convert to hexadecimal values, you can use the printf utility to convert from decimal values, as shown in the following example:

$ printf %x 0
0
$ printf %x 25
19
$ printf %x 0
0

The example device would use the following hexadecimal values in the configuration file:

bus='0x0' slot='0x19' function='0x0'
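The three printf conversions can be combined into one step. The following is a minimal sketch; the function name pci_addr_attrs is hypothetical, and it expects the decimal bus, slot and function values reported by virsh nodedev-dumpxml.

```shell
# Hypothetical helper: takes decimal bus, slot and function values and
# prints them as hexadecimal attributes with the 0x prefix.
pci_addr_attrs() {
    printf "bus='0x%x' slot='0x%x' function='0x%x'\n" "$1" "$2" "$3"
}

# Example with the values of the device used in this procedure:
pci_addr_attrs 0 25 0
# prints: bus='0x0' slot='0x19' function='0x0'
```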

Add configuration details

Run virsh edit, specifying the virtual machine name, and add a device entry in the <devices> section to assign the PCI device to the guest virtual machine.

    # virsh edit guest1-rhel6-64
    <hostdev mode='subsystem' type='pci' managed='yes'>
      <source>
        <address domain='0x0' bus='0x0' slot='0x19' function='0x0'/>
      </source>
    </hostdev>

Alternately, run virsh attach-device, specifying the virtual machine name and the XML file that contains the device entry:

    # virsh attach-device guest1-rhel6-64 file.xml

Allow device management

Set an SELinux boolean to allow the management of the PCI device from the virtual machine:

    # setsebool -P virt_use_sysfs 1

Start the virtual machine

# virsh start guest1-rhel6-64
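The device entry from the "Add configuration details" step can also be generated rather than typed by hand. The following is a sketch under stated assumptions: hostdev_xml is a hypothetical helper name, its arguments are the decimal domain, bus, slot, and function values from virsh nodedev-dumpxml, and managed mode is assumed as in the example above.

```shell
#!/bin/sh
# hostdev_xml DOMAIN BUS SLOT FUNCTION  (hypothetical helper name)
# Prints a <hostdev> entry in managed mode, converting the decimal
# values from virsh nodedev-dumpxml to hexadecimal.
hostdev_xml() {
    printf "<hostdev mode='subsystem' type='pci' managed='yes'>\n"
    printf "  <source>\n"
    printf "    <address domain='0x%x' bus='0x%x' slot='0x%x' function='0x%x'/>\n" \
        "$1" "$2" "$3" "$4"
    printf "  </source>\n"
    printf "</hostdev>\n"
}

# Example device: domain 0, bus 0, slot 25, function 0
hostdev_xml 0 0 25 0
```

Redirecting the output to file.xml produces a file that could be passed to virsh attach-device as described in the procedure.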

12.2. Assigning a PCI Device with virt-manager

PCI devices can be added to guest virtual machines with the virt-manager tool. The following procedure adds a Gigabit Ethernet controller to a guest virtual machine.

Procedure 12.4. Assigning a PCI device to a guest virtual machine using virt-manager

Open the hardware settings

Open the guest virtual machine and click the Add Hardware button to add a new device to the virtual machine.

Figure 12.1. The virtual machine hardware information window

Select a PCI device

Select PCI Host Device from the Hardware list on the left.

Select an unused PCI device. Note that selecting PCI devices presently in use on the host causes errors. In this example, a spare 82576 network device is used. Click Finish to complete setup.

Figure 12.2. The Add new virtual hardware wizard

Add the new device

The setup is complete and the guest virtual machine now has direct access to the PCI device.

Figure 12.3. The virtual machine hardware information window

12.3. Assigning a PCI Device with virt-install

To assign a PCI device to a guest virtual machine with virt-install, use the --host-device parameter.

Procedure 12.5. Assigning a PCI device to a virtual machine with virt-install

Identify the device

Identify the PCI device designated for device assignment to the guest virtual machine.

    # lspci | grep Ethernet
    00:19.0 Ethernet controller: Intel Corporation 82567LM-2 Gigabit Network Connection
    01:00.0 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)
    01:00.1 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)

The virsh nodedev-list command lists all devices attached to the system, and identifies each PCI device with a string. To limit output to only PCI devices, run the following command:

    # virsh nodedev-list --cap pci
    pci_0000_00_00_0
    pci_0000_00_01_0
    pci_0000_00_03_0
    pci_0000_00_07_0
    pci_0000_00_10_0
    pci_0000_00_10_1
    pci_0000_00_14_0
    pci_0000_00_14_1
    pci_0000_00_14_2
    pci_0000_00_14_3
    pci_0000_00_19_0
    pci_0000_00_1a_0
    pci_0000_00_1a_1
    pci_0000_00_1a_2
    pci_0000_00_1a_7
    pci_0000_00_1b_0
    pci_0000_00_1c_0
    pci_0000_00_1c_1
    pci_0000_00_1c_4
    pci_0000_00_1d_0
    pci_0000_00_1d_1
    pci_0000_00_1d_2
    pci_0000_00_1d_7
    pci_0000_00_1e_0
    pci_0000_00_1f_0
    pci_0000_00_1f_2
    pci_0000_00_1f_3
    pci_0000_01_00_0
    pci_0000_01_00_1
    pci_0000_02_00_0
    pci_0000_02_00_1
    pci_0000_06_00_0
    pci_0000_07_02_0
    pci_0000_07_03_0
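The short identifier printed by lspci maps to the string used by virsh by replacing the : and . characters with underscores and adding a pci_ prefix and the PCI domain. A minimal sketch of that mapping follows; lspci_to_nodedev is a hypothetical helper name, and the domain is assumed to be 0000 (as in every entry of the listing above) unless given as a second argument.

```shell
#!/bin/sh
# lspci_to_nodedev SHORT_ID [DOMAIN]  (hypothetical helper name)
# SHORT_ID is the bus:slot.function identifier printed by lspci.
# DOMAIN is assumed to be 0000 when it is not supplied.
lspci_to_nodedev() {
    printf 'pci_%s_%s\n' "${2:-0000}" "$(printf '%s' "$1" | tr ':.' '__')"
}

# The 82576 controller used in this procedure
lspci_to_nodedev 01:00.0
```

The result should still be checked against the actual virsh nodedev-list output, since the sketch only rewrites the string and does not query the host.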

Record the PCI device number; the number is needed in other steps.

Information on the domain, bus, slot, and function is available from the output of the virsh nodedev-dumpxml command:

    # virsh nodedev-dumpxml pci_0000_01_00_0
    <device>
      <name>pci_0000_01_00_0</name>
      <parent>pci_0000_00_01_0</parent>
      <driver>
        <name>igb</name>
      </driver>
      <capability type='pci'>
        <domain>0</domain>
        <bus>1</bus>
        <slot>0</slot>
        <function>0</function>
        <product id='0x10c9'>82576 Gigabit Network Connection</product>
        <vendor id='0x8086'>Intel Corporation</vendor>
        <capability type='virt_functions'>
        </capability>
      </capability>
    </device>

Add the device

Use the PCI identifier output from the virsh nodedev-list command as the value for the --host-device parameter.

    virt-install \
    --name=guest1-rhel6-64 \
    --disk path=/var/lib/libvirt/images/guest1-rhel6-64.img,size=8 \
    --nonsparse --graphics spice \
    --vcpus=2 --ram=2048 \
    --location=http://example1.com/installation_tree/RHEL6.1-Server-x86_64/os \
    --nonetworks \
    --os-type=linux \
    --os-variant=rhel6 \
    --host-device=pci_0000_01_00_0

Complete the installation

Complete the guest installation. The PCI device should be attached to the guest.

12.4. Detaching an Assigned PCI Device

A PCI device that has been assigned to a guest can be detached from the guest with virsh or virt-manager so it is available for host use.

Procedure 12.6. Detaching a PCI device from a guest with virsh

Detach the device

Use the following command to detach the PCI device from the guest by removing it in the guest's XML file:

    # virsh detach-device name_of_guest file.xml

Re-attach the device to the host (optional)

If the device is in managed mode, skip this step. The device will be returned to the host automatically.

If the device is not using managed mode, use the following command to re-attach the PCI device to the host machine:

    # virsh nodedev-reattach device

For example, to re-attach the pci_0000_01_00_0 device to the host:

    # virsh nodedev-reattach pci_0000_01_00_0

The device is now available for host use.
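Whether the optional re-attach step is needed depends on the managed attribute of the device entry. As a rough sketch, the check could be scripted as follows; needs_reattach is a hypothetical helper name, and it assumes the <hostdev> opening tag and its managed attribute sit on one line of the device XML file.

```shell
#!/bin/sh
# needs_reattach FILE  (hypothetical helper name)
# Succeeds (exit status 0) when the <hostdev> entry in FILE is not in
# managed mode, meaning virsh nodedev-reattach must be run by hand.
needs_reattach() {
    if grep -q "<hostdev[^>]*managed=['\"]yes['\"]" "$1"; then
        return 1    # managed mode: the device returns to the host automatically
    fi
    return 0        # not managed: re-attach manually
}

# Demonstration with a temporary device file that is not in managed mode
tmp=$(mktemp)
printf "<hostdev mode='subsystem' type='pci' managed='no'>\n</hostdev>\n" > "$tmp"
if needs_reattach "$tmp"; then
    echo "not managed: run virsh nodedev-reattach for this device"
fi
rm -f "$tmp"
```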

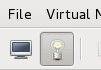

Procedure 12.7. Detaching a PCI Device from a guest with virt-manager

Open the virtual hardware details screen

In virt-manager, double-click on the virtual machine that contains the device. Select the Show virtual hardware details button to display a list of virtual hardware.

Figure 12.4. The virtual hardware details button

Select and remove the device

Select the PCI device to be detached from the list of virtual devices in the left panel.

Figure 12.5. Selecting the PCI device to be detached

Click the Remove button to confirm. The device is now available for host use.

12.5. PCI Device Restrictions

- Each virtual machine supports a maximum of 8 assigned device functions.

- 4 PCI device slots are configured with 5 emulated devices (two devices are in slot 1) by default. However, users can explicitly remove 2 of the emulated devices that are configured by default if the guest operating system does not require them for operation (the video adapter device in slot 2; and the memory balloon driver device in the lowest available slot, usually slot 3). This gives users a supported functional maximum of 30 PCI device slots per virtual machine.

- PCI device assignment (attaching PCI devices to virtual machines) requires host systems to have AMD IOMMU or Intel VT-d support to enable device assignment of PCIe devices.

- For parallel/legacy PCI, only single devices behind a PCI bridge are supported.

- Multiple PCIe endpoints connected through a non-root PCIe switch require ACS support in the PCIe bridges of the PCIe switch. To disable this restriction, edit the /etc/libvirt/qemu.conf file and insert the line: relaxed_acs_check=1

- Red Hat Enterprise Linux 6 has limited PCI configuration space access by guest device drivers. This limitation could cause drivers that are dependent on PCI configuration space to fail configuration.

- Red Hat Enterprise Linux 6.2 introduced interrupt remapping as a requirement for PCI device assignment. If your platform does not provide support for interrupt remapping, circumvent the KVM check for this support with the following command as the root user at the command line prompt:

# echo 1 > /sys/module/kvm/parameters/allow_unsafe_assigned_interrupts

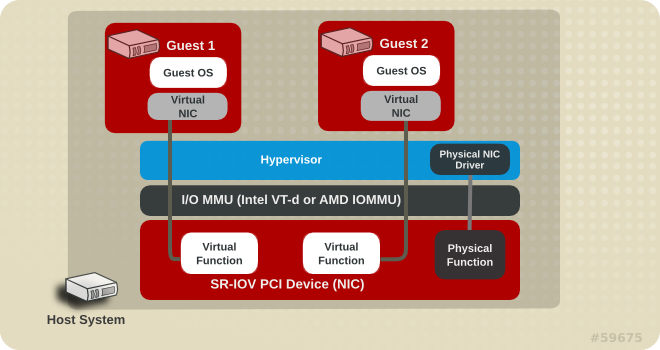

Chapter 13. SR-IOV

13.1. Introduction

Figure 13.1. How SR-IOV works

- Physical Functions (PFs) are full PCIe devices that include the SR-IOV capabilities. Physical Functions are discovered, managed, and configured as normal PCI devices. Physical Functions configure and manage the SR-IOV functionality by assigning Virtual Functions.

- Virtual Functions (VFs) are simple PCIe functions that only process I/O. Each Virtual Function is derived from a Physical Function. The number of Virtual Functions a device may have is limited by the device hardware. A single Ethernet port, the Physical Device, may map to many Virtual Functions that can be shared to virtual machines.

SR-IOV devices can share a single physical port with multiple virtual machines.

13.2. Using SR-IOV

A Virtual Function can be assigned to a virtual machine by adding a device entry in <hostdev> with the virsh edit or virsh attach-device command. However, this can be problematic because, unlike a regular network device, an SR-IOV VF network device does not have a permanent unique MAC address and is assigned a new MAC address each time the host is rebooted. Because of this, even if the guest is assigned the same VF after a host reboot, the guest sees its network adapter with a new MAC address. As a result, the guest believes there is new hardware connected each time, and will usually require re-configuration of the guest's network settings.

To avoid this problem, libvirt provides the <interface type='hostdev'> interface device. Using this interface device, libvirt will first perform any network-specific hardware/switch initialization indicated (such as setting the MAC address, VLAN tag, or 802.1Qbh virtualport parameters), then perform the PCI device assignment to the guest.

Using the <interface type='hostdev'> interface device requires:

- an SR-IOV-capable network card,

- host hardware that supports either the Intel VT-d or the AMD IOMMU extensions, and

- the PCI address of the VF to be assigned.
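Given the PCI address of a VF, an <interface type='hostdev'> entry can be assembled as in the following sketch. The helper name vf_interface_xml is hypothetical, the address 0b:10.0 is only an example value, the PCI domain is assumed to be 0x0000, and managed mode is assumed. Optional child elements such as a MAC address or VLAN tag are left out.

```shell
#!/bin/sh
# vf_interface_xml VF_ADDRESS  (hypothetical helper name)
# VF_ADDRESS is the bus:slot.function identifier of the Virtual Function
# as printed by lspci (hexadecimal). The PCI domain is assumed to be 0.
vf_interface_xml() {
    bus=${1%%:*}        # text before the colon
    rest=${1#*:}        # slot.function
    slot=${rest%%.*}    # text before the dot
    func=${rest#*.}     # text after the dot
    printf "<interface type='hostdev' managed='yes'>\n"
    printf "  <source>\n"
    printf "    <address type='pci' domain='0x0000' bus='0x%s' slot='0x%s' function='0x%s'/>\n" \
        "$bus" "$slot" "$func"
    printf "  </source>\n"
    printf "</interface>\n"
}

vf_interface_xml 0b:10.0
```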

Important

Procedure 13.1. Attach an SR-IOV network device on an Intel or AMD system

Enable Intel VT-d or the AMD IOMMU specifications in the BIOS and kernel

On an Intel system, enable Intel VT-d in the BIOS if it is not enabled already. Refer to Procedure 12.1, “Preparing an Intel system for PCI device assignment” for procedural help on enabling Intel VT-d in the BIOS and kernel.

Skip this step if Intel VT-d is already enabled and working.

On an AMD system, enable the AMD IOMMU specifications in the BIOS if they are not enabled already. Refer to Procedure 12.2, “Preparing an AMD system for PCI device assignment” for procedural help on enabling IOMMU in the BIOS.

Verify support

Verify that the PCI device with SR-IOV capabilities is detected. This example lists an Intel 82576 network interface card which supports SR-IOV. Use the lspci command to verify whether the device was detected.

    # lspci
    03:00.0 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)
    03:00.1 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)

Note that the output has been modified to remove all other devices.

Start the SR-IOV kernel modules

If the device is supported, the driver kernel module should be loaded automatically by the kernel. Optional parameters can be passed to the module using the modprobe command. The Intel 82576 network interface card uses the igb driver kernel module.

    # modprobe igb [<option>=<VAL1>,<VAL2>,]
    # lsmod |grep igb
    igb 87592 0
    dca 6708 1 igb

Activate Virtual Functions

The max_vfs parameter of the igb module sets the maximum number of Virtual Functions; the driver spawns up to that many Virtual Functions. For this particular card the valid range is 0 to 7.

Remove the module to change the variable.

    # modprobe -r igb

Restart the module with max_vfs set to 7 or any number of Virtual Functions up to the maximum supported by your device.

    # modprobe igb max_vfs=7
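Because the valid range depends on the device, it can help to check the requested value before reloading the module. The following sketch only prints the modprobe command instead of running it; igb_modprobe_cmd is a hypothetical helper name, and the default maximum of 7 is the value stated above for the example card.

```shell
#!/bin/sh
# igb_modprobe_cmd COUNT [MAX]  (hypothetical helper name)
# Validates COUNT against the device maximum (7 for the example card
# when MAX is omitted) and prints the command that would load the module.
igb_modprobe_cmd() {
    max=${2:-7}
    case $1 in
        ''|*[!0-9]*)
            echo "max_vfs must be a non-negative integer" >&2
            return 1
            ;;
    esac
    if [ "$1" -gt "$max" ]; then
        echo "max_vfs=$1 exceeds the device maximum of $max" >&2
        return 1
    fi
    echo "modprobe igb max_vfs=$1"
}

igb_modprobe_cmd 7
```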

Make the Virtual Functions persistent

Add the line options igb max_vfs=7 to any file in /etc/modprobe.d to make the Virtual Functions persistent. For example:

    # echo "options igb max_vfs=7" >>/etc/modprobe.d/igb.conf
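Appending with >> adds a duplicate line each time the command is repeated. A minimal sketch of an idempotent alternative is shown below; persist_option is a hypothetical helper name, and the demonstration writes to a temporary file rather than to /etc/modprobe.d.

```shell
#!/bin/sh
# persist_option LINE FILE  (hypothetical helper name)
# Appends LINE to FILE only if an identical line is not already present.
persist_option() {
    touch "$2" || return 1
    grep -qxF "$1" "$2" || printf '%s\n' "$1" >> "$2"
}

# Demonstration against a temporary file: the second call adds nothing
conf=$(mktemp)
persist_option "options igb max_vfs=7" "$conf"
persist_option "options igb max_vfs=7" "$conf"
cat "$conf"
rm -f "$conf"
```

The sketch does not handle an existing line with a different max_vfs value; that case would still need to be edited by hand.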

Inspect the new Virtual Functions

Using the lspci command, list the newly added Virtual Functions attached to the Intel 82576 network device. (Alternatively, use grep to search for Virtual Function to list only the Virtual Functions.)

    # lspci | grep 82576
    0b:00.0 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)
    0b:00.1 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)
    0b:10.0 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.1 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.2 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.3 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.4 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.5 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.6 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:10.7 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.0 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.1 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.2 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.3 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.4 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
    0b:11.5 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)
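To confirm that the expected number of Virtual Functions was created, the lspci output can be counted rather than read by eye. A small sketch, assuming the Virtual Functions are described with the text "Virtual Function" as in the listing above; count_vfs is a hypothetical helper name, and the demonstration feeds it three sample lines instead of live lspci output.

```shell
#!/bin/sh
# count_vfs  (hypothetical helper name)
# Reads lspci output on standard input and prints the number of lines
# that describe a Virtual Function.
count_vfs() {
    grep -c 'Virtual Function'
}

# Demonstration with sample lines; on a real host use: lspci | count_vfs
printf '%s\n' \
    '0b:00.0 Ethernet controller: Intel Corporation 82576 Gigabit Network Connection (rev 01)' \
    '0b:10.0 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)' \
    '0b:10.1 Ethernet controller: Intel Corporation 82576 Virtual Function (rev 01)' \
    | count_vfs
```

With the three sample lines the sketch prints 2, because only two of them describe a Virtual Function.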

The identifier for the PCI device is found with the -n parameter of the lspci command. The Physical Functions correspond to 0b:00.0 and 0b:00.1. All Virtual Functions have Virtual Function in the description.

Verify devices exist with virsh