Chapter 8. Configuring Compute nodes for performance

You can configure the scheduling and placement of instances for optimal performance by creating customized flavors that target specialized workloads, such as Network Functions Virtualization (NFV) and High Performance Computing (HPC).

Use the following features to tune your instances for optimal performance:

- CPU pinning: Pin virtual CPUs to physical CPUs.

- Emulator threads: Pin emulator threads associated with the instance to physical CPUs.

- Huge pages: Tune instance memory allocation policies both for normal memory (4k pages) and huge pages (2 MB or 1 GB pages).

Configuring any of these features creates an implicit NUMA topology on the instance if there is no NUMA topology already present.

For more information about NFV and hyper-converged infrastructure (HCI) deployments, see Deploying an overcloud with HCI and DPDK in the Network Functions Virtualization Planning and Configuration Guide.

8.1. Configuring CPU pinning with NUMA

This section describes how to use NUMA topology awareness to configure an OpenStack environment on systems with a NUMA architecture. The procedures in this section show you how to pin virtual machines (VMs) to dedicated CPU cores, which improves scheduling and VM performance.

Background information about NUMA is available in the following article: What is NUMA and how does it work on Linux?

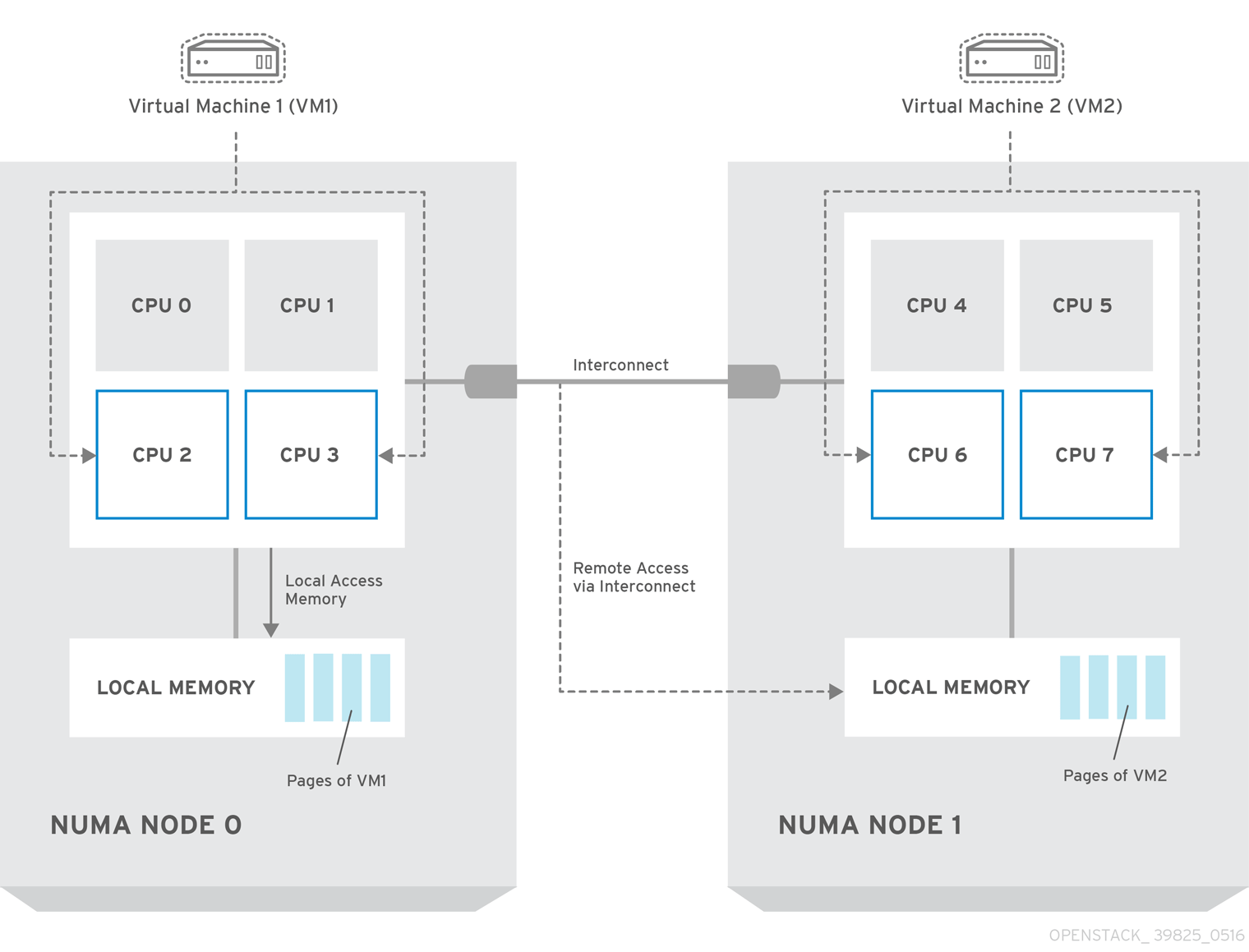

The following diagram provides an example of a two-node NUMA system and the way the CPU cores and memory pages are made available:

Remote memory, which is reached through the interconnect, is accessed only if VM1 on NUMA node 0 has a CPU core in NUMA node 1. In that case, the memory of NUMA node 1 is local to the third CPU core of VM1 (for example, if VM1 is allocated CPU 4 in the diagram), but it is remote memory for the other CPU cores of the same VM.

For more details on NUMA tuning with libvirt, see the Virtualization Tuning and Optimization Guide.

8.1.1. Compute node configuration

The exact configuration depends on the NUMA topology of your host system. However, you must reserve some CPU cores across all the NUMA nodes for host processes and let the rest of the CPU cores handle your virtual machines (VMs). The following example illustrates the layout of eight CPU cores evenly spread across two NUMA nodes.

Table 8.1. Example of NUMA topology

| | Node 0 | Node 0 | Node 1 | Node 1 |
|---|---|---|---|---|
| Host processes | Core 0 | Core 1 | Core 4 | Core 5 |
| Instances | Core 2 | Core 3 | Core 6 | Core 7 |

Determine the number of cores to reserve for host processes by observing the performance of the host under typical workloads.
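Before choosing which cores to reserve, it can help to see how the host's CPUs are distributed across NUMA nodes. The following is a minimal sketch, assuming a Linux host that exposes its topology under /sys/devices/system/node. The function name `list_numa_cpus` and its optional directory argument are hypothetical helpers introduced for this example and are not part of any OpenStack tooling.

```shell
#!/bin/sh
# Print the CPU list of each NUMA node, one node per line.
# The optional argument overrides the sysfs node directory; it exists only
# so that the function can be exercised against a test directory.
list_numa_cpus() {
    node_root="${1:-/sys/devices/system/node}"
    for node_dir in "$node_root"/node[0-9]*; do
        # Skip the unexpanded pattern when no NUMA nodes are exposed.
        [ -r "$node_dir/cpulist" ] || continue
        printf '%s: %s\n' "$(basename "$node_dir")" "$(cat "$node_dir/cpulist")"
    done
}

list_numa_cpus
```

Each output line pairs a node name with the kernel's CPU list for that node, which you can compare against the split between host processes and instances that you plan to configure.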

Procedure

Reserve CPU cores for the VMs by setting the NovaVcpuPinSet configuration in the Compute environment file:

NovaVcpuPinSet: 2,3,6,7

Set the NovaReservedHostMemory option in the same file to the amount of RAM to reserve for host processes. For example, to reserve 512 MB, use:

NovaReservedHostMemory: 512

To ensure that host processes do not run on the CPU cores reserved for VMs, set the IsolCpusList parameter in the Compute environment file to the CPU cores that you reserved for VMs. Specify the value of the IsolCpusList parameter as a list of CPU indices or ranges, separated by whitespace. For example:

IsolCpusList: 2 3 6 7

Note: The IsolCpusList parameter and the isolcpus parameter are different parameters for separate purposes:

- IsolCpusList: Use this heat parameter to set isolated_cores in tuned.conf using the cpu-partitioning profile.

- isolcpus: This is a kernel boot parameter that you set with the KernelArgs heat parameter.

Do not use the IsolCpusList parameter and the isolcpus parameter interchangeably.

Tip: To set IsolCpusList in non-NFV roles, you must configure KernelArgs and IsolCpusList, and include the /usr/share/openstack-tripleo-heat-templates/environments/host-config-and-reboot.yaml environment file in the overcloud deployment. Contact Red Hat Support if you plan to deploy with config-download and configure IsolCpusList for non-NFV roles.

To apply this configuration, deploy the overcloud:

(undercloud) $ openstack overcloud deploy --templates \
  -e /home/stack/templates/<compute_environment_file>.yaml
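The three parameters from this procedure might sit together in one environment file. The following sketch writes such a file, under stated assumptions: the `parameter_defaults` wrapper is the usual heat environment layout but is not shown in this section, the file name `compute-pinning.yaml` and the function `write_pinning_env` are hypothetical, and the values are quoted only to keep them as YAML strings. Your deployment might scope these parameters to a specific role instead.

```shell
#!/bin/sh
# Write a minimal Compute environment file with the three parameters from
# the procedure. ASSUMPTION: file name and top-level layout are illustrative.
write_pinning_env() {
    env_file="${1:-compute-pinning.yaml}"
    cat > "$env_file" <<'EOF'
parameter_defaults:
  # Cores handed to instances in the example layout.
  NovaVcpuPinSet: "2,3,6,7"
  # Memory, in MB, kept back for host processes.
  NovaReservedHostMemory: 512
  # The same cores, whitespace-separated, isolated from host processes.
  IsolCpusList: "2 3 6 7"
EOF
}

write_pinning_env compute-pinning.yaml
```

You would then pass the generated file to the deploy command with the `-e` option, in place of `<compute_environment_file>.yaml`.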

8.1.2. Configuring emulator threads to run on dedicated physical CPU

The Compute scheduler determines the CPU resource utilization and places instances based on the number of virtual CPUs (vCPUs) in the flavor. Several hypervisor operations are performed on the host on behalf of the guest instance. For example, QEMU uses threads for its main event loop and for asynchronous I/O operations. These operations must be accounted for and scheduled separately.

The libvirt driver implements a generic placement policy for KVM that allows QEMU emulator threads to float across the same physical CPUs (pCPUs) that the vCPUs run on. As a result, the emulator threads use time borrowed from vCPU operations. When a guest needs dedicated vCPU allocation, you must allocate one or more pCPUs for emulator threads. You must also describe to the scheduler any other CPU usage that might be associated with the guest, so that it is accounted for during placement.

In an NFV deployment, to avoid packet loss, you have to make sure that the vCPUs are never preempted.

Before you enable the emulator threads placement policy on a flavor, check that the following heat parameters are defined as follows:

- NovaComputeCpuSharedSet: Set this parameter to a list of CPUs defined to run emulator threads.

- NovaSchedulerDefaultFilters: Include NUMATopologyFilter in the list of defined filters.

You can define or change heat parameter values on an active cluster, and then redeploy for those changes to take effect.

To isolate emulator threads, you must use a flavor configured as follows:

# openstack flavor set FLAVOR-NAME \
  --property hw:cpu_policy=dedicated \
  --property hw:emulator_threads_policy=share
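For the flavor setting above to have somewhere to place emulator threads, the NovaComputeCpuSharedSet parameter needs a value in an environment file. The sketch below writes a fragment that might serve, and everything specific in it is an assumption: the file name `emulator-threads.yaml`, the function `write_emulator_env`, the `parameter_defaults` wrapper, the comma-separated value format, and the choice to reuse the host-process cores (0, 1, 4, 5) from the earlier example layout. Check the parameter definition in your heat templates before relying on this format.

```shell
#!/bin/sh
# Write an environment fragment naming the CPUs that run emulator threads.
# ASSUMPTION: file name, value format, and CPU numbers are illustrative only.
write_emulator_env() {
    env_file="${1:-emulator-threads.yaml}"
    cat > "$env_file" <<'EOF'
parameter_defaults:
  # CPUs on which emulator threads may float when the flavor sets
  # hw:emulator_threads_policy=share.
  NovaComputeCpuSharedSet: "0,1,4,5"
EOF
}

write_emulator_env emulator-threads.yaml
```

As with any heat parameter change, the value takes effect only after you redeploy the overcloud with the file included.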

8.1.3. Scheduler configuration

Procedure

- Open your Compute environment file.

- Add the following values to the NovaSchedulerDefaultFilters parameter, if they are not already present:

  - NUMATopologyFilter

  - AggregateInstanceExtraSpecsFilter

- Save the configuration file.

- Deploy the overcloud.
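To see whether either filter is already present before you edit the file, a quick text search can be enough. The following sketch assumes that the filters are listed by name somewhere in the environment file. The function name `missing_scheduler_filters` is hypothetical, and because it is a plain text match, it also counts commented-out lines as present.

```shell
#!/bin/sh
# Print each required scheduler filter that does not appear in the file.
# Prints nothing when both filters are already listed.
missing_scheduler_filters() {
    env_file="$1"
    for filter in NUMATopologyFilter AggregateInstanceExtraSpecsFilter; do
        grep -q -- "$filter" "$env_file" || echo "$filter"
    done
}

# Usage (replace the path with your own Compute environment file):
#   missing_scheduler_filters /path/to/environment-file.yaml
```

Any name that the function prints still needs to be added to the NovaSchedulerDefaultFilters list, alongside the filters that your deployment already defines.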

8.1.4. Aggregate and flavor configuration

Configure host aggregates to deploy instances that use CPU pinning on different hosts from instances that do not. This prevents unpinned instances from consuming the resources that pinned instances require.

Do not deploy instances with NUMA topology on the same hosts as instances that do not have NUMA topology.

Prepare your OpenStack environment for running virtual machine instances pinned to specific resources by completing the following steps on a system with the Compute CLI.

Procedure

Load the admin credentials:

source ~/keystonerc_admin

Create an aggregate for the hosts that will receive pinning requests:

nova aggregate-create <aggregate-name-pinned>

Enable the pinning by editing the metadata for the aggregate:

nova aggregate-set-metadata <aggregate-pinned-UUID> pinned=true

Create an aggregate for other hosts:

nova aggregate-create <aggregate-name-unpinned>

Edit the metadata for this aggregate accordingly:

nova aggregate-set-metadata <aggregate-unpinned-UUID> pinned=false

Set the pinned specification to false on all of your existing flavors, so that instances based on them are scheduled to the unpinned aggregate:

for i in $(nova flavor-list | cut -f 2 -d ' ' | grep -o '[0-9]*'); do
  nova flavor-key "$i" set "aggregate_instance_extra_specs:pinned"="false"
done

Create a flavor for the hosts that will receive pinning requests:

nova flavor-create <flavor-name-pinned> <flavor-ID> <RAM> <disk-size> <vCPUs>

Where:

- <flavor-ID> - Set to auto if you want nova to generate a UUID.

- <RAM> - Specify the required RAM in MB.

- <disk-size> - Specify the required disk size in GB.

- <vCPUs> - The number of virtual CPUs that you want to reserve.

Set the hw:cpu_policy specification of this flavor to dedicated to require dedicated resources, which enables CPU pinning. Also set the hw:cpu_thread_policy specification to require, which places each vCPU on thread siblings:

nova flavor-key <flavor-name-pinned> set hw:cpu_policy=dedicated
nova flavor-key <flavor-name-pinned> set hw:cpu_thread_policy=require

Note: If the host does not have an SMT architecture or enough CPU cores with free thread siblings, scheduling fails. If this behavior is undesired, or if your hosts do not have an SMT architecture, do not use the hw:cpu_thread_policy specification, or set it to prefer instead of require. The default prefer policy ensures that thread siblings are used when available.

Set the aggregate_instance_extra_specs:pinned specification to "true" so that instances based on this flavor are scheduled only to hosts in an aggregate whose metadata has pinned=true:

nova flavor-key <flavor-name-pinned> set aggregate_instance_extra_specs:pinned=true

Add some hosts to the new aggregates:

nova aggregate-add-host <aggregate-pinned-UUID> <host_name>
nova aggregate-add-host <aggregate-unpinned-UUID> <host_name>

Boot an instance using the new flavor:

nova boot --image <image-name> --flavor <flavor-name-pinned> <server-name>

To verify that the new server has been placed correctly, run the following command and check for OS-EXT-SRV-ATTR:hypervisor_hostname in the output:

nova show <server-name>
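If you check placement often, you can pull the hostname out of the table instead of reading it by eye. This is a minimal sketch, assuming the pipe-delimited two-column table that `nova show` prints by default. The helper name `hypervisor_of` is hypothetical.

```shell
#!/bin/sh
# Read `nova show` table output on stdin and print the value found in the
# OS-EXT-SRV-ATTR:hypervisor_hostname row.
hypervisor_of() {
    awk -F'|' '$2 ~ /OS-EXT-SRV-ATTR:hypervisor_hostname/ {
        gsub(/^[ \t]+|[ \t]+$/, "", $3)   # trim padding around the value
        print $3
    }'
}

# Usage:
#   nova show <server-name> | hypervisor_of
```

Compare the printed hostname with the hosts that you added to the pinned aggregate to confirm that the instance landed where you intended.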