-

Language:

English

-

Language:

English

Red Hat Training

A Red Hat training course is available for Red Hat Gluster Storage

Installation Guide

Installing Red Hat Storage 3

Abstract

Chapter 1. Introduction

Red Hat Storage Server for On-Premise enables enterprises to treat physical storage as a virtualized, scalable, and centrally managed pool of storage by using commodity server and storage hardware.

Red Hat Storage Server for Public Cloud packages GlusterFS as an Amazon Machine Image (AMI) for deploying scalable NAS in the AWS public cloud. This powerful storage server provides a highly available, scalable, virtualized, and centrally managed pool of storage for Amazon users.

Chapter 2. Obtaining Red Hat Storage

2.1. Obtaining Red Hat Storage Server for On-Premise

- Visit the Red Hat Customer Service Portal at https://access.redhat.com/login and enter your user name and password to log in.

- Click Downloads to visit the Software & Download Center.

- In the Red Hat Storage Server area, click Download Software to download the latest version of the software.

2.2. Obtaining Red Hat Storage Server for Public Cloud

Chapter 3. Planning Red Hat Storage Installation

3.1. Prerequisites

XFS - Format the back-end file system using XFS for glusterFS bricks. XFS can journal metadata, resulting in faster crash recovery. The XFS file system can also be defragmented and expanded while mounted and active.

Note

ext3 or ext4 to upgrade to a supported version of Red Hat Storage using the XFS back-end file system.

Format glusterFS bricks using XFS on the Logical Volume Manager to prepare for the installation.

- Synchronize time across all Red Hat Storage servers using the Network Time Protocol (NTP) daemon.

3.1.1. Network Time Protocol Setup

ntpd daemon to automatically synchronize the time during the boot process as follows:

- Edit the NTP configuration file

/etc/ntp.confusing a text editor such as vim or nano.# nano /etc/ntp.conf

- Add or edit the list of public NTP servers in the

ntp.conffile as follows:server 0.rhel.pool.ntp.org server 1.rhel.pool.ntp.org server 2.rhel.pool.ntp.org

The Red Hat Enterprise Linux 6 version of this file already contains the required information. Edit the contents of this file if customization is required. - Optionally, increase the initial synchronization speed by appending the

iburstdirective to each line:server 0.rhel.pool.ntp.org iburst server 1.rhel.pool.ntp.org iburst server 2.rhel.pool.ntp.org iburst

- After the list of servers is complete, set the required permissions in the same file. Ensure that only

localhosthas unrestricted access:restrict default kod nomodify notrap nopeer noquery restrict -6 default kod nomodify notrap nopeer noquery restrict 127.0.0.1 restrict -6 ::1

- Save all changes, exit the editor, and restart the NTP daemon:

# service ntpd restart

- Ensure that the

ntpddaemon starts at boot time:# chkconfig ntpd on

ntpdate command for a one-time synchronization of NTP. For more information about this feature, see the Red Hat Enterprise Linux Deployment Guide.

3.2. Hardware Compatibility

3.3. Port Information

Table 3.1. TCP Port Numbers

| Port Number | Usage |

|---|---|

| 22 | For sshd used by geo-replication. |

| 111 | For rpc port mapper. |

| 139 | For netbios service. |

| 445 | For CIFS protocol. |

| 965 | For NFS's Lock Manager (NLM). |

| 2049 | For glusterFS's NFS exports (nfsd process). |

| 24007 | For glusterd (for management). |

| 24009 - 24108 | For client communication with Red Hat Storage 2.0. |

| 38465 | For NFS mount protocol. |

| 38466 | For NFS mount protocol. |

| 38468 | For NFS's Lock Manager (NLM). |

| 38469 | For NFS's ACL support. |

| 39543 | For oVirt (Red Hat Storage-Console). |

| 49152 - 49251 | For client communication with Red Hat Storage 2.1 and for brick processes depending on the availability of the ports. The total number of ports required to be open depends on the total number of bricks exported on the machine. |

| 55863 | For oVirt (Red Hat Storage-Console). |

Table 3.2. TCP Port Numbers used for Object Storage (Swift)

| Port Number | Usage |

|---|---|

| 443 | For HTTPS request. |

| 6010 | For Object Server. |

| 6011 | For Container Server. |

| 6012 | For Account Server. |

| 8080 | For Proxy Server. |

Table 3.3. TCP Port Numbers for Nagios Monitoring

| Port Number | Usage |

|---|---|

| 80 | For HTTP protocol (required only if Nagios server is running on a Red Hat Storage node). |

| 443 | For HTTPS protocol (required only for Nagios server). |

| 5667 | For NSCA service (required only if Nagios server is running on a Red Hat Storage node). |

| 5666 | For NRPE service (required in all Red Hat Storage nodes). |

Table 3.4. UDP Port Numbers

| Port Number | Usage |

|---|---|

| 111 | For RPC Bind. |

| 963 | For NFS's Lock Manager (NLM). |

Chapter 4. Installing Red Hat Storage

Important

- Technology preview packages will also be installed with this installation of Red Hat Storage Server. For more information about the list of technology preview features, see Chapter 4. Technology Previews in the Red Hat Storage 3.0 Release Notes.

- While cloning a Red Hat Storage Server installed on a virtual machine, the

/var/lib/glusterd/glusterd.infofile will be cloned to the other virtual machines, hence causing all the cloned virtual machines to have the same UUID. Ensure to remove the/var/lib/glusterd/glusterd.infofile before the virtual machine is cloned. The file will be automatically created with a UUID on initial start-up of the glusterd daemon on the cloned virtual machines.

4.1. Installing from an ISO Image

- Download an ISO image file for Red Hat Storage Server as described in Chapter 2, Obtaining Red Hat Storage.The installation process launches automatically when you boot the system using the ISO image file.

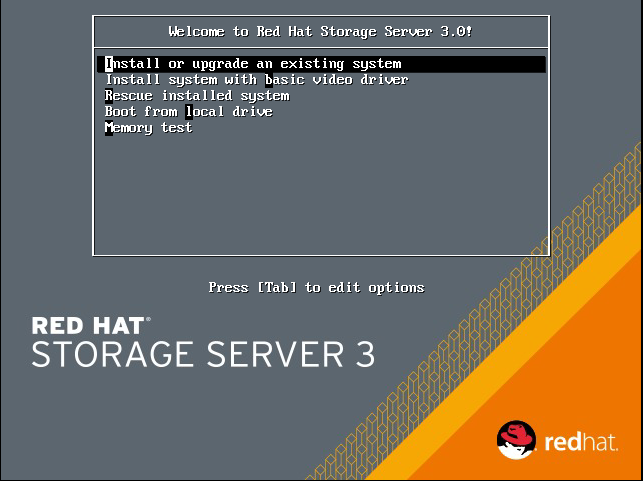

Figure 4.1. Installation

Press Enter to begin the installation process.Note

For some hypervisors, while installing Red Hat Storage on a virtual machine, you must select theInstall System with basic video driveroption. - The Configure TCP/IP screen displays.To configure your computer to support TCP/IP, accept the default values for Internet Protocol Version 4 (IPv4) and Internet Protocol Version 6 (IPv6) and click OK. Alternatively, you can manually configure network settings for both Internet Protocol Version 4 (IPv4) and Internet Protocol Version 6 (IPv6).

Important

NLM Locking protocol implementation in Red Hat Storage does not support clients over IPv6.

Figure 4.2. Configure TCP/IP

- The Welcome screen displays.

Figure 4.3. Welcome

Click Next. - The Language Selection screen displays. Select the preferred language for the installation and the system default and click Next.

- The Keyboard Configuration screen displays. Select the preferred keyboard layout for the installation and the system default and click Next.

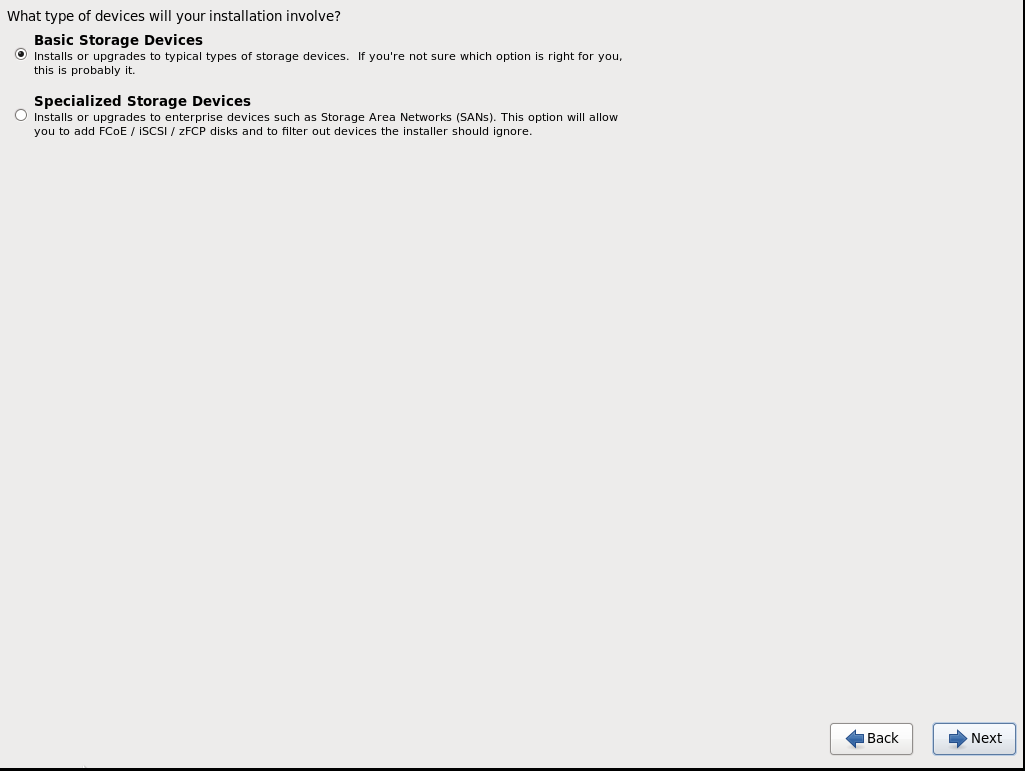

- The Storage Devices screen displays. Select Basic Storage Devices.

Figure 4.4. Storage Devices

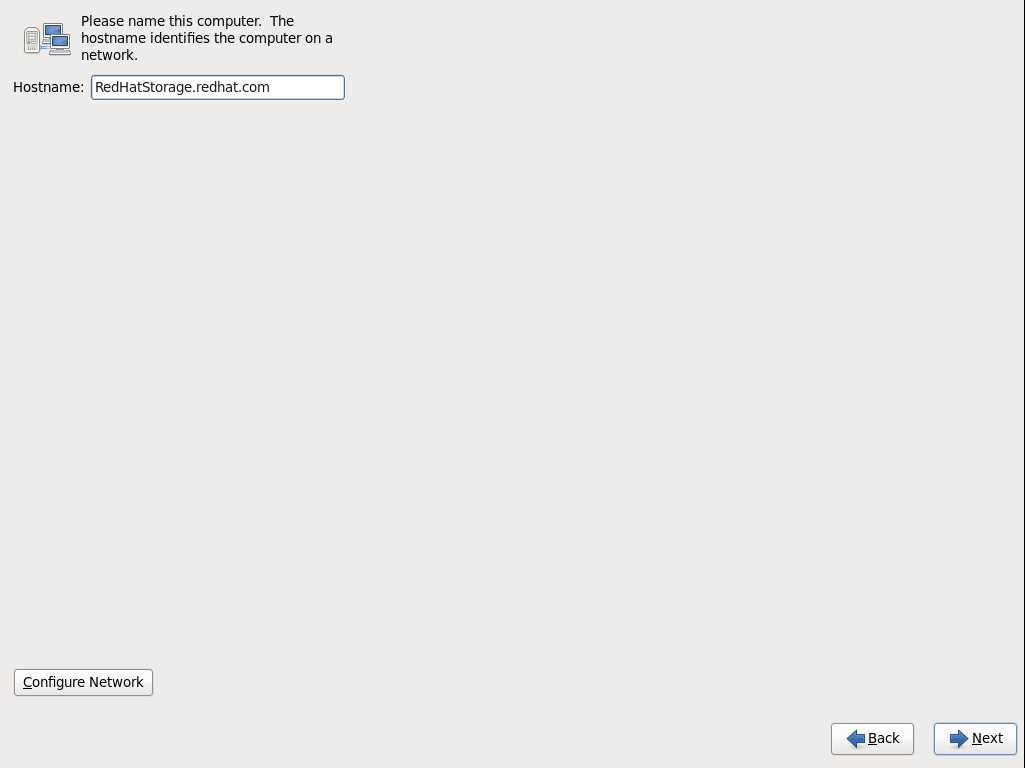

Click Next. - The Hostname configuration screen displays.

Figure 4.5. Hostname

Enter the hostname for the computer. You can also configure network interfaces if required. Click Next. - The Time Zone Configuration screen displays. Set your time zone by selecting the city closest to your computer's physical location.

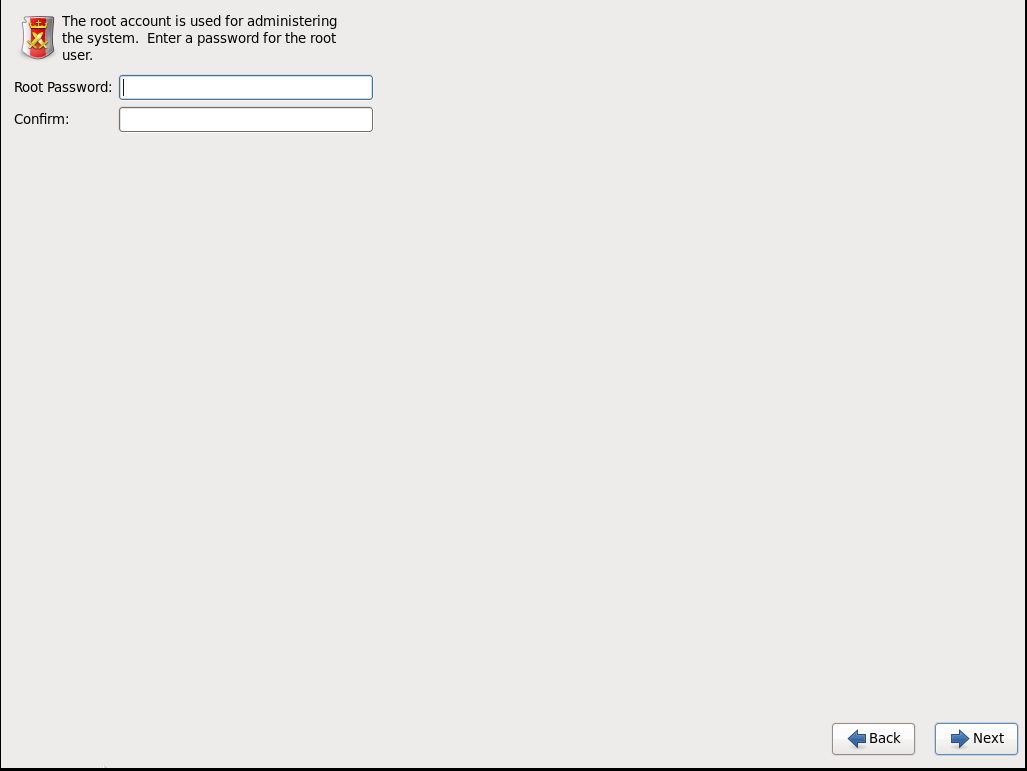

- The Set Root Password screen displays.The root account's credentials will be used to install packages, upgrade RPMs, and perform most system maintenance. As such, setting up a root account and password is one of the most important steps in the installation process.

Note

The root user (also known as the superuser) has complete access to the entire system. For this reason, you should only log in as the root user to perform system maintenance or administration.

Figure 4.6. Set Root Password

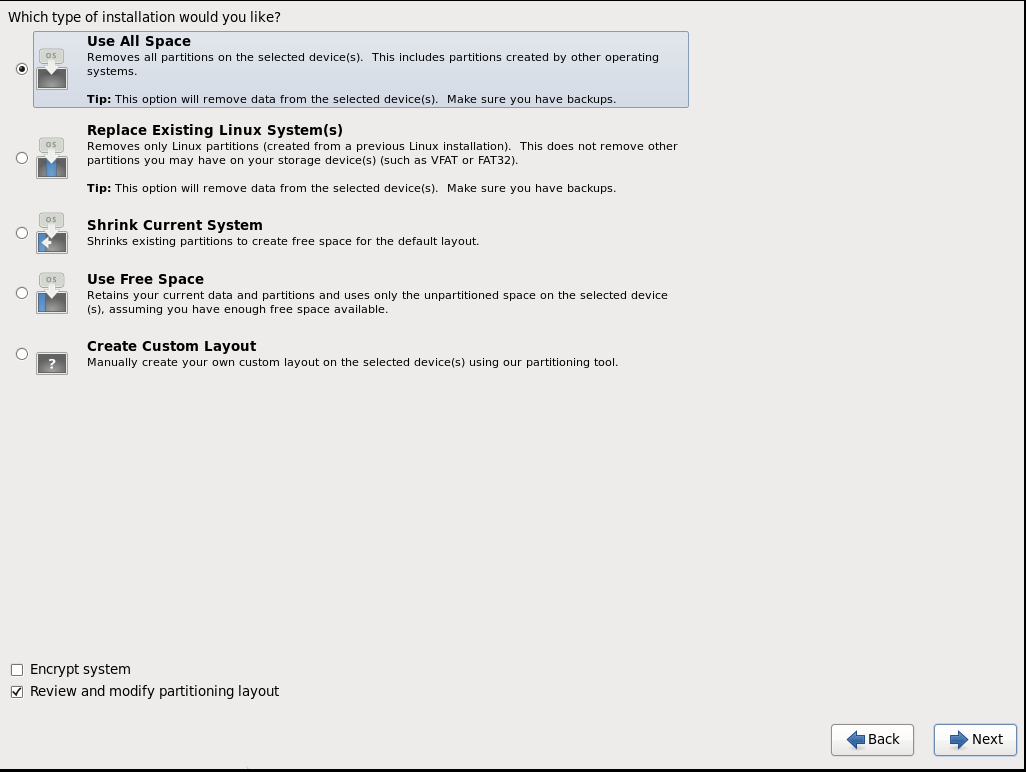

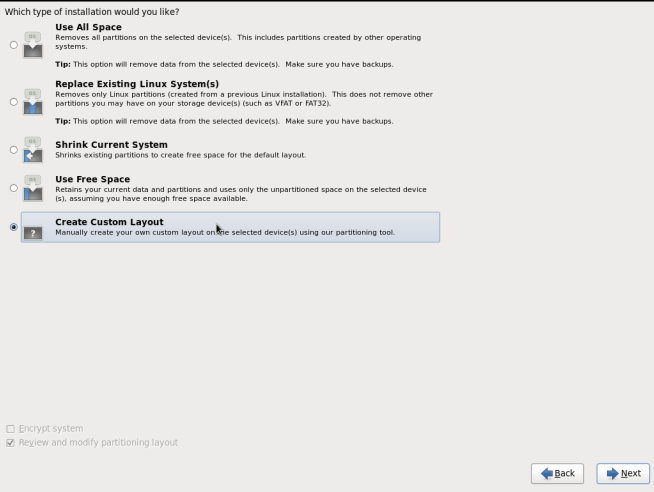

The Set Root Password screen prompts you to set a root password for your system. You cannot proceed to the next stage of the installation process without entering a root password.Enter the root password into the Root Password field. The characters you enter will be masked for security reasons. Then, type the same password into the Confirm field to ensure the password is set correctly. After you set the root password, click Next. - The Partitioning Type screen displays.Partitioning allows you to divide your hard drive into isolated sections that each behave as their own hard drive. Partitioning is particularly useful if you run multiple operating systems. If you are unsure how to partition your system, see An Introduction to Disk Partitions in Red Hat Enterprise Linux 6 Installation Guide for more information.In this screen you can choose to create the default partition layout in one of four different ways, or choose to partition storage devices manually to create a custom layout.If you do not feel comfortable partitioning your system, choose one of the first four options. These options allow you to perform an automated installation without having to partition your storage devices yourself. Depending on the option you choose, you can still control what data, if any, is removed from the system. Your options are:

- Use All Space

- Replace Existing Linux System(s)

- Shrink Current System

- Use Free Space

- Create Custom Layout

Choose the preferred partitioning method by clicking the radio button to the left of its description in the dialog box.

Figure 4.7. Partitioning Type

Click Next once you have made your selection. For more information on disk partitioning, see Disk Partitioning Setup in the Red Hat Enterprise Linux 6 Installation Guide.Note

If a user does not select Create Custom Layout, all the connected/detected disks will be used in the Volume Group for the/and/homefilesystems. - The Boot Loader screen displays with the default settings.Click Next.

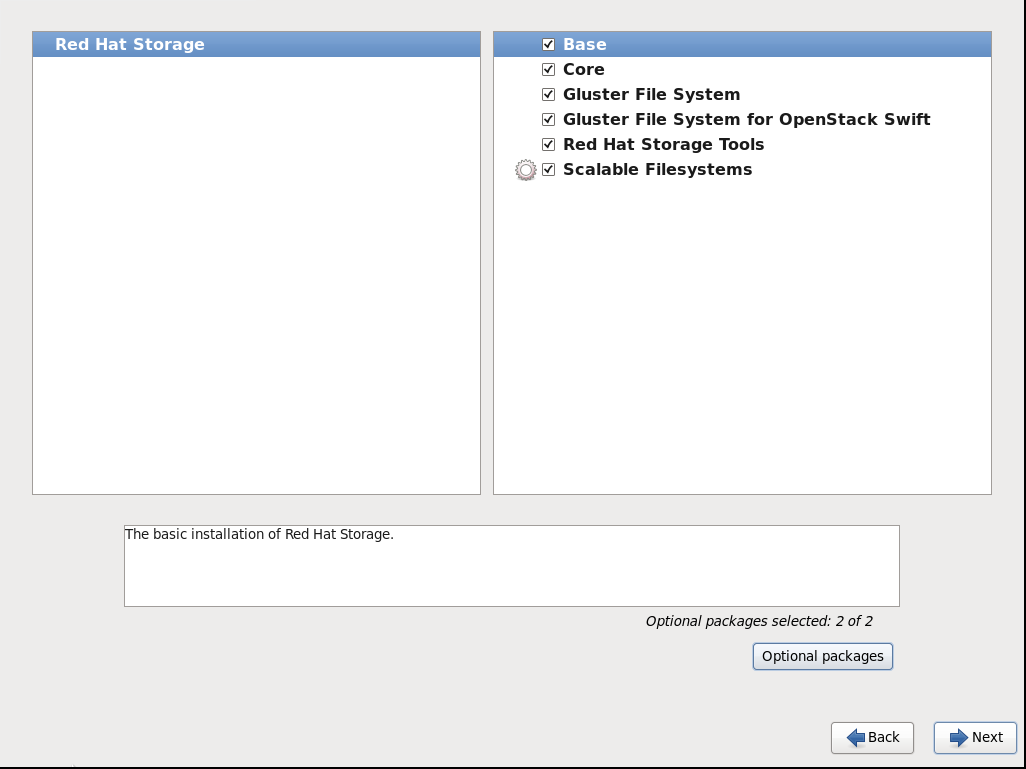

- The Minimal Selection screen displays.

Figure 4.8. Minimal Selection

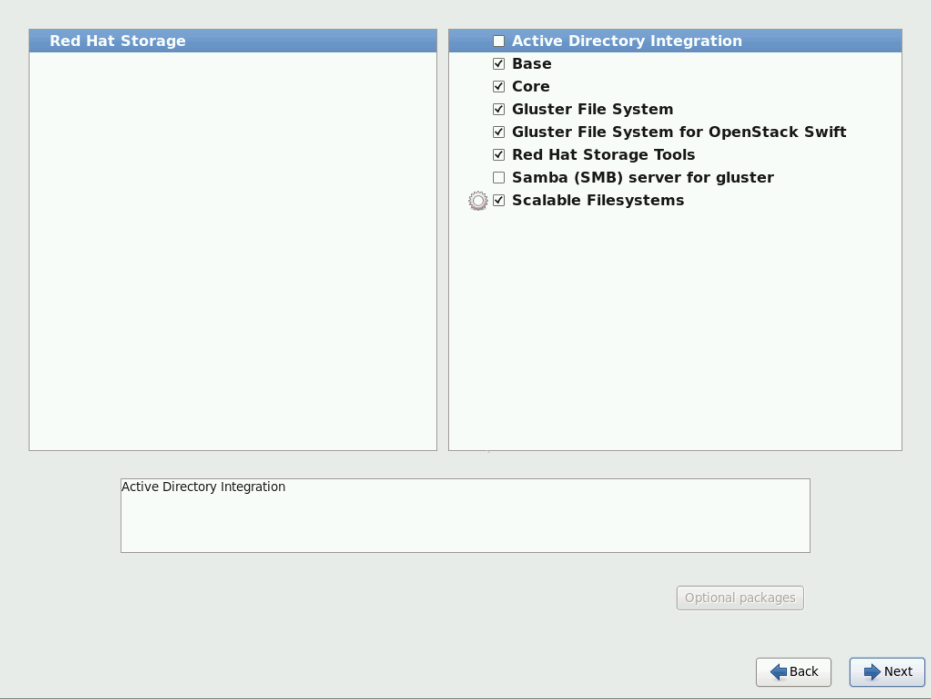

Click Next to retain the default selections and proceed with the installation.- To customize your package set further, select the Customize now option and click Next. This will take you to the Customizing the Software Selection screen.

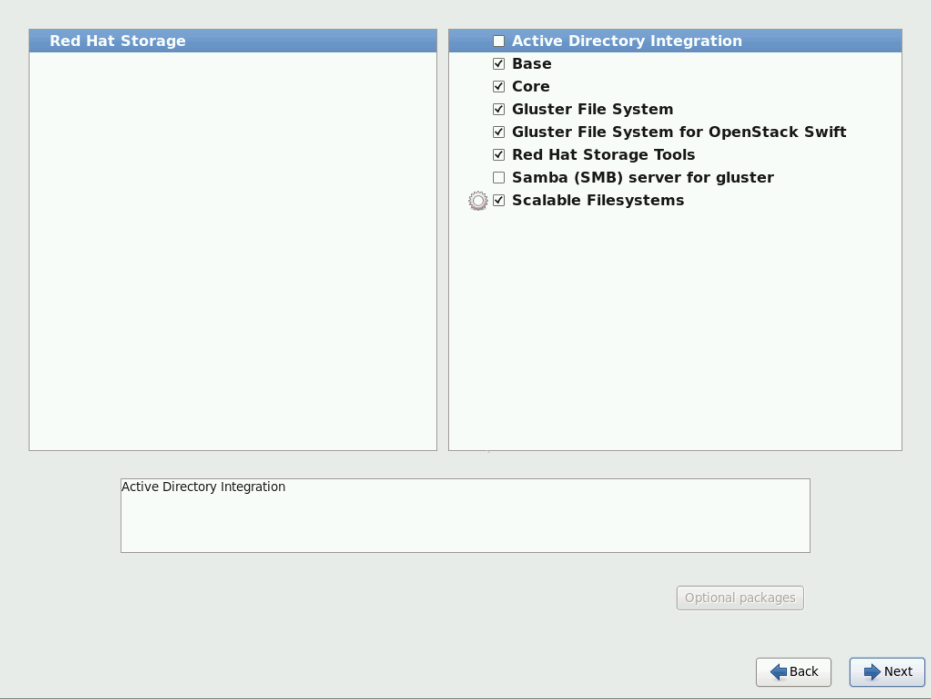

Figure 4.9. Customize Packages

Click Next to retain the default selections and proceed with the installation. - For Red Hat Storage 3.0.4 or later, if you require the Samba packages, ensure you select the Samba (SMB) server for gluster component, in the Customizing the Software Selection screen. If you require samba active directory integration with gluster, ensure you select Active Directory Integration component.

Figure 4.10. Customize Packages

- The Package Installation screen displays.Red Hat Storage Server reports the progress on the screen as it installs the selected packages in the system.

Figure 4.11. Package Installation

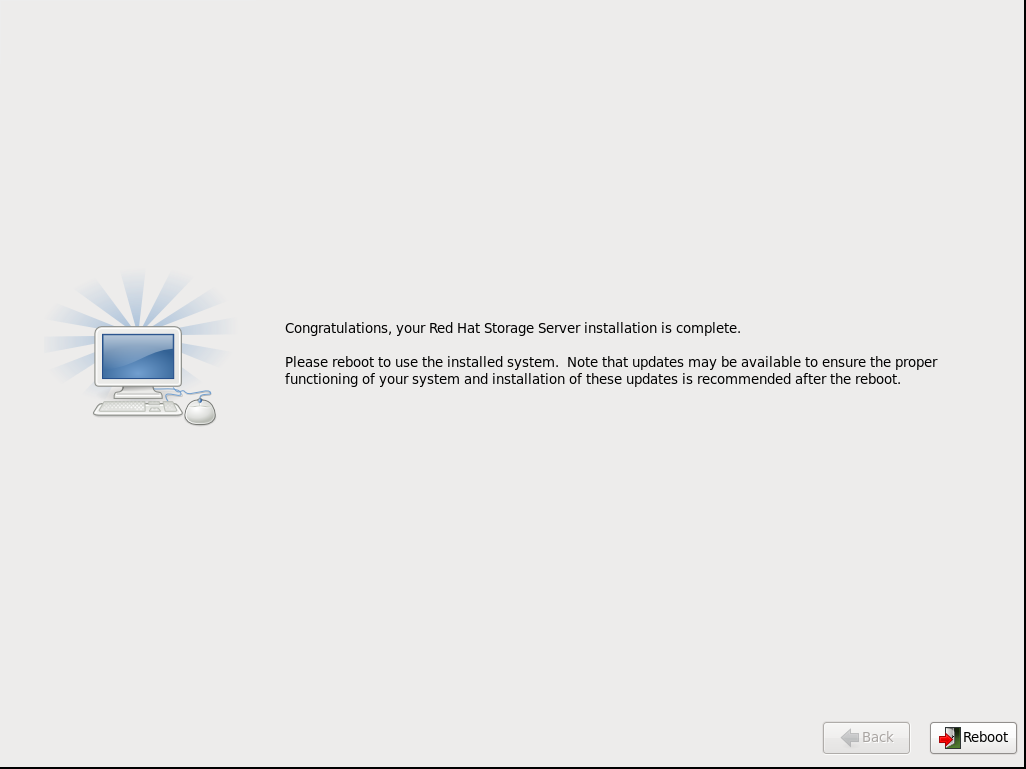

- On successful completion, the Installation Complete screen displays.

Figure 4.12. Installation Complete

- Click Reboot to reboot the system and complete the installation of Red Hat Storage Server.Ensure that you remove any installation media if it is not automatically ejected upon reboot.Congratulations! Your Red Hat Storage Server installation is now complete.

4.2. Installing Red Hat Storage Server on Red Hat Enterprise Linux (Layered Install)

Important

Perform a base install of Red Hat Enterprise Linux Server version 6.5 or 6.6.

Register the System with Subscription Manager

Run the following command and enter your Red Hat Network user name and password to register the system with the Red Hat Network:# subscription-manager register

Identify Available Entitlement Pools

Run the following commands to find entitlement pools containing the channels required to install Red Hat Storage:# subscription-manager list --available | grep -A8 "Red Hat Enterprise Linux Server" # subscription-manager list --available | grep -A8 "Red Hat Storage"

Attach Entitlement Pools to the System

Use the pool identifiers located in the previous step to attach theRed Hat Enterprise Linux ServerandRed Hat Storageentitlements to the system. Run the following command to attach the entitlements:# subscription-manager attach --pool=[POOLID]

Enable the Required Channels

Run the following commands to enable the channels required to install Red Hat Storage:# subscription-manager repos --enable=rhel-6-server-rpms # subscription-manager repos --enable=rhel-scalefs-for-rhel-6-server-rpms # subscription-manager repos --enable=rhs-3-for-rhel-6-server-rpms

- For Red Hat Storage 3.0.4 and later, if you require Samba, then enable the following channel:

# subscription-manager repos --enable=rh-gluster-3-samba-for-rhel-6-server-rpms

Verify if the Channels are Enabled

Run the following command to verify if the channels are enabled:#yum repolist

Kernel Version Requirement

Red Hat Storage requires the kernel-2.6.32-431.17.1.el6 version or higher to be used on the system. Verify the installed and running kernel versions by running the following command:# rpm -q kernel kernel-2.6.32-431.el6.x86_64 kernel-2.6.32-431.17.1.el6.x86_64

# uname -r 2.6.32-431.17.1.el6.x86_64

Install the Required Kernel Version

From the previous step, if the kernel version is found to be lower than kernel-2.6.32-431.17.1.el6, install the kernel-2.6.32-431.17.1.el6 or later:- To install kernel-2.6.32-431.17.1.el6 version, run the command:

# yum install kernel-2.6.32-431.17.1.el6

- To install the latest kernel version, run the command:

# yum update kernel

Install Red Hat Storage

Run the following command to install Red Hat Storage:# yum install redhat-storage-server

- For Red Hat Storage 3.0.4 and later, if you require Samba, then execute the following command to install Samba:

# yum groupinstall "Samba (SMB) server for gluster"

- If you require Samba Active Directory integration with gluster, execute the following command:

# yum groupinstall "Active Directory Integration"

Reboot the system

Warning

Red Hat Storage server currently does not support SELinux. You must reboot the system after the layered install is complete, in order to disable SELinux on the system.

4.3. Installing from a PXE Server

Network Boot or Boot Services. Once you properly configure PXE booting, the computer can boot the Red Hat Storage Server installation system without any other media.

- Ensure that the network cable is attached. The link indicator light on the network socket should be lit, even if the computer is not switched on.

- Switch on the computer.

- A menu screen appears. Press the number key that corresponds to the preferred option.

Important

4.4. Installing from Red Hat Satellite Server

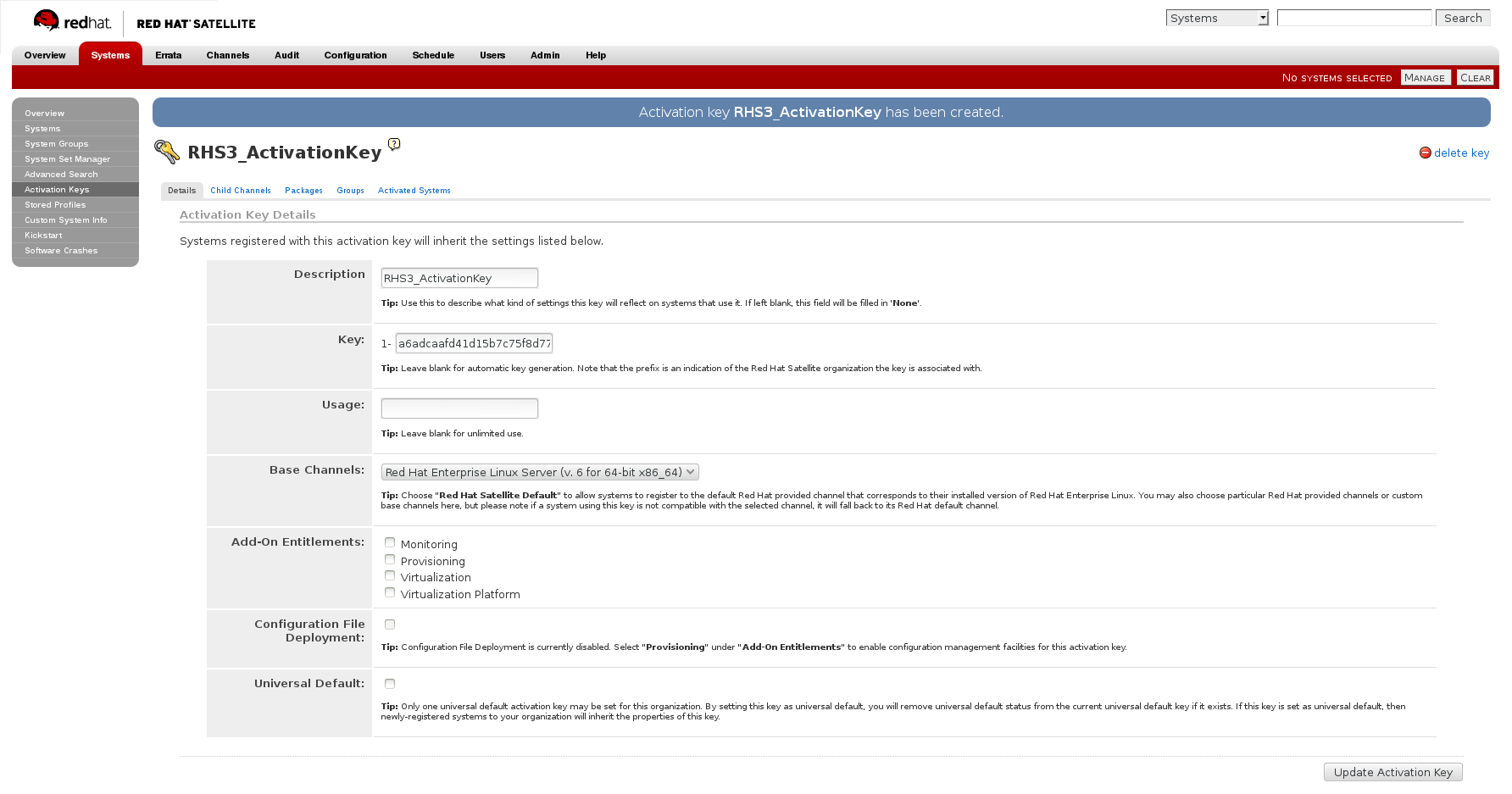

For more information on how to create an activation key, see Activation Keys in the Red Hat Network Satellite Reference Guide.

- In the Details tab of the Activation Keys screen, select

Red Hat Enterprise Linux Server (v.6 for 64-bit x86_64)from the Base Channels drop-down list.

Figure 4.13. Base Channels

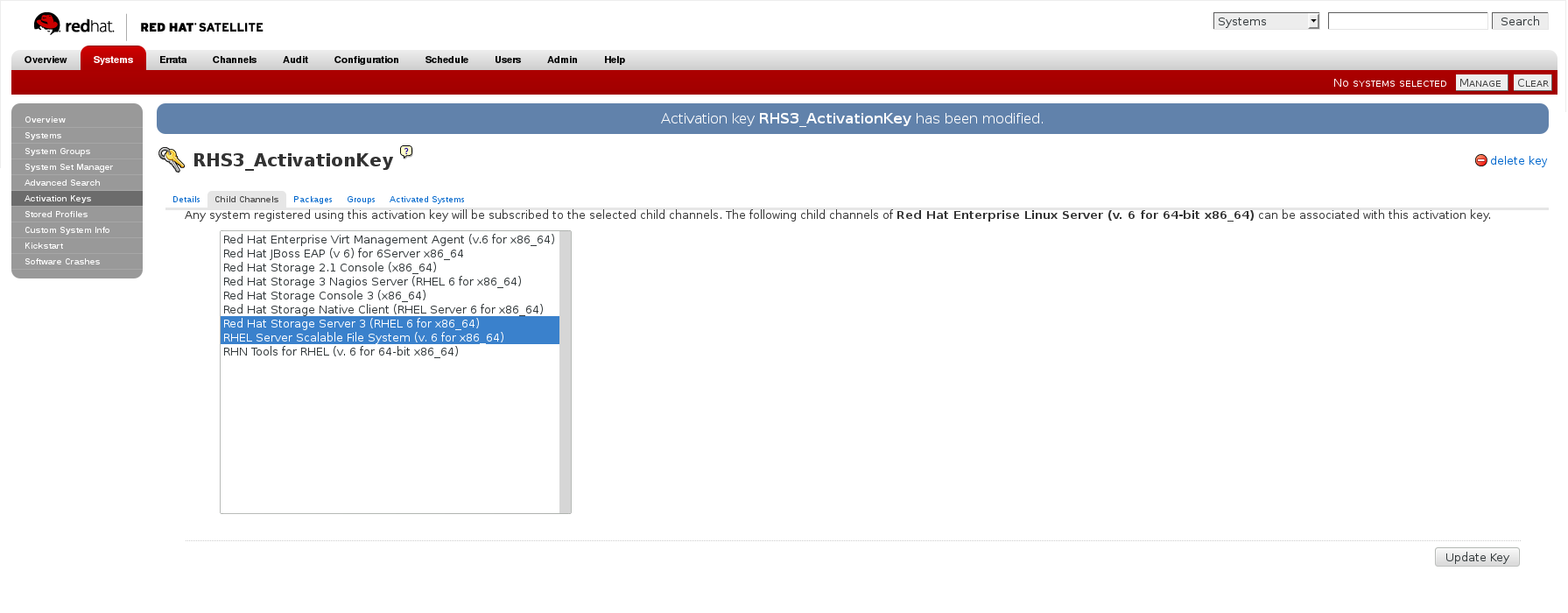

- In the Child Channels tab of the Activation Keys screen, select the following child channels:

RHEL Server Scalable File System (v. 6 for x86_64) Red Hat Storage Server 3 (RHEL 6 for x86_64)

For Red Hat Storage 3.0.4 or later, if you require the Samba package, then select the following child channel:Red Hat Gluster 3 Samba (RHEL 6 for x86_64)

Figure 4.14. Child Channels

- In the Packages tab of the Activation Keys screen, enter the following package name:

redhat-storage-server

Figure 4.15. Package

- For Red Hat Storage 3.0.4 or later, if you require the Samba package, then enter the following package name:

samba

For more information on creating a kickstart profile, see Kickstart in the Red Hat Network Satellite Reference Guide.

- When creating a kickstart profile, the following

Base ChannelandTreemust be selected.Base Channel: Red Hat Enterprise Linux Server (v.6 for 64-bit x86_64)Tree: ks-rhel-x86_64-server-6-6.5 - Do not associate any child channels with the kickstart profile.

- Associate the previously created activation key with the kickstart profile.

Important

- By default, the kickstart profile chooses

md5as the hash algorithm for user passwords.You must change this algorithm tosha512by providing the following settings in theauthfield of theKickstart Details,Advanced Optionspage of the kickstart profile:--enableshadow --passalgo=sha512

- After creating the kickstart profile, you must change the root password in the Kickstart Details, Advanced Options page of the kickstart profile and add a root password based on the prepared sha512 hash algorithm.

For more information on installing Red Hat Storage Server using a kickstart profile, see Kickstart in Red Hat Network Satellite Reference Guide.

Chapter 5. Subscribing to the Red Hat Storage Server Channels

Note

Register the System with Subscription Manager

Run the following command and enter your Red Hat Network user name and password to register the system with Subscription Manager:# subscription-manager register --auto-attach

Enable the Required Channels

Run the following commands to enable the repos required to install Red Hat Storage:# subscription-manager repos --enable=rhel-6-server-rpms # subscription-manager repos --enable=rhel-scalefs-for-rhel-6-server-rpms # subscription-manager repos --enable=rhs-3-for-rhel-6-server-rpms

- For Red Hat Storage 3.0.4 or later, if you require Samba, then enable the following channel:

# subscription-manager repos --enable=rh-gluster-3-samba-for-rhel-6-server-rpms

Verify if the Channels are Enabled

Run the following command to verify if the channels are enabled:# yum repolist

Configure the Client System to Access Red Hat Satellite

Configure the client system to access Red Hat Satellite. Refer section Registering Clients with Red Hat Satellite Server in Red Hat Satellite 5.6 Client Configuration Guide.Register to the Red Hat Satellite Server

Run the following command to register the system to the Red Hat Satellite Server:# rhn_register

Register to the Standard Base Channel

In the select operating system release page, selectAll available updatesand follow the prompts to register the system to the standard base channel for RHEL6 - rhel-x86_64-server-6Subscribe to the Required Red Hat Storage Server Channels

Run the following command to subscribe the system to the required Red Hat Storage server channels:# rhn-channel --add --channel rhel-x86_64-server-6-rhs-3 --channel rhel-x86_64-server-sfs-6

- For Red Hat Storage 3.0.4 or later, if you require Samba, then execute the following command to enable the required channel:

# rhn-channel --add --channel rhel-x86_64-server-6-rh-gluster-3-samba

Verify if the System is Registered Successfully

Run the following command to verify if the system is registered successfully:# rhn-channel --list rhel-x86_64-server-6 rhel-x86_64-server-6-rhs-3 rhel-x86_64-server-sfs-6

For Red Hat Storage 3.0.4 or later, if you have enabled the Samba channel, then you will also see the following channel while verifying:rhel-x86_64-server-6-rh-gluster-3-samba

Chapter 6. Upgrading Red Hat Storage

6.1. Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 using an ISO

yum command. For more information, refer to Section 6.2, “Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 for Systems Subscribed to Red Hat Network”.

Note

- Ensure that you perform the steps listed in this section on all the servers.

- In the case of a geo-replication set-up, perform the steps listed in this section on all the master and slave servers.

- You cannot access data during the upgrade process, and a downtime should be scheduled with applications, clients, and other end-users.

- Get the volume information and peer status using the following commands:

# gluster volume infoThe command displays the volume information similar to the following:Volume Name: volname Type: Distributed-Replicate Volume ID: d6274441-65bc-49f4-a705-fc180c96a072 Status: Started Number of Bricks: 2 x 2 = 4 Transport-type: tcp Bricks: Brick1: server1:/rhs/brick1/brick1 Brick2: server2:/rhs/brick1/brick2 Brick3: server3:/rhs/brick1/brick3 Brick4: server4:/rhs/brick1/brick4 Options Reconfigured: geo-replication.indexing: on

# gluster peer statusThe command displays the peer status information similar to the following:# gluster peer status Number of Peers: 3 Hostname: server2 Port: 24007 Uuid: 2dde2c42-1616-4109-b782-dd37185702d8 State: Peer in Cluster (Connected) Hostname: server3 Port: 24007 Uuid: 4224e2ac-8f72-4ef2-a01d-09ff46fb9414 State: Peer in Cluster (Connected) Hostname: server4 Port: 24007 Uuid: 10ae22d5-761c-4b2e-ad0c-7e6bd3f919dc State: Peer in Cluster (Connected)

Note

Make a note of this information to compare with the output after upgrading. - In case of a geo-replication set-up, stop the geo-replication session using the following command:

# gluster volume geo-replication master_volname slave_node::slave_volname stop

- In case of a CTDB/Samba set-up, stop the CTDB service using the following command:

# service ctdb stop ;Stopping the CTDB service also stops the SMB service

- Verify if the CTDB and the SMB services are stopped using the following command:

ps axf | grep -E '(ctdb|smb|winbind|nmb)[d]'

- In case of an object store set-up, turn off object store using the following commands:

# service gluster-swift-proxy stop # service gluster-swift-account stop # service gluster-swift-container stop # service gluster-swift-object stop

- Stop all the gluster volumes using the following command:

# gluster volume stop volname

- Stop the

glusterdservices on all the nodes using the following command:# service glusterd stop

- If there are any gluster processes still running, terminate the process using

kill. - Ensure all gluster processes are stopped using the following command:

# pgrep gluster

- Back up the following configuration directory and files on the backup directory:

/var/lib/glusterd,/etc/swift,/etc/samba,/etc/ctdb,/etc/glusterfs./var/lib/samba,/var/lib/ctdbEnsure that the backup directory is not the operating system partition.# cp -a /var/lib/glusterd /backup-disk/ # cp -a /etc/swift /backup-disk/ # cp -a /etc/samba /backup-disk/ # cp -a /etc/ctdb /backup-disk/ # cp -a /etc/glusterfs /backup-disk/ # cp -a /var/lib/samba /backup-disk/ # cp -a /var/lib/ctdb /backup-disk/

Also, back up any other files or configuration files that you might require to restore later. You can create a backup of everything in/etc/. - Locate and unmount the data disk partition that contains the bricks using the following command:

# mount | grep backend-disk # umount /dev/device

For example, use thegluster volume infocommand to display the backend-disk information:Volume Name: volname Type: Distributed-Replicate Volume ID: d6274441-65bc-49f4-a705-fc180c96a072 Status: Started Number of Bricks: 2 x 2 = 4 Transport-type: tcp Bricks: Brick1: server1:/rhs/brick1/brick1 Brick2: server2:/rhs/brick1/brick2 Brick3: server3:/rhs/brick1/brick3 Brick4: server4:/rhs/brick1/brick4 Options Reconfigured: geo-replication.indexing: on

In the above example, the backend-disk is mounted at /rhs/brick1# findmnt /rhs/brick1 TARGET SOURCE FSTYPE OPTIONS /rhs/brick1 /dev/mapper/glustervg-brick1 xfs rw,relatime,attr2,delaylog,no # umount /rhs/brick1

- Insert the DVD with Red Hat Storage 3.0 ISO and reboot the machine. The installation starts automatically. You must install Red Hat Storage on the system with the same network credentials, IP address, and host name.

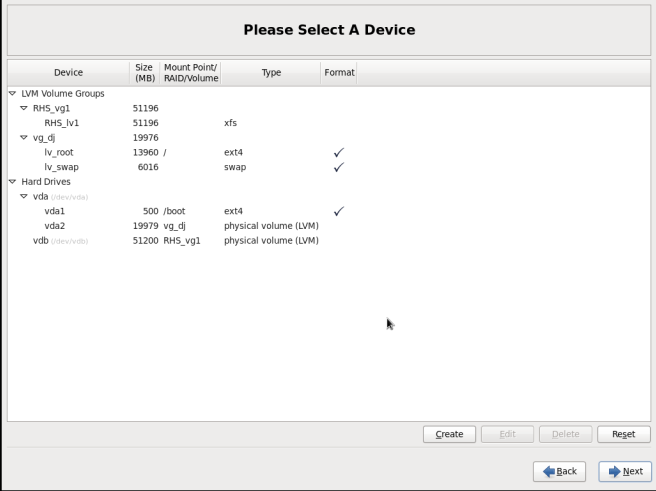

Warning

During installation, while creating a custom layout, ensure that you choose Create Custom Layout to proceed with installation. If you choose Replace Existing Linux System(s), it formats all disks on the system and erases existing data.Select Create Custom Layout. Click Next.

Figure 6.1. Custom Layout Window

- Select the disk on which to install Red Hat Storage. Click Next.For Red Hat Storage to install successfully, you must select the same disk that contained the operating system data previously.

Warning

While selecting your disk, do not select the disks containing bricks.

Figure 6.2. Select Disk Partition Window

- After installation, ensure that the host name and IP address of the machine is the same as before.

Warning

If the IP address and host name are not the same as before, you will not be able to access the data present in your earlier environment. - After installation, the system automatically starts

glusterd. Stop the gluster service using the following command:# service glusterd stop Stopping glusterd: [OK]

- Add entries to

/etc/fstabto mount data disks at the same path as before.Note

Ensure that the mount points exist in your trusted storage pool environment. - Mount all data disks using the following command:

# mount -a

- Back up the latest

glusterdusing the following command:# cp -a /var/lib/glusterd /var/lib/glusterd-backup

- Copy

/var/lib/glusterdand/etc/glusterfsfrom your backup disk to the OS disk.# cp -a /backup-disk/glusterd/* /var/lib/glusterd # cp -a /backup-disk/glusterfs/* /etc/glusterfs

Note

Do not restore the swift, samba and ctdb configuration files from the backup disk. However, any changes in swift, samba, and ctdb must be applied separately in the new configuration files from the backup taken earlier. - Copy back the latest hooks scripts to

/var/lib/glusterd/hooks.# cp -a /var/lib/glusterd-backup/hooks /var/lib/glusterd

- Ensure you restore any other files from the backup that was created earlier.

- You must restart the

glusterdmanagement daemon using the following commands:# glusterd --xlator-option *.upgrade=yes -N # service glusterd start Starting glusterd: [OK]

- Start the volume using the following command:

# gluster volume start volname force volume start: volname : success

Note

Repeat the above steps on all the servers in your trusted storage pool environment. - In case you have a pure replica volume (1*n) where n is the replica count, perform the following additional steps:

- Run the

fix-layoutcommand on the volume using the following command:# gluster volume rebalance volname fix-layout start

- Wait for the

fix-layoutcommand to complete. You can check the status for completion using the following command:# gluster volume rebalance volname status

- Stop the volume using the following command:

# gluster volume stop volname

- Force start the volume using the following command:

# gluster volume start volname force

- In case of an Object Store set-up, any configuration files that were edited should be renamed to end with a

.rpmsavefile extension, and other unedited files should be removed. - Re-configure the Object Store. For information on configuring Object Store, refer to Section 18.5 in Chapter 18. Managing Object Store of the Red Hat Storage Administration Guide.

- Get the volume information and peer status of the created volume using the following commands:

# gluster volume info # gluster peer status

Ensure that the output of these commands has the same values that they had before you started the upgrade.Note

In Red Hat Storage 3.0, thegluster peer statusoutput does not display the port number. - Verify the upgrade.

- If all servers in the trusted storage pool are not upgraded, the

gluster peer statuscommand displays the peers as disconnected or rejected.The command displays the peer status information similar to the following:# gluster peer status Number of Peers: 3 Hostname: server2 Uuid: 2dde2c42-1616-4109-b782-dd37185702d8 State: Peer Rejected (Connected) Hostname: server3 Uuid: 4224e2ac-8f72-4ef2-a01d-09ff46fb9414 State: Peer in Cluster (Connected) Hostname: server4 Uuid: 10ae22d5-761c-4b2e-ad0c-7e6bd3f919dc State: Peer Rejected (Disconnected)

- If all systems in the trusted storage pool are upgraded, the

gluster peer statuscommand displays peers as connected.The command displays the peer status information similar to the following:# gluster peer status Number of Peers: 3 Hostname: server2 Uuid: 2dde2c42-1616-4109-b782-dd37185702d8 State: Peer in Cluster (Connected) Hostname: server3 Uuid: 4224e2ac-8f72-4ef2-a01d-09ff46fb9414 State: Peer in Cluster (Connected) Hostname: server4 Uuid: 10ae22d5-761c-4b2e-ad0c-7e6bd3f919dc State: Peer in Cluster (Connected)

- If all the volumes in the trusted storage pool are started, the

gluster volume infocommand displays the volume status as started.Volume Name: volname Type: Distributed-Replicate Volume ID: d6274441-65bc-49f4-a705-fc180c96a072 Status: Started Number of Bricks: 2 x 2 = 4 Transport-type: tcp Bricks: Brick1: server1:/rhs/brick1/brick1 Brick2: server2:/rhs/brick1/brick2 Brick3: server3:/rhs/brick1/brick3 Brick4: server4:/rhs/brick1/brick4 Options Reconfigured: geo-replication.indexing: on

- If you have a geo-replication setup, re-establish the geo-replication session between the master and slave using the following steps:

- Run the following commands on any one of the master nodes:

# cd /usr/share/glusterfs/scripts/ # sh generate-gfid-file.sh localhost:${master-vol} $PWD/get-gfid.sh /tmp/tmp.atyEmKyCjo/upgrade-gfid-values.txt # scp /tmp/tmp.atyEmKyCjo/upgrade-gfid-values.txt root@${slavehost}:/tmp/ - Run the following commands on a slave node:

# cd /usr/share/glusterfs/scripts/ # sh slave-upgrade.sh localhost:${slave-vol} /tmp/tmp.atyEmKyCjo/upgrade-gfid-values.txt $PWD/gsync-sync-gfidNote

If the SSH connection for your setup requires a password, you will be prompted for a password for all machines where the bricks are residing. - Re-create and start the geo-replication sessions.For information on creating and starting geo-replication sessions, refer to Managing Geo-replication in the Red Hat Storage Administration Guide.

Note

It is recommended to add the child channel of Red Hat Enterprise Linux 6 containing the native client, so that you can refresh the clients and get access to all the new features in Red Hat Storage 3.0. For more information, refer to the Upgrading Native Client section in the Red Hat Storage Administration Guide. - Remount the volume to the client and verify for data consistency. If the gluster volume information and gluster peer status information matches with the information collected before migration, you have successfully upgraded your environment to Red Hat Storage 3.0.

6.2. Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 for Systems Subscribed to Red Hat Network

- Unmount the clients using the following command:

umount mount-point

- Stop the volumes using the following command:

gluster volume stop volname

- Unmount the data partition(s) on the servers using the following command:

umount mount-point

- To verify if the volume status is stopped, use the following command:

# gluster volume info

If there is more than one volume, stop all of the volumes. - Stop the

glusterdservices on all the servers using the following command:# service glusterd stop

Important

- You can upgrade to Red Hat Storage 3.0 only from Red Hat Storage 2.1 Update 4 release. If your current version is lower than Update 4, then upgrade it to Update 4 before upgrading to Red Hat Storage 3.0.

- Upgrade the servers before upgrading the clients.

- Execute the following command to kill all gluster processes:

# pkill gluster

- To check the system's current subscription status run the following command:

# migrate-rhs-classic-to-rhsm --status

- Install the required packages using the following command:

# yum install subscription-manager-migration # yum install subscription-manager-migration-data

- Execute the following command to migrate from Red Hat Network Classic to Red Hat Subscription Manager

# migrate-rhs-classic-to-rhsm --rhn-to-rhsm

- To enable the Red Hat Storage 3.0 repos, execute the following command:

# migrate-rhs-classic-to-rhsm --upgrade --version 3

- For Red Hat Storage 3.0.4 or later, if you require Samba, then enable the following channel:

# subscription-manager repos --enable=rh-gluster-3-samba-for-rhel-6-server-rpms

Warning

- The Samba version 3 is being deprecated from Red Hat Storage 3.0 Update 4. Further updates will not be provided for samba-3.x. It is recommended that you upgrade to Samba-4.x, which is provided in a separate channel or repository, for all updates including the security updates.

- Downgrade of Samba from Samba 4.x to Samba 3.x is not supported.

- Ensure that Samba is upgraded on all the nodes simultaneously, as running different versions of Samba in the same cluster will lead to data corruption.

- Stop the CTDB and SMB services across all nodes in the Samba cluster using the following command. This is because different versions of Samba cannot run in the same Samba cluster.

# service ctdb stop ;Stopping the CTDB service will also stop the SMB service.

- To verify if the CTDB and SMB services are stopped, execute the following command:

ps axf | grep -E '(ctdb|smb|winbind|nmb)[d]'

- To verify if the migration from Red Hat Network Classic to Red Hat Subscription Manager is successful, execute the following command:

# migrate-rhs-classic-to-rhsm --status

- To upgrade the server from Red Hat Storage 2.1 to 3.0, use the following command:

# yum update

Note

It is recommended to add the child channel of Red Hat Enterprise Linux 6 that contains the native client to refresh the clients and access the new features in Red Hat Storage 3.0. For more information, refer to Installing Native Client in the Red Hat Storage Administration Guide. - Reboot the servers. This is required as the kernel is updated to the latest version.

6.3. Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 for Systems Subscribed to Red Hat Satellite Server

- Unmount all the clients using the following command:

umount mount-name

- Stop the volumes using the following command:

# gluster volume stop volname

- Unmount the data partition(s) on the servers using the following command:

umount mount-point

- Ensure that the Red Hat Storage 2.1 server is updated to Red Hat Storage 2.1 Update 4, by running the following command:

# yum update

- Create an Activation Key at the Red Hat Satellite Server, and associate it with the following channels. For more information, refer to Section 4.4, “Installing from Red Hat Satellite Server”

Base Channel: Red Hat Enterprise Linux Server (v.6 for 64-bit x86_64) Child channels: RHEL Server Scalable File System (v. 6 for x86_64) Red Hat Storage Server 3 (RHEL 6 for x86_64)

- For Red Hat Storage 3.0.4 or later, if you require the Samba package add the following child channel:

Red Hat Gluster 3 Samba (RHEL 6 for x86_64)

- Unregister your system from Red Hat Satellite by following these steps:

- Log in to the Red Hat Satellite server.

- Click on the Systems tab in the top navigation bar and then the name of the old or duplicated system in the System List.

- Click the delete system link in the top-right corner of the page.

- To confirm the system profile deletion by clicking the Delete System button.

- On the updated Red Hat Storage 2.1 Update 4 server, run the following command:

# rhnreg_ks --username username --password password --force --activationkey Activation Key ID

This uses the prepared Activation Key and re-registers the system to the Red Hat Storage 3.0 channels on the Red Hat Satellite Server. - Verify if the channel subscriptions have changed to the following:

# rhn-channel --list rhel-x86_64-server-6 rhel-x86_64-server-6-rhs-3 rhel-x86_64-server-sfs-6

For Red Hat Storage 3.0.4 or later, if you have enabled the Samba channel, then verify if you have the following channel:rhel-x86_64-server-6-rh-gluster-3-samba

- Run the following command to upgrade to Red Hat Storage 3.0.

# yum update

- Reboot, and run volume and data integrity checks.

6.4. Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 in a Red Hat Enterprise Virtualization-Red Hat Storage Environment

yum.

6.4.1. Upgrading using an ISO

- Using Red Hat Enterprise Virtualization Manager, stop all the virtual machine instances.The Red Hat Storage volume on the instances will be stopped during the upgrade.

Note

Ensure you stop the volume, as rolling upgrade is not supported in Red Hat Storage. - Using Red Hat Enterprise Virtualization Manager, move the data domain of the data center to Maintenance mode.

- Using Red Hat Enterprise Virtualization Manager, stop the volume (the volume used for data domain) containing Red Hat Storage nodes in the data center.

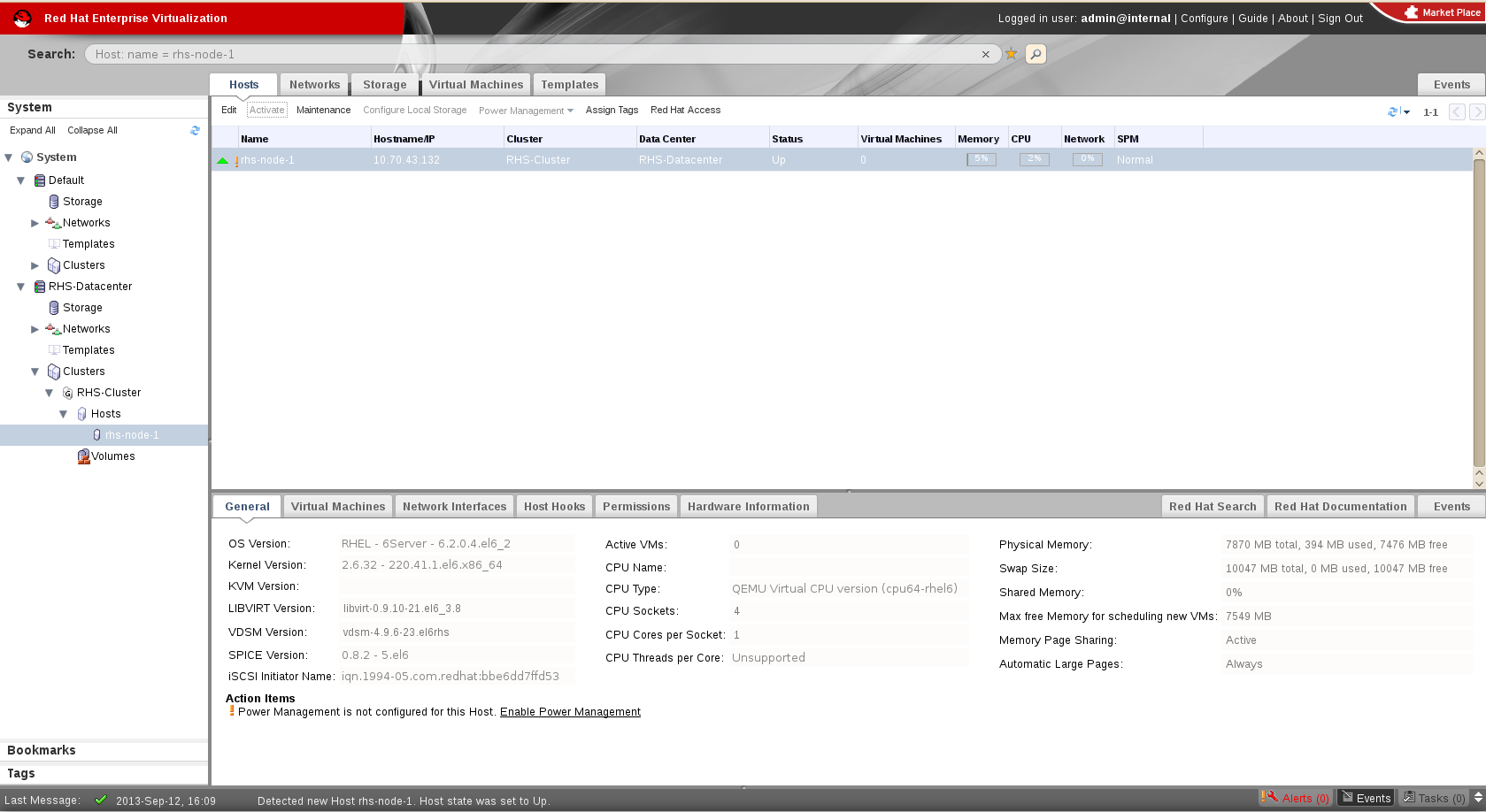

- Using Red Hat Enterprise Virtualization Manager, move all Red Hat Storage nodes to Maintenance mode.

- Perform the ISO Upgrade as mentioned in Section 6.1, “Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 using an ISO”.

Figure 6.3. Red Hat Storage Node

- Re-install the Red Hat Storage nodes from Red Hat Enterprise Virtualization Manager.

Note

- Re-installation for the Red Hat Storage nodes should be done from Red Hat Enterprise Virtualization Manager. The newly upgraded Red Hat Storage 3.0 nodes lose their network configuration and other configurations, such as iptables configuration, done earlier while adding the nodes to Red Hat Enterprise Virtualization Manager. Re-install the Red Hat Storage nodes to have the bootstrapping done.

- You can re-configure the Red Hat Storage nodes using the option provided under Action Items, as shown in Figure 6.4, “Red Hat Storage Node before Upgrade ”, and perform bootstrapping.

Figure 6.4. Red Hat Storage Node before Upgrade

- Perform the steps above in all Red Hat Storage nodes.

- Start the volume once all the nodes are shown in Up status in Red Hat Enterprise Virtualization Manager.

- Upgrade the native client bits for Red Hat Enterprise Linux 6.4, if Red Hat Enterprise Linux 6.4 is used as hypervisor.

Note

If Red Hat Enterprise Virtualization Hypervisor is used as hypervisor, then install the suitable build of Red Hat Enterprise Virtualization Hypervisor containing the latest native client.

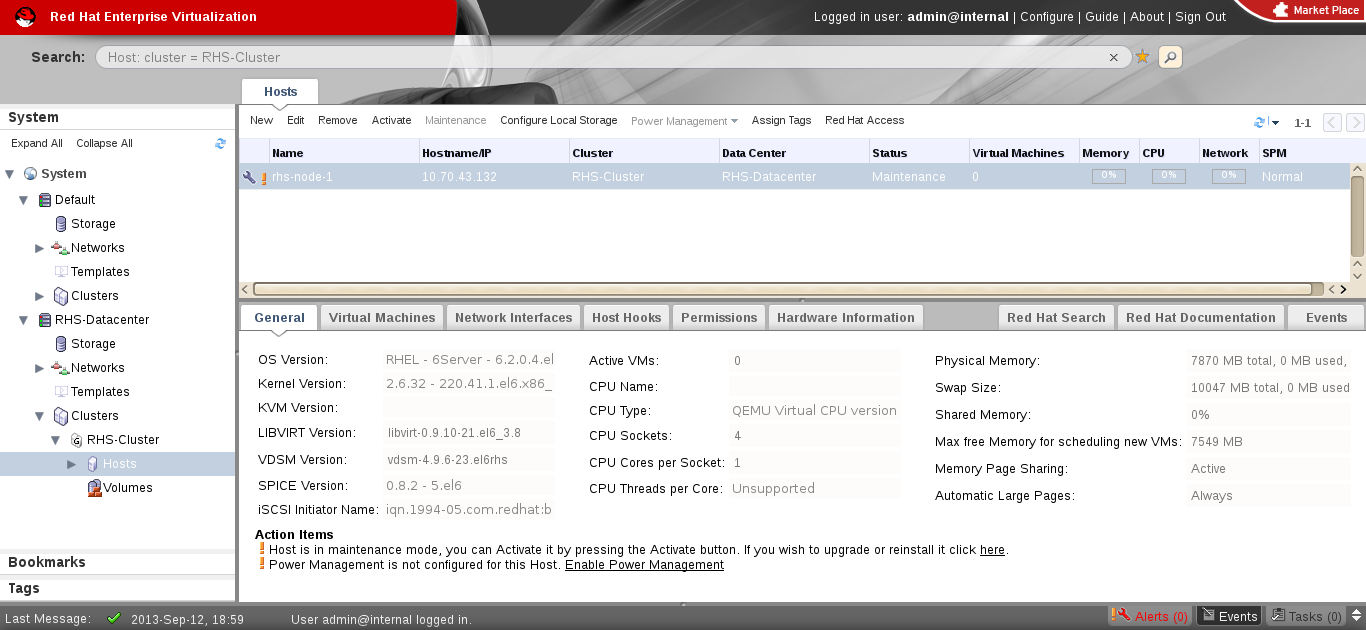

Figure 6.5. Red Hat Storage Node after Upgrade

- Using Red Hat Enterprise Virtualization Manager, activate the data domain and start all the virtual machine instances in the data center.

6.4.2. Upgrading using yum

- Using Red Hat Enterprise Virtualization Manager, stop all virtual machine instances in the data center.

- Using Red Hat Enterprise Virtualization Manager, move the data domain backed by gluster volume to Maintenance mode.

- Using Red Hat Enterprise Virtualization Manager, move all Red Hat Storage nodes to Maintenance mode.

- Perform

yumupdate as mentioned in Section 6.2, “Upgrading from Red Hat Storage 2.1 to Red Hat Storage 3.0 for Systems Subscribed to Red Hat Network”. - Once the Red Hat Storage nodes are rebooted and up, Activate them using Red Hat Enterprise Virtualization Manager.

Note

Re-installation of Red Hat Storage nodes is required, as the network configurations and bootstrapping configurations done prior to upgrade are preserved, unlike ISO upgrade. - Using Red Hat Enterprise Virtualization Manager, start the volume.

- Upgrade the native client on Red Hat Enterprise Linux 6.4, in case Red Hat Enterprise Linux 6.4 is used as hypervisor.

Note

If Red Hat Enterprise Virtualization Hypervisor is used as hypervisor, reinstall Red Hat Enterprise Virtualization Hypervisor containing the latest version of Red Hat Storage native client. - Activate the data domain and start all the virtual machine instances.

Chapter 7. Deploying Samba on Red Hat Storage

7.1. Prerequisites

- You must install Red Hat Storage Server 3.0.4 on the target server.

Warning

- The Samba version 3 is being deprecated from Red Hat Storage 3.0 Update 4. Further updates will not be provided for samba-3.x. It is recommended that you upgrade to Samba-4.x, which is provided in a separate channel or repository, for all updates including the security updates.

- Downgrade of Samba from Samba 4.x to Samba 3.x is not supported.

- Ensure that Samba is upgraded on all the nodes simultaneously, as running different versions of Samba in the same cluster will lead to data corruption.

- Enable the channel where the Samba packages are available:

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rh-gluster-3-samba-for-rhel-6-server-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-6-rh-gluster-3-samba

7.2. Installing Samba Using ISO

Figure 7.1. Customize Packages

7.3. Installing Samba Using yum

# yum groupinstall "Samba (SMB) server for gluster"

# yum groupinstall "Active Directory Integration"

Chapter 8. Deploying the Hortonworks Data Platform 2.1 on Red Hat Storage

8.1. Prerequisites

8.1.1. Supported Versions

Table 8.1. Red Hat Storage Server Support Matrix

| Red Hat Storage Server version | HDP version | Ambari version |

|---|---|---|

| 3.0.4 | 2.1 | 1.6.1 |

| 3.0.2 | 2.1 | 1.6.1 |

| 3.0.0 | 2.0.6 | 1.4.4 |

8.1.2. Software and Hardware Requirements

- Must have at least the following hardware specification:

- 2 x 2 GHz 4 core processors

- 32 GB RAM

- 500 GB of storage capacity

- 1 x 1 GbE NIC

- Must have iptables disabled.

- Must use fully qualified domain names (FQDN). For example rhs-1.server.com is acceptable, but rhs-1 is not allowed.

- Either, all servers must be configured to use a DNS server and must be able to use DNS for FQDN resolution or all the storage nodes must have the FQDN of all of the servers in the cluster listed in their

/etc/hostsfile. - Must have the following users and groups available on all the servers.

User Group yarn hadoop mapred hadoop hive hadoop hcat hadoop ambari-qa hadoop hbase hadoop tez hadoop zookeeper hadoop oozie hadoop falcon hadoop The specific UIDs and GIDs for the respective users and groups are up to the Administrator of the trusted storage pool, but they must be consistent across the trusted storage pool. For example, if the "hadoop" user has a UID as 591 on one server, the hadoop user must have UID as 591 on all other servers. This can be quite a lot of work to manage using Local Authentication and it is common and acceptable to install a central authentication solution such as LDAP or Active Directory for your cluster, so that users and groups can be easily managed in one place. However, to use local authentication, you can run the script below on each server to create the users and groups and ensure they are consistent across the cluster:groupadd hadoop -g 590; useradd -u 591 mapred -g hadoop; useradd -u 592 yarn -g hadoop; useradd -u 594 hcat -g hadoop; useradd -u 595 hive -g hadoop; useradd -u 590 ambari-qa -g hadoop; useradd -u 593 tez -g hadoop; useradd -u 596 oozie -g hadoop; useradd -u 597 zookeeper -g hadoop; useradd -u 598 falcon -g hadoop; useradd -u 599 hbase -g hadoop

8.1.3. Existing Red Hat Storage Trusted Storage Pool

Note

Important

/mnt/brick1 as the mount point for Red Hat Storage bricks and /mnt/glusterfs/volname as the mount point for Red Hat Storage volume. It is possible that you have an existing Red Hat Storage volume that has been created with different mount points for the Red Hat Storage bricks and volumes. If the mount points differ from the convention, replace the prefix listed in this installation guide with the prefix that you have.

8.1.4. New Red Hat Storage Trusted Storage Pool

Note

8.1.5. Red Hat Storage Server Requirements

rhs-big-data-3-for-rhel-6-server-rpms channel on this server.

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rhs-big-data-3-for-rhel-6-server-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-6-rhs-bigdata-3

8.1.6. Hortonworks Ambari Server Requirements

It is mandatory to setup a passwordless-SSH connection from the Ambari Server to all other servers within the trusted storage pool. Instructions for installing and configuring Hortonworks Ambari is provided in the further sections of this chapter.

rhel-6-server-rh-common-rpms channel on this server.

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rhel-6-server-rh-common-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-rh-common-6

Warning

Note

rhs-big-data-3-for-rhel-6-server-rpms channel on that server.

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rhs-big-data-3-for-rhel-6-server-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-6-rhs-bigdata-3

8.1.7. YARN Master Server Requirements

rhel-6-server-rh-common-rpms and rhel-6-server-rhs-client-1-rpms channels on the YARN server.

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rhel-6-server-rh-common-rpms --enable=rhel-6-server-rhs-client-1-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-rh-common-6 # rhn-channel --add --channel rhel-x86_64-server-rhsclient-6

Note

rhs-big-data-3-for-rhel-6-server-rpms channel on that server.

- If you have registered your machine using Red Hat Subscription Manager, enable the channel by running the following command:

# subscription-manager repos --enable=rhs-big-data-3-for-rhel-6-server-rpms

- If you have registered your machine using Satellite server, enable the channel by running the following command:

# rhn-channel --add --channel rhel-x86_64-server-6-rhs-bigdata-3

8.2. Installing the Hadoop FileSystem Plugin for Red Hat Storage

8.2.1. Adding the Hadoop Installer for Red Hat Storage

# yum install rhs-hadoop rhs-hadoop-install

8.2.2. Configuring the Trusted Storage Pool for use with Hadoop

Note

- Open the terminal window of the server designated to be the Ambari Management Server and navigate to the

/usr/share/rhs-hadoop-install/directory. - Run the hadoop cluster configuration script as given below:

setup_cluster.sh [-y] [--hadoop-mgmt-node <node>] [--yarn-master <node>] [--profile <profile>] [--ambari-repo <url>] <node-list-spec>

where <node-list-spec> is<node1>:<brickmnt1>:<blkdev1> <node2>[:<brickmnt2>][:<blkdev2>] [<node3>[:<brickmnt3>][:<blkdev3>]] ... [<nodeN>[:<brickmntN>][:<blkdevN>]]

where- <brickmnt> is the name of the XFS mount for the above <blkdev>,for example,

/mnt/brick1or/external/HadoopBrick. When a Red Hat Storage volume is created its bricks has the volume name appended, so <brickmnt> is a prefix for the volume's bricks. Example: If a new volume is namedHadoopVolthen its brick list would be:<node>:/mnt/brick1/HadoopVolor<node>:/external/HadoopBrick/HadoopVol. - <blkdev> is the name of a Logical Volume device path, for example,

/dev/VG1/LV1or/dev/mapper/VG1-LV1. Since LVM is a prerequisite for Red Hat Storage, the <blkdev> is not expected to be a raw block path, such as/dev/sdb.

Given below is an example of running the setup_cluster.sh script on the Ambari Management server and four Red Hat Storage Nodes which have the same logical volume and mount point intended to be used as a Red Hat Storage brick../setup_cluster.sh --yarn-master yarn.hdp rhs-1.hdp:/mnt/brick1:/dev/rhs_vg1/rhs_lv1 rhs-2.hdp rhs-3.hdp rhs-4.hdp

Note

If a brick mount is omitted, the brick mount of the first node is used and if one block device is omitted, the block device of the first node is used.

8.2.3. Creating Volumes for use with Hadoop

Note

hadoop or mapredlocal.

- Open the terminal window of the server designated to be the Ambari Management Server and navigate to the

/usr/share/rhs-hadoop-install/directory. - Run the hadoop cluster configuration script as given below:

create_vol.sh [-y] <volName> [--replica

count] <volMountPrefix> <node-list>where- <--replica count> is the replica count. You can specify the replica count as 2 or 3. By default, the replica count is 2. The number of bricks must be a multiple of the replica count. The order in which bricks are specified determines how bricks are mirrored with each other. For example, first n bricks, where n is the replica count.

- <node-list> is: <node1>:<brickmnt> <node2>[:<brickmnt2>] <node3>[:<brickmnt3>] ... [<nodeN>[:<brickmntN>

- <brickmnt> is the name of the XFS mount for the block devices used by the above nodes, for example,

/mnt/brick1or/external/HadoopBrick. When a Red Hat Storage volume is created its bricks will have the volume name appended, so <brickmnt> is a prefix for the volume's bricks. For example, if a new volume is namedHadoopVolthen its brick list would be:<node>:/mnt/brick1/HadoopVolor<node>:/external/HadoopBrick/HadoopVol.

Note

The node-list forcreate_vol.shis similar to thenode-list-specused bysetup_cluster.shexcept that a block device is not specified increate_vol.Given below is an example on how to create a volume named HadoopVol, using 4 Red Hat Storage Servers, each with the same brick mount and mount the volume on/mnt/glusterfs./create_vol.sh HadoopVol /mnt/glusterfs rhs-1.hdp:/mnt/brick1 rhs-2.hdp rhs-3.hdp rhs-4.hdp

8.2.4. Deploying and Configuring the HDP 2.1 Stack on Red Hat Storage using Ambari Manager

Before deploying and configuring the HDP stack, perfom the following steps:

- Open the terminal window of the server designated to be the Ambari Management Server and replace the

HDP 2.1.GlusterFS repoinfo.xmlfile by theHDP 2.1 repoinfo.xmlfile.cp /var/lib/ambari-server/resources/stacks/HDP/2.1/repos/repoinfo.xml /var/lib/ambari-server/resources/stacks/HDP/2.1.GlusterFS/repos/

You will be prompted to overwrite/2.1.GlusterFS/repos/repoinfo.xmlfile, typeyesto overwrite the file. - Restart the Ambari Server.

# ambari-server restart

Important

HDFS as the storage selection in the HDP 2.1.GlusterFS stack is not supported. If you want to deploy HDFS, then you must select the HDP 2.1 stack (not HDP 2.1.GlusterFS) and follow the instructions of the Hortonworks documentation.

- Launch a web browser and enter

http://hostname:8080in the URL by replacing hostname with the hostname of your Ambari Management Server.Note

If the Ambari Console fails to load in the browser, it is usually because iptables is still running. Stop iptables by opening a terminal window and runservice iptables stopcommand. - Enter

adminandadminfor the username and password. - Assign a name to your cluster, such as

MyCluster. - Select the

HDP 2.1 GlusterFS Stack(if not already selected by default) and clickNext. - On the

Install Optionsscreen:- For

Target Hosts, add the YARN server and all the nodes in the trusted storage pool. - Select

Provide your SSH Private Key to automatically register hostsand provide your Ambari Server private key that was used to set up passwordless-SSH across the cluster. - Click

Register and Confirmbutton. It may take a while for this process to complete.

- For

Confirm Hosts, it may take awhile for all the hosts to be confirmed.- After this process is complete, you can ignore any warnings from the Host Check related to File and Folder Issues, Package Issues and User Issues as these are related to customizations that are required for Red Hat Storage.

- Click

Nextand ignore the Confirmation Warning.

- For

Choose Services, unselect HDFS and as a minimum select GlusterFS, Ganglia, YARN+MapReduce2, ZooKeeper and Tez.Note

- The use of Storm and Falcon have not been extensively tested and as yet are not supported.

- Do not select the Nagios service, as it is not supported. For more information, see subsection 21.1. Deployment Scenarios of chapter 21. Administering the Hortonworks Data Platform on Red Hat Storage in the Red Hat Storage 3.0 Administration Guide.

- This section describes how to deploy HDP on Red Hat Storage. Selecting

HDFSas the storage selection in the HDP 2.1 GlusterFS stack is not supported. If users wish to deploy HDFS, then they must select the HDP 2.1 (not HDP 2.1.GlusterFS) and follow the instructions in the Hortonworks documentation.

- For

Assign Masters, set all the services to your designated YARN Master Server.- For ZooKeeper, select your YARN Master Server and at least 2 additional servers within your cluster.

- Click

Nextto proceed.

- For

Assign Slaves and Clients, select all the nodes asNodeManagersexcept the YARN Master Server.- Click

Clientcheckbox for each selected node. - Click

Nextto proceed.

- On the

Customize Servicesscreen:- Click YARN tab, scroll down to the yarn.nodemanager.log-dirs and yarn.nodemanager.local-dirs properties and remove any entries that begin with

/mnt/glusterfs/.Important

New Red Hat Storage and Hadoop Clusters use the naming convention of/mnt/glusterfs/volnameas the mount point for Red Hat Storage volumes. If you have existing Red Hat Storage volumes that has been created with different mount points, then remove the entries of those mount points. - Update the following property on the YARN tab - Application Timeline Server section:

Key Value yarn.timeline-service.leveldb-timeline-store.path /tmp/hadoop/yarn/timeline - Review other tabs that are highlighted in red. These require you to enter additional information, such as passwords for the respective services.

- On the

Reviewscreen, review your configuration and then clickDeploybutton. - On the

Summaryscreen, click theCompletebutton and ignore any warnings and the Starting Services failed statement. This is normal as there is still some addition configuration that is required before we can start the services. - Click

Nextto proceed to the Ambari Dashboard. Select the YARN service on the top left and clickStop-All. Do not clickStart-Alluntil you perform the steps in section Section 8.5, “Verifying the Configuration”.

8.2.5. Enabling Existing Volumes for use with Hadoop

Important

create_vol.sh script, you must follow the steps listed in this section as well.

enable_vol.sh script below to validate the volume's setup and to update Hadoop's core-site.xml configuration file which makes the volume accessible to Hadoop.

create_vol.sh script, it is important to ensure that both the bricks and the volumes that you intend to use are properly mounted and configured. If they are not, the enable_vol.sh script will throw an exception and not run. Perform the following steps to mount and configure bricks and volumes with required parameters on all storage servers:

- Bricks need to be an XFS formatted logical volume and mounted with the

noatimeandinode64parameters. For example, if we assume the logical volume path is/dev/rhs_vg1/rhs_lv1and that path is being mounted on/mnt/brick1then the/etc/fstabentry for the mount point should look as follows:/dev/rhs_vg1/rhs_lv1 /mnt/brick1 xfs noatime,inode64 0 0

- Volumes must be mounted with the

entry-timeout=0,attribute-timeout=0,use-readdirp=no,_netdevsettings. Assuming your volume name isHadoopVol, the server's FQDN isrhs-1.hdpand your intended mount point for the volume is/mnt/glusterfs/HadoopVolthen the/etc/fstabentry for the mount point of the volume must be as follows:rhs-1.hdp:/HadoopVol /mnt/glusterfs/HadoopVol glusterfs entry-timeout=0,attribute-timeout=0,use-readdirp=no,_netdev 0 0

Volumes that are to be used with Hadoop also need to have specific volume level parameters set on them. In order to set these, shell into a node within the appropriate volume's trusted storage pool and run the following commands (the examples assume the volume name is HadoopVol):# gluster volume set HadoopVol performance.stat-prefetch off # gluster volume set HadoopVol cluster.eager-lock on # gluster volume set HadoopVol performance.quick-read off

- Perform the following to create several Hadoop directories on that volume:

- Open the terminal window of one of the Red Hat Storage nodes in the trusted storage pool and navigate to the

/usr/share/rhs-hadoop-installdirectory. - Run the

bin/add_dirs.sh volume-mount-dir list-of-directories, where volume-mount-dir is the path name for the glusterfs-fuse mount of the volume you intend to enable for Hadoop (including the name of the volume) and list-of-directories is the list generated by runningbin/gen_dirs.sh -dscript. For example:# bin/add_dirs.sh /mnt/glusterfs/HadoopVol $(bin/gen_dirs.sh -d)

enable_vol.sh script.

default volume, which is the volume used when input and/or output URIs are unqualified. Unqualified URIs are common in Hadoop jobs, so defining the default volume, which can be set by enable_vol.sh script, is important. The default volume is the first volume appearing in the fs.glusterfs.volume property in the /etc/hadoop/conf/core-site.xml configuration file. The enable_vol.sh supports the --make-default option which, if specified, causes the supplied volume to be pre-pended to the above property, and thus, become the default volume. The default behavior for enable_vol.sh is to not make the target volume the default volume, meaning the volume name is appended, rather than prepended, to the above property value.

--user and --pass options are required for the enable_vol.sh script to login into Ambari instance of the cluster to reconfigure Red Hat Storage volume related configuration.

Note

enable_vol script for the first time, you must ensure to specify the --make-default option.

- Open the terminal window of the server designated to be the Ambari Management Server and navigate to the

/usr/share/rhs-hadoop-install/directory. - Run the Hadoop Trusted Storage pool configuration script as given below:

# enable_vol.sh [-y] [--make-default] [--hadoop-mgmt-node node] [--user admin-user] [--pass admin-password] [--port mgmt-port-num] [--yarn-master yarn-node] [--rhs-node storage-node] volName

For Example;# enable_vol.sh --yarn-master yarn.hdp --rhs-node rhs-1.hdp HadoopVol --make-default

Note

If --yarn-master and/or --rhs-node options are omitted then the default of localhost (the node from which the script is being executed) is assumed. Example:./enable_vol.sh --yarn-master yarn.hdp --rhs-node rhs-1.hdp HadoopVol --make-default

If this is the first time you are runningenable_volscript, you will see a warningWARN: Cannot find configured default volume on node: rhs-1.hdp: "fs.glusterfs.volumes" property value is missing from /etc/hadoop/conf/core-site.xml. This is normal and the system will proceed to set the volume you are enabling as the default volume. You will not see this message when subsequently enabling additional volume.

8.3. Adding and Removing Users

# useradd -u 1005 -g hadoop tom

Note

min.user.id value in the /etc/hadoop/conf/container-executor.cfg file on every Red Hat Storage server that is running a NodeManager.

HadoopVol according to the examples given in installation instructions.

# mkdir /mnt/glusterfs/HadoopVol/user/<username> # chown <username>:hadoop /mnt/glusterfs/HadoopVol/user/<username> # chmod 0755 /mnt/glusterfs/HadoopVol/user/<username>

To disable a user from submitting Hadoop Jobs, remove the user from the Hadoop group.

8.4. Disabling a Volume for use with Hadoop

enable_vol.sh script.

enable_vol.sh script, see Section 8.2.5, “Enabling Existing Volumes for use with Hadoop”.

/etc/hadoop/conf/core-site.xml file. Specifically, the volume's name is removed from the fs.glusterfs.volumes property list, and the fs.glusterfs.volume.fuse.volname property is deleted. All Ambari services are automatically restarted.

- Open the terminal window of the server designated to be the Ambari Management Server and navigate to the

/usr/share/rhs-hadoop-install/directory. - Run the Hadoop cluster configuration script as shown below:

disable_vol.sh [-y] [--hadoop-mgmt-node node] [--user admin-user] [--pass admin-password] [--port mgmt-port-num] --yarn-master node [--rhs-node storage-node] volname

For example,disable_vol.sh --rhs-node rhs-1.hdp --yarn-master yarn.hdp HadoopVol

8.5. Verifying the Configuration

# chown -R yarn:hadoop /mnt/brick1/hadoop/yarn/ # chmod -R 0755 /mnt/brick1/hadoop/yarn/

Note

/usr/lib/hadoop/ directory. Then su to one of the users you have enabled for Hadoop (such as tom) and submit a Hadoop Job:

# su tom # cd /usr/lib/hadoop # bin/hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples-2.4.0.2.1.7.0-784.jar teragen 1000 inTeraGen only generates data. TeraSort reads and sorts the output of TeraGen. In order to fully test the cluster is operational, one needs to run TeraSort as well.

# bin/hadoop jar /usr/lib/hadoop-mapreduce/hadoop-mapreduce-examples-2.4.0.2.1.7.0-784.jar terasort in out

8.6. Troubleshooting

This is due to a bug caused by Ambari expecting a local hadoop group on an LDAP enabled cluster. Due to the fact the users and groups are centrally managed with LDAP, Ambari is not able to find the group. In order to resolve this issue:

- Shell into the Ambari Server and navigate to

/var/lib/ambari-server/resources/scripts - Replace the $AMBARI-SERVER-FQDN with the FQDN of your Ambari Server and the $AMBARI-CLUSTER-NAME with the cluster name that you specified for your cluster within Ambari and run the following command:

./configs.sh set $AMBARI-SERVER-FQDN $AMBARI-CLUSTER-NAME global ignore_groupsusers_create "true"

- In the Ambari console, click

Retryin theCluster Installation Wizard.

This is due to a permissions bug in WebHCAT. In order to start the service, it must be restarted multiple times and requires several file permissions to be changed. To resolve this issue, begin by starting the service. After each start attempt, WebHCAT will attempt to copy a different jar with root permissions. Every time it does this you need to chmod 755 the jar file in /mnt/glusterfs/HadoopVolumeName/apps/webhcat. The three files it copies to this directory are hadoop-streaming-2.4.0.2.1.5.0-648.jar, HDP-webhcat/hive.tar.gz and HDP-webhcat/pig.tar.gz. After you have set the permissions on all three files, the service will start and be operational on the fourth attempt.

This error occurs if the clocks are not synchronized across the trusted storage pool. The time in all the servers must be uniform in the trusted storage pool. It is recommended to set up a NTP (Network Time Protocol) service to keep the bricks' time synchronized, and avoid out-of-time synchronization effects.

This error occurs when the user IDs(UID) and group IDs(GID) are not consistent across the trusted storage pool. For example, user "tom" has a UID of 1002 on server1, but on server2, the user tom has a UID of 1003. The simplest and recommended approach is to leverage LDAP authentication to resolve this issue. After creating the necessary users and groups on an LDAP server, the servers within the trusted storage pool can be configured to use the LDAP server for authentication. For more information on configuring authentication, see Chapter 12. Configuring Authentication of Red Hat Enterprise Linux 6 Deployment Guide.

Chapter 9. Setting up Software Updates

Note

- Asynchronous errata update releases of Red Hat Storage include all fixes that were released asynchronously since the last release as a cumulative update.

- When there are large number of snapshots, ensure to deactivate the snapshots before performing an upgrade. The snapshots can be activated after the upgrade is complete. For more information, see Chapter 4.1 Starting and Stopping the glusterd service in the Red Hat Storage 3 Administration Guide.

9.1. Updating Red Hat Storage in the Offline Mode

Important

- Take a backup of the bricks using reliable backup solution.

- Delete this Logical Volume (LV) and recreate a new thinly provisioned LV. Fore more information, see https://access.redhat.com/site/documentation/en-US/Red_Hat_Enterprise_Linux/6/html/Logical_Volume_Manager_Administration/thinprovisioned_volumes.html

- Restore the content to the newly created thinly provisioned LV.

# yum update

glusterd management deamon. The glusterfs server processes, glusterfsd is not restarted by default since restarting this daemon affects the active read and write operations.

# gluster volume stop volname # gluster volume start volname

Important

# gluster volume set all cluster.op-version 30000

9.2. In-Service Software Upgrade to Upgrade from Red Hat Storage 2.1 to Red Hat Storage 3.0

9.2.1. Pre-upgrade Tasks

9.2.1.1. Upgrade Requirements for Red Hat Storage 3.0

- In-service software upgrade is supported only for nodes with replicate and distributed replicate volumes.

- Each brick must be independent thinly provisioned logical volume(LV).

- The Logical Volume which contains the brick must not be used for any other purpose.

- Only linear LVM is supported with Red Hat Storage 3.0. For more information, see https://access.redhat.com/site/documentation/en-US/Red_Hat_Enterprise_Linux/4/html-single/Cluster_Logical_Volume_Manager/#lv_overview

- Recommended SetupIn addition to the following list, you must ensure to read Chapter 9 Configuring Red Hat Storage for Enhancing Performance in the Red Hat Storage 3.0 Administration Guide for enhancing performance:

- For each brick, create a dedicated thin pool that contains the brick of the volume and its (thin) brick snapshots. With the current thinly provisioned volume design, avoid placing the bricks of different gluster volumes in the same thin pool.

- The recommended thin pool chunk size is 256KB. There might be exceptions to this in cases where we have a detailed information of the customer's workload.

- The recommended pool metadata size is 0.1% of the thin pool size for a chunk size of 1MB or larger. In special cases, where we recommend a chunk size less than 256KB, use a pool metadata size of 0.5% of thin pool size.

- When server-side quorum is enabled, ensure that bringing one node down does not violate server-side quorum. Add dummy peers to ensure the server-side quorum will not be violated until the completion of rolling upgrade using the following command:

# gluster peer probe DummyNodeName

For Example 1When the server-side quorum percentage is set to the default value (>50%), for a plain replicate volume with two nodes and one brick on each machine, a dummy node which does not contain any bricks must be added to the trusted storage pool to provide high availability of the volume using the command mentioned above.

For Example 2In a three node cluster, if the server-side quorum percentage is set to 77%, then bringing down one node would violate the server-side quorum. In this scenario, you have to add two dummy nodes to meet server-side quorum.

- If the client-side quorum is enabled then, run the following command to disable the client-side quorum:

# gluster volume reset <vol-name> cluster.quorum-type

Note

When the client-side quorum is disabled, there are chances that the files might go into split-brain. - If there are any geo-replication sessions running between the master and slave, then stop this session by executing the following command:

# gluster volume geo-replication MASTER_VOL SLAVE_HOST::SLAVE_VOL stop

- Ensure the Red Hat Storage server is registered to the required channels:

rhel-x86_64-server-6 rhel-x86_64-server-6-rhs-3 rhel-x86_64-server-sfs-6

To subscribe to the channels, run the following command:# rhn-channel --add --channel=<channel>

9.2.1.2. Restrictions for In-Service Software Upgrade

- Do not perform in-service software upgrade when the I/O or load is high on the Red Hat Storage server.

- Do not perform any volume operations on the Red Hat Storage server

- Do not change the hardware configurations.

- Do not run mixed versions of Red Hat Storage for an extended period of time. For example, do not have a mixed environment of Red Hat Storage 2.1 and Red Hat Storage 2.1 Update 1 for a prolonged time.

- Do not combine different upgrade methods.

- It is not recommended to use in-service software upgrade for migrating to thinly provisioned volumes, but to use offline upgrade scenario instead. For more information see, Section 9.1, “Updating Red Hat Storage in the Offline Mode”

9.2.1.3. Configuring repo for Upgrading using ISO

Note

- Mount the ISO image file under any directory using the following command:

mount -o loop <ISO image file> <mount-point>

For example:mount -o loop RHSS-2.1U1-RC3-20131122.0-RHS-x86_64-DVD1.iso /mnt

- Set the repo options in a file in the following location:

/etc/yum.repos.d/<file_name.repo>

- Add the following information to the repo file:

[local] name=local baseurl=file:///mnt enabled=1 gpgcheck=0

9.2.1.4. Preparing and Monitoring the Upgrade Activity

- Check the peer status using the following commad:

# gluster peer status

For example:# gluster peer status Number of Peers: 2 Hostname: 10.70.42.237 Uuid: 04320de4-dc17-48ff-9ec1-557499120a43 State: Peer in Cluster (Connected) Hostname: 10.70.43.148 Uuid: 58fc51ab-3ad9-41e1-bb6a-3efd4591c297 State: Peer in Cluster (Connected)

- Check the volume status using the following command:

# gluster volume status

For example:# gluster volume status Status of volume: r2 Gluster process Port Online Pid ------------------------------------------------------------------------------ Brick 10.70.43.198:/brick/r2_0 49152 Y 32259 Brick 10.70.42.237:/brick/r2_1 49152 Y 25266 Brick 10.70.43.148:/brick/r2_2 49154 Y 2857 Brick 10.70.43.198:/brick/r2_3 49153 Y 32270 NFS Server on localhost 2049 Y 25280 Self-heal Daemon on localhost N/A Y 25284 NFS Server on 10.70.43.148 2049 Y 2871 Self-heal Daemon on 10.70.43.148 N/A Y 2875 NFS Server on 10.70.43.198 2049 Y 32284 Self-heal Daemon on 10.70.43.198 N/A Y 32288 Task Status of Volume r2 ------------------------------------------------------------------------------ There are no active volume tasks

- Check the rebalance status using the following command:

# gluster volume rebalance r2 status Node Rebalanced-files size scanned failures skipped status run time in secs --------- ----------- --------- -------- --------- ------ -------- -------------- 10.70.43.198 0 0Bytes 99 0 0 completed 1.00 10.70.43.148 49 196Bytes 100 0 0 completed 3.00

- Ensure that there are no pending self-heals before proceeding with in-service software upgrade using the following command:

# gluster volume heal volname info

The following example shows a self-heal completion:# gluster volume heal drvol info Gathering list of entries to be healed on volume drvol has been successful Brick 10.70.37.51:/rhs/brick1/dir1 Number of entries: 0 Brick 10.70.37.78:/rhs/brick1/dir1 Number of entries: 0 Brick 10.70.37.51:/rhs/brick2/dir2 Number of entries: 0 Brick 10.70.37.78:/rhs/brick2/dir2 Number of entries: 0

9.2.2. Upgrade Process with Service Impact

ReST requests that are in transition will fail during in-service software upgrade. Hence it is recommended to stop all swift services before in-service software upgrade using the following commands:

# service openstack-swift-proxy stop # service openstack-swift-account stop # service openstack-swift-container stop # service openstack-swift-object stop

When you NFS mount a volume, any new or outstanding file operations on that file system will hang uninterruptedly during in-service software upgrade until the server is upgraded.

Ongoing I/O on Samba shares will fail as the Samba shares will be temporarily unavailable during the in-service software upgrade, hence it is recommended to stop the Samba service using the following command:

# service ctdb stop ;Stopping CTDB will also stop the SMB service.

In-service software upgrade is not supported for distributed volume. In case you have a distributed volume in the cluster, stop that volume using the following command:

# gluster volume stop <VOLNAME>

# gluster volume start <VOLNAME>

The virtual machine images are likely to be modified constantly. The virtual machine listed in the output of the volume heal command does not imply that the self-heal of the virtual machine is incomplete. It could mean that the modifications on the virtual machine are happening constantly.

9.2.3. In-Service Software Upgrade

- Back up the following configuration directory and files on the backup directory:

/var/lib/glusterd, /etc/swift, /etc/samba, /etc/ctdb, /etc/glusterfs, /var/lib/samba, /var/lib/ctdb# cp -a /var/lib/glusterd /backup-disk/ # cp -a /etc/swift /backup-disk/ # cp -a /etc/samba /backup-disk/ # cp -a /etc/ctdb /backup-disk/ # cp -a /etc/glusterfs /backup-disk/ # cp -a /var/lib/samba /backup-disk/ # cp -a /var/lib/ctdb /backup-disk/

Note

- If you have a CTDB environment, then to upgrade to Red Hat Storage 3.0, see Section 9.2.4.1, “In-Service Software Upgrade for a CTDB Setup”.

- Stop the gluster services on the storage server using the following commands:

# service glusterd stop # pkill glusterfs # pkill glusterfsd

- To check the system's current subscription status run the following command:

# migrate-rhs-classic-to-rhsm --status

- Install the required packages using the following command:

# yum install subscription-manager-migration # yum install subscription-manager-migration-data

- Execute the following command to migrate from Red Hat Network Classic to Red Hat Subscription Manager

# migrate-rhs-classic-to-rhsm --rhn-to-rhsm

- To enable the Red Hat Storage 3.0 repos, execute the following command:

# migrate-rhs-classic-to-rhsm --upgrade --version 3

- To verify if the migration from Red Hat Network Classic to Red Hat Subscription Manager is successful, execute the following command:

# migrate-rhs-classic-to-rhsm --status

- Update the server using the following command:

# yum update

- If the volumes are thickly provisioned, then perform the following steps to migrate to thinly provisioned volumes:

Note

Migrating from thickly provisioned volume to thinly provisioned volume during in-service-software-upgrade takes a significant amount of time based on the data you have in the bricks. You must migrate only if you plan on using snapshots for your existing environment and plan to be online during the upgrade. If you do not plan to use snapshots, you can skip the migration steps from LVM to thinp. However, if you plan to use snapshots on your existing environment, the offline method to upgrade is recommended. For more information regarding offline upgrade, see Section 9.1, “Updating Red Hat Storage in the Offline Mode”Contact a Red Hat Support representative before migrating from thickly provisioned volumes to thinly provisioned volumes using in-service software upgrade.- Unmount all the bricks associated with the volume by executing the following command:

# umount mount point

For example:# umount /dev/RHS_vg/brick1

- Remove the LVM associated with the brick by executing the following command:

# lvremove logical_volume_name

For example:# lvremove /dev/RHS_vg/brick1

- Remove the volume group by executing the following command:

# vgremove -ff volume_group_name

For example:vgremove -ff RHS_vg

- Remove the physical volume by executing the following command:

# pvremove -ff physical_volume

- If the physical volume(PV) not created then create the PV for a RAID 6 volume by executing the following command, else proceed with the next step:

# pvcreate --dataalignment 2560K /dev/vdb

For more information refer Section 12.1 Prerequisites in the Red Hat Storage 3 Administration Guide - Create a single volume group from the PV by executing the following command:

# vgcreate volume_group_name disk

For example:vgcreate RHS_vg /dev/vdb

- Create a thinpool using the following command:

# lvcreate -L size --poolmetadatasize md size --chunksize chunk size -T pool device

For example:lvcreate -L 2T --poolmetadatasize 16G --chunksize 256 -T /dev/RHS_vg/thin_pool

- Create a thin volume from the pool by executing the following command:

# lvcreate -V size -T pool device -n thinp

For example:lvcreate -V 1.5T -T /dev/RHS_vg/thin_pool -n thin_vol

- Create filesystem in the new volume by executing the following command:

mkfs.xfs -i size=512 thin pool device

For example:mkfs.xfs -i size=512 /dev/RHS_vg/thin_vol