Manage Red Hat Quay

Abstract

Preface

Once you have deployed a Red Hat Quay registry, there are many ways you can further configure and manage that deployment. Topics covered here include:

- Advanced Red Hat Quay configuration

- Setting notifications to alert you of a new Red Hat Quay release

- Securing connections with SSL/TLS certificates

- Directing action logs storage to Elasticsearch

- Configuring image security scanning with Clair

- Scan pod images with the Container Security Operator

- Integrate Red Hat Quay into OpenShift Container Platform with the Quay Bridge Operator

- Mirroring images with repository mirroring

- Sharing Red Hat Quay images with a BitTorrent service

- Authenticating users with LDAP

- Enabling Quay for Prometheus and Grafana metrics

- Setting up geo-replication

- Troubleshooting Red Hat Quay

For a complete list of Red Hat Quay configuration fields, see the Configure Red Hat Quay page.

Chapter 1. Advanced Red Hat Quay configuration

You can configure your Red Hat Quay after initial deployment using one of the following methods:

- Editing the config.yaml file. The config.yaml file contains most configuration information for the Red Hat Quay cluster. Editing the config.yaml file directly is the primary method for advanced tuning and enabling specific features.

- Using the Red Hat Quay API. Some Red Hat Quay features can be configured through the API.

The content in this section describes how to use each of these interfaces and how to configure your deployment with advanced features.

1.1. Using the API to modify Red Hat Quay

See the Red Hat Quay API Guide for information on how to access Red Hat Quay API.

1.2. Editing the config.yaml file to modify Red Hat Quay

Advanced features can be implemented by editing the config.yaml file directly. All configuration fields for Red Hat Quay features and settings are available in the Red Hat Quay configuration guide.

The following example is one setting that you can change directly in the config.yaml file. Use this example as a reference when editing your config.yaml file for other features and settings.

1.2.1. Adding name and company to Red Hat Quay sign-in

By setting the FEATURE_USER_METADATA field to true, users are prompted for their name and company when they first sign in. This is an optional field, but it can provide you with extra data about your Red Hat Quay users.

Use the following procedure to add a name and a company to the Red Hat Quay sign-in page.

Procedure

- Add, or set, the FEATURE_USER_METADATA configuration field to true in your config.yaml file. For example:

# ...
FEATURE_USER_METADATA: true
# ...

- Redeploy Red Hat Quay.

Now, when prompted to log in, users are requested to enter their name and company.
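The edit to config.yaml can also be scripted. The following is a minimal sketch, not part of the Red Hat Quay tooling: the helper name set_top_level_field is hypothetical, and it assumes the field is a simple one-line scalar at the top level of the file. It works on text only, so review the result before redeploying.

```python
import re


def set_top_level_field(yaml_text: str, key: str, value: str) -> str:
    """Set `key: value` at the top level of a YAML document given as text.

    Hypothetical helper: replaces the existing un-indented line for `key`
    if there is one, otherwise appends a new line at the end.
    """
    pattern = re.compile(rf"^{re.escape(key)}\s*:.*$", re.MULTILINE)
    new_line = f"{key}: {value}"
    if pattern.search(yaml_text):
        # A lambda avoids any backslash processing in the replacement text.
        return pattern.sub(lambda _m: new_line, yaml_text, count=1)
    if yaml_text and not yaml_text.endswith("\n"):
        yaml_text += "\n"
    return yaml_text + new_line + "\n"


example = "SERVER_HOSTNAME: quay-server.example.com\nFEATURE_USER_METADATA: false\n"
print(set_top_level_field(example, "FEATURE_USER_METADATA", "true"))
```

Nested or multi-line values are out of scope for this sketch; for those, edit the file by hand or use a YAML library.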

Chapter 2. Using the configuration API

The configuration tool exposes 4 endpoints that can be used to build, validate, bundle and deploy a configuration. The config-tool API is documented at https://github.com/quay/config-tool/blob/master/pkg/lib/editor/API.md. In this section, you will see how to use the API to retrieve the current configuration and how to validate any changes you make.

2.1. Retrieving the default configuration

If you are running the configuration tool for the first time, and do not have an existing configuration, you can retrieve the default configuration. Start the container in config mode:

$ sudo podman run --rm -it --name quay_config \
  -p 8080:8080 \
  registry.redhat.io/quay/quay-rhel8:v3.11.0 config secret

Use the config endpoint of the configuration API to get the default:

$ curl -X GET -u quayconfig:secret http://quay-server:8080/api/v1/config | jq

The value returned is the default configuration in JSON format:

{

"config.yaml": {

"AUTHENTICATION_TYPE": "Database",

"AVATAR_KIND": "local",

"DB_CONNECTION_ARGS": {

"autorollback": true,

"threadlocals": true

},

"DEFAULT_TAG_EXPIRATION": "2w",

"EXTERNAL_TLS_TERMINATION": false,

"FEATURE_ACTION_LOG_ROTATION": false,

"FEATURE_ANONYMOUS_ACCESS": true,

"FEATURE_APP_SPECIFIC_TOKENS": true,

....

}

}

2.2. Retrieving the current configuration

If you have already configured and deployed the Quay registry, stop the container and restart it in configuration mode, loading the existing configuration as a volume:

$ sudo podman run --rm -it --name quay_config \
  -p 8080:8080 \
  -v $QUAY/config:/conf/stack:Z \
  registry.redhat.io/quay/quay-rhel8:v3.11.0 config secret

Use the config endpoint of the API to get the current configuration:

$ curl -X GET -u quayconfig:secret http://quay-server:8080/api/v1/config | jq

The value returned is the current configuration in JSON format, including database and Redis configuration data:

{

"config.yaml": {

....

"BROWSER_API_CALLS_XHR_ONLY": false,

"BUILDLOGS_REDIS": {

"host": "quay-server",

"password": "strongpassword",

"port": 6379

},

"DATABASE_SECRET_KEY": "4b1c5663-88c6-47ac-b4a8-bb594660f08b",

"DB_CONNECTION_ARGS": {

"autorollback": true,

"threadlocals": true

},

"DB_URI": "postgresql://quayuser:quaypass@quay-server:5432/quay",

"DEFAULT_TAG_EXPIRATION": "2w",

....

}

}

2.3. Validating configuration using the API

You can validate a configuration by posting it to the config/validate endpoint:

curl -u quayconfig:secret --header 'Content-Type: application/json' --request POST --data '

{

"config.yaml": {

....

"BROWSER_API_CALLS_XHR_ONLY": false,

"BUILDLOGS_REDIS": {

"host": "quay-server",

"password": "strongpassword",

"port": 6379

},

"DATABASE_SECRET_KEY": "4b1c5663-88c6-47ac-b4a8-bb594660f08b",

"DB_CONNECTION_ARGS": {

"autorollback": true,

"threadlocals": true

},

"DB_URI": "postgresql://quayuser:quaypass@quay-server:5432/quay",

"DEFAULT_TAG_EXPIRATION": "2w",

....

}

}' http://quay-server:8080/api/v1/config/validate | jq

The returned value is an array containing the errors found in the configuration. If the configuration is valid, an empty array [] is returned.
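The same validation call can be made from a script. The sketch below uses only the Python standard library and assumes the quayconfig:secret credentials and the quay-server:8080 address used in the examples above. The function names are hypothetical. It also assumes that each returned error is an object with FieldGroup and Message keys, as in the sample output in section 2.4.

```python
import base64
import json
import urllib.request


def build_validate_request(base_url, config, user="quayconfig", password="secret"):
    """Build (but do not send) a POST request for the config/validate endpoint."""
    body = json.dumps({"config.yaml": config}).encode("utf-8")
    token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        url=f"{base_url}/api/v1/config/validate",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Basic {token}",
        },
    )


def summarize_errors(errors):
    """Group validation messages by FieldGroup; an empty dict means valid."""
    summary = {}
    for err in errors:
        summary.setdefault(err["FieldGroup"], []).append(err["Message"])
    return summary


def validate(base_url, config):
    """Send the request and return the decoded error array.

    Requires a configuration tool container that is running and reachable.
    """
    with urllib.request.urlopen(build_validate_request(base_url, config)) as resp:
        return json.load(resp)
```

With the configuration tool running, summarize_errors(validate("http://quay-server:8080", {})) would list the missing required fields per field group.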

2.4. Determining the required fields

You can determine the required fields by posting an empty configuration structure to the config/validate endpoint:

curl -u quayconfig:secret --header 'Content-Type: application/json' --request POST --data '

{

"config.yaml": {

}

}' http://quay-server:8080/api/v1/config/validate | jq

The value returned is an array indicating which fields are required:

[

{

"FieldGroup": "Database",

"Tags": [

"DB_URI"

],

"Message": "DB_URI is required."

},

{

"FieldGroup": "DistributedStorage",

"Tags": [

"DISTRIBUTED_STORAGE_CONFIG"

],

"Message": "DISTRIBUTED_STORAGE_CONFIG must contain at least one storage location."

},

{

"FieldGroup": "HostSettings",

"Tags": [

"SERVER_HOSTNAME"

],

"Message": "SERVER_HOSTNAME is required"

},

{

"FieldGroup": "HostSettings",

"Tags": [

"SERVER_HOSTNAME"

],

"Message": "SERVER_HOSTNAME must be of type Hostname"

},

{

"FieldGroup": "Redis",

"Tags": [

"BUILDLOGS_REDIS"

],

"Message": "BUILDLOGS_REDIS is required"

}

]

Chapter 3. Getting Red Hat Quay release notifications

To keep up with the latest Red Hat Quay releases and other changes related to Red Hat Quay, you can sign up for update notifications on the Red Hat Customer Portal. After signing up, you receive notifications when there is a new Red Hat Quay version, updated documentation, or other Red Hat Quay news.

- Log into the Red Hat Customer Portal with your Red Hat customer account credentials.

- Select your user name (upper-right corner) to see Red Hat Account and Customer Portal selections.

- Select Notifications. Your profile activity page appears.

- Select the Notifications tab.

- Select Manage Notifications.

- Select Follow, then choose Products from the drop-down box.

- From the drop-down box next to the Products, search for and select Red Hat Quay.

- Select the SAVE NOTIFICATION button. Going forward, you will receive notifications when there are changes to the Red Hat Quay product, such as a new release.

Chapter 4. Using SSL to protect connections to Red Hat Quay

4.1. Using SSL/TLS

To configure Red Hat Quay with a self-signed certificate, you must create a Certificate Authority (CA), and then generate a certificate file named ssl.cert and a private key file named ssl.key.

The following examples assume that you have configured the server hostname quay-server.example.com using DNS or another naming mechanism, such as adding an entry in your /etc/hosts file. For more information, see "Configuring port mapping for Red Hat Quay".

4.2. Creating a Certificate Authority

Use the following procedure to create a Certificate Authority (CA).

Procedure

Generate the root CA key by entering the following command:

$ openssl genrsa -out rootCA.key 2048

Generate the root CA certificate by entering the following command:

$ openssl req -x509 -new -nodes -key rootCA.key -sha256 -days 1024 -out rootCA.pem

Enter the information that will be incorporated into your certificate request, including the server hostname, for example:

Country Name (2 letter code) [XX]:IE
State or Province Name (full name) []:GALWAY
Locality Name (eg, city) [Default City]:GALWAY
Organization Name (eg, company) [Default Company Ltd]:QUAY
Organizational Unit Name (eg, section) []:DOCS
Common Name (eg, your name or your server's hostname) []:quay-server.example.com

4.2.1. Signing the certificate

Use the following procedure to sign the certificate.

Procedure

Generate the server key by entering the following command:

$ openssl genrsa -out ssl.key 2048

Generate a signing request by entering the following command:

$ openssl req -new -key ssl.key -out ssl.csr

Enter the information that will be incorporated into your certificate request, including the server hostname, for example:

Country Name (2 letter code) [XX]:IE
State or Province Name (full name) []:GALWAY
Locality Name (eg, city) [Default City]:GALWAY
Organization Name (eg, company) [Default Company Ltd]:QUAY
Organizational Unit Name (eg, section) []:DOCS
Common Name (eg, your name or your server's hostname) []:quay-server.example.com

Create a configuration file openssl.cnf, specifying the server hostname, for example:

openssl.cnf

[req]
req_extensions = v3_req
distinguished_name = req_distinguished_name
[req_distinguished_name]
[ v3_req ]
basicConstraints = CA:FALSE
keyUsage = nonRepudiation, digitalSignature, keyEncipherment
subjectAltName = @alt_names
[alt_names]
DNS.1 = quay-server.example.com
IP.1 = 192.168.1.112

Use the configuration file to generate the certificate ssl.cert:

$ openssl x509 -req -in ssl.csr -CA rootCA.pem -CAkey rootCA.key -CAcreateserial -out ssl.cert -days 356 -extensions v3_req -extfile openssl.cnf
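If the registry is reachable under more than one hostname or IP address, the openssl.cnf file needs one numbered DNS or IP entry per name. The following is a small sketch that generates the file contents shown in this procedure. The function name build_openssl_cnf is hypothetical and the output is plain text that you would save as openssl.cnf yourself.

```python
def build_openssl_cnf(dns_names, ip_addresses=()):
    """Return openssl.cnf text with one subjectAltName entry per name or address."""
    lines = [
        "[req]",
        "req_extensions = v3_req",
        "distinguished_name = req_distinguished_name",
        "[req_distinguished_name]",
        "[ v3_req ]",
        "basicConstraints = CA:FALSE",
        "keyUsage = nonRepudiation, digitalSignature, keyEncipherment",
        "subjectAltName = @alt_names",
        "[alt_names]",
    ]
    # Entries are numbered from 1, separately for DNS names and IP addresses.
    lines += [f"DNS.{i} = {name}" for i, name in enumerate(dns_names, start=1)]
    lines += [f"IP.{i} = {ip}" for i, ip in enumerate(ip_addresses, start=1)]
    return "\n".join(lines) + "\n"


print(build_openssl_cnf(["quay-server.example.com"], ["192.168.1.112"]))
```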

4.3. Configuring SSL/TLS using the command line interface

Use the following procedure to configure SSL/TLS using the CLI.

Prerequisites

- You have created a certificate authority and signed the certificate.

Procedure

Copy the certificate file and private key file to your configuration directory, ensuring they are named ssl.cert and ssl.key respectively:

$ cp ~/ssl.cert ~/ssl.key $QUAY/config

Change into the $QUAY/config directory by entering the following command:

$ cd $QUAY/config

Edit the config.yaml file and specify that you want Red Hat Quay to handle TLS/SSL:

config.yaml

...
SERVER_HOSTNAME: quay-server.example.com
...
PREFERRED_URL_SCHEME: https
...

Optional: Append the contents of the rootCA.pem file to the end of the ssl.cert file by entering the following command:

$ cat rootCA.pem >> ssl.cert

Stop the Quay container by entering the following command:

$ sudo podman stop quay

Restart the registry by entering the following command:

$ sudo podman run -d --rm -p 80:8080 -p 443:8443 \
  --name=quay \
  -v $QUAY/config:/conf/stack:Z \
  -v $QUAY/storage:/datastorage:Z \
  registry.redhat.io/quay/quay-rhel8:v3.11.0

4.4. Configuring SSL/TLS using the Red Hat Quay UI

Use the following procedure to configure SSL/TLS using the Red Hat Quay UI.

To configure SSL/TLS using the command line interface, see "Configuring SSL/TLS using the command line interface".

Prerequisites

- You have created a certificate authority and signed a certificate.

Procedure

Start the Quay container in configuration mode:

$ sudo podman run --rm -it --name quay_config -p 80:8080 -p 443:8443 registry.redhat.io/quay/quay-rhel8:v3.11.0 config secret

- In the Server Configuration section, select Red Hat Quay handles TLS for SSL/TLS. Upload the certificate file and private key file created earlier, ensuring that the Server Hostname matches the value used when the certificates were created.

- Validate and download the updated configuration.

Stop the Quay container and then restart the registry by entering the following commands:

$ sudo podman rm -f quay
$ sudo podman run -d --rm -p 80:8080 -p 443:8443 \
  --name=quay \
  -v $QUAY/config:/conf/stack:Z \
  -v $QUAY/storage:/datastorage:Z \
  registry.redhat.io/quay/quay-rhel8:v3.11.0

4.5. Testing the SSL/TLS configuration using the CLI

Use the following procedure to test your SSL/TLS configuration using the CLI.

Procedure

Enter the following command to attempt to log in to the Red Hat Quay registry with SSL/TLS enabled:

$ sudo podman login quay-server.example.com

Example output

Error: error authenticating creds for "quay-server.example.com": error pinging docker registry quay-server.example.com: Get "https://quay-server.example.com/v2/": x509: certificate signed by unknown authority

Because Podman does not trust self-signed certificates, you must use the --tls-verify=false option:

$ sudo podman login --tls-verify=false quay-server.example.com

Example output

Login Succeeded!

In a subsequent section, you will configure Podman to trust the root Certificate Authority.

4.6. Testing the SSL/TLS configuration using a browser

Use the following procedure to test your SSL/TLS configuration using a browser.

Procedure

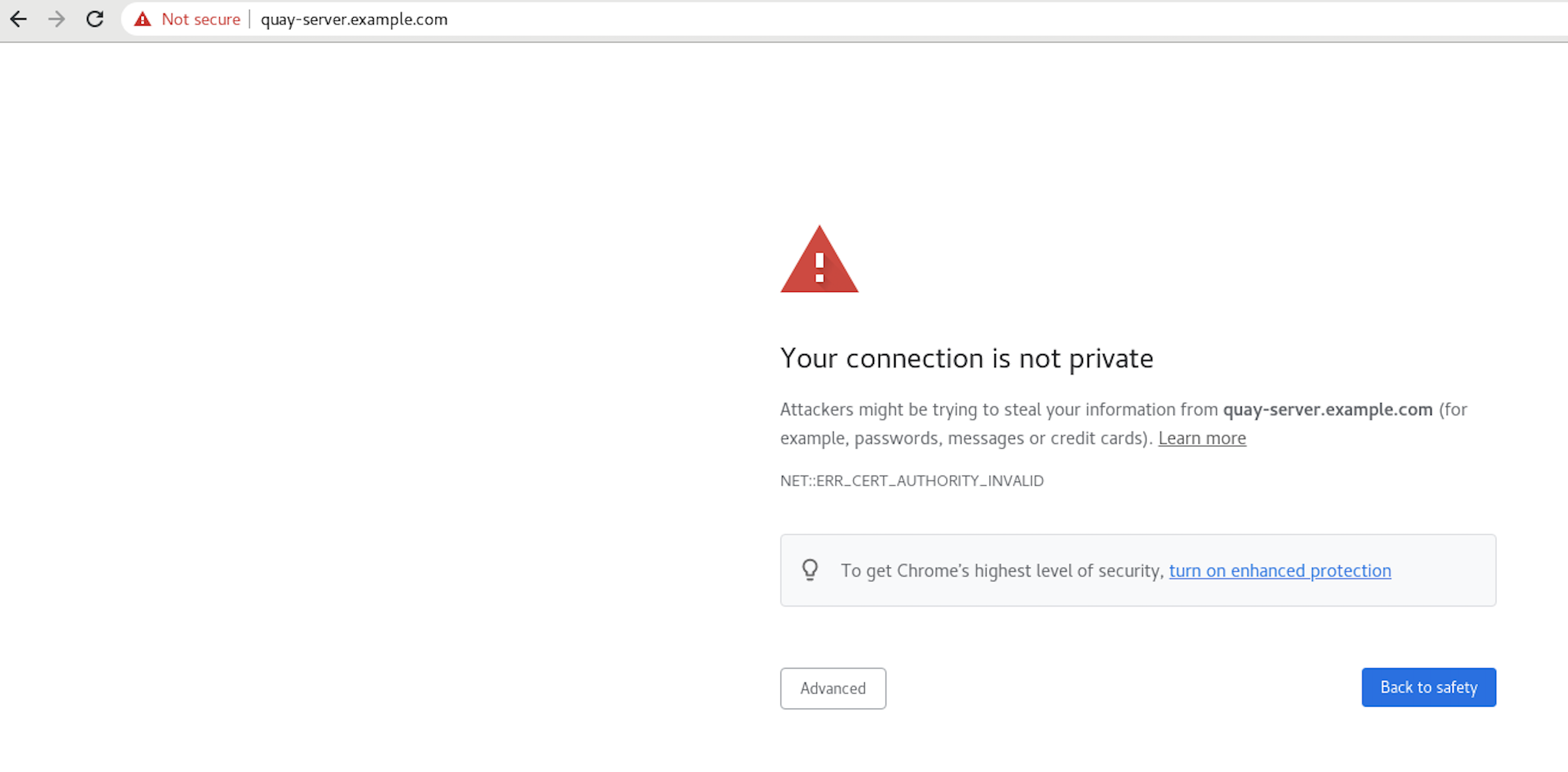

Navigate to your Red Hat Quay registry endpoint, for example,

https://quay-server.example.com. If configured correctly, the browser warns of the potential risk:

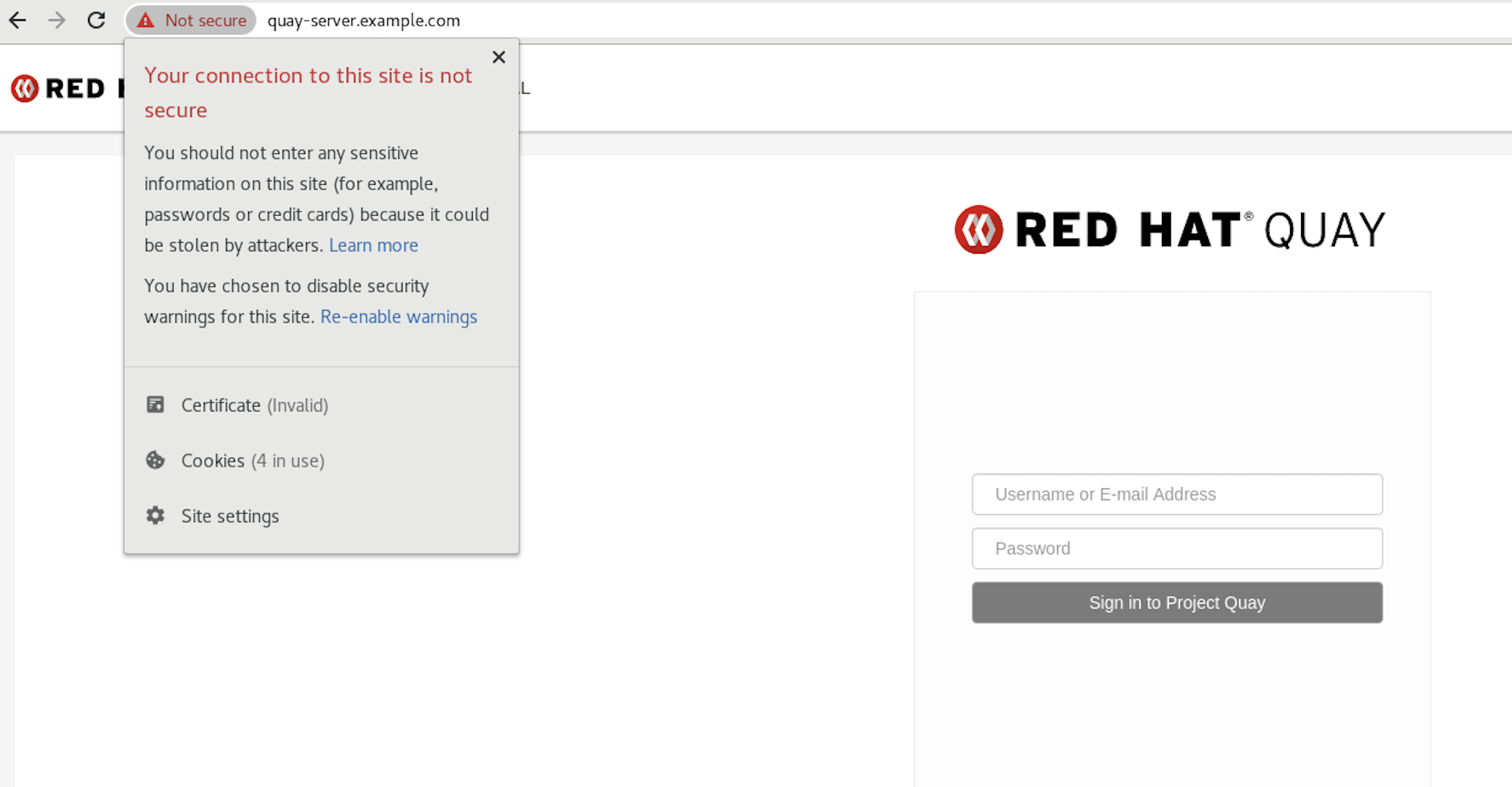

Proceed to the log in screen. The browser notifies you that the connection is not secure. For example:

In the following section, you will configure Podman to trust the root Certificate Authority.

4.7. Configuring Podman to trust the Certificate Authority

Podman uses two paths to locate the Certificate Authority (CA) file: /etc/containers/certs.d/ and /etc/docker/certs.d/. Use the following procedure to configure Podman to trust the CA.

Procedure

Copy the root CA file to one of /etc/containers/certs.d/ or /etc/docker/certs.d/. Use the exact path determined by the server hostname, and name the file ca.crt:

$ sudo cp rootCA.pem /etc/containers/certs.d/quay-server.example.com/ca.crt

Verify that you no longer need to use the --tls-verify=false option when logging in to your Red Hat Quay registry:

$ sudo podman login quay-server.example.com

Example output

Login Succeeded!
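The destination path in the copy step is derived from the registry hostname. As a rough illustration, the sketch below computes that path. The function name podman_ca_path is hypothetical, and the hostname:port directory form for registries on a non-default port is an assumption based on the containers-certs.d layout, so confirm it against your Podman version.

```python
from pathlib import PurePosixPath


def podman_ca_path(hostname, port=None, base="/etc/containers/certs.d"):
    """Return the ca.crt path that Podman checks for the given registry."""
    # Assumption: registries on a non-default port use a "hostname:port" directory.
    registry = hostname if port is None else f"{hostname}:{port}"
    return PurePosixPath(base) / registry / "ca.crt"


print(podman_ca_path("quay-server.example.com"))
```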

4.8. Configuring the system to trust the certificate authority

Use the following procedure to configure your system to trust the certificate authority.

Procedure

Enter the following command to copy the rootCA.pem file to the consolidated system-wide trust store:

$ sudo cp rootCA.pem /etc/pki/ca-trust/source/anchors/

Enter the following command to update the system-wide trust store configuration:

$ sudo update-ca-trust extract

Optional. You can use the

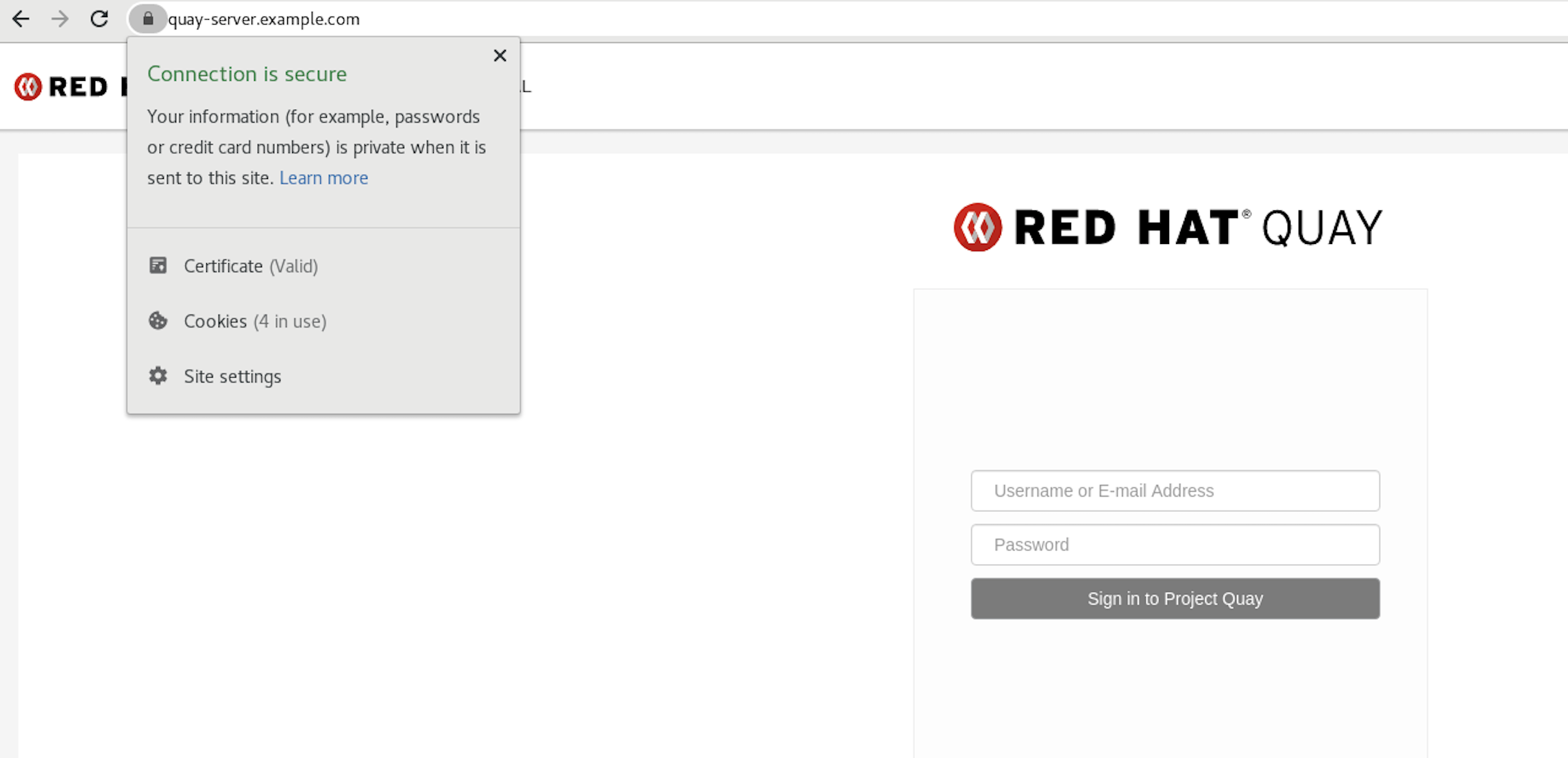

trust listcommand to ensure that theQuayserver has been configured:$ trust list | grep quay label: quay-server.example.comNow, when you browse to the registry at

https://quay-server.example.com, the lock icon shows that the connection is secure:

To remove the

rootCA.pemfile from system-wide trust, delete the file and update the configuration:$ sudo rm /etc/pki/ca-trust/source/anchors/rootCA.pem

$ sudo update-ca-trust extract

$ trust list | grep quay

More information can be found in the RHEL 9 documentation in the chapter Using shared system certificates.

Chapter 5. Adding TLS Certificates to the Red Hat Quay Container

To add custom TLS certificates to Red Hat Quay, create a new directory named extra_ca_certs/ beneath the Red Hat Quay config directory. Copy any required site-specific TLS certificates to this new directory.

5.1. Add TLS certificates to Red Hat Quay

View certificate to be added to the container

$ cat storage.crt
-----BEGIN CERTIFICATE-----
MIIDTTCCAjWgAwIBAgIJAMVr9ngjJhzbMA0GCSqGSIb3DQEBCwUAMD0xCzAJBgNV
[...]
-----END CERTIFICATE-----

Create certs directory and copy certificate there

$ mkdir -p quay/config/extra_ca_certs
$ cp storage.crt quay/config/extra_ca_certs/
$ tree quay/config/
├── config.yaml
├── extra_ca_certs
│   ├── storage.crt

Obtain the Quay container’s CONTAINER ID with podman ps:

$ sudo podman ps
CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS
5a3e82c4a75f <registry>/<repo>/quay:v3.11.0 "/sbin/my_init" 24 hours ago Up 18 hours 0.0.0.0:80->80/tcp, 0.0.0.0:443->443/tcp, 443/tcp grave_keller

Restart the container with that ID:

$ sudo podman restart 5a3e82c4a75f

Examine the certificate copied into the container namespace:

$ sudo podman exec -it 5a3e82c4a75f cat /etc/ssl/certs/storage.pem
-----BEGIN CERTIFICATE-----
MIIDTTCCAjWgAwIBAgIJAMVr9ngjJhzbMA0GCSqGSIb3DQEBCwUAMD0xCzAJBgNV
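When several site-specific certificates have to be staged, the directory creation and copy steps can be scripted. This is a minimal sketch, assuming PEM-encoded files. The function name stage_extra_ca_certs is hypothetical, and the demonstration runs in a temporary directory with a placeholder certificate body rather than a real one.

```python
import shutil
import tempfile
from pathlib import Path


def stage_extra_ca_certs(config_dir, cert_files):
    """Copy PEM certificates into <config_dir>/extra_ca_certs and return the new paths."""
    target = Path(config_dir) / "extra_ca_certs"
    target.mkdir(parents=True, exist_ok=True)
    staged = []
    for cert in map(Path, cert_files):
        # Cheap sanity check so that a key or an unrelated file is not copied by mistake.
        if "-----BEGIN CERTIFICATE-----" not in cert.read_text():
            raise ValueError(f"{cert} does not look like a PEM certificate")
        staged.append(Path(shutil.copy2(cert, target / cert.name)))
    return staged


# Demonstration in a throwaway directory; the certificate body is a placeholder.
with tempfile.TemporaryDirectory() as tmp:
    cert = Path(tmp) / "storage.crt"
    cert.write_text("-----BEGIN CERTIFICATE-----\n[...]\n-----END CERTIFICATE-----\n")
    staged = stage_extra_ca_certs(Path(tmp) / "quay" / "config", [cert])
    print([p.name for p in staged])
```

After staging real certificates, restart the Quay container as described above so that the certificates are picked up.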

5.2. Adding custom SSL/TLS certificates when Red Hat Quay is deployed on Kubernetes

When deployed on Kubernetes, Red Hat Quay mounts in a secret as a volume to store config assets. Currently, this breaks the upload certificate function of the superuser panel.

As a temporary workaround, base64 encoded certificates can be added to the secret after Red Hat Quay has been deployed.

Use the following procedure to add custom SSL/TLS certificates when Red Hat Quay is deployed on Kubernetes.

Prerequisites

- Red Hat Quay has been deployed.

- You have a custom ca.crt file.

Procedure

Base64 encode the contents of an SSL/TLS certificate by entering the following command:

$ cat ca.crt | base64 -w 0

Example output

...c1psWGpqeGlPQmNEWkJPMjJ5d0pDemVnR2QNCnRsbW9JdEF4YnFSdVd3PT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

Enter the following kubectl command to edit the quay-enterprise-config-secret file:

$ kubectl --namespace quay-enterprise edit secret/quay-enterprise-config-secret

Add an entry for the certificate and paste the full base64 encoded string under the entry. For example:

custom-cert.crt: c1psWGpqeGlPQmNEWkJPMjJ5d0pDemVnR2QNCnRsbW9JdEF4YnFSdVd3PT0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo=

Use the kubectl delete command to remove all Red Hat Quay pods. For example:

$ kubectl delete pod quay-operator.v3.7.1-6f9d859bd-p5ftc quayregistry-clair-postgres-7487f5bd86-xnxpr quayregistry-quay-app-upgrade-xq2v6 quayregistry-quay-database-859d5445ff-cqthr quayregistry-quay-redis-84f888776f-hhgms

Afterwards, the Red Hat Quay deployment automatically schedules replacement pods with the new certificate data.
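The encoding step of this procedure can also be done without the base64 command-line tool. The sketch below produces the same single-line output as base64 -w 0. The function name encode_cert_for_secret is hypothetical, and the sample input is only the final line of a PEM file, not a real certificate.

```python
import base64


def encode_cert_for_secret(pem_bytes: bytes) -> str:
    """Return the certificate as one unwrapped base64 line, like `base64 -w 0`."""
    return base64.b64encode(pem_bytes).decode("ascii")


# The last line of any PEM certificate encodes to the suffix seen in the example output.
print(encode_cert_for_secret(b"-----END CERTIFICATE-----\n"))
```

In practice you would pass the bytes of the whole file, for example the result of reading ca.crt in binary mode, and paste the returned string into the secret.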

Chapter 6. Configuring action log storage for Elasticsearch and Splunk

By default, usage logs are stored in the Red Hat Quay database and exposed through the web UI on organization and repository levels. Appropriate administrative privileges are required to see log entries. For deployments with a large amount of logged operations, you can store the usage logs in Elasticsearch or Splunk instead of the Red Hat Quay database backend.

6.1. Configuring action log storage for Elasticsearch

To configure action log storage for Elasticsearch, you must provide your own Elasticsearch stack; it is not included with Red Hat Quay as a customizable component.

Enabling Elasticsearch logging can be done during Red Hat Quay deployment or post-deployment by updating your config.yaml file. When configured, usage log access continues to be provided through the web UI for repositories and organizations.

Use the following procedure to configure action log storage for Elasticsearch:

Procedure

- Obtain an Elasticsearch account.

Update your Red Hat Quay config.yaml file to include the following information:

# ...
LOGS_MODEL: elasticsearch # 1
LOGS_MODEL_CONFIG:
    producer: elasticsearch # 2
    elasticsearch_config:
        host: http://<host.elasticsearch.example>:<port> # 3
        port: 9200 # 4
        access_key: <access_key> # 5
        secret_key: <secret_key> # 6
        use_ssl: True # 7
        index_prefix: <logentry> # 8
        aws_region: <us-east-1> # 9
# ...

- 1: The method for handling log data.

- 2: Choose either Elasticsearch or Kinesis. Choosing Kinesis directs logs to an intermediate Kinesis stream on AWS; you then need to set up your own pipeline, for example Logstash, to send logs from Kinesis to Elasticsearch.

- 3: The hostname or IP address of the system providing the Elasticsearch service.

- 4: The port number providing the Elasticsearch service on the host you just entered. Note that the port must be accessible from all systems running the Red Hat Quay registry. The default is TCP port 9200.

- 5: The access key needed to gain access to the Elasticsearch service, if required.

- 6: The secret key needed to gain access to the Elasticsearch service, if required.

- 7: Whether to use SSL/TLS for Elasticsearch. Defaults to True.

- 8: Choose a prefix to attach to log entries.

- 9: If you are running on AWS, set the AWS region (otherwise, leave it blank).

Optional. If you are using Kinesis as your logs producer, you must include the following fields in your config.yaml file:

kinesis_stream_config:
    stream_name: <kinesis_stream_name>
    access_key: <aws_access_key>
    secret_key: <aws_secret_key>
    aws_region: <aws_region>

- Save your config.yaml file and restart your Red Hat Quay deployment.

6.2. Configuring action log storage for Splunk

Splunk is an alternative to Elasticsearch that can provide log analyses for your Red Hat Quay data.

Enabling Splunk logging can be done during Red Hat Quay deployment or post-deployment using the configuration tool. The resulting configuration is stored in the config.yaml file. When configured, usage log access continues to be provided through the Splunk web UI for repositories and organizations.

Use the following procedures to enable Splunk for your Red Hat Quay deployment.

6.2.1. Installing and creating a username for Splunk

Use the following procedure to install and create Splunk credentials.

Procedure

- Create a Splunk account by navigating to Splunk and entering the required credentials.

- Navigate to the Splunk Enterprise Free Trial page, select your platform and installation package, and then click Download Now.

- Install the Splunk software on your machine. When prompted, create a username, for example, splunk_admin, and password.

- After creating a username and password, a localhost URL will be provided for your Splunk deployment, for example, http://<sample_url>.remote.csb:8000/. Open the URL in your preferred browser.

- Log in with the username and password you created during installation. You are directed to the Splunk UI.

6.2.2. Generating a Splunk token

Use one of the following procedures to create a bearer token for Splunk.

6.2.2.1. Generating a Splunk token using the Splunk UI

Use the following procedure to create a bearer token for Splunk using the Splunk UI.

Prerequisites

- You have installed Splunk and created a username.

Procedure

- On the Splunk UI, navigate to Settings → Tokens.

- Click Enable Token Authentication.

- Ensure that Token Authentication is enabled by clicking Token Settings and selecting Token Authentication if necessary.

- Optional: Set the expiration time for your token. This defaults to 30 days.

- Click Save.

- Click New Token.

- Enter information for User and Audience.

- Optional: Set the Expiration and Not Before information.

- Click Create. Your token appears in the Token box. Copy the token immediately.

Important: If you close out of the box before copying the token, you must create a new token. The token in its entirety is not available after closing the New Token window.

6.2.2.2. Generating a Splunk token using the CLI

Use the following procedure to create a bearer token for Splunk using the CLI.

Prerequisites

- You have installed Splunk and created a username.

Procedure

In your CLI, enter the following curl command to enable token authentication, passing in your Splunk username and password:

$ curl -k -u <username>:<password> -X POST <scheme>://<host>:<port>/services/admin/token-auth/tokens_auth -d disabled=false

Create a token by entering the following curl command, passing in your Splunk username and password:

$ curl -k -u <username>:<password> -X POST <scheme>://<host>:<port>/services/authorization/tokens?output_mode=json --data name=<username> --data audience=Users --data-urlencode expires_on=+30d

- Save the generated bearer token.
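The token request uses --data-urlencode for expires_on because a literal + in form data would otherwise be decoded as a space. The sketch below shows the equivalent URL and form body built with the Python standard library. It only constructs the request parts and sends nothing. The function name build_token_request and the example host are hypothetical.

```python
from urllib.parse import urlencode


def build_token_request(scheme, host, port, username, expires_on="+30d"):
    """Return (url, form_body) matching the curl token-creation command."""
    url = f"{scheme}://{host}:{port}/services/authorization/tokens?output_mode=json"
    # urlencode percent-encodes "+" as %2B, which is what --data-urlencode does.
    body = urlencode({"name": username, "audience": "Users", "expires_on": expires_on})
    return url, body


url, body = build_token_request("https", "splunk.example.com", 8089, "splunk_admin")
print(url)
print(body)
```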

6.2.3. Configuring Red Hat Quay to use Splunk

Use the following procedure to configure Red Hat Quay to use Splunk.

Prerequisites

- You have installed Splunk and created a username.

- You have generated a Splunk bearer token.

Procedure

Open your Red Hat Quay config.yaml file and add the following configuration fields:

# ...
LOGS_MODEL: splunk
LOGS_MODEL_CONFIG:
    producer: splunk
    splunk_config:
        host: http://<user_name>.remote.csb # 1
        port: 8089 # 2
        bearer_token: <bearer_token> # 3
        url_scheme: <http/https> # 4
        verify_ssl: False # 5
        index_prefix: <splunk_log_index_name> # 6
        ssl_ca_path: <location_to_ssl-ca-cert.pem> # 7
# ...

- 1: String. The Splunk cluster endpoint.

- 2: Integer. The Splunk management cluster endpoint port. Differs from the Splunk GUI hosted port. Can be found on the Splunk UI under Settings → Server Settings → General Settings.

- 3: String. The generated bearer token for Splunk.

- 4: String. The URL scheme for accessing the Splunk service. If Splunk is configured to use TLS/SSL, this must be https.

- 5: Boolean. Whether to enable TLS/SSL. Defaults to true.

- 6: String. The Splunk index prefix. Can be a new, or used, index. Can be created from the Splunk UI.

- 7: String. The relative container path to a single .pem file containing a certificate authority (CA) for TLS/SSL validation.

If you are configuring ssl_ca_path, you must configure the SSL/TLS certificate so that Red Hat Quay will trust it.

- If you are using a standalone deployment of Red Hat Quay, SSL/TLS certificates can be provided by placing the certificate file inside of the extra_ca_certs directory, or inside of the relative container path and specified by ssl_ca_path.

- If you are using the Red Hat Quay Operator, create a config bundle secret, including the certificate authority (CA) of the Splunk server. For example:

$ oc create secret generic --from-file config.yaml=./config_390.yaml --from-file extra_ca_cert_splunkserver.crt=./splunkserver.crt config-bundle-secret

Specify the conf/stack/extra_ca_certs/splunkserver.crt file in your config.yaml. For example:

# ...
LOGS_MODEL: splunk
LOGS_MODEL_CONFIG:
    producer: splunk
    splunk_config:
        host: ec2-12-345-67-891.us-east-2.compute.amazonaws.com
        port: 8089
        bearer_token: eyJra
        url_scheme: https
        verify_ssl: true
        index_prefix: quay123456
        ssl_ca_path: conf/stack/splunkserver.crt
# ...

6.2.4. Creating an action log

Use the following procedure to create a user account that can forward action logs to Splunk.

You must use the Splunk UI to view Red Hat Quay action logs. At this time, viewing Splunk action logs on the Red Hat Quay Usage Logs page is unsupported, and returns the following message: Method not implemented. Splunk does not support log lookups.

Prerequisites

- You have installed Splunk and created a username.

- You have generated a Splunk bearer token.

- You have configured your Red Hat Quay config.yaml file to enable Splunk.

Procedure

- Log in to your Red Hat Quay deployment.

- Click on the name of the organization that you will use to create an action log for Splunk.

- In the navigation pane, click Robot Accounts → Create Robot Account.

- When prompted, enter a name for the robot account, for example splunkrobotaccount, then click Create robot account.

- On your browser, open the Splunk UI.

- Click Search and Reporting.

In the search bar, enter the name of your index, for example, <splunk_log_index_name> and press Enter.

The search results populate on the Splunk UI. Logs are forwarded in JSON format. A response might look similar to the following:

{
  "log_data": {
    "kind": "authentication",
    "account": "quayuser123",
    "performer": "John Doe",
    "repository": "projectQuay",
    "ip": "192.168.1.100",
    "metadata_json": {...},
    "datetime": "2024-02-06T12:30:45Z"
  }
}
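Because the logs are forwarded as JSON, they are straightforward to post-process after export from Splunk. The following sketch turns one event of the shape shown above into a one-line summary. The function name summarize_event is hypothetical, the field names are taken from the sample response, and the elided metadata_json value is replaced by an empty object so that the sample parses.

```python
import json
from datetime import datetime


def summarize_event(raw: str) -> str:
    """Return a one-line summary of a forwarded action log event."""
    data = json.loads(raw)["log_data"]
    # The sample timestamp is UTC in ISO 8601 form with a trailing "Z".
    when = datetime.strptime(data["datetime"], "%Y-%m-%dT%H:%M:%SZ")
    return f'{when:%Y-%m-%d %H:%M} {data["kind"]} by {data["account"]} from {data["ip"]}'


sample = """
{ "log_data": { "kind": "authentication", "account": "quayuser123",
  "performer": "John Doe", "repository": "projectQuay", "ip": "192.168.1.100",
  "metadata_json": {}, "datetime": "2024-02-06T12:30:45Z" } }
"""
print(summarize_event(sample))
```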

Chapter 7. Clair security scanner

7.1. Clair vulnerability databases

Clair uses the following vulnerability databases to report issues in your images:

- Ubuntu Oval database

- Debian Security Tracker

- Red Hat Enterprise Linux (RHEL) Oval database

- SUSE Oval database

- Oracle Oval database

- Alpine SecDB database

- VMware Photon OS database

- Amazon Web Services (AWS) UpdateInfo

- Open Source Vulnerability (OSV) Database

For information about how Clair does security mapping with the different databases, see Claircore Severity Mapping.

7.1.1. Information about Open Source Vulnerability (OSV) database for Clair

Open Source Vulnerability (OSV) is a vulnerability database and monitoring service that focuses on tracking and managing security vulnerabilities in open source software.

OSV provides a comprehensive and up-to-date database of known security vulnerabilities in open source projects. It covers a wide range of open source software, including libraries, frameworks, and other components that are used in software development. For a full list of included ecosystems, see defined ecosystems.

Clair also reports vulnerability and security information for golang, java, and ruby ecosystems through the Open Source Vulnerability (OSV) database.

By leveraging OSV, developers and organizations can proactively monitor and address security vulnerabilities in open source components that they use, which helps to reduce the risk of security breaches and data compromises in projects.

For more information about OSV, see the OSV website.

7.2. Setting up Clair on standalone Red Hat Quay deployments

For standalone Red Hat Quay deployments, you can set up Clair manually.

Procedure

In your Red Hat Quay installation directory, create a new directory for the Clair database data:

$ mkdir /home/<user-name>/quay-poc/postgres-clairv4

Set the appropriate permissions for the postgres-clairv4 directory by entering the following command:

$ setfacl -m u:26:-wx /home/<user-name>/quay-poc/postgres-clairv4

Deploy a Clair Postgres database by entering the following command:

$ sudo podman run -d --name postgresql-clairv4 \
  -e POSTGRESQL_USER=clairuser \
  -e POSTGRESQL_PASSWORD=clairpass \
  -e POSTGRESQL_DATABASE=clair \
  -e POSTGRESQL_ADMIN_PASSWORD=adminpass \
  -p 5433:5432 \
  -v /home/<user-name>/quay-poc/postgres-clairv4:/var/lib/pgsql/data:Z \
  registry.redhat.io/rhel8/postgresql-13:1-109

Install the Postgres uuid-ossp module for your Clair deployment:

$ sudo podman exec -it postgresql-clairv4 /bin/bash -c 'echo "CREATE EXTENSION IF NOT EXISTS \"uuid-ossp\"" | psql -d clair -U postgres'

Example output

CREATE EXTENSION

NoteClair requires the

uuid-osspextension to be added to its Postgres database. For users with proper privileges, creating the extension will automatically be added by Clair. If users do not have the proper privileges, the extension must be added before start Clair.If the extension is not present, the following error will be displayed when Clair attempts to start:

ERROR: Please load the "uuid-ossp" extension. (SQLSTATE 42501).Stop the

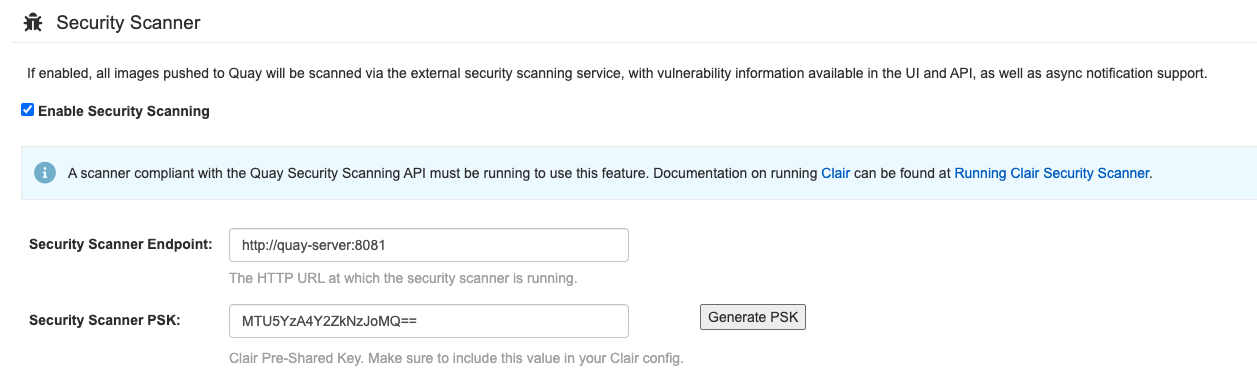

Quaycontainer if it is running and restart it in configuration mode, loading the existing configuration as a volume:$ sudo podman run --rm -it --name quay_config \ -p 80:8080 -p 443:8443 \ -v $QUAY/config:/conf/stack:Z \ {productrepo}/{quayimage}:{productminv} config secret- Log in to the configuration tool and click Enable Security Scanning in the Security Scanner section of the UI.

- Set the HTTP endpoint for Clair using a port that is not already in use on the quay-server system, for example, 8081. Create a pre-shared key (PSK) using the Generate PSK button.

Security Scanner UI

- Validate and download the config.yaml file for Red Hat Quay, and then stop the Quay container that is running the configuration editor.

Extract the new configuration bundle into your Red Hat Quay installation directory, for example:

$ tar xvf quay-config.tar.gz -C /home/<user-name>/quay-poc/

Create a folder for your Clair configuration file, for example:

$ mkdir -p /etc/opt/clairv4/config/

Change into the Clair configuration folder:

$ cd /etc/opt/clairv4/config/

Create a Clair configuration file, for example:

    http_listen_addr: :8081
    introspection_addr: :8088
    log_level: debug
    indexer:
      connstring: host=quay-server.example.com port=5433 dbname=clair user=clairuser password=clairpass sslmode=disable
      scanlock_retry: 10
      layer_scan_concurrency: 5
      migrations: true
    matcher:
      connstring: host=quay-server.example.com port=5433 dbname=clair user=clairuser password=clairpass sslmode=disable
      max_conn_pool: 100
      migrations: true
      indexer_addr: clair-indexer
    notifier:
      connstring: host=quay-server.example.com port=5433 dbname=clair user=clairuser password=clairpass sslmode=disable
      delivery_interval: 1m
      poll_interval: 5m
      migrations: true
    auth:
      psk:
        key: "MTU5YzA4Y2ZkNzJoMQ=="
        iss: ["quay"]
    # tracing and metrics
    trace:
      name: "jaeger"
      probability: 1
      jaeger:
        agent:
          endpoint: "localhost:6831"
        service_name: "clair"
    metrics:
      name: "prometheus"

For more information about Clair’s configuration format, see Clair configuration reference.
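The three connstring values in the example configuration must all point at the Clair database published by the Postgres container. As a minimal sketch, the following Python snippet splits such a string into its fields so that the host, port, and database name can be compared against the podman command used earlier. The helper name parse_connstring is hypothetical, and the parsing assumes simple space-separated key=value pairs with no quoted values.

```python
def parse_connstring(connstring):
    """Split a space-separated key=value connection string into a dict.

    Assumes no quoted values or embedded spaces, which holds for the
    example configuration but not for every valid connection string.
    """
    return dict(pair.split("=", 1) for pair in connstring.split())


# Connection string copied from the example Clair configuration.
example = (
    "host=quay-server.example.com port=5433 dbname=clair "
    "user=clairuser password=clairpass sslmode=disable"
)
fields = parse_connstring(example)
print(fields["host"], fields["port"], fields["dbname"])
```

A check like this can catch a mismatch between the port in the Clair configuration and the host port published for the database container before Clair is started.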

Start Clair by using the container image, mounting in the configuration from the file you created:

    $ sudo podman run -d --name clairv4 \
      -p 8081:8081 -p 8088:8088 \
      -e CLAIR_CONF=/clair/config.yaml \
      -e CLAIR_MODE=combo \
      -v /etc/opt/clairv4/config:/clair:Z \
      registry.redhat.io/quay/clair-rhel8:v3.11.0

Note

Running multiple Clair containers is also possible, but for deployment scenarios beyond a single container, the use of a container orchestrator like Kubernetes or OpenShift Container Platform is strongly recommended.

7.3. Clair on OpenShift Container Platform

To set up Clair v4 (Clair) on a Red Hat Quay deployment on OpenShift Container Platform, it is recommended to use the Red Hat Quay Operator. By default, the Red Hat Quay Operator installs or upgrades a Clair deployment along with your Red Hat Quay deployment and configures Clair automatically.

7.4. Testing Clair

Use the following procedure to test Clair on either a standalone Red Hat Quay deployment, or on an OpenShift Container Platform Operator-based deployment.

Prerequisites

- You have deployed the Clair container image.

Procedure

Pull a sample image by entering the following command:

$ sudo podman pull ubuntu:20.04

Tag the image to your registry by entering the following command:

$ sudo podman tag docker.io/library/ubuntu:20.04 <quay-server.example.com>/<user-name>/ubuntu:20.04

Push the image to your Red Hat Quay registry by entering the following command:

$ sudo podman push --tls-verify=false quay-server.example.com/quayadmin/ubuntu:20.04

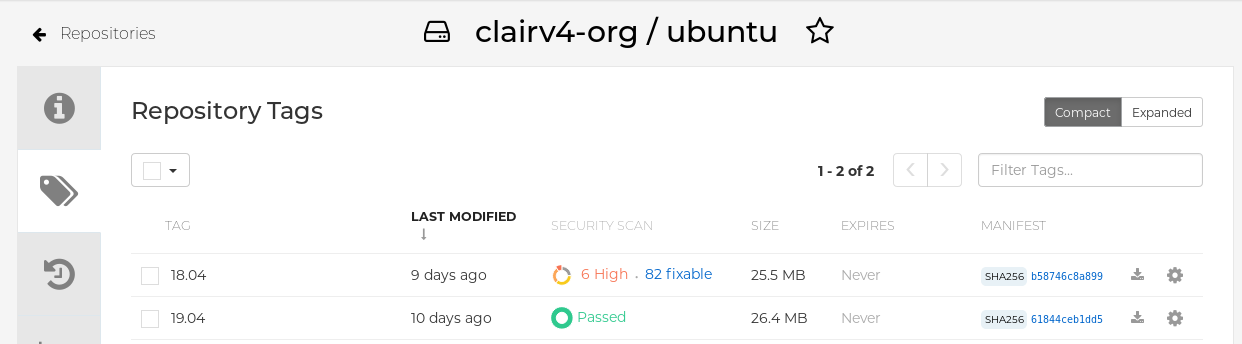

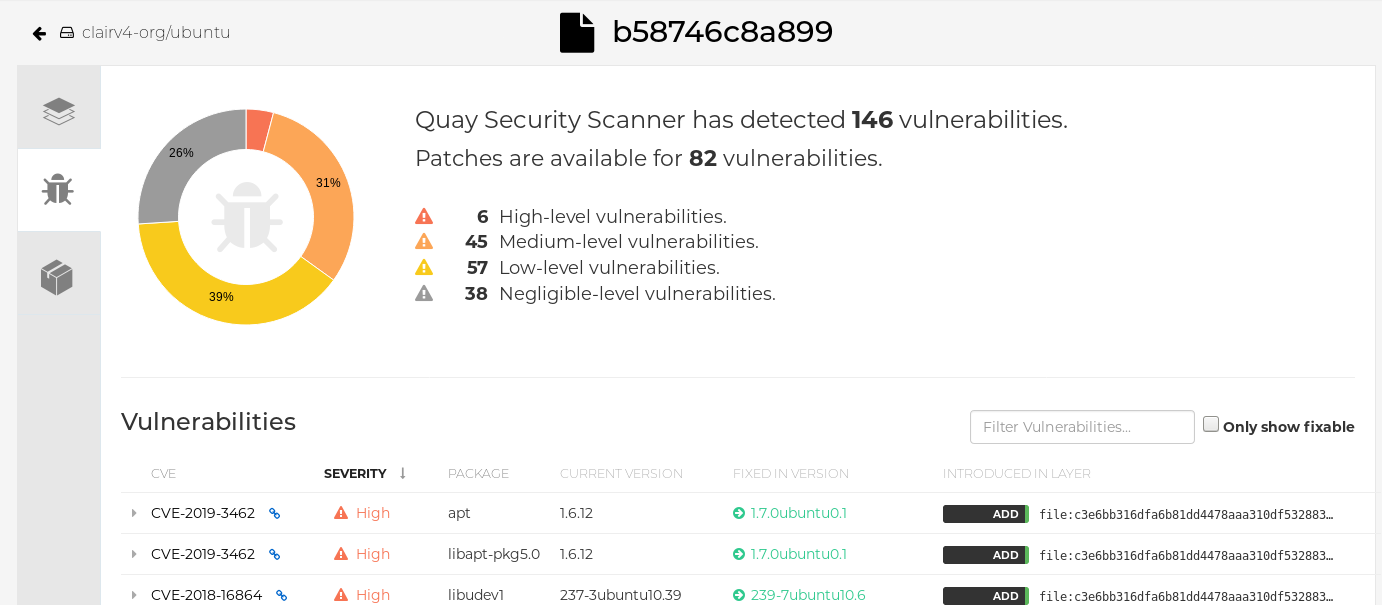

- Log in to your Red Hat Quay deployment through the UI.

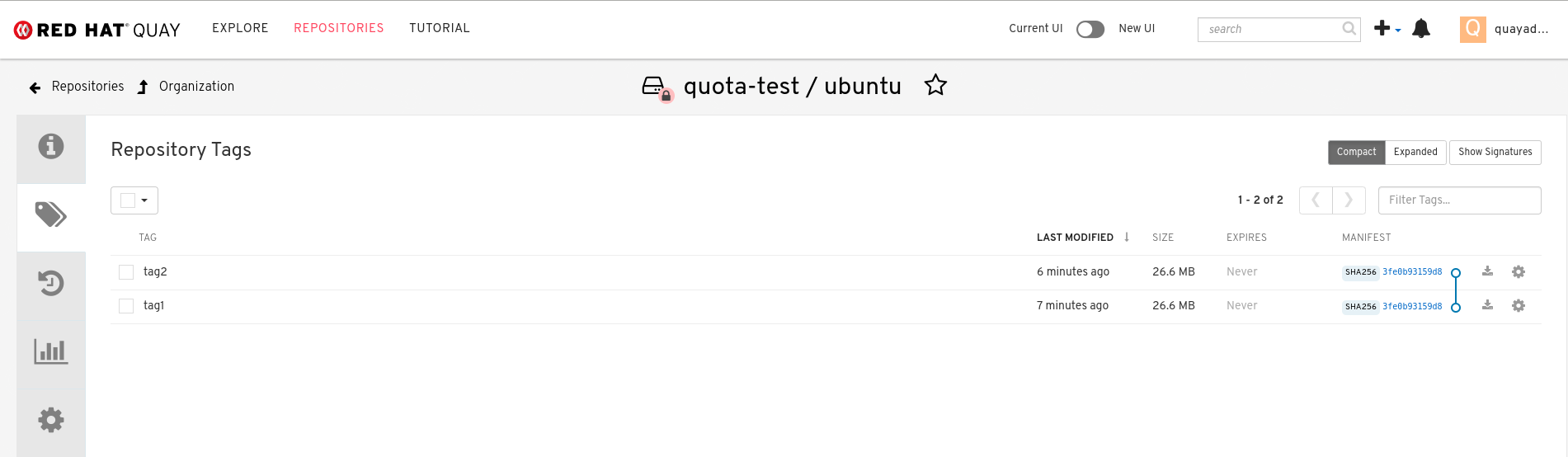

- Click the repository name, for example, quayadmin/ubuntu.

In the navigation pane, click Tags.

Report summary

Click the image report, for example, 45 medium, to show a more detailed report:

Report details

Note

In some cases, Clair shows duplicate reports on images, for example, ubi8/nodejs-12 or ubi8/nodejs-16. This occurs because vulnerabilities with the same name apply to different packages. This behavior is expected with Clair vulnerability reporting and will not be addressed as a bug.

Chapter 8. Repository mirroring

8.1. Repository mirroring

Red Hat Quay repository mirroring lets you mirror images from external container registries, or another local registry, into your Red Hat Quay cluster. Using repository mirroring, you can synchronize images to Red Hat Quay based on repository names and tags.

From your Red Hat Quay cluster with repository mirroring enabled, you can perform the following:

- Choose a repository from an external registry to mirror

- Add credentials to access the external registry

- Identify specific container image repository names and tags to sync

- Set intervals at which a repository is synced

- Check the current state of synchronization

To use the mirroring functionality, you need to perform the following actions:

- Enable repository mirroring in the Red Hat Quay configuration file

- Run a repository mirroring worker

- Create mirrored repositories

All repository mirroring configuration can be performed by using the configuration tool UI or the Red Hat Quay API.

8.2. Repository mirroring compared to geo-replication

Red Hat Quay geo-replication mirrors the entire image storage backend data between two or more different storage backends while the database is shared, for example, one Red Hat Quay registry with two different blob storage endpoints. The primary use cases for geo-replication include the following:

- Speeding up access to the binary blobs for geographically dispersed setups

- Guaranteeing that the image content is the same across regions

Repository mirroring synchronizes selected repositories, or subsets of repositories, from one registry to another. The registries are distinct, with each registry having a separate database and separate image storage.

The primary use cases for mirroring include the following:

- Independent registry deployments in different data centers or regions, where a certain subset of the overall content is supposed to be shared across the data centers and regions

- Automatic synchronization or mirroring of selected (allowlisted) upstream repositories from external registries into a local Red Hat Quay deployment

Repository mirroring and geo-replication can be used simultaneously.

Table 8.1. Red Hat Quay Repository mirroring and geo-replication comparison

| Feature / Capability | Geo-replication | Repository mirroring |
|---|---|---|
| What is the feature designed to do? | A shared, global registry | Distinct, different registries |
| What happens if replication or mirroring has not been completed yet? | The remote copy is used (slower) | No image is served |
| Is access to all storage backends in both regions required? | Yes (all Red Hat Quay nodes) | No (distinct storage) |
| Can users push images from both sites to the same repository? | Yes | No |
| Is all registry content and configuration identical across all regions (shared database)? | Yes | No |
| Can users select individual namespaces or repositories to be mirrored? | No | Yes |
| Can users apply filters to synchronization rules? | No | Yes |
| Are individual / different role-based access control configurations allowed in each region? | No | Yes |

8.3. Using repository mirroring

The following list shows features and limitations of Red Hat Quay repository mirroring:

- With repository mirroring, you can mirror an entire repository or selectively limit which images are synced. Filters can be based on a comma-separated list of tags, a range of tags, or other means of identifying tags through Unix shell-style wildcards. For more information, see the documentation for wildcards.

- When a repository is set as mirrored, you cannot manually add other images to that repository.

- Because the mirrored repository is based on the repository and tags you set, it will hold only the content represented by the repository and tag pair. For example, if you change the tag so that some images in the repository no longer match, those images will be deleted.

- Only the designated robot can push images to a mirrored repository, superseding any role-based access control permissions set on the repository.

- Mirroring can be configured to roll back on failure, or to run on a best-effort basis.

- With a mirrored repository, a user with read permissions can pull images from the repository but cannot push images to the repository.

- Changing settings on your mirrored repository can be performed in the Red Hat Quay user interface, using the Repositories → Mirrors tab for the mirrored repository you create.

- Images are synced at set intervals, but can also be synced on demand.

8.4. Mirroring configuration UI

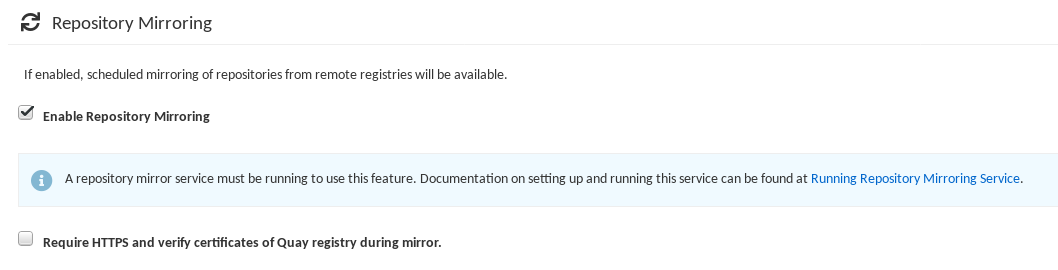

- Start the Quay container in configuration mode and select the Enable Repository Mirroring check box. If you want to require HTTPS communications and verify certificates during mirroring, select the HTTPS and cert verification check box.

- Validate and download the configuration file, and then restart Quay in registry mode using the updated config file.

8.5. Mirroring configuration fields

Table 8.2. Mirroring configuration

| Field | Type | Description |
|---|---|---|
| FEATURE_REPO_MIRROR | Boolean | Enable or disable repository mirroring |
| REPO_MIRROR_INTERVAL | Number | The number of seconds between checking for repository mirror candidates |
| REPO_MIRROR_SERVER_HOSTNAME | String | Replaces the SERVER_HOSTNAME as the destination for mirroring |
| REPO_MIRROR_TLS_VERIFY | Boolean | Require HTTPS and verify certificates of Quay registry during mirror |
| REPO_MIRROR_ROLLBACK | Boolean | When set to true, the repository rolls back after a failed mirror attempt. Default: false |
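Before restarting Red Hat Quay with new mirroring settings, it can help to confirm that each value has the type listed in Table 8.2. The following Python snippet is a minimal sketch of such a check against an already-parsed configuration dictionary. The names MIRROR_FIELD_TYPES and check_mirror_config are hypothetical and not part of Red Hat Quay, and the sample values are illustrative only.

```python
# Expected Python types for the fields listed in Table 8.2.
MIRROR_FIELD_TYPES = {
    "FEATURE_REPO_MIRROR": bool,
    "REPO_MIRROR_INTERVAL": (int, float),
    "REPO_MIRROR_SERVER_HOSTNAME": str,
    "REPO_MIRROR_TLS_VERIFY": bool,
    "REPO_MIRROR_ROLLBACK": bool,
}


def check_mirror_config(config):
    """Return a list of type problems found in the mirroring fields."""
    problems = []
    for field, expected in MIRROR_FIELD_TYPES.items():
        if field not in config:
            continue  # unset fields are skipped, not reported
        value = config[field]
        # bool is a subclass of int in Python, so reject it explicitly
        # for fields that are not Boolean.
        if expected is not bool and isinstance(value, bool):
            problems.append(f"{field}: unexpected type bool")
        elif not isinstance(value, expected):
            problems.append(f"{field}: unexpected type {type(value).__name__}")
    return problems


# Illustrative values only; they are not recommended settings.
sample = {"FEATURE_REPO_MIRROR": True, "REPO_MIRROR_INTERVAL": "30"}
print(check_mirror_config(sample))
```

In this sample, the interval is quoted and therefore parsed as a string, which the check reports.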

8.6. Mirroring worker

Use the following procedure to start the repository mirroring worker.

Procedure

If you have not configured TLS communications using a /root/ca.crt certificate, enter the following command to start a Quay pod with the repomirror option:

    $ sudo podman run -d --name mirroring-worker \
      -v $QUAY/config:/conf/stack:Z \
      registry.redhat.io/quay/quay-rhel8:v3.11.0 repomirror

If you have configured TLS communications using a /root/ca.crt certificate, enter the following command to start the repository mirroring worker:

    $ sudo podman run -d --name mirroring-worker \
      -v $QUAY/config:/conf/stack:Z \
      -v /root/ca.crt:/etc/pki/ca-trust/source/anchors/ca.crt:Z \
      registry.redhat.io/quay/quay-rhel8:v3.11.0 repomirror

8.7. Creating a mirrored repository

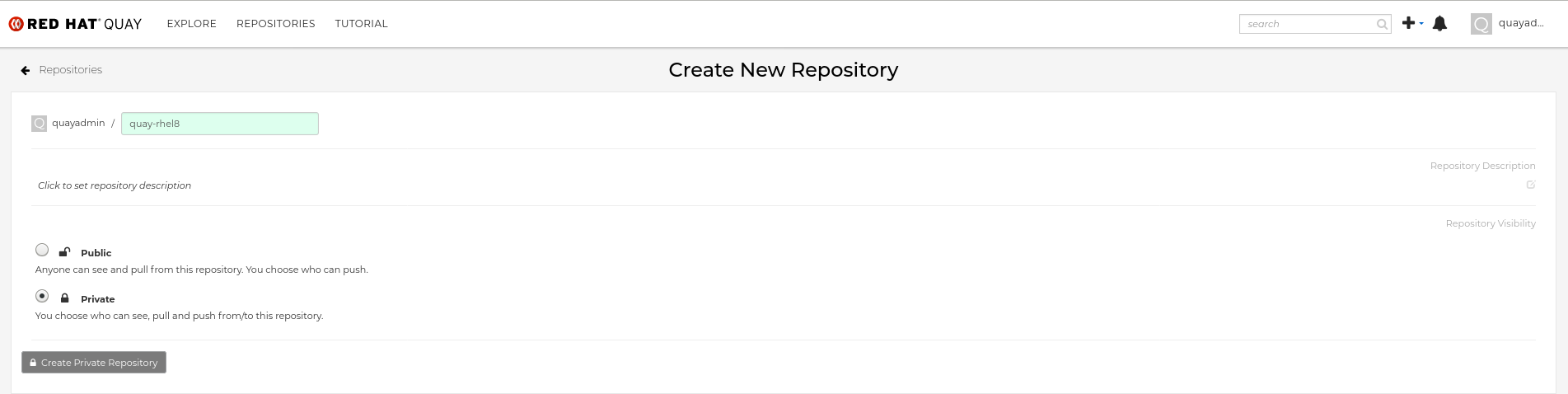

When mirroring a repository from an external container registry, you must create a new private repository. Typically, the same name is used as the target repository, for example, quay-rhel8.

8.7.1. Repository mirroring settings

Use the following procedure to adjust the settings of your mirrored repository.

Prerequisites

- You have enabled repository mirroring in your Red Hat Quay configuration file.

- You have deployed a mirroring worker.

Procedure

In the Settings tab, set the Repository State to Mirror:

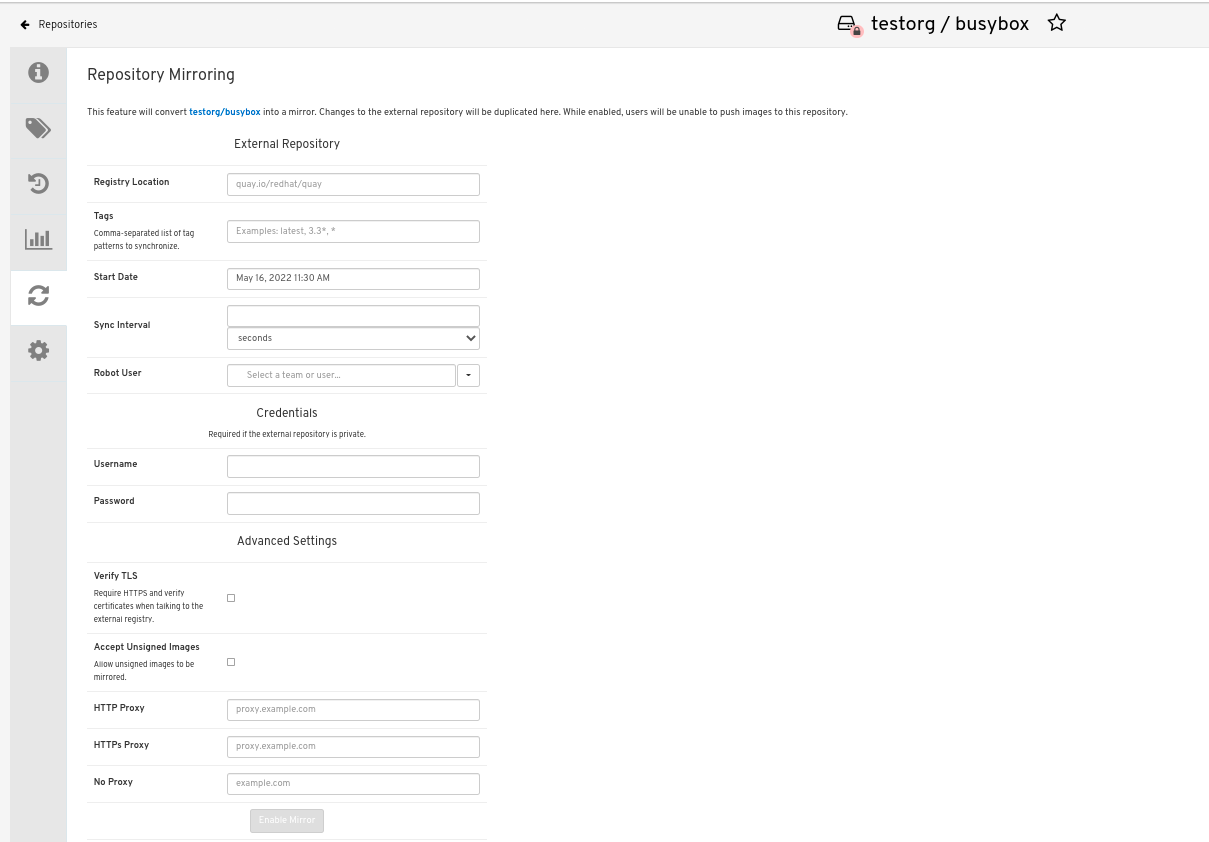

In the Mirror tab, enter the details for connecting to the external registry, along with the tags, scheduling and access information:

Enter the details as required in the following fields:

- Registry Location: The external repository you want to mirror, for example, registry.redhat.io/quay/quay-rhel8

- Tags: This field is required. You may enter a comma-separated list of individual tags or tag patterns. (See Tag Patterns section for details.)

- Start Date: The date on which mirroring begins. The current date and time is used by default.

- Sync Interval: Defaults to syncing every 24 hours. You can change that based on hours or days.

- Robot User: Create a new robot account or choose an existing robot account to do the mirroring.

- Username: The username for accessing the external registry holding the repository you are mirroring.

- Password: The password associated with the Username. Note that the password cannot include characters that require an escape character (\).


8.7.2. Advanced settings

In the Advanced Settings section, you can configure SSL/TLS and proxy with the following options:

- Verify TLS: Select this option if you want to require HTTPS and to verify certificates when communicating with the target remote registry.

- Accept Unsigned Images: Selecting this option allows unsigned images to be mirrored.

- HTTP Proxy: Identify the HTTP proxy server needed to access the remote site, if a proxy server is needed.

- HTTPS Proxy: Identify the HTTPS proxy server needed to access the remote site, if a proxy server is needed.

- No Proxy: List of locations that do not require proxy.

8.7.3. Synchronize now

Use the following procedure to initiate the mirroring operation.

Procedure

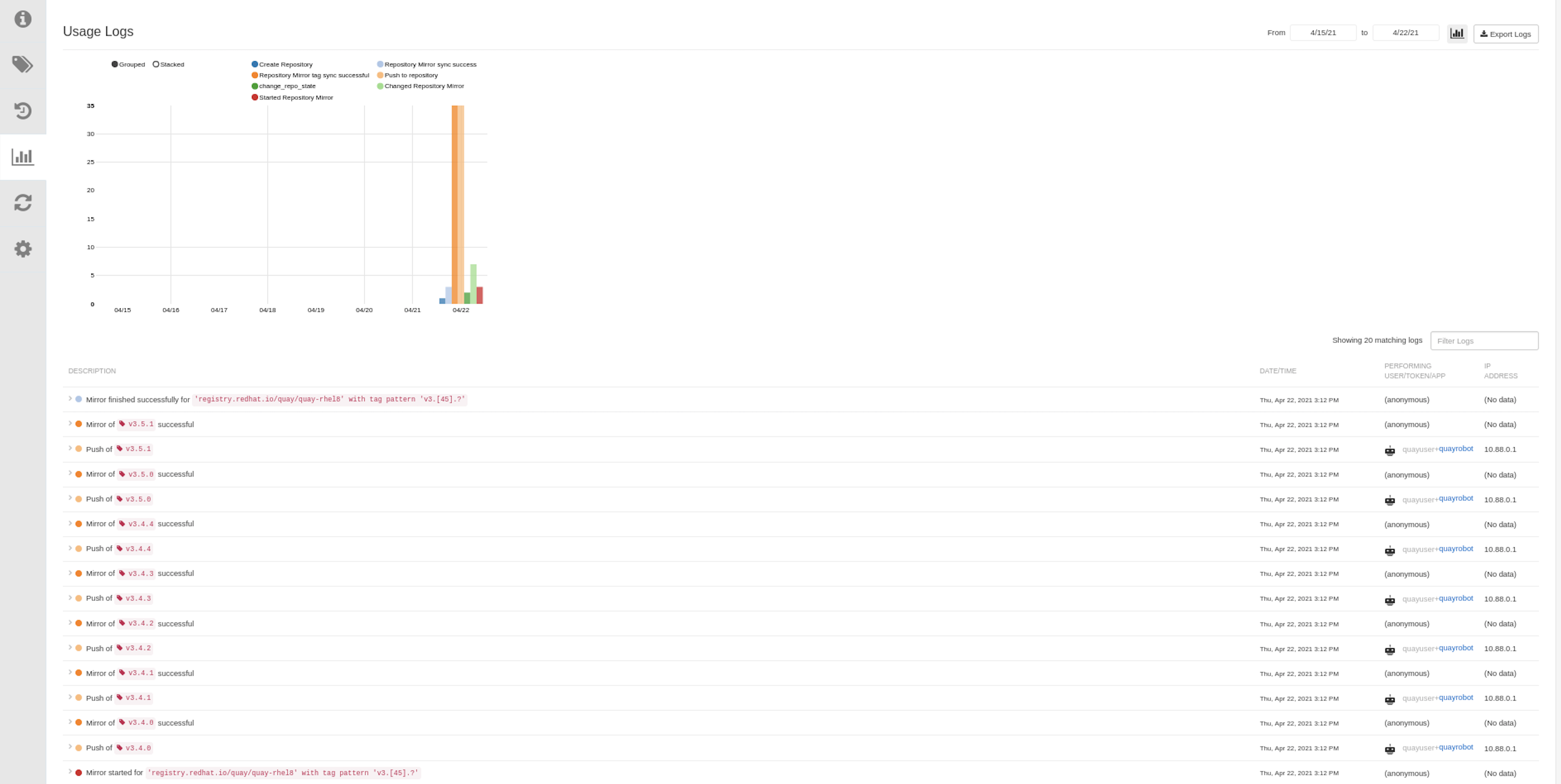

To perform an immediate mirroring operation, press the Sync Now button on the repository’s Mirroring tab. The logs are available on the Usage Logs tab:

When the mirroring is complete, the images will appear in the Tags tab:

Below is an example of a completed Repository Mirroring screen:

8.8. Event notifications for mirroring

There are three notification events for repository mirroring:

- Repository Mirror Started

- Repository Mirror Success

- Repository Mirror Unsuccessful

The events can be configured inside of the Settings tab for each repository, and all existing notification methods such as email, Slack, Quay UI, and webhooks are supported.

8.9. Mirroring tag patterns

At least one tag must be entered. The following table references possible image tag patterns.

8.9.1. Pattern syntax

| Pattern | Description |
|---|---|
| * | Matches all characters |
| ? | Matches any single character |
| [seq] | Matches any character in seq |
| [!seq] | Matches any character not in seq |

8.9.2. Example tag patterns

| Example Pattern | Example Matches |
|---|---|
| v3* | v32, v3.1, v3.2, v3.2-4beta, v3.3 |
| v3.* | v3.1, v3.2, v3.2-4beta |
| v3.? | v3.1, v3.2, v3.3 |
| v3.[12] | v3.1, v3.2 |
| v3.[12]* | v3.1, v3.2, v3.2-4beta |
| v3.[!1]* | v3.2, v3.2-4beta, v3.3 |
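The pattern syntax above is the Unix shell-style wildcard syntax, which Python's standard fnmatch module also implements. As a rough way to preview which tags a pattern would select before saving a mirror configuration, the following sketch applies a few of the example patterns to the example tags. It assumes that Red Hat Quay's matching behaves the same way as fnmatch for these patterns, and the helper name matching_tags is hypothetical.

```python
from fnmatch import fnmatchcase

# Tags taken from the example matches in the table above.
tags = ["v32", "v3.1", "v3.2", "v3.2-4beta", "v3.3"]


def matching_tags(pattern, candidates):
    """Return the candidates that match a shell-style wildcard pattern."""
    return [tag for tag in candidates if fnmatchcase(tag, pattern)]


for pattern in ["v3*", "v3.?", "v3.[12]", "v3.[!1]*"]:
    print(pattern, "->", matching_tags(pattern, tags))
```

Because a comma-separated list of patterns is accepted, the same helper could be called once per pattern and the results combined.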

8.10. Working with mirrored repositories

Once you have created a mirrored repository, there are several ways you can work with that repository. Select your mirrored repository from the Repositories page and do any of the following:

- Enable/disable the repository: Select the Mirroring button in the left column, then toggle the Enabled check box to enable or disable the repository temporarily.

- Check mirror logs: To make sure the mirrored repository is working properly, you can check the mirror logs. To do that, select the Usage Logs button in the left column.

- Sync mirror now: To immediately sync the images in your repository, select the Sync Now button.

- Change credentials: To change the username and password, select DELETE from the Credentials line. Then select None and add the username and password needed to log into the external registry when prompted.

- Cancel mirroring: To stop mirroring, which keeps the current images available but stops new ones from being synced, select the CANCEL button.

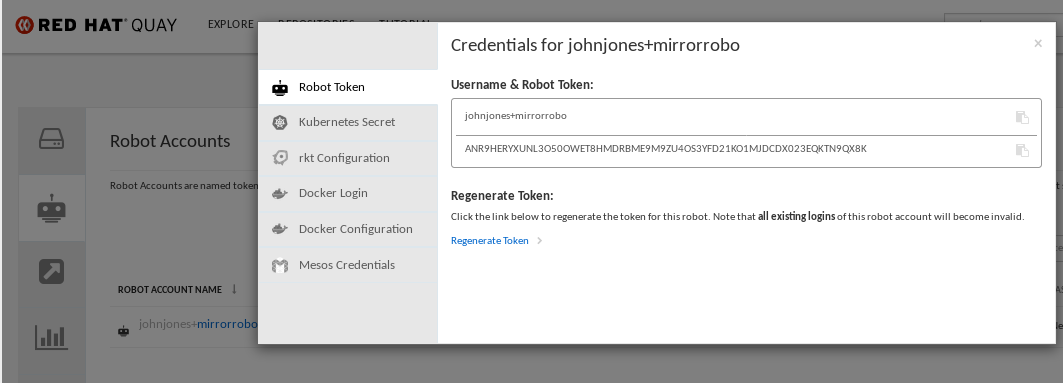

- Set robot permissions: Red Hat Quay robot accounts are named tokens that hold credentials for accessing external repositories. By assigning credentials to a robot, that robot can be used across multiple mirrored repositories that need to access the same external registry.

You can assign an existing robot to a repository by going to Account Settings, then selecting the Robot Accounts icon in the left column. For the robot account, choose the link under the REPOSITORIES column. From the pop-up window, you can:

- Check which repositories are assigned to that robot.

-

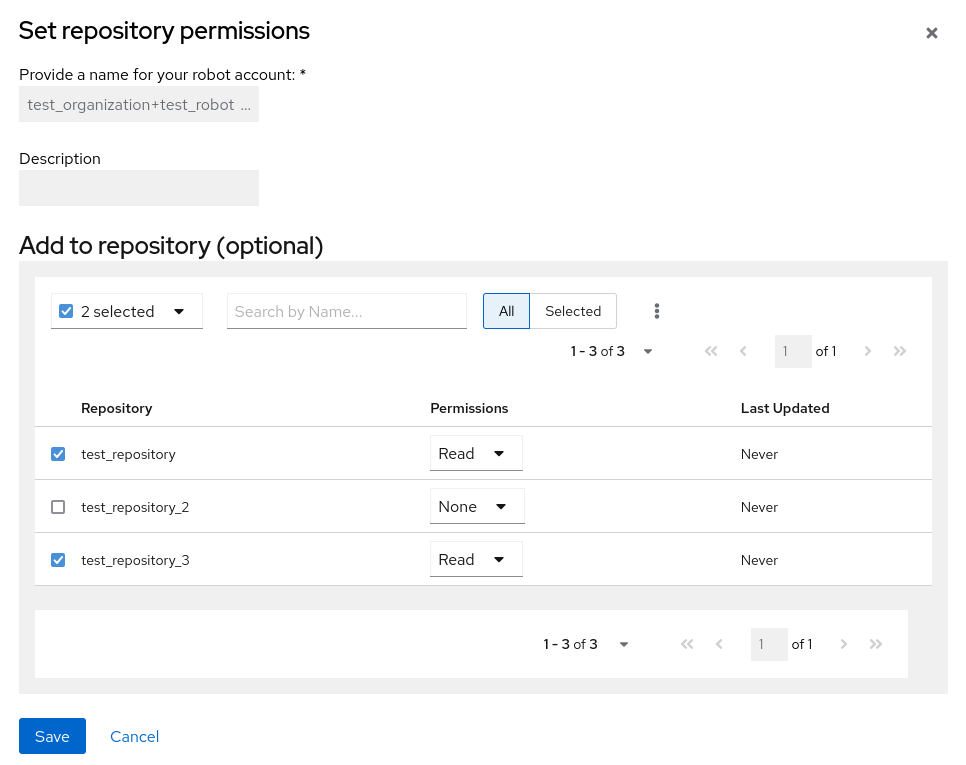

Assign read, write or Admin privileges to that robot from the PERMISSION field shown in this figure:

- Change robot credentials: Robots can hold credentials such as Kubernetes secrets, Docker login information, and Mesos bundles. To change robot credentials, select the Options gear on the robot’s account line on the Robot Accounts window and choose View Credentials. Add the appropriate credentials for the external repository the robot needs to access.

- Check and change general settings: Select the Settings button (gear icon) from the left column on the mirrored repository page. On the resulting page, you can change settings associated with the mirrored repository. In particular, you can change User and Robot Permissions to specify exactly which users and robots can read from or write to the repository.

8.11. Repository mirroring recommendations

Best practices for repository mirroring include the following:

- Repository mirroring pods can run on any node. This means that you can run mirroring on nodes where Red Hat Quay is already running.

- Repository mirroring is scheduled in the database and runs in batches. As a result, repository workers check each repository mirror configuration file and read when the next sync needs to occur. More mirror workers means more repositories can be mirrored at the same time. For example, running 10 mirror workers means that a user can run 10 mirroring operations in parallel. If a user only has 2 workers with 10 mirror configurations, only 2 operations can be performed at a time.

The optimal number of mirroring pods depends on the following conditions:

- The total number of repositories to be mirrored

- The number of images and tags in the repositories and the frequency of changes

Parallel batching

For example, if a user is mirroring a repository that has 100 tags, the mirror will be completed by one worker. Users must consider how many repositories they want to mirror in parallel, and base the number of workers on that.

Multiple tags in the same repository cannot be mirrored in parallel.
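The sizing guidance above can be turned into a quick estimate. The following Python sketch uses a deliberately simplified model, which is an assumption rather than a description of the actual scheduler: each worker handles one repository at a time, and every repository occupies one round regardless of how many tags it has. The function name mirror_rounds is hypothetical.

```python
import math


def mirror_rounds(mirror_configs, workers):
    """Estimate parallelism and the number of sequential rounds.

    Simplified model: one repository per worker at a time, and every
    repository takes exactly one round.
    """
    if workers < 1:
        raise ValueError("at least one mirror worker is required")
    in_parallel = min(mirror_configs, workers)
    rounds = math.ceil(mirror_configs / workers)
    return in_parallel, rounds


print(mirror_rounds(10, 10))  # (10, 1)
print(mirror_rounds(10, 2))   # (2, 5)
```

Under this model, 10 mirror configurations with 2 workers run 2 at a time and need 5 rounds, which matches the example given in the recommendations.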

Chapter 9. IPv6 and dual-stack deployments

Your standalone Red Hat Quay deployment can now be served in locations that only support IPv6, such as Telco and Edge environments. Support is also offered for dual-stack networking so your Red Hat Quay deployment can listen on IPv4 and IPv6 simultaneously.

For a list of known limitations, see IPv6 limitations.

9.1. Enabling the IPv6 protocol family

Use the following procedure to enable IPv6 support on your standalone Red Hat Quay deployment.

Prerequisites

- You have updated Red Hat Quay to 3.8.

- Your host and container software platform (Docker, Podman) must be configured to support IPv6.

Procedure

In your deployment’s config.yaml file, add the FEATURE_LISTEN_IP_VERSION parameter and set it to IPv6, for example:

    ---
    FEATURE_GOOGLE_LOGIN: false
    FEATURE_INVITE_ONLY_USER_CREATION: false
    FEATURE_LISTEN_IP_VERSION: IPv6
    FEATURE_MAILING: false
    FEATURE_NONSUPERUSER_TEAM_SYNCING_SETUP: false
    ---

- Start, or restart, your Red Hat Quay deployment.

Check that your deployment is listening to IPv6 by entering the following command:

$ curl <quay_endpoint>/health/instance

Example output

{"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

After enabling IPv6 in your deployment’s config.yaml, all Red Hat Quay features can be used as normal, so long as your environment is configured to use IPv6 and is not hindered by the current limitations.

If your environment is configured to IPv4, but the FEATURE_LISTEN_IP_VERSION configuration field is set to IPv6, Red Hat Quay will fail to deploy.
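The health response shown in the procedure above can also be checked programmatically rather than by eye. The following Python sketch parses the example payload and lists any service that is reported as unhealthy. The function name failing_services is hypothetical, and the sketch assumes the response keeps the data.services structure shown in the example output.

```python
import json

# Response body copied from the example output in the procedure above.
body = (
    '{"data":{"services":{"auth":true,"database":true,"disk_space":true,'
    '"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},'
    '"status_code":200}'
)


def failing_services(response_body):
    """Return the names of services that the health payload reports as down."""
    payload = json.loads(response_body)
    services = payload["data"]["services"]
    return sorted(name for name, healthy in services.items() if not healthy)


print(failing_services(body))
```

An empty list means that every service in the payload reported true.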

9.2. Enabling the dual-stack protocol family

Use the following procedure to enable dual-stack (IPv4 and IPv6) support on your standalone Red Hat Quay deployment.

Prerequisites

- You have updated Red Hat Quay to 3.8.

- Your host and container software platform (Docker, Podman) must be configured to support IPv6.

Procedure

In your deployment’s config.yaml file, add the FEATURE_LISTEN_IP_VERSION parameter and set it to dual-stack, for example:

    ---
    FEATURE_GOOGLE_LOGIN: false
    FEATURE_INVITE_ONLY_USER_CREATION: false
    FEATURE_LISTEN_IP_VERSION: dual-stack
    FEATURE_MAILING: false
    FEATURE_NONSUPERUSER_TEAM_SYNCING_SETUP: false
    ---

- Start, or restart, your Red Hat Quay deployment.

Check that your deployment is listening to both channels by entering the following commands:

For IPv4, enter the following command:

$ curl --ipv4 <quay_endpoint>

Example output

{"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

For IPv6, enter the following command:

$ curl --ipv6 <quay_endpoint>

Example output

{"data":{"services":{"auth":true,"database":true,"disk_space":true,"registry_gunicorn":true,"service_key":true,"web_gunicorn":true}},"status_code":200}

After enabling dual-stack in your deployment’s config.yaml, all Red Hat Quay features can be used as normal, so long as your environment is configured for dual-stack.

9.3. IPv6 and dual-stack limitations

Currently, attempting to configure your Red Hat Quay deployment with the common Azure Blob Storage configuration will not work on IPv6 single stack environments. Because the endpoint of Azure Blob Storage does not support IPv6, there is no workaround in place for this issue.

For more information, see PROJQUAY-4433.

Currently, attempting to configure your Red Hat Quay deployment with Amazon S3 CloudFront will not work on IPv6 single stack environments. Because the endpoint of Amazon S3 CloudFront does not support IPv6, there is no workaround in place for this issue.

For more information, see PROJQUAY-4470.

Chapter 10. LDAP Authentication Setup for Red Hat Quay

Lightweight Directory Access Protocol (LDAP) is an open, vendor-neutral, industry standard application protocol for accessing and maintaining distributed directory information services over an Internet Protocol (IP) network. Red Hat Quay supports using LDAP as an identity provider.

10.1. Considerations when enabling LDAP

Prior to enabling LDAP for your Red Hat Quay deployment, you should consider the following.

Existing Red Hat Quay deployments

Conflicts between usernames can arise when you enable LDAP for an existing Red Hat Quay deployment that already has users configured. For example, one user, alice, was manually created in Red Hat Quay prior to enabling LDAP. If the username alice also exists in the LDAP directory, Red Hat Quay automatically creates a new user, alice-1, when alice logs in for the first time using LDAP. Red Hat Quay then automatically maps the LDAP credentials to the alice account. For consistency reasons, this might be erroneous for your Red Hat Quay deployment. It is recommended that you remove any potentially conflicting local account names from Red Hat Quay prior to enabling LDAP.

Manual User Creation and LDAP authentication

When Red Hat Quay is configured for LDAP, LDAP-authenticated users are automatically created in Red Hat Quay’s database on first log in, if the configuration option FEATURE_USER_CREATION is set to true. If this option is set to false, the automatic user creation for LDAP users fails, and the user is not allowed to log in. In this scenario, the superuser needs to create the desired user account first. Conversely, if FEATURE_USER_CREATION is set to true, this also means that a user can still create an account from the Red Hat Quay login screen, even if there is an equivalent user in LDAP.

10.2. Configuring LDAP for Red Hat Quay

You can configure LDAP for Red Hat Quay by updating your config.yaml file directly and restarting your deployment. Use the following procedure as a reference when configuring LDAP for Red Hat Quay.

Update your config.yaml file directly to include the following relevant information:

    # ...
    AUTHENTICATION_TYPE: LDAP # 1
    # ...
    LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com # 2
    LDAP_ADMIN_PASSWD: ABC123 # 3
    LDAP_ALLOW_INSECURE_FALLBACK: false # 4
    LDAP_BASE_DN: # 5
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    LDAP_EMAIL_ATTR: mail # 6
    LDAP_UID_ATTR: uid # 7
    LDAP_URI: ldap://<example_url>.com # 8
    LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,dc=<domain_name>,dc=com) # 9
    LDAP_USER_RDN: # 10
      - ou=<example_organization_unit>
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    # ...

1. Required. Must be set to LDAP.
2. Required. The admin DN for LDAP authentication.
3. Required. The admin password for LDAP authentication.
4. Required. Whether to allow SSL/TLS insecure fallback for LDAP authentication.
5. Required. The base DN for LDAP authentication.
6. Required. The email attribute for LDAP authentication.
7. Required. The UID attribute for LDAP authentication.
8. Required. The LDAP URI.
9. Required. The user filter for LDAP authentication.
10. Required. The user RDN for LDAP authentication.

- After you have added all required LDAP fields, save the changes and restart your Red Hat Quay deployment.
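Because every field in the example above is marked as required, a quick completeness check before restarting can save a failed start. The following Python snippet is a minimal sketch that works on an already-parsed configuration dictionary. The names REQUIRED_LDAP_FIELDS and missing_ldap_fields are hypothetical, and the hostname in the sample is an illustrative value.

```python
# Fields marked "Required" in the LDAP example above.
REQUIRED_LDAP_FIELDS = [
    "AUTHENTICATION_TYPE",
    "LDAP_ADMIN_DN",
    "LDAP_ADMIN_PASSWD",
    "LDAP_ALLOW_INSECURE_FALLBACK",
    "LDAP_BASE_DN",
    "LDAP_EMAIL_ATTR",
    "LDAP_UID_ATTR",
    "LDAP_URI",
    "LDAP_USER_FILTER",
    "LDAP_USER_RDN",
]


def missing_ldap_fields(config):
    """Return required LDAP fields that are absent from a parsed config."""
    missing = [name for name in REQUIRED_LDAP_FIELDS if name not in config]
    # A present but wrong authentication type is reported separately.
    if config.get("AUTHENTICATION_TYPE", "LDAP") != "LDAP":
        missing.append("AUTHENTICATION_TYPE (must be LDAP)")
    return missing


# Illustrative, incomplete configuration.
partial = {
    "AUTHENTICATION_TYPE": "LDAP",
    "LDAP_URI": "ldap://ldap.example.com",
    "LDAP_UID_ATTR": "uid",
    "LDAP_EMAIL_ATTR": "mail",
}
print(missing_ldap_fields(partial))
```

The check only confirms that the keys exist. It does not validate the DN syntax or test the connection to the LDAP server.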

10.3. Enabling the LDAP_RESTRICTED_USER_FILTER configuration field

The LDAP_RESTRICTED_USER_FILTER configuration field is a subset of the LDAP_USER_FILTER configuration field. When configured, this option lets Red Hat Quay administrators configure LDAP users as restricted users when Red Hat Quay uses LDAP as its authentication provider.

Use the following procedure to enable LDAP restricted users on your Red Hat Quay deployment.

Prerequisites

- Your Red Hat Quay deployment uses LDAP as its authentication provider.

- You have configured the LDAP_USER_FILTER field in your config.yaml file.

Procedure

In your deployment’s config.yaml file, add the LDAP_RESTRICTED_USER_FILTER parameter and specify the group of restricted users, for example, members:

    # ...
    AUTHENTICATION_TYPE: LDAP
    # ...
    LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com
    LDAP_ADMIN_PASSWD: ABC123
    LDAP_ALLOW_INSECURE_FALLBACK: false
    LDAP_BASE_DN:
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    LDAP_EMAIL_ATTR: mail
    LDAP_UID_ATTR: uid
    LDAP_URI: ldap://<example_url>.com
    LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,o=<example_organization_unit>,dc=<example_domain_component>,dc=com)
    LDAP_RESTRICTED_USER_FILTER: (<filterField>=<value>) # 1
    LDAP_USER_RDN:
      - ou=<example_organization_unit>
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    # ...

1. Configures specified users as restricted users.

- Start, or restart, your Red Hat Quay deployment.

After enabling the LDAP_RESTRICTED_USER_FILTER feature, the LDAP users that match the filter are restricted from reading and writing content, and from creating organizations.

10.4. Enabling the LDAP_SUPERUSER_FILTER configuration field

With the LDAP_SUPERUSER_FILTER field configured, Red Hat Quay administrators can configure Lightweight Directory Access Protocol (LDAP) users as superusers if Red Hat Quay uses LDAP as its authentication provider.

Use the following procedure to enable LDAP superusers on your Red Hat Quay deployment.

Prerequisites

- Your Red Hat Quay deployment uses LDAP as its authentication provider.

- You have configured the LDAP_USER_FILTER field in your config.yaml file.

Procedure

In your deployment’s config.yaml file, add the LDAP_SUPERUSER_FILTER parameter and add the group of users you want configured as superusers, for example, root:

    # ...
    AUTHENTICATION_TYPE: LDAP
    # ...
    LDAP_ADMIN_DN: uid=<name>,ou=Users,o=<organization_id>,dc=<example_domain_component>,dc=com
    LDAP_ADMIN_PASSWD: ABC123
    LDAP_ALLOW_INSECURE_FALLBACK: false
    LDAP_BASE_DN:
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    LDAP_EMAIL_ATTR: mail
    LDAP_UID_ATTR: uid
    LDAP_URI: ldap://<example_url>.com
    LDAP_USER_FILTER: (memberof=cn=developers,ou=Users,o=<example_organization_unit>,dc=<example_domain_component>,dc=com)
    LDAP_SUPERUSER_FILTER: (<filterField>=<value>) # 1
    LDAP_USER_RDN:
      - ou=<example_organization_unit>
      - o=<organization_id>
      - dc=<example_domain_component>
      - dc=com
    # ...

1. Configures specified users as superusers.

- Start, or restart, your Red Hat Quay deployment.

After enabling the LDAP_SUPERUSER_FILTER feature, the LDAP users that match the filter have superuser privileges. The following options are available to superusers:

- Manage users

- Manage organizations

- Manage service keys

- View the change log

- Query the usage logs

- Create globally visible user messages

10.5. Common LDAP configuration issues

The following errors might be returned with an invalid configuration.

- Invalid credentials. If you receive this error, the Administrator DN or Administrator DN password values are incorrect. Ensure that you are providing accurate Administrator DN and password values.

- Verification of superuser %USERNAME% failed. This error is returned for the following reasons:

- The username has not been found.

- The user does not exist in the remote authentication system.

- LDAP authorization is configured improperly.

- Cannot find the current logged in user. When configuring LDAP for Red Hat Quay, there may be situations where the LDAP connection is established successfully using the username and password provided in the Administrator DN fields. However, if the current logged-in user cannot be found within the specified User Relative DN path using the UID Attribute or Mail Attribute fields, there are typically two potential reasons for this:

- The current logged in user does not exist in the User Relative DN path.

- The Administrator DN does not have rights to search or read the specified LDAP path.

To fix this issue, ensure that the logged in user is included in the User Relative DN path, or provide the correct permissions to the Administrator DN account.

10.6. LDAP configuration fields

For a full list of LDAP configuration fields, see LDAP configuration fields.

Chapter 11. Configuring OIDC for Red Hat Quay

Configuring OpenID Connect (OIDC) for Red Hat Quay can provide several benefits to your deployment. For example, OIDC allows users to authenticate to Red Hat Quay using their existing credentials from an OIDC provider, such as Red Hat Single Sign-On, Google, GitHub, Microsoft, or others. Other benefits of OIDC include centralized user management, enhanced security, and single sign-on (SSO). Overall, OIDC configuration can simplify user authentication and management, enhance security, and provide a seamless user experience for Red Hat Quay users.

The following procedures show you how to configure Microsoft Entra ID on a standalone deployment of Red Hat Quay, and how to configure Red Hat Single Sign-On on an Operator-based deployment of Red Hat Quay. These procedures are interchangeable depending on your deployment type.

By following these procedures, you will be able to add any OIDC provider to Red Hat Quay, regardless of which identity provider you choose to use.

11.1. Configuring Microsoft Entra ID OIDC on a standalone deployment of Red Hat Quay

By integrating Microsoft Entra ID authentication with Red Hat Quay, your organization can take advantage of the centralized user management and security features offered by Microsoft Entra ID. Some features include the ability to manage user access to Red Hat Quay repositories based on their Microsoft Entra ID roles and permissions, and the ability to enable multi-factor authentication and other security features provided by Microsoft Entra ID.

Azure Active Directory (Microsoft Entra ID) authentication for Red Hat Quay allows users to authenticate and access Red Hat Quay using their Microsoft Entra ID credentials.

Use the following procedure to configure Microsoft Entra ID by updating the Red Hat Quay config.yaml file directly.

- Using the following procedure, you can add any OIDC provider to Red Hat Quay, regardless of which identity provider is being added.

- If your system has a firewall in use, or a proxy enabled, you must add all Azure API endpoints to the allowlist for each OAuth application that is created. Otherwise, the following error is returned: x509: certificate signed by unknown authority.

Use the following reference and update your

config.yamlfile with your desired OIDC provider’s credentials:# ... AZURE_LOGIN_CONFIG: 1 CLIENT_ID: <client_id> 2 CLIENT_SECRET: <client_secret> 3 OIDC_SERVER: <oidc_server_address_> 4 SERVICE_NAME: Microsoft Entra ID 5 VERIFIED_EMAIL_CLAIM_NAME: <verified_email> 6 # ...

- (1) The parent key that holds the OIDC configuration settings. In this example, the parent key used is AZURE_LOGIN_CONFIG. However, the string AZURE can be replaced with any arbitrary string based on your specific needs, for example, ABC123. The following strings are not accepted: GOOGLE, GITHUB. These strings are reserved for their respective identity platforms and require a specific config.yaml entry that depends on which platform you are using.

- (2) The client ID of the application that is being registered with the identity provider.

- (3) The client secret of the application that is being registered with the identity provider.

- (4) The address of the OIDC server that is being used for authentication. In this example, you must use sts.windows.net as the issuer identifier. Using https://login.microsoftonline.com results in the following error: Could not create provider for AzureAD. Error: oidc: issuer did not match the issuer returned by provider, expected "https://login.microsoftonline.com/73f2e714-xxxx-xxxx-xxxx-dffe1df8a5d5" got "https://sts.windows.net/73f2e714-xxxx-xxxx-xxxx-dffe1df8a5d5/".

- (5) The name of the service that is being authenticated.

- (6) The name of the claim that is used to verify the email address of the user.
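The naming rule for the parent key (callout 1) can be sketched as a small check. This is an illustration only: the helper name is hypothetical and is not part of Red Hat Quay, and comparing the reserved prefixes case-insensitively is a conservative assumption rather than documented behavior.

```python
# Prefixes that the text above lists as reserved for their own platforms.
RESERVED_PREFIXES = {"GOOGLE", "GITHUB"}
SUFFIX = "_LOGIN_CONFIG"


def is_generic_oidc_key(key: str) -> bool:
    """Return True if `key` looks usable as a generic OIDC parent key.

    Hypothetical helper: it only encodes the rule described in the text,
    namely <ARBITRARY_STRING>_LOGIN_CONFIG where the string is not reserved.
    """
    if not key.endswith(SUFFIX):
        return False
    prefix = key[: -len(SUFFIX)]
    # Assumption: treat reserved names case-insensitively to be safe.
    return bool(prefix) and prefix.upper() not in RESERVED_PREFIXES
```

For example, AZURE_LOGIN_CONFIG and ABC123_LOGIN_CONFIG pass this check, while GOOGLE_LOGIN_CONFIG does not.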

Proper configuration of Microsoft Entra ID results in three redirects with the following format:

- https://QUAY_HOSTNAME/oauth2/<name_of_service>/callback

- https://QUAY_HOSTNAME/oauth2/<name_of_service>/callback/attach

- https://QUAY_HOSTNAME/oauth2/<name_of_service>/callback/cli

- Restart your Red Hat Quay deployment.
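The three redirect URIs from the procedure above all follow one pattern, which can be sketched as follows. The function name is hypothetical, and the hostname and service name in the usage note are placeholders. How Red Hat Quay derives <name_of_service> from your configuration is not covered here, so pass in the value that your deployment actually uses.

```python
def redirect_uris(quay_hostname: str, service_name: str) -> list[str]:
    """Build the callback, attach, and cli redirect URIs for one OIDC service.

    Both arguments are supplied by the caller; nothing is looked up.
    """
    base = f"https://{quay_hostname}/oauth2/{service_name}/callback"
    return [base, f"{base}/attach", f"{base}/cli"]
```

Calling redirect_uris("quay.example.com", "redhatsso") returns the same three paths that are registered as Valid Redirect URIs in the Red Hat Single Sign-On procedure, with quay.example.com standing in for your registry hostname.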

11.2. Configuring Red Hat Single Sign-On for Red Hat Quay

Based on the Keycloak project, Red Hat Single Sign-On (RH-SSO) is an open source identity and access management (IAM) solution provided by Red Hat. RH-SSO allows organizations to manage user identities, secure applications, and enforce access control policies across their systems and applications. It also provides a unified authentication and authorization framework, which allows users to log in one time and gain access to multiple applications and resources without needing to re-authenticate. For more information, see Red Hat Single Sign-On.

By configuring Red Hat Single Sign-On on Red Hat Quay, you can create a seamless authentication integration between Red Hat Quay and other application platforms like OpenShift Container Platform.

11.2.1. Configuring the Red Hat Single Sign-On Operator for use with the Red Hat Quay Operator

Use the following procedure to configure Red Hat Single Sign-On for the Red Hat Quay Operator on OpenShift Container Platform.

Prerequisites

- You have set up the Red Hat Single Sign-On Operator. For more information, see Red Hat Single Sign-On Operator.

- You have configured SSL/TLS for your Red Hat Quay on OpenShift Container Platform deployment and for Red Hat Single Sign-On.

- You have generated a single Certificate Authority (CA) and uploaded it to your Red Hat Single Sign-On Operator and to your Red Hat Quay configuration.

Procedure

Navigate to the Red Hat Single Sign-On Admin Console.

- On the OpenShift Container Platform web console, navigate to Networking → Routes.

- Select the Red Hat Single Sign-On project from the drop-down list.

- Find the Red Hat Single Sign-On Admin Console in the Routes table.

- Select the Realm that you will use to configure Red Hat Quay.

- Click Clients under the Configure section of the navigation panel, and then click the Create button to add a new OIDC client for Red Hat Quay.

Enter the following information.

- Client ID: quay-enterprise

- Client Protocol: openid-connect

- Root URL: https://<quay_endpoint>/

- Click Save. This results in a redirect to the Clients setting panel.

- Navigate to Access Type and select Confidential.

Navigate to Valid Redirect URIs. You must provide three redirect URIs. The value should be the fully qualified domain name of the Red Hat Quay registry appended with /oauth2/redhatsso/callback. For example:

- https://<quay_endpoint>/oauth2/redhatsso/callback

- https://<quay_endpoint>/oauth2/redhatsso/callback/attach

- https://<quay_endpoint>/oauth2/redhatsso/callback/cli

- Click Save and navigate to the new Credentials setting.

- Copy the value of the Secret.

11.2.1.1. Configuring the Red Hat Quay Operator to use Red Hat Single Sign-On

Use the following procedure to configure Red Hat Single Sign-On with the Red Hat Quay Operator.

Prerequisites

- You have set up the Red Hat Single Sign-On Operator. For more information, see Red Hat Single Sign-On Operator.

- You have configured SSL/TLS for your Red Hat Quay on OpenShift Container Platform deployment and for Red Hat Single Sign-On.

- You have generated a single Certificate Authority (CA) and uploaded it to your Red Hat Single Sign-On Operator and to your Red Hat Quay configuration.

Procedure

- Edit your Red Hat Quay config.yaml file by navigating to Operators → Installed Operators → Red Hat Quay → Quay Registry → Config Bundle Secret. Then, click Actions → Edit Secret. Alternatively, you can update the config.yaml file locally.

Add the following information to your Red Hat Quay on OpenShift Container Platform config.yaml file:

    # ...
    RHSSO_LOGIN_CONFIG: # (1)
      CLIENT_ID: <client_id> # (2)
      CLIENT_SECRET: <client_secret> # (3)
      OIDC_SERVER: <oidc_server_url> # (4)
      SERVICE_NAME: <service_name> # (5)
      SERVICE_ICON: <service_icon> # (6)
      VERIFIED_EMAIL_CLAIM_NAME: <example_email_address> # (7)
      PREFERRED_USERNAME_CLAIM_NAME: <preferred_username> # (8)
      LOGIN_SCOPES: # (9)
        - 'openid'
    # ...

- (1) The parent key that holds the OIDC configuration settings. In this example, the parent key used is RHSSO_LOGIN_CONFIG. However, the string RHSSO can be replaced with any arbitrary string based on your specific needs, for example, ABC123. The following strings are not accepted: GOOGLE, GITHUB. These strings are reserved for their respective identity platforms and require a specific config.yaml entry that depends on which platform you are using.

- (2) The client ID of the application that is being registered with the identity provider, for example, quay-enterprise.

- (3) The Client Secret from the Credentials tab of the quay-enterprise OIDC client settings.

- (4) The fully qualified domain name (FQDN) of the Red Hat Single Sign-On instance, appended with /auth/realms/ and the Realm name. You must include the forward slash at the end, for example, https://sso-redhat.example.com/auth/realms/<keycloak_realm_name>/.

- (5) The name that is displayed on the Red Hat Quay login page, for example, Red Hat Single Sign-On.

- (6) Changes the icon on the login screen. For example, /static/img/RedHat.svg.

- (7) The name of the claim that is used to verify the email address of the user.

- (8) The name of the claim that is used as the preferred username of the user.

- (9) The scopes to send to the OIDC provider when performing the login flow, for example, openid.

- Restart your Red Hat Quay on OpenShift Container Platform deployment with Red Hat Single Sign-On enabled.
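The OIDC_SERVER value described in callout 4 is easy to get wrong by a missing or doubled slash. The following sketch assembles it from its parts, assuming the /auth/realms/ path layout stated above. The function name is hypothetical, and the hostname and realm in the usage note are placeholders.

```python
def rhsso_oidc_server(sso_fqdn: str, realm: str) -> str:
    """Return the OIDC_SERVER URL for a Red Hat Single Sign-On realm.

    Accepts the FQDN with or without an https:// prefix or trailing slash,
    and always ends the result with a single forward slash.
    """
    host = sso_fqdn.strip().rstrip("/").removeprefix("https://")
    return f"https://{host}/auth/realms/{realm.strip('/')}/"
```

For example, rhsso_oidc_server("sso-redhat.example.com", "quay") produces https://sso-redhat.example.com/auth/realms/quay/, including the required trailing slash.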

11.3. Team synchronization for Red Hat Quay OIDC deployments

Administrators can leverage an OpenID Connect (OIDC) identity provider that supports group or team synchronization to apply repository permissions to sets of users in Red Hat Quay. This allows administrators to avoid having to manually create and sync group definitions between Red Hat Quay and the OIDC group.

11.3.1. Enabling synchronization for Red Hat Quay OIDC deployments

Use the following procedure to enable team synchronization when your Red Hat Quay deployment uses an OIDC authenticator.

The following procedure does not use a specific OIDC provider. Instead, it provides a general outline of how best to approach team synchronization between an OIDC provider and Red Hat Quay. Any OIDC provider can be used to enable team synchronization; however, setup might vary depending on your provider.

Procedure

Update your config.yaml file with the following information:

    AUTHENTICATION_TYPE: OIDC
    # ...
    OIDC_LOGIN_CONFIG:
      CLIENT_ID: # (1)
      CLIENT_SECRET: # (2)
      OIDC_SERVER: # (3)
      SERVICE_NAME: # (4)
      PREFERRED_GROUP_CLAIM_NAME: # (5)
      LOGIN_SCOPES: [ 'openid', '<example_scope>' ] # (6)
    # ...
    FEATURE_TEAM_SYNCING: true # (7)
    FEATURE_NONSUPERUSER_TEAM_SYNCING_SETUP: true # (8)
    FEATURE_V2_UI: true
    # ...

- (1) Required. The registered OIDC client ID for this Red Hat Quay instance.

- (2) Required. The registered OIDC client secret for this Red Hat Quay instance.

- (3) Required. The address of the OIDC server that is being used for authentication. This URL should be such that a GET request to <OIDC_SERVER>/.well-known/openid-configuration returns the provider’s configuration information. This configuration information is essential for the relying party (RP) to interact securely with the OpenID Connect provider and obtain necessary details for authentication and authorization processes.

- (4) Required. The name of the service that is being authenticated.

- (5) Required. The key name within the OIDC token payload that holds information about the user’s group memberships. This field allows the authentication system to extract group membership information from the OIDC token so that it can be used with Red Hat Quay.

- (6) Required. Adds additional scopes that Red Hat Quay uses to communicate with the OIDC provider. Must include 'openid'. Additional scopes are optional.

- (7) Required. Whether to allow for team membership to be synced from a backing group in the authentication engine.

- (8) Optional. If enabled, non-superusers can set up team synchronization.

- Restart your Red Hat Quay registry.
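Before restarting, it can help to check the edited settings against the requirements listed in the callouts. The following is a minimal sketch that works on a plain dictionary, for example one obtained by parsing config.yaml with a YAML library of your choice. The function names are hypothetical, and the checks cover only what this section states; they are not a substitute for Red Hat Quay's own configuration validation.

```python
REQUIRED_OIDC_KEYS = (
    "CLIENT_ID",
    "CLIENT_SECRET",
    "OIDC_SERVER",
    "SERVICE_NAME",
    "PREFERRED_GROUP_CLAIM_NAME",
    "LOGIN_SCOPES",
)


def discovery_url(oidc_server: str) -> str:
    """URL that should return the provider's configuration on a GET request."""
    return oidc_server.rstrip("/") + "/.well-known/openid-configuration"


def check_team_sync_config(config: dict, login_key: str = "OIDC_LOGIN_CONFIG") -> list[str]:
    """Return human-readable problems; an empty list means none were found."""
    problems = []
    if config.get("AUTHENTICATION_TYPE") != "OIDC":
        problems.append("AUTHENTICATION_TYPE must be OIDC")
    if config.get("FEATURE_TEAM_SYNCING") is not True:
        problems.append("FEATURE_TEAM_SYNCING must be true")
    login = config.get(login_key) or {}
    for key in REQUIRED_OIDC_KEYS:
        if not login.get(key):
            problems.append(f"{login_key}.{key} is required")
    # Callout 6: the scope list must include 'openid'.
    if "openid" not in (login.get("LOGIN_SCOPES") or []):
        problems.append("LOGIN_SCOPES must include 'openid'")
    return problems
```

The discovery_url helper gives the address to request, for example with curl, to confirm that OIDC_SERVER points at a provider that publishes its configuration.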

11.3.2. Setting up your Red Hat Quay deployment for team synchronization

- Log in to your Red Hat Quay registry via your OIDC provider.

- On the Red Hat Quay v2 UI dashboard, click Create Organization.

- Enter an Organization name, for example, test-org.

- Click the name of the Organization.

- In the navigation pane, click Teams and membership.

- Click Create new team and enter a name, for example, testteam.

On the Create team pop-up:

- Optional. Add this team to a repository.

- Add a team member, for example, user1, by typing in the user’s account name.

- Add a robot account to this team. This page provides the option to create a robot account.

- Click Next.

- On the Review and Finish page, review the information that you have provided and click Review and Finish.

To enable team synchronization for your Red Hat Quay OIDC deployment, click Enable Directory Sync on the Teams and membership page. Note the message in the pop-up:

Warning: Please note that once team syncing is enabled, the membership of users who are already part of the team will be revoked. OIDC group will be the single source of truth. This is a non-reversible action. Team’s user membership from within Quay will be read-only.

- In the pop-up box, enter the name of the group to sync membership with. Then, click Enable Sync.

You are returned to the Teams and membership page. Note that users of this team are removed and are re-added upon logging back in. At this stage, only the robot account is still part of the team.

A banner at the top of the page confirms that the team is synced:

This team is synchronized with a group in OIDC and its user membership is therefore read-only.

Click the Directory Synchronization Config accordion to see the OIDC group that your deployment is synchronized with.

- Log out of your Red Hat Quay registry and continue on to the verification steps.

Verification

Use the following verification procedure to ensure that user1 appears as a member of the team.

- Log back in to your Red Hat Quay registry.

-

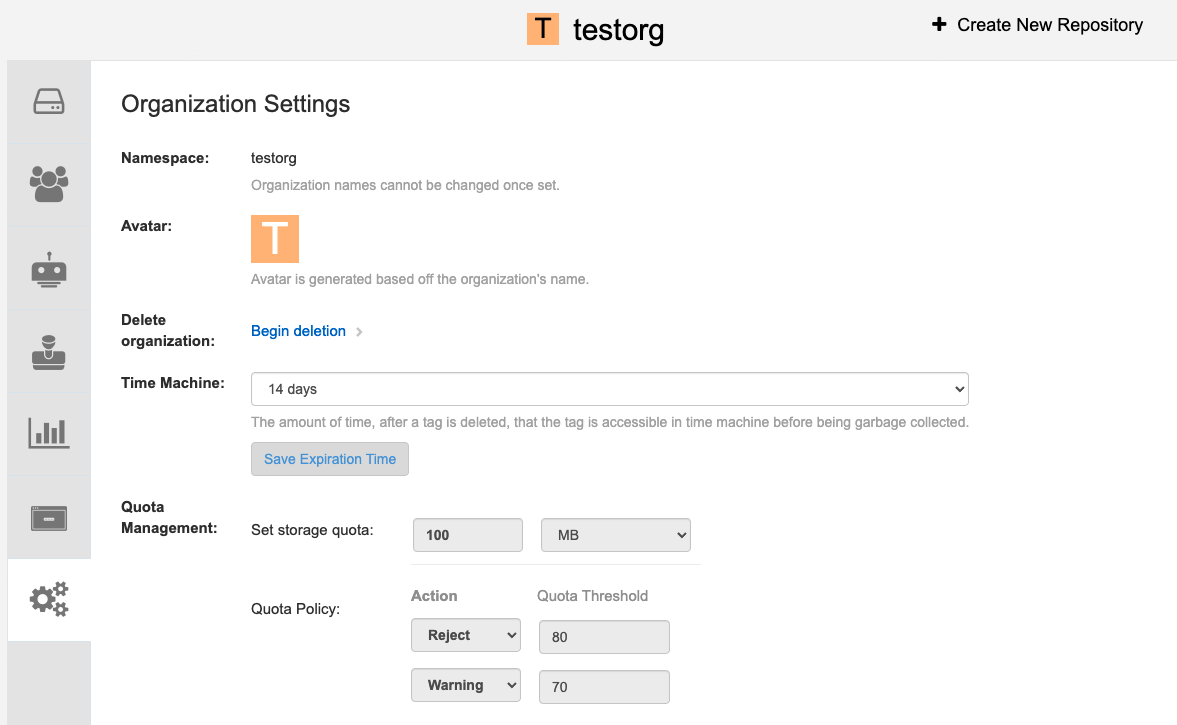

Click Organizations → test-org → test-team Teams and memberships.
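If user1 does not appear in the team, one thing worth checking is whether the token issued by your provider actually carries the group claim named in PREFERRED_GROUP_CLAIM_NAME. The following sketch decodes a token payload for inspection. It is a diagnostic aid only and makes several assumptions: the function names are hypothetical, the claim name in the usage note is a placeholder, the token is a standard three-part JWT, and the signature is deliberately not verified, so the result must not be used for any access decision.

```python
import base64
import json


def jwt_payload(token: str) -> dict:
    """Decode the payload segment of a JWT without verifying its signature."""
    payload_b64 = token.split(".")[1]
    # base64url segments in a JWT omit padding; restore it before decoding.
    padded = payload_b64 + "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


def groups_from_token(token: str, group_claim: str) -> list[str]:
    """Return the group names found under `group_claim`, or an empty list."""
    value = jwt_payload(token).get(group_claim, [])
    # Some providers may emit a single string instead of a list.
    return [value] if isinstance(value, str) else list(value)
```

For example, groups_from_token(token, "groups") lists the groups in a token whose payload uses a claim called groups. The group that you entered when enabling sync should be among them.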