Red Hat Training

A Red Hat training course is available for Red Hat OpenStack Platform

Deploying an Overcloud with Containerized Red Hat Ceph

Configuring the Director to Deploy and Use a Containerized Red Hat Ceph Cluster

OpenStack Documentation Team

rhos-docs@redhat.com

Abstract

Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

Chapter 1. Introduction to integrating Red Hat Ceph Storage with an overcloud

Red Hat OpenStack Platform (RHOSP) director creates a cloud environment called the overcloud. Director provides the ability to configure extra features for an overcloud. One of these extra features is integration with Red Hat Ceph Storage. This integration supports both Ceph Storage clusters that director creates and existing Ceph Storage clusters.

The Red Hat Ceph cluster described in this guide features containerized Ceph Storage. For more information about containerized services in RHOSP, see Configuring a basic overcloud with the CLI tools in the Director Installation and Usage Guide.

1.1. Defining Ceph Storage

Red Hat Ceph Storage is a distributed data object store designed to provide excellent performance, reliability, and scalability. Distributed object stores are the future of storage, because they accommodate unstructured data, and because clients can use modern object interfaces and legacy interfaces simultaneously. At the heart of every Ceph deployment is the Ceph Storage cluster, which consists of two types of daemons:

- Ceph Object Storage Daemon

- A Ceph Object Storage Daemon (OSD) stores data on behalf of Ceph clients. Additionally, Ceph OSDs utilize the CPU and memory of Ceph nodes to perform data replication, rebalancing, recovery, monitoring, and reporting functions.

- Ceph Monitor

- A Ceph monitor (MON) maintains a master copy of the Ceph Storage cluster map with the current state of the storage cluster.

For more information about Red Hat Ceph Storage, see the Red Hat Ceph Storage Architecture Guide.

Ceph File System (CephFS) through NFS is supported. For more information, see CephFS via NFS Back End Guide for the Shared File Systems Service.

1.2. Defining the scenario

This guide provides instructions for deploying a containerized Red Hat Ceph cluster with your overcloud. To do this, the director uses Ansible playbooks provided through the ceph-ansible package. The director also manages the configuration and scaling operations of the cluster.

1.3. Setting requirements

This guide contains information supplementary to the Director Installation and Usage guide.

If you are using the Red Hat OpenStack Platform director to create Ceph Storage nodes, note the following requirements for these nodes:

- Placement Groups

- Ceph uses Placement Groups to facilitate dynamic and efficient object tracking at scale. In the case of OSD failure or cluster rebalancing, Ceph can move or replicate a placement group and its contents, which means a Ceph cluster can rebalance and recover efficiently. The default Placement Group count that director creates is not always optimal, so it is important to calculate the correct Placement Group count according to your requirements. You can use the Placement Group calculator to calculate the correct count: Ceph Placement Groups (PGs) per Pool Calculator.

- Processor

- 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions.

- Memory

- Red Hat typically recommends a baseline of 16 GB of RAM per OSD host, with an additional 2 GB of RAM per OSD daemon.

- Disk Layout

- Sizing is dependent on your storage needs. The recommended Red Hat Ceph Storage node configuration requires at least three disks in a layout similar to the following:

  - /dev/sda: The root disk. The director copies the main overcloud image to the disk. This disk should have at least 50 GB of available disk space.
  - /dev/sdb: The journal disk. This disk divides into partitions for Ceph OSD journals, for example, /dev/sdb1, /dev/sdb2, /dev/sdb3, and onward. The journal disk is usually a solid state drive (SSD) to aid with system performance.
  - /dev/sdc and onward: The OSD disks. Use as many disks as necessary for your storage requirements.

  Note: Red Hat OpenStack Platform director uses ceph-ansible, which does not support installing the OSD on the root disk of Ceph Storage nodes. This means you need at least two disks for a supported Ceph Storage node.

- Network Interface Cards

- A minimum of one 1 Gbps Network Interface Card, although it is recommended to use at least two NICs in a production environment. Use additional network interface cards for bonded interfaces or to delegate tagged VLAN traffic. It is recommended to use a 10 Gbps interface for storage nodes, especially if you are creating an OpenStack Platform environment that serves a high volume of traffic.

- Power Management

- Each Ceph Storage node requires a supported power management interface, such as Intelligent Platform Management Interface (IPMI) functionality, on the motherboard of the server.

- Image Properties

- To help improve Red Hat Ceph Storage block device performance, you can configure the Glance image to use the virtio-scsi driver. For more information about recommended image properties for images, see Configuring Glance in the Red Hat Ceph Storage documentation.
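The Placement Group and Memory guidance above reduces to simple arithmetic. The following is a minimal sketch, assuming the commonly cited Ceph rule of thumb of roughly 100 PGs per OSD divided by the replica count and rounded up to a power of two (a total for the whole cluster, to be split across pools); the Placement Group calculator remains the authoritative tool. The function names and the example cluster are hypothetical.

```python
import math


def suggested_total_pgs(num_osds: int, replica_size: int,
                        target_pgs_per_osd: int = 100) -> int:
    """Rule-of-thumb total PG count for the cluster, rounded up to a power of two.

    Assumes the heuristic (OSDs * target_pgs_per_osd) / replica_size; use the
    PG calculator for the real per-pool split.
    """
    if num_osds <= 0 or replica_size <= 0:
        raise ValueError("num_osds and replica_size must be positive")
    raw = max(num_osds * target_pgs_per_osd / replica_size, 1.0)
    # Round up to the next power of two.
    return 1 << math.ceil(math.log2(raw))


def osd_host_ram_gb(osds_on_host: int, baseline_gb: int = 16,
                    per_osd_gb: int = 2) -> int:
    """Baseline RAM per OSD host plus a fixed amount for each OSD daemon."""
    return baseline_gb + per_osd_gb * osds_on_host


if __name__ == "__main__":
    # Hypothetical cluster: 3 Ceph Storage nodes with 3 OSDs each, 3 replicas.
    print(suggested_total_pgs(num_osds=9, replica_size=3))  # 300 rounds up to 512
    print(osd_host_ram_gb(osds_on_host=3))                  # 16 + 2 * 3 = 22
```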

This guide also requires the following:

- An Undercloud host with the Red Hat OpenStack Platform director installed. See Installing the undercloud in the Director Installation and Usage guide.

- Any additional hardware recommendation for Red Hat Ceph Storage. See the Red Hat Ceph Storage Hardware Selection Guide.

The Ceph Monitor service is installed on the overcloud Controller nodes, so you must provide adequate resources to avoid performance issues. Ensure that the Controller nodes in your environment use at least 16 GB of RAM and solid-state drive (SSD) storage for the Ceph monitor data. For a medium to large Ceph installation, provide at least 500 GB of storage for Ceph monitor data. This space is necessary to accommodate LevelDB growth if the cluster becomes unstable.

1.4. Additional resources

The /usr/share/openstack-tripleo-heat-templates/environments/ceph-ansible/ceph-ansible.yaml environment file instructs the director to use playbooks derived from the ceph-ansible project. These playbooks are installed in /usr/share/ceph-ansible/ of the undercloud. In particular, the following file lists all the default settings applied by the playbooks:

- /usr/share/ceph-ansible/group_vars/all.yml.sample

Although ceph-ansible uses playbooks to deploy containerized Ceph Storage, do not edit these files to customize your deployment. Instead, use heat environment files to override the defaults set by these playbooks. If you edit the ceph-ansible playbooks directly, your deployment fails.

For information about the default settings applied by director for containerized Ceph Storage, see the heat templates in /usr/share/openstack-tripleo-heat-templates/deployment/ceph-ansible.

Reading these templates requires a deeper understanding of how environment files and Heat templates work in director. See Understanding Heat Templates and Environment Files for reference.

Lastly, for more information about containerized services in OpenStack, see Configuring a basic overcloud with the CLI tools in the Director Installation and Usage Guide.

Chapter 2. Preparing overcloud nodes

All nodes in this scenario are bare metal systems using IPMI for power management. These nodes do not require an operating system because the director copies a Red Hat Enterprise Linux 7 image to each node; in addition, the Ceph Storage services on the nodes described here are containerized. The director communicates with each node through the Provisioning network during the introspection and provisioning processes. All nodes connect to this network through the native VLAN.

2.1. Cleaning Ceph Storage node disks

The Ceph Storage OSDs and journal partitions require GPT disk labels. This means that you must convert the additional disks on Ceph Storage nodes to GPT before installing the Ceph OSD services. To do this, delete all metadata from the disks so that the director can set GPT labels on them.

You can set the director to delete all disk metadata by default by adding the following setting to your /home/stack/undercloud.conf file:

clean_nodes=true

With this option, the Bare Metal Provisioning service will run an additional step to boot the nodes and clean the disks each time the node is set to available. This adds an additional power cycle after the first introspection and before each deployment. The Bare Metal Provisioning service uses wipefs --force --all to perform the clean.

After setting this option, run the openstack undercloud install command to execute this configuration change.

The wipefs --force --all command deletes all data and metadata on the disk, but does not perform a secure erase. A secure erase takes much longer.
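If you prefer to script the undercloud.conf change rather than edit the file by hand, the following is a minimal sketch. It assumes clean_nodes belongs in the [DEFAULT] section and that the file may contain a commented-out #clean_nodes = false line, as the sample file typically does; the function name is hypothetical. It works on plain text so that the comments in undercloud.conf are kept.

```python
import re

# Matches "clean_nodes=..." or a commented-out "#clean_nodes = ..." line.
_CLEAN_NODES = re.compile(r"^\s*#?\s*clean_nodes\s*=")


def set_clean_nodes(conf_text: str) -> str:
    """Return undercloud.conf text with clean_nodes = true in [DEFAULT].

    Other lines, including comments, are left unchanged.
    """
    lines = conf_text.splitlines()
    section = None
    default_index = None
    for i, line in enumerate(lines):
        stripped = line.strip()
        if stripped.startswith("[") and stripped.endswith("]"):
            section = stripped
            if section == "[DEFAULT]":
                default_index = i
            continue
        if section == "[DEFAULT]" and _CLEAN_NODES.match(line):
            # Replace the existing (or commented-out) setting in place.
            lines[i] = "clean_nodes = true"
            return "\n".join(lines) + "\n"
    if default_index is not None:
        # No existing setting: add it directly under the [DEFAULT] header.
        lines.insert(default_index + 1, "clean_nodes = true")
        return "\n".join(lines) + "\n"
    # No [DEFAULT] section at all: prepend one.
    return "[DEFAULT]\nclean_nodes = true\n" + conf_text
```

To use it, read /home/stack/undercloud.conf, pass its text to set_clean_nodes, write the result back, and then run openstack undercloud install as described above.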

2.2. Registering nodes

A node definition template (instackenv.json) is a JSON format file and contains the hardware and power management details for registering nodes. For example:

{
  "nodes": [
    {
      "mac": ["b1:b1:b1:b1:b1:b1"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.205"
    },
    {
      "mac": ["b2:b2:b2:b2:b2:b2"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.206"
    },
    {
      "mac": ["b3:b3:b3:b3:b3:b3"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.207"
    },
    {
      "mac": ["c1:c1:c1:c1:c1:c1"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.208"
    },
    {
      "mac": ["c2:c2:c2:c2:c2:c2"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.209"
    },
    {
      "mac": ["c3:c3:c3:c3:c3:c3"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.210"
    },
    {
      "mac": ["d1:d1:d1:d1:d1:d1"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.211"
    },
    {
      "mac": ["d2:d2:d2:d2:d2:d2"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.212"
    },
    {
      "mac": ["d3:d3:d3:d3:d3:d3"],
      "cpu": "4",
      "memory": "6144",
      "disk": "40",
      "arch": "x86_64",
      "pm_type": "ipmi",
      "pm_user": "admin",
      "pm_password": "p@55w0rd!",
      "pm_addr": "192.0.2.213"
    }
  ]
}
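Because every entry in the template repeats the same keys, you might prefer to generate the file instead of typing it. The following is a minimal sketch that rebuilds the example above from a list of (MAC address, BMC address) pairs; the helper names are hypothetical, and the CPU, memory, disk, and credential values are simply the example values from this section, not recommendations.

```python
import json


def make_node(mac: str, pm_addr: str, pm_user: str, pm_password: str,
              cpu: str = "4", memory: str = "6144", disk: str = "40") -> dict:
    """Build one node entry with the same keys as the example template."""
    return {
        "mac": [mac],
        "cpu": cpu,
        "memory": memory,
        "disk": disk,
        "arch": "x86_64",
        "pm_type": "ipmi",
        "pm_user": pm_user,
        "pm_password": pm_password,
        "pm_addr": pm_addr,
    }


def make_instackenv(pairs, pm_user: str, pm_password: str) -> dict:
    """Wrap one node entry per (mac, pm_addr) pair in the top-level "nodes" list."""
    return {"nodes": [make_node(mac, addr, pm_user, pm_password) for mac, addr in pairs]}


def example_pairs() -> list:
    """Hypothetical inventory mirroring the example: b1..d3 on 192.0.2.205-213."""
    pairs = []
    addr = 205
    for prefix in "bcd":
        for n in range(1, 4):
            pairs.append((":".join([f"{prefix}{n}"] * 6), f"192.0.2.{addr}"))
            addr += 1
    return pairs


if __name__ == "__main__":
    # Print the JSON; redirect it to /home/stack/instackenv.json if it looks right.
    print(json.dumps(make_instackenv(example_pairs(), "admin", "p@55w0rd!"), indent=2))
```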

After creating the template, save the file to the stack user’s home directory (/home/stack/instackenv.json). Source the stackrc file to initialize the stack user’s environment, then import instackenv.json into the director:

$ source ~/stackrc
$ openstack overcloud node import ~/instackenv.json

This imports the template and registers each node from the template into the director.

Assign the kernel and ramdisk images to each node:

$ openstack overcloud node configure <node>

The nodes are now registered and configured in the director.

2.3. Pre-deployment validations for Ceph Storage

To help avoid overcloud deployment failures, validate that the required packages exist on your servers.

2.3.1. Verifying the ceph-ansible package version

The undercloud contains Ansible-based validations that you can run to identify potential problems before you deploy the overcloud. These validations can help you avoid overcloud deployment failures by identifying common problems before they happen.

Procedure

Verify that the correct version of the ceph-ansible package is installed:

$ ansible-playbook -i /usr/bin/tripleo-ansible-inventory /usr/share/openstack-tripleo-validations/validations/ceph-ansible-installed.yaml

2.3.2. Verifying packages for pre-provisioned nodes

When you use pre-provisioned nodes in your overcloud deployment, you can verify that the servers have the packages required to be overcloud nodes that host Ceph services.

For more information about pre-provisioned nodes, see Configuring a Basic Overcloud using Pre-Provisioned Nodes.

Procedure

Verify that the servers contain the required packages:

$ ansible-playbook -i /usr/bin/tripleo-ansible-inventory /usr/share/openstack-tripleo-validations/validations/ceph-dependencies-installed.yaml

2.4. Manually tagging the nodes

After registering each node, you will need to inspect the hardware and tag the node into a specific profile. Profile tags match your nodes to flavors, and in turn the flavors are assigned to a deployment role.

To inspect and tag new nodes, follow these steps:

Trigger hardware introspection to retrieve the hardware attributes of each node:

$ openstack overcloud node introspect --all-manageable --provide

- The --all-manageable option introspects only nodes in a manageable state. In this example, it is all of them.
- The --provide option resets all nodes to an available state after introspection.

Important: Make sure this process runs to completion. This process usually takes 15 minutes for bare metal nodes.

Retrieve a list of your nodes to identify their UUIDs:

$ openstack baremetal node list

Add a profile option to the properties/capabilities parameter for each node to manually tag a node to a specific profile. For example, a typical deployment uses three profiles: control, compute, and ceph-storage. The following commands tag three nodes for each profile:

$ ironic node-update 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0 add properties/capabilities='profile:control,boot_option:local'
$ ironic node-update 6faba1a9-e2d8-4b7c-95a2-c7fbdc12129a add properties/capabilities='profile:control,boot_option:local'
$ ironic node-update 5e3b2f50-fcd9-4404-b0a2-59d79924b38e add properties/capabilities='profile:control,boot_option:local'
$ ironic node-update 484587b2-b3b3-40d5-925b-a26a2fa3036f add properties/capabilities='profile:compute,boot_option:local'
$ ironic node-update d010460b-38f2-4800-9cc4-d69f0d067efe add properties/capabilities='profile:compute,boot_option:local'
$ ironic node-update d930e613-3e14-44b9-8240-4f3559801ea6 add properties/capabilities='profile:compute,boot_option:local'
$ ironic node-update da0cc61b-4882-45e0-9f43-fab65cf4e52b add properties/capabilities='profile:ceph-storage,boot_option:local'
$ ironic node-update b9f70722-e124-4650-a9b1-aade8121b5ed add properties/capabilities='profile:ceph-storage,boot_option:local'
$ ironic node-update 68bf8f29-7731-4148-ba16-efb31ab8d34f add properties/capabilities='profile:ceph-storage,boot_option:local'

Tip: You can also configure a new custom profile to tag a node for the Ceph MON and Ceph MDS services. See Chapter 3, Deploying Other Ceph Services on dedicated nodes for details.

The addition of the profile option tags the nodes into their respective profiles.

As an alternative to manual tagging, use the Automated Health Check (AHC) Tools to automatically tag larger numbers of nodes based on benchmarking data.
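For a handful of nodes, typing the tagging commands is manageable, but a UUID-to-profile map is easier to review. The following is a minimal dry-run sketch that prints the same ironic node-update commands used in this section instead of running them; the NODE_PROFILES map is a hypothetical example that reuses three UUIDs from above, so replace it with the output of openstack baremetal node list.

```python
import shlex

# Hypothetical mapping of node UUIDs to profiles; replace with your own nodes.
NODE_PROFILES = {
    "1a4e30da-b6dc-499d-ba87-0bd8a3819bc0": "control",
    "484587b2-b3b3-40d5-925b-a26a2fa3036f": "compute",
    "da0cc61b-4882-45e0-9f43-fab65cf4e52b": "ceph-storage",
}


def tag_command(uuid: str, profile: str) -> list[str]:
    """Build the ironic node-update argv that tags one node into a profile."""
    return [
        "ironic", "node-update", uuid, "add",
        f"properties/capabilities=profile:{profile},boot_option:local",
    ]


def tag_commands(node_profiles: dict[str, str]) -> list[list[str]]:
    """Build one tagging command per node, in the order of the mapping."""
    return [tag_command(uuid, profile) for uuid, profile in node_profiles.items()]


if __name__ == "__main__":
    # Dry run: print shell-quoted commands rather than executing them.
    for argv in tag_commands(NODE_PROFILES):
        print(shlex.join(argv))
```

Printing the commands first lets you check each UUID against its intended profile before you paste or run anything.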

2.5. Defining the root disk

Director must identify the root disk during provisioning in the case of nodes with multiple disks. For example, most Ceph Storage nodes use multiple disks. By default, the director writes the overcloud image to the root disk during the provisioning process.

There are several properties that you can define to help the director identify the root disk:

- model (String): Device identifier.
- vendor (String): Device vendor.
- serial (String): Disk serial number.
- hctl (String): Host:Channel:Target:Lun for SCSI.
- size (Integer): Size of the device in GB.
- wwn (String): Unique storage identifier.
- wwn_with_extension (String): Unique storage identifier with the vendor extension appended.
- wwn_vendor_extension (String): Unique vendor storage identifier.
- rotational (Boolean): True for a rotational device (HDD), otherwise false (SSD).
- name (String): The name of the device, for example: /dev/sdb1.
- by_path (String): The unique PCI path of the device. Use this property if you do not want to use the UUID of the device.

Use the name property only for devices with persistent names. Do not use name to set the root disk for any other device because this value can change when the node boots.

Complete the following steps to specify the root device using its serial number.

Procedure

Check the disk information from the hardware introspection of each node. Run the following command to display the disk information of a node:

(undercloud) $ openstack baremetal introspection data save 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0 | jq ".inventory.disks"

For example, the data for one node might show three disks:

[
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sda",
    "wwn_vendor_extension": "0x1ea4dcc412a9632b",
    "wwn_with_extension": "0x61866da04f3807001ea4dcc412a9632b",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f380700",
    "serial": "61866da04f3807001ea4dcc412a9632b"
  },
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sdb",
    "wwn_vendor_extension": "0x1ea4e13c12e36ad6",
    "wwn_with_extension": "0x61866da04f380d001ea4e13c12e36ad6",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f380d00",
    "serial": "61866da04f380d001ea4e13c12e36ad6"
  },
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sdc",
    "wwn_vendor_extension": "0x1ea4e31e121cfb45",
    "wwn_with_extension": "0x61866da04f37fc001ea4e31e121cfb45",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f37fc00",
    "serial": "61866da04f37fc001ea4e31e121cfb45"
  }
]

Change the root_device parameter for the node definition. The following example shows how to set the root device to disk 2, which has 61866da04f380d001ea4e13c12e36ad6 as the serial number:

(undercloud) $ openstack baremetal node set --property root_device='{"serial": "61866da04f380d001ea4e13c12e36ad6"}' 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0

Note: Ensure that you configure the BIOS of each node to include booting from the root disk that you choose. Configure the boot order to boot from the network first, then to boot from the root disk.

The director identifies the specific disk to use as the root disk. When you run the openstack overcloud deploy command, the director provisions and writes the overcloud image to the root disk.
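Copying a 32-character serial number by hand is error prone. The following is a minimal sketch, assuming you have saved the output of the jq ".inventory.disks" command above as a JSON list; it confirms that exactly one introspected disk has the chosen serial and builds the matching openstack baremetal node set command. The function names are hypothetical.

```python
import json
import shlex


def find_disk(disks: list[dict], serial: str) -> dict:
    """Return the single introspected disk whose serial number matches."""
    matches = [disk for disk in disks if disk.get("serial") == serial]
    if len(matches) != 1:
        raise ValueError(f"expected one disk with serial {serial!r}, found {len(matches)}")
    return matches[0]


def root_device_command(node_uuid: str, serial: str) -> list[str]:
    """Build the argv that sets a root_device hint based on the disk serial."""
    hint = json.dumps({"serial": serial})
    return ["openstack", "baremetal", "node", "set",
            "--property", f"root_device={hint}", node_uuid]
```

In use, you would load the saved file with json.load, call find_disk(disks, serial) to catch typos early, and print shlex.join(root_device_command(uuid, serial)) to get a correctly quoted command equivalent to the one shown above.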

2.6. Using the overcloud-minimal image to avoid using a Red Hat subscription entitlement

By default, director writes the QCOW2 overcloud-full image to the root disk during the provisioning process. The overcloud-full image uses a valid Red Hat subscription. However, you can also use the overcloud-minimal image, for example, to provision a bare OS where you do not want to run any other OpenStack services or consume your subscription entitlements.

A common use case for this occurs when you want to provision nodes with only Ceph daemons. For this and similar use cases, you can use the overcloud-minimal image option to avoid reaching the limit of your paid Red Hat subscriptions. For information about how to obtain the overcloud-minimal image, see Obtaining images for overcloud nodes.

Procedure

To configure director to use the overcloud-minimal image, create an environment file that contains the following image definition:

parameter_defaults:
  <roleName>Image: overcloud-minimal

Replace <roleName> with the name of the role and append Image to the name of the role. The following example shows an overcloud-minimal image for Ceph storage nodes:

parameter_defaults:
  CephStorageImage: overcloud-minimal

Pass the environment file to the openstack overcloud deploy command.

The overcloud-minimal image supports only standard Linux bridges and not OVS because OVS is an OpenStack service that requires an OpenStack subscription entitlement.
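If several roles should use the overcloud-minimal image, the naming rule above (role name plus Image) can be applied mechanically. The following is a minimal sketch that renders such an environment file; the function name is hypothetical, and the role names you pass must match the names in your roles data file.

```python
def minimal_image_env(role_names: list[str]) -> str:
    """Render parameter_defaults that select overcloud-minimal for each role."""
    lines = ["parameter_defaults:"]
    for role in role_names:
        # Parameter name is the role name with "Image" appended,
        # for example CephStorage -> CephStorageImage.
        lines.append(f"  {role}Image: overcloud-minimal")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(minimal_image_env(["CephStorage"]), end="")
```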

Chapter 3. Deploying Other Ceph Services on dedicated nodes

By default, the director deploys the Ceph MON and Ceph MDS services on the Controller nodes. This is suitable for small deployments. However, with larger deployments we advise that you deploy the Ceph MON and Ceph MDS services on dedicated nodes to improve the performance of your Ceph cluster. You can do this by creating a custom role for either one.

For more information about custom roles, see Creating a New Role in the Advanced Overcloud Customization guide.

The director uses the following file as a default reference for all overcloud roles:

- /usr/share/openstack-tripleo-heat-templates/roles_data.yaml

Copy this file to /home/stack/templates/ so you can add custom roles to it:

$ cp /usr/share/openstack-tripleo-heat-templates/roles_data.yaml /home/stack/templates/roles_data_custom.yaml

You invoke the /home/stack/templates/roles_data_custom.yaml file later during overcloud creation (Section 7.2, “Initiating overcloud deployment”). The following sub-sections describe how to configure custom roles for the Ceph MON and Ceph MDS services.

3.1. Creating a custom role and flavor for the Ceph MON service

This section describes how to create a custom role (named CephMon) and flavor (named ceph-mon) for the Ceph MON role. You should already have a copy of the default roles data file as described in Chapter 3, Deploying Other Ceph Services on dedicated nodes.

Open the /home/stack/templates/roles_data_custom.yaml file.

Remove the service entry for the Ceph MON service (namely, OS::TripleO::Services::CephMon) from under the Controller role.

Add the OS::TripleO::Services::CephClient service to the Controller role:

[...]
- name: Controller # the 'primary' role goes first
  CountDefault: 1
  ServicesDefault:
    - OS::TripleO::Services::CACerts
    - OS::TripleO::Services::CephMds
    - OS::TripleO::Services::CephClient
    - OS::TripleO::Services::CephExternal
    - OS::TripleO::Services::CephRbdMirror
    - OS::TripleO::Services::CephRgw
    - OS::TripleO::Services::CinderApi
[...]

At the end of roles_data_custom.yaml, add a custom CephMon role containing the Ceph MON service and all the other required node services. For example:

- name: CephMon
  ServicesDefault:
    # Common Services
    - OS::TripleO::Services::AuditD
    - OS::TripleO::Services::CACerts
    - OS::TripleO::Services::CertmongerUser
    - OS::TripleO::Services::Collectd
    - OS::TripleO::Services::Docker
    - OS::TripleO::Services::Fluentd
    - OS::TripleO::Services::Kernel
    - OS::TripleO::Services::Ntp
    - OS::TripleO::Services::ContainersLogrotateCrond
    - OS::TripleO::Services::SensuClient
    - OS::TripleO::Services::Snmp
    - OS::TripleO::Services::Timezone
    - OS::TripleO::Services::TripleoFirewall
    - OS::TripleO::Services::TripleoPackages
    - OS::TripleO::Services::Tuned
    # Role-Specific Services
    - OS::TripleO::Services::CephMon

Using the openstack flavor create command, define a new flavor named ceph-mon for this role:

$ openstack flavor create --id auto --ram 6144 --disk 40 --vcpus 4 ceph-mon

Note: For more details about this command, run openstack flavor create --help.

Map this flavor to a new profile, also named ceph-mon:

$ openstack flavor set --property "cpu_arch"="x86_64" --property "capabilities:boot_option"="local" --property "capabilities:profile"="ceph-mon" ceph-mon

Note: For more details about this command, run openstack flavor set --help.

Tag nodes into the new ceph-mon profile:

$ ironic node-update UUID add properties/capabilities='profile:ceph-mon,boot_option:local'

Add the following configuration to the node-info.yaml file to associate the ceph-mon flavor with the CephMon role:

parameter_defaults:
  OvercloudCephMonFlavor: ceph-mon
  CephMonCount: 3

See Section 2.4, “Manually tagging the nodes” for more details about tagging nodes. See also Tagging Nodes Into Profiles for related information on custom role profiles.
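Because the CephMon and CephMDS roles share the same list of common services, you might generate the role entries rather than copy them. The following is a minimal sketch that renders a role entry in the same layout as the example above, ready to append to roles_data_custom.yaml; the function name is hypothetical, and the common service list is copied from the CephMon example, so check it against the roles_data.yaml shipped with your release.

```python
# Common services copied from the CephMon example in this section.
COMMON_SERVICES = (
    "AuditD", "CACerts", "CertmongerUser", "Collectd", "Docker", "Fluentd",
    "Kernel", "Ntp", "ContainersLogrotateCrond", "SensuClient", "Snmp",
    "Timezone", "TripleoFirewall", "TripleoPackages", "Tuned",
)


def render_role(name: str, role_services: list[str],
                common_services: tuple[str, ...] = COMMON_SERVICES) -> str:
    """Render one roles_data entry with common and role-specific services."""
    prefix = "    - OS::TripleO::Services::"
    lines = [f"- name: {name}", "  ServicesDefault:", "    # Common Services"]
    lines += [prefix + service for service in common_services]
    lines.append("    # Role-Specific Services")
    lines += [prefix + service for service in role_services]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_role("CephMon", ["CephMon"]), end="")
```

The same function covers the Ceph MDS role described next, for example render_role("CephMDS", ["CephMds", "CephClient"]).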

3.2. Creating a custom role and flavor for the Ceph MDS service

This section describes how to create a custom role (named CephMDS) and flavor (named ceph-mds) for the Ceph MDS role. You should already have a copy of the default roles data file as described in Chapter 3, Deploying Other Ceph Services on dedicated nodes.

Open the /home/stack/templates/roles_data_custom.yaml file.

Remove the service entry for the Ceph MDS service (namely, OS::TripleO::Services::CephMds) from under the Controller role:

[...]
- name: Controller # the 'primary' role goes first
  CountDefault: 1
  ServicesDefault:
    - OS::TripleO::Services::CACerts
    # - OS::TripleO::Services::CephMds  # (1)
    - OS::TripleO::Services::CephMon
    - OS::TripleO::Services::CephExternal
    - OS::TripleO::Services::CephRbdMirror
    - OS::TripleO::Services::CephRgw
    - OS::TripleO::Services::CinderApi
[...]

(1) Comment out this line. This same service will be added to a custom role in the next step.

At the end of roles_data_custom.yaml, add a custom CephMDS role containing the Ceph MDS service and all the other required node services. For example:

- name: CephMDS
  ServicesDefault:
    # Common Services
    - OS::TripleO::Services::AuditD
    - OS::TripleO::Services::CACerts
    - OS::TripleO::Services::CertmongerUser
    - OS::TripleO::Services::Collectd
    - OS::TripleO::Services::Docker
    - OS::TripleO::Services::Fluentd
    - OS::TripleO::Services::Kernel
    - OS::TripleO::Services::Ntp
    - OS::TripleO::Services::ContainersLogrotateCrond
    - OS::TripleO::Services::SensuClient
    - OS::TripleO::Services::Snmp
    - OS::TripleO::Services::Timezone
    - OS::TripleO::Services::TripleoFirewall
    - OS::TripleO::Services::TripleoPackages
    - OS::TripleO::Services::Tuned
    # Role-Specific Services
    - OS::TripleO::Services::CephMds
    - OS::TripleO::Services::CephClient  # (1)

(1) The Ceph MDS service requires the admin keyring, which can be set by either the Ceph MON or Ceph Client service. Because you are deploying Ceph MDS on a dedicated node (without the Ceph MON service), include the Ceph Client service in the role as well.

Using the openstack flavor create command, define a new flavor named ceph-mds for this role:

$ openstack flavor create --id auto --ram 6144 --disk 40 --vcpus 4 ceph-mds

Note: For more details about this command, run openstack flavor create --help.

Map this flavor to a new profile, also named ceph-mds:

$ openstack flavor set --property "cpu_arch"="x86_64" --property "capabilities:boot_option"="local" --property "capabilities:profile"="ceph-mds" ceph-mds

Note: For more details about this command, run openstack flavor set --help.

Tag nodes into the new ceph-mds profile:

$ ironic node-update UUID add properties/capabilities='profile:ceph-mds,boot_option:local'

See Section 2.4, “Manually tagging the nodes” for more details about tagging nodes. See also Tagging Nodes Into Profiles for related information on custom role profiles.
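The flavor steps in this chapter differ only in the flavor name. The following is a minimal dry-run sketch that prints the flavor create and flavor set commands for a custom role; it assumes, as this guide does, that the flavor and its profile share one name, and it reuses the example sizes (6144 MB RAM, 40 GB disk, 4 vCPUs), which you should adjust for your hardware. The function name is hypothetical.

```python
import shlex


def flavor_commands(name: str, ram_mb: int = 6144, disk_gb: int = 40,
                    vcpus: int = 4) -> list[list[str]]:
    """Build the flavor create and flavor set argv lists for one custom role.

    Assumes the flavor and its profile use the same name.
    """
    create = ["openstack", "flavor", "create", "--id", "auto",
              "--ram", str(ram_mb), "--disk", str(disk_gb),
              "--vcpus", str(vcpus), name]
    set_profile = ["openstack", "flavor", "set",
                   "--property", "cpu_arch=x86_64",
                   "--property", "capabilities:boot_option=local",
                   "--property", f"capabilities:profile={name}",
                   name]
    return [create, set_profile]


if __name__ == "__main__":
    # Dry run: print the commands rather than executing them.
    for argv in flavor_commands("ceph-mds"):
        print(shlex.join(argv))
```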

Chapter 4. Customizing the Storage service

The heat template collection provided by director already contains the necessary templates and environment files to enable a basic Ceph Storage configuration.

The /usr/share/openstack-tripleo-heat-templates/environments/ceph-ansible/ceph-ansible.yaml environment file creates a Ceph cluster and integrates it with your overcloud at deployment. This cluster features containerized Ceph Storage nodes. For more information about containerized services in OpenStack, see Configuring a basic overcloud with the CLI tools in the Director Installation and Usage Guide.

The Red Hat OpenStack director also applies basic, default settings to the deployed Ceph cluster. You need a custom environment file to pass custom settings to your Ceph cluster.

Procedure

Create the file storage-config.yaml in /home/stack/templates/. For the purposes of this document, ~/templates/storage-config.yaml contains most of the overcloud-related custom settings for your environment. Settings in this file override the corresponding default settings that director applies to your overcloud.

Add a parameter_defaults section to ~/templates/storage-config.yaml. This section contains custom settings for your overcloud. For example, to set vxlan as the network type of the Networking service (neutron):

parameter_defaults:
  NeutronNetworkType: vxlan

Optional: You can set the following options under parameter_defaults depending on your needs:

- CinderEnableIscsiBackend: Enables the iSCSI back end. Default value: false
- CinderEnableRbdBackend: Enables the Ceph Storage back end. Default value: true
- CinderBackupBackend: Sets ceph or swift as the back end for volume backups; see Section 4.4, “Configuring the Backup Service to use Ceph” for related details. Default value: ceph
- NovaEnableRbdBackend: Enables Ceph Storage for Nova ephemeral storage. Default value: true
- GlanceBackend: Defines which back end the Image service should use: rbd (Ceph), swift, or file. Default value: rbd
- GnocchiBackend: Defines which back end the Telemetry service should use: rbd (Ceph), swift, or file. Default value: rbd

Note: You can omit an option from ~/templates/storage-config.yaml if you want to use the default setting.
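Because you can omit any option that matches its default, one way to keep storage-config.yaml short is to write only the values that differ. The following is a minimal sketch that does this using the defaults and allowed back ends listed above; the function names are hypothetical, and options not in the list (such as NeutronNetworkType) are passed through unchecked.

```python
# Defaults and allowed values as listed in the options above.
DEFAULTS = {
    "CinderEnableIscsiBackend": False,
    "CinderEnableRbdBackend": True,
    "CinderBackupBackend": "ceph",
    "NovaEnableRbdBackend": True,
    "GlanceBackend": "rbd",
    "GnocchiBackend": "rbd",
}
ALLOWED = {
    "CinderBackupBackend": {"ceph", "swift"},
    "GlanceBackend": {"rbd", "swift", "file"},
    "GnocchiBackend": {"rbd", "swift", "file"},
}


def _yaml_scalar(value) -> str:
    """Format booleans as YAML true/false; other values as plain strings."""
    if isinstance(value, bool):
        return "true" if value else "false"
    return str(value)


def render_storage_config(settings: dict) -> str:
    """Render parameter_defaults, omitting options equal to the documented default."""
    lines = ["parameter_defaults:"]
    for key, value in settings.items():
        if key in ALLOWED and value not in ALLOWED[key]:
            raise ValueError(f"{key} must be one of {sorted(ALLOWED[key])}, got {value!r}")
        if key in DEFAULTS and DEFAULTS[key] == value:
            continue  # Same as the default, so the option can be omitted.
        lines.append(f"  {key}: {_yaml_scalar(value)}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_storage_config({"NeutronNetworkType": "vxlan",
                                 "GlanceBackend": "rbd"}), end="")
```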

The contents of your environment file change depending on the settings you apply in the sections that follow. See Appendix A, Sample environment file: Creating a Ceph cluster for a finished example.

The following subsections explain how to override common default storage service settings applied by the director.

4.1. Enabling the Ceph Metadata server

The Ceph Metadata Server (MDS) runs the ceph-mds daemon, which manages metadata related to files stored on CephFS. CephFS can be consumed via NFS. For related information about using CephFS via NFS, see Ceph File System Guide and CephFS via NFS Back End Guide for the Shared File System Service.

Red Hat only supports deploying Ceph MDS with the CephFS through NFS back end for the Shared File Systems service.

To enable the Ceph Metadata Server, invoke the following environment file when you create your overcloud:

- /usr/share/openstack-tripleo-heat-templates/environments/ceph-ansible/ceph-mds.yaml

See Section 7.2, “Initiating overcloud deployment” for more details. For more information about the Ceph Metadata Server, see Configuring Metadata Server Daemons.

By default, the Ceph Metadata Server is deployed on the Controller node. You can also deploy the Ceph Metadata Server on its own dedicated node; for more information, see Section 3.2, “Creating a custom role and flavor for the Ceph MDS service”.
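Each optional Ceph feature in this chapter is enabled by adding another environment file to the deployment command. The following is a minimal sketch that assembles the -e arguments in a fixed order, with ceph-ansible.yaml first and your own files, such as storage-config.yaml, last so that their values take precedence over earlier files. It only illustrates the ordering: a real command, as described in Section 7.2, “Initiating overcloud deployment”, usually needs more environment files, and the function name is hypothetical.

```python
import shlex
from typing import Optional

TEMPLATES = "/usr/share/openstack-tripleo-heat-templates"


def deploy_command(env_files: list[str], roles_file: Optional[str] = None) -> list[str]:
    """Build an openstack overcloud deploy argv with ceph-ansible.yaml first.

    Environment files later in the list override values from earlier ones.
    """
    argv = ["openstack", "overcloud", "deploy", "--templates"]
    if roles_file is not None:
        argv += ["-r", roles_file]
    for env_file in [f"{TEMPLATES}/environments/ceph-ansible/ceph-ansible.yaml", *env_files]:
        argv += ["-e", env_file]
    return argv


if __name__ == "__main__":
    print(shlex.join(deploy_command([
        f"{TEMPLATES}/environments/ceph-ansible/ceph-mds.yaml",
        "/home/stack/templates/storage-config.yaml",
    ])))
```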

4.2. Enabling the Ceph Object Gateway

The Ceph Object Gateway (RGW) provides applications with an interface to object storage capabilities within a Ceph Storage cluster. When you deploy RGW, you can replace the default Object Storage service (swift) with Ceph. For more information, see Object Gateway Guide for Red Hat Enterprise Linux.

To enable RGW in your deployment, invoke the following environment file when creating your overcloud:

- /usr/share/openstack-tripleo-heat-templates/environments/ceph-ansible/ceph-rgw.yaml

For more information, see Section 7.2, “Initiating overcloud deployment”.

By default, Ceph Storage allows 250 placement groups per OSD. When you enable RGW, Ceph Storage creates six additional pools that are required by RGW. The new pools are:

- .rgw.root

- default.rgw.control

- default.rgw.meta

- default.rgw.log

- default.rgw.buckets.index

- default.rgw.buckets.data

In your deployment, default is replaced with the name of the zone to which the pools belong.

Therefore, when you enable RGW, be sure to set the default pg_num using the CephPoolDefaultPgNum parameter to account for the new pools. For more information about how to calculate the number of placement groups for Ceph pools, see Section 6.9, “Assigning custom attributes to different Ceph pools”.
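To see why the six extra pools matter, you can estimate the average number of placement groups that land on each OSD. The following is a minimal worked sketch, assuming the 250 limit is compared against PG replicas (pg_num multiplied by the pool's replica size) divided by the number of OSDs. The cluster in the example is hypothetical: 9 OSDs, 3 replicas, and 5 non-RGW pools plus the 6 RGW pools. With pg_num 128 for every pool, 11 × 128 × 3 / 9 ≈ 469 PGs per OSD, which exceeds the limit; with pg_num 64, 11 × 64 × 3 / 9 ≈ 235, which fits.

```python
def pgs_per_osd(pools: list[tuple[int, int]], num_osds: int) -> float:
    """Average PG replicas per OSD.

    pools: one (pg_num, replica size) pair per pool.
    """
    if num_osds <= 0:
        raise ValueError("num_osds must be positive")
    return sum(pg_num * size for pg_num, size in pools) / num_osds


def fits_pg_limit(pools: list[tuple[int, int]], num_osds: int, limit: int = 250) -> bool:
    """True if the average PG replicas per OSD stays within the limit."""
    return pgs_per_osd(pools, num_osds) <= limit


if __name__ == "__main__":
    # Hypothetical cluster: 11 pools (5 existing + 6 RGW), size 3, on 9 OSDs.
    for pg_num in (128, 64):
        pools = [(pg_num, 3)] * 11
        print(pg_num, round(pgs_per_osd(pools, 9), 1), fits_pg_limit(pools, 9))
```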

The Ceph Object Gateway acts as a drop-in replacement for the default Object Storage service. As such, all other services that normally use swift can seamlessly start using the Ceph Object Gateway instead without further configuration. For example, when configuring the Block Storage Backup service (cinder-backup) to use the Ceph Object Gateway, set ceph as the target back end (see Section 4.4, “Configuring the Backup Service to use Ceph”).

4.3. Configuring Ceph Object Store to use external Ceph Object Gateway

Red Hat OpenStack Platform (RHOSP) director supports configuring an external Ceph Object Gateway (RGW) as an Object Store service. To authenticate with the external RGW service, you must configure RGW to verify users and their roles in the Identity service (keystone).

For more information about how to configure an external Ceph Object Gateway, see Configuring the Ceph Object Gateway in the Using Keystone with the Ceph Object Gateway Guide.

Procedure

Add the following parameter_defaults to a custom environment file, for example, swift-external-params.yaml, and adjust the values to suit your deployment:

parameter_defaults:
  ExternalPublicUrl: 'http://<Public RGW endpoint or loadbalancer>:8080/swift/v1/AUTH_%(project_id)s'
  ExternalInternalUrl: 'http://<Internal RGW endpoint>:8080/swift/v1/AUTH_%(project_id)s'
  ExternalAdminUrl: 'http://<Admin RGW endpoint>:8080/swift/v1/AUTH_%(project_id)s'
  ExternalSwiftUserTenant: 'service'
  SwiftPassword: 'choose_a_random_password'

Note

The example code snippet contains parameter values that might differ from values that you use in your environment:

- The default port where the remote RGW instance listens is 8080. The port might be different depending on how the external RGW is configured.
- The swift user created in the overcloud uses the password defined by the SwiftPassword parameter. You must configure the external RGW instance to use the same password to authenticate with the Identity service by using the rgw_keystone_admin_password parameter.


Add the following code to the Ceph config file to configure RGW to use the Identity service. Adjust the variable values to suit your environment.

rgw_keystone_api_version: 3
rgw_keystone_url: http://<public Keystone endpoint>:5000/
rgw_keystone_accepted_roles: 'member, Member, admin'
rgw_keystone_accepted_admin_roles: ResellerAdmin, swiftoperator
rgw_keystone_admin_domain: default
rgw_keystone_admin_project: service
rgw_keystone_admin_user: swift
rgw_keystone_admin_password: <Password as defined in the environment parameters>
rgw_keystone_implicit_tenants: 'true'
rgw_keystone_revocation_interval: '0'
rgw_s3_auth_use_keystone: 'true'
rgw_swift_versioning_enabled: 'true'
rgw_swift_account_in_url: 'true'

Note

Director creates the following roles and users in the Identity service by default:

- rgw_keystone_accepted_admin_roles: ResellerAdmin, swiftoperator

- rgw_keystone_admin_domain: default

- rgw_keystone_admin_project: service

- rgw_keystone_admin_user: swift

Deploy the overcloud with the additional environment files:

openstack overcloud deploy --templates \
  -e <your environment files> \
  -e /usr/share/openstack-tripleo-heat-templates/environments/swift-external.yaml \
  -e swift-external-params.yaml
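The overcloud parameters and the external RGW settings from the steps above must agree with each other. The following Python sketch compares them as plain dictionaries before you deploy. The sample values and the host name rgw.example.com are hypothetical, and the check that rgw_keystone_admin_project matches ExternalSwiftUserTenant is an assumption based on both being set to service in the examples.

```python
def check_external_rgw(heat_params, rgw_conf):
    """Return a list of mismatches between the overcloud parameters and the
    external RGW Keystone settings; an empty list means they agree.

    heat_params: the parameter_defaults from swift-external-params.yaml
    rgw_conf:    the rgw_* settings added to the external Ceph config file
    """
    problems = []
    # The swift user authenticates with SwiftPassword, so RGW must use the same one.
    if rgw_conf.get("rgw_keystone_admin_password") != heat_params.get("SwiftPassword"):
        problems.append("rgw_keystone_admin_password does not match SwiftPassword")
    # Director creates the swift user; RGW authenticates as that user.
    if rgw_conf.get("rgw_keystone_admin_user") != "swift":
        problems.append("rgw_keystone_admin_user is not swift")
    # Assumption: the admin project is the project that owns the swift user.
    if rgw_conf.get("rgw_keystone_admin_project") != heat_params.get("ExternalSwiftUserTenant"):
        problems.append("rgw_keystone_admin_project does not match ExternalSwiftUserTenant")
    # With the account in the URL, each endpoint should carry the project placeholder.
    if rgw_conf.get("rgw_swift_account_in_url") == "true":
        for key in ("ExternalPublicUrl", "ExternalInternalUrl", "ExternalAdminUrl"):
            if "AUTH_%(project_id)s" not in heat_params.get(key, ""):
                problems.append(f"{key} does not contain AUTH_%(project_id)s")
    return problems


# Hypothetical sample values for both files.
PARAMS = {
    "ExternalPublicUrl": "http://rgw.example.com:8080/swift/v1/AUTH_%(project_id)s",
    "ExternalInternalUrl": "http://rgw.example.com:8080/swift/v1/AUTH_%(project_id)s",
    "ExternalAdminUrl": "http://rgw.example.com:8080/swift/v1/AUTH_%(project_id)s",
    "ExternalSwiftUserTenant": "service",
    "SwiftPassword": "choose_a_random_password",
}
RGW_CONF = {
    "rgw_keystone_admin_user": "swift",
    "rgw_keystone_admin_project": "service",
    "rgw_keystone_admin_password": "choose_a_random_password",
    "rgw_swift_account_in_url": "true",
}

if __name__ == "__main__":
    print(check_external_rgw(PARAMS, RGW_CONF))
    # Illustration only: %(project_id)s is a Python-style placeholder
    # that is filled in with a project ID.
    print(PARAMS["ExternalPublicUrl"] % {"project_id": "abc123"})
```

Changing only rgw_keystone_admin_password in the sample reports a single mismatch, which is the case that the earlier note warns about.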

4.4. Configuring the Backup Service to use Ceph

The Block Storage Backup service (cinder-backup) is disabled by default. To enable it, invoke the following environment file when creating your overcloud:

- /usr/share/openstack-tripleo-heat-templates/environments/cinder-backup.yaml

See Section 7.2, “Initiating overcloud deployment” for more details.

When you enable cinder-backup (as in Section 4.2, “Enabling the Ceph Object Gateway”), you can configure it to store backups in Ceph. To do this, add the following line to the parameter_defaults section of your environment file (for example, ~/templates/storage-config.yaml):

CinderBackupBackend: ceph

4.5. Configuring multiple bonded interfaces per Ceph node

You can use a bonded interface to combine multiple NICs to add redundancy to a network connection. If you have enough NICs on your Ceph nodes, you can take this a step further by creating multiple bonded interfaces per node.

With this, you can then use a bonded interface for each network connection required by the node. This provides both redundancy and a dedicated connection for each network.

The simplest implementation of this involves the use of two bonds, one for each storage network used by the Ceph nodes. These networks are the following:

- Front-end storage network (StorageNet) - The Ceph client uses this network to interact with its Ceph cluster.
- Back-end storage network (StorageMgmtNet) - The Ceph cluster uses this network to balance data in accordance with the placement group policy of the cluster. For more information, see Placement Groups (PG) in the Red Hat Ceph Architecture Guide.

Configuring this involves customizing a network interface template, because director does not provide any sample templates that deploy multiple bonded NICs. However, director does provide a template that deploys a single bonded interface: /usr/share/openstack-tripleo-heat-templates/network/config/bond-with-vlans/ceph-storage.yaml. You can add a bonded interface for your additional NICs by defining it there.

For more information about creating custom interface templates, see Creating Custom Interface Templates in the Advanced Overcloud Customization guide.

The following snippet contains the default definition for the single bonded interface defined by /usr/share/openstack-tripleo-heat-templates/network/config/bond-with-vlans/ceph-storage.yaml:

type: ovs_bridge # 1
name: br-bond
members:
  - type: ovs_bond # 2
    name: bond1 # 3
    ovs_options: {get_param: BondInterfaceOvsOptions} # 4
    members: # 5
      - type: interface
        name: nic2
        primary: true
      - type: interface
        name: nic3
  - type: vlan # 6
    device: bond1 # 7
    vlan_id: {get_param: StorageNetworkVlanID}
    addresses:
      - ip_netmask: {get_param: StorageIpSubnet}
  - type: vlan
    device: bond1
    vlan_id: {get_param: StorageMgmtNetworkVlanID}
    addresses:
      - ip_netmask: {get_param: StorageMgmtIpSubnet}

- 1: A single bridge named br-bond holds the bond defined by this template. This line defines the bridge type, namely OVS.
- 2: The first member of the br-bond bridge is the bonded interface itself, named bond1. This line defines the bond type of bond1, which is also OVS.
- 3: The default bond is named bond1, as defined in this line.
- 4: The ovs_options entry instructs director to use a specific set of bonding module directives. Those directives are passed through the BondInterfaceOvsOptions parameter, which you can also configure in this same file. For instructions on how to configure this, see Section 4.5.1, “Configuring bonding module directives”.
- 5: The members section of the bond defines which network interfaces are bonded by bond1. In this case, the bonded interface uses nic2 (set as the primary interface) and nic3.
- 6: The br-bond bridge has two other members: a VLAN for the front-end storage network (StorageNetwork) and a VLAN for the back-end storage network (StorageMgmtNetwork).
- 7: The device parameter defines which device a VLAN uses. In this case, both VLANs use the bonded interface bond1.

With at least two more NICs, you can define an additional bridge and bonded interface. Then, you can move one of the VLANs to the new bonded interface. This results in added throughput and reliability for both storage network connections.

When you customize /usr/share/openstack-tripleo-heat-templates/network/config/bond-with-vlans/ceph-storage.yaml for this purpose, it is advisable to also use Linux bonds (type: linux_bond) instead of the default OVS bonds (type: ovs_bond). This bond type is more suitable for enterprise production deployments.

The following edited snippet defines an additional OVS bridge (br-bond2) which houses a new Linux bond named bond2. The bond2 interface uses two additional NICs (namely, nic4 and nic5) and will be used solely for back-end storage network traffic:

- type: ovs_bridge
  name: br-bond
  members:
    - type: linux_bond
      name: bond1
      bonding_options: {get_param: BondInterfaceOvsOptions} # 1
      members:
        - type: interface
          name: nic2
          primary: true
        - type: interface
          name: nic3
    - type: vlan
      device: bond1
      vlan_id: {get_param: StorageNetworkVlanID}
      addresses:
        - ip_netmask: {get_param: StorageIpSubnet}
- type: ovs_bridge
  name: br-bond2
  members:
    - type: linux_bond
      name: bond2
      bonding_options: {get_param: BondInterfaceOvsOptions}
      members:
        - type: interface
          name: nic4
          primary: true
        - type: interface
          name: nic5
    - type: vlan
      device: bond2
      vlan_id: {get_param: StorageMgmtNetworkVlanID}
      addresses:
        - ip_netmask: {get_param: StorageMgmtIpSubnet}

- 1: Because bond1 and bond2 are both Linux bonds (instead of OVS), they use bonding_options instead of ovs_options to set bonding directives. For related information, see Section 4.5.1, “Configuring bonding module directives”.

For the full contents of this customized template, see Appendix B, Sample custom interface template: Multiple bonded interfaces.
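The text above states that bond2 carries only back-end storage traffic, so the VLAN for StorageMgmtNetworkVlanID is expected to use bond2 rather than bond1. The following Python sketch checks that intent on a simplified, hypothetical model of the template, in which the interfaces are omitted and each get_param lookup is replaced by the parameter name. It only checks that each VLAN refers to a bond in its own bridge; it does not validate the template the way os-net-config would.

```python
import copy


def vlan_bond_mismatches(bridges):
    """Return (bridge, vlan_id, device) for every VLAN whose device is not a
    bond defined in the same bridge."""
    mismatches = []
    for bridge in bridges:
        bonds = {
            member["name"]
            for member in bridge["members"]
            if member["type"] in ("linux_bond", "ovs_bond")
        }
        for member in bridge["members"]:
            if member["type"] == "vlan" and member["device"] not in bonds:
                mismatches.append((bridge["name"], member["vlan_id"], member["device"]))
    return mismatches


# Simplified model of the two-bridge layout: NIC members are omitted and
# each {get_param: ...} lookup is replaced by the parameter name.
BRIDGES = [
    {"name": "br-bond", "members": [
        {"type": "linux_bond", "name": "bond1"},
        {"type": "vlan", "device": "bond1", "vlan_id": "StorageNetworkVlanID"},
    ]},
    {"name": "br-bond2", "members": [
        {"type": "linux_bond", "name": "bond2"},
        {"type": "vlan", "device": "bond2", "vlan_id": "StorageMgmtNetworkVlanID"},
    ]},
]

if __name__ == "__main__":
    print(vlan_bond_mismatches(BRIDGES))  # [] when each VLAN uses its own bridge's bond

    # A copy-and-paste slip: the back-end VLAN still points at bond1.
    broken = copy.deepcopy(BRIDGES)
    broken[1]["members"][1]["device"] = "bond1"
    print(vlan_bond_mismatches(broken))
```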

4.5.1. Configuring bonding module directives

After you add and configure the bonded interfaces, use the BondInterfaceOvsOptions parameter to set the directives that each bond uses. You can find this parameter in the parameters: section of /usr/share/openstack-tripleo-heat-templates/network/config/bond-with-vlans/ceph-storage.yaml. The following snippet shows the default definition of this parameter, which is empty:

BondInterfaceOvsOptions:
  default: ''
  description: The ovs_options string for the bond interface. Set
    things like lacp=active and/or bond_mode=balance-slb
    using this option.
  type: string

Define the options you need in the default: line. For example, to use 802.3ad (mode 4) and a LACP rate of 1 (fast), use 'mode=4 lacp_rate=1', as in:

BondInterfaceOvsOptions:
  default: 'mode=4 lacp_rate=1'
  description: The bonding_options string for the bond interface. Set
    things like lacp=active and/or bond_mode=balance-slb
    using this option.
  type: string

For more information about other supported bonding options, see Open vSwitch Bonding Options in the Advanced Overcloud Optimization guide. For the full contents of the customized /usr/share/openstack-tripleo-heat-templates/network/config/bond-with-vlans/ceph-storage.yaml template, see Appendix B, Sample custom interface template: Multiple bonded interfaces.
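The bonding_options string is a space-separated list of key=value directives. The following Python sketch splits such a string and translates the numeric mode and lacp_rate values of the Linux bonding driver into their names, which makes it easier to confirm that 'mode=4 lacp_rate=1' means 802.3ad with a fast LACP rate. Keys that it does not recognize, such as the OVS-style lacp and bond_mode keys mentioned in the parameter description, are passed through unchanged. The function name is hypothetical.

```python
# Linux bonding driver mode numbers and their names.
BOND_MODES = {
    "0": "balance-rr",
    "1": "active-backup",
    "2": "balance-xor",
    "3": "broadcast",
    "4": "802.3ad",
    "5": "balance-tlb",
    "6": "balance-alb",
}
# lacp_rate: 0 = slow (LACPDU every 30 seconds), 1 = fast (every second).
LACP_RATES = {"0": "slow", "1": "fast"}


def describe_bonding_options(options):
    """Split a bonding_options string such as 'mode=4 lacp_rate=1' into a
    dict, translating numeric mode and lacp_rate values into names."""
    parsed = {}
    for token in options.split():
        key, sep, value = token.partition("=")
        if not sep:
            raise ValueError(f"expected key=value, got {token!r}")
        if key == "mode":
            value = BOND_MODES.get(value, value)
        elif key == "lacp_rate":
            value = LACP_RATES.get(value, value)
        parsed[key] = value
    return parsed


if __name__ == "__main__":
    print(describe_bonding_options("mode=4 lacp_rate=1"))
```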

Chapter 5. Customizing the Ceph Storage cluster

Director deploys containerized Red Hat Ceph Storage using a default configuration. You can customize Ceph Storage by overriding the default settings.

Prerequisites

To deploy containerized Ceph Storage you must include the /usr/share/openstack-tripleo-heat-templates/environments/ceph-ansible/ceph-ansible.yaml file during overcloud deployment. This environment file defines the following resources:

- CephAnsibleDisksConfig - This resource maps the Ceph Storage node disk layout. For more information, see Section 5.2, “Mapping the Ceph Storage node disk layout”.
- CephConfigOverrides - This resource applies all other custom settings to your Ceph Storage cluster.

Procedure

Enable the Red Hat Ceph Storage 3 Tools repository:

$ sudo subscription-manager repos --enable=rhel-7-server-rhceph-3-tools-rpms

Install the ceph-ansible package on your undercloud:

$ sudo yum install ceph-ansible

To customize your Ceph Storage cluster, define custom parameters in a new environment file, for example, /home/stack/templates/ceph-config.yaml. You can apply Ceph Storage cluster settings with the following syntax in the parameter_defaults section of your environment file:

parameter_defaults:
  CephConfigOverrides:
    section:
      KEY: VALUE

Note

You can apply the CephConfigOverrides parameter to the [global] section of the ceph.conf file, as well as any other section, such as [osd], [mon], and [client]. If you specify a section, the key:value data goes into the specified section. If you do not specify a section, the data goes into the [global] section by default. For information about Ceph Storage configuration, customization, and supported parameters, see Red Hat Ceph Storage Configuration Guide.

Replace KEY and VALUE with the Ceph cluster settings that you want to apply. For example, in the global section, max_open_files is the KEY and 131072 is the corresponding VALUE:

parameter_defaults:
  CephConfigOverrides:
    global:
      max_open_files: 131072
    osd:
      osd_scrub_during_recovery: false

This configuration results in the following settings defined in the configuration file of your Ceph cluster:

[global]
max_open_files = 131072
[osd]
osd_scrub_during_recovery = false
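To illustrate how a CephConfigOverrides structure maps onto ceph.conf, the following Python sketch renders the same structure as INI text. It assumes that a top-level key whose value is a mapping is a section name and that any other top-level key is a setting for [global], which is how it models "if you do not specify a section". This is an illustration of the behavior described above, not the code that ceph-ansible uses.

```python
def render_ceph_conf(overrides):
    """Render a CephConfigOverrides-style mapping as ceph.conf (INI) text.

    Top-level keys whose values are mappings are treated as section names;
    any other top-level key is treated as a setting for [global].
    """
    sections = {"global": {}}  # [global] is always rendered first
    for key, value in overrides.items():
        if isinstance(value, dict):
            sections.setdefault(key, {}).update(value)
        else:
            sections["global"][key] = value

    lines = []
    for name, settings in sections.items():
        if not settings:
            continue  # skip sections with no settings
        lines.append(f"[{name}]")
        for key, value in settings.items():
            if isinstance(value, bool):
                value = str(value).lower()  # YAML false -> "false"
            lines.append(f"{key} = {value}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(render_ceph_conf({
        "global": {"max_open_files": 131072},
        "osd": {"osd_scrub_during_recovery": False},
    }), end="")
```

Passing {"max_open_files": 131072} without a section name produces the same [global] entry as the example above.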

5.1. Setting ceph-ansible group variables

The ceph-ansible tool is a playbook used to install and manage Ceph Storage clusters.

For information about the group_vars directory, see 3.2. Installing a Red Hat Ceph Storage Cluster in the Installation Guide for Red Hat Enterprise Linux.

To change the variable defaults in director, use the CephAnsibleExtraConfig parameter to pass the new values in heat environment files. For example, to set the ceph-ansible group variable journal_size to 40960, create an environment file with the following journal_size definition:

parameter_defaults:
  CephAnsibleExtraConfig:
    journal_size: 40960

Change ceph-ansible group variables with the override parameters; do not edit group variables directly in the /usr/share/ceph-ansible directory on the undercloud.

5.2. Mapping the Ceph Storage node disk layout

When you deploy containerized Ceph Storage, you must map the disk layout and specify dedicated block devices for the Ceph OSD service. You can perform this mapping in the environment file you created earlier to define your custom Ceph parameters: /home/stack/templates/ceph-config.yaml.

Use the CephAnsibleDisksConfig resource in parameter_defaults to map your disk layout. This resource uses the following variables:

| Variable | Required? | Default value (if unset) | Description |
|---|---|---|---|
| osd_scenario | Yes | lvm. NOTE: For new deployments using Ceph 3.2 and later, lvm is the default. | With Ceph 3.2, the lvm value configures OSDs with the ceph-volume tool. With Ceph 3.1, the values set the journaling scenario, such as whether OSDs must be created with journals that are either co-located on the same device (collocated) or stored on dedicated devices (non-collocated). |
| devices | Yes | NONE. Variable must be set. | A list of block devices to be used on the node for OSDs. |
| dedicated_devices | Yes (only if osd_scenario is non-collocated) | devices | A list of block devices that maps each entry under devices to a dedicated journaling block device. Use this variable only when osd_scenario=non-collocated. |
| dmcrypt | No | false | Sets whether data stored on OSDs is encrypted (true) or unencrypted (false). |
| osd_objectstore | No | bluestore. NOTE: For new deployments using Ceph 3.2 and later, bluestore is the default. | Sets the storage back end used by Ceph. |

If you deployed your Ceph cluster with a version of ceph-ansible older than 3.3 and osd_scenario is set to collocated or non-collocated, OSD reboot failure can occur due to a device naming discrepancy. For more information about this fault, see https://bugzilla.redhat.com/show_bug.cgi?id=1670734. For information about a workaround, see https://access.redhat.com/solutions/3702681.
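The following Python sketch applies the rules from the table above to a CephAnsibleDisksConfig mapping before deployment. It is a hypothetical pre-flight check, not part of director or ceph-ansible, and it treats an unset osd_scenario as lvm, the default for Ceph 3.2 and later.

```python
VALID_SCENARIOS = ("lvm", "collocated", "non-collocated")
VALID_OBJECTSTORES = ("bluestore", "filestore")


def validate_disks_config(cfg):
    """Return a list of problems found in a CephAnsibleDisksConfig mapping."""
    problems = []
    scenario = cfg.get("osd_scenario", "lvm")  # lvm is the default for Ceph 3.2+
    if scenario not in VALID_SCENARIOS:
        problems.append(f"unknown osd_scenario: {scenario}")
    devices = cfg.get("devices") or []
    if not devices:
        problems.append("devices must list at least one block device")
    if scenario == "non-collocated":
        # Each OSD device needs its own entry in dedicated_devices.
        dedicated = cfg.get("dedicated_devices") or []
        if len(dedicated) != len(devices):
            problems.append("dedicated_devices needs exactly one entry per device")
    if cfg.get("osd_objectstore", "bluestore") not in VALID_OBJECTSTORES:
        problems.append("osd_objectstore must be bluestore or filestore")
    if not isinstance(cfg.get("dmcrypt", False), bool):
        problems.append("dmcrypt must be true or false")
    return problems


if __name__ == "__main__":
    print(validate_disks_config({
        "osd_scenario": "non-collocated",
        "devices": ["/dev/sda", "/dev/sdb"],
        "dedicated_devices": ["/dev/sdf"],
    }))
```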

5.2.1. Using BlueStore in Ceph 3.2 and later

New deployments of OpenStack Platform 13 must use bluestore. Current deployments that use filestore must continue using filestore, as described in Using FileStore in Ceph 3.1 and earlier. Migrations from filestore to bluestore are not supported by default in RHCS 3.x.

Procedure

To specify the block devices to be used as Ceph OSDs, use a variation of the following:

parameter_defaults:
  CephAnsibleDisksConfig:
    devices:
      - /dev/sdb
      - /dev/sdc
      - /dev/sdd
      - /dev/nvme0n1
    osd_scenario: lvm
    osd_objectstore: bluestore

Because /dev/nvme0n1 is in a higher performing device class (it is an SSD and the other devices are HDDs), the example parameter defaults produce three OSDs that run on /dev/sdb, /dev/sdc, and /dev/sdd. The three OSDs use /dev/nvme0n1 as a BlueStore WAL device. The ceph-volume tool does this by using the batch subcommand. The same configuration is duplicated for each Ceph Storage node and assumes uniform hardware. If the BlueStore WAL data resides on the same disks as the OSDs, then change the parameter defaults in the following way:

parameter_defaults:
  CephAnsibleDisksConfig:
    devices:
      - /dev/sdb
      - /dev/sdc
      - /dev/sdd
    osd_scenario: lvm
    osd_objectstore: bluestore
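The following Python sketch models the split that the example describes: when the devices list mixes HDDs and an SSD, the HDDs hold OSD data and the SSD is shared as the fast BlueStore device; when all devices are of one class, each device holds OSD data. It is a simplified model that only knows whether each device is rotational, and it ignores other details that ceph-volume batch takes into account. The function name is hypothetical.

```python
def plan_batch(devices):
    """Split devices into (data_devices, fast_devices).

    devices: mapping of device path -> True for rotational (HDD),
    False for solid state (SSD or NVMe).
    """
    hdds = [path for path, rotational in devices.items() if rotational]
    ssds = [path for path, rotational in devices.items() if not rotational]
    if hdds and ssds:
        # Mixed classes: HDDs hold OSD data, SSDs are shared as the fast device.
        return hdds, ssds
    # One class only: every device holds OSD data.
    return list(devices), []


# The first example above, with the device classes it describes.
EXAMPLE = {"/dev/sdb": True, "/dev/sdc": True, "/dev/sdd": True, "/dev/nvme0n1": False}

if __name__ == "__main__":
    data, fast = plan_batch(EXAMPLE)
    print("OSD data:", data)
    print("shared fast device:", fast)
```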

5.2.2. Using FileStore in Ceph 3.1 and earlier

The default journaling scenario is set to osd_scenario=collocated, which has lower hardware requirements consistent with most testing environments. In a typical production environment, however, journals are stored on dedicated devices, osd_scenario=non-collocated, to accommodate heavier I/O workloads. For more information, see Identifying a Performance Use Case in the Red Hat Ceph Storage Hardware Selection Guide.

Procedure

List each block device to be used by the OSDs as a simple list under the devices variable, for example:

devices:
  - /dev/sda
  - /dev/sdb
  - /dev/sdc
  - /dev/sdd

Optional: If osd_scenario=non-collocated, you must also map each entry in devices to a corresponding entry in dedicated_devices. For example, add the following snippet to /home/stack/templates/ceph-config.yaml:

osd_scenario: non-collocated
devices:
  - /dev/sda
  - /dev/sdb
  - /dev/sdc
  - /dev/sdd
dedicated_devices:
  - /dev/sdf
  - /dev/sdf
  - /dev/sdg
  - /dev/sdg

Result

Each Ceph Storage node in the resulting Ceph cluster has the following characteristics:

- /dev/sda has /dev/sdf1 as its journal
- /dev/sdb has /dev/sdf2 as its journal
- /dev/sdc has /dev/sdg1 as its journal
- /dev/sdd has /dev/sdg2 as its journal
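The journal layout in the result follows a simple rule: partitions on each dedicated device are numbered in the order in which that device appears in dedicated_devices. The following Python sketch reproduces the result above from the two lists. It assumes sdX-style device names; NVMe partitions use a different naming scheme, such as nvme0n1p1. The function name is hypothetical.

```python
def journal_partitions(devices, dedicated_devices):
    """Map each OSD data device to the journal partition it receives."""
    if len(devices) != len(dedicated_devices):
        raise ValueError("devices and dedicated_devices must have the same length")
    used = {}  # journal device -> number of partitions handed out so far
    mapping = {}
    for data_device, journal_device in zip(devices, dedicated_devices):
        used[journal_device] = used.get(journal_device, 0) + 1
        # Assumes sdX-style names, where partition N is the device name plus N.
        mapping[data_device] = f"{journal_device}{used[journal_device]}"
    return mapping


if __name__ == "__main__":
    layout = journal_partitions(
        ["/dev/sda", "/dev/sdb", "/dev/sdc", "/dev/sdd"],
        ["/dev/sdf", "/dev/sdf", "/dev/sdg", "/dev/sdg"],
    )
    for data, journal in layout.items():
        print(f"{data} has {journal} as its journal")
```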

5.2.3. Referring to devices with persistent names

Procedure

In some nodes, disk paths such as /dev/sdb and /dev/sdc might not point to the same block device during reboots. If this is the case with your CephStorage nodes, specify each disk with the /dev/disk/by-path/ symlink to ensure that the block device mapping is consistent throughout deployments:

parameter_defaults:
  CephAnsibleDisksConfig:
    devices:
      - /dev/disk/by-path/pci-0000:03:00.0-scsi-0:0:10:0
      - /dev/disk/by-path/pci-0000:03:00.0-scsi-0:0:11:0
    dedicated_devices:
      - /dev/nvme0n1
      - /dev/nvme0n1

Optional: Because you must set the list of OSD devices before overcloud deployment, it might not be possible to identify and set the PCI path of disk devices. In this case, gather the /dev/disk/by-path/ symlink data for block devices during introspection.

In the following example, run the first command to download the introspection data from the undercloud Object Storage service (swift) for the server b08-h03-r620-hci, and save the data in a file called b08-h03-r620-hci.json. Run the second command to grep for “by-path”. The output of this command contains the unique /dev/disk/by-path values that you can use to identify disks.

(undercloud) [stack@b08-h02-r620 ironic]$ openstack baremetal introspection data save b08-h03-r620-hci | jq . > b08-h03-r620-hci.json
(undercloud) [stack@b08-h02-r620 ironic]$ grep by-path b08-h03-r620-hci.json
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:0:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:1:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:3:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:4:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:5:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:6:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:7:0",
        "by_path": "/dev/disk/by-path/pci-0000:02:00.0-scsi-0:2:0:0",

For more information about naming conventions for storage devices, see Persistent Naming in the Red Hat Enterprise Linux (RHEL) Managing storage devices guide.
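Because grep matches every occurrence in the file, the same path can appear more than once, as the first and last lines of the grep output above show. The following Python sketch reads the saved JSON data instead and returns each disk's by_path value once, in order. It assumes that the disks are listed under inventory and then disks in the introspection data; verify this layout against your own b08-h03-r620-hci.json file. The function name is hypothetical.

```python
def unique_by_path(introspection):
    """Return the distinct by_path values of a node's disks, in order.

    Assumes the layout inventory -> disks -> by_path in the
    introspection data saved by the command above.
    """
    paths = []
    for disk in introspection.get("inventory", {}).get("disks", []):
        path = disk.get("by_path")
        if path and path not in paths:
            paths.append(path)
    return paths
```

For example, after loading the file with json.load, pass the resulting dictionary to unique_by_path to get the list of candidate paths for the devices list.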

osd_scenario: lvm is used in the example to default new deployments to bluestore as configured by ceph-volume; this is only available with ceph-ansible 3.2 or later and Ceph Luminous or later. The parameters to support filestore with ceph-ansible 3.2 are backwards compatible. Therefore, in existing FileStore deployments, do not change the osd_objectstore or osd_scenario parameters.

5.2.4. Creating a valid JSON file automatically from Bare Metal service introspection data

When you customize devices in a Ceph Storage deployment by manually including node-specific overrides, you can inadvertently introduce errors. The director tools directory contains a utility named make_ceph_disk_list.py that you can use to create a valid JSON environment file automatically from Bare Metal service (ironic) introspection data.

Procedure

Export the introspection data from the Bare Metal service database for the Ceph Storage nodes you want to deploy:

openstack baremetal introspection data save oc0-ceph-0 > ceph0.json
openstack baremetal introspection data save oc0-ceph-1 > ceph1.json
...

Copy the utility to the stack user’s home directory on the undercloud, and then use it to generate a node_data_lookup.json file that you can pass to the openstack overcloud deploy command:

./make_ceph_disk_list.py -i ceph*.json -o node_data_lookup.json -k by_path

- The -i option can take an expression such as *.json or a list of files as input.
- The -k option defines the key of the ironic disk data structure used to identify the OSD disks.

Note

Red Hat does not recommend using name because it produces a list of devices such as /dev/sdd, which might not always point to the same device on reboot. Instead, Red Hat recommends that you use by_path, which is the default option if -k is not specified.

Note

You can define NodeDataLookup only once during a deployment, so pass the introspection data for all nodes that host Ceph OSDs to the utility. The Bare Metal service reserves one of the available disks on the system as the root disk. The utility always excludes the root disk from the list of generated devices.

Run the ./make_ceph_disk_list.py --help command to see other available options.
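The following Python sketch shows the kind of transformation that make_ceph_disk_list.py performs: it turns introspection data into a NodeDataLookup environment file keyed by machine unique UUID, with the root disk excluded. It is not the utility's source code, and the function names are hypothetical. It assumes the data layout used elsewhere in this chapter: the UUID under extra, system, product, uuid (which requires inspection_extras), disks under inventory and disks with a by_path key, and the root disk recorded under root_disk. Use the supplied utility for real deployments.

```python
import json


def node_data_lookup(introspection_docs):
    """Build a NodeDataLookup mapping from per-node introspection data."""
    lookup = {}
    for doc in introspection_docs:
        # Machine unique UUID (not the Bare Metal service node UUID).
        uuid = doc["extra"]["system"]["product"]["uuid"]
        root_name = doc.get("root_disk", {}).get("name")
        devices = [
            disk["by_path"]
            for disk in doc["inventory"]["disks"]
            if disk.get("by_path") and disk.get("name") != root_name
        ]
        lookup[uuid] = {"devices": devices}
    return lookup


def to_environment_file(lookup):
    """Wrap the lookup in the parameter_defaults layout used in this guide."""
    body = json.dumps(lookup, indent=2)
    indented = "\n".join("    " + line for line in body.splitlines())
    return "parameter_defaults:\n  NodeDataLookup: |\n" + indented + "\n"
```

For example, load ceph0.json and ceph1.json with json.load, pass the list of dictionaries to node_data_lookup, and write the output of to_environment_file to an environment file.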

5.2.5. Mapping the Disk Layout to Non-Homogeneous Ceph Storage Nodes

Non-homogeneous Ceph Storage nodes can cause performance issues, such as the risk of unpredictable performance loss. Although you can configure non-homogeneous Ceph Storage nodes in your Red Hat OpenStack Platform environment, Red Hat does not recommend it.

By default, all nodes that host Ceph OSDs use the global devices and dedicated_devices lists that you set in Section 5.2, “Mapping the Ceph Storage node disk layout”.

This default configuration is appropriate when all Ceph OSD nodes have homogeneous hardware. However, if a subset of these servers do not have homogeneous hardware, then you must define a node-specific disk configuration in the director.

To identify nodes that host Ceph OSDs, inspect the roles_data.yaml file and identify all roles that include the OS::TripleO::Services::CephOSD service.

To define a node-specific configuration, create a custom environment file that identifies each server and includes a list of local variables that override the global variables, and include the environment file in the openstack overcloud deploy command. For example, create a node-specific configuration file called node-spec-overrides.yaml.

You can extract the machine unique UUID from each individual server or from the Ironic database.

To locate the UUID for an individual server, log in to the server and run the following command:

dmidecode -s system-uuid

To extract the UUID from the Ironic database, run the following command on the undercloud:

openstack baremetal introspection data save NODE-ID | jq .extra.system.product.uuid

If the undercloud.conf file does not have inspection_extras = true prior to undercloud installation or upgrade and introspection, then the machine unique UUID will not be in the Ironic database.

The machine unique UUID is not the Ironic UUID.

A valid node-spec-overrides.yaml file may look like the following:

parameter_defaults:
  NodeDataLookup: |
    {"32E87B4C-C4A7-418E-865B-191684A6883B": {"devices": ["/dev/sdc"]}}

All lines after the first two lines must be valid JSON. An easy way to verify that the JSON is valid is to use the jq command. For example:

- Remove the first two lines (parameter_defaults: and NodeDataLookup: |) from the file temporarily.
- Run cat node-spec-overrides.yaml | jq .

As the node-spec-overrides.yaml file grows, you can also use jq to ensure that the embedded JSON is valid. For example, because the devices and dedicated_devices lists must be the same length, use the following commands to verify that they are the same length before you start the deployment.

(undercloud) [stack@b08-h02-r620 tht]$ cat node-spec-c05-h17-h21-h25-6048r.yaml | jq '.[] | .devices | length'
33
30
33
(undercloud) [stack@b08-h02-r620 tht]$ cat node-spec-c05-h17-h21-h25-6048r.yaml | jq '.[] | .dedicated_devices | length'
33
30
33
(undercloud) [stack@b08-h02-r620 tht]$

In this example, the node-spec-c05-h17-h21-h25-6048r.yaml has three servers in rack c05 in which slots h17, h21, and h25 are missing disks. A more complicated example is included at the end of this section.

After you validate the JSON syntax, ensure that you repopulate the first two lines of the environment file and use the -e option to include the file in the deployment command.
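As an alternative to temporarily removing the first two lines and running jq, the following Python sketch parses everything after the NodeDataLookup: | line as JSON, so YAML lines and comments above it can stay in place, and it checks that devices and dedicated_devices have the same length for each node. It is a hypothetical helper that assumes the JSON is the last content in the file.

```python
import json


def check_node_spec(text):
    """Parse the JSON embedded in a NodeDataLookup environment file.

    Raises ValueError if the JSON is invalid or if a node's devices and
    dedicated_devices lists differ in length; returns the parsed mapping.
    """
    lines = text.splitlines()
    for index, line in enumerate(lines):
        if line.strip().startswith("NodeDataLookup:"):
            break
    else:
        raise ValueError("no NodeDataLookup line found")
    # json.JSONDecodeError is a subclass of ValueError.
    data = json.loads("\n".join(lines[index + 1:]))
    for uuid, overrides in data.items():
        devices = overrides.get("devices", [])
        dedicated = overrides.get("dedicated_devices")
        if dedicated is not None and len(dedicated) != len(devices):
            raise ValueError(
                f"{uuid}: {len(devices)} devices but {len(dedicated)} dedicated_devices"
            )
    return data


SAMPLE = (
    "parameter_defaults:\n"
    "  NodeDataLookup: |\n"
    '    {"32E87B4C-C4A7-418E-865B-191684A6883B": {"devices": ["/dev/sdc"]}}\n'
)

if __name__ == "__main__":
    print(check_node_spec(SAMPLE))
```

To check a real file, pass the contents of node-spec-overrides.yaml to check_node_spec; it returns the parsed mapping or raises ValueError.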

In the following example, the updated environment file uses NodeDataLookup for Ceph deployment. All of the servers have a devices list with 35 disks, except one server, which is missing a disk.

Use the following example environment file to override the default devices list for the node that has 34 disks with the list of disks it should use instead of the global list.

parameter_defaults:
  # c05-h01-6048r is missing scsi-0:2:35:0 (00000000-0000-0000-0000-0CC47A6EFD0C)
  NodeDataLookup: |
    {
      "00000000-0000-0000-0000-0CC47A6EFD0C": {
        "devices": [
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:1:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:32:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:2:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:3:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:4:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:5:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:6:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:33:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:7:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:8:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:34:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:9:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:10:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:11:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:12:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:13:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:14:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:15:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:16:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:17:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:18:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:19:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:20:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:21:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:22:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:23:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:24:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:25:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:26:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:27:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:28:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:29:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:30:0",
          "/dev/disk/by-path/pci-0000:03:00.0-scsi-0:2:31:0"
        ],
        "dedicated_devices": [
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:81:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1",
          "/dev/disk/by-path/pci-0000:84:00.0-nvme-1"
        ]
      }
    }

5.3. Controlling resources that are available to Ceph Storage containers

When you colocate Ceph Storage containers and Red Hat OpenStack Platform containers on the same server, the containers can compete for memory and CPU resources.

To control the amount of memory or CPU that Ceph Storage containers can use, define the CPU and memory limits as shown in the following example:

parameter_defaults:
  CephAnsibleExtraConfig:
    ceph_mds_docker_cpu_limit: 4
    ceph_mgr_docker_cpu_limit: 1
    ceph_mon_docker_cpu_limit: 1
    ceph_osd_docker_cpu_limit: 4
    ceph_mds_docker_memory_limit: 64438m
    ceph_mgr_docker_memory_limit: 64438m
    ceph_mon_docker_memory_limit: 64438m

The limits shown are for example only. Actual values can vary based on your environment.

The default value for all of the memory limits specified in the example is the total host memory on the system. For example, ceph-ansible uses "{{ ansible_memtotal_mb }}m".

The ceph_osd_docker_memory_limit parameter is intentionally excluded from the example. Do not use the ceph_osd_docker_memory_limit parameter. For more information, see Reserving Memory Resources for Ceph in the Hyper-Converged Infrastructure Guide.
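When Ceph Storage containers share a server with other containers, it can help to add up the limits that you set and compare the totals with the host. The following Python sketch does this for a CephAnsibleExtraConfig mapping. It assumes Docker-style memory suffixes (b, k, m, and g as binary multiples, with a bare number meaning bytes) and that the relevant keys end in _docker_cpu_limit or _docker_memory_limit, as in the example above. The function names are hypothetical.

```python
# Docker-style memory suffixes, interpreted as binary multiples.
UNITS = {"b": 1, "k": 1024, "m": 1024 ** 2, "g": 1024 ** 3}


def to_mib(limit):
    """Convert a memory limit such as '64438m' or '4g' to MiB."""
    text = str(limit).strip().lower()
    if text and text[-1] in UNITS:
        number, unit = text[:-1], text[-1]
    else:
        number, unit = text, "b"  # a bare number is a byte count
    return int(number) * UNITS[unit] / 1024 ** 2


def total_limits(extra_config):
    """Sum the CPU and memory (MiB) limits in a CephAnsibleExtraConfig mapping."""
    cpus = sum(
        value for key, value in extra_config.items()
        if key.endswith("_docker_cpu_limit")
    )
    memory_mib = sum(
        to_mib(value) for key, value in extra_config.items()
        if key.endswith("_docker_memory_limit")
    )
    return cpus, memory_mib


# The example values from this section.
EXAMPLE = {
    "ceph_mds_docker_cpu_limit": 4,
    "ceph_mgr_docker_cpu_limit": 1,
    "ceph_mon_docker_cpu_limit": 1,
    "ceph_osd_docker_cpu_limit": 4,
    "ceph_mds_docker_memory_limit": "64438m",
    "ceph_mgr_docker_memory_limit": "64438m",
    "ceph_mon_docker_memory_limit": "64438m",
}

if __name__ == "__main__":
    print(total_limits(EXAMPLE))
```

For the example limits, the sketch returns 10 CPUs and 193314 MiB (about 189 GiB). On a hypothetical host with 128 GiB of memory, these caps together exceed physical memory, so they would not by themselves prevent the containers from competing for it.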

If the server on which the containers are colocated does not have sufficient memory or CPU, or if your design requires physical isolation, you can use composable services to deploy Ceph Storage containers to additional nodes. For more information, see Composable Services and Custom Roles in the Advanced Overcloud Customization guide.

5.4. Overriding Ansible environment variables

The Red Hat OpenStack Platform Workflow service (mistral) uses Ansible to configure Ceph Storage, but you can customize the Ansible environment by using Ansible environment variables.

Procedure

To override an ANSIBLE_* environment variable, use the CephAnsibleEnvironmentVariables heat template parameter.

This example configuration increases the number of forks and SSH retries:

parameter_defaults:
  CephAnsibleEnvironmentVariables:
    ANSIBLE_SSH_RETRIES: '6'
    DEFAULT_FORKS: '35'

For more information about Ansible environment variables, see Ansible Configuration Settings.

For more information about how to customize your Ceph Storage cluster, see Customizing the Ceph Storage cluster.
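Environment variable values are always strings, so quoting numbers such as '6' and '35', as the example does, keeps them as strings. The following Python sketch merges overrides into an environment and passes the result to a child process, in the way that a workflow might pass them to Ansible. The function names are hypothetical, and the sketch does not reproduce how the Workflow service actually launches ceph-ansible.

```python
import os
import subprocess
import sys


def ansible_env(overrides, base=None):
    """Return a copy of base (default: os.environ) with overrides applied.

    Non-string values are rejected, because environment variables can
    only hold strings.
    """
    env = dict(os.environ if base is None else base)
    for name, value in overrides.items():
        if not isinstance(value, str):
            raise TypeError(f"{name} must be a quoted string, got {type(value).__name__}")
        env[name] = value
    return env


def show_in_child(name, env):
    """Read one variable from inside a child process that receives env."""
    result = subprocess.run(
        [sys.executable, "-c", f"import os; print(os.environ[{name!r}])"],
        env=env, capture_output=True, text=True, check=True,
    )
    return result.stdout.strip()


if __name__ == "__main__":
    env = ansible_env({"ANSIBLE_SSH_RETRIES": "6", "DEFAULT_FORKS": "35"})
    print(show_in_child("ANSIBLE_SSH_RETRIES", env))
```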

Chapter 6. Deploying second-tier Ceph storage on Red Hat OpenStack Platform

Using OpenStack director, you can deploy different Red Hat Ceph Storage performance tiers by adding new Ceph nodes dedicated to a specific tier in a Ceph cluster.

For example, you can add new object storage daemon (OSD) nodes with SSD drives to an existing Ceph cluster to create a Block Storage (cinder) backend exclusively for storing data on these nodes. A user creating a new Block Storage volume can then choose the desired performance tier: either HDDs or the new SSDs.

This type of deployment requires Red Hat OpenStack Platform director to pass a customized CRUSH map to ceph-ansible. The CRUSH map allows you to split OSD nodes based on disk performance, but you can also use this feature for mapping physical infrastructure layout.

The following sections demonstrate how to deploy four nodes where two of the nodes use SSDs and the other two use HDDs. The example is kept simple to communicate a repeatable pattern. However, to be supported, a production deployment should use more nodes and more OSDs, as described in the Red Hat Ceph Storage Hardware Selection Guide.

6.1. Create a CRUSH map

The CRUSH map allows you to put OSD nodes into a CRUSH root. By default, a “default” root is created and all OSD nodes are included in it.

Inside a given root, you define the physical topology (racks, rooms, and so forth) and then place the OSD nodes at the desired position in the hierarchy (bucket). By default, no physical topology is defined, and the design is flat, as if all nodes were in the same rack.

See CRUSH Administration in the Storage Strategies Guide for details about creating a custom CRUSH map.

6.2. Mapping the OSDs

Complete the following step to map the OSDs.

Procedure

Declare the OSD-to-journal mapping:

parameter_defaults:
  CephAnsibleDisksConfig:
    devices:
      - /dev/sda
      - /dev/sdb
    dedicated_devices:
      - /dev/sdc
      - /dev/sdc
    osd_scenario: non-collocated
    journal_size: 8192
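In this non-collocated layout, each entry in devices pairs with the entry at the same position in dedicated_devices. That is why /dev/sdc appears twice: both OSD journals live on /dev/sdc. The following sketch assumes that positional pairing and assumes that journal_size is expressed in MB, as in ceph-ansible. It prints the pairing and the total journal space that each dedicated device must provide. The journal_plan function is a hypothetical helper, not part of director.

```shell
#!/bin/sh
# Sketch: show the data-device -> journal-device pairing for the
# non-collocated scenario and the journal space each journal device needs.
# Assumes positional pairing and journal_size in MB (hypothetical helper).
set -eu

journal_plan() {
  # $1: devices, $2: dedicated_devices (space-separated), $3: journal_size in MB
  awk -v devs="$1" -v jdevs="$2" -v size="$3" 'BEGIN {
    n = split(devs, d, " ")
    m = split(jdevs, j, " ")
    if (n != m) {
      print "devices and dedicated_devices differ in length" | "cat 1>&2"
      exit 1
    }
    for (i = 1; i <= n; i++) {
      print d[i] " -> " j[i]
      need[j[i]] += size
    }
    for (dev in need)
      print dev " needs " need[dev] " MB of journal space"
  }'
}

journal_plan "/dev/sda /dev/sdb" "/dev/sdc /dev/sdc" 8192
# /dev/sda -> /dev/sdc
# /dev/sdb -> /dev/sdc
# /dev/sdc needs 16384 MB of journal space
```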

6.3. Setting the replication factor

Complete the following step to set the replication factor.

A replication factor of two is normally supported only for all-SSD deployments. See Red Hat Ceph Storage: Supported configurations.

Procedure

Set the default replication factor to two. This example splits the four nodes into two different roots, so each root contains only two nodes.

parameter_defaults:
  CephPoolDefaultSize: 2

If you upgrade a deployment that uses gnocchi as the backend, you might encounter a deployment timeout. To prevent this timeout, use the following CephPools definition to customize the gnocchi pool:

parameter_defaults:
  CephPools: [{"name": metrics, "pg_num": 128, "pgp_num": 128, "size": 1}]

6.4. Defining the CRUSH hierarchy

Director provides the data for the CRUSH hierarchy, but ceph-ansible reads the CRUSH mapping from the Ansible inventory file. Unless you keep the default root, you must specify the root location for each node.

For example, if node lab-ceph01 (provisioning IP 172.16.0.26) is placed in rack1 inside the fast_root, the Ansible inventory should resemble the following:

172.16.0.26:
  osd_crush_location: {host: lab-ceph01, rack: rack1, root: fast_root}

When you use director to deploy Ceph, you don’t actually write the Ansible inventory; it is generated for you. Therefore, you must use NodeDataLookup to append the data.

NodeDataLookup works by specifying the system product UUID stored on the motherboard of the systems. The Bare Metal service (ironic) also stores this information after the introspection phase.

To create a CRUSH map that supports second-tier storage, complete the following steps:

Procedure

Run the following commands to retrieve the UUIDs of the four nodes:

for ((x=1; x<=4; x++)); \ { echo "Node overcloud-ceph0${x}"; \ openstack baremetal introspection data save overcloud-ceph0${x} | jq .extra.system.product.uuid; } Node overcloud-ceph01 "32C2BC31-F6BB-49AA-971A-377EFDFDB111" Node overcloud-ceph02 "76B4C69C-6915-4D30-AFFD-D16DB74F64ED" Node overcloud-ceph03 "FECF7B20-5984-469F-872C-732E3FEF99BF" Node overcloud-ceph04 "5FFEFA5F-69E4-4A88-B9EA-62811C61C8B3"NoteIn the example, overcloud-ceph0[1-4] are the Ironic nodes names; they will be deployed as

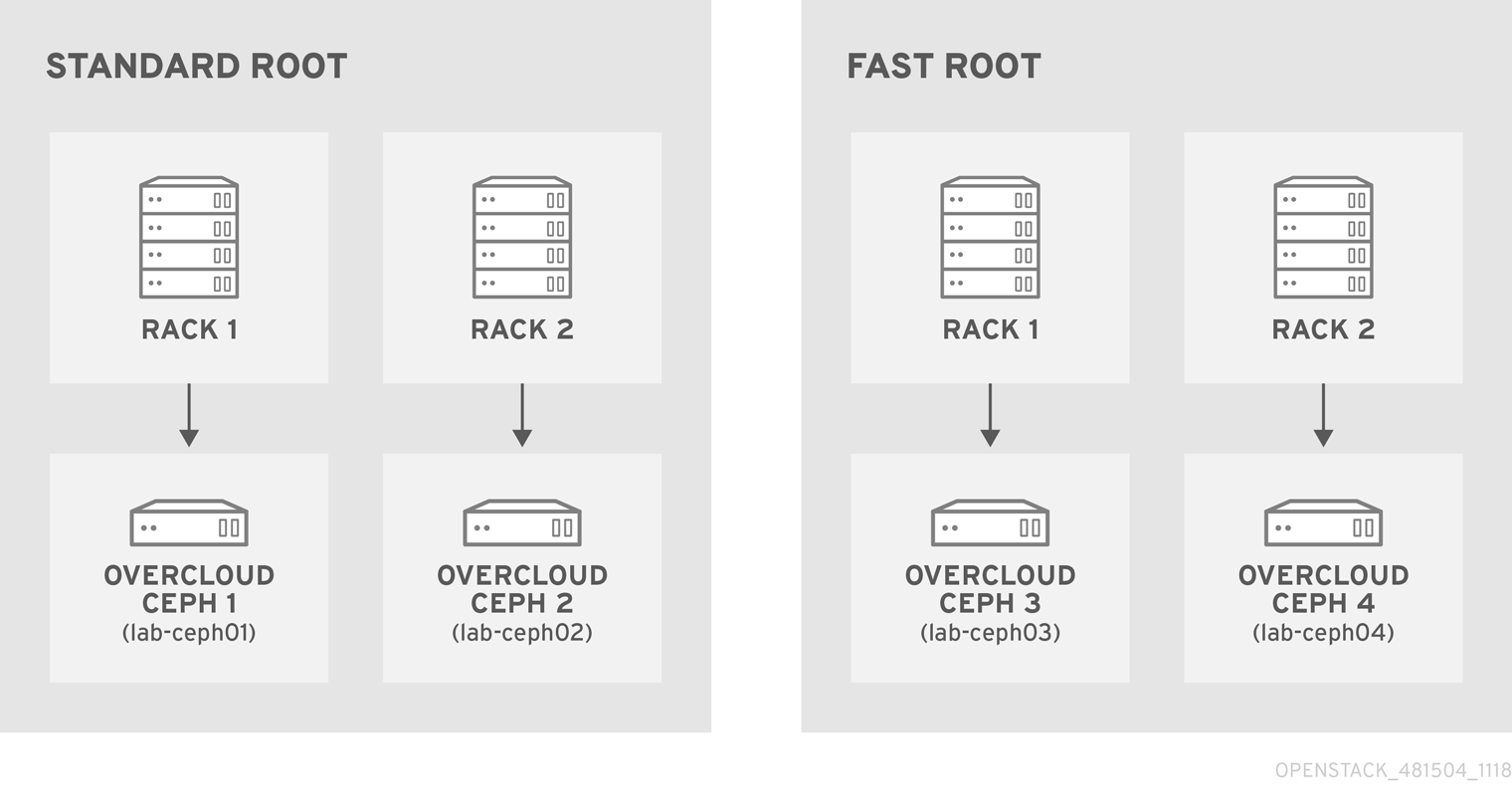

lab-ceph0[1–4](via HostnameMap.yaml).Specify the node placement as follows:

| Root | Rack | Node |
|---|---|---|
| standard_root | rack1_std | overcloud-ceph01 (lab-ceph01) |
| standard_root | rack2_std | overcloud-ceph02 (lab-ceph02) |
| fast_root | rack1_fast | overcloud-ceph03 (lab-ceph03) |
| fast_root | rack2_fast | overcloud-ceph04 (lab-ceph04) |

Note: You cannot have two buckets with the same name. Even if lab-ceph01 and lab-ceph03 are in the same physical rack, you cannot have two buckets called rack1. Therefore, this example names them rack1_std and rack1_fast.

Note: This example creates a custom root called standard_root to illustrate multiple custom roots. However, you could have kept the HDD OSD nodes in the default root.
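Bucket names must be unique across the whole CRUSH map, so a quick check before deployment can catch collisions such as two racks named rack1. The duplicate_buckets function is a hypothetical helper. It treats every root, rack, and host name from the placement table as a bucket name.

```shell
#!/bin/sh
# Sketch: report CRUSH bucket names that appear more than once.
# Bucket names below come from the example placement table.
set -eu

duplicate_buckets() {
  # Prints each name that occurs more than once in the arguments.
  printf '%s\n' "$@" | sort | uniq -d
}

dups=$(duplicate_buckets \
  standard_root fast_root \
  rack1_std rack2_std rack1_fast rack2_fast \
  lab-ceph01 lab-ceph02 lab-ceph03 lab-ceph04)

if [ -n "$dups" ]; then
  echo "Duplicate CRUSH bucket names: $dups" >&2
  exit 1
fi
echo "All CRUSH bucket names are unique"
```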

Use the following NodeDataLookup syntax:

NodeDataLookup: {"SYSTEM_UUID": {"osd_crush_location": {"root": "$MY_ROOT", "rack": "$MY_RACK", "host": "$OVERCLOUD_NODE_HOSTNAME"}}}

Note: You must specify the system UUID and then the CRUSH hierarchy from top to bottom. Also, the host parameter must point to the node's overcloud host name, not the Bare Metal service (ironic) node name.

To match the example configuration, enter the following:

parameter_defaults:
  NodeDataLookup: {"32C2BC31-F6BB-49AA-971A-377EFDFDB111": {"osd_crush_location": {"root": "standard_root", "rack": "rack1_std", "host": "lab-ceph01"}}, "76B4C69C-6915-4D30-AFFD-D16DB74F64ED": {"osd_crush_location": {"root": "standard_root", "rack": "rack2_std", "host": "lab-ceph02"}}, "FECF7B20-5984-469F-872C-732E3FEF99BF": {"osd_crush_location": {"root": "fast_root", "rack": "rack1_fast", "host": "lab-ceph03"}}, "5FFEFA5F-69E4-4A88-B9EA-62811C61C8B3": {"osd_crush_location": {"root": "fast_root", "rack": "rack2_fast", "host": "lab-ceph04"}}}

Enable CRUSH map management at the ceph-ansible level:

parameter_defaults:
  CephAnsibleExtraConfig:
    create_crush_tree: true

Use scheduler hints to ensure that the Bare Metal service node UUIDs map correctly to the host names:

parameter_defaults:
  CephStorageCount: 4
  OvercloudCephStorageFlavor: ceph-storage
  CephStorageSchedulerHints:
    'capabilities:node': 'ceph-%index%'

Tag the Bare Metal service nodes with the corresponding hint:

openstack baremetal node set --property capabilities='profile:ceph-storage,node:ceph-0,boot_option:local' overcloud-ceph01
openstack baremetal node set --property capabilities='profile:ceph-storage,node:ceph-1,boot_option:local' overcloud-ceph02
openstack baremetal node set --property capabilities='profile:ceph-storage,node:ceph-2,boot_option:local' overcloud-ceph03
openstack baremetal node set --property capabilities='profile:ceph-storage,node:ceph-3,boot_option:local' overcloud-ceph04
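Because %index% starts at 0, the Ironic node overcloud-ceph0N receives the hint node:ceph-(N-1). A loop keeps the quoting identical for every node. In this sketch, the run and tag_ceph_nodes helpers and the RUN variable are hypothetical. By default, the script only prints the commands; setting RUN=1 executes them.

```shell
#!/bin/sh
# Sketch: tag overcloud-ceph01..04 with node:ceph-0..3 in one loop.
# Prints the commands unless RUN=1 is set (hypothetical dry-run toggle).
set -eu

run() {
  if [ "${RUN:-0}" = 1 ]; then
    "$@"
  else
    echo "$*"
  fi
}

tag_ceph_nodes() {
  for n in 1 2 3 4; do
    # %index% is zero-based, so overcloud-ceph01 maps to node:ceph-0.
    run openstack baremetal node set \
      --property "capabilities=profile:ceph-storage,node:ceph-$((n - 1)),boot_option:local" \
      "overcloud-ceph0$n"
  done
}

tag_ceph_nodes
```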

Note: For more information about predictive placement, see Assigning Specific Node IDs in the Advanced Overcloud Customization guide.
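Writing the NodeDataLookup value by hand is error-prone. The following sketch builds it from a placement listing with one line per node, in the form SYSTEM_UUID ROOT RACK HOST. The node_data_lookup function and that input format are hypothetical. The sketch assumes that the names contain no spaces or double quotes, because it performs no JSON escaping.

```shell
#!/bin/sh
# Sketch: build a NodeDataLookup JSON value from lines of the form
#   SYSTEM_UUID ROOT RACK HOST
# (hypothetical input format; no JSON escaping is performed).
set -eu

node_data_lookup() {
  out="{" sep=""
  # The "|| [ -n ... ]" clause also handles a last line without a newline.
  while read -r uuid root rack host || [ -n "${uuid:-}" ]; do
    if [ -z "${uuid:-}" ]; then continue; fi   # skip blank lines
    out="$out$sep\"$uuid\": {\"osd_crush_location\": {\"root\": \"$root\", \"rack\": \"$rack\", \"host\": \"$host\"}}"
    sep=", "
  done
  printf '%s}\n' "$out"
}

# Example: print an environment-file fragment for the four example nodes.
placement='32C2BC31-F6BB-49AA-971A-377EFDFDB111 standard_root rack1_std lab-ceph01
76B4C69C-6915-4D30-AFFD-D16DB74F64ED standard_root rack2_std lab-ceph02
FECF7B20-5984-469F-872C-732E3FEF99BF fast_root rack1_fast lab-ceph03
5FFEFA5F-69E4-4A88-B9EA-62811C61C8B3 fast_root rack2_fast lab-ceph04'

echo "parameter_defaults:"
echo "  NodeDataLookup: $(printf '%s\n' "$placement" | node_data_lookup)"
```

The placement listing can be assembled from the UUIDs that the introspection loop prints, together with the root and rack that you chose for each node.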

6.5. Defining CRUSH map rules

Rules define how the data is written on a cluster. After the CRUSH map node placement is complete, define the CRUSH rules.

Procedure

Use the following syntax to define the CRUSH rules:

parameter_defaults:
  CephAnsibleExtraConfig:
    crush_rules:
      - name: $RULE_NAME
        root: $ROOT_NAME
        type: $REPLICA_DOMAIN
        default: true/false

Note: Setting the default parameter to true means that this rule is used when you create a new pool without specifying a rule. There can be only one default rule.

In the following example, rule standard points to the OSD nodes hosted on standard_root, with one replica per rack. Rule fast points to the OSD nodes hosted on fast_root, with one replica per rack:

parameter_defaults:
  CephAnsibleExtraConfig:
    crush_rule_config: true
    crush_rules:
      - name: standard
        root: standard_root
        type: rack
        default: true
      - name: fast
        root: fast_root
        type: rack
        default: false

Note: You must set crush_rule_config to true.
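Only one rule can be the default, so a small pre-deployment check can help as the crush_rules list grows. This sketch reads a hypothetical two-column listing (rule name and default value) that mirrors the example above. The count_default_rules function is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: count CRUSH rules marked as default; more than one is an error.
# Input lines: RULE_NAME DEFAULT (hypothetical listing mirroring crush_rules).
set -eu

count_default_rules() {
  awk '$2 == "true" { n++ } END { print n + 0 }'
}

rules='standard true
fast false'

defaults=$(printf '%s\n' "$rules" | count_default_rules)
if [ "$defaults" -gt 1 ]; then
  echo "Only one CRUSH rule can be the default; found $defaults" >&2
  exit 1
fi
echo "Default CRUSH rules: $defaults"
```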

6.6. Configuring OSP pools

Ceph pools are configured with CRUSH rules that define how to store data. In this example, all built-in OSP pools use standard_root (the standard rule), and a new pool uses fast_root (the fast rule).

Procedure

Use the following syntax to define or change a pool property:

- name: $POOL_NAME
  pg_num: $PG_COUNT
  rule_name: $RULE_NAME
  application: rbd

List all OSP pools, set the appropriate rule for each one (standard, in this case), and create a new pool called tier2 that uses the fast rule. Block Storage (cinder) uses the tier2 pool.

parameter_defaults:
  CephPools:
    - name: tier2
      pg_num: 64
      rule_name: fast
      application: rbd
    - name: volumes
      pg_num: 64
      rule_name: standard
      application: rbd
    - name: vms
      pg_num: 64
      rule_name: standard
      application: rbd
    - name: backups
      pg_num: 64
      rule_name: standard
      application: rbd
    - name: images
      pg_num: 64
      rule_name: standard
      application: rbd
    - name: metrics
      pg_num: 64
      rule_name: standard
      application: openstack_gnocchi
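A pool that names a rule missing from crush_rules is an easy mistake to make. The following sketch uses hypothetical flat listings of the rule names and of the pools with their rule_name values from this chapter. It reports any pool whose rule is not defined. The undefined_rules function is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: report pools whose rule_name is not defined in crush_rules.
# Listings mirror the examples in this chapter (hypothetical flat format).
set -eu

rules="standard fast"

undefined_rules() {
  # stdin: POOL_NAME RULE_NAME per line
  while read -r pool rule || [ -n "${pool:-}" ]; do
    if [ -z "${pool:-}" ]; then continue; fi
    case " $rules " in
      *" $rule "*) ;;   # rule is defined
      *) echo "$pool uses undefined rule '$rule'" ;;
    esac
  done
}

pools='tier2 fast
volumes standard
vms standard
backups standard
images standard
metrics standard'

bad=$(printf '%s\n' "$pools" | undefined_rules)
if [ -n "$bad" ]; then
  echo "$bad" >&2
  exit 1
fi
echo "All pools reference a defined CRUSH rule"
```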

6.7. Configuring Block Storage to use the new pool

Add the Ceph pool to the cinder.conf file to enable Block Storage (cinder) to consume it:

Procedure

Update cinder.conf by setting the CinderRbdExtraPools parameter:

parameter_defaults:
  CinderRbdExtraPools:
    - tier2

6.8. Verifying customized CRUSH map

After the openstack overcloud deploy command creates or updates the overcloud, complete the following step to verify that the customized CRUSH map was correctly applied.

Be careful if you move a host from one root to another.

Procedure

Connect to a Ceph monitor node and run the following command:

# ceph osd tree
ID WEIGHT  TYPE NAME                 UP/DOWN REWEIGHT PRIMARY-AFFINITY
-7 0.39996 root standard_root
-6 0.19998     rack rack1_std
-5 0.19998         host lab-ceph02
 1 0.09999             osd.1            up  1.00000          1.00000
 4 0.09999             osd.4            up  1.00000          1.00000
-9 0.19998     rack rack2_std
-8 0.19998         host lab-ceph03
 0 0.09999             osd.0            up  1.00000          1.00000
 3 0.09999             osd.3            up  1.00000          1.00000
-4 0.19998 root fast_root
-3 0.19998     rack rack1_fast
-2 0.19998         host lab-ceph01
 2 0.09999             osd.2            up  1.00000          1.00000
 5 0.09999             osd.5            up  1.00000          1.00000
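To make the output easier to compare with your placement table, you can reduce it to one line per host. The crush_locations function below is a hypothetical helper. It assumes the column layout shown above, where bucket rows carry the bucket type and name in the third and fourth columns. For scripting, the JSON output of ceph osd tree --format json is a sturdier input.

```shell
#!/bin/sh
# Sketch: turn `ceph osd tree` output into "HOST RACK ROOT" lines.
# Assumes the column layout shown above (ID WEIGHT TYPE NAME ...).
set -eu

crush_locations() {
  awk '
    $3 == "root" { root = $4; rack = "-" }   # new root: forget the previous rack
    $3 == "rack" { rack = $4 }
    $3 == "host" { print $4, rack, root }
  '
}

# On a Ceph monitor node:
#   ceph osd tree | crush_locations
```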

6.9. Assigning custom attributes to different Ceph pools

By default, Ceph pools that director creates have the same placement group counts (pg_num and pgp_num) and sizes. You can use either method in Chapter 5, Customizing the Ceph Storage cluster, to override these settings globally, which applies the same values to all pools.

You can also apply different attributes to each Ceph pool. To do so, use the CephPools parameter, as in:

parameter_defaults:
  CephPools:
    - name: POOL
      pg_num: 128
      application: rbd

Replace POOL with the name of the pool that you want to configure, and set pg_num to the number of placement groups for that pool. This overrides the default pg_num for the specified pool.

If you use the CephPools parameter, you must also specify the application type. The application type for Compute, Block Storage, and Image Storage should be rbd, as shown in the examples, but depending on what the pool will be used for, you may need to specify a different application type. For example, the application type for the gnocchi metrics pool is openstack_gnocchi. See Enable Application in the Storage Strategies Guide for more information.

If you do not use the CephPools parameter, director sets the appropriate application type automatically, but only for the default pool list.

You can also create new custom pools through the CephPools parameter. For example, to add a pool called custompool:

parameter_defaults:
  CephPools:
    - name: custompool
      pg_num: 128
      application: rbd

This creates a new custom pool in addition to the default pools.

For typical pool configurations of common Ceph use cases, see the Ceph Placement Groups (PGs) per Pool Calculator. This calculator is normally used to generate the commands for manually configuring your Ceph pools. In this deployment, the director will configure the pools based on your specifications.

Red Hat Ceph Storage 3 (Luminous) introduces a hard limit, mon_max_pg_per_osd, on the maximum number of PGs that an OSD can have; the default is 200. Do not raise this value above 200. If the Ceph PG count exceeds the maximum, adjust pg_num per pool to address the problem rather than changing mon_max_pg_per_osd.
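A rough way to check a pool plan against this limit is to sum pg_num × size for the pools that map to each CRUSH root, and then divide by the number of OSDs in that root. The sketch below applies that arithmetic to the pools from the tier2 example. It assumes a size of 2 (CephPoolDefaultSize) and four OSDs per root (two nodes per root, each with OSDs on /dev/sda and /dev/sdb). The pgs_per_osd_by_root function and its input format are hypothetical, and the result is an average; the actual per-OSD distribution varies.

```shell
#!/bin/sh
# Sketch: estimate average PGs per OSD for each CRUSH root as
#   sum(pg_num * size) / OSDs_in_root
# Input lines: POOL PG_NUM SIZE ROOT. $1 lists ROOT=OSD_COUNT pairs, $2 is the limit.
set -eu

pgs_per_osd_by_root() {
  awk -v counts="$1" -v limit="$2" '
    BEGIN {
      n = split(counts, pairs, " ")
      for (i = 1; i <= n; i++) { split(pairs[i], kv, "="); osds[kv[1]] = kv[2] }
    }
    { total[$4] += $2 * $3 }   # PG replicas placed under each root
    END {
      for (r in total) {
        if (!(r in osds)) { print r, "unknown-osd-count"; continue }
        avg = int(total[r] / osds[r])
        print r, avg, (avg > limit ? "over-limit" : "ok")
      }
    }
  ' | sort
}

pools='tier2 64 2 fast_root
volumes 64 2 standard_root
vms 64 2 standard_root
backups 64 2 standard_root
images 64 2 standard_root
metrics 64 2 standard_root'

printf '%s\n' "$pools" | pgs_per_osd_by_root "standard_root=4 fast_root=4" 200
# fast_root 32 ok
# standard_root 160 ok
```

Averaged over all eight OSDs, the same plan gives 768 / 8 = 96. That figure hides the fact that the five standard pools map only to the four standard_root OSDs, which each carry about 160 PGs. The per-root figure is the one to compare with the limit.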

Chapter 7. Creating the overcloud

When your custom environment files are ready, you can specify which flavors and nodes each role uses and then execute the deployment.

7.1. Assigning nodes and flavors to roles

Planning an overcloud deployment involves specifying how many nodes and which flavors to assign to each role. Like all Heat template parameters, these role specifications are declared in the parameter_defaults section of your environment file (in this case, ~/templates/storage-config.yaml).

For this purpose, use the following parameters:

Table 7.1. Roles and Flavors for Overcloud Nodes

| Heat Template Parameter | Description |

|---|---|