
Director Installation and Usage

An end-to-end scenario on using Red Hat OpenStack Platform director to create an OpenStack cloud

OpenStack Documentation Team

rhos-docs@redhat.com

Chapter 1. Introduction

The Red Hat OpenStack Platform director is a toolset for installing and managing a complete OpenStack environment. It is based primarily on the OpenStack project TripleO, which is an abbreviation for "OpenStack-On-OpenStack". This project takes advantage of OpenStack components to install a fully operational OpenStack environment. This includes new OpenStack components that provision and control bare metal systems to use as OpenStack nodes. This provides a simple method for installing a complete Red Hat OpenStack Platform environment that is both lean and robust.

The Red Hat OpenStack Platform director uses two main concepts: an undercloud and an overcloud. The undercloud installs and configures the overcloud. The next few sections outline the concept of each.

1.1. Undercloud

The undercloud is the main director node. It is a single-system OpenStack installation that includes components for provisioning and managing the OpenStack nodes that form your OpenStack environment (the overcloud). The components that form the undercloud provide multiple functions:

- Environment Planning

- The undercloud provides planning functions for users to create and assign certain node roles. The undercloud includes a default set of node roles such as Compute, Controller, and various storage roles, but also provides the ability to use custom roles. In addition, you can select which OpenStack Platform services to include on each node role, which provides a method to model new node types or isolate certain components on their own host.

- Bare Metal System Control

- The undercloud uses the out-of-band management interface, usually Intelligent Platform Management Interface (IPMI), of each node for power management control and a PXE-based service to discover hardware attributes and install OpenStack to each node. This provides a method to provision bare metal systems as OpenStack nodes. See Appendix B, Power Management Drivers for a full list of power management drivers.

- Orchestration

- The undercloud provides a set of YAML templates that acts as a set of plans for your environment. The undercloud imports these plans and follows their instructions to create the resulting OpenStack environment. The plans also include hooks that allow you to incorporate your own customizations at certain points in the environment creation process.

- Command Line Tools and a Web UI

- The Red Hat OpenStack Platform director performs these undercloud functions through a terminal-based command line interface or a web-based user interface.

- Undercloud Components

The undercloud uses OpenStack components as its base tool set. This includes the following components:

- OpenStack Identity (keystone) - Provides authentication and authorization for the director’s components.

- OpenStack Bare Metal (ironic) and OpenStack Compute (nova) - Manages bare metal nodes.

- OpenStack Networking (neutron) and Open vSwitch - Controls networking for bare metal nodes.

- OpenStack Image Service (glance) - Stores images that are written to bare metal machines.

- OpenStack Orchestration (heat) and Puppet - Provides orchestration of nodes and configuration of nodes after the director writes the overcloud image to disk.

- OpenStack Telemetry (ceilometer) - Performs monitoring and data collection. This also includes:

- OpenStack Telemetry Metrics (gnocchi) - Provides a time series database for metrics.

- OpenStack Telemetry Alarming (aodh) - Provides an alarming component for monitoring.

- OpenStack Workflow Service (mistral) - Provides a set of workflows for certain director-specific actions, such as importing and deploying plans.

- OpenStack Messaging Service (zaqar) - Provides a messaging service for the OpenStack Workflow Service.

- OpenStack Object Storage (swift) - Provides object storage for various OpenStack Platform components, including:

- Image storage for OpenStack Image Service

- Introspection data for OpenStack Bare Metal

- Deployment plans for OpenStack Workflow Service

1.2. Overcloud

The overcloud is the resulting Red Hat OpenStack Platform environment created using the undercloud. This includes different node roles, which you define based on the OpenStack Platform environment you aim to create. The undercloud includes a default set of overcloud node roles, which include:

- Controller

Nodes that provide administration, networking, and high availability for the OpenStack environment. An ideal OpenStack environment uses three of these nodes together in a high availability cluster.

A default Controller node contains the following components:

- OpenStack Dashboard (horizon)

- OpenStack Identity (keystone)

- OpenStack Compute (nova) API

- OpenStack Networking (neutron)

- OpenStack Image Service (glance)

- OpenStack Block Storage (cinder)

- OpenStack Object Storage (swift)

- OpenStack Orchestration (heat)

- OpenStack Telemetry (ceilometer)

- OpenStack Telemetry Metrics (gnocchi)

- OpenStack Telemetry Alarming (aodh)

- OpenStack Data Processing (sahara)

- OpenStack Shared File Systems (manila)

- OpenStack Bare Metal (ironic)

- MariaDB

- Open vSwitch

- Pacemaker and Galera for high availability services.

- Compute

These nodes provide computing resources for the OpenStack environment. You can add more Compute nodes to scale out your environment over time. A default Compute node contains the following components:

- OpenStack Compute (nova)

- KVM/QEMU

- OpenStack Telemetry (ceilometer) agent

- Open vSwitch

- Storage

Nodes that provide storage for the OpenStack environment. This includes nodes for:

- Ceph Storage nodes - Used to form storage clusters. Each node contains a Ceph Object Storage Daemon (OSD). In addition, the director installs Ceph Monitor onto the Controller nodes in situations where it deploys Ceph Storage nodes.

- Block storage (cinder) - Used as external block storage for HA Controller nodes. This node contains the following components:

- OpenStack Block Storage (cinder) volume

- OpenStack Telemetry (ceilometer) agent

- Open vSwitch.

- Object storage (swift) - These nodes provide an external storage layer for OpenStack Swift. The Controller nodes access these nodes through the Swift proxy. This node contains the following components:

- OpenStack Object Storage (swift) storage

- OpenStack Telemetry (ceilometer) agent

- Open vSwitch.

1.3. High Availability

The Red Hat OpenStack Platform director uses a Controller node cluster to provide high availability services to your OpenStack Platform environment. The director installs a duplicate set of components on each Controller node and manages them together as a single service. This type of cluster configuration provides a fallback in the event of operational failures on a single Controller node; this provides OpenStack users with a certain degree of continuous operation.

The OpenStack Platform director uses some key pieces of software to manage components on the Controller node:

- Pacemaker - Pacemaker is a cluster resource manager. Pacemaker manages and monitors the availability of OpenStack components across all nodes in the cluster.

- HAProxy - Provides load balancing and proxy services to the cluster.

- Galera - Replicates the Red Hat OpenStack Platform database across the cluster.

- Memcached - Provides database caching.

Red Hat OpenStack Platform director automatically configures the bulk of high availability on Controller nodes. However, the nodes require some manual configuration to enable fencing and power management controls. This guide includes these instructions.

1.4. Ceph Storage

It is common for large organizations using OpenStack to serve thousands of clients or more. Each OpenStack client is likely to have their own unique needs when consuming block storage resources. Deploying glance (images), cinder (volumes) and/or nova (Compute) on a single node can become impossible to manage in large deployments with thousands of clients. Scaling OpenStack externally resolves this challenge.

However, there is also a practical requirement to virtualize the storage layer with a solution like Red Hat Ceph Storage so that you can scale the Red Hat OpenStack Platform storage layer from tens of terabytes to petabytes (or even exabytes) of storage. Red Hat Ceph Storage provides this storage virtualization layer with high availability and high performance while running on commodity hardware. While virtualization might seem like it comes with a performance penalty, Ceph stripes block device images as objects across the cluster; this means large Ceph Block Device images have better performance than a standalone disk. Ceph Block devices also support caching, copy-on-write cloning, and copy-on-read cloning for enhanced performance.

See Red Hat Ceph Storage for additional information about Red Hat Ceph Storage.

Chapter 2. Requirements

This chapter outlines the main requirements for setting up an environment to provision Red Hat OpenStack Platform using the director. This includes the requirements for setting up the director, accessing it, and the hardware requirements for hosts that the director provisions for OpenStack services.

Prior to deploying Red Hat OpenStack Platform, it is important to consider the characteristics of the available deployment methods. For more information, refer to Installing and Managing Red Hat OpenStack Platform.

2.1. Environment Requirements

Minimum Requirements:

- 1 host machine for the Red Hat OpenStack Platform director

- 1 host machine for a Red Hat OpenStack Platform Compute node

- 1 host machine for a Red Hat OpenStack Platform Controller node

Recommended Requirements:

- 1 host machine for the Red Hat OpenStack Platform director

- 3 host machines for Red Hat OpenStack Platform Compute nodes

- 3 host machines for Red Hat OpenStack Platform Controller nodes in a cluster

- 3 host machines for Red Hat Ceph Storage nodes in a cluster

Note the following:

- It is recommended to use bare metal systems for all nodes. At minimum, the Compute nodes require bare metal systems.

- All overcloud bare metal systems require an Intelligent Platform Management Interface (IPMI). This is because the director controls the power management.

- Set each node’s internal BIOS clock to UTC. This prevents issues with future-dated file timestamps when hwclock synchronizes the BIOS clock before applying the timezone offset.

Do not upgrade to the Red Hat Enterprise Linux 7.3 kernel without also upgrading from Open vSwitch (OVS) 2.4.0 to OVS 2.5.0. If only the kernel is upgraded, then OVS will stop functioning.

2.2. Undercloud Requirements

The undercloud system hosting the director provides provisioning and management for all nodes in the overcloud.

- An 8-core 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions.

- A minimum of 16 GB of RAM.

- A minimum of 40 GB of available disk space on the root disk. Make sure to leave at least 10 GB free space before attempting an overcloud deployment or update. This free space accommodates image conversion and caching during the node provisioning process.

- A minimum of 2 x 1 Gbps Network Interface Cards. However, it is recommended to use a 10 Gbps interface for Provisioning network traffic, especially if provisioning a large number of nodes in your overcloud environment.

- Red Hat Enterprise Linux 7.7 is installed as the host operating system.

- SELinux is enabled in Enforcing mode on the host.
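The minimums above lend themselves to a quick pre-installation check. The following is a minimal sketch in Python, not part of the director tooling. The `HostFacts` record, its field names, and both function names are hypothetical and exist only for illustration. How you gather the facts (for example, from your inventory system) is left open.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class HostFacts:
    """Hypothetical record of facts gathered about a candidate undercloud host."""
    cpu_cores: int
    ram_gb: float
    root_available_gb: float
    gigabit_nics: int
    selinux_enforcing: bool


def undercloud_shortfalls(host: HostFacts) -> List[str]:
    """Return the listed minimums that the host does not meet (empty if none)."""
    problems = []
    if host.cpu_cores < 8:
        problems.append("processor: 8 cores required")
    if host.ram_gb < 16:
        problems.append("memory: 16 GB required")
    if host.root_available_gb < 40:
        problems.append("disk: 40 GB available on the root disk required")
    if host.gigabit_nics < 2:
        problems.append("network: 2 x 1 Gbps NICs required")
    if not host.selinux_enforcing:
        problems.append("SELinux: Enforcing mode required")
    return problems


def has_deployment_headroom(root_free_gb: float) -> bool:
    """Check the 10 GB free-space guideline before an overcloud deployment or update."""
    return root_free_gb >= 10


# Example: a 4-core host fails only the processor check.
candidate = HostFacts(cpu_cores=4, ram_gb=16, root_available_gb=100,
                      gigabit_nics=2, selinux_enforcing=True)
print(undercloud_shortfalls(candidate))
```

The check covers only the numeric and mode requirements. It does not verify the operating system version or the 64-bit CPU extensions.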

2.2.1. Virtualization Support

Red Hat only supports a virtualized undercloud on the following platforms:

| Platform | Notes |
|---|---|
| Kernel-based Virtual Machine (KVM) | Hosted by Red Hat Enterprise Linux 7, as listed on certified hypervisors. |
| Red Hat Virtualization | Hosted by Red Hat Virtualization 4.x, as listed on certified hypervisors. |
| Microsoft Hyper-V | Hosted by versions of Hyper-V as listed on the Red Hat Customer Portal Certification Catalogue. |
| VMware ESX and ESXi | Hosted by versions of ESX and ESXi as listed on the Red Hat Customer Portal Certification Catalogue. |

Red Hat OpenStack Platform director 10 requires the latest version of Red Hat Enterprise Linux as the host operating system. This means your virtualization platform must also support the underlying Red Hat Enterprise Linux version.

Virtual Machine Requirements

Resource requirements for a virtual undercloud are similar to those of a bare metal undercloud. You should consider the various tuning options when provisioning such as network model, guest CPU capabilities, storage backend, storage format, and caching mode.

Network Considerations

Note the following network considerations for your virtualized undercloud:

- Power Management

- The undercloud VM requires access to the overcloud nodes' power management devices. This is the IP address set for the pm_addr parameter when registering nodes.

- Provisioning network

- The NIC used for the provisioning (ctlplane) network requires the ability to broadcast and serve DHCP requests to the NICs of the overcloud’s bare metal nodes. As a recommendation, create a bridge that connects the VM’s NIC to the same network as the bare metal NICs.

A common problem occurs when the hypervisor technology blocks the undercloud from transmitting traffic from an unknown address.

- If using Red Hat Enterprise Virtualization, disable anti-mac-spoofing to prevent this.

- If using VMware ESX or ESXi, allow forged transmits to prevent this.

Example Architecture

This is just an example of a basic undercloud virtualization architecture using a KVM server. It is intended as a foundation you can build on depending on your network and resource requirements.

The KVM host uses two Linux bridges:

- br-ex (eth0)

- Provides outside access to the undercloud

- DHCP server on outside network assigns network configuration to undercloud using the virtual NIC (eth0)

- Provides access for the undercloud to access the power management interfaces for the bare metal servers

- br-ctlplane (eth1)

- Connects to the same network as the bare metal overcloud nodes

- Undercloud fulfills DHCP and PXE boot requests through virtual NIC (eth1)

- Bare metal servers for the overcloud boot through PXE over this network

The KVM host requires the following packages:

$ yum install libvirt-client libvirt-daemon qemu-kvm libvirt-daemon-driver-qemu libvirt-daemon-kvm virt-install bridge-utils rsync

The following command creates the undercloud virtual machine on the KVM host and creates two virtual NICs that connect to the respective bridges:

$ virt-install --name undercloud --memory=16384 --vcpus=4 --location /var/lib/libvirt/images/rhel-server-7.3-x86_64-dvd.iso --disk size=100 --network bridge=br-ex --network bridge=br-ctlplane --graphics=vnc --hvm --os-variant=rhel7

This starts a libvirt domain. Connect to it with virt-manager and walk through the install process. Alternatively, you can perform an unattended installation using the following options to include a kickstart file:

--initrd-inject=/root/ks.cfg --extra-args "ks=file:/ks.cfg"

Once installation completes, SSH into the instance as the root user and follow the instructions in Chapter 4, Installing the Undercloud.

Backups

To back up a virtualized undercloud, there are multiple solutions:

- Option 1: Follow the instructions in the Back Up and Restore the Director Undercloud Guide.

- Option 2: Shut down the undercloud and take a copy of the undercloud virtual machine storage backing.

- Option 3: Take a snapshot of the undercloud VM if your hypervisor supports live or atomic snapshots.

If using a KVM server, use the following procedure to take a snapshot:

- Make sure qemu-guest-agent is running on the undercloud guest VM.

- Create a live snapshot of the running VM:

$ virsh snapshot-create-as --domain undercloud --disk-only --atomic --quiesce

- Take a copy of the (now read-only) QCOW backing file:

$ rsync --sparse -avh --progress /var/lib/libvirt/images/undercloud.qcow2 1.qcow2

- Merge the QCOW overlay file into the backing file and switch the undercloud VM back to using the original file:

$ virsh blockcommit undercloud vda --active --verbose --pivot

2.3. Networking Requirements

The undercloud host requires at least two networks:

- Provisioning network - Provides DHCP and PXE boot functions to help discover bare metal systems for use in the overcloud. Typically, this network must use a native VLAN on a trunked interface so that the director serves PXE boot and DHCP requests. Some server hardware BIOSes support PXE boot from a VLAN, but the BIOS must also support translating that VLAN into a native VLAN after booting, otherwise the undercloud will not be reachable. Currently, only a small subset of server hardware fully supports this feature. This is also the network you use to control power management through Intelligent Platform Management Interface (IPMI) on all overcloud nodes.

- External Network - A separate network for external access to the overcloud and undercloud. The interface connecting to this network requires a routable IP address, either defined statically, or dynamically through an external DHCP service.

This represents the minimum number of networks required. However, the director can isolate other Red Hat OpenStack Platform network traffic into other networks. Red Hat OpenStack Platform supports both physical interfaces and tagged VLANs for network isolation.

Note the following:

- Typical minimal overcloud network configuration can include:

- Single NIC configuration - One NIC for the Provisioning network on the native VLAN and tagged VLANs that use subnets for the different overcloud network types.

- Dual NIC configuration - One NIC for the Provisioning network and the other NIC for the External network.

- Dual NIC configuration - One NIC for the Provisioning network on the native VLAN and the other NIC for tagged VLANs that use subnets for the different overcloud network types.

- Multiple NIC configuration - Each NIC uses a subnet for a different overcloud network type.

- Additional physical NICs can be used for isolating individual networks, creating bonded interfaces, or for delegating tagged VLAN traffic.

- If using VLANs to isolate your network traffic types, use a switch that supports 802.1Q standards to provide tagged VLANs.

- During the overcloud creation, you will refer to NICs using a single name across all overcloud machines. Ideally, you should use the same NIC on each overcloud node for each respective network to avoid confusion. For example, use the primary NIC for the Provisioning network and the secondary NIC for the OpenStack services.

- Make sure the Provisioning network NIC is not the same NIC used for remote connectivity on the director machine. The director installation creates a bridge using the Provisioning NIC, which drops any remote connections. Use the External NIC for remote connections to the director system.

The Provisioning network requires an IP range that fits your environment size. Use the following guidelines to determine the total number of IP addresses to include in this range:

- Include at least one IP address per node connected to the Provisioning network.

- If planning a high availability configuration, include an extra IP address for the virtual IP of the cluster.

- Include additional IP addresses within the range for scaling the environment.

Note: Avoid duplicate IP addresses on the Provisioning network. For more information, see Section 3.2, “Planning Networks”.

Note: For more information on planning your IP address usage, for example, for storage, provider, and tenant networks, see the Networking Guide.
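The guidelines for sizing the Provisioning network IP range amount to simple arithmetic. The following Python sketch shows one way to apply them. The function names and the example addresses (taken from the 192.0.2.0/24 documentation range) are illustrative assumptions, not values from the director.

```python
import ipaddress


def provisioning_ip_count(nodes: int, high_availability: bool = False,
                          scaling_reserve: int = 0) -> int:
    """Count the addresses needed: one per node, one virtual IP for an
    HA cluster, plus any addresses reserved for scaling."""
    if nodes < 0 or scaling_reserve < 0:
        raise ValueError("counts must not be negative")
    return nodes + (1 if high_availability else 0) + scaling_reserve


def range_size(first: str, last: str) -> int:
    """Return how many addresses an inclusive first-to-last range holds."""
    start = int(ipaddress.ip_address(first))
    end = int(ipaddress.ip_address(last))
    if end < start:
        raise ValueError("range end precedes range start")
    return end - start + 1


# Example: 9 nodes, HA enabled, room for 5 more nodes later.
needed = provisioning_ip_count(9, high_availability=True, scaling_reserve=5)
available = range_size("192.0.2.5", "192.0.2.24")
print(needed, available, available >= needed)
```

Treat the result as a lower bound. Whether other systems on the Provisioning network also consume addresses from the same range depends on your environment.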

- Set all overcloud systems to PXE boot off the Provisioning NIC, and disable PXE boot on the External NIC (and any other NICs on the system). Also ensure that the Provisioning NIC has PXE boot at the top of the boot order, ahead of hard disks and CD/DVD drives.

- All overcloud bare metal systems require a supported power management interface, such as an Intelligent Platform Management Interface (IPMI). This allows the director to control the power management of each node.

- Make a note of the following details for each overcloud system: the MAC address of the Provisioning NIC, the IP address of the IPMI NIC, IPMI username, and IPMI password. This information will be useful later when setting up the overcloud nodes.

- Fencing is mandatory for Red Hat support. The Controller nodes must be able to reach the out-of-band (OOB) IPMI interfaces over the network.

- If an instance needs to be accessible from the external internet, you can allocate a floating IP address from a public network and associate it with an instance. The instance still retains its private IP but network traffic uses NAT to traverse through to the floating IP address. Note that a floating IP address can only be assigned to a single instance rather than multiple private IP addresses. However, the floating IP address is reserved only for use by a single tenant, allowing the tenant to associate or disassociate with a particular instance as required. This configuration exposes your infrastructure to the external internet. As a result, you might need to check that you are following suitable security practices.

- To mitigate the risk of network loops in Open vSwitch, only a single interface or a single bond may be a member of a given bridge. If you require multiple bonds or interfaces, you can configure multiple bridges.

- It is recommended to use DNS hostname resolution so that your overcloud nodes can connect to external services, such as the Red Hat Content Delivery Network and network time servers.
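The per-node details that the list above asks you to note (the MAC address of the Provisioning NIC, the IPMI address, and the IPMI credentials) are easier to reuse later if you record them in a structured form. The following Python sketch shows one possible record. The class, the function, and every key name other than pm_addr are assumptions made for illustration, and the addresses and credentials are placeholders. Check the node registration instructions for the exact format that the director expects.

```python
import json
from dataclasses import dataclass


@dataclass
class NodeDetails:
    """Hypothetical record of the details to note for each overcloud system."""
    provisioning_mac: str
    ipmi_address: str
    ipmi_username: str
    ipmi_password: str


def to_registration_entry(node: NodeDetails) -> dict:
    """Convert a record to a dictionary. Key names other than pm_addr
    are illustrative assumptions."""
    return {
        "mac": [node.provisioning_mac],
        "pm_addr": node.ipmi_address,
        "pm_user": node.ipmi_username,
        "pm_password": node.ipmi_password,
    }


# Placeholder values only: documentation address ranges and dummy credentials.
node = NodeDetails("00:00:5e:00:53:01", "192.0.2.205", "admin", "changeme")
print(json.dumps({"nodes": [to_registration_entry(node)]}, indent=2))
```

Because the record contains IPMI credentials, store any file produced this way with restricted permissions.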

Your OpenStack Platform implementation is only as secure as its environment. Follow good security principles in your networking environment to ensure that network access is properly controlled. For example:

- Use network segmentation to mitigate network movement and isolate sensitive data; a flat network is much less secure.

- Restrict services access and ports to a minimum.

- Ensure proper firewall rules and password usage.

- Ensure that SELinux is enabled.

For details on securing your system, see:

2.4. Overcloud Requirements

The following sections detail the requirements for individual systems and nodes in the overcloud installation.

Booting an overcloud node from the SAN (FC-AL, FCoE, iSCSI) is not yet supported.

2.4.1. Compute Node Requirements

Compute nodes are responsible for running virtual machine instances after they are launched. Compute nodes must support hardware virtualization. Compute nodes must also have enough memory and disk space to support the requirements of the virtual machine instances they host.

- Processor

- 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions, and the AMD-V or Intel VT hardware virtualization extensions enabled. It is recommended this processor has a minimum of 4 cores.

- Memory

- A minimum of 6 GB of RAM. Add additional RAM to this requirement based on the amount of memory that you intend to make available to virtual machine instances.

- Disk Space

- A minimum of 40 GB of available disk space.

- Network Interface Cards

- A minimum of one 1 Gbps Network Interface Card, although it is recommended to use at least two NICs in a production environment. Use additional network interface cards for bonded interfaces or to delegate tagged VLAN traffic.

- Power Management

- Each Compute node requires a supported power management interface, such as Intelligent Platform Management Interface (IPMI) functionality, on the server’s motherboard.

2.4.2. Controller Node Requirements

Controller nodes are responsible for hosting the core services in a Red Hat OpenStack Platform environment, such as the Horizon dashboard, the back-end database server, Keystone authentication, and High Availability services.

- Processor

- 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions.

- Memory

Minimum amount of memory is 32 GB. However, the amount of recommended memory depends on the number of vCPUs (which is based on CPU cores multiplied by hyper-threading value). Use the following calculations as guidance:

Controller RAM minimum calculation:

- Use 1.5 GB of memory per vCPU. For example, a machine with 48 vCPUs should have 72 GB of RAM.

Controller RAM recommended calculation:

- Use 3 GB of memory per vCPU. For example, a machine with 48 vCPUs should have 144 GB of RAM.

For more information on measuring memory requirements, see "Red Hat OpenStack Platform Hardware Requirements for Highly Available Controllers" on the Red Hat Customer Portal.

- Disk Storage and Layout

By default, the Telemetry (gnocchi) and Object Storage (swift) services are both installed on the Controller, with both configured to use the root disk. These defaults are suitable for deploying small overclouds built on commodity hardware; such environments are typical of proof-of-concept and test environments. These defaults also allow the deployment of overclouds with minimal planning but offer little in terms of workload capacity and performance.

In an enterprise environment, however, this could cause a significant bottleneck, as Telemetry accesses storage constantly. This results in heavy disk I/O usage, which severely impacts the performance of all other Controller services. In this type of environment, you need to plan your overcloud and configure it accordingly.

Red Hat provides several configuration recommendations for both Telemetry and Object Storage. See Deployment Recommendations for Specific Red Hat OpenStack Platform Services for details.

- Disk Space

- A minimum of 40 GB of available disk space, if the Object Storage service (swift) is not running on the controller nodes.

- Network Interface Cards

- A minimum of 2 x 1 Gbps Network Interface Cards. Use additional network interface cards for bonded interfaces or to delegate tagged VLAN traffic.

- Power Management

- Each Controller node requires a supported power management interface, such as Intelligent Platform Management Interface (IPMI) functionality, on the server’s motherboard.
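The Controller memory guidance above (1.5 GB per vCPU as a minimum and 3 GB per vCPU as a recommendation, where the vCPU count is CPU cores multiplied by the hyper-threading value) can be sketched in Python as follows. The function name is hypothetical, and treating the stated 32 GB minimum as a floor under both results is an assumption of this sketch rather than a rule stated in this guide.

```python
def controller_ram_gb(cpu_cores: int, threads_per_core: int = 1) -> dict:
    """Estimate Controller RAM from the per-vCPU guidance.

    vCPUs = CPU cores x hyper-threading value.
    The 32 GB floor is an assumption of this sketch.
    """
    if cpu_cores < 1 or threads_per_core < 1:
        raise ValueError("core and thread counts must be positive")
    vcpus = cpu_cores * threads_per_core
    return {
        "vcpus": vcpus,
        "minimum_gb": max(32.0, 1.5 * vcpus),
        "recommended_gb": max(32.0, 3.0 * vcpus),
    }


# Example: 24 cores with two threads per core gives the 48 vCPUs used in the text.
print(controller_ram_gb(24, threads_per_core=2))
```

For the 48 vCPU example, the sketch reproduces the 72 GB minimum and 144 GB recommended values given above.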

2.4.3. Ceph Storage Node Requirements

Ceph Storage nodes are responsible for providing object storage in a Red Hat OpenStack Platform environment.

- Placement Groups

- Ceph uses Placement Groups to facilitate dynamic and efficient object tracking at scale. In the case of OSD failure or cluster re-balancing, Ceph can move or replicate a placement group and its contents, which means a Ceph cluster can re-balance and recover efficiently. The default Placement Group count that Director creates is not always optimal so it is important to calculate the correct Placement Group count according to your requirements. You can use the Placement Group calculator to calculate the correct count: Ceph Placement Groups (PGs) per Pool Calculator

- Processor

- 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions.

- Memory

- Memory requirements depend on the amount of storage space. Ideally, use at minimum 1 GB of memory per 1 TB of hard disk space.

- Disk Space

- Storage requirements depend on the amount of memory. Ideally, use at minimum 1 GB of memory per 1 TB of hard disk space.

- Disk Layout

The recommended Red Hat Ceph Storage node configuration requires at least three disks in a layout similar to the following:

- /dev/sda - The root disk. The director copies the main overcloud image to the disk.

- /dev/sdb - The journal disk. This disk divides into partitions for Ceph OSD journals. For example, /dev/sdb1, /dev/sdb2, /dev/sdb3, and onward. The journal disk is usually a solid state drive (SSD) to aid with system performance.

- /dev/sdc and onward - The OSD disks. Use as many disks as necessary for your storage requirements.

- Network Interface Cards

- A minimum of one 1 Gbps Network Interface Card, although it is recommended to use at least two NICs in a production environment. Use additional network interface cards for bonded interfaces or to delegate tagged VLAN traffic. It is recommended to use a 10 Gbps interface for storage nodes, especially if creating an OpenStack Platform environment that serves a high volume of traffic.

- Power Management

- Each Ceph Storage node requires a supported power management interface, such as Intelligent Platform Management Interface (IPMI) functionality, on the server’s motherboard.

See the Red Hat Ceph Storage for the Overcloud guide for more information about installing an overcloud with a Ceph Storage cluster.
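As an illustration of the Ceph Storage disk layout and memory guidance described in this section, the following Python sketch pairs each OSD disk (/dev/sdc and onward) with a journal partition on /dev/sdb and estimates memory at 1 GB per 1 TB of disk. The function name is hypothetical. The one-to-one pairing of journal partitions to OSD disks and the choice to count only OSD disks toward the memory estimate are assumptions of this sketch.

```python
import string
from typing import List


def ceph_node_plan(osd_sizes_tb: List[float]) -> dict:
    """Pair each OSD disk with a journal partition and estimate memory.

    Assumes one journal partition on /dev/sdb per OSD disk, and that
    only OSD disks count toward the 1 GB per 1 TB memory guideline.
    """
    letters = string.ascii_lowercase[2:]  # 'c', 'd', ... for /dev/sdc onward
    if len(osd_sizes_tb) > len(letters):
        raise ValueError("too many OSD disks for single-letter device names")
    osds = [
        {
            "osd_disk": "/dev/sd" + letters[index],
            "journal": "/dev/sdb" + str(index + 1),
            "size_tb": size,
        }
        for index, size in enumerate(osd_sizes_tb)
    ]
    return {"osds": osds, "minimum_memory_gb": sum(osd_sizes_tb) * 1.0}


# Example: three 4 TB OSD disks.
plan = ceph_node_plan([4, 4, 4])
for osd in plan["osds"]:
    print(osd["osd_disk"], "->", osd["journal"])
print(plan["minimum_memory_gb"], "GB")
```

Actual device names vary by hardware, so verify them on each node rather than relying on this ordering.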

2.4.4. Object Storage Node Requirements

Object Storage nodes provide an object storage layer for the overcloud. The Object Storage proxy is installed on Controller nodes. The storage layer requires bare metal nodes with multiple disks per node.

- Processor

- 64-bit x86 processor with support for the Intel 64 or AMD64 CPU extensions.

- Memory

- Memory requirements depend on the amount of storage space. Ideally, use at minimum 1 GB of memory per 1 TB of hard disk space. For optimal performance, it is recommended to use 2 GB per 1 TB of hard disk space, especially for small file (less than 100GB) workloads.

- Disk Space

Storage requirements depend on the capacity needed for the workload. It is recommended to use SSD drives to store the account and container data. The capacity ratio of account and container data to objects is about 1 percent. For example, for every 100TB of hard drive capacity, provide 1TB of SSD capacity for account and container data.

However, this depends on the type of stored data. If storing mostly small objects, provide more SSD space. For large objects (videos, backups), use less SSD space.

- Disk Layout

The recommended node configuration requires a disk layout similar to the following:

- /dev/sda - The root disk. The director copies the main overcloud image to the disk.

- /dev/sdb - Used for account data.

- /dev/sdc - Used for container data.

- /dev/sdd and onward - The object server disks. Use as many disks as necessary for your storage requirements.

- Network Interface Cards

- A minimum of 2 x 1 Gbps Network Interface Cards. Use additional network interface cards for bonded interfaces or to delegate tagged VLAN traffic.

- Power Management

- Each Object Storage node requires a supported power management interface, such as Intelligent Platform Management Interface (IPMI) functionality, on the server’s motherboard.
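The Object Storage memory and SSD guidance above can be turned into a rough sizing estimate. The following Python sketch applies 1 GB of memory per 1 TB of disk (2 GB for small file workloads) and the roughly 1 percent SSD ratio for account and container data. The function and parameter names are hypothetical, and the 1 percent ratio is only the starting point that the text describes, to be adjusted for the object sizes you store.

```python
def object_storage_sizing(object_capacity_tb: float,
                          small_file_workload: bool = False) -> dict:
    """Estimate memory and SSD capacity for an Object Storage node.

    Memory: 1 GB per 1 TB of disk, or 2 GB per 1 TB for small file workloads.
    SSD: about 1 percent of object capacity for account and container data.
    """
    if object_capacity_tb < 0:
        raise ValueError("capacity must not be negative")
    gb_per_tb = 2.0 if small_file_workload else 1.0
    return {
        "memory_gb": object_capacity_tb * gb_per_tb,
        "ssd_tb": object_capacity_tb / 100,
    }


# Example: 100 TB of object capacity, as in the text.
print(object_storage_sizing(100))
print(object_storage_sizing(100, small_file_workload=True))
```

Provide more SSD capacity than this estimate when storing mostly small objects, and less for large objects such as videos and backups.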

2.5. Repository Requirements

Both the undercloud and overcloud require access to Red Hat repositories either through the Red Hat Content Delivery Network, or through Red Hat Satellite 5 or 6. If using a Red Hat Satellite Server, synchronize the required repositories to your OpenStack Platform environment. Use the following list of CDN channel names as a guide:

Do not upgrade to the Red Hat Enterprise Linux 7.3 kernel without also upgrading from Open vSwitch (OVS) 2.4.0 to OVS 2.5.0. If only the kernel is upgraded, then OVS will stop functioning.

Table 2.1. OpenStack Platform Repositories

| Name | Repository | Description of Requirement |
|---|---|---|
| Red Hat Enterprise Linux 7 Server (RPMs) | | Base operating system repository. |
| Red Hat Enterprise Linux 7 Server - Extras (RPMs) | | Contains Red Hat OpenStack Platform dependencies. |
| Red Hat Enterprise Linux 7 Server - RH Common (RPMs) | | Contains tools for deploying and configuring Red Hat OpenStack Platform. |
| Red Hat Satellite Tools for RHEL 7 Server RPMs x86_64 | | Tools for managing hosts with Red Hat Satellite 6. |
| Red Hat Enterprise Linux High Availability (for RHEL 7 Server) (RPMs) | | High availability tools for Red Hat Enterprise Linux. Used for Controller node high availability. |
| Red Hat Enterprise Linux OpenStack Platform 10 for RHEL 7 (RPMs) | | Core Red Hat OpenStack Platform repository. Also contains packages for Red Hat OpenStack Platform director. |
| Red Hat Ceph Storage OSD 2 for Red Hat Enterprise Linux 7 Server (RPMs) | | (For Ceph Storage Nodes) Repository for Ceph Storage Object Storage daemon. Installed on Ceph Storage nodes. |
| Red Hat Ceph Storage MON 2 for Red Hat Enterprise Linux 7 Server (RPMs) | | (For Ceph Storage Nodes) Repository for Ceph Storage Monitor daemon. Installed on Controller nodes in OpenStack environments using Ceph Storage nodes. |
| Red Hat Ceph Storage Tools 2 for Red Hat Enterprise Linux 7 Server (RPMs) | | Provides tools for nodes to communicate with the Ceph Storage cluster. This repository should be enabled for all overcloud nodes when deploying an overcloud with a Ceph Storage cluster. |

To configure repositories for your Red Hat OpenStack Platform environment in an offline network, see "Configuring Red Hat OpenStack Platform Director in an Offline Environment" on the Red Hat Customer Portal.

Chapter 3. Planning your Overcloud

The following section provides some guidelines on planning various aspects of your Red Hat OpenStack Platform environment. This includes defining node roles, planning your network topology, and storage.

3.1. Planning Node Deployment Roles

The director provides multiple default node types for building your overcloud. These node types are:

- Controller

- Provides key services for controlling your environment. This includes the dashboard (horizon), authentication (keystone), image storage (glance), networking (neutron), orchestration (heat), and high availability services. A Red Hat OpenStack Platform environment requires three Controller nodes for a highly available environment.

Note: Environments with one Controller node can be used for testing purposes. Environments with two Controller nodes or more than three Controller nodes are not supported.

- Compute

- A physical server that acts as a hypervisor, and provides the processing capabilities required for running virtual machines in the environment. A basic Red Hat OpenStack Platform environment requires at least one Compute node.

- Ceph Storage

- A host that provides Red Hat Ceph Storage. Additional Ceph Storage hosts scale into a cluster. This deployment role is optional.

- Swift Storage

- A host that provides external object storage for OpenStack’s swift service. This deployment role is optional.

The following table provides some examples of different overclouds and defines the node types for each scenario.

Table 3.1. Node Deployment Roles for Scenarios

| | Controller | Compute | Ceph Storage | Swift Storage | Total |

| Small overcloud | 3 | 1 | - | - | 4 |

| Medium overcloud | 3 | 3 | - | - | 6 |

| Medium overcloud with additional Object storage | 3 | 3 | - | 3 | 9 |

| Medium overcloud with Ceph Storage cluster | 3 | 3 | 3 | - | 9 |
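The node counts in Table 3.1 and the Controller count rule from the note above can be checked with a few lines of code. The following is a minimal sketch, not part of the director tooling; the function name `check_role_counts` and the constant are hypothetical names chosen for illustration.

```python
# Hypothetical planning helper; the function and constant names are
# illustrative and are not part of the director tooling.
SUPPORTED_CONTROLLER_COUNTS = {1, 3}  # 1 = testing only, 3 = high availability


def check_role_counts(controller, compute, ceph=0, swift=0):
    """Return (total_nodes, problems) for a planned overcloud."""
    problems = []
    if controller not in SUPPORTED_CONTROLLER_COUNTS:
        problems.append("Controller count must be 1 (testing) or 3 (HA)")
    if compute < 1:
        problems.append("at least one Compute node is required")
    return controller + compute + ceph + swift, problems


# Scenarios from Table 3.1: (controller, compute, ceph, swift)
scenarios = {
    "Small overcloud": (3, 1, 0, 0),
    "Medium overcloud": (3, 3, 0, 0),
    "Medium overcloud with additional Object storage": (3, 3, 0, 3),
    "Medium overcloud with Ceph Storage cluster": (3, 3, 3, 0),
}

for name, counts in scenarios.items():
    total, problems = check_role_counts(*counts)
    print(f"{name}: {total} nodes, problems: {problems or 'none'}")
```

A plan with two Controller nodes, for example `check_role_counts(2, 1)`, returns a non-empty problem list because that layout is not supported.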

In addition, consider whether to split individual services into custom roles. For more information on the composable roles architecture, see Chapter 8. Composable Roles and Services in the Advanced Overcloud Customization guide.

3.2. Planning Networks

It is important to plan your environment’s networking topology and subnets so that roles and services are mapped correctly and can communicate with each other. Red Hat OpenStack Platform uses the neutron networking service, which operates autonomously and manages software-based networks, static and floating IP addresses, and DHCP. The director deploys this service on each Controller node in an overcloud environment.

Red Hat OpenStack Platform maps the different services onto separate network traffic types, which are assigned to the various subnets in your environments. These network traffic types include:

Table 3.2. Network Type Assignments

| Network Type | Description | Used By |

| IPMI | Network used for power management of nodes. This network is predefined before the installation of the undercloud. | All nodes |

| Provisioning | The director uses this network traffic type to deploy new nodes over PXE boot and orchestrate the installation of OpenStack Platform on the overcloud bare metal servers. This network is predefined before the installation of the undercloud. | All nodes |

| Internal API | The Internal API network is used for communication between the OpenStack services using API communication, RPC messages, and database communication. | Controller, Compute, Cinder Storage, Swift Storage |

| Tenant | Neutron provides each tenant with their own networks using either VLAN segregation (where each tenant network is a network VLAN), or tunneling (through VXLAN or GRE). Network traffic is isolated within each tenant network. Each tenant network has an IP subnet associated with it, and network namespaces mean that multiple tenant networks can use the same address range without causing conflicts. | Controller, Compute |

| Storage | Block Storage, NFS, iSCSI, and others. Ideally, this would be isolated to an entirely separate switch fabric for performance reasons. | All nodes |

| Storage Management | OpenStack Object Storage (swift) uses this network to synchronize data objects between participating replica nodes. The proxy service acts as the intermediary interface between user requests and the underlying storage layer. The proxy receives incoming requests and locates the necessary replica to retrieve the requested data. Services that use a Ceph back end connect over the Storage Management network, since they do not interact with Ceph directly but rather use the frontend service. Note that the RBD driver is an exception, as this traffic connects directly to Ceph. | Controller, Ceph Storage, Cinder Storage, Swift Storage |

| External | Hosts the OpenStack Dashboard (horizon) for graphical system management, the public APIs for OpenStack services, and performs SNAT for incoming traffic destined for instances. If the external network uses private IP addresses (as per RFC-1918), then further NAT must be performed for traffic originating from the internet. | Controller and undercloud |

| Floating IP | Allows incoming traffic to reach instances using 1-to-1 IP address mapping between the floating IP address, and the IP address actually assigned to the instance in the tenant network. If hosting the Floating IPs on a VLAN separate from External, you can trunk the Floating IP VLAN to the Controller nodes and add the VLAN through Neutron after overcloud creation. This provides a means to create multiple Floating IP networks attached to multiple bridges. The VLANs are trunked but are not configured as interfaces. Instead, neutron creates an OVS port with the VLAN segmentation ID on the chosen bridge for each Floating IP network. | Controller |

| Management | Provides access for system administration functions such as SSH access, DNS traffic, and NTP traffic. This network also acts as a gateway for non-Controller nodes. | All nodes |

In a typical Red Hat OpenStack Platform installation, the number of network types often exceeds the number of physical network links. In order to connect all the networks to the proper hosts, the overcloud uses VLAN tagging to deliver more than one network per interface. Most of the networks are isolated subnets but some require a Layer 3 gateway to provide routing for Internet access or infrastructure network connectivity.

It is recommended that you deploy a project network (tunneled with GRE or VXLAN) even if you intend to use a neutron VLAN mode (with tunneling disabled) at deployment time. This requires minor customization at deployment time and leaves the option available to use tunnel networks as utility networks or virtualization networks in the future. You still create Tenant networks using VLANs, but you can also create VXLAN tunnels for special-use networks without consuming tenant VLANs. It is possible to add VXLAN capability to a deployment with a Tenant VLAN, but it is not possible to add a Tenant VLAN to an existing overcloud without causing disruption.

The director provides a method for mapping six of these traffic types to certain subnets or VLANs. These traffic types include:

- Internal API

- Storage

- Storage Management

- Tenant Networks

- External

- Management (optional)

Any unassigned networks are automatically assigned to the same subnet as the Provisioning network.
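The fallback rule above (any traffic type without its own subnet shares the Provisioning subnet) can be expressed as a small lookup. This is a minimal sketch under stated assumptions: the function name `resolve_subnets` and the example subnets are hypothetical, and the default of 192.0.2.0/24 is the Provisioning subnet used elsewhere in this guide.

```python
# The six traffic types that the director can map to their own subnets or VLANs.
MAPPABLE_TYPES = (
    "Internal API",
    "Storage",
    "Storage Management",
    "Tenant Networks",
    "External",
    "Management",
)


def resolve_subnets(assigned, provisioning_cidr="192.0.2.0/24"):
    """Return the subnet for every mappable traffic type.

    Types missing from `assigned` fall back to the Provisioning subnet.
    """
    unknown = sorted(set(assigned) - set(MAPPABLE_TYPES))
    if unknown:
        raise ValueError(f"not a mappable traffic type: {unknown}")
    return {t: assigned.get(t, provisioning_cidr) for t in MAPPABLE_TYPES}


# Hypothetical plan that isolates only two traffic types.
plan = resolve_subnets({"External": "10.1.1.0/24", "Storage": "172.16.1.0/24"})
for traffic_type, cidr in plan.items():
    print(f"{traffic_type:20} {cidr}")
```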

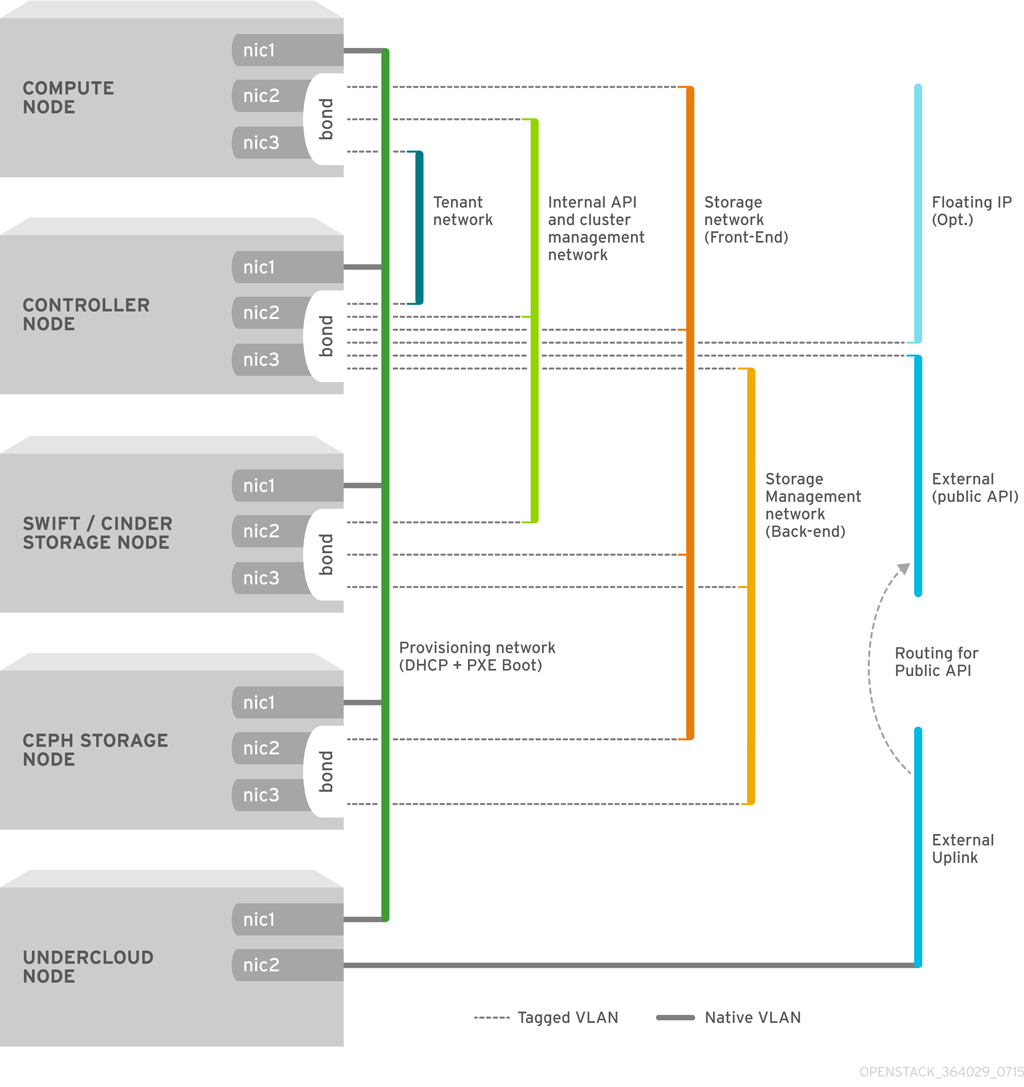

The diagram below provides an example of a network topology where the networks are isolated on separate VLANs. Each overcloud node uses two interfaces (nic2 and nic3) in a bond to deliver these networks over their respective VLANs. Meanwhile, each overcloud node communicates with the undercloud over the Provisioning network through a native VLAN using nic1.

The following table provides examples of network traffic mappings for different network layouts:

Table 3.3. Network Mappings

| | Mappings | Total Interfaces | Total VLANs |

| Flat Network with External Access | Network 1 - Provisioning, Internal API, Storage, Storage Management, Tenant Networks; Network 2 - External, Floating IP (mapped after overcloud creation) | 2 | 2 |

| Isolated Networks | Network 1 - Provisioning; Network 2 - Internal API; Network 3 - Tenant Networks; Network 4 - Storage; Network 5 - Storage Management; Network 6 - Management (optional); Network 7 - External, Floating IP (mapped after overcloud creation) | 3 (includes 2 bonded interfaces) | 7 |

3.3. Planning Storage

Using LVM on a guest instance that uses a back end cinder-volume of any driver or back-end type results in issues with performance, volume visibility and availability, and data corruption. These issues can be mitigated using an LVM filter. For more information, refer to section 2.1 Back Ends in the Storage Guide and KCS article 3213311, "Using LVM on a cinder volume exposes the data to the compute host."

The director provides different storage options for the overcloud environment. This includes:

- Ceph Storage Nodes

- The director creates a set of scalable storage nodes using Red Hat Ceph Storage. The overcloud uses these nodes for:

- Images - Glance manages images for VMs. Images are immutable. OpenStack treats images as binary blobs and downloads them accordingly. You can use glance to store images in a Ceph Block Device.

- Volumes - Cinder volumes are block devices. OpenStack uses volumes to boot VMs, or to attach volumes to running VMs. OpenStack manages volumes using Cinder services. You can use Cinder to boot a VM using a copy-on-write clone of an image.

- Guest Disks - Guest disks are guest operating system disks. By default, when you boot a virtual machine with nova, its disk appears as a file on the filesystem of the hypervisor (usually under /var/lib/nova/instances/<uuid>/). It is possible to boot every virtual machine inside Ceph directly without using cinder, which is advantageous because it allows you to perform maintenance operations easily with the live-migration process. Additionally, if your hypervisor dies it is also convenient to trigger nova evacuate and run the virtual machine elsewhere almost seamlessly.

Important: If you want to boot virtual machines in Ceph (ephemeral back end or boot from volume), the glance image format must be RAW. Ceph does not support other image formats such as QCOW2 or VMDK for hosting a virtual machine disk.

See Red Hat Ceph Storage Architecture Guide for additional information.

- Swift Storage Nodes

- The director creates an external object storage node. This is useful in situations where you need to scale or replace controller nodes in your overcloud environment but need to retain object storage outside of a high availability cluster.
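Because virtual machines booted in Ceph require RAW glance images, it can help to catch QCOW2 or VMDK files before uploading them. The following is a minimal sketch that only inspects the first four bytes of a file for the QCOW2 and sparse VMDK signatures; the function name `detect_non_raw_format` is hypothetical. RAW images have no signature of their own, so a result of None means only that no known non-RAW signature was found.

```python
# Leading bytes that identify two common non-RAW disk image formats.
KNOWN_SIGNATURES = {
    b"QFI\xfb": "qcow2",
    b"KDMV": "vmdk",  # sparse VMDK extent
}


def detect_non_raw_format(path):
    """Return 'qcow2' or 'vmdk' if the file starts with that signature, else None."""
    with open(path, "rb") as image:
        header = image.read(4)
    return KNOWN_SIGNATURES.get(header)
```

An image flagged by this check would typically be converted to RAW with an image conversion tool before it is stored in glance; that step is outside the scope of this sketch.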

Chapter 4. Installing the Undercloud

The first step to creating your Red Hat OpenStack Platform environment is to install the director on the undercloud system. This involves a few prerequisite steps to enable the necessary subscriptions and repositories.

4.1. Creating a Director Installation User

The director installation process requires a non-root user to execute commands. Use the following commands to create the user named stack and set a password:

[root@director ~]# useradd stack
[root@director ~]# passwd stack  # specify a password

Disable password requirements for this user when using sudo:

[root@director ~]# echo "stack ALL=(root) NOPASSWD:ALL" | tee -a /etc/sudoers.d/stack
[root@director ~]# chmod 0440 /etc/sudoers.d/stack

Switch to the new stack user:

[root@director ~]# su - stack
[stack@director ~]$

Continue the director installation as the stack user.

4.2. Creating Directories for Templates and Images

The director uses system images and Heat templates to create the overcloud environment. To keep these files organized, we recommend creating directories for images and templates:

$ mkdir ~/images
$ mkdir ~/templates

Other sections in this guide use these two directories to store certain files.

4.3. Setting the Hostname for the System

The director requires a fully qualified domain name for its installation and configuration process. This means you may need to set the hostname of your director’s host. Check the hostname of your host:

$ hostname     # Checks the base hostname
$ hostname -f  # Checks the long hostname (FQDN)

If either command does not report the correct hostname or reports an error, use hostnamectl to set a hostname:

$ sudo hostnamectl set-hostname manager.example.com
$ sudo hostnamectl set-hostname --transient manager.example.com

The director also requires an entry for the system’s hostname and base name in /etc/hosts. For example, if the system is named manager.example.com, then /etc/hosts requires an entry like:

127.0.0.1 manager.example.com manager localhost localhost.localdomain localhost4 localhost4.localdomain4
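The /etc/hosts requirement can be verified before starting the installation. The following is a minimal sketch, assuming the entry must list both the fully qualified name and the base name on the same line, as in the example above; the function name `hosts_entry_present` is hypothetical.

```python
def hosts_entry_present(hosts_text, fqdn):
    """Return True if one hosts line names both the FQDN and its base name."""
    short_name = fqdn.split(".", 1)[0]
    for line in hosts_text.splitlines():
        # Drop comments, then split into the address and its host names.
        fields = line.split("#", 1)[0].split()
        names = fields[1:]
        if fqdn in names and short_name in names:
            return True
    return False


sample = (
    "127.0.0.1 manager.example.com manager localhost "
    "localhost.localdomain localhost4 localhost4.localdomain4\n"
)
print(hosts_entry_present(sample, "manager.example.com"))
```

On a real system, the same function could be called with the contents of /etc/hosts and the output of `hostname -f`.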

4.4. Registering your System

To install the Red Hat OpenStack Platform director, first register the host system using Red Hat Subscription Manager, and subscribe to the required channels.

Register your system with the Content Delivery Network, entering your Customer Portal user name and password when prompted:

$ sudo subscription-manager register

Find the entitlement pool ID for Red Hat OpenStack Platform director. For example:

$ sudo subscription-manager list --available --all --matches="*OpenStack*"

Subscription Name: Name of SKU

Provides: Red Hat Single Sign-On

Red Hat Enterprise Linux Workstation

Red Hat CloudForms

Red Hat OpenStack

Red Hat Software Collections (for RHEL Workstation)

Red Hat Virtualization

SKU: SKU-Number

Contract: Contract-Number

Pool ID: Valid-Pool-Number-123456

Provides Management: Yes

Available: 1

Suggested: 1

Service Level: Support-level

Service Type: Service-Type

Subscription Type: Sub-type

Ends: End-date

System Type: Physical

Locate the Pool ID value and attach the Red Hat OpenStack Platform 10 entitlement:

$ sudo subscription-manager attach --pool=Valid-Pool-Number-123456
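When the listing contains several subscriptions, picking out the right Pool ID by eye is error-prone. The following is a minimal sketch that parses text in the layout shown in the example output above and returns the Pool ID of each subscription that provides a given product. The function name `find_pool_ids` is hypothetical, and the parsing assumes that each subscription starts with a "Subscription Name:" line.

```python
import re


def find_pool_ids(listing, product):
    """Return the Pool IDs of subscriptions whose Provides list names `product`."""
    pool_ids = []
    # Start a new block at every "Subscription Name:" line.
    for block in re.split(r"(?m)^(?=Subscription Name:)", listing):
        provided = {
            re.sub(r"^Provides:\s*", "", line.strip())
            for line in block.splitlines()
        }
        match = re.search(r"(?m)^\s*Pool ID:\s*(\S+)", block)
        if match and product in provided:
            pool_ids.append(match.group(1))
    return pool_ids


# Abbreviated copy of the example listing from this section.
sample_listing = """\
Subscription Name: Name of SKU
Provides: Red Hat Single Sign-On
 Red Hat CloudForms
 Red Hat OpenStack
 Red Hat Virtualization
SKU: SKU-Number
Pool ID: Valid-Pool-Number-123456
Available: 1
"""

print(find_pool_ids(sample_listing, "Red Hat OpenStack"))
```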

Disable all default repositories, and then enable the required Red Hat Enterprise Linux repositories:

$ sudo subscription-manager repos --disable=*
$ sudo subscription-manager repos --enable=rhel-7-server-rpms --enable=rhel-7-server-extras-rpms --enable=rhel-7-server-rh-common-rpms --enable=rhel-ha-for-rhel-7-server-rpms --enable=rhel-7-server-openstack-10-rpms

These repositories contain packages the director installation requires.

Only enable the repositories listed in Section 2.5, “Repository Requirements”. Additional repositories can cause package and software conflicts. Do not enable any additional repositories.

Set the RHEL version to RHEL 7.7:

$ sudo subscription-manager release --set=7.7

Perform an update on your system to make sure you have the latest base system packages:

$ sudo yum update -y
$ sudo reboot

The system is now ready for the director installation.

4.5. Installing the Director Packages

Use the following command to install the required command line tools for director installation and configuration:

$ sudo yum install -y python-tripleoclient

This installs all packages required for the director installation.

4.6. Configuring the Director

The director installation process requires certain settings to determine your network configurations. The settings are stored in a template located in the stack user’s home directory as undercloud.conf.

Red Hat provides a basic template to help determine the required settings for your installation. Copy this template to the stack user’s home directory:

$ cp /usr/share/instack-undercloud/undercloud.conf.sample ~/undercloud.conf

The undercloud.conf file contains settings to configure your undercloud. If you omit or comment out a parameter, the undercloud installation uses the default value.

The template contains two sections: [DEFAULT] and [auth]. The [DEFAULT] section contains the following parameters:

- undercloud_hostname

- Defines the fully qualified host name for the undercloud. If set, the undercloud installation configures all system host name settings. If left unset, the undercloud uses the current host name, but the user must configure all system host name settings appropriately.

- local_ip

- The IP address defined for the director’s Provisioning NIC. This is also the IP address the director uses for its DHCP and PXE boot services. Leave this value as the default 192.0.2.1/24 unless you are using a different subnet for the Provisioning network, for example, if it conflicts with an existing IP address or subnet in your environment.

- network_gateway

- The gateway for the overcloud instances. This is the undercloud host, which forwards traffic to the External network. Leave this as the default 192.0.2.1 unless you are either using a different IP address for the director or want to directly use an external gateway.

Note: The director’s configuration script also automatically enables IP forwarding using the relevant sysctl kernel parameter.

- undercloud_public_vip

- The IP address or hostname defined for director Public API endpoints over SSL/TLS. The director configuration attaches the IP address to the director software bridge as a routed IP address, which uses the /32 netmask.

- undercloud_admin_vip

- The IP address or hostname defined for director Admin API endpoints over SSL/TLS. The director configuration attaches the IP address to the director software bridge as a routed IP address, which uses the /32 netmask.

- undercloud_service_certificate

- The location and filename of the certificate for OpenStack SSL communication. Ideally, you obtain this certificate from a trusted certificate authority. Otherwise generate your own self-signed certificate using the guidelines in Appendix A, SSL/TLS Certificate Configuration. These guidelines also contain instructions on setting the SELinux context for your certificate, whether self-signed or from an authority.

- generate_service_certificate

- Defines whether to generate an SSL certificate during the undercloud installation, which is used for the undercloud_service_certificate parameter. The undercloud installation saves the resulting certificate to /etc/pki/tls/certs/undercloud-[undercloud_public_vip].pem. The CA defined in the certificate_generation_ca parameter signs this certificate.

- certificate_generation_ca

- The certmonger nickname of the CA that signs the requested certificate. Only use this option if you have set the generate_service_certificate parameter. If you select the local CA, certmonger extracts the local CA certificate to /etc/pki/ca-trust/source/anchors/cm-local-ca.pem and adds it to the trust chain.

- service_principal

- The Kerberos principal for the service using the certificate. Only use this if your CA requires a Kerberos principal, such as in FreeIPA.

- local_interface

- The chosen interface for the director’s Provisioning NIC. This is also the device the director uses for its DHCP and PXE boot services. Change this value to your chosen device. To see which device is connected, use the ip addr command. For example, this is the result of an ip addr command:

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000
    link/ether 52:54:00:75:24:09 brd ff:ff:ff:ff:ff:ff
    inet 192.168.122.178/24 brd 192.168.122.255 scope global dynamic eth0
       valid_lft 3462sec preferred_lft 3462sec
    inet6 fe80::5054:ff:fe75:2409/64 scope link
       valid_lft forever preferred_lft forever
3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noop state DOWN
    link/ether 42:0b:c2:a5:c1:26 brd ff:ff:ff:ff:ff:ff

In this example, the External NIC uses eth0 and the Provisioning NIC uses eth1, which is currently not configured. In this case, set the local_interface to eth1. The configuration script attaches this interface to a custom bridge defined with the inspection_interface parameter.

- local_mtu

- MTU to use for the local_interface.

- network_cidr

- The network that the director uses to manage overcloud instances. This is the Provisioning network, which the undercloud’s neutron service manages. Leave this as the default 192.0.2.0/24 unless you are using a different subnet for the Provisioning network.

- masquerade_network

- Defines the network that will masquerade for external access. This provides the Provisioning network with a degree of network address translation (NAT) so that it has external access through the director. Leave this as the default (192.0.2.0/24) unless you are using a different subnet for the Provisioning network.

- dhcp_start; dhcp_end

- The start and end of the DHCP allocation range for overcloud nodes. Ensure this range contains enough IP addresses to allocate your nodes.

- hieradata_override

- Path to hieradata override file. If set, the undercloud installation copies this file under /etc/puppet/hieradata and sets it as the first file in the hierarchy. Use this to provide custom configuration to services beyond the undercloud.conf parameters.

- net_config_override

- Path to network configuration override template. If set, the undercloud uses a JSON format template to configure the networking with os-net-config. This ignores the network parameters set in undercloud.conf. See /usr/share/instack-undercloud/templates/net-config.json.template for an example.

- inspection_interface

- The bridge the director uses for node introspection. This is a custom bridge that the director configuration creates. The interface set in local_interface attaches to this bridge. Leave this as the default br-ctlplane.

- inspection_iprange

- A range of IP addresses that the director’s introspection service uses during the PXE boot and provisioning process. Use comma-separated values to define the start and end of this range. For example, 192.0.2.100,192.0.2.120. Make sure this range contains enough IP addresses for your nodes and does not conflict with the range for dhcp_start and dhcp_end.

- inspection_extras

- Defines whether to enable extra hardware collection during the inspection process. Requires the python-hardware or python-hardware-detect package on the introspection image.

- inspection_runbench

- Runs a set of benchmarks during node introspection. Set to true to enable. This option is necessary if you intend to perform benchmark analysis when inspecting the hardware of registered nodes. See Section 5.2, “Inspecting the Hardware of Nodes” for more details.

- inspection_enable_uefi

- Defines whether to support introspection of nodes with UEFI-only firmware. For more information, see Appendix D, Alternative Boot Modes.

- undercloud_debug

- Sets the log level of undercloud services to DEBUG. Set this value to true to enable.

- enable_tempest

- Defines whether to install the validation tools. The default is set to false, but you can enable it by setting the value to true.

- enable_mistral

- Defines whether to install the OpenStack Workflow Service (mistral) in the undercloud.

- enable_zaqar

- Defines whether to install the OpenStack Messaging Service (zaqar) in the undercloud.

- ipxe_enabled

- Defines whether to use iPXE or standard PXE. The default is true, which enables iPXE. Set to false to use standard PXE. For more information, see Appendix D, Alternative Boot Modes.

- enable_telemetry

- Defines whether to install OpenStack Telemetry (ceilometer, aodh) services in the undercloud. The default value is false, which disables telemetry on the undercloud. This parameter is required if using other products that consume metrics data, such as Red Hat CloudForms.

- enable_ui

- Defines whether to install the director’s web UI. This allows you to perform overcloud planning and deployments through a graphical web interface. For more information, see Chapter 6, Configuring Basic Overcloud Requirements with the Web UI. Note that the UI is only available with SSL/TLS enabled using either the undercloud_service_certificate or generate_service_certificate parameter.

- enable_validations

- Defines whether to install the requirements to run validations.

- store_events

- Defines whether to store events in OpenStack Telemetry (ceilometer) on the undercloud.

- scheduler_max_attempts

- Maximum number of times the scheduler attempts to deploy an instance. Keep this greater than or equal to the number of bare metal nodes you expect to deploy at once to work around a potential race condition when scheduling.
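Several of the [DEFAULT] parameters above describe the same Provisioning network and must agree with each other: local_ip and both address ranges belong inside network_cidr, and inspection_iprange must not conflict with the dhcp_start and dhcp_end range. The following is a minimal sketch of such a consistency check using only the Python standard library. The function name `check_undercloud_conf` is hypothetical, and the dhcp_start and dhcp_end values in the sample are illustrative assumptions rather than recommendations.

```python
import configparser
import ipaddress

# Illustrative values only; substitute the settings from your own undercloud.conf.
SAMPLE_CONF = """
[DEFAULT]
local_ip = 192.0.2.1/24
network_gateway = 192.0.2.1
network_cidr = 192.0.2.0/24
masquerade_network = 192.0.2.0/24
dhcp_start = 192.0.2.5
dhcp_end = 192.0.2.24
inspection_iprange = 192.0.2.100,192.0.2.120
"""


def check_undercloud_conf(text):
    """Return a list of problems found in the Provisioning network settings."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(text)
    conf = parser["DEFAULT"]

    network = ipaddress.ip_network(conf["network_cidr"])
    local_ip = ipaddress.ip_interface(conf["local_ip"]).ip
    dhcp_start = ipaddress.ip_address(conf["dhcp_start"])
    dhcp_end = ipaddress.ip_address(conf["dhcp_end"])
    insp_start, insp_end = (
        ipaddress.ip_address(part.strip())
        for part in conf["inspection_iprange"].split(",")
    )

    problems = []
    addresses = [
        ("local_ip", local_ip),
        ("dhcp_start", dhcp_start),
        ("dhcp_end", dhcp_end),
        ("inspection_iprange start", insp_start),
        ("inspection_iprange end", insp_end),
    ]
    for label, address in addresses:
        if address not in network:
            problems.append(f"{label} {address} is outside network_cidr {network}")
    if dhcp_start > dhcp_end:
        problems.append("dhcp_start is greater than dhcp_end")
    if insp_start > insp_end:
        problems.append("inspection_iprange start is greater than its end")
    # Two closed ranges overlap when each one starts before the other ends.
    if dhcp_start <= insp_end and insp_start <= dhcp_end:
        problems.append("inspection_iprange overlaps the dhcp_start/dhcp_end range")
    return problems


print(check_undercloud_conf(SAMPLE_CONF) or "no problems found")
```

The check reads the file text rather than a path so that it can be tried against a draft configuration before saving it as undercloud.conf.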

The [auth] section contains the following parameters:

- undercloud_db_password; undercloud_admin_token; undercloud_admin_password; undercloud_glance_password; etc

- The remaining parameters are the access details for all of the director’s services. No change is required for the values. The director’s configuration script automatically generates these values if blank in undercloud.conf. You can retrieve all values after the configuration script completes.

Important: The configuration file examples for these parameters use <None> as a placeholder value. Setting these values to <None> leads to a deployment error.

Modify the values for these parameters to suit your network. When complete, save the file and run the following command:

$ openstack undercloud install

This launches the director’s configuration script. The director installs additional packages and configures its services to suit the settings in the undercloud.conf. This script takes several minutes to complete.

The configuration script generates two files when complete:

- undercloud-passwords.conf - A list of all passwords for the director’s services.

- stackrc - A set of initialization variables to help you access the director’s command line tools.

The configuration also starts all OpenStack Platform services automatically. Check the enabled services using the following command:

$ sudo systemctl list-units openstack-*

To initialize the stack user to use the command line tools, run the following command:

$ source ~/stackrc

You can now use the director’s command line tools.

4.7. Obtaining Images for Overcloud Nodes

The director requires several disk images for provisioning overcloud nodes. This includes:

- An introspection kernel and ramdisk - Used for bare metal system introspection over PXE boot.

- A deployment kernel and ramdisk - Used for system provisioning and deployment.

- An overcloud kernel, ramdisk, and full image - A base overcloud system that is written to the node’s hard disk.

Obtain these images from the rhosp-director-images and rhosp-director-images-ipa packages:

$ sudo yum install rhosp-director-images rhosp-director-images-ipa

Extract the archives to the images directory on the stack user’s home (/home/stack/images):

$ cd ~/images
$ for i in /usr/share/rhosp-director-images/overcloud-full-latest-10.0.tar /usr/share/rhosp-director-images/ironic-python-agent-latest-10.0.tar; do tar -xvf "$i"; done

Import these images into the director:

$ openstack overcloud image upload --image-path /home/stack/images/

This uploads the following images into the director: bm-deploy-kernel, bm-deploy-ramdisk, overcloud-full, overcloud-full-initrd, overcloud-full-vmlinuz. These are the images for deployment and the overcloud. The script also installs the introspection images on the director’s PXE server.

View a list of the images in the CLI:

$ openstack image list
+--------------------------------------+------------------------+
| ID                                   | Name                   |
+--------------------------------------+------------------------+
| 765a46af-4417-4592-91e5-a300ead3faf6 | bm-deploy-ramdisk      |
| 09b40e3d-0382-4925-a356-3a4b4f36b514 | bm-deploy-kernel       |
| ef793cd0-e65c-456a-a675-63cd57610bd5 | overcloud-full         |
| 9a51a6cb-4670-40de-b64b-b70f4dd44152 | overcloud-full-initrd  |
| 4f7e33f4-d617-47c1-b36f-cbe90f132e5d | overcloud-full-vmlinuz |
+--------------------------------------+------------------------+

This list will not show the introspection PXE images. The director copies these files to /httpboot.

[stack@host1 ~]$ ls -l /httpboot
total 341460
-rwxr-xr-x. 1 root root   5153184 Mar 31 06:58 agent.kernel
-rw-r--r--. 1 root root 344491465 Mar 31 06:59 agent.ramdisk
-rw-r--r--. 1 root root       337 Mar 31 06:23 inspector.ipxe

The default overcloud-full.qcow2 image is a flat partition image. However, you can also import and use whole disk images. See Appendix C, Whole Disk Images for more information.
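A quick way to confirm that the upload step registered all five images is to compare the image names against the expected set. The following is a minimal sketch that assumes the image list has been exported as JSON (for example with the client's JSON output formatter) and that each entry carries a "Name" key; both the key name and the function name `missing_images` are assumptions made for illustration.

```python
import json

# Image names that the upload step is expected to register, per this section.
EXPECTED_IMAGES = {
    "bm-deploy-kernel",
    "bm-deploy-ramdisk",
    "overcloud-full",
    "overcloud-full-initrd",
    "overcloud-full-vmlinuz",
}


def missing_images(image_list_json):
    """Return the expected image names that are absent from a JSON image list."""
    names = {entry["Name"] for entry in json.loads(image_list_json)}
    return sorted(EXPECTED_IMAGES - names)


# Hypothetical listing in which the overcloud kernel and ramdisk are missing.
sample = json.dumps([
    {"ID": "765a46af-4417-4592-91e5-a300ead3faf6", "Name": "bm-deploy-ramdisk"},
    {"ID": "09b40e3d-0382-4925-a356-3a4b4f36b514", "Name": "bm-deploy-kernel"},
    {"ID": "ef793cd0-e65c-456a-a675-63cd57610bd5", "Name": "overcloud-full"},
])
print(missing_images(sample))
```

The introspection images are not part of this check because they are copied to /httpboot rather than registered in the image service.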

4.8. Setting a Nameserver on the Undercloud’s Neutron Subnet

If you intend for the overcloud to resolve external hostnames, such as cdn.redhat.com, it is recommended to set a nameserver on the overcloud nodes. For a standard overcloud without network isolation, the nameserver is defined using the undercloud’s neutron subnet. Use the following commands to define nameservers for the environment:

$ openstack subnet list
$ openstack subnet set --dns-nameserver [nameserver1-ip] --dns-nameserver [nameserver2-ip] [subnet-uuid]

View the subnet to verify the nameserver:

$ openstack subnet show [subnet-uuid]
+-------------------+-----------------------------------------------+
| Field             | Value                                         |
+-------------------+-----------------------------------------------+
| ...               |                                               |
| dns_nameservers   | 8.8.8.8                                       |
| ...               |                                               |
+-------------------+-----------------------------------------------+

If you aim to isolate service traffic onto separate networks, the overcloud nodes use the DnsServer parameter in your network environment files.

4.9. Backing Up the Undercloud

Red Hat provides a process to back up important data from the undercloud host and the Red Hat OpenStack Platform director. For more information about undercloud backups, see the "Back Up and Restore the Director Undercloud" guide.

4.10. Completing the Undercloud Configuration

This completes the undercloud configuration. The next chapter explores basic overcloud configuration, including registering nodes, inspecting them, and then tagging them into various node roles.

Chapter 5. Configuring Basic Overcloud Requirements with the CLI Tools

This chapter provides the basic configuration steps for an OpenStack Platform environment using the CLI tools. An overcloud with a basic configuration contains no custom features. However, you can add advanced configuration options to this basic overcloud and customize it to your specifications using the instructions in the Advanced Overcloud Customization guide.

For the examples in this chapter, all nodes are bare metal systems using IPMI for power management. For more supported power management types and their options, see Appendix B, Power Management Drivers.

Workflow

- Create a node definition template and register blank nodes in the director.

- Inspect hardware of all nodes.

- Tag nodes into roles.

- Define additional node properties.

Requirements

- The director node created in Chapter 4, Installing the Undercloud

- A set of bare metal machines for your nodes. The number of node required depends on the type of overcloud you intend to create (see Section 3.1, “Planning Node Deployment Roles” for information on overcloud roles). These machines also must comply with the requirements set for each node type. For these requirements, see Section 2.4, “Overcloud Requirements”. These nodes do not require an operating system. The director copies a Red Hat Enterprise Linux 7 image to each node.

- One network connection for our Provisioning network, which is configured as a native VLAN. All nodes must connect to this network and comply with the requirements set in Section 2.3, “Networking Requirements”. For the examples in this chapter, we use 192.0.2.0/24 as the Provisioning subnet with the following IP address assignments:

Table 5.1. Provisioning Network IP Assignments

| Node Name | IP Address | MAC Address | IPMI IP Address |
|---|---|---|---|
| Director | 192.0.2.1 | aa:aa:aa:aa:aa:aa | None required |
| Controller | DHCP defined | bb:bb:bb:bb:bb:bb | 192.0.2.205 |
| Compute | DHCP defined | cc:cc:cc:cc:cc:cc | 192.0.2.206 |

- All other network types use the Provisioning network for OpenStack services. However, you can create additional networks for other network traffic types.

5.1. Registering Nodes for the Overcloud

The director requires a node definition template, which you create manually. This file (instackenv.json) uses the JSON format and contains the hardware and power management details for your nodes. For example, a template for registering two nodes might look like this:

{
    "nodes":[
        {
            "mac":[
                "bb:bb:bb:bb:bb:bb"
            ],
            "name":"node01",
            "cpu":"4",
            "memory":"6144",
            "disk":"40",
            "arch":"x86_64",
            "pm_type":"pxe_ipmitool",
            "pm_user":"admin",
            "pm_password":"p@55w0rd!",
            "pm_addr":"192.0.2.205"
        },
        {
            "mac":[
                "cc:cc:cc:cc:cc:cc"
            ],
            "name":"node02",
            "cpu":"4",
            "memory":"6144",
            "disk":"40",
            "arch":"x86_64",
            "pm_type":"pxe_ipmitool",
            "pm_user":"admin",
            "pm_password":"p@55w0rd!",
            "pm_addr":"192.0.2.206"
        }
    ]
}

This template uses the following attributes:

- name

- The logical name for the node.

- pm_type

- The power management driver to use. This example uses the IPMI driver (pxe_ipmitool), which is the preferred driver for power management.

- pm_user; pm_password

- The IPMI username and password.

- pm_addr

- The IP address of the IPMI device.

- mac

- (Optional) A list of MAC addresses for the network interfaces on the node. Use only the MAC address for the Provisioning NIC of each system.

- cpu

- (Optional) The number of CPUs on the node.

- memory

- (Optional) The amount of memory in MB.

- disk

- (Optional) The size of the hard disk in GB.

- arch

- (Optional) The system architecture.

IPMI is the preferred supported power management driver. For more supported power management types and their options, see Appendix B, Power Management Drivers. If these power management drivers do not work as expected, use IPMI for your power management.

After creating the template, run the following command to verify the formatting and syntax:

$ openstack baremetal instackenv validate --file instackenv.json
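Before running the validation command, a quick local pre-check can catch obvious mistakes in the template. The following Python sketch is only an illustration and does not reproduce what the director's own validation does. The function name `check_instackenv` is hypothetical, and treating every attribute that the list above does not mark as optional as required is an assumption.

```python
import json
import re

# Assumption: attributes that the attribute list does not mark "(Optional)".
REQUIRED_KEYS = ("name", "pm_type", "pm_user", "pm_password", "pm_addr")
MAC_PATTERN = re.compile(r"^([0-9a-fA-F]{2}:){5}[0-9a-fA-F]{2}$")


def check_instackenv(text):
    """Return a list of human-readable problems found in the template text."""
    try:
        data = json.loads(text)
    except ValueError as exc:
        return ["not valid JSON: %s" % exc]

    nodes = data.get("nodes") if isinstance(data, dict) else None
    if not isinstance(nodes, list):
        return ['top-level "nodes" list is missing']

    problems = []
    seen_macs = set()
    for index, node in enumerate(nodes):
        if not isinstance(node, dict):
            problems.append("node #%d: entry is not a JSON object" % index)
            continue
        label = node.get("name") or "node #%d" % index
        # Report every required attribute that is absent or empty.
        for key in REQUIRED_KEYS:
            if not node.get(key):
                problems.append("%s: missing %s" % (label, key))
        # Check the format of each MAC address and look for duplicates.
        for mac in node.get("mac", []):
            if not MAC_PATTERN.match(mac):
                problems.append("%s: malformed MAC %s" % (label, mac))
            elif mac.lower() in seen_macs:
                problems.append("%s: duplicate MAC %s" % (label, mac))
            seen_macs.add(mac.lower())
    return problems
```

To use the sketch, pass the file contents to the function, for example `check_instackenv(open("instackenv.json").read())`, and review any messages it returns. An empty list means only that these basic checks passed.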

Save the file to the stack user’s home directory (/home/stack/instackenv.json), then run the following command to import the template to the director:

$ openstack overcloud node import ~/instackenv.json

This imports the template and registers each node from the template into the director.

After the node registration and configuration completes, view a list of these nodes in the CLI:

$ openstack baremetal node list

5.2. Inspecting the Hardware of Nodes

The director can run an introspection process on each node. This process causes each node to boot an introspection agent over PXE. This agent collects hardware data from the node and sends it back to the director. The director then stores this introspection data in the OpenStack Object Storage (swift) service running on the director. The director uses hardware information for various purposes such as profile tagging, benchmarking, and manual root disk assignment.

You can also create policy files to automatically tag nodes into profiles immediately after introspection. For more information on creating policy files and including them in the introspection process, see Appendix E, Automatic Profile Tagging. Alternatively, you can manually tag nodes into profiles as per the instructions in Section 5.3, “Tagging Nodes into Profiles”.

Set all nodes to a managed state:

$ for node in $(openstack baremetal node list -c UUID -f value) ; do openstack baremetal node manage $node ; done

Run the following command to inspect the hardware attributes of each node:

$ openstack overcloud node introspect --all-manageable --provide

- The --all-manageable option introspects only nodes in a managed state. In this example, it is all of them.

- The --provide option resets all nodes to an available state after introspection.

Monitor the progress of the introspection using the following command in a separate terminal window:

$ sudo journalctl -l -u openstack-ironic-inspector -u openstack-ironic-inspector-dnsmasq -u openstack-ironic-conductor -f

Make sure this process runs to completion. This process usually takes 15 minutes for bare metal nodes.

Alternatively, perform a single introspection on each node individually. Set the node to management mode, perform the introspection, then move the node out of management mode:

$ openstack baremetal node manage [NODE UUID]

$ openstack overcloud node introspect [NODE UUID] --provide

5.3. Tagging Nodes into Profiles

After registering and inspecting the hardware of each node, you will tag them into specific profiles. These profile tags match your nodes to flavors, and in turn the flavors are assigned to a deployment role. The following example shows the relationship across roles, flavors, profiles, and nodes for Controller nodes:

| Type | Description |
|---|---|
| Role | The |
| Flavor | The |
| Profile | The |
| Node | You also apply the |

Default profile flavors compute, control, swift-storage, ceph-storage, and block-storage are created during undercloud installation and are usable without modification in most environments.

For a large number of nodes, use automatic profile tagging. See Appendix E, Automatic Profile Tagging for more details.

To tag a node into a specific profile, add a profile option to the properties/capabilities parameter for each node. For example, to tag your nodes to use Compute and Controller profiles respectively, use the following commands:

$ openstack baremetal node set --property capabilities='profile:compute,boot_option:local' 58c3d07e-24f2-48a7-bbb6-6843f0e8ee13

$ openstack baremetal node set --property capabilities='profile:control,boot_option:local' 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0

The addition of the profile:compute and profile:control options tags the two nodes into their respective profiles.

These commands also set the boot_option:local parameter, which defines how each node boots. Depending on your hardware, you might also need to set the boot_mode parameter to uefi so that nodes boot using UEFI instead of the default BIOS mode. For more information, see Section D.2, “UEFI Boot Mode”.
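The capabilities value is a single comma-separated string of key:value pairs, so setting it again replaces the whole string rather than adding to it. The following Python sketch shows one way to change or add a single capability while keeping the others. The helper names `parse_capabilities` and `set_capability` are hypothetical and are not part of any OpenStack client.

```python
def parse_capabilities(value):
    """Split a 'key:value,key:value' string into a dict, keeping the order."""
    caps = {}
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        # Split on the first colon only, so values may contain colons.
        key, _, val = item.partition(":")
        caps[key] = val
    return caps


def set_capability(value, key, new_value):
    """Return the capabilities string with one key added or replaced."""
    caps = parse_capabilities(value)
    caps[key] = new_value
    return ",".join("%s:%s" % pair for pair in caps.items())
```

For example, `set_capability("profile:compute,boot_option:local", "boot_mode", "uefi")` produces a string that still contains the profile and boot_option entries, which you can then pass as the new capabilities value.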

After completing node tagging, check the assigned profiles or possible profiles:

$ openstack overcloud profiles list

Custom Role Profiles

If using custom roles, you might need to create additional flavors and profiles to accommodate these new roles. For example, to create a new flavor for a Networker role, run the following commands:

$ openstack flavor create --id auto --ram 4096 --disk 40 --vcpus 1 networker

$ openstack flavor set --property "cpu_arch"="x86_64" --property "capabilities:boot_option"="local" --property "capabilities:profile"="networker" networker

Assign nodes with this new profile:

$ openstack baremetal node set --property capabilities='profile:networker,boot_option:local' dad05b82-0c74-40bf-9d12-193184bfc72d

5.4. Defining the Root Disk for Nodes

Some nodes might use multiple disks. This means the director needs to identify the disk to use for the root disk during provisioning. There are several properties you can use to help the director identify the root disk:

- model (String): Device identifier.

- vendor (String): Device vendor.

- serial (String): Disk serial number.

- hctl (String): Host:Channel:Target:Lun for SCSI.

- size (Integer): Size of the device in GB.

- wwn (String): Unique storage identifier.

- wwn_with_extension (String): Unique storage identifier with the vendor extension appended.

- wwn_vendor_extension (String): Unique vendor storage identifier.

- rotational (Boolean): True for a rotational device (HDD), otherwise false (SSD).

- name (String): The name of the device, for example: /dev/sdb1. Only use this for devices with persistent names.

This example shows how to use a disk’s serial number to specify the root disk to deploy the overcloud image.

Check the disk information from each node’s hardware introspection. The following command displays the disk information from a node:

$ openstack baremetal introspection data save 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0 | jq ".inventory.disks"

For example, the data for one node might show three disks:

[
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sda",
    "wwn_vendor_extension": "0x1ea4dcc412a9632b",
    "wwn_with_extension": "0x61866da04f3807001ea4dcc412a9632b",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f380700",
    "serial": "61866da04f3807001ea4dcc412a9632b"
  },
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sdb",
    "wwn_vendor_extension": "0x1ea4e13c12e36ad6",
    "wwn_with_extension": "0x61866da04f380d001ea4e13c12e36ad6",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f380d00",
    "serial": "61866da04f380d001ea4e13c12e36ad6"
  },
  {
    "size": 299439751168,
    "rotational": true,
    "vendor": "DELL",
    "name": "/dev/sdc",
    "wwn_vendor_extension": "0x1ea4e31e121cfb45",
    "wwn_with_extension": "0x61866da04f37fc001ea4e31e121cfb45",
    "model": "PERC H330 Mini",
    "wwn": "0x61866da04f37fc00",
    "serial": "61866da04f37fc001ea4e31e121cfb45"
  }
]

For this example, set the root device to disk 2, which has 61866da04f380d001ea4e13c12e36ad6 as the serial number. This requires a change to the root_device parameter for the node definition:

$ openstack baremetal node set --property root_device='{"serial": "61866da04f380d001ea4e13c12e36ad6"}' 1a4e30da-b6dc-499d-ba87-0bd8a3819bc0

This helps the director identify the specific disk to use as the root disk. When we initiate our overcloud creation, the director provisions this node and writes the overcloud image to this disk.

Make sure to configure the BIOS of each node to include booting from the chosen root disk. The recommended boot order is network boot, then root disk boot.

Do not use name to set the root disk as this value can change when the node boots.
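When a node has several similar disks, copying a long serial number by hand is error-prone. The following Python sketch shows one possible way to look up a disk in the saved introspection data by its current device name and produce a serial-based value for the root_device property. The function name `root_device_hint` is hypothetical, and the sample data is trimmed to the two fields the sketch reads.

```python
import json

# Trimmed sample of the ".inventory.disks" list shown earlier in this section.
SAMPLE_DISKS = [
    {"name": "/dev/sda", "serial": "61866da04f3807001ea4dcc412a9632b"},
    {"name": "/dev/sdb", "serial": "61866da04f380d001ea4e13c12e36ad6"},
    {"name": "/dev/sdc", "serial": "61866da04f37fc001ea4e31e121cfb45"},
]


def root_device_hint(disks, device_name):
    """Return a JSON string such as '{"serial": "..."}' for the named disk."""
    matches = [disk for disk in disks if disk.get("name") == device_name]
    if len(matches) != 1:
        raise ValueError("expected exactly one disk named %s" % device_name)

    serial = matches[0].get("serial")
    if not serial:
        raise ValueError("disk %s reports no serial number" % device_name)

    # A hint is only useful if it identifies a single disk on the node.
    if sum(1 for disk in disks if disk.get("serial") == serial) > 1:
        raise ValueError("serial %s is not unique on this node" % serial)

    return json.dumps({"serial": serial})
```

The device name is used here only to find the entry in data that was already collected. The resulting value refers to the serial number, which does not change when the node boots.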

5.5. Customizing the Overcloud

The undercloud includes a set of Heat templates that acts as a plan for your overcloud creation. You can customize advanced features for your overcloud using the Advanced Overcloud Customization guide.

Otherwise, you can continue and deploy a basic overcloud. See Section 5.6, “Creating the Overcloud with the CLI Tools” for more information.

A basic overcloud uses local LVM storage for block storage, which is not a supported configuration. It is recommended to use an external storage solution for block storage.

5.6. Creating the Overcloud with the CLI Tools

The final stage in creating your OpenStack environment is to run the openstack overcloud deploy command to create it. Before running this command, you should familiarize yourself with key options and how to include custom environment files. The following section discusses the openstack overcloud deploy command and the options associated with it.

Do not run openstack overcloud deploy as a background process. The overcloud creation might hang in mid-deployment if started as a background process.

Setting Overcloud Parameters

The following table lists the additional parameters when using the openstack overcloud deploy command.

Table 5.2. Deployment Parameters

| Parameter | Description |

|---|---|

|

|

The directory containing the Heat templates to deploy. If blank, the command uses the default template location at |

|

| The name of the stack to create or update |

|

| Deployment timeout in minutes |

|

| Virtualization type to use for hypervisors |

|

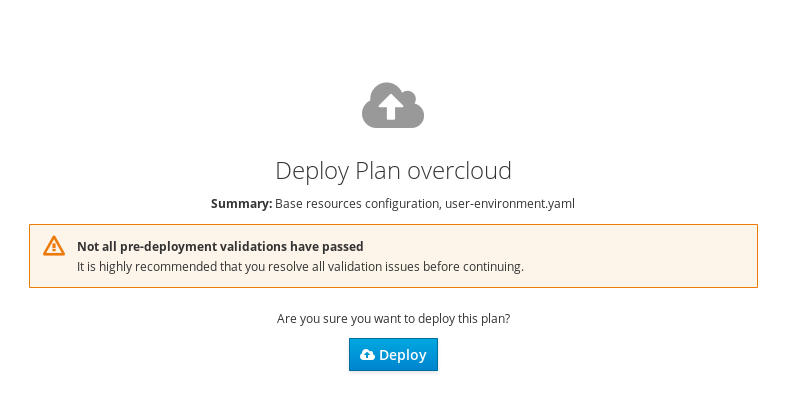

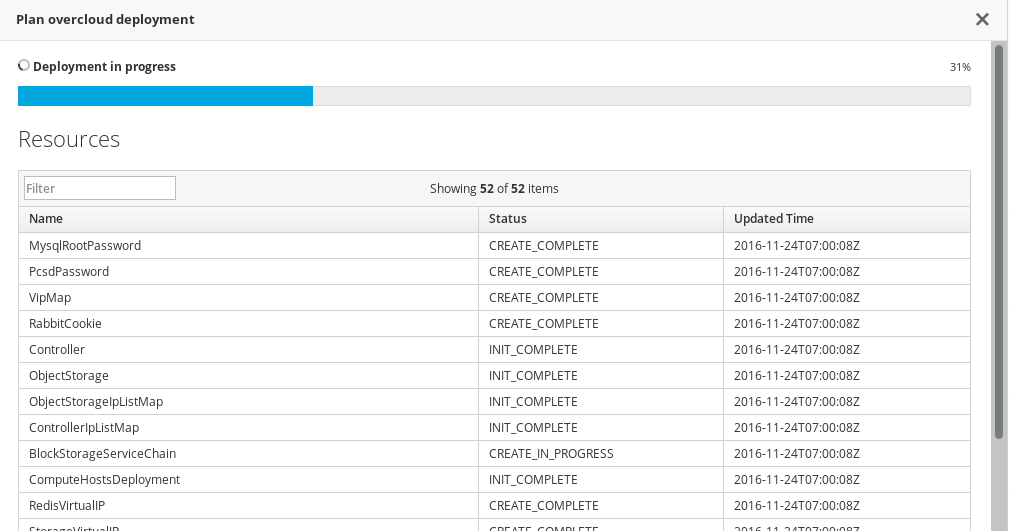

|