Chapter 3. Dynamically provisioned OpenShift Container Storage deployed on Red Hat Virtualization

To replace an operational or failed storage device on Red Hat Virtualization installer-provisioned infrastructure, perform the steps in the following section.

3.1. Replacing operational or failed storage devices on Red Hat Virtualization installer-provisioned infrastructure

If you want to replace one or more virtual machine disks (VMDK) in OpenShift Container Storage deployed on Red Hat Virtualization infrastructure, follow this procedure. It creates a new Persistent Volume Claim (PVC) on a new volume and removes the old object storage device (OSD).

Prerequisites

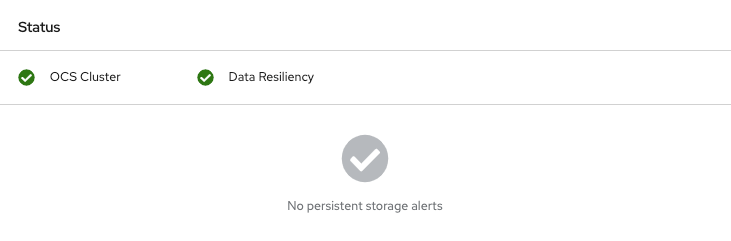

Ensure that the data is resilient.

- On the OpenShift Web console, navigate to Storage → Overview.

- Under Block and File in the Status card, confirm that the Data Resiliency has a green tick mark.

Procedure

Identify the OSD to be replaced and the OpenShift Container Platform node that has the OSD scheduled on it.

$ oc get -n openshift-storage pods -l app=rook-ceph-osd -o wide

Example output:

rook-ceph-osd-0-6d77d6c7c6-m8xj6   0/1   CrashLoopBackOff   0   24h   10.129.0.16   compute-2   <none>   <none>
rook-ceph-osd-1-85d99fb95f-2svc7   1/1   Running            0   24h   10.128.2.24   compute-0   <none>   <none>
rook-ceph-osd-2-6c66cdb977-jp542   1/1   Running            0   24h   10.130.0.18   compute-1   <none>   <none>

In this example, rook-ceph-osd-0-6d77d6c7c6-m8xj6 needs to be replaced and compute-2 is the OpenShift Container Platform node on which the OSD is scheduled.

Note: If the OSD to be replaced is healthy, the status of the pod is Running.
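When many OSD pods are listed, the failed ones can be filtered out of the wide listing mechanically. The following is a minimal sketch, not part of the official procedure: list_unhealthy_osds is a hypothetical helper name, and it assumes the default column layout in which STATUS is the third column and the node name is the third field from the end. It finds only failed OSDs; a healthy OSD that you want to replace still shows Running and must be chosen by hand.

```shell
# Hypothetical helper: reads `oc get pods -o wide` output on stdin and prints
# "<pod name> <node>" for every pod whose STATUS is not Running.
# Assumption: STATUS is column 3 and NODE is the third field from the end
# (followed by the NOMINATED NODE and READINESS GATES columns).
list_unhealthy_osds() {
  awk '$1 != "NAME" && $3 != "Running" { print $1, $(NF-2) }'
}

# Usage (requires a logged-in oc session):
#   oc get -n openshift-storage pods -l app=rook-ceph-osd -o wide | list_unhealthy_osds
```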

Scale down the OSD deployment for the OSD to be replaced.

Every time you want to replace an OSD, repeat this step, updating the osd_id_to_remove parameter with the OSD ID.

$ osd_id_to_remove=0
$ oc scale -n openshift-storage deployment rook-ceph-osd-${osd_id_to_remove} --replicas=0

where osd_id_to_remove is the integer in the pod name immediately after the rook-ceph-osd prefix. In this example, the deployment name is rook-ceph-osd-0.

Example output:

deployment.extensions/rook-ceph-osd-0 scaled
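The OSD ID can also be derived from the pod name instead of being typed by hand, which reduces the risk of scaling down the wrong deployment. This is a minimal sketch that assumes pod names follow the rook-ceph-osd-<id>-<hash>-<suffix> pattern seen in the example output; osd_id_from_pod is a hypothetical helper, not an oc feature.

```shell
# Hypothetical helper: prints the OSD ID embedded in an OSD pod name.
# Assumption: the name looks like rook-ceph-osd-<id>-<hash>-<suffix>.
osd_id_from_pod() {
  # Strip the fixed prefix, then drop everything from the next hyphen onward.
  rest=${1#rook-ceph-osd-}
  id=${rest%%-*}
  # Reject anything that is not a plain non-negative integer.
  case $id in
    ''|*[!0-9]*) echo "cannot parse OSD ID from: $1" >&2; return 1 ;;
  esac
  printf '%s\n' "$id"
}

# Usage:
#   osd_id_to_remove=$(osd_id_from_pod rook-ceph-osd-0-6d77d6c7c6-m8xj6)
```

The helper fails with a non-zero status on names that do not match, so a typo stops the script before any oc command runs.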

Verify that the rook-ceph-osd pod is terminated.

$ oc get -n openshift-storage pods -l ceph-osd-id=${osd_id_to_remove}

Example output:

No resources found.

Note: If the rook-ceph-osd pod is in the terminating state, use the force option to delete the pod.

$ oc delete pod rook-ceph-osd-0-6d77d6c7c6-m8xj6 --force --grace-period=0

Example output:

warning: Immediate deletion does not wait for confirmation that the running resource has been terminated. The resource may continue to run on the cluster indefinitely.
pod "rook-ceph-osd-0-6d77d6c7c6-m8xj6" force deleted

Remove the old OSD from the cluster to add a new OSD.

Delete any old ocs-osd-removal jobs.

$ oc delete -n openshift-storage job ocs-osd-removal-job

Example output:

job.batch "ocs-osd-removal-job" deleted

Change to the openshift-storage project.

$ oc project openshift-storage

Remove the old OSD from the cluster.

$ oc process -n openshift-storage ocs-osd-removal -p FAILED_OSD_IDS=${osd_id_to_remove} | oc create -n openshift-storage -f -

You can remove more than one OSD by adding comma-separated OSD IDs in the command (for example, FAILED_OSD_IDS=0,1,2).

Warning: This step results in the OSD being completely removed from the cluster. Ensure that the correct value of osd_id_to_remove is provided.
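Because a wrong value here removes the wrong OSD, it can help to validate the IDs before building the FAILED_OSD_IDS value. The sketch below is illustrative only: build_failed_osd_ids is a hypothetical helper that accepts OSD IDs as arguments, rejects anything that is not a non-negative integer, and prints them joined by commas.

```shell
# Hypothetical helper: validates OSD IDs and joins them with commas,
# producing a value suitable for the FAILED_OSD_IDS template parameter.
build_failed_osd_ids() {
  ids=''
  for id in "$@"; do
    case $id in
      ''|*[!0-9]*) echo "not an OSD ID: $id" >&2; return 1 ;;
    esac
    # Append with a comma separator, except before the first ID.
    ids=${ids:+$ids,}$id
  done
  [ -n "$ids" ] || { echo "no OSD IDs given" >&2; return 1; }
  printf '%s\n' "$ids"
}

# Usage:
#   failed_ids=$(build_failed_osd_ids 0 1 2) &&
#     oc process -n openshift-storage ocs-osd-removal -p FAILED_OSD_IDS="$failed_ids" \
#       | oc create -n openshift-storage -f -
```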

Verify that the OSD is removed successfully by checking the status of the ocs-osd-removal pod. A status of Completed confirms that the OSD removal job succeeded.

$ oc get pod -l job-name=ocs-osd-removal-job -n openshift-storage

Note: If ocs-osd-removal fails and the pod is not in the expected Completed state, check the pod logs for further debugging. For example:

$ oc logs -l job-name=ocs-osd-removal-job -n openshift-storage --tail=-1
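In a script, the status check above can be turned into a polling loop. The following is a sketch under stated assumptions rather than a documented workflow: wait_for_osd_removal is a hypothetical helper, the timeout and interval defaults are arbitrary, and it assumes the job has exactly one pod. It relies on the fact that a pod shown as Completed in the STATUS column has the phase Succeeded.

```shell
# Hypothetical helper: polls the removal pod until it finishes.
# Arguments: timeout in seconds (default 300), poll interval in seconds (default 5).
# Returns 0 if the pod phase is Succeeded (displayed as Completed),
# 1 if it is Failed, and 2 if the timeout expires first.
# Assumption: the job has a single pod; several pods yield a combined
# phase string that matches neither case and ends in a timeout.
wait_for_osd_removal() {
  timeout_s=${1:-300}
  interval_s=${2:-5}
  waited=0
  while [ "$waited" -le "$timeout_s" ]; do
    phase=$(oc get pod -l job-name=ocs-osd-removal-job -n openshift-storage \
              -o jsonpath='{.items[*].status.phase}' 2>/dev/null)
    case $phase in
      Succeeded) return 0 ;;
      Failed)    return 1 ;;
    esac
    sleep "$interval_s"
    waited=$((waited + interval_s))
  done
  return 2
}

# Usage:
#   wait_for_osd_removal 600 10 ||
#     oc logs -l job-name=ocs-osd-removal-job -n openshift-storage --tail=-1
```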

If encryption was enabled at the time of install, remove the dm-crypt managed device-mapper mapping from the OSD devices that are removed from the respective OpenShift Container Storage nodes.

Get the PVC names of the replaced OSDs from the logs of the ocs-osd-removal-job pod:

$ oc logs -l job-name=ocs-osd-removal-job -n openshift-storage --tail=-1 | egrep -i 'pvc|deviceset'

For example:

2021-05-12 14:31:34.666000 I | cephosd: removing the OSD PVC "ocs-deviceset-xxxx-xxx-xxx-xxx"
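To reuse the PVC names in later commands, they can be extracted from the log text instead of copied by eye. This is a minimal sketch that assumes the log line format shown in the example above; pvc_names_from_removal_log is a hypothetical helper name.

```shell
# Hypothetical helper: reads removal-job log text on stdin and prints each
# PVC name that appears in a 'removing the OSD PVC "<name>"' message, once.
# Assumption: the log wording matches the example line in this procedure.
pvc_names_from_removal_log() {
  sed -n 's/.*removing the OSD PVC "\([^"]*\)".*/\1/p' | sort -u
}

# Usage:
#   oc logs -l job-name=ocs-osd-removal-job -n openshift-storage --tail=-1 \
#     | pvc_names_from_removal_log
```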

For each of the nodes identified in the previous step, perform the following:

Create a debug pod and chroot to the host on the storage node.

$ oc debug node/<node name>
$ chroot /host

Find the relevant device name based on the PVC names identified in the previous step.

sh-4.4# dmsetup ls | grep <pvc name>
ocs-deviceset-xxx-xxx-xxx-xxx-block-dmcrypt (253:0)

Remove the mapped device.

$ cryptsetup luksClose --debug --verbose ocs-deviceset-xxx-xxx-xxx-xxx-block-dmcrypt

Note: If the above command gets stuck due to insufficient privileges, run the following commands:

- Press CTRL+Z to exit the above command.

- Find the PID of the process that is stuck.

$ ps -ef | grep crypt

- Terminate the process using the kill command.

$ kill -9 <PID>

- Verify that the device name is removed.

$ dmsetup ls

Delete the ocs-osd-removal job.

$ oc delete -n openshift-storage job ocs-osd-removal-job

Example output:

job.batch "ocs-osd-removal-job" deleted

When using an external key management system (KMS) with data encryption, the old OSD encryption key can be removed from the Vault server as it is now an orphan key.

Verification steps

Verify there is a new OSD running.

$ oc get -n openshift-storage pods -l app=rook-ceph-osd

Example output:

rook-ceph-osd-0-5f7f4747d4-snshw   1/1   Running   0   4m47s
rook-ceph-osd-1-85d99fb95f-2svc7   1/1   Running   0   1d20h
rook-ceph-osd-2-6c66cdb977-jp542   1/1   Running   0   1d20h

Verify that a new PVC is created and is in the Bound state.

$ oc get -n openshift-storage pvc
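If the namespace holds many PVCs, filtering for those that are not yet Bound makes a stuck claim easier to spot. The sketch below is an illustration only; list_unbound_pvcs is a hypothetical helper, and it assumes the default table layout in which STATUS is the second column.

```shell
# Hypothetical helper: reads `oc get pvc` output on stdin and prints
# "<pvc name> <status>" for every claim whose STATUS is not Bound.
# Assumption: NAME is column 1 and STATUS is column 2 (default output).
list_unbound_pvcs() {
  awk '$1 != "NAME" && $2 != "Bound" { print $1, $2 }'
}

# Usage:
#   oc get -n openshift-storage pvc | list_unbound_pvcs
```

No output means every listed PVC is Bound.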

Optional: If cluster-wide encryption is enabled on the cluster, verify that the new OSD devices are encrypted.

Identify the nodes where the new OSD pods are running.

$ oc get -o=custom-columns=NODE:.spec.nodeName pod/<OSD pod name>

For example:

$ oc get -o=custom-columns=NODE:.spec.nodeName pod/rook-ceph-osd-0-544db49d7f-qrgqm

For each of the nodes identified in the previous step, perform the following:

Create a debug pod and open a chroot environment for the selected hosts.

$ oc debug node/<node name>
$ chroot /host

Run lsblk and check for the crypt keyword next to the ocs-deviceset names.

$ lsblk
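The visual check of the lsblk output can be approximated with a filter. This is a sketch, assuming lsblk is run with the list, no-headings, and column-selection options (-l, -n, -o NAME,TYPE) so that each line is just a device name and its type; list_encrypted_ocs_devices is a hypothetical helper name.

```shell
# Hypothetical helper: reads `lsblk -lno NAME,TYPE` output on stdin and prints
# the names of ocs-deviceset devices whose type is crypt.
list_encrypted_ocs_devices() {
  awk '$1 ~ /ocs-deviceset/ && $2 == "crypt" { print $1 }'
}

# Usage (inside the debug pod, after chroot /host):
#   lsblk -lno NAME,TYPE | list_encrypted_ocs_devices
```

An empty result on a node that hosts a new OSD suggests that the device is not encrypted and is worth checking manually with plain lsblk.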

Log in to the OpenShift Web Console and view the storage dashboard.

Figure 3.1. OSD status in OpenShift Container Platform storage dashboard after device replacement