Chapter 2. Deploying using local storage devices

Deploying OpenShift Container Storage on OpenShift Container Platform using local storage devices lets you create internal cluster resources. The base services are then provisioned internally, which makes additional storage classes available to applications.

Use this section to deploy OpenShift Container Storage on Amazon EC2 storage optimized I3 instances where OpenShift Container Platform is already installed.

Installing OpenShift Container Storage on Amazon EC2 storage optimized I3 instances using the Local Storage Operator is a Technology Preview feature. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

Red Hat OpenShift Container Storage deployment assumes a new cluster, without any application or other workload running on the 3 worker nodes. Applications should run on additional worker nodes.

2.1. Overview of deploying with internal local storage

To deploy Red Hat OpenShift Container Storage using local storage, follow these steps:

- Understand the requirements for installing OpenShift Container Storage using local storage devices.

- For Red Hat Enterprise Linux based hosts, enable file system access for containers on Red Hat Enterprise Linux based nodes.

Note: Skip this step for Red Hat Enterprise Linux CoreOS (RHCOS).

- Install the Red Hat OpenShift Container Storage Operator.

- Install Local Storage Operator.

- Find the available storage devices.

- Create the OpenShift Container Storage cluster service on the Amazon EC2 storage optimized i3en.2xlarge instance type.

2.2. Requirements for installing OpenShift Container Storage using local storage devices

You must have at least three OpenShift Container Platform worker nodes in the cluster with locally attached storage devices on each of them.

- Each of the three selected nodes must have at least one raw block device available to be used by OpenShift Container Storage.

- For minimum starting node requirements, see the Resource requirements section in the Planning guide.

- The devices to be used must be empty, that is, there should be no PVs, VGs, or LVs remaining on the disks.

You must have a minimum of three labeled nodes.

- Ensure that the Nodes are spread across different Locations/Availability Zones for a multiple availability zones platform.

Each node that has local storage devices to be used by OpenShift Container Storage must have a specific label to deploy OpenShift Container Storage pods. To label the nodes, use the following command:

$ oc label nodes <NodeNames> cluster.ocs.openshift.io/openshift-storage=''

- There should not be any storage providers managing locally mounted storage on the storage nodes that would conflict with the use of Local Storage Operator for Red Hat OpenShift Container Storage.

- The Local Storage Operator version must match the Red Hat OpenShift Container Platform version in order to have the Local Storage Operator fully supported with Red Hat OpenShift Container Storage. The Local Storage Operator does not get upgraded when Red Hat OpenShift Container Platform is upgraded.
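The version requirement above can be checked with a small comparison. The following is a minimal sketch, not a supported tool: the function name `same_minor_stream` is hypothetical, and it assumes that "match" means the two versions share the same major.minor (x.y) stream, which the text does not spell out. The version strings in the example are made up.

```shell
# Hypothetical helper (assumption: "match" means same x.y stream).
# Prints "match" when both versions share major.minor, else "mismatch".
same_minor_stream() {
  a=$(printf '%s\n' "$1" | cut -d. -f1,2)
  b=$(printf '%s\n' "$2" | cut -d. -f1,2)
  if [ "$a" = "$b" ]; then
    echo match
  else
    echo mismatch
  fi
}

# Made-up version strings, for illustration only.
same_minor_stream 4.5.11 4.5.0   # prints "match"
same_minor_stream 4.6.1 4.5.0    # prints "mismatch"
```

Because the Local Storage Operator is not upgraded together with OpenShift Container Platform, a check like this is most useful right after a platform upgrade.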

2.3. Enabling file system access for containers on Red Hat Enterprise Linux based nodes

Deploying OpenShift Container Platform on a Red Hat Enterprise Linux base in a user provisioned infrastructure (UPI) does not automatically provide container access to the underlying Ceph file system.

This process is not necessary for hosts based on Red Hat Enterprise Linux CoreOS.

Procedure

Perform the following steps on each node in your cluster.

- Log in to the Red Hat Enterprise Linux based node and open a terminal.

Verify that the node has access to the rhel-7-server-extras-rpms repository.

# subscription-manager repos --list-enabled | grep rhel-7-server

If you do not see both rhel-7-server-rpms and rhel-7-server-extras-rpms in the output, or if there is no output, run the following commands to enable each repository.

# subscription-manager repos --enable=rhel-7-server-rpms
# subscription-manager repos --enable=rhel-7-server-extras-rpms

Install the required packages.

# yum install -y policycoreutils container-selinux

Persistently enable container use of the Ceph file system in SELinux.

# setsebool -P container_use_cephfs on
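To confirm that the boolean is set, you can inspect the output of `getsebool`, which reports booleans in the form `name --> on` or `name --> off`. The sketch below is illustrative only: the function name `sebool_is_on` is hypothetical, and sample output is piped in so that the snippet runs without SELinux tooling.

```shell
# Hypothetical helper: read `getsebool` output on stdin and succeed only
# if the named boolean is reported as "on".
sebool_is_on() {
  awk -v name="$1" '
    $1 == name && $2 == "-->" && $3 == "on" { found = 1 }
    END { exit !found }
  '
}

# On a node you might run:
#   getsebool container_use_cephfs | sebool_is_on container_use_cephfs
# Here a sample line stands in for the real command output.
if printf 'container_use_cephfs --> on\n' | sebool_is_on container_use_cephfs; then
  echo "container_use_cephfs is enabled"
fi
```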

2.4. Installing Red Hat OpenShift Container Storage Operator

You can install Red Hat OpenShift Container Storage Operator using the Red Hat OpenShift Container Platform Operator Hub. For information about the hardware and software requirements, see Planning your deployment.

Prerequisites

- You must be logged into the OpenShift Container Platform cluster.

- You must have at least three worker nodes in the OpenShift Container Platform cluster.

When you need to override the cluster-wide default node selector for OpenShift Container Storage, you can use the following command in the command line interface to specify a blank node selector for the openshift-storage namespace:

$ oc annotate namespace openshift-storage openshift.io/node-selector=

Procedure

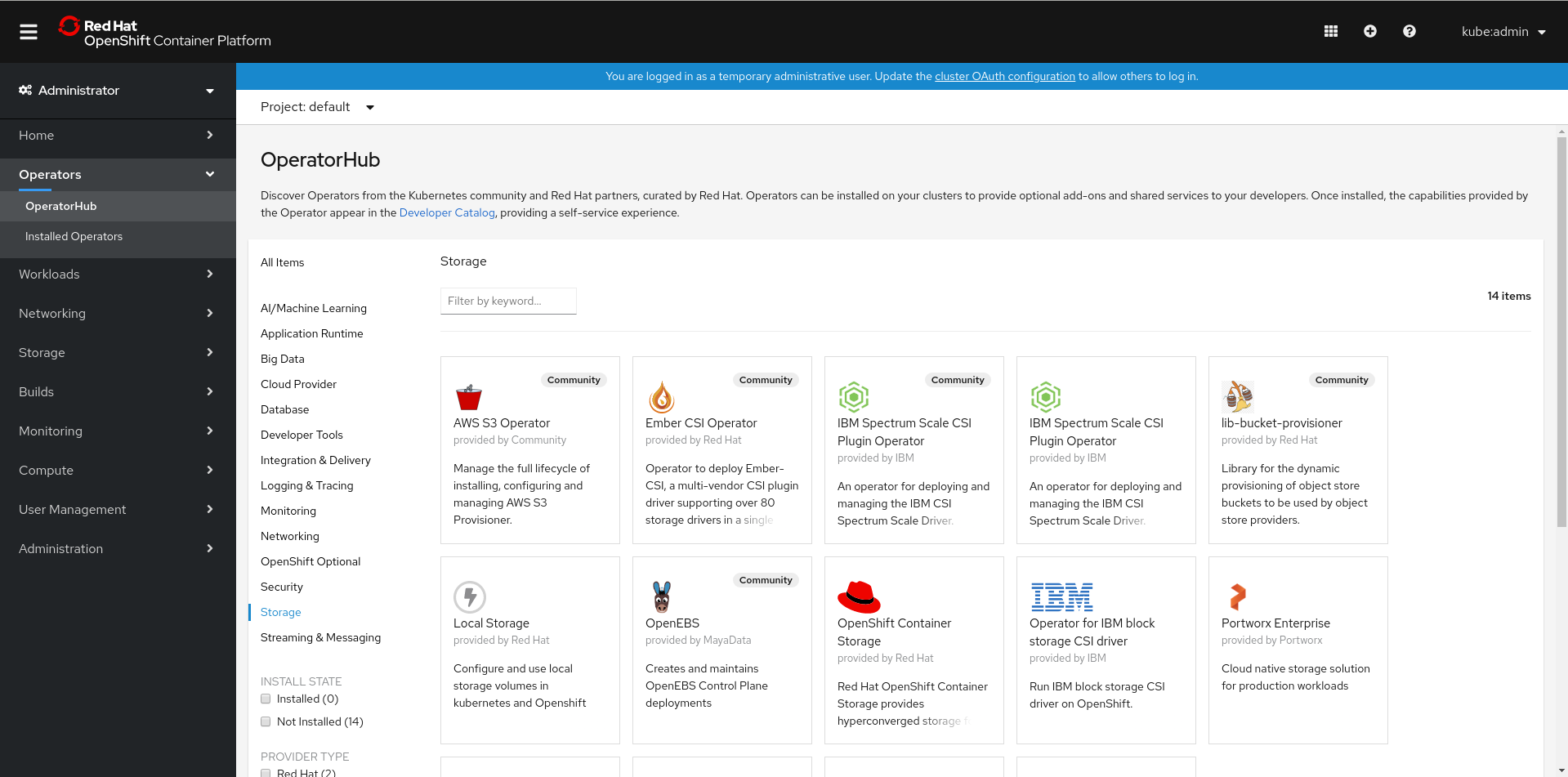

Click Operators → OperatorHub in the left pane of the OpenShift Web Console.

Figure 2.1. List of operators in the Operator Hub

Click on OpenShift Container Storage.

You can use the Filter by keyword text box or the filter list to search for OpenShift Container Storage from the list of operators.

- On the OpenShift Container Storage operator page, click Install.

On the Install Operator page, ensure the following options are selected:

- Update Channel as stable-4.5

- Installation Mode as A specific namespace on the cluster

- Installed Namespace as Operator recommended namespace openshift-storage. If the openshift-storage namespace does not exist, it is created during the operator installation.

- Select Approval Strategy as Automatic or Manual. Approval Strategy is set to Automatic by default.

Approval Strategy as Automatic.

Note: When you select the Approval Strategy as Automatic, approval is not required either during fresh installation or when updating to the latest version of OpenShift Container Storage.

- Click Install

- Wait for the install to initiate. This may take up to 20 minutes.

- Click Operators → Installed Operators

- Ensure the Project is openshift-storage. By default, the Project is openshift-storage.

- Wait for the Status of OpenShift Container Storage to change to Succeeded.

Approval Strategy as Manual.

Note: When you select the Approval Strategy as Manual, approval is required during fresh installation or when updating to the latest version of OpenShift Container Storage.

- Click Install.

- On the Installed Operators page, click ocs-operator.

- On the Subscription Details page, click the Install Plan link.

- On the InstallPlan Details page, click Preview Install Plan.

- Review the install plan and click Approve.

- Wait for the Status of the Components to change from Unknown to either Created or Present.

- Click Operators → Installed Operators

- Ensure the Project is openshift-storage. By default, the Project is openshift-storage.

- Wait for the Status of OpenShift Container Storage to change to Succeeded.

Verification steps

- Verify that OpenShift Container Storage Operator shows the Status as Succeeded on the Installed Operators dashboard.

2.5. Installing Local Storage Operator

Use this procedure to install the Local Storage Operator from the Operator Hub before creating OpenShift Container Storage clusters on local storage devices.

Prerequisites

Create a namespace called local-storage as follows:

- Click Administration → Namespaces in the left pane of the OpenShift Web Console.

- Click Create Namespace.

- In the Create Namespace dialog box, enter local-storage for Name.

- Select No restrictions option for Default Network Policy.

- Click Create.

Procedure

- Click Operators → OperatorHub in the left pane of the OpenShift Web Console.

- Search for Local Storage Operator from the list of operators and click on it.

Click Install.

Figure 2.2. Install Operator page

On the Install Operator page, ensure the following options are selected

- Update Channel as stable-4.5

- Installation Mode as A specific namespace on the cluster

- Installed Namespace as local-storage.

- Approval Strategy as Automatic

- Click Install.

- Verify that the Local Storage Operator shows the Status as Succeeded.

2.6. Finding available storage devices

Use this procedure to identify the device names for each of the three or more nodes that you have labeled with the OpenShift Container Storage label cluster.ocs.openshift.io/openshift-storage='' before creating PVs.

Procedure

List and verify the name of the nodes with the OpenShift Container Storage label.

$ oc get nodes -l cluster.ocs.openshift.io/openshift-storage=

Example output:

NAME                                         STATUS   ROLES    AGE     VERSION
ip-10-0-135-71.us-east-2.compute.internal    Ready    worker   6h45m   v1.16.2
ip-10-0-145-125.us-east-2.compute.internal   Ready    worker   6h45m   v1.16.2
ip-10-0-160-91.us-east-2.compute.internal    Ready    worker   6h45m   v1.16.2

Log in to each node that is used for OpenShift Container Storage resources and find the unique by-id device name for each available raw block device.

$ oc debug node/<Nodename>

Example output:

$ oc debug node/ip-10-0-135-71.us-east-2.compute.internal
Starting pod/ip-10-0-135-71us-east-2computeinternal-debug ...
To use host binaries, run `chroot /host`
Pod IP: 10.0.135.71
If you don't see a command prompt, try pressing enter.
sh-4.2# chroot /host
sh-4.4# lsblk
NAME                         MAJ:MIN RM   SIZE RO TYPE MOUNTPOINT
xvda                         202:0    0   120G  0 disk
|-xvda1                      202:1    0   384M  0 part /boot
|-xvda2                      202:2    0   127M  0 part /boot/efi
|-xvda3                      202:3    0     1M  0 part
`-xvda4                      202:4    0 119.5G  0 part
  `-coreos-luks-root-nocrypt 253:0    0 119.5G  0 dm   /sysroot
nvme0n1                      259:0    0   2.3T  0 disk
nvme1n1                      259:1    0   2.3T  0 disk

In this example, for the selected node, the local devices available are nvme0n1 and nvme1n1.

Identify the unique ID for each of the devices selected in Step 2.

sh-4.4# ls -l /dev/disk/by-id/ | grep Storage
lrwxrwxrwx. 1 root root 13 Mar 17 16:24 nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441494EC -> ../../nvme0n1
lrwxrwxrwx. 1 root root 13 Mar 17 16:24 nvme-Amazon_EC2_NVMe_Instance_Storage_AWS60382E5D7441494EC -> ../../nvme1n1

In the example above, the IDs for the two local devices are

- nvme0n1: nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441494EC

- nvme1n1: nvme-Amazon_EC2_NVMe_Instance_Storage_AWS60382E5D7441494EC

- Repeat the above step to identify the device ID for all the other nodes that have the storage devices to be used by OpenShift Container Storage. See this Knowledge Base article for more details.
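When repeating this on several nodes, it can help to reduce the `ls -l /dev/disk/by-id/` listing to a device-to-ID mapping. The following is a minimal sketch: the function name `by_id_map` is hypothetical, and it only assumes that each symlink line contains `<id> -> <target>` as in the example output above.

```shell
# Hypothetical helper: turn `ls -l /dev/disk/by-id/` lines read from stdin
# into "<device>: <by-id name>" pairs.
by_id_map() {
  awk '{
    for (i = 2; i < NF; i++) {
      if ($i == "->") {
        # Keep only the last path component of the symlink target.
        n = split($(i + 1), parts, "/")
        print parts[n] ": " $(i - 1)
      }
    }
  }'
}

# Sample input copied from the example output in this section.
by_id_map <<'EOF'
lrwxrwxrwx. 1 root root 13 Mar 17 16:24 nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441494EC -> ../../nvme0n1
lrwxrwxrwx. 1 root root 13 Mar 17 16:24 nvme-Amazon_EC2_NVMe_Instance_Storage_AWS60382E5D7441494EC -> ../../nvme1n1
EOF
```

On a node, the same function could be fed directly, for example with `ls -l /dev/disk/by-id/ | grep Storage | by_id_map`.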

2.7. Creating OpenShift Container Storage cluster on Amazon EC2 storage optimized - i3en.2xlarge instance type

Use this procedure to create OpenShift Container Storage cluster on Amazon EC2 (storage optimized - i3en.2xlarge instance type) infrastructure, which will:

- Create PVs by using the LocalVolume CR

- Create a new StorageClass

The Amazon EC2 storage optimized - i3en.2xlarge instance type includes two non-volatile memory express (NVMe) disks. The example in this procedure illustrates the use of both the disks that the instance type comes with.

When you are using the ephemeral storage of Amazon EC2 I3:

- Use three availability zones to decrease the risk of losing all the data.

- Limit the number of users with ec2:StopInstances permissions to avoid instance shutdown by mistake.

It is not recommended to use ephemeral storage of Amazon EC2 I3 for OpenShift Container Storage persistent data, because stopping all the three nodes can cause data loss.

It is recommended to use ephemeral storage of Amazon EC2 I3 only in the following scenarios:

- Cloud burst, where data is copied from another location for a specific, time-limited data-crunching task

- Development or testing environment

Installing OpenShift Container Storage on Amazon EC2 storage optimized - i3en.2xlarge instance using local storage operator is a Technology Preview feature. Technology Preview features are not supported with Red Hat production service level agreements (SLAs) and might not be functionally complete. Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

Prerequisites

- Ensure that all the requirements in the Requirements for installing OpenShift Container Storage using local storage devices section are met.

Verify your OpenShift Container Platform worker nodes are labeled for OpenShift Container Storage, which is used as the nodeSelector.

$ oc get nodes -l cluster.ocs.openshift.io/openshift-storage -o jsonpath='{range .items[*]}{.metadata.name}{"\n"}'

Example output:

ip-10-0-135-71.us-east-2.compute.internal
ip-10-0-145-125.us-east-2.compute.internal
ip-10-0-160-91.us-east-2.compute.internal

Procedure

Create local persistent volumes (PVs) on the storage nodes using LocalVolume custom resource (CR).

Example of LocalVolume CR local-storage-block.yaml using the OpenShift Container Storage label as node selector and by-id device identifier:

apiVersion: local.storage.openshift.io/v1
kind: LocalVolume
metadata:
  name: local-block
  namespace: local-storage
  labels:
    app: ocs-storagecluster
spec:
  tolerations:
  - key: "node.ocs.openshift.io/storage"
    value: "true"
    effect: NoSchedule
  nodeSelector:
    nodeSelectorTerms:
    - matchExpressions:
        - key: cluster.ocs.openshift.io/openshift-storage
          operator: In
          values:
            - ''
  storageClassDevices:
    - storageClassName: localblock
      volumeMode: Block
      devicePaths:
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441494EC   # <-- modify this line
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS1F45C01D7E84FE3E9   # <-- modify this line
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS136BC945B4ECB9AE4   # <-- modify this line
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441464EP   # <-- modify this line
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS1F45C01D7E84F43E7   # <-- modify this line
        - /dev/disk/by-id/nvme-Amazon_EC2_NVMe_Instance_Storage_AWS136BC945B4ECB9AE8   # <-- modify this line

Each Amazon EC2 I3 instance has two disks and this example uses both disks on each node.
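The devicePaths entries marked "modify this line" must be replaced with the by-id names collected from your own nodes. As a rough sketch, the hypothetical function `device_paths` below prints one list entry per ID passed as an argument. The eight-space indentation is an assumption; adjust it to match the nesting in your file. The IDs in the example are the two from the Finding available storage devices section.

```shell
# Hypothetical helper: print one YAML list entry per by-id name.
# The 8-space indent is an assumption about the surrounding file layout.
device_paths() {
  for id in "$@"; do
    printf '        - /dev/disk/by-id/%s\n' "$id"
  done
}

# Example using the two IDs found on the first node earlier in this chapter.
device_paths \
  nvme-Amazon_EC2_NVMe_Instance_Storage_AWS10382E5D7441494EC \
  nvme-Amazon_EC2_NVMe_Instance_Storage_AWS60382E5D7441494EC
```

The printed lines can be pasted under devicePaths in local-storage-block.yaml before the CR is created.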

Create the LocalVolume CR.

$ oc create -f local-storage-block.yaml

Example output:

localvolume.local.storage.openshift.io/local-block created

Check if the pods are created.

$ oc -n local-storage get pods

Example output:

NAME                                      READY   STATUS    RESTARTS   AGE
local-block-local-diskmaker-59rmn         1/1     Running   0          15m
local-block-local-diskmaker-6n7ct         1/1     Running   0          15m
local-block-local-diskmaker-jwtsn         1/1     Running   0          15m
local-block-local-provisioner-6ssxc       1/1     Running   0          15m
local-block-local-provisioner-swwvx       1/1     Running   0          15m
local-block-local-provisioner-zmv5j       1/1     Running   0          15m
local-storage-operator-7848bbd595-686dg   1/1     Running   0          15m

Check if the PVs are created.

You should see a new PV for each of the local storage devices on the three worker nodes. The example in the Finding available storage devices section shows two available storage devices per worker node, each with a size of 2.3 TiB.

$ oc get pv

Example output:

NAME                CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
local-pv-1a46bc79   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-429d90ee   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-4d0a62e3   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-55c05d76   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-5c7b0990   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-a6b283b    2328Gi     RWO            Delete           Available           localblock              14m
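With three nodes and two devices per node, this example expects six Available PVs in the localblock class. The sketch below counts them from `oc get pv` output read on stdin. The function name `count_available_localblock` is hypothetical, and the matching is a simple text match on the words Available and localblock rather than a column-aware parse.

```shell
# Hypothetical helper: count rows (header skipped) that mention both
# "Available" and "localblock" as whitespace-delimited words.
count_available_localblock() {
  awk 'NR > 1 && / Available / && / localblock / { n++ } END { print n + 0 }'
}

# Sample input based on the example output above; on a cluster you might
# run: oc get pv | count_available_localblock
count_available_localblock <<'EOF'
NAME                CAPACITY   ACCESS MODES   RECLAIM POLICY   STATUS      CLAIM   STORAGECLASS   REASON   AGE
local-pv-1a46bc79   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-429d90ee   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-4d0a62e3   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-55c05d76   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-5c7b0990   2328Gi     RWO            Delete           Available           localblock              14m
local-pv-a6b283b    2328Gi     RWO            Delete           Available           localblock              14m
EOF
```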

Check for the new StorageClass that is now present when the LocalVolume CR is created. This StorageClass is used to provide the StorageCluster PVCs in the following steps.

$ oc get sc | grep localblock

Example output:

NAME         PROVISIONER                    RECLAIMPOLICY   VOLUMEBINDINGMODE      ALLOWVOLUMEEXPANSION   AGE
localblock   kubernetes.io/no-provisioner   Delete          WaitForFirstConsumer   false                  15m

Create the StorageCluster CR that uses the localblock StorageClass to consume the PVs created by the Local Storage Operator.

Example of StorageCluster CR ocs-cluster-service.yaml using monDataDirHostPath and localblock StorageClass.

apiVersion: ocs.openshift.io/v1
kind: StorageCluster
metadata:
  name: ocs-storagecluster
  namespace: openshift-storage
spec:
  manageNodes: false
  resources:
    mds:
      limits:
        cpu: 3
        memory: 8Gi
      requests:
        cpu: 1
        memory: 8Gi
  monDataDirHostPath: /var/lib/rook
  storageDeviceSets:
  - count: 2
    dataPVCTemplate:
      spec:
        accessModes:
        - ReadWriteOnce
        resources:
          requests:
            storage: 2328Gi
        storageClassName: localblock
        volumeMode: Block
    name: ocs-deviceset
    placement: {}
    portable: false
    replica: 3
    resources:
      limits:
        cpu: 2
        memory: 5Gi
      requests:
        cpu: 1
        memory: 5Gi

Important: To ensure that the OSDs have a guaranteed size across the nodes, the storage size for storageDeviceSets must be specified as less than or equal to the size of the PVs created on the nodes.

Create StorageCluster CR.

$ oc create -f ocs-cluster-service.yaml

Example output:

storagecluster.ocs.openshift.io/ocs-storagecluster created
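The size rule for storageDeviceSets (requested storage less than or equal to the PV size) can be sanity-checked before creating the CR. This is a minimal sketch with a hypothetical function name, `fits_in_pv`, and it assumes both values are whole numbers with a Gi suffix, as in the examples in this procedure.

```shell
# Hypothetical helper: succeed when the requested size (for example
# "2328Gi") is less than or equal to the PV capacity.
# Assumption: both values are whole numbers with a "Gi" suffix.
fits_in_pv() {
  req=${1%Gi}
  cap=${2%Gi}
  [ "$req" -le "$cap" ]
}

# Values taken from the example StorageCluster CR and PV listing.
if fits_in_pv 2328Gi 2328Gi; then
  echo "requested size fits the PV"
fi
```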

Verification steps

See Verifying your OpenShift Container Storage installation.