6.4. SMB

You can access Red Hat Gluster Storage volumes using the Server Message Block (SMB) protocol by exporting directories in Red Hat Gluster Storage volumes as SMB shares on the server.

This section describes how to enable SMB shares, how to mount SMB shares manually and automatically on Microsoft Windows and macOS based clients, and how to verify that the share has been mounted successfully.

Warning

When performance translators are enabled, data inconsistency is observed when multiple clients access the same data. To avoid data inconsistency, you can either disable the performance translators or avoid such workloads.

Follow the process outlined below. The details of this overview are provided in the rest of this section.

Overview of configuring SMB shares

- Verify that your system fulfils the requirements outlined in Section 6.4.1, “Requirements for using SMB with Red Hat Gluster Storage”.

- If you want to share volumes that use replication, set up CTDB: Section 6.4.2, “Setting up CTDB for Samba”.

- Configure your volumes to be shared using SMB: Section 6.4.3, “Sharing Volumes over SMB”.

- If you want to mount volumes on macOS clients: Section 6.4.4.1, “Configuring the Apple Create Context for macOS users”.

- Set up permissions for user access: Section 6.4.4.2, “Configuring read/write access for a non-privileged user”.

- Mount the shared volume on a client: Section 6.4.5, “Mounting Volumes using SMB”.

- Verify that your shared volume is working properly: Section 6.4.6, “Starting and Verifying your Configuration”.

6.4.1. Requirements for using SMB with Red Hat Gluster Storage

- Samba is required to provide support and interoperability for the SMB protocol on Red Hat Gluster Storage. Additionally, CTDB is required when you want to share replicated volumes using SMB. See Subscribing to the Red Hat Gluster Storage server channels in the Red Hat Gluster Storage 3.5 Installation Guide for information on subscribing to the correct channels for SMB support.

- Enable the Samba firewall service in the active zones for runtime and permanent mode. The following commands are for systems based on Red Hat Enterprise Linux 7.

To get a list of active zones, run the following command:

# firewall-cmd --get-active-zones

To allow the firewall services in the active zones, run the following commands:

# firewall-cmd --zone=zone_name --add-service=samba

# firewall-cmd --zone=zone_name --add-service=samba --permanent
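The per-zone firewall commands can be generated for every active zone at once. The following is a minimal sketch, not part of the product tooling: the helper names `active_zone_names` and `samba_firewall_commands` are hypothetical, and the parsing assumes that `firewall-cmd --get-active-zones` prints each zone name on an unindented line followed by indented detail lines. The sketch only prints the commands so that they can be reviewed before they are run.

```shell
#!/bin/sh
# Sketch only: these helper names are illustrative and not shipped with
# Red Hat Gluster Storage.

# Read `firewall-cmd --get-active-zones` output on stdin and print one zone
# name per line. Assumes zone names start in the first column and that the
# detail lines (interfaces:, sources:) are indented.
active_zone_names() {
    awk '/^[^ \t]/ { print $1 }'
}

# Read zone names on stdin and print (not run) the runtime and permanent
# commands that allow the samba service in each zone.
samba_firewall_commands() {
    while IFS= read -r zone; do
        printf 'firewall-cmd --zone=%s --add-service=samba\n' "$zone"
        printf 'firewall-cmd --zone=%s --add-service=samba --permanent\n' "$zone"
    done
}
```

One possible use is `firewall-cmd --get-active-zones | active_zone_names | samba_firewall_commands`, followed by running the printed commands as root once they look correct.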

6.4.2. Setting up CTDB for Samba

If you want to share volumes that use replication using SMB, you need to configure CTDB (Cluster Trivial Database) to provide high availability and lock synchronization.

CTDB provides high availability by adding virtual IP addresses (VIPs) and a heartbeat service. When a node in the trusted storage pool fails, CTDB enables a different node to take over the virtual IP addresses that the failed node was hosting. This ensures the IP addresses for the services provided are always available.

Important

Amazon Elastic Compute Cloud (EC2) does not support VIPs and is hence not compatible with this solution.

Prerequisites

- If you already have an older version of CTDB (version <= ctdb1.x), then remove CTDB by executing the following command:

# yum remove ctdb

After removing the older version, proceed with installing the latest CTDB.

Note

Ensure that the system is subscribed to the samba channel to get the latest CTDB packages.

- Install the latest version of CTDB on all the nodes that are used as Samba servers using the following command:

# yum install ctdb

- In a CTDB based high availability environment of Samba, the locks will not be migrated on failover.

- Enable the CTDB firewall service in the active zones for runtime and permanent mode. The following commands are for systems based on Red Hat Enterprise Linux 7.

To get a list of active zones, run the following command:

# firewall-cmd --get-active-zones

To add ports to the active zones, run the following commands:

# firewall-cmd --zone=zone_name --add-port=4379/tcp

# firewall-cmd --zone=zone_name --add-port=4379/tcp --permanent

Best Practices

- CTDB requires a different broadcast domain from the Gluster internal network. The network used by the Windows clients to access the Gluster volumes exported by Samba must be different from the internal Gluster network. Failing to separate them can lead to long CTDB failover times between the nodes and degraded performance when accessing the shares from Windows.

For example, the following is an incorrect setup where CTDB is running in network 192.168.10.X:

Status of volume: ctdb

Gluster process TCP Port RDMA Port Online Pid

Brick node1:/rhgs/ctdb/b1 49157 0 Y 30439

Brick node2:/rhgs/ctdb/b1 49157 0 Y 3827

Brick node3:/rhgs/ctdb/b1 49157 0 Y 89421

Self-heal Daemon on localhost N/A N/A Y 183026

Self-heal Daemon on sesdel0207 N/A N/A Y 44245

Self-heal Daemon on segotl4158 N/A N/A Y 110627

cat ctdb_listnodes

192.168.10.1

192.168.10.2

cat ctdb_ip

Public IPs on node 0

192.168.10.3 0

Note

The host names node1, node2, and node3 are used to set up the bricks and resolve to IP addresses in the same network, 192.168.10.X. The Windows clients are therefore accessing the shares over the internal Gluster network, which should not be the case.

- Additionally, the CTDB network and the Gluster internal network must run on separate physical interfaces. Red Hat recommends 10GbE interfaces for better performance.

- It is recommended to use the same network bandwidth for the Gluster and CTDB networks. Using different network speeds can lead to performance bottlenecks. The same amount of network traffic is expected in both the internal and external networks.

Configuring CTDB on Red Hat Gluster Storage Server

- Create a new replicated volume to house the CTDB lock file. The lock file has a size of zero bytes, so use small bricks.

To create a replicated volume, run the following command, replacing N with the number of nodes to replicate across:

# gluster volume create volname replica N ip_address_1:brick_path ... ip_address_N:brick_path

For example:

# gluster volume create ctdb replica 3 10.16.157.75:/rhgs/brick1/ctdb/b1 10.16.157.78:/rhgs/brick1/ctdb/b2 10.16.157.81:/rhgs/brick1/ctdb/b3

- In the following files, replace all in the statement META="all" with the newly created volume name, for example, META="ctdb".

/var/lib/glusterd/hooks/1/start/post/S29CTDBsetup.sh

/var/lib/glusterd/hooks/1/stop/pre/S29CTDB-teardown.sh

- In the /etc/samba/smb.conf file, add the following line in the global section on all the nodes:

clustering=yes

- Start the volume.

# gluster volume start ctdb

The S29CTDBsetup.sh script runs on all Red Hat Gluster Storage servers, adds an entry in /etc/fstab for the mount, and mounts the volume at /gluster/lock on all the nodes with Samba server. It also enables automatic start of the CTDB service on reboot.

Note

When you stop the special CTDB volume, the S29CTDB-teardown.sh script runs on all Red Hat Gluster Storage servers, removes the entry in /etc/fstab for the mount, and unmounts the volume at /gluster/lock.

- Verify that the /etc/ctdb directory exists on all nodes that are used as a Samba server. This directory contains CTDB configuration details recommended for Red Hat Gluster Storage.

- Create the /etc/ctdb/nodes file on all the nodes that are used as Samba servers and add the IP addresses of these nodes to the file.

10.16.157.0

10.16.157.3

10.16.157.6

The IP addresses listed here are the private IP addresses of Samba servers.

- On nodes that are used as Samba servers and require IP failover, create the /etc/ctdb/public_addresses file. Add any virtual IP addresses that CTDB should create to the file in the following format:

VIP/routing_prefix network_interface

For example:

192.168.1.20/24 eth0

192.168.1.21/24 eth0

- Start the CTDB service on all the nodes.

On RHEL 7 and RHEL 8, run

# systemctl start ctdb

On RHEL 6, run

# service ctdb start
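Because the nodes and public_addresses files must be created on several servers, it can help to generate them from a single list. The following is a minimal sketch under stated assumptions: the function names `write_ctdb_nodes` and `write_ctdb_public_addresses` are hypothetical, all VIPs are assumed to share one network interface, and the target directory is passed as an argument so that the result can be inspected in a scratch directory before anything is written to /etc/ctdb.

```shell
#!/bin/sh
# Sketch only: hypothetical helpers for generating the two CTDB files
# described in this procedure.

# Usage: write_ctdb_nodes DIR IP...
# Writes one private IP address per line to DIR/nodes.
write_ctdb_nodes() {
    dir=$1
    shift
    printf '%s\n' "$@" > "$dir/nodes"
}

# Usage: write_ctdb_public_addresses DIR INTERFACE VIP/PREFIX...
# Writes "VIP/routing_prefix network_interface" lines to DIR/public_addresses.
write_ctdb_public_addresses() {
    dir=$1
    iface=$2
    shift 2
    for vip in "$@"; do
        printf '%s %s\n' "$vip" "$iface"
    done > "$dir/public_addresses"
}
```

For example, `write_ctdb_nodes /etc/ctdb 10.16.157.0 10.16.157.3 10.16.157.6` and `write_ctdb_public_addresses /etc/ctdb eth0 192.168.1.20/24 192.168.1.21/24` would reproduce the example files from this section.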

6.4.3. Sharing Volumes over SMB

After you follow this process, any gluster volumes configured on servers that run Samba are exported automatically on volume start.

See the following example of a default volume share section added to /etc/samba/smb.conf:

[gluster-VOLNAME]

comment = For samba share of volume VOLNAME

vfs objects = glusterfs

glusterfs:volume = VOLNAME

glusterfs:logfile = /var/log/samba/VOLNAME.log

glusterfs:loglevel = 7

path = /

read only = no

guest ok = yes

The configuration options are described in the following table:

Table 6.8. Configuration Options

| Configuration Options | Required? | Default Value | Description |
|---|---|---|---|
| Path | Yes | n/a | It represents the path that is relative to the root of the gluster volume that is being shared. Hence / represents the root of the gluster volume. Exporting a subdirectory of a volume is supported and /subdir in path exports only that subdirectory of the volume. |
| glusterfs:volume | Yes | n/a | The volume name that is shared. |
| glusterfs:logfile | No | NULL | Path to the log file that will be used by the gluster modules that are loaded by the vfs plugin. Standard Samba variable substitutions as mentioned in smb.conf are supported. |
| glusterfs:loglevel | No | 7 | This option is equivalent to the client-log-level option of gluster. 7 is the default value and corresponds to the INFO level. |
| glusterfs:volfile_server | No | localhost | The gluster server to be contacted to fetch the volfile for the volume. It takes the value, which is a list of white space separated elements, where each element is unix+/path/to/socket/file or [tcp+]IP\|hostname\|\[IPv6\][:port] |

The procedure to share volumes over Samba differs depending on the Samba version you use.

If you are using an older version of Samba:

- Enable SMB specific caching:

# gluster volume set VOLNAME performance.cache-samba-metadata on

You can also enable generic metadata caching to improve performance. See Section 19.7, “Directory Operations” for details.

- Restart the glusterd service on each Red Hat Gluster Storage node.

- Verify proper lock and I/O coherence:

# gluster volume set VOLNAME storage.batch-fsync-delay-usec 0

Note

For RHEL based Red Hat Gluster Storage upgrading to 3.5 batch update 4 with Samba, the write-behind translator has to be manually disabled for all existing Samba volumes.

# gluster volume set <volname> performance.write-behind off

If you are using Samba-4.8.5-104 or later:

- To export a gluster volume as an SMB share via Samba, one of the following volume options is required: user.cifs or user.smb.

To enable the user.cifs volume option, run:

# gluster volume set VOLNAME user.cifs enable

To enable the user.smb volume option, run:

# gluster volume set VOLNAME user.smb enable

Red Hat Gluster Storage 3.4 introduces a group command, samba, for configuring the necessary volume options for a Samba-CTDB setup.

- Execute the following command to configure the volume options for the Samba-CTDB setup:

# gluster volume set VOLNAME group samba

This command enables the following options for the Samba-CTDB setup:

- performance.readdir-ahead: on

- performance.parallel-readdir: on

- performance.nl-cache-timeout: 600

- performance.nl-cache: on

- performance.cache-samba-metadata: on

- network.inode-lru-limit: 200000

- performance.md-cache-timeout: 600

- performance.cache-invalidation: on

- features.cache-invalidation-timeout: 600

- features.cache-invalidation: on

- performance.stat-prefetch: on
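After applying the samba group, the resulting volume options can be compared against the list above. The following is a minimal sketch, not a supported tool: the function names are hypothetical, and it assumes that the output of `gluster volume get VOLNAME all` consists of lines whose first two whitespace-separated fields are the option name and its value. Header lines and unrelated options are ignored.

```shell
#!/bin/sh
# Sketch only: hypothetical helpers for checking the options set by
# "gluster volume set VOLNAME group samba".

# Expected option/value pairs, copied from the list in this section.
samba_group_expected() {
    cat <<'EOF'
performance.readdir-ahead on
performance.parallel-readdir on
performance.nl-cache-timeout 600
performance.nl-cache on
performance.cache-samba-metadata on
network.inode-lru-limit 200000
performance.md-cache-timeout 600
performance.cache-invalidation on
features.cache-invalidation-timeout 600
features.cache-invalidation on
performance.stat-prefetch on
EOF
}

# Read "option value" lines on stdin and print every expected option whose
# value differs or is missing. Returns non-zero if anything is reported.
check_samba_group() {
    actual=$(cat)
    status=0
    samba_group_expected | (
        while read -r opt want; do
            # Look up the value reported for this exact option name.
            got=$(printf '%s\n' "$actual" | awk -v o="$opt" '$1 == o { print $2; exit }')
            if [ "$got" != "$want" ]; then
                printf '%s: expected %s, found %s\n' "$opt" "$want" "${got:-nothing}"
                status=1
            fi
        done
        exit "$status"
    )
}
```

A possible invocation is `gluster volume get VOLNAME all | check_samba_group`, which prints nothing and returns zero when all listed options have the expected values.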

If you are using Samba-4.9.8-109 or later:

The following steps are optional. They are intended for environments where a large number of clients connect to volumes, or where many volumes are in use.

Red Hat Gluster Storage 3.5 introduces an optional method for configuring volume shares out of corresponding FUSE mounted paths. Perform the following steps on every node in the cluster.

- Create a local mount using the native Gluster protocol (FUSE) on every Gluster node that shares the Gluster volume via Samba. Mount the GlusterFS volume via FUSE and record the FUSE mountpoint for further steps.

Add an entry in /etc/fstab:

localhost:/myvol /mylocal glusterfs defaults,_netdev,acl 0 0

For example:

localhost:/myvol 4117504 1818292 2299212 45% /mylocal

Where the gluster volume is myvol, which will be mounted on /mylocal.

- Edit the samba share configuration file located at /etc/samba/smb.conf:

[gluster-VOLNAME]

comment = For samba share of volume VOLNAME

vfs objects = glusterfs

glusterfs:volume = VOLNAME

glusterfs:logfile = /var/log/samba/VOLNAME.log

glusterfs:loglevel = 7

path = /

read only = no

guest ok = yes

- Edit the vfs objects parameter value to glusterfs_fuse:

vfs objects = glusterfs_fuse

- Edit the path parameter value to the FUSE mountpoint recorded previously. For example:

path = /MOUNTDIR

- With SELinux in Enforcing mode, turn on the SELinux boolean samba_share_fusefs:

# setsebool -P samba_share_fusefs on

Note

- New volumes being created are automatically configured with the default vfs objects parameter.

- Modifications to the samba share configuration file are retained over restart of volumes until these volumes are deleted using the Gluster CLI.

- The Samba hook scripts invoked as part of Gluster CLI operations on a volume VOLNAME only operate on a Samba share named [gluster-VOLNAME]. In other words, hook scripts never delete or change the samba share configuration for a samba share called [VOLNAME].
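To make the target of the FUSE-based edits concrete, the following sketch prints what a share section could look like after the vfs objects and path parameters have been changed. It is illustrative only: the function name `fuse_share_section` is hypothetical, and leaving out the glusterfs:volume, glusterfs:logfile, and glusterfs:loglevel lines is an assumption based on the glusterfs_fuse example in Section 6.4.8.1, not a documented requirement.

```shell
#!/bin/sh
# Sketch only: prints a share section for review; it does not edit smb.conf.

# Usage: fuse_share_section VOLNAME MOUNTDIR
# MOUNTDIR is the local FUSE mountpoint recorded in the first step.
fuse_share_section() {
    volname=$1
    mountdir=$2
    cat <<EOF
[gluster-$volname]
comment = For samba share of volume $volname
vfs objects = glusterfs_fuse
path = $mountdir
read only = no
guest ok = yes
EOF
}
```

For example, `fuse_share_section myvol /mylocal` prints a section that can be compared with the edited [gluster-myvol] section in /etc/samba/smb.conf. Running `testparm -s` afterwards is one way to check that the edited file still parses.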

Then, for all Samba versions:

- Verify that the volume can be accessed from the SMB/CIFS share:

# smbclient -L <hostname> -U%

For example:

# smbclient -L rhs-vm1 -U%

Domain=[MYGROUP] OS=[Unix] Server=[Samba 4.1.17]

Sharename Type Comment

--------- ---- -------

IPC$ IPC IPC Service (Samba Server Version 4.1.17)

gluster-vol1 Disk For samba share of volume vol1

Domain=[MYGROUP] OS=[Unix] Server=[Samba 4.1.17]

Server Comment

--------- -------

Workgroup Master

--------- -------

- To verify that the SMB/CIFS share can be accessed by the user, run the following command:

# smbclient //<hostname>/gluster-<volname> -U <username>%<password>

For example:

# smbclient //10.0.0.1/gluster-vol1 -U root%redhat

Domain=[MYGROUP] OS=[Unix] Server=[Samba 4.1.17]

smb: \> mkdir test

smb: \> cd test\

smb: \test\> pwd

Current directory is \\10.0.0.1\gluster-vol1\test\

smb: \test\>

6.4.4. Configuring User Access to Shared Volumes

6.4.4.1. Configuring the Apple Create Context for macOS users

- Add the following lines to the [global] section of the smb.conf file. Note that the indentation level shown is required.

    fruit:aapl = yes

    ea support = yes

- Load the vfs_fruit module and its dependencies by adding the following line to your volume's export configuration block in the smb.conf file.

vfs objects = fruit streams_xattr glusterfs

For example:

[gluster-volname]

comment = For samba share of volume smbshare

vfs objects = fruit streams_xattr glusterfs

glusterfs:volume = volname

glusterfs:logfile = /var/log/samba/glusterfs-volname-fruit.%M.log

glusterfs:loglevel = 7

path = /

read only = no

guest ok = yes

fruit:encoding = native

6.4.4.2. Configuring read/write access for a non-privileged user

- Add the user on all the Samba servers based on your configuration:

# adduser username

- Add the user to the list of Samba users on all Samba servers and assign password by executing the following command:

# smbpasswd -a username

- From any other Samba server, mount the volume using the FUSE protocol.

# mount -t glusterfs -o acl ip-address:/volname /mountpoint

For example:

# mount -t glusterfs -o acl rhs-a:/repvol /mnt

- Use the setfacl command to provide the required permissions for directory access to the user.

# setfacl -m user:username:rwx mountpoint

For example:

# setfacl -m user:cifsuser:rwx /mnt

6.4.5. Mounting Volumes using SMB

6.4.5.1. Manually mounting volumes exported with SMB on Red Hat Enterprise Linux

- Install the cifs-utils package on the client.

# yum install cifs-utils

- Run mount -t cifs to mount the exported SMB share, using the syntax example as guidance.

# mount -t cifs -o user=username,pass=password //hostname/gluster-volname /mountpoint

The sec=ntlmssp parameter is also required when mounting a volume on Red Hat Enterprise Linux 6.

# mount -t cifs -o user=username,pass=password,sec=ntlmssp //hostname/gluster-volname /mountpoint

For example:

# mount -t cifs -o user=cifsuser,pass=redhat,sec=ntlmssp //server1/gluster-repvol /cifs

Important

Red Hat Gluster Storage is not supported on Red Hat Enterprise Linux 6 (RHEL 6) from 3.5 Batch Update 1 onwards. See the Version Details table in the section Red Hat Gluster Storage Software Components and Versions of the Installation Guide.

- Run # smbstatus -S on the server to display the status of the volume:

Service pid machine Connected at

-------------------------------------------------------------------

gluster-VOLNAME 11967 __ffff_192.168.1.60 Mon Aug 6 02:23:25 2012

6.4.5.2. Manually mounting volumes exported with SMB on Microsoft Windows

6.4.5.2.1. Using Microsoft Windows Explorer to manually mount a volume

- In Windows Explorer, click Tools → Map Network Drive… to open the Map Network Drive screen.

- Choose the drive letter using the Drive drop-down list.

- In the Folder text box, specify the path of the server and the shared resource in the following format: \\SERVER_NAME\VOLNAME.

- Click Finish to complete the process, and display the network drive in Windows Explorer.

- Navigate to the network drive to verify it has mounted correctly.

6.4.5.2.2. Using Microsoft Windows command line interface to manually mount a volume

- Click Start → Run, and then type cmd.

- Enter net use z: \\SERVER_NAME\VOLNAME, where z: is the drive letter to assign to the shared volume.

For example, net use y: \\server1\test-volume

- Navigate to the network drive to verify it has mounted correctly.

6.4.5.3. Manually mounting volumes exported with SMB on macOS

Prerequisites

- Ensure that your Samba configuration allows the use of the SMB Apple Create Context.

- Ensure that the username you're using is on the list of allowed users for the volume.

Manual mounting process

- In the Finder, click Go > Connect to Server.

- In the Server Address field, type the IP address or hostname of a Red Hat Gluster Storage server that hosts the volume you want to mount.

- Click Connect.

- When prompted, select Registered User to connect to the volume using a valid username and password.

If required, enter your user name and password, then select the server volumes or shared folders that you want to mount.

To make it easier to connect to the computer in the future, select Remember this password in my keychain to add your user name and password for the computer to your keychain.

For further information about mounting volumes on macOS, see the Apple Support documentation: https://support.apple.com/en-in/guide/mac-help/mchlp1140/mac.

6.4.5.4. Configuring automatic mounting for volumes exported with SMB on Red Hat Enterprise Linux

- Open the /etc/fstab file in a text editor and add a line containing the following details:

\\HOSTNAME|IPADDRESS\SHARE_NAME MOUNTDIR cifs OPTIONS DUMP FSCK

In the OPTIONS column, ensure that you specify the credentials option, with a value of the path to the file that contains the username and/or password.

Using the example server names, the entry contains the following replaced values.

\\server1\test-volume /mnt/glusterfs cifs credentials=/etc/samba/passwd,_netdev 0 0

The sec=ntlmssp parameter is also required when mounting a volume on Red Hat Enterprise Linux 6, for example:

\\server1\test-volume /mnt/glusterfs cifs credentials=/etc/samba/passwd,_netdev,sec=ntlmssp 0 0

See the mount.cifs man page for more information about these options.

Important

Red Hat Gluster Storage is not supported on Red Hat Enterprise Linux 6 (RHEL 6) from 3.5 Batch Update 1 onwards. See the Version Details table in the section Red Hat Gluster Storage Software Components and Versions of the Installation Guide.

- Run # smbstatus -S on the server to display the status of the volume:

Service pid machine Connected at

-------------------------------------------------------------------

gluster-VOLNAME 11967 __ffff_192.168.1.60 Mon Aug 6 02:23:25 2012
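The credentials file referenced by the credentials option can be prepared with a small helper. The following is a minimal sketch under stated assumptions: the function names are hypothetical, the file uses the `username=` and `password=` keys described in the mount.cifs man page, and the generated /etc/fstab line uses the `//server/share` form from the manual mount command rather than the backslash form shown in this procedure.

```shell
#!/bin/sh
# Sketch only: hypothetical helpers for preparing an automatic CIFS mount.

# Usage: write_cifs_credentials FILE USERNAME PASSWORD
# Writes a mount.cifs credentials file that only its owner can read.
write_cifs_credentials() {
    file=$1
    user=$2
    pass=$3
    # The umask covers a newly created file; chmod covers an existing one.
    ( umask 077; printf 'username=%s\npassword=%s\n' "$user" "$pass" > "$file" ) &&
        chmod 600 "$file"
}

# Usage: cifs_fstab_line SERVER SHARE MOUNTDIR CREDENTIALS_FILE
# Prints (does not install) an /etc/fstab line for the share.
cifs_fstab_line() {
    printf '//%s/%s %s cifs credentials=%s,_netdev 0 0\n' "$1" "$2" "$3" "$4"
}
```

For example, `write_cifs_credentials /etc/samba/passwd cifsuser redhat` followed by `cifs_fstab_line server1 gluster-repvol /mnt/glusterfs /etc/samba/passwd` prints a line that can be reviewed and then appended to /etc/fstab.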

6.4.5.5. Configuring automatic mounting for volumes exported with SMB on Microsoft Windows

- In Windows Explorer, click Tools → Map Network Drive… to open the Map Network Drive screen.

- Choose the drive letter using the Drive drop-down list.

- In the Folder text box, specify the path of the server and the shared resource in the following format: \\SERVER_NAME\VOLNAME.

- Click the Reconnect at logon check box.

- Click Finish to complete the process, and display the network drive in Windows Explorer.

- If the Windows Security screen pops up, enter the username and password and click OK.

- Navigate to the network drive to verify it has mounted correctly.

6.4.5.6. Configuring automatic mounting for volumes exported with SMB on macOS

- Manually mount the volume using the process outlined in Section 6.4.5.3, “Manually mounting volumes exported with SMB on macOS”.

- In the Finder, click System Preferences > Users & Groups > Username > Login Items.

- Drag and drop the mounted volume into the login items list.

Check Hide if you want to prevent the drive's window from opening every time you boot or log in.

For further information about mounting volumes on macOS, see the Apple Support documentation: https://support.apple.com/en-in/guide/mac-help/mchlp1140/mac.

6.4.6. Starting and Verifying your Configuration

Perform the following to start and verify your configuration:

Verify the Configuration

Verify that the virtual IP (VIP) addresses of a shut down server are carried over to another server in the replicated volume.

- Verify that CTDB is running using the following commands:

# ctdb status

# ctdb ip

# ctdb ping -n all

- Mount a Red Hat Gluster Storage volume using any one of the VIPs.

- Run # ctdb ip to locate the physical server serving the VIP.

- Shut down the CTDB VIP server to verify successful configuration.

When the Red Hat Gluster Storage server serving the VIP is shut down, there is a pause for a few seconds, then I/O resumes.
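Locating the server that currently hosts a VIP can be scripted from the `ctdb ip` output. The following is a minimal sketch, not a supported tool: the function names are hypothetical, the parsing assumes the "address node-number" line format shown in the Best Practices example of Section 6.4.2, and mapping node number 0 to the first line of /etc/ctdb/nodes is an assumption about how CTDB numbers its nodes.

```shell
#!/bin/sh
# Sketch only: hypothetical helpers for the failover check.

# Usage: ctdb ip | vip_node VIP
# Prints the node number that currently hosts VIP.
vip_node() {
    awk -v vip="$1" '$1 == vip { print $2 }'
}

# Usage: node_address NODE_NUMBER NODES_FILE
# Prints the private address on line NODE_NUMBER + 1 of the nodes file
# (assumes node numbers start at 0 and follow the file order).
node_address() {
    sed -n "$(( $1 + 1 ))p" "$2"
}
```

For example, `node_address "$(ctdb ip | vip_node 192.168.1.20)" /etc/ctdb/nodes` would print the private address of the server to shut down for the test, and running it again afterwards should report a different server if failover succeeded.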

6.4.7. Disabling SMB Shares

To stop automatic sharing on all nodes for all volumes execute the following steps:

- On all Red Hat Gluster Storage Servers, with elevated privileges, navigate to /var/lib/glusterd/hooks/1/start/post

- Rename S30samba-start.sh to K30samba-start.sh.

For more information about these scripts, see Section 13.2, “Prepackaged Scripts”.

To stop automatic sharing on all nodes for one particular volume:

- Run the following command to disable automatic SMB sharing per-volume:

# gluster volume set <VOLNAME> user.smb disable

6.4.8. Accessing Snapshots in Windows

A snapshot is a read-only point-in-time copy of the volume. Windows has an inbuilt mechanism to browse snapshots via Volume Shadow-copy Service (also known as VSS). Using this feature users can access the previous versions of any file or folder with minimal steps.

Note

Shadow Copy (also known as Volume Shadow-copy Service, or VSS) is a technology included in Microsoft Windows that allows taking snapshots of computer files or volumes, apart from viewing snapshots. Currently we only support viewing of snapshots. Creation of snapshots with this interface is NOT supported.

6.4.8.1. Configuring Shadow Copy

To configure shadow copy, the following configurations must be modified in the smb.conf file. The smb.conf file is located at /etc/samba/smb.conf.

Note

Ensure that the shadow_copy2 module is enabled in smb.conf. To enable it, add shadow_copy2 to the vfs objects option.

For example:

vfs objects = shadow_copy2 glusterfs

Table 6.9. Configuration Options

| Configuration Options | Required? | Default Value | Description |
|---|---|---|---|
| shadow:snapdir | Yes | n/a | Path to the directory where snapshots are kept. The snapdir name should be .snaps. |
| shadow:basedir | Yes | n/a | Path to the base directory that snapshots are from. The basedir value should be /. |
| shadow:sort | Optional | unsorted | The supported values are asc/desc. By this parameter one can specify that the shadow copy directories should be sorted before they are sent to the client. This can be beneficial as unix filesystems are usually not listed alphabetically sorted. If enabled, it is specified in descending order. |
| shadow:localtime | Optional | UTC | This is an optional parameter that indicates whether the snapshot names are in UTC/GMT or in local time. |
| shadow:format | Yes | n/a | This parameter specifies the format specification for the naming of snapshots. The format must be compatible with the conversion specifications recognized by str[fp]time. The default value is _GMT-%Y.%m.%d-%H.%M.%S. |
| shadow:fixinodes | Optional | No | If you enable shadow:fixinodes then this module will modify the apparent inode number of files in the snapshot directories using a hash of the file's path. This is needed for snapshot systems where the snapshots have the same device:inode number as the original files (such as happens with GPFS snapshots). If you don't set this option then the 'restore' button in the shadow copy UI will fail with a sharing violation. |
| shadow:snapprefix | Optional | n/a | Regular expression to match the prefix of the snapshot name. Red Hat Gluster Storage only supports Basic Regular Expression (BRE). |
| shadow:delimiter | Optional | _GMT | The delimiter is used to separate shadow:snapprefix and shadow:format. |

Following is an example of the smb.conf file:

[gluster-vol0]

comment = For samba share of volume vol0

vfs objects = shadow_copy2 glusterfs

glusterfs:volume = vol0

glusterfs:logfile = /var/log/samba/glusterfs-vol0.%M.log

glusterfs:loglevel = 3

path = /

read only = no

guest ok = yes

shadow:snapdir = /.snaps

shadow:basedir = /

shadow:sort = desc

shadow:snapprefix= ^S[A-Za-z0-9]*p$

shadow:format = _GMT-%Y.%m.%d-%H.%M.%S

In the above example, the mentioned parameters have to be added in the smb.conf file to enable shadow copy. The options mentioned are not mandatory.

Note

When configuring Shadow Copy with glusterfs_fuse, modify the smb.conf file configuration accordingly.

For example:

vfs objects = shadow_copy2 glusterfs_fuse

[gluster-vol0]

comment = For samba share of volume vol0

vfs objects = shadow_copy2 glusterfs_fuse

path = /MOUNTDIR

read only = no

guest ok = yes

shadow:snapdir = /MOUNTDIR/.snaps

shadow:basedir = /MOUNTDIR

shadow:sort = desc

shadow:snapprefix= ^S[A-Za-z0-9]*p$

shadow:format = _GMT-%Y.%m.%d-%H.%M.%S

In the above example, `MOUNTDIR` is a local FUSE mountpoint.

Shadow copy filters the snapshots based on the smb.conf entries and shows only the snapshots that match the criteria. In the example mentioned earlier, the snapshot name must start with 'S' and end with 'p', with any alphanumeric characters in between. For example, in the following list of snapshots, the first two are shown by Windows and the last one is ignored. These options therefore let you control which snapshots are shown.

Snap_GMT-2016.06.06-06.06.06

Sl123p_GMT-2016.07.07-07.07.07

xyz_GMT-2016.08.08-08.08.08
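The effect of shadow:snapprefix can be previewed before changing smb.conf. The following is a simplified sketch, not the matching code used by the shadow_copy2 module: the function name `filter_snapshots` is hypothetical, and it assumes that the prefix is everything before the first occurrence of the delimiter and that `grep` basic regular expressions behave closely enough to the BRE matching described in the table.

```shell
#!/bin/sh
# Sketch only: approximates which snapshot names a snapprefix would select.

# Usage: filter_snapshots PREFIX_REGEX [DELIMITER]
# Reads snapshot names on stdin and prints those whose prefix (the part
# before the first DELIMITER, default _GMT) matches PREFIX_REGEX.
filter_snapshots() {
    prefix_re=$1
    delimiter=${2:-_GMT}
    while IFS= read -r name; do
        # Strip the delimiter and everything after it to get the prefix.
        prefix=${name%%"$delimiter"*}
        if printf '%s\n' "$prefix" | grep -q -- "$prefix_re"; then
            printf '%s\n' "$name"
        fi
    done
}
```

Feeding the three example snapshot names to `filter_snapshots '^S[A-Za-z0-9]*p$'` prints the first two names and drops the one that starts with xyz.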

After editing the smb.conf file, execute the following steps to enable snapshot access:

- Start or restart the smb service.

On RHEL 7 and RHEL 8, run systemctl [re]start smb

On RHEL 6, run service smb [re]start

- Enable User Serviceable Snapshot (USS) for Samba. For more information, see Section 8.13, “User Serviceable Snapshots”.

6.4.8.2. Accessing Snapshot

To access snapshot on the Windows system, execute the following steps:

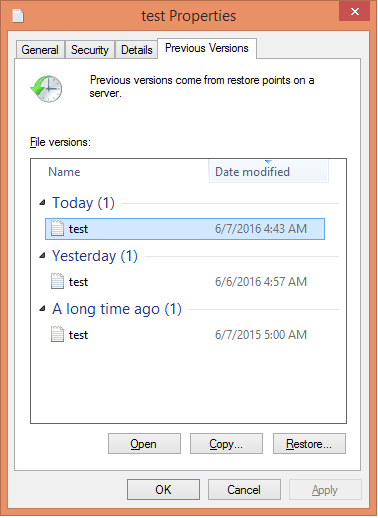

- Right Click on the file or directory for which the previous version is required.

- Click on Restore previous versions.

- In the dialog box, select the Date/Time of the previous version of the file, and select either Open, Restore, or Copy, where:

Open: Lets you open the required version of the file in read-only mode.

Restore: Restores the file back to the selected version.

Copy: Lets you copy the file to a different location.

Figure 6.1. Accessing Snapshot

6.4.9. Tuning Performance

This section describes how to improve system performance in an SMB environment. The enhancement tasks can be classified into:

- Enabling Metadata Caching to improve the performance of SMB access of Red Hat Gluster Storage volumes.

- Enhancing Directory Listing Performance

- Enhancing File/Directory Create Performance

Each of these tasks is described in more detail in the following sections.

6.4.9.1. Enabling Metadata Caching

Enable metadata caching to improve the performance of directory operations. Execute the following commands from any one of the nodes on the trusted storage pool in the order mentioned below.

Note

If the majority of the workload modifies the same set of files and directories simultaneously from multiple clients, then enabling metadata caching might not provide the desired performance improvement.

- Execute the following command to enable metadata caching and cache invalidation:

# gluster volume set <volname> group metadata-cache

This is a group set option, which sets multiple volume options in a single command.

- To increase the number of files that can be cached, execute the following command:

# gluster volume set <VOLNAME> network.inode-lru-limit <n>

n is set to 50000. It can be increased if the number of active files in the volume is very high. Increasing this number increases the memory footprint of the brick processes.

6.4.9.2. Enhancing Directory Listing Performance

The directory listing gets slower as the number of bricks/nodes increases in a volume, though the file/directory numbers remain unchanged. By enabling the parallel readdir volume option, the performance of directory listing is made independent of the number of nodes/bricks in the volume. Thus, the increase in the scale of the volume does not reduce the directory listing performance.

Note

You can expect an increase in performance only if the distribute count of the volume is 2 or greater and the size of the directory is small (< 3000 entries). The larger the volume (distribute count), the greater the performance benefit.

To enable parallel readdir execute the following commands:

- Verify that the performance.readdir-ahead option is enabled by executing the following command:

# gluster volume get <VOLNAME> performance.readdir-ahead

If performance.readdir-ahead is not enabled, execute the following command:

# gluster volume set <VOLNAME> performance.readdir-ahead on

- Execute the following command to enable the parallel-readdir option:

# gluster volume set <VOLNAME> performance.parallel-readdir on

Note

If there are more than 50 bricks in the volume, it is recommended to increase the cache size to more than 10 MB (the default value):

# gluster volume set <VOLNAME> performance.rda-cache-limit <CACHE SIZE>

6.4.9.3. Enhancing File/Directory Create Performance

Before creating or renaming any file, lookups (5-6 in SMB) are sent to verify whether the file already exists. Serving these lookups from the cache when possible increases create and rename performance several times over in SMB access.

- Execute the following command to enable the negative-lookup cache:

# gluster volume set <volname> group nl-cache

volume set success

Note

The above command also enables cache-invalidation and increases the timeout to 10 minutes.