
Monitoring and managing system status and performance

Optimizing system throughput, latency, and power consumption

Abstract

Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

Providing feedback on Red Hat documentation

We appreciate your feedback on our documentation. Let us know how we can improve it.

Submitting feedback through Jira (account required)

- Log in to the Jira website.

- Click Create in the top navigation bar.

- Enter a descriptive title in the Summary field.

- Enter your suggestion for improvement in the Description field. Include links to the relevant parts of the documentation.

- Click Create at the bottom of the dialogue.

Chapter 1. Overview of performance monitoring options

The following are some of the performance monitoring and configuration tools available in Red Hat Enterprise Linux 8:

-

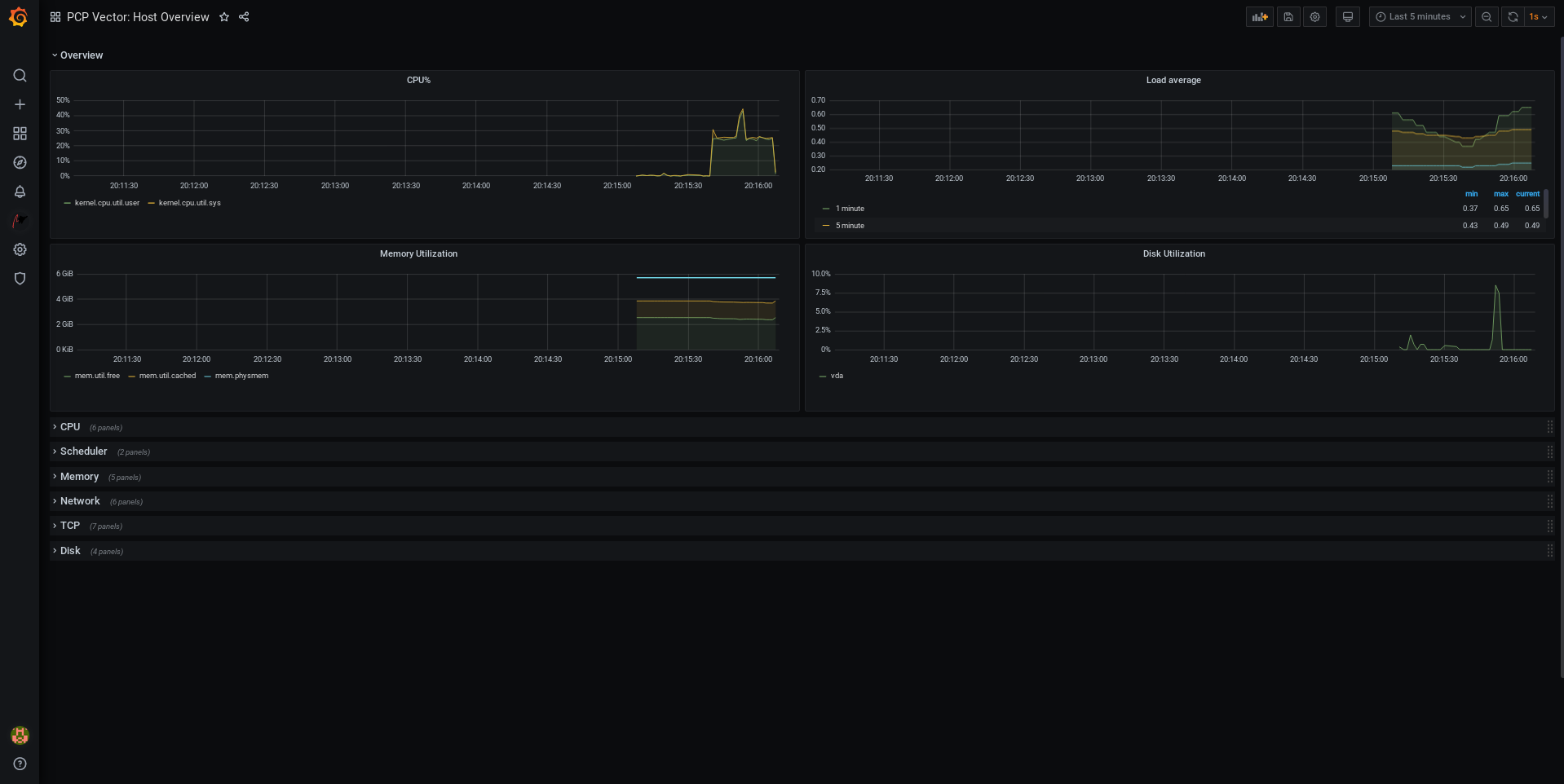

Performance Co-Pilot (

pcp) is used for monitoring, visualizing, storing, and analyzing system-level performance measurements. It allows the monitoring and management of real-time data, and logging and retrieval of historical data. Red Hat Enterprise Linux 8 provides several tools that can be used from the command line to monitor a system outside run level

5. The following are the built-in command line tools:-

topis provided by theprocps-ngpackage. It gives a dynamic view of the processes in a running system. It displays a variety of information, including a system summary and a list of tasks currently being managed by the Linux kernel. -

psis provided by theprocps-ngpackage. It captures a snapshot of a select group of active processes. By default, the examined group is limited to processes that are owned by the current user and associated with the terminal where thepscommand is executed. -

Virtual memory statistics (

vmstat) is provided by theprocps-ngpackage. It provides instant reports of your system’s processes, memory, paging, block input/output, interrupts, and CPU activity. -

System activity reporter (

sar) is provided by thesysstatpackage. It collects and reports information about system activity that has occurred so far on the current day.

-

-

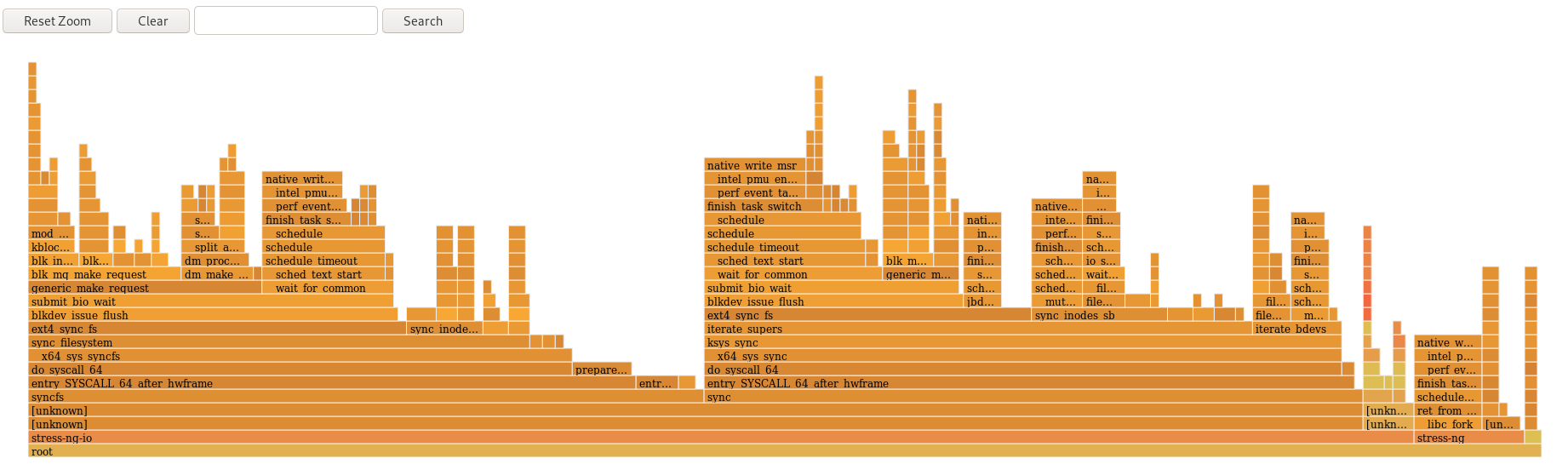

perfuses hardware performance counters and kernel trace-points to track the impact of other commands and applications on a system. -

bcc-toolsis used for BPF Compiler Collection (BCC). It provides over 100eBPFscripts that monitor kernel activities. For more information about each of this tool, see the man page describing how to use it and what functions it performs. -

turbostatis provided by thekernel-toolspackage. It reports on processor topology, frequency, idle power-state statistics, temperature, and power usage on the Intel 64 processors. -

iostatis provided by thesysstatpackage. It monitors and reports on system IO device loading to help administrators make decisions about how to balance IO load between physical disks. -

irqbalancedistributes hardware interrupts across processors to improve system performance. -

ssprints statistical information about sockets, allowing administrators to assess device performance over time. Red Hat recommends usingssovernetstatin Red Hat Enterprise Linux 8. -

numastatis provided by thenumactlpackage. By default,numastatdisplays per-node NUMA hit an miss system statistics from the kernel memory allocator. Optimal performance is indicated by highnuma_hitvalues and lownuma_missvalues. -

numadis an automatic NUMA affinity management daemon. It monitors NUMA topology and resource usage within a system that dynamically improves NUMA resource allocation, management, and therefore system performance. -

SystemTapmonitors and analyzes operating system activities, especially the kernel activities. -

valgrindanalyzes applications by running it on a synthetic CPU and instrumenting existing application code as it is executed. It then prints commentary that clearly identifies each process involved in application execution to a user-specified file, file descriptor, or network socket. It is also useful for finding memory leaks. -

pqosis provided by theintel-cmt-catpackage. It monitors and controls CPU cache and memory bandwidth on recent Intel processors.
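The numastat entry above treats high numa_hit and low numa_miss values as the sign of good NUMA placement. As a minimal sketch, assuming the per-node counter files that the kernel exposes as /sys/devices/system/node/nodeN/numastat (one "name value" pair per line), the following shell function reports numa_miss as a percentage of all numa_hit and numa_miss events for one node. The function name numa_miss_percent is hypothetical, and the numastat command remains the supported way to view these statistics.

```shell
# Sketch: numa_miss as a percentage of (numa_hit + numa_miss) for one node,
# read from a per-node counter file with "name value" lines, for example
# /sys/devices/system/node/node0/numastat.
numa_miss_percent() {
    # $1: path to a numastat counter file
    awk '
        $1 == "numa_hit"  { hit  = $2 }
        $1 == "numa_miss" { miss = $2 }
        END {
            total = hit + miss
            if (total == 0) { print "0.00"; exit }
            printf "%.2f\n", 100 * miss / total
        }
    ' "$1"
}

# Report every node that exposes the counters; nodes without a readable
# file (or systems without NUMA sysfs entries) are skipped.
for f in /sys/devices/system/node/node*/numastat; do
    [ -r "$f" ] || continue
    printf '%s: %s%% numa_miss\n' "$f" "$(numa_miss_percent "$f")"
done
```

A node that mostly allocates from remote memory shows a high percentage here; whether a given value is a problem depends on the workload.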

Additional resources

-

pcp,top,ps,vmstat,sar,perf,iostat,irqbalance,ss,numastat,numad,valgrind, andpqosman pages -

/usr/share/doc/directory - What exactly is the meaning of value "await" reported by iostat? Red Hat Knowledgebase article

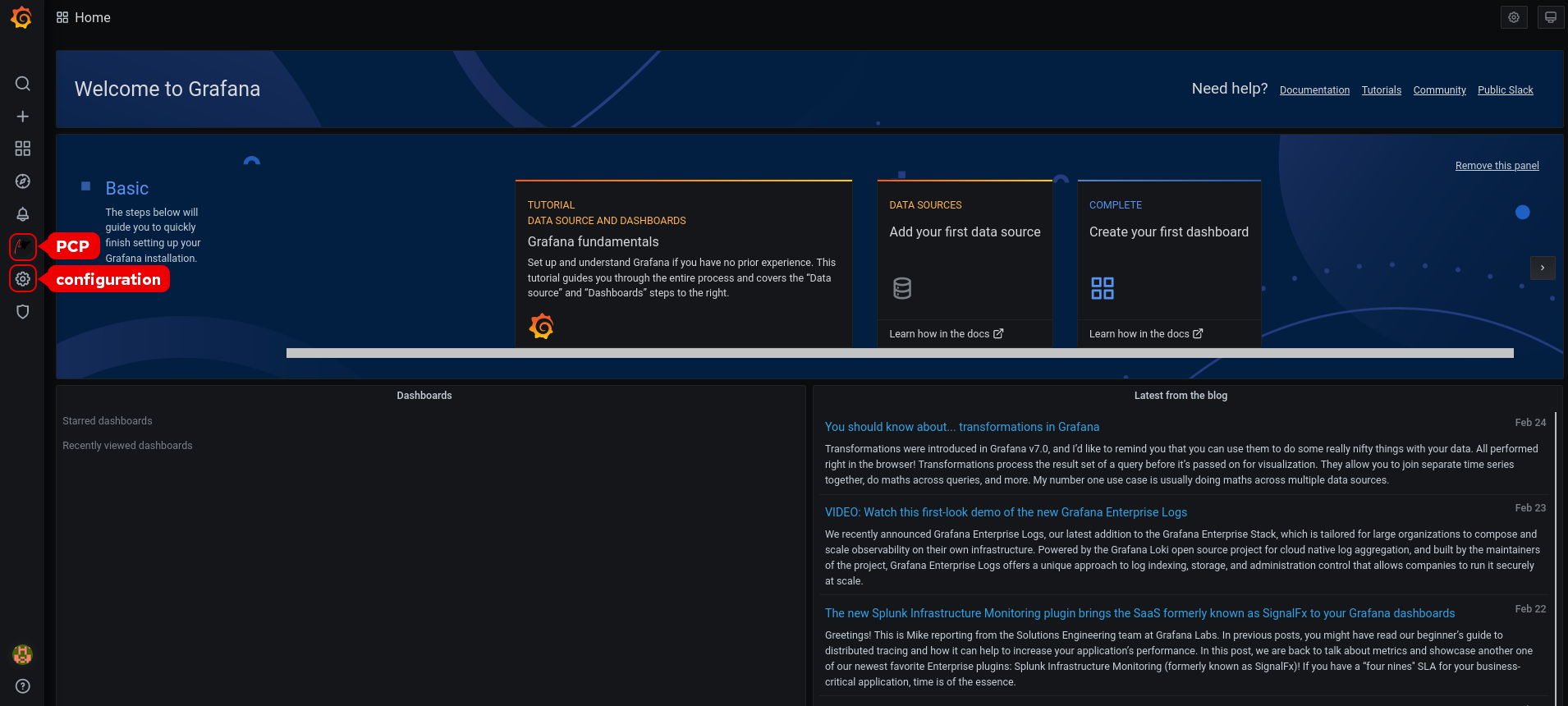

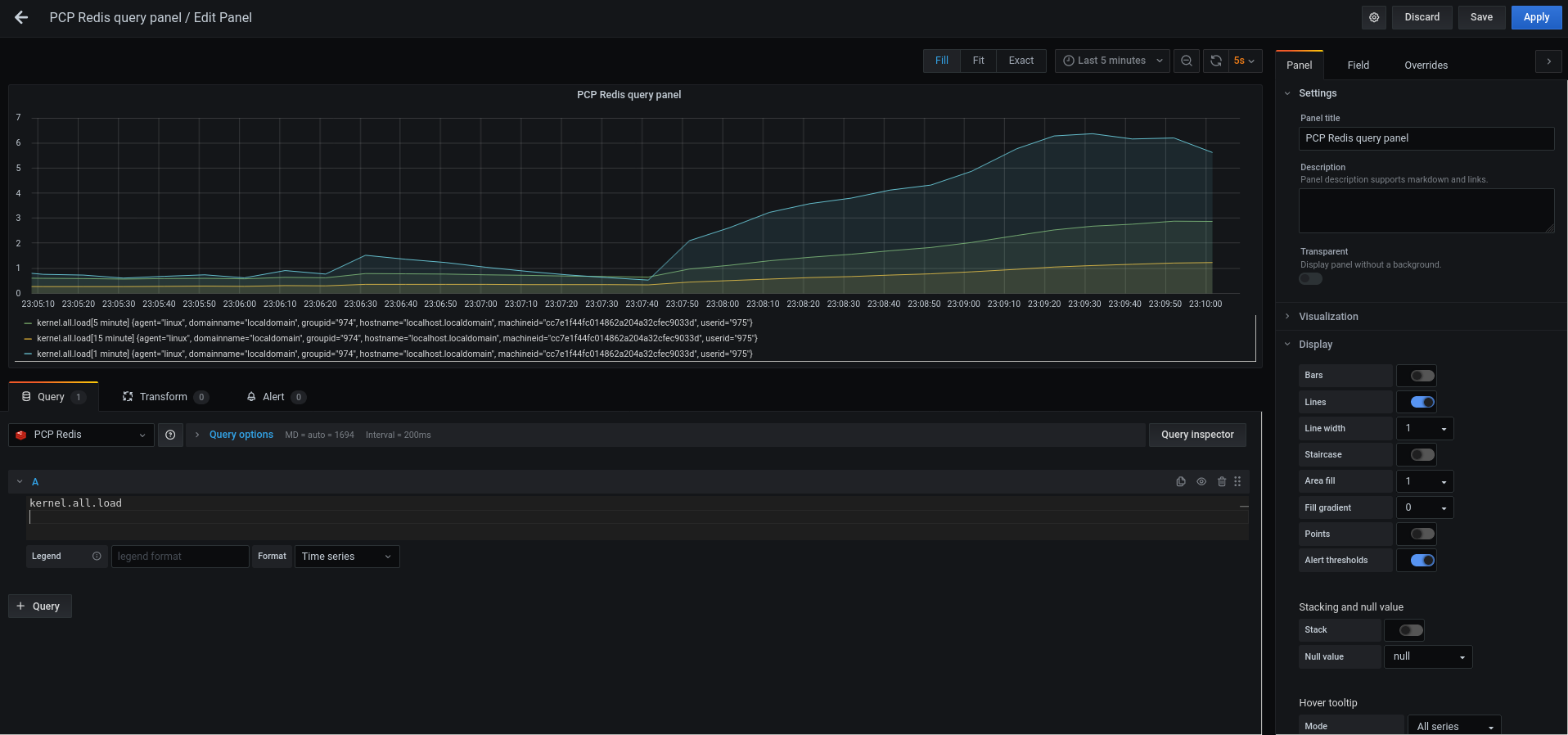

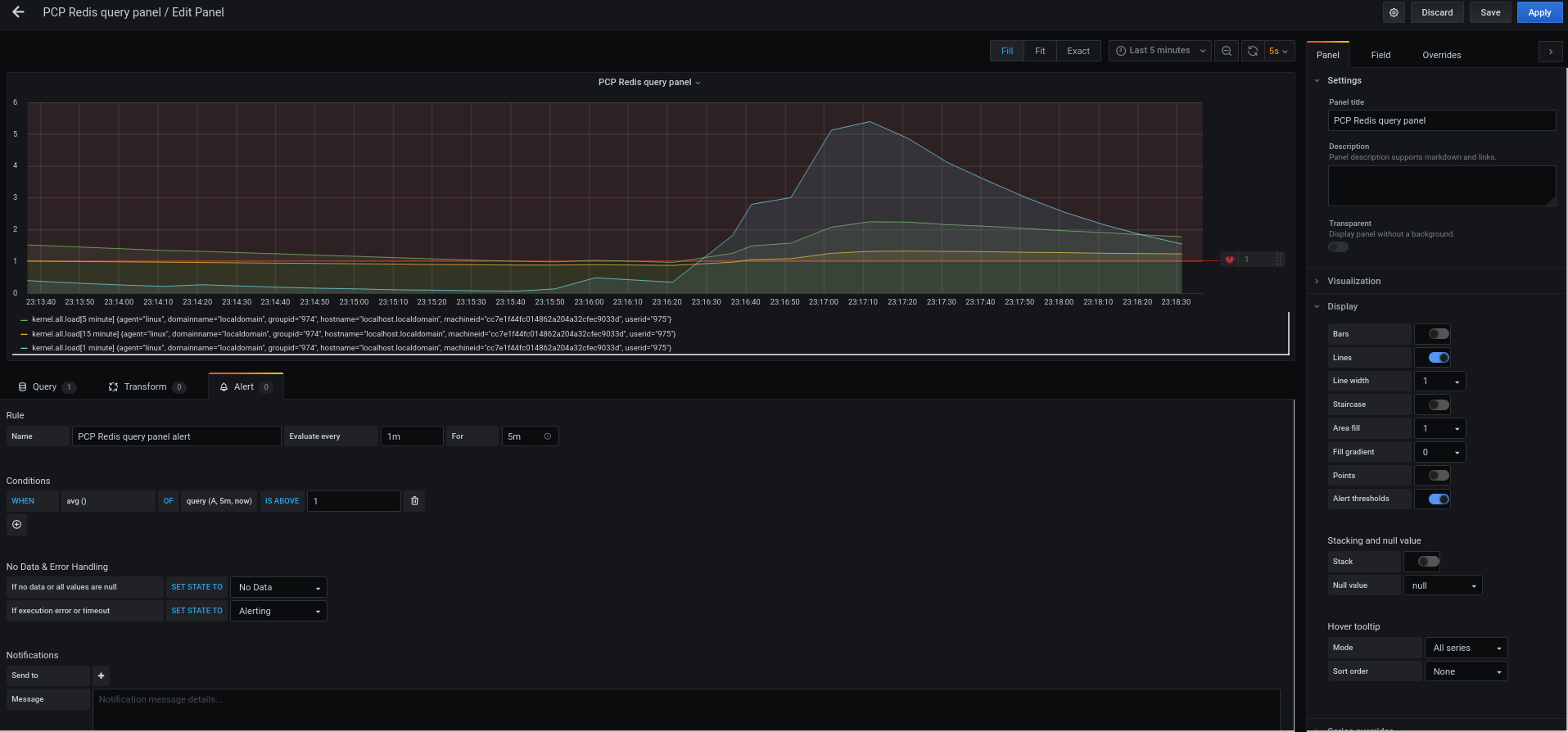

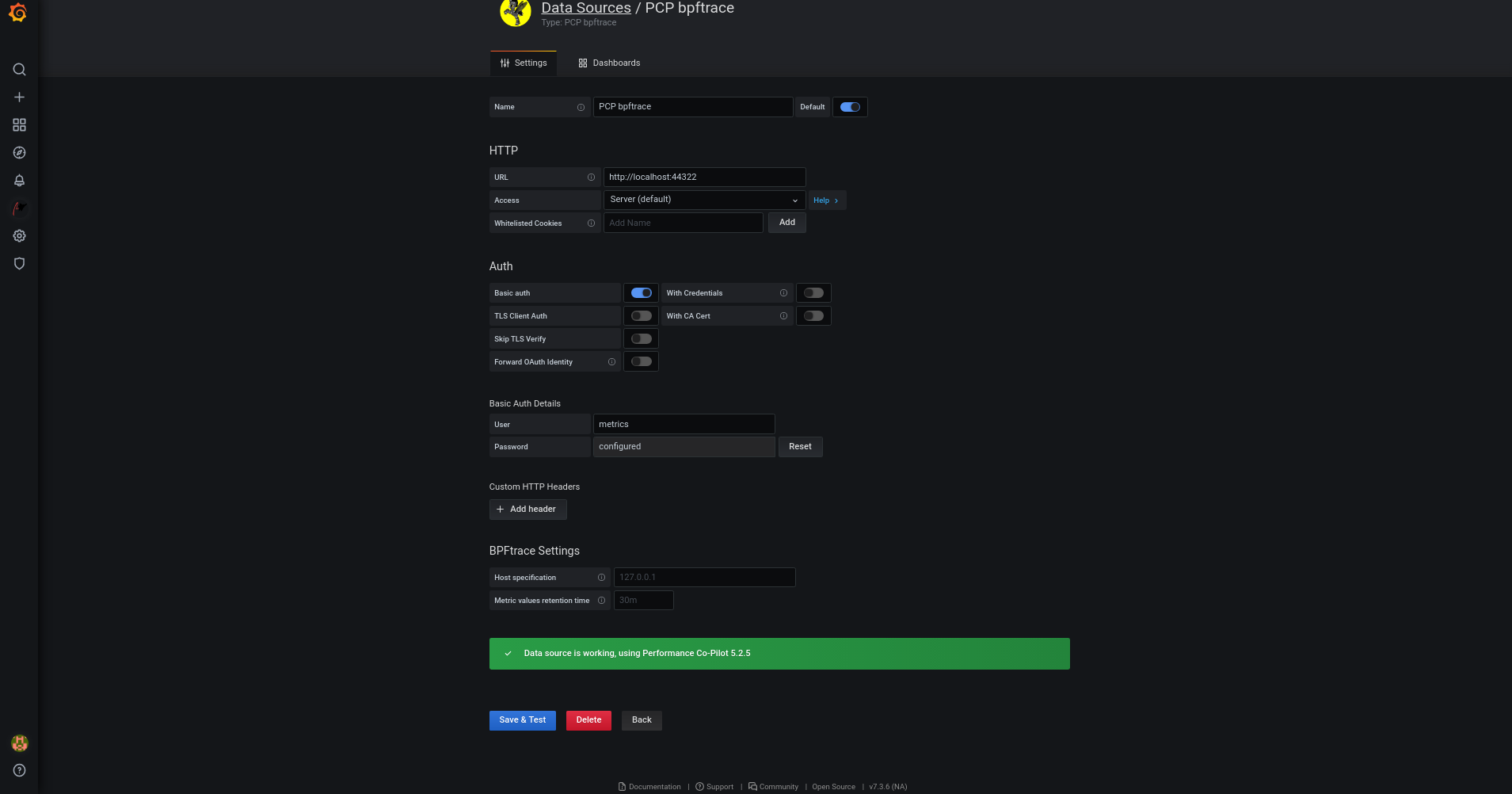

- Monitoring performance with Performance Co-Pilot

Chapter 2. Getting started with TuneD

As a system administrator, you can use the TuneD application to optimize the performance profile of your system for a variety of use cases.

2.1. The purpose of TuneD

TuneD is a service that monitors your system and optimizes the performance under certain workloads. The core of TuneD is its profiles, which tune your system for different use cases.

TuneD is distributed with a number of predefined profiles for use cases such as:

- High throughput

- Low latency

- Saving power

It is possible to modify the rules defined for each profile and customize how to tune a particular device. When you switch to another profile or deactivate TuneD, all changes made to the system settings by the previous profile revert to their original state.

You can also configure TuneD to react to changes in device usage and adjust settings to improve the performance of active devices and reduce the power consumption of inactive devices.

2.2. TuneD profiles

A detailed analysis of a system can be very time-consuming. TuneD provides a number of predefined profiles for typical use cases. You can also create, modify, and delete profiles.

The profiles provided with TuneD are divided into the following categories:

- Power-saving profiles

- Performance-boosting profiles

The performance-boosting profiles include profiles that focus on the following aspects:

- Low latency for storage and network

- High throughput for storage and network

- Virtual machine performance

- Virtualization host performance

Syntax of profile configuration

The tuned.conf file can contain one [main] section and other sections for configuring plug-in instances. However, all sections are optional.

Lines starting with the hash sign (#) are comments.
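To see these syntax rules applied to a real file, the following sketch prints the section names of a tuned.conf file while skipping comment lines. The helper name tuned_conf_sections is hypothetical; it only reads the file, and it assumes that each section header, such as [main] or a plug-in section like [cpu], sits on its own line.

```shell
# Sketch: print the section names of a tuned.conf file, one per line.
# Comment lines (first non-blank character is "#") are skipped, so a
# commented-out section header is not reported.
tuned_conf_sections() {
    # $1: path to a tuned.conf file
    sed -n -e '/^[[:space:]]*#/d' \
        -e 's/^[[:space:]]*\[\([^]]*\)][[:space:]]*$/\1/p' "$1"
}
```

For example, tuned_conf_sections /usr/lib/tuned/balanced/tuned.conf lists the sections that the balanced profile configures.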

Additional resources

- tuned.conf(5) man page

2.3. The default TuneD profile

During the installation, the best profile for your system is selected automatically. Currently, the default profile is selected according to the following customizable rules:

| Environment | Default profile | Goal |
|---|---|---|
| Compute nodes |  | The best throughput performance |
| Virtual machines |  | The best performance. If you are not interested in the best performance, you can change it to the |
| Other cases |  | Balanced performance and power consumption |

Additional resources

- tuned.conf(5) man page

2.4. Merged TuneD profiles

As an experimental feature, you can select multiple profiles at once. TuneD tries to merge them when it loads them.

If there are conflicts, the settings from the last specified profile take precedence.

Example 2.1. Low power consumption in a virtual guest

The following example optimizes the system to run in a virtual machine for the best performance and concurrently tunes it for low power consumption, with low power consumption taking priority:

# tuned-adm profile virtual-guest powersave

Merging is done automatically, without checking whether the resulting combination of parameters makes sense. Consequently, the feature might tune some parameters in opposite directions, which can be counterproductive: for example, setting the disk for high throughput by using the throughput-performance profile while the spindown-disk profile sets the disk spindown to a low value.
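The precedence rule for merged profiles can be illustrated with a deliberately simplified model. In the following sketch, each profile is reduced to a plain file of key=value lines, and the hypothetical merge_settings function combines the files in command-line order so that a later file overrides an earlier one. This is not how TuneD parses or merges profiles internally; it only shows why the last specified profile wins a conflict, and why it wins without any check that the combined values make sense.

```shell
# Simplified model, not TuneD's merge code: each argument is a file of
# key=value lines standing in for one profile. Files are read in order, and
# a key seen again in a later file overwrites the earlier value, so the
# last file listed wins any conflict. Keys keep their first-seen order.
merge_settings() {
    awk -F= '
        NF >= 2 {
            key = $1
            val = substr($0, length(key) + 2)
            if (!(key in value)) order[++n] = key
            value[key] = val
        }
        END { for (i = 1; i <= n; i++) print order[i] "=" value[order[i]] }
    ' "$@"
}
```

For example, if a first file sets the hypothetical key governor=performance and a second file sets governor=powersave, merge_settings first second keeps governor=powersave, whatever the first profile intended.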

Additional resources

- tuned-adm(8) man page
- tuned.conf(5) man page

2.5. The location of TuneD profiles

TuneD stores profiles in the following directories:

- /usr/lib/tuned/

  Distribution-specific profiles are stored in this directory. Each profile has its own directory. The profile consists of the main configuration file called tuned.conf, and optionally other files, for example helper scripts.

- /etc/tuned/

  If you need to customize a profile, copy the profile directory into this directory, which is used for custom profiles. If there are two profiles of the same name, the custom profile located in /etc/tuned/ is used.
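The lookup order above, custom directory first and distribution directory second, can be expressed as a short function. This is a sketch rather than TuneD's own code: find_profile_dir is a hypothetical name, and treating a directory as a profile only when it contains tuned.conf is an assumption of the sketch. The base directories are parameters so that you can try the function against scratch directories.

```shell
# Sketch: print the directory that would be used for a profile name,
# checking the custom directory before the distribution directory.
# Returns 1 if neither location contains <name>/tuned.conf.
find_profile_dir() {
    # $1: profile name, $2: custom dir (default /etc/tuned),
    # $3: distribution dir (default /usr/lib/tuned)
    name=$1
    custom_dir=${2:-/etc/tuned}
    dist_dir=${3:-/usr/lib/tuned}
    for base in "$custom_dir" "$dist_dir"; do
        if [ -f "$base/$name/tuned.conf" ]; then
            printf '%s\n' "$base/$name"
            return 0
        fi
    done
    return 1
}
```

For example, after copying /usr/lib/tuned/balanced to /etc/tuned/balanced, find_profile_dir balanced prints /etc/tuned/balanced.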

Additional resources

- tuned.conf(5) man page

2.6. TuneD profiles distributed with RHEL

The following is a list of profiles that are installed with TuneD on Red Hat Enterprise Linux.

There might be more product-specific or third-party TuneD profiles available. Such profiles are usually provided by separate RPM packages.

- balanced

  The default power-saving profile. It is intended to be a compromise between performance and power consumption. It uses auto-scaling and auto-tuning whenever possible. The only drawback is the increased latency. In the current TuneD release, it enables the CPU, disk, audio, and video plugins, and activates the conservative CPU governor. The radeon_powersave option uses the dpm-balanced value if it is supported, otherwise it is set to auto.

  It changes the energy_performance_preference attribute to the normal energy setting. It also changes the scaling_governor policy attribute to either the conservative or powersave CPU governor.

- powersave

  A profile for maximum power saving performance. It can throttle the performance in order to minimize the actual power consumption. In the current TuneD release it enables USB autosuspend, WiFi power saving, and Aggressive Link Power Management (ALPM) power savings for SATA host adapters. It also schedules multi-core power savings for systems with a low wakeup rate and activates the ondemand governor. It enables AC97 audio power saving or, depending on your system, HDA-Intel power savings with a 10-second timeout. If your system contains a supported Radeon graphics card with enabled KMS, the profile configures it to automatic power saving. On ASUS Eee PCs, a dynamic Super Hybrid Engine is enabled.

  It changes the energy_performance_preference attribute to the powersave or power energy setting. It also changes the scaling_governor policy attribute to either the ondemand or powersave CPU governor.

  Note: In certain cases, the balanced profile is more efficient than the powersave profile.

  Consider a defined amount of work that needs to be done, for example a video file that needs to be transcoded. Your machine might consume less energy if the transcoding is done at full power, because the task finishes quickly, the machine starts to idle, and it can automatically step down to very efficient power-save modes. On the other hand, if you transcode the file with a throttled machine, the machine consumes less power during the transcoding, but the process takes longer and the overall consumed energy can be higher.

  That is why the balanced profile can generally be a better option.

- throughput-performance

  A server profile optimized for high throughput. It disables power savings mechanisms and enables sysctl settings that improve the throughput performance of the disk and network IO. The CPU governor is set to performance.

  It sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- accelerator-performance

  The accelerator-performance profile contains the same tuning as the throughput-performance profile. Additionally, it locks the CPU to low C states so that the latency is less than 100 µs. This improves the performance of certain accelerators, such as GPUs.

- latency-performance

  A server profile optimized for low latency. It disables power savings mechanisms and enables sysctl settings that improve latency. The CPU governor is set to performance and the CPU is locked to the low C states (by PM QoS).

  It sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- network-latency

  A profile for low latency network tuning. It is based on the latency-performance profile. It additionally disables transparent huge pages and NUMA balancing, and tunes several other network-related sysctl parameters.

  It inherits the latency-performance profile, which sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- hpc-compute

  A profile optimized for high-performance computing. It is based on the latency-performance profile.

- network-throughput

  A profile for throughput network tuning. It is based on the throughput-performance profile. It additionally increases kernel network buffers.

  It inherits either the latency-performance or throughput-performance profile, and sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- virtual-guest

  A profile designed for Red Hat Enterprise Linux 8 virtual machines and VMware guests, based on the throughput-performance profile, that, among other tasks, decreases virtual memory swappiness and increases disk readahead values. It does not disable disk barriers.

  It inherits the throughput-performance profile and sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- virtual-host

  A profile designed for virtual hosts, based on the throughput-performance profile, that, among other tasks, decreases virtual memory swappiness, increases disk readahead values, and enables a more aggressive value of dirty pages writeback.

  It inherits the throughput-performance profile and sets the energy_performance_preference and scaling_governor attributes to the performance setting.

- oracle

  A profile optimized for Oracle database loads, based on the throughput-performance profile. It additionally disables transparent huge pages and modifies other performance-related kernel parameters. This profile is provided by the tuned-profiles-oracle package.

- desktop

  A profile optimized for desktops, based on the balanced profile. It additionally enables scheduler autogroups for better response of interactive applications.

- optimize-serial-console

  A profile that tunes down I/O activity to the serial console by reducing the printk value. This should make the serial console more responsive. This profile is intended to be used as an overlay on other profiles. For example:

  # tuned-adm profile throughput-performance optimize-serial-console

- mssql

  A profile provided for Microsoft SQL Server. It is based on the throughput-performance profile.

- intel-sst

  A profile optimized for systems with user-defined Intel Speed Select Technology configurations. This profile is intended to be used as an overlay on other profiles. For example:

  # tuned-adm profile cpu-partitioning intel-sst
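The note under the powersave profile argues that finishing a fixed job at full power and then idling can use less energy than running the same job throttled. The following sketch works through that arithmetic with made-up numbers: every wattage and duration in it is hypothetical, and energy_joules is an illustrative helper, not a TuneD tool. Real power draw and idle states depend on the hardware, and with other numbers the throttled run could come out ahead.

```shell
# Worked example for the balanced-versus-powersave note, with made-up numbers.
# Energy in joules is power in watts multiplied by time in seconds, summed
# over each phase of the job.
energy_joules() {
    # Arguments come in pairs: watts seconds [watts seconds ...]
    total=0
    while [ "$#" -ge 2 ]; do
        total=$((total + $1 * $2))
        shift 2
    done
    echo "$total"
}

# Hypothetical job compared over the same 120-second window:
#   full power: 100 W for 60 s to finish, then 5 W while idle for 60 s
#   throttled:   60 W for the full 120 s the job now needs
full=$(energy_joules 100 60 5 60)    # 6000 + 300 = 6300 J
throttled=$(energy_joules 60 120)    # 7200 J
echo "full power: ${full} J, throttled: ${throttled} J"
```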

2.7. TuneD cpu-partitioning profile

For tuning Red Hat Enterprise Linux 8 for latency-sensitive workloads, Red Hat recommends using the cpu-partitioning TuneD profile.

Prior to Red Hat Enterprise Linux 8, the low-latency Red Hat documentation described the numerous low-level steps needed to achieve low-latency tuning. In Red Hat Enterprise Linux 8, you can perform low-latency tuning more efficiently by using the cpu-partitioning TuneD profile. This profile is easily customizable according to the requirements for individual low-latency applications.

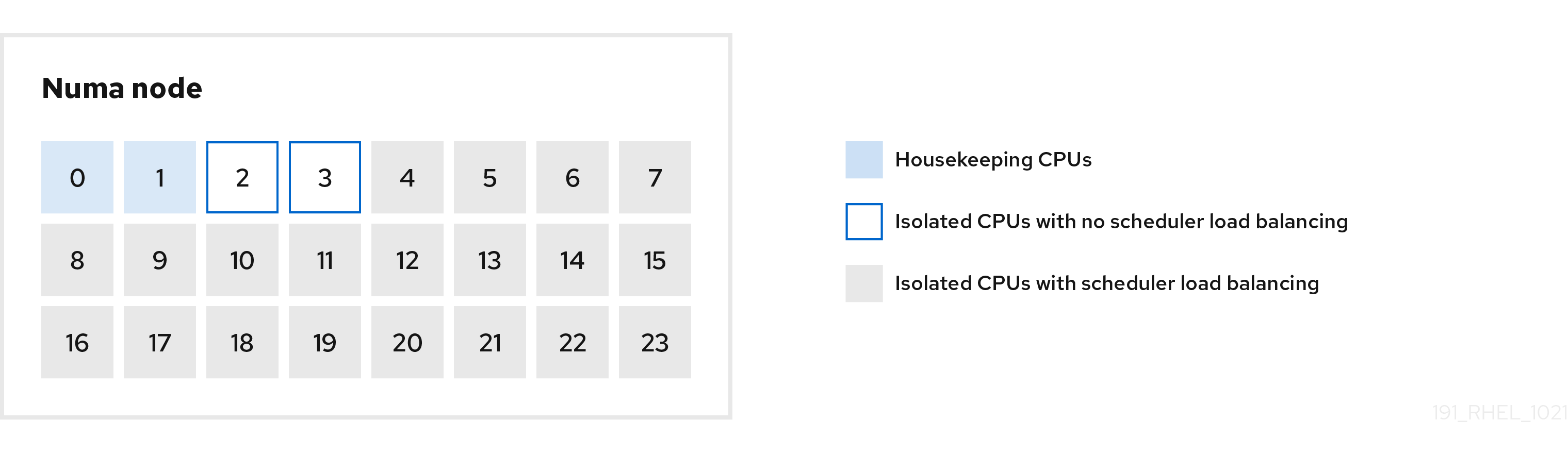

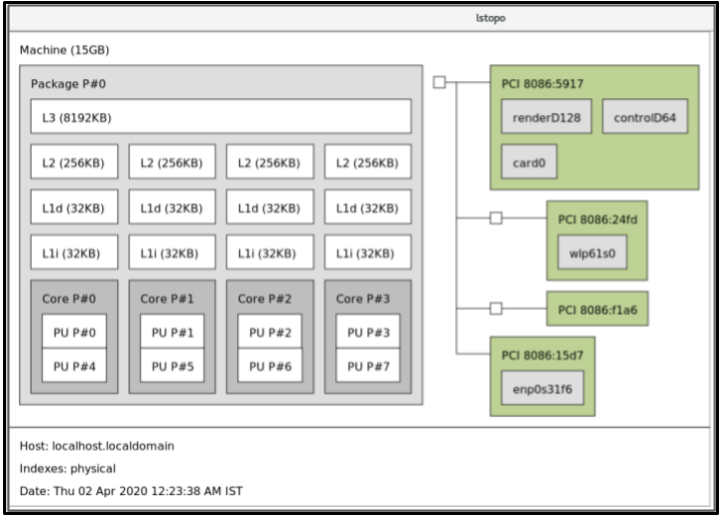

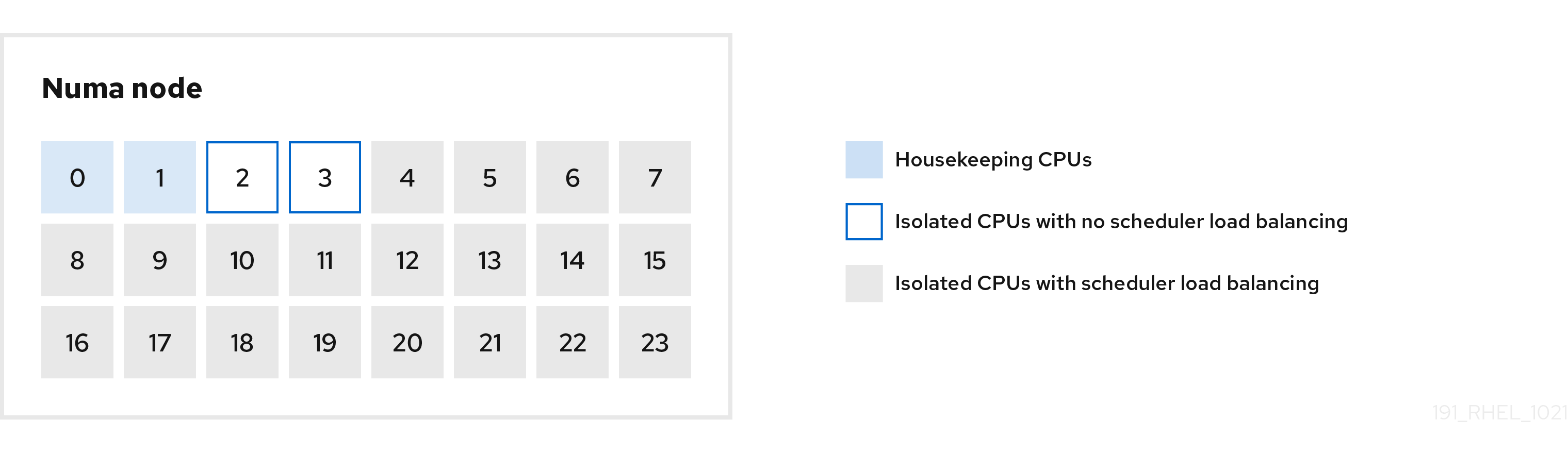

The following figure shows an example of how to use the cpu-partitioning profile, based on a specific CPU and node layout.

Figure 2.1. cpu-partitioning

You can configure the cpu-partitioning profile in the /etc/tuned/cpu-partitioning-variables.conf file using the following configuration options:

- Isolated CPUs with load balancing

  In the cpu-partitioning figure, the blocks numbered from 4 to 23 are the default isolated CPUs. The kernel scheduler’s process load balancing is enabled on these CPUs. This configuration is designed for low-latency processes with multiple threads that need the kernel scheduler load balancing.

  You can configure the cpu-partitioning profile in the /etc/tuned/cpu-partitioning-variables.conf file using the isolated_cores=cpu-list option, which lists CPUs to isolate that will use the kernel scheduler load balancing.

  The list of isolated CPUs is comma-separated, or you can specify a range using a dash, such as 3-5. This option is mandatory. Any CPU missing from this list is automatically considered a housekeeping CPU.

- Isolated CPUs without load balancing

  In the cpu-partitioning figure, the blocks numbered 2 and 3 are the isolated CPUs that do not provide any additional kernel scheduler process load balancing.

  You can configure the cpu-partitioning profile in the /etc/tuned/cpu-partitioning-variables.conf file using the no_balance_cores=cpu-list option, which lists CPUs to isolate that will not use the kernel scheduler load balancing.

  Specifying the no_balance_cores option is optional; however, any CPUs in this list must be a subset of the CPUs listed in the isolated_cores list.

  Application threads using these CPUs need to be pinned individually to each CPU.

- Housekeeping CPUs

  Any CPU not isolated in the cpu-partitioning-variables.conf file is automatically considered a housekeeping CPU. On the housekeeping CPUs, all services, daemons, user processes, movable kernel threads, interrupt handlers, and kernel timers are permitted to execute.
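The cpu-list format and the housekeeping rule above can be checked with a small sketch. The hypothetical expand_cpu_list function expands a value such as 2,4-6 into one CPU number per line, and the hypothetical housekeeping_cpus function lists every CPU that an isolated_cores value leaves out. The sketch assumes the CPUs are numbered contiguously from 0 and that the list contains no spaces; on a real system, the total CPU count could come from a command such as nproc --all.

```shell
# Sketch: expand a cpu-list value (comma-separated CPU numbers and dash
# ranges, no spaces) into one CPU number per line.
expand_cpu_list() {
    printf '%s\n' "$1" | tr ',' '\n' | while IFS=- read -r first last; do
        [ -n "$first" ] || continue
        [ -n "$last" ] || last=$first
        i=$first
        while [ "$i" -le "$last" ]; do
            echo "$i"
            i=$((i + 1))
        done
    done
}

# Sketch: list the housekeeping CPUs, that is, every CPU from 0 to N-1 that
# the isolated_cores value does not mention. Assumes contiguous numbering.
housekeeping_cpus() {
    # $1: total number of CPUs, $2: isolated_cores value such as 2-23
    isolated=$(expand_cpu_list "$2")
    cpu=0
    while [ "$cpu" -lt "$1" ]; do
        if ! printf '%s\n' "$isolated" | grep -qx "$cpu"; then
            echo "$cpu"
        fi
        cpu=$((cpu + 1))
    done
}
```

Assuming 24 CPUs numbered 0 to 23, as the block numbers in the cpu-partitioning figure suggest, housekeeping_cpus 24 2-23 prints CPUs 0 and 1.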

Additional resources

- tuned-profiles-cpu-partitioning(7) man page

2.8. Using the TuneD cpu-partitioning profile for low-latency tuning

This procedure describes how to tune a system for low latency by using the TuneD cpu-partitioning profile. It uses the example of a low-latency application that can use cpu-partitioning and the CPU layout shown in the cpu-partitioning figure.

The application in this case uses:

- One dedicated reader thread that reads data from the network will be pinned to CPU 2.

- A large number of threads that process this network data will be pinned to CPUs 4-23.

- A dedicated writer thread that writes the processed data to the network will be pinned to CPU 3.

Prerequisites

- You have installed the cpu-partitioning TuneD profile by using the yum install tuned-profiles-cpu-partitioning command as root.

Procedure

Edit the /etc/tuned/cpu-partitioning-variables.conf file and add the following information:

# All isolated CPUs:
isolated_cores=2-23
# Isolated CPUs without the kernel’s scheduler load balancing:
no_balance_cores=2,3

Set the cpu-partitioning TuneD profile:

# tuned-adm profile cpu-partitioning

Reboot.

After rebooting, the system is tuned for low latency, according to the isolation in the cpu-partitioning figure. The application can use taskset to pin the reader and writer threads to CPUs 2 and 3, and the remaining application threads to CPUs 4-23.
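Because isolated_cores is mandatory and no_balance_cores must be a subset of it, it can be worth checking the variables file before you set the profile and reboot. The following sketch performs that check. check_cpu_partitioning_vars and its copy of expand_cpu_list are hypothetical helpers, and the sketch assumes each option appears on its own key=value line without trailing comments; it does not replace TuneD's own handling of the file.

```shell
# Sketch: expand a cpu-list value such as 2,4-6 into one CPU per line.
expand_cpu_list() {
    printf '%s\n' "$1" | tr ',' '\n' | while IFS=- read -r first last; do
        [ -n "$first" ] || continue
        [ -n "$last" ] || last=$first
        i=$first
        while [ "$i" -le "$last" ]; do echo "$i"; i=$((i + 1)); done
    done
}

# Sketch: succeed only if isolated_cores is set and every CPU listed in
# no_balance_cores also appears in isolated_cores.
check_cpu_partitioning_vars() {
    # $1: path to the variables file, for example
    #     /etc/tuned/cpu-partitioning-variables.conf
    isolated=$(sed -n 's/^[[:space:]]*isolated_cores=//p' "$1" | tail -n 1)
    no_balance=$(sed -n 's/^[[:space:]]*no_balance_cores=//p' "$1" | tail -n 1)
    if [ -z "$isolated" ]; then
        echo "isolated_cores is not set" >&2
        return 1
    fi
    isolated_list=$(expand_cpu_list "$isolated")
    for cpu in $(expand_cpu_list "$no_balance"); do
        if ! printf '%s\n' "$isolated_list" | grep -qx "$cpu"; then
            echo "CPU $cpu is in no_balance_cores but not in isolated_cores" >&2
            return 1
        fi
    done
    return 0
}
```

For example: check_cpu_partitioning_vars /etc/tuned/cpu-partitioning-variables.conf && tuned-adm profile cpu-partitioning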

Additional resources

- tuned-profiles-cpu-partitioning(7) man page

2.9. Customizing the cpu-partitioning TuneD profile

You can extend the TuneD profile to make additional tuning changes.

For example, the cpu-partitioning profile sets the CPUs to use cstate=1. To use the cpu-partitioning profile but additionally change the CPU C-state from cstate1 to cstate0, the following procedure creates a new TuneD profile named my_profile, which inherits the cpu-partitioning profile and then sets C-state 0.

Procedure

Create the /etc/tuned/my_profile directory:

# mkdir /etc/tuned/my_profile

Create a tuned.conf file in this directory, and add the following content:

# vi /etc/tuned/my_profile/tuned.conf

[main]
summary=Customized tuning on top of cpu-partitioning
include=cpu-partitioning
[cpu]
force_latency=cstate.id:0|1

Use the new profile:

# tuned-adm profile my_profile

In this example, a reboot is not required. However, if the changes in the my_profile profile require a reboot to take effect, reboot your machine.
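If you prefer not to use an interactive editor, you can write the same profile in one step. The following sketch produces the tuned.conf content shown in the procedure. create_my_profile and the TUNED_DIR variable are hypothetical names introduced here (TUNED_DIR is not a TuneD setting), and writing to the default /etc/tuned location requires root.

```shell
# Sketch: create the my_profile profile from this section without an
# editor. TUNED_DIR defaults to /etc/tuned; point it at a scratch directory
# to preview the result first.
create_my_profile() {
    profile_dir="${TUNED_DIR:-/etc/tuned}/my_profile"
    mkdir -p "$profile_dir" || return 1
    cat > "$profile_dir/tuned.conf" <<'EOF'
[main]
summary=Customized tuning on top of cpu-partitioning
include=cpu-partitioning

[cpu]
force_latency=cstate.id:0|1
EOF
}
```

After running create_my_profile as root, activate the profile with tuned-adm profile my_profile as in the procedure.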

Additional resources

- tuned-profiles-cpu-partitioning(7) man page

2.10. Real-time TuneD profiles distributed with RHEL

Real-time profiles are intended for systems running the real-time kernel. Without a special kernel build, they do not configure the system to be real-time. On RHEL, the profiles are available from additional repositories.

The following real-time profiles are available:

- realtime

  Use on bare-metal real-time systems.

  Provided by the tuned-profiles-realtime package, which is available from the RT or NFV repositories.

- realtime-virtual-host

  Use in a virtualization host configured for real-time.

  Provided by the tuned-profiles-nfv-host package, which is available from the NFV repository.

- realtime-virtual-guest

  Use in a virtualization guest configured for real-time.

  Provided by the tuned-profiles-nfv-guest package, which is available from the NFV repository.

2.11. Static and dynamic tuning in TuneD

Understanding the difference between the two categories of system tuning that TuneD applies, static and dynamic, is important when determining which one to use for a given situation or purpose.

- Static tuning

  Mainly consists of the application of predefined sysctl and sysfs settings and one-shot activation of several configuration tools such as ethtool.

- Dynamic tuning

  Watches how various system components are used throughout the uptime of your system. TuneD adjusts system settings dynamically based on that monitoring information.

  For example, the hard drive is used heavily during startup and login, but is barely used later when the user might mainly work with applications such as web browsers or email clients. Similarly, the CPU and network devices are used differently at different times. TuneD monitors the activity of these components and reacts to the changes in their use.

  By default, dynamic tuning is disabled. To enable it, edit the /etc/tuned/tuned-main.conf file and change the dynamic_tuning option to 1. TuneD then periodically analyzes system statistics and uses them to update your system tuning settings. To configure the time interval in seconds between these updates, use the update_interval option.

  Currently implemented dynamic tuning algorithms try to balance performance and power saving, and are therefore disabled in the performance profiles. Dynamic tuning for individual plug-ins can be enabled or disabled in the TuneD profiles.
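Enabling dynamic tuning is a one-line change in /etc/tuned/tuned-main.conf, which uses option = value lines. The following sketch makes that change from a script. set_tuned_main_option is a hypothetical helper: it rewrites an existing uncommented line for the option, or appends one if there is none, and it leaves comments untouched. After changing tuned-main.conf, restart the service, for example with systemctl restart tuned, so that the daemon picks up the new values.

```shell
# Sketch: set "OPTION = VALUE" in a tuned-main.conf-style file. An existing
# uncommented line for OPTION is replaced (later duplicates are dropped);
# if there is none, the line is appended at the end of the file.
set_tuned_main_option() {
    # $1: file, $2: option name, $3: value
    file=$1 opt=$2 val=$3
    tmp=$(mktemp) || return 1
    awk -v opt="$opt" -v val="$val" '
        $0 ~ ("^[ \t]*" opt "[ \t]*=") {
            if (!done) { print opt " = " val; done = 1 }
            next
        }
        { print }
        END { if (!done) print opt " = " val }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    status=$?
    rm -f "$tmp"
    return "$status"
}
```

For example, as root: set_tuned_main_option /etc/tuned/tuned-main.conf dynamic_tuning 1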

Example 2.2. Static and dynamic tuning on a workstation

On a typical office workstation, the Ethernet network interface is inactive most of the time. Only a few emails go in and out or some web pages might be loaded.

For those kinds of loads, the network interface does not have to run at full speed all the time, as it does by default. TuneD has a monitoring and tuning plug-in for network devices that can detect this low activity and then automatically lower the speed of that interface, typically resulting in a lower power usage.

If the activity on the interface increases for a longer period of time, for example because a DVD image is being downloaded or an email with a large attachment is opened, TuneD detects this and sets the interface speed to maximum to offer the best performance while the activity level is high.

The same principle applies to the CPU and disk plug-ins.

2.12. TuneD no-daemon mode

You can run TuneD in no-daemon mode, which does not require any resident memory. In this mode, TuneD applies the settings and exits.

By default, no-daemon mode is disabled because much of the TuneD functionality is unavailable in this mode, including:

- D-Bus support

- Hot-plug support

- Rollback support for settings

To enable no-daemon mode, include the following line in the /etc/tuned/tuned-main.conf file:

daemon = 0

2.13. Installing and enabling TuneD

This procedure installs and enables the TuneD application, installs TuneD profiles, and presets a default TuneD profile for your system.

Procedure

Install the tuned package:

# yum install tuned

Enable and start the TuneD service:

# systemctl enable --now tuned

Optionally, install TuneD profiles for real-time systems:

To obtain the TuneD profiles for real-time systems, enable the following rhel-8 repository:

# subscription-manager repos --enable=rhel-8-for-x86_64-nfv-beta-rpms

Install the profiles:

# yum install tuned-profiles-realtime tuned-profiles-nfv

Verify that a TuneD profile is active and applied:

$ tuned-adm active
Current active profile: throughput-performance

Note: The profile that TuneD presets automatically differs based on your machine type and system settings.

$ tuned-adm verify
Verification succeeded, current system settings match the preset profile.
See tuned log file ('/var/log/tuned/tuned.log') for details.
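In scripts, it is often enough to compare the active profile with the one you expect. The following sketch extracts the profile name from the "Current active profile:" line that tuned-adm active prints, as shown above. The helper name active_profile_name is hypothetical, and the sketch prints nothing if that line is missing from its input.

```shell
# Sketch: print only the profile name from "tuned-adm active" output, which
# has the form "Current active profile: NAME". Prints nothing if that line
# is absent.
active_profile_name() {
    sed -n 's/^Current active profile: //p'
}

# Example use in a script (requires TuneD):
#   expected=throughput-performance
#   actual=$(tuned-adm active | active_profile_name)
#   [ "$actual" = "$expected" ] || echo "active profile is '$actual', expected '$expected'" >&2
```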

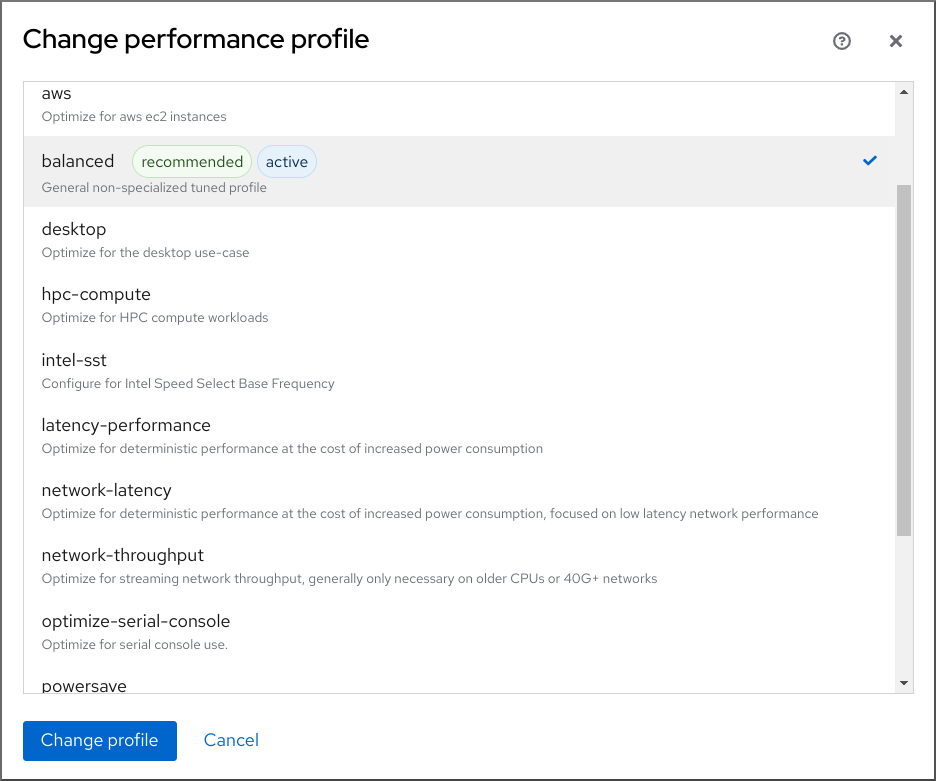

2.14. Listing available TuneD profiles

This procedure lists all TuneD profiles that are currently available on your system.

Procedure

To list all available TuneD profiles on your system, use:

$ tuned-adm list
Available profiles:
- accelerator-performance - Throughput performance based tuning with disabled higher latency STOP states
- balanced - General non-specialized TuneD profile
- desktop - Optimize for the desktop use-case
- latency-performance - Optimize for deterministic performance at the cost of increased power consumption
- network-latency - Optimize for deterministic performance at the cost of increased power consumption, focused on low latency network performance
- network-throughput - Optimize for streaming network throughput, generally only necessary on older CPUs or 40G+ networks
- powersave - Optimize for low power consumption
- throughput-performance - Broadly applicable tuning that provides excellent performance across a variety of common server workloads
- virtual-guest - Optimize for running inside a virtual guest
- virtual-host - Optimize for running KVM guests
Current active profile: balanced

To display only the currently active profile, use:

$ tuned-adm active
Current active profile: throughput-performance
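When a script needs to know whether a profile is installed, the list output above can be reduced to bare profile names. The following is a minimal sketch; profile_names is a hypothetical helper that keeps only lines of the form "- NAME - DESCRIPTION" and prints the name from each.

```shell
# Sketch: reduce "tuned-adm list" output to one profile name per line.
# Profile lines have the form "- NAME - DESCRIPTION"; other lines are dropped.
profile_names() {
    sed -n 's/^- \([^ ]*\) .*/\1/p'
}

# Example (requires TuneD): check that a profile is installed before using it.
#   if tuned-adm list | profile_names | grep -qx virtual-guest; then
#       tuned-adm profile virtual-guest
#   fi
```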

Additional resources

- tuned-adm(8) man page

2.15. Setting a TuneD profile

This procedure activates a selected TuneD profile on your system.

Prerequisites

- The TuneD service is running. See Installing and Enabling TuneD for details.

Procedure

Optionally, you can let TuneD recommend the most suitable profile for your system:

# tuned-adm recommend
throughput-performance

Activate a profile:

# tuned-adm profile selected-profile

Alternatively, you can activate a combination of multiple profiles:

# tuned-adm profile selected-profile1 selected-profile2

Example 2.3. A virtual machine optimized for low power consumption

The following example optimizes the system to run in a virtual machine with the best performance and concurrently tunes it for low power consumption, with low power consumption taking priority:

# tuned-adm profile virtual-guest powersave

View the current active TuneD profile on your system:

# tuned-adm active
Current active profile: selected-profile

Reboot the system:

# reboot

Verification steps

Verify that the TuneD profile is active and applied:

$ tuned-adm verify
Verification succeeded, current system settings match the preset profile.
See tuned log file ('/var/log/tuned/tuned.log') for details.

Additional resources

- tuned-adm(8) man page

2.16. Using the TuneD D-Bus interface

You can directly communicate with TuneD at runtime through the TuneD D-Bus interface to control a variety of TuneD services.

You can use the busctl or dbus-send commands to access the D-Bus API. Either command works, but the busctl command is part of systemd and is therefore already present on most hosts.

2.16.1. Using the TuneD D-Bus interface to show available TuneD D-Bus API methods

You can see the D-Bus API methods available to use with TuneD by using the TuneD D-Bus interface.

Prerequisites

- The TuneD service is running. See Installing and Enabling TuneD for details.

Procedure

To see the available TuneD API methods, run:

$ busctl introspect com.redhat.tuned /Tuned com.redhat.tuned.control

The output should look similar to the following:

NAME                            TYPE   SIGNATURE RESULT/VALUE FLAGS
.active_profile                 method -         s            -
.auto_profile                   method -         (bs)         -
.disable                        method -         b            -
.get_all_plugins                method -         a{sa{ss}}    -
.get_plugin_documentation       method s         s            -
.get_plugin_hints               method s         a{ss}        -
.instance_acquire_devices       method ss        (bs)         -
.is_running                     method -         b            -
.log_capture_finish             method s         s            -
.log_capture_start              method ii        s            -
.post_loaded_profile            method -         s            -
.profile_info                   method s         (bsss)       -
.profile_mode                   method -         (ss)         -
.profiles                       method -         as           -
.profiles2                      method -         a(ss)        -
.recommend_profile              method -         s            -
.register_socket_signal_path    method s         b            -
.reload                         method -         b            -
.start                          method -         b            -
.stop                           method -         b            -
.switch_profile                 method s         (bs)         -
.verify_profile                 method -         b            -
.verify_profile_ignore_missing  method -         b            -
.profile_changed                signal sbs       -            -

You can find descriptions of the different available methods in the TuneD upstream repository.
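If you only need the method names from the introspection output above, for example to check whether the installed TuneD version provides a particular method, you can filter the TYPE column. The following is a minimal sketch; tuned_dbus_methods is a hypothetical helper that keeps rows whose second column is "method" and drops the leading dot from the name.

```shell
# Sketch: reduce "busctl introspect" output to bare method names, dropping
# the leading dot and any signal or property rows.
tuned_dbus_methods() {
    awk '$2 == "method" { sub(/^\./, "", $1); print $1 }'
}

# Example (requires a running TuneD service):
#   busctl introspect com.redhat.tuned /Tuned com.redhat.tuned.control | tuned_dbus_methods
```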

2.16.2. Using the TuneD D-Bus interface to change the active TuneD profile

You can replace the active TuneD profile with your desired TuneD profile by using the TuneD D-Bus interface.

Prerequisites

- The TuneD service is running. See Installing and Enabling TuneD for details.

Procedure

To change the active TuneD profile, run:

$ busctl call com.redhat.tuned /Tuned com.redhat.tuned.control switch_profile s profile
(bs) true "OK"

Replace profile with the name of your desired profile.

Verification

To view the current active TuneD profile, run:

$ busctl call com.redhat.tuned /Tuned com.redhat.tuned.control active_profile
s "profile"
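busctl prints each reply as its D-Bus type signature followed by the values, as in the two outputs above: s "profile" for a string, and (bs) true "OK" for a boolean and string pair. The following sketch wraps both replies for use in scripts. busctl_string and busctl_switch_ok are hypothetical helpers, and they assume simple values without embedded escaped quotes.

```shell
# Sketch: print the string from a reply such as: s "throughput-performance"
busctl_string() {
    sed -n 's/^s "\(.*\)"$/\1/p'
}

# Sketch: succeed only if a switch_profile reply reports true,
# as in: (bs) true "OK"
busctl_switch_ok() {
    grep -q '^(bs) true '
}

# Example (requires a running TuneD service):
#   busctl call com.redhat.tuned /Tuned com.redhat.tuned.control \
#       switch_profile s balanced | busctl_switch_ok && echo switched
#   busctl call com.redhat.tuned /Tuned com.redhat.tuned.control \
#       active_profile | busctl_string
```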

2.17. Disabling TuneD

This procedure disables TuneD and resets all affected system settings to their original state before TuneD modified them.

Procedure

To disable all tunings temporarily:

# tuned-adm off

The tunings are applied again after the TuneD service restarts.

Alternatively, to stop and disable the TuneD service permanently:

# systemctl disable --now tuned

Additional resources

- tuned-adm(8) man page

Chapter 3. Customizing TuneD profiles

You can create or modify TuneD profiles to optimize system performance for your intended use case.

Prerequisites

- Install and enable TuneD as described in Installing and Enabling TuneD.

3.1. TuneD profiles

A detailed analysis of a system can be very time-consuming. TuneD provides a number of predefined profiles for typical use cases. You can also create, modify, and delete profiles.

The profiles provided with TuneD are divided into the following categories:

- Power-saving profiles

- Performance-boosting profiles

The performance-boosting profiles include profiles that focus on the following aspects:

- Low latency for storage and network

- High throughput for storage and network

- Virtual machine performance

- Virtualization host performance

Syntax of profile configuration

The tuned.conf file can contain one [main] section and other sections for configuring plug-in instances. However, all sections are optional.

Lines starting with the hash sign (#) are comments.

Additional resources

-

tuned.conf(5)man page.

3.2. The default TuneD profile

During the installation, the best profile for your system is selected automatically. Currently, the default profile is selected according to the following customizable rules:

| Environment | Default profile | Goal |

|---|---|---|

| Compute nodes |

| The best throughput performance |

| Virtual machines |

|

The best performance. If you are not interested in the best performance, you can change it to the |

| Other cases |

| Balanced performance and power consumption |

Additional resources

-

tuned.conf(5)man page.

3.3. Merged TuneD profiles

As an experimental feature, you can select multiple profiles at once. TuneD tries to merge them when loading.

If there are conflicts, the settings from the last specified profile take precedence.

Example 3.1. Low power consumption in a virtual guest

The following example optimizes the system to run in a virtual machine for the best performance and concurrently tunes it for low power consumption, with low power consumption taking priority:

# tuned-adm profile virtual-guest powersave

Merging is done automatically without checking whether the resulting combination of parameters makes sense. Consequently, the feature might tune some parameters in opposite directions, which can be counterproductive: for example, setting the disk for high throughput by using the throughput-performance profile and concurrently setting the disk spindown to a low value by using the spindown-disk profile.

Additional resources

- tuned-adm(8) man page

- tuned.conf(5) man page

3.4. The location of TuneD profiles

TuneD stores profiles in the following directories:

/usr/lib/tuned/

Distribution-specific profiles are stored in this directory. Each profile has its own directory. The profile consists of the main configuration file called tuned.conf, and optionally other files, for example helper scripts.

/etc/tuned/

If you need to customize a profile, copy the profile directory into this directory, which is used for custom profiles. If there are two profiles of the same name, the custom profile located in /etc/tuned/ is used.
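The precedence rule above can be modeled in a few lines of Python. This is a minimal sketch, not TuneD's own lookup code: the names find_profile_dir, DEFAULT_SEARCH_DIRS, and demo are hypothetical, and the only assumption carried over from the text is that /etc/tuned/ is searched before /usr/lib/tuned/.

```python
import tempfile
from pathlib import Path
from typing import Optional, Sequence

# Search order assumed from the text: custom profiles first, then distribution profiles.
DEFAULT_SEARCH_DIRS = ("/etc/tuned", "/usr/lib/tuned")


def find_profile_dir(name: str, search_dirs: Sequence[str] = DEFAULT_SEARCH_DIRS) -> Optional[Path]:
    """Return the first <dir>/<name> that contains a tuned.conf file, or None."""
    for base in search_dirs:
        candidate = Path(base) / name
        if (candidate / "tuned.conf").is_file():
            return candidate
    return None


def demo() -> str:
    """Create the same profile in two throwaway locations and report which one wins."""
    with tempfile.TemporaryDirectory() as root:
        custom, dist = Path(root, "etc-tuned"), Path(root, "usr-lib-tuned")
        for base in (custom, dist):
            (base / "balanced").mkdir(parents=True)
            (base / "balanced" / "tuned.conf").write_text("[main]\n")
        found = find_profile_dir("balanced", [str(custom), str(dist)])
        return found.parent.name if found else ""


if __name__ == "__main__":
    print(demo())  # etc-tuned
```

Because the custom directory is listed first, a profile copied into it shadows the distribution copy without modifying it.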

Additional resources

- tuned.conf(5) man page

3.5. Inheritance between TuneD profiles

TuneD profiles can be based on other profiles and modify only certain aspects of their parent profile.

The [main] section of TuneD profiles recognizes the include option:

[main]
include=parent

All settings from the parent profile are loaded in this child profile. In the following sections, the child profile can override certain settings inherited from the parent profile or add new settings not present in the parent profile.

You can create your own child profile in the /etc/tuned/ directory based on a pre-installed profile in /usr/lib/tuned/ with only some parameters adjusted.

If the parent profile is updated, such as after a TuneD upgrade, the changes are reflected in the child profile.

Example 3.2. A power-saving profile based on balanced

The following is an example of a custom profile that extends the balanced profile and sets Aggressive Link Power Management (ALPM) for all devices to maximum power saving.

[main]
include=balanced

[scsi_host]
alpm=min_power
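To illustrate how include resolves, the following Python sketch loads the parent named by include=, then lets the child override or add settings section by section. It is a simplified model, not TuneD's loader: PARENTS is a heavily trimmed, hypothetical stand-in for the real balanced profile, and the sketch ignores variables, multiple includes, and plug-in options such as replace.

```python
import configparser
from typing import Dict

Profile = Dict[str, Dict[str, str]]

# Hypothetical, trimmed stand-in for /usr/lib/tuned/balanced/tuned.conf.
PARENTS = {
    "balanced": "[main]\nsummary=General non-specialized TuneD profile\n\n[scsi_host]\nalpm=medium_power\n",
}

CHILD = "[main]\ninclude=balanced\n\n[scsi_host]\nalpm=min_power\n"


def parse(text: str) -> Profile:
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep option names exactly as written
    parser.read_string(text)
    return {section: dict(parser[section]) for section in parser.sections()}


def resolve(text: str) -> Profile:
    """Load the parent named by include=, then overlay the child's settings on top."""
    child = parse(text)
    parent_name = child.get("main", {}).get("include")
    merged: Profile = resolve(PARENTS[parent_name]) if parent_name else {}
    for section, options in child.items():
        merged.setdefault(section, {}).update(options)  # child values win
    merged.get("main", {}).pop("include", None)
    return merged
```

Resolving CHILD keeps the parent's summary but ends up with alpm=min_power, which mirrors the behavior described for Example 3.2.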

Additional resources

- tuned.conf(5) man page

3.6. Static and dynamic tuning in TuneD

Understanding the difference between the two categories of system tuning that TuneD applies, static and dynamic, is important when determining which one to use for a given situation or purpose.

- Static tuning

Mainly consists of the application of predefined sysctl and sysfs settings and one-shot activation of several configuration tools such as ethtool.

- Dynamic tuning

Watches how various system components are used throughout the uptime of your system. TuneD adjusts system settings dynamically based on that monitoring information.

For example, the hard drive is used heavily during startup and login, but is barely used later when the user might mainly work with applications such as web browsers or email clients. Similarly, the CPU and network devices are used differently at different times. TuneD monitors the activity of these components and reacts to the changes in their use.

By default, dynamic tuning is disabled. To enable it, edit the /etc/tuned/tuned-main.conf file and change the dynamic_tuning option to 1. TuneD then periodically analyzes system statistics and uses them to update your system tuning settings. To configure the time interval in seconds between these updates, use the update_interval option.

Currently implemented dynamic tuning algorithms try to balance performance and power saving, and are therefore disabled in the performance profiles. Dynamic tuning for individual plug-ins can be enabled or disabled in the TuneD profiles.

Example 3.3. Static and dynamic tuning on a workstation

On a typical office workstation, the Ethernet network interface is inactive most of the time. Only a few emails go in and out or some web pages might be loaded.

For those kinds of loads, the network interface does not have to run at full speed all the time, as it does by default. TuneD has a monitoring and tuning plug-in for network devices that can detect this low activity and then automatically lower the speed of that interface, typically resulting in a lower power usage.

If the activity on the interface increases for a longer period of time, for example because a DVD image is being downloaded or an email with a large attachment is opened, TuneD detects this and sets the interface speed to maximum to offer the best performance while the activity level is high.

This principle is used for other plug-ins for CPU and disks as well.

3.7. TuneD plug-ins

Plug-ins are modules in TuneD profiles that TuneD uses to monitor or optimize different devices on the system.

TuneD uses two types of plug-ins:

- Monitoring plug-ins

Monitoring plug-ins are used to get information from a running system. The output of the monitoring plug-ins can be used by tuning plug-ins for dynamic tuning.

Monitoring plug-ins are automatically instantiated whenever their metrics are needed by any of the enabled tuning plug-ins. If two tuning plug-ins require the same data, only one instance of the monitoring plug-in is created and the data is shared.

- Tuning plug-ins

Each tuning plug-in tunes an individual subsystem and takes several parameters that are populated from the TuneD profiles. Each subsystem can have multiple devices, such as multiple CPUs or network cards, that are handled by individual instances of the tuning plug-ins. Specific settings for individual devices are also supported.

Syntax for plug-ins in TuneD profiles

Sections describing plug-in instances are formatted in the following way:

[NAME]
type=TYPE
devices=DEVICES

- NAME

is the name of the plug-in instance as it is used in the logs. It can be an arbitrary string.

- TYPE

is the type of the tuning plug-in.

- DEVICES

is the list of devices that this plug-in instance handles.

The devices line can contain a list, a wildcard (*), and negation (!). If there is no devices line, all devices of the TYPE that are present or later attached on the system are handled by the plug-in instance. This is the same as using the devices=* option.

Example 3.4. Matching block devices with a plug-in

The following example matches all block devices starting with sd, such as sda or sdb, and does not disable barriers on them:

[data_disk]
type=disk
devices=sd*
disable_barriers=false

The following example matches all block devices except sda1 and sda2:

[data_disk]
type=disk
devices=!sda1, !sda2
disable_barriers=false
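The matching rules above can be sketched with Python's fnmatch module. This is a simplified model under stated assumptions, not TuneD's device matcher: match_devices and BLOCK_DEVICES are hypothetical, and the sketch assumes that when only negated patterns are given, every device is a candidate, as if devices=* were also present.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List

# Hypothetical device names used only for illustration.
BLOCK_DEVICES = ["sda", "sda1", "sda2", "sdb", "nvme0n1"]


def match_devices(rule: str, devices: Iterable[str]) -> List[str]:
    """Return the devices selected by a devices= value such as "sd*" or "!sda1, !sda2"."""
    patterns = [p.strip() for p in rule.split(",") if p.strip()]
    include = [p for p in patterns if not p.startswith("!")] or ["*"]  # assumed implicit "*"
    exclude = [p[1:] for p in patterns if p.startswith("!")]           # strip the "!" prefix
    return [
        dev for dev in devices
        if any(fnmatchcase(dev, pat) for pat in include)
        and not any(fnmatchcase(dev, pat) for pat in exclude)
    ]
```

With these inputs, "sd*" selects sda, sda1, sda2, and sdb, while "!sda1, !sda2" selects everything except the two partitions.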

If no instance of a plug-in is specified, the plug-in is not enabled.

If the plug-in supports more options, they can also be specified in the plug-in section. If an option is not specified and it was not previously specified in the included plug-in, the default value is used.

Short plug-in syntax

If you do not need custom names for the plug-in instance and there is only one definition of the instance in your configuration file, TuneD supports the following short syntax:

[TYPE]
devices=DEVICES

In this case, you can omit the type line. The instance is then referred to by a name that is the same as the type. The previous example could then be rewritten as:

Example 3.5. Matching block devices using the short syntax

[disk]
devices=sd*
disable_barriers=false

Conflicting plug-in definitions in a profile

If the same section is specified more than once using the include option, the settings are merged. If they cannot be merged due to a conflict, the last conflicting definition overrides the previous settings. If you do not know what was previously defined, you can use the replace Boolean option and set it to true. This causes all the previous definitions with the same name to be overwritten and the merge does not happen.

You can also disable the plug-in by specifying the enabled=false option. This has the same effect as if the instance was never defined. Disabling the plug-in is useful if you are redefining the previous definition from the include option and do not want the plug-in to be active in your custom profile.
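The interaction of merging, replace=true, and enabled=false can be summarized as a small function. This is a minimal Python sketch of the rules described above, not TuneD's implementation: merge_section is a hypothetical name, and it assumes the Boolean options are written as the literal strings true and false.

```python
from typing import Dict, Optional

Options = Dict[str, str]


def merge_section(previous: Optional[Options], new: Options) -> Optional[Options]:
    """Combine an inherited plug-in section with a later definition of the same name.

    Returns None when the resulting plug-in instance is disabled.
    """
    if new.get("replace", "false").lower() == "true":
        merged = dict(new)               # replace=true: discard everything defined earlier
    else:
        merged = dict(previous or {})
        merged.update(new)               # conflicting options: the last definition wins
    merged.pop("replace", None)
    if merged.get("enabled", "true").lower() == "false":
        return None                      # same effect as never defining the instance
    return merged
```

For example, redefining only devices keeps the inherited type, whereas adding replace=true drops every option that the new definition does not repeat.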

Note

TuneD includes the ability to run any shell command as part of enabling or disabling a tuning profile. This enables you to extend TuneD profiles with functionality that has not been integrated into TuneD yet.

You can specify arbitrary shell commands using the script plug-in.

Additional resources

- tuned.conf(5) man page

3.8. Available TuneD plug-ins

Monitoring plug-ins

Currently, the following monitoring plug-ins are implemented:

disk - Gets disk load (number of IO operations) per device and measurement interval.

net - Gets network load (number of transferred packets) per network card and measurement interval.

load - Gets CPU load per CPU and measurement interval.

Tuning plug-ins

Currently, the following tuning plug-ins are implemented. Only some of these plug-ins implement dynamic tuning. Options supported by plug-ins are also listed:

cpu

Sets the CPU governor to the value specified by the governor option and dynamically changes the Power Management Quality of Service (PM QoS) CPU Direct Memory Access (DMA) latency according to the CPU load.

If the CPU load is lower than the value specified by the load_threshold option, the latency is set to the value specified by the latency_high option, otherwise it is set to the value specified by latency_low.

You can also force the latency to a specific value and prevent it from dynamically changing further. To do so, set the force_latency option to the required latency value.

eeepc_she

Dynamically sets the front-side bus (FSB) speed according to the CPU load.

This feature can be found on some netbooks and is also known as the ASUS Super Hybrid Engine (SHE).

If the CPU load is lower than or equal to the value specified by the load_threshold_powersave option, the plug-in sets the FSB speed to the value specified by the she_powersave option. If the CPU load is higher than or equal to the value specified by the load_threshold_normal option, it sets the FSB speed to the value specified by the she_normal option.

Static tuning is not supported and the plug-in is transparently disabled if TuneD does not detect the hardware support for this feature.

net

Configures the Wake-on-LAN functionality to the values specified by the wake_on_lan option. It uses the same syntax as the ethtool utility. It also dynamically changes the interface speed according to the interface utilization.

sysctl

Sets various sysctl settings specified by the plug-in options.

The syntax is name=value, where name is the same as the name provided by the sysctl utility.

Use the sysctl plug-in if you need to change system settings that are not covered by other plug-ins available in TuneD. If the settings are covered by some specific plug-ins, prefer these plug-ins.

usb

Sets the autosuspend timeout of USB devices to the value specified by the autosuspend parameter.

The value 0 means that autosuspend is disabled.

vm

Enables or disables transparent huge pages depending on the value of the transparent_hugepages option.

Valid values of the transparent_hugepages option are:

- "always"
- "never"
- "madvise"

audio

Sets the autosuspend timeout for audio codecs to the value specified by the timeout option.

Currently, the snd_hda_intel and snd_ac97_codec codecs are supported. The value 0 means that the autosuspend is disabled. You can also enforce the controller reset by setting the Boolean option reset_controller to true.

disk

Sets the disk elevator to the value specified by the elevator option.

It also sets:

- APM to the value specified by the apm option
- Scheduler quantum to the value specified by the scheduler_quantum option
- Disk spindown timeout to the value specified by the spindown option
- Disk readahead to the value specified by the readahead parameter
- The current disk readahead to a value multiplied by the constant specified by the readahead_multiply option

In addition, this plug-in dynamically changes the advanced power management and spindown timeout setting for the drive according to the current drive utilization. The dynamic tuning can be controlled by the Boolean option dynamic and is enabled by default.

scsi_host

Tunes options for SCSI hosts.

It sets Aggressive Link Power Management (ALPM) to the value specified by the alpm option.

mounts

Enables or disables barriers for mounts according to the Boolean value of the disable_barriers option.

script

Executes an external script or binary when the profile is loaded or unloaded. You can choose an arbitrary executable.

Important

The script plug-in is provided mainly for compatibility with earlier releases. Prefer other TuneD plug-ins if they cover the required functionality.

TuneD calls the executable with one of the following arguments:

- start when loading the profile
- stop when unloading the profile

You need to correctly implement the stop action in your executable and revert all settings that you changed during the start action. Otherwise, the roll-back step after changing your TuneD profile will not work.

Bash scripts can import the /usr/lib/tuned/functions Bash library and use the functions defined there. Use these functions only for functionality that is not natively provided by TuneD. If a function name starts with an underscore, such as _wifi_set_power_level, consider the function private and do not use it in your scripts, because it might change in the future.

Specify the path to the executable using the script parameter in the plug-in configuration.

Example 3.6. Running a Bash script from a profile

To run a Bash script named script.sh that is located in the profile directory, use:

[script]
script=${i:PROFILE_DIR}/script.sh

sysfs

Sets various sysfs settings specified by the plug-in options.

The syntax is name=value, where name is the sysfs path to use.

Use this plug-in if you need to change settings that are not covered by other plug-ins. Prefer specific plug-ins if they cover the required settings.

video

Sets various powersave levels on video cards. Currently, only the Radeon cards are supported.

The powersave level can be specified by using the radeon_powersave option. Supported values are:

- default
- auto
- low
- mid
- high
- dynpm
- dpm-battery
- dpm-balanced
- dpm-performance

For details, see www.x.org. Note that this plug-in is experimental and the option might change in future releases.

bootloader

Adds options to the kernel command line. This plug-in supports only the GRUB 2 boot loader.

A customized, non-standard location of the GRUB 2 configuration file can be specified by the grub2_cfg_file option.

The kernel options are added to the current GRUB configuration and its templates. The system needs to be rebooted for the kernel options to take effect.

Switching to another profile or manually stopping the TuneD service removes the additional options. If you shut down or reboot the system, the kernel options persist in the grub.cfg file.

The kernel options can be specified by the following syntax:

cmdline=arg1 arg2 ... argN

Example 3.7. Modifying the kernel command line

For example, to add the quiet kernel option to a TuneD profile, include the following lines in the tuned.conf file:

[bootloader]
cmdline=quiet

The following is an example of a custom profile that adds the isolcpus=2 option to the kernel command line:

[bootloader]
cmdline=isolcpus=2

service

Handles various sysvinit, sysv-rc, openrc, and systemd services specified by the plug-in options.

The syntax is service.service_name=command[,file:file].

Supported service-handling commands are:

- start
- stop
- enable
- disable

Separate multiple commands using either a comma (,) or a semicolon (;). If the directives conflict, the service plug-in uses the last listed one.

Use the optional file:file directive to install an overlay configuration file, file, for systemd only. Other init systems ignore this directive. The service plug-in copies overlay configuration files to /etc/systemd/system/service_name.service.d/ directories. Once profiles are unloaded, the service plug-in removes these directories if they are empty.

Note

The service plug-in only operates on the current runlevel with non-systemd init systems.

Example 3.8. Starting and enabling the sendmail service with an overlay file

[service]
service.sendmail=start,enable,file:${i:PROFILE_DIR}/tuned-sendmail.conf

The internal variable ${i:PROFILE_DIR} points to the directory the plug-in loads the profile from.

scheduler - Offers a variety of options for the tuning of scheduling priorities, CPU core isolation, and process, thread, and IRQ affinities.

For specifics of the different options available, see Functionalities of the scheduler TuneD plug-in.
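The cpu and eeepc_she entries in the plug-in list above both pick a value by comparing CPU load against thresholds. The following Python functions are a minimal sketch of those two rules as the text states them, not TuneD code: the function names and the sample numbers in the usage note are hypothetical, and keeping the current FSB speed when the load falls between the two eeepc_she thresholds is an assumption, because the text does not say what happens in that case.

```python
from typing import Optional


def cpu_latency(load: float, load_threshold: float, latency_low: int,
                latency_high: int, force_latency: Optional[int] = None) -> int:
    """PM QoS CPU DMA latency chosen by the cpu plug-in rule."""
    if force_latency is not None:
        return force_latency  # fixed value, no further dynamic changes
    # Low load tolerates a higher wake-up latency; high load gets the low latency.
    return latency_high if load < load_threshold else latency_low


def she_speed(load: float, threshold_powersave: float, threshold_normal: float,
              she_powersave: int, she_normal: int, current: int) -> int:
    """FSB speed chosen by the eeepc_she plug-in rule."""
    if load <= threshold_powersave:
        return she_powersave
    if load >= threshold_normal:
        return she_normal
    return current  # assumption: between the thresholds, leave the speed unchanged
```

For instance, with a load_threshold of 0.2, a load of 0.1 selects latency_high and a load of 0.5 selects latency_low, unless force_latency is set.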

3.9. Functionalities of the scheduler TuneD plugin

Use the scheduler TuneD plugin to control and tune scheduling priorities, CPU core isolation, and process, thread, and IRQ affinities.

CPU isolation

To prevent processes, threads, and IRQs from using certain CPUs, use the isolated_cores option. It changes process and thread affinities, IRQ affinities, and sets the default_smp_affinity parameter for IRQs.

The CPU affinity mask is adjusted for all processes and threads matching the ps_whitelist option, subject to success of the sched_setaffinity() system call. The default setting of the ps_whitelist regular expression is .* to match all processes and thread names. To exclude certain processes and threads, use the ps_blacklist option. The value of this option is also interpreted as a regular expression. Process and thread names are matched against that expression. Profile rollback enables all matching processes and threads to run on all CPUs, and restores the IRQ settings prior to the profile application.

The ps_whitelist and ps_blacklist options support multiple regular expressions separated by ;. An escaped semicolon \; is taken literally.

Example 3.9. Isolate CPUs 2-4

The following configuration isolates CPUs 2-4. Processes and threads that match the ps_blacklist regular expression can use any CPUs regardless of the isolation:

[scheduler]
isolated_cores=2-4
ps_blacklist=.*pmd.*;.*PMD.*;^DPDK;.*qemu-kvm.*
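The splitting rule for these options can be sketched in Python with a negative lookbehind, so that only unescaped semicolons separate expressions. This is an illustration, not TuneD's code: split_patterns and is_blacklisted are hypothetical names, and the sketch assumes that a name is excluded when any expression matches anywhere in it (re.search semantics).

```python
import re
from typing import List

PS_BLACKLIST = ".*pmd.*;.*PMD.*;^DPDK;.*qemu-kvm.*"


def split_patterns(value: str) -> List[str]:
    """Split on semicolons that are not preceded by a backslash."""
    parts = re.split(r"(?<!\\);", value)
    # An escaped semicolon stands for a literal ";" inside one expression.
    return [p.replace(r"\;", ";") for p in parts if p]


def is_blacklisted(name: str, value: str) -> bool:
    """True if any of the expressions matches the process or thread name."""
    return any(re.search(pattern, name) for pattern in split_patterns(value))
```

With the value from Example 3.9, a thread named qemu-kvm or DPDK-worker would be left free to run on any CPU, while sshd would be confined to the non-isolated cores.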

IRQ SMP affinity

The /proc/irq/default_smp_affinity file contains a bitmask representing the default target CPU cores on a system for all inactive interrupt request (IRQ) sources. Once an IRQ is activated or allocated, the value in the /proc/irq/default_smp_affinity file determines the IRQ’s affinity bitmask.

The default_irq_smp_affinity parameter controls what TuneD writes to the /proc/irq/default_smp_affinity file. The default_irq_smp_affinity parameter supports the following values and behaviors:

calc

Calculates the content of the /proc/irq/default_smp_affinity file from the isolated_cores parameter. An inversion of the isolated_cores parameter calculates the non-isolated cores.

The intersection of the non-isolated cores and the previous content of the /proc/irq/default_smp_affinity file is then written to the /proc/irq/default_smp_affinity file.

This is the default behavior if the default_irq_smp_affinity parameter is omitted.

ignore

TuneD does not modify the /proc/irq/default_smp_affinity file.

A CPU list

Takes the form of a single number such as 1, a comma-separated list such as 1,3, or a range such as 3-5.

Unpacks the CPU list and writes it directly to the /proc/irq/default_smp_affinity file.

Example 3.10. Setting the default IRQ smp affinity using an explicit CPU list

The following example uses an explicit CPU list to set the default IRQ SMP affinity to CPUs 0 and 2:

[scheduler]
isolated_cores=1,3
default_irq_smp_affinity=0,2
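The three behaviors of default_irq_smp_affinity can be modeled with integer bitmasks, where bit N stands for CPU N. This Python sketch is illustrative only: parse_cpulist, to_mask, and default_irq_mask are hypothetical names, the CPU count is passed in explicitly, and the masks are plain integers rather than the hexadecimal text that the kernel file contains.

```python
from typing import Iterable, Set


def parse_cpulist(text: str) -> Set[int]:
    """Expand "1", "1,3", or "3-5" style CPU lists into a set of CPU numbers."""
    cpus: Set[int] = set()
    for part in filter(None, (p.strip() for p in text.split(","))):
        start, _, end = part.partition("-")
        cpus.update(range(int(start), int(end or start) + 1))
    return cpus


def to_mask(cpus: Iterable[int]) -> int:
    mask = 0
    for cpu in cpus:
        mask |= 1 << cpu  # set bit N for CPU N
    return mask


def default_irq_mask(setting: str, isolated_cores: str, previous_mask: int, ncpus: int) -> int:
    """Mask written to /proc/irq/default_smp_affinity in this simplified model."""
    if setting == "ignore":
        return previous_mask  # file left untouched
    if setting == "calc":
        non_isolated = set(range(ncpus)) - parse_cpulist(isolated_cores)  # inversion
        return to_mask(non_isolated) & previous_mask                      # intersection
    return to_mask(parse_cpulist(setting))  # explicit CPU list
```

On a 4-CPU system with isolated_cores=1,3, both calc (starting from an all-CPUs mask) and the explicit list 0,2 from Example 3.10 produce the mask 0b0101, that is, CPUs 0 and 2.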

Scheduling policy

To adjust scheduling policy, priority and affinity for a group of processes or threads, use the following syntax:

group.groupname=rule_prio:sched:prio:affinity:regex

where rule_prio defines the internal TuneD priority of the rule. Rules are sorted based on priority, which allows inheriting profiles to reorder previously defined rules. Rules with equal rule_prio should be processed in the order in which they were defined. However, this is Python interpreter dependent. To disable an inherited rule for groupname, use:

group.groupname=

sched must be one of the following:

f - for first in, first out (FIFO)

b - for batch

r - for round robin

o - for other

* - for do not change

affinity is CPU affinity in hexadecimal. Use * for no change.

prio is scheduling priority (see chrt -m).

regex is a Python regular expression. It is matched against the output of the ps -eo cmd command.

Any given process name can match more than one group. In such cases, the last matching regex determines the priority and scheduling policy.

Example 3.11. Setting scheduling policies and priorities

The following example sets the scheduling policy and priorities for kernel threads and the watchdog:

[scheduler]
group.kthreads=0:*:1:*:\[.*\]$
group.watchdog=0:f:99:*:\[watchdog.*\]
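The group syntax and the "last matching regex wins" rule can be sketched in Python. This is a hypothetical model, not TuneD's parser: GroupRule, parse_group, and rule_for are illustrative names, rules are assumed to be sorted by ascending rule_prio, and regular expressions are assumed to be applied with re.search. Splitting at most four times keeps any colons inside the regex intact.

```python
import re
from typing import List, NamedTuple, Optional


class GroupRule(NamedTuple):
    name: str
    rule_prio: int
    sched: str     # f, b, r, o, or * for "do not change"
    prio: str      # scheduling priority
    affinity: str  # hexadecimal CPU mask, or * for "do not change"
    regex: str


def parse_group(option: str, value: str) -> GroupRule:
    """Parse group.NAME=rule_prio:sched:prio:affinity:regex."""
    name = option.split(".", 1)[1]
    rule_prio, sched, prio, affinity, regex = value.split(":", 4)
    return GroupRule(name, int(rule_prio), sched, prio, affinity, regex)


def rule_for(cmd: str, rules: List[GroupRule]) -> Optional[GroupRule]:
    """Return the rule that applies to a command line: the last matching one."""
    winner = None
    for rule in sorted(rules, key=lambda r: r.rule_prio):  # stable: equal priorities keep order
        if re.search(rule.regex, cmd):
            winner = rule
    return winner
```

Applied to Example 3.11, a kernel thread shown by ps as [watchdog/0] matches both rules, and the watchdog rule wins because it is defined later.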

The scheduler plugin uses a perf event loop to identify newly created processes. By default, it listens to perf.RECORD_COMM and perf.RECORD_EXIT events.

Setting the perf_process_fork parameter to true tells the plug-in to also listen to perf.RECORD_FORK events, meaning that child processes created by the fork() system call are processed.

Processing perf events can impose significant CPU overhead.

The CPU overhead of the scheduler plugin can be mitigated by setting the scheduler runtime option to 0. This completely disables the dynamic scheduler functionality, and perf events are not monitored or acted upon. The disadvantage is that process and thread tuning is done only when the profile is applied.

Example 3.12. Disabling the dynamic scheduler functionality

The following example disables the dynamic scheduler functionality while also isolating CPUs 1 and 3:

[scheduler]
runtime=0
isolated_cores=1,3

The plugin uses an mmapped buffer for perf events. Under heavy loads, this buffer might overflow, and as a result the plugin might start missing events and fail to process some newly created processes. In such cases, use the perf_mmap_pages parameter to increase the buffer size. The value of the perf_mmap_pages parameter must be a power of 2. If the perf_mmap_pages parameter is not manually set, a default value of 128 is used.
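When choosing a larger perf_mmap_pages value, the power-of-2 requirement is easy to check with a bit trick. The following Python sketch is a hypothetical helper, not part of TuneD: it validates a configured value, falls back to the documented default of 128, and suggests the next valid size.

```python
from typing import Optional

DEFAULT_PERF_MMAP_PAGES = 128  # value used when perf_mmap_pages is not set


def is_power_of_two(n: int) -> bool:
    # A power of two has exactly one bit set, so clearing the lowest set bit leaves 0.
    return n > 0 and n & (n - 1) == 0


def next_power_of_two(n: int) -> int:
    """Smallest power of two that is greater than or equal to n."""
    return 1 if n <= 1 else 1 << (n - 1).bit_length()


def effective_mmap_pages(configured: Optional[int]) -> int:
    if configured is None:
        return DEFAULT_PERF_MMAP_PAGES
    if not is_power_of_two(configured):
        raise ValueError(f"perf_mmap_pages must be a power of 2, got {configured}")
    return configured
```

For example, if roughly 300 pages seem necessary, next_power_of_two(300) suggests 512.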

Confinement using cgroups

The scheduler plugin supports process and thread confinement using cgroups v1.

The cgroup_mount_point option specifies the path at which to mount the cgroup file system, or where TuneD expects it to be mounted. If it is unset, /sys/fs/cgroup/cpuset is expected.

If the cgroup_groups_init option is set to 1, TuneD creates and removes all cgroups defined with the cgroup* options. This is the default behavior. If the cgroup_groups_init option is set to 0, the cgroups must be preset by other means.

If the cgroup_mount_point_init option is set to 1, TuneD creates and removes the cgroup mount point. It implies cgroup_groups_init = 1. If the cgroup_mount_point_init option is set to 0, you must preset the cgroup mount point by other means. This is the default behavior.

The cgroup_for_isolated_cores option is the cgroup name for the isolated_cores option functionality. For example, if a system has 4 CPUs, isolated_cores=1 means that TuneD moves all processes and threads to CPUs 0, 2, and 3. The scheduler plug-in isolates the specified core by writing the calculated CPU affinity to the cpuset.cpus control file of the specified cgroup and moves all the matching processes and threads to this group. If this option is unset, classic cpuset affinity using sched_setaffinity() sets the CPU affinity.

The cgroup.cgroup_name option defines affinities for arbitrary cgroups. You can even use hierarchical cgroups, but you must specify the hierarchy in the correct order. TuneD does not do any sanity checks here, with the exception that it forces the cgroup to be in the location specified by the cgroup_mount_point option.

The syntax of the scheduler option starting with group. has been augmented to use cgroup.cgroup_name instead of the hexadecimal affinity. The matching processes are moved to the cgroup cgroup_name. You can also use cgroups not defined by the cgroup. option as described above, for example, cgroups not managed by TuneD.

All cgroup names are sanitized by replacing all periods (.) with slashes (/). This prevents the plugin from writing outside the location specified by the cgroup_mount_point option.
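The sanitization rule can be sketched in Python. Because every period becomes a slash, a name can never produce a ".." path component. This is a minimal illustration, not TuneD's code: cgroup_path is a hypothetical name, and stripping leading slashes is an extra assumption of the sketch so that the joined path always stays under the mount point.

```python
import posixpath


def cgroup_path(cgroup_name: str, mount_point: str = "/sys/fs/cgroup/cpuset") -> str:
    """Map a cgroup name from a profile to a directory under the mount point."""
    # "parent.child" becomes "parent/child"; "..etc" becomes "//etc", never "../etc".
    relative = cgroup_name.replace(".", "/").lstrip("/")  # lstrip: assumption of this sketch
    return posixpath.normpath(posixpath.join(mount_point, relative))
```

For example, the name parent.child resolves to /sys/fs/cgroup/cpuset/parent/child, which is how a period expresses a cgroup hierarchy.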

Example 3.13. Using cgroups v1 with the scheduler plug-in

The following example creates 2 cgroups, group1 and group2. It sets the cgroup group1 affinity to CPU 2 and the cgroup group2 to CPUs 0 and 2. Given a 4 CPU setup, the isolated_cores=1 option moves all processes and threads to CPU cores 0, 2, and 3. Processes and threads specified by the ps_blacklist regular expression are not moved.

[scheduler]
cgroup_mount_point=/sys/fs/cgroup/cpuset
cgroup_mount_point_init=1
cgroup_groups_init=1
cgroup_for_isolated_cores=group
cgroup.group1=2
cgroup.group2=0,2
group.ksoftirqd=0:f:2:cgroup.group1:ksoftirqd.*
ps_blacklist=ksoftirqd.*;rcuc.*;rcub.*;ktimersoftd.*
isolated_cores=1

The cgroup_ps_blacklist option excludes processes belonging to the specified cgroups. The regular expression specified by this option is matched against the cgroup hierarchies listed in /proc/PID/cgroup. Before regular expression matching, the cgroups v1 hierarchies from that file are joined into a single string separated by commas (,). The following is an example of content the regular expression is matched against:

10:hugetlb:/,9:perf_event:/,8:blkio:/

Multiple regular expressions can be separated by semicolons (;). The semicolon represents a logical 'or' operator.

Example 3.14. Excluding processes from the scheduler using cgroups

In the following example, the scheduler plug-in moves all processes away from core 1, except for processes which belong to cgroup /daemons. The \b string is a regular expression metacharacter that matches a word boundary.

[scheduler]
isolated_cores=1
cgroup_ps_blacklist=:/daemons\b

In the following example, the scheduler plugin excludes all processes which belong to a cgroup with a hierarchy-ID of 8 and controller-list blkio.

[scheduler]
isolated_cores=1
cgroup_ps_blacklist=\b8:blkio:
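The matching described above can be reproduced in Python by joining the lines of a process's cgroup file with commas and testing each semicolon-separated expression. This is a sketch under stated assumptions: cgroup_string, excluded_by_cgroup, SAMPLE, and DAEMON are hypothetical, the sketch assumes re.search semantics, and it does not handle escaped semicolons.

```python
import re

# Hypothetical contents of the cgroup file for two processes.
SAMPLE = "10:hugetlb:/\n9:perf_event:/\n8:blkio:/\n"
DAEMON = "10:hugetlb:/daemons\n9:perf_event:/\n8:blkio:/\n"


def cgroup_string(proc_cgroup_text: str) -> str:
    """Join the hierarchy lines with commas, producing the string that is matched."""
    return ",".join(line for line in proc_cgroup_text.splitlines() if line)


def excluded_by_cgroup(proc_cgroup_text: str, blacklist: str) -> bool:
    """True if any ;-separated expression (logical 'or') matches the joined string."""
    target = cgroup_string(proc_cgroup_text)
    return any(re.search(expr, target) for expr in blacklist.split(";") if expr)
```

The joined SAMPLE string is 10:hugetlb:/,9:perf_event:/,8:blkio:/, so \b8:blkio: matches it, while :/daemons\b matches only the process that is in the /daemons cgroup.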

Recent kernels moved some sched_ and numa_balancing_ kernel run-time parameters from the /proc/sys/kernel directory, managed by the sysctl utility, to debugfs, which is typically mounted under the /sys/kernel/debug directory. TuneD provides an abstraction mechanism for the following parameters through the scheduler plugin, so that TuneD writes the specified value to the correct location for the kernel in use:

- sched_min_granularity_ns
- sched_latency_ns
- sched_wakeup_granularity_ns
- sched_tunable_scaling
- sched_migration_cost_ns
- sched_nr_migrate
- numa_balancing_scan_delay_ms
- numa_balancing_scan_period_min_ms
- numa_balancing_scan_period_max_ms
- numa_balancing_scan_size_mb

Example 3.15. Set tasks' "cache hot" value for migration decisions.

On older kernels, setting the following parameter meant that sysctl wrote a value of 500000 to the /proc/sys/kernel/sched_migration_cost_ns file:

[sysctl]
kernel.sched_migration_cost_ns=500000

On more recent kernels, this is equivalent to setting the following parameter through the scheduler plugin:

[scheduler]
sched_migration_cost_ns=500000

This means that TuneD writes a value of 500000 to the /sys/kernel/debug/sched/migration_cost_ns file.
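The two file locations from Example 3.15 can be related by a small Python sketch. It is a hypothetical model, not TuneD's logic: sysctl_path and scheduler_param_path are illustrative names, only sched_* parameters are handled, and the rule "use the debugfs file if it exists, otherwise fall back to /proc/sys/kernel" is an assumption generalized from the single documented example. The exists argument lets the decision be tested without touching the real file system.

```python
import os
import posixpath
from typing import Callable


def sysctl_path(name: str) -> str:
    """kernel.sched_migration_cost_ns -> /proc/sys/kernel/sched_migration_cost_ns."""
    return "/proc/sys/" + name.replace(".", "/")


def scheduler_param_path(name: str, debugfs: str = "/sys/kernel/debug",
                         exists: Callable[[str], bool] = os.path.exists) -> str:
    """Choose the file a sched_* value would be written to (simplified model)."""
    if not name.startswith("sched_"):
        raise ValueError("this sketch only handles sched_* parameters")
    debugfs_file = posixpath.join(debugfs, "sched", name[len("sched_"):])
    # Newer kernels expose the tunable in debugfs; older ones keep it under /proc/sys/kernel.
    return debugfs_file if exists(debugfs_file) else sysctl_path("kernel." + name)
```

On a system where the debugfs file is present, sched_migration_cost_ns maps to /sys/kernel/debug/sched/migration_cost_ns; otherwise it maps to /proc/sys/kernel/sched_migration_cost_ns.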

3.10. Variables in TuneD profiles

Variables expand at run time when a TuneD profile is activated.

Using TuneD variables reduces the amount of necessary typing in TuneD profiles.

There are no predefined variables in TuneD profiles. You can define your own variables by creating the [variables] section in a profile and using the following syntax:

[variables]
variable_name=value

To expand the value of a variable in a profile, use the following syntax:

${variable_name}

Example 3.16. Isolating CPU cores using variables

In the following example, the ${isolated_cores} variable expands to 1,2; hence the kernel boots with the isolcpus=1,2 option:

[variables]

isolated_cores=1,2

[bootloader]

cmdline=isolcpus=${isolated_cores}

The variables can be specified in a separate file. For example, you can add the following lines to tuned.conf:

[variables]

include=/etc/tuned/my-variables.conf

[bootloader]

cmdline=isolcpus=${isolated_cores}

If you add the isolated_cores=1,2 option to the /etc/tuned/my-variables.conf file, the kernel boots with the isolcpus=1,2 option.
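The ${variable_name} syntax happens to match Python's string.Template, which makes the expansion easy to sketch. This is an illustration under assumptions, not TuneD's implementation: expand_profile is a hypothetical name, it handles only a [variables] section inside the same file (not include=), and safe_substitute leaves built-in forms such as ${i:PROFILE_DIR} untouched because they are not valid Template identifiers.

```python
import configparser
from string import Template
from typing import Dict

PROFILE = """\
[variables]
isolated_cores=1,2

[bootloader]
cmdline=isolcpus=${isolated_cores}
"""


def expand_profile(text: str) -> Dict[str, Dict[str, str]]:
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep option names exactly as written
    parser.read_string(text)
    variables = dict(parser["variables"]) if parser.has_section("variables") else {}
    expanded: Dict[str, Dict[str, str]] = {}
    for section in parser.sections():
        if section == "variables":
            continue  # variables are consumed, not applied as settings
        expanded[section] = {
            key: Template(value).safe_substitute(variables)
            for key, value in parser[section].items()
        }
    return expanded
```

Expanding PROFILE yields cmdline=isolcpus=1,2 in the bootloader section, matching Example 3.16.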

Additional resources

- tuned.conf(5) man page

3.11. Built-in functions in TuneD profiles

Built-in functions expand at run time when a TuneD profile is activated.

You can:

- Use various built-in functions together with TuneD variables

- Create custom functions in Python and add them to TuneD in the form of plug-ins

To call a function, use the following syntax:

${f:function_name:argument_1:argument_2}

To expand the directory path where the profile and the tuned.conf file are located, use the PROFILE_DIR function, which requires special syntax:

${i:PROFILE_DIR}

Example 3.17. Isolating CPU cores using variables and built-in functions

In the following example, the ${non_isolated_cores} variable expands to 0,3-5, and the cpulist_invert built-in function is called with the 0,3-5 argument:

[variables]

non_isolated_cores=0,3-5

[bootloader]

cmdline=isolcpus=${f:cpulist_invert:${non_isolated_cores}}

The cpulist_invert function inverts the list of CPUs. For a 6-CPU machine, the inversion is 1,2, and the kernel boots with the isolcpus=1,2 command-line option.

Additional resources

- tuned.conf(5) man page

3.12. Built-in functions available in TuneD profiles

The following built-in functions are available in all TuneD profiles:

PROFILE_DIR - Returns the directory path where the profile and the tuned.conf file are located.

exec - Executes a process and returns its output.

assertion - Compares two arguments. If they do not match, the function logs text from the first argument and aborts profile loading.

assertion_non_equal - Compares two arguments. If they match, the function logs text from the first argument and aborts profile loading.

kb2s - Converts kilobytes to disk sectors.

s2kb - Converts disk sectors to kilobytes.

strip - Creates a string from all passed arguments and deletes both leading and trailing white space.

virt_check - Checks whether TuneD is running inside a virtual machine (VM) or on bare metal:

- Inside a VM, the function returns the first argument.
- On bare metal, the function returns the second argument, even in case of an error.

cpulist_invert - Inverts a list of CPUs to make its complement. For example, on a system with 4 CPUs, numbered from 0 to 3, the inversion of the list 0,2,3 is 1.

cpulist2hex - Converts a CPU list to a hexadecimal CPU mask.

cpulist2hex_invert - Converts a CPU list to a hexadecimal CPU mask and inverts it.

hex2cpulist - Converts a hexadecimal CPU mask to a CPU list.

cpulist_online - Checks whether the CPUs from the list are online. Returns the list containing only online CPUs.

cpulist_present - Checks whether the CPUs from the list are present. Returns the list containing only present CPUs.

cpulist_unpack - Unpacks a CPU list in the form of 1-3,4 to 1,2,3,4.

cpulist_pack - Packs a CPU list in the form of 1,2,3,5 to 1-3,5.
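Several of the CPU-list and unit-conversion functions above are simple enough to model in Python. The following functions share the documented names but are a sketch, not TuneD's implementations: cpulist_invert takes the CPU count as an extra argument instead of reading it from the system, cpulist2hex returns an unpadded lowercase hex string (the exact output formatting TuneD uses is an assumption left open here), and kb2s and s2kb assume 512-byte sectors.

```python
from typing import List, Set


def cpulist_unpack(cpulist: str) -> Set[int]:
    """'1-3,4' -> {1, 2, 3, 4}."""
    cpus: Set[int] = set()
    for part in filter(None, (p.strip() for p in cpulist.split(","))):
        start, _, end = part.partition("-")
        cpus.update(range(int(start), int(end or start) + 1))
    return cpus


def cpulist_pack(cpulist: str) -> str:
    """'1,2,3,5' -> '1-3,5'."""
    runs: List[List[int]] = []
    for cpu in sorted(cpulist_unpack(cpulist)):
        if runs and cpu == runs[-1][1] + 1:
            runs[-1][1] = cpu          # extend the current run
        else:
            runs.append([cpu, cpu])    # start a new run
    return ",".join(str(a) if a == b else f"{a}-{b}" for a, b in runs)


def cpulist_invert(cpulist: str, ncpus: int) -> str:
    """Complement on a machine with CPUs 0..ncpus-1, for example ('0,3-5', 6) -> '1,2'."""
    return ",".join(str(c) for c in sorted(set(range(ncpus)) - cpulist_unpack(cpulist)))


def cpulist2hex(cpulist: str) -> str:
    """'0,2' -> '5' (bit N is set for CPU N)."""
    return format(sum(1 << cpu for cpu in cpulist_unpack(cpulist)), "x")


def hex2cpulist(mask: str) -> str:
    """'5' -> '0,2'; commas between hex groups are ignored."""
    value = int(mask.replace(",", ""), 16)
    return ",".join(str(bit) for bit in range(value.bit_length()) if (value >> bit) & 1)


def kb2s(kb: int) -> int:
    return kb * 2       # 1 KiB = two 512-byte sectors


def s2kb(sectors: int) -> int:
    return sectors // 2
```

These reproduce the worked examples in this chapter: inverting 0,3-5 on a 6-CPU machine gives 1,2, and inverting 0,2,3 on a 4-CPU machine gives 1.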

3.13. Creating new TuneD profiles

This procedure creates a new TuneD profile with custom performance rules.

Prerequisites

- The TuneD service is running. See Installing and Enabling TuneD for details.

Procedure

In the /etc/tuned/ directory, create a new directory named the same as the profile that you want to create:

# mkdir /etc/tuned/my-profile

In the new directory, create a file named tuned.conf. Add a [main] section and plug-in definitions in it, according to your requirements.

For example, see the configuration of the balanced profile:

[main]
summary=General non-specialized TuneD profile

[cpu]
governor=conservative
energy_perf_bias=normal

[audio]
timeout=10

[video]
radeon_powersave=dpm-balanced, auto

[scsi_host]
alpm=medium_power

To activate the profile, use:

# tuned-adm profile my-profile

Verify that the TuneD profile is active and the system settings are applied:

$ tuned-adm active
Current active profile: my-profile

$ tuned-adm verify
Verification succeeded, current system settings match the preset profile.
See tuned log file ('/var/log/tuned/tuned.log') for details.

Additional resources

- tuned.conf(5) man page

3.14. Modifying existing TuneD profiles

This procedure creates a modified child profile based on an existing TuneD profile.

Prerequisites

- The TuneD service is running. See Installing and enabling TuneD for details.

Procedure

In the /etc/tuned/ directory, create a new directory named the same as the profile that you want to create:

# mkdir /etc/tuned/modified-profile

In the new directory, create a file named tuned.conf, and set the [main] section as follows:

[main]
include=parent-profile

Replace parent-profile with the name of the profile you are modifying.

Include your profile modifications.

Example 3.18. Lowering swappiness in the throughput-performance profile

To use the settings from the throughput-performance profile and change the value of vm.swappiness to 5, instead of the default 10, use:

[main]
include=throughput-performance

[sysctl]
vm.swappiness=5
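Conceptually, the child profile starts from the settings of the included parent and replaces any option that it sets again. The following Python sketch models only that override rule, using configparser; it is a simplification for illustration, not TuneD's profile loader, which does more than this. The parent text is a hypothetical excerpt that contains only the default vm.swappiness=10 mentioned in the example.

```python
"""Simplified model of how a child TuneD profile overrides an included parent."""

import configparser


def load_profile(text):
    """Parse tuned.conf text into {section: {option: value}}."""
    parser = configparser.ConfigParser(interpolation=None)
    parser.optionxform = str  # keep option names such as vm.swappiness unchanged
    parser.read_string(text)
    return {name: dict(parser[name]) for name in parser.sections()}


def merge_profiles(parent, child):
    """Start from the parent; options set in the child replace the parent's values."""
    merged = {name: dict(options) for name, options in parent.items()}
    for name, options in child.items():
        merged.setdefault(name, {}).update(options)
    return merged


# Hypothetical excerpt of the parent profile: only the setting discussed above.
PARENT = """
[sysctl]
vm.swappiness=10
"""

CHILD = """
[main]
include=throughput-performance

[sysctl]
vm.swappiness=5
"""

if __name__ == "__main__":
    merged = merge_profiles(load_profile(PARENT), load_profile(CHILD))
    print(merged["sysctl"]["vm.swappiness"])  # 5
```

Options that the child does not mention keep the parent's values, which is why a child profile only needs to list the settings it changes.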

To activate the profile, use:

# tuned-adm profile modified-profile

Verify that the TuneD profile is active and the system settings are applied:

$ tuned-adm active
Current active profile: modified-profile

$ tuned-adm verify
Verification succeeded, current system settings match the preset profile.
See tuned log file ('/var/log/tuned/tuned.log') for details.

Additional resources

- tuned.conf(5) man page

3.15. Setting the disk scheduler using TuneD

This procedure creates and enables a TuneD profile that sets a given disk scheduler for selected block devices. The setting persists across system reboots.

In the following commands and configuration, replace:

- device with the name of the block device, for example sdf
- selected-scheduler with the disk scheduler that you want to set for the device, for example bfq

Prerequisites

- The TuneD service is installed and enabled. For details, see Installing and enabling TuneD.

Procedure

Optional: Select an existing TuneD profile on which your profile will be based. For a list of available profiles, see TuneD profiles distributed with RHEL.

To see which profile is currently active, use:

$ tuned-adm active

Create a new directory to hold your TuneD profile:

# mkdir /etc/tuned/my-profile

Find the system unique identifier of the selected block device:

$ udevadm info --query=property --name=/dev/device | grep -E '(WWN|SERIAL)'
ID_WWN=0x5002538d00000000_
ID_SERIAL=Generic-_SD_MMC_20120501030900000-0:0
ID_SERIAL_SHORT=20120501030900000

Note: The command in this example returns all values identified as a World Wide Name (WWN) or serial number associated with the specified block device. Although a WWN is preferred, it is not always available for a given device, and any value returned by the example command is acceptable to use as the device system unique ID.

Create the /etc/tuned/my-profile/tuned.conf configuration file. In the file, set the following options:

Optional: Include an existing profile:

[main]
include=existing-profile

Set the selected disk scheduler for the device that matches the WWN identifier:

[disk]
devices_udev_regex=IDNAME=device system unique id
elevator=selected-scheduler

Here:

- Replace IDNAME with the name of the identifier being used (for example, ID_WWN).
- Replace device system unique id with the value of the chosen identifier (for example, 0x5002538d00000000).

To match multiple devices in the devices_udev_regex option, enclose the identifiers in parentheses and separate them with vertical bars:

devices_udev_regex=(ID_WWN=0x5002538d00000000)|(ID_WWN=0x1234567800000000)
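The following Python sketch illustrates why an expression such as ID_WWN=0x5002538d00000000 selects a device. It assumes, as a simplification, that the regular expression is searched within the device's udev properties written as one KEY=VALUE line each, which is the form that udevadm prints; it is not TuneD's device matcher. The property text reuses the udevadm output shown earlier, and 0x1234567800000000 is the placeholder WWN from the example above.

```python
"""Sketch: matching a devices_udev_regex value against udev properties (assumed model)."""

import re


def parse_udev_properties(text):
    """Parse `udevadm info --query=property` output into a dict."""
    props = {}
    for line in text.splitlines():
        key, sep, value = line.strip().partition("=")
        if sep:
            props[key] = value
    return props


def udev_regex_matches(regex, props):
    """Search the regex in the KEY=VALUE lines (an assumption about the matching rule)."""
    lines = "\n".join(f"{key}={value}" for key, value in sorted(props.items()))
    return re.search(regex, lines, re.MULTILINE) is not None


UDEVADM_OUTPUT = """\
ID_WWN=0x5002538d00000000_
ID_SERIAL=Generic-_SD_MMC_20120501030900000-0:0
ID_SERIAL_SHORT=20120501030900000
"""

if __name__ == "__main__":
    props = parse_udev_properties(UDEVADM_OUTPUT)
    single = "ID_WWN=0x5002538d00000000"
    multiple = "(ID_WWN=0x5002538d00000000)|(ID_WWN=0x1234567800000000)"
    print(udev_regex_matches(single, props))    # True
    print(udev_regex_matches(multiple, props))  # True
    print(udev_regex_matches("ID_WWN=0x1234567800000000", props))  # False
```

In this model the search is unanchored, so the pattern also matches the sample value that carries a trailing underscore, and the vertical bar makes either alternative sufficient.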


Enable your profile:

# tuned-adm profile my-profile

Verification steps

Verify that the TuneD profile is active and applied:

$ tuned-adm active
Current active profile: my-profile

$ tuned-adm verify
Verification succeeded, current system settings match the preset profile.
See TuneD log file ('/var/log/tuned/tuned.log') for details.

Read the contents of the /sys/block/device/queue/scheduler file:

# cat /sys/block/device/queue/scheduler
[mq-deadline] kyber bfq none

In the file name, replace device with the block device name, for example sdc.

The active scheduler is listed in square brackets ([]).
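To check the result from a script rather than by eye, you can pick out the bracketed entry. This Python sketch assumes the single-line format shown above, in which the active scheduler is the only name enclosed in square brackets; the helper names and the example device name sdc are illustrative.

```python
"""Sketch: find the active I/O scheduler in /sys/block/<device>/queue/scheduler."""


def active_scheduler(text):
    """Return the name enclosed in [] (the active scheduler), or None if there is none."""
    for name in text.split():
        if name.startswith("[") and name.endswith("]"):
            return name[1:-1]
    return None


def read_active_scheduler(device):
    """Read the sysfs file for a block device such as 'sdc' (Linux only)."""
    with open(f"/sys/block/{device}/queue/scheduler") as f:
        return active_scheduler(f.read())


if __name__ == "__main__":
    print(active_scheduler("[mq-deadline] kyber bfq none"))  # mq-deadline
    print(active_scheduler("mq-deadline kyber [bfq] none"))  # bfq
```

Comparing read_active_scheduler("sdc") with the elevator value from your profile is a quick scripted form of this verification step.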

Additional resources

Chapter 4. Reviewing a system using tuna interface

Use the tuna tool to adjust scheduler tunables, tune thread priorities and IRQ handlers, and isolate CPU cores and sockets. Tuna reduces the complexity of performing these tuning tasks.

The tuna tool performs the following operations:

- Lists the CPUs on a system

- Lists the interrupt requests (IRQs) currently running on a system

- Changes policy and priority information about threads

- Displays the current policies and priorities of a system

4.1. Installing the tuna tool

The tuna tool is designed to be used on a running system. This allows application-specific measurement tools to see and analyze system performance immediately after changes have been made.

Procedure

Install the tuna tool:

# yum install tuna

Verification steps

Display the available tuna CLI options:

# tuna -h

Additional resources

- tuna(8) man page

4.2. Viewing the system status using tuna tool

This procedure describes how to view the system status using the tuna command-line interface (CLI) tool.

Prerequisites

- The tuna tool is installed. For more information, see Installing tuna tool.

Procedure

To view the current policies and priorities:

# tuna --show_threads
                      thread
pid   SCHED_  rtpri  affinity  cmd
1     OTHER   0      0,1       init
2     FIFO    99     0         migration/0
3     OTHER   0      0         ksoftirqd/0
4     FIFO    99     0         watchdog/0

To view a specific thread corresponding to a PID or matching a command name:

# tuna --threads=pid_or_cmd_list --show_threads

The pid_or_cmd_list argument is a list of comma-separated PIDs or command-name patterns.

- To tune CPUs using the tuna CLI, see Tuning CPUs using tuna tool.
- To tune the IRQs using the tuna tool, see Tuning IRQs using tuna tool.

To save the changed configuration:

# tuna --save=filename

This command saves only currently running kernel threads. Processes that are not running are not saved.

Additional resources

- tuna(8) man page

4.3. Tuning CPUs using tuna tool

The tuna tool commands can target individual CPUs.

Using the tuna tool, you can:

Isolate CPUs - All tasks running on the specified CPU move to the next available CPU. Isolating a CPU makes it unavailable by removing it from the affinity mask of all threads.

Include CPUs - Allows tasks to run on the specified CPU.

Restore CPUs - Restores the specified CPU to its previous configuration.

This procedure describes how to tune CPUs using the tuna CLI.

Prerequisites

- The tuna tool is installed. For more information, see Installing tuna tool.

Procedure

To specify the list of CPUs to be affected by a command:

# tuna --cpus=cpu_list [command]

The cpu_list argument is a list of comma-separated CPU numbers, for example, --cpus=0,2. CPU lists can also be specified as a range, for example, --cpus="1-3", which selects CPUs 1, 2, and 3.

To add a specific CPU to the current cpu_list, use, for example, --cpus=+0.

Replace [command] with, for example, --isolate.

To isolate a CPU:

# tuna --cpus=cpu_list --isolate

To include a CPU:

# tuna --cpus=cpu_list --include

On a system with four or more processors, to make all the ssh threads run on CPUs 0 and 1 and all the http threads run on CPUs 2 and 3, use:

# tuna --cpus=0,1 --threads=ssh\* \
  --move --cpus=2,3 --threads=http\* --move

This command performs the following operations sequentially:

- Selects CPUs 0 and 1.
- Selects all threads that begin with ssh.
- Moves the selected threads to the selected CPUs. Tuna sets the affinity mask of threads starting with ssh to the appropriate CPUs. The CPUs can be expressed numerically as 0 and 1, in hex mask as 0x3, or in binary as 11.
- Resets the CPU list to 2 and 3.
- Selects all threads that begin with http.
- Moves the selected threads to the specified CPUs. Tuna sets the affinity mask of threads starting with http to the specified CPUs. The CPUs can be expressed numerically as 2 and 3, in hex mask as 0xC, or in binary as 1100.

Verification steps

Display the current configuration and verify that the changes were performed as expected:

# tuna --threads=gnome-sc\* --show_threads \
  --cpus=0 --move --show_threads --cpus=1 \
  --move --show_threads --cpus=+0 --move --show_threads

                      thread       ctxt_switches
pid   SCHED_  rtpri  affinity  voluntary  nonvoluntary  cmd
3861  OTHER   0      0,1       33997      58            gnome-screensav

                      thread       ctxt_switches
pid   SCHED_  rtpri  affinity  voluntary  nonvoluntary  cmd
3861  OTHER   0      0         33997      58            gnome-screensav

                      thread       ctxt_switches
pid   SCHED_  rtpri  affinity  voluntary  nonvoluntary  cmd
3861  OTHER   0      1         33997      58            gnome-screensav

                      thread       ctxt_switches
pid   SCHED_  rtpri  affinity  voluntary  nonvoluntary  cmd
3861  OTHER   0      0,1       33997      58            gnome-screensav

This command performs the following operations sequentially:

- Selects all threads whose names begin with gnome-sc.
- Displays the selected threads to enable the user to verify their affinity mask and RT priority.
- Selects CPU 0.
- Moves the gnome-sc threads to the specified CPU, CPU 0.
- Shows the result of the move.
- Resets the CPU list to CPU 1.
- Moves the gnome-sc threads to the specified CPU, CPU 1.
- Displays the result of the move.
- Adds CPU 0 to the CPU list.
- Moves the gnome-sc threads to the specified CPUs, CPUs 0 and 1.
- Displays the result of the move.
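Because tuna processes these options from left to right, the command can be modeled as a small state machine that holds the current CPU list and the current thread selection. The following Python sketch is a simplified model for illustration, not tuna's implementation: it handles only --cpus (including the +N form), --threads (PIDs or shell-style name patterns), --move, and --show_threads, and the thread table is a hypothetical stand-in built from the sample output above.

```python
"""Simplified model of how tuna applies --cpus, --threads, --move and --show_threads in order."""

from fnmatch import fnmatch


def parse_cpus(value):
    """'0,2' or '1-3' -> set of CPU numbers."""
    cpus = set()
    for part in value.split(","):
        if "-" in part:
            first, last = map(int, part.split("-", 1))
            cpus.update(range(first, last + 1))
        else:
            cpus.add(int(part))
    return cpus


def simulate(args, threads):
    """threads maps PID -> {"cmd": name, "affinity": set of CPUs}.

    Returns one {PID: sorted affinity} snapshot per --show_threads option.
    """
    cpus, selected, snapshots = set(), [], []
    for arg in args:
        option, _, value = arg.partition("=")
        if option == "--cpus":
            if value.startswith("+"):
                cpus = cpus | parse_cpus(value[1:])  # "+N" adds to the current list
            else:
                cpus = parse_cpus(value)  # a plain list replaces the current list
        elif option == "--threads":
            patterns = value.split(",")
            selected = [pid for pid, info in threads.items()
                        if any(p == str(pid) or fnmatch(info["cmd"], p) for p in patterns)]
        elif option == "--move":
            for pid in selected:
                threads[pid]["affinity"] = set(cpus)
        elif option == "--show_threads":
            snapshots.append({pid: sorted(threads[pid]["affinity"]) for pid in selected})
    return snapshots


if __name__ == "__main__":
    threads = {3861: {"cmd": "gnome-screensav", "affinity": {0, 1}}}
    args = ["--threads=gnome-sc*", "--show_threads",
            "--cpus=0", "--move", "--show_threads",
            "--cpus=1", "--move", "--show_threads",
            "--cpus=+0", "--move", "--show_threads"]
    for snapshot in simulate(args, threads):
        # Prints {3861: [0, 1]}, then {3861: [0]}, {3861: [1]}, {3861: [0, 1]}
        print(snapshot)
```

The four snapshots correspond to the four affinity values (0,1, then 0, then 1, then 0,1) in the sample output.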


Additional resources

- /proc/cpuinfo file
- tuna(8) man page

4.4. Tuning IRQs using tuna tool

The /proc/interrupts file records the number of interrupts per IRQ, the type of interrupt, and the name of the device that is located at that IRQ.
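The layout of /proc/interrupts is a header row that names each CPU, followed by one row per interrupt source: the IRQ number or name, the per-CPU counters, and then the interrupt type and device name. The following Python sketch parses that layout into a dictionary. The sample text is hypothetical and shortened, rows such as ERR: that carry a single counter are handled, and the sketch keeps the type and device name together as one description string instead of guessing where one ends and the other begins.

```python
"""Sketch: parse the layout of /proc/interrupts into a dict."""


def parse_interrupts(text):
    """Return {irq: {"counts": [per-CPU counts], "desc": type and device}}."""
    lines = text.splitlines()
    num_cpus = len(lines[0].split())  # header row: CPU0 CPU1 ...
    table = {}
    for line in lines[1:]:
        irq, sep, rest = line.partition(":")
        if not sep:
            continue  # skip blank or unexpected lines
        fields = rest.split()
        # Leading numeric fields are the counters; some rows have fewer than num_cpus.
        i = 0
        while i < len(fields) and i < num_cpus and fields[i].isdigit():
            i += 1
        table[irq.strip()] = {
            "counts": [int(x) for x in fields[:i]],
            "desc": " ".join(fields[i:]),
        }
    return table


def read_interrupts(path="/proc/interrupts"):
    with open(path) as f:
        return parse_interrupts(f.read())


# Hypothetical, shortened sample for a two-CPU system.
SAMPLE = """\
           CPU0       CPU1
  0:         35          0   IO-APIC    2-edge      timer
  1:          9          0   IO-APIC    1-edge      i8042
NMI:          0          0   Non-maskable interrupts
ERR:          0
"""

if __name__ == "__main__":
    for irq, row in parse_interrupts(SAMPLE).items():
        print(irq, sum(row["counts"]), row["desc"])
```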

This procedure describes how to tune the IRQs using the tuna tool.

Prerequisites

- The tuna tool is installed. For more information, see Installing tuna tool.

Procedure

To view the current IRQs and their affinity:

# tuna --show_irqs
   # users            affinity
   0 timer                   0
   1 i8042                   0
   7 parport0                0

To specify the list of IRQs to be affected by a command:

# tuna --irqs=irq_list [command]

The irq_list argument is a list of comma-separated IRQ numbers or user-name patterns.

Replace [command] with, for example, --spread.

To move an interrupt to a specified CPU:

# tuna --irqs=128 --show_irqs
   # users            affinity
 128 iwlwifi           0,1,2,3

# tuna --irqs=128 --cpus=3 --move

Replace 128 with the irq_list argument and 3 with the cpu_list argument.

The cpu_list argument is a list of comma-separated CPU numbers, for example, --cpus=0,2. For more information, see Tuning CPUs using tuna tool.

Verification steps

Compare the state of the selected IRQs before and after moving any interrupt to a specified CPU:

# tuna --irqs=128 --show_irqs
   # users            affinity
 128 iwlwifi                 3
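The kernel exposes the CPU affinity of each IRQ under /proc/irq/<irq>/, so the move above can be approximated by writing a CPU list to that IRQ's smp_affinity_list file. The following Python sketch is not tuna's code; it assumes root privileges on a real system, the kernel may reject the write for some interrupts, and the proc_root parameter exists only so that the functions can be exercised against a temporary directory.

```python
"""Sketch: set and read an IRQ's CPU affinity through /proc/irq/<irq>/smp_affinity_list."""

import os
import tempfile


def affinity_path(irq, proc_root="/proc"):
    return os.path.join(proc_root, "irq", str(irq), "smp_affinity_list")


def set_irq_affinity(irq, cpus, proc_root="/proc"):
    """Write a CPU list such as '3' or '0,2'; on a real system this needs root."""
    with open(affinity_path(irq, proc_root), "w") as f:
        f.write(",".join(str(cpu) for cpu in sorted(cpus)) + "\n")


def get_irq_affinity(irq, proc_root="/proc"):
    """Return the affinity list as stored, for example '3' or '0-3'."""
    with open(affinity_path(irq, proc_root)) as f:
        return f.read().strip()


if __name__ == "__main__":
    # Dry run against a temporary directory instead of the real /proc.
    with tempfile.TemporaryDirectory() as root:
        os.makedirs(os.path.join(root, "irq", "128"))
        set_irq_affinity(128, {3}, proc_root=root)
        print(get_irq_affinity(128, proc_root=root))  # 3
```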

Additional resources

- /proc/interrupts file
- tuna(8) man page

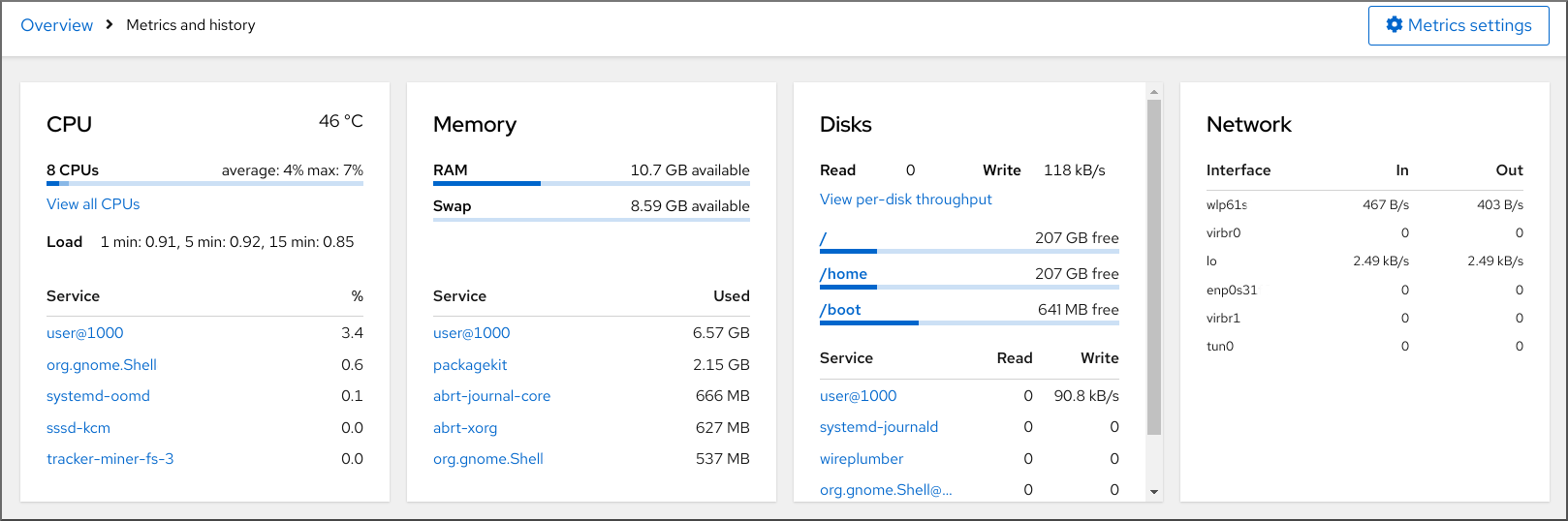

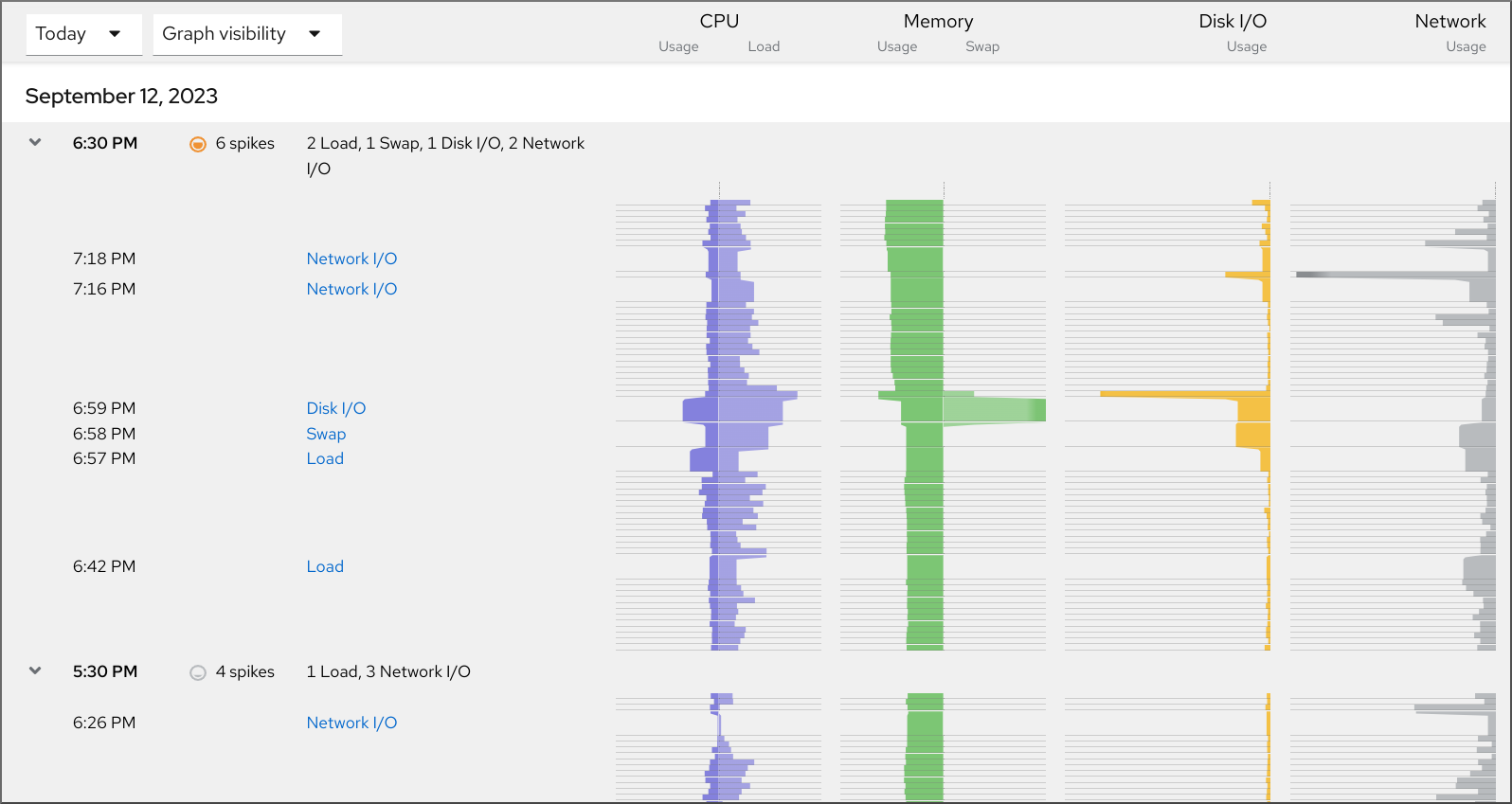

Chapter 5. Monitoring performance using RHEL System Roles

As a system administrator, you can use the metrics RHEL System Role with any Ansible Automation Platform control node to monitor the performance of a system.