Administration and Configuration Guide

For use with Red Hat JBoss Data Grid 7.0

Abstract

Chapter 1. Setting up Red Hat JBoss Data Grid

1.1. Prerequisites

1.2. Steps to Set up Red Hat JBoss Data Grid

Procedure 1.1. Set Up JBoss Data Grid

Set Up the Cache Manager

The first step in a JBoss Data Grid configuration is a cache manager. Cache managers can retrieve cache instances and create cache instances quickly and easily using previously specified configuration templates. For details about setting up a cache manager, refer to theCache Managersection in the JBoss Data Grid Getting Started Guide.Set Up JVM Memory Management

An important step in configuring your JBoss Data Grid is to set up memory management for your Java Virtual Machine (JVM). JBoss Data Grid offers features such as eviction and expiration to help manage the JVM memory.Set Up Eviction

Use eviction to specify the logic used to remove entries from the in-memory cache implementation based on how often they are used. JBoss Data Grid offers different eviction strategies for finer control over entry eviction in your data grid. Eviction strategies and instructions to configure them are available in Chapter 2, Set Up Eviction.Set Up Expiration

To set upper limits to an entry's time in the cache, attach expiration information to each entry. Use expiration to set up the maximum period an entry is allowed to remain in the cache and how long the retrieved entry can remain idle before being removed from the cache. For details, see Chapter 3, Set Up Expiration

Monitor Your Cache

JBoss Data Grid uses logging via JBoss Logging to help users monitor their caches.Set Up Logging

It is not mandatory to set up logging for your JBoss Data Grid, but it is highly recommended. JBoss Data Grid uses JBoss Logging, which allows the user to easily set up automated logging for operations in the data grid. Logs can subsequently be used to troubleshoot errors and identify the cause of an unexpected failure. For details, see Chapter 4, Set Up Logging

Set Up Cache Modes

Cache modes are used to specify whether a cache is local (simple, in-memory cache) or a clustered cache (replicates state changes over a small subset of nodes). Additionally, if a cache is clustered, either replication, distribution or invalidation mode must be applied to determine how the changes propagate across the subset of nodes. For details, see Part III, “Set Up Cache Modes”Set Up Locking for the Cache

When replication or distribution is in effect, copies of entries are accessible across multiple nodes. As a result, copies of the data can be accessed or modified concurrently by different threads. To maintain consistency for all copies across nodes, configure locking. For details, see Part VI, “Set Up Locking for the Cache” and Chapter 17, Set Up Isolation LevelsSet Up and Configure a Cache Store

JBoss Data Grid offers the passivation feature (or cache writing strategies if passivation is turned off) to temporarily store entries removed from memory in a persistent, external cache store. To set up passivation or a cache writing strategy, you must first set up a cache store.Set Up a Cache Store

The cache store serves as a connection to the persistent store. Cache stores are primarily used to fetch entries from the persistent store and to push changes back to the persistent store. For details, see Part VII, “Set Up and Configure a Cache Store”Set Up Passivation

Passivation stores entries evicted from memory in a cache store. This feature allows entries to remain available despite not being present in memory and prevents potentially expensive write operations to the persistent cache. For details, see Part VIII, “Set Up Passivation”Set Up a Cache Writing Strategy

If passivation is disabled, every attempt to write to the cache results in writing to the cache store. This is the default Write-Through cache writing strategy. Set the cache writing strategy to determine whether these cache store writes occur synchronously or asynchronously. For details, see Part IX, “Set Up Cache Writing”

Monitor Caches and Cache Managers

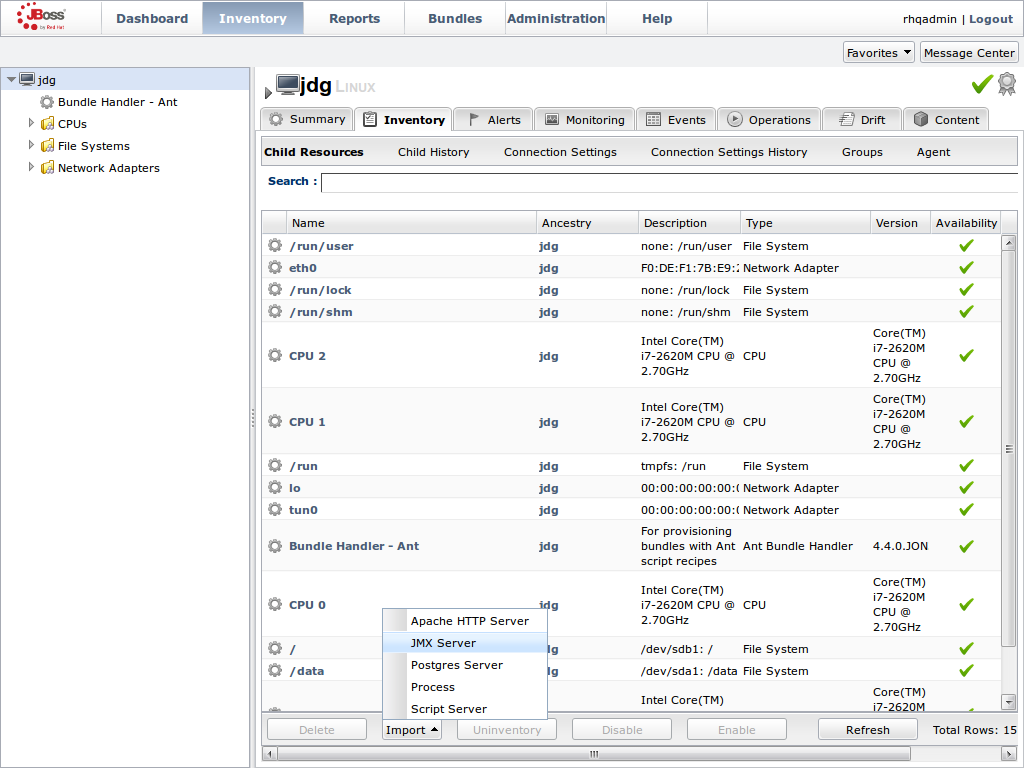

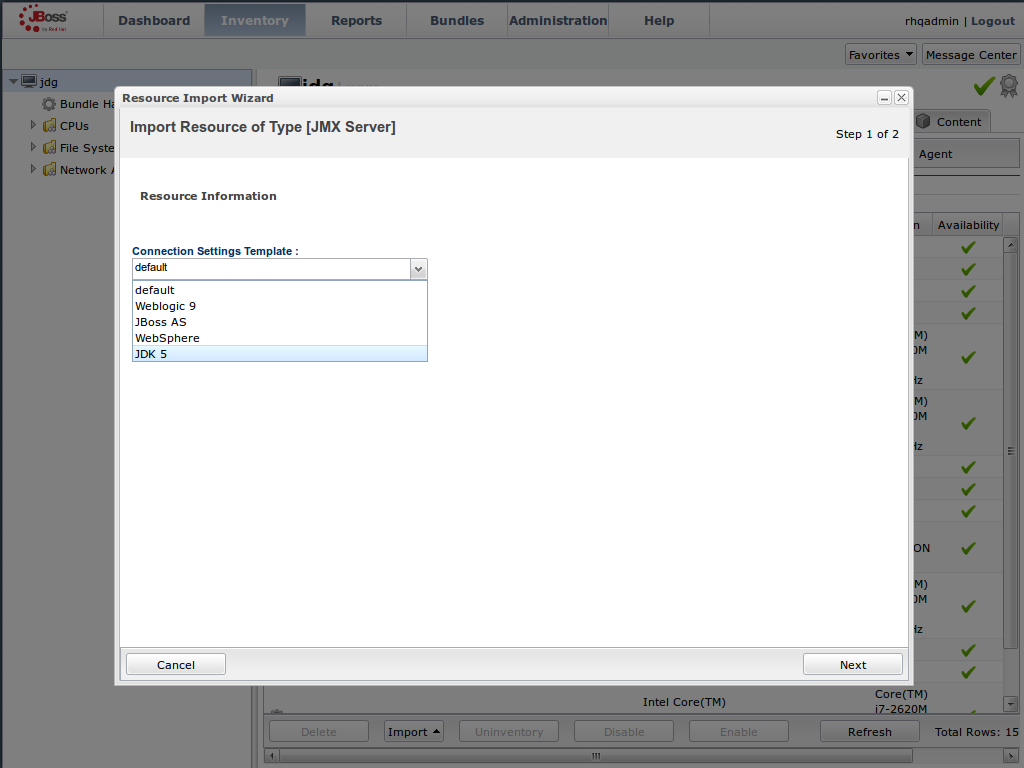

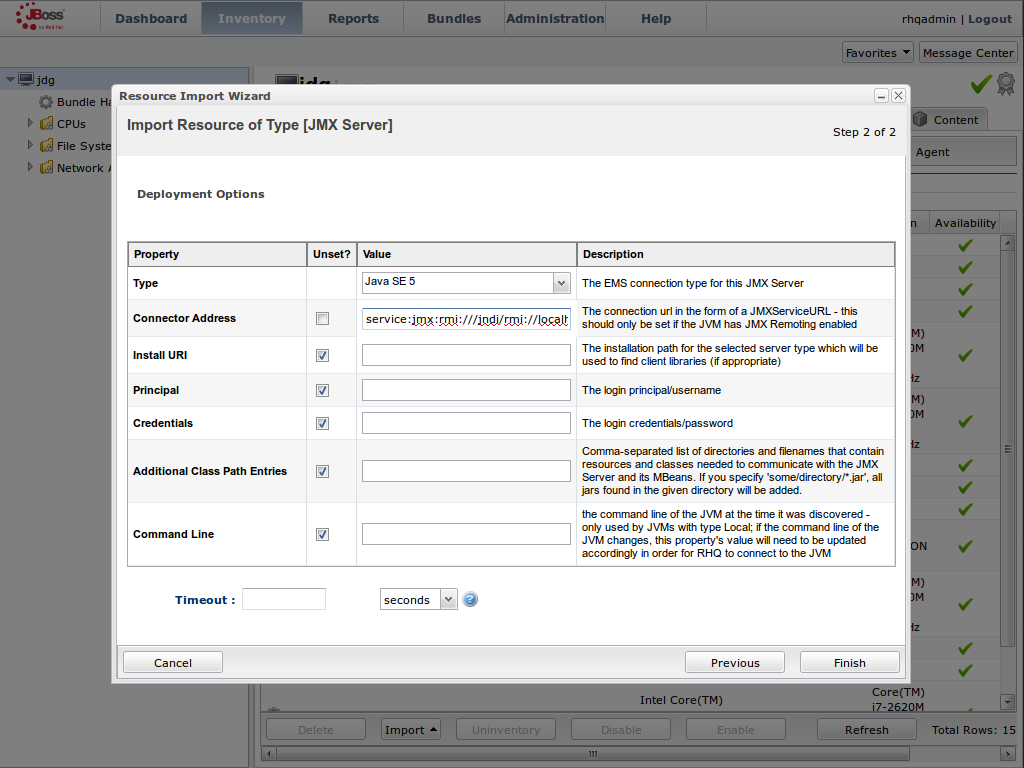

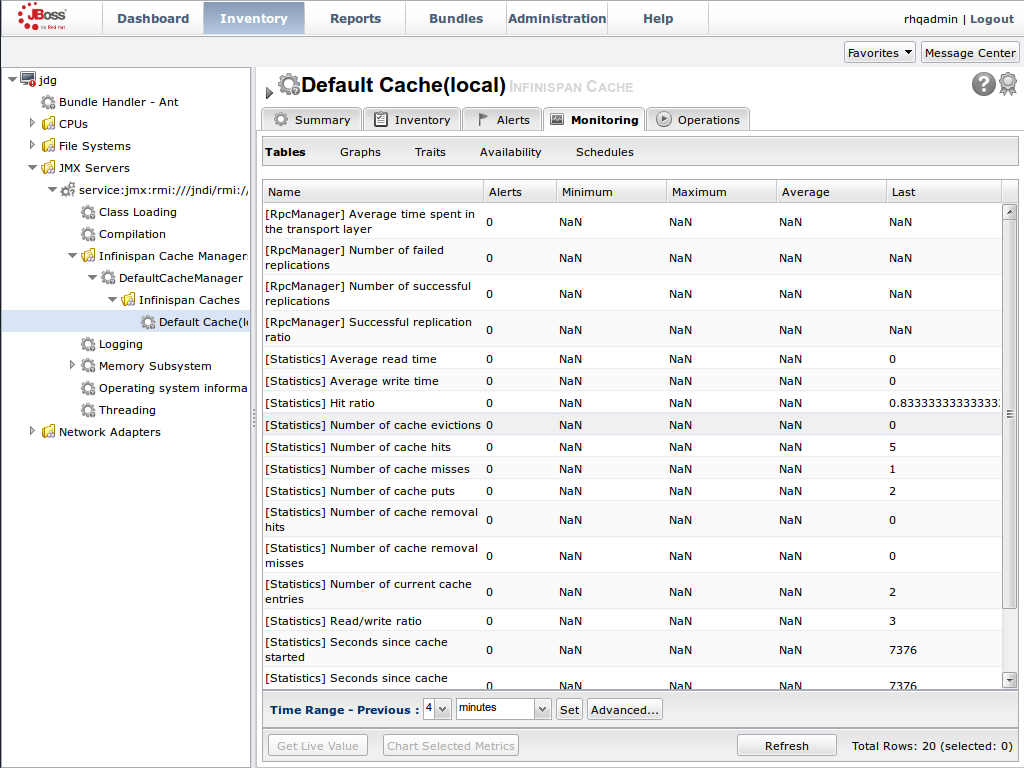

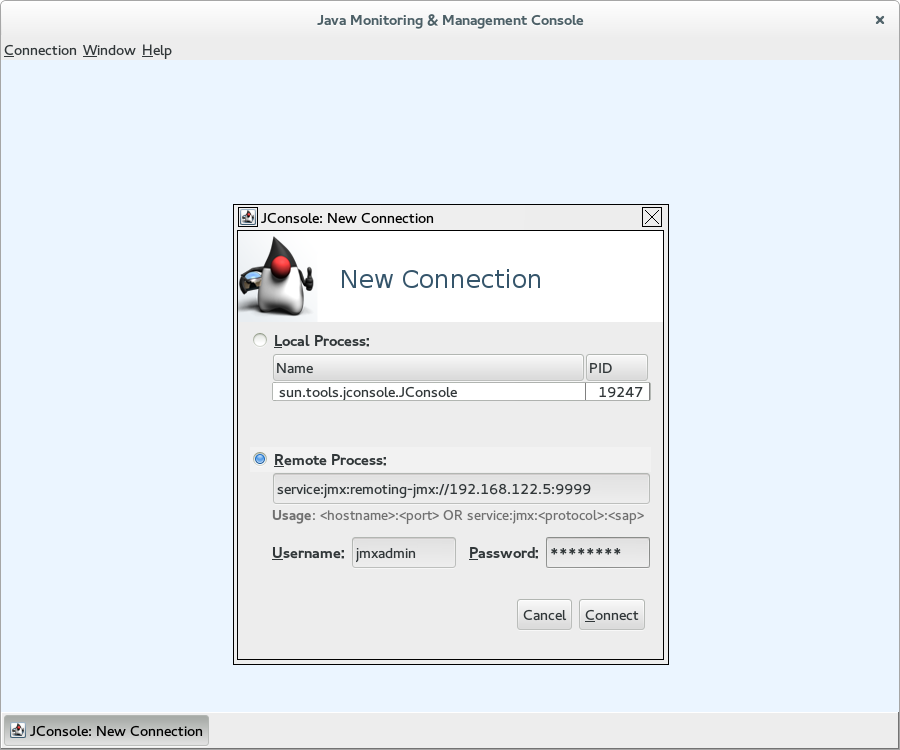

JBoss Data Grid includes three primary tools to monitor the cache and cache managers once the data grid is up and running.Set Up JMX

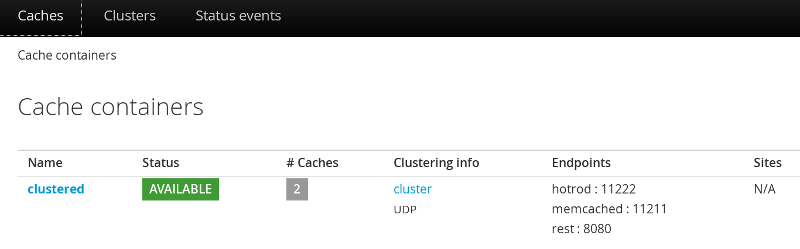

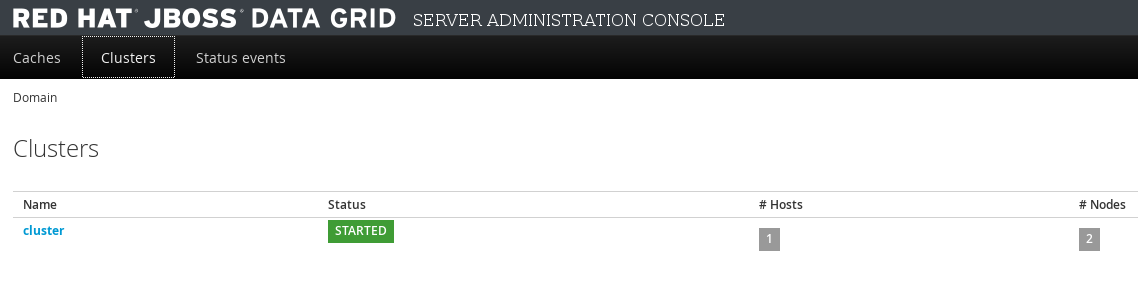

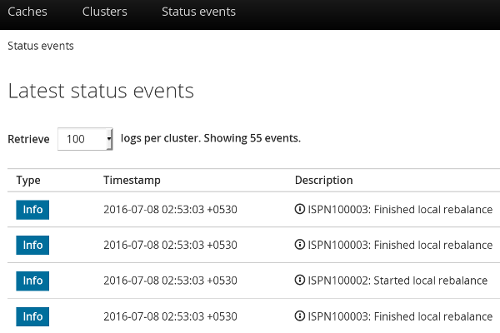

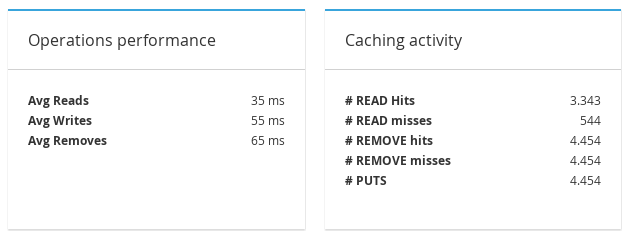

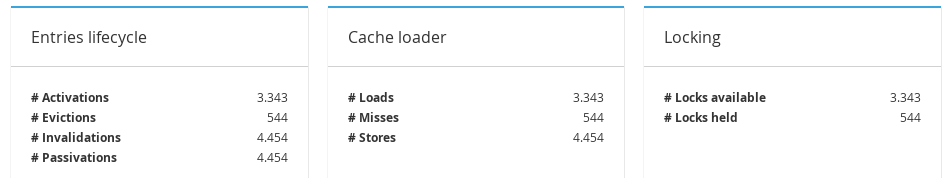

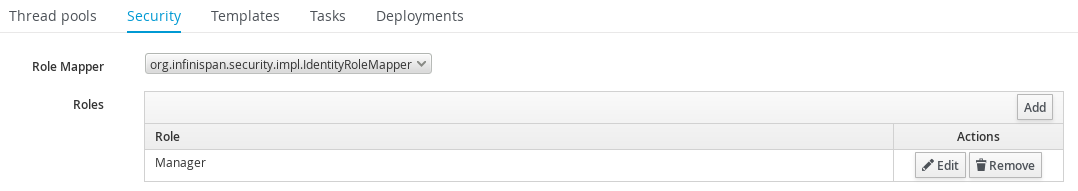

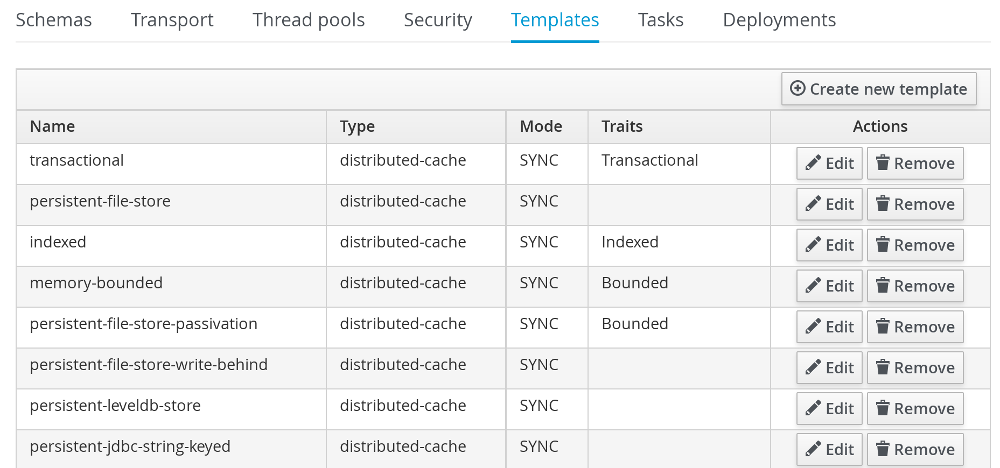

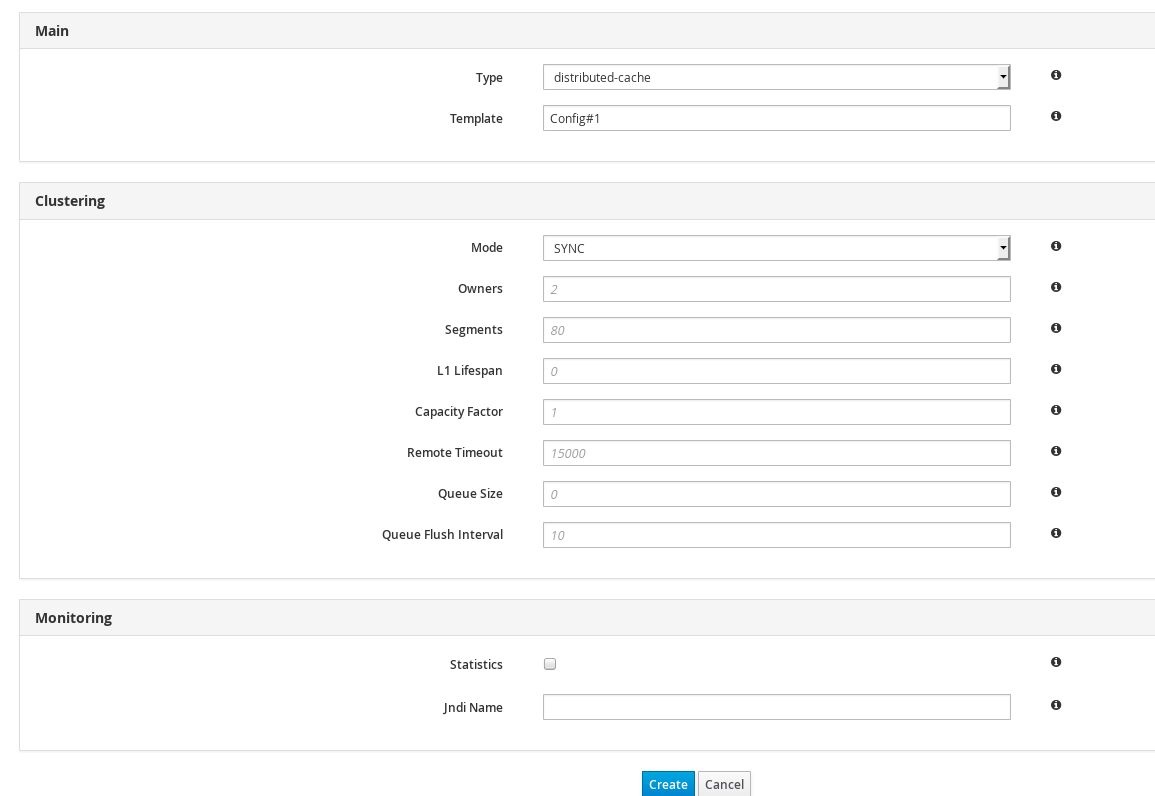

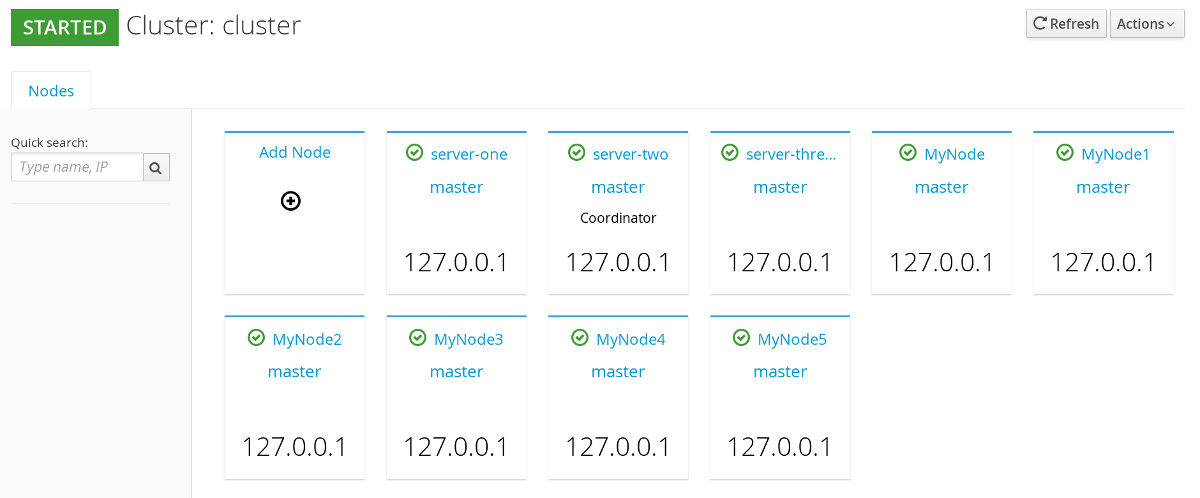

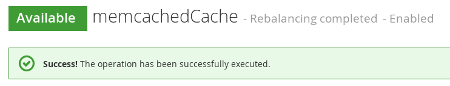

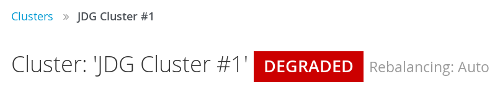

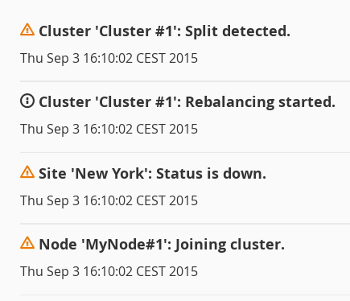

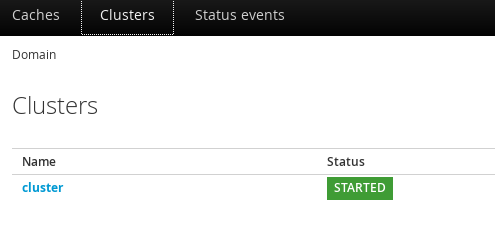

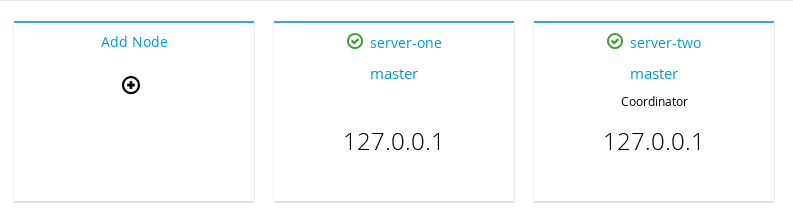

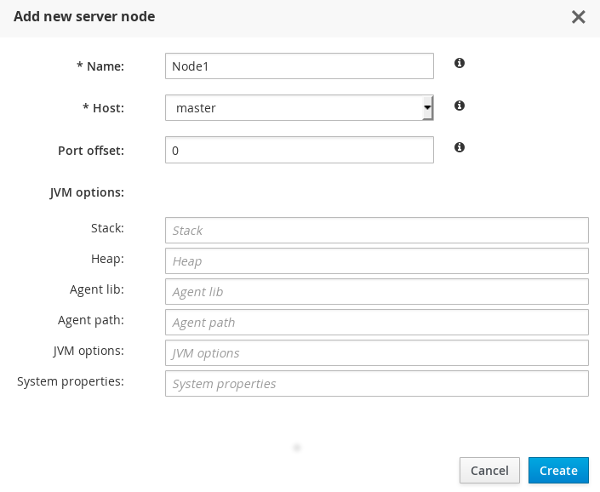

JMX is the standard statistics and management tool used for JBoss Data Grid. Depending on the use case, JMX can be configured at a cache level or a cache manager level or both. For details, see Chapter 22, Set Up Java Management Extensions (JMX)Access the Administration Console

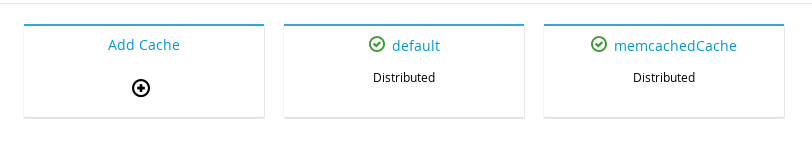

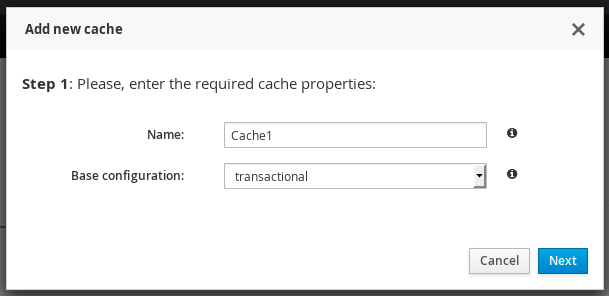

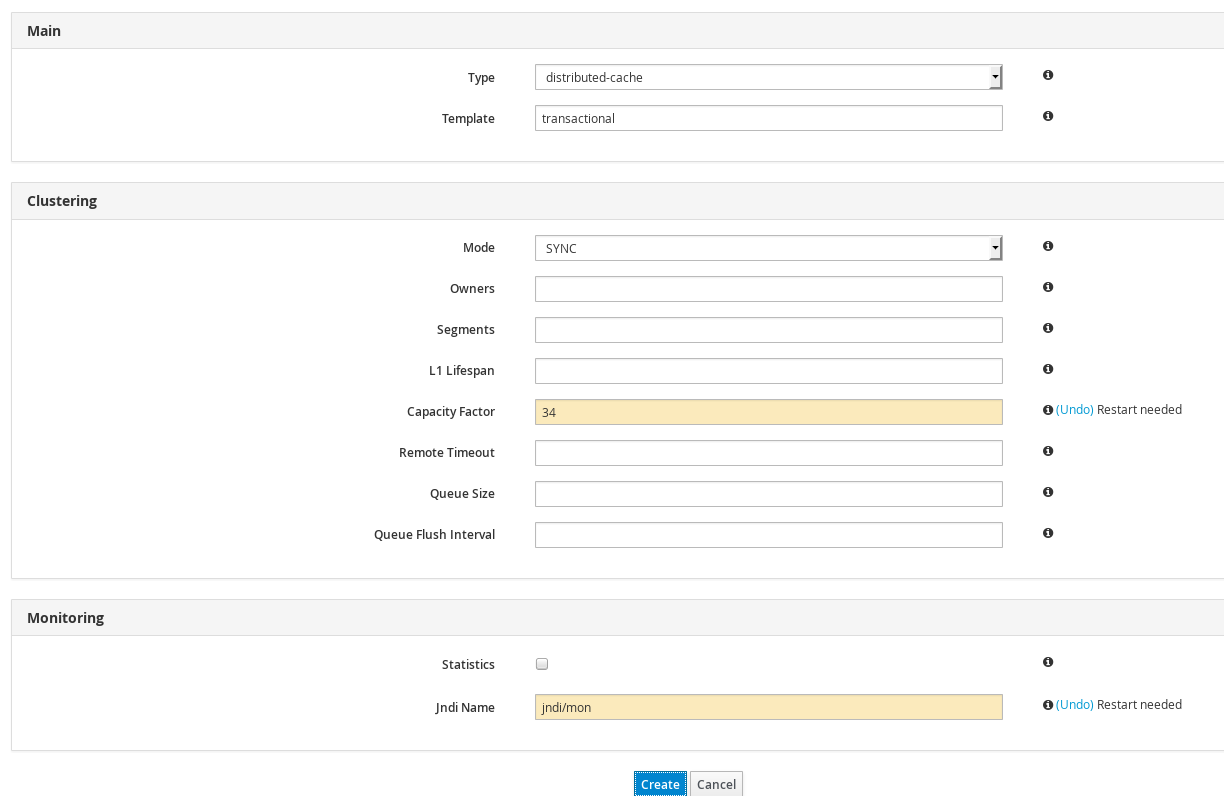

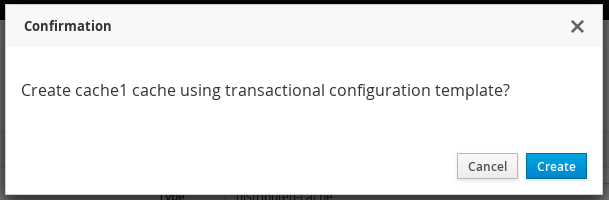

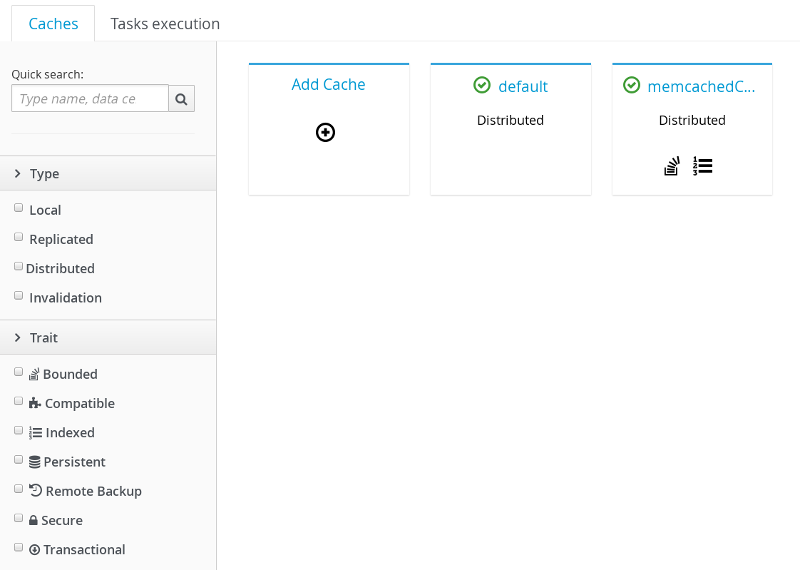

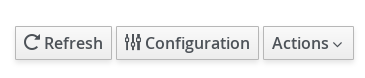

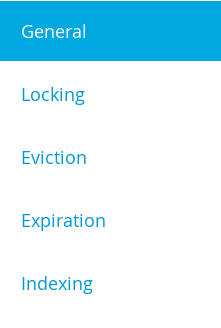

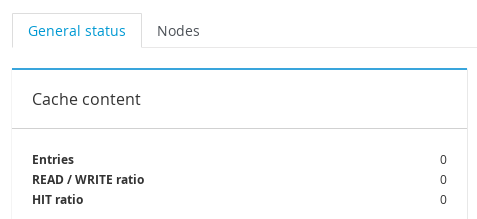

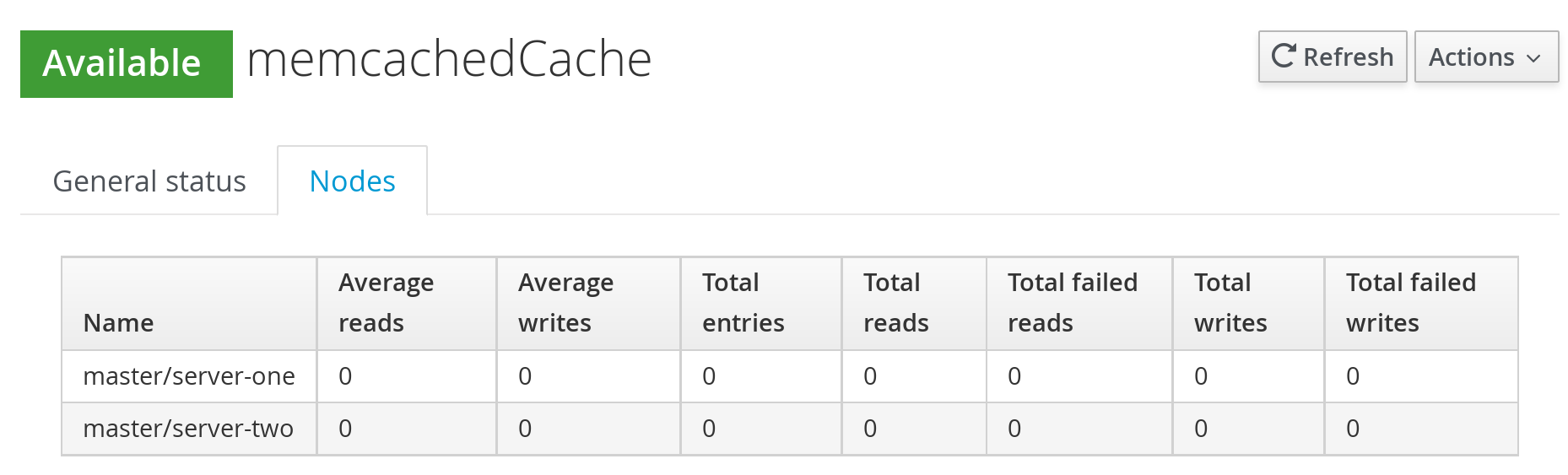

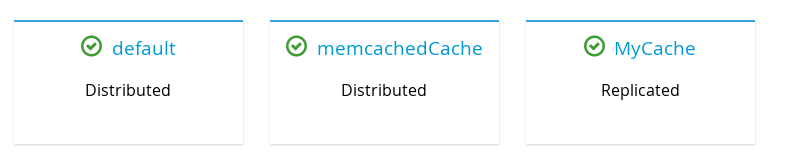

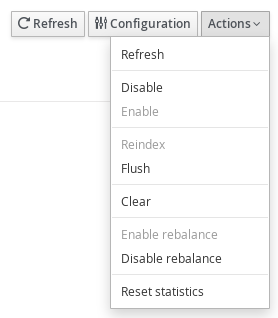

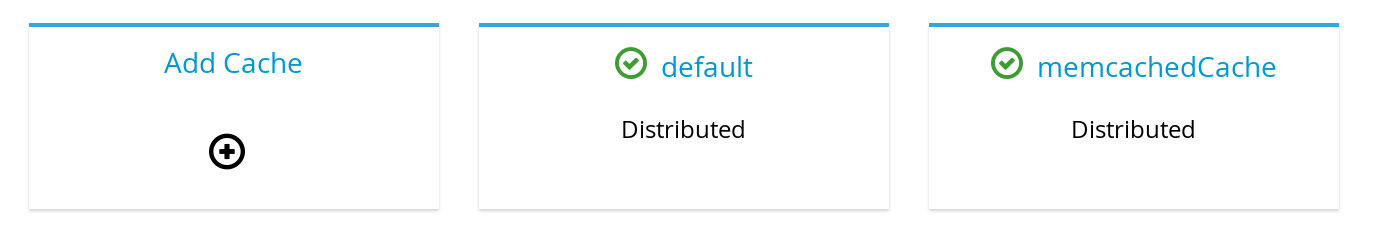

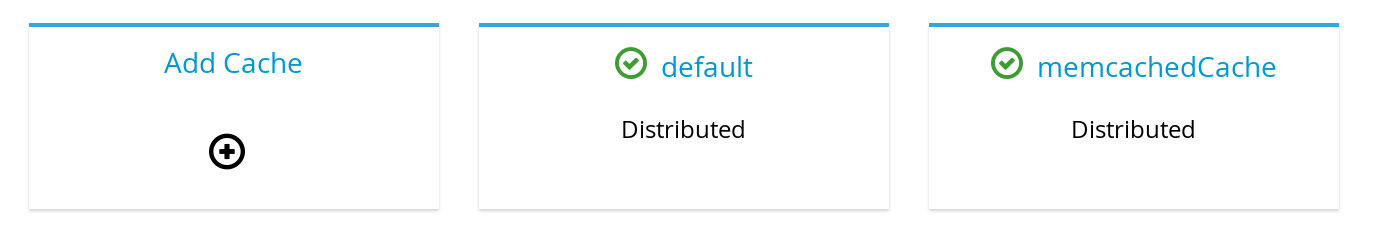

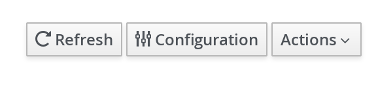

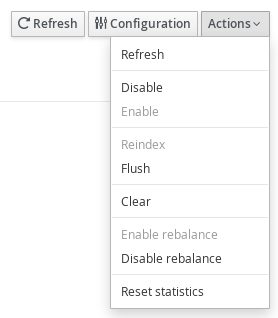

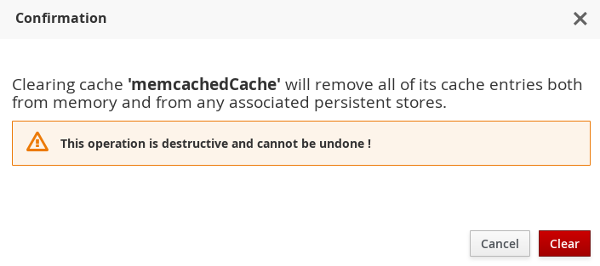

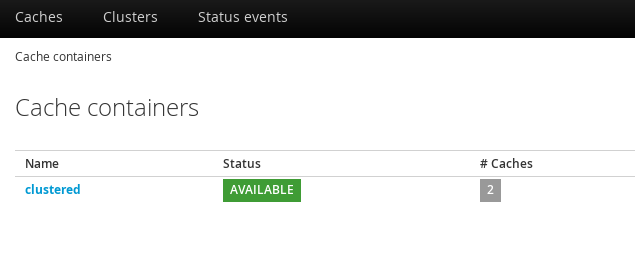

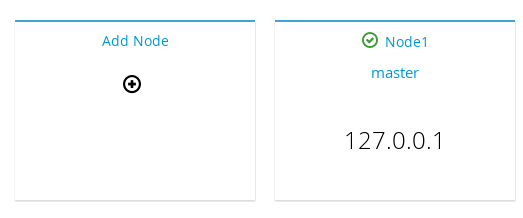

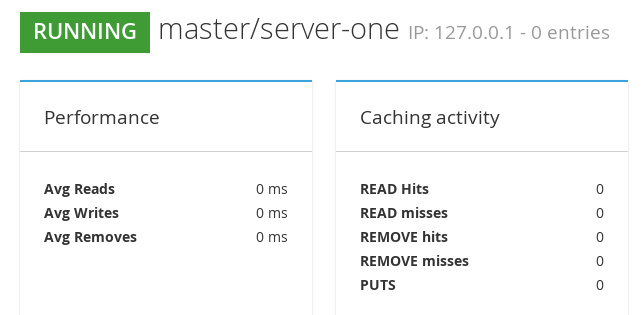

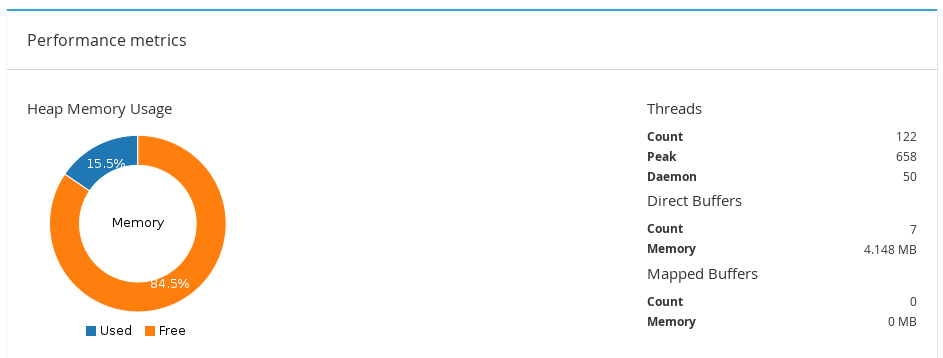

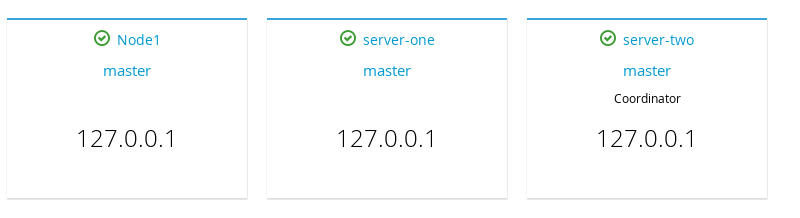

Red Hat JBoss Data Grid 7.0.0 introduces an Administration Console, allowing for web-based monitoring and management of caches and cache managers. For usage detals refer to Section 24.3.1, “Red Hat JBoss Data Grid Administration Console Getting Started”.Set Up Red Hat JBoss Operations Network (JON)

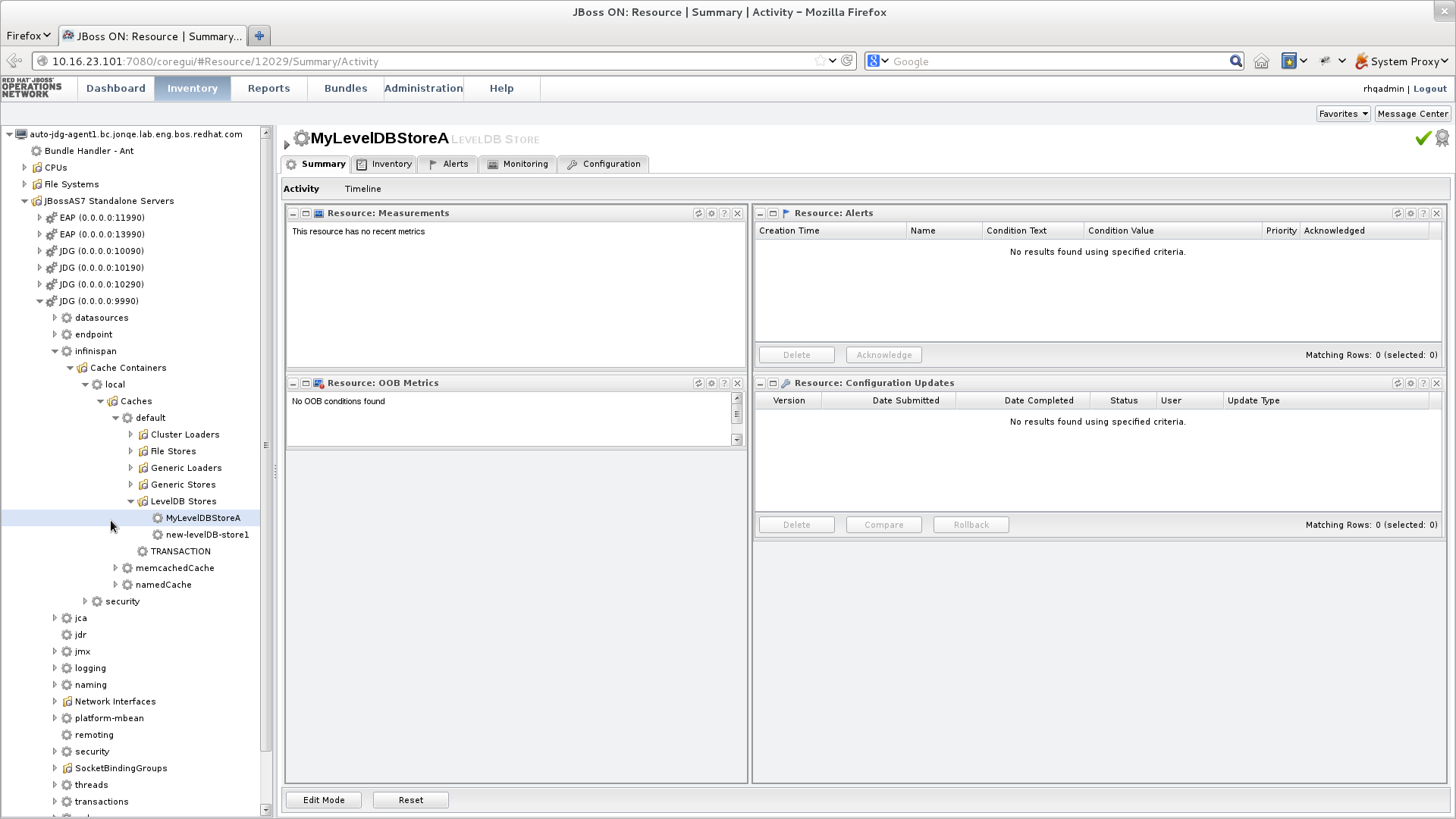

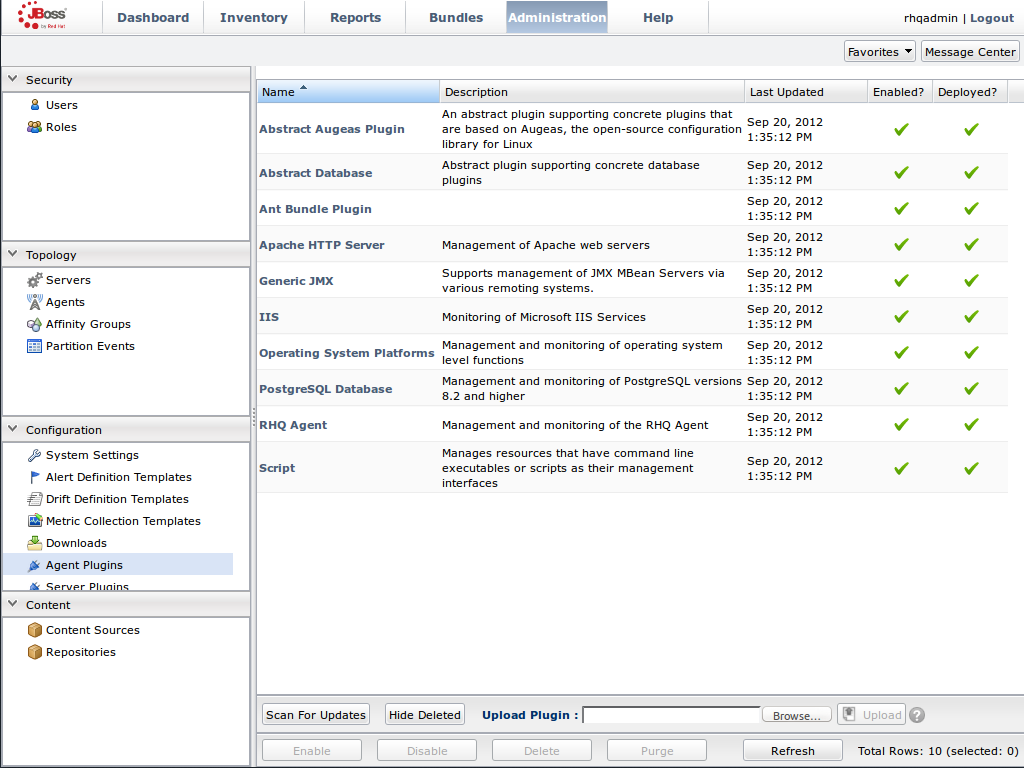

Red Hat JBoss Operations Network (JON) is the second monitoring solution available for JBoss Data Grid. JBoss Operations Network (JON) offers a graphical interface to monitor runtime parameters and statistics for caches and cache managers. For details, see Chapter 23, Set Up JBoss Operations Network (JON)Note

The JON plugin has been deprecated in JBoss Data Grid 7.0 and is expected to be removed in a subsequent version.

Introduce Topology Information

Optionally, introduce topology information to your data grid to specify where specific types of information or objects in your data grid are located. Server hinting is one of the ways to introduce topology information in JBoss Data Grid.Set Up Server Hinting

When set up, server hinting provides high availability by ensuring that the original and backup copies of data are not stored on the same physical server, rack or data center. This is optional in cases such as a replicated cache, where all data is backed up on all servers, racks and data centers. For details, see Chapter 34, High Availability Using Server Hinting

Part I. Set Up JVM Memory Management

Chapter 2. Set Up Eviction

2.1. About Eviction

2.2. Eviction Strategies

Table 2.1. Eviction Strategies

| Strategy Name | Operations | Details |

|---|---|---|

EvictionStrategy.NONE | No eviction occurs. | This is the default eviction strategy in Red Hat JBoss Data Grid. |

EvictionStrategy.LRU | Least Recently Used eviction strategy. This strategy evicts entries that have not been used for the longest period. This ensures that entries that are reused periodically remain in memory. | |

EvictionStrategy.UNORDERED | Unordered eviction strategy. This strategy evicts entries without any ordered algorithm and may therefore evict entries that are required later. However, this strategy saves resources because no algorithm related calculations are required before eviction. | This strategy is recommended for testing purposes and not for a real work implementation. |

EvictionStrategy.LIRS | Low Inter-Reference Recency Set eviction strategy. | LIRS is an eviction algorithm that suits a large variety of production use cases. |

2.2.1. LRU Eviction Algorithm Limitations

- Single use access entries are not replaced in time.

- Entries that are accessed first are unnecessarily replaced.

2.3. Using Eviction

eviction /> element is used to enable eviction without any strategy or maximum entries settings, the following default values are used:

- Strategy: If no eviction strategy is specified,

EvictionStrategy.NONEis assumed as a default. - size: If no value is specified, the

sizevalue is set to-1, which allows unlimited entries.

2.3.1. Initialize Eviction

size attributes value to a number greater than zero. Adjust the value set for size to discover the optimal value for your configuration. It is important to remember that if too large a value is set for size, Red Hat JBoss Data Grid runs out of memory.

Procedure 2.1. Initialize Eviction

Add the Eviction Tag

Add the <eviction> tag to your project's <cache> tags as follows:<eviction />

Set the Eviction Strategy

Set thestrategyvalue to set the eviction strategy employed. Possible values areLRU,UNORDEREDandLIRS(orNONEif no eviction is required). The following is an example of this step:<eviction strategy="LRU" />

Set the Maximum Size to use for Eviction

Set the maximum number of entries allowed in memory by defining thesizeelement. The default value is-1for unlimited entries. The following demonstrates this step:<eviction strategy="LRU" size="200" />

Eviction is configured for the target cache.

2.3.2. Eviction Configuration Examples

<eviction strategy="LRU" size="2000"/>

2.3.3. Utilizing Memory Based Eviction

Only keys and values that are stored as primitives, primitive wrappers (such as java.lang.Integer), java.lang.String instances, or an Array of these values may be used with memory based eviction.

store-as-binary must be enabled on the cache, or the data from the custom class may be serialized, storing it in a byte array.

Memory based eviction is only supported with the LRU eviction strategy.

This eviction method may be used by defining MEMORY as the eviction type, as seen in the following example:

<local-cache name="local">

<eviction size="10000000000" strategy="LRU" type="MEMORY"/>

</local-cache>2.3.4. Eviction and Passivation

Chapter 3. Set Up Expiration

3.1. About Expiration

- A lifespan value.

- A maximum idle time value.

lifespan or max-idle value.

lifespan or max-idle defined are mortal, as they will eventually be removed from the cache once one of these conditions are met.

- expiration removes entries based on the period they have been in memory. Expiration only removes entries when the life span period concludes or when an entry has been idle longer than the specified idle time.

- eviction removes entries based on how recently (and often) they are used. Eviction only removes entries when too many entries are present in the memory. If a cache store has been configured, evicted entries are persisted in the cache store.

3.2. Expiration Operations

lifespan) or maximum idle time (max-idle) defined for an individual key/value pair overrides the cache-wide default for the entry in question.

3.3. Eviction and Expiration Comparison

lifespan) and idle time (max-idle) values are replicated alongside each cache entry.

3.4. Cache Entry Expiration Behavior

- An entry is passivated/overflowed to disk and is discovered to have expired.

- The expiration maintenance thread discovers that an entry it has found is expired.

3.5. Configure Expiration

Procedure 3.1. Configure Expiration

Add the Expiration Tag

Add the <expiration> tag to your project's <cache> tags as follows:<expiration />

Set the Expiration Lifespan

Set thelifespanvalue to set the period of time (in milliseconds) an entry can remain in memory. The following is an example of this step:<expiration lifespan="1000" />

Set the Maximum Idle Time

Set the time that entries are allowed to remain idle (unused) after which they are removed (in milliseconds). The default value is-1for unlimited time.<expiration lifespan="1000" max-idle="1000" />

3.6. Troubleshooting Expiration

put() are passed a life span value as a parameter. This value defines the interval after which the entry must expire. In cases where eviction is not configured and the life span interval expires, it can appear as if Red Hat JBoss Data Grid has not removed the entry. For example, when viewing JMX statistics, such as the number of entries, you may see an out of date count, or the persistent store associated with JBoss Data Grid may still contain this entry. Behind the scenes, JBoss Data Grid has marked it as an expired entry, but has not removed it. Removal of such entries happens as follows:

- An entry is passivated/overflowed to disk and is discovered to have expired.

- The expiration maintenance thread discovers that an entry it has found is expired.

get() or containsKey() for the expired entry causes JBoss Data Grid to return a null value. The expired entry is later removed by the expiration thread.

Part II. Monitor Your Cache

Chapter 4. Set Up Logging

4.1. About Logging

4.2. Supported Application Logging Frameworks

- JBoss Logging, which is included with Red Hat JBoss Data Grid 7.

4.2.1. About JBoss Logging

4.2.2. JBoss Logging Features

- Provides an innovative, easy to use typed logger.

- Full support for internationalization and localization. Translators work with message bundles in properties files while developers can work with interfaces and annotations.

- Build-time tooling to generate typed loggers for production, and runtime generation of typed loggers for development.

4.3. Boot Logging

4.3.1. Configure Boot Logging

logging.properties file to configure the boot log. This file is a standard Java properties file and can be edited in a text editor. Each line in the file has the format of property=value.

logging.properties file is available in the $JDG_HOME/standalone/configuration folder.

4.3.2. Default Log File Locations

Table 4.1. Default Log File Locations

| Log File | Location | Description |

|---|---|---|

boot.log | $JDG_HOME/standalone/log/ |

The Server Boot Log. Contains log messages related to the start up of the server.

By default this file is prepended to the

server.log. This file may be created independently of the server.log by defining the org.jboss.boot.log property in logging.properties.

|

server.log | $JDG_HOME/standalone/log/ | The Server Log. Contains all log messages once the server has launched. |

4.4. Logging Attributes

4.4.1. About Log Levels

TRACEDEBUGINFOWARNERRORFATAL

WARN will only record messages of the levels WARN, ERROR and FATAL.

4.4.2. Supported Log Levels

Table 4.2. Supported Log Levels

| Log Level | Value | Description |

|---|---|---|

| FINEST | 300 | - |

| FINER | 400 | - |

| TRACE | 400 | Used for messages that provide detailed information about the running state of an application. TRACE level log messages are captured when the server runs with the TRACE level enabled. |

| DEBUG | 500 | Used for messages that indicate the progress of individual requests or activities of an application. DEBUG level log messages are captured when the server runs with the DEBUG level enabled. |

| FINE | 500 | - |

| CONFIG | 700 | - |

| INFO | 800 | Used for messages that indicate the overall progress of the application. Used for application start up, shut down and other major lifecycle events. |

| WARN | 900 | Used to indicate a situation that is not in error but is not considered ideal. Indicates circumstances that can lead to errors in the future. |

| WARNING | 900 | - |

| ERROR | 1000 | Used to indicate an error that has occurred that could prevent the current activity or request from completing but will not prevent the application from running. |

| SEVERE | 1000 | - |

| FATAL | 1100 | Used to indicate events that could cause critical service failure and application shutdown and possibly cause JBoss Data Grid to shut down. |

4.4.3. About Log Categories

WARNING log level results in log values of 900, 1000 and 1100 are captured.

4.4.4. About the Root Logger

server.log. This file is sometimes referred to as the server log.

4.4.5. About Log Handlers

ConsoleFilePeriodicSizeAsyncCustom

4.4.6. Log Handler Types

Table 4.3. Log Handler Types

| Log Handler Type | Description | Use Case |

|---|---|---|

| Console | Console log handlers write log messages to either the host operating system’s standard out (stdout) or standard error (stderr) stream. These messages are displayed when JBoss Data Grid is run from a command line prompt. | The Console log handler is preferred when JBoss Data Grid is administered using the command line. In such a case, the messages from a Console log handler are not saved unless the operating system is configured to capture the standard out or standard error stream. |

| File | File log handlers are the simplest log handlers. Their primary use is to write log messages to a specified file. | File log handlers are most useful if the requirement is to store all log entries according to the time in one place. |

| Periodic | Periodic file handlers write log messages to a named file until a specified period of time has elapsed. Once the time period has elapsed, the specified time stamp is appended to the file name. The handler then continues to write into the newly created log file with the original name. | The Periodic file handler can be used to accumulate log messages on a weekly, daily, hourly or other basis depending on the requirements of the environment. |

| Size | Size log handlers write log messages to a named file until the file reaches a specified size. When the file reaches a specified size, it is renamed with a numeric prefix and the handler continues to write into a newly created log file with the original name. Each size log handler must specify the maximum number of files to be kept in this fashion. | The Size handler is best suited to an environment where the log file size must be consistent. |

| Async | Async log handlers are wrapper log handlers that provide asynchronous behavior for one or more other log handlers. These are useful for log handlers that have high latency or other performance problems such as writing a log file to a network file system. | The Async log handlers are best suited to an environment where high latency is a problem or when writing to a network file system. |

| Custom | Custom log handlers enable to you to configure new types of log handlers that have been implemented. A custom handler must be implemented as a Java class that extends java.util.logging.Handler and be contained in a module. | Custom log handlers create customized log handler types and are recommended for advanced users. |

4.4.7. Selecting Log Handlers

- The

Consolelog handler is preferred when JBoss Data Grid is administered using the command line. In such a case, errors and log messages appear on the console window and are not saved unless separately configured to do so. - The

Filelog handler is used to direct log entries into a specified file. This simplicity is useful if the requirement is to store all log entries according to the time in one place. - The

Periodiclog handler is similar to theFilehandler but creates files according to the specified period. As an example, this handler can be used to accumulate log messages on a weekly, daily, hourly or other basis depending on the requirements of the environment. - The

Sizelog handler also writes log messages to a specified file, but only while the log file size is within a specified limit. Once the file size reaches the specified limit, log files are written to a new log file. This handler is best suited to an environment where the log file size must be consistent. - The

Asynclog handler is a wrapper that forces other log handlers to operate asynchronously. This is best suited to an environment where high latency is a problem or when writing to a network file system. - The

Customlog handler creates new, customized types of log handlers. This is an advanced log handler.

4.4.8. About Log Formatters

java.util.Formatter class.

4.5. Logging Sample Configurations

4.5.1. Logging Sample Configuration Location

standalone.xml or clustered.xml for standalone instances, or domain.xml for managed domain instances.

4.5.2. Sample XML Configuration for the Root Logger

Procedure 4.1. Configure the Root Logger

Set the

levelPropertyThelevelproperty sets the maximum level of log message that the root logger records.<subsystem xmlns="urn:jboss:domain:logging:3.0"> <root-logger> <level name="INFO"/>List

handlershandlersis a list of log handlers that are used by the root logger.<subsystem xmlns="urn:jboss:domain:logging:3.0"> <root-logger> <level name="INFO"/> <handlers> <handler name="CONSOLE"/> <handler name="FILE"/> </handlers> </root-logger> </subsystem>

4.5.3. Sample XML Configuration for a Log Category

Procedure 4.2. Configure a Log Category

<subsystem xmlns="urn:jboss:domain:logging:3.0">

<logger category="com.company.accounts.rec" use-parent-handlers="true">

<level name="WARN"/>

<handlers>

<handler name="accounts-rec"/>

</handlers>

</logger>

</subsystem>- Use the

categoryproperty to specify the log category from which log messages will be captured.Theuse-parent-handlersis set to"true"by default. When set to"true", this category will use the log handlers of the root logger in addition to any other assigned handlers. - Use the

levelproperty to set the maximum level of log message that the log category records. - The

handlerselement contains a list of log handlers.

4.5.4. Sample XML Configuration for a Console Log Handler

Procedure 4.3. Configure the Console Log Handler

<subsystem xmlns="urn:jboss:domain:logging:3.0">

<console-handler name="CONSOLE" autoflush="true">

<level name="INFO"/>

<encoding value="UTF-8"/>

<target value="System.out"/>

<filter-spec value="not(match("JBAS.*"))"/>

<formatter>

<pattern-formatter pattern="%K{level}%d{HH:mm:ss,SSS} %-5p [%c] (%t) %s%E%n"/>

</formatter>

</console-handler>

</subsystem>Add the Log Handler Identifier Information

Thenameproperty sets the unique identifier for this log handler.Whenautoflushis set to"true"the log messages will be sent to the handler's target immediately upon request.Set the

levelPropertyThelevelproperty sets the maximum level of log messages recorded.Set the

encodingOutputUseencodingto set the character encoding scheme to be used for the output.Define the

targetValueThetargetproperty defines the system output stream where the output of the log handler goes. This can beSystem.errfor the system error stream, orSystem.outfor the standard out stream.Define the

filter-specPropertyThefilter-specproperty is an expression value that defines a filter. The example provided defines a filter that does not match a pattern:not(match("JBAS.*")).Specify the

formatterUseformatterto list the log formatter used by the log handler.

4.5.5. Sample XML Configuration for a File Log Handler

Procedure 4.4. Configure the File Log Handler

<file-handler name="accounts-rec-trail" autoflush="true">

<level name="INFO"/>

<encoding value="UTF-8"/>

<file relative-to="jboss.server.log.dir" path="accounts-rec-trail.log"/>

<formatter>

<pattern-formatter pattern="%d{HH:mm:ss,SSS} %-5p [%c] (%t) %s%E%n"/>

</formatter>

<append value="true"/>

</file-handler>Add the File Log Handler Identifier Information

Thenameproperty sets the unique identifier for this log handler.Whenautoflushis set to"true"the log messages will be sent to the handler's target immediately upon request.Set the

levelPropertyThelevelproperty sets the maximum level of log message that the root logger records.Set the

encodingOutputUseencodingto set the character encoding scheme to be used for the output.Set the

fileObjectThefileobject represents the file where the output of this log handler is written to. It has two configuration properties:relative-toandpath.Therelative-toproperty is the directory where the log file is written to. JBoss Enterprise Application Platform 6 file path variables can be specified here. Thejboss.server.log.dirvariable points to thelog/directory of the server.Thepathproperty is the name of the file where the log messages will be written. It is a relative path name that is appended to the value of therelative-toproperty to determine the complete path.Specify the

formatterUseformatterto list the log formatter used by the log handler.Set the

appendPropertyWhen theappendproperty is set to"true", all messages written by this handler will be appended to an existing file. If set to"false"a new file will be created each time the application server launches. Changes toappendrequire a server reboot to take effect.

4.5.6. Sample XML Configuration for a Periodic Log Handler

Procedure 4.5. Configure the Periodic Log Handler

<periodic-rotating-file-handler name="FILE" autoflush="true">

<level name="INFO"/>

<encoding value="UTF-8"/>

<formatter>

<pattern-formatter pattern="%d{HH:mm:ss,SSS} %-5p [%c] (%t) %s%E%n"/>

</formatter>

<file relative-to="jboss.server.log.dir" path="server.log"/>

<suffix value=".yyyy-MM-dd"/>

<append value="true"/>

</periodic-rotating-file-handler>Add the Periodic Log Handler Identifier Information

Thenameproperty sets the unique identifier for this log handler.Whenautoflushis set to"true"the log messages will be sent to the handler's target immediately upon request.Set the

levelPropertyThelevelproperty sets the maximum level of log message that the root logger records.Set the

encodingOutputUseencodingto set the character encoding scheme to be used for the output.Specify the

formatterUseformatterto list the log formatter used by the log handler.Set the

fileObjectThefileobject represents the file where the output of this log handler is written to. It has two configuration properties:relative-toandpath.Therelative-toproperty is the directory where the log file is written to. JBoss Enterprise Application Platform 6 file path variables can be specified here. Thejboss.server.log.dirvariable points to thelog/directory of the server.Thepathproperty is the name of the file where the log messages will be written. It is a relative path name that is appended to the value of therelative-toproperty to determine the complete path.Set the

suffixValueThesuffixis appended to the filename of the rotated logs and is used to determine the frequency of rotation. The format of thesuffixis a dot (.) followed by a date string, which is parsable by thejava.text.SimpleDateFormatclass. The log is rotated on the basis of the smallest time unit defined by thesuffix. For example,yyyy-MM-ddwill result in daily log rotation. See http://docs.oracle.com/javase/6/docs/api/index.html?java/text/SimpleDateFormat.htmlSet the

appendPropertyWhen theappendproperty is set to"true", all messages written by this handler will be appended to an existing file. If set to"false"a new file will be created each time the application server launches. Changes toappendrequire a server reboot to take effect.

4.5.7. Sample XML Configuration for a Size Log Handler

Procedure 4.6. Configure the Size Log Handler

<size-rotating-file-handler name="accounts_debug" autoflush="false">

<level name="DEBUG"/>

<encoding value="UTF-8"/>

<file relative-to="jboss.server.log.dir" path="accounts-debug.log"/>

<rotate-size value="500k"/>

<max-backup-index value="5"/>

<formatter>

<pattern-formatter pattern="%d{HH:mm:ss,SSS} %-5p [%c] (%t) %s%E%n"/>

</formatter>

<append value="true"/>

</size-rotating-file-handler>Add the Size Log Handler Identifier Information

Thenameproperty sets the unique identifier for this log handler.Whenautoflushis set to"true"the log messages will be sent to the handler's target immediately upon request.Set the

levelPropertyThelevelproperty sets the maximum level of log message that the root logger records.Set the

encodingOutputUseencodingto set the character encoding scheme to be used for the output.Set the

fileObjectThefileobject represents the file where the output of this log handler is written to. It has two configuration properties:relative-toandpath.Therelative-toproperty is the directory where the log file is written to. JBoss Enterprise Application Platform 6 file path variables can be specified here. Thejboss.server.log.dirvariable points to thelog/directory of the server.Thepathproperty is the name of the file where the log messages will be written. It is a relative path name that is appended to the value of therelative-toproperty to determine the complete path.Specify the

rotate-sizeValueThe maximum size that the log file can reach before it is rotated. A single character appended to the number indicates the size units:bfor bytes,kfor kilobytes,mfor megabytes,gfor gigabytes. For example:50mfor 50 megabytes.Set the

max-backup-indexNumberThe maximum number of rotated logs that are kept. When this number is reached, the oldest log is reused.Specify the

formatterUseformatterto list the log formatter used by the log handler.Set the

appendPropertyWhen theappendproperty is set to"true", all messages written by this handler will be appended to an existing file. If set to"false"a new file will be created each time the application server launches. Changes toappendrequire a server reboot to take effect.

4.5.8. Sample XML Configuration for a Async Log Handler

Procedure 4.7. Configure the Async Log Handler

<async-handler name="Async_NFS_handlers">

<level name="INFO"/>

<queue-length value="512"/>

<overflow-action value="block"/>

<subhandlers>

<handler name="FILE"/>

<handler name="accounts-record"/>

</subhandlers>

</async-handler>- The

nameproperty sets the unique identifier for this log handler. - The

levelproperty sets the maximum level of log message that the root logger records. - The

queue-lengthdefines the maximum number of log messages that will be held by this handler while waiting for sub-handlers to respond. - The

overflow-actiondefines how this handler responds when its queue length is exceeded. This can be set toBLOCKorDISCARD.BLOCKmakes the logging application wait until there is available space in the queue. This is the same behavior as an non-async log handler.DISCARDallows the logging application to continue but the log message is deleted. - The

subhandlerslist is the list of log handlers to which this async handler passes its log messages.

Part III. Set Up Cache Modes

Chapter 5. Cache Modes

- Local mode is the only non-clustered cache mode offered in JBoss Data Grid. In local mode, JBoss Data Grid operates as a simple single-node in-memory data cache. Local mode is most effective when scalability and failover are not required and provides high performance in comparison with clustered modes.

- Clustered mode replicates state changes to a subset of nodes. The subset size should be sufficient for fault tolerance purposes, but not large enough to hinder scalability. Before attempting to use clustered mode, it is important to first configure JGroups for a clustered configuration. For details about configuring JGroups, see Section 30.2, “Configure JGroups (Library Mode)”

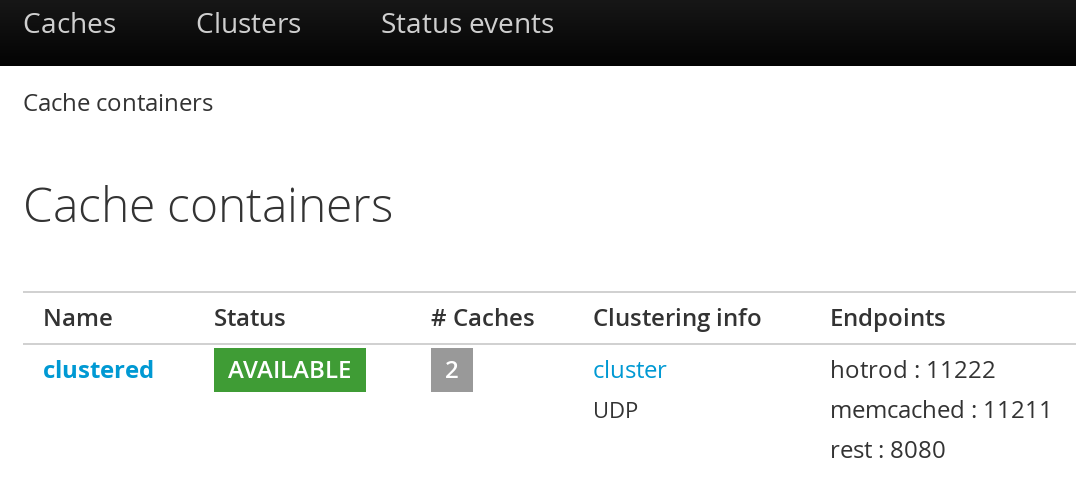

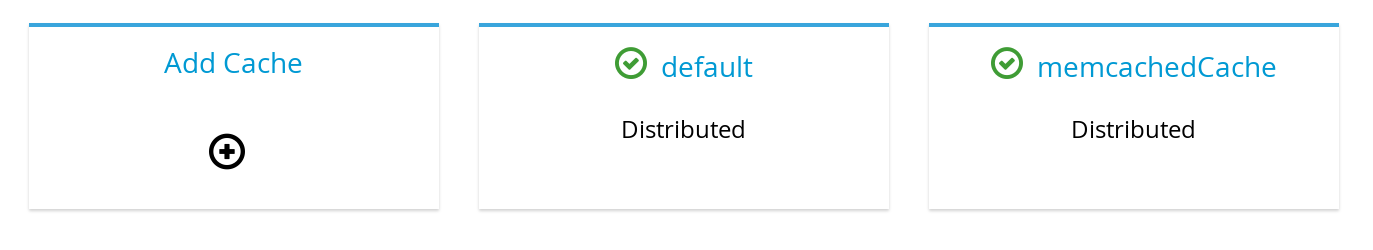

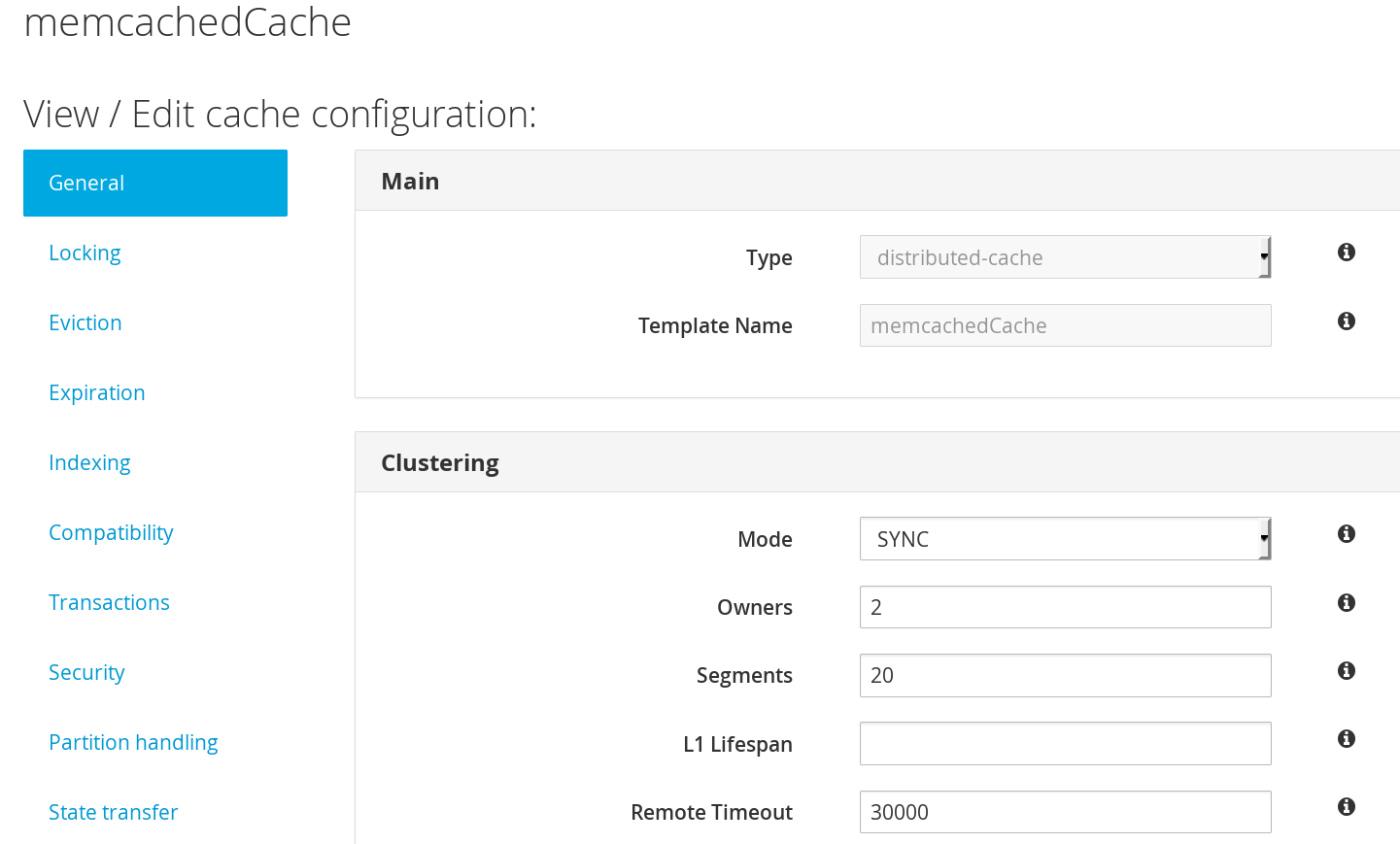

5.1. About Cache Containers

cache-container element acts as a parent of one or more (local or clustered) caches. To add clustered caches to the container, transport must be defined.

Procedure 5.1. How to Configure the Cache Container

<subsystem xmlns="urn:infinispan:server:core:8.3" default-cache-container="local"> <cache-container name="local" default-cache="default" statistics="true" start="EAGER"> <local-cache name="default" start="EAGER" statistics="false"> <!-- Additional configuration information here --> </local-cache> </cache-container> </subsystem>

Configure the Cache Container

Thecache-containerelement specifies information about the cache container using the following parameters:- The

nameparameter defines the name of the cache container. - The

default-cacheparameter defines the name of the default cache used with the cache container. - The

statisticsattribute is optional and istrueby default. Statistics are useful in monitoring JBoss Data Grid via JMX or JBoss Operations Network, however they adversely affect performance. Disable this attribute by setting it tofalseif it is not required. - The

startparameter indicates when the cache container starts, i.e. whether it will start lazily when requested or "eagerly" when the server starts up. Valid values for this parameter areEAGERandLAZY.

Configure Per-cache Statistics

Ifstatisticsare enabled at the container level, per-cache statistics can be selectively disabled for caches that do not require monitoring by setting thestatisticsattribute tofalse.

5.2. Local Mode

- Write-through and write-behind caching to persist data.

- Entry eviction to prevent the Java Virtual Machine (JVM) running out of memory.

- Support for entries that expire after a defined period.

ConcurrentMap, resulting in a simple migration process from a map to JBoss Data Grid.

5.2.1. Configure Local Mode

local-cache element.

Procedure 5.2. The local-cache Element

<cache-container name="local"

default-cache="default"

statistics="true">

<local-cache name="default"

start="EAGER"

batching="false"

statistics="true">

<!-- Additional configuration information here -->

</local-cache>local-cache element specifies information about the local cache used with the cache container using the following parameters:

- The

nameparameter specifies the name of the local cache to use. - The

startparameter indicates when the cache container starts, i.e. whether it will start lazily when requested or "eagerly" when the server starts up. Valid values for this parameter areEAGERandLAZY. - The

batchingparameter specifies whether batching is enabled for the local cache. - If

statisticsare enabled at the container level, per-cache statistics can be selectively disabled for caches that do not require monitoring by setting thestatisticsattribute tofalse.

DefaultCacheManager with the "no-argument" constructor. Both of these methods create a local default cache.

<transport/> it can only contain local caches. The container used in the example can only contain local caches as it does not have a <transport/>.

ConcurrentMap and is compatible with multiple cache systems.

5.3. Clustered Modes

- Replication Mode replicates any entry that is added across all cache instances in the cluster.

- Invalidation Mode does not share any data, but signals remote caches to initiate the removal of invalid entries.

- Distribution Mode stores each entry on a subset of nodes instead of on all nodes in the cluster.

5.3.1. Asynchronous and Synchronous Operations

5.3.2. About Asynchronous Communications

local-cache, distributed-cache and replicated-cache elements respectively. Each of these elements contains a mode property, the value of which can be set to SYNC for synchronous or ASYNC for asynchronous communications.

Example 5.1. Asynchronous Communications Example Configuration

<replicated-cache name="default"

start="EAGER"

mode="ASYNC"

batching="false"

statistics="true">

<!-- Additional configuration information here -->

</replicated-cache>Note

5.3.3. Cache Mode Troubleshooting

5.3.3.1. Invalid Data in ReadExternal

readExternal, it can be because when using Cache.putAsync(), starting serialization can cause your object to be modified, causing the datastream passed to readExternal to be corrupted. This can be resolved if access to the object is synchronized.

5.3.3.2. Cluster Physical Address Retrieval

The physical address can be retrieved using an instance method call. For example: AdvancedCache.getRpcManager().getTransport().getPhysicalAddresses().

Chapter 6. Set Up Distribution Mode

6.1. About Distribution Mode

6.2. Distribution Mode's Consistent Hash Algorithm

numSegments and cannot be changed without restarting the cluster. The mapping of keys to segments is also fixed — a key maps to the same segment, regardless of how the topology of the cluster changes.

6.3. Locating Entries in Distribution Mode

PUT operation can result in as many remote calls as specified by the owners parameter, while a GET operation executed on any node in the cluster results in a single remote call. In the background, the GET operation results in the same number of remote calls as a PUT operation (specifically the value of the owners parameter), but these occur in parallel and the returned entry is passed to the caller as soon as one returns.

6.4. Return Values in Distribution Mode

6.5. Configure Distribution Mode

Procedure 6.1. The distributed-cache Element

<cache-container name="clustered"

default-cache="default"

statistics="true">

<!-- Additional configuration information here -->

<distributed-cache name="default"

mode="SYNC"

segments="20"

start="EAGER"

owners="2"

statistics="true">

<!-- Additional configuration information here -->

</distributed-cache>

</cache-container>distributed-cache element configures settings for the distributed cache using the following parameters:

- The

nameparameter provides a unique identifier for the cache. - The

modeparameter sets the clustered cache mode. Valid values areSYNC(synchronous) andASYNC(asynchronous). - The (optional)

segmentsparameter specifies the number of hash space segments per cluster. The recommended value for this parameter is ten multiplied by the cluster size and the default value is20. - The

startparameter specifies whether the cache starts when the server starts up or when it is requested or deployed. - The

ownersparameter indicates the number of nodes that will contain the hash segment. - If

statisticsare enabled at the container level, per-cache statistics can be selectively disabled for caches that do not require monitoring by setting thestatisticsattribute tofalse.

Important

6.6. Synchronous and Asynchronous Distribution

Example 6.1. Communication Mode example

A, B and C, and a key K that maps nodes A and B. Perform an operation on node C that requires a return value, for example Cache.remove(K). To execute successfully, the operation must first synchronously forward the call to both node A and B, and then wait for a result returned from either node A or B. If asynchronous communication was used, the usefulness of the returned values cannot be guaranteed, despite the operation behaving as expected.

Chapter 7. Set Up Replication Mode

7.1. About Replication Mode

7.2. Optimized Replication Mode Usage

7.3. Configure Replication Mode

Procedure 7.1. The replicated-cache Element

<cache-container name="clustered"

default-cache="default"

statistics="true">

<!-- Additional configuration information here -->

<replicated-cache name="default"

mode="SYNC"

start="EAGER"

statistics="true">

<!-- Additional configuration information here -->

</replicated-cache>

</cache-container>Important

replicated-cache element configures settings for the distributed cache using the following parameters:

- The

nameparameter provides a unique identifier for the cache. - The

modeparameter sets the clustered cache mode. Valid values areSYNC(synchronous) andASYNC(asynchronous). - The

startparameter specifies whether the cache starts when the server starts up or when it is requested or deployed. - If

statisticsare enabled at the container level, per-cache statistics can be selectively disabled for caches that do not require monitoring by setting thestatisticsattribute tofalse.

cache-container and locking, see the appropriate chapter.

7.4. Synchronous and Asynchronous Replication

- Synchronous replication blocks a thread or caller (for example on a

put()operation) until the modifications are replicated across all nodes in the cluster. By waiting for acknowledgments, synchronous replication ensures that all replications are successfully applied before the operation is concluded. - Asynchronous replication operates significantly faster than synchronous replication because it does not need to wait for responses from nodes. Asynchronous replication performs the replication in the background and the call returns immediately. Errors that occur during asynchronous replication are written to a log. As a result, a transaction can be successfully completed despite the fact that replication of the transaction may not have succeeded on all the cache instances in the cluster.

7.4.1. Troubleshooting Asynchronous Replication Behavior

- Disable state transfer and use a

ClusteredCacheLoaderto lazily look up remote state as and when needed. - Enable state transfer and

REPL_SYNC. Use the Asynchronous API (for example, thecache.putAsync(k, v)) to activate 'fire-and-forget' capabilities. - Enable state transfer and

REPL_ASYNC. All RPCs end up becoming synchronous, but client threads will not be held up if a replication queue is enabled (which is recommended for asynchronous mode).

7.5. The Replication Queue

- Previously set intervals.

- The queue size exceeding the number of elements.

- A combination of previously set intervals and the queue size exceeding the number of elements.

7.5.1. Replication Queue Usage

- Disable asynchronous marshalling.

- Set the

max-threadscount value to1for theexecutorattribute of thetransportelement. Theexecutoris only available in Library Mode, and is therefore defined in its configuration file as follows:<transport executor="infinispan-transport"/>

queue-flush-interval, value is in milliseconds) and queue size (queue-size) as follows:

Example 7.1. Replication Queue in Asynchronous Mode

<replicated-cache name="asyncCache"

start="EAGER"

mode="ASYNC"

batching="false"

indexing="NONE"

statistics="true"

queue-size="1000"

queue-flush-interval="500">

<!-- Additional configuration information here -->

</replicated-cache>7.6. About Replication Guarantees

7.7. Replication Traffic on Internal Networks

IP addresses than for traffic over public IP addresses, or do not charge at all for internal network traffic (for example, GoGrid). To take advantage of lower rates, you can configure Red Hat JBoss Data Grid to transfer replication traffic using the internal network. With such a configuration, it is difficult to know the internal IP address you are assigned. JBoss Data Grid uses JGroups interfaces to solve this problem.

Chapter 8. Set Up Invalidation Mode

8.1. About Invalidation Mode

8.2. Configure Invalidation Mode

Procedure 8.1. The invalidation-cache Element

<cache-container name="local"

default-cache="default"

statistics="true">

<invalidation-cache name="default"

mode="ASYNC"

start="EAGER"

statistics="true">

<!-- Additional configuration information here -->

</invalidation-cache>

</cache-container>invalidation-cache element configures settings for the distributed cache using the following parameters:

- The

nameparameter provides a unique identifier for the cache. - The

modeparameter sets the clustered cache mode. Valid values areSYNC(synchronous) andASYNC(asynchronous). - The

startparameter specifies whether the cache starts when the server starts up or when it is requested or deployed. - If

statisticsare enabled at the container level, per-cache statistics can be selectively disabled for caches that do not require monitoring by setting thestatisticsattribute tofalse.

Important

cache-container, locking, and transaction elements, see the appropriate chapter.

8.3. Synchronous/Asynchronous Invalidation

- Synchronous invalidation blocks the thread until all caches in the cluster have received invalidation messages and evicted the obsolete data.

- Asynchronous invalidation operates in a fire-and-forget mode that allows invalidation messages to be broadcast without blocking a thread to wait for responses.

8.4. The L1 Cache and Invalidation

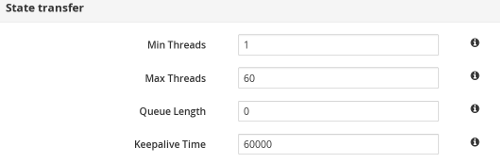

Chapter 9. State Transfer

owners copies of each key in the cache (as determined through consistent hashing). In invalidation mode the initial state transfer is similar to replication mode, the only difference being that the nodes are not guaranteed to have the same state. When a node leaves, a replicated mode or invalidation mode cache does not perform any state transfer. A distributed cache needs to make additional copies of the keys that were stored on the leaving nodes, again to keep owners copies of each key.

ClusterLoader must be configured, otherwise a node will become the owner or backup owner of a key without the data being loaded into its cache. In addition, if State Transfer is disabled in distributed mode then a key will occasionally have less than owners owners.

9.1. Non-Blocking State Transfer

- Minimize the interval(s) where the entire cluster cannot respond to requests because of a state transfer in progress.

- Minimize the interval(s) where an existing member stops responding to requests because of a state transfer in progress.

- Allow state transfer to occur with a drop in the performance of the cluster. However, the drop in the performance during the state transfer does not throw any exception, and allows processes to continue.

- Allows a

GEToperation to successfully retrieve a key from another node without returning a null value during a progressive state transfer.

- The blocking protocol queues the transaction delivery during the state transfer.

- State transfer control messages (such as CacheTopologyControlCommand) are sent according to the total order information.

9.2. Suppress State Transfer via JMX

getCache() call will timeout after stateTransfer.timeout expires unless rebalancing is re-enabled or stateTransfer.awaitInitialTransferis set to false.

9.3. The rebalancingEnabled Attribute

rebalancingEnabled JMX attribute, and requires no specific configuration.

rebalancingEnabled attribute can be modified for the entire cluster from the LocalTopologyManager JMX Mbean on any node. This attribute is true by default, and is configurable programmatically.

<await-initial-transfer="false"/>

Part IV. Enabling APIs

Chapter 10. Enabling APIs Declaratively

10.1. Batching API

Batching may be enabled on a per-cache basis by defining a transaction mode of BATCH. The following example demonstrates this:

<local-cache> <transaction mode="BATCH"/> </local-cache>

10.2. Grouping API

The grouping API may be enabled on a per-cache basis by adding the groups element as seen in the following example:

<distributed-cache>

<groups enabled="true"/>

</distributed-cache>

Assuming a custom Grouper exists it may be defined by passing in the classname as seen below:

<distributed-cache>

<groups enabled="true">

<grouper class="com.acme.KXGrouper" />

</groups>

</distributed-cache>

10.3. Externalizable API

Externalizer is a class that can:

- Marshall a given object type to a byte array.

- Unmarshall the contents of a byte array into an instance of the object type.

10.3.1. Register the Advanced Externalizer (Declaratively)

Procedure 10.1. Register the Advanced Externalizer

<infinispan>

<cache-container>

<serialization>

<advanced-externalizer class="Book$BookExternalizer" />

</serialization>

</cache-container>

</infinispan>- Add the

serializationelement to thecache-containerelement. - Add the

advanced-externalizerelement, defining the custom Externalizer with theclassattribute. Replace the Book$BookExternalizer values as required.

10.3.2. Custom Externalizer ID Values

Table 10.1. Reserved Externalizer ID Ranges

| ID Range | Reserved For |

|---|---|

| 1000-1099 | The Infinispan Tree Module |

| 1100-1199 | Red Hat JBoss Data Grid Server modules |

| 1200-1299 | Hibernate Infinispan Second Level Cache |

| 1300-1399 | JBoss Data Grid Lucene Directory |

| 1400-1499 | Hibernate OGM |

| 1500-1599 | Hibernate Search |

| 1600-1699 | Infinispan Query Module |

| 1700-1799 | Infinispan Remote Query Module |

| 1800-1849 | JBoss Data Grid Scripting Module |

| 1850-1899 | JBoss Data Grid Server Event Logger Module |

| 1900-1999 | JBoss Data Grid Remote Store |

10.3.2.1. Customize the Externalizer ID (Declaratively)

Procedure 10.2. Customizing the Externalizer ID (Declaratively)

<infinispan>

<cache-container>

<serialization>

<advanced-externalizer id="123"

class="Book$BookExternalizer"/>

</serialization>

</global>

</infinispan>- Add the

serializationelement to thecache-containerelement. - Add the

advanced-externalizerelement to add information about the new advanced externalizer. - Define the externalizer ID using the

idattribute. Ensure that the selected ID is not from the range of IDs reserved for other modules. - Define the externalizer class using the

classattribute. Replace the Book$BookExternalizer values as required.

Chapter 11. Set Up and Configure the Infinispan Query API

11.1. Set Up Infinispan Query

11.1.1. Infinispan Query Dependencies in Library Mode

<dependency>

<groupId>org.infinispan</groupId>

<artifactId>infinispan-embedded-query</artifactId>

<version>${infinispan.version}</version>

</dependency>infinispan-embedded-query.jar and infinispan-embedded.jar files from the JBoss Data Grid distribution.

Warning

infinispan-embedded-query.jar file. Do not include other versions of Hibernate Search and Lucene in the same deployment as infinispan-embedded-query. This action will cause classpath conflicts and result in unexpected behavior.

11.2. Indexing Modes

11.2.1. Managing Indexes

- Each node can maintain an individual copy of the global index.

- The index can be shared across all nodes.

indexLocalOnly to true, each write to cache must be forwarded to all other nodes so that they can update their indexes. If the index is shared, by setting indexLocalOnly to false, only the node where the write originates is required to update the shared index.

directory provider, which is used to store the index. The index can be stored, for example, as in-memory, on filesystem, or in distributed cache.

11.2.2. Managing the Index in Local Mode

indexLocalOnly option is meaningless in local mode.

11.2.3. Managing the Index in Replicated Mode

indexLocalOnly to false, so that each node will apply the required updates it receives from other nodes in addition to the updates started locally.

indexLocalOnly must be set to true so that each node will only apply the changes originated locally. While there is no risk of having an out of sync index, this causes contention on the node used for updating the index.

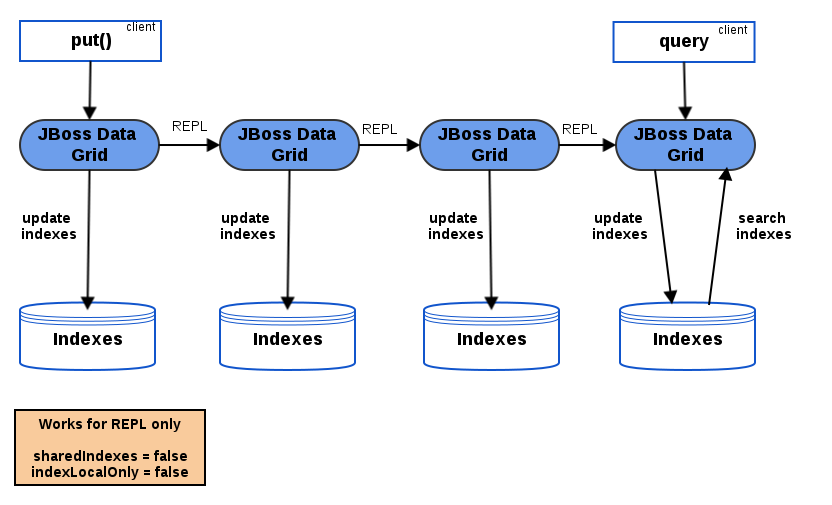

Figure 11.1. Replicated Cache Querying

11.2.4. Managing the Index in Distribution Mode

indexLocalOnly set to true.

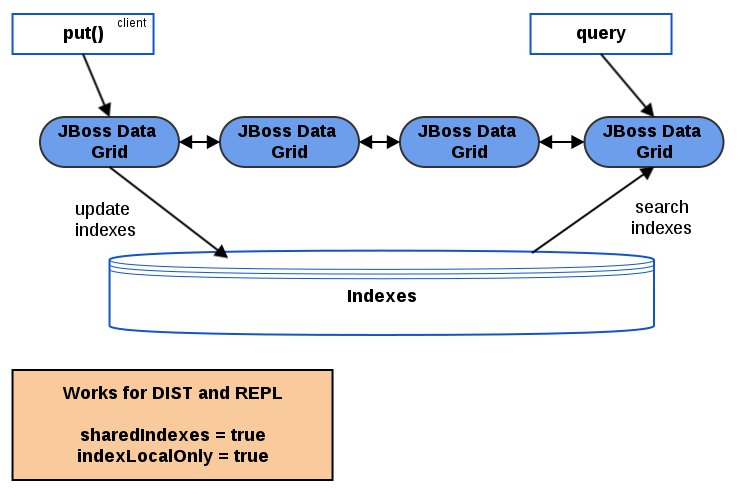

Figure 11.2. Querying with a Shared Index

11.2.5. Managing the Index in Invalidation Mode

11.3. Directory Providers

- RAM Directory Provider

- Filesystem Directory Provider

- Infinispan Directory Provider

11.3.1. RAM Directory Provider

- maintain its own index.

- use Lucene's in-memory or filesystem-based index directory.

<local-cache name="indexesInMemory">

<indexing index="LOCAL">

<property name="default.directory_provider">ram</property>

</indexing>

</local-cache>11.3.2. Filesystem Directory Provider

Example 11.1. Disk-based Index Store

<local-cache name="indexesInInfinispan">

<indexing index="ALL">

<property name="default.directory_provider">filesystem</property>

<property name="default.indexBase">/tmp/ispn_index</property>

</indexing>

</local-cache>11.3.3. Infinispan Directory Provider

infinispan-directory module.

Note

infinispan-directory in the context of the Querying feature, not as a standalone feature.

infinispan-directory allows Lucene to store indexes within the distributed data grid. This allows the indexes to be distributed, stored in-memory, and optionally written to disk using the cache store for durability.

Important

true, as this provides major performance increases; however, if external applications access the same index in use by Infinispan this property must be set to false. The default value is recommended for the majority of applications and use cases due to the performance increases, so only change this if absolutely necessary.

InfinispanIndexManager provides a default back end that sends all updates to master node which later applies the updates to the index. In case of master node failure, the update can be lost, therefore keeping the cache and index non-synchronized. Non-default back ends are not supported.

Example 11.2. Enable Shared Indexes

<local-cache name="indexesInInfinispan">

<indexing index="ALL">

<property name="default.directory_provider">infinispan</property>

<property name="default.indexmanager">org.infinispan.query.indexmanager.InfinispanIndexManager</property>

</indexing>

</local-cache>11.4. Configure Indexing

11.4.1. Configure the Index in Remote Client-Server Mode

- NONE

- LOCAL = indexLocalOnly="true"

- ALL = indexLocalOnly="false"

Example 11.3. Configuration in Remote Client-Server Mode

<indexing index="LOCAL">

<property name="default.directory_provider">ram</property>

<!-- Additional configuration information here -->

</indexing>By default the Lucene caches will be created as local caches; however, with this configuration the Lucene search results are not shared between nodes in the cluster. To prevent this define the caches required by Lucene in a clustered mode, as seen in the following configuration snippet:

Example 11.4. Configuring the Lucene cache in Remote Client-Server Mode

<cache-container name="clustered" default-cache="repltestcache">

[...]

<replicated-cache name="LuceneIndexesMetadata" mode="SYNC">

<transaction mode="NONE"/>

<indexing index="NONE"/>

</replicated-cache>

<distributed-cache name="LuceneIndexesData" mode="SYNC">

<transaction mode="NONE"/>

<indexing index="NONE"/>

</distributed-cache>

<replicated-cache name="LuceneIndexesLocking" mode="SYNC">

<transaction mode="NONE"/>

<indexing index="NONE"/>

</replicated-cache>

[...]

</cache-container>11.4.2. Rebuilding the Index

- The definition of what is indexed in the types has changed.

- A parameter affecting how the index is defined, such as the

Analyserchanges. - The index is destroyed or corrupted, possibly due to a system administration error.

MassIndexer and start it as follows:

SearchManager searchManager = Search.getSearchManager(cache); searchManager.getMassIndexer().start();

11.5. Tuning the Index

11.5.1. Near-Realtime Index Manager

<property name="default.indexmanager">near-real-time</property>

11.5.2. Tuning Infinispan Directory

- Data cache

- Metadata cache

- Locking cache

Example 11.5. Tuning the Infinispan Directory

<distributed-cache name="indexedCache" mode="SYNC" owners="2">

<indexing index="LOCAL">

<property name="default.indexmanager">org.infinispan.query.indexmanager.InfinispanIndexManager</property>

<property name="default.metadata_cachename>lucene_metadata_repl</property>

<property name="default.data_cachename">lucene_data_dist</property>

<property name="default.locking_cachename">lucene_locking_repl</property>

</indexing>

</distributed-cache>

<replicated-cache name="lucene_metadata_repl" mode="SYNC" />

<distributed-cache name="lucene_data_dist" mode="SYNC" owners="2" />

<replicated-cache name="lucene_locking_repl" mode="SYNC" />11.5.3. Per-Index Configuration

default. prefix for each property. To specify different configuration for each index, replace default with the index name. By default, this is the full class name of the indexed object, however you can override the index name in the @Indexed annotation.

Part V. Remote Client-Server Mode Interfaces

- The Asynchronous API (can only be used in conjunction with the Hot Rod Client in Remote Client-Server Mode)

- The REST Interface

- The Memcached Interface

- The Hot Rod Interface

- The RemoteCache API

Chapter 12. The REST Interface

Important

authentication and encryption parameters from the connector.

12.1. The REST Interface Connector

12.1.1. Configure REST Connectors

rest-connector element in Red Hat JBoss Data Grid's Remote Client-Server mode.

Procedure 12.1. Configuring REST Connectors for Remote Client-Server Mode

<subsystem xmlns="urn:infinispan:server:endpoint:8.0">

<rest-connector cache-container="local"

context-path="${CONTEXT_PATH}"/>

</subsystem>rest-connector element specifies the configuration information for the REST connector.

- The

cache-containerparameter names the cache container used by the REST connector. This is a mandatory parameter. - The

context-pathparameter specifies the context path for the REST connector. The default value for this parameter is an empty string (""). This is an optional parameter. - The

security-domainparameter specifies that the specified domain, declared in the security subsystem, should be used to authenticate access to the REST endpoint. This is an optional parameter. If this parameter is omitted, no authentication is performed. - The

auth-methodparameter specifies the method used to retrieve credentials for the end point. The default value for this parameter isBASIC. Supported alternate values includeBASIC,DIGEST, andCLIENT-CERT. This is an optional parameter. - The

security-modeparameter specifies whether authentication is required only for write operations (such as PUT, POST and DELETE) or for read operations (such as GET and HEAD) as well. Valid values for this parameter areWRITEfor authenticating write operations only, orREAD_WRITEto authenticate read and write operations. The default value for this parameter isREAD_WRITE.

Chapter 13. The Memcached Interface

13.1. About Memcached Servers

- Standalone, where each server acts independently without communication with any other memcached servers.

- Clustered, where servers replicate and distribute data to other memcached servers.

13.2. Memcached Statistics

Table 13.1. Memcached Statistics

| Statistic | Data Type | Details |

|---|---|---|

| uptime | 32-bit unsigned integer. | Contains the time (in seconds) that the memcached instance has been available and running. |

| time | 32-bit unsigned integer. | Contains the current time. |

| version | String | Contains the current version. |

| curr_items | 32-bit unsigned integer. | Contains the number of items currently stored by the instance. |

| total_items | 32-bit unsigned integer. | Contains the total number of items stored by the instance during its lifetime. |

| cmd_get | 64-bit unsigned integer | Contains the total number of get operation requests (requests to retrieve data). |

| cmd_set | 64-bit unsigned integer | Contains the total number of set operation requests (requests to store data). |

| get_hits | 64-bit unsigned integer | Contains the number of keys that are present from the keys requested. |

| get_misses | 64-bit unsigned integer | Contains the number of keys that were not found from the keys requested. |

| delete_hits | 64-bit unsigned integer | Contains the number of keys to be deleted that were located and successfully deleted. |

| delete_misses | 64-bit unsigned integer | Contains the number of keys to be deleted that were not located and therefore could not be deleted. |

| incr_hits | 64-bit unsigned integer | Contains the number of keys to be incremented that were located and successfully incremented |

| incr_misses | 64-bit unsigned integer | Contains the number of keys to be incremented that were not located and therefore could not be incremented. |

| decr_hits | 64-bit unsigned integer | Contains the number of keys to be decremented that were located and successfully decremented. |

| decr_misses | 64-bit unsigned integer | Contains the number of keys to be decremented that were not located and therefore could not be decremented. |

| cas_hits | 64-bit unsigned integer | Contains the number of keys to be compared and swapped that were found and successfully compared and swapped. |

| cas_misses | 64-bit unsigned integer | Contains the number of keys to be compared and swapped that were not found and therefore not compared and swapped. |

| cas_badval | 64-bit unsigned integer | Contains the number of keys where a compare and swap occurred but the original value did not match the supplied value. |

| evictions | 64-bit unsigned integer | Contains the number of eviction calls performed. |

| bytes_read | 64-bit unsigned integer | Contains the total number of bytes read by the server from the network. |

| bytes_written | 64-bit unsigned integer | Contains the total number of bytes written by the server to the network. |

13.3. The Memcached Interface Connector

memcached socket binding, and exposes the memcachedCache cache declared in the local container, using defaults for all other settings.

<memcached-connector socket-binding="memcached" cache-container="local"/>

13.3.1. Configure Memcached Connectors

connectors element in Red Hat JBoss Data Grid's Remote Client-Server Mode.

Procedure 13.1. Configuring the Memcached Connector in Remote Client-Server Mode

memcached-connector element defines the configuration elements for use with memcached.

<subsystem xmlns="urn:infinispan:server:endpoint:8.0">

<memcached-connector socket-binding="memcached"

cache-container="local"

worker-threads="${VALUE}"

idle-timeout="{VALUE}"

tcp-nodelay="{TRUE/FALSE}"

send-buffer-size="{VALUE}"

receive-buffer-size="${VALUE}" />

</subsystem>- The

socket-bindingparameter specifies the socket binding port used by the memcached connector. This is a mandatory parameter. - The

cache-containerparameter names the cache container used by the memcached connector. This is a mandatory parameter. - The

worker-threadsparameter specifies the number of worker threads available for the memcached connector. The default value for this parameter is 160. This is an optional parameter. - The

idle-timeoutparameter specifies the time (in milliseconds) the connector can remain idle before the connection times out. The default value for this parameter is-1, which means that no timeout period is set. This is an optional parameter. - The

tcp-nodelayparameter specifies whether TCP packets will be delayed and sent out in batches. Valid values for this parameter aretrueandfalse. The default value for this parameter istrue. This is an optional parameter. - The

send-buffer-sizeparameter indicates the size of the send buffer for the memcached connector. The default value for this parameter is the size of the TCP stack buffer. This is an optional parameter. - The

receive-buffer-sizeparameter indicates the size of the receive buffer for the memcached connector. The default value for this parameter is the size of the TCP stack buffer. This is an optional parameter.

Chapter 14. The Hot Rod Interface

14.1. About Hot Rod

14.2. The Benefits of Using Hot Rod over Memcached

- Memcached

- The memcached protocol causes the server endpoint to use the memcached text wire protocol. The memcached wire protocol has the benefit of being commonly used, and is available for almost any platform. All of JBoss Data Grid's functions, including clustering, state sharing for scalability, and high availability, are available when using memcached.However the memcached protocol lacks dynamicity, resulting in the need to manually update the list of server nodes on your clients in the event one of the nodes in a cluster fails. Also, memcached clients are not aware of the location of the data in the cluster. This means that they will request data from a non-owner node, incurring the penalty of an additional request from that node to the actual owner, before being able to return the data to the client. This is where the Hot Rod protocol is able to provide greater performance than memcached.

- Hot Rod

- JBoss Data Grid's Hot Rod protocol is a binary wire protocol that offers all the capabilities of memcached, while also providing better scaling, durability, and elasticity.The Hot Rod protocol does not need the hostnames and ports of each node in the remote cache, whereas memcached requires these parameters to be specified. Hot Rod clients automatically detect changes in the topology of clustered Hot Rod servers; when new nodes join or leave the cluster, clients update their Hot Rod server topology view. Consequently, Hot Rod provides ease of configuration and maintenance, with the advantage of dynamic load balancing and failover.Additionally, the Hot Rod wire protocol uses smart routing when connecting to a distributed cache. This involves sharing a consistent hash algorithm between the server nodes and clients, resulting in faster read and writing capabilities than memcached.

Warning

cacheManager.getCache method.

14.3. Hot Rod Hash Functions

14.4. The Hot Rod Interface Connector

hotrod socket binding.

<hotrod-connector socket-binding="hotrod" cache-container="local" />

<topology-state-transfer /> child element to the connector as follows:

<hotrod-connector socket-binding="hotrod" cache-container="local"> <topology-state-transfer lazy-retrieval="false" lock-timeout="1000" replication-timeout="5000" /> </hotrod-connector>

Note

14.4.1. Configure Hot Rod Connectors

hotrod-connector and topology-state-transfer elements must be configured based on the following procedure.

Procedure 14.1. Configuring Hot Rod Connectors for Remote Client-Server Mode

<subsystem xmlns="urn:infinispan:server:endpoint:8.0">

<hotrod-connector socket-binding="hotrod"

cache-container="local"

worker-threads="${VALUE}"

idle-timeout="${VALUE}"

tcp-nodelay="${TRUE/FALSE}"

send-buffer-size="${VALUE}"

receive-buffer-size="${VALUE}" >

<topology-state-transfer lock-timeout"="${MILLISECONDS}"

replication-timeout="${MILLISECONDS}"

external-host="${HOSTNAME}"

external-port="${PORT}"

lazy-retrieval="${TRUE/FALSE}" />

</hotrod-connector>

</subsystem>- The

hotrod-connectorelement defines the configuration elements for use with Hot Rod.- The

socket-bindingparameter specifies the socket binding port used by the Hot Rod connector. This is a mandatory parameter. - The

cache-containerparameter names the cache container used by the Hot Rod connector. This is a mandatory parameter. - The

worker-threadsparameter specifies the number of worker threads available for the Hot Rod connector. The default value for this parameter is160. This is an optional parameter. - The

idle-timeoutparameter specifies the time (in milliseconds) the connector can remain idle before the connection times out. The default value for this parameter is-1, which means that no timeout period is set. This is an optional parameter. - The

tcp-nodelayparameter specifies whether TCP packets will be delayed and sent out in batches. Valid values for this parameter aretrueandfalse. The default value for this parameter istrue. This is an optional parameter. - The

send-buffer-sizeparameter indicates the size of the send buffer for the Hot Rod connector. The default value for this parameter is the size of the TCP stack buffer. This is an optional parameter. - The

receive-buffer-sizeparameter indicates the size of the receive buffer for the Hot Rod connector. The default value for this parameter is the size of the TCP stack buffer. This is an optional parameter.

- The

topology-state-transferelement specifies the topology state transfer configurations for the Hot Rod connector. This element can only occur once within ahotrod-connectorelement.- The

lock-timeoutparameter specifies the time (in milliseconds) after which the operation attempting to obtain a lock times out. The default value for this parameter is10seconds. This is an optional parameter. - The

replication-timeoutparameter specifies the time (in milliseconds) after which the replication operation times out. The default value for this parameter is10seconds. This is an optional parameter. - The

external-hostparameter specifies the hostname sent by the Hot Rod server to clients listed in the topology information. The default value for this parameter is the host address. This is an optional parameter. - The

external-portparameter specifies the port sent by the Hot Rod server to clients listed in the topology information. The default value for this parameter is the configured port. This is an optional parameter. - The

lazy-retrievalparameter indicates whether the Hot Rod connector will carry out retrieval operations lazily. The default value for this parameter istrue. This is an optional parameter.

Part VI. Set Up Locking for the Cache

Chapter 15. Locking

15.1. Configure Locking (Remote Client-Server Mode)

locking element within the cache tags (for example, invalidation-cache, distributed-cache, replicated-cache or local-cache).

Note

READ_COMMITTED. If the isolation attribute is included to explicitly specify an isolation mode, it is ignored, a warning is thrown, and the default value is used instead.

Procedure 15.1. Configure Locking (Remote Client-Server Mode)

<distributed-cache> <locking acquire-timeout="30000" concurrency-level="1000" striping="false" /> <!-- Additional configuration here --> </distributed-cache>

- The

acquire-timeoutparameter specifies the number of milliseconds after which lock acquisition will time out. - The

concurrency-levelparameter defines the number of lock stripes used by the LockManager. - The

stripingparameter specifies whether lock striping will be used for the local cache.

15.2. Configure Locking (Library Mode)

locking element and its parameters are set within the default element and for each named cache, it occurs within the local-cache element. The following is an example of this configuration:

Procedure 15.2. Configure Locking (Library Mode)

<local-cache name="default">

<locking concurrency-level="${VALUE}"

isolation="${LEVEL}"

acquire-timeout="${TIME}"

striping="${TRUE/FALSE}"

write-skew="${TRUE/FALSE}" />

</local-cache>- The

concurrency-levelparameter specifies the concurrency level for the lock container. Set this value according to the number of concurrent threads interacting with the data grid. - The

isolationparameter specifies the cache's isolation level. Valid isolation levels areREAD_COMMITTEDandREPEATABLE_READ. For details about isolation levels, see Section 17.1, “About Isolation Levels” - The

acquire-timeoutparameter specifies time (in milliseconds) after which a lock acquisition attempt times out. - The

stripingparameter specifies whether a pool of shared locks are maintained for all entries that require locks. If set toFALSE, locks are created for each entry in the cache. For details, see Section 16.1, “About Lock Striping” - The

write-skewparameter is only valid if theisolationis set toREPEATABLE_READ. If this parameter is set toFALSE, a disparity between a working entry and the underlying entry at write time results in the working entry overwriting the underlying entry. If the parameter is set toTRUE, such conflicts (namely write skews) throw an exception. Thewrite-skewparameter can be only used withOPTIMISTICtransactions and it requires entry versioning to be enabled, withSIMPLEversioning scheme.

15.3. Locking Types

15.3.1. About Optimistic Locking

write-skew enabled, transactions in optimistic locking mode roll back if one or more conflicting modifications are made to the data before the transaction completes.

15.3.2. About Pessimistic Locking

15.3.3. Pessimistic Locking Types

- Explicit Pessimistic Locking, which uses the JBoss Data Grid Lock API to allow cache users to explicitly lock cache keys for the duration of a transaction. The Lock call attempts to obtain locks on specified cache keys across all nodes in a cluster. This attempt either fails or succeeds for all specified cache keys. All locks are released during the commit or rollback phase.

- Implicit Pessimistic Locking ensures that cache keys are locked in the background as they are accessed for modification operations. Using Implicit Pessimistic Locking causes JBoss Data Grid to check and ensure that cache keys are locked locally for each modification operation. Discovering unlocked cache keys causes JBoss Data Grid to request a cluster-wide lock to acquire a lock on the unlocked cache key.

15.3.4. Explicit Pessimistic Locking Example

Procedure 15.3. Transaction with Explicit Pessimistic Locking

tx.begin() cache.lock(K) cache.put(K,V5) tx.commit()

- When the line

cache.lock(K)executes, a cluster-wide lock is acquired onK. - When the line

cache.put(K,V5)executes, it guarantees success. - When the line

tx.commit()executes, the locks held for this process are released.

15.3.5. Implicit Pessimistic Locking Example

Procedure 15.4. Transaction with Implicit Pessimistic locking

tx.begin() cache.put(K,V) cache.put(K2,V2) cache.put(K,V5) tx.commit()

- When the line

cache.put(K,V)executes, a cluster-wide lock is acquired onK. - When the line

cache.put(K2,V2)executes, a cluster-wide lock is acquired onK2. - When the line

cache.put(K,V5)executes, the lock acquisition is non operational because a cluster-wide lock forKhas been previously acquired. Theputoperation will still occur. - When the line

tx.commit()executes, all locks held for this transaction are released.

15.3.6. Configure Locking Mode (Remote Client-Server Mode)

transaction element as follows:

<transaction locking="{OPTIMISTIC/PESSIMISTIC}" />15.3.7. Configure Locking Mode (Library Mode)

transaction element as follows:

<transaction transaction-manager-lookup="{TransactionManagerLookupClass}"

mode="{NONE, BATCH, NON_XA, NON_DURABLE_XA, FULL_XA}"

locking="{OPTIMISTIC,PESSIMISTIC}">

</transaction>locking value to OPTIMISTIC or PESSIMISTIC to configure the locking mode used for the transactional cache.

15.4. Locking Operations

15.4.1. About the LockManager

LockManager component is responsible for locking an entry before a write process initiates. The LockManager uses a LockContainer to locate, hold and create locks. There are two types of LockContainers JBoss Data Grid uses internally and their choice is dependent on the useLockStriping setting. The first type offers support for lock striping while the second type supports one lock per entry.

See Also:

15.4.2. About Lock Acquisition

15.4.3. About Concurrency Levels

ConcurrentHashMap based collections, such as those internal to DataContainers.

Chapter 16. Set Up Lock Striping

16.1. About Lock Striping

16.2. Configure Lock Striping (Remote Client-Server Mode)

striping element to true.

Example 16.1. Lock Striping (Remote Client-Server Mode)

<locking acquire-timeout="20000" concurrency-level="500" striping="true" />

Note

READ_COMMITTED. If the isolation attribute is included to explicitly specify an isolation mode, it is ignored, a warning is thrown, and the default value is used instead.

locking element uses the following attributes:

- The

acquire-timeoutattribute specifies the maximum time to attempt a lock acquisition. The default value for this attribute is10000milliseconds. - The

concurrency-levelattribute specifies the concurrency level for lock containers. Adjust this value according to the number of concurrent threads interacting with JBoss Data Grid. The default value for this attribute is32. - The

stripingattribute specifies whether a shared pool of locks is maintained for all entries that require locking (true). If set tofalse, a lock is created for each entry. Lock striping controls the memory footprint but can reduce concurrency in the system. The default value for this attribute isfalse.

16.3. Configure Lock Striping (Library Mode)

striping parameter as demonstrated in the following procedure.

Procedure 16.1. Configure Lock Striping (Library Mode)

<local-cache>

<locking concurrency-level="${VALUE}"

isolation="${LEVEL}"

acquire-timeout="${TIME}"

striping="${TRUE/FALSE}"

write-skew="${TRUE/FALSE}" />

</local-cache>- The

concurrency-levelis used to specify the size of the shared lock collection use when lock striping is enabled. - The

isolationparameter specifies the cache's isolation level. Valid isolation levels areREAD_COMMITTEDandREPEATABLE_READ. - The

acquire-timeoutparameter specifies time (in milliseconds) after which a lock acquisition attempt times out. - The

stripingparameter specifies whether a pool of shared locks are maintained for all entries that require locks. If set toFALSE, locks are created for each entry in the cache. If set toTRUE, lock striping is enabled and shared locks are used as required from the pool. - The

write-skewcheck determines if a modification to the entry from a different transaction should roll back the transaction. Write skew set to true requiresisolation_levelset toREPEATABLE_READ. The default value forwrite-skewandisolation_levelareFALSEandREAD_COMMITTEDrespectively. Thewrite-skewparameter can be only used withOPTIMISTICtransactions and it requires entry versioning to be enabled, withSIMPLEversioning scheme.

Chapter 17. Set Up Isolation Levels

17.1. About Isolation Levels

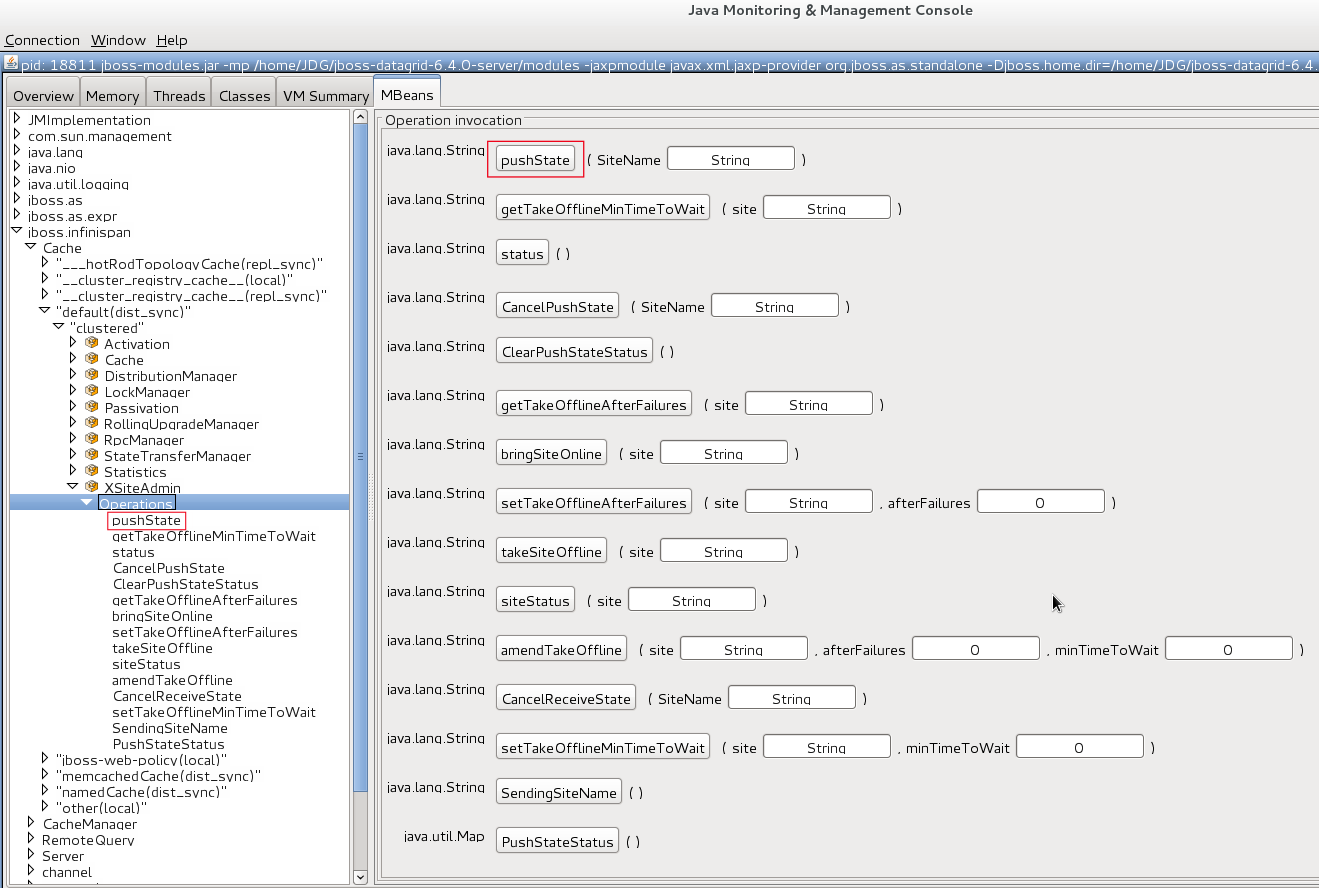

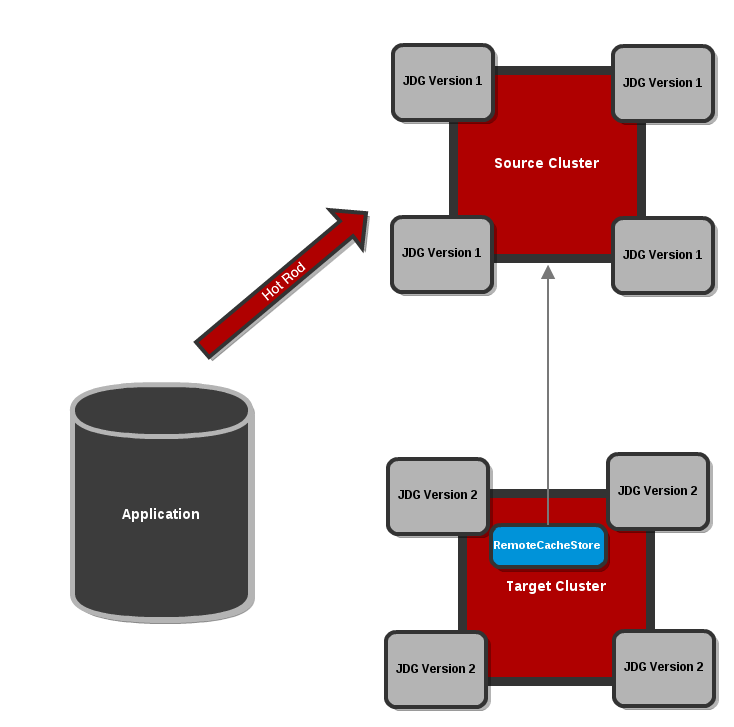

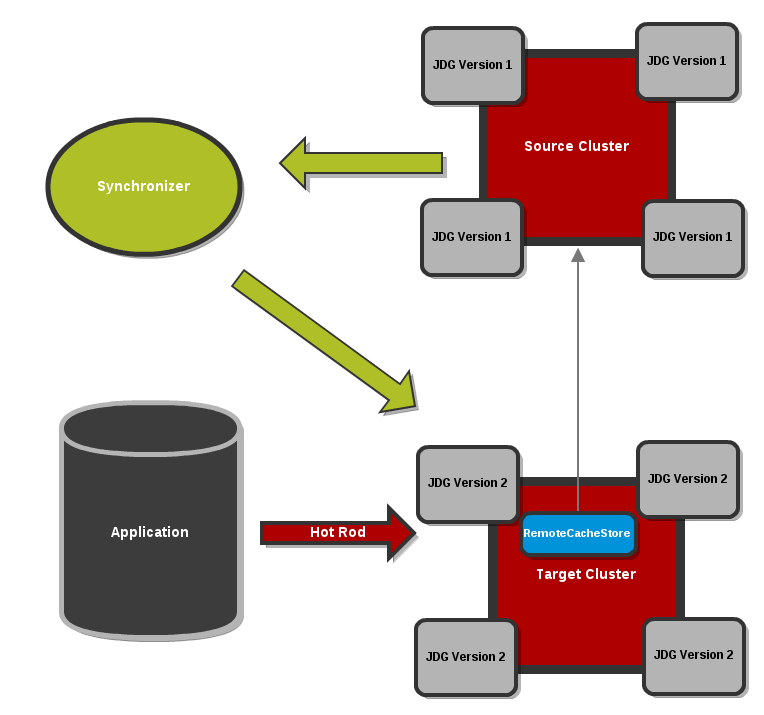

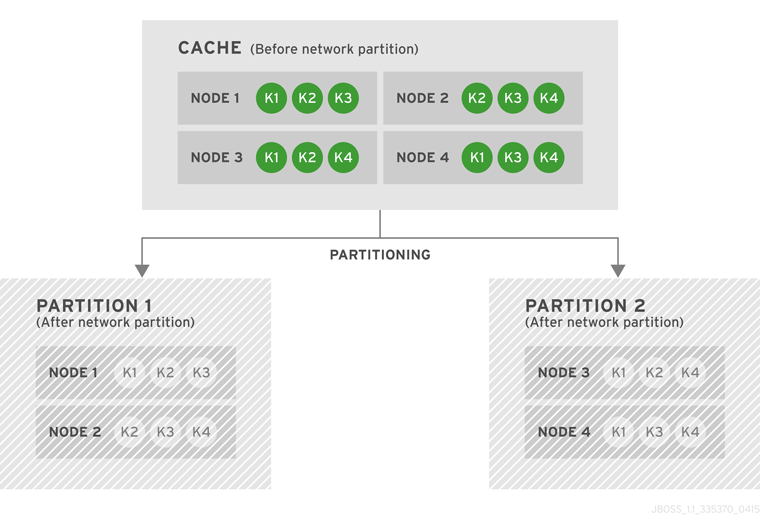

READ_COMMITTED and REPEATABLE_READ are the two isolation modes offered in Red Hat JBoss Data Grid.