Administration Guide

Administering Red Hat CodeReady Workspaces 2.8

Robert Kratky

rkratky@redhat.com

Michal Maléř

mmaler@redhat.com

Fabrice Flore-Thébault

ffloreth@redhat.com

Yana Hontyk

yhontyk@redhat.com

devtools-docs@redhat.com

Abstract

Making open source more inclusive

Red Hat is committed to replacing problematic language in our code, documentation, and web properties. We are beginning with these four terms: master, slave, blacklist, and whitelist. Because of the enormity of this endeavor, these changes will be implemented gradually over several upcoming releases. For more details, see our CTO Chris Wright’s message.

Chapter 1. CodeReady Workspaces architecture overview

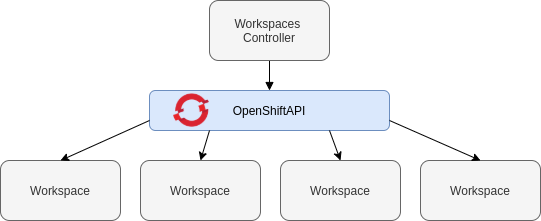

Red Hat CodeReady Workspaces components are:

- A central workspace controller: an always-running service that manages user workspaces through the OpenShift API.

- User workspaces: container-based IDEs that the controller stops when the user stops coding.

Figure 1.1. High-level CodeReady Workspaces architecture

When CodeReady Workspaces is installed on an OpenShift cluster, the workspace controller is the only component that is deployed. A CodeReady Workspaces workspace is created immediately after a user requests it.


1.1. Understanding CodeReady Workspaces workspace controller

1.1.1. CodeReady Workspaces workspace controller

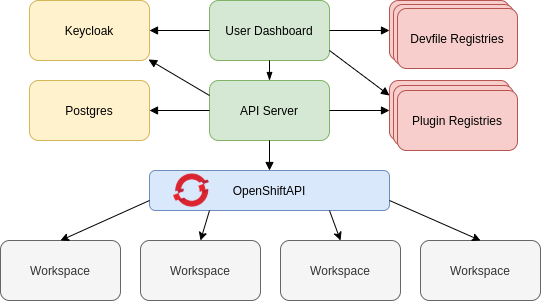

The workspaces controller manages the container-based development environments: CodeReady Workspaces workspaces. The following deployment scenarios are available:

- Single-user: The deployment contains no authentication service. Development environments are not secured. This configuration requires fewer resources. It is better suited to local installations.

- Multi-user: This is a multi-tenant configuration. Development environments are secured, and this configuration requires more resources. It is appropriate for cloud installations.

The following diagram shows the different services that are a part of the CodeReady Workspaces workspaces controller. Note that RH-SSO and PostgreSQL are only needed in the multi-user configuration.

Figure 1.2. CodeReady Workspaces workspaces controller


1.1.2. CodeReady Workspaces server

The CodeReady Workspaces server is the central service of the workspaces controller. It is a Java web service that exposes an HTTP REST API to manage CodeReady Workspaces workspaces and, in multi-user mode, CodeReady Workspaces users.

| Container image |

|


1.1.3. CodeReady Workspaces user dashboard

The user dashboard is the landing page of Red Hat CodeReady Workspaces. It is an Angular front-end application. CodeReady Workspaces users create, start, and manage CodeReady Workspaces workspaces from their browsers through the user dashboard.

| Container image |

|

1.1.4. CodeReady Workspaces Devfile registry

The CodeReady Workspaces devfile registry is a service that provides a list of CodeReady Workspaces stacks to create ready-to-use workspaces. This list of stacks is used in the Dashboard → Create Workspace window. The devfile registry runs in a container and can be deployed wherever the user dashboard can connect.

For more information about devfile registry customization, see the Customizing devfile registry section.

| Container image |

|

1.1.5. CodeReady Workspaces plug-in registry

The CodeReady Workspaces plug-in registry is a service that provides the list of plug-ins and editors for the CodeReady Workspaces workspaces. A devfile only references a plug-in that is published in a CodeReady Workspaces plug-in registry. The plug-in registry runs in a container and can be deployed wherever the CodeReady Workspaces server can connect.

| Container image |

|

1.1.6. CodeReady Workspaces and PostgreSQL

The PostgreSQL database is a prerequisite to configure CodeReady Workspaces in multi-user mode. The CodeReady Workspaces administrator can choose to connect CodeReady Workspaces to an existing PostgreSQL instance or let the CodeReady Workspaces deployment start a new dedicated PostgreSQL instance.

The CodeReady Workspaces server uses the database to persist user configurations (workspace metadata, Git credentials). RH-SSO uses the database as its back end to persist user information.

| Container image |

|

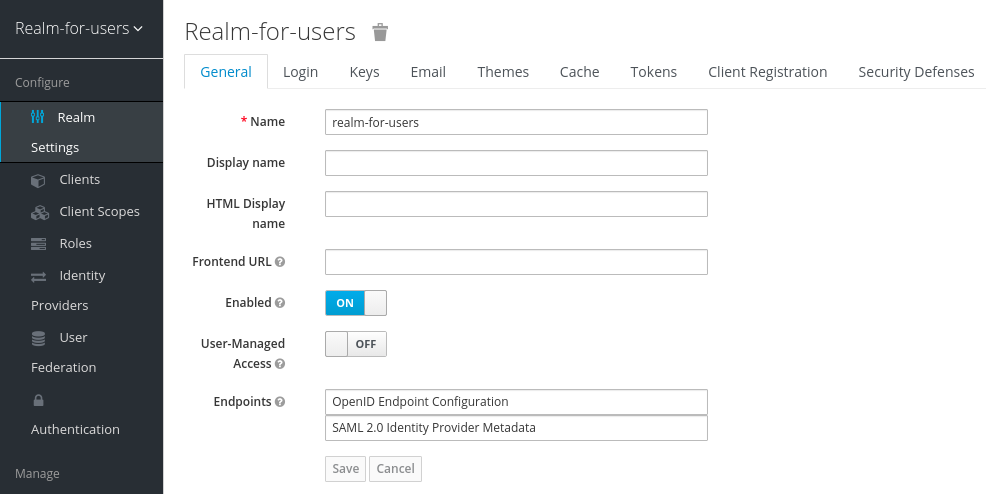

1.1.7. CodeReady Workspaces and RH-SSO

RH-SSO is a prerequisite to configure CodeReady Workspaces in multi-user mode. The CodeReady Workspaces administrator can choose to connect CodeReady Workspaces to an existing RH-SSO instance or let the CodeReady Workspaces deployment start a new dedicated RH-SSO instance.

The CodeReady Workspaces server uses RH-SSO as an OpenID Connect (OIDC) provider to authenticate CodeReady Workspaces users and secure access to CodeReady Workspaces resources.

| Container image |

|

1.2. Understanding CodeReady Workspaces workspaces architecture

1.2.1. CodeReady Workspaces workspaces architecture

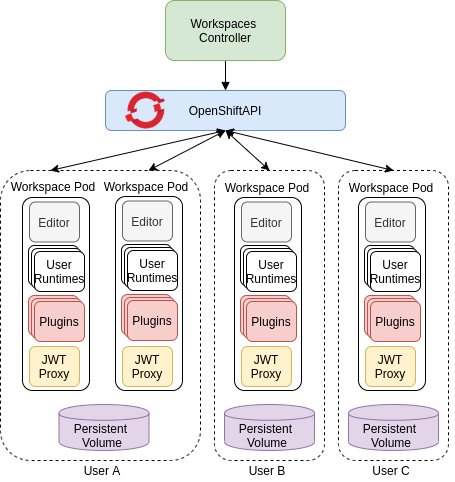

A CodeReady Workspaces deployment on the cluster consists of the CodeReady Workspaces server component, a database for storing user profiles and preferences, and several additional deployments hosting workspaces. The CodeReady Workspaces server orchestrates the creation of workspaces, which consist of a deployment containing the workspace containers and enabled plug-ins, plus related components, such as:

- ConfigMaps

- services

- endpoints

- ingresses/routes

- secrets

- PVs

The CodeReady Workspaces workspace is a web application. It is composed of microservices running in containers that provide all the services of a modern IDE such as an editor, language auto-completion, and debugging tools. The IDE services are deployed with the development tools, packaged in containers and user runtime applications, which are defined as OpenShift resources.

The source code of the projects of a CodeReady Workspaces workspace is persisted in an OpenShift PersistentVolume. Microservices run in containers that have read-write access to the source code (IDE services, development tools), and runtime applications have read-write access to this shared directory.

The following diagram shows the detailed components of a CodeReady Workspaces workspace.

Figure 1.3. CodeReady Workspaces workspace components

In the diagram, there are three running workspaces: two belonging to User A and one to User C. A fourth workspace is getting provisioned where the plug-in broker is verifying and completing the workspace configuration.

Use the devfile format to specify the tools and runtime applications of a CodeReady Workspaces workspace.

1.2.2. CodeReady Workspaces workspace components

This section describes the components of a CodeReady Workspaces workspace.

1.2.2.1. Che Editor plug-in

A Che Editor plug-in is a CodeReady Workspaces workspace plug-in. It defines the web application that is used as an editor in a workspace. The default CodeReady Workspaces workspace editor is Che-Theia. It is a web-based source-code editor similar to Visual Studio Code (VS Code). It has a plug-in system that supports VS Code extensions.

| Source code | |

| Container image |

|

| Endpoints |

|


1.2.2.2. CodeReady Workspaces user runtimes

Use any non-terminating user container as a user runtime. An application that can be defined as a container image or as a set of OpenShift resources can be included in a CodeReady Workspaces workspace. This makes it easy to test applications in the CodeReady Workspaces workspace.

To test an application in the CodeReady Workspaces workspace, include the application YAML definition used in stage or production in the workspace specification. This follows the 12-factor application principle of development/production parity.

Examples of user runtimes are Node.js, Spring Boot, MongoDB, and MySQL.

1.2.2.3. CodeReady Workspaces workspace JWT proxy

The JWT proxy is responsible for securing the communication of the CodeReady Workspaces workspace services. The CodeReady Workspaces workspace JWT proxy is included in a CodeReady Workspaces workspace only if the CodeReady Workspaces server is configured in multi-user mode.

An HTTP proxy is used to sign outgoing requests from a workspace service to the CodeReady Workspaces server and to authenticate incoming requests from the IDE client running in a browser.

| Source code | |

| Container image |

|

1.2.2.4. CodeReady Workspaces plug-ins broker

Plug-in brokers are special services that, given a plug-in meta.yaml file:

- Gather all the information to provide a plug-in definition that the CodeReady Workspaces server knows.

- Perform preparation actions in the workspace project (download, unpack files, process configuration).

The main goal of the plug-in broker is to decouple the CodeReady Workspaces plug-ins definitions from the actual plug-ins that CodeReady Workspaces can support. With brokers, CodeReady Workspaces can support different plug-ins without updating the CodeReady Workspaces server.

The CodeReady Workspaces server starts the plug-in broker. The plug-in broker runs in the same OpenShift project as the workspace. It has access to the plug-ins and project persistent volumes.

A plug-ins broker is defined as a container image (for example, eclipse/che-plugin-broker). The plug-in type determines the type of the broker that is started. Two types of plug-ins are supported: Che Plugin and Che Editor.

| Source code | |

| Container image |

|

1.2.3. CodeReady Workspaces workspace creation flow

The following is a CodeReady Workspaces workspace creation flow:

- A user starts a CodeReady Workspaces workspace defined by:

  - An editor (the default is Che-Theia)

  - A list of plug-ins (for example, Java and OpenShift tools)

  - A list of runtime applications

- CodeReady Workspaces server retrieves the editor and plug-in metadata from the plug-in registry.

- For every plug-in type, CodeReady Workspaces server starts a specific plug-in broker.

- The CodeReady Workspaces plug-ins broker transforms the plug-in metadata into a Che Plugin definition. It executes the following steps:

  - Downloads a plug-in and extracts its content.

  - Processes the plug-in meta.yaml file and sends it back to CodeReady Workspaces server in the format of a Che Plugin.

- CodeReady Workspaces server starts the editor and the plug-in sidecars.

- The editor loads the plug-ins from the plug-in persistent volume.

Chapter 2. Calculating CodeReady Workspaces resource requirements

This section describes how to calculate resources, such as memory and CPU, required to run Red Hat CodeReady Workspaces.

Both the CodeReady Workspaces central controller and user workspaces consist of a set of containers. Those containers contribute to the resource consumption in terms of CPU and RAM limits and requests.

2.1. Controller requirements

The Workspace Controller consists of a set of five services running in five distinct containers. The following table presents the default resource requirements of each of these services.

Table 2.1. Controller services

| Pod | Container name | Default memory limit | Default memory request |
|---|---|---|---|
| CodeReady Workspaces Server and Dashboard | che | 1 GiB | 512 MiB |
| PostgreSQL | | 1 GiB | 512 MiB |
| RH-SSO | | 2 GiB | 512 MiB |
| Devfile registry | | 256 MiB | 16 MiB |
| Plug-in registry | | 256 MiB | 16 MiB |

These default values are sufficient when the CodeReady Workspaces Workspace Controller manages a small number of CodeReady Workspaces workspaces. For larger deployments, increase the memory limit. See the Advanced configuration options for the CodeReady Workspaces server component article for instructions on how to override the default requests and limits. For example, the Eclipse Che instance hosted by Red Hat at https://workspaces.openshift.com uses 1 GB of memory.
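As a rough sizing aid, the defaults in Table 2.1 can be added up to estimate the memory footprint of the controller in a multi-user deployment. The following Python sketch only restates the table values; the dictionary keys are descriptive labels chosen for this illustration, not container names.

```python
# Default memory settings from Table 2.1, in MiB: (limit, request).
# The keys are labels invented for this sketch, not real container names.
CONTROLLER_DEFAULTS_MIB = {
    "server-and-dashboard": (1024, 512),
    "postgresql": (1024, 512),
    "rh-sso": (2048, 512),
    "devfile-registry": (256, 16),
    "plugin-registry": (256, 16),
}


def controller_totals(defaults=CONTROLLER_DEFAULTS_MIB):
    """Return (total_limit_mib, total_request_mib) for the controller services."""
    total_limit = sum(limit for limit, _ in defaults.values())
    total_request = sum(request for _, request in defaults.values())
    return total_limit, total_request


if __name__ == "__main__":
    limit_mib, request_mib = controller_totals()
    print(f"limit: {limit_mib / 1024} GiB, request: {request_mib} MiB")
```

With the defaults, the five services together request 1568 MiB and are limited to 4.5 GiB.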


2.2. Workspaces requirements

This section describes how to calculate the resources required for a workspace. It is the sum of the resources required for each component of this workspace.

These examples demonstrate the necessity of a proper calculation:

- A workspace with ten active plug-ins requires more resources than the same workspace with fewer plug-ins.

- A standard Java workspace requires more resources than a standard Node.js workspace because running builds, tests, and application debugging requires more resources.

Procedure

- Identify the workspace components explicitly specified in the components section of the devfile. See Configuring a workspace using a devfile.

- Identify the implicit workspace components:

  - CodeReady Workspaces implicitly loads the default cheEditor: che-theia, and the chePlugin that allows command execution: che-machine-exec-plugin. To change the default editor, add a cheEditor component section in the devfile.

  - When CodeReady Workspaces is running in multi-user mode, it loads the JWT Proxy component. The JWT Proxy is responsible for the authentication and authorization of the external communications of the workspace components.

- Calculate the requirements for each component:

Default values:

The following table presents the default requirements for all workspace components. It also presents the corresponding CodeReady Workspaces server property to modify the defaults cluster-wide.

Table 2.2. Default requirements of workspace components by type

| Component types | CodeReady Workspaces server property | Default memory limit | Default memory request |
|---|---|---|---|
| chePlugin | che.workspace.sidecar.default_memory_limit_mb | 128 MiB | 64 MiB |
| cheEditor | che.workspace.sidecar.default_memory_limit_mb | 128 MiB | 64 MiB |
| kubernetes, openshift, dockerimage | che.workspace.default_memory_limit_mb, che.workspace.default_memory_request_mb | 1 GiB | 200 MiB |
| JWT Proxy | che.server.secure_exposer.jwtproxy.memory_limit, che.server.secure_exposer.jwtproxy.memory_request | 128 MiB | 15 MiB |

Custom requirements for chePlugins and cheEditors components:

Custom memory limit and request:

If present, the memoryLimit and memoryRequest attributes of the containers section of the meta.yaml file define the memory limit of the chePlugins or cheEditors components. CodeReady Workspaces automatically sets the memory request to match the memory limit in case it is not specified explicitly.

Example 2.1. The chePlugin che-incubator/typescript/latest meta.yaml spec section:

    spec:
      containers:
      - image: docker.io/eclipse/che-remote-plugin-node:next
        name: vscode-typescript
        memoryLimit: 512Mi
        memoryRequest: 256Mi

This results in a container with the following memory limit and request:

- Memory limit: 512 MiB

- Memory request: 256 MiB

Note: For IBM Power Systems (ppc64le), the memory limit for some plug-ins has been increased by up to 1.5G to give Pods sufficient RAM to run. For example, on IBM Power Systems (ppc64le), the Theia editor Pod requires 2G and the OpenShift connector Pod requires 2.5G. For AMD64 and Intel 64 (x86_64) and IBM Z (s390x), memory requirements remain lower at 512M and 1500M respectively. However, some devfiles may still be configured to set the lower limit valid for AMD64 and Intel 64 (x86_64) and IBM Z (s390x). To work around this, edit the devfiles of workspaces that are crashing and increase the default memoryLimit by at least 1 to 1.5 GB.

Note: How to find the meta.yaml file of a chePlugin

Community plug-ins are available in the che-plugin-registry GitHub repository in folder v3/plugins/${organization}/${name}/${version}/.

For non-community or customized plug-ins, the meta.yaml files are available on the local OpenShift cluster at ${pluginRegistryEndpoint}/v3/plugins/${organization}/${name}/${version}/meta.yaml.

Custom CPU limit and request:

CodeReady Workspaces does not set CPU limits and requests by default. However, it is possible to configure CPU limits for the chePlugin and cheEditor types in the meta.yaml file or in the devfile in the same way as it is done for memory limits.

Example 2.2. The chePlugin che-incubator/typescript/latest meta.yaml spec section:

    spec:
      containers:
      - image: docker.io/eclipse/che-remote-plugin-node:next
        name: vscode-typescript
        cpuLimit: 2000m
        cpuRequest: 500m

This results in a container with the following CPU limit and request:

- CPU limit: 2 cores

- CPU request: 0.5 cores

To set CPU limits and requests globally, use the following dedicated environment variables:

|

|

|

|

|

|

See also Advanced configuration options for the CodeReady Workspaces server component.

Note that the LimitRange object of the OpenShift project may specify defaults for CPU limits and requests set by cluster administrators. To prevent start errors due to resources overrun, limits on application or workspace levels must comply with those settings.
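CPU values such as 2000m and 500m use the OpenShift quantity notation, in which the m suffix denotes thousandths of a core. The following Python sketch shows the conversion to cores; the function name is hypothetical and the parser handles only the plain and millicore forms.

```python
def cpu_to_cores(quantity: str) -> float:
    """Convert a CPU quantity such as '2000m' or '0.5' to a number of cores."""
    quantity = quantity.strip()
    if quantity.endswith("m"):
        # The 'm' suffix denotes millicores: 1000m equals one core.
        return int(quantity[:-1]) / 1000
    # No suffix: the value is already expressed in cores.
    return float(quantity)


if __name__ == "__main__":
    for value in ("2000m", "500m"):
        print(value, "=", cpu_to_cores(value), "cores")
```

The same conversion is useful when comparing a cpuLimit from a meta.yaml file against the maximum allowed by a LimitRange object.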

Custom requirements for dockerimage components:

If present, the memoryLimit and memoryRequest attributes of the devfile define the memory limit of a dockerimage container. CodeReady Workspaces automatically sets the memory request to match the memory limit in case it is not specified explicitly.

    - alias: maven
      type: dockerimage
      image: eclipse/maven-jdk8:latest
      memoryLimit: 1536M

Custom requirements for kubernetes or openshift components:

The referenced manifest may define the memory requirements and limits.

- Add all previously calculated requirements.
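The per-component rule described in this procedure (use the component-type defaults unless the component sets its own values, and let the request follow the limit when only the limit is set) can be sketched in Python. The function names are hypothetical, and the behavior when only a request is given is an assumption of this sketch, not something stated above.

```python
# Binary (Ki, Mi, Gi) and decimal (k, M, G) suffixes used in memory quantities.
_UNITS = {
    "Ki": 2**10, "Mi": 2**20, "Gi": 2**30,
    "k": 10**3, "M": 10**6, "G": 10**9,
}


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as '512Mi' or '1536M' to bytes."""
    quantity = quantity.strip()
    # Check two-letter suffixes first so that 'Mi' is not read as 'M'.
    for suffix in sorted(_UNITS, key=len, reverse=True):
        if quantity.endswith(suffix):
            return int(quantity[: -len(suffix)]) * _UNITS[suffix]
    return int(quantity)  # a plain number of bytes


def effective_memory(defaults, memory_limit=None, memory_request=None):
    """Return (limit_bytes, request_bytes) for one workspace component.

    defaults is a (limit, request) pair of quantity strings for the
    component type, for example ("128Mi", "64Mi") for a chePlugin.
    """
    default_limit, default_request = defaults
    if memory_limit is None:
        # Assumption: without a custom limit, the default limit applies and
        # the request is the custom request if given, else the default.
        limit = memory_to_bytes(default_limit)
        request = memory_to_bytes(memory_request or default_request)
    else:
        limit = memory_to_bytes(memory_limit)
        # The request matches the limit when it is not specified explicitly.
        request = memory_to_bytes(memory_request or memory_limit)
    return limit, request
```

For the dockerimage example above, `effective_memory(("1Gi", "200Mi"), "1536M")` yields a request equal to the limit, because only memoryLimit is set. Note that 1536M is a decimal quantity (1536 × 10^6 bytes), whereas 512Mi is binary (512 × 2^20 bytes).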


2.3. A workspace example

This section describes a CodeReady Workspaces workspace example.

The following devfile defines the CodeReady Workspaces workspace:

    apiVersion: 1.0.0
    metadata:
      generateName: guestbook-nodejs-sample-
    projects:
      - name: guestbook-nodejs-sample
        source:
          type: git
          location: "https://github.com/l0rd/nodejs-sample"
    components:
      - type: chePlugin
        id: che-incubator/typescript/latest
      - type: kubernetes
        alias: guestbook-frontend
        reference: https://raw.githubusercontent.com/l0rd/nodejs-sample/master/kubernetes-manifests/guestbook-frontend.deployment.yaml
        mountSources: true
        entrypoints:
          - command: ['sleep']
            args: ['infinity']

This table provides the memory requirements for each workspace component:

Table 2.3. Total workspace memory requirement and limit

| Pod | Container name | Default memory limit | Default memory request |
|---|---|---|---|
| Workspace | theia-ide (default) | 512 MiB | 512 MiB |
| Workspace | machine-exec (default) | 128 MiB | 128 MiB |
| Workspace | vscode-typescript | 512 MiB | 512 MiB |
| Workspace | frontend | 1 GiB | 512 MiB |
| JWT Proxy | verifier | 128 MiB | 128 MiB |
| Total | | 2.25 GiB | 1.75 GiB |

- The theia-ide and machine-exec components are implicitly added to the workspace, even when not included in the devfile.

- The resources required by machine-exec are the default for chePlugin.

- The resources for theia-ide are specifically set in the cheEditor meta.yaml to 512 MiB as memoryLimit.

- The TypeScript VS Code extension has also overridden the default memory limits. In its meta.yaml file, the limits are explicitly specified to 512 MiB.

- CodeReady Workspaces is applying the defaults for the kubernetes component type: a memory limit of 1 GiB and a memory request of 512 MiB. This is because the kubernetes component references a Deployment manifest that has a container specification with no resource limits or requests.

- The JWT container requires 128 MiB of memory.

Adding everything together results in 1.75 GiB of memory requests with a 2.25 GiB limit.
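The totals can be checked by summing the per-container values from Table 2.3. The following Python sketch restates the table in MiB and converts the sums to GiB; the function name is chosen for this illustration.

```python
# (container name, memory limit in MiB, memory request in MiB), from Table 2.3.
WORKSPACE_CONTAINERS = [
    ("theia-ide", 512, 512),
    ("machine-exec", 128, 128),
    ("vscode-typescript", 512, 512),
    ("frontend", 1024, 512),
    ("verifier", 128, 128),  # JWT proxy container
]


def workspace_totals_gib(containers):
    """Return (limit_gib, request_gib) summed over all containers."""
    limit_mib = sum(limit for _, limit, _ in containers)
    request_mib = sum(request for _, _, request in containers)
    return limit_mib / 1024, request_mib / 1024


if __name__ == "__main__":
    print(workspace_totals_gib(WORKSPACE_CONTAINERS))  # (2.25, 1.75)
```

The limits add up to 2304 MiB (2.25 GiB) and the requests to 1792 MiB (1.75 GiB).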

Additional resources

- Chapter 1, CodeReady Workspaces architecture overview

- Configuring the CodeReady Workspaces installation

- Advanced configuration options for the CodeReady Workspaces server component

- Configuring a workspace using a devfile

- A minimal devfile

- Section 9.1, “Authenticating users”

- Eclipse Che plugin registry - GitHub repository

Chapter 3. Customizing the registries

This chapter describes how to build and run custom registries for CodeReady Workspaces.

3.1. Understanding the CodeReady Workspaces registries

CodeReady Workspaces uses two registries: the plug-ins registry and the devfile registry. They are static websites publishing the metadata of CodeReady Workspaces plug-ins and devfiles. When built in offline mode they also include artifacts.

The devfile and plug-in registries run in two separate Pods. Their deployment is part of the CodeReady Workspaces installation.

The devfile and plug-in registries

- The devfile registry

  The devfile registry holds the definitions of the CodeReady Workspaces stacks. Stacks are available on the CodeReady Workspaces user dashboard when selecting Create Workspace. It contains the list of CodeReady Workspaces technological stack samples with example projects. When built in offline mode, it also contains all sample projects referenced in devfiles as zip files.

- The plug-in registry

  The plug-in registry makes it possible to share a plug-in definition across all the users of the same instance of CodeReady Workspaces. When built in offline mode, it also contains all plug-in or extension artifacts.


3.2. Building custom registry images

3.2.1. Building a custom devfile registry image

This section describes how to build a custom devfile registry image. The procedure explains how to add a devfile. The image contains all sample projects referenced in devfiles.

Prerequisites

- A running installation of podman or docker.

- Valid content for the devfile to add. See: Configuring a workspace using a devfile.

Procedure

- Clone the devfile registry repository and check out the version to deploy:

      $ git clone git@github.com:redhat-developer/codeready-workspaces.git
      $ cd codeready-workspaces
      $ git checkout crw-2.8-rhel-8

- In the ./dependencies/che-devfile-registry/devfiles/ directory, create a subdirectory <devfile-name>/ and add the devfile.yaml and meta.yaml files.

  Example 3.1. File organization for a devfile

      ./dependencies/che-devfile-registry/devfiles/
      └── <devfile-name>
          ├── devfile.yaml
          └── meta.yaml

- Add valid content in the devfile.yaml file. For a detailed description of the devfile format, see Configuring a workspace using a devfile.

- Ensure that the meta.yaml file conforms to the following structure:

  Table 3.1. Parameters for a devfile meta.yaml

  | Attribute | Description |
  |---|---|
  | description | Description as it appears on the user dashboard. |
  | displayName | Name as it appears on the user dashboard. |
  | globalMemoryLimit | The sum of the expected memory consumed by all the components launched by the devfile. This number will be visible on the user dashboard. It is informative and is not taken into account by the CodeReady Workspaces server. |
  | icon | Link to an .svg file that is displayed on the user dashboard. |
  | tags | List of tags. Tags typically include the tools included in the stack. |

  Example 3.2. Example devfile meta.yaml

      displayName: Rust
      description: Rust Stack with Rust 1.39
      tags: ["Rust"]
      icon: https://www.eclipse.org/che/images/logo-eclipseche.svg
      globalMemoryLimit: 1686Mi

- Build a custom devfile registry image:

      $ cd dependencies/che-devfile-registry
      $ ./build.sh --organization <my-org> \
                 --registry <my-registry> \
                 --tag <my-tag>

  Note: To display full options for the build.sh script, use the --help parameter.
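Before building the image, it can help to check that a devfile meta.yaml carries the attributes listed in Table 3.1. The following Python sketch works on an already-parsed mapping (loading the YAML file is left out), and it treats all five attributes as required, which is an assumption of this sketch rather than a documented rule. The function name is hypothetical.

```python
# Attributes from Table 3.1; treating all of them as required is an assumption.
EXPECTED_KEYS = ("displayName", "description", "tags", "icon", "globalMemoryLimit")


def check_devfile_meta(meta: dict) -> list:
    """Return a list of problems found in a parsed devfile meta.yaml mapping."""
    problems = [f"missing attribute: {key}" for key in EXPECTED_KEYS if key not in meta]
    if "tags" in meta and not isinstance(meta["tags"], list):
        problems.append("tags must be a list")
    if "icon" in meta and not str(meta["icon"]).endswith(".svg"):
        problems.append("icon should link to an .svg file")
    return problems


if __name__ == "__main__":
    rust_meta = {
        "displayName": "Rust",
        "description": "Rust Stack with Rust 1.39",
        "tags": ["Rust"],
        "icon": "https://www.eclipse.org/che/images/logo-eclipseche.svg",
        "globalMemoryLimit": "1686Mi",
    }
    print(check_devfile_meta(rust_meta))  # an empty list means no problems found
```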


3.2.2. Building a custom plug-ins registry image

This section describes how to build a custom plug-ins registry image. The procedure explains how to add a plug-in. The image contains plug-ins or extensions metadata.

Prerequisites

- Node.js 12.x

- A running version of yarn. See: Installing Yarn.

- ./node_modules/.bin is in the PATH environment variable.

- A running installation of podman or docker.

- Valid content for the meta.yaml file describing the plug-in to add. See: Publishing metadata for a VS Code extension.

Procedure

- Clone the plug-ins registry repository and check out the version to deploy:

      $ git clone git@github.com:redhat-developer/codeready-workspaces.git
      $ cd codeready-workspaces
      $ git checkout crw-2.8-rhel-8

- In the ./dependencies/che-plugin-registry/v3/plugins/ directory, create new directories <publisher>/<plugin-name>/<plugin-version>/ and a meta.yaml file in the last directory.

  Example 3.3. File organization for a plug-in

      ./dependencies/che-plugin-registry/v3/plugins/
      ├── <publisher>
      │   └── <plugin-name>
      │       ├── <plugin-version>
      │       │   └── meta.yaml
      │       └── latest.txt

- Add valid content to the meta.yaml file. See: Publishing metadata for a VS Code extension.

- Create a file named latest.txt whose content is the name of the latest <plugin-version> directory.

  Example 3.4. Example plug-in files tree

      $ tree che-plugin-registry/v3/plugins/redhat/java/
      che-plugin-registry/v3/plugins/redhat/java/
      ├── 0.38.0
      │   └── meta.yaml
      ├── 0.43.0
      │   └── meta.yaml
      ├── 0.45.0
      │   └── meta.yaml
      ├── 0.46.0
      │   └── meta.yaml
      ├── 0.50.0
      │   └── meta.yaml
      └── latest.txt
      $ cat che-plugin-registry/v3/plugins/redhat/java/latest.txt
      0.50.0

- Build a custom plug-ins registry image:

      $ cd dependencies/che-plugin-registry
      $ ./build.sh --organization <my-org> \
                 --registry <my-registry> \
                 --tag <my-tag>

  Note: To display full options for the build.sh script, use the --help parameter. To include the plug-in binaries in the registry image, add the --offline parameter.
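The content of latest.txt is the name of the newest version directory. When a plug-in has many versions, that name can be derived rather than picked by eye. The following Python sketch assumes that version directories use purely numeric dotted names such as 0.50.0; the function name is hypothetical.

```python
def latest_version(versions):
    """Return the highest version from dotted numeric names such as '0.38.0'."""
    # Compare numerically, part by part, so that 0.10.0 sorts after 0.9.0.
    return max(versions, key=lambda name: tuple(int(part) for part in name.split(".")))


if __name__ == "__main__":
    directories = ["0.38.0", "0.43.0", "0.45.0", "0.46.0", "0.50.0"]
    print(latest_version(directories))  # 0.50.0
```

A plain string sort would rank 0.9.0 above 0.10.0, which is why the sketch compares integer tuples.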


3.3. Running custom registries

Prerequisites

The my-plug-in-registry and my-devfile-registry images used in this section are built using the docker command. This section assumes that these images are available on the OpenShift cluster where CodeReady Workspaces is deployed.

These images can then be pushed to:

- A public container registry such as quay.io or Docker Hub.

- A private registry.

3.3.1. Deploying registries in OpenShift

Procedure

- An OpenShift template to deploy the plug-in registry is available in the deploy/openshift/ directory of the GitHub repository.

  To deploy the plug-in registry using the OpenShift template, run the following command:

      NAMESPACE=<namespace-name>  # (1)
      IMAGE_NAME="my-plug-in-registry"
      IMAGE_TAG="latest"
      oc new-app -f openshift/che-plugin-registry.yml \
       -n "${NAMESPACE}" \
       -p IMAGE="${IMAGE_NAME}" \
       -p IMAGE_TAG="${IMAGE_TAG}" \
       -p PULL_POLICY="Always"

  (1) If installed using crwctl, the default CodeReady Workspaces project is openshift-workspaces. The OperatorHub installation method deploys CodeReady Workspaces to the user's current project.

- The devfile registry has an OpenShift template in the deploy/openshift/ directory of the GitHub repository. To deploy it, run the command:

      NAMESPACE=<namespace-name>  # (1)
      IMAGE_NAME="my-devfile-registry"
      IMAGE_TAG="latest"
      oc new-app -f openshift/che-devfile-registry.yml \
       -n "${NAMESPACE}" \
       -p IMAGE="${IMAGE_NAME}" \
       -p IMAGE_TAG="${IMAGE_TAG}" \
       -p PULL_POLICY="Always"

  (1) If installed using crwctl, the default CodeReady Workspaces project is openshift-workspaces. The OperatorHub installation method deploys CodeReady Workspaces to the user's current project.

Verification steps

- The <plug-in> plug-in is available in the plug-in registry.

  Example 3.5. Find <plug-in> by requesting the plug-in registry API.

      $ URL=$(oc get route -l app=che,component=plugin-registry \
             -o 'custom-columns=URL:.spec.host' --no-headers)
      $ INDEX_JSON=$(curl -sSL http://${URL}/v3/plugins/index.json)
      $ echo ${INDEX_JSON} | jq '.[] | select(.name == "<plug-in>")'

- The <devfile> devfile is available in the devfile registry.

  Example 3.6. Find <devfile> by requesting the devfile registry API.

      $ URL=$(oc get route -l app=che,component=devfile-registry \
             -o 'custom-columns=URL:.spec.host' --no-headers)
      $ INDEX_JSON=$(curl -sSL http://${URL}/devfiles/index.json)
      $ echo ${INDEX_JSON} | jq '.[] | select(.displayName == "<devfile>")'

- CodeReady Workspaces server points to the URL of the plug-in registry.

  Example 3.7. Compare the value of the CHE_WORKSPACE_PLUGIN__REGISTRY__URL parameter in the che ConfigMap with the URL of the plug-in registry route.

  Get the value of the CHE_WORKSPACE_PLUGIN__REGISTRY__URL parameter in the che ConfigMap.

      $ oc get cm/che \
        -o "custom-columns=URL:.data['CHE_WORKSPACE_PLUGIN__REGISTRY__URL']" \
        --no-headers

  Get the URL of the plug-in registry route.

      $ oc get route -l app=che,component=plugin-registry \
        -o 'custom-columns=URL:.spec.host' --no-headers

- CodeReady Workspaces server points to the URL of the devfile registry.

  Example 3.8. Compare the value of the CHE_WORKSPACE_DEVFILE__REGISTRY__URL parameter in the che ConfigMap with the URL of the devfile registry route.

  Get the value of the CHE_WORKSPACE_DEVFILE__REGISTRY__URL parameter in the che ConfigMap.

      $ oc get cm/che \
        -o "custom-columns=URL:.data['CHE_WORKSPACE_DEVFILE__REGISTRY__URL']" \
        --no-headers

  Get the URL of the devfile registry route.

      $ oc get route -l app=che,component=devfile-registry \
        -o 'custom-columns=URL:.spec.host' --no-headers

- If the values do not match, update the ConfigMap and restart the CodeReady Workspaces server.

      $ oc edit cm/codeready
      (...)
      $ oc scale --replicas=0 deployment/codeready
      $ oc scale --replicas=1 deployment/codeready

- The plug-ins are available in:

  - The completion for chePlugin components in the Devfile tab of the workspace details

  - The Che-Theia Plugins view of a workspace

- The devfiles are available in the Get Started and Create Custom Workspace tabs of the user dashboard.
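The comparison in the verification steps sets a full URL (the ConfigMap value) against a bare host name (the route). The following Python sketch shows one way to compare the two once both values have been retrieved; the function name and the host names in the usage example are hypothetical.

```python
from urllib.parse import urlparse


def registry_url_matches_route(configured_url: str, route_host: str) -> bool:
    """Return True when the configured registry URL points at the route host."""
    # urlparse lowercases the host name; a value without a scheme yields None.
    configured_host = urlparse(configured_url.strip()).hostname
    return configured_host is not None and configured_host == route_host.strip().lower()


if __name__ == "__main__":
    # Hypothetical values standing in for the output of the two oc commands.
    print(registry_url_matches_route(
        "https://plugin-registry.apps.example.com/v3",
        "plugin-registry.apps.example.com",
    ))
```

A mismatch, or a configured value that is not a full URL, returns False and indicates that the ConfigMap needs to be updated.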

3.3.2. Adding a custom plug-in registry in an existing CodeReady Workspaces workspace

The following section describes two methods of adding a custom plug-in registry in an existing CodeReady Workspaces workspace:

- Adding a custom plug-in registry using Command Palette: adds a new custom plug-in registry quickly, using text inputs in the Command Palette. This method does not allow a user to edit existing information, such as the plug-in registry URL or name.

- Adding a custom plug-in registry using the settings.json file: adds a new custom plug-in registry and allows editing of the existing entries.

3.3.2.1. Adding a custom plug-in registry using Command Palette

Prerequisites

- An instance of CodeReady Workspaces

Procedure

- In the CodeReady Workspaces IDE, press F1 to open the Command Palette, or navigate to View → Find Command in the top menu.

  The Command Palette can also be activated by pressing Ctrl+Shift+p (or Cmd+Shift+p on macOS).

- Enter the Add Registry command into the search box and press Enter.

- Enter the registry name and registry URL in the next two command prompts.

  After adding a new plug-in registry, the list of plug-ins in the Plug-ins view is refreshed, and if the new plug-in registry is not valid, a user is notified by a warning message.

3.3.2.2. Adding a custom plug-in registry using the settings.json file

The following section describes the use of the main CodeReady Workspaces Settings menu to edit and add a new plug-in registry using the settings.json file.

Prerequisites

- An instance of CodeReady Workspaces

Procedure

- From the main CodeReady Workspaces screen, open Preferences by pressing Ctrl+, or by using the gear wheel icon on the left bar.

- Select Che Plug-ins and click the Edit in settings.json link.

  The settings.json file is displayed.

- Add a new plug-in registry using the chePlugins.repositories attribute as shown below:

      {
        "application.confirmExit": "never",
        "chePlugins.repositories": {"test": "https://test.com"}
      }

- Save the changes to add a custom plug-in registry in an existing CodeReady Workspaces workspace.

  - A newly added plug-in validation tool checks the correctness of URL values set in the chePlugins.repositories field of the settings.json file.

  - After adding a new plug-in registry, the list of plug-ins in the Plug-ins view is refreshed, and if the new plug-in registry is not valid, a user is notified by a warning message. This check is also functional for plug-ins added using the Command Palette command Add plugin registry.
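The kind of check applied to the chePlugins.repositories values can be approximated outside the IDE. The following Python sketch is not the validation tool itself; it is a hypothetical helper that only verifies that each value is an http or https URL with a host.

```python
import json
from urllib.parse import urlparse


def invalid_repositories(settings_text: str) -> dict:
    """Return the chePlugins.repositories entries whose value is not an HTTP(S) URL."""
    settings = json.loads(settings_text)
    repositories = settings.get("chePlugins.repositories", {})
    invalid = {}
    for name, url in repositories.items():
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.netloc:
            invalid[name] = url
    return invalid


if __name__ == "__main__":
    example = """
    {
      "application.confirmExit": "never",
      "chePlugins.repositories": {"test": "https://test.com"}
    }
    """
    print(invalid_repositories(example))  # an empty dict means every URL looks valid
```

The file must be valid JSON with straight double quotes; typographic quotes pasted from a document cause json.loads to raise an error.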

Chapter 4. Retrieving CodeReady Workspaces logs

For information about obtaining various types of logs in CodeReady Workspaces, see the following sections:

4.1. Configuring server logging

It is possible to fine-tune the log levels of individual loggers available in the CodeReady Workspaces server.

The log level of the whole CodeReady Workspaces server is configured globally using the cheLogLevel configuration property of the Operator. To set the global log level in installations not managed by the Operator, specify the CHE_LOG_LEVEL environment variable in the che ConfigMap.

It is possible to configure the log levels of the individual loggers in the CodeReady Workspaces server using the CHE_LOGGER_CONFIG environment variable.

4.1.1. Configuring log levels

The format of the value of the CHE_LOGGER_CONFIG property is a list of comma-separated key-value pairs, where keys are the names of the loggers as seen in the CodeReady Workspaces server log output and values are the required log levels.

In Operator-based deployments, the CHE_LOGGER_CONFIG variable is specified under the customCheProperties of the custom resource.
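When several loggers need different levels, the comma-separated value can be assembled programmatically. The sketch below is illustrative only: the helper name `build_logger_config` is hypothetical, and the set of accepted level names is an assumption based on common logging frameworks rather than something this guide specifies.

```python
def build_logger_config(levels):
    """Join {logger_name: level} pairs into a CHE_LOGGER_CONFIG value."""
    # Assumption: these are the level names the server accepts.
    allowed = {"TRACE", "DEBUG", "INFO", "WARN", "ERROR"}
    pairs = []
    for logger, level in levels.items():
        level = level.upper()
        if level not in allowed:
            raise ValueError(f"unsupported log level: {level}")
        pairs.append(f"{logger}={level}")
    return ",".join(pairs)


value = build_logger_config({
    "org.eclipse.che.api.workspace.server.WorkspaceManager": "DEBUG",
    "che.infra.request-logging": "TRACE",
})
print(value)
```

The resulting string is what goes into the CHE_LOGGER_CONFIG entry, quoted as a single YAML value.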

For example, the following snippet would make the WorkspaceManager produce the DEBUG log messages.

...
server:
  customCheProperties:
    CHE_LOGGER_CONFIG: "org.eclipse.che.api.workspace.server.WorkspaceManager=DEBUG"

4.1.2. Logger naming

The names of the loggers follow the class names of the internal server classes that use those loggers.

4.1.3. Logging HTTP traffic

It is possible to log the HTTP traffic between the CodeReady Workspaces server and the API server of the Kubernetes or OpenShift cluster. To do that, one has to set the che.infra.request-logging logger to the TRACE level.

...
server:
  customCheProperties:
    CHE_LOGGER_CONFIG: "che.infra.request-logging=TRACE"

4.2. Accessing OpenShift events on OpenShift

For high-level monitoring of OpenShift projects, view the OpenShift events that the project performs.

This section describes how to access these events in the OpenShift web console.

Prerequisites

- A running OpenShift web console.

Procedure

- In the left panel of the OpenShift web console, click Home → Events.

- To view the list of all events for a particular project, select the project from the list.

- The details of the events for the current project are displayed.

Additional resources

- For a list of OpenShift events, see Comprehensive List of Events in OpenShift documentation.

4.3. Viewing the state of the CodeReady Workspaces cluster deployment using OpenShift 4 CLI tools

This section describes how to view the state of the CodeReady Workspaces cluster deployment using OpenShift 4 CLI tools.

Prerequisites

- An instance of Red Hat CodeReady Workspaces running on OpenShift.

- An installation of the OpenShift command-line tool, oc.

Procedure

- Run the following command to select the crw project:

$ oc project <project_name>

- Run the following command to get the name and status of the Pods running in the selected project:

$ oc get pods

- Check that the status of all the Pods is Running.

Example 4.1. Pods with status Running

NAME                                  READY   STATUS    RESTARTS   AGE
codeready-8495f4946b-jrzdc            0/1     Running   0          86s
codeready-operator-578765d954-99szc   1/1     Running   0          42m
keycloak-74fbfb9654-g9vp5             1/1     Running   0          4m32s
postgres-5d579c6847-w6wx5             1/1     Running   0          5m14s
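The status check can also be scripted against the text printed by `oc get pods`. The following sketch assumes the default column layout shown in Example 4.1; the function name `pods_not_ready` is hypothetical. It goes slightly beyond the step above by also comparing the READY column, which is an extra check and not a requirement of this procedure.

```python
def pods_not_ready(oc_get_pods_output):
    """Return names of Pods that are not Running or not fully ready."""
    problems = []
    lines = oc_get_pods_output.strip().splitlines()
    for line in lines[1:]:  # skip the header row
        name, ready, status = line.split()[:3]
        ready_count, total = ready.split("/")
        if status != "Running" or ready_count != total:
            problems.append(name)
    return problems


# Sample text copied from Example 4.1.
sample = """\
NAME                                  READY   STATUS    RESTARTS   AGE
codeready-8495f4946b-jrzdc            0/1     Running   0          86s
codeready-operator-578765d954-99szc   1/1     Running   0          42m
keycloak-74fbfb9654-g9vp5             1/1     Running   0          4m32s
postgres-5d579c6847-w6wx5             1/1     Running   0          5m14s
"""

print(pods_not_ready(sample))
```

In the sample, the codeready Pod is Running but reports 0/1 ready containers, which usually means it is still starting.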

To see the state of the CodeReady Workspaces cluster deployment, run:

$ oc logs --tail=10 -f $(oc get pods -o name | grep operator)

Example 4.2. Logs of the Operator:

time="2019-07-12T09:48:29Z" level=info msg="Exec successfully completed"
time="2019-07-12T09:48:29Z" level=info msg="Updating eclipse-che CR with status: provisioned with OpenShift identity provider: true"
time="2019-07-12T09:48:29Z" level=info msg="Custom resource eclipse-che updated"
time="2019-07-12T09:48:29Z" level=info msg="Creating a new object: ConfigMap, name: che"
time="2019-07-12T09:48:29Z" level=info msg="Creating a new object: ConfigMap, name: custom"
time="2019-07-12T09:48:29Z" level=info msg="Creating a new object: Deployment, name: che"
time="2019-07-12T09:48:30Z" level=info msg="Updating eclipse-che CR with status: CodeReady Workspaces API: Unavailable"
time="2019-07-12T09:48:30Z" level=info msg="Custom resource eclipse-che updated"
time="2019-07-12T09:48:30Z" level=info msg="Waiting for deployment che. Default timeout: 420 seconds"
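Operator log lines such as those in Example 4.2 use a `key=value` layout, so they can be split into fields for filtering by level or message. This is a minimal sketch that assumes every line follows the layout shown in the example; the names `FIELD` and `parse_line` are hypothetical.

```python
import re

# Matches key=value pairs where the value is either double-quoted or a bare word.
FIELD = re.compile(r'(\w+)=("(?:[^"\\]|\\.)*"|\S+)')


def parse_line(line):
    """Split one Operator log line into a dict of its fields."""
    fields = {}
    for key, raw in FIELD.findall(line):
        # Strip the surrounding quotes from quoted values.
        fields[key] = raw[1:-1] if raw.startswith('"') else raw
    return fields


line = ('time="2019-07-12T09:48:30Z" level=info '
        'msg="Waiting for deployment che. Default timeout: 420 seconds"')
entry = parse_line(line)
print(entry["level"], "-", entry["msg"])
```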

4.4. Viewing CodeReady Workspaces server logs

This section describes how to view the CodeReady Workspaces server logs using the command line.

4.4.1. Viewing the CodeReady Workspaces server logs using the OpenShift CLI

This section describes how to view the CodeReady Workspaces server logs using the OpenShift CLI (command line interface).

Procedure

- In the terminal, run the following command to get the Pods:

$ oc get pods

Example

$ oc get pods
NAME                 READY   STATUS    RESTARTS   AGE
codeready-11-j4w2b   1/1     Running   0          3m

- To get the logs for a Pod, run the following command:

$ oc logs <name-of-pod>

Example

$ oc logs codeready-11-j4w2b

4.5. Viewing external service logs

This section describes how to view the logs from external services related to the CodeReady Workspaces server.

4.5.1. Viewing RH-SSO logs

The RH-SSO OpenID provider consists of two parts: Server and IDE. It writes its diagnostics or error information to several logs.

4.5.1.1. Viewing the RH-SSO server logs

This section describes how to view the RH-SSO OpenID provider server logs.

Procedure

- In the OpenShift Web Console, click Deployments.

- In the Filter by label search field, type keycloak to see the RH-SSO logs.

- In the Deployment Configs section, click the keycloak link to open it.

- In the History tab, click the View log link for the active RH-SSO deployment.

- The RH-SSO logs are displayed.

Additional resources

- See Section 4.4, “Viewing CodeReady Workspaces server logs” for diagnostics and error messages related to the RH-SSO IDE Server.

4.5.1.2. Viewing the RH-SSO client logs on Firefox

This section describes how to view the RH-SSO IDE client diagnostics or error information in the Firefox WebConsole.

Procedure

- Click Menu > WebDeveloper > WebConsole.

4.5.1.3. Viewing the RH-SSO client logs on Google Chrome

This section describes how to view the RH-SSO IDE client diagnostics or error information in the Google Chrome Console tab.

Procedure

- Click Menu > More Tools > Developer Tools.

- Click the Console tab.

4.5.2. Viewing the CodeReady Workspaces database logs

This section describes how to view the database logs in CodeReady Workspaces, such as PostgreSQL server logs.

Procedure

- In the OpenShift Web Console, click Deployments.

- In the Find by label search field, type:

- app=che and press Enter

- component=postgres and press Enter

The OpenShift Web Console searches based on those two keys and displays PostgreSQL logs.

- Click the postgres deployment to open it.

- Click the View log link for the active PostgreSQL deployment.

The OpenShift Web Console displays the database logs.

Additional resources

- Some diagnostics or error messages related to the PostgreSQL server can be found in the active CodeReady Workspaces deployment log. For details about accessing the active CodeReady Workspaces deployment logs, see Section 4.4, “Viewing CodeReady Workspaces server logs”.

4.6. Viewing the plug-in broker logs

This section describes how to view the plug-in broker logs.

The che-plugin-broker Pod itself is deleted when its work is complete. Therefore, its event logs are only available while the workspace is starting.

Procedure

To see logged events from temporary Pods:

- Start a CodeReady Workspaces workspace.

- From the main OpenShift Container Platform screen, go to Workloads → Pods.

- Use the OpenShift terminal console located in the Pod’s Terminal tab.

Verification step

- The OpenShift terminal console displays the plug-in broker logs while the workspace is starting.

4.7. Collecting logs using crwctl

It is possible to get all Red Hat CodeReady Workspaces logs from an OpenShift cluster using the crwctl tool.

- crwctl server:deploy automatically starts collecting Red Hat CodeReady Workspaces server logs during installation of Red Hat CodeReady Workspaces

- crwctl server:logs collects existing Red Hat CodeReady Workspaces server logs

- crwctl workspace:logs collects workspace logs

Chapter 5. Monitoring CodeReady Workspaces

This chapter describes how to configure CodeReady Workspaces to expose metrics and how to build an example monitoring stack with external tools to process data exposed as metrics by CodeReady Workspaces.

5.1. Enabling and exposing CodeReady Workspaces metrics

This section describes how to enable and expose CodeReady Workspaces metrics.

Procedure

-

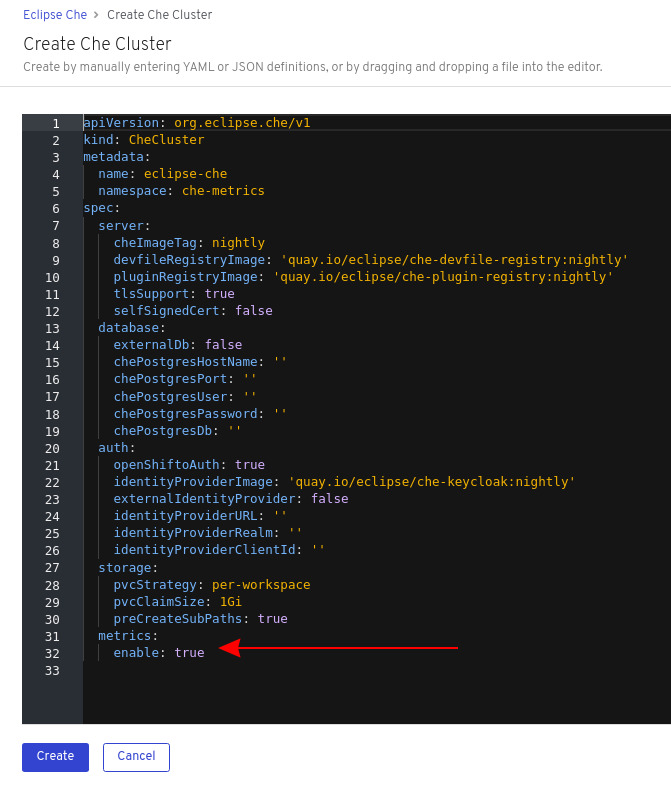

Set the

CHE_METRICS_ENABLED=trueenvironment variable, which will expose the8087port as a service on the che-master host.

When Red Hat CodeReady Workspaces is installed from the OperatorHub, the environment variable is set automatically if the default CheCluster CR is used:

spec:
  metrics:
    enable: true

5.2. Collecting CodeReady Workspaces metrics with Prometheus

This section describes how to use the Prometheus monitoring system to collect, store and query metrics about CodeReady Workspaces.

Prerequisites

- CodeReady Workspaces is exposing metrics on port 8087. See Enabling and exposing che metrics.

- Prometheus 2.9.1 or higher is running. The Prometheus console is running on port 9090 with a corresponding service and route. See First steps with Prometheus.

Procedure

- Configure Prometheus to scrape metrics from the 8087 port:

Example 5.1. Prometheus configuration example

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
data:
  prometheus.yml: |-
    global:
      scrape_interval:     5s             # 1
      evaluation_interval: 5s             # 2
    scrape_configs:                       # 3
      - job_name: 'che'
        static_configs:
          - targets: ['[che-host]:8087']  # 4

- 1: Rate at which a target is scraped.

- 2: Rate at which recording and alerting rules are re-checked (not used in the system at the moment).

- 3: Resources Prometheus monitors. In the default configuration, there is a single job called che, which scrapes the time series data exposed by the CodeReady Workspaces server.

- 4: Scrape metrics from the 8087 port.

Verification steps

Use the Prometheus console to query and view metrics.

Metrics are available at: http://<che-server-url>:9090/metrics.

For more information, see Using the expression browser in the Prometheus documentation.
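Metrics endpoints scraped by Prometheus return plain text, one sample per line, so a quick sanity check does not require the Prometheus console. The sketch below parses such text into a dictionary. It is a simplification: it ignores optional timestamps, the function name `parse_metrics` is hypothetical, and the metric name `example_workspaces_total` is invented for illustration and is not a metric documented in this guide.

```python
def parse_metrics(text):
    """Map each 'name{labels}' series to its float value, skipping comment lines."""
    samples = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # blank lines and HELP/TYPE comments carry no samples
        series, value = line.rsplit(" ", 1)
        samples[series] = float(value)
    return samples


# Hypothetical payload; real metric names depend on the server version.
payload = """\
# HELP example_workspaces_total Hypothetical counter, for illustration only.
# TYPE example_workspaces_total counter
example_workspaces_total{status="RUNNING"} 3
example_workspaces_total{status="STOPPED"} 7
"""

for series, value in parse_metrics(payload).items():
    print(series, value)
```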

Additional resources

Chapter 6. Tracing CodeReady Workspaces

Tracing helps gather timing data to troubleshoot latency problems in microservice architectures and helps to understand a complete transaction or workflow as it propagates through a distributed system. Every transaction may reflect performance anomalies in an early phase when new services are being introduced by independent teams.

Tracing the CodeReady Workspaces application may help analyze the execution of various operations, such as workspace creation and workspace startup. It breaks down the duration of sub-operations, which helps find bottlenecks and improve the overall state of the platform.

Tracers live in applications. They record timing and metadata about operations that take place. They often instrument libraries, so that their use is indiscernible to users. For example, an instrumented web server records when it received a request and when it sent a response. The trace data collected is called a span. A span has a context that contains information such as trace and span identifiers and other kinds of data that can be propagated down the line.

6.1. Tracing API

CodeReady Workspaces uses the OpenTracing API, a vendor-neutral framework for instrumentation. This means that if a developer wants to try a different tracing back end, then rather than repeating the whole instrumentation process for the new distributed tracing system, the developer can simply change the configuration of the tracer back end.

6.2. Tracing back end

By default, CodeReady Workspaces uses Jaeger as the tracing back end. Jaeger was inspired by Dapper and OpenZipkin, and it is a distributed tracing system released as open source by Uber Technologies. Jaeger extends a more complex architecture for a larger scale of requests and performance.

6.3. Installing the Jaeger tracing tool

The following sections describe the installation methods for the Jaeger tracing tool. Jaeger can then be used for gathering metrics in CodeReady Workspaces.

Installation methods available:

For tracing a CodeReady Workspaces instance using Jaeger, version 1.12.0 or above is required. For additional information about Jaeger, see the Jaeger website.

6.3.1. Installing Jaeger using OperatorHub on OpenShift 4

This section provides information about using the Jaeger tracing tool for testing and evaluation purposes in production.

To install the Jaeger tracing tool from the OperatorHub interface in OpenShift Container Platform, follow the instructions below.

Prerequisites

- The user is logged in to the OpenShift Container Platform Web Console.

- A CodeReady Workspaces instance is available in a project.

Procedure

- Open the OpenShift Container Platform console.

- From the left menu of the main OpenShift Container Platform screen, navigate to Operators → OperatorHub.

- In the Search by keyword search bar, type Jaeger Operator.

- Click the Jaeger Operator tile.

- Click the Install button in the Jaeger Operator pop-up window.

- Select the installation method: A specific project on the cluster where the CodeReady Workspaces is deployed and leave the rest in its default values.

- Click the Subscribe button.

- From the left menu of the main OpenShift Container Platform screen, navigate to the Operators → Installed Operators section.

- Red Hat CodeReady Workspaces is displayed as an Installed Operator, as indicated by the InstallSucceeded status.

- Click the Jaeger Operator name in the list of installed Operators.

- Navigate to the Overview tab.

- In the Conditions sections at the bottom of the page, wait for this message: install strategy completed with no errors.

- The Jaeger Operator and the additional Elasticsearch Operator are installed.

- Navigate to the Operators → Installed Operators section.

- Click Jaeger Operator in the list of installed Operators.

- The Jaeger Cluster page is displayed.

- In the lower left corner of the window, click Create Instance

- Click Save.

- OpenShift creates the Jaeger cluster jaeger-all-in-one-inmemory.

- Follow the steps in Enabling metrics collection to finish the procedure.

6.3.2. Installing Jaeger using CLI on OpenShift 4

This section provides information about using the Jaeger tracing tool for testing and evaluation purposes.

To install the Jaeger tracing tool from a CodeReady Workspaces project in OpenShift Container Platform, follow the instructions in this section.

Prerequisites

- The user is logged in to the OpenShift Container Platform web console.

- An instance of CodeReady Workspaces in an OpenShift Container Platform cluster.

Procedure

- In the CodeReady Workspaces installation project of the OpenShift Container Platform cluster, use the oc client to create a new application for the Jaeger deployment.

$ oc new-app -f ${CHE_LOCAL_GIT_REPO}/deploy/openshift/templates/jaeger-all-in-one-template.yml

--> Deploying template "<project_name>/jaeger-template-all-in-one" for "/home/user/crw-projects/crw/deploy/openshift/templates/jaeger-all-in-one-template.yml" to project <project_name>

     Jaeger (all-in-one)
     ---------
     Jaeger Distributed Tracing Server (all-in-one)

     * With parameters:
        * Jaeger Service Name=jaeger
        * Image version=latest
        * Jaeger Zipkin Service Name=zipkin

--> Creating resources ...
    deployment.apps "jaeger" created
    service "jaeger-query" created
    service "jaeger-collector" created
    service "jaeger-agent" created
    service "zipkin" created
    route.route.openshift.io "jaeger-query" created
--> Success
    Access your application using the route:
        'jaeger-query-<project_name>.apps.ci-ln-whx0352-d5d6b.origin-ci-int-aws.dev.rhcloud.com'
    Run 'oc status' to view your app.

- Using the Workloads → Deployments from the left menu of main OpenShift Container Platform screen, monitor the Jaeger deployment until it finishes successfully.

- Select Networking → Routes from the left menu of the main OpenShift Container Platform screen, and click the URL link to access the Jaeger dashboard.

- Follow the steps in Enabling metrics collection to finish the procedure.

6.4. Enabling metrics collection

Prerequisites

- Installed Jaeger v1.12.0 or above. See instructions at Section 6.3, “Installing the Jaeger tracing tool”

Procedure

For Jaeger tracing to work, enable the following environment variables in your CodeReady Workspaces deployment:

# Activating CodeReady Workspaces tracing modules
CHE_TRACING_ENABLED=true
# Following variables are the basic Jaeger client library configuration.
JAEGER_ENDPOINT="http://jaeger-collector:14268/api/traces"
# Service name
JAEGER_SERVICE_NAME="che-server"
# URL to remote sampler
JAEGER_SAMPLER_MANAGER_HOST_PORT="jaeger:5778"
# Type and param of sampler (constant sampler for all traces)
JAEGER_SAMPLER_TYPE="const"
JAEGER_SAMPLER_PARAM="1"
# Maximum queue size of reporter
JAEGER_REPORTER_MAX_QUEUE_SIZE="10000"

To enable these environment variables:

- In the yaml source code of the CodeReady Workspaces deployment, add the following configuration variables under spec.server.customCheProperties.

customCheProperties:
  CHE_TRACING_ENABLED: 'true'
  JAEGER_SAMPLER_TYPE: const
  DEFAULT_JAEGER_REPORTER_MAX_QUEUE_SIZE: '10000'
  JAEGER_SERVICE_NAME: che-server
  JAEGER_ENDPOINT: 'http://jaeger-collector:14268/api/traces'
  JAEGER_SAMPLER_MANAGER_HOST_PORT: 'jaeger:5778'
  JAEGER_SAMPLER_PARAM: '1'

- Edit the JAEGER_ENDPOINT value to match the name of the Jaeger collector service in your deployment.

From the left menu of the main OpenShift Container Platform screen, obtain the value of JAEGER_ENDPOINT by navigating to Networking → Services. Alternatively, execute the following oc command:

$ oc get services

The requested value is included in the service name that contains the collector string.
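Picking the collector service out of the `oc get services` listing can be scripted. The following sketch assumes the default tabular output with the service name in the first column, and reuses the port `14268` and the `/api/traces` path from the example values above. The function name `jaeger_endpoint` is hypothetical, and the IP addresses and ports in the sample listing are made up.

```python
def jaeger_endpoint(oc_get_services_output, port=14268):
    """Build a JAEGER_ENDPOINT URL from the first service named like a collector."""
    for line in oc_get_services_output.strip().splitlines()[1:]:  # skip header
        name = line.split()[0]
        if "collector" in name:
            return f"http://{name}:{port}/api/traces"
    raise LookupError("no service with 'collector' in its name")


# Illustrative listing; addresses and ports are invented for this sketch.
listing = """\
NAME               TYPE        CLUSTER-IP     EXTERNAL-IP   PORT(S)     AGE
jaeger-agent       ClusterIP   None           <none>        5775/UDP    5m
jaeger-collector   ClusterIP   172.30.0.10    <none>        14268/TCP   5m
jaeger-query       ClusterIP   172.30.0.11    <none>        80/TCP      5m
"""

print(jaeger_endpoint(listing))
```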

Additional resources

- For additional information about custom environment properties and how to define them in CheCluster Custom Resource, see Advanced configuration options for the CodeReady Workspaces server component.

- For custom configuration of Jaeger, see the list of Jaeger client environment variables.

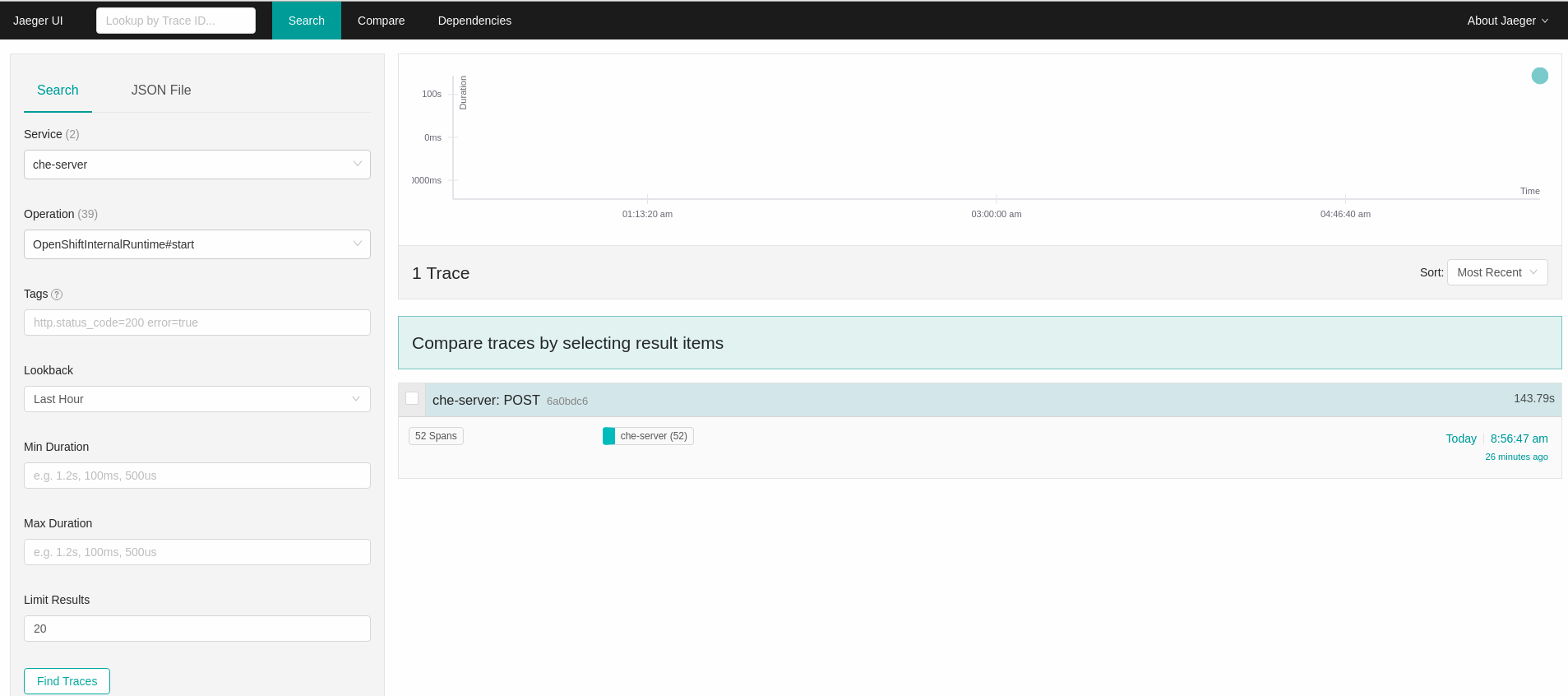

6.5. Viewing CodeReady Workspaces traces in Jaeger UI

This section demonstrates how to use the Jaeger UI to overview traces of CodeReady Workspaces operations.

Procedure

In this example, the CodeReady Workspaces instance has been running for some time and one workspace start has occurred.

To inspect the trace of the workspace start:

In the Search panel on the left, filter spans by the operation name (span name), tags, or time and duration.

Figure 6.1. Using Jaeger UI to trace CodeReady Workspaces

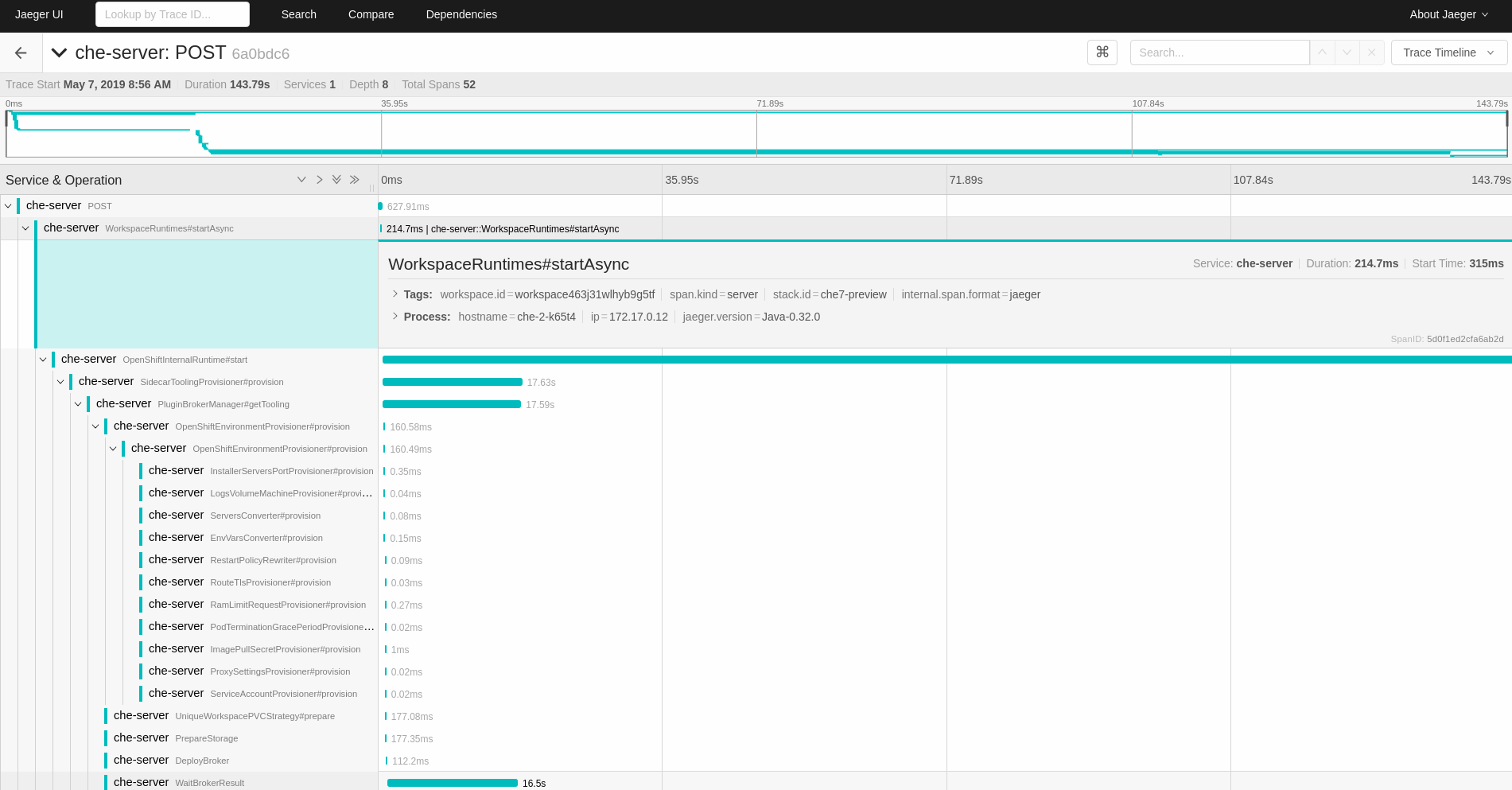

Select the trace to expand it and show the tree of nested spans and additional information about the highlighted span, such as tags or durations.

Figure 6.2. Expanded tracing tree

6.6. CodeReady Workspaces tracing codebase overview and extension guide

The core of the tracing implementation for CodeReady Workspaces is in the che-core-tracing-core and che-core-tracing-web modules.

All HTTP requests to the tracing API have their own trace. This is done by TracingFilter from the OpenTracing library, which is bound for the whole server application. Adding a @Traced annotation to methods causes the TracingInterceptor to add tracing spans for them.

6.6.1. Tagging

Spans may contain standard tags, such as operation name, span origin, error, and other tags that may help users with querying and filtering spans. Workspace-related operations (such as starting or stopping workspaces) have additional tags, including userId, workspaceID, and stackId. Spans created by TracingFilter also have an HTTP status code tag.

Declaring tags in a traced method is done statically by setting fields from the TracingTags class:

TracingTags.WORKSPACE_ID.set(workspace.getId());

TracingTags is a class where all commonly used tags are declared, as respective AnnotationAware tag implementations.

Additional resources

For more information about how to use Jaeger UI, visit Jaeger documentation: Jaeger Getting Started Guide.

Chapter 7. Backup and disaster recovery

This section describes aspects of the CodeReady Workspaces backup and disaster recovery.

7.1. External database setup

The PostgreSQL database is used by the CodeReady Workspaces server for persisting data about the state of CodeReady Workspaces. It contains information about user accounts, workspaces, preferences, and other details.

By default, the CodeReady Workspaces Operator creates and manages the database deployment.

However, the CodeReady Workspaces Operator does not support full life-cycle capabilities, such as backups and recovery.

For a business-critical setup, configure an external database with the following recommended disaster-recovery options:

- High Availability (HA)

- Point In Time Recovery (PITR)

Configure an external PostgreSQL instance on-premises or use a cloud service, such as Amazon Relational Database Service (Amazon RDS). With Amazon RDS, it is possible to deploy production databases in a Multi-Availability Zone configuration for a resilient disaster recovery strategy with daily and on-demand snapshots.

The recommended configuration of the example database is:

| Parameter | Value |
|---|---|
| Instance class | db.t2.small |
| vCPU | 1 |
| RAM | 2 GB |
| Multi-az | true, 2 replicas |
| Engine version | 9.6.11 |
| TLS | enabled |
| Automated backups | enabled (30 days) |

7.1.1. Configuring external PostgreSQL

Procedure

- Use the following SQL script to create a user and a database for the CodeReady Workspaces server to persist workspace metadata and related data:

CREATE USER <database-user> WITH PASSWORD '<database-password>';
CREATE DATABASE <database>;
GRANT ALL PRIVILEGES ON DATABASE <database> TO <database-user>;
ALTER USER <database-user> WITH SUPERUSER;

- Use the following SQL script to create a database for the RH-SSO back end to persist user information:

CREATE USER keycloak WITH PASSWORD '<identity-database-password>';  -- 1
CREATE DATABASE keycloak;
GRANT ALL PRIVILEGES ON DATABASE keycloak TO keycloak;

- 1: RH-SSO database password

7.1.2. Configuring CodeReady Workspaces to work with an external PostgreSQL

Prerequisites

- The oc tool is available.

Procedure

Pre-create a project for CodeReady Workspaces:

$ oc create namespace openshift-workspaces

- Create a secret to store CodeReady Workspaces server database credentials:

$ oc create secret generic <server-database-credentials> \
  --from-literal=user=<database-user> \
  --from-literal=password=<database-password> \
  -n openshift-workspaces

- Create a secret to store RH-SSO database credentials:

$ oc create secret generic <identity-database-credentials> \
  --from-literal=password=<identity-database-password> \
  -n openshift-workspaces

- Deploy Red Hat CodeReady Workspaces by executing the crwctl command and applying a patch. For example:

$ crwctl server:deploy --che-operator-cr-patch-yaml=patch.yaml ...

patch.yaml should contain the following to make the Operator skip deploying a database and pass the connection details of an existing database to the CodeReady Workspaces server:

spec:
  database:
    externalDb: true
    chePostgresHostName: <hostname>
    chePostgresPort: <port>
    chePostgresSecret: <server-database-credentials>
    chePostgresDb: <database>
  auth:
    identityProviderPostgresSecret: <identity-database-credentials>

Additional resources

7.2. Persistent Volumes backups

Persistent Volumes (PVs) store the CodeReady Workspaces workspace data similarly to how workspace data is stored for desktop IDEs on the local hard disk drive.

To prevent data loss, back up PVs periodically. The recommended approach is to use storage-agnostic tools for backing up and restoring OpenShift resources, including PVs.

7.2.1. Recommended backup tool: Velero

Velero is an open-source tool for backing up OpenShift applications and their PVs. Velero allows you to:

- Deploy in the cloud or on premises.

- Back up the cluster and restore in case of data loss.

- Migrate cluster resources to other clusters.

- Replicate a production cluster to development and testing clusters.

Alternatively, you can use backup solutions dependent on the underlying storage system. For example, solutions that are Gluster or Ceph-specific.

Additional resources

Chapter 8. Caching images for faster workspace start

To improve the start time performance of CodeReady Workspaces workspaces, use the Image Puller. The Image Puller is an additional OpenShift deployment. It creates a DaemonSet downloading and running the relevant container images on each node. These images are already available when a CodeReady Workspaces workspace starts.

The Image Puller provides the following parameters for configuration.

Table 8.1. Image Puller parameters

| Parameter | Usage | Default |
|---|---|---|
| CACHING_INTERVAL_HOURS | DaemonSets health checks interval in hours | "1" |
| CACHING_MEMORY_REQUEST | The memory request for each cached image when the puller is running. See Section 8.2, “Defining the memory parameters for the Image Puller”. | 10Mi |
| CACHING_MEMORY_LIMIT | The memory limit for each cached image when the puller is running. See Section 8.2, “Defining the memory parameters for the Image Puller”. | 20Mi |
| CACHING_CPU_REQUEST | The processor request for each cached image when the puller is running | .05 |
| CACHING_CPU_LIMIT | The processor limit for each cached image when the puller is running | .2 |
| DAEMONSET_NAME | Name of DaemonSet to create | kubernetes-image-puller |
| DEPLOYMENT_NAME | Name of the Deployment to create | kubernetes-image-puller |
| | OpenShift project containing DaemonSet to create | |
| IMAGES | Semicolon separated list of images to pull, in the format | |
| NODE_SELECTOR | Node selector to apply to the Pods created by the DaemonSet | {} |

Additional resources

- Section 8.1, “Defining the list of images to pull”

- Section 8.2, “Defining the memory parameters for the Image Puller”.

- Section 8.3, “Installing Image Puller using the CodeReady Workspaces Operator”

- Section 8.4, “Installing Image Puller on OpenShift 4 using OperatorHub”

- Section 8.5, “Installing Image Puller on OpenShift using OpenShift templates”

- Kubernetes Image Puller source code repository

8.1. Defining the list of images to pull

Prerequisites

- The curl tool is available. See curl homepage.

- The jq tool is available. See jq homepage.

- The yq tool is available. See yq homepage.

Procedure

- Get the list of relevant container images.

Example 8.1. Getting the list of all relevant images for CodeReady Workspaces

$ curl -sSLo- https://raw.githubusercontent.com/redhat-developer/codeready-workspaces-operator/crw-2.8-rhel-8/manifests/codeready-workspaces.csv.yaml | \
  yq -r '.spec.relatedImages[]'

- Exclude from the list the container images not containing the sleep command.

Example 8.2. Images incompatible with the Image Puller, missing the sleep command

- FROM scratch images.

- che-machine-exec

- Exclude from the list the container images mounting volumes in Dockerfile.
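The exclusion steps above can be applied to the retrieved list with a simple name filter. The sketch below is a hedged illustration: the function name `filter_images` and the registry paths are hypothetical, and a name-based filter only catches images you already know to be incompatible. It does not inspect an image for the sleep command or for mounted volumes.

```python
def filter_images(images, excluded_fragments):
    """Keep only images whose reference contains none of the excluded fragments."""
    return [
        image for image in images
        if not any(fragment in image for fragment in excluded_fragments)
    ]


# Hypothetical image references, for illustration only.
images = [
    "registry.example.com/demo/stacks-java:1.0",
    "registry.example.com/demo/che-machine-exec:1.0",
    "registry.example.com/demo/theia:1.0",
]

for image in filter_images(images, ["che-machine-exec"]):
    print(image)
```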

Additional resources

8.2. Defining the memory parameters for the Image Puller

Define the memory requests and limits parameters to ensure pulled containers and the platform have enough memory to run.

Prerequisites

Procedure

- To define the minimal value for CACHING_MEMORY_REQUEST or CACHING_MEMORY_LIMIT, consider the necessary amount of memory required to run each of the container images to pull.

- To define the maximal value for CACHING_MEMORY_REQUEST or CACHING_MEMORY_LIMIT, consider the total memory allocated to the DaemonSet Pods in the cluster:

(memory limit) * (number of images) * (number of nodes in the cluster)

Pulling 5 images on 20 nodes, with a container memory limit of 20Mi requires 2000Mi of memory.
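The formula above is easy to evaluate for different cluster sizes before settling on a limit. A minimal sketch, with the hypothetical function name `total_cache_memory_mi` and all quantities expressed in Mi:

```python
def total_cache_memory_mi(memory_limit_mi, image_count, node_count):
    """Total memory (in Mi) allocated to the DaemonSet Pods across the cluster."""
    return memory_limit_mi * image_count * node_count


# The worked example from the text: 5 images, 20 nodes, 20Mi limit per container.
print(total_cache_memory_mi(20, 5, 20))
```

Doubling either the number of images or the number of nodes doubles the total, so the per-container limit is usually the most practical value to tune.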

8.3. Installing Image Puller using the CodeReady Workspaces Operator

This section describes how to use the CodeReady Workspaces Operator to install the Image Puller. This is a Community-supported technology preview feature.

Prerequisites

- Section 8.1, “Defining the list of images to pull”

- Section 8.2, “Defining the memory parameters for the Image Puller”

- Operator Lifecycle Manager and OperatorHub are available on the OpenShift instance. OpenShift provides them starting with version 4.2.

- The CodeReady Workspaces Operator is available. See Installing CodeReady Workspaces on OpenShift 4 using OperatorHub

Procedure

- Edit the CheCluster Custom Resource and set .spec.imagePuller.enable to true

Example 8.3. Enabling Image Puller in the CheCluster Custom Resource

apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: codeready-workspaces
spec:
  # ...
  imagePuller:
    enable: true

Uninstalling Image Puller using CodeReady Workspaces Operator

- Edit the CheCluster Custom Resource and set .spec.imagePuller.enable to false.

- Edit the CheCluster Custom Resource and set the .spec.imagePuller.spec to configure the optional Image Puller parameters for the CodeReady Workspaces Operator.

Example 8.4. Configuring Image Puller in the CheCluster Custom Resource

apiVersion: org.eclipse.che/v1
kind: CheCluster
metadata:
  name: codeready-workspaces
spec:
  # ...
  imagePuller:
    enable: true
    spec:
      configMapName: <kubernetes-image-puller>
      daemonsetName: <kubernetes-image-puller>
      deploymentName: <kubernetes-image-puller>
      images: <{image-puller-images}>

Verification steps

- OpenShift creates a {image-puller-operator-id} Subscription.

- The eclipse-che namespace contains a community supported Kubernetes Image Puller Operator ClusterServiceVersion:

$ oc get clusterserviceversions

- The eclipse-che namespace contains these deployments: kubernetes-image-puller and {image-puller-deployment-id}.

$ oc get deployments

- The community supported Kubernetes Image Puller Operator creates a KubernetesImagePuller Custom Resource:

$ oc get kubernetesimagepullers

8.4. Installing Image Puller on OpenShift 4 using OperatorHub

This procedure describes how to install the community supported Kubernetes Image Puller Operator on OpenShift 4 using the Operator.

Prerequisites

- An administrator account on a running instance of OpenShift 4.

- Section 8.1, “Defining the list of images to pull”

- Section 8.2, “Defining the memory parameters for the Image Puller”.

Procedure

- To create an OpenShift project <kubernetes-image-puller> to host the Image Puller, open the OpenShift web console, navigate to the Home → Projects section and click Create Project.

Specify the project details:

- Name: <kubernetes-image-puller>

- Display Name: <Image Puller>

- Description: <Kubernetes Image Puller>

- Navigate to Operators → OperatorHub.

- Use the Filter by keyword box to search for community supported Kubernetes Image Puller Operator. Click the community supported Kubernetes Image Puller Operator.

- Read the description of the Operator. Click Continue → Install.

- Select A specific project on the cluster for the Installation Mode. In the drop-down find the OpenShift project <kubernetes-image-puller>. Click Subscribe.

- Wait for the community supported Kubernetes Image Puller Operator to install. Click the KubernetesImagePuller → Create instance.

- In a redirected window with a YAML editor, make modifications to the KubernetesImagePuller Custom Resource and click Create.

- Navigate to the Workloads and Pods menu in the <kubernetes-image-puller> OpenShift project. Verify that the Image Puller is available.

8.5. Installing Image Puller on OpenShift using OpenShift templates

This procedure describes how to install the Kubernetes Image Puller on OpenShift using OpenShift templates.

Prerequisites

- A running OpenShift cluster.

- The oc tool is available.

- Section 8.1, “Defining the list of images to pull”.

- Section 8.2, “Defining the memory parameters for the Image Puller”.

Procedure

- Clone the Image Puller repository and change to the directory containing the OpenShift templates:

$ git clone https://github.com/che-incubator/kubernetes-image-puller
$ cd kubernetes-image-puller/deploy/openshift

Configure the app.yaml, configmap.yaml and serviceaccount.yaml OpenShift templates using the following parameters:

Table 8.2. Image Puller OpenShift templates parameters in app.yaml

| Value | Usage | Default |
|---|---|---|
| DEPLOYMENT_NAME | The value of DEPLOYMENT_NAME in the ConfigMap | kubernetes-image-puller |
| IMAGE | Image used for the kubernetes-image-puller deployment | registry.redhat.io/codeready-workspaces/imagepuller-rhel8:2.8 |
| IMAGE_TAG | The image tag to pull | latest |
| SERVICEACCOUNT_NAME | The name of the ServiceAccount created and used by the deployment | {image-puller-serviceaccount-name} |

Table 8.3. Image Puller OpenShift templates parameters in configmap.yaml

| Value | Usage | Default |
|---|---|---|
| CACHING_CPU_LIMIT | The value of CACHING_CPU_LIMIT in the ConfigMap | .2 |
| CACHING_CPU_REQUEST | The value of CACHING_CPU_REQUEST in the ConfigMap | .05 |
| CACHING_INTERVAL_HOURS | The value of CACHING_INTERVAL_HOURS in the ConfigMap | "1" |
| CACHING_MEMORY_LIMIT | The value of CACHING_MEMORY_LIMIT in the ConfigMap | "20Mi" |
| CACHING_MEMORY_REQUEST | The value of CACHING_MEMORY_REQUEST in the ConfigMap | "10Mi" |
| DAEMONSET_NAME | The value of DAEMONSET_NAME in the ConfigMap | kubernetes-image-puller |
| DEPLOYMENT_NAME | The value of DEPLOYMENT_NAME in the ConfigMap | kubernetes-image-puller |
| IMAGES | The value of IMAGES in the ConfigMap | {image-puller-images} |
| NODE_SELECTOR | The value of NODE_SELECTOR in the ConfigMap | "{}" |

Table 8.4. Image Puller OpenShift templates parameters in serviceaccount.yaml

| Value | Usage | Default |
|---|---|---|
| SERVICEACCOUNT_NAME | The name of the ServiceAccount created and used by the deployment | {image-puller-serviceaccount-name} |

Create an OpenShift project to host the Image Puller:

$ oc new-project <kubernetes-image-puller>

Process and apply the templates to install the puller:

$ oc process -f serviceaccount.yaml | oc apply -f -

$ oc process -f configmap.yaml | oc apply -f -

$ oc process -f app.yaml | oc apply -f -
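The template defaults listed in this procedure can be overridden when a template is processed. The following Python sketch only assembles an `oc process` argument list and does not run it. It assumes that template parameters are set with the `-p NAME=VALUE` flag of `oc process`; the helper name `process_args` and the example values are hypothetical.

```python
from typing import Dict, List


def process_args(template: str, params: Dict[str, str]) -> List[str]:
    """Build the argument list for `oc process` with parameter overrides."""
    args = ["oc", "process", "-f", template]
    # Sort the parameter names so the resulting command line is reproducible.
    for name in sorted(params):
        args += ["-p", f"{name}={params[name]}"]
    return args


if __name__ == "__main__":
    # Example values are illustrative only, not recommended settings.
    cmd = process_args(
        "configmap.yaml",
        {"CACHING_INTERVAL_HOURS": "2", "CACHING_MEMORY_LIMIT": "40Mi"},
    )
    # Print the command so it can be reviewed before piping it to `oc apply -f -`.
    print(" ".join(cmd))
```

Printing the command rather than executing it keeps the sketch free of side effects on the cluster.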

Verification steps

Verify the existence of a <kubernetes-image-puller> deployment and a <kubernetes-image-puller> DaemonSet. The DaemonSet needs to have a Pod for each node in the cluster:

$ oc get deployment,daemonset,pod --namespace <kubernetes-image-puller>

Verify the values of the <kubernetes-image-puller> ConfigMap.

$ oc get configmap <kubernetes-image-puller> --output yaml
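The "one Pod for each node" check can also be scripted. The following Python sketch is a minimal illustration that assumes the DaemonSet was exported as JSON (for example with `oc get daemonset <kubernetes-image-puller> --output json`) and relies on the standard Kubernetes DaemonSet status fields `desiredNumberScheduled` and `numberReady`. The function name is hypothetical.

```python
import json
from typing import Any, Dict


def daemonset_is_ready(daemonset: Dict[str, Any]) -> bool:
    """Return True when every scheduled DaemonSet Pod reports ready."""
    status = daemonset.get("status", {})
    desired = status.get("desiredNumberScheduled", 0)
    ready = status.get("numberReady", 0)
    # A DaemonSet with no scheduled Pods is treated as not ready.
    return desired > 0 and ready == desired


if __name__ == "__main__":
    # Hand-written sample standing in for real `oc` output.
    sample = json.loads('{"status": {"desiredNumberScheduled": 3, "numberReady": 3}}')
    print(daemonset_is_ready(sample))
```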

Chapter 9. Managing identities and authorizations

This section describes different aspects of managing identities and authorizations of Red Hat CodeReady Workspaces.

9.1. Authenticating users

This document covers all aspects of user authentication in Red Hat CodeReady Workspaces, both on the CodeReady Workspaces server and in workspaces. This includes securing all REST API endpoints, WebSocket or JSON RPC connections, and some web resources.

All authentication types use the JWT open standard as a container for transferring user identity information. In addition, CodeReady Workspaces server authentication is based on the OpenID Connect protocol implementation, which is provided by default by RH-SSO.

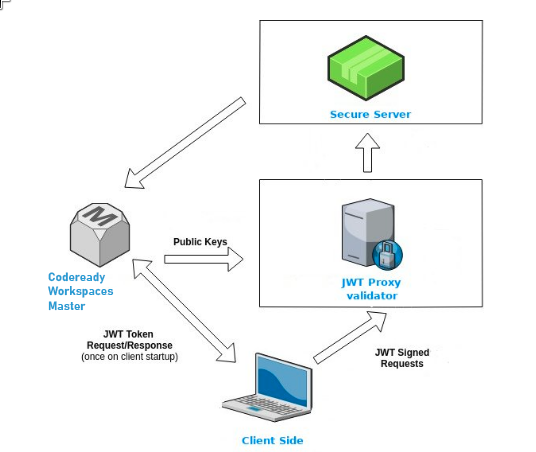

Authentication in workspaces implies the issuance of self-signed per-workspace JWT tokens and their verification on a dedicated service based on JWTProxy.

9.1.1. Authenticating to the CodeReady Workspaces server

9.1.1.1. Authenticating to the CodeReady Workspaces server using other authentication implementations

This procedure describes how to use an OpenID Connect (OIDC) authentication implementation other than RH-SSO.

Procedure

- Update the authentication configuration parameters that are stored in the multiuser.properties file (such as client ID, authentication URL, realm name).

- Write a single filter or a chain of filters to validate tokens, create the user in the CodeReady Workspaces dashboard, and compose the subject object.

- If the new authorization provider supports the OpenID protocol, use the OIDC JS client library available at the settings endpoint because it is decoupled from specific implementations.

- If the selected provider stores additional data about the user (first and last name, job title), it is recommended to write a provider-specific ProfileDao implementation that provides this information.

9.1.1.2. Authenticating to the CodeReady Workspaces server using OAuth

For easy user interaction with third-party services, the CodeReady Workspaces server supports OAuth authentication. OAuth tokens are also used for GitHub-related plug-ins.

OAuth authentication has two main flows:

- delegated: Default. Delegates OAuth authentication to the RH-SSO server.

- embedded: Uses the built-in CodeReady Workspaces server mechanism to communicate with OAuth providers.

To switch between the two implementations, use the che.oauth.service_mode=<embedded|delegated> configuration property.

The main REST endpoint in the OAuth API is /api/oauth, which contains:

- An authentication method, /authenticate, that the OAuth authentication flow can start with.

- A callback method, /callback, to process callbacks from the provider.

- A token GET method, /token, to retrieve the current user’s OAuth token.

- A token DELETE method, /token, to invalidate the current user’s OAuth token.

- A GET method, /, to get the list of configured identity providers.
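The endpoint list above can be summarized as a small lookup table. The following Python sketch is only an illustration: the host name is hypothetical, and the HTTP method for /authenticate and /callback is assumed to be GET, which the text above does not state.

```python
from typing import Dict, Tuple


def oauth_endpoints(che_url: str) -> Dict[str, Tuple[str, str]]:
    """Map each OAuth API operation to its (HTTP method, URL) pair."""
    base = che_url.rstrip("/") + "/api/oauth"
    return {
        # GET is an assumption for the first two operations.
        "authenticate": ("GET", base + "/authenticate"),
        "callback": ("GET", base + "/callback"),
        "get_token": ("GET", base + "/token"),
        "invalidate_token": ("DELETE", base + "/token"),
        "list_providers": ("GET", base + "/"),
    }


if __name__ == "__main__":
    # Hypothetical host name used for illustration only.
    for name, (method, url) in oauth_endpoints("https://codeready.example.com").items():
        print(f"{name}: {method} {url}")
```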

9.1.1.3. Using Swagger or REST clients to execute queries

The user’s RH-SSO token is used to execute queries to the secured API on the user’s behalf through REST clients. A valid token must be attached as the Request header or the ?token=$token query parameter.

Access the CodeReady Workspaces Swagger interface at https://codeready-<openshift_deployment_name>.<domain_name>/swagger. The user must be signed in through RH-SSO, so that the access token is included in the Request header.
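The two ways of attaching a token can be sketched with the Python standard library. This example only builds the request objects and sends nothing. It assumes the header form is `Authorization: Bearer <token>`, which this section does not spell out; the URL and the function names are hypothetical.

```python
from urllib.parse import urlencode
from urllib.request import Request


def request_with_header(url: str, token: str) -> Request:
    """Attach the token as a request header (Bearer scheme is an assumption)."""
    return Request(url, headers={"Authorization": f"Bearer {token}"})


def request_with_query(url: str, token: str) -> Request:
    """Attach the token as the `token` query parameter."""
    separator = "&" if "?" in url else "?"
    return Request(url + separator + urlencode({"token": token}))


if __name__ == "__main__":
    # Hypothetical URL and placeholder token value.
    url = "https://codeready.example.com/api/workspace"
    print(request_with_header(url, "TOKEN").get_header("Authorization"))
    print(request_with_query(url, "TOKEN").full_url)
```

The header form keeps the token out of URLs, which tend to end up in access logs and browser history.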

9.1.2. Authenticating in a CodeReady Workspaces workspace

Workspace containers may contain services that must be protected with authentication. Such protected services are called secure. To secure these services, use a machine authentication mechanism.

JWT tokens avoid the need to pass RH-SSO tokens to workspace containers (which can be insecure). Also, RH-SSO tokens may have a relatively shorter lifetime and require periodic renewals or refreshes, which is difficult to manage and keep in sync with the same user session tokens on clients.

Figure 9.1. Authentication inside a workspace

9.1.2.1. Creating secure servers

To create secure servers in CodeReady Workspaces workspaces, set the secure attribute of the endpoint to true in the dockerimage type component in the devfile.

Devfile snippet for a secure server

components:
  - type: dockerimage
    endpoints:
      - attributes:
          secure: 'true'

9.1.2.2. Workspace JWT token

Workspace tokens are JSON web tokens (JWT) that contain the following information in their claims:

- uid: The ID of the user who owns this token

- uname: The name of the user who owns this token

- wsid: The ID of a workspace which can be queried with this token

Every user is provided with a unique personal token for each workspace. The structure and the signature of a workspace token differ from those of an RH-SSO token. The following is an example token view:

# Header
{
  "alg": "RS512",
  "kind": "machine_token"
}
# Payload
{
  "wsid": "workspacekrh99xjenek3h571",
  "uid": "b07e3a58-ed50-4a6e-be17-fcf49ff8b242",
  "uname": "john",
  "jti": "06c73349-2242-45f8-a94c-722e081bb6fd"
}
# Signature
{
  "value": "RSASHA256(base64UrlEncode(header) + . + base64UrlEncode(payload))"
}

The SHA-256 cipher with the RSA algorithm is used for signing JWT tokens. It is not configurable. Also, there is no public service that distributes the public part of the key pair with which the token is signed.

9.1.2.3. Machine token validation

The validation of machine tokens (JWT tokens) is performed by a dedicated per-workspace service that runs JWTProxy in a separate Pod. When the workspace starts, this service receives the public part of the signing key pair from the CodeReady Workspaces server. A separate verification endpoint is created for each secure server. When traffic comes to that endpoint, JWTProxy tries to extract the token from the cookies or headers and validates it using the public key.

To query the CodeReady Workspaces server, a workspace server can use the machine token provided in the CHE_MACHINE_TOKEN environment variable. This token belongs to the user who starts the workspace. The scope of such requests is restricted to the current workspace only. The list of allowed operations is also strictly limited.
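A process inside a workspace container could pick up the machine token as follows. This Python sketch only builds the request. It assumes that the server API URL is available in a `CHE_API` environment variable and that the token is sent as an `Authorization: Bearer` header; both are assumptions, as are the request path and the function name.

```python
import os
from urllib.request import Request


def machine_token_request(path: str) -> Request:
    """Build a request to the server API authenticated with the machine token."""
    api = os.environ["CHE_API"].rstrip("/")  # assumed variable name
    token = os.environ["CHE_MACHINE_TOKEN"]
    return Request(api + path, headers={"Authorization": f"Bearer {token}"})


if __name__ == "__main__":
    # Fallback values so the sketch also runs outside a workspace container.
    os.environ.setdefault("CHE_API", "http://che-host:8080/api")
    os.environ.setdefault("CHE_MACHINE_TOKEN", "placeholder-token")
    # Hypothetical path; requests are limited to the current workspace.
    request = machine_token_request("/workspace/workspacekrh99xjenek3h571")
    print(request.full_url)
```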

9.2. Authorizing users

User authorization in CodeReady Workspaces is based on the permissions model. Permissions are used to control the allowed actions of users and establish a security model. Every request is verified for the presence of the required permission in the current user subject after it passes authentication. You can control resources managed by CodeReady Workspaces and allow certain actions by assigning permissions to users.

Permissions can be applied to the following entities:

- Workspace

- System

All permissions can be managed using the provided REST API. The APIs are documented using Swagger at https://codeready-<openshift_deployment_name>.<domain_name>/swagger/#!/permissions.

9.2.1. CodeReady Workspaces workspace permissions

The user who creates a workspace is the workspace owner. By default, the workspace owner has the following permissions: read, use, run, configure, setPermissions, and delete. Workspace owners can invite users into the workspace and control workspace permissions for other users.

The following permissions are associated with workspaces:

Table 9.1. CodeReady Workspaces workspace permissions

| Permission | Description |

|---|---|

| read | Allows reading the workspace configuration. |

| use | Allows using a workspace and interacting with it. |

| run | Allows starting and stopping a workspace. |

| configure | Allows defining and changing the workspace configuration. |

| setPermissions | Allows updating the workspace permissions for other users. |

| delete | Allows deleting the workspace. |
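The workspace permission model can be illustrated with a small in-memory sketch. This is not the server implementation. It only mirrors the rules stated in this section: the owner starts with all six permissions, and only a user holding setPermissions can change permissions for others. The class and method names are hypothetical.

```python
from typing import Dict, Iterable, Set

# Permission names taken from Table 9.1.
WORKSPACE_PERMISSIONS = frozenset(
    {"read", "use", "run", "configure", "setPermissions", "delete"}
)


class WorkspaceAcl:
    """Toy access-control list for a single workspace."""

    def __init__(self, owner: str) -> None:
        # The workspace owner receives every permission by default.
        self._grants: Dict[str, Set[str]] = {owner: set(WORKSPACE_PERMISSIONS)}

    def allows(self, user: str, action: str) -> bool:
        return action in self._grants.get(user, set())

    def grant(self, actor: str, user: str, actions: Iterable[str]) -> None:
        # Only users with setPermissions may update permissions for others.
        if not self.allows(actor, "setPermissions"):
            raise PermissionError(f"{actor} cannot set permissions")
        requested = set(actions)
        unknown = requested - WORKSPACE_PERMISSIONS
        if unknown:
            raise ValueError(f"unknown permissions: {sorted(unknown)}")
        self._grants.setdefault(user, set()).update(requested)


if __name__ == "__main__":
    acl = WorkspaceAcl(owner="john")
    acl.grant("john", "jane", ["read", "use"])
    print(acl.allows("jane", "use"), acl.allows("jane", "delete"))
```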

9.2.2. CodeReady Workspaces system permissions

CodeReady Workspaces system permissions control aspects of the whole CodeReady Workspaces installation. The following permissions are applicable to the system:

Table 9.2. CodeReady Workspaces system permissions

| Permission | Description |

|---|---|

| manageSystem | Allows control of the system and workspaces. |

| setPermissions | Allows updating the permissions for users on the system. |

| manageUsers | Allows creating and managing users. |

| monitorSystem | Allows accessing endpoints used for monitoring the state of the server. |

All system permissions are granted to the administrative user who is configured in the CHE_SYSTEM_ADMIN__NAME property (the default is admin). The system permissions are granted when the CodeReady Workspaces server starts. If the user is not present in the CodeReady Workspaces user database at that time, the permissions are granted after that user’s first login.

9.2.3. manageSystem permission

Users with the manageSystem permission have access to the following services:

| Path | HTTP Method | Description |

|---|---|---|

| /resource/free/ | GET | Get free resource limits. |

| /resource/free/{accountId} | GET | Get free resource limits for the given account. |

| /resource/free/{accountId} | POST | Edit free resource limit for the given account. |

| /resource/free/{accountId} | DELETE | Remove free resource limit for the given account. |

| /installer/ | POST | Add installer to the registry. |