Administration Guide

Administration of Red Hat Ceph Storage


Chapter 1. Ceph administration

A Red Hat Ceph Storage cluster is the foundation for all Ceph deployments. After you deploy a Red Hat Ceph Storage cluster, administrative operations keep the cluster healthy and performing optimally.

The Red Hat Ceph Storage Administration Guide helps storage administrators answer questions and perform tasks such as:

- How do I check the health of my Red Hat Ceph Storage cluster?

- How do I start and stop the Red Hat Ceph Storage cluster services?

- How do I add or remove an OSD from a running Red Hat Ceph Storage cluster?

- How do I manage user authentication and access controls to the objects stored in a Red Hat Ceph Storage cluster?

- I want to understand how to use overrides with a Red Hat Ceph Storage cluster.

- I want to monitor the performance of the Red Hat Ceph Storage cluster.

A basic Ceph storage cluster consists of two types of daemons:

- A Ceph Object Storage Device (OSD) stores data as objects within placement groups assigned to the OSD

- A Ceph Monitor maintains a master copy of the cluster map

A production system will have three or more Ceph Monitors for high availability and typically a minimum of 50 OSDs for acceptable load balancing, data re-balancing and data recovery.

Chapter 2. Understanding process management for Ceph

As a storage administrator, you can manipulate the various Ceph daemons by type or instance in a Red Hat Ceph Storage cluster. Manipulating these daemons allows you to start, stop and restart all of the Ceph services as needed.

2.1. Ceph process management

In Red Hat Ceph Storage, all process management is done through the systemd service. Each time you want to start, restart, or stop the Ceph daemons, you must specify the daemon type or the daemon instance.

Additional Resources

- For more information on using systemd, see Managing system services with systemctl.

2.2. Starting, stopping, and restarting all Ceph daemons using systemctl command

You can start, stop, and restart all Ceph daemons as the root user from the host where you want to manage the Ceph daemons.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

On the host where you want to start, stop, or restart the daemons, run the systemctl command to get the SERVICE_ID of the service.

Example

[root@host01 ~]# systemctl --type=service
ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service

Starting all Ceph daemons:

Syntax

systemctl start SERVICE_ID

Example

[root@host01 ~]# systemctl start ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service

Stopping all Ceph daemons:

Syntax

systemctl stop SERVICE_ID

Example

[root@host01 ~]# systemctl stop ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service

Restarting all Ceph daemons:

Syntax

systemctl restart SERVICE_ID

Example

[root@host01 ~]# systemctl restart ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service
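The unit names in the examples above appear to follow the pattern ceph-FSID@DAEMON.service, where FSID is the cluster ID and DAEMON is a name such as mon.host01 or osd.8. As a minimal sketch, assuming that pattern holds on your hosts, a small shell helper can assemble the SERVICE_ID from those two parts. The function name ceph_unit_name is hypothetical and is not part of any Ceph tooling.

```shell
#!/usr/bin/env bash
# Hypothetical helper: build a systemd unit name from a cluster FSID and a
# daemon name, following the pattern seen in the examples
# (ceph-FSID@DAEMON.service).
ceph_unit_name() {
    local fsid="$1" daemon="$2"
    printf 'ceph-%s@%s.service\n' "$fsid" "$daemon"
}

# Usage (run as root on the host that runs the daemon):
#   systemctl restart "$(ceph_unit_name 499829b4-832f-11eb-8d6d-001a4a000635 mon.host01)"
```

Always confirm the generated name against the output of systemctl --type=service before you act on it.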

2.3. Starting, stopping, and restarting all Ceph services

Ceph services are logical groups of Ceph daemons of the same type, configured to run in the same Red Hat Ceph Storage cluster. The orchestration layer in Ceph allows the user to manage these services in a centralized way, making it easy to execute operations that affect all the Ceph daemons that belong to the same logical service. The Ceph daemons running on each host are managed through the systemd service. You can start, stop, and restart all Ceph services from the host where you want to manage the Ceph services.

If you want to start, stop, or restart a specific Ceph daemon on a specific host, you need to use the systemd service. To obtain a list of the systemd services running on a specific host, connect to the host, and run the following command:

Example

[root@host01 ~]# systemctl list-units "ceph*"

The output lists the service names that you can use to manage each Ceph daemon.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

Run the ceph orch ls command to get a list of Ceph services configured in the Red Hat Ceph Storage cluster and to get the specific service ID.

Example

[ceph: root@host01 /]# ceph orch ls
NAME                       RUNNING  REFRESHED  AGE  PLACEMENT  IMAGE NAME                                                        IMAGE ID
alertmanager               1/1      4m ago     4M   count:1    registry.redhat.io/openshift4/ose-prometheus-alertmanager:v4.5   b7bae610cd46
crash                      3/3      4m ago     4M   *          registry.redhat.io/rhceph-alpha/rhceph-6-rhel9:latest             c88a5d60f510
grafana                    1/1      4m ago     4M   count:1    registry.redhat.io/rhceph-alpha/rhceph-6-dashboard-rhel9:latest   bd3d7748747b
mgr                        2/2      4m ago     4M   count:2    registry.redhat.io/rhceph-alpha/rhceph-6-rhel9:latest             c88a5d60f510
mon                        2/2      4m ago     10w  count:2    registry.redhat.io/rhceph-alpha/rhceph-6-rhel9:latest             c88a5d60f510
nfs.foo                    0/1      -          -    count:1    <unknown>                                                         <unknown>
node-exporter              1/3      4m ago     4M   *          registry.redhat.io/openshift4/ose-prometheus-node-exporter:v4.5  mix
osd.all-available-devices  5/5      4m ago     3M   *          registry.redhat.io/rhceph-alpha/rhceph-6-rhel9:latest             c88a5d60f510
prometheus                 1/1      4m ago     4M   count:1    registry.redhat.io/openshift4/ose-prometheus:v4.6                 bebb0ddef7f0
rgw.test_realm.test_zone   2/2      4m ago     3M   count:2    registry.redhat.io/rhceph-alpha/rhceph-6-rhel9:latest             c88a5d60f510

To start a specific service, run the following command:

Syntax

ceph orch start SERVICE_ID

Example

[ceph: root@host01 /]# ceph orch start node-exporter

To stop a specific service, run the following command:

Important: Running the ceph orch stop SERVICE_ID command against the MON or MGR service makes the Red Hat Ceph Storage cluster inaccessible. To stop a specific daemon on a host, use the systemctl stop SERVICE_ID command instead.

Syntax

ceph orch stop SERVICE_ID

Example

[ceph: root@host01 /]# ceph orch stop node-exporter

In the example, the ceph orch stop node-exporter command stops all the daemons of the node-exporter service.

To restart a specific service, run the following command:

Syntax

ceph orch restart SERVICE_ID

Example

[ceph: root@host01 /]# ceph orch restart node-exporter
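After you start or restart a service, the RUNNING column of ceph orch ls shows how many daemons are running out of the expected number, for example 1/3. The following is a minimal sketch for spotting services that are not fully running. It assumes the column layout shown in the ceph orch ls example above, with NAME in the first column and RUNNING in the second; other releases may order the columns differently. The function name services_not_fully_running is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ceph orch ls` table output on stdin and print the
# services whose RUNNING column (for example 1/3) shows fewer running daemons
# than expected. Assumes NAME is column 1 and RUNNING is column 2.
services_not_fully_running() {
    awk 'NR > 1 && split($2, r, "/") == 2 && r[1] != r[2] { print $1 }'
}

# Usage (inside the Cephadm shell):
#   ceph orch ls | services_not_fully_running
```

The helper only reads text, so it is safe to run at any time. It does not start or stop anything.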

2.4. Viewing log files of Ceph daemons that run in containers

Use the journald daemon from the container host to view a log file of a Ceph daemon from a container.

Prerequisites

- Installation of the Red Hat Ceph Storage software.

- Root-level access to the node.

Procedure

To view the entire Ceph log file, run a journalctl command as root in the following format:

Syntax

journalctl -u SERVICE_ID

Example

[root@host01 ~]# journalctl -u ceph-499829b4-832f-11eb-8d6d-001a4a000635@osd.8.service

In the above example, you can view the entire log for the OSD with ID osd.8.

To show only the recent journal entries and follow new entries as they arrive, use the -f option.

Syntax

journalctl -fu SERVICE_ID

Example

[root@host01 ~]# journalctl -fu ceph-499829b4-832f-11eb-8d6d-001a4a000635@osd.8.service

You can also use the sosreport utility to view the journald logs. For more details about SOS reports, see the What is an sosreport and how to create one in Red Hat Enterprise Linux? solution on the Red Hat Customer Portal.

Additional Resources

- The journalctl manual page.

2.5. Powering down and rebooting Red Hat Ceph Storage cluster

You can power down and reboot the Red Hat Ceph Storage cluster using either of two approaches: systemctl commands or the Ceph Orchestrator.

2.5.1. Powering down and rebooting the cluster using the systemctl commands

You can use the systemctl commands approach to power down and reboot the Red Hat Ceph Storage cluster. This approach follows the Linux way of stopping the services.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access.

Procedure

Powering down the Red Hat Ceph Storage cluster

- Stop the clients from using the Block Device images and the Ceph Object Gateway (RADOS Gateway) on this cluster, and stop any other clients.

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

The cluster must be in a healthy state (HEALTH_OK and all PGs active+clean) before proceeding. Run ceph status on the host with the client keyrings, for example, the Ceph Monitor or OpenStack controller nodes, to ensure the cluster is healthy.

Example

[ceph: root@host01 /]# ceph -s

If you use the Ceph File System (CephFS), bring down the CephFS cluster:

Syntax

ceph fs set FS_NAME max_mds 1
ceph fs fail FS_NAME
ceph status
ceph fs set FS_NAME joinable false

Example

[ceph: root@host01 /]# ceph fs set cephfs max_mds 1
[ceph: root@host01 /]# ceph fs fail cephfs
[ceph: root@host01 /]# ceph status
[ceph: root@host01 /]# ceph fs set cephfs joinable false

Set the noout, norecover, norebalance, nobackfill, nodown, and pause flags. Run the following on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller node:

Example

[ceph: root@host01 /]# ceph osd set noout
[ceph: root@host01 /]# ceph osd set norecover
[ceph: root@host01 /]# ceph osd set norebalance
[ceph: root@host01 /]# ceph osd set nobackfill
[ceph: root@host01 /]# ceph osd set nodown
[ceph: root@host01 /]# ceph osd set pause

Important: The above example is only for stopping the service and each OSD in the OSD node, and it needs to be repeated on each OSD node.

- If the MDS and Ceph Object Gateway nodes are on their own dedicated nodes, power them off.

Shut down the OSD nodes one by one:

Example

[root@host01 ~]# systemctl stop ceph-499829b4-832f-11eb-8d6d-001a4a000635@osd.2.service

Shut down the monitor nodes one by one:

Example

[root@host01 ~]# systemctl stop ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service

- Shut down the admin node.

Rebooting the Red Hat Ceph Storage cluster

- If network equipment was involved, ensure it is powered ON and stable prior to powering ON any Ceph hosts or nodes.

- Power ON the administration node.

Power ON the monitor nodes:

Example

[root@host01 ~]# systemctl start ceph-499829b4-832f-11eb-8d6d-001a4a000635@mon.host01.service

Important: The above example is only for stopping the service and each OSD in the OSD node, and it needs to be repeated on each OSD node.

Power ON the OSD nodes:

Example

[root@host01 ~]# systemctl start ceph-499829b4-832f-11eb-8d6d-001a4a000635@osd.2.service

- Wait for all the nodes to come up. Verify all the services are up and there are no connectivity issues between the nodes.

Unset the noout, norecover, norebalance, nobackfill, nodown, and pause flags. Run the following on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller node:

Example

[ceph: root@host01 /]# ceph osd unset noout
[ceph: root@host01 /]# ceph osd unset norecover
[ceph: root@host01 /]# ceph osd unset norebalance
[ceph: root@host01 /]# ceph osd unset nobackfill
[ceph: root@host01 /]# ceph osd unset nodown
[ceph: root@host01 /]# ceph osd unset pause
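The same six flags are set before powering down and unset after rebooting, so the two command lists can be generated from one place. The following is a minimal sketch, not part of the documented procedure. The variable CLUSTER_FLAGS and the function flag_commands are hypothetical names, and the helper only prints the ceph osd set or ceph osd unset commands so that you can review them first.

```shell
#!/usr/bin/env bash
# The six OSD flags used in this procedure.
CLUSTER_FLAGS="noout norecover norebalance nobackfill nodown pause"

# Hypothetical helper: print one `ceph osd set|unset FLAG` command per flag.
# It only prints the commands so that you can review them before running them.
flag_commands() {
    local action="$1" flag
    case "$action" in
        set|unset) ;;
        *) echo "usage: flag_commands set|unset" >&2; return 1 ;;
    esac
    for flag in $CLUSTER_FLAGS; do
        echo "ceph osd $action $flag"
    done
}

# Usage (inside the Cephadm shell):
#   flag_commands set            # review the commands before powering down
#   flag_commands unset | sh     # run them after the cluster is back up
```

Printing the commands instead of running them directly keeps the helper harmless if it is called by mistake on a production cluster.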

If you use the Ceph File System (CephFS), bring the CephFS cluster back up by setting the joinable flag to true:

Syntax

ceph fs set FS_NAME joinable true

Example

[ceph: root@host01 /]# ceph fs set cephfs joinable true

Verification

- Verify the cluster is in a healthy state (HEALTH_OK and all PGs active+clean). Run ceph status on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller nodes, to ensure the cluster is healthy.

Example

[ceph: root@host01 /]# ceph -s

Additional Resources

- For more information on installing Ceph, see the Red Hat Ceph Storage Installation Guide.

2.5.2. Powering down and rebooting the cluster using the Ceph Orchestrator

You can also use the capabilities of the Ceph Orchestrator to power down and reboot the Red Hat Ceph Storage cluster. In most cases, a single system login is enough to power off the cluster.

The Ceph Orchestrator supports several operations, such as start, stop, and restart. In some cases, you use these commands together with systemctl to power down or reboot the cluster.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Powering down the Red Hat Ceph Storage cluster

- Stop the clients from using the Block Device images and the Ceph Object Gateway on this cluster, and stop any other clients.

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

The cluster must be in a healthy state (HEALTH_OK and all PGs active+clean) before proceeding. Run ceph status on the host with the client keyrings, for example, the Ceph Monitor or OpenStack controller nodes, to ensure the cluster is healthy.

Example

[ceph: root@host01 /]# ceph -s

If you use the Ceph File System (CephFS), bring down the CephFS cluster:

Syntax

ceph fs set FS_NAME max_mds 1
ceph fs fail FS_NAME
ceph status
ceph fs set FS_NAME joinable false
ceph mds fail FS_NAME:N

Example

[ceph: root@host01 /]# ceph fs set cephfs max_mds 1
[ceph: root@host01 /]# ceph fs fail cephfs
[ceph: root@host01 /]# ceph status
[ceph: root@host01 /]# ceph fs set cephfs joinable false
[ceph: root@host01 /]# ceph mds fail cephfs:1

Set the noout, norecover, norebalance, nobackfill, nodown, and pause flags. Run the following on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller node:

Example

[ceph: root@host01 /]# ceph osd set noout
[ceph: root@host01 /]# ceph osd set norecover
[ceph: root@host01 /]# ceph osd set norebalance
[ceph: root@host01 /]# ceph osd set nobackfill
[ceph: root@host01 /]# ceph osd set nodown
[ceph: root@host01 /]# ceph osd set pause

Stop the MDS service.

Fetch the MDS service name:

Example

[ceph: root@host01 /]# ceph orch ls --service-type mds

Stop the MDS service using the fetched name in the previous step:

Syntax

ceph orch stop SERVICE-NAME

Stop the Ceph Object Gateway services. Repeat for each deployed service.

Fetch the Ceph Object Gateway service names:

Example

[ceph: root@host01 /]# ceph orch ls --service-type rgw

Stop the Ceph Object Gateway service using the fetched name:

Syntax

ceph orch stop SERVICE-NAME

Stop the Alertmanager service:

Example

[ceph: root@host01 /]# ceph orch stop alertmanager

Stop the node-exporter service which is a part of the monitoring stack:

Example

[ceph: root@host01 /]# ceph orch stop node-exporter

Stop the Prometheus service:

Example

[ceph: root@host01 /]# ceph orch stop prometheus

Stop the Grafana dashboard service:

Example

[ceph: root@host01 /]# ceph orch stop grafana

Stop the crash service:

Example

[ceph: root@host01 /]# ceph orch stop crash

Shut down the OSD nodes from the cephadm node, one by one. Repeat this step for all the OSDs in the cluster.

Fetch the OSD ID:

Example

[ceph: root@host01 /]# ceph orch ps --daemon-type=osd

Shut down the OSD node using the OSD ID you fetched:

Example

[ceph: root@host01 /]# ceph orch daemon stop osd.1
Scheduled to stop osd.1 on host 'host02'

Stop the monitors one by one.

Identify the hosts hosting the monitors:

Example

[ceph: root@host01 /]# ceph orch ps --daemon-type mon

On each host, stop the monitor.

Identify the systemctl unit name:

Example

[ceph: root@host01 /]# systemctl list-units ceph-* | grep mon

Stop the service:

Syntax

systemctl stop SERVICE-NAME

- Shut down all the hosts.

Rebooting the Red Hat Ceph Storage cluster

- If network equipment was involved, ensure it is powered ON and stable prior to powering ON any Ceph hosts or nodes.

- Power ON all the Ceph hosts.

Log into the Cephadm shell on the administration node:

Example

[root@host01 ~]# cephadm shell

Verify all the services are in running state:

Example

[ceph: root@host01 /]# ceph orch ls

Ensure the cluster health status is `HEALTH_OK`:

Example

[ceph: root@host01 /]# ceph -s

Unset the noout, norecover, norebalance, nobackfill, nodown, and pause flags. Run the following on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller node:

Example

[ceph: root@host01 /]# ceph osd unset noout
[ceph: root@host01 /]# ceph osd unset norecover
[ceph: root@host01 /]# ceph osd unset norebalance
[ceph: root@host01 /]# ceph osd unset nobackfill
[ceph: root@host01 /]# ceph osd unset nodown
[ceph: root@host01 /]# ceph osd unset pause

If you use the Ceph File System (CephFS), bring the CephFS cluster back up by setting the joinable flag to true:

Syntax

ceph fs set FS_NAME joinable true

Example

[ceph: root@host01 /]# ceph fs set cephfs joinable true

Verification

- Verify the cluster is in a healthy state (HEALTH_OK and all PGs active+clean). Run ceph status on a node with the client keyrings, for example, the Ceph Monitor or OpenStack controller nodes, to ensure the cluster is healthy.

Example

[ceph: root@host01 /]# ceph -s

Additional Resources

- For more information on installing Ceph, see the Red Hat Ceph Storage Installation Guide.

Chapter 3. Monitoring a Ceph storage cluster

As a storage administrator, you can monitor the overall health of the Red Hat Ceph Storage cluster, along with monitoring the health of the individual components of Ceph.

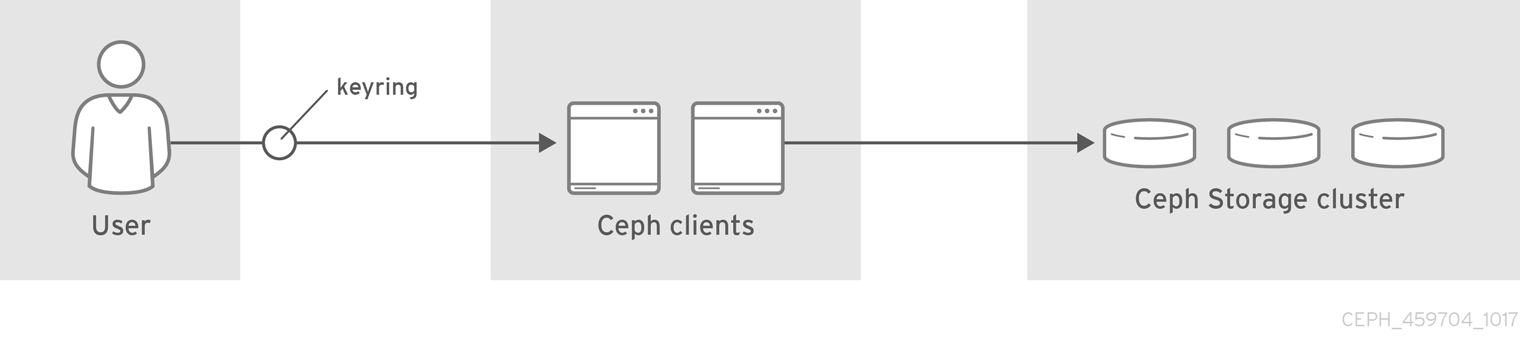

Once you have a running Red Hat Ceph Storage cluster, you can begin monitoring it at a high level to ensure that the Ceph Monitor and Ceph OSD daemons are running. Ceph storage cluster clients connect to a Ceph Monitor and receive the latest version of the storage cluster map before they can read and write data to the Ceph pools within the storage cluster. Therefore, the monitor cluster must agree on the state of the cluster before Ceph clients can read and write data.

Ceph OSDs must peer the placement groups on the primary OSD with the copies of the placement groups on secondary OSDs. If faults arise, peering reflects a state other than active+clean.

3.1. High-level monitoring of a Ceph storage cluster

As a storage administrator, you can monitor the health of the Ceph daemons to ensure that they are up and running. High level monitoring also involves checking the storage cluster capacity to ensure that the storage cluster does not exceed its full ratio. The Red Hat Ceph Storage Dashboard is the most common way to conduct high-level monitoring. However, you can also use the command-line interface, the Ceph admin socket or the Ceph API to monitor the storage cluster.

3.1.1. Checking the storage cluster health

After you start the Ceph storage cluster, and before you start reading or writing data, check the storage cluster’s health first.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

You can check on the health of the Ceph storage cluster with the following command:

Example

[ceph: root@host01 /]# ceph health
HEALTH_OK

You can check the status of the Ceph storage cluster by running the ceph status command:

Example

[ceph: root@host01 /]# ceph status

The output provides the following information:

- Cluster ID

- Cluster health status

- The monitor map epoch and the status of the monitor quorum.

- The OSD map epoch and the status of OSDs.

- The status of Ceph Managers.

- The status of Object Gateways.

- The placement group map version.

- The number of placement groups and pools.

- The notional amount of data stored and the number of objects stored.

- The total amount of data stored.

- The IO client operations.

- An update on the upgrade process if the cluster is upgrading.

Upon starting the Ceph cluster, you will likely encounter a health warning such as HEALTH_WARN XXX num placement groups stale. Wait a few moments and check it again. When the storage cluster is ready, ceph health should return a message such as HEALTH_OK. At that point, it is okay to begin using the cluster.
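The "wait a few moments and check it again" step can be automated with a small polling loop. The following is a minimal sketch, assuming it runs inside the Cephadm shell where the ceph command is available. The function name wait_for_health_ok, the default of 30 attempts, and the 10 second delay are all hypothetical choices, not values recommended by this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll `ceph health` until it reports HEALTH_OK.
# Arguments: number of attempts (default 30), seconds between attempts (default 10).
# Returns 0 when the cluster reports HEALTH_OK, 1 if it never does.
wait_for_health_ok() {
    local attempts="${1:-30}" delay="${2:-10}" status i
    for ((i = 1; i <= attempts; i++)); do
        status="$(ceph health 2>/dev/null)"
        case "$status" in
            HEALTH_OK*) echo "cluster is healthy"; return 0 ;;
        esac
        # Progress goes to stderr so that stdout stays clean for scripting.
        echo "attempt $i/$attempts: ${status:-no response}" >&2
        if ((i < attempts)); then
            sleep "$delay"
        fi
    done
    return 1
}

# Usage (inside the Cephadm shell):
#   wait_for_health_ok 60 5 && echo "safe to start client I/O"
```

A persistent HEALTH_WARN or HEALTH_ERR still needs investigation with ceph status; the loop only saves repeated manual checks.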

3.1.2. Watching storage cluster events

You can watch events that are happening with the Ceph storage cluster using the command-line interface.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

To watch the cluster’s ongoing events, run the following command:

Example

[ceph: root@host01 /]# ceph -w
  cluster:
    id:     8c9b0072-67ca-11eb-af06-001a4a0002a0
    health: HEALTH_OK
  services:
    mon: 2 daemons, quorum Ceph5-2,Ceph5-adm (age 3d)
    mgr: Ceph5-1.nqikfh(active, since 3w), standbys: Ceph5-adm.meckej
    osd: 5 osds: 5 up (since 2d), 5 in (since 8w)
    rgw: 2 daemons active (test_realm.test_zone.Ceph5-2.bfdwcn, test_realm.test_zone.Ceph5-adm.acndrh)
  data:
    pools:   11 pools, 273 pgs
    objects: 459 objects, 32 KiB
    usage:   2.6 GiB used, 72 GiB / 75 GiB avail
    pgs:     273 active+clean
  io:
    client:   170 B/s rd, 730 KiB/s wr, 0 op/s rd, 729 op/s wr
2021-06-02 15:45:21.655871 osd.0 [INF] 17.71 deep-scrub ok
2021-06-02 15:45:47.880608 osd.1 [INF] 1.0 scrub ok
2021-06-02 15:45:48.865375 osd.1 [INF] 1.3 scrub ok
2021-06-02 15:45:50.866479 osd.1 [INF] 1.4 scrub ok
2021-06-02 15:45:01.345821 mon.0 [INF] pgmap v41339: 952 pgs: 952 active+clean; 17130 MB data, 115 GB used, 167 GB / 297 GB avail
2021-06-02 15:45:05.718640 mon.0 [INF] pgmap v41340: 952 pgs: 1 active+clean+scrubbing+deep, 951 active+clean; 17130 MB data, 115 GB used, 167 GB / 297 GB avail
2021-06-02 15:45:53.997726 osd.1 [INF] 1.5 scrub ok
2021-06-02 15:45:06.734270 mon.0 [INF] pgmap v41341: 952 pgs: 1 active+clean+scrubbing+deep, 951 active+clean; 17130 MB data, 115 GB used, 167 GB / 297 GB avail
2021-06-02 15:45:15.722456 mon.0 [INF] pgmap v41342: 952 pgs: 952 active+clean; 17130 MB data, 115 GB used, 167 GB / 297 GB avail
2021-06-02 15:46:06.836430 osd.0 [INF] 17.75 deep-scrub ok
2021-06-02 15:45:55.720929 mon.0 [INF] pgmap v41343: 952 pgs: 1 active+clean+scrubbing+deep, 951 active+clean; 17130 MB data, 115 GB used, 167 GB / 297 GB avail

3.1.3. How Ceph calculates data usage

The used value reflects the actual amount of raw storage used. The xxx GB / xxx GB value shows the amount available (the lesser of the two numbers) out of the overall storage capacity of the cluster. The notional number reflects the size of the stored data before it is replicated, cloned, or snapshotted. Therefore, the amount of data actually stored typically exceeds the notional amount stored, because Ceph creates replicas of the data and may also use storage capacity for cloning and snapshotting.
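The relationship between the notional amount and the raw amount can be approximated with simple arithmetic. The following is a minimal sketch under stated assumptions: a replicated pool consumes roughly the notional size multiplied by the number of replicas, and an erasure-coded pool consumes roughly the notional size multiplied by (k+m)/k. Clones, snapshots, and internal overhead are ignored, so real clusters report higher values. The function names are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers: estimate raw usage from the notional (pre-replication)
# amount. Clones, snapshots, and internal overhead are ignored.

# Replicated pool: raw = notional * number_of_replicas
raw_usage_replicated() {
    awk -v n="$1" -v size="$2" 'BEGIN { printf "%.2f\n", n * size }'
}

# Erasure-coded pool: raw = notional * (k + m) / k
raw_usage_erasure() {
    awk -v n="$1" -v k="$2" -v m="$3" 'BEGIN { printf "%.2f\n", n * (k + m) / k }'
}

# Usage (the unit of the result is the unit of the first argument):
#   raw_usage_replicated 1 3     # 1 MB of data with 3 replicas
#   raw_usage_erasure 2 4 2      # 2 TiB of data in a k=4, m=2 pool
```

These estimates are useful for rough capacity planning only. Use ceph df for the values the cluster actually reports.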

3.1.4. Understanding the storage clusters usage stats

To check a cluster’s data usage and data distribution among pools, use the ceph df command. It is similar to the Linux df command.

The SIZE/AVAIL/RAW USED values in the ceph df and ceph status command output differ when some OSDs are marked OUT of the cluster compared to when all OSDs are IN. These values are calculated from the sum of SIZE (OSD disk size), RAW USE (total used space on disk), and AVAIL of all OSDs that are in the IN state. You can see the total of SIZE/AVAIL/RAW USED for all OSDs in the ceph osd df tree command output.

Example

[ceph: root@host01 /]# ceph df
--- RAW STORAGE ---
CLASS  SIZE   AVAIL    USED     RAW USED  %RAW USED
hdd    5 TiB  2.9 TiB  2.1 TiB  2.1 TiB   42.98
TOTAL  5 TiB  2.9 TiB  2.1 TiB  2.1 TiB   42.98

--- POOLS ---
POOL                        ID  PGS  STORED   OBJECTS  USED     %USED  MAX AVAIL
.mgr                         1    1  5.3 MiB        3  16 MiB       0    629 GiB
.rgw.root                    2   32  1.3 KiB        4  48 KiB       0    629 GiB
default.rgw.log              3   32  3.6 KiB      209  408 KiB      0    629 GiB
default.rgw.control          4   32  0 B            8  0 B          0    629 GiB
default.rgw.meta             5   32  1.7 KiB       10  96 KiB       0    629 GiB
default.rgw.buckets.index    7   32  5.5 MiB       22  17 MiB       0    629 GiB
default.rgw.buckets.data     8   32  807 KiB        3  2.4 MiB      0    629 GiB
default.rgw.buckets.non-ec   9   32  1.0 MiB        1  3.1 MiB      0    629 GiB
source-ecpool-86            11   32  1.2 TiB  391.13k  2.1 TiB  53.49    1.1 TiB

The ceph df detail command gives more details about other pool statistics such as quota objects, quota bytes, used compression, and under compression.

The RAW STORAGE section of the output provides an overview of the amount of storage the storage cluster manages for data.

- CLASS: The class of OSD device.

- SIZE: The amount of storage capacity managed by the storage cluster.

  For example, if the SIZE is 90 GiB, it is the total size without the replication factor, which is three by default. The total available capacity with the replication factor is 90 GiB/3 = 30 GiB. Based on the full ratio, which is 0.85 (85%) by default, the maximum available space is 30 GiB * 0.85 = 25.5 GiB.

- AVAIL: The amount of free space available in the storage cluster.

  For example, if the SIZE is 90 GiB and the USED space is 6 GiB, then the AVAIL space is 84 GiB. The total available space with the replication factor, which is three by default, is 84 GiB/3 = 28 GiB.

- USED: The amount of raw storage consumed by user data.

  In this example, 100 MiB is the total space available after considering the replication factor. The actual available size is 33 MiB.

- RAW USED: The amount of raw storage consumed by user data, internal overhead, or reserved capacity.

- % RAW USED: The percentage of RAW USED. Use this number in conjunction with the full ratio and near full ratio to ensure that you are not reaching the storage cluster’s capacity.
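The SIZE and AVAIL arithmetic in the list above can be reproduced with a one-line calculation. The following is a minimal sketch, assuming a replicated pool and the values quoted in the text (a replication factor of three and a full ratio of 0.85). The function name usable_capacity is hypothetical, and the result is in the same unit as the first argument.

```shell
#!/usr/bin/env bash
# Hypothetical helper: estimate usable capacity from a raw size.
#   usable = SIZE / replication_factor * full_ratio
# Arguments: raw size, replication factor (default 3), full ratio (default
# 0.85, the value quoted in the text above).
usable_capacity() {
    awk -v size="$1" -v rep="${2:-3}" -v ratio="${3:-0.85}" \
        'BEGIN { printf "%.1f\n", size / rep * ratio }'
}

# Usage:
#   usable_capacity 90          # SIZE of 90 GiB: 90/3 * 0.85
#   usable_capacity 84 3 1      # AVAIL of 84 GiB, replication factor only
```

Check the ratios configured on your own cluster before relying on the defaults used here, because they may differ from the values in this example.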

The POOLS section of the output provides a list of pools and the notional usage of each pool. The output from this section does not reflect replicas, clones, or snapshots. For example, if you store an object with 1 MB of data, the notional usage is 1 MB, but the actual usage may be 3 MB or more depending on the number of replicas (for example, size = 3), clones, and snapshots.

- POOL: The name of the pool.

- ID: The pool ID.

- STORED: The actual amount of data stored by the user in the pool. This value changes based on the raw usage data, which depends on the (k+m)/k values, the number of object copies, and the number of objects degraded at the time of the pool stats calculation.

- OBJECTS: The notional number of objects stored per pool. It is STORED size * replication factor.

- USED: The notional amount of data stored in kilobytes, unless the number appends M for megabytes or G for gigabytes.

- %USED: The notional percentage of storage used per pool.

- MAX AVAIL: An estimate of the notional amount of data that can be written to this pool. It is the amount of data that can be used before the first OSD becomes full. It considers the projected distribution of data across disks from the CRUSH map and uses the first OSD to fill up as the target.

  In the above example, MAX AVAIL is 153.85 MB without considering the replication factor, which is three by default.

  See the Red Hat Knowledgebase article titled ceph df MAX AVAIL is incorrect for simple replicated pool to calculate the value of MAX AVAIL.

- QUOTA OBJECTS: The number of quota objects.

- QUOTA BYTES: The number of bytes in the quota objects.

- USED COMPR: The amount of space allocated for compressed data. This includes compressed data, allocation, replication, and erasure coding overhead.

- UNDER COMPR: The amount of data passed through compression and beneficial enough to be stored in a compressed form.

The numbers in the POOLS section are notional. They are not inclusive of the number of replicas, snapshots, or clones. As a result, the sum of the USED and %USED amounts will not add up to the RAW USED and %RAW USED amounts in the RAW STORAGE section of the output.

The MAX AVAIL value is a complicated function of the replication or erasure code used, the CRUSH rule that maps storage to devices, the utilization of those devices, and the configured mon_osd_full_ratio.

Additional Resources

- See How Ceph calculates data usage for details.

- See Understanding the OSD usage stats for details.

3.1.5. Understanding the OSD usage stats

Use the ceph osd df command to view OSD utilization stats.

Example

[ceph: root@host01 /]# ceph osd df
ID  CLASS  WEIGHT   REWEIGHT  SIZE    USE      DATA     OMAP  META  AVAIL   %USE  VAR   PGS
 3  hdd    0.90959  1.00000   931GiB  70.1GiB  69.1GiB  0B    1GiB  861GiB  7.53  2.93   66
 4  hdd    0.90959  1.00000   931GiB  1.30GiB  308MiB   0B    1GiB  930GiB  0.14  0.05   59
 0  hdd    0.90959  1.00000   931GiB  18.1GiB  17.1GiB  0B    1GiB  913GiB  1.94  0.76   57
MIN/MAX VAR: 0.02/2.98  STDDEV: 2.91

- ID: The name of the OSD.

- CLASS: The type of devices the OSD uses.

- WEIGHT: The weight of the OSD in the CRUSH map.

- REWEIGHT: The default reweight value.

- SIZE: The overall storage capacity of the OSD.

- USE: The amount of OSD capacity that is used.

- DATA: The amount of OSD capacity that is used by user data.

- OMAP: An estimate of the bluefs storage that is being used to store object map (omap) data (key-value pairs stored in rocksdb).

- META: The bluefs space allocated, or the value set in the bluestore_bluefs_min parameter, whichever is larger, for internal metadata. It is calculated as the total space allocated in bluefs minus the estimated omap data size.

- AVAIL: The amount of free space available on the OSD.

- %USE: The notional percentage of storage used by the OSD.

- VAR: The variation above or below average utilization.

- PGS: The number of placement groups in the OSD.

- MIN/MAX VAR: The minimum and maximum variation across all OSDs.

Additional Resources

- See How Ceph calculates data usage for details.

- See Understanding the OSD usage stats for details.

- See CRUSH Weights in Red Hat Ceph Storage Storage Strategies Guide for details.

3.1.6. Checking the storage cluster status

You can check the status of the Red Hat Ceph Storage cluster from the command-line interface. The status subcommand or the -s argument displays the current status of the storage cluster.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

To check a storage cluster’s status, execute the following:

Example

[ceph: root@host01 /]# ceph status

Or

Example

[ceph: root@host01 /]# ceph -s

In interactive mode, type ceph and press Enter:

Example

[ceph: root@host01 /]# ceph
ceph> status
  cluster:
    id:     499829b4-832f-11eb-8d6d-001a4a000635
    health: HEALTH_WARN
            1 stray daemon(s) not managed by cephadm
            1/3 mons down, quorum host03,host02
            too many PGs per OSD (261 > max 250)
  services:
    mon:     3 daemons, quorum host03,host02 (age 3d), out of quorum: host01
    mgr:     host01.hdhzwn(active, since 9d), standbys: host05.eobuuv, host06.wquwpj
    osd:     12 osds: 11 up (since 2w), 11 in (since 5w)
    rgw:     2 daemons active (test_realm.test_zone.host04.hgbvnq, test_realm.test_zone.host05.yqqilm)
    rgw-nfs: 1 daemon active (nfs.foo.host06-rgw)
  data:
    pools:   8 pools, 960 pgs
    objects: 414 objects, 1.0 MiB
    usage:   5.7 GiB used, 214 GiB / 220 GiB avail
    pgs:     960 active+clean
  io:
    client:   41 KiB/s rd, 0 B/s wr, 41 op/s rd, 27 op/s wr
ceph> health
HEALTH_WARN 1 stray daemon(s) not managed by cephadm; 1/3 mons down, quorum host03,host02; too many PGs per OSD (261 > max 250)
ceph> mon stat
e3: 3 mons at {host01=[v2:10.74.255.0:3300/0,v1:10.74.255.0:6789/0],host02=[v2:10.74.249.253:3300/0,v1:10.74.249.253:6789/0],host03=[v2:10.74.251.164:3300/0,v1:10.74.251.164:6789/0]}, election epoch 6688, leader 1 host03, quorum 1,2 host03,host02

3.1.7. Checking the Ceph Monitor status

If the storage cluster has multiple Ceph Monitors, which is a requirement for a production Red Hat Ceph Storage cluster, then you can check the Ceph Monitor quorum status after starting the storage cluster, and before doing any reading or writing of data.

A quorum must be present when multiple Ceph Monitors are running.

Check the Ceph Monitor status periodically to ensure that the monitors are running. If a problem with a Ceph Monitor prevents agreement on the state of the storage cluster, the fault can prevent Ceph clients from reading and writing data.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

To display the Ceph Monitor map, execute the following:

Example

[ceph: root@host01 /]# ceph mon stat

or

Example

[ceph: root@host01 /]# ceph mon dump

To check the quorum status for the storage cluster, execute the following:

[ceph: root@host01 /]# ceph quorum_status -f json-pretty

Ceph returns the quorum status.

Example

{ "election_epoch": 6686, "quorum": [ 0, 1, 2 ], "quorum_names": [ "host01", "host03", "host02" ], "quorum_leader_name": "host01", "quorum_age": 424884, "features": { "quorum_con": "4540138297136906239", "quorum_mon": [ "kraken", "luminous", "mimic", "osdmap-prune", "nautilus", "octopus", "pacific", "elector-pinging" ] }, "monmap": { "epoch": 3, "fsid": "499829b4-832f-11eb-8d6d-001a4a000635", "modified": "2021-03-15T04:51:38.621737Z", "created": "2021-03-12T12:35:16.911339Z", "min_mon_release": 16, "min_mon_release_name": "pacific", "election_strategy": 1, "disallowed_leaders: ": "", "stretch_mode": false, "features": { "persistent": [ "kraken", "luminous", "mimic", "osdmap-prune", "nautilus", "octopus", "pacific", "elector-pinging" ], "optional": [] }, "mons": [ { "rank": 0, "name": "host01", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.255.0:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.255.0:6789", "nonce": 0 } ] }, "addr": "10.74.255.0:6789/0", "public_addr": "10.74.255.0:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" }, { "rank": 1, "name": "host03", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.251.164:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.251.164:6789", "nonce": 0 } ] }, "addr": "10.74.251.164:6789/0", "public_addr": "10.74.251.164:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" }, { "rank": 2, "name": "host02", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.249.253:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.249.253:6789", "nonce": 0 } ] }, "addr": "10.74.249.253:6789/0", "public_addr": "10.74.249.253:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" } ] } }
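The quorum check described above can also be scripted. The following is a minimal sketch, not part of the product tooling, that assumes you have saved the output of ceph quorum_status -f json-pretty as text. It relies only on the quorum_names and monmap.mons[].name fields that appear in the example output. The function name monitors_out_of_quorum and the trimmed sample data are hypothetical.

```python
import json
from typing import List


def monitors_out_of_quorum(quorum_status_json: str) -> List[str]:
    """Return monitors listed in the monmap that are missing from the quorum."""
    status = json.loads(quorum_status_json)
    in_quorum = set(status["quorum_names"])
    all_mons = [mon["name"] for mon in status["monmap"]["mons"]]
    return [name for name in all_mons if name not in in_quorum]


# Hypothetical, trimmed sample that reuses the field names from the output above.
sample = json.dumps({
    "quorum_names": ["host01", "host03"],
    "quorum_leader_name": "host01",
    "monmap": {
        "mons": [
            {"rank": 0, "name": "host01"},
            {"rank": 1, "name": "host03"},
            {"rank": 2, "name": "host02"},
        ]
    },
})

print(monitors_out_of_quorum(sample))  # prints ['host02']
```

An empty list means that every monitor in the monitor map is part of the quorum.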

3.1.8. Using the Ceph administration socket

Use the administration socket to interact with a given daemon directly by using a UNIX socket file. For example, the socket enables you to:

- List the Ceph configuration at runtime

- Set configuration values at runtime directly without relying on Monitors. This is useful when Monitors are down.

- Dump historic operations

- Dump the operation priority queue state

- Dump operations without rebooting

- Dump performance counters

In addition, using the socket is helpful when troubleshooting problems related to Ceph Monitors or OSDs.

If the daemon is not running, the following error is returned when attempting to use the administration socket:

Error 111: Connection Refused

The administration socket is only available while a daemon is running. When you shut down the daemon properly, the administration socket is removed. However, if the daemon terminates unexpectedly, the administration socket might persist.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

To use the socket:

Syntax

ceph daemon MONITOR_ID COMMAND

Replace:

- MONITOR_ID with the ID of the daemon, for example mon.host01.

- COMMAND with the command to run. Use help to list the available commands for a given daemon.

To view the status of a Ceph Monitor:

Example

[ceph: root@host01 /]# ceph daemon mon.host01 help { "add_bootstrap_peer_hint": "add peer address as potential bootstrap peer for cluster bringup", "add_bootstrap_peer_hintv": "add peer address vector as potential bootstrap peer for cluster bringup", "compact": "cause compaction of monitor's leveldb/rocksdb storage", "config diff": "dump diff of current config and default config", "config diff get": "dump diff get <field>: dump diff of current and default config setting <field>", "config get": "config get <field>: get the config value", "config help": "get config setting schema and descriptions", "config set": "config set <field> <val> [<val> ...]: set a config variable", "config show": "dump current config settings", "config unset": "config unset <field>: unset a config variable", "connection scores dump": "show the scores used in connectivity-based elections", "connection scores reset": "reset the scores used in connectivity-based elections", "counter dump": "dump all labeled and non-labeled counters and their values", "counter schema": "dump all labeled and non-labeled counters schemas", "dump_historic_ops": "show recent ops", "dump_historic_slow_ops": "show recent slow ops", "dump_mempools": "get mempool stats", "get_command_descriptions": "list available commands", "git_version": "get git sha1", "heap": "show heap usage info (available only if compiled with tcmalloc)", "help": "list available commands", "injectargs": "inject configuration arguments into running daemon", "log dump": "dump recent log entries to log file", "log flush": "flush log entries to log file", "log reopen": "reopen log file", "mon_status": "report status of monitors", "ops": "show the ops currently in flight", "perf dump": "dump non-labeled counters and their values", "perf histogram dump": "dump perf histogram values", "perf histogram schema": "dump perf histogram schema", "perf reset": "perf reset <name>: perf reset all or one perfcounter name", "perf schema": "dump non-labeled counters 
schemas", "quorum enter": "force monitor back into quorum", "quorum exit": "force monitor out of the quorum", "sessions": "list existing sessions", "smart": "Query health metrics for underlying device", "sync_force": "force sync of and clear monitor store", "version": "get ceph version" }

Example

[ceph: root@host01 /]# ceph daemon mon.host01 mon_status { "name": "host01", "rank": 0, "state": "leader", "election_epoch": 120, "quorum": [ 0, 1, 2 ], "quorum_age": 206358, "features": { "required_con": "2449958747317026820", "required_mon": [ "kraken", "luminous", "mimic", "osdmap-prune", "nautilus", "octopus", "pacific", "elector-pinging" ], "quorum_con": "4540138297136906239", "quorum_mon": [ "kraken", "luminous", "mimic", "osdmap-prune", "nautilus", "octopus", "pacific", "elector-pinging" ] }, "outside_quorum": [], "extra_probe_peers": [], "sync_provider": [], "monmap": { "epoch": 3, "fsid": "81a4597a-b711-11eb-8cb8-001a4a000740", "modified": "2021-05-18T05:50:17.782128Z", "created": "2021-05-17T13:13:13.383313Z", "min_mon_release": 16, "min_mon_release_name": "pacific", "election_strategy": 1, "disallowed_leaders: ": "", "stretch_mode": false, "features": { "persistent": [ "kraken", "luminous", "mimic", "osdmap-prune", "nautilus", "octopus", "pacific", "elector-pinging" ], "optional": [] }, "mons": [ { "rank": 0, "name": "host01", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.249.41:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.249.41:6789", "nonce": 0 } ] }, "addr": "10.74.249.41:6789/0", "public_addr": "10.74.249.41:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" }, { "rank": 1, "name": "host02", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.249.55:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.249.55:6789", "nonce": 0 } ] }, "addr": "10.74.249.55:6789/0", "public_addr": "10.74.249.55:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" }, { "rank": 2, "name": "host03", "public_addrs": { "addrvec": [ { "type": "v2", "addr": "10.74.249.49:3300", "nonce": 0 }, { "type": "v1", "addr": "10.74.249.49:6789", "nonce": 0 } ] }, "addr": "10.74.249.49:6789/0", "public_addr": "10.74.249.49:6789/0", "priority": 0, "weight": 0, "crush_location": "{}" } ] }, "feature_map": { "mon": [ { "features": 
"0x3f01cfb9fffdffff", "release": "luminous", "num": 1 } ], "osd": [ { "features": "0x3f01cfb9fffdffff", "release": "luminous", "num": 3 } ] }, "stretch_mode": false }

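As an illustration of how the mon_status output might be consumed, the following sketch summarizes the fields that matter for a quick check: the monitor name, its state, and whether its rank is listed in the quorum. It assumes that the JSON was captured from ceph daemon mon.host01 mon_status. The helper name summarize_mon_status and the trimmed sample are hypothetical.

```python
import json
from typing import Any, Dict


def summarize_mon_status(mon_status_json: str) -> Dict[str, Any]:
    """Extract a short health summary from mon_status JSON output."""
    status = json.loads(mon_status_json)
    return {
        "name": status["name"],
        "state": status["state"],
        # A monitor is in quorum when its own rank appears in the quorum list.
        "in_quorum": status["rank"] in status["quorum"],
        "quorum_size": len(status["quorum"]),
        "monmap_size": len(status["monmap"]["mons"]),
    }


# Hypothetical, trimmed sample that reuses the field names from the output above.
sample = json.dumps({
    "name": "host01",
    "rank": 0,
    "state": "leader",
    "quorum": [0, 1, 2],
    "monmap": {"mons": [{"name": "host01"}, {"name": "host02"}, {"name": "host03"}]},
})

summary = summarize_mon_status(sample)
print(summary["name"], summary["state"], summary["in_quorum"])
```

Comparing quorum_size with monmap_size shows at a glance whether any monitor is missing from the quorum.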
Alternatively, specify the Ceph daemon by using its socket file:

Syntax

ceph daemon /var/run/ceph/SOCKET_FILE COMMAND

To view the status of a Ceph OSD named osd.0 on the specific host:

Example

[ceph: root@host01 /]# ceph daemon /var/run/ceph/ceph-osd.0.asok status
{ "cluster_fsid": "9029b252-1668-11ee-9399-001a4a000429", "osd_fsid": "1de9b064-b7a5-4c54-9395-02ccda637d21", "whoami": 0, "state": "active", "oldest_map": 1, "newest_map": 58, "num_pgs": 33 }

Note

You can use help instead of status for the various options that are available for the specific daemon.

To list all socket files for the Ceph processes:

Example

[ceph: root@host01 /]# ls /var/run/ceph

Additional Resources

- See the Red Hat Ceph Storage Troubleshooting Guide for more information.

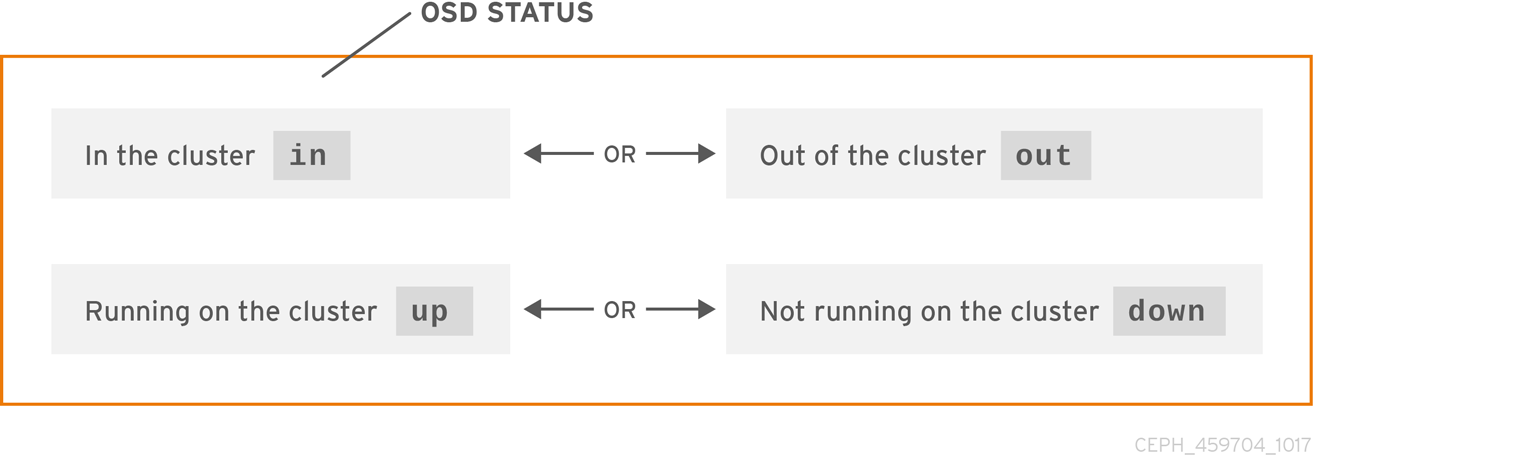

3.1.9. Understanding the Ceph OSD status

A Ceph OSD is either in the storage cluster or out of the storage cluster, and it is either up and running or down and not running. If a Ceph OSD is up, it can be either in the storage cluster, where data can be read and written, or out of the storage cluster. If it was in the storage cluster and recently moved out, Ceph starts migrating placement groups to other Ceph OSDs. If a Ceph OSD is out of the storage cluster, CRUSH does not assign placement groups to it. If a Ceph OSD is down, it should also be out.

If a Ceph OSD is down and in, there is a problem, and the storage cluster will not be in a healthy state.

If you execute a command such as ceph health, ceph -s or ceph -w, you might notice that the storage cluster does not always echo back HEALTH_OK. Do not panic. With respect to Ceph OSDs, the storage cluster does not echo HEALTH_OK in a few expected circumstances:

- You have not started the storage cluster yet, and it is not responding.

- You have just started or restarted the storage cluster, and it is not ready yet, because the placement groups are getting created and the Ceph OSDs are in the process of peering.

- You just added or removed a Ceph OSD.

- You just modified the storage cluster map.

An important aspect of monitoring Ceph OSDs is ensuring that, when the storage cluster is up and running, all Ceph OSDs that are in the storage cluster are also up and running.

To see if all OSDs are running, execute:

Example

[ceph: root@host01 /]# ceph osd stat

or

Example

[ceph: root@host01 /]# ceph osd dump

The result should tell you the map epoch, eNNNN, the total number of OSDs, x, how many, y, are up, and how many, z, are in:

eNNNN: x osds: y up, z in
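The summary line can be checked with a short script. The following is a minimal sketch that assumes the line has exactly the form shown above. Newer releases might print additional details, in which case the hypothetical pattern OSD_STAT_RE needs adjusting. The sample line uses made-up values.

```python
import re
from typing import Dict

# Matches the summary form shown above: eNNNN: x osds: y up, z in
OSD_STAT_RE = re.compile(
    r"e(?P<epoch>\d+):\s*(?P<total>\d+)\s+osds:\s*"
    r"(?P<num_up>\d+)\s+up,\s*(?P<num_in>\d+)\s+in"
)


def parse_osd_stat(line: str) -> Dict[str, int]:
    """Parse the OSD summary line into integer fields."""
    match = OSD_STAT_RE.search(line)
    if match is None:
        raise ValueError(f"unrecognized osd stat line: {line!r}")
    return {key: int(value) for key, value in match.groupdict().items()}


def min_osds_down_but_in(stat: Dict[str, int]) -> int:
    """Lower bound on OSDs that are in the cluster but not up."""
    return max(stat["num_in"] - stat["num_up"], 0)


stat = parse_osd_stat("e1234: 12 osds: 11 up, 12 in")  # hypothetical values
print(stat["total"], stat["num_up"], stat["num_in"], min_osds_down_but_in(stat))
# prints: 12 11 12 1
```

A nonzero result indicates that at least that many OSDs are in the storage cluster but not running.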

If the number of Ceph OSDs that are in the storage cluster is greater than the number of Ceph OSDs that are up, execute the following command to identify the ceph-osd daemons that are not running:

Example

[ceph: root@host01 /]# ceph osd tree
# id    weight  type name       up/down reweight
-1      3       pool default
-3      3               rack mainrack
-2      3                       host osd-host
0       1                               osd.0   up      1
1       1                               osd.1   up      1
2       1                               osd.2   up      1

The ability to search through a well-designed CRUSH hierarchy can help you troubleshoot the storage cluster by identifying the physical locations faster.

If a Ceph OSD is down, connect to the node and start it. You can use Red Hat Storage Console to restart the Ceph OSD daemon, or you can use the command line.

Syntax

systemctl start CEPH_OSD_SERVICE_ID

Example

[root@host01 ~]# systemctl start ceph-499829b4-832f-11eb-8d6d-001a4a000635@osd.6.service

Additional Resources

- See the Red Hat Ceph Storage Dashboard Guide for more details.

3.2. Low-level monitoring of a Ceph storage cluster

As a storage administrator, you can monitor the health of a Red Hat Ceph Storage cluster from a low-level perspective. Low-level monitoring typically involves ensuring that Ceph OSDs are peering properly. When peering faults occur, placement groups operate in a degraded state. This degraded state can be the result of many different things, such as hardware failure, a hung or crashed Ceph daemon, network latency, or a complete site outage.

3.2.1. Monitoring Placement Group Sets

When CRUSH assigns placement groups to Ceph OSDs, it looks at the number of replicas for the pool and assigns the placement group to Ceph OSDs such that each replica of the placement group gets assigned to a different Ceph OSD. For example, if the pool requires three replicas of a placement group, CRUSH may assign them to osd.1, osd.2 and osd.3 respectively. CRUSH actually seeks a pseudo-random placement that will take into account failure domains you set in the CRUSH map, so you will rarely see placement groups assigned to nearest neighbor Ceph OSDs in a large cluster. We refer to the set of Ceph OSDs that should contain the replicas of a particular placement group as the Acting Set. In some cases, an OSD in the Acting Set is down or otherwise not able to service requests for objects in the placement group. When these situations arise, do not panic. Common examples include:

- You added or removed an OSD. Then, CRUSH reassigned the placement group to other Ceph OSDs, thereby changing the composition of the acting set and spawning the migration of data with a "backfill" process.

- A Ceph OSD was down, was restarted and is now recovering.

- A Ceph OSD in the acting set is down or unable to service requests, and another Ceph OSD has temporarily assumed its duties.

Ceph processes a client request using the Up Set, which is the set of Ceph OSDs that actually handle the requests. In most cases, the Up Set and the Acting Set are identical. When they are not, it can indicate that Ceph is migrating data, that a Ceph OSD is recovering, or that there is a problem. In such scenarios, Ceph usually echoes a HEALTH_WARN state with a "stuck stale" message.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

Log into the Cephadm shell:

Example

[root@host01 ~]# cephadm shell

To retrieve a list of placement groups:

Example

[ceph: root@host01 /]# ceph pg dump

View which Ceph OSDs are in the Acting Set or in the Up Set for a given placement group:

Syntax

ceph pg map PG_NUM

Example

[ceph: root@host01 /]# ceph pg map 128

Note

If the Up Set and Acting Set do not match, this might indicate that the storage cluster is rebalancing itself or that there is a potential problem with the storage cluster.

3.2.2. Ceph OSD peering

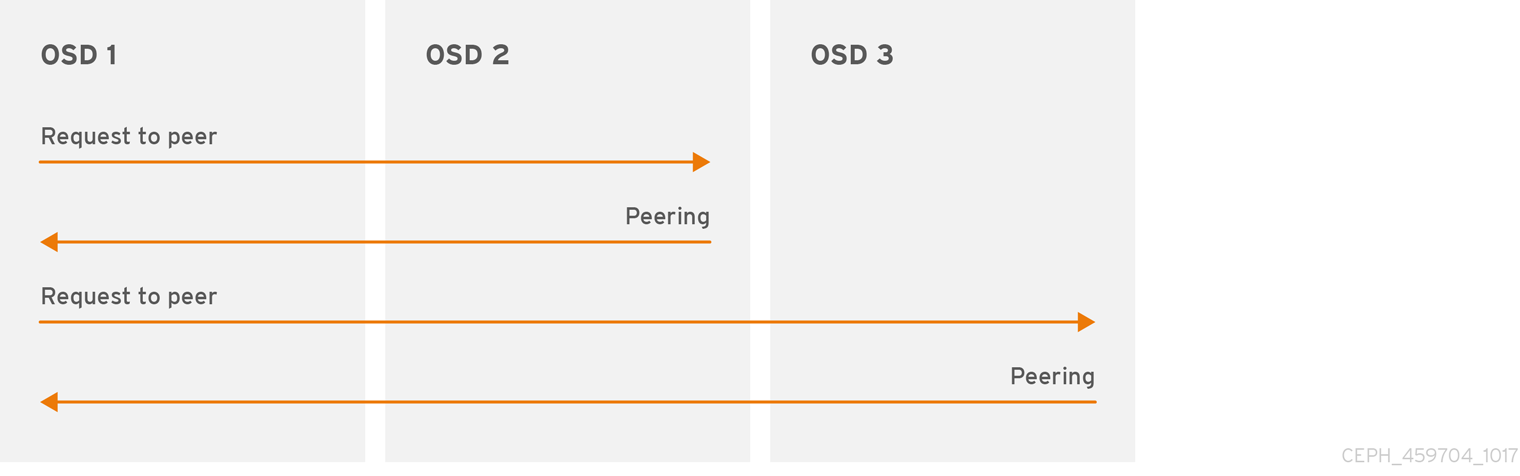

Before you can write data to a placement group, it must be in an active state, and it should be in a clean state. For Ceph to determine the current state of a placement group, the primary OSD of the placement group that is, the first OSD in the acting set, peers with the secondary and tertiary OSDs to establish agreement on the current state of the placement group. Assuming a pool with three replicas of the PG.

Figure 3.1. Peering

3.2.3. Placement Group States

If you execute a command such as ceph health, ceph -s or ceph -w, you may notice that the cluster does not always echo back HEALTH_OK. After you check to see if the OSDs are running, you should also check placement group states. You should expect that the cluster will NOT echo HEALTH_OK in a number of placement group peering-related circumstances:

- You have just created a pool and placement groups haven’t peered yet.

- The placement groups are recovering.

- You have just added an OSD to or removed an OSD from the cluster.

- You have just modified the CRUSH map and the placement groups are migrating.

- There is inconsistent data in different replicas of a placement group.

- Ceph is scrubbing a placement group’s replicas.

- Ceph doesn’t have enough storage capacity to complete backfilling operations.

If one of the foregoing circumstances causes Ceph to echo HEALTH_WARN, don’t panic. In many cases, the cluster will recover on its own. In some cases, you may need to take action. An important aspect of monitoring placement groups is ensuring that, when the cluster is up and running, all placement groups are active, and preferably in the clean state.

To see the status of all placement groups, execute:

Example

[ceph: root@host01 /]# ceph pg stat

The result should tell you the placement group map version, vNNNNNN, the total number of placement groups, x, and how many placement groups, y, are in a particular state such as active+clean:

vNNNNNN: x pgs: y active+clean; z bytes data, aa MB used, bb GB / cc GB avail

It is common for Ceph to report multiple states for placement groups.

Snapshot Trimming PG States

When snapshots exist, two additional PG states will be reported.

- snaptrim: The PGs are currently being trimmed.

- snaptrim_wait: The PGs are waiting to be trimmed.

Example Output:

244 active+clean+snaptrim_wait
32 active+clean+snaptrim
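Because Ceph reports combined states joined with a plus sign, counting placement groups in a single state means splitting each combined state. The following sketch assumes one "COUNT STATE+STATE" entry per line, as in the example output. The function names are hypothetical.

```python
from typing import Dict


def parse_pg_state_counts(text: str) -> Dict[str, int]:
    """Map each combined state string to its placement group count."""
    counts: Dict[str, int] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        count, states = line.split(None, 1)
        counts[states] = int(count)
    return counts


def count_pgs_in_state(counts: Dict[str, int], state: str) -> int:
    """Sum the PGs whose combined state includes the given single state."""
    return sum(
        number
        for combined, number in counts.items()
        if state in combined.split("+")  # exact match, so snaptrim != snaptrim_wait
    )


counts = parse_pg_state_counts(
    "244 active+clean+snaptrim_wait\n32 active+clean+snaptrim"
)
print(count_pgs_in_state(counts, "snaptrim"))       # prints 32
print(count_pgs_in_state(counts, "snaptrim_wait"))  # prints 244
print(count_pgs_in_state(counts, "active"))         # prints 276
```

Splitting on the plus sign before comparing avoids counting snaptrim_wait placement groups as snaptrim.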

In addition to the placement group states, Ceph also echoes back the amount of data used, aa, the amount of storage capacity remaining, bb, and the total storage capacity, cc. These numbers can be important in a few cases:

- You are reaching the near full ratio or full ratio.

- Your data isn’t getting distributed across the cluster due to an error in the CRUSH configuration.

Placement Group IDs

Placement group IDs consist of the pool number, and not the pool name, followed by a period (.) and the placement group ID—a hexadecimal number. You can view pool numbers and their names from the output of ceph osd lspools. The default pool names data, metadata and rbd correspond to pool numbers 0, 1 and 2 respectively. A fully qualified placement group ID has the following form:

Syntax

POOL_NUM.PG_ID

Example output:

0.1f
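To make the format concrete, the following sketch splits a fully qualified placement group ID into its pool number and its placement group number, interpreting the second part as hexadecimal as described above. The function name parse_pg_id is hypothetical.

```python
from typing import Tuple


def parse_pg_id(pg_id: str) -> Tuple[int, int]:
    """Split POOL_NUM.PG_ID into (pool number, PG number as an integer)."""
    pool_part, separator, pg_part = pg_id.partition(".")
    if not separator or not pool_part.isdigit():
        raise ValueError(f"not a fully qualified placement group ID: {pg_id!r}")
    # The PG part is hexadecimal; int() raises ValueError if it is malformed.
    return int(pool_part), int(pg_part, 16)


print(parse_pg_id("0.1f"))  # prints (0, 31)
print(parse_pg_id("5.fe"))  # prints (5, 254)
```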

To retrieve a list of placement groups:

Example

[ceph: root@host01 /]# ceph pg dump

To format the output in JSON format and save it to a file:

Syntax

ceph pg dump -o FILE_NAME --format=json

Example

[ceph: root@host01 /]# ceph pg dump -o test --format=json

Query a particular placement group:

Syntax

ceph pg POOL_NUM.PG_ID query

Example

[ceph: root@host01 /]# ceph pg 5.fe query { "snap_trimq": "[]", "snap_trimq_len": 0, "state": "active+clean", "epoch": 2449, "up": [ 3, 8, 10 ], "acting": [ 3, 8, 10 ], "acting_recovery_backfill": [ "3", "8", "10" ], "info": { "pgid": "5.ff", "last_update": "0'0", "last_complete": "0'0", "log_tail": "0'0", "last_user_version": 0, "last_backfill": "MAX", "purged_snaps": [], "history": { "epoch_created": 114, "epoch_pool_created": 82, "last_epoch_started": 2402, "last_interval_started": 2401, "last_epoch_clean": 2402, "last_interval_clean": 2401, "last_epoch_split": 114, "last_epoch_marked_full": 0, "same_up_since": 2401, "same_interval_since": 2401, "same_primary_since": 2086, "last_scrub": "0'0", "last_scrub_stamp": "2021-06-17T01:32:03.763988+0000", "last_deep_scrub": "0'0", "last_deep_scrub_stamp": "2021-06-17T01:32:03.763988+0000", "last_clean_scrub_stamp": "2021-06-17T01:32:03.763988+0000", "prior_readable_until_ub": 0 }, "stats": { "version": "0'0", "reported_seq": "2989", "reported_epoch": "2449", "state": "active+clean", "last_fresh": "2021-06-18T05:16:59.401080+0000", "last_change": "2021-06-17T01:32:03.764162+0000", "last_active": "2021-06-18T05:16:59.401080+0000", ....

Additional Resources

- See the chapter Object Storage Daemon (OSD) configuration options in the OSD Object storage daemon configuration options section in Red Hat Ceph Storage Configuration Guide for more details on the snapshot trimming settings.

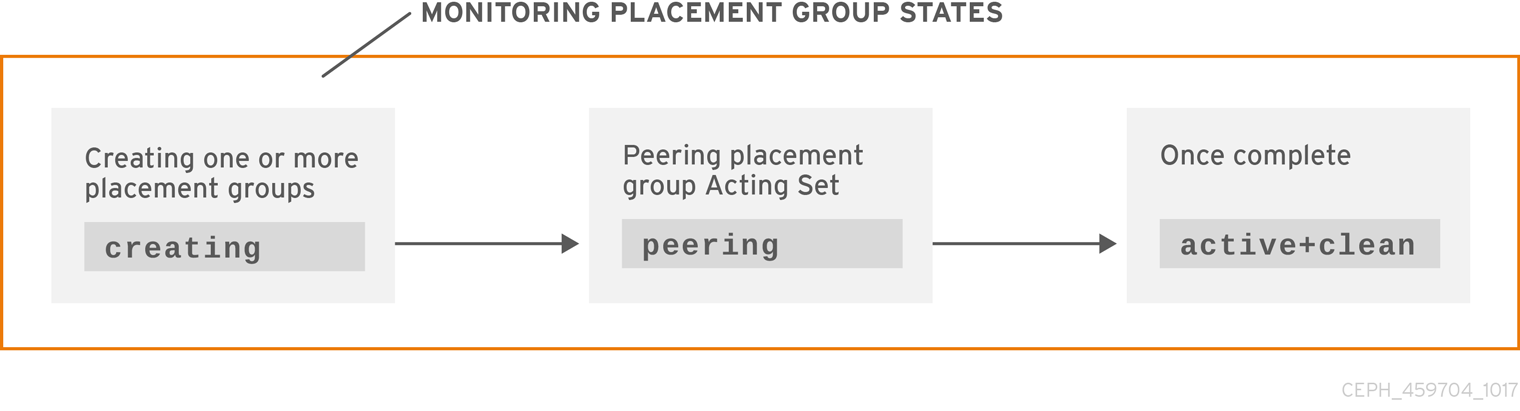

3.2.4. Placement Group creating state

When you create a pool, it will create the number of placement groups you specified. Ceph will echo creating when it is creating one or more placement groups. Once they are created, the OSDs that are part of a placement group’s Acting Set will peer. Once peering is complete, the placement group status should be active+clean, which means a Ceph client can begin writing to the placement group.

3.2.5. Placement group peering state

When Ceph is Peering a placement group, Ceph is bringing the OSDs that store the replicas of the placement group into agreement about the state of the objects and metadata in the placement group. When Ceph completes peering, this means that the OSDs that store the placement group agree about the current state of the placement group. However, completion of the peering process does NOT mean that each replica has the latest contents.

Authoritative History

Ceph will NOT acknowledge a write operation to a client, until all OSDs of the acting set persist the write operation. This practice ensures that at least one member of the acting set will have a record of every acknowledged write operation since the last successful peering operation.

With an accurate record of each acknowledged write operation, Ceph can construct and disseminate a new authoritative history of the placement group: a complete and fully ordered set of operations that, if performed, would bring an OSD’s copy of a placement group up to date.

3.2.6. Placement group active state

Once Ceph completes the peering process, a placement group may become active. The active state means that the data in the placement group is generally available in the primary placement group and the replicas for read and write operations.

3.2.7. Placement Group clean state

When a placement group is in the clean state, the primary OSD and the replica OSDs have successfully peered and there are no stray replicas for the placement group. Ceph replicated all objects in the placement group the correct number of times.

3.2.8. Placement Group degraded state

When a client writes an object to the primary OSD, the primary OSD is responsible for writing the replicas to the replica OSDs. After the primary OSD writes the object to storage, the placement group will remain in a degraded state until the primary OSD has received an acknowledgement from the replica OSDs that Ceph created the replica objects successfully.

The reason a placement group can be active+degraded is that an OSD may be active even though it doesn’t hold all of the objects yet. If an OSD goes down, Ceph marks each placement group assigned to the OSD as degraded. The Ceph OSDs must peer again when the Ceph OSD comes back online. However, a client can still write a new object to a degraded placement group if it is active.

If an OSD is down and the degraded condition persists, Ceph may mark the down OSD as out of the cluster and remap the data from the down OSD to another OSD. The time between being marked down and being marked out is controlled by mon_osd_down_out_interval, which is set to 600 seconds by default.

A placement group can also be degraded, because Ceph cannot find one or more objects that Ceph thinks should be in the placement group. While you cannot read or write to unfound objects, you can still access all of the other objects in the degraded placement group.

For example, suppose there are nine OSDs in a three-way replica pool. If OSD number 9 goes down, the PGs assigned to OSD 9 go into a degraded state. If OSD 9 does not recover, it goes out of the storage cluster and the storage cluster rebalances. In that scenario, the PGs are degraded and then recover to an active state.

3.2.9. Placement Group recovering state

Ceph was designed for fault-tolerance at a scale where hardware and software problems are ongoing. When an OSD goes down, its contents may fall behind the current state of other replicas in the placement groups. When the OSD is back up, the contents of the placement groups must be updated to reflect the current state. During that time period, the OSD may reflect a recovering state.

Recovery is not always trivial, because a hardware failure might cause a cascading failure of multiple Ceph OSDs. For example, a network switch for a rack or cabinet may fail, which can cause the OSDs of a number of host machines to fall behind the current state of the storage cluster. Each one of the OSDs must recover once the fault is resolved.

Ceph provides a number of settings to balance the resource contention between new service requests and the need to recover data objects and restore the placement groups to the current state. The osd recovery delay start setting allows an OSD to restart, re-peer and even process some replay requests before starting the recovery process. The osd recovery threads setting limits the number of threads for the recovery process, by default one thread. The osd recovery thread timeout sets a thread timeout, because multiple Ceph OSDs can fail, restart and re-peer at staggered rates. The osd recovery max active setting limits the number of recovery requests a Ceph OSD works on simultaneously to prevent the Ceph OSD from failing to serve requests. The osd recovery max chunk setting limits the size of the recovered data chunks to prevent network congestion.

3.2.10. Back fill state

When a new Ceph OSD joins the storage cluster, CRUSH will reassign placement groups from OSDs in the cluster to the newly added Ceph OSD. Forcing the new OSD to accept the reassigned placement groups immediately can put excessive load on the new Ceph OSD. Backfilling the OSD with the placement groups allows this process to begin in the background. Once backfilling is complete, the new OSD will begin serving requests when it is ready.

During the backfill operations, you might see one of several states:

- backfill_wait indicates that a backfill operation is pending, but isn’t underway yet.

- backfill indicates that a backfill operation is underway.

- backfill_too_full indicates that a backfill operation was requested, but couldn’t be completed due to insufficient storage capacity.

When a placement group cannot be backfilled, it can be considered incomplete.

Ceph provides a number of settings to manage the load spike associated with reassigning placement groups to a Ceph OSD, especially a new Ceph OSD. By default, osd_max_backfills sets the maximum number of concurrent backfills to or from a Ceph OSD to 10. The osd backfill full ratio enables a Ceph OSD to refuse a backfill request if the OSD is approaching its full ratio, by default 85%. If an OSD refuses a backfill request, the osd backfill retry interval enables an OSD to retry the request, by default after 10 seconds. OSDs can also set osd backfill scan min and osd backfill scan max to manage scan intervals, by default 64 and 512.

For some workloads, it is beneficial to avoid regular recovery entirely and use backfill instead. Since backfilling occurs in the background, this allows I/O to proceed on the objects in the OSD. You can force a backfill rather than a recovery by setting the osd_min_pg_log_entries option to 1, and setting the osd_max_pg_log_entries option to 2. Contact your Red Hat Support account team for details on when this situation is appropriate for your workload.

3.2.11. Placement Group remapped state

When the Acting Set that services a placement group changes, the data migrates from the old acting set to the new acting set. It may take some time for a new primary OSD to service requests. So it may ask the old primary to continue to service requests until the placement group migration is complete. Once data migration completes, the mapping uses the primary OSD of the new acting set.

3.2.12. Placement Group stale state

While Ceph uses heartbeats to ensure that hosts and daemons are running, the ceph-osd daemons can also get into a stuck state where they do not report statistics in a timely manner, for example, because of a temporary network fault. By default, OSD daemons report their placement group, up thru, boot and failure statistics every half second (0.5), which is more frequent than the heartbeat thresholds. If the primary OSD of a placement group’s acting set fails to report to the monitor, or if other OSDs have reported the primary OSD down, the monitors mark the placement group stale.

When you start the storage cluster, it is common to see the stale state until the peering process completes. After the storage cluster has been running for a while, seeing placement groups in the stale state indicates that the primary OSD for those placement groups is down or not reporting placement group statistics to the monitor.

3.2.13. Placement Group misplaced state

There are some temporary backfilling scenarios where a PG gets mapped temporarily to an OSD. When that temporary situation no longer applies, the PGs might still reside in the temporary location and not in the proper location. In that case, they are said to be misplaced, because the correct number of copies exists, but one or more copies are in the wrong place.

For example, there are 3 OSDs: 0,1,2 and all PGs map to some permutation of those three. If you add another OSD (OSD 3), some PGs will now map to OSD 3 instead of one of the others. However, until OSD 3 is backfilled, the PG will have a temporary mapping allowing it to continue to serve I/O from the old mapping. During that time, the PG is misplaced, because it has a temporary mapping, but not degraded, since there are 3 copies.

Example

pg 1.5: up=acting: [0,1,2]

ADD_OSD_3

pg 1.5: up: [0,3,1] acting: [0,1,2]

[0,1,2] is a temporary mapping, so the up set is not equal to the acting set and the PG is misplaced but not degraded since [0,1,2] is still three copies.

Example

pg 1.5: up=acting: [0,3,1]

OSD 3 is now backfilled and the temporary mapping is removed, so the PG is neither degraded nor misplaced.
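The reasoning in this example can be written as a small check. The following sketch is a simplification of the example only, not how Ceph computes placement group states internally. It treats a PG as misplaced when the up set differs from the acting set, and as degraded when the acting set holds fewer copies than the pool size. The function name classify_pg and the pool_size parameter are hypothetical.

```python
from typing import List


def classify_pg(up: List[int], acting: List[int], pool_size: int) -> List[str]:
    """Return the flags from the example above that apply to a PG."""
    flags = []
    if len(acting) < pool_size:
        # Fewer copies are being served than the pool requires.
        flags.append("degraded")
    if up != acting:
        # A temporary mapping is in effect, so data is not yet where CRUSH wants it.
        flags.append("misplaced")
    return flags


# After adding OSD 3: up=[0,3,1], acting=[0,1,2] is the temporary mapping.
print(classify_pg([0, 3, 1], [0, 1, 2], pool_size=3))  # prints ['misplaced']

# After backfill completes: up and acting agree on [0,3,1].
print(classify_pg([0, 3, 1], [0, 3, 1], pool_size=3))  # prints []
```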

3.2.14. Placement Group incomplete state

A PG goes into an incomplete state when there is incomplete content and peering fails, that is, when there are no complete OSDs which are current enough to perform recovery.

Suppose OSD 1, 2, and 3 are the acting OSD set and it switches to OSD 1, 4, and 3. In that case, osd.1 requests a temporary acting set of OSD 1, 2, and 3 while backfilling 4. During this time, if OSD 1, 2, and 3 all go down, osd.4 is the only one left, and it might not have fully backfilled all the data. At this time, the PG goes incomplete, indicating that there are no complete OSDs which are current enough to perform recovery.

Alternatively, if osd.4 is not involved and the acting set is simply OSD 1, 2, and 3 when OSD 1, 2, and 3 go down, the PG would likely go stale, indicating that the mons have not heard anything on that PG since the acting set changed. This happens because there are no OSDs left to notify the new OSDs.

3.2.15. Identifying stuck Placement Groups

A placement group is not necessarily problematic just because it is not in an active+clean state. However, when placement groups get stuck, Ceph’s ability to self-repair might not be working. The stuck states include:

- Unclean: Placement groups contain objects that are not replicated the desired number of times. They should be recovering.

- Inactive: Placement groups cannot process reads or writes because they are waiting for an OSD with the most up-to-date data to come back up.

- Stale: Placement groups are in an unknown state, because the OSDs that host them have not reported to the monitor cluster in a while. This interval can be configured with the mon osd report timeout setting.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To identify stuck placement groups, execute the following:

Syntax

ceph pg dump_stuck {inactive|unclean|stale|undersized|degraded [inactive|unclean|stale|undersized|degraded...]} {<int>}

Example

[ceph: root@host01 /]# ceph pg dump_stuck stale
OK
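If you script this check, validating the state names before invoking the command can catch typos early. The following sketch only builds the argument list and does not run anything. It assumes that the optional integer in the syntax above is a threshold value, and it uses the hypothetical helper name build_dump_stuck_command.

```python
import shlex
from typing import List, Optional, Sequence

# State names taken from the syntax line above.
VALID_STUCK_STATES = ("inactive", "unclean", "stale", "undersized", "degraded")


def build_dump_stuck_command(
    states: Sequence[str], threshold: Optional[int] = None
) -> List[str]:
    """Build the argument list for ceph pg dump_stuck after validating states."""
    unknown = [state for state in states if state not in VALID_STUCK_STATES]
    if unknown:
        raise ValueError(f"unknown stuck state(s): {', '.join(unknown)}")
    argv = ["ceph", "pg", "dump_stuck", *states]
    if threshold is not None:
        # Assumption: the trailing integer in the syntax is a threshold value.
        argv.append(str(threshold))
    return argv


print(shlex.join(build_dump_stuck_command(["stale"])))
# prints: ceph pg dump_stuck stale
```

The resulting list can be passed to a process runner inside the Cephadm shell.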

3.2.16. Finding an object’s location

The Ceph client retrieves the latest cluster map and the CRUSH algorithm calculates how to map the object to a placement group, and then calculates how to assign the placement group to an OSD dynamically.

Prerequisites

- A running Red Hat Ceph Storage cluster.

- Root-level access to the node.

Procedure

To find the object location, all you need is the object name and the pool name:

Syntax

ceph osd map POOL_NAME OBJECT_NAME

Example

[ceph: root@host01 /]# ceph osd map mypool myobject

Chapter 4. Stretch clusters for Ceph storage

As a storage administrator, you can configure stretch clusters by entering stretch mode with 2-site clusters.

Red Hat Ceph Storage can withstand the loss of Ceph OSDs because it expects its network and cluster to be equally reliable, with failures randomly distributed across the CRUSH map. If a number of OSDs are shut down, the remaining OSDs and monitors still manage to operate.

However, this might not be the best solution for some stretched cluster configurations where a significant part of the Ceph cluster can use only a single network component. The example is a single cluster located in multiple data centers, for which the user wants to sustain a loss of a full data center.

The standard configuration is with two data centers. Other configurations are in clouds or availability zones. Each site holds two copies of the data; therefore, the replication size is four. The third site should have a tiebreaker monitor, which can be a virtual machine or have higher latency than the main sites. This monitor chooses one of the sites to restore data if the network connection fails and both data centers remain active.

The standard Ceph configuration survives many failures of the network or data centers and it never compromises data consistency. If you restore enough Ceph servers following a failure, it recovers. Ceph maintains availability if you lose a data center, but can still form a quorum of monitors and have all the data available with enough copies to satisfy pools’ min_size, or CRUSH rules that replicate again to meet the size.
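The role of the tiebreaker monitor can be checked with simple majority arithmetic. The following Python sketch assumes the layout recommended later in this chapter (two monitors per data center plus one tiebreaker) and compares it with a hypothetical layout without a tiebreaker. The function names and the dictionary layout are illustrative only.

```python
def has_quorum(alive_monitors, total_monitors):
    """A monitor quorum needs a strict majority of all monitors."""
    return alive_monitors > total_monitors // 2


# Assumed layout: two monitors per data center plus one tiebreaker.
MONITORS = {"DC1": 2, "DC2": 2, "tiebreaker": 1}


def quorum_after_losing(site, monitors=MONITORS):
    """Report whether the surviving monitors still form a quorum."""
    total = sum(monitors.values())
    alive = total - monitors[site]
    return has_quorum(alive, total)


if __name__ == "__main__":
    print("with tiebreaker:", quorum_after_losing("DC1"))
    print("without tiebreaker:", quorum_after_losing("DC1", {"DC1": 2, "DC2": 2}))
```

With the tiebreaker, three of five monitors survive the loss of one data center, which is still a majority. Without it, two of four survive, which is not.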

There are no additional steps to power down a stretch cluster. See Powering down and rebooting Red Hat Ceph Storage cluster for more information.

Stretch cluster failures

Red Hat Ceph Storage never compromises on data integrity and consistency. If there is a network failure or a loss of nodes and the services can still be restored, Ceph returns to normal functionality on its own.

However, there are situations where you lose data availability even if you have enough servers available to meet Ceph’s consistency and sizing constraints, or where you unexpectedly do not meet the constraints.

The first important type of failure is caused by inconsistent networks. If there is a network split, Ceph might be unable to mark an OSD as down to remove it from the acting placement group (PG) sets, despite the primary OSD being unable to replicate data. When this happens, I/O is not permitted because Ceph cannot meet its durability guarantees.

The second important category of failures is when it appears that you have data replicated across data centers, but the constraints are not sufficient to guarantee this. For example, you might have data centers A and B, and the CRUSH rule targets three copies and places a copy in each data center with a min_size of 2. The PG might go active with two copies in site A and no copies in site B, which means that if you lose site A, you lose the data and Ceph cannot operate on it. This situation is difficult to avoid with standard CRUSH rules.
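The second failure category can be made concrete with a short Python sketch. It models a placement group only as the list of sites that currently hold a copy; the function names are hypothetical, and real peering involves far more than a copy count.

```python
def can_go_active(copy_sites, min_size):
    """A PG can go active once it has at least min_size copies."""
    return len(copy_sites) >= min_size


def data_survives(copy_sites, lost_site):
    """Data survives a site loss only if some copy lives elsewhere."""
    return any(site != lost_site for site in copy_sites)


if __name__ == "__main__":
    # Two copies, both in site A: enough for min_size 2, but not site-safe.
    copies = ["A", "A"]
    print("active:", can_go_active(copies, min_size=2))
    print("survives loss of A:", data_survives(copies, lost_site="A"))
```

The placement ["A", "A"] satisfies min_size 2 and yet does not survive the loss of site A, which is the gap that stretch mode is meant to close.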

4.1. Stretch mode for a storage cluster

To configure stretch clusters, you must enter the stretch mode. When stretch mode is enabled, the Ceph OSDs only take PGs as active when they peer across data centers, or whichever other CRUSH bucket type you specified, assuming both are active. Pools increase in size from the default three to four, with two copies on each site.

In stretch mode, Ceph OSDs are only allowed to connect to monitors within the same data center. New monitors are not allowed to join the cluster without a specified location.

If all the OSDs and monitors from a data center become inaccessible at once, the surviving data center will enter a degraded stretch mode. This issues a warning, reduces the min_size to 1, and allows the cluster to reach an active state with the data from the remaining site.

The degraded state also triggers warnings that the pools are too small, because the pool size does not get changed. However, a special stretch mode flag prevents the OSDs from creating extra copies in the remaining data center, therefore it still keeps 2 copies.

When the missing data center becomes accessible again, the cluster enters recovery stretch mode. This changes the warning and allows peering, but still requires only the OSDs from the data center that was up the whole time.

When all PGs are in a known state and are not degraded or incomplete, the cluster goes back to the regular stretch mode, ends the warning, and restores min_size to its starting value of 2. The cluster again requires both sites to peer, not only the site that stayed up the whole time, so you can fail over to the other site, if necessary.
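The transitions between regular, degraded, and recovery stretch mode can be summarized as a small state machine. The following Python sketch is a simplified model for illustration only: the class and method names are hypothetical, and it tracks nothing except the mode and the effective min_size described in this section.

```python
NORMAL = "stretch"
DEGRADED = "degraded stretch"
RECOVERY = "recovery stretch"


class StretchCluster:
    """Toy model of the stretch mode transitions described in this section."""

    def __init__(self):
        self.mode = NORMAL
        self.min_size = 2

    def site_lost(self):
        # All OSDs and monitors of one data center become inaccessible.
        if self.mode == NORMAL:
            self.mode = DEGRADED
            self.min_size = 1

    def site_returned(self):
        # The missing data center is reachable again; min_size stays at 1.
        if self.mode == DEGRADED:
            self.mode = RECOVERY

    def all_pgs_healthy(self):
        # All PGs are known and none are degraded or incomplete.
        if self.mode == RECOVERY:
            self.mode = NORMAL
            self.min_size = 2


if __name__ == "__main__":
    cluster = StretchCluster()
    for event in (cluster.site_lost, cluster.site_returned, cluster.all_pgs_healthy):
        event()
        print(f"{event.__name__}: mode={cluster.mode}, min_size={cluster.min_size}")
```

Note that min_size returns to 2 only on the last transition, not when the missing data center first comes back.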

Stretch mode limitations

- It is not possible to exit from stretch mode once it is entered.

- You cannot use erasure-coded pools with clusters in stretch mode. You can neither enter the stretch mode with erasure-coded pools, nor create an erasure-coded pool when the stretch mode is active.

- Stretch mode supports no more than two sites.

- The weights of the two sites should be the same. If they are not, you receive the following error:

Example

[ceph: root@host01 /]# ceph mon enable_stretch_mode host05 stretch_rule datacenter
Error EINVAL: the 2 datacenter instances in the cluster have differing weights 25947 and 15728 but stretch mode currently requires they be the same!

To achieve same weights on both sites, the Ceph OSDs deployed in the two sites should be of equal size, that is, storage capacity in the first site is equivalent to storage capacity in the second site.

- While it is not enforced, you should run two Ceph monitors on each site and a tiebreaker, for a total of five. This is because OSDs can only connect to monitors in their own site when in stretch mode.

- You must create your own CRUSH rule that provides two copies on each site, for a total of four copies across both sites.

- You cannot enable stretch mode if you have existing pools with non-default size or min_size.

- Because the cluster runs with min_size 1 when degraded, you should only use stretch mode with all-flash OSDs. This minimizes the time needed to recover once connectivity is restored, and minimizes the potential for data loss.
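The equal-weight limitation can be checked before you attempt to enable stretch mode. The following Python sketch is a hypothetical pre-flight check, not part of Ceph: it assumes you have already collected the CRUSH weight of each datacenter bucket into a dictionary, and the function name and error wording are illustrative.

```python
def check_site_weights(site_weights):
    """Raise ValueError unless exactly two sites have identical weights.

    site_weights maps a datacenter bucket name to its CRUSH weight.
    """
    if len(site_weights) != 2:
        raise ValueError(f"expected exactly 2 sites, got {len(site_weights)}")
    (name_a, weight_a), (name_b, weight_b) = site_weights.items()
    if weight_a != weight_b:
        raise ValueError(
            f"{name_a} and {name_b} have differing weights "
            f"{weight_a} and {weight_b}"
        )
    return True


if __name__ == "__main__":
    # The weights from the error message shown in the limitations list.
    try:
        check_site_weights({"DC1": 25947, "DC2": 15728})
    except ValueError as err:
        print("not ready for stretch mode:", err)
```

Running the check with the two weights from the error example reports the mismatch without touching the cluster.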

Additional Resources

- See Troubleshooting clusters in stretch mode for troubleshooting steps.

4.1.1. Setting the crush location for the daemons

Before you enter the stretch mode, you need to prepare the cluster by setting the CRUSH location for the daemons in the Red Hat Ceph Storage cluster. There are two ways to do this:

- Bootstrap the cluster through a service configuration file, where the locations are added to the hosts as part of deployment.

- Set the locations manually through the ceph osd crush add-bucket and ceph osd crush move commands after the cluster is deployed.

Method 1: Bootstrapping the cluster

Prerequisites

- Root-level access to the nodes.

Procedure

If you are bootstrapping your new storage cluster, you can create the service configuration .yaml file that adds the nodes to the Red Hat Ceph Storage cluster and also sets specific labels for where the services should run:

Example

service_type: host
addr: host01
hostname: host01
location:
  root: default
  datacenter: DC1
labels:
  - osd
  - mon
  - mgr
---
service_type: host
addr: host02
hostname: host02
location:
  datacenter: DC1
labels:
  - osd
  - mon
---
service_type: host
addr: host03
hostname: host03
location:
  datacenter: DC1
labels:
  - osd
  - mds
  - rgw
---
service_type: host
addr: host04
hostname: host04
location:
  root: default
  datacenter: DC2
labels:
  - osd
  - mon
  - mgr
---
service_type: host
addr: host05
hostname: host05
location:
  datacenter: DC2
labels:
  - osd
  - mon
---
service_type: host
addr: host06
hostname: host06
location:
  datacenter: DC2
labels:
  - osd
  - mds
  - rgw
---
service_type: host
addr: host07
hostname: host07
labels:
  - mon
---
service_type: mon
placement:
  label: "mon"
---
service_type: mds
service_id: cephfs
placement:
  label: "mds"
---
service_type: mgr
service_name: mgr
placement:
  label: "mgr"
---
service_type: osd
service_id: all-available-devices
service_name: osd.all-available-devices
placement:
  label: "osd"
spec:
  data_devices:
    all: true
---
service_type: rgw
service_id: objectgw
service_name: rgw.objectgw
placement:
  count: 2
  label: "rgw"
spec:
  rgw_frontend_port: 8080

Bootstrap the storage cluster with the --apply-spec option:

Syntax

cephadm bootstrap --apply-spec CONFIGURATION_FILE_NAME --mon-ip MONITOR_IP_ADDRESS --ssh-private-key PRIVATE_KEY --ssh-public-key PUBLIC_KEY --registry-url REGISTRY_URL --registry-username USER_NAME --registry-password PASSWORD

Example

[root@host01 ~]# cephadm bootstrap --apply-spec initial-config.yaml --mon-ip 10.10.128.68 --ssh-private-key /home/ceph/.ssh/id_rsa --ssh-public-key /home/ceph/.ssh/id_rsa.pub --registry-url registry.redhat.io --registry-username myuser1 --registry-password mypassword1

Important

You can use different command options with the cephadm bootstrap command. However, always include the --apply-spec option to use the service configuration file and configure the host locations.

Additional Resources

- See Bootstrapping a new storage cluster for more information about Ceph bootstrapping and different cephadm bootstrap command options.
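When a cluster has many hosts, the host entries of the service configuration file can be generated rather than typed by hand. The following Python sketch is one possible way to do that with plain string formatting. The function names are hypothetical, the sketch covers only the host entries (not the mon, mgr, osd, or rgw service entries), and, like the example in Method 1, it reuses the host name as the addr value.

```python
def host_spec(hostname, labels, datacenter=None, root=None):
    """Render one 'service_type: host' entry as YAML text."""
    lines = [
        "service_type: host",
        f"addr: {hostname}",
        f"hostname: {hostname}",
    ]
    location = []
    if root:
        location.append(f"  root: {root}")
    if datacenter:
        location.append(f"  datacenter: {datacenter}")
    if location:
        lines.append("location:")
        lines.extend(location)
    lines.append("labels:")
    lines.extend(f"  - {label}" for label in labels)
    return "\n".join(lines)


def hosts_document(hosts):
    """Join several host entries with the YAML document separator."""
    return "\n---\n".join(host_spec(**host) for host in hosts) + "\n"


if __name__ == "__main__":
    print(hosts_document([
        {"hostname": "host01", "labels": ["osd", "mon", "mgr"],
         "datacenter": "DC1", "root": "default"},
        {"hostname": "host07", "labels": ["mon"]},
    ]))
```

A host without a datacenter argument, such as the tiebreaker monitor host, is rendered without a location block.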

Method 2: Setting the locations after the deployment

Prerequisites

- Root-level access to the nodes.

Procedure

Add two buckets to the CRUSH map for the locations of your non-tiebreaker monitors, specifying the bucket type as datacenter:

Syntax

ceph osd crush add-bucket BUCKET_NAME BUCKET_TYPE

Example

[ceph: root@host01 /]# ceph osd crush add-bucket DC1 datacenter
[ceph: root@host01 /]# ceph osd crush add-bucket DC2 datacenter

Move the buckets under root=default:

Syntax

ceph osd crush move BUCKET_NAME root=default

Example

[ceph: root@host01 /]# ceph osd crush move DC1 root=default
[ceph: root@host01 /]# ceph osd crush move DC2 root=default

Move the OSD hosts according to the required CRUSH placement:

Syntax

ceph osd crush move HOST datacenter=DATACENTER

Example

[ceph: root@host01 /]# ceph osd crush move host01 datacenter=DC1
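The three steps of Method 2 repeat the same commands for every bucket and host, so the command list can be derived from a single host-to-data-center mapping. The following Python sketch only builds the commands and prints them; it does not run anything. The function name and the sample mapping are assumptions for illustration, while the command forms are the ones shown in the steps of Method 2.

```python
def crush_location_commands(host_to_datacenter, root="default"):
    """Build the Method 2 commands as argument lists, in procedure order."""
    commands = []
    datacenters = sorted(set(host_to_datacenter.values()))
    # Step 1: add one datacenter bucket per site.
    for dc in datacenters:
        commands.append(["ceph", "osd", "crush", "add-bucket", dc, "datacenter"])
    # Step 2: move each bucket under the root.
    for dc in datacenters:
        commands.append(["ceph", "osd", "crush", "move", dc, f"root={root}"])
    # Step 3: move each OSD host into its data center.
    for host, dc in host_to_datacenter.items():
        commands.append(["ceph", "osd", "crush", "move", host, f"datacenter={dc}"])
    return commands


if __name__ == "__main__":
    mapping = {"host01": "DC1", "host02": "DC1", "host04": "DC2", "host05": "DC2"}
    for command in crush_location_commands(mapping):
        print(" ".join(command))
```

Printing the commands first makes it possible to review them before running them in the cephadm shell.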

4.1.2. Entering the stretch mode

The new stretch mode is designed to handle two sites. There is a lower risk of component availability outages with 2-site clusters.

Prerequisites

- Root-level access to the nodes.

- The CRUSH location is set for the hosts.

Procedure

Set the location of each monitor, matching your CRUSH map:

Syntax

ceph mon set_location HOST datacenter=DATACENTER

Example

[ceph: root@host01 /]# ceph mon set_location host01 datacenter=DC1
[ceph: root@host01 /]# ceph mon set_location host02 datacenter=DC1
[ceph: root@host01 /]# ceph mon set_location host04 datacenter=DC2
[ceph: root@host01 /]# ceph mon set_location host05 datacenter=DC2
[ceph: root@host01 /]# ceph mon set_location host07 datacenter=DC3

Generate a CRUSH rule which places two copies on each data center:

Syntax

ceph osd getcrushmap > COMPILED_CRUSHMAP_FILENAME
crushtool -d COMPILED_CRUSHMAP_FILENAME -o DECOMPILED_CRUSHMAP_FILENAME

Example

[ceph: root@host01 /]# ceph osd getcrushmap > crush.map.bin
[ceph: root@host01 /]# crushtool -d crush.map.bin -o crush.map.txt

Edit the decompiled CRUSH map file to add a new rule:

Example

rule stretch_rule {
        id 1
        type replicated
        min_size 1
        max_size 10
        step take DC1
        step chooseleaf firstn 2 type host
        step emit
        step take DC2
        step chooseleaf firstn 2 type host
        step emit
}

Note

This rule makes the cluster have read-affinity towards data center DC1. Therefore, all the reads or writes happen through Ceph OSDs placed in DC1.

If this is not desirable, and reads or writes are to be distributed evenly across the zones, the CRUSH rule is the following:

Example

rule stretch_rule {
        id 1
        type replicated
        min_size 1
        max_size 10
        step take default
        step choose firstn 0 type datacenter
        step chooseleaf firstn 2 type host
        step emit
}

In this rule, the data center is selected randomly and automatically.

See CRUSH rules for more information on firstn and indep options.
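The difference between the two rules is the order in which the data centers are visited. The following Python sketch imitates that difference only; it is not CRUSH. It assumes a hypothetical set of three OSD hosts per data center, uses a seeded pseudo-random generator in place of CRUSH, and treats the first host of each placement as the one holding the primary OSD, which is a simplification.

```python
import random

# Hypothetical OSD hosts per data center, for illustration only.
HOSTS = {
    "DC1": ["host01", "host02", "host03"],
    "DC2": ["host04", "host05", "host06"],
}


def place_fixed_order(pg_seed, hosts=HOSTS, per_site=2):
    """Imitate the first rule: always take DC1 first, then DC2."""
    rng = random.Random(pg_seed)
    placement = []
    for dc in ("DC1", "DC2"):
        placement += rng.sample(hosts[dc], per_site)
    return placement


def place_any_order(pg_seed, hosts=HOSTS, per_site=2):
    """Imitate the second rule: the data center order varies per PG."""
    rng = random.Random(pg_seed)
    order = sorted(hosts)
    rng.shuffle(order)
    placement = []
    for dc in order:
        placement += rng.sample(hosts[dc], per_site)
    return placement


if __name__ == "__main__":
    for seed in range(4):
        print(seed, place_fixed_order(seed), place_any_order(seed))
```

In the fixed-order variant the first entry always comes from DC1, which mirrors the affinity towards DC1 described in the note. In the other variant the first entry can come from either data center, while each placement still contains two hosts per site.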

Inject the CRUSH map to make the rule available to the cluster:

Syntax

crushtool -c DECOMPILED_CRUSHMAP_FILENAME -o COMPILED_CRUSHMAP_FILENAME
ceph osd setcrushmap -i COMPILED_CRUSHMAP_FILENAME

Example

[ceph: root@host01 /]# crushtool -c crush.map.txt -o crush2.map.bin
[ceph: root@host01 /]# ceph osd setcrushmap -i crush2.map.bin

If you do not run the monitors in connectivity mode, set the election strategy to connectivity:

Example

[ceph: root@host01 /]# ceph mon set election_strategy connectivity

Enter stretch mode by setting the location of the tiebreaker monitor to split across the data centers:

Syntax

ceph mon set_location HOST datacenter=DATACENTER
ceph mon enable_stretch_mode HOST stretch_rule datacenter

Example