Chapter 4. Pools

Ceph clients store data in pools. When you create pools, you are creating an I/O interface for clients to store data. From the perspective of a Ceph client, such as a block device or gateway, interacting with the Ceph storage cluster is simple: create a cluster handle and connect to the cluster, then create an I/O context for reading and writing objects and their extended attributes.

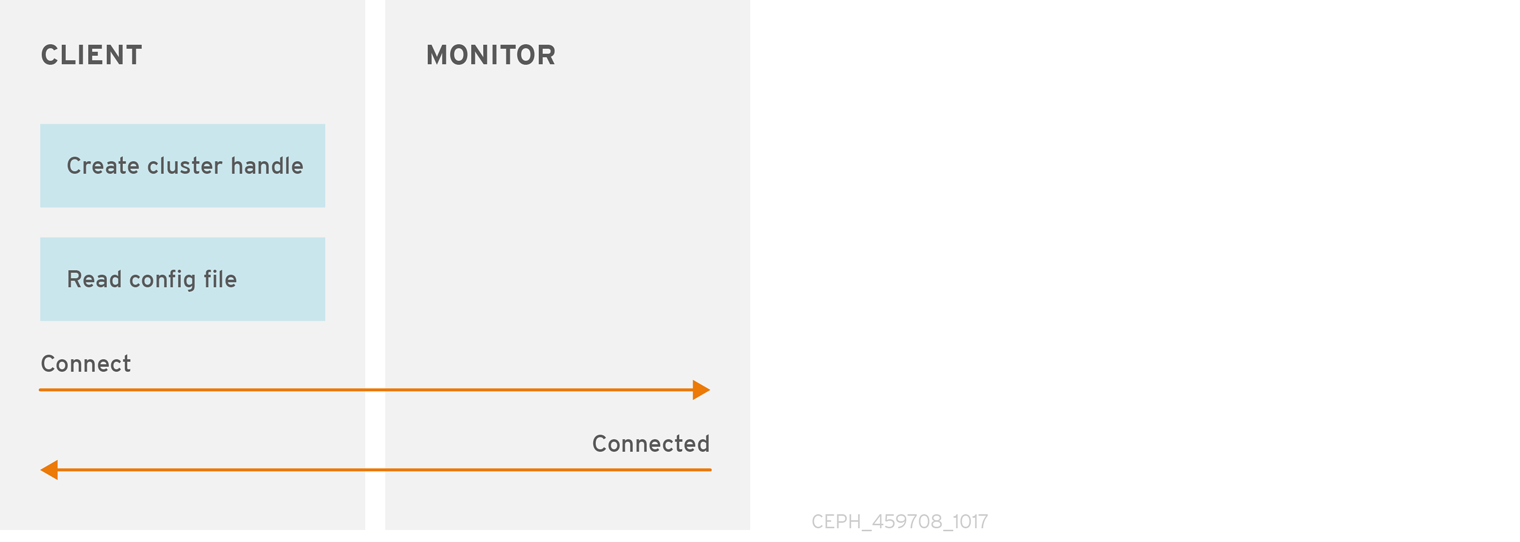

Create a Cluster Handle and Connect to the Cluster

To connect to the Ceph storage cluster, the Ceph client needs the cluster name, which is usually ceph by default, and an initial monitor address. Ceph clients usually read these parameters from the Ceph configuration file at its default path, but a user might also specify the parameters on the command line. The Ceph client also provides a user name and secret key, because authentication is on by default. Then, the client contacts the Ceph monitor cluster and retrieves a recent copy of the cluster map, including its monitors, OSDs, and pools.

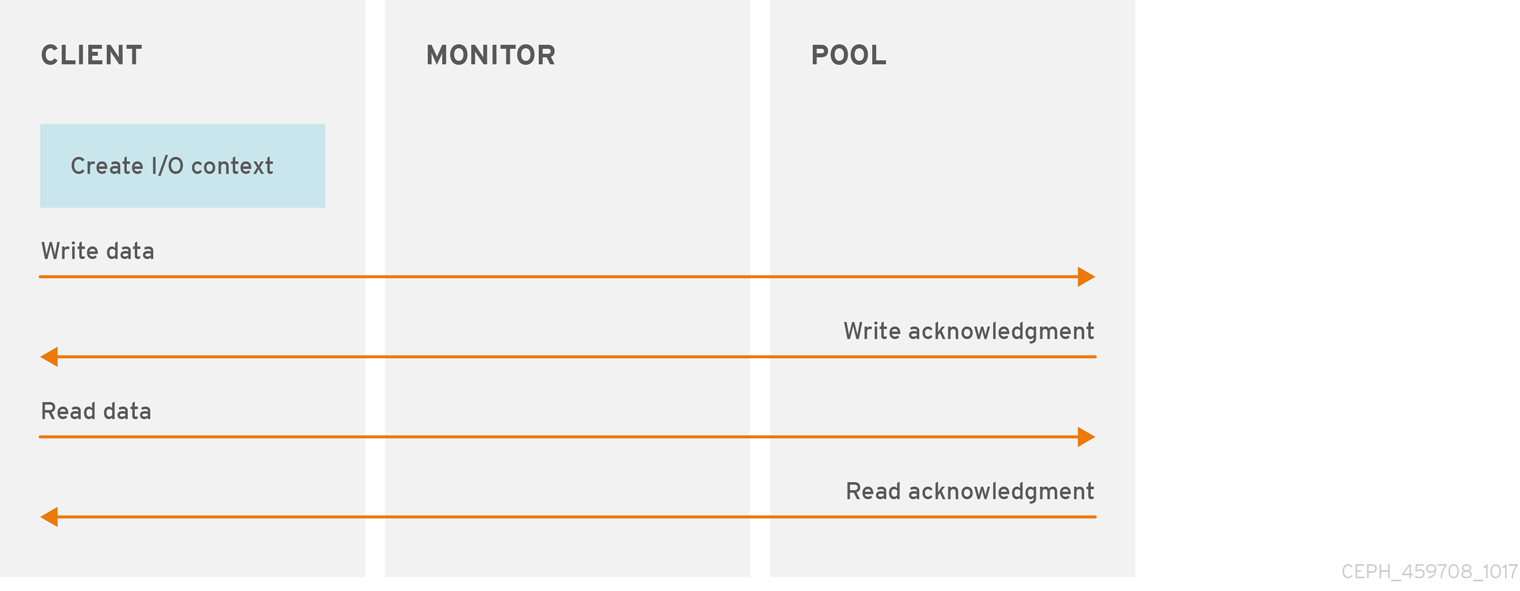

Create a Pool I/O Context

To read and write data, the Ceph client creates an I/O context to a specific pool in the Ceph storage cluster. If the specified user has permissions for the pool, the Ceph client can read from and write to the specified pool.
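The connect step and the I/O context step described above can be sketched with the Python librados bindings. This is a minimal sketch, not a definitive client: it assumes the rados Python module is installed, that the configuration file is at the conventional /etc/ceph/ceph.conf path, and that the default user has a readable keyring. The helper name store_and_fetch, the xattr name lang, and the pool and object names in the usage note are hypothetical.

```python
def store_and_fetch(pool_name, object_name, payload,
                    conffile="/etc/ceph/ceph.conf"):
    """Write an object and one extended attribute to a pool, then read both back."""
    # Imported lazily so this file still loads on hosts without librados.
    import rados

    # Cluster handle: monitor address and keyring location come from conffile.
    cluster = rados.Rados(conffile=conffile)
    cluster.connect()
    try:
        # I/O context bound to a single pool; raises if the pool does not exist.
        ioctx = cluster.open_ioctx(pool_name)
        try:
            ioctx.write_full(object_name, payload)
            ioctx.set_xattr(object_name, "lang", b"en_US")
            data = ioctx.read(object_name)
            lang = ioctx.get_xattr(object_name, "lang")
            return data, lang
        finally:
            ioctx.close()
    finally:
        # Always release the cluster handle, even if the I/O failed.
        cluster.shutdown()
```

On a host with cluster access, a call such as store_and_fetch("data", "hello-object", b"hello") would write to a pool named data, provided that the user has permissions for that pool.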

Ceph’s architecture enables the storage cluster to provide a simple interface to Ceph clients. Clients might select one of the defined sophisticated storage strategies by specifying a pool name and creating an I/O context. Storage strategies are invisible to the Ceph client in all but capacity and performance. Similarly, the complexities of Ceph clients, such as mapping objects into a block device representation or providing an S3 or Swift RESTful service, are invisible to the Ceph storage cluster.

A pool provides you with:

- Resilience: You can set how many OSDs are allowed to fail without losing data. For replicated pools, it is the desired number of copies or replicas of an object. A typical configuration stores an object and one additional copy, that is size = 2, but you can determine the number of copies or replicas. For erasure coded pools, it is the number of coding chunks, that is m=2 in the erasure code profile.

- Placement Groups: You can set the number of placement groups (PGs) for the pool. A typical configuration uses approximately 50-100 PGs per OSD to provide optimal balancing without using too many computing resources. When setting up multiple pools, ensure you set a reasonable number of PGs for both the pool and the cluster as a whole.

- CRUSH Rules: When you store data in a pool, a CRUSH rule mapped to the pool enables CRUSH to identify the rule for the placement of each object and its replicas, or chunks for erasure coded pools, in your cluster. You can create a custom CRUSH rule for your pool.

- Quotas: When you set quotas on a pool with ceph osd pool set-quota, you can limit the maximum number of objects or the maximum number of bytes stored in the specified pool.

4.1. Pools and Storage Strategies

To manage pools, you can list, create, and remove pools. You can also view the utilization statistics for each pool.

4.2. List Pools

To list your cluster’s pools, run:

Example

[ceph: root@host01 /]# ceph osd lspools

4.3. Create a Pool

Before creating pools, see the Pool, PG and CRUSH Configuration Reference chapter in the Configuration Guide for Red Hat Ceph Storage 5.

In Red Hat Ceph Storage 3 and later releases, system administrators must explicitly enable a pool to receive I/O operations from Ceph clients. See Enable Application for details. Failure to enable a pool will result in a HEALTH_WARN status.

It is better to adjust the default value for the number of placement groups in the Ceph configuration file, because the default value might not suit your needs.

Example

# osd pool default pg num = 100
# osd pool default pgp num = 100

To create a replicated pool, run:

Syntax

ceph osd pool create POOL_NAME PG_NUMBER PGP_NUMBER [replicated] \
         [crush-rule-name] [expected-num-objects]

To create a bulk pool, run:

Syntax

ceph osd pool create POOL_NAME --bulk

To create an erasure-coded pool, run:

Syntax

ceph osd pool create POOL_NAME PG_NUMBER PGP_NUMBER erasure \
         [erasure-code-profile] [crush-rule-name] [expected-num-objects]

Where:

- POOL_NAME

- Description

- The name of the pool. It must be unique.

- Type

- String

- Required

- Yes. If not specified, it is set to the value listed in the Ceph configuration file, or to the default value.

- Default

- ceph

- PG_NUMBER

- Description

- The total number of placement groups for the pool. See the Placement Groups section and the Ceph Placement Groups (PGs) per Pool Calculator for details on calculating a suitable number. The default value 8 is not suitable for most systems.

- Type

- Integer

- Required

- Yes

- Default

- 8

- PGP_NUMBER

- Description

- The total number of placement groups for placement purposes. This value must be equal to the total number of placement groups, except for placement group splitting scenarios.

- Type

- Integer

- Required

- Yes. If not specified it is set to the value listed in the Ceph configuration file, or to the default value.

- Default

- 8

- replicated or erasure

- Description

- The pool type can be either replicated to recover from lost OSDs by keeping multiple copies of the objects, or erasure to get a kind of generalized RAID5 capability. The replicated pools require more raw storage but implement all Ceph operations. The erasure-coded pools require less raw storage but only implement a subset of the available operations.

- Type

- String

- Required

- No

- Default

- replicated

- crush-rule-name

- Description

- The name of the CRUSH rule for the pool. The rule MUST exist. For replicated pools, the name is the rule specified by the osd_pool_default_crush_rule configuration setting. For erasure-coded pools, the name is erasure-code if you specify the default erasure code profile or {pool-name} otherwise. Ceph creates this rule with the specified name implicitly if the rule doesn’t already exist.

- Type

- String

- Required

- No

- Default

- Uses erasure-code for an erasure-coded pool. For replicated pools, it uses the value of the osd_pool_default_crush_rule variable from the Ceph configuration.

- expected-num-objects

- Description

- The expected number of objects for the pool. By setting this value together with a negative filestore_merge_threshold variable, Ceph splits the placement groups at pool creation time to avoid the latency impact of runtime directory splitting.

- Type

- Integer

- Required

- No

- Default

- 0, no splitting at the pool creation time.

- erasure-code-profile

- Description

- For erasure-coded pools, use the erasure code profile. It must be an existing profile as defined by the ceph osd erasure-code-profile set command. For further information, see the Erasure Code Profiles section.

- Type

- String

- Required

- No

When you create a pool, set the number of placement groups to a reasonable value, for example to 100. Consider the total number of placement groups per OSD. Placement groups are computationally expensive, so performance will degrade when you have many pools with many placement groups, for example, 50 pools with 100 placement groups each. The point of diminishing returns depends upon the power of the OSD host.
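To make the example in the preceding paragraph concrete, the following sketch estimates how many placement group replicas each OSD would carry. It assumes replicated pools that all use the same size and placement groups that spread evenly across OSDs. The function name, the replica count of 3, and the figure of 100 OSDs are illustrative assumptions, not values from this guide.

```python
def pg_replicas_per_osd(pools, pgs_per_pool, replica_size, osd_count):
    """Average number of PG replicas each OSD hosts, assuming an even spread."""
    if osd_count <= 0:
        raise ValueError("osd_count must be positive")
    total_pg_replicas = pools * pgs_per_pool * replica_size
    return total_pg_replicas / osd_count


# 50 pools with 100 PGs each, 3 replicas, spread over a hypothetical 100 OSDs.
print(pg_replicas_per_osd(50, 100, 3, 100))  # 150.0
```

Under these assumptions each OSD would carry about 150 PG replicas, which is why the total across all pools matters more than the value chosen for any single pool.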

Additional Resources

See the Placement Groups section and Ceph Placement Groups (PGs) per Pool Calculator for details on calculating an appropriate number of placement groups for your pool.

4.4. Set Pool Quotas

You can set pool quotas for the maximum number of bytes and the maximum number of objects per pool.

Syntax

ceph osd pool set-quota POOL_NAME [max_objects OBJECT_COUNT] [max_bytes BYTES]

Example

[ceph: root@host01 /]# ceph osd pool set-quota data max_objects 10000

To remove a quota, set its value to 0.

In-flight write operations might overrun pool quotas until Ceph propagates the pool usage across the cluster. This is normal behavior. Enforcing pool quotas on in-flight write operations would impose significant performance penalties.
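When quotas are applied from a script, it can help to build the commands first and review them before running anything. The following sketch only assembles argument lists from the set-quota syntax shown above. The helper name set_quota_commands is hypothetical, and issuing one command per quota field is a conservative choice made for this sketch.

```python
def set_quota_commands(pool, max_objects=None, max_bytes=None):
    """Return one 'ceph osd pool set-quota' argv list per quota to change."""
    commands = []
    for field, value in (("max_objects", max_objects), ("max_bytes", max_bytes)):
        if value is None:
            continue  # leave this quota untouched
        if value < 0:
            raise ValueError(f"{field} must be 0 (remove the quota) or positive")
        commands.append(
            ["ceph", "osd", "pool", "set-quota", pool, field, str(value)]
        )
    return commands


# Limit the pool to 10000 objects and remove any byte quota.
for argv in set_quota_commands("data", max_objects=10000, max_bytes=0):
    print(" ".join(argv))
```

Each returned list can be passed to subprocess.run(argv, check=True) on a host that has the ceph command and an administrative keyring.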

4.5. Delete a Pool

To delete a pool, run:

Syntax

ceph osd pool delete POOL_NAME [POOL_NAME --yes-i-really-really-mean-it]

To protect data, storage administrators cannot delete pools by default. Set the mon_allow_pool_delete configuration option to true before deleting pools.

If a pool has its own rule, consider removing it after deleting the pool. If a pool has users strictly for its own use, consider deleting those users after deleting the pool.

4.6. Rename a Pool

To rename a pool, run:

Syntax

ceph osd pool rename CURRENT_POOL_NAME NEW_POOL_NAME

If you rename a pool and you have per-pool capabilities for an authenticated user, you must update the user’s capabilities, that is caps, with the new pool name.

4.7. Migrating a pool

Sometimes it is necessary to migrate all objects from one pool to another, for example to change parameters that cannot be modified on an existing pool, such as reducing the number of placement groups of a pool.

When a workload is using only Ceph Block Device images, follow the procedures documented for moving and migrating a pool within the Red Hat Ceph Storage Block Device Guide.

The migration methods described for Ceph Block Device are preferred over those documented here. Using the rados cppool command does not preserve all snapshots and snapshot-related metadata, resulting in an unfaithful copy of the data. For example, copying an RBD pool does not completely copy the image. In this case, snaps are not present and will not work properly. The rados cppool command also does not preserve the user_version field that some librados users may rely on.

If migrating a pool is necessary and your user workloads contain images other than Ceph Block Devices, continue with one of the procedures documented here.

Prerequisites

- If using the rados cppool command:

  - Read-only access to the pool is required.

  - Only use this command if you do not have RBD images and its snaps and user_version consumed by librados.

- If using the local drive RADOS commands, verify that sufficient cluster space is available. Two, three, or more copies of data will be present as per pool replication factor.

Procedure

Method one - the recommended direct way

Copy all objects with the rados cppool command.

Read-only access to the pool is required during copy.

Syntax

ceph osd pool create NEW_POOL PG_NUM [ <other new pool parameters> ]
rados cppool SOURCE_POOL NEW_POOL
ceph osd pool rename SOURCE_POOL NEW_SOURCE_POOL_NAME
ceph osd pool rename NEW_POOL SOURCE_POOL

Example

[ceph: root@host01 /]# ceph osd pool create pool1 250
[ceph: root@host01 /]# rados cppool pool2 pool1
[ceph: root@host01 /]# ceph osd pool rename pool2 pool3
[ceph: root@host01 /]# ceph osd pool rename pool1 pool2

Method two - using a local drive

Use the rados export and rados import commands and a temporary local directory to save all exported data.

Syntax

ceph osd pool create NEW_POOL PG_NUM [ <other new pool parameters> ]
rados export --create SOURCE_POOL FILE_PATH
rados import FILE_PATH NEW_POOL

Example

[ceph: root@host01 /]# ceph osd pool create pool1 250
[ceph: root@host01 /]# rados export --create pool2 <path of export file>
[ceph: root@host01 /]# rados import <path of export file> pool1

Required. Stop all I/O to the source pool.

Required. Resynchronize all modified objects.

Syntax

rados export --workers 5 SOURCE_POOL FILE_PATH
rados import --workers 5 FILE_PATH NEW_POOL

Example

[ceph: root@host01 /]# rados export --workers 5 pool2 <path of export file>
[ceph: root@host01 /]# rados import --workers 5 <path of export file> pool1

4.8. Show Pool Statistics

To show a pool’s utilization statistics, run:

Example

[ceph: root@host01 /]# rados df

4.9. Set Pool Values

To set a value to a pool, run the following command:

Syntax

ceph osd pool set POOL_NAME KEY VALUE

The Pool Values section lists all key-value pairs that you can set.

4.10. Get Pool Values

To get a value from a pool, run the following command:

Syntax

ceph osd pool get POOL_NAME KEY

The Pool Values section lists all key-value pairs that you can get.

4.11. Enable Application

Red Hat Ceph Storage provides additional protection for pools to prevent unauthorized types of clients from writing data to the pool. This means that system administrators must expressly enable pools to receive I/O operations from Ceph Block Device, Ceph Object Gateway, Ceph Filesystem, or for a custom application.

To enable a client application to conduct I/O operations on a pool, run the following:

Syntax

ceph osd pool application enable POOL_NAME APPLICATION {--yes-i-really-mean-it}

Where APPLICATION is:

- cephfs for the Ceph Filesystem.

- rbd for the Ceph Block Device.

- rgw for the Ceph Object Gateway.

Specify a different APPLICATION value for a custom application.

A pool that is not enabled will generate a HEALTH_WARN status. In that scenario, the ceph health detail -f json-pretty command outputs the following:

{
    "checks": {
        "POOL_APP_NOT_ENABLED": {
            "severity": "HEALTH_WARN",
            "summary": {
                "message": "application not enabled on 1 pool(s)"
            },
            "detail": [
                {
                    "message": "application not enabled on pool '<pool-name>'"
                },
                {
                    "message": "use 'ceph osd pool application enable <pool-name> <app-name>', where <app-name> is 'cephfs', 'rbd', 'rgw', or freeform for custom applications."
                }
            ]
        }
    },
    "status": "HEALTH_WARN",
    "overall_status": "HEALTH_WARN",
    "detail": [
        "'ceph health' JSON format has changed in luminous. If you see this your monitoring system is scraping the wrong fields. Disable this with 'mon health preluminous compat warning = false'"
    ]
}
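A monitoring script can pull the affected pool names out of this output. The following sketch assumes the JSON layout shown above and relies on the wording of the detail messages, which might differ between releases. The function name pools_without_application and the pool name pool1 in the sample are hypothetical.

```python
import json
import re


def pools_without_application(health_json):
    """Return pool names reported under the POOL_APP_NOT_ENABLED health check."""
    health = json.loads(health_json)
    check = health.get("checks", {}).get("POOL_APP_NOT_ENABLED")
    if check is None:
        return []  # the check is absent, so no pool is flagged
    pattern = re.compile(r"application not enabled on pool '([^']+)'")
    names = []
    for entry in check.get("detail", []):
        match = pattern.search(entry.get("message", ""))
        if match:
            names.append(match.group(1))
    return names


# Hand-written sample that follows the structure of the output above.
sample = """
{"checks": {"POOL_APP_NOT_ENABLED": {"severity": "HEALTH_WARN",
  "summary": {"message": "application not enabled on 1 pool(s)"},
  "detail": [{"message": "application not enabled on pool 'pool1'"},
             {"message": "use 'ceph osd pool application enable <pool-name> <app-name>'"}]}},
 "status": "HEALTH_WARN"}
"""
print(pools_without_application(sample))  # ['pool1']
```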

Initialize pools for the Ceph Block Device with rbd pool init POOL_NAME.

4.12. Disable Application

To disable a client application from conducting I/O operations on a pool, run the following:

Syntax

ceph osd pool application disable POOL_NAME APPLICATION {--yes-i-really-mean-it}

Where APPLICATION is:

- cephfs for the Ceph Filesystem.

- rbd for the Ceph Block Device.

- rgw for the Ceph Object Gateway.

Specify a different APPLICATION value for a custom application.

4.13. Set Application Metadata

You can set key-value pairs that describe attributes of the client application.

To set client application metadata on a pool, run the following:

Syntax

ceph osd pool application set POOL_NAME APPLICATION KEY VALUE

Where APPLICATION is:

- cephfs for the Ceph Filesystem.

- rbd for the Ceph Block Device.

- rgw for the Ceph Object Gateway.

Specify a different APPLICATION value for a custom application.

4.14. Remove Application Metadata

To remove client application metadata on a pool, run the following:

Syntax

ceph osd pool application rm POOL_NAME APPLICATION KEY

Where APPLICATION is:

- cephfs for the Ceph Filesystem.

- rbd for the Ceph Block Device.

- rgw for the Ceph Object Gateway.

Specify a different APPLICATION value for a custom application.

4.15. Set the Number of Object Replicas

To set the number of object replicas on a replicated pool, run the following command:

Syntax

ceph osd pool set POOL_NAME size NUMBER_OF_REPLICAS

You can run this command for each pool.

The NUMBER_OF_REPLICAS parameter includes the object itself. If you want to include the object and two copies of the object for a total of three instances of the object, specify 3.

Example

[ceph: root@host01 /]# ceph osd pool set data size 3

An object might accept I/O operations in degraded mode with fewer replicas than specified by the pool size setting. To set a minimum number of required replicas for I/O, use the min_size setting.

Example

[ceph: root@host01 /]# ceph osd pool set data min_size 2

This ensures that no object in the data pool will receive I/O with fewer replicas than specified by the min_size setting.
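The relationship between the size and min_size settings above can be expressed as simple arithmetic. This is a sketch for a replicated pool only. It assumes that every surviving replica is up to date, and the function and key names are hypothetical.

```python
def failure_tolerance(size, min_size):
    """Summarize how many replicas of an object can be lost in a replicated pool."""
    if not 1 <= min_size <= size:
        raise ValueError("min_size must be between 1 and size")
    return {
        # Replicas that can be unavailable while I/O is still accepted.
        "io_continues_after_losing": size - min_size,
        # Replicas that can be lost while at least one copy remains.
        "data_survives_losing": size - 1,
    }


print(failure_tolerance(3, 2))
# {'io_continues_after_losing': 1, 'data_survives_losing': 2}
```

With size 3 and min_size 2, as in the examples above, one replica can be unavailable before I/O to the affected objects stops.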

4.16. Get the Number of Object Replicas

To get the number of object replicas, run the following command:

Example

[ceph: root@host01 /]# ceph osd dump | grep 'replicated size'

Ceph will list the pools with the replicated size attribute highlighted. By default, Ceph creates two replicas of an object, that is a total of three copies, or a size of 3.
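If you need the values in a script rather than on screen, the lines that grep selects can be parsed. The following sketch assumes that each pool line starts with pool ID 'NAME' replicated size N min_size M. That layout is an assumption, so check it against the output on your release. The sample lines are hand-written, not captured output, and the function name replicated_sizes is hypothetical.

```python
import re

# Assumed layout of a replicated pool line in 'ceph osd dump' text output.
POOL_LINE = re.compile(
    r"^pool \d+ '(?P<name>[^']+)' replicated "
    r"size (?P<size>\d+) min_size (?P<min_size>\d+)"
)


def replicated_sizes(osd_dump_text):
    """Map pool name to (size, min_size) for each replicated pool line found."""
    result = {}
    for line in osd_dump_text.splitlines():
        match = POOL_LINE.match(line.strip())
        if match:
            result[match["name"]] = (int(match["size"]), int(match["min_size"]))
    return result


sample = """\
pool 1 'data' replicated size 3 min_size 2 crush_rule 0 pg_num 32 pgp_num 32
pool 2 'ecpool' erasure profile default size 4 min_size 3 crush_rule 1 pg_num 32
"""
print(replicated_sizes(sample))  # {'data': (3, 2)}
```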

4.17. Pool Values

The following list contains key-value pairs that you can set or get. For further information, see the Set Pool Values and Get Pool Values sections.

- size

- Description

- Specifies the number of replicas for objects in the pool. See the Set the Number of Object Replicas section for further details. Applicable for the replicated pools only.

- Type

- Integer

- min_size

- Description

- Specifies the minimum number of replicas required for I/O. See the Set the Number of Object Replicas section for further details. For erasure-coded pools, this should be set to a value greater than k. If I/O is allowed at the value k, then there is no redundancy and data is lost in the event of a permanent OSD failure. For more information, see Erasure code pools overview.

- Type

- Integer

- crash_replay_interval

- Description

- Specifies the number of seconds to allow clients to replay acknowledged, but uncommitted requests.

- Type

- Integer

- pg_num

- Description

- The total number of placement groups for the pool. See the Pool, placement groups, and CRUSH Configuration Reference section in the Red Hat Ceph Storage 5 Configuration Guide for details on calculating a suitable number. The default value 8 is not suitable for most systems.

- Type

- Integer

- Required

- Yes.

- Default

- 8

- pgp_num

- Description

- The total number of placement groups for placement purposes. This should be equal to the total number of placement groups, except for placement group splitting scenarios.

- Type

- Integer

- Required

- Yes. Picks up default or Ceph configuration value if not specified.

- Default

- 8

- Valid Range

- Equal to or less than the value specified by the pg_num variable.

- crush_rule

- Description

- The rule to use for mapping object placement in the cluster.

- Type

- String

- hashpspool

- Description

- Enable or disable the HASHPSPOOL flag on a given pool. With this option enabled, pool hashing and placement group mapping are changed to improve the way pools and placement groups overlap.

- Type

- Integer

- Valid Range

- 1 enables the flag, 0 disables the flag.

Important: Do not enable this option on production pools of a cluster with a large number of OSDs and a large amount of data. All placement groups in the pool would have to be remapped, causing too much data movement.

- fast_read

- Description

- On a pool that uses erasure coding, if this flag is enabled, the read request issues subsequent reads to all shards, and waits until it receives enough shards to decode to serve the client. In the case of the jerasure and isa erasure plug-ins, once the first K replies return, the client’s request is served immediately using the data decoded from these replies. This helps to allocate some resources for better performance. Currently, this flag is only supported for erasure coding pools.

- Type

- Boolean

- Default

- 0

- allow_ec_overwrites

- Description

- Whether writes to an erasure coded pool can update part of an object, so the Ceph Filesystem and Ceph Block Device can use it.

- Type

- Boolean

- compression_algorithm

- Description

- Sets inline compression algorithm to use with the BlueStore storage backend. This setting overrides the bluestore_compression_algorithm configuration setting.

- Type

- String

- Valid Settings

- lz4, snappy, zlib, zstd

- compression_mode

- Description

- Sets the policy for the inline compression algorithm for the BlueStore storage backend. This setting overrides the bluestore_compression_mode configuration setting.

- Type

- String

- Valid Settings

- none, passive, aggressive, force

- compression_min_blob_size

- Description

- BlueStore will not compress chunks smaller than this size. This setting overrides the bluestore_compression_min_blob_size configuration setting.

- Type

- Unsigned Integer

- compression_max_blob_size

- Description

- BlueStore will break chunks larger than this size into smaller blobs of compression_max_blob_size before compressing the data.

- Type

- Unsigned Integer

- nodelete

- Description

- Set or unset the NODELETE flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- nopgchange

- Description

- Set or unset the NOPGCHANGE flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- nosizechange

- Description

- Set or unset the NOSIZECHANGE flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- write_fadvise_dontneed

- Description

- Set or unset the WRITE_FADVISE_DONTNEED flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- noscrub

- Description

- Set or unset the NOSCRUB flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- nodeep-scrub

- Description

- Set or unset the NODEEP_SCRUB flag on a given pool.

- Type

- Integer

- Valid Range

- 1 sets the flag. 0 unsets the flag.

- scrub_min_interval

- Description

- The minimum interval in seconds for pool scrubbing when load is low. If it is 0, Ceph uses the osd_scrub_min_interval configuration setting.

- Type

- Double

- Default

- 0

- scrub_max_interval

- Description

- The maximum interval in seconds for pool scrubbing irrespective of cluster load. If it is 0, Ceph uses the osd_scrub_max_interval configuration setting.

- Type

- Double

- Default

- 0

- deep_scrub_interval

- Description

- The interval in seconds for pool 'deep' scrubbing. If it is 0, Ceph uses the osd_deep_scrub_interval configuration setting.

- Type

- Double

- Default

- 0