Chapter 1. What is the Ceph File System (CephFS)?

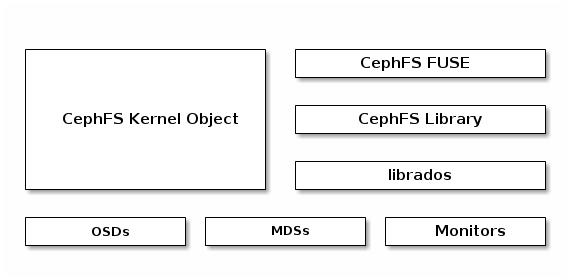

The Ceph File System (CephFS) is a POSIX-compatible file system that uses a Ceph Storage Cluster to store its data. CephFS uses the same Ceph Storage Cluster as the Ceph Block Device, the Ceph Object Gateway, and the librados API.

The Ceph File System is a Technology Preview only. Technology Preview features are not supported with Red Hat production service level agreements (SLAs), might not be functionally complete, and Red Hat does not recommend using them in production. These features provide early access to upcoming product features, enabling customers to test functionality and provide feedback during the development process.

For more information on Red Hat Technology Preview features support scope, see https://access.redhat.com/support/offerings/techpreview/.

In addition, see Section 1.2, “Limitations” for details on current CephFS limitations and experimental features.

To run the Ceph File System, you must have a running Ceph Storage Cluster with at least one Ceph Metadata Server (MDS) running. For details on installing the Ceph Storage Cluster, see the Installation Guide for Red Hat Enterprise Linux or Installation Guide for Ubuntu. See Chapter 2, Installing and Configuring Ceph Metadata Servers (MDS) for details on installing the Ceph Metadata Server.

1.1. Features

The Ceph File System introduces the following features and enhancements:

- Scalability

- The Ceph File System is highly scalable because clients read directly from and write to all OSD nodes.

- Shared File System

- The Ceph File System is a shared file system, so multiple clients can work on the same file system at once.

- High Availability

- The Ceph File System provides a cluster of Ceph Metadata Servers (MDS). One MDS is active and the others are in standby mode. If the active MDS terminates unexpectedly, one of the standby MDS daemons becomes active. As a result, client mounts continue working through a server failure, which makes the Ceph File System highly available.

- File and Directory Layouts

- The Ceph File System allows users to configure file and directory layouts to use multiple pools.

- POSIX Access Control Lists (ACL)

- The Ceph File System supports POSIX Access Control Lists (ACLs). ACLs are enabled by default for Ceph File Systems mounted as kernel clients with kernel version `kernel-3.10.0-327.18.2.el7`. To use ACLs with Ceph File Systems mounted as FUSE clients, you must enable them. See Section 1.2, “Limitations” for details.

- Client Quotas

- The Ceph File System FUSE client supports setting quotas on any directory in a system. A quota can restrict the number of bytes or the number of files stored beneath that point in the directory hierarchy. To enable client quotas, set the `client quota` option to `true` in the Ceph configuration file:

      [client]
      client quota = true
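The File and Directory Layouts feature is driven through virtual extended attributes. The following C sketch assumes a directory on a mounted Ceph File System and a pool that has already been added to that file system as a data pool; the helper name `set_dir_layout_pool()` is hypothetical, while `ceph.dir.layout.pool` is the CephFS attribute that holds a directory's data pool. Only files created under the directory after the call use the new pool; existing files keep their layout.

```c
#include <errno.h>
#include <string.h>
#include <sys/xattr.h>

/* Hypothetical helper. Assumes `dir` is on a mounted Ceph File System and
 * `pool` is already a data pool of that file system.
 * Returns 0 on success, or -1 with errno set. */
int set_dir_layout_pool(const char *dir, const char *pool)
{
    if (dir == NULL || pool == NULL || pool[0] == '\0') {
        errno = EINVAL;
        return -1;
    }
    /* CephFS exposes layouts as virtual extended attributes in the "ceph."
     * namespace; the value is the pool name, without a trailing NUL. */
    return setxattr(dir, "ceph.dir.layout.pool", pool, strlen(pool), 0);
}
```

For example, `set_dir_layout_pool("/mnt/cephfs/archive", "cephfs_archive")` (both names hypothetical) has the same effect as `setfattr -n ceph.dir.layout.pool -v cephfs_archive /mnt/cephfs/archive`. On a file system other than CephFS, the call fails because the `ceph.` attribute namespace is not recognized.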
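With `client quota` enabled, limits are set per directory through the `ceph.quota.max_bytes` and `ceph.quota.max_files` extended attributes, whose values are decimal strings. This C sketch assumes a directory on a CephFS FUSE mount; the helper names are hypothetical, and it assumes, as in upstream CephFS, that a value of 0 removes a limit.

```c
#include <stdio.h>
#include <sys/xattr.h>

/* Write one quota attribute as a decimal string. */
static int set_quota_attr(const char *dir, const char *name,
                          unsigned long long limit)
{
    char value[32]; /* room for the 20 digits of ULLONG_MAX */
    int n = snprintf(value, sizeof value, "%llu", limit);
    return setxattr(dir, name, value, (size_t)n, 0);
}

/* Hypothetical helper. Assumes `dir` is on a CephFS FUSE mount with
 * `client quota = true`; a limit of 0 is assumed to mean "no limit".
 * Returns 0 on success, or -1 with errno set by the failing setxattr(). */
int set_cephfs_quota(const char *dir, unsigned long long max_bytes,
                     unsigned long long max_files)
{
    if (set_quota_attr(dir, "ceph.quota.max_bytes", max_bytes) != 0)
        return -1;
    return set_quota_attr(dir, "ceph.quota.max_files", max_files);
}
```

For example, `set_cephfs_quota("/mnt/cephfs/projects", 100ULL * 1024 * 1024 * 1024, 100000)` (hypothetical path) limits the directory tree to 100 GiB and 100000 files; the byte limit alone corresponds to `setfattr -n ceph.quota.max_bytes -v 107374182400 /mnt/cephfs/projects`.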

1.2. Limitations

Because the Ceph File System is provided as a Technology Preview, it has several limitations:

- Access Control Lists (ACL) support in FUSE clients

To use ACLs with the Ceph File System mounted as a FUSE client, you must enable them. To do so, add the following options to the Ceph configuration file:

    [client]
    fuse_default_permissions = 0
    client_acl_type = posix_acl

Then restart the Ceph services.

- Snapshots

Creating snapshots is not enabled by default because this feature is still experimental and it can cause the MDS or client nodes to terminate unexpectedly.

If you understand the risks and still wish to enable snapshots, use:

    ceph mds set allow_new_snaps true --yes-i-really-mean-it

- Multiple active MDS

By default, only configurations with one active MDS are supported. Having more than one active MDS can cause the Ceph File System to fail.

If you understand the risks and still wish to use multiple active MDS daemons, increase the value of the `max_mds` option and set the `allow_multimds` option to `true` in the Ceph configuration file.

- Multiple Ceph File Systems

By default, creation of multiple Ceph File Systems in one cluster is disabled. An attempt to create an additional Ceph File System fails with the following error:

    Error EINVAL: Creation of multiple filesystems is disabled.

Creating multiple Ceph File Systems in one cluster is not fully supported yet and can cause the MDS or client nodes to terminate unexpectedly.

If you understand the risks and still wish to enable multiple Ceph File Systems, use:

    ceph fs flag set enable_multiple true --yes-i-really-mean-it

- FUSE clients cannot be mounted permanently on Red Hat Enterprise Linux 7.2

The `util-linux` package shipped with Red Hat Enterprise Linux 7.2 does not support mounting CephFS FUSE clients in `/etc/fstab`. Red Hat Enterprise Linux 7.3 includes a new version of `util-linux` that supports mounting CephFS FUSE clients permanently.

- The kernel clients in Red Hat Enterprise Linux 7.3 do not support the `pool_namespace` layout setting

As a consequence, files written from FUSE clients with a namespace set might not be accessible from Red Hat Enterprise Linux 7.3 kernel clients. Attempts to read or set the `ceph.file.layout.pool_namespace` extended attribute fail with the "No such attribute" error.
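An application that may run on Red Hat Enterprise Linux 7.3 kernel clients can probe for the `pool_namespace` limitation before relying on namespaces. On Linux, the "No such attribute" message that tools such as `getfattr` print corresponds to the `ENODATA` error code. The C sketch below uses a hypothetical helper, `pool_namespace_status()`, and hypothetical result codes; treat an `ENODATA` result as a hint that the attribute is unavailable on this client, not as proof of the client's capabilities.

```c
#include <errno.h>
#include <stddef.h>
#include <sys/types.h>
#include <sys/xattr.h>

/* Hypothetical result codes. */
enum ns_status {
    NS_READ,              /* attribute value copied into buf */
    NS_NO_SUCH_ATTRIBUTE, /* ENODATA, reported as "No such attribute" */
    NS_ERROR              /* any other failure; see errno */
};

enum ns_status pool_namespace_status(const char *path, char *buf, size_t len)
{
    /* Need at least one byte of value plus a terminator; a size of 0
     * would turn getxattr() into a size query instead of a read. */
    if (len < 2) {
        errno = EINVAL;
        return NS_ERROR;
    }
    ssize_t n = getxattr(path, "ceph.file.layout.pool_namespace", buf, len - 1);
    if (n >= 0) {
        buf[n] = '\0'; /* xattr values are not NUL-terminated */
        return NS_READ;
    }
    return errno == ENODATA ? NS_NO_SUCH_ATTRIBUTE : NS_ERROR;
}
```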

1.3. Differences from POSIX Compliance

The Ceph File System aims to adhere to POSIX semantics wherever possible. For example, in contrast to many other common network file systems like NFS, CephFS maintains strong cache coherency across clients. The goal is for processes using the file system to behave the same when they are on different hosts as when they are on the same host.

However, there are a few places where CephFS diverges from strict POSIX semantics for various reasons:

- If a client fails while writing to a file, the write operation is not necessarily atomic. For example, a client might call the `write()` system call with an 8 MB buffer on a file opened with the `O_SYNC` flag and then terminate unexpectedly, leaving the write operation only partially applied. Almost all file systems, even local file systems, have this behavior.

- When write operations occur simultaneously, a write operation that crosses an object boundary is not necessarily atomic. For example, if writer A writes "aa|aa" and writer B writes "bb|bb" simultaneously (where "|" is the object boundary), the result can be "aa|bb" rather than the proper "aa|aa" or "bb|bb".

- POSIX includes the `telldir()` and `seekdir()` functions, which allow you to obtain the current directory offset and seek back to it. Because CephFS can fragment directories at any time, it is difficult to return a stable integer offset for a directory. As such, calling `seekdir()` with a non-zero offset often works but is not guaranteed to. Calling `seekdir()` with offset 0 always works and is equivalent to calling `rewinddir()`.

- The `st_blocks` field returned by the `stat()` system call does not reflect sparse files correctly. Because CephFS does not explicitly track which parts of a file are allocated or written, the `st_blocks` field is always populated by the file size divided by the block size. This behavior causes utilities such as `du` to overestimate consumed space.

- When the `mmap()` system call maps a file into memory on multiple hosts, write operations are not coherently propagated to the caches of other hosts. That is, if a page is cached on host A and then updated on host B, the page on host A is not coherently invalidated.

- CephFS clients present a hidden `.snap` directory that is used to access, create, delete, and rename snapshots. Although this directory is excluded from `readdir()` results, any attempt to create a file or directory with the same name fails with an error. You can change the name of this hidden directory at mount time with the `-o snapdirname=.<new_name>` option or by using the `client_snapdir` configuration option.
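The object-boundary case above can be reproduced with a toy model. The C sketch below is not CephFS code: it assumes a 4-byte file striped over two 2-byte objects, treats each per-object update as atomic, and replays one possible interleaving of two writers. The `struct step` type and `apply_schedule()` function are hypothetical.

```c
#include <string.h>

/* Toy model, not CephFS code: a 4-byte file striped over two 2-byte
 * objects. Each per-object update is applied atomically, but a write
 * that spans both objects is split into one update per object. */
#define OBJ_SIZE 2
#define NUM_OBJS 2
#define FILE_SIZE (OBJ_SIZE * NUM_OBJS)

/* One per-object update: `obj` selects the object and `data` is the
 * writer's full FILE_SIZE-byte buffer. */
struct step {
    const char *data;
    int obj;
};

/* Start from an unwritten file ("....") and apply the per-object
 * updates in the order given, as an interleaving of writers. */
void apply_schedule(char file[FILE_SIZE + 1], const struct step *steps, int nsteps)
{
    memset(file, '.', FILE_SIZE);
    file[FILE_SIZE] = '\0';
    for (int i = 0; i < nsteps; i++) {
        size_t off = (size_t)steps[i].obj * OBJ_SIZE;
        memcpy(file + off, steps[i].data + off, OBJ_SIZE);
    }
}
```

With writer A's buffer "aaaa" and writer B's buffer "bbbb", the schedule B to object 0, A to object 0, A to object 1, B to object 1 leaves the file as "aabb", which is the "aa|bb" outcome. Applying both of A's updates before both of B's leaves "bbbb", one of the two proper results.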
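Because only `seekdir()` to offset 0 is guaranteed on CephFS, a program that needs to resume a directory scan can avoid saved non-zero offsets: remember how many entries it has consumed, call `rewinddir()`, and skip that many entries. This is a minimal sketch with a hypothetical helper, `reposition_dir()`; it costs one `readdir()` per skipped entry and assumes the directory is not modified between scans, since added or removed entries can shift what lands at a given position.

```c
#include <dirent.h>
#include <stddef.h>

/* Hypothetical helper: position `dir` so that the next readdir() returns
 * the entry at 0-based `index`. Instead of seekdir() to a saved non-zero
 * offset, it rewinds (always safe) and skips `index` entries.
 * Returns 0 on success, or -1 if fewer than `index` entries exist. */
int reposition_dir(DIR *dir, size_t index)
{
    rewinddir(dir);
    for (size_t i = 0; i < index; i++) {
        if (readdir(dir) == NULL)
            return -1;
    }
    return 0;
}
```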
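The `st_blocks` behavior described above can be observed by comparing allocated and apparent sizes. This sketch creates a file with `ftruncate()` only, so it contains a hole and no written data. On a typical local file system, `st_blocks * 512` is smaller than `st_size` and `looks_sparse()` returns 1; because CephFS derives `st_blocks` from the file size, the same check would be expected to report the file as fully allocated there. Linux reports `st_blocks` in 512-byte units. The helpers `make_sparse_file()` and `looks_sparse()` are hypothetical.

```c
#include <stdlib.h>
#include <sys/stat.h>
#include <sys/types.h>
#include <unistd.h>

/* Hypothetical helper: create a file of `size` bytes without writing any
 * data, so the whole file is a hole. `tmpl` must end in "XXXXXX", as
 * mkstemp() requires, and is updated with the generated name. */
int make_sparse_file(char *tmpl, off_t size)
{
    int fd = mkstemp(tmpl);
    if (fd < 0)
        return -1;
    int rc = ftruncate(fd, size);
    close(fd);
    return rc;
}

/* Returns 1 if less storage is reported as allocated than the apparent
 * size, 0 if not, or -1 if stat() fails. st_blocks is counted in
 * 512-byte units on Linux, regardless of st_blksize. */
int looks_sparse(const char *path)
{
    struct stat st;
    if (stat(path, &st) != 0)
        return -1;
    return (long long)st.st_blocks * 512 < (long long)st.st_size;
}
```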
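Snapshots are created through the hidden directory described in the last item: making a directory inside `.snap` snapshots the parent directory, and removing that directory deletes the snapshot. The sketch below assumes snapshots have been enabled as described in Section 1.2, “Limitations”, and takes the snapshot directory name as a parameter so that a name changed with `snapdirname` or `client_snapdir` also works. `create_snapshot()` and `SNAP_PATH_MAX` are hypothetical.

```c
#include <errno.h>
#include <stdio.h>
#include <sys/stat.h>
#include <sys/types.h>

#define SNAP_PATH_MAX 4096 /* hypothetical cap on the built path */

/* Hypothetical helper: snapshot `dir` as `name` by creating a directory
 * inside its hidden snapshot directory. `snapdir` is ".snap" unless the
 * mount uses snapdirname or client_snapdir. Assumes snapshots are enabled.
 * Returns 0 on success, or -1 with errno set. */
int create_snapshot(const char *dir, const char *snapdir, const char *name)
{
    char path[SNAP_PATH_MAX];
    int n = snprintf(path, sizeof path, "%s/%s/%s", dir, snapdir, name);
    if (n < 0 || (size_t)n >= sizeof path) {
        errno = ENAMETOOLONG;
        return -1;
    }
    /* mkdir() requires a mode argument. */
    return mkdir(path, 0755);
}
```

For example, `create_snapshot("/mnt/cephfs/projects", ".snap", "before-upgrade")` (hypothetical path and name) is equivalent to `mkdir /mnt/cephfs/projects/.snap/before-upgrade`; `rmdir` on the same path deletes the snapshot.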