Chapter 4. Operators

4.1. Cluster Operator

Use the Cluster Operator to deploy a Kafka cluster and other Kafka components.

The Cluster Operator is deployed using YAML installation files. For information on deploying the Cluster Operator, see Deploying the Cluster Operator.

For information on the deployment options available for Kafka, see Kafka Cluster configuration.

On OpenShift, a Kafka Connect deployment can incorporate a Source2Image feature to provide a convenient way to add additional connectors.

4.1.1. Cluster Operator

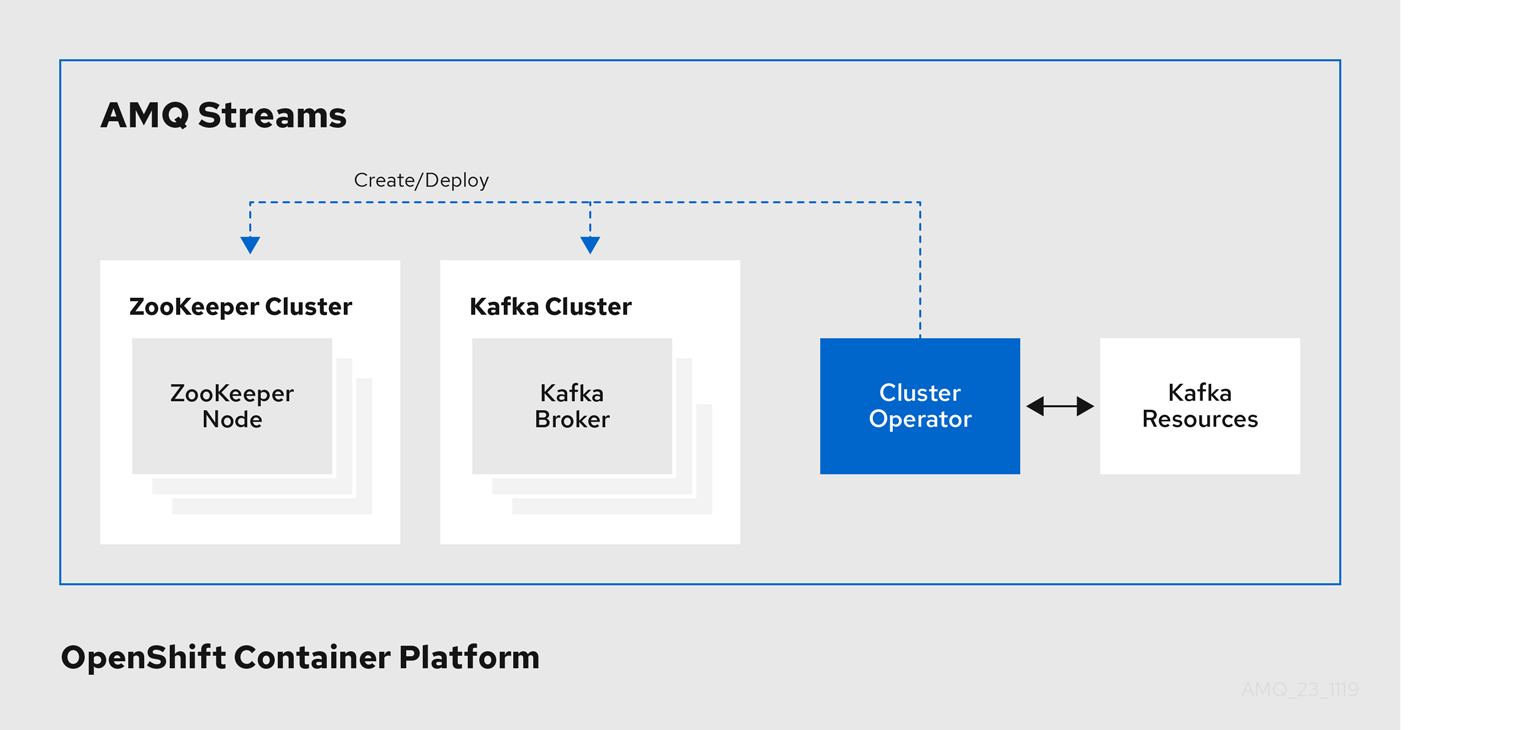

AMQ Streams uses the Cluster Operator to deploy and manage clusters for:

- Kafka (including ZooKeeper, Entity Operator, Kafka Exporter, and Cruise Control)

- Kafka Connect

- Kafka MirrorMaker

- Kafka Bridge

Custom resources are used to deploy the clusters.

For example, to deploy a Kafka cluster:

- A Kafka resource with the cluster configuration is created within the OpenShift cluster.

- The Cluster Operator deploys a corresponding Kafka cluster, based on what is declared in the Kafka resource.
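As an illustration of the first step, a minimal Kafka resource might look like the sketch below. The cluster name my-cluster, the replica counts, the listener selection, and the ephemeral storage type are assumptions chosen for brevity, not values prescribed by this chapter.

```yaml
# Minimal sketch of a Kafka custom resource (illustrative values only)
apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster          # assumed cluster name
spec:
  kafka:
    replicas: 3             # assumed broker count
    listeners:
      plain: {}
      tls: {}
    storage:
      type: ephemeral       # assumed; data is lost when pods restart
  zookeeper:
    replicas: 3             # assumed ZooKeeper node count
    storage:
      type: ephemeral
```

Once a resource like this is created, the Cluster Operator deploys the cluster it declares.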

The Cluster Operator can also deploy (through configuration of the Kafka resource):

- A Topic Operator to provide operator-style topic management through KafkaTopic custom resources

- A User Operator to provide operator-style user management through KafkaUser custom resources

The Topic Operator and User Operator function within the Entity Operator on deployment.
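A hedged sketch of how both operators might be enabled in the Kafka resource is shown below. The empty objects are an assumption that relies on default settings for each operator.

```yaml
# Fragment of a Kafka resource enabling the Entity Operator (sketch)
apiVersion: kafka.strimzi.io/v1beta1
kind: Kafka
metadata:
  name: my-cluster          # assumed cluster name
spec:
  # kafka and zookeeper sections...
  entityOperator:
    topicOperator: {}       # deploy the Topic Operator with default settings
    userOperator: {}        # deploy the User Operator with default settings
```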

Example architecture for the Cluster Operator

4.1.2. Reconciliation

The Cluster Operator reacts to all notifications about the desired cluster resources that it receives from the OpenShift cluster. However, if the operator is not running, or if a notification is not received for any reason, the desired resources get out of sync with the state of the running OpenShift cluster.

To handle failovers properly, the Cluster Operator runs a periodic reconciliation process that compares the state of the desired resources with the current cluster deployments and brings them back to a consistent state. You can set the time interval for the periodic reconciliations using the STRIMZI_FULL_RECONCILIATION_INTERVAL_MS variable.
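For example, the interval could be shortened in the env section of the Cluster Operator Deployment. This is a sketch: the value of 60000 ms is an arbitrary assumption, and the value is quoted because environment variable values are strings.

```yaml
# Fragment of the Cluster Operator container spec (sketch)
env:
  - name: STRIMZI_FULL_RECONCILIATION_INTERVAL_MS
    value: "60000"          # assumed value: reconcile every 60 seconds
```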

4.1.3. Cluster Operator Configuration

The Cluster Operator can be configured through the following supported environment variables:

STRIMZI_NAMESPACE: A comma-separated list of namespaces that the operator should operate in. When not set, set to empty string, or to *, the Cluster Operator will operate in all namespaces. The Cluster Operator deployment might use the OpenShift Downward API to set this automatically to the namespace the Cluster Operator is deployed in. See the example below:

    env:
      - name: STRIMZI_NAMESPACE
        valueFrom:
          fieldRef:
            fieldPath: metadata.namespace

STRIMZI_FULL_RECONCILIATION_INTERVAL_MS: Optional, default is 120000 ms. The interval between periodic reconciliations, in milliseconds.

STRIMZI_LOG_LEVEL: Optional, default INFO. The level for printing logging messages. The value can be set to: ERROR, WARNING, INFO, DEBUG, and TRACE.

STRIMZI_OPERATION_TIMEOUT_MS: Optional, default 300000 ms. The timeout for internal operations, in milliseconds. This value should be increased when using AMQ Streams on clusters where regular OpenShift operations take longer than usual (because of slow downloading of Docker images, for example).

STRIMZI_KAFKA_IMAGES: Required. This provides a mapping from Kafka version to the corresponding Docker image containing a Kafka broker of that version. The required syntax is whitespace or comma separated <version>=<image> pairs. For example 2.4.1=registry.redhat.io/amq7/amq-streams-kafka-24-rhel7:1.5.0, 2.5.0=registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. This is used when a Kafka.spec.kafka.version property is specified but not the Kafka.spec.kafka.image, as described in Section 3.1.19, “Container images”.

STRIMZI_DEFAULT_KAFKA_INIT_IMAGE: Optional, default registry.redhat.io/amq7/amq-streams-rhel7-operator:1.5.0. The image name to use as default for the init container started before the broker for initial configuration work (that is, rack support), if no image is specified as the kafka-init-image in the Section 3.1.19, “Container images”.

STRIMZI_DEFAULT_TLS_SIDECAR_KAFKA_IMAGE: Optional, default registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. The image name to use as the default when deploying the sidecar container which provides TLS support for Kafka, if no image is specified as the Kafka.spec.kafka.tlsSidecar.image in the Section 3.1.19, “Container images”.

STRIMZI_KAFKA_CONNECT_IMAGES: Required. This provides a mapping from the Kafka version to the corresponding Docker image containing a Kafka connect of that version. The required syntax is whitespace or comma separated <version>=<image> pairs. For example 2.4.1=registry.redhat.io/amq7/amq-streams-kafka-24-rhel7:1.5.0, 2.5.0=registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. This is used when a KafkaConnect.spec.version property is specified but not the KafkaConnect.spec.image, as described in Section 3.2.12, “Container images”.

STRIMZI_KAFKA_CONNECT_S2I_IMAGES: Required. This provides a mapping from the Kafka version to the corresponding Docker image containing a Kafka connect of that version. The required syntax is whitespace or comma separated <version>=<image> pairs. For example 2.4.1=registry.redhat.io/amq7/amq-streams-kafka-24-rhel7:1.5.0, 2.5.0=registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. This is used when a KafkaConnectS2I.spec.version property is specified but not the KafkaConnectS2I.spec.image, as described in Section 3.3.12, “Container images”.

STRIMZI_KAFKA_MIRROR_MAKER_IMAGES: Required. This provides a mapping from the Kafka version to the corresponding Docker image containing a Kafka mirror maker of that version. The required syntax is whitespace or comma separated <version>=<image> pairs. For example 2.4.1=registry.redhat.io/amq7/amq-streams-kafka-24-rhel7:1.5.0, 2.5.0=registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. This is used when a KafkaMirrorMaker.spec.version property is specified but not the KafkaMirrorMaker.spec.image, as described in Section 3.4.2.14, “Container images”.

STRIMZI_DEFAULT_TOPIC_OPERATOR_IMAGE: Optional, default registry.redhat.io/amq7/amq-streams-rhel7-operator:1.5.0. The image name to use as the default when deploying the topic operator, if no image is specified as the Kafka.spec.entityOperator.topicOperator.image in the Section 3.1.19, “Container images” of the Kafka resource.

STRIMZI_DEFAULT_USER_OPERATOR_IMAGE: Optional, default registry.redhat.io/amq7/amq-streams-rhel7-operator:1.5.0. The image name to use as the default when deploying the user operator, if no image is specified as the Kafka.spec.entityOperator.userOperator.image in the Section 3.1.19, “Container images” of the Kafka resource.

STRIMZI_DEFAULT_TLS_SIDECAR_ENTITY_OPERATOR_IMAGE: Optional, default registry.redhat.io/amq7/amq-streams-kafka-25-rhel7:1.5.0. The image name to use as the default when deploying the sidecar container which provides TLS support for the Entity Operator, if no image is specified as the Kafka.spec.entityOperator.tlsSidecar.image in the Section 3.1.19, “Container images”.

STRIMZI_IMAGE_PULL_POLICY: Optional. The ImagePullPolicy which will be applied to containers in all pods managed by AMQ Streams Cluster Operator. The valid values are Always, IfNotPresent, and Never. If not specified, the OpenShift defaults will be used. Changing the policy will result in a rolling update of all your Kafka, Kafka Connect, and Kafka MirrorMaker clusters.

STRIMZI_IMAGE_PULL_SECRETS: Optional. A comma-separated list of Secret names. The secrets referenced here contain the credentials to the container registries where the container images are pulled from. The secrets are used in the imagePullSecrets field for all Pods created by the Cluster Operator. Changing this list results in a rolling update of all your Kafka, Kafka Connect, and Kafka MirrorMaker clusters.

STRIMZI_KUBERNETES_VERSION: Optional. Overrides the OpenShift version information detected from the API server. See the example below:

    env:
      - name: STRIMZI_KUBERNETES_VERSION
        value: |
          major=1
          minor=16
          gitVersion=v1.16.2
          gitCommit=c97fe5036ef3df2967d086711e6c0c405941e14b
          gitTreeState=clean
          buildDate=2019-10-15T19:09:08Z
          goVersion=go1.12.10
          compiler=gc
          platform=linux/amd64

KUBERNETES_SERVICE_DNS_DOMAIN: Optional. Overrides the default OpenShift DNS domain name suffix.

By default, services assigned in the OpenShift cluster have a DNS domain name that uses the default suffix cluster.local.

For example, for broker kafka-0:

    <cluster-name>-kafka-0.<cluster-name>-kafka-brokers.<namespace>.svc.cluster.local

The DNS domain name is added to the Kafka broker certificates used for hostname verification.

If you are using a different DNS domain name suffix in your cluster, change the KUBERNETES_SERVICE_DNS_DOMAIN environment variable from the default to the one you are using in order to establish a connection with the Kafka brokers.
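A sketch of overriding the suffix in the Cluster Operator Deployment might look like this. The suffix cluster.example.com is a hypothetical value standing in for whatever your cluster actually uses.

```yaml
# Fragment of the Cluster Operator container spec (sketch)
env:
  - name: KUBERNETES_SERVICE_DNS_DOMAIN
    value: cluster.example.com   # hypothetical suffix replacing cluster.local
```

With this assumed setting, broker names would end in .svc.cluster.example.com instead of .svc.cluster.local.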

4.1.4. Role-Based Access Control (RBAC)

4.1.4.1. Provisioning Role-Based Access Control (RBAC) for the Cluster Operator

For the Cluster Operator to function it needs permission within the OpenShift cluster to interact with resources such as Kafka, KafkaConnect, and so on, as well as the managed resources, such as ConfigMaps, Pods, Deployments, StatefulSets, Services, and so on. Such permission is described in terms of OpenShift role-based access control (RBAC) resources:

- ServiceAccount,

- Role and ClusterRole,

- RoleBinding and ClusterRoleBinding.

In addition to running under its own ServiceAccount with a ClusterRoleBinding, the Cluster Operator manages some RBAC resources for the components that need access to OpenShift resources.

OpenShift also includes privilege escalation protections that prevent components operating under one ServiceAccount from granting other ServiceAccounts privileges that the granting ServiceAccount does not have. Because the Cluster Operator must be able to create the ClusterRoleBindings and RoleBindings needed by the resources it manages, the Cluster Operator must also have those same privileges.

4.1.4.2. Delegated privileges

When the Cluster Operator deploys resources for a desired Kafka resource it also creates ServiceAccounts, RoleBindings, and ClusterRoleBindings, as follows:

- The Kafka broker pods use a ServiceAccount called cluster-name-kafka

  - When the rack feature is used, the strimzi-cluster-name-kafka-init ClusterRoleBinding is used to grant this ServiceAccount access to the nodes within the cluster via a ClusterRole called strimzi-kafka-broker

  - When the rack feature is not used no binding is created

- The ZooKeeper pods use a ServiceAccount called cluster-name-zookeeper

- The Entity Operator pod uses a ServiceAccount called cluster-name-entity-operator

  - The Topic Operator produces OpenShift events with status information, so the ServiceAccount is bound to a ClusterRole called strimzi-entity-operator which grants this access via the strimzi-entity-operator RoleBinding

- The pods for KafkaConnect and KafkaConnectS2I resources use a ServiceAccount called cluster-name-cluster-connect

- The pods for KafkaMirrorMaker use a ServiceAccount called cluster-name-mirror-maker

- The pods for KafkaBridge use a ServiceAccount called cluster-name-bridge
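To make the delegation concrete, the sketch below shows roughly what the RoleBinding for the Entity Operator could look like for a cluster named my-cluster in the namespace myproject. Both names are assumptions, and the object that the Cluster Operator actually generates may carry additional labels or metadata that are not shown here.

```yaml
# Sketch of a delegated RoleBinding (illustrative; generated by the operator, not written by hand)
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: strimzi-entity-operator
  namespace: myproject                   # assumed namespace
subjects:
  - kind: ServiceAccount
    name: my-cluster-entity-operator     # cluster-name-entity-operator, assuming cluster "my-cluster"
    namespace: myproject
roleRef:
  kind: ClusterRole
  name: strimzi-entity-operator
  apiGroup: rbac.authorization.k8s.io
```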

4.1.4.3. ServiceAccount

The Cluster Operator is best run using a ServiceAccount:

Example ServiceAccount for the Cluster Operator

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      name: strimzi-cluster-operator
      labels:
        app: strimzi

The Deployment of the operator then needs to specify this in its spec.template.spec.serviceAccountName:

Partial example of Deployment for the Cluster Operator

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: strimzi-cluster-operator
      labels:
        app: strimzi
    spec:
      replicas: 1
      selector:
        matchLabels:
          name: strimzi-cluster-operator
          strimzi.io/kind: cluster-operator
      template:
        # ...

The strimzi-cluster-operator ServiceAccount is specified as the serviceAccountName in the pod template (spec.template.spec), which is elided from this partial example.
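Because the partial example elides the pod template, the following sketch shows where serviceAccountName might sit. The container name and image are assumptions (the image is taken from the operator image defaults listed in the configuration section), and a real Deployment contains further settings.

```yaml
# Sketch of the elided pod template in the Cluster Operator Deployment
spec:
  template:
    metadata:
      labels:
        name: strimzi-cluster-operator
        strimzi.io/kind: cluster-operator
    spec:
      serviceAccountName: strimzi-cluster-operator   # the ServiceAccount defined above
      containers:
        - name: strimzi-cluster-operator             # assumed container name
          image: registry.redhat.io/amq7/amq-streams-rhel7-operator:1.5.0   # assumed image
```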

4.1.4.4. ClusterRoles

The Cluster Operator needs to operate using ClusterRoles that give access to the necessary resources. Depending on the OpenShift cluster setup, a cluster administrator might be needed to create the ClusterRoles.

Cluster administrator rights are only needed for the creation of the ClusterRoles. The Cluster Operator will not run under the cluster admin account.

The ClusterRoles follow the principle of least privilege and contain only those privileges needed by the Cluster Operator to operate Kafka, Kafka Connect, and ZooKeeper clusters. The first set of assigned privileges allows the Cluster Operator to manage OpenShift resources such as StatefulSets, Deployments, Pods, and ConfigMaps.

The Cluster Operator uses ClusterRoles to grant permission at the namespace-scoped resources level and cluster-scoped resources level:

ClusterRole with namespaced resources for the Cluster Operator

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: strimzi-cluster-operator-namespaced
      labels:
        app: strimzi
    rules:
      - apiGroups:
          - ""
        resources:
          # The cluster operator needs to access and manage service accounts to grant Strimzi components cluster permissions
          - serviceaccounts
        verbs:
          - get
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - "rbac.authorization.k8s.io"
        resources:
          # The cluster operator needs to access and manage rolebindings to grant Strimzi components cluster permissions
          - rolebindings
        verbs:
          - get
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - ""
        resources:
          # The cluster operator needs to access and manage config maps for Strimzi components configuration
          - configmaps
          # The cluster operator needs to access and manage services to expose Strimzi components to network traffic
          - services
          # The cluster operator needs to access and manage secrets to handle credentials
          - secrets
          # The cluster operator needs to access and manage persistent volume claims to bind them to Strimzi components for persistent data
          - persistentvolumeclaims
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - "kafka.strimzi.io"
        resources:
          # The cluster operator runs the KafkaAssemblyOperator, which needs to access and manage Kafka resources
          - kafkas
          - kafkas/status
          # The cluster operator runs the KafkaConnectAssemblyOperator, which needs to access and manage KafkaConnect resources
          - kafkaconnects
          - kafkaconnects/status
          # The cluster operator runs the KafkaConnectS2IAssemblyOperator, which needs to access and manage KafkaConnectS2I resources
          - kafkaconnects2is
          - kafkaconnects2is/status
          # The cluster operator runs the KafkaConnectorAssemblyOperator, which needs to access and manage KafkaConnector resources
          - kafkaconnectors
          - kafkaconnectors/status
          # The cluster operator runs the KafkaMirrorMakerAssemblyOperator, which needs to access and manage KafkaMirrorMaker resources
          - kafkamirrormakers
          - kafkamirrormakers/status
          # The cluster operator runs the KafkaBridgeAssemblyOperator, which needs to access and manage BridgeMaker resources
          - kafkabridges
          - kafkabridges/status
          # The cluster operator runs the KafkaMirrorMaker2AssemblyOperator, which needs to access and manage KafkaMirrorMaker2 resources
          - kafkamirrormaker2s
          - kafkamirrormaker2s/status
          # The cluster operator runs the KafkaRebalanceAssemblyOperator, which needs to access and manage KafkaRebalance resources
          - kafkarebalances
          - kafkarebalances/status
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - ""
        resources:
          # The cluster operator needs to access and delete pods, this is to allow it to monitor pod health and coordinate rolling updates
          - pods
        verbs:
          - get
          - list
          - watch
          - delete
      - apiGroups:
          - ""
        resources:
          - endpoints
        verbs:
          - get
          - list
          - watch
      - apiGroups:
          # The cluster operator needs the extensions api as the operator supports Kubernetes version 1.11+
          # apps/v1 was introduced in Kubernetes 1.14
          - "extensions"
        resources:
          # The cluster operator needs to access and manage deployments to run deployment based Strimzi components
          - deployments
          - deployments/scale
          # The cluster operator needs to access replica sets to manage Strimzi components and to determine error states
          - replicasets
          # The cluster operator needs to access and manage replication controllers to manage replicasets
          - replicationcontrollers
          # The cluster operator needs to access and manage network policies to lock down communication between Strimzi components
          - networkpolicies
          # The cluster operator needs to access and manage ingresses which allow external access to the services in a cluster
          - ingresses
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - "apps"
        resources:
          # The cluster operator needs to access and manage deployments to run deployment based Strimzi components
          - deployments
          - deployments/scale
          - deployments/status
          # The cluster operator needs to access and manage stateful sets to run stateful sets based Strimzi components
          - statefulsets
          # The cluster operator needs to access replica-sets to manage Strimzi components and to determine error states
          - replicasets
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - ""
        resources:
          # The cluster operator needs to be able to create events and delegate permissions to do so
          - events
        verbs:
          - create
      - apiGroups:
          # OpenShift S2I requirements
          - apps.openshift.io
        resources:
          - deploymentconfigs
          - deploymentconfigs/scale
          - deploymentconfigs/status
          - deploymentconfigs/finalizers
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          # OpenShift S2I requirements
          - build.openshift.io
        resources:
          - buildconfigs
          - builds
        verbs:
          - create
          - delete
          - get
          - list
          - patch
          - watch
          - update
      - apiGroups:
          # OpenShift S2I requirements
          - image.openshift.io
        resources:
          - imagestreams
          - imagestreams/status
        verbs:
          - create
          - delete
          - get
          - list
          - watch
          - patch
          - update
      - apiGroups:
          - networking.k8s.io
        resources:
          # The cluster operator needs to access and manage network policies to lock down communication between Strimzi components
          - networkpolicies
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - route.openshift.io
        resources:
          # The cluster operator needs to access and manage routes to expose Strimzi components for external access
          - routes
          - routes/custom-host
        verbs:
          - get
          - list
          - create
          - delete
          - patch
          - update
      - apiGroups:
          - policy
        resources:
          # The cluster operator needs to access and manage pod disruption budgets this limits the number of concurrent disruptions
          # that a Strimzi component experiences, allowing for higher availability
          - poddisruptionbudgets
        verbs:
          - get
          - list
          - watch
          - create
          - delete
          - patch
          - update

The second includes the permissions needed for cluster-scoped resources.

ClusterRole with cluster-scoped resources for the Cluster Operator

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: strimzi-cluster-operator-global
      labels:
        app: strimzi
    rules:
      - apiGroups:
          - "rbac.authorization.k8s.io"
        resources:
          # The cluster operator needs to create and manage cluster role bindings in the case of an install where a user
          # has specified they want their cluster role bindings generated
          - clusterrolebindings
        verbs:
          - get
          - create
          - delete
          - patch
          - update
          - watch
      - apiGroups:
          - storage.k8s.io
        resources:
          # The cluster operator requires "get" permissions to view storage class details
          # This is because only a persistent volume of a supported storage class type can be resized
          - storageclasses
        verbs:
          - get
      - apiGroups:
          - ""
        resources:
          # The cluster operator requires "list" permissions to view all nodes in a cluster
          # The listing is used to determine the node addresses when NodePort access is configured
          # These addresses are then exposed in the custom resource states
          - nodes
        verbs:
          - list

The strimzi-kafka-broker ClusterRole represents the access needed by the init container in Kafka pods that is used for the rack feature. As described in the Delegated privileges section, this role is also needed by the Cluster Operator in order to be able to delegate this access.

ClusterRole for the Cluster Operator allowing it to delegate access to OpenShift nodes to the Kafka broker pods

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: strimzi-kafka-broker
      labels:
        app: strimzi
    rules:
      - apiGroups:
          - ""
        resources:
          # The Kafka Brokers require "get" permissions to view the node they are on
          # This information is used to generate a Rack ID that is used for High Availability configurations
          - nodes
        verbs:
          - get

The strimzi-entity-operator ClusterRole represents the access needed by the Topic Operator. As described in the Delegated privileges section, this role is also needed by the Cluster Operator in order to be able to delegate this access.

ClusterRole for the Cluster Operator allowing it to delegate access to events to the Topic Operator

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      name: strimzi-entity-operator
      labels:
        app: strimzi
    rules:
      - apiGroups:
          - "kafka.strimzi.io"
        resources:
          # The entity operator runs the KafkaTopic assembly operator, which needs to access and manage KafkaTopic resources
          - kafkatopics
          - kafkatopics/status
          # The entity operator runs the KafkaUser assembly operator, which needs to access and manage KafkaUser resources
          - kafkausers
          - kafkausers/status
        verbs:
          - get
          - list
          - watch
          - create
          - patch
          - update
          - delete
      - apiGroups:
          - ""
        resources:
          - events
        verbs:
          # The entity operator needs to be able to create events
          - create
      - apiGroups:
          - ""
        resources:
          # The entity operator user-operator needs to access and manage secrets to store generated credentials
          - secrets
        verbs:
          - get
          - list
          - create
          - patch
          - update
          - delete

4.1.4.5. ClusterRoleBindings

The operator needs ClusterRoleBindings and RoleBindings that associate its ClusterRoles with its ServiceAccount. ClusterRoleBindings are needed for ClusterRoles containing cluster-scoped resources.

Example ClusterRoleBinding for the Cluster Operator

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: strimzi-cluster-operator
      labels:
        app: strimzi
    subjects:
      - kind: ServiceAccount
        name: strimzi-cluster-operator
        namespace: myproject
    roleRef:
      kind: ClusterRole
      name: strimzi-cluster-operator-global
      apiGroup: rbac.authorization.k8s.io

ClusterRoleBindings are also needed for the ClusterRoles needed for delegation:

Example ClusterRoleBinding for the Cluster Operator to delegate access

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: strimzi-cluster-operator-kafka-broker-delegation
      labels:
        app: strimzi
    # The Kafka broker cluster role must be bound to the cluster operator service account so that it can delegate the cluster role to the Kafka brokers.
    # This must be done to avoid escalating privileges which would be blocked by Kubernetes.
    subjects:
      - kind: ServiceAccount
        name: strimzi-cluster-operator
        namespace: myproject
    roleRef:
      kind: ClusterRole
      name: strimzi-kafka-broker
      apiGroup: rbac.authorization.k8s.io

ClusterRoles containing only namespaced resources are bound using RoleBindings only.

    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: strimzi-cluster-operator
      labels:
        app: strimzi
    subjects:
      - kind: ServiceAccount
        name: strimzi-cluster-operator
        namespace: myproject
    roleRef:
      kind: ClusterRole
      name: strimzi-cluster-operator-namespaced
      apiGroup: rbac.authorization.k8s.io
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      name: strimzi-cluster-operator-entity-operator-delegation
      labels:
        app: strimzi
    # The Entity Operator cluster role must be bound to the cluster operator service account so that it can delegate the cluster role to the Entity Operator.
    # This must be done to avoid escalating privileges which would be blocked by Kubernetes.
    subjects:
      - kind: ServiceAccount
        name: strimzi-cluster-operator
        namespace: myproject
    roleRef:
      kind: ClusterRole
      name: strimzi-entity-operator
      apiGroup: rbac.authorization.k8s.io

4.2. Topic Operator

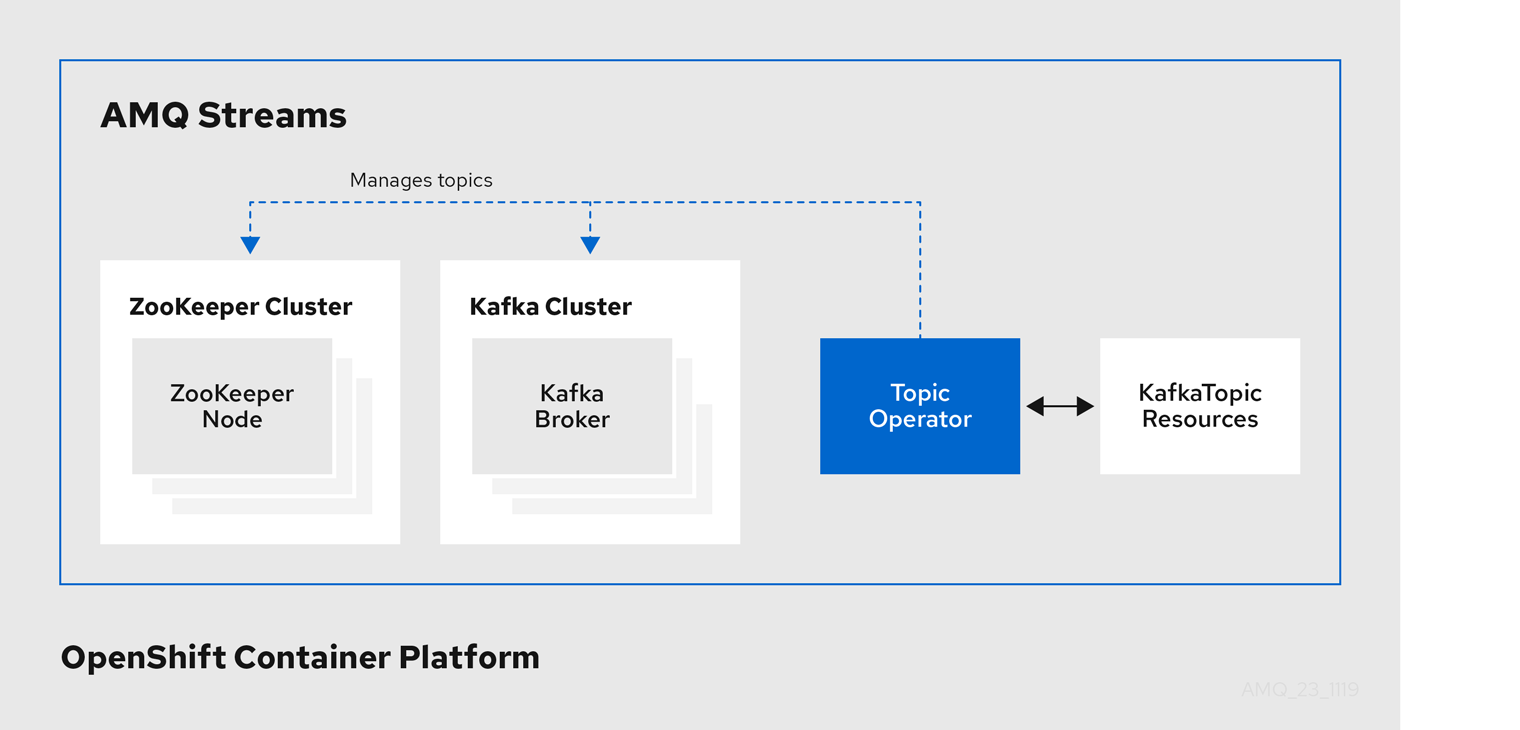

The Topic Operator manages Kafka topics through custom resources.

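The Cluster Operator can deploy the Topic Operator through the entityOperator configuration of the Kafka resource, as described in Section 4.1.1, “Cluster Operator”.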

4.2.1. Topic Operator

The Topic Operator provides a way of managing topics in a Kafka cluster through OpenShift resources.

Example architecture for the Topic Operator

The role of the Topic Operator is to keep a set of KafkaTopic OpenShift resources describing Kafka topics in sync with the corresponding Kafka topics.

Specifically, if a KafkaTopic is:

- Created, the Topic Operator creates the topic

- Deleted, the Topic Operator deletes the topic

- Changed, the Topic Operator updates the topic

Working in the other direction, if a topic is:

- Created within the Kafka cluster, the Operator creates a KafkaTopic

- Deleted from the Kafka cluster, the Operator deletes the KafkaTopic

- Changed in the Kafka cluster, the Operator updates the KafkaTopic

This allows you to declare a KafkaTopic as part of your application’s deployment and the Topic Operator will take care of creating the topic for you. Your application just needs to deal with producing or consuming from the necessary topics.

If the topic is reconfigured or reassigned to different Kafka nodes, the KafkaTopic will always be up to date.

4.2.2. Identifying a Kafka cluster for topic handling

A KafkaTopic resource includes a label that defines the appropriate name of the Kafka cluster (derived from the name of the Kafka resource) to which it belongs.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: KafkaTopic
    metadata:
      name: my-topic
      labels:
        strimzi.io/cluster: my-cluster

The label is used by the Topic Operator to identify the KafkaTopic resource and create a new topic, and also in subsequent handling of the topic.

If the label does not match the Kafka cluster, the Topic Operator cannot identify the KafkaTopic and the topic is not created.
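A fuller sketch of a KafkaTopic, including a spec, might look like the following. The partition count, replica count, and retention setting are assumed values for illustration only.

```yaml
# Sketch of a KafkaTopic with a spec (illustrative values)
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaTopic
metadata:
  name: my-topic
  labels:
    strimzi.io/cluster: my-cluster   # must match the name of the Kafka resource
spec:
  partitions: 3                      # assumed partition count
  replicas: 3                        # assumed replication factor
  config:
    retention.ms: 604800000          # assumed topic config: keep messages for 7 days
```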

4.2.3. Understanding the Topic Operator

A fundamental problem that the operator has to solve is that there is no single source of truth: Both the KafkaTopic resource and the topic within Kafka can be modified independently of the operator. Complicating this, the Topic Operator might not always be able to observe changes at each end in real time (for example, the operator might be down).

To resolve this, the operator maintains its own private copy of the information about each topic. When a change happens either in the Kafka cluster, or in OpenShift, it looks at both the state of the other system and at its private copy in order to determine what needs to change to keep everything in sync. The same thing happens whenever the operator starts, and periodically while it is running.

For example, suppose the Topic Operator is not running, and a KafkaTopic my-topic gets created. When the operator starts it will lack a private copy of "my-topic", so it can infer that the KafkaTopic has been created since it was last running. The operator will create the topic corresponding to "my-topic" and also store a private copy of the metadata for "my-topic".

The private copy allows the operator to cope with scenarios where the topic configuration gets changed both in Kafka and in OpenShift, so long as the changes are not incompatible (for example, both changing the same topic config key, but to different values). In the case of incompatible changes, the Kafka configuration wins, and the KafkaTopic will be updated to reflect that.

The private copy is held in the same ZooKeeper ensemble used by Kafka itself. This mitigates availability concerns, because if ZooKeeper is not running then Kafka itself cannot run, so the operator will be no less available than it would be if it were stateless.

4.2.4. Configuring the Topic Operator with resource requests and limits

You can allocate resources, such as CPU and memory, to the Topic Operator and set a limit on the amount of resources it can consume.

Prerequisites

- The Cluster Operator is running.

Procedure

Update the Kafka cluster configuration in an editor, as required. Use oc edit:

    oc edit kafka my-cluster

In the spec.entityOperator.topicOperator.resources property in the Kafka resource, set the resource requests and limits for the Topic Operator.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: Kafka
    spec:
      # kafka and zookeeper sections...
      entityOperator:
        topicOperator:
          resources:
            requests:
              cpu: "1"
              memory: 500Mi
            limits:
              cpu: "1"
              memory: 500Mi

Apply the new configuration to create or update the resource. Use oc apply:

    oc apply -f kafka.yaml

Additional resources

- For more information about the schema of the resources object, see the Kubernetes API reference for ResourceRequirements.

4.3. User Operator

The User Operator manages Kafka users through custom resources.

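The Cluster Operator can deploy the User Operator through the entityOperator configuration of the Kafka resource, as described in Section 4.1.1, “Cluster Operator”.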

4.3.1. User Operator

The User Operator manages Kafka users for a Kafka cluster by watching for KafkaUser resources that describe Kafka users, and ensuring that they are configured properly in the Kafka cluster.

For example, if a KafkaUser is:

- Created, the User Operator creates the user it describes

- Deleted, the User Operator deletes the user it describes

- Changed, the User Operator updates the user it describes

Unlike the Topic Operator, the User Operator does not sync any changes from the Kafka cluster with the OpenShift resources. Kafka topics can be created by applications directly in Kafka, but it is not expected that the users will be managed directly in the Kafka cluster in parallel with the User Operator.

The User Operator allows you to declare a KafkaUser resource as part of your application’s deployment. You can specify the authentication and authorization mechanism for the user. You can also configure user quotas that control usage of Kafka resources to ensure, for example, that a user does not monopolize access to a broker.

When the user is created, the user credentials are created in a Secret. Your application needs to use the user and its credentials for authentication and to produce or consume messages.

In addition to managing credentials for authentication, the User Operator also manages authorization rules by including a description of the user’s access rights in the KafkaUser declaration.
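The sketch below shows how authentication, authorization rules, and quotas might be declared together in one KafkaUser. The TLS authentication type, the single read ACL on a topic named my-topic, and all quota values are assumptions for illustration. The strimzi.io/cluster label ties the user to the Kafka cluster named my-cluster.

```yaml
# Sketch of a KafkaUser with authentication, authorization, and quotas (illustrative values)
apiVersion: kafka.strimzi.io/v1beta1
kind: KafkaUser
metadata:
  name: my-user
  labels:
    strimzi.io/cluster: my-cluster
spec:
  authentication:
    type: tls                      # assumed mechanism
  authorization:
    type: simple
    acls:
      - resource:
          type: topic
          name: my-topic           # assumed topic name
          patternType: literal
        operation: Read
        host: "*"
  quotas:
    producerByteRate: 1048576      # assumed limit, bytes per second
    consumerByteRate: 2097152      # assumed limit, bytes per second
    requestPercentage: 55          # assumed CPU utilization limit
```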

4.3.2. Identifying a Kafka cluster for user handling

A KafkaUser resource includes a label that defines the appropriate name of the Kafka cluster (derived from the name of the Kafka resource) to which it belongs.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: KafkaUser
    metadata:
      name: my-user
      labels:
        strimzi.io/cluster: my-cluster

The label is used by the User Operator to identify the KafkaUser resource and create a new user, and also in subsequent handling of the user.

If the label does not match the Kafka cluster, the User Operator cannot identify the KafkaUser and the user is not created.

4.3.3. Configuring the User Operator with resource requests and limits

You can allocate resources, such as CPU and memory, to the User Operator and set a limit on the amount of resources it can consume.

Prerequisites

- The Cluster Operator is running.

Procedure

Update the Kafka cluster configuration in an editor, as required:

    oc edit kafka my-cluster

In the spec.entityOperator.userOperator.resources property in the Kafka resource, set the resource requests and limits for the User Operator.

    apiVersion: kafka.strimzi.io/v1beta1
    kind: Kafka
    spec:
      # kafka and zookeeper sections...
      entityOperator:
        userOperator:
          resources:
            requests:
              cpu: "1"
              memory: 500Mi
            limits:
              cpu: "1"
              memory: 500Mi

Save the file and exit the editor. The Cluster Operator will apply the changes automatically.

Additional resources

- For more information about the schema of the resources object, see the Kubernetes API reference for ResourceRequirements.

4.4. Monitoring Operators

4.4.1. Prometheus metrics

AMQ Streams operators expose Prometheus metrics. The metrics are automatically enabled and contain information about:

- Number of reconciliations

- Number of Custom Resources the operator is processing

- Duration of reconciliations

- JVM metrics from the operators

Additionally, we provide an example Grafana dashboard.

For more information about Prometheus, see Introducing Metrics to Kafka.