Operators

Working with Operators in OpenShift Container Platform

Abstract

Chapter 1. Understanding Operators

Conceptually, Operators take human operational knowledge and encode it into software that is more easily shared with consumers.

Operators are pieces of software that ease the operational complexity of running another piece of software. They act like an extension of the software vendor’s engineering team, watching over a Kubernetes environment (such as OpenShift Container Platform) and using its current state to make decisions in real time. Advanced Operators are designed to handle upgrades seamlessly, react to failures automatically, and not take shortcuts, like skipping a software backup process to save time.

More technically, Operators are a method of packaging, deploying, and managing a Kubernetes application.

A Kubernetes application is an app that is both deployed on Kubernetes and managed using the Kubernetes APIs and kubectl or oc tooling. To be able to make the most of Kubernetes, you require a set of cohesive APIs to extend in order to service and manage your apps that run on Kubernetes. Think of Operators as the runtime that manages this type of app on Kubernetes.

1.1. Why use Operators?

Operators provide:

- Repeatability of installation and upgrade.

- Constant health checks of every system component.

- Over-the-air (OTA) updates for OpenShift components and ISV content.

- A place to encapsulate knowledge from field engineers and spread it to all users, not just one or two.

- Why deploy on Kubernetes?

- Kubernetes (and by extension, OpenShift Container Platform) contains all of the primitives needed to build complex distributed systems – secret handling, load balancing, service discovery, autoscaling – that work across on-premise and cloud providers.

- Why manage your app with Kubernetes APIs and kubectl tooling?

- These APIs are feature rich, have clients for all platforms, and plug into the cluster’s access control/auditing. An Operator uses the Kubernetes extension mechanism, Custom Resource Definitions (CRDs), so your custom object, for example MongoDB, looks and acts just like the built-in, native Kubernetes objects.

- How do Operators compare with Service Brokers?

- A Service Broker is a step towards programmatic discovery and deployment of an app. However, because it is not a long running process, it cannot execute Day 2 operations like upgrade, failover, or scaling. Customizations and parameterization of tunables are provided at install time, versus an Operator that is constantly watching your cluster’s current state. Off-cluster services continue to be a good match for a Service Broker, although Operators exist for these as well.

1.2. Operator Framework

The Operator Framework is a family of tools and capabilities to deliver on the customer experience described above. It is not just about writing code; testing, delivering, and updating Operators is just as important. The Operator Framework components consist of open source tools to tackle these problems:

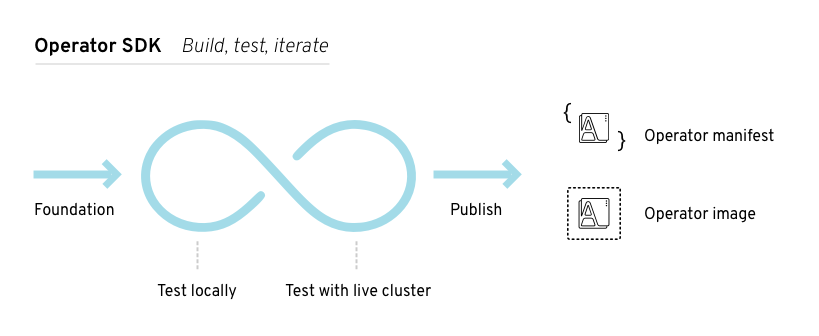

- Operator SDK

- The Operator SDK assists Operator authors in bootstrapping, building, testing, and packaging their own Operator based on their expertise without requiring knowledge of Kubernetes API complexities.

- Operator Lifecycle Manager

- The Operator Lifecycle Manager (OLM) controls the installation, upgrade, and role-based access control (RBAC) of Operators in a cluster. It is deployed by default in OpenShift Container Platform 4.4.

- Operator Registry

- The Operator Registry stores ClusterServiceVersions (CSVs) and Custom Resource Definitions (CRDs) for creation in a cluster and stores Operator metadata about packages and channels. It runs in a Kubernetes or OpenShift cluster to provide this Operator catalog data to the OLM.

- OperatorHub

- The OperatorHub is a web console for cluster administrators to discover and select Operators to install on their cluster. It is deployed by default in OpenShift Container Platform.

- Operator Metering

- Operator Metering collects operational metrics about Operators on the cluster for Day 2 management and aggregating usage metrics.

These tools are designed to be composable, so you can use any that are useful to you.

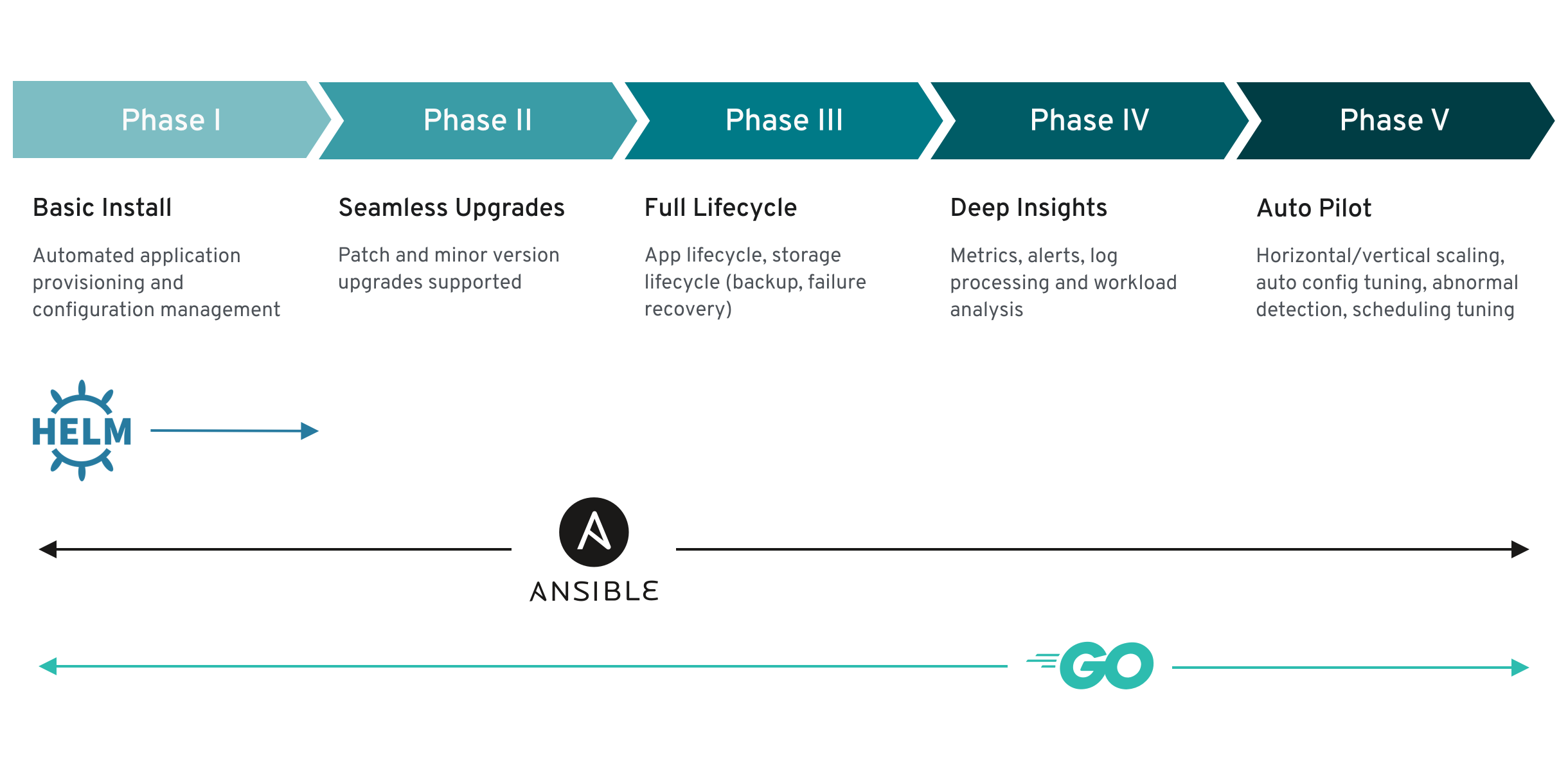

1.3. Operator maturity model

The level of sophistication of the management logic encapsulated within an Operator can vary. This logic is also in general highly dependent on the type of the service represented by the Operator.

One can, however, generalize the scale of the maturity of an Operator’s encapsulated operations for a certain set of capabilities that most Operators can include. To this end, the following Operator Maturity model defines five phases of maturity for generic day two operations of an Operator:

Figure 1.1. Operator maturity model

The above model also shows how these capabilities can best be developed through the Operator SDK’s Helm, Go, and Ansible capabilities.

Chapter 2. Understanding the Operator Lifecycle Manager (OLM)

2.1. Operator Lifecycle Manager workflow and architecture

This guide outlines the concepts and architecture of the Operator Lifecycle Manager (OLM) in OpenShift Container Platform.

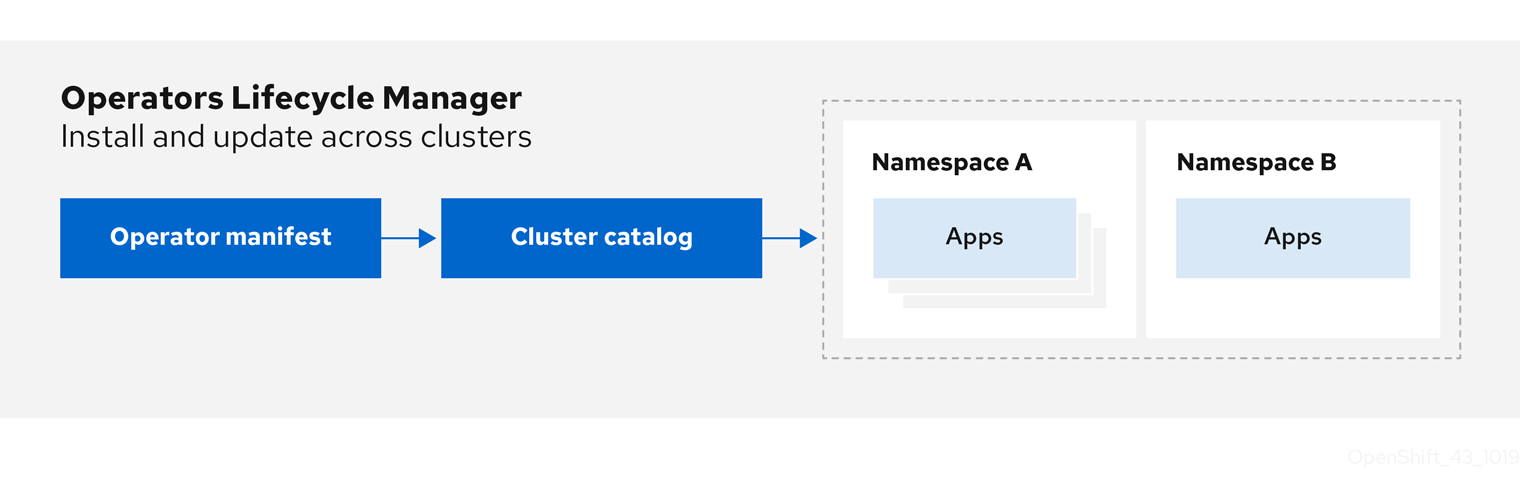

2.1.1. Overview of the Operator Lifecycle Manager

In OpenShift Container Platform 4.4, the Operator Lifecycle Manager (OLM) helps users install, update, and manage the lifecycle of all Operators and their associated services running across their clusters. It is part of the Operator Framework, an open source toolkit designed to manage Kubernetes native applications (Operators) in an effective, automated, and scalable way.

Figure 2.1. Operator Lifecycle Manager workflow

The OLM runs by default in OpenShift Container Platform 4.4, which aids cluster administrators in installing, upgrading, and granting access to Operators running on their cluster. The OpenShift Container Platform web console provides management screens for cluster administrators to install Operators, as well as grant specific projects access to use the catalog of Operators available on the cluster.

For developers, a self-service experience allows provisioning and configuring instances of databases, monitoring, and big data services without having to be subject matter experts, because the Operator has that knowledge baked into it.

2.1.2. ClusterServiceVersions (CSVs)

A ClusterServiceVersion (CSV) is a YAML manifest created from Operator metadata that assists the Operator Lifecycle Manager (OLM) in running the Operator in a cluster.

A CSV is the metadata that accompanies an Operator container image, used to populate user interfaces with information like its logo, description, and version. It is also a source of technical information needed to run the Operator, like the RBAC rules it requires and which Custom Resources (CRs) it manages or depends on.

A CSV is composed of:

- Metadata
  - Application metadata: name, description, version (semver compliant), links, labels, icon, etc.
- Install strategy
  - Type: Deployment
    - Set of service accounts and required permissions
    - Set of Deployments
- CRDs
  - Type
  - Owned: Managed by this service
  - Required: Must exist in the cluster for this service to run
  - Resources: A list of resources that the Operator interacts with
  - Descriptors: Annotate CRD spec and status fields to provide semantic information

2.1.3. Operator installation and upgrade workflow in OLM

In the Operator Lifecycle Manager (OLM) ecosystem, the following resources are used to resolve Operator installations and upgrades:

- ClusterServiceVersion (CSV)

- CatalogSource

- Subscription

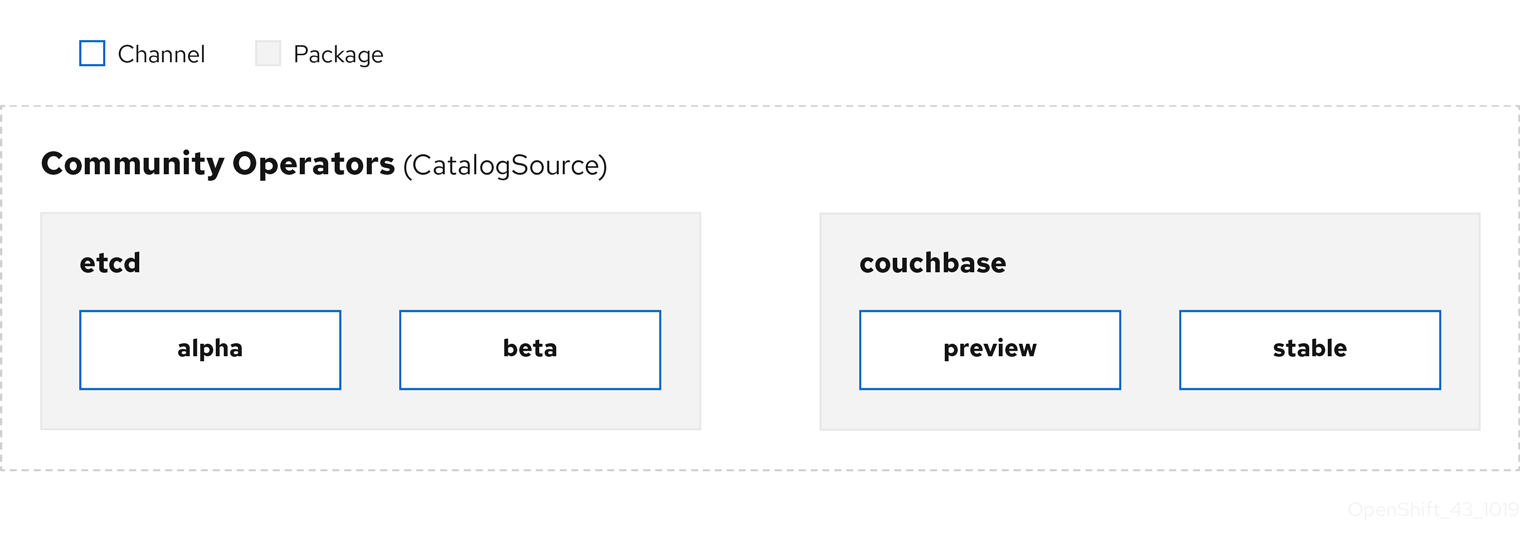

Operator metadata, defined in CSVs, can be stored in a collection called a CatalogSource. OLM uses CatalogSources, which use the Operator Registry API, to query for available Operators as well as upgrades for installed Operators.

Figure 2.2. CatalogSource overview

Within a CatalogSource, Operators are organized into packages and streams of updates called channels, which should be a familiar update pattern from OpenShift Container Platform or other software on a continuous release cycle like web browsers.

Figure 2.3. Packages and channels in a CatalogSource

A user indicates a particular package and channel in a particular CatalogSource in a Subscription, for example an etcd package and its alpha channel. If a Subscription is made to a package that has not yet been installed in the namespace, the latest Operator for that package is installed.

OLM deliberately avoids version comparisons, so the "latest" or "newest" Operator available from a given catalog → channel → package path does not necessarily need to be the highest version number. It should be thought of more as the head reference of a channel, similar to a Git repository.

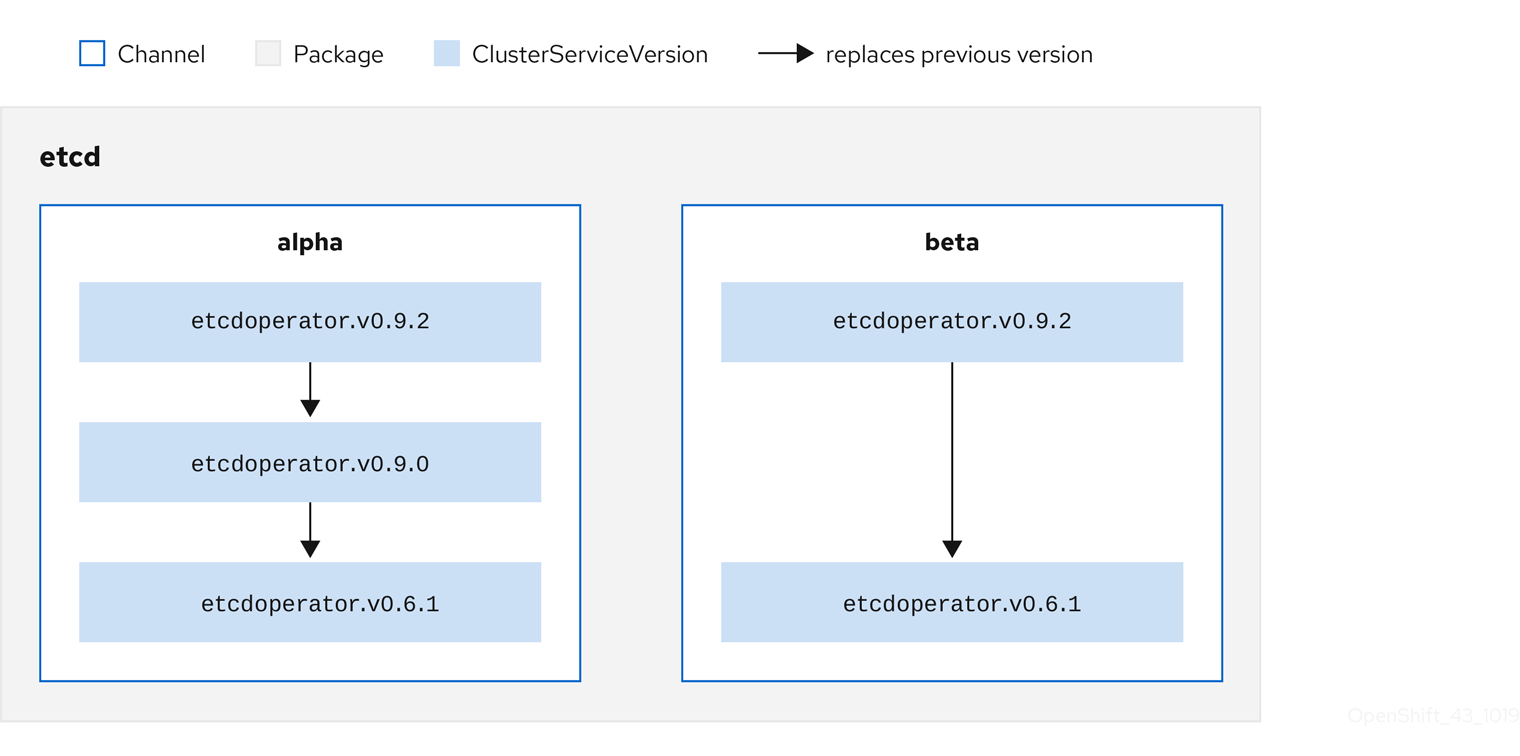

Each CSV has a replaces parameter that indicates which Operator it replaces. This builds a graph of CSVs that can be queried by OLM, and updates can be shared between channels. Channels can be thought of as entry points into the graph of updates:

Figure 2.4. OLM’s graph of available channel updates

For example:

Channels in a package

    packageName: example
    channels:
    - name: alpha
      currentCSV: example.v0.1.2
    - name: beta
      currentCSV: example.v0.1.3
    defaultChannel: alpha

For OLM to successfully query for updates, given a CatalogSource, package, channel, and CSV, a catalog must be able to return, unambiguously and deterministically, a single CSV that replaces the input CSV.

2.1.3.1. Example upgrade path

For an example upgrade scenario, consider an installed Operator corresponding to CSV version 0.1.1. OLM queries the CatalogSource and detects an upgrade in the subscribed channel with new CSV version 0.1.3 that replaces an older but not-installed CSV version 0.1.2, which in turn replaces the older and installed CSV version 0.1.1.

OLM walks back from the channel head to previous versions via the replaces field specified in the CSVs to determine the upgrade path 0.1.3 → 0.1.2 → 0.1.1; the direction of the arrow indicates that the former replaces the latter. OLM upgrades the Operator one version at a time until it reaches the channel head.

For this given scenario, OLM installs Operator version 0.1.2 to replace the existing Operator version 0.1.1. Then, it installs Operator version 0.1.3 to replace the previously installed Operator version 0.1.2. At this point, the installed Operator version 0.1.3 matches the channel head and the upgrade is completed.
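The walk described above can be sketched in Python. This is a minimal illustration under stated assumptions, not OLM's implementation: the catalog is modeled as a plain dictionary, and the `replaces` mapping, the `example.v*` names, and the `upgrade_path` helper are hypothetical.

```python
def upgrade_path(replaces, channel_head, installed):
    """Return the CSVs to install, in order, to move from `installed` to `channel_head`.

    `replaces` maps each CSV name to the CSV name it replaces (or None).
    """
    path = []
    seen = set()
    current = channel_head
    # Walk back from the channel head until the installed CSV is reached.
    while current is not None and current != installed:
        if current in seen:
            raise ValueError(f"cycle detected at {current}")
        seen.add(current)
        path.append(current)
        current = replaces.get(current)
    if current != installed:
        raise ValueError(f"{installed} is not reachable from {channel_head}")
    # The walk ran newest-to-oldest; upgrades are applied oldest-to-newest.
    return list(reversed(path))


# Hypothetical catalog data mirroring the example scenario.
replaces = {
    "example.v0.1.3": "example.v0.1.2",
    "example.v0.1.2": "example.v0.1.1",
    "example.v0.1.1": None,
}
print(upgrade_path(replaces, "example.v0.1.3", "example.v0.1.1"))
```

The returned list contains one entry per intermediate installation, which matches the one-version-at-a-time behavior described in this section.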

2.1.3.2. Skipping upgrades

OLM’s basic path for upgrades is:

- A CatalogSource is updated with one or more updates to an Operator.

- OLM traverses every version of the Operator until reaching the latest version the CatalogSource contains.

However, sometimes this is not a safe operation to perform. There will be cases where a published version of an Operator should never be installed on a cluster if it has not already been installed, for example because that version introduces a serious vulnerability.

In those cases, OLM must consider two cluster states and provide an update graph that supports both:

- The "bad" intermediate Operator has been seen by the cluster and installed.

- The "bad" intermediate Operator has not yet been installed onto the cluster.

Shipping a new catalog that adds a skipped release ensures that OLM can always get a single unique update, regardless of the cluster state and whether it has seen the bad update yet.

For example:

CSV with skipped release

    apiVersion: operators.coreos.com/v1alpha1
    kind: ClusterServiceVersion
    metadata:
      name: etcdoperator.v0.9.2
      namespace: placeholder
      annotations:
    spec:
      displayName: etcd
      description: Etcd Operator
      replaces: etcdoperator.v0.9.0
      skips:
      - etcdoperator.v0.9.1

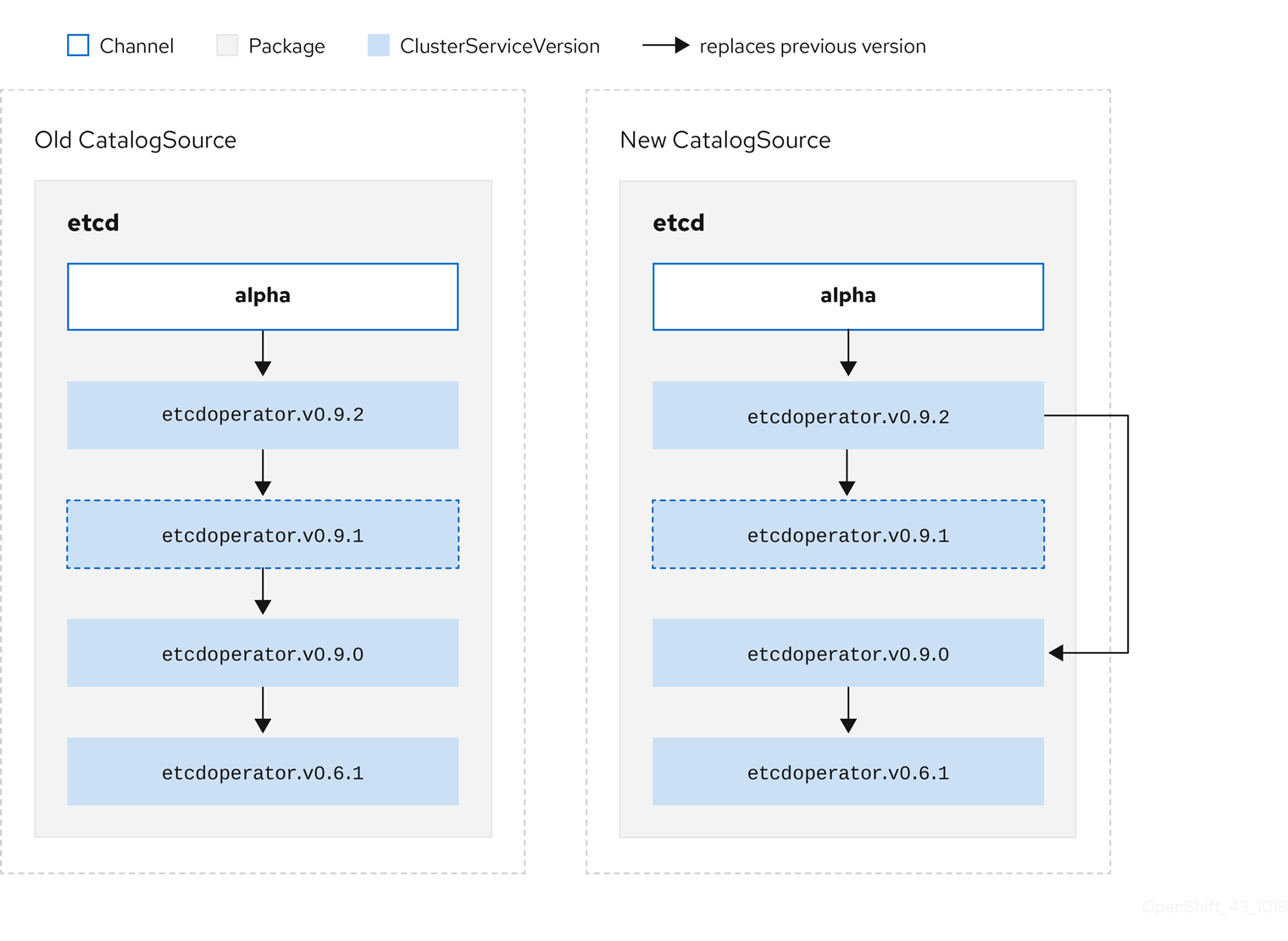

Consider the following example Old CatalogSource and New CatalogSource:

Figure 2.5. Skipping updates

This graph maintains that:

- Any Operator found in Old CatalogSource has a single replacement in New CatalogSource.

- Any Operator found in New CatalogSource has a single replacement in New CatalogSource.

- If the bad update has not yet been installed, it will never be.
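A short Python sketch may help show why a skipped release gives a single update for both cluster states. It is an illustration only: the dictionary model of a catalog and the `next_update` helper are assumptions, while the `replaces` and `skips` keys mirror the CSV fields from the example CSV with a skipped release.

```python
def next_update(csvs, installed):
    """Return the name of the single CSV that updates `installed`, or None.

    `csvs` maps a CSV name to a dict with optional "replaces" and "skips" keys.
    """
    candidates = [
        name
        for name, spec in csvs.items()
        if spec.get("replaces") == installed or installed in spec.get("skips", [])
    ]
    if len(candidates) > 1:
        # A catalog must answer unambiguously with one replacement.
        raise ValueError(f"ambiguous update for {installed}: {candidates}")
    return candidates[0] if candidates else None


# Hypothetical "New CatalogSource": v0.9.1 is no longer published.
new_catalog = {
    "etcdoperator.v0.9.0": {},
    "etcdoperator.v0.9.2": {
        "replaces": "etcdoperator.v0.9.0",
        "skips": ["etcdoperator.v0.9.1"],
    },
}
# Cluster that never installed the bad release:
print(next_update(new_catalog, "etcdoperator.v0.9.0"))
# Cluster that already installed the bad release:
print(next_update(new_catalog, "etcdoperator.v0.9.1"))
```

In both cases the lookup resolves to the same CSV, so a cluster that has not yet installed the skipped release never will.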

2.1.3.3. Replacing multiple Operators

Creating the New CatalogSource as described requires publishing CSVs that replace one Operator, but can skip several. This can be accomplished using the skipRange annotation:

olm.skipRange: <semver_range>

where <semver_range> has the version range format supported by the semver library.

When searching catalogs for updates, if the head of a channel has a skipRange annotation and the currently installed Operator has a version field that falls in the range, OLM updates to the latest entry in the channel.

The order of precedence is:

- Channel head in the source specified by sourceName on the Subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one, in the source specified by sourceName.

- Channel head in another source that is visible to the Subscription, if the other criteria for skipping are met.

- The next Operator that replaces the current one in any source visible to the Subscription.

For example:

CSV with skipRange

    apiVersion: operators.coreos.com/v1alpha1
    kind: ClusterServiceVersion
    metadata:
      name: elasticsearch-operator.v4.1.2
      namespace: <namespace>
      annotations:
        olm.skipRange: '>=4.1.0 <4.1.2'
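To make the range check concrete, the following Python sketch tests whether an installed version falls inside a skipRange such as the one in the example. It is a deliberately simplified stand-in for a real semver library: it assumes plain `X.Y.Z` versions and space-separated comparators only, and the `in_skip_range` helper is a hypothetical name.

```python
import re

# Simplified comparator syntax: optional operator followed by X.Y.Z.
_COMPARATOR = re.compile(r"^(>=|<=|>|<|=)?(\d+)\.(\d+)\.(\d+)$")


def in_skip_range(version, skip_range):
    """Return True if `version` satisfies every comparator in `skip_range`."""
    installed = tuple(int(part) for part in version.split("."))
    tokens = skip_range.split()
    if not tokens:
        raise ValueError("empty range")
    for token in tokens:
        match = _COMPARATOR.match(token)
        if match is None:
            raise ValueError(f"unsupported comparator: {token!r}")
        operator = match.group(1) or "="
        bound = tuple(int(match.group(i)) for i in (2, 3, 4))
        satisfied = {
            ">=": installed >= bound,
            "<=": installed <= bound,
            ">": installed > bound,
            "<": installed < bound,
            "=": installed == bound,
        }[operator]
        if not satisfied:
            return False
    return True


for candidate in ("4.0.9", "4.1.0", "4.1.1", "4.1.2"):
    print(candidate, in_skip_range(candidate, ">=4.1.0 <4.1.2"))
```

Under these assumptions, an installed 4.1.0 or 4.1.1 Operator would be eligible to jump directly to the channel head, while 4.0.9 and 4.1.2 fall outside the range.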

2.1.3.4. Z-stream support

A z-stream, or patch release, must replace all previous z-stream releases for the same minor version. OLM does not care about major, minor, or patch versions; it just needs to build the correct graph in a catalog.

In other words, OLM must be able to take a graph as in Old CatalogSource and, similar to before, generate a graph as in New CatalogSource:

Figure 2.6. Replacing several Operators

This graph maintains that:

- Any Operator found in Old CatalogSource has a single replacement in New CatalogSource.

- Any Operator found in New CatalogSource has a single replacement in New CatalogSource.

- Any z-stream release in Old CatalogSource will update to the latest z-stream release in New CatalogSource.

- Unavailable releases can be considered "virtual" graph nodes; their content does not need to exist, the registry just needs to respond as if the graph looks like this.

2.1.4. Operator Lifecycle Manager architecture

The Operator Lifecycle Manager is composed of two Operators: the OLM Operator and the Catalog Operator.

Each of these Operators is responsible for managing the Custom Resource Definitions (CRDs) that are the basis for the OLM framework:

Table 2.1. CRDs managed by OLM and Catalog Operators

| Resource | Short name | Owner | Description |
|---|---|---|---|
| ClusterServiceVersion | csv | OLM | Application metadata: name, version, icon, required resources, installation, etc. |
| InstallPlan | ip | Catalog | Calculated list of resources to be created in order to automatically install or upgrade a CSV. |
| CatalogSource | catsrc | Catalog | A repository of CSVs, CRDs, and packages that define an application. |
| Subscription | sub | Catalog | Used to keep CSVs up to date by tracking a channel in a package. |
| OperatorGroup | og | OLM | Used to group multiple namespaces and prepare them for use by an Operator. |

Each of these Operators is also responsible for creating resources:

Table 2.2. Resources created by OLM and Catalog Operators

| Resource | Owner |
|---|---|
| Deployments | OLM |
| ServiceAccounts | OLM |
| (Cluster)Roles | OLM |
| (Cluster)RoleBindings | OLM |
| Custom Resource Definitions (CRDs) | Catalog |
| ClusterServiceVersions (CSVs) | Catalog |

2.1.4.1. OLM Operator

The OLM Operator is responsible for deploying applications defined by CSV resources after the required resources specified in the CSV are present in the cluster.

The OLM Operator is not concerned with the creation of the required resources; users can choose to manually create these resources using the CLI, or users can choose to create these resources using the Catalog Operator. This separation of concern allows users incremental buy-in in terms of how much of the OLM framework they choose to leverage for their application.

While the OLM Operator is often configured to watch all namespaces, it can also be operated alongside other OLM Operators so long as they all manage separate namespaces.

OLM Operator workflow

Watches for ClusterServiceVersions (CSVs) in a namespace and checks that requirements are met. If so, runs the install strategy for the CSV.

Note: A CSV must be an active member of an OperatorGroup in order for the install strategy to be run.

2.1.4.2. Catalog Operator

The Catalog Operator is responsible for resolving and installing CSVs and the required resources they specify. It is also responsible for watching CatalogSources for updates to packages in channels and upgrading them (optionally automatically) to the latest available versions.

A user who wishes to track a package in a channel creates a Subscription resource configuring the desired package, channel, and the CatalogSource from which to pull updates. When updates are found, an appropriate InstallPlan is written into the namespace on behalf of the user.

Users can also create an InstallPlan resource directly, containing the names of the desired CSV and an approval strategy, and the Catalog Operator creates an execution plan for the creation of all of the required resources. After it is approved, the Catalog Operator creates all of the resources in an InstallPlan; this then independently satisfies the OLM Operator, which proceeds to install the CSVs.

Catalog Operator workflow

- Has a cache of CRDs and CSVs, indexed by name.
- Watches for unresolved InstallPlans created by a user:
  - Finds the CSV matching the name requested and adds it as a resolved resource.
  - For each managed or required CRD, adds it as a resolved resource.
  - For each required CRD, finds the CSV that manages it.
- Watches for resolved InstallPlans and creates all of the discovered resources for it (if approved by a user or automatically).
- Watches for CatalogSources and Subscriptions and creates InstallPlans based on them.

2.1.4.3. Catalog Registry

The Catalog Registry stores CSVs and CRDs for creation in a cluster and stores metadata about packages and channels.

A package manifest is an entry in the Catalog Registry that associates a package identity with sets of CSVs. Within a package, channels point to a particular CSV. Because CSVs explicitly reference the CSV that they replace, a package manifest provides the Catalog Operator all of the information that is required to update a CSV to the latest version in a channel, stepping through each intermediate version.

2.1.5. Exposed metrics

The Operator Lifecycle Manager (OLM) exposes certain OLM-specific resources for use by the Prometheus-based OpenShift Container Platform cluster monitoring stack.

Table 2.3. Metrics exposed by OLM

| Name | Description |
|---|---|
| catalog_source_count | Number of CatalogSources. |
| csv_abnormal | When reconciling a ClusterServiceVersion (CSV), present whenever a CSV version is in any state other than Succeeded. |
| csv_count | Number of CSVs successfully registered. |
| csv_succeeded | When reconciling a CSV, represents whether a CSV version is in a Succeeded state. |
| csv_upgrade_count | Monotonic count of CSV upgrades. |
| install_plan_count | Number of InstallPlans. |
| subscription_count | Number of Subscriptions. |
| subscription_sync_total | Monotonic count of Subscription syncs. Includes the channel, installed CSV, and Subscription name labels. |

2.2. Operator Lifecycle Manager dependency resolution

This guide outlines dependency resolution and Custom Resource Definition (CRD) upgrade lifecycles within the Operator Lifecycle Manager (OLM) in OpenShift Container Platform.

2.2.1. About dependency resolution

OLM manages the dependency resolution and upgrade lifecycle of running Operators. In many ways, the problems OLM faces are similar to those faced by operating system package managers such as yum and rpm.

However, there is one constraint that similar systems do not generally have that OLM does: because Operators are always running, OLM attempts to ensure that you are never left with a set of Operators that do not work with each other.

This means that OLM must never:

- install a set of Operators that require APIs that cannot be provided, or

- update an Operator in a way that breaks another that depends upon it.

2.2.2. Custom Resource Definition (CRD) upgrades

OLM upgrades a Custom Resource Definition (CRD) immediately if it is owned by a single ClusterServiceVersion (CSV). If a CRD is owned by multiple CSVs, then the CRD is upgraded when it has satisfied all of the following backward compatible conditions:

- All existing serving versions in the current CRD are present in the new CRD.

- All existing instances, or Custom Resources (CRs), that are associated with the serving versions of the CRD are valid when validated against the new CRD’s validation schema.
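The two conditions can be expressed as a small Python check. This is a sketch under assumptions, not OLM's code: CRDs are plain dictionaries shaped like their YAML manifests, and the `validate` callback (hypothetical) stands in for validation of a Custom Resource against the new CRD's schema.

```python
def served_versions(crd):
    """Names of the versions that the CRD currently serves."""
    return {v["name"] for v in crd["spec"]["versions"] if v.get("served")}


def is_safe_crd_upgrade(old_crd, new_crd, existing_crs, validate):
    """Check both backward-compatibility conditions for a shared CRD."""
    new_names = {v["name"] for v in new_crd["spec"]["versions"]}
    # Condition 1: every serving version of the current CRD is still present.
    if not served_versions(old_crd) <= new_names:
        return False
    # Condition 2: every existing CR is valid against the new schema.
    return all(validate(new_crd, cr) for cr in existing_crs)


old = {"spec": {"versions": [{"name": "v1alpha1", "served": True, "storage": True}]}}
adds_version = {"spec": {"versions": [
    {"name": "v1alpha1", "served": True, "storage": False},
    {"name": "v1beta1", "served": True, "storage": True},
]}}
drops_version = {"spec": {"versions": [
    {"name": "v1beta1", "served": True, "storage": True},
]}}

# Placeholder validator that accepts every CR; a real check would apply the schema.
accept_all = lambda crd, cr: True

print(is_safe_crd_upgrade(old, adds_version, [{}], accept_all))
print(is_safe_crd_upgrade(old, drops_version, [{}], accept_all))
```

Adding a version passes the check, while dropping a version that is still being served fails the first condition.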

2.2.2.1. Adding a new CRD version

Procedure

To add a new version of a CRD:

- Add a new entry in the CRD resource under the versions section.

  For example, if the current CRD has one version v1alpha1 and you want to add a new version v1beta1 and mark it as the new storage version:

      versions:
      - name: v1alpha1
        served: true
        storage: false
      - name: v1beta1  # Add a new entry for v1beta1.
        served: true
        storage: true

- Ensure the referencing version of the CRD in your CSV’s owned section is updated if the CSV intends to use the new version:

      customresourcedefinitions:
        owned:
        - name: cluster.example.com
          version: v1beta1  # Update the version.
          kind: cluster
          displayName: Cluster

- Push the updated CRD and CSV to your bundle.

2.2.2.2. Deprecating or removing a CRD version

OLM does not allow a serving version of a CRD to be removed right away. Instead, a deprecated version of the CRD must be first disabled by setting the served field in the CRD to false. Then, the non-serving version can be removed on the subsequent CRD upgrade.

Procedure

To deprecate and remove a specific version of a CRD:

- Mark the deprecated version as non-serving to indicate this version is no longer in use and may be removed in a subsequent upgrade. For example:

      versions:
      - name: v1alpha1
        served: false  # Set to false.
        storage: true

- Switch the storage version to a serving version if the version to be deprecated is currently the storage version. For example:

      versions:
      - name: v1alpha1
        served: false
        storage: false
      - name: v1beta1
        served: true
        storage: true

  Note: In order to remove a specific version that is or was the storage version from a CRD, that version must be removed from the storedVersion in the CRD’s status. OLM will attempt to do this for you if it detects a stored version no longer exists in the new CRD.

- Upgrade the CRD with the above changes.

- In subsequent upgrade cycles, the non-serving version can be removed completely from the CRD. For example:

      versions:
      - name: v1beta1
        served: true
        storage: true

- Ensure the referencing version of the CRD in your CSV’s owned section is updated accordingly if that version is removed from the CRD.

2.2.3. Example dependency resolution scenarios

In the following examples, a provider is an Operator which "owns" a CRD or APIService.

Example: Deprecating dependent APIs

A and B are APIs (e.g., CRDs):

- A’s provider depends on B.

- B’s provider has a Subscription.

- B’s provider updates to provide C but deprecates B.

This results in:

- B no longer has a provider.

- A no longer works.

This is a case OLM prevents with its upgrade strategy.

Example: Version deadlock

A and B are APIs:

- A’s provider requires B.

- B’s provider requires A.

- A’s provider updates to (provide A2, require B2) and deprecate A.

- B’s provider updates to (provide B2, require A2) and deprecate B.

If OLM attempts to update A without simultaneously updating B, or vice-versa, it is unable to progress to new versions of the Operators, even though a new compatible set can be found.

This is another case OLM prevents with its upgrade strategy.
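Both scenarios come down to checking whether every required API in a candidate set of Operators has a provider. The Python sketch below illustrates that check; the dictionary model and the `unsatisfied` helper are hypothetical, and the sketch does not reflect how OLM's resolver is actually implemented.

```python
def unsatisfied(operators):
    """Map each Operator name to the required APIs that no Operator provides.

    `operators` maps a name to {"provides": set, "requires": set}.
    An empty result means the set of Operators works together.
    """
    provided = set().union(*(op["provides"] for op in operators.values()))
    missing = {}
    for name, op in operators.items():
        gap = op["requires"] - provided
        if gap:
            missing[name] = gap
    return missing


# Version deadlock example: each provider requires the other's API.
current = {
    "a-provider": {"provides": {"A"}, "requires": {"B"}},
    "b-provider": {"provides": {"B"}, "requires": {"A"}},
}
only_a_updated = {
    "a-provider": {"provides": {"A2"}, "requires": {"B2"}},
    "b-provider": {"provides": {"B"}, "requires": {"A"}},
}
both_updated = {
    "a-provider": {"provides": {"A2"}, "requires": {"B2"}},
    "b-provider": {"provides": {"B2"}, "requires": {"A2"}},
}

print(unsatisfied(current))         # consistent starting point
print(unsatisfied(only_a_updated))  # both Operators are broken
print(unsatisfied(both_updated))    # consistent again when updated together
```

Updating only one provider leaves both Operators with a missing API, whereas evaluating the two updates as a single step yields a working set.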

2.3. OperatorGroups

This guide outlines the use of OperatorGroups with the Operator Lifecycle Manager (OLM) in OpenShift Container Platform.

2.3.1. About OperatorGroups

An OperatorGroup is an OLM resource that provides multitenant configuration to OLM-installed Operators. An OperatorGroup selects target namespaces in which to generate required RBAC access for its member Operators.

The set of target namespaces is provided by a comma-delimited string stored in the ClusterServiceVersion’s (CSV) olm.targetNamespaces annotation. This annotation is applied to member Operators’ CSV instances and is projected into their deployments.

2.3.2. OperatorGroup membership

An Operator is considered a member of an OperatorGroup if the following conditions are true:

- The Operator’s CSV exists in the same namespace as the OperatorGroup.

- The Operator’s CSV’s InstallModes support the set of namespaces targeted by the OperatorGroup.

An InstallMode consists of an InstallModeType field and a boolean Supported field. A CSV’s spec can contain a set of InstallModes of four distinct InstallModeTypes:

Table 2.4. InstallModes and supported OperatorGroups

| InstallModeType | Description |
|---|---|
| OwnNamespace | The Operator can be a member of an OperatorGroup that selects its own namespace. |
| SingleNamespace | The Operator can be a member of an OperatorGroup that selects one namespace. |
| MultiNamespace | The Operator can be a member of an OperatorGroup that selects more than one namespace. |
| AllNamespaces | The Operator can be a member of an OperatorGroup that selects all namespaces (target namespace set is the empty string ""). |

If a CSV’s spec omits an entry of InstallModeType, then that type is considered unsupported unless support can be inferred by an existing entry that implicitly supports it.
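As an illustration of the membership rule, the Python sketch below maps a set of target namespaces to the InstallModeType it needs. It assumes the InstallModeType names OwnNamespace, SingleNamespace, MultiNamespace, and AllNamespaces, follows only the descriptions in Table 2.4, and does not model inferred support; the `supports_targets` helper is hypothetical.

```python
def supports_targets(install_modes, operator_namespace, target_namespaces):
    """Return True if the CSV's InstallModes support the OperatorGroup's targets.

    `install_modes` is a list of {"type": str, "supported": bool} entries.
    """
    supported = {m["type"] for m in install_modes if m.get("supported")}
    targets = set(target_namespaces)
    if not targets:
        raise ValueError("target namespace set must not be empty")
    if targets == {""}:
        # The empty string stands for all namespaces.
        return "AllNamespaces" in supported
    if len(targets) > 1:
        return "MultiNamespace" in supported
    if targets == {operator_namespace}:
        return "OwnNamespace" in supported
    return "SingleNamespace" in supported


modes = [
    {"type": "OwnNamespace", "supported": True},
    {"type": "SingleNamespace", "supported": True},
    {"type": "MultiNamespace", "supported": False},
    {"type": "AllNamespaces", "supported": True},
]
print(supports_targets(modes, "ops", ["ops"]))
print(supports_targets(modes, "ops", ["team-a", "team-b"]))
print(supports_targets(modes, "ops", [""]))
```

With these example modes, the Operator can join a group that targets its own namespace or all namespaces, but not one that targets two namespaces.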

2.3.3. Target namespace selection

You can explicitly name the target namespace for an OperatorGroup using the spec.targetNamespaces parameter:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: my-group
      namespace: my-namespace
    spec:
      targetNamespaces:
      - my-namespace

You can alternatively specify a namespace using a label selector with the spec.selector parameter:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: my-group
      namespace: my-namespace
    spec:
      selector:
        cool.io/prod: "true"

Listing multiple namespaces via spec.targetNamespaces or use of a label selector via spec.selector is not recommended, as the support for more than one target namespace in an OperatorGroup will likely be removed in a future release.

If both spec.targetNamespaces and spec.selector are defined, spec.selector is ignored. Alternatively, you can omit both spec.selector and spec.targetNamespaces to specify a global OperatorGroup, which selects all namespaces:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: my-group
      namespace: my-namespace

The resolved set of selected namespaces is shown in an OperatorGroup’s status.namespaces parameter. A global OperatorGroup’s status.namespaces contains the empty string (""), which signals to a consuming Operator that it should watch all namespaces.
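The precedence between the two parameters can be summarized in a short Python sketch. It is illustrative only: the `resolve_target_namespaces` helper and the label data are hypothetical, and the selector is assumed to use a matchLabels mapping.

```python
def resolve_target_namespaces(spec, cluster_namespaces):
    """Resolve an OperatorGroup spec to the list shown in status.namespaces.

    `cluster_namespaces` maps each namespace name to its labels.
    """
    explicit = spec.get("targetNamespaces")
    if explicit:
        # spec.targetNamespaces wins; spec.selector is ignored.
        return sorted(explicit)
    selector = spec.get("selector")
    if selector:
        wanted = selector.get("matchLabels", {})
        return sorted(
            name
            for name, labels in cluster_namespaces.items()
            if all(labels.get(key) == value for key, value in wanted.items())
        )
    # Neither parameter set: a global OperatorGroup selects all namespaces.
    return [""]


namespaces = {
    "my-namespace": {"cool.io/prod": "true"},
    "staging": {"cool.io/prod": "false"},
}
both = {
    "targetNamespaces": ["staging"],
    "selector": {"matchLabels": {"cool.io/prod": "true"}},
}
print(resolve_target_namespaces(both, namespaces))
print(resolve_target_namespaces({"selector": both["selector"]}, namespaces))
print(resolve_target_namespaces({}, namespaces))
```

The three calls show an explicit list overriding a selector, a selector used on its own, and the global case that resolves to the empty string.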

2.3.4. OperatorGroup CSV annotations

Member CSVs of an OperatorGroup have the following annotations:

| Annotation | Description |
|---|---|
| olm.operatorGroup | Contains the name of the OperatorGroup. |
| | Contains the namespace of the OperatorGroup. |
| olm.targetNamespaces | Contains a comma-delimited string that lists the OperatorGroup’s target namespace selection. |

All annotations except olm.targetNamespaces are included with copied CSVs. Omitting the olm.targetNamespaces annotation on copied CSVs prevents the duplication of target namespaces between tenants.

2.3.5. Provided APIs annotation

Information about which GroupVersionKinds (GVKs) are provided by an OperatorGroup is shown in an olm.providedAPIs annotation. The annotation’s value is a string consisting of <kind>.<version>.<group> delimited with commas. The GVKs of CRDs and APIServices provided by all active member CSVs of an OperatorGroup are included.

Review the following example of an OperatorGroup with a single active member CSV that provides the PackageManifest resource:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      annotations:
        olm.providedAPIs: PackageManifest.v1alpha1.packages.apps.redhat.com
      name: olm-operators
      namespace: local
      ...
    spec:
      selector: {}
      serviceAccount:
        metadata:
          creationTimestamp: null
      targetNamespaces:
      - local
    status:
      lastUpdated: 2019-02-19T16:18:28Z
      namespaces:
      - local

2.3.6. Role-based access control

When an OperatorGroup is created, three ClusterRoles are generated. Each contains a single AggregationRule with a ClusterRoleSelector set to match a label, as shown below:

| ClusterRole | Label to match |

|---|---|

|

|

|

|

|

|

|

|

|

The following RBAC resources are generated when a CSV becomes an active member of an OperatorGroup, as long as the CSV is watching all namespaces with the AllNamespaces InstallMode and is not in a failed state with reason InterOperatorGroupOwnerConflict.

- ClusterRoles for each API resource from a CRD

- ClusterRoles for each API resource from an APIService

- Additional Roles and RoleBindings

Table 2.5. ClusterRoles generated for each API resource from a CRD

| ClusterRole | Settings |

|---|---|

|

|

Verbs on

Aggregation labels:

|

|

|

Verbs on

Aggregation labels:

|

|

|

Verbs on

Aggregation labels:

|

|

|

Verbs on

Aggregation labels:

|

Table 2.6. ClusterRoles generated for each API resource from an APIService

| ClusterRole | Settings |

|---|---|

|

|

Verbs on

Aggregation labels:

|

|

|

Verbs on

Aggregation labels:

|

|

|

Verbs on

Aggregation labels:

|

Additional Roles and RoleBindings

- If the CSV defines exactly one target namespace that contains *, then a ClusterRole and corresponding ClusterRoleBinding are generated for each permission defined in the CSV’s permissions field. All resources generated are given the olm.owner: <csv_name> and olm.owner.namespace: <csv_namespace> labels.

- If the CSV does not define exactly one target namespace that contains *, then all Roles and RoleBindings in the Operator namespace with the olm.owner: <csv_name> and olm.owner.namespace: <csv_namespace> labels are copied into the target namespace.

2.3.7. Copied CSVs

OLM creates copies of all active member CSVs of an OperatorGroup in each of that OperatorGroup’s target namespaces. The purpose of a copied CSV is to tell users of a target namespace that a specific Operator is configured to watch resources created there. Copied CSVs have a status reason Copied and are updated to match the status of their source CSV. The olm.targetNamespaces annotation is stripped from copied CSVs before they are created on the cluster. Omitting the target namespace selection avoids the duplication of target namespaces between tenants. Copied CSVs are deleted when their source CSV no longer exists or the OperatorGroup that their source CSV belongs to no longer targets the copied CSV’s namespace.
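The copy step can be pictured with a few lines of Python. This is only a sketch of the behavior described above, using plain dictionaries in place of CSV objects; the `make_copied_csv` helper and the example values are hypothetical.

```python
import copy


def make_copied_csv(source_csv, target_namespace):
    """Build the copy of a member CSV that is placed in a target namespace."""
    copied = copy.deepcopy(source_csv)
    copied["metadata"]["namespace"] = target_namespace
    # The target namespace selection is stripped so tenants do not see it.
    copied["metadata"].get("annotations", {}).pop("olm.targetNamespaces", None)
    # Copied CSVs mirror the source status but carry the reason "Copied".
    copied.setdefault("status", {})["reason"] = "Copied"
    return copied


source = {
    "metadata": {
        "name": "example.v0.1.3",
        "namespace": "operators",
        "annotations": {"olm.targetNamespaces": "team-a,team-b"},
    },
    "status": {"phase": "Succeeded"},
}
copied = make_copied_csv(source, "team-a")
print(copied["metadata"])
print(copied["status"])
```

The source CSV is left untouched, and the copy keeps the source status apart from the Copied reason.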

2.3.8. Static OperatorGroups

An OperatorGroup is static if its spec.staticProvidedAPIs field is set to true. As a result, OLM does not modify the OperatorGroup’s olm.providedAPIs annotation, which means that it can be set in advance. This is useful when a user wants to use an OperatorGroup to prevent resource contention in a set of namespaces but does not have active member CSVs that provide the APIs for those resources.

Below is an example of an OperatorGroup that protects Prometheus resources in all namespaces with the something.cool.io/cluster-monitoring: "true" label:

    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: cluster-monitoring
      namespace: cluster-monitoring
      annotations:
        olm.providedAPIs: Alertmanager.v1.monitoring.coreos.com,Prometheus.v1.monitoring.coreos.com,PrometheusRule.v1.monitoring.coreos.com,ServiceMonitor.v1.monitoring.coreos.com
    spec:
      staticProvidedAPIs: true
      selector:
        matchLabels:
          something.cool.io/cluster-monitoring: "true"

2.3.9. OperatorGroup intersection

Two OperatorGroups are said to have intersecting provided APIs if the intersection of their target namespace sets is not an empty set and the intersection of their provided API sets, defined by olm.providedAPIs annotations, is not an empty set.

A potential issue is that OperatorGroups with intersecting provided APIs can compete for the same resources in the set of intersecting namespaces.

When checking intersection rules, an OperatorGroup’s namespace is always included as part of its selected target namespaces.
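The definition above can be sketched as a small set computation. The following Python model is only an illustration, not OLM's implementation: the GroupView class and its field names are hypothetical, and it assumes that each OperatorGroup lists its target namespaces explicitly (an OperatorGroup that targets all namespaces is not modeled).

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class GroupView:
    """Hypothetical, simplified view of an OperatorGroup."""
    namespace: str                     # namespace the OperatorGroup lives in
    target_namespaces: FrozenSet[str]  # namespaces selected by the group
    provided_apis: FrozenSet[str]      # parsed from the olm.providedAPIs annotation

    def effective_namespaces(self) -> FrozenSet[str]:
        # The group's own namespace always counts as a target for this check.
        return self.target_namespaces | {self.namespace}


def intersecting_provided_apis(a: GroupView, b: GroupView) -> FrozenSet[str]:
    """Return the APIs both groups provide in shared namespaces (empty if none)."""
    if not (a.effective_namespaces() & b.effective_namespaces()):
        return frozenset()
    return a.provided_apis & b.provided_apis
```

Two groups compete for resources only when this function returns a non-empty set, that is, when both the namespace sets and the provided API sets overlap.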

Rules for intersection

Each time an active member CSV synchronizes, OLM queries the cluster for the set of intersecting provided APIs between the CSV’s OperatorGroup and all others. OLM then checks if that set is an empty set:

- If true and the CSV’s provided APIs are a subset of the OperatorGroup’s:

  - Continue transitioning.

- If true and the CSV’s provided APIs are not a subset of the OperatorGroup’s:

  - If the OperatorGroup is static:

    - Clean up any deployments that belong to the CSV.

    - Transition the CSV to a failed state with status reason CannotModifyStaticOperatorGroupProvidedAPIs.

  - If the OperatorGroup is not static:

    - Replace the OperatorGroup’s olm.providedAPIs annotation with the union of itself and the CSV’s provided APIs.

- If false and the CSV’s provided APIs are not a subset of the OperatorGroup’s:

  - Clean up any deployments that belong to the CSV.

  - Transition the CSV to a failed state with status reason InterOperatorGroupOwnerConflict.

- If false and the CSV’s provided APIs are a subset of the OperatorGroup’s:

  - If the OperatorGroup is static:

    - Clean up any deployments that belong to the CSV.

    - Transition the CSV to a failed state with status reason CannotModifyStaticOperatorGroupProvidedAPIs.

  - If the OperatorGroup is not static:

    - Replace the OperatorGroup’s olm.providedAPIs annotation with the difference between itself and the CSV’s provided APIs.

Failure states caused by OperatorGroups are non-terminal.
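The four cases in the rules for intersection can be read as a decision table. The sketch below restates them in Python under stated assumptions: the function name, the Outcome enum, and the use of frozensets of API names are hypothetical modeling choices and do not reflect how OLM is implemented. Cleaning up deployments is not modeled; the function only reports the outcome and the resulting olm.providedAPIs set.

```python
from enum import Enum
from typing import FrozenSet, Tuple

APISet = FrozenSet[str]


class Outcome(Enum):
    CONTINUE = "continue transitioning"
    ANNOTATION_REPLACED = "olm.providedAPIs replaced"
    FAILED_STATIC = "CannotModifyStaticOperatorGroupProvidedAPIs"
    FAILED_CONFLICT = "InterOperatorGroupOwnerConflict"


def sync_csv(csv_apis: APISet, group_apis: APISet,
             intersecting_apis: APISet, static: bool) -> Tuple[Outcome, APISet]:
    """Return the outcome and the resulting olm.providedAPIs set for one CSV sync."""
    no_intersection = not intersecting_apis  # the "is the set empty?" check
    is_subset = csv_apis <= group_apis

    if no_intersection and is_subset:
        return Outcome.CONTINUE, group_apis
    if no_intersection:
        # The CSV provides APIs that the group does not list yet.
        if static:
            return Outcome.FAILED_STATIC, group_apis
        return Outcome.ANNOTATION_REPLACED, group_apis | csv_apis
    if not is_subset:
        # Another group already provides these APIs in a shared namespace.
        return Outcome.FAILED_CONFLICT, group_apis
    # An intersection exists and the CSV's APIs are already listed.
    if static:
        return Outcome.FAILED_STATIC, group_apis
    return Outcome.ANNOTATION_REPLACED, group_apis - csv_apis
```

Because these failure states are non-terminal, a later synchronization with different inputs (for example, after a conflicting CSV is deleted) can return a different outcome for the same CSV.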

The following actions are performed each time an OperatorGroup synchronizes:

- The set of provided APIs from active member CSVs is calculated from the cluster. Note that copied CSVs are ignored.

- The cluster set is compared to olm.providedAPIs, and if olm.providedAPIs contains any extra APIs, then those APIs are pruned.

- All CSVs that provide the same APIs across all namespaces are requeued. This notifies conflicting CSVs in intersecting groups that their conflict has possibly been resolved, either through resizing or through deletion of the conflicting CSV.
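The first two synchronization actions can be sketched as follows. This is a minimal model, assuming a non-static OperatorGroup and a hypothetical dictionary shape for CSVs (a reason key and a provided_apis list); requeueing CSVs is not modeled.

```python
from typing import Dict, Iterable, List, Set


def cluster_provided_apis(csvs: Iterable[Dict]) -> Set[str]:
    """Collect the APIs provided by active member CSVs, ignoring copied CSVs."""
    apis: Set[str] = set()
    for csv in csvs:
        if csv.get("reason") == "Copied":
            continue  # copied CSVs do not contribute provided APIs
        apis.update(csv["provided_apis"])
    return apis


def prune_annotation(annotation: str, cluster_apis: Set[str]) -> str:
    """Drop APIs from an olm.providedAPIs value that no active CSV provides."""
    declared: List[str] = [api for api in annotation.split(",") if api]
    return ",".join(api for api in declared if api in cluster_apis)
```

The order of the remaining entries is preserved here only to keep the result easy to compare by eye; the source does not state how OLM orders the annotation.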

2.3.10. Troubleshooting OperatorGroups

Membership

- If more than one OperatorGroup exists in a single namespace, any CSV created in that namespace will transition to a failure state with the reason TooManyOperatorGroups. CSVs in a failed state for this reason will transition to pending once the number of OperatorGroups in their namespaces reaches one.

- If a CSV’s InstallModes do not support the target namespace selection of the OperatorGroup in its namespace, the CSV will transition to a failure state with the reason UnsupportedOperatorGroup. CSVs in a failed state for this reason will transition to pending once either the OperatorGroup’s target namespace selection changes to a supported configuration, or the CSV’s InstallModes are modified to support the OperatorGroup’s target namespace selection.

Chapter 3. Understanding the OperatorHub

This guide outlines the architecture of the OperatorHub.

3.1. Overview of the OperatorHub

The OperatorHub is available via the OpenShift Container Platform web console and is the interface that cluster administrators use to discover and install Operators. With one click, an Operator can be pulled from its off-cluster source, installed and subscribed on the cluster, and made ready for engineering teams to self-service manage the product across deployment environments using the Operator Lifecycle Manager (OLM).

Cluster administrators can choose from OperatorSources grouped into the following categories:

| Category | Description |
|---|---|
| Red Hat Operators | Red Hat products packaged and shipped by Red Hat. Supported by Red Hat. |
| Certified Operators | Products from leading independent software vendors (ISVs). Red Hat partners with ISVs to package and ship. Supported by the ISV. |
| Community Operators | Optionally-visible software maintained by relevant representatives in the operator-framework/community-operators GitHub repository. No official support. |
| Custom Operators | Operators you add to the cluster yourself. If you have not added any Custom Operators, the Custom category does not appear in the web console on your OperatorHub. |

OperatorHub content automatically refreshes every 60 minutes.

Operators on the OperatorHub are packaged to run on OLM. This includes a YAML file called a ClusterServiceVersion (CSV) containing all of the CRDs, RBAC rules, Deployments, and container images required to install and securely run the Operator. It also contains user-visible information like a description of its features and supported Kubernetes versions.

The Operator SDK can be used to assist developers packaging their Operators for use on OLM and the OperatorHub. If you have a commercial application that you want to make accessible to your customers, get it included using the certification workflow provided by Red Hat’s ISV partner portal at connect.redhat.com.

3.2. OperatorHub architecture

The OperatorHub UI component is driven by the Marketplace Operator by default on OpenShift Container Platform in the openshift-marketplace namespace.

The Marketplace Operator manages OperatorHub and OperatorSource Custom Resource Definitions (CRDs).

Although some OperatorSource information is exposed through the OperatorHub user interface, it is only used directly by those who are creating their own Operators.

While OperatorHub no longer uses CatalogSourceConfig resources, they are still supported in OpenShift Container Platform.

3.2.1. OperatorHub CRD

You can use the OperatorHub CRD to change the state of the default OperatorSources provided with OperatorHub on the cluster between enabled and disabled. This capability is useful when configuring OpenShift Container Platform in restricted network environments.

Example OperatorHub Custom Resource

apiVersion: config.openshift.io/v1
kind: OperatorHub
metadata:
  name: cluster
spec:
  disableAllDefaultSources: true
  sources: [
    {
      name: "community-operators",
      disabled: false
    }
  ]

3.2.2. OperatorSource CRD

For each Operator, the OperatorSource CRD is used to define the external data store used to store Operator bundles.

Example OperatorSource Custom Resource

apiVersion: operators.coreos.com/v1
kind: OperatorSource
metadata:
  name: community-operators
  namespace: marketplace
spec:
  type: appregistry  # (1)
  endpoint: https://quay.io/cnr  # (2)
  registryNamespace: community-operators  # (3)
  displayName: "Community Operators"  # (4)
  publisher: "Red Hat"  # (5)

- (1) To identify the data store as an application registry, type is set to appregistry.

- (2) Currently, Quay is the external data store used by the OperatorHub, so the endpoint is set to https://quay.io/cnr for the Quay.io appregistry.

- (3) For a Community Operator, registryNamespace is set to community-operators.

- (4) Optionally, set displayName to a name that appears for the Operator in the OperatorHub UI.

- (5) Optionally, set publisher to the person or organization publishing the Operator that appears in the OperatorHub UI.

Chapter 4. Adding Operators to a cluster

This guide walks cluster administrators through installing Operators to an OpenShift Container Platform cluster and subscribing Operators to namespaces.

4.1. Installing Operators from the OperatorHub

As a cluster administrator, you can install an Operator from the OperatorHub using the OpenShift Container Platform web console or the CLI. You can then subscribe the Operator to one or more namespaces to make it available for developers on your cluster.

During installation, you must determine the following initial settings for the Operator:

- Installation Mode

- Choose All namespaces on the cluster (default) to have the Operator installed on all namespaces or choose individual namespaces, if available, to only install the Operator on selected namespaces. This example chooses All namespaces… to make the Operator available to all users and projects.

- Update Channel

- If an Operator is available through multiple channels, you can choose which channel you want to subscribe to. For example, to deploy from the stable channel, if available, select it from the list.

- Approval Strategy

- You can choose Automatic or Manual updates. If you choose Automatic updates for an installed Operator, when a new version of that Operator is available, the Operator Lifecycle Manager (OLM) automatically upgrades the running instance of your Operator without human intervention. If you select Manual updates, when a newer version of an Operator is available, the OLM creates an update request. As a cluster administrator, you must then manually approve that update request to have the Operator updated to the new version.

4.1.1. Installing from the OperatorHub using the web console

This procedure uses the Couchbase Operator as an example to install and subscribe to an Operator from the OperatorHub using the OpenShift Container Platform web console.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

Procedure

- Navigate in the web console to the Operators → OperatorHub page.

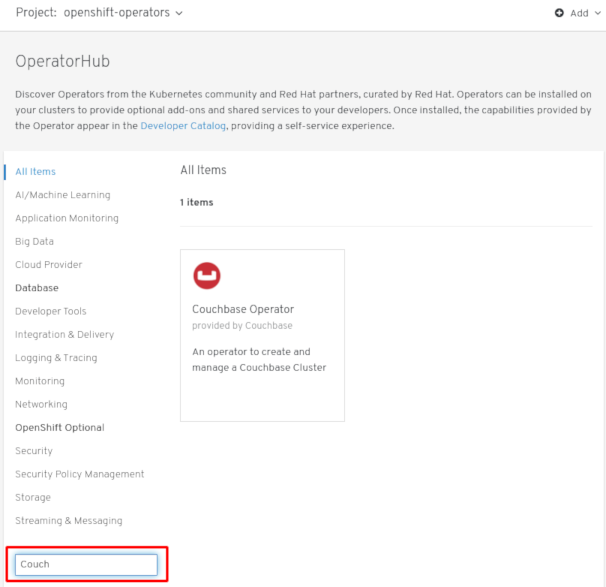

Scroll or type a keyword into the Filter by keyword box (in this case,

Couchbase) to find the Operator you want.Figure 4.1. Filter Operators by keyword

- Select the Operator. For a Community Operator, you are warned that Red Hat does not certify those Operators. You must acknowledge that warning before continuing. Information about the Operator is displayed.

- Read the information about the Operator and click Install.

- On the Create Operator Subscription page:

  - Select one of the following:

    - All namespaces on the cluster (default) installs the Operator in the default openshift-operators namespace to watch and be made available to all namespaces in the cluster. This option is not always available.

    - A specific namespace on the cluster allows you to choose a specific, single namespace in which to install the Operator. The Operator will only watch and be made available for use in this single namespace.

  - Select an Update Channel (if more than one is available).

  - Select Automatic or Manual approval strategy, as described earlier.

Click Subscribe to make the Operator available to the selected namespaces on this OpenShift Container Platform cluster.

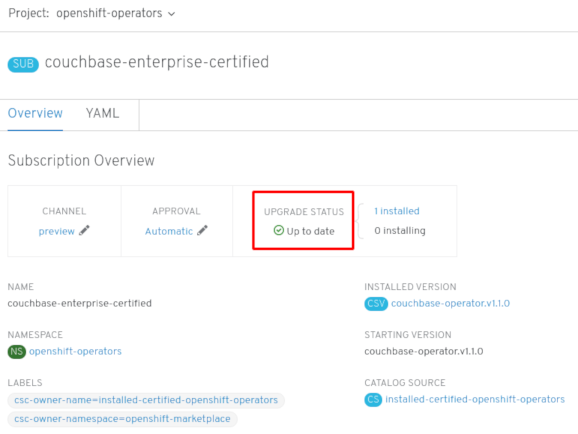

If you selected a Manual approval strategy, the Subscription’s upgrade status will remain Upgrading until you review and approve its Install Plan.

Figure 4.2. Manually approving from the Install Plan page

After approving on the Install Plan page, the Subscription upgrade status moves to Up to date.

If you selected an Automatic approval strategy, the upgrade status should resolve to Up to date without intervention.

Figure 4.3. Subscription upgrade status Up to date

After the Subscription’s upgrade status is Up to date, select Operators → Installed Operators to verify that the Couchbase ClusterServiceVersion (CSV) eventually shows up and its Status ultimately resolves to InstallSucceeded in the relevant namespace.

Note: For the All namespaces… Installation Mode, the status resolves to InstallSucceeded in the openshift-operators namespace, but the status is Copied if you check in other namespaces.

If it does not:

- Check the logs in any pods in the openshift-operators project (or other relevant namespace if A specific namespace… Installation Mode was selected) on the Workloads → Pods page that are reporting issues to troubleshoot further.

4.1.2. Installing from the OperatorHub using the CLI

Instead of using the OpenShift Container Platform web console, you can install an Operator from the OperatorHub using the CLI. Use the oc command to create or update a Subscription object.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

- Install the oc command to your local system.

Procedure

View the list of Operators available to the cluster from the OperatorHub.

$ oc get packagemanifests -n openshift-marketplace
NAME                             CATALOG               AGE
3scale-operator                  Red Hat Operators     91m
amq-online                       Red Hat Operators     91m
amq-streams                      Red Hat Operators     91m
...
couchbase-enterprise-certified   Certified Operators   91m
mariadb                          Certified Operators   91m
mongodb-enterprise               Certified Operators   91m
...
etcd                             Community Operators   91m
jaeger                           Community Operators   91m
kubefed                          Community Operators   91m
...

Note the CatalogSource(s) for your desired Operator(s).

Inspect your desired Operator to verify its supported InstallModes and available Channels:

$ oc describe packagemanifests <operator_name> -n openshift-marketplace

An OperatorGroup is an OLM resource that selects target namespaces in which to generate required RBAC access for all Operators in the same namespace as the OperatorGroup.

The namespace to which you subscribe the Operator must have an OperatorGroup that matches the Operator’s InstallMode, either the AllNamespaces or SingleNamespace mode. If the Operator you intend to install uses the AllNamespaces mode, then the openshift-operators namespace already has an appropriate OperatorGroup in place.

However, if the Operator uses the SingleNamespace mode and you do not already have an appropriate OperatorGroup in place, you must create one.

Note: The web console version of this procedure handles the creation of the OperatorGroup and Subscription objects automatically behind the scenes for you when choosing SingleNamespace mode.

Create an OperatorGroup object YAML file, for example operatorgroup.yaml:

Example OperatorGroup

apiVersion: operators.coreos.com/v1
kind: OperatorGroup
metadata:
  name: <operatorgroup_name>
  namespace: <namespace>
spec:
  targetNamespaces:
  - <namespace>

Create the OperatorGroup object:

$ oc apply -f operatorgroup.yaml

Create a Subscription object YAML file to subscribe a namespace to an Operator, for example sub.yaml:

Example Subscription

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: <operator_name>
  namespace: openshift-operators  # (1)
spec:
  channel: alpha
  name: <operator_name>  # (2)
  source: redhat-operators  # (3)
  sourceNamespace: openshift-marketplace  # (4)

- (1) For AllNamespaces InstallMode usage, specify the openshift-operators namespace. Otherwise, specify the relevant single namespace for SingleNamespace InstallMode usage.

- (2) Name of the Operator to subscribe to.

- (3) Name of the CatalogSource that provides the Operator.

- (4) Namespace of the CatalogSource. Use openshift-marketplace for the default OperatorHub CatalogSources.

Create the Subscription object:

$ oc apply -f sub.yaml

At this point, the OLM is now aware of the selected Operator. A ClusterServiceVersion (CSV) for the Operator should appear in the target namespace, and APIs provided by the Operator should be available for creation.
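When the same Subscription has to be created for several Operators, the manifest can be generated instead of edited by hand. The following Python sketch builds the Subscription shown in this procedure as a dictionary and serializes it to JSON. The function names and parameters are hypothetical, and the sketch only covers the AllNamespaces and SingleNamespace modes discussed here.

```python
import json
from typing import Dict, Optional


def build_subscription(operator_name: str, channel: str, source: str,
                       install_mode: str, namespace: Optional[str] = None,
                       source_namespace: str = "openshift-marketplace") -> Dict:
    """Build a Subscription manifest for the given InstallMode."""
    if install_mode == "AllNamespaces":
        target = "openshift-operators"
    elif install_mode == "SingleNamespace":
        if not namespace:
            raise ValueError("SingleNamespace mode requires a namespace")
        target = namespace
    else:
        raise ValueError("unsupported install mode in this sketch: " + install_mode)
    return {
        "apiVersion": "operators.coreos.com/v1alpha1",
        "kind": "Subscription",
        "metadata": {"name": operator_name, "namespace": target},
        "spec": {
            "channel": channel,
            "name": operator_name,
            "source": source,
            "sourceNamespace": source_namespace,
        },
    }


def to_json(manifest: Dict) -> str:
    """Serialize a manifest so that it can be saved to a file."""
    return json.dumps(manifest, indent=2)
```

The JSON output can be saved to a file and passed to oc apply -f in the same way as sub.yaml, because oc accepts JSON manifests as well as YAML. For SingleNamespace mode, the target namespace still needs a matching OperatorGroup, as described earlier in this procedure.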


Chapter 5. Configuring proxy support in Operator Lifecycle Manager

If a global proxy is configured on the OpenShift Container Platform cluster, Operator Lifecycle Manager automatically configures Operators that it manages with the cluster-wide proxy. However, you can also configure installed Operators to override the global proxy or inject a custom CA certificate.

Additional resources

- Configuring the cluster-wide proxy

- Configuring a custom PKI (custom CA certificate)

5.1. Overriding an Operator’s proxy settings

If a cluster-wide egress proxy is configured, Operators running with Operator Lifecycle Manager (OLM) inherit the cluster-wide proxy settings on their deployments. Cluster administrators can also override these proxy settings by configuring the subscription of an Operator.

Operators must handle setting environment variables for proxy settings in the pods for any managed Operands.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

Procedure

- Navigate in the web console to the Operators → OperatorHub page.

- Select the Operator and click Install.

- On the Create Operator Subscription page, modify the Subscription object’s YAML to include one or more of the following environment variables in the spec section:

  - HTTP_PROXY

  - HTTPS_PROXY

  - NO_PROXY

For example:

Subscription object with proxy setting overrides

apiVersion: operators.coreos.com/v1alpha1
kind: Subscription
metadata:
  name: etcd-config-test
  namespace: openshift-operators
spec:
  config:
    env:
    - name: HTTP_PROXY
      value: test_http
    - name: HTTPS_PROXY
      value: test_https
    - name: NO_PROXY
      value: test
  channel: clusterwide-alpha
  installPlanApproval: Automatic
  name: etcd
  source: community-operators
  sourceNamespace: openshift-marketplace
  startingCSV: etcdoperator.v0.9.4-clusterwide

Note: These environment variables can also be unset using an empty value to remove any previously set cluster-wide or custom proxy settings.

OLM handles these environment variables as a unit; if at least one of them is set, all three are considered overridden and the cluster-wide defaults are not used for the subscribed Operator’s Deployments.

- Click Subscribe to make the Operator available to the selected namespaces.

After the Operator’s CSV appears in the relevant namespace, you can verify that custom proxy environment variables are set in the Deployment. For example, using the CLI:

$ oc get deployment -n openshift-operators etcd-operator -o yaml | grep -i "PROXY" -A 2
        - name: HTTP_PROXY
          value: test_http
        - name: HTTPS_PROXY
          value: test_https
        - name: NO_PROXY
          value: test
        image: quay.io/coreos/etcd-operator@sha256:66a37fd61a06a43969854ee6d3e21088a98b93838e284a6086b13917f96b0d9c
...
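The rule that OLM treats the three proxy variables as a unit is easy to misread, so the following Python sketch models it. The function name and the dictionary inputs are hypothetical; this is an illustration of the rule as described in this section, not OLM code, and it does not model how an empty value is rendered in the resulting Deployment.

```python
from typing import Dict

PROXY_VARS = ("HTTP_PROXY", "HTTPS_PROXY", "NO_PROXY")


def effective_proxy_env(cluster_proxy: Dict[str, str],
                        subscription_env: Dict[str, str]) -> Dict[str, str]:
    """Return the proxy variables that apply to the Operator's Deployment."""
    overrides = {k: v for k, v in subscription_env.items() if k in PROXY_VARS}
    if overrides:
        # At least one variable is set on the Subscription, so all three are
        # considered overridden and no cluster-wide value is inherited.
        return overrides
    return {k: v for k, v in cluster_proxy.items() if k in PROXY_VARS}
```

For example, a Subscription that sets only HTTP_PROXY yields a Deployment without the cluster-wide HTTPS_PROXY and NO_PROXY values, which is why the example Subscription above sets all three explicitly.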

5.2. Injecting a custom CA certificate

When a cluster administrator adds a custom CA certificate to a cluster using a ConfigMap, the Cluster Network Operator merges the user-provided certificates and system CA certificates into a single bundle. You can inject this merged bundle into your Operator running on Operator Lifecycle Manager (OLM), which is useful if you have a man-in-the-middle HTTPS proxy.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

- Custom CA certificate added to the cluster using a ConfigMap.

- Desired Operator installed and running on OLM.

Procedure

Create an empty ConfigMap in the namespace where your Operator’s Subscription exists and include the following label:

apiVersion: v1
kind: ConfigMap
metadata:
  name: trusted-ca
  labels:
    config.openshift.io/inject-trusted-cabundle: "true"

After creating this ConfigMap, the ConfigMap is immediately populated with the certificate contents of the merged bundle.

Update your Operator’s Subscription object to include a spec.config section that mounts the trusted-ca ConfigMap as a volume to each container within a Pod that requires a custom CA:

kind: Subscription
metadata:
  name: my-operator
spec:
  package: etcd
  channel: alpha
  config:
  - selector:
      matchLabels:
        <labels_for_pods>
    volumes:
    - name: trusted-ca
      configMap:
        name: trusted-ca
        items:
          - key: ca-bundle.crt
            path: tls-ca-bundle.pem
    volumeMounts:
    - name: trusted-ca
      mountPath: /etc/pki/ca-trust/extracted/pem
      readOnly: true

Chapter 6. Deleting Operators from a cluster

The following describes how to delete Operators from a cluster using either the web console or the CLI.

6.1. Deleting Operators from a cluster using the web console

Cluster administrators can delete installed Operators from a selected namespace by using the web console.

Prerequisites

- Access to an OpenShift Container Platform cluster web console using an account with cluster-admin permissions.

Procedure

- From the Operators → Installed Operators page, scroll or type a keyword into the Filter by name box to find the Operator you want. Then, click it.

On the right-hand side of the Operator Details page, select Uninstall Operator from the Actions drop-down menu.

An Uninstall Operator? dialog box is displayed, reminding you that "Removing the operator will not remove any of its custom resource definitions or managed resources. If your operator has deployed applications on the cluster or configured off-cluster resources, these will continue to run and need to be cleaned up manually."

The Operator, any Operator deployments, and pods are removed by this action. Any resources managed by the Operator, including CRDs and CRs, are not removed. The web console enables dashboards and navigation items for some Operators. To remove these after uninstalling the Operator, you might need to manually delete the Operator CRDs.

- Select Uninstall. This Operator stops running and no longer receives updates.

6.2. Deleting Operators from a cluster using the CLI

Cluster administrators can delete installed Operators from a selected namespace by using the CLI.

Prerequisites

- Access to an OpenShift Container Platform cluster using an account with cluster-admin permissions.

- oc command installed on workstation.

Procedure

Check the current version of the subscribed Operator (for example, jaeger) in the currentCSV field:

$ oc get subscription jaeger -n openshift-operators -o yaml | grep currentCSV
  currentCSV: jaeger-operator.v1.8.2

Delete the Operator’s Subscription (for example, jaeger):

$ oc delete subscription jaeger -n openshift-operators
subscription.operators.coreos.com "jaeger" deleted

Delete the CSV for the Operator in the target namespace using the currentCSV value from the previous step:

$ oc delete clusterserviceversion jaeger-operator.v1.8.2 -n openshift-operators
clusterserviceversion.operators.coreos.com "jaeger-operator.v1.8.2" deleted

Chapter 7. Creating applications from installed Operators

This guide walks developers through an example of creating applications from an installed Operator using the OpenShift Container Platform web console.

7.1. Creating an etcd cluster using an Operator

This procedure walks through creating a new etcd cluster using the etcd Operator, managed by the Operator Lifecycle Manager (OLM).

Prerequisites

- Access to an OpenShift Container Platform 4.4 cluster.

- The etcd Operator already installed cluster-wide by an administrator.

Procedure

- Create a new project in the OpenShift Container Platform web console for this procedure. This example uses a project called my-etcd.

Navigate to the Operators → Installed Operators page. The Operators that have been installed to the cluster by the cluster administrator and are available for use are shown here as a list of ClusterServiceVersions (CSVs). CSVs are used to launch and manage the software provided by the Operator.

Tip: You can get this list from the CLI using:

$ oc get csv

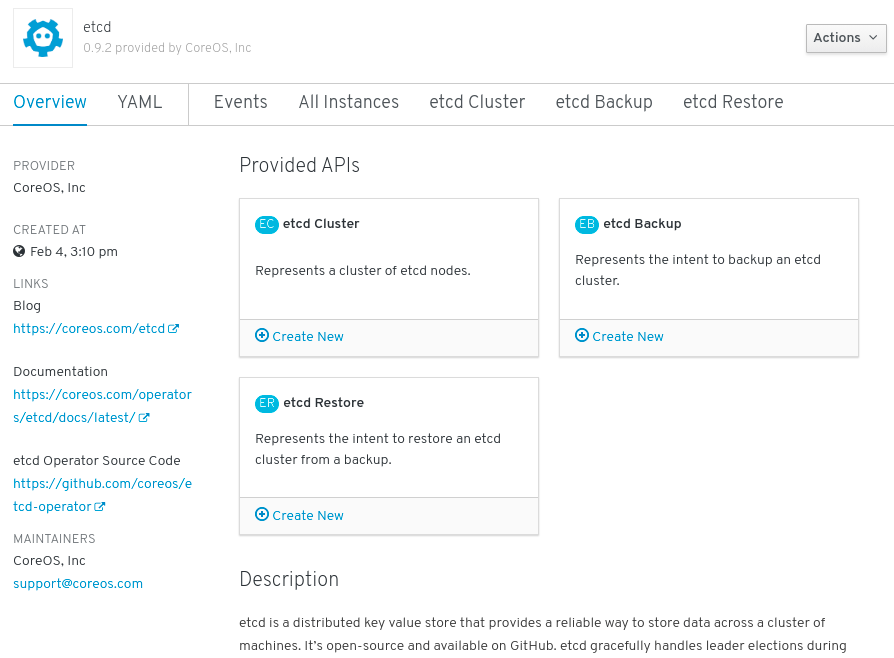

On the Installed Operators page, click Copied, and then click the etcd Operator to view more details and available actions:

Figure 7.1. etcd Operator overview

As shown under Provided APIs, this Operator makes available three new resource types, including one for an etcd Cluster (the EtcdCluster resource). These objects work similarly to the built-in native Kubernetes ones, such as Deployments or ReplicaSets, but contain logic specific to managing etcd.

Create a new etcd cluster:

- In the etcd Cluster API box, click Create New.

- The next screen allows you to make any modifications to the minimal starting template of an EtcdCluster object, such as the size of the cluster. For now, click Create to finalize. This triggers the Operator to start up the pods, services, and other components of the new etcd cluster.

Click the Resources tab to see that your project now contains a number of resources created and configured automatically by the Operator.

Figure 7.2. etcd Operator resources

Verify that a Kubernetes service has been created that allows you to access the database from other pods in your project.

All users with the edit role in a given project can create, manage, and delete application instances (an etcd cluster, in this example) managed by Operators that have already been created in the project, in a self-service manner, just like a cloud service. If you want to enable additional users with this ability, project administrators can add the role using the following command:

$ oc policy add-role-to-user edit <user> -n <target_project>

You now have an etcd cluster that will react to failures and rebalance data as Pods become unhealthy or are migrated between nodes in the cluster. Most importantly, cluster administrators or developers with proper access can now easily use the database with their applications.

Chapter 8. Viewing Operator status

Understanding the state of the system in Operator Lifecycle Manager (OLM) is important for making decisions about and debugging problems with installed Operators. OLM provides insight into Subscriptions and related Catalog Source resources regarding their state and actions performed. This helps users better understand the healthiness of their Operators.

8.1. Condition types

Subscriptions can report the following condition types:

Table 8.1. Subscription condition types

| Condition | Description |
|---|---|
| CatalogSourcesUnhealthy | Some or all of the Catalog Sources to be used in resolution are unhealthy. |
| InstallPlanMissing | A Subscription’s InstallPlan is missing. |
| InstallPlanPending | A Subscription’s InstallPlan is pending installation. |
| InstallPlanFailed | A Subscription’s InstallPlan has failed. |

8.2. Viewing Operator status using the CLI

You can view Operator status using the CLI.

Procedure

Use the oc describe command to inspect the Subscription resource:

$ oc describe sub <subscription_name>

In the command output, find the Conditions section:

Conditions:
   Last Transition Time:  2019-07-29T13:42:57Z
   Message:               all available catalogsources are healthy
   Reason:                AllCatalogSourcesHealthy
   Status:                False
   Type:                  CatalogSourcesUnhealthy
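Note that the condition types are phrased negatively, so a Status of False on CatalogSourcesUnhealthy means that the Catalog Sources are healthy. The following Python sketch shows one way to filter for the conditions that currently apply. It assumes the conditions have been loaded from the JSON form of the Subscription (for example, from oc get sub <subscription_name> -o json, under status.conditions) with lowercase type and status keys; the function name is hypothetical.

```python
from typing import Dict, List


def active_conditions(conditions: List[Dict[str, str]]) -> List[str]:
    """Return the types of all conditions whose status is "True"."""
    return [c["type"] for c in conditions if c.get("status") == "True"]
```

An empty result for the example output above indicates that none of the reported conditions currently applies to the Subscription.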

Chapter 9. Creating policy for Operator installations and upgrades

Operators can require wide privileges to run, and the required privileges can change between versions. Operator Lifecycle Manager (OLM) runs with cluster-admin privileges. By default, Operator authors can specify any set of permissions in the ClusterServiceVersion (CSV) and OLM will consequently grant them to the Operator.

Cluster administrators should take measures to ensure that an Operator cannot achieve cluster-scoped privileges and that users cannot escalate privileges using OLM. One method for locking this down requires cluster administrators auditing Operators before they are added to the cluster. Cluster administrators are also provided tools for determining and constraining which actions are allowed during an Operator installation or upgrade using service accounts.

By associating an OperatorGroup with a service account that has a set of privileges granted to it, cluster administrators can set policy on Operators to ensure they operate only within predetermined boundaries using RBAC rules. The Operator is unable to do anything that is not explicitly permitted by those rules.

This self-sufficient, limited scope installation of Operators by non-cluster administrators means that more of the Operator Framework tools can safely be made available to more users, providing a richer experience for building applications with Operators.

9.1. Understanding Operator installation policy

Using OLM, cluster administrators can choose to specify a service account for an OperatorGroup so that all Operators associated with the OperatorGroup are deployed and run against the privileges granted to the service account.

APIService and CustomResourceDefinition resources are always created by OLM using the cluster-admin role. A service account associated with an OperatorGroup should never be granted privileges to write these resources.

If the specified service account does not have adequate permissions for an Operator that is being installed or upgraded, useful and contextual information is added to the status of the respective resource(s) so that it is easy for the cluster administrator to troubleshoot and resolve the issue.

Any Operator tied to this OperatorGroup is now confined to the permissions granted to the specified service account. If the Operator asks for permissions that are outside the scope of the service account, the install fails with appropriate errors.

9.1.1. Installation scenarios

When determining whether an Operator can be installed or upgraded on a cluster, OLM considers the following scenarios:

- A cluster administrator creates a new OperatorGroup and specifies a service account. All Operator(s) associated with this OperatorGroup are installed and run against the privileges granted to the service account.

- A cluster administrator creates a new OperatorGroup and does not specify any service account. OpenShift Container Platform maintains backward compatibility, so the default behavior remains and Operator installs and upgrades are permitted.

- For existing OperatorGroups that do not specify a service account, the default behavior remains and Operator installs and upgrades are permitted.

- A cluster administrator updates an existing OperatorGroup and specifies a service account. OLM allows the existing Operators to continue to run with their current privileges. When such an existing Operator goes through an upgrade, it is reinstalled and run against the privileges granted to the service account like any new Operator.

- A service account specified by an OperatorGroup changes by adding or removing permissions, or the existing service account is swapped with a new one. When existing Operators go through an upgrade, they are reinstalled and run against the privileges granted to the updated service account like any new Operator.

- A cluster administrator removes the service account from an OperatorGroup. The default behavior remains and Operator installs and upgrades are permitted.
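The scenarios above reduce to a short decision, sketched here in Python. The function and its return labels are hypothetical and serve only to summarize the list; they do not correspond to any OLM API.

```python
from typing import Optional


def install_identity(service_account: Optional[str],
                     operator_already_running: bool,
                     is_upgrade: bool) -> str:
    """Summarize which privileges an Operator is installed or run against."""
    if service_account is None:
        # No service account on the OperatorGroup: default behavior remains.
        return "default"
    if operator_already_running and not is_upgrade:
        # An existing Operator keeps its current privileges until it upgrades.
        return "unchanged"
    # New installs and upgrades run against the service account's privileges.
    return "service-account:" + service_account
```

The "unchanged" branch is the case that is easy to overlook: specifying or changing a service account on an existing OperatorGroup does not affect running Operators until their next upgrade.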

9.1.2. Installation workflow

When an OperatorGroup is tied to a service account and an Operator is installed or upgraded, OLM uses the following workflow:

- The given Subscription object is picked up by OLM.

- OLM fetches the OperatorGroup tied to this Subscription.

- OLM determines that the OperatorGroup has a service account specified.

- OLM creates a client scoped to the service account and uses the scoped client to install the Operator. This ensures that any permission requested by the Operator is always confined to that of the service account in the OperatorGroup.

- OLM creates a new service account with the set of permissions specified in the CSV and assigns it to the Operator. The Operator runs as the assigned service account.

9.2. Scoping Operator installations

To provide scoping rules to Operator installations and upgrades on OLM, associate a service account with an OperatorGroup.

Using this example, a cluster administrator can confine a set of Operators to a designated namespace.

Procedure

Create a new namespace:

$ cat <<EOF | oc create -f -
apiVersion: v1
kind: Namespace
metadata:
  name: scoped
EOF

Allocate permissions that you want the Operator(s) to be confined to. This involves creating a new service account, relevant Role(s), and RoleBinding(s).

$ cat <<EOF | oc create -f -
apiVersion: v1
kind: ServiceAccount
metadata:
  name: scoped
  namespace: scoped
EOF

The following example grants the service account permissions to do anything in the designated namespace for simplicity. In a production environment, you should create a more fine-grained set of permissions:

$ cat <<EOF | oc create -f -
apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: scoped
  namespace: scoped
rules:
- apiGroups: ["*"]
  resources: ["*"]
  verbs: ["*"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: scoped-bindings
  namespace: scoped
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: scoped
subjects:
- kind: ServiceAccount
  name: scoped
  namespace: scoped
EOF

Create an OperatorGroup in the designated namespace. This OperatorGroup targets the designated namespace to ensure that its tenancy is confined to it. In addition, OperatorGroups allow a user to specify a service account. Specify the ServiceAccount created in the previous step:

    $ cat <<EOF | oc create -f -
    apiVersion: operators.coreos.com/v1
    kind: OperatorGroup
    metadata:
      name: scoped
      namespace: scoped
    spec:
      serviceAccountName: scoped
      targetNamespaces:
      - scoped
    EOF

Any Operator installed in the designated namespace is tied to this OperatorGroup and therefore to the service account specified.

Create a Subscription in the designated namespace to install an Operator:

    $ cat <<EOF | oc create -f -
    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: etcd
      namespace: scoped
    spec:
      channel: singlenamespace-alpha
      name: etcd
      source: <catalog_source_name>
      sourceNamespace: <catalog_source_namespace>
    EOF

Any Operator tied to this OperatorGroup is confined to the permissions granted to the specified service account. If the Operator requests permissions that are outside the scope of the service account, the installation fails with appropriate errors.
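Before creating the Subscription, it can help to check what the service account is actually allowed to do. The following is a minimal sketch, not part of OLM: the function names `sa_user` and `check_scoped_permissions` are hypothetical, and it assumes you are logged in with `oc` as a user who is permitted to impersonate service accounts.

```shell
#!/bin/sh
# Sketch: pre-check the permissions of the service account tied to an
# OperatorGroup. Function names are illustrative only.

# Build the RBAC user name that the API server uses for a service account.
sa_user() {
    # $1 = namespace, $2 = service account name
    printf 'system:serviceaccount:%s:%s' "$1" "$2"
}

# Ask the API server whether the service account may perform each
# "verb resource" pair in its own namespace.
# Usage: check_scoped_permissions <namespace> <service_account> "<verb> <resource>"...
check_scoped_permissions() {
    ns=$1
    sa=$2
    shift 2
    for pair in "$@"; do
        verb=${pair%% *}       # text before the first space
        resource=${pair#* }    # text after the first space
        printf '%s %s: ' "$verb" "$resource"
        # oc auth can-i prints "yes" or "no" for the impersonated user.
        oc auth can-i "$verb" "$resource" -n "$ns" --as="$(sa_user "$ns" "$sa")"
    done
}
```

For the example in this procedure, running `check_scoped_permissions scoped scoped "create deployments" "create roles"` prints one yes or no answer per pair.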

9.2.1. Fine-grained permissions

OLM uses the service account specified in OperatorGroup to create or update the following resources related to the Operator being installed:

- ClusterServiceVersion

- Subscription

- Secret

- ServiceAccount

- Service

- ClusterRole and ClusterRoleBinding

- Role and RoleBinding

In order to confine Operators to a designated namespace, cluster administrators can start by granting the following permissions to the service account:

The following role is a generic example and additional rules might be required based on the specific Operator.

    kind: Role
    rules:
    - apiGroups: ["operators.coreos.com"]
      resources: ["subscriptions", "clusterserviceversions"]
      verbs: ["get", "create", "update", "patch"]
    - apiGroups: [""]
      resources: ["services", "serviceaccounts"]
      verbs: ["get", "create", "update", "patch"]
    - apiGroups: ["rbac.authorization.k8s.io"]
      resources: ["roles", "rolebindings"]
      verbs: ["get", "create", "update", "patch"]
    - apiGroups: ["apps"]
      resources: ["deployments"]
      verbs: ["list", "watch", "get", "create", "update", "patch", "delete"]
    - apiGroups: [""]
      resources: ["pods"]
      verbs: ["list", "watch", "get", "create", "update", "patch", "delete"]

In addition, if any Operator specifies a pull secret, the following permissions must also be added:

    kind: ClusterRole  # Required to get the secret from the OLM namespace.
    rules:
    - apiGroups: [""]
      resources: ["secrets"]
      verbs: ["get"]
    ---
    kind: Role
    rules:
    - apiGroups: [""]
      resources: ["secrets"]
      verbs: ["create", "update", "patch"]

9.3. Troubleshooting permission failures

If an Operator installation fails due to lack of permissions, identify the errors using the following procedure.

Procedure

Review the Subscription object. Its status has an object reference installPlanRef that points to the InstallPlan object that attempted to create the necessary [Cluster]Role[Binding](s) for the Operator:

    apiVersion: operators.coreos.com/v1alpha1
    kind: Subscription
    metadata:
      name: etcd
      namespace: scoped
    status:
      installPlanRef:
        apiVersion: operators.coreos.com/v1alpha1
        kind: InstallPlan
        name: install-4plp8
        namespace: scoped
        resourceVersion: "117359"
        uid: 2c1df80e-afea-11e9-bce3-5254009c9c23

Check the status of the InstallPlan object for any errors:

    apiVersion: operators.coreos.com/v1alpha1
    kind: InstallPlan
    status:
      conditions:
      - lastTransitionTime: "2019-07-26T21:13:10Z"
        lastUpdateTime: "2019-07-26T21:13:10Z"
        message: 'error creating clusterrole etcdoperator.v0.9.4-clusterwide-dsfx4: clusterroles.rbac.authorization.k8s.io is forbidden: User "system:serviceaccount:scoped:scoped" cannot create resource "clusterroles" in API group "rbac.authorization.k8s.io" at the cluster scope'
        reason: InstallComponentFailed
        status: "False"
        type: Installed
      phase: Failed

The error message tells you:

- The type of resource it failed to create, including the API group of the resource. In this case, it was clusterroles in the rbac.authorization.k8s.io group.

- The name of the resource.

- The type of error: is forbidden tells you that the user does not have enough permission to do the operation.

- The name of the user who attempted to create or update the resource. In this case, it refers to the service account specified in the OperatorGroup.

- The scope of the operation: cluster scope or not.

The user can add the missing permission to the service account and then iterate.

Note: OLM does not currently provide the complete list of errors on the first try, but this capability might be added in a future release.
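Because each iteration surfaces only one missing permission, it can be convenient to pull the relevant fields out of the condition message mechanically. The following sketch uses a hypothetical helper, `parse_forbidden`, and assumes the message follows the wording shown in the InstallPlan status above; other error formats are not handled.

```shell
#!/bin/sh
# Sketch: extract the user, verb, resource, API group, and scope from a
# "forbidden" message such as the one in the InstallPlan status.
# parse_forbidden is an illustrative name, not an OLM or oc command.
parse_forbidden() {
    # Capture the quoted fields of the standard RBAC denial sentence.
    fields=$(printf '%s\n' "$1" | sed -n -E \
        's/.*User "([^"]+)" cannot ([a-z]+) resource "([^"]+)" in API group "([^"]*)".*/user=\1 verb=\2 resource=\3 apiGroup=\4/p')

    # Return non-zero if the message does not look like an RBAC denial.
    [ -n "$fields" ] || return 1
    printf '%s\n' "$fields"

    # Cluster-scoped denials need a ClusterRole; anything else is
    # treated as namespace-scoped.
    case $1 in
        *"at the cluster scope"*) echo "scope=cluster" ;;
        *) echo "scope=namespace" ;;
    esac
}
```

Passing the message from the example status to `parse_forbidden` reports the `scoped` service account, the `create` verb, the `clusterroles` resource, and cluster scope, which together identify the rule to add before retrying.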


Chapter 10. Using Operator Lifecycle Manager on restricted networks

When OpenShift Container Platform is installed on restricted networks, also known as a disconnected cluster, Operator Lifecycle Manager (OLM) can no longer use the default OperatorHub sources because they require full Internet connectivity. Cluster administrators can disable those default sources and create local mirrors so that OLM can install and manage Operators from the local sources instead.

While OLM can manage Operators from local sources, the ability for a given Operator to run successfully in a restricted network still depends on the Operator itself. The Operator must:

- List any related images, or other container images that the Operator might require to perform its functions, in the relatedImages parameter of its ClusterServiceVersion (CSV) object.

- Reference all specified images by a digest (SHA) and not by a tag.
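The digest requirement can be checked with a small script. This is a minimal sketch under stated assumptions: the function names are hypothetical, image references arrive one per line on standard input, and only sha256 digests are recognized.

```shell
#!/bin/sh
# Sketch: flag image references that are not pinned by digest.
# Function names are illustrative only.

# Succeeds when the reference contains a sha256 digest
# (for example, registry/repo@sha256:<hex>) instead of a tag.
is_digest_ref() {
    case $1 in
        *@sha256:*) return 0 ;;
        *) return 1 ;;
    esac
}

# Read image references, one per line, from standard input and print
# those that are not pinned by digest. Returns non-zero if any are found.
report_tagged_images() {
    status=0
    while IFS= read -r image; do
        [ -n "$image" ] || continue    # skip blank lines
        if ! is_digest_ref "$image"; then
            printf 'not pinned by digest: %s\n' "$image"
            status=1
        fi
    done
    return $status
}
```

One possible way to feed it, assuming the images are listed under `spec.relatedImages` of the CSV, is `oc get csv <csv_name> -n <namespace> -o jsonpath='{range .spec.relatedImages[*]}{.image}{"\n"}{end}' | report_tagged_images`.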

See the following Red Hat Knowledgebase Article for a list of Red Hat Operators that support running in disconnected mode:


10.1. Understanding Operator catalog images

Operator Lifecycle Manager (OLM) always installs Operators from the latest version of an Operator catalog. As of OpenShift Container Platform 4.3, Red Hat-provided Operators are distributed via Quay App Registry catalogs from quay.io.

Table 10.1. Red Hat-provided App Registry catalogs

| Catalog | Description |
|---|---|
| redhat-operators | Public catalog for Red Hat products packaged and shipped by Red Hat. Supported by Red Hat. |
| certified-operators | Public catalog for products from leading independent software vendors (ISVs). Red Hat partners with ISVs to package and ship. Supported by the ISV. |
| community-operators | Public catalog for software maintained by relevant representatives in the operator-framework/community-operators GitHub repository. No official support. |

As catalogs are updated, the latest versions of Operators change, and older versions may be removed or altered. This behavior can cause problems maintaining reproducible installs over time. In addition, when OLM runs on an OpenShift Container Platform cluster in a restricted network environment, it is unable to access the catalogs from quay.io directly.

Using the oc adm catalog build command, cluster administrators can create an Operator catalog image. An Operator catalog image is:

- a point-in-time export of an App Registry type catalog’s content.

- the result of converting an App Registry catalog to a container image type catalog.

- an immutable artifact.

Creating an Operator catalog image provides a simple way to use this content without incurring the aforementioned issues.

10.2. Building an Operator catalog image

Cluster administrators can build a custom Operator catalog image to be used by Operator Lifecycle Manager (OLM) and push the image to a container image registry that supports Docker v2-2. For a cluster on a restricted network, this registry can be a registry that the cluster has network access to, such as the mirror registry created during the restricted network installation.

The OpenShift Container Platform cluster’s internal registry cannot be used as the target registry because it does not support pushing without a tag, which is required during the mirroring process.

This procedure assumes the use of a mirror registry that has access to both your network and the Internet.

Prerequisites

- A Linux workstation with unrestricted network access [1]

- oc version 4.3.5+

- podman version 1.4.4+

- Access to mirror registry that supports Docker v2-2

If you are working with private registries, set the REG_CREDS environment variable to the file path of your registry credentials for use in later steps. For example, for the podman CLI:

    $ REG_CREDS=${XDG_RUNTIME_DIR}/containers/auth.json

If you are working with private namespaces that your quay.io account has access to, you must set a Quay authentication token. Set the AUTH_TOKEN environment variable for use with the --auth-token flag by making a request against the login API using your quay.io credentials:

    $ AUTH_TOKEN=$(curl -sH "Content-Type: application/json" \
        -XPOST https://quay.io/cnr/api/v1/users/login -d '
        {
            "user": {
                "username": "'"<quay_username>"'",
                "password": "'"<quay_password>"'"
            }
        }' | jq -r '.token')

Procedure

On the workstation with unrestricted network access, authenticate with the target mirror registry:

$ podman login <registry_host_name>

Also authenticate with registry.redhat.io so that the base image can be pulled during the build:

    $ podman login registry.redhat.io

Build a catalog image based on the redhat-operators catalog from quay.io, tagging and pushing it to your mirror registry:

    $ oc adm catalog build \
        --appregistry-org redhat-operators \
        --from=registry.redhat.io/openshift4/ose-operator-registry:v4.4 \
        --filter-by-os="linux/amd64" \
        --to=<registry_host_name>:<port>/olm/redhat-operators:v1 \
        [-a ${REG_CREDS}] \
        [--insecure] \
        [--auth-token "${AUTH_TOKEN}"]

    INFO[0013] loading Bundles dir=/var/folders/st/9cskxqs53ll3wdn434vw4cd80000gn/T/300666084/manifests-829192605
    ...
    Pushed sha256:f73d42950021f9240389f99ddc5b0c7f1b533c054ba344654ff1edaf6bf827e3 to example_registry:5000/olm/redhat-operators:v1

The options in this command are as follows:

- --appregistry-org: Organization (namespace) to pull from an App Registry instance.

- --from: Set to the ose-operator-registry base image using the tag that matches the target OpenShift Container Platform cluster major and minor version.

- --filter-by-os: Set to the operating system and architecture to use for the base image, which must match the target OpenShift Container Platform cluster. Valid values are linux/amd64, linux/ppc64le, and linux/s390x.

- --to: Name your catalog image and include a tag, for example, v1.

- -a: Optional: If required, specify the location of your registry credentials file.

- --insecure: Optional: If you do not want to configure trust for the target registry, add the --insecure flag.

- --auth-token: Optional: If other application registry catalogs are used that are not public, specify a Quay authentication token.

Sometimes invalid manifests are accidentally introduced into Red Hat’s catalogs; when this happens, you might see some errors:

    ...
    INFO[0014] directory dir=/var/folders/st/9cskxqs53ll3wdn434vw4cd80000gn/T/300666084/manifests-829192605 file=4.2 load=package
    W1114 19:42:37.876180 34665 builder.go:141] error building database: error loading package into db: fuse-camel-k-operator.v7.5.0 specifies replacement that couldn't be found
    Uploading ... 244.9kB/s

These errors are usually non-fatal, and if the Operator package mentioned does not contain an Operator you plan to install or a dependency of one, then they can be ignored.
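To decide whether a warning matters, it helps to list exactly which entries failed to load. The following sketch assumes the build output was saved to a file and that warnings use the wording shown above; `failed_packages` is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: list the names reported in "error loading package into db"
# warnings of a saved catalog build log.
# Reads the files named as arguments, or standard input if none are given.
failed_packages() {
    # Print only the name that follows the "into db:" marker.
    sed -n -E 's/.*error loading package into db: ([^ ]+) .*/\1/p' "$@"
}
```

For example, after capturing the build output with `oc adm catalog build ... 2>&1 | tee build.log`, running `failed_packages build.log` prints one name per failed entry, which you can compare against the Operators you plan to install and their dependencies.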


10.3. Configuring OperatorHub for restricted networks