Chapter 10. Continuing Node Host Installation for Enterprise

10.1. Front-End Server Proxies

The Apache Virtual Hosts front end is the default for deployments. However, alternate front-end servers are available as a set of plug-ins that can be installed and configured. When multiple plug-ins are loaded at the same time, every method call for a front-end event, such as creating or deleting a gear, becomes a method call in each loaded plug-in, and the results are merged in a contextually sensitive manner. For example, each plug-in typically records and returns only the connection options that it parses. In OpenShift Enterprise, the connection options from all loaded plug-ins are merged and reported as one connection with all of the options set.

The port proxy uses iptables to listen on external-facing ports and forward incoming requests to the appropriate application. High-range ports are reserved on node hosts for scaled applications to allow inter-node connections. See Section 9.10.7, “Configuring the Port Proxy” for information on the iptables port proxy.

The iptables proxy listens on ports that are unique to each gear.

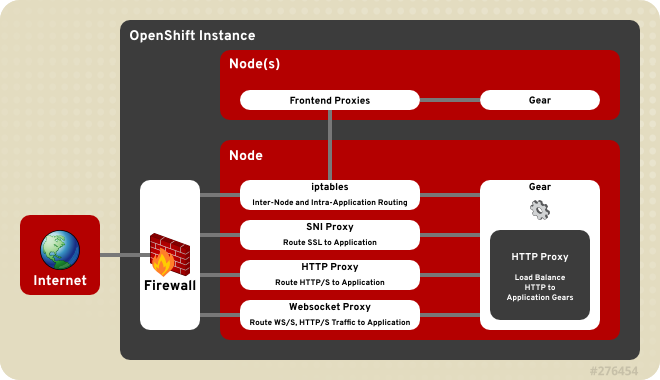

Figure 10.1. OpenShift Enterprise Front-End Server Proxies

10.1.1. Configuring Front-end Server Plug-ins

Front-end server plug-ins are enabled with the OPENSHIFT_FRONTEND_HTTP_PLUGINS parameter in the /etc/openshift/node.conf file. The value of this parameter is a comma-separated list of the names of the Ruby gems that must be loaded, one for each plug-in. The gem name of a plug-in is the part of its RPM package name that follows the rubygem- prefix.
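As a small illustration of the naming rule above, the following sketch strips the rubygem- prefix from an RPM package name to obtain the gem name that would be listed in the parameter. The helper name gem_name_from_rpm is hypothetical and is not part of OpenShift Enterprise.

```shell
#!/bin/sh
# Hypothetical helper: derive a plug-in gem name from its RPM package name
# by removing the leading "rubygem-" prefix.
gem_name_from_rpm() {
    case "$1" in
        rubygem-*)
            printf '%s\n' "${1#rubygem-}"
            ;;
        *)
            # Anything without the prefix is not a Ruby gem package.
            printf 'not a rubygem package: %s\n' "$1" >&2
            return 1
            ;;
    esac
}
```

For example, `gem_name_from_rpm rubygem-openshift-origin-frontend-apache-vhost` prints `openshift-origin-frontend-apache-vhost`.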

In addition to the plug-ins listed in the /etc/openshift/node.conf file, any plug-ins that are loaded into the running environment as a dependency of those explicitly listed are also used.

Example 10.1. Front-end Server Plug-in Configuration

    # Gems for managing the frontend http server
    # NOTE: Steps must be taken both before and after these values are changed.
    #       Run "oo-frontend-plugin-modify --help" for more information.
    OPENSHIFT_FRONTEND_HTTP_PLUGINS=openshift-origin-frontend-apache-vhost,openshift-origin-frontend-nodejs-websocket,openshift-origin-frontend-haproxy-sni-proxy
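To check which plug-ins a node is configured to load, the parameter can be read back from the file. The sketch below assumes that the value sits on a single line, may or may not be quoted, and that the last assignment in the file is the effective one. The function name list_frontend_plugins is hypothetical and is not an OpenShift command.

```shell
#!/bin/sh
# Hypothetical helper: print the plug-ins named in
# OPENSHIFT_FRONTEND_HTTP_PLUGINS, one per line.
# $1 is the path to a node.conf-style file.
list_frontend_plugins() {
    conf_file=$1
    # Keep only the value of the parameter, use the last assignment found,
    # drop any quotes, split on commas, then trim blanks and empty entries.
    sed -n 's/^[[:space:]]*OPENSHIFT_FRONTEND_HTTP_PLUGINS=//p' "$conf_file" |
        tail -n 1 |
        tr -d "\"'" |
        tr ',' '\n' |
        sed -e 's/^[[:space:]]*//' -e 's/[[:space:]]*$//' -e '/^$/d'
}
```

On a node host this could be called as `list_frontend_plugins /etc/openshift/node.conf`. Commented-out lines are ignored because only lines that begin with the parameter name are matched.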

10.1.2. Installing and Configuring the HTTP Proxy Plug-in

Note

Both the prefork module and the multi-threaded worker module in Apache are supported. The prefork module is used by default, but you can change to the worker module for better performance. To do so, set the following in the /etc/sysconfig/httpd file:

HTTPD=/usr/sbin/httpd.worker

Two HTTP front-end plug-ins are available, apache-vhost and apache-mod-rewrite. Both depend on the apachedb plug-in, which is installed automatically with either.

The default plug-in is apache-vhost, which is based on Apache Virtual Hosts. The apache-vhost plug-in is provided by the rubygem-openshift-origin-frontend-apache-vhost RPM package. The virtual host configurations are written to .conf files in the /etc/httpd/conf.d/openshift directory, which is a symbolic link to the /var/lib/openshift/.httpd.d directory.

The apache-mod-rewrite plug-in provides a front end based on Apache's mod_rewrite module, configured by a set of Berkeley DB files that route application web requests to their respective gears. The mod_rewrite front end owns the default Apache Virtual Hosts and offers limited flexibility. However, it can scale to high-density deployments with thousands of gears on a node host while maintaining optimum performance. The apache-mod-rewrite plug-in is provided by the rubygem-openshift-origin-frontend-apache-mod-rewrite RPM package, and the mappings for each application are persisted in the /var/lib/openshift/.httpd.d/*.txt files.

Earlier releases of OpenShift Enterprise used the apache-mod-rewrite plug-in as the default. That default has been deprecated, making the apache-vhost plug-in the new default for OpenShift Enterprise 2.2. The Apache mod_rewrite front-end plug-in is best suited to deployments with thousands of gears per node host, where gears are frequently created and destroyed. The default Apache Virtual Hosts plug-in is best suited to more stable deployments with hundreds of gears per node host, where gears are infrequently created and destroyed. See Section 10.1.2.1, “Changing the Front-end HTTP Configuration for Existing Deployments” for information on how to change the HTTP front-end proxy of an existing deployment to the new default.

Table 10.1. Apache Virtual Hosts Front-end Plug-in Information

| Property | Value |
| --- | --- |
| Plug-in Name | openshift-origin-frontend-apache-vhost |
| RPM | rubygem-openshift-origin-frontend-apache-vhost |
| Service | httpd |
| Ports | 80, 443 |
| Configuration Files | /etc/httpd/conf.d/000001_openshift_origin_frontend_vhost.conf |
| | /var/lib/openshift/.httpd.d/frontend-vhost-http-template.erb, the configurable template for HTTP vhosts |
| | /var/lib/openshift/.httpd.d/frontend-vhost-https-template.erb, the configurable template for HTTPS vhosts |
| | /etc/httpd/conf.d/openshift-http-vhost.include, optional, included by each HTTP vhost if present |
| | /etc/httpd/conf.d/openshift-https-vhost.include, optional, included by each HTTPS vhost if present |

Table 10.2. Apache mod_rewrite Front-end Plug-in Information

| Property | Value |
| --- | --- |
| Plug-in Name | openshift-origin-frontend-apache-mod-rewrite |
| RPM | rubygem-openshift-origin-frontend-apache-mod-rewrite |
| Service | httpd |
| Ports | 80, 443 |
| Configuration Files | /etc/httpd/conf.d/000001_openshift_origin_node.conf |
| | /var/lib/openshift/.httpd.d/frontend-mod-rewrite-https-template.erb, configurable template for alias-with-custom-cert HTTPS vhosts |

Important

Because the apache-mod-rewrite plug-in is not compatible with the apache-vhost plug-in, use only one of them. Installing both of their RPMs on the same node host will cause conflicts at the host level. Whichever HTTP front end you use must also be consistent across the node hosts of your deployment. If your node hosts have a mix of HTTP front ends, moving gears between them will cause conflicts at the deployment level. This is important to note if you change from the default front end.
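One way to catch the host-level conflict early is to check a plug-in list for both HTTP front ends before it is written to node.conf. The following is a minimal sketch under that assumption. The function name check_http_frontend_conflict is hypothetical, and the check looks only at the configured list, not at which RPMs are actually installed.

```shell
#!/bin/sh
# Hypothetical helper: fail if a comma-separated plug-in list names both
# the apache-vhost and the apache-mod-rewrite front ends.
# $1 is the value of OPENSHIFT_FRONTEND_HTTP_PLUGINS.
check_http_frontend_conflict() {
    # Strip quotes and spaces, then wrap the list in commas so that every
    # entry can be matched as ",name,".
    plugins=",$(printf '%s' "$1" | tr -d "\"' "),"

    case "$plugins" in
        *,openshift-origin-frontend-apache-vhost,*) has_vhost=1 ;;
        *) has_vhost=0 ;;
    esac
    case "$plugins" in
        *,openshift-origin-frontend-apache-mod-rewrite,*) has_rewrite=1 ;;
        *) has_rewrite=0 ;;
    esac

    if [ "$has_vhost" -eq 1 ] && [ "$has_rewrite" -eq 1 ]; then
        echo "conflict: apache-vhost and apache-mod-rewrite are both listed" >&2
        return 1
    fi
    return 0
}
```

The function returns a non-zero status on conflict, so it can guard a configuration step in a larger script.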

The apachedb plug-in is a dependency of the apache-mod-rewrite, apache-vhost, and nodejs-websocket plug-ins and provides base functionality. The GearDBPlugin plug-in provides common bookkeeping operations and is automatically included in plug-ins that require apachedb. The apachedb plug-in is provided by the rubygem-openshift-origin-frontend-apachedb RPM package.

Note

The CONF_NODE_APACHE_FRONTEND parameter can be specified to override the default HTTP front-end server configuration.

10.1.2.1. Changing the Front-end HTTP Configuration for Existing Deployments

The Apache Virtual Hosts front-end HTTP proxy is the default for new deployments. If your nodes are currently using the previous default, the Apache mod_rewrite plug-in, you can use the following procedure to change the front-end configuration of your existing deployment.

Procedure 10.1. To Change the Front-end HTTP Configuration on an Existing Deployment:

- To prevent the broker from making any changes to the front end during this procedure, stop the ruby193-mcollective service on the node host:

      # service ruby193-mcollective stop

  Then set the following environment variable to prevent each front-end change from restarting the httpd service:

      # export APACHE_HTTPD_DO_NOT_RELOAD=1

- Back up the existing front-end configuration. You will use this backup to restore the complete state of the front end after the process is complete. Replace filename with your desired backup storage location:

      # oo-frontend-plugin-modify --save > filename

- Delete the existing front-end configuration:

      # oo-frontend-plugin-modify --delete

- Remove and install the front-end plug-in packages as necessary:

      # yum remove rubygem-openshift-origin-frontend-apache-mod-rewrite
      # yum -y install rubygem-openshift-origin-frontend-apache-vhost

- Replicate any Apache customizations reliant on the old plug-in onto the new plug-in, then restart the httpd service:

      # service httpd restart

- Change the OPENSHIFT_FRONTEND_HTTP_PLUGINS value in the /etc/openshift/node.conf file from openshift-origin-frontend-apache-mod-rewrite to openshift-origin-frontend-apache-vhost:

      OPENSHIFT_FRONTEND_HTTP_PLUGINS="openshift-origin-frontend-apache-vhost"

- Unset the environment variable that you set earlier so that the httpd service restarts as normal after any front-end changes:

      # export APACHE_HTTPD_DO_NOT_RELOAD=""

- Restart the MCollective service:

      # service ruby193-mcollective restart

- Restore the HTTP front-end configuration from the backup you created earlier in this procedure:

      # oo-frontend-plugin-modify --restore < filename
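The node.conf change in this procedure can be previewed before it is applied. The sketch below prints a rewritten copy of the file to standard output rather than editing it in place, and it assumes the parameter is defined on a single line. The function name switch_http_frontend is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print a copy of a node.conf-style file in which the
# mod_rewrite front end is replaced by the vhost front end.
# Only the OPENSHIFT_FRONTEND_HTTP_PLUGINS line is touched.
# $1 is the path to the file; the file itself is not modified.
switch_http_frontend() {
    sed '/^[[:space:]]*OPENSHIFT_FRONTEND_HTTP_PLUGINS=/s/openshift-origin-frontend-apache-mod-rewrite/openshift-origin-frontend-apache-vhost/' "$1"
}
```

A cautious workflow would redirect the output to a temporary file, compare it with the original using diff, and copy it into place only after review.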

10.1.3. Installing and Configuring the SNI Proxy Plug-in

The ports on which the SNI proxy listens are set with the PROXY_PORTS parameter in the /etc/openshift/node-plugins.d/openshift-origin-frontend-haproxy-sni-proxy.conf file. The configured ports must be exposed externally by adding a rule in iptables so that they are accessible on all node hosts where the SNI proxy is running. These ports must be available to all application end users. The SNI proxy also requires that a client uses TLS with the SNI extension and a URL containing either the fully qualified domain name or the OpenShift Enterprise alias of the application. See the OpenShift Enterprise User Guide [9] for more information on setting application aliases.

The mappings that the SNI proxy uses are persisted in the /var/lib/openshift/.httpd.d/sniproxy.json file. These mappings must be entered during gear creation, so the SNI proxy must be enabled before deploying any applications that require the proxy.

Table 10.3. SNI Proxy Plug-in Information

| Property | Value |
| --- | --- |
| Plug-in Name | openshift-origin-frontend-haproxy-sni-proxy |
| RPM | rubygem-openshift-origin-frontend-haproxy-sni-proxy |
| Service | openshift-sni-proxy |
| Ports | 2303-2308 (configurable) |
| Configuration Files | /etc/openshift/node-plugins.d/openshift-origin-frontend-haproxy-sni-proxy.conf |

Important

Procedure 10.2. To Enable the SNI Front-end Plug-in:

- Install the required RPM package:

      # yum install rubygem-openshift-origin-frontend-haproxy-sni-proxy

- Open the necessary ports in the firewall. Add the following to the /etc/sysconfig/iptables file just before the -A INPUT -j REJECT rule:

      -A INPUT -m state --state NEW -m tcp -p tcp --dport 2303:2308 -j ACCEPT

- Restart the iptables service:

      # service iptables restart

  If gears have already been deployed on the node, you might also need to restart the port proxy to enable connections to the gears of scaled applications:

      # service node-iptables-port-proxy restart

- Enable and start the SNI proxy service:

      # chkconfig openshift-sni-proxy on
      # service openshift-sni-proxy start

- Add openshift-origin-frontend-haproxy-sni-proxy to the OPENSHIFT_FRONTEND_HTTP_PLUGINS parameter in the /etc/openshift/node.conf file:

  Example 10.2. Adding the SNI Plug-in to the /etc/openshift/node.conf File

      OPENSHIFT_FRONTEND_HTTP_PLUGINS=openshift-origin-frontend-apache-vhost,openshift-origin-frontend-nodejs-websocket,openshift-origin-frontend-haproxy-sni-proxy

- Restart the MCollective service:

      # service ruby193-mcollective restart
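The firewall step in this procedure depends on rule order, because the ACCEPT rule has to come before the REJECT rule. As a rough sketch of that placement, the following function prints a copy of an iptables rules file with the SNI rule inserted just before the first -A INPUT -j REJECT line. The function name add_sni_rule is hypothetical, and the port range is the default 2303:2308 used above.

```shell
#!/bin/sh
# Hypothetical helper: print a copy of an iptables rules file with the SNI
# proxy ACCEPT rule placed immediately before the first INPUT REJECT rule.
# $1 is the path to the rules file; the file itself is not modified.
add_sni_rule() {
    awk -v rule='-A INPUT -m state --state NEW -m tcp -p tcp --dport 2303:2308 -j ACCEPT' '
        # If the rule already appears earlier in the file, do not add it again.
        $0 == rule { seen = 1 }
        # Insert the rule once, ahead of the first INPUT REJECT rule.
        /^-A INPUT -j REJECT/ && !seen { print rule; seen = 1 }
        { print }
    ' "$1"
}
```

Because the function skips the insert when the rule is already present ahead of the REJECT rule, running it on its own output leaves the rules unchanged.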

Note

The SNI proxy plug-in is enabled when the CONF_ENABLE_SNI_PROXY parameter is set to "true", which is the default if the CONF_NODE_PROFILE parameter is set to "xpaas".

10.1.4. Installing and Configuring the Websocket Proxy Plug-in

The nodejs-websocket plug-in manages the Node.js proxy with Websocket support, which listens on ports 8000 and 8443 by default. Requests are routed to the application according to the application's fully qualified domain name or alias. It can be installed with either of the HTTP plug-ins outlined in Section 10.1.2, “Installing and Configuring the HTTP Proxy Plug-in”.

The nodejs-websocket plug-in is provided by the rubygem-openshift-origin-frontend-nodejs-websocket RPM package. The rules that map the external node address to the cartridge's listening ports are persisted in the /var/lib/openshift/.httpd.d/routes.json file. The configuration of the default ports and SSL certificates can be found in the /etc/openshift/web-proxy-config.json file.

Important

Scaled applications are not compatible with the nodejs-websocket plug-in, because all traffic is routed to the first gear of an application.

Table 10.4. Websocket Front-end Plug-in Information

| Property | Value |
| --- | --- |
| Plug-in Name | nodejs-websocket |
| RPM | rubygem-openshift-origin-frontend-nodejs-websocket (required and configured by the rubygem-openshift-origin-node RPM) |
| Service | openshift-node-web-proxy |
| Ports | 8000, 8443 |
| Configuration Files | /etc/openshift/web-proxy-config.json |

To disable the Websocket proxy, remove the plug-in from the OPENSHIFT_FRONTEND_HTTP_PLUGINS parameter in the node.conf file, stop and disable the service, and close the firewall ports. Any one of these steps disables the plug-in, but perform all of them for consistency.
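The first of these steps, removing the plug-in from the list, can be sketched as a small text transformation. The function below takes a comma-separated list and a plug-in name and prints the list without that entry. The name remove_plugin is hypothetical, and the function only computes the new value; it does not edit node.conf, stop the service, or change the firewall.

```shell
#!/bin/sh
# Hypothetical helper: print a comma-separated plug-in list with one
# plug-in removed.
# $1 is the current list, $2 is the exact name of the plug-in to drop.
remove_plugin() {
    printf '%s\n' "$1" |
        tr ',' '\n' |                 # one plug-in per line
        grep -F -x -v -- "$2" |       # drop exact, whole-line matches only
        paste -s -d ',' -             # join the remaining names with commas
}
```

For example, removing openshift-origin-frontend-nodejs-websocket from the list shown in Example 10.1 leaves the apache-vhost and haproxy-sni-proxy entries in their original order.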

10.1.5. Installing and Configuring the iptables Proxy Plug-in

The iptables port proxy is essential for scalable applications. While not exactly a plug-in like the others outlined above, it is required by any scalable application and does not need to be listed in the OPENSHIFT_FRONTEND_HTTP_PLUGINS parameter in the node.conf file. The configuration steps for this plug-in were performed earlier in Section 9.10.7, “Configuring the Port Proxy”. The iptables rules generated for the port proxy are stored in the /etc/openshift/iptables.filter.rules and /etc/openshift/iptables.nat.rules files and are applied each time the service is restarted.

The iptables plug-in is intended to provide external ports that bypass the other front-end proxies. These ports have two main uses:

- Direct HTTP requests from load-balancer gears or the routing layer.

- Exposing services (such as a database service) on one gear to the other gears in the application.

The proxy uses iptables rules to route a single external port to a single internal port belonging to the corresponding gear.

Important

The PORTS_PER_USER and PORT_BEGIN parameters in the /etc/openshift/node.conf file control the number of external ports allocated to each gear and the start of the port range used by the proxy. Ensure that these values are consistent across all nodes so that gears can be moved between them.

Table 10.5. iptables Front-end Plug-in Information

| Property | Value |
| --- | --- |
| RPM | rubygem-openshift-origin-node |
| Service | openshift-iptables-port-proxy |
| Ports | 35531 - 65535 |
| Configuration Files | The PORTS_PER_USER and PORT_BEGIN parameters in the /etc/openshift/node.conf file |
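To get a feel for how PORTS_PER_USER and PORT_BEGIN interact, the arithmetic can be sketched as follows. The example assumes PORT_BEGIN=35531 (the start of the range in Table 10.5), an upper bound of 65535, and PORTS_PER_USER=5, which is an assumed value used only for illustration. The function names and the simple block-numbering scheme are hypothetical and are not the allocation logic the node itself uses.

```shell
#!/bin/sh
# Hypothetical helper: how many gears can each receive a distinct block of
# external ports.
# $1 = PORT_BEGIN, $2 = PORTS_PER_USER, $3 = last usable port (default 65535)
max_port_blocks() {
    port_begin=$1
    ports_per_user=$2
    port_end=${3:-65535}
    echo $(( (port_end - port_begin + 1) / ports_per_user ))
}

# Hypothetical helper: first and last external port of the Nth block,
# counting blocks from 0.
# $1 = PORT_BEGIN, $2 = PORTS_PER_USER, $3 = block index
port_block() {
    port_begin=$1
    ports_per_user=$2
    index=$3
    first=$(( port_begin + index * ports_per_user ))
    last=$(( first + ports_per_user - 1 ))
    echo "$first-$last"
}
```

Under these assumptions, `max_port_blocks 35531 5` gives 6001 blocks, and `port_block 35531 5 0` gives 35531-35535. Raising PORTS_PER_USER gives each gear more external ports but lowers the number of blocks that fit in the range, which is one reason the values need to match on every node.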